ContentBased Routing Different Plans for Different Data Pedro

Content-Based Routing: Different Plans for Different Data Pedro Bizarro Joint work with Shivnath Babu, David De. Witt, Jennifer Widom September 1, 2005 VLDB 2005

Introduction • The opportunity to impove: – Optimizers pick a single plan for a query – However, different subsets of data may have very different statistical properties – May be more efficient to use different plans for different subsets of data 2

Overview of CBR • Eliminates single plan assumption • Identifies tuple classes • Uses multiple plans, each customized for a different tuple class • CBR applies to any streaming data: – E. gs. : stream systems, regular DBMS operators using iterators, and acquisitional systems. • Adaptive and low overhead algorithm • Implemented in Telegraph. CQ as an extension to Eddies 3

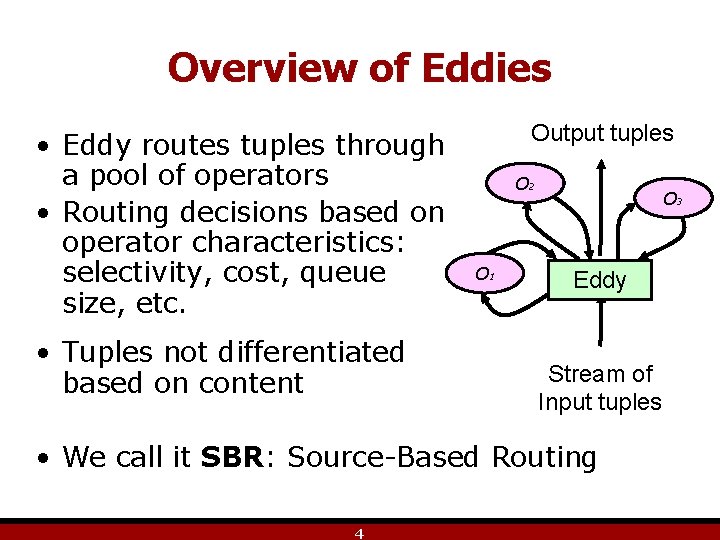

Overview of Eddies • Eddy routes tuples through a pool of operators • Routing decisions based on operator characteristics: selectivity, cost, queue size, etc. • Tuples not differentiated based on content Output tuples O 2 O 1 O 3 Eddy Stream of Input tuples • We call it SBR: Source-Based Routing 4

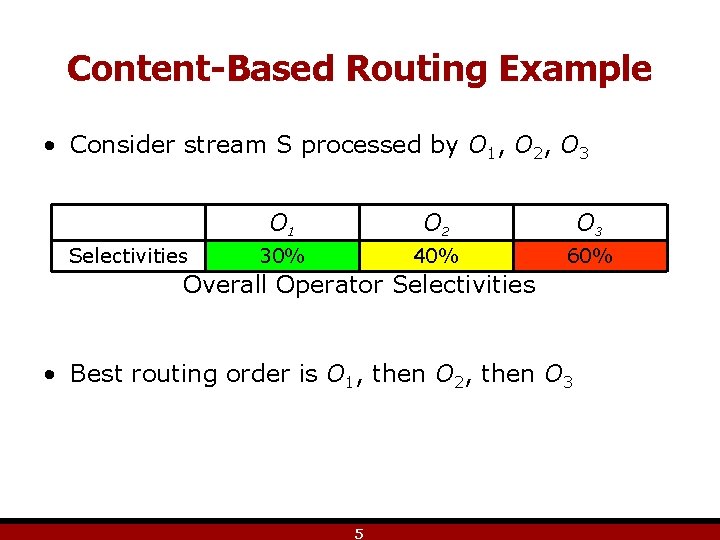

Content-Based Routing Example • Consider stream S processed by O 1, O 2, O 3 Selectivities O 1 O 2 O 3 30% 40% 60% Overall Operator Selectivities • Best routing order is O 1, then O 2, then O 3 5

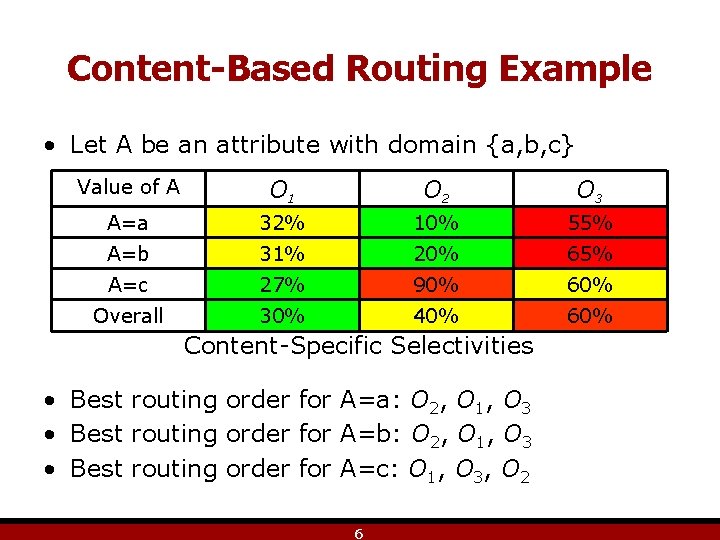

Content-Based Routing Example • Let A be an attribute with domain {a, b, c} Value of A O 1 O 2 O 3 A=a 32% 10% 55% A=b 31% 20% 65% A=c 27% 90% 60% Overall 30% 40% 60% Content-Specific Selectivities • Best routing order for A=a: O 2, O 1, O 3 • Best routing order for A=b: O 2, O 1, O 3 • Best routing order for A=c: O 1, O 3, O 2 6

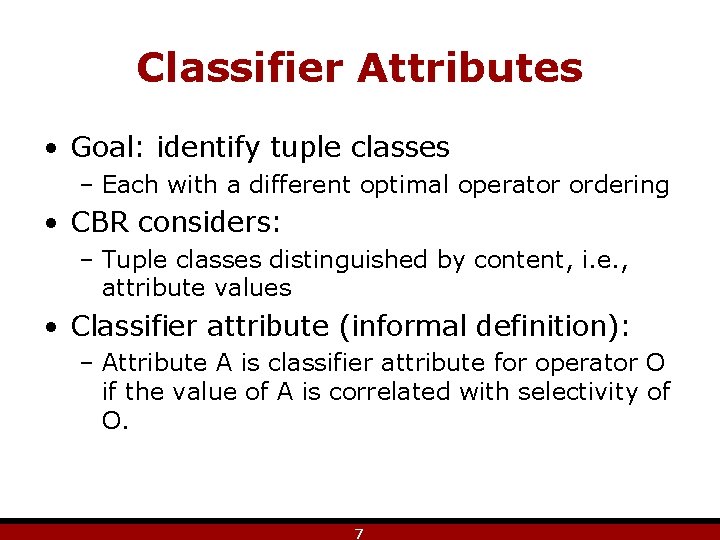

Classifier Attributes • Goal: identify tuple classes – Each with a different optimal operator ordering • CBR considers: – Tuple classes distinguished by content, i. e. , attribute values • Classifier attribute (informal definition): – Attribute A is classifier attribute for operator O if the value of A is correlated with selectivity of O. 7

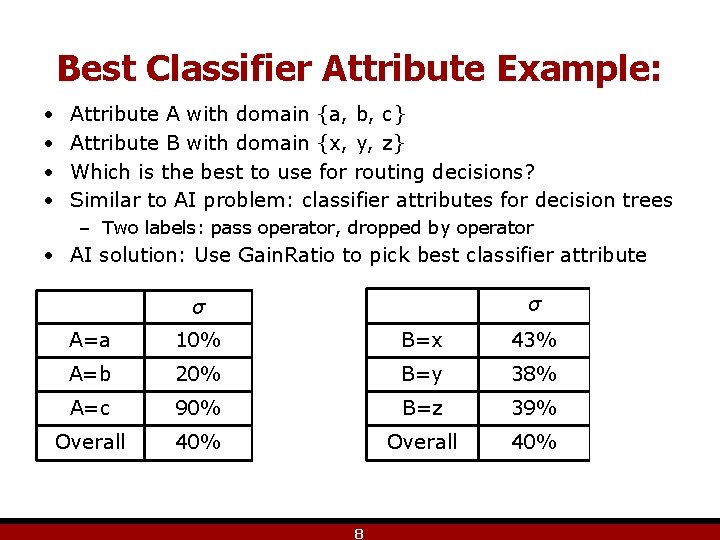

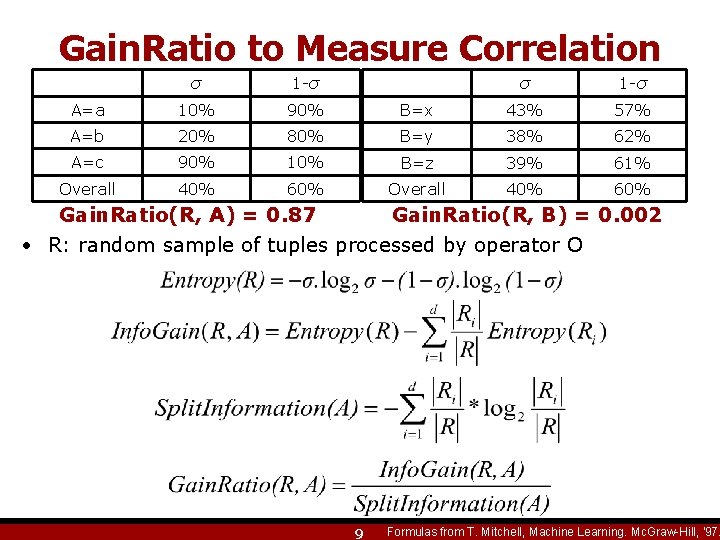

Best Classifier Attribute Example: • • Attribute A with domain {a, b, c} Attribute B with domain {x, y, z} Which is the best to use for routing decisions? Similar to AI problem: classifier attributes for decision trees – Two labels: pass operator, dropped by operator • AI solution: Use Gain. Ratio to pick best classifier attribute σ 1 -σ B=x 43% 57% 80% B=y 38% 62% 90% 10% B=z 39% 61% 40% 60% Overall 40% 60% σ 1 -σ A=a 10% 90% A=b 20% A=c Overall 8

Gain. Ratio to Measure Correlation σ 1 -σ A=a 10% 90% A=b 20% A=c Overall σ 1 -σ B=x 43% 57% 80% B=y 38% 62% 90% 10% B=z 39% 61% 40% 60% Overall 40% 60% Gain. Ratio(R, A) = 0. 87 Gain. Ratio(R, B) = 0. 002 • R: random sample of tuples processed by operator O 9 Formulas from T. Mitchell, Machine Learning. Mc. Graw-Hill, '97.

Classifier Attributes: Definition An attribute A is a classifier attribute for operator O, if for any large random sample R of tuples processed by O, Gain. Ratio(R, A)> , for some threshold 10

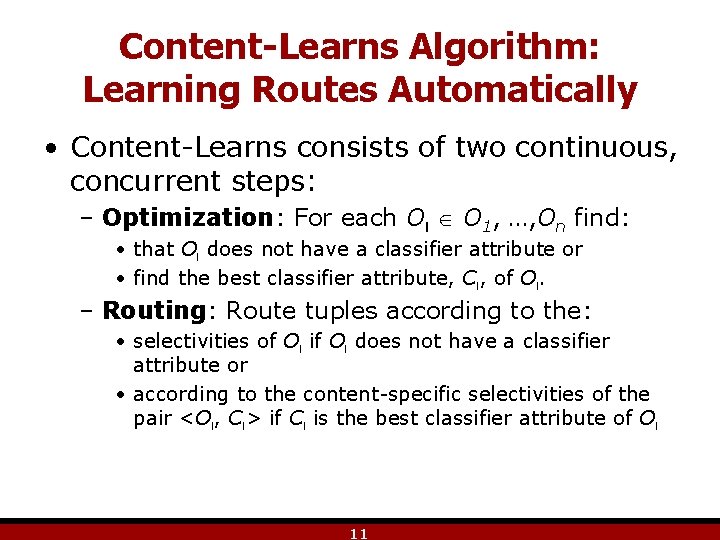

Content-Learns Algorithm: Learning Routes Automatically • Content-Learns consists of two continuous, concurrent steps: – Optimization: For each Ol O 1, …, On find: • that Ol does not have a classifier attribute or • find the best classifier attribute, Cl, of Ol. – Routing: Route tuples according to the: • selectivities of Ol if Ol does not have a classifier attribute or • according to the content-specific selectivities of the pair <Ol, Cl> if Cl is the best classifier attribute of Ol 11

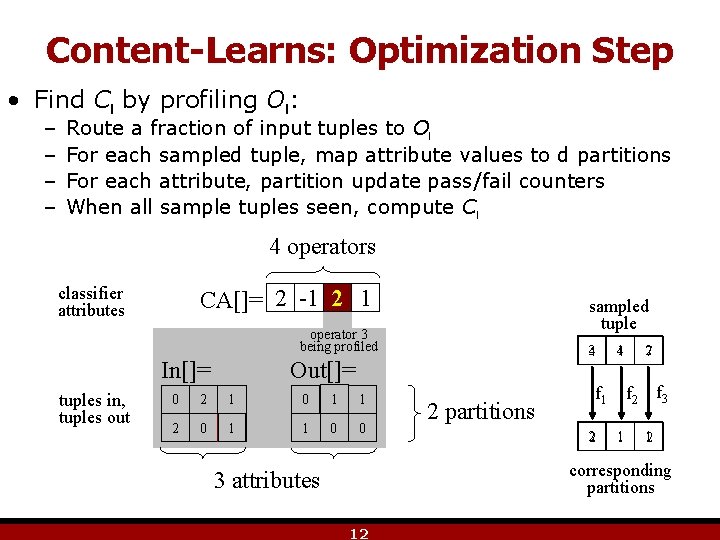

Content-Learns: Optimization Step • Find Cl by profiling Ol: – – Route a fraction of input tuples to Ol For each sampled tuple, map attribute values to d partitions For each attribute, partition update pass/fail counters When all sample tuples seen, compute Cl 4 operators classifier attributes CA[]= 2 -1 12 1 sampled tuple operator 3 being profiled In[]= tuples in, tuples out 34 Out[]= 0 102 10 0 01 10 012 0 10 01 0 0 2 partitions 14 f 2 f 3 f 1 2 27 1 12 corresponding partitions 3 attributes 12

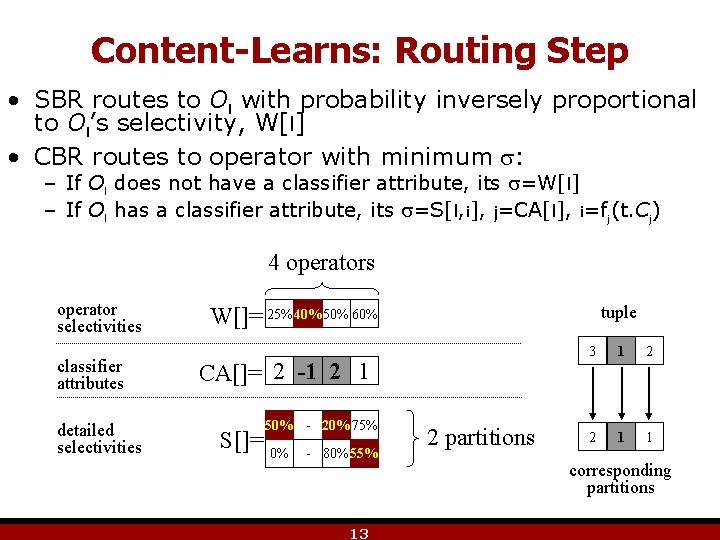

Content-Learns: Routing Step • SBR routes to Ol with probability inversely proportional to Ol’s selectivity, W[l] • CBR routes to operator with minimum : – If Ol does not have a classifier attribute, its =W[l] – If Ol has a classifier attribute, its =S[l, i], j=CA[l], i=fj(t. Cj) 4 operators operator selectivities classifier attributes detailed selectivities tuple W[]= 25% 40% 50% 60% CA[]= 2 -1 2 1 50% - 20% 75% S[]= 0% - 80% 55% 13 2 partitions 3 1 2 2 1 1 corresponding partitions

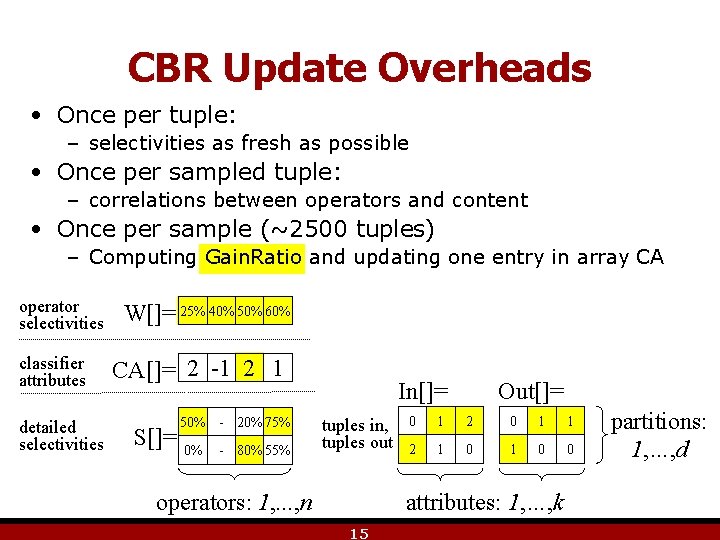

CBR Update Overheads • Once per tuple: – selectivities as fresh as possible • Once per sampled tuple: – correlations between operators and content • Once per sample (~2500 tuples) – Computing Gain. Ratio and updating one entry in array CA operator selectivities classifier attributes detailed selectivities W[]= 25% 40% 50% 60% CA[]= 2 -1 2 1 50% - 20% 75% S[]= 0% - 80% 55% In[]= tuples in, tuples out operators: 1, . . . , n Out[]= 0 1 2 0 1 1 2 1 0 0 attributes: 1, …, k 15 partitions: 1, …, d

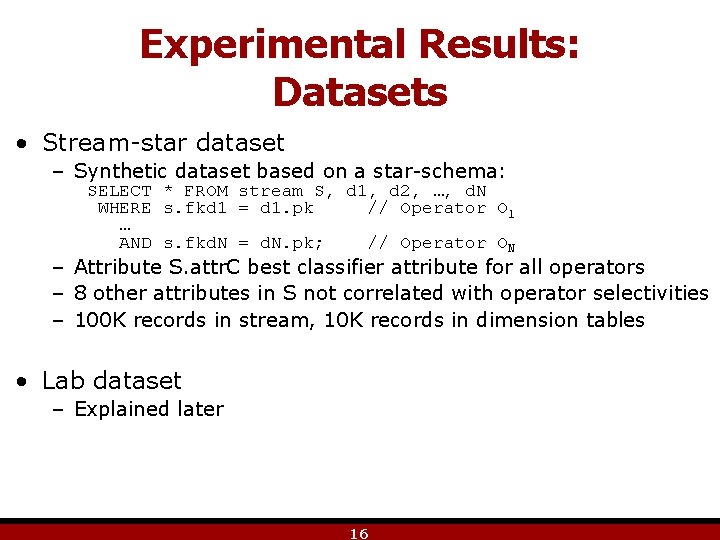

Experimental Results: Datasets • Stream-star dataset – Synthetic dataset based on a star-schema: SELECT * FROM stream S, d 1, d 2, …, d. N WHERE s. fkd 1 = d 1. pk // Operator O 1 … AND s. fkd. N = d. N. pk; // Operator ON – Attribute S. attr. C best classifier attribute for all operators – 8 other attributes in S not correlated with operator selectivities – 100 K records in stream, 10 K records in dimension tables • Lab dataset – Explained later 16

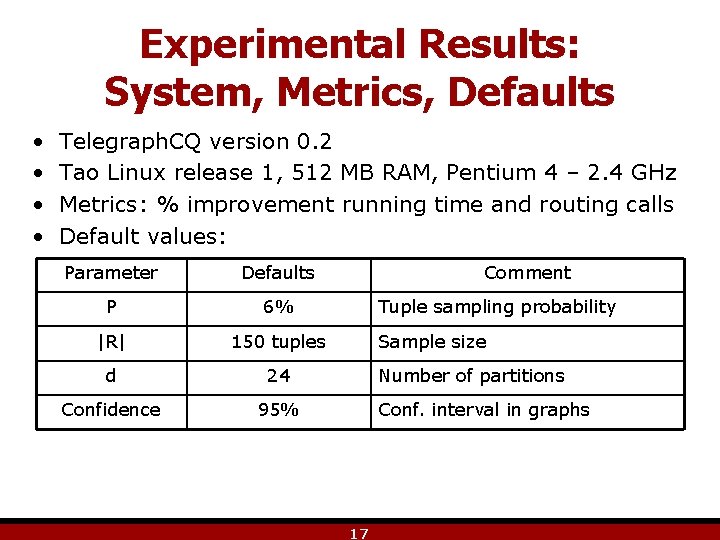

Experimental Results: System, Metrics, Defaults • • Telegraph. CQ version 0. 2 Tao Linux release 1, 512 MB RAM, Pentium 4 – 2. 4 GHz Metrics: % improvement running time and routing calls Default values: Parameter Defaults P 6% |R| 150 tuples d 24 Confidence 95% Comment Tuple sampling probability Sample size Number of partitions Conf. interval in graphs 17

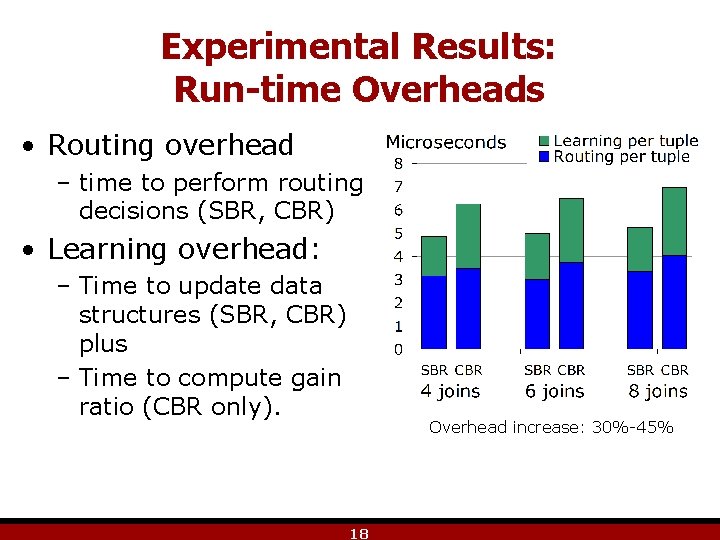

Experimental Results: Run-time Overheads • Routing overhead – time to perform routing decisions (SBR, CBR) • Learning overhead: – Time to update data structures (SBR, CBR) plus – Time to compute gain ratio (CBR only). Overhead increase: 30%-45% 18

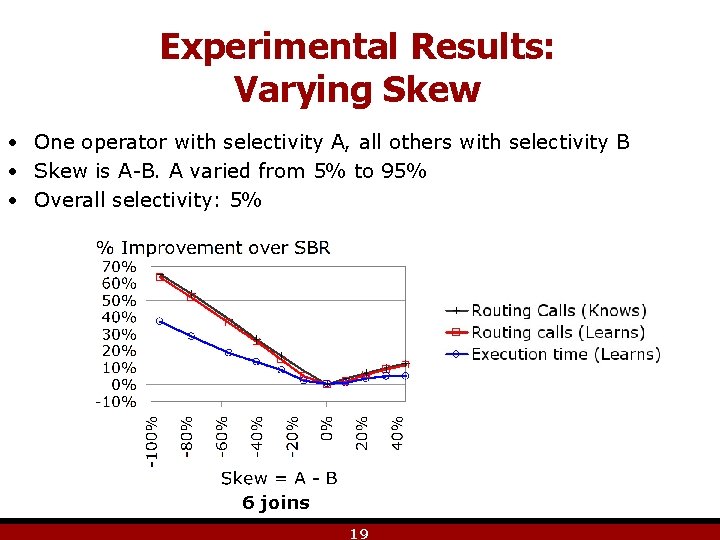

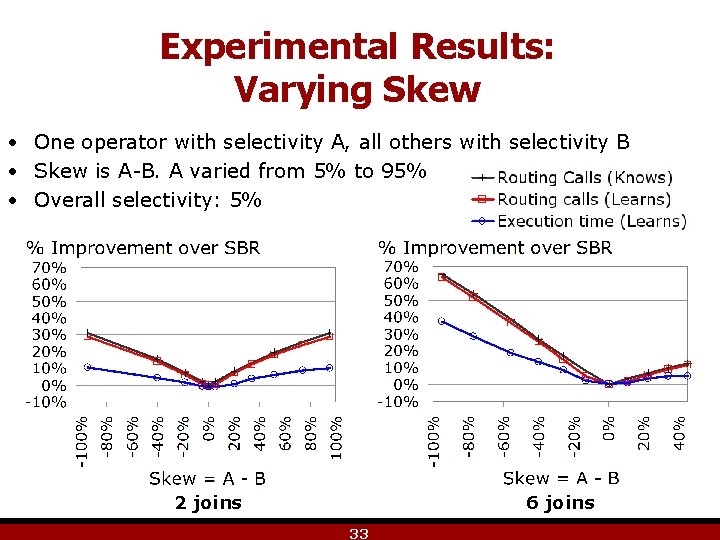

Experimental Results: Varying Skew • One operator with selectivity A, all others with selectivity B • Skew is A-B. A varied from 5% to 95% • Overall selectivity: 5% 6 joins 19

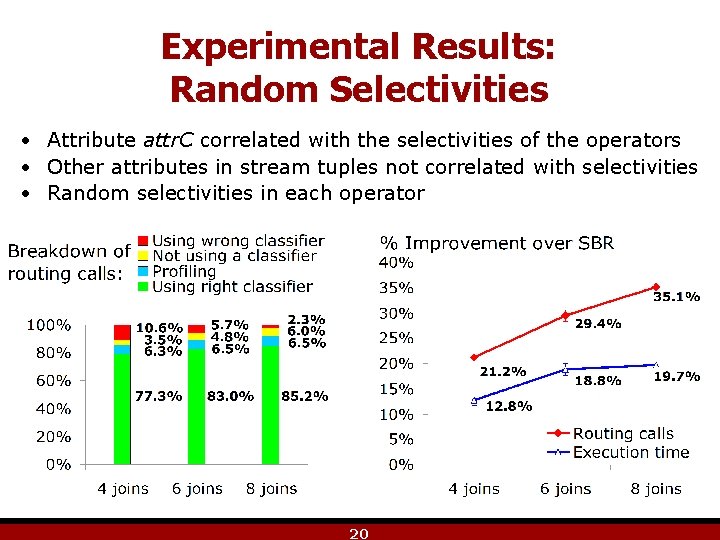

Experimental Results: Random Selectivities • Attribute attr. C correlated with the selectivities of the operators • Other attributes in stream tuples not correlated with selectivities • Random selectivities in each operator 20

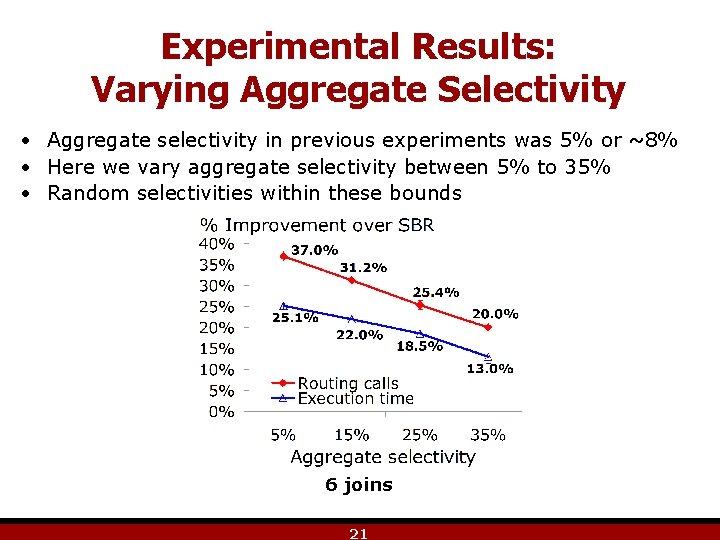

Experimental Results: Varying Aggregate Selectivity • Aggregate selectivity in previous experiments was 5% or ~8% • Here we vary aggregate selectivity between 5% to 35% • Random selectivities within these bounds 6 joins 21

Experimental Results: Datasets • Lab dataset – Real data – 2. 2 M readings from 54 sensors (Intel Research Berkeley Lab) – Single stream with attributes: • • • Light Humidity Temperature Voltage sensor. ID Year Month Day Hours Minutes Seconds 22

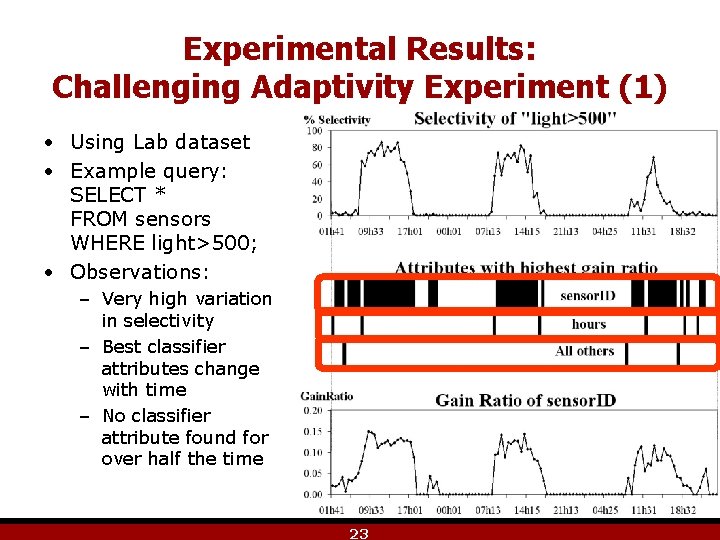

Experimental Results: Challenging Adaptivity Experiment (1) • Using Lab dataset • Example query: SELECT * FROM sensors WHERE light>500; • Observations: – Very high variation in selectivity – Best classifier attributes change with time – No classifier attribute found for over half the time 23

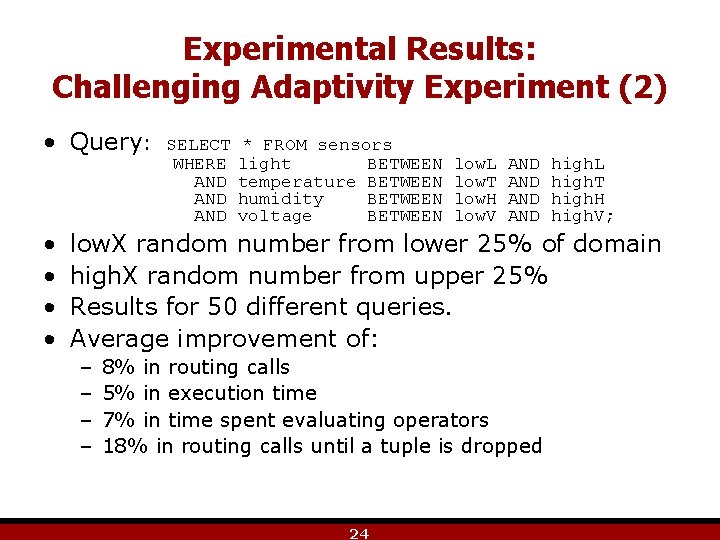

Experimental Results: Challenging Adaptivity Experiment (2) • Query: • • SELECT WHERE AND AND * FROM sensors light BETWEEN temperature BETWEEN humidity BETWEEN voltage BETWEEN low. L low. T low. H low. V AND AND high. L high. T high. H high. V; low. X random number from lower 25% of domain high. X random number from upper 25% Results for 50 different queries. Average improvement of: – – 8% in routing calls 5% in execution time 7% in time spent evaluating operators 18% in routing calls until a tuple is dropped 24

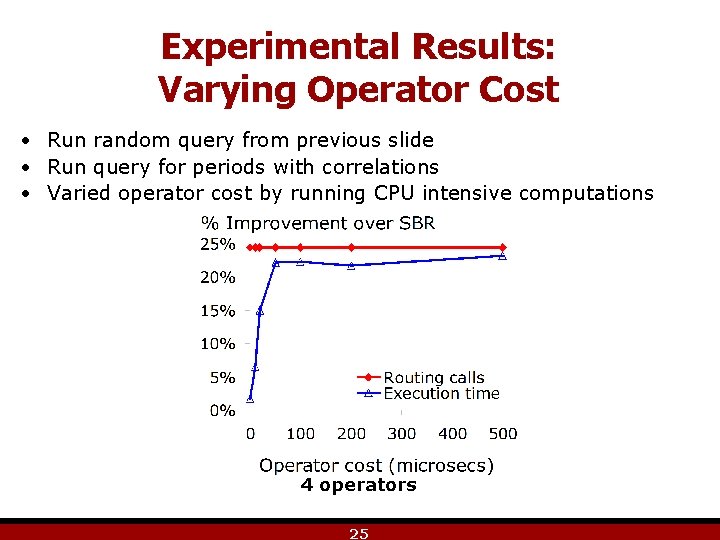

Experimental Results: Varying Operator Cost • Run random query from previous slide • Run query for periods with correlations • Varied operator cost by running CPU intensive computations 4 operators 25

Conclusions • CBR eliminates single plan assumption • Explores correlation between tuple content and operator selectivities • Adaptive learner of correlations with negligible overhead • Performance improvements over non-CBR routing • Selectivity changes much more than correlations 26

Acknowledgements • Sam Madden and Amol Deshpande for providing the Lab dataset. • Sailesh Krishnamurthy, Amol Deshpande, Joe Hellerstein, and the rest of the Telegraph. CQ team for providing Telegraph. CQ and answering all my questions. 27

Extra slides

Motivational Example (1): Intrusion Detection Query • “Track packets with destination address matching a prefix in table T, and containing the 100 -byte and 256 -byte sequences “ 0 xa. . . 8” and “ 0 x 7. . . b” respectively as subsequence” • SELECT * FROM packets WHERE matches(destination, T) O 1 AND contains(data, “ 0 xa. . . 8”) O 2 AND contains(data, “ 0 x 7. . . b”); O 3 30

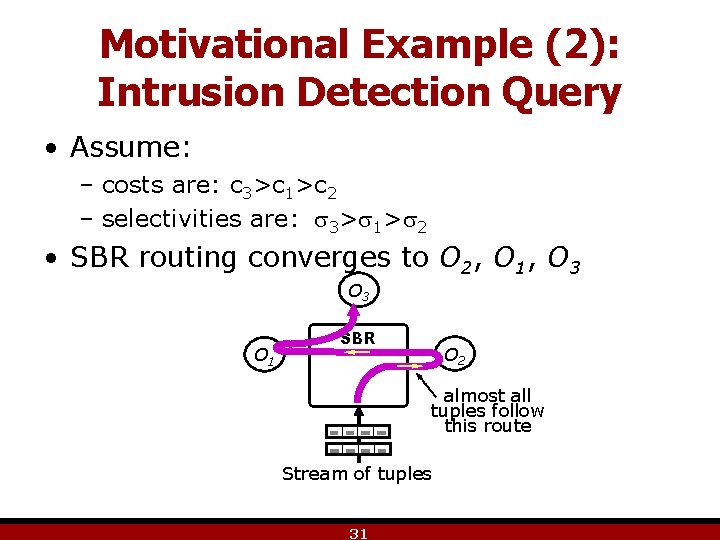

Motivational Example (2): Intrusion Detection Query • Assume: – costs are: c 3>c 1>c 2 – selectivities are: 3> 1> 2 • SBR routing converges to O 2, O 1, O 3 O 1 SBR O 2 almost all tuples follow this route Stream of tuples 31

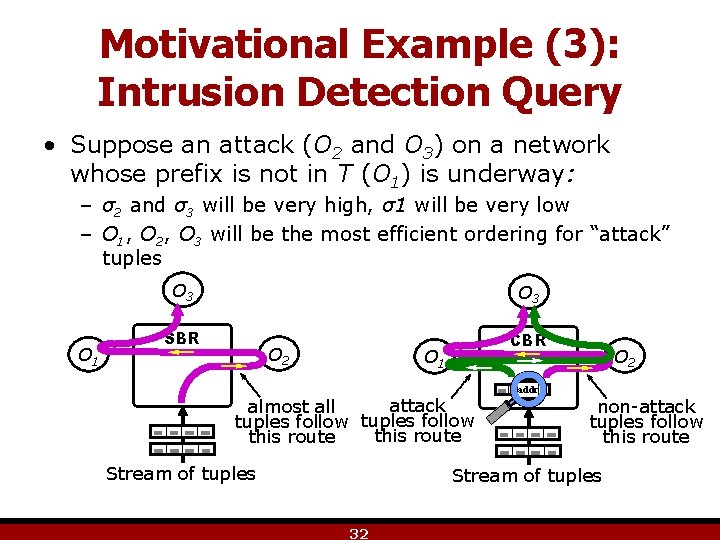

Motivational Example (3): Intrusion Detection Query • Suppose an attack (O 2 and O 3) on a network whose prefix is not in T (O 1) is underway: – σ2 and σ3 will be very high, σ1 will be very low – O 1, O 2, O 3 will be the most efficient ordering for “attack” tuples O 1 O 3 SBR CBR O 2 O 1 attack almost all tuples follow this route Stream of tuples addr O 2 non-attack tuples follow this route Stream of tuples 32

Experimental Results: Varying Skew • One operator with selectivity A, all others with selectivity B • Skew is A-B. A varied from 5% to 95% • Overall selectivity: 5% 2 joins 6 joins 33

![Related Work Adaptivity in Stream Systems • [Avnur+] Eddies: Continuously Adaptive Query Processing. SIGMOD'00. Related Work Adaptivity in Stream Systems • [Avnur+] Eddies: Continuously Adaptive Query Processing. SIGMOD'00.](http://slidetodoc.com/presentation_image_h2/1634ae6bd67243552245cf7c59b3bf3f/image-33.jpg)

Related Work Adaptivity in Stream Systems • [Avnur+] Eddies: Continuously Adaptive Query Processing. SIGMOD'00. • [Arasu+] STREAM: The Stanford Stream Data Manager. DEBul 26(1). • [Babu+] Adaptive ordering of pipelined stream filters. SIGMOD'04. • [Babu+] Strea. Mon: An Adaptive Engine for Stream Query Processing. SIGMOD'04. • [Carney+] Monitoring streams – a new class of data management applications. VLDB'02. • [Chandrasekaran+] Telegraph. CQ: Continuous dataflow processing for an uncertain world. CIDR'03. • [Chandrasekaran+] Psoup: a system for streaming queries over streaming data. VLDBj 12(2). • [Deshpande] An initial study of overheads of eddies. SIGMODrec 33(1). • [Deshpande+] Lifting the Burden of History from Adaptive Query Processing. VLDB'04. • [Madden+] Continuously adaptive continuous queries over streams. SIGMOD'02. • [Raman+] Using state modules for adaptive query processing. ICDE'03. • [Tian+] Tuple Routing Strategies for Distributed Eddies. VLDB'03. • [Viglas+] Maximizing the Output Rate of Multi. Way Join Queries over Streaming Information Sources. VLDB'03. AQP Surveys • [Babu+] Adaptive Query Processing in the Looking Glass. CIDR'05. • [Hellerstein+] Adaptive query processing: Technology in evolution. DEBul 23(2). Adaptivity in DBMS • [Babu+] Proactive Re-optimization. SIGMOD'05. • [Graefe+] Dynamic query evaluation plans. SIGMOD'89. • [Kabra+] Efficient Mid-Query Re-Optimization of Sub-Optimal Query Execution Plans. SIGMOD'98. • [Markl+] Robust Query Processing through Progressive Optimization. SIGMOD'04. • [Wong+] Decomposition - a strategy for query processing. TODS 1(3). Adaptivity in Distributed Systems • [Bouganim+] Dynamic query scheduling in data integration systems. ICDE'00. • [Ives+] An adaptive query execution system for data integration. SIGMOD'99. • [Ives+] Adaptive query processing for internet applications. DEBul 23(2). • [Ives+] Adapting to Source Properties in Processing Data Integration Queries. SIGMOD'04. • [Ives] Efficient Query Processing for Data Integration. Ph. D thesis. • [Ng+] Dynamic query re-optimization. ICSSDM'99. • [Urhan+] Dynamic pipeline scheduling for improving interactive performance of online queries. VLDB'01. • [Urhan+] Cost based query scrambling for initial delays. SIGMOD'98. • [Zhu+] Dynamic plan migration for continuous queries over data streams. SIGMOD'04. 34

![Related Work (cont’d) Optimization, Cardinality Estimation, Correlations Acquisitional Systems • [Madden+] The Design of Related Work (cont’d) Optimization, Cardinality Estimation, Correlations Acquisitional Systems • [Madden+] The Design of](http://slidetodoc.com/presentation_image_h2/1634ae6bd67243552245cf7c59b3bf3f/image-34.jpg)

Related Work (cont’d) Optimization, Cardinality Estimation, Correlations Acquisitional Systems • [Madden+] The Design of an Acquisitional Query Processor for Sensor Networks. SIGMOD'03. • [Deshpande+] Model-Driven Data Acquisition in Sensor Networks. VLDB'04 • [Deshpande+] Exploiting Correlated Attributes in Acquisitional Query Processing. ICDE'05. Multiple Plans in Same Query • [Antoshenkov+] Query processing and optimization in oracle rdb. VLDBj 5(4). • [Bizarro+] Content-Based Routing: Different Plans for Different Data. VLDB'05. • [Polyzotis] Selectivity-Based Partitioning: A Divide-and-Union Paradigm For Effective Query Optimization. Unpublished. URDs and Modeling Uncertainty • [Anderson+] Index key range estimator. U. S. Patent 4, 774, 657. • [Babcock+] Towards a Robust Query Optimizer: A Principled and Practical Approach. SIGMOD'05. • [Chu+] Least expected cost query optimization: An exercise in utility. So. Po. DS'99. • [Ramamurthy+] Buffer-pool Aware Query Optimization. CIDR'05. • [Viglas] Novel Query Optimization and Evaluation Techniques, Ph. D. Thesis. • [Selinger+] Access Path Selection in a Relational Database Management System. SIGMOD'79. • [Acharya+] Join Synopses for Approximate Query Answering. SIGMOD'99. • [Christodoulakis] Implications of certain assumptions in database performance evaluation. TODS 9(2). • [Graefe] Query Evaluation Techniques for Large Databases. ACM Comput. Surv. 25(2). • [Getoor+] Selectivity Estimation using Probabilistic Models. SIGMOD'01. • [Ioannidis+] On the Propagation of Errors in the Size of Join Results. SIGMOD'91. • [Ilyas+] CORDS: Automatic discovery of correlations and soft functional dependencies. SIGMOD'04. • [Stillger+] LEO - DB 2’s LEarning Optimizer. VLDB'01. AI and Learning from Streams • [Mitchell] Machine Learning. Mc. Graw-Hill, '97. • [Guha+] Data-streams and histograms. ACM Symp. on Theory of Computing, '01. • [Domingos+] Mining high-speed data streams. SIGKDD'00. • [Gehrke+] On computing correlated aggregates over continual data streams. SIGMOD'01. 35

- Slides: 34