
Content-Based Image Retrieval 1

What is Content-based Image Retrieval (CBIR)? • Image search systems that search for images by image content, as opposed to keyword-based image/video retrieval (e.g. Google Image Search, YouTube) 2

How does CBIR work? • Extract features from images • Let the user query – Query by sketch – Query by keywords – Query by example • Refine the result by relevance feedback – Give feedback on the previous result 3

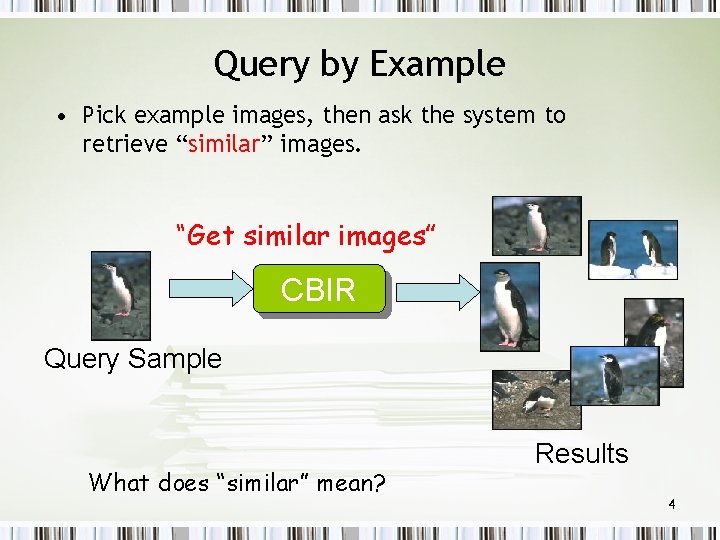

Query by Example • Pick example images, then ask the system to retrieve “similar” images. • [Figure: a query sample is submitted to the CBIR system with the request “Get similar images”, and the system returns results.] • What does “similar” mean? 4

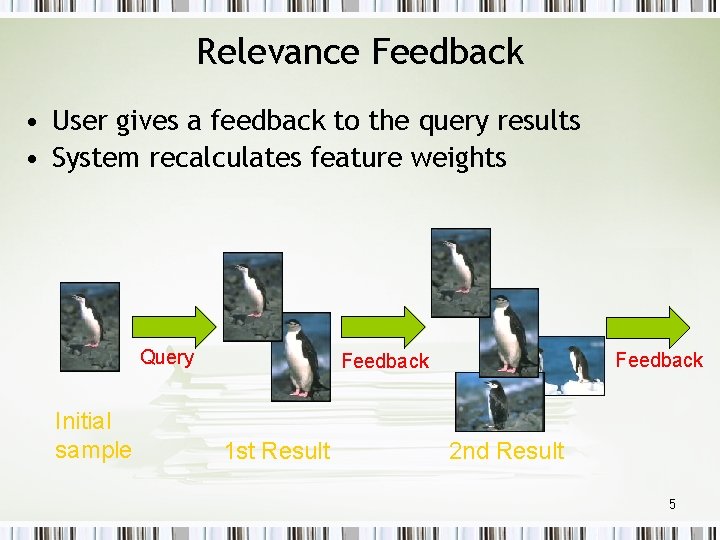

Relevance Feedback • User gives feedback on the query results • System recalculates feature weights • [Figure: query, initial sample, feedback, 1st result, 2nd result] 5

Two Classes of CBIR: Narrow vs. Broad Domain • Narrow – Medical imagery retrieval – Fingerprint retrieval – Satellite imagery retrieval • Broad – Photo collections – Internet 6

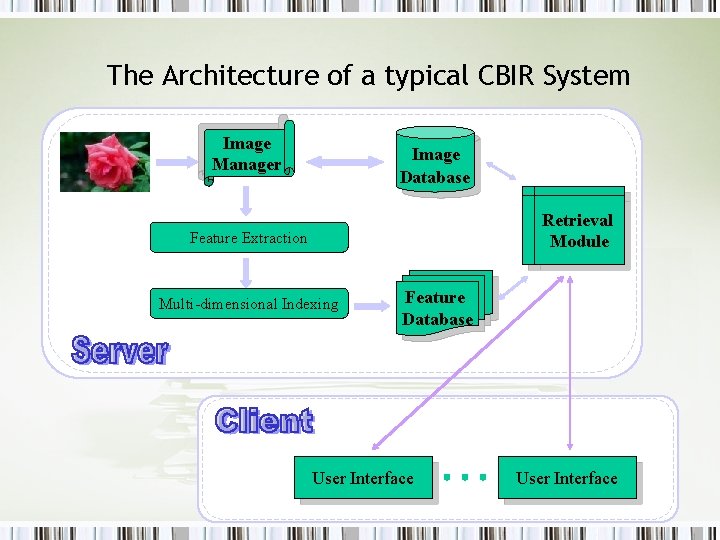

The Architecture of a typical CBIR System • [Diagram components: User Interface, Retrieval Module, Image Manager, Image Database, Feature Extraction, Multi-dimensional Indexing, Feature Database] 7

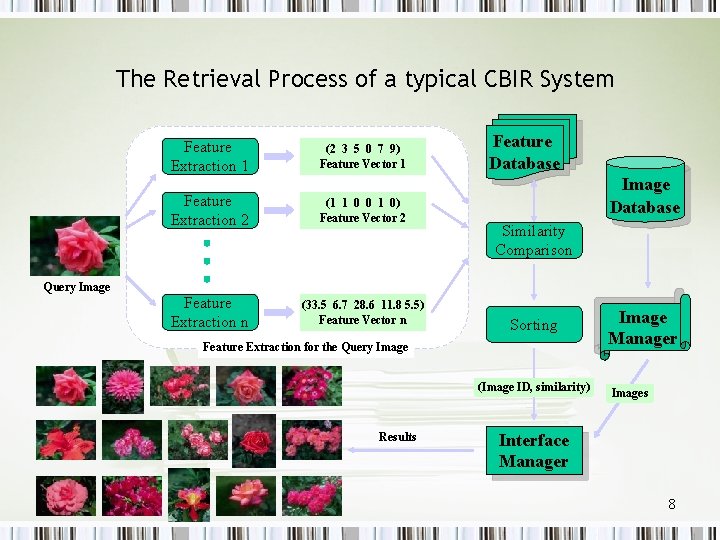

The Retrieval Process of a typical CBIR System • [Diagram: Feature Extraction 1 through n is applied to the Image Database, producing Feature Vector 1 (2 3 5 0 7 9), Feature Vector 2 (1 1 0 0 1 0), …, Feature Vector n (33.5 6.7 28.6 11.8 5.5), which are stored in the Feature Database. Feature Extraction is also applied to the Query Image. Similarity Comparison against the Feature Database, followed by Sorting, yields Results as (Image ID, similarity) pairs; the Image Manager passes the matching images to the Interface Manager.] 8
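
The retrieval loop on this slide can be sketched in a few lines. This is only an illustration under stated assumptions: the image IDs and the `feature_db` dictionary are hypothetical, and Euclidean distance mapped to a similarity score is one possible choice of comparison, not necessarily the one the slides have in mind.

```python
import math

def euclidean_distance(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def similarity(a, b):
    """Map a distance to a similarity in (0, 1]; identical vectors score 1.0."""
    return 1.0 / (1.0 + euclidean_distance(a, b))

def retrieve(query_vector, feature_db, top_k=3):
    """Compare the query with every stored vector, sort, return (image_id, similarity)."""
    scored = [(image_id, similarity(query_vector, vector))
              for image_id, vector in feature_db.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]

# Hypothetical feature database: image ID -> feature vector.
feature_db = {
    "img_001": [2, 3, 5, 0, 7, 9],
    "img_002": [1, 1, 0, 0, 1, 0],
    "img_003": [2, 3, 4, 0, 7, 8],
}

# The query vector would come from running the same feature extraction
# on the query image; here it is simply written out by hand.
results = retrieve([2, 3, 5, 0, 7, 9], feature_db, top_k=2)
print(results)
```

A real system would replace the linear scan with the multi-dimensional index from the architecture slide; the scan is kept here because it makes the compare-then-sort step explicit.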

Basic Components of CBIR – Feature Extraction – Data indexing – Query and feedback processing 9

How Images are represented 10

Image Features • Representing the Images – Segmentation – Low Level Features • Color • Texture • Shape 11

Image Features • Information about color, texture, or shape that is extracted from an image is known as image features – Also called low-level features • Red, sandy – As opposed to high-level features or concepts • Beaches, mountains, happy 12

Global Features • Averages across the whole image – Con: tends to lose the distinction between foreground and background – Con: poorly reflects human understanding of images – Pro: computationally simple – Pro: a number of successful systems have been built using global image features 13
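
A minimal sketch of a global feature, assuming an image is represented as a nested list of 8-bit `(r, g, b)` tuples (a representation chosen here for illustration only). It also shows the first drawback listed above: two images with the same pixels in different positions get exactly the same feature.

```python
def global_mean_color(image):
    """Average each RGB channel over every pixel of the image."""
    pixels = [pixel for row in image for pixel in row]
    n = len(pixels)
    return tuple(sum(pixel[ch] for pixel in pixels) / n for ch in range(3))

RED, BLUE = (255, 0, 0), (0, 0, 255)

# Same pixels, different layout: the red "object" sits in opposite corners.
image_a = [[RED, BLUE],
           [BLUE, BLUE]]
image_b = [[BLUE, BLUE],
           [BLUE, RED]]

print(global_mean_color(image_a))
print(global_mean_color(image_b))
```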

Local Features • Segment images into parts • Two sorts: – Tile Based – Region based 14

Regioning and Tiling Schemes • [Figure: the same image divided into tiles and into regions] 15

Tiling • Break the image down into simple geometric shapes – Con: similar problems to global features – Con: danger of breaking up significant objects – Pro: computationally simple – Pro: some schemes seem to work well in practice 16
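
One simple tiling scheme is a fixed rectangular grid with one mean color per tile. The sketch below assumes the same nested-list image representation of `(r, g, b)` tuples and an image at least `rows` pixels high and `cols` pixels wide; the grid shape is an illustrative choice, not one prescribed by the slides.

```python
def tile_mean_colors(image, rows, cols):
    """Split the image into a rows x cols grid; return each tile's mean RGB, row-major."""
    height, width = len(image), len(image[0])
    features = []
    for r in range(rows):
        for c in range(cols):
            # Integer bounds of this tile; tiles cover the image without overlap.
            y0, y1 = r * height // rows, (r + 1) * height // rows
            x0, x1 = c * width // cols, (c + 1) * width // cols
            pixels = [image[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            n = len(pixels)
            features.append(tuple(sum(p[ch] for p in pixels) / n for ch in range(3)))
    return features

RED, BLUE = (255, 0, 0), (0, 0, 255)

# 2x4 image: left half red, right half blue.
image = [[RED, RED, BLUE, BLUE],
         [RED, RED, BLUE, BLUE]]

tiles = tile_mean_colors(image, rows=1, cols=2)
print(tiles)
```

Unlike a single global average, the per-tile features keep a coarse record of where each color sits, but a tile boundary can still cut through an object.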

Regioning • Break the image down into visually coherent areas – Pro: can identify meaningful areas and objects – Con: computationally intensive – Con: unreliable 17

Color • Produce a color signature for a region or the whole image • Typically done using color correlograms or color histograms 18
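
A color histogram signature can be sketched as follows. Assumptions for illustration: images are nested lists of 8-bit `(r, g, b)` tuples, RGB is quantized uniformly with `bins_per_channel` bins (which must divide 256), and histogram intersection is used as the similarity measure. The slides do not say which quantization or measure is intended.

```python
def color_histogram(image, bins_per_channel=4):
    """Normalized RGB histogram with bins_per_channel ** 3 bins."""
    step = 256 // bins_per_channel  # assumes bins_per_channel divides 256
    hist = [0.0] * (bins_per_channel ** 3)
    count = 0
    for row in image:
        for r, g, b in row:
            # Combine the three quantized channel indices into one bin index.
            index = ((r // step) * bins_per_channel + g // step) * bins_per_channel + b // step
            hist[index] += 1
            count += 1
    return [h / count for h in hist]

def histogram_intersection(h1, h2):
    """Similarity in [0, 1] for normalized histograms; 1.0 means identical."""
    return sum(min(a, b) for a, b in zip(h1, h2))

RED, BLUE = (255, 0, 0), (0, 0, 255)
image_a = [[RED, RED], [BLUE, BLUE]]    # half red, half blue
image_b = [[RED, BLUE], [BLUE, BLUE]]   # one quarter red, three quarters blue

hist_a = color_histogram(image_a)
hist_b = color_histogram(image_b)
print(histogram_intersection(hist_a, hist_a))
print(histogram_intersection(hist_a, hist_b))
```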

Color Features • Color Histograms – Color Space Selection – Color Space Quantization – Color Histogram Calculation – Feature Indexing – Similarity Measures • Color Layout – Histograms based on spatial distribution of single color – Histograms based on spatial distribution of color pair – Histograms based on spatial distribution of color triple • Other Color Features – Color Moments – Color Sets 19
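
Of the other color features, color moments are the most compact. The sketch below uses one common definition (per-channel mean, standard deviation, and the signed cube root of the third central moment), giving nine numbers per image. That definition and the nested-list `(r, g, b)` image representation are assumptions made for illustration.

```python
import math

def color_moments(image):
    """Return [mean, std, skew] for R, then G, then B: nine values in total."""
    pixels = [pixel for row in image for pixel in row]
    n = len(pixels)
    moments = []
    for ch in range(3):
        values = [pixel[ch] for pixel in pixels]
        mean = sum(values) / n
        variance = sum((v - mean) ** 2 for v in values) / n
        third = sum((v - mean) ** 3 for v in values) / n
        # Signed cube root keeps the sign of the third central moment.
        skew = math.copysign(abs(third) ** (1.0 / 3.0), third)
        moments.extend([mean, math.sqrt(variance), skew])
    return moments

flat_gray = [[(100, 100, 100), (100, 100, 100)]]
two_tone = [[(0, 0, 0), (2, 0, 0)]]

print(color_moments(flat_gray))
print(color_moments(two_tone))
```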

Color Space Selection • Which color space? – RGB, CMY, YCrCb, CIE, YIQ, HLS, … • HSV? – Designed to be similar to human perception 20

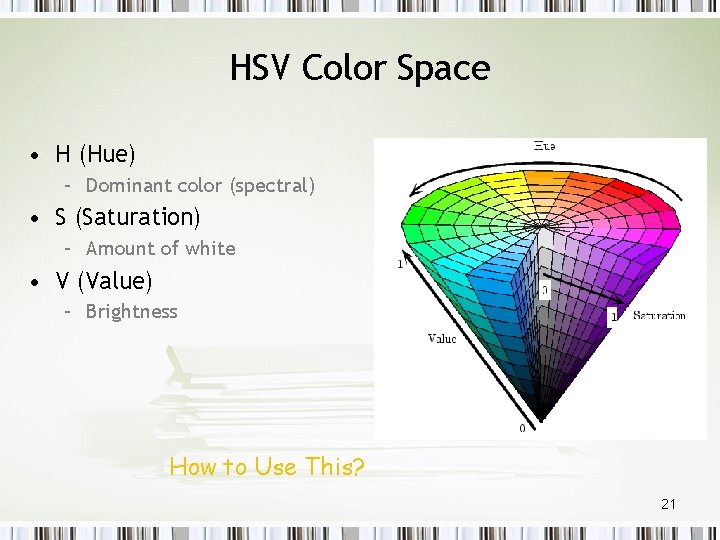

HSV Color Space • H (Hue) – Dominant color (spectral) • S (Saturation) – Amount of white • V (Value) – Brightness How to Use This? 21
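
One possible answer to "how to use this", sketched with Python's standard `colorsys` module: convert each pixel to HSV and build a histogram over hue only. The bin count, the saturation threshold for skipping nearly gray pixels, and the nested-list `(r, g, b)` image representation are all illustrative assumptions.

```python
import colorsys

def rgb_to_hsv_pixel(r, g, b):
    """Convert 8-bit RGB to (hue in degrees, saturation, value); s and v lie in [0, 1]."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    return h * 360.0, s, v

def hue_histogram(image, bins=6, min_saturation=0.2):
    """Normalized hue histogram; nearly gray pixels are skipped because their hue is unreliable."""
    hist = [0] * bins
    for row in image:
        for r, g, b in row:
            hue, saturation, _value = rgb_to_hsv_pixel(r, g, b)
            if saturation < min_saturation:
                continue
            hist[min(int(hue / 360.0 * bins), bins - 1)] += 1
    total = sum(hist)
    return [count / total for count in hist] if total else [0.0] * bins

RED, ORANGE = (255, 0, 0), (255, 128, 0)
GRAY, SKY_BLUE = (128, 128, 128), (0, 128, 255)

image = [[RED, ORANGE],
         [GRAY, SKY_BLUE]]

print(rgb_to_hsv_pixel(*RED))
print(hue_histogram(image))
```

Because brightness is carried by V alone, a hue histogram like this changes less under lighting variation than an RGB histogram would, which is one reason HSV is a popular choice here.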

Content Based Image Retrieval • CBIR – Utilizes unique features (shape, color, texture) of images • Users prefer – To retrieve relevant images by semantic categories – But CBIR cannot capture the high-level semantics in the user’s mind 22

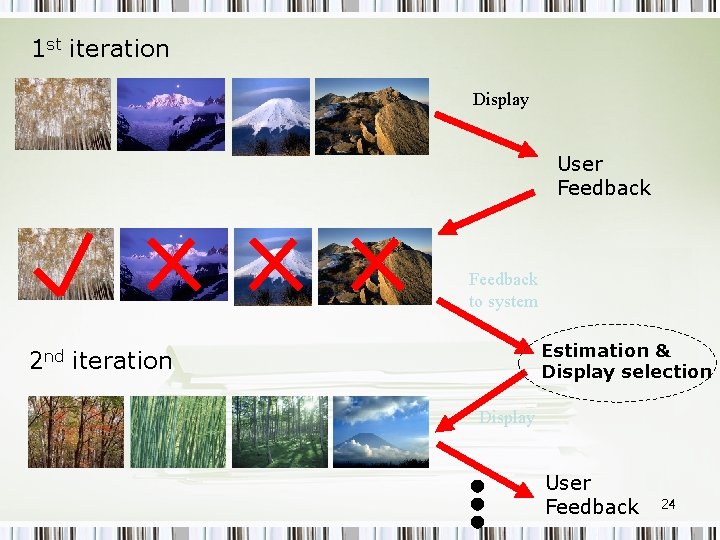

Relevance Feedback • Relevance Feedback – Learns the associations between high-level semantics and low-level features • Relevance Feedback Phase – 1. User identifies relevant images within the returned set – 2. System utilizes user feedback in the next round to modify the query (to retrieve better results) – 3. This process repeats until the user is satisfied 23
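
The slides do not say how the query is modified in step 2. One well-known option, borrowed from text retrieval, is a Rocchio-style update that moves the query vector toward the relevant examples and away from the non-relevant ones. The sketch below assumes that technique; the parameter values `alpha`, `beta`, and `gamma` are conventional illustrative defaults, and the use of non-relevant examples goes beyond what the slide describes.

```python
def refine_query(query, relevant, non_relevant, alpha=1.0, beta=0.75, gamma=0.25):
    """Rocchio-style update of a query feature vector from user feedback."""
    def mean_vector(vectors):
        # An empty feedback set contributes nothing to the update.
        if not vectors:
            return [0.0] * len(query)
        return [sum(column) / len(vectors) for column in zip(*vectors)]

    relevant_mean = mean_vector(relevant)
    non_relevant_mean = mean_vector(non_relevant)
    return [alpha * q + beta * r - gamma * n
            for q, r, n in zip(query, relevant_mean, non_relevant_mean)]

query = [1.0, 1.0]
relevant = [[3.0, 1.0], [5.0, 1.0]]   # images the user marked as relevant
non_relevant = [[0.0, 4.0]]           # images the user rejected

new_query = refine_query(query, relevant, non_relevant)
print(new_query)
```

The refined vector is then used for the next retrieval round, and the loop repeats until the user stops giving feedback.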

[Figure: 1st iteration: display, user feedback to system, estimation and display selection; 2nd iteration: display, user feedback] 24

Now, we have many features (too many?) • How to express visual “similarity” with these features? 25

Visual Similarity? • “Similarity” is subjective and context-dependent. • “Similarity” is a high-level concept. – Cars, flowers, … • But our features are low-level features. – Semantic gap! 26

Which features are most important? • Not all features are always important. • The “similarity” measure is always changing. • The system has to weight features on the fly. How? 27
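
One heuristic answer to the "how", offered as an assumption rather than as the method the slides intend: a feature on which the user's relevant examples agree closely is probably important, so weight each feature by the inverse of its standard deviation across those examples. The `epsilon` term and the normalization to a sum of 1 are illustrative choices.

```python
import math

def reweight_features(relevant_vectors, epsilon=1e-6):
    """Weight each feature by 1 / (std + epsilon) over the relevant examples, normalized to sum to 1."""
    n = len(relevant_vectors)
    raw = []
    for column in zip(*relevant_vectors):
        mean = sum(column) / n
        std = math.sqrt(sum((v - mean) ** 2 for v in column) / n)
        raw.append(1.0 / (std + epsilon))
    total = sum(raw)
    return [w / total for w in raw]

def weighted_distance(a, b, weights):
    """Euclidean distance with a per-feature weight on each squared difference."""
    return math.sqrt(sum(w * (x - y) ** 2 for w, x, y in zip(weights, a, b)))

# The relevant images agree on feature 0 and disagree on feature 1.
relevant = [[0.9, 0.1],
            [0.9, 0.8],
            [0.9, 0.3]]

weights = reweight_features(relevant)
print(weights)
print(weighted_distance([0.9, 0.0], [0.9, 1.0], weights))
```

With these weights, a difference in feature 1 barely affects the distance, while a difference in feature 0 dominates it, so the similarity measure adapts to each feedback round.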

Q&A 28

- Slides: 28