Code Optimization, CSCI 370: Computer Architecture

Optimization Blockers
Memory aliasing makes optimization difficult because...
• A – there are only a limited number of registers
• B – not all processors support register renaming
• C – of side effects from global variables
• D – two memory references could refer to the same address
• E – no answer
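To make the aliasing question concrete, here is a minimal sketch in C. The function names `twiddle1` and `twiddle2` are illustrative and do not come from the slides. The two functions look equivalent, but they produce different results when both pointers refer to the same address, so a compiler that cannot rule out aliasing may not rewrite the first into the second.

```c
/* Illustrative names; not taken from the slides. */

/* Adds *yp to *xp twice: reads *yp twice and updates *xp twice. */
void twiddle1(long *xp, long *yp) {
    *xp += *yp;
    *xp += *yp;
}

/* Adds 2 * *yp to *xp with a single read of *yp and a single update of *xp. */
void twiddle2(long *xp, long *yp) {
    *xp += 2 * *yp;
}
```

If `xp` and `yp` point to different variables, both functions add twice `*yp` to `*xp`. If they point to the same variable holding `x`, `twiddle1` leaves `4 * x` while `twiddle2` leaves `3 * x`.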

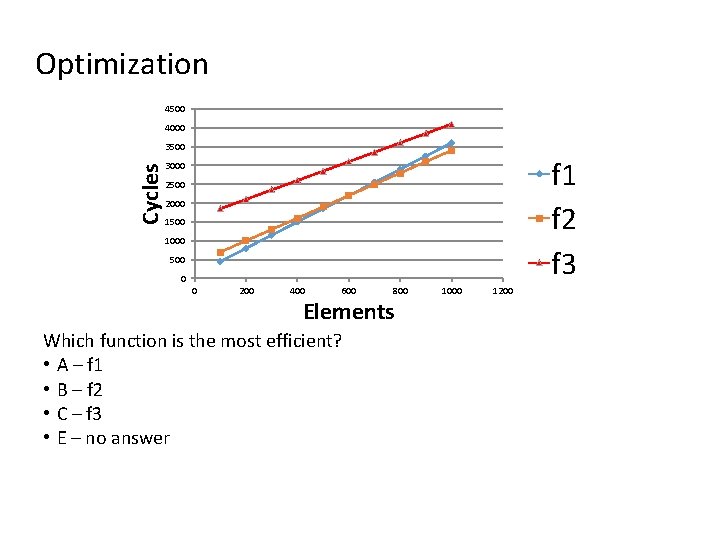

Optimization
[Plot: cycles (0 to 4500) versus number of elements (0 to 1200) for functions f1, f2, and f3]
Which function is the most efficient?
• A – f1
• B – f2
• C – f3
• E – no answer

Instruction Fetch
In modern processors (P6+), the Instruction Fetch stage involves specialized hardware that...
• A – Reorders instructions to prevent data dependencies
• B – Contains multiple functional units
• C – Converts register references into tags
• D – Detects mispredicted branches
• E – no answer

Instruction Execution
In modern processors (P6+), out-of-order processing means that...
• A – Instructions are re-ordered to prevent data dependencies
• B – The program counter changes depending on what functional units are available
• C – Instructions are flushed from the pipeline when they accidentally execute before they should have
• D – A retirement unit tracks ongoing processing and commits results to the register file in order
• E – no answer

Instruction Decode
In modern CISC processors, primitive operations (or micro-operations)...
• A – are legacy instructions that are no longer used
• B – allow for RISC-like pipelining
• C – only operate on 16 bits of data
• D – reduce the overhead of function calls
• E – no answer

Computation
Which of the following is an example of a serial computation?
• A – ((((((1 * x0) * x1) * x2) * x3) * x4) * x5)
• B – (((1 * (x0 * x1)) * (x2 * x3)) * (x4 * x5))
• C – x0 * x1 * x2 * x3 * x4 * x5
• D – ((((1 * x0) * x1) * x2) * (((1 * x3) * x4) * x5))
• E – no answer
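The difference between a serial chain of multiplications and a reassociated one can be sketched in C. The function names below are hypothetical. The first function forms one dependency chain, where every multiply waits for the previous one. The second keeps two independent accumulators, so an out-of-order core can overlap the two chains.

```c
#include <stddef.h>

/* Serial: each multiply depends on the result of the previous one. */
double product_serial(const double *x, size_t n) {
    double acc = 1.0;
    for (size_t i = 0; i < n; i++)
        acc = acc * x[i];
    return acc;
}

/* Two independent chains (even and odd elements), combined at the end. */
double product_two_chains(const double *x, size_t n) {
    double acc0 = 1.0, acc1 = 1.0;
    size_t i = 0;
    for (; i + 1 < n; i += 2) {
        acc0 *= x[i];
        acc1 *= x[i + 1];
    }
    for (; i < n; i++)      /* leftover element when n is odd */
        acc0 *= x[i];
    return acc0 * acc1;
}
```

Floating-point multiplication is not associative, so the two versions can differ in the last bits for general inputs. This is one reason a compiler will not make this change on its own unless a flag such as `-fassociative-math` permits it.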

Loop Unrolling
Which of the following is NOT an advantage of Loop Unrolling?
• A – Amortizes loop overhead across multiple iterations
• B – Enables additional out-of-order scheduling for non-dependent instructions
• C – Enables pipelining of load instructions
• D – Reduces the number of in-loop procedure calls
• E – no answer
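As a rough illustration of loop unrolling, the sketch below sums an array with and without 4x unrolling. The function names and the unroll factor are assumptions chosen for the example. The unrolled version performs one loop test and one index update per four elements, plus a cleanup loop for the remainder.

```c
#include <stddef.h>

/* Baseline: one compare, one increment, and one add per element. */
long sum_rolled(const int *a, size_t n) {
    long sum = 0;
    for (size_t i = 0; i < n; i++)
        sum += a[i];
    return sum;
}

/* Unrolled by 4: loop overhead is amortized over four elements. */
long sum_unrolled4(const int *a, size_t n) {
    long sum = 0;
    size_t i = 0;
    for (; i + 3 < n; i += 4) {
        sum += a[i];
        sum += a[i + 1];
        sum += a[i + 2];
        sum += a[i + 3];
    }
    for (; i < n; i++)      /* cleanup for the last n % 4 elements */
        sum += a[i];
    return sum;
}
```

Unrolling does not change how many times any function inside the loop body is called, which is why it does nothing for in-loop procedure calls.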

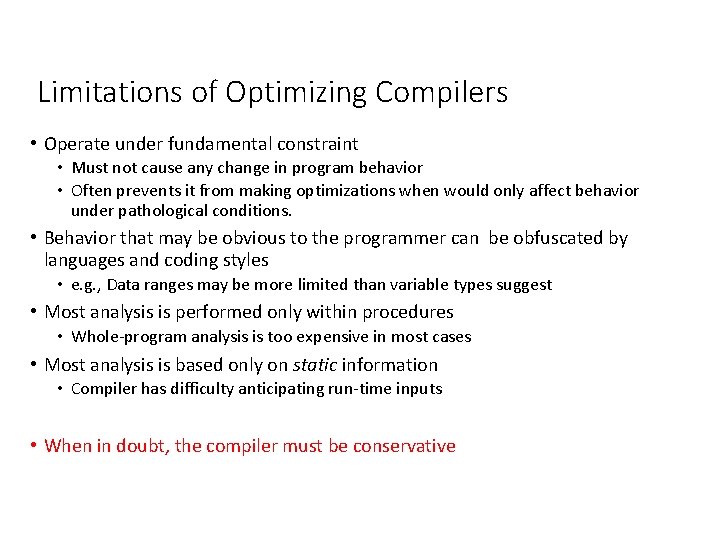

Limitations of Optimizing Compilers
• Operate under a fundamental constraint
  • Must not cause any change in program behavior
  • This often prevents optimizations that would only affect behavior under pathological conditions
• Behavior that may be obvious to the programmer can be obfuscated by languages and coding styles
  • e.g., data ranges may be more limited than variable types suggest
• Most analysis is performed only within procedures
  • Whole-program analysis is too expensive in most cases
• Most analysis is based only on static information
  • The compiler has difficulty anticipating run-time inputs
• When in doubt, the compiler must be conservative

Optimizing Compilers
• Free / Open Source
  • GCC (GNU Compiler Collection)
  • Clang (C front end of LLVM; UIUC, ~2000)
• Commercial
  • Intel Compiler
  • PGI Compiler
• Others?

History
• 1975 – The Design of an Optimizing Compiler
• 1980s – Programming in assembly declined
• 2000s – Compilers did a better job than experts with performance-sensitive code
• Now – RISC-like architectures and speculative execution are designed to be targeted by optimizing compilers

Static Code Analyses
• Alias Analysis
• Pointer Analysis
• Shape Analysis
• Escape Analysis
• Array Access Analysis
• Dependence Analysis
• Control Flow Analysis
• Data Flow Analysis

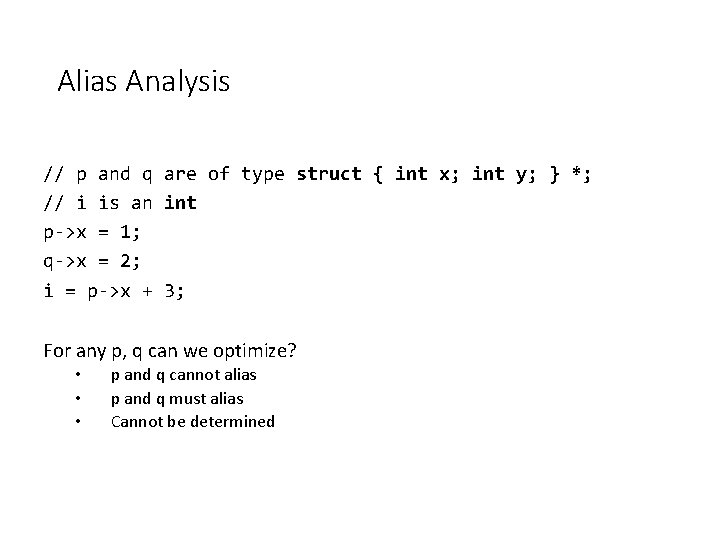

Alias Analysis
    // p and q are of type struct { int x; int y; } *
    // i is an int
    p->x = 1;
    q->x = 2;
    i = p->x + 3;
For any p, q can we optimize?
• p and q cannot alias
• p and q must alias
• Cannot be determined
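The three cases can be worked through with a small C sketch. The struct tag `pair` and both function names are hypothetical. If `p` and `q` cannot alias, the result is always 4 and the compiler may fold it to a constant. If they must alias, the result is always 5. If neither can be proven, the compiler has to reload `p->x` after the store through `q`.

```c
struct pair { int x; int y; };

/* No aliasing information: p->x must be reloaded after the store to q->x. */
int store_then_read(struct pair *p, struct pair *q) {
    p->x = 1;
    q->x = 2;
    return p->x + 3;
}

/* restrict is the programmer's promise that p and q do not alias,
 * so a compiler is allowed to return the constant 4 here.
 * Calling this with p == q is undefined behavior. */
int store_then_read_noalias(struct pair *restrict p, struct pair *restrict q) {
    p->x = 1;
    q->x = 2;
    return p->x + 3;
}
```

Calling `store_then_read(&a, &b)` with two distinct structs yields 4, while `store_then_read(&a, &a)` yields 5.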

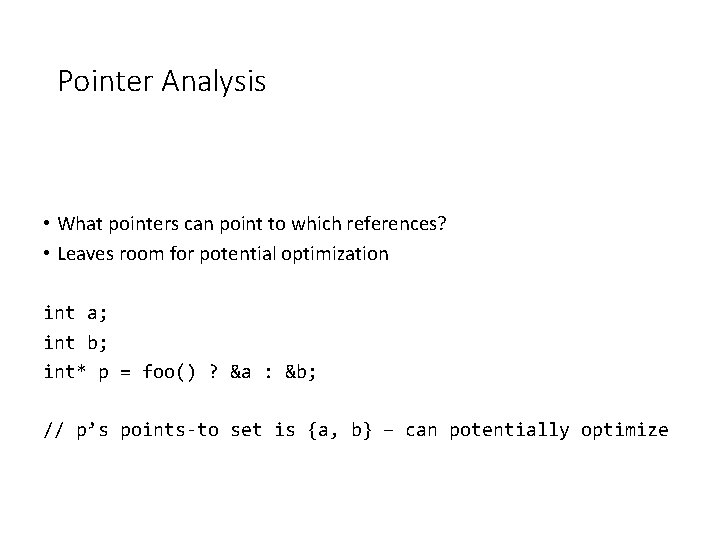

Pointer Analysis
• Which pointers can point to which variables or storage locations?
• Leaves room for potential optimization
    int a;
    int b;
    int *p = foo() ? &a : &b;
    // p's points-to set is {a, b}: can potentially optimize

Shape Analysis
• Analyzes linked structures at compile time (e.g., linked lists)
• Memory safety – detection of double-free, leaks
• Array out of bounds

Escape Analysis
• Does a pointer leave its scope (or "escape")?
• Optimizations
  • Heap-to-stack allocation
  • Remove synchronization for objects that are never shared
  • Break up an object into non-sequential parts (so the parts can be stored in registers)

Array Access Analysis
• Detect the access pattern of an array at compile time
• Why would we want to do this?
  • Autoparallelization
  • Autovectorization

Dependence Analysis
• Our common "data hazard dependence"
• Statement X must be executed before Statement Y, so don't reorder
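Array access and dependence analysis can be illustrated with two loops in C. The function names are hypothetical. In the first loop every iteration is independent, so it is a candidate for autovectorization or autoparallelization. In the second, each iteration reads the value written by the previous one, so the iterations cannot simply be reordered.

```c
#include <stddef.h>

/* Independent iterations: dst[i] depends only on src[i].
 * restrict states that dst and src do not overlap. */
void scale(double *restrict dst, const double *restrict src,
           size_t n, double k) {
    for (size_t i = 0; i < n; i++)
        dst[i] = k * src[i];
}

/* Loop-carried dependence: a[i] needs the updated a[i - 1]. */
void prefix_sum(int *a, size_t n) {
    for (size_t i = 1; i < n; i++)
        a[i] = a[i] + a[i - 1];
}
```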

Control Flow Analysis
• Analyze branching (conditional and unconditional) at compile time
• Determine if a branch is always taken
• Apply potential optimizations?
  • Dead code elimination
  • Dead store elimination
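A small sketch of what dead code and dead stores look like in C source. The macro `DEBUG_LEVEL` and the function `scaled` are made up for this example. With the macro fixed at 0, the branch is never taken, and the first assignment to `result` is overwritten before anything reads it.

```c
#define DEBUG_LEVEL 0   /* hypothetical compile-time setting */

int scaled(int x) {
    int result = x * 2;      /* dead store: overwritten before it is read */
    result = x * 3;
    if (DEBUG_LEVEL > 0) {   /* condition is always false: dead code */
        result += 1000;
    }
    return result;
}
```

After both eliminations, an optimizing compiler can reduce the body to the equivalent of `return x * 3;`.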

Data Flow Analysis
• Determine data value propagation
• Uses the control flow analysis to help with analysis

GCC – The GNU Compiler Collection
• The GNU Compiler Collection includes front ends for C, C++, Objective-C, Fortran, Java, Ada, and Go, as well as libraries
• GCC was originally written as the compiler for the GNU operating system
• The GNU system was developed to be 100% free software, free in the sense that it respects the user's freedom

GCC Optimizations
• Three main optimization levels:
  • -O1, -O2, -O3
• Additionally able to optimize for code size:
  • -Os
• Many individual optimizations
• What do you think is done by default?

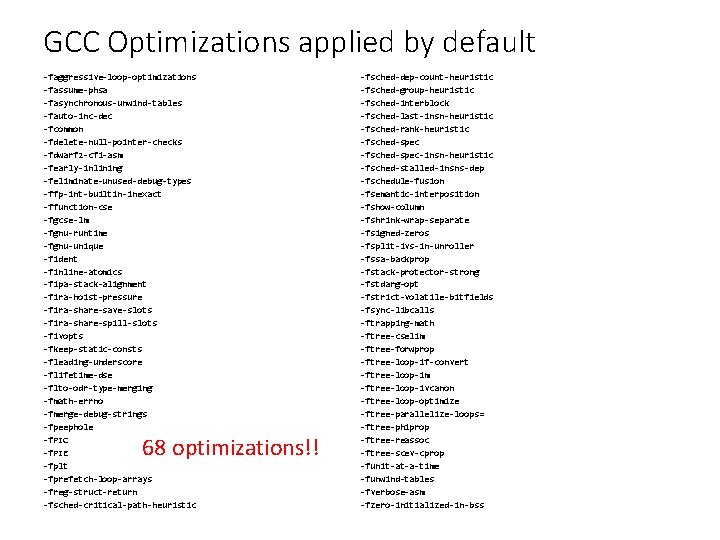

GCC Optimizations applied by default
-faggressive-loop-optimizations -fassume-phsa -fasynchronous-unwind-tables -fauto-inc-dec -fcommon -fdelete-null-pointer-checks -fdwarf2-cfi-asm -fearly-inlining -feliminate-unused-debug-types -ffp-int-builtin-inexact -ffunction-cse -fgcse-lm -fgnu-runtime -fgnu-unique -fident -finline-atomics -fipa-stack-alignment -fira-hoist-pressure -fira-share-save-slots -fira-share-spill-slots -fivopts -fkeep-static-consts -fleading-underscore -flifetime-dse -flto-odr-type-merging -fmath-errno -fmerge-debug-strings -fpeephole -fPIC -fPIE -fplt -fprefetch-loop-arrays -freg-struct-return -fsched-critical-path-heuristic -fsched-dep-count-heuristic -fsched-group-heuristic -fsched-interblock -fsched-last-insn-heuristic -fsched-rank-heuristic -fsched-spec-insn-heuristic -fsched-stalled-insns-dep -fschedule-fusion -fsemantic-interposition -fshow-column -fshrink-wrap-separate -fsigned-zeros -fsplit-ivs-in-unroller -fssa-backprop -fstack-protector-strong -fstdarg-opt -fstrict-volatile-bitfields -fsync-libcalls -ftrapping-math -ftree-cselim -ftree-forwprop -ftree-loop-if-convert -ftree-loop-im -ftree-loop-ivcanon -ftree-loop-optimize -ftree-parallelize-loops= -ftree-phiprop -ftree-reassoc -ftree-scev-cprop -funit-at-a-time -funwind-tables -fverbose-asm -fzero-initialized-in-bss
68 optimizations!!

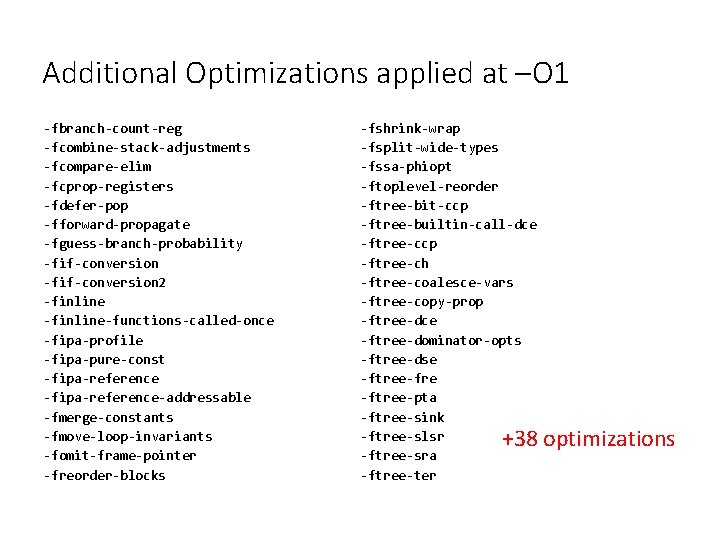

Additional Optimizations applied at -O1
-fbranch-count-reg -fcombine-stack-adjustments -fcompare-elim -fcprop-registers -fdefer-pop -fforward-propagate -fguess-branch-probability -fif-conversion2 -finline-functions-called-once -fipa-profile -fipa-pure-const -fipa-reference-addressable -fmerge-constants -fmove-loop-invariants -fomit-frame-pointer -freorder-blocks -fshrink-wrap -fsplit-wide-types -fssa-phiopt -ftoplevel-reorder -ftree-bit-ccp -ftree-builtin-call-dce -ftree-ccp -ftree-ch -ftree-coalesce-vars -ftree-copy-prop -ftree-dce -ftree-dominator-opts -ftree-dse -ftree-fre -ftree-pta -ftree-sink -ftree-slsr -ftree-sra -ftree-ter
+38 optimizations

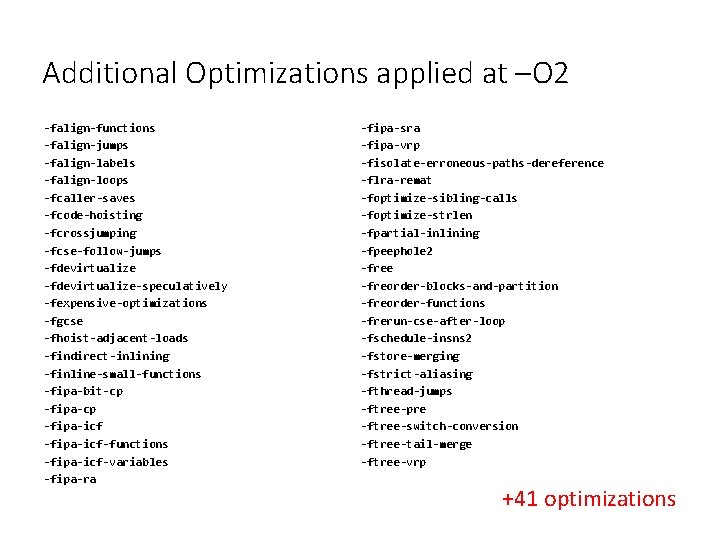

Additional Optimizations applied at -O2
-falign-functions -falign-jumps -falign-labels -falign-loops -fcaller-saves -fcode-hoisting -fcrossjumping -fcse-follow-jumps -fdevirtualize-speculatively -fexpensive-optimizations -fgcse -fhoist-adjacent-loads -findirect-inlining -finline-small-functions -fipa-bit-cp -fipa-icf-functions -fipa-icf-variables -fipa-ra -fipa-sra -fipa-vrp -fisolate-erroneous-paths-dereference -flra-remat -foptimize-sibling-calls -foptimize-strlen -fpartial-inlining -fpeephole2 -free -freorder-blocks-and-partition -freorder-functions -frerun-cse-after-loop -fschedule-insns2 -fstore-merging -fstrict-aliasing -fthread-jumps -ftree-pre -ftree-switch-conversion -ftree-tail-merge -ftree-vrp
+41 optimizations

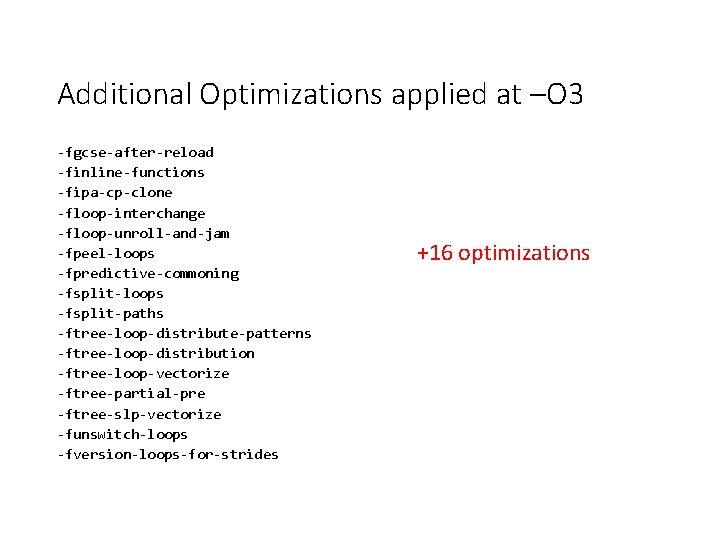

Additional Optimizations applied at -O3
-fgcse-after-reload -finline-functions -fipa-cp-clone -floop-interchange -floop-unroll-and-jam -fpeel-loops -fpredictive-commoning -fsplit-loops -fsplit-paths -ftree-loop-distribute-patterns -ftree-loop-distribution -ftree-loop-vectorize -ftree-partial-pre -ftree-slp-vectorize -funswitch-loops -fversion-loops-for-strides
+16 optimizations

But can we optimize further?

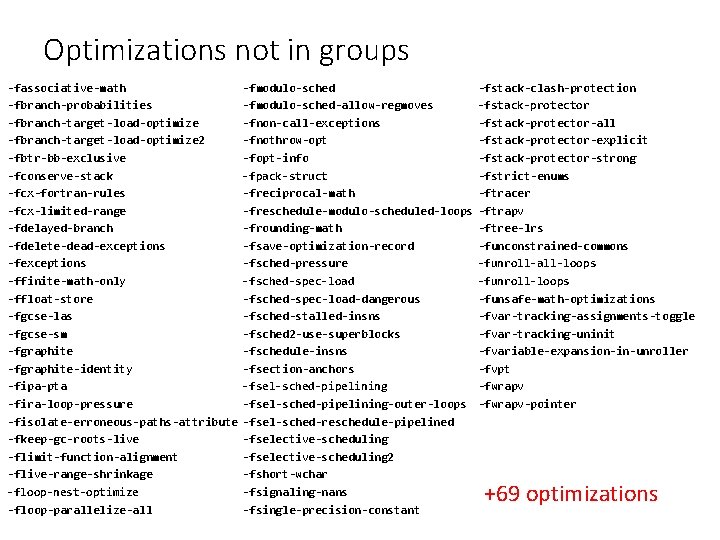

Optimizations not in groups
-fassociative-math -fbranch-probabilities -fbranch-target-load-optimize2 -fbtr-bb-exclusive -fconserve-stack -fcx-fortran-rules -fcx-limited-range -fdelayed-branch -fdelete-dead-exceptions -ffinite-math-only -ffloat-store -fgcse-las -fgcse-sm -fgraphite-identity -fipa-pta -fira-loop-pressure -fisolate-erroneous-paths-attribute -fkeep-gc-roots-live -flimit-function-alignment -flive-range-shrinkage -floop-nest-optimize -floop-parallelize-all -fmodulo-sched-allow-regmoves -fnon-call-exceptions -fnothrow-opt -fopt-info -fpack-struct -freciprocal-math -freschedule-modulo-scheduled-loops -frounding-math -fsave-optimization-record -fsched-pressure -fsched-spec-load-dangerous -fsched-stalled-insns -fsched2-use-superblocks -fschedule-insns -fsection-anchors -fsel-sched-pipelining-outer-loops -fsel-sched-reschedule-pipelined -fselective-scheduling2 -fshort-wchar -fsignaling-nans -fsingle-precision-constant -fstack-clash-protection -fstack-protector-all -fstack-protector-explicit -fstack-protector-strong -fstrict-enums -ftracer -ftrapv -ftree-lrs -funconstrained-commons -funroll-all-loops -funroll-loops -funsafe-math-optimizations -fvar-tracking-assignments-toggle -fvar-tracking-uninit -fvariable-expansion-in-unroller -fvpt -fwrapv-pointer
+69 optimizations

For those not keeping track
• 68 optimizations applied without specifying!
• 106 (38 new) applied at -O1
• 147 (41 new) applied at -O2
• 163 (16 new) applied at -O3
• 69 additional optimizations not specified

Common “High Performance” Opts
• Loop unrolling
• Loop interchange
• Reordering
• Autoparallelization
• Autovectorization
• Dead code elimination
• Dead store elimination
• Alignment improvements

The other thing about GCC
• New features are always being added
• Existing optimizations are improved
• You can try it out now (with patience)

Optimization Targets
• We can use the optimizations listed previously
• But... what if the optimizations
  • Don't consider my functional units
  • Don't consider my load-store units
  • Don't consider my vector size (SIMD)

Tuning for your Architecture
• -march=<arch>
  • Generate code for the specified architecture
  • Will (most likely) not run on older architectures
  • Uses the architecture's ISA; considers execution ports, cache, etc.
• -mtune=<arch>
  • Tune code for the specified architecture
  • Can still run on older architectures, but is "optimized" for the target
  • Will not use the updated ISA; considers only the architecture's features
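One way to observe the effect of `-march` is through the macros that GCC and Clang predefine for enabled instruction-set extensions. The sketch below is only an illustration: the function name `simd_level` is hypothetical, and the set of macros checked is a small sample rather than a complete list.

```c
/* Reports the widest SIMD extension the compiler was allowed to use.
 * Compile with, for example:
 *   gcc -O2 -march=native probe.c    (ISA extensions of the build machine)
 *   gcc -O2 -mtune=native probe.c    (tuning only; baseline ISA is kept)
 * The file name probe.c is a placeholder. */
const char *simd_level(void) {
#if defined(__AVX512F__)
    return "AVX-512F";
#elif defined(__AVX2__)
    return "AVX2";
#elif defined(__AVX__)
    return "AVX";
#elif defined(__SSE2__)
    return "SSE2";
#elif defined(__ARM_NEON)
    return "NEON";
#else
    return "none detected";
#endif
}
```

Because `-mtune` alone does not change which instructions are legal, the result should stay at the baseline for the target, while `-march` can raise it.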

Dynamic Optimization
• Normal workflow:
  1. Source code
  2. Compiler frontend will generate AST
  3. Optimizations applied to AST
  4. Assembly generated from AST
• AST – tree-based representation of your program

Dynamic Optimization
• Enhanced workflow:
  1. Source code
  2. Compiler frontend generates AST
  3. AST is converted to an Intermediate Language (IL)
  4. Optimizations can be applied to IL
  5. IL can eventually be converted to assembly
• What are some advantages of this?

Introducing LLVM
• Low-level virtual machine
• Developed in 2000 at University of Illinois at Urbana-Champaign
• Currently supported mostly by Apple (creator works there, developed Swift)
• Supports a language-independent instruction set and type system
• Has the ability to accept the intermediate form from GCC
• Has its own compiler chains for various source languages such as C/C++
• Can perform both static and dynamic optimization
• Performance is competitive with GCC

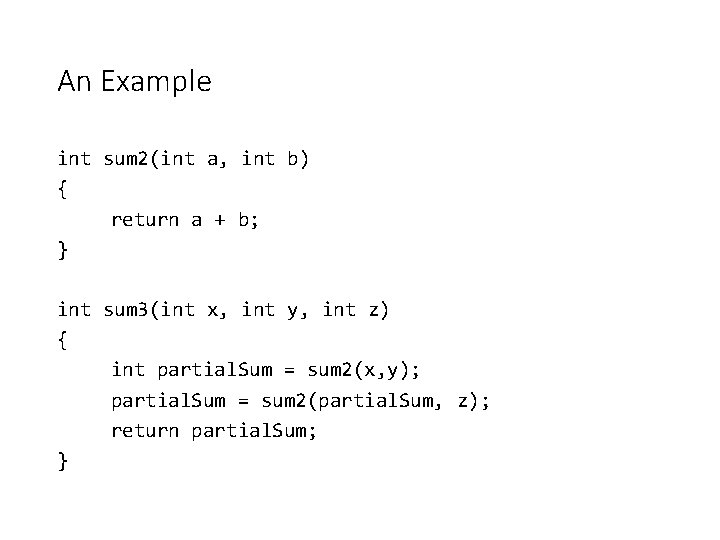

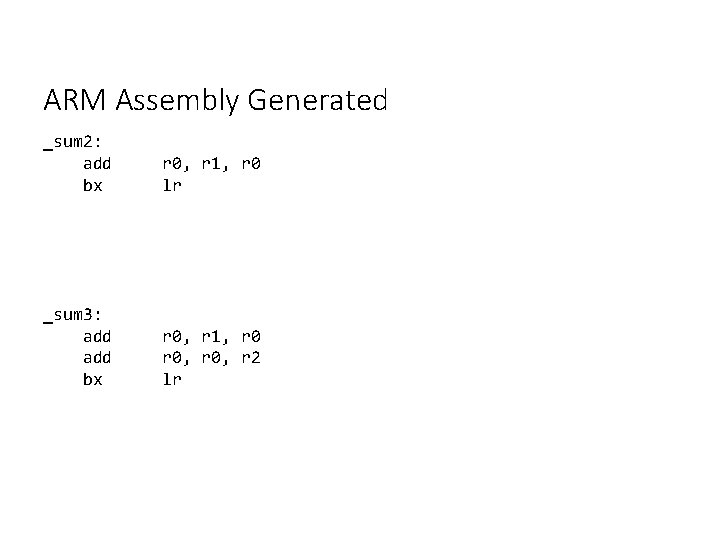

An Example
    int sum2(int a, int b) {
        return a + b;
    }

    int sum3(int x, int y, int z) {
        int partialSum = sum2(x, y);
        partialSum = sum2(partialSum, z);
        return partialSum;
    }

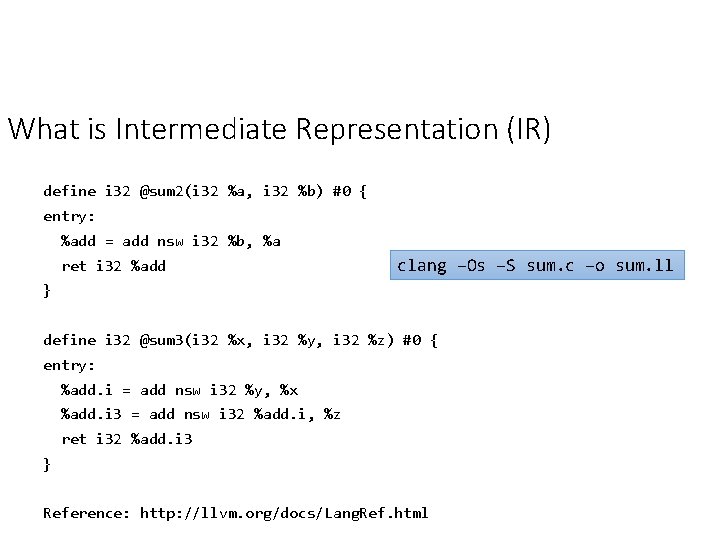

What is Intermediate Representation (IR)?
    clang -Os -S -emit-llvm sum.c -o sum.ll

    define i32 @sum2(i32 %a, i32 %b) #0 {
    entry:
      %add = add nsw i32 %b, %a
      ret i32 %add
    }

    define i32 @sum3(i32 %x, i32 %y, i32 %z) #0 {
    entry:
      %add.i = add nsw i32 %y, %x
      %add.i3 = add nsw i32 %add.i, %z
      ret i32 %add.i3
    }
Reference: http://llvm.org/docs/LangRef.html

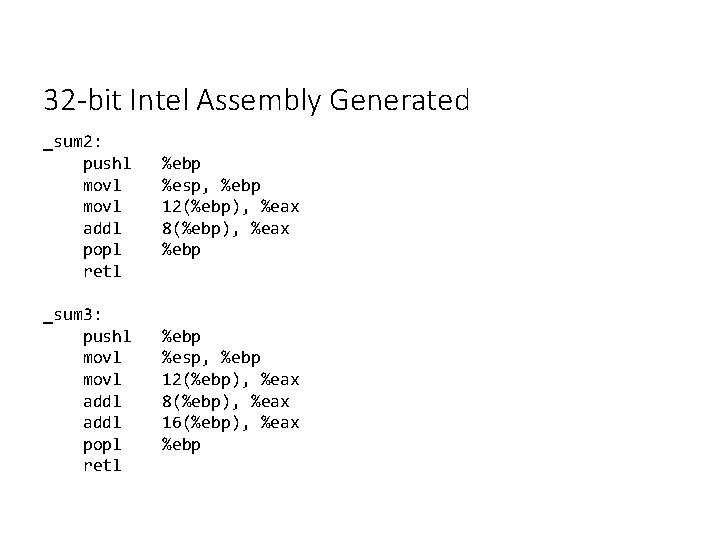

32-bit Intel Assembly Generated
    _sum2:
        pushl   %ebp
        movl    %esp, %ebp
        movl    12(%ebp), %eax
        addl    8(%ebp), %eax
        popl    %ebp
        retl

    _sum3:
        pushl   %ebp
        movl    %esp, %ebp
        movl    12(%ebp), %eax
        addl    8(%ebp), %eax
        addl    16(%ebp), %eax
        popl    %ebp
        retl

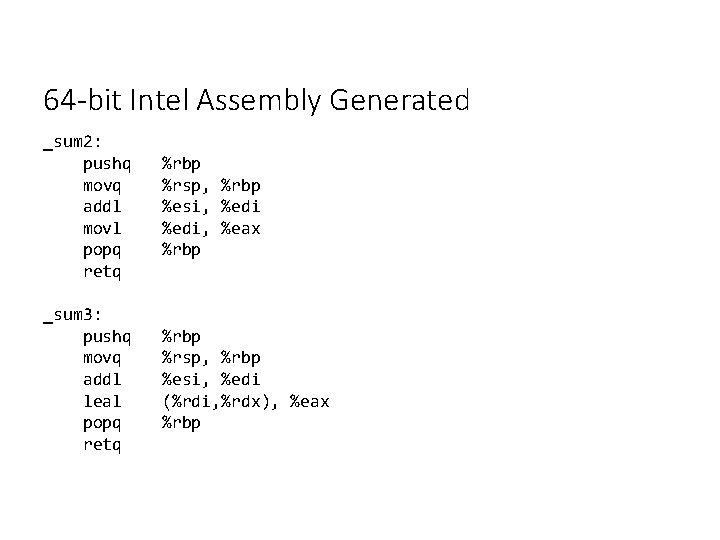

64-bit Intel Assembly Generated
    _sum2:
        pushq   %rbp
        movq    %rsp, %rbp
        addl    %esi, %edi
        movl    %edi, %eax
        popq    %rbp
        retq

    _sum3:
        pushq   %rbp
        movq    %rsp, %rbp
        addl    %esi, %edi
        leal    (%rdi, %rdx), %eax
        popq    %rbp
        retq

ARM Assembly Generated
    _sum2:
        add     r0, r1, r0
        bx      lr

    _sum3:
        add     r0, r1, r0
        add     r0, r0, r2
        bx      lr

Profile-Guided Optimization
• Can we use execution information to drive what we optimize?
• Yes – we do this already!

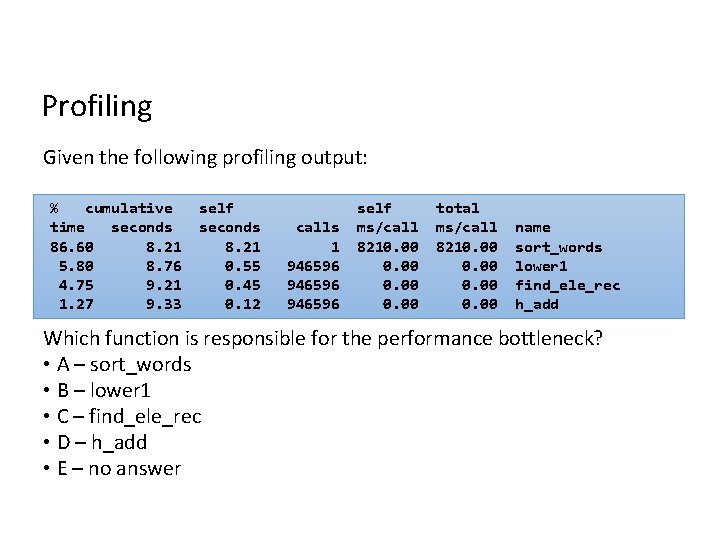

Profiling
Given the following profiling output:

| % time | cumulative seconds | self seconds | calls | self ms/call | total ms/call | name |
|---|---|---|---|---|---|---|
| 86.60 | 8.21 | 8.21 | 1 | 8210.00 | 8210.00 | sort_words |
| 5.80 | 8.76 | 0.55 | 946596 | | | lower1 |
| 4.75 | 9.21 | 0.45 | | | | find_ele_rec |
| 1.27 | 9.33 | 0.12 | | | | h_add |

Which function is responsible for the performance bottleneck?
• A – sort_words
• B – lower1
• C – find_ele_rec
• D – h_add
• E – no answer

Profile-Guided Optimization
1. Run / Profile Program
2. Find performance bottlenecks
3. Tune “bottlenecked” code
4. Repeat
• Some compilers do this! (PGI, Intel, LLVM)
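The "tune" step can be sketched with a routine like `lower1` from the profile. The slides do not show its body, so the version below is an assumption modeled on the classic textbook example of a string-lowercasing loop. The tuned variant `lower2` is likewise a hypothetical name. The first version calls `strlen` on every iteration, giving quadratic running time in the string length. The second hoists the call out of the loop by hand.

```c
#include <stddef.h>
#include <string.h>

/* Assumed shape of lower1: strlen is re-evaluated on every iteration. */
void lower1(char *s) {
    for (size_t i = 0; i < strlen(s); i++)
        if (s[i] >= 'A' && s[i] <= 'Z')
            s[i] -= ('A' - 'a');
}

/* Tuned version: the length is computed once before the loop. */
void lower2(char *s) {
    size_t len = strlen(s);
    for (size_t i = 0; i < len; i++)
        if (s[i] >= 'A' && s[i] <= 'Z')
            s[i] -= ('A' - 'a');
}
```

A compiler generally cannot make this change on its own, because the loop writes to `s` and it would have to prove that those writes never change the string's length.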

LLVM-MCA (Machine Code Analyzer)
• A performance analysis tool
• Currently works for processors with an out-of-order backend, for which there is a scheduling model available in LLVM
• The main goal of this tool is not just to predict the performance of the code when run on the target, but also to help with diagnosing potential performance issues
Demo: https://godbolt.org/z/oBy7iy
