Code Optimization II: Machine-Dependent Optimizations

- Slides: 50

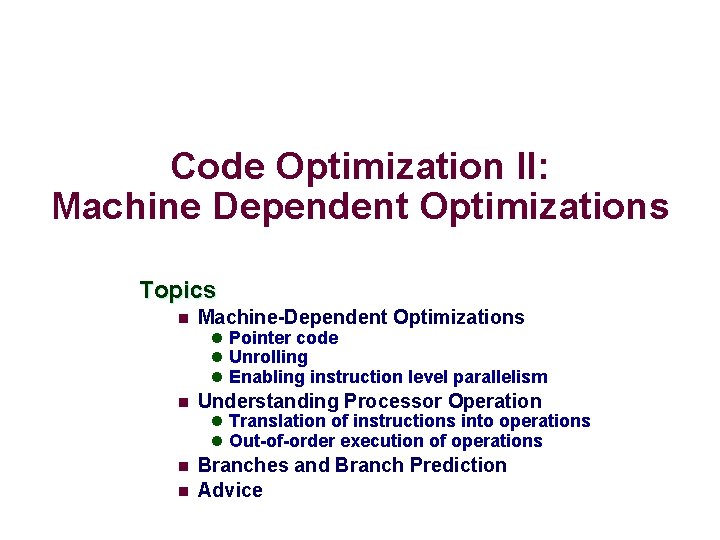

Topics

- Machine-Dependent Optimizations
  - Pointer code
  - Unrolling
  - Enabling instruction-level parallelism
- Understanding Processor Operation
  - Translation of instructions into operations
  - Out-of-order execution of operations
- Branches and Branch Prediction
- Advice

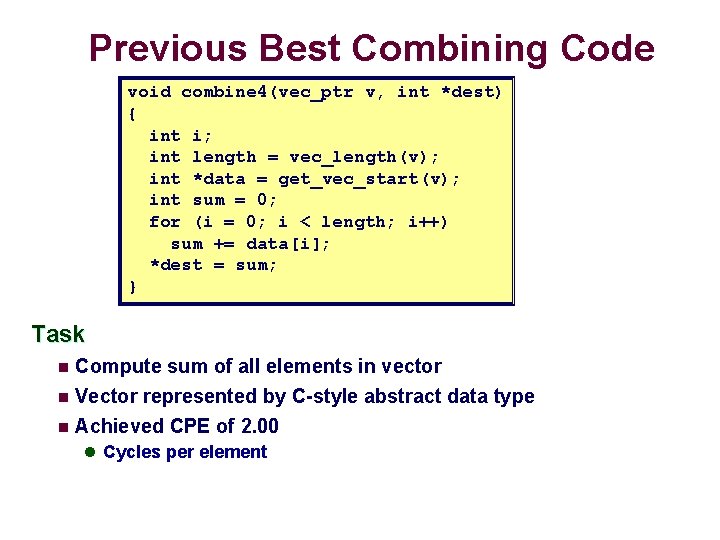

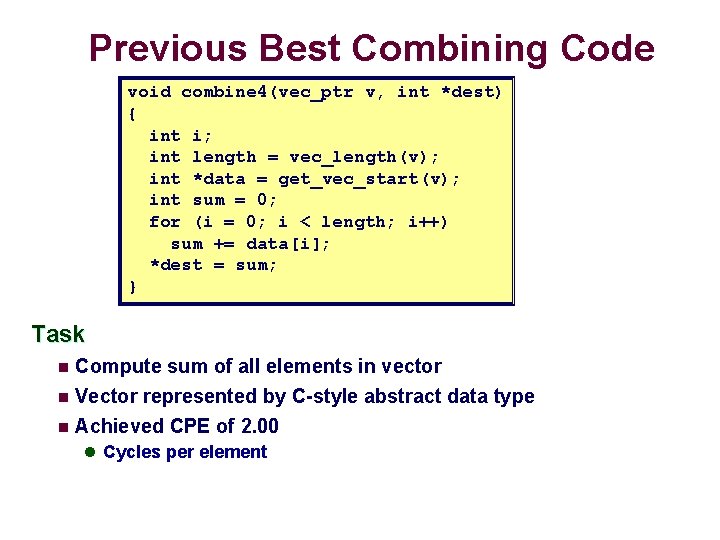

Previous Best Combining Code

    void combine4(vec_ptr v, int *dest)
    {
        int i;
        int length = vec_length(v);
        int *data = get_vec_start(v);
        int sum = 0;
        for (i = 0; i < length; i++)
            sum += data[i];
        *dest = sum;
    }

Task

- Compute the sum of all elements in a vector
- Vector represented by a C-style abstract data type
- Achieved a CPE of 2.00
  - CPE = cycles per element
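The slide calls `vec_length` and `get_vec_start` without showing how the vector type is defined. The sketch below assumes a minimal layout (a length plus a pointer to the elements) so that `combine4` can be compiled and run on its own. The struct name `vec_rec` and its fields are hypothetical, not taken from the slides.

```c
/* Hypothetical vector ADT. The slides use vec_ptr, vec_length and
   get_vec_start but do not show their definitions; this layout is an
   assumption made only so the example is self-contained. */
typedef struct {
    int len;    /* number of elements */
    int *data;  /* pointer to the first element */
} vec_rec, *vec_ptr;

static int vec_length(vec_ptr v) { return v->len; }
static int *get_vec_start(vec_ptr v) { return v->data; }

/* combine4 as on the slide: length and data pointer are fetched once,
   and the sum is accumulated in a local before the single store. */
void combine4(vec_ptr v, int *dest)
{
    int i;
    int length = vec_length(v);
    int *data = get_vec_start(v);
    int sum = 0;
    for (i = 0; i < length; i++)
        sum += data[i];
    *dest = sum;
}
```

A caller would wrap an existing array in a `vec_rec` and pass its address together with a destination for the sum.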

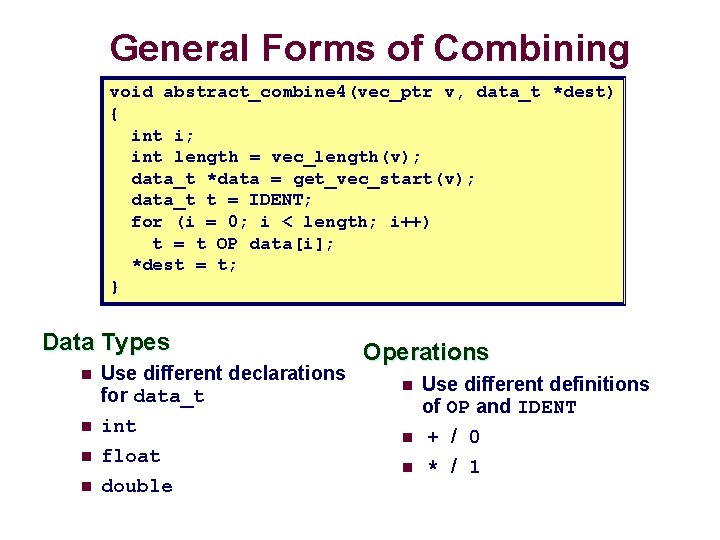

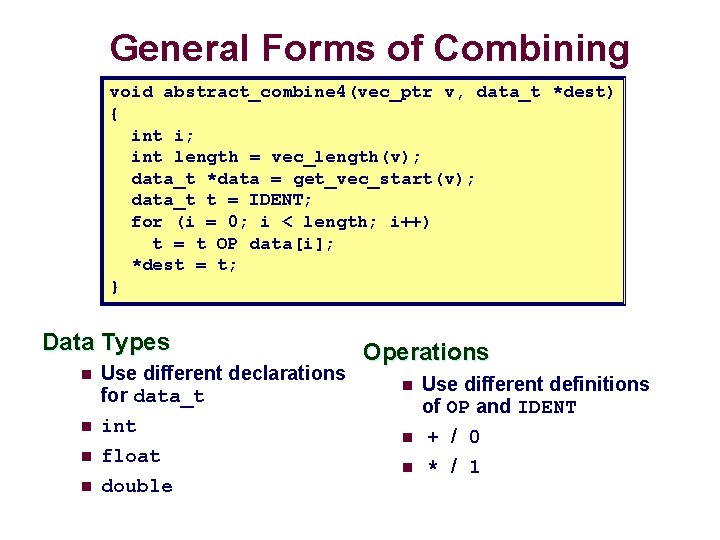

General Forms of Combining

    void abstract_combine4(vec_ptr v, data_t *dest)
    {
        int i;
        int length = vec_length(v);
        data_t *data = get_vec_start(v);
        data_t t = IDENT;
        for (i = 0; i < length; i++)
            t = t OP data[i];
        *dest = t;
    }

Data Types

- Use different declarations for data_t
- int
- float
- double

Operations

- Use different definitions of OP and IDENT
- + / 0
- * / 1
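One plausible way to supply `data_t`, `OP`, and `IDENT` is a typedef plus two macros chosen at compile time. The sketch below fixes them to an integer product and works on a plain array instead of `vec_ptr`. The function name `abstract_combine4_array` is hypothetical.

```c
#include <stddef.h>

/* Assumption: one data type and one operation are selected per build.
   Switching to a sum would mean IDENT 0 and OP +. */
typedef int data_t;
#define IDENT 1
#define OP *

/* Hypothetical array-based stand-in for abstract_combine4. */
data_t abstract_combine4_array(const data_t *data, size_t length)
{
    data_t t = IDENT;              /* identity element of OP */
    for (size_t i = 0; i < length; i++)
        t = t OP data[i];          /* expands to t = t * data[i] */
    return t;
}
```

Starting from `IDENT` means an empty vector yields the identity element (1 for product, 0 for sum).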

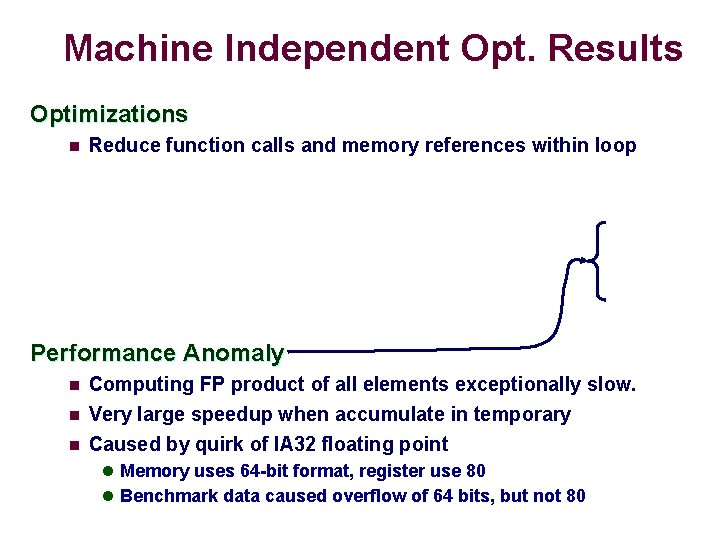

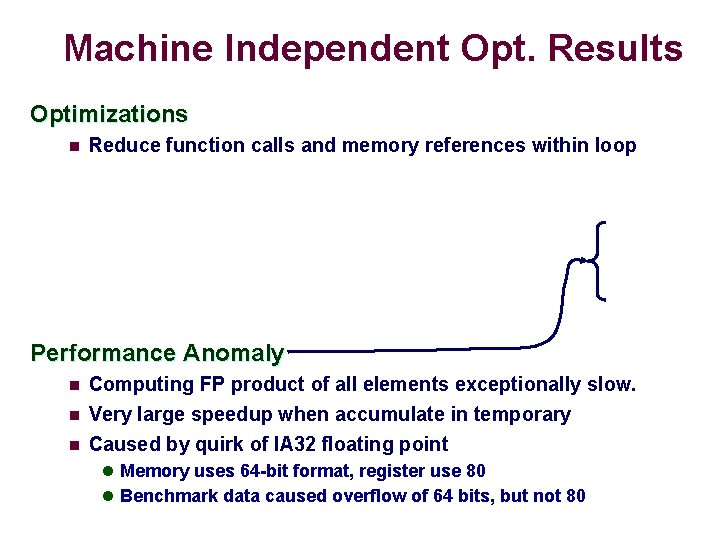

Machine Independent Opt. Results

Optimizations

- Reduce function calls and memory references within the loop

Performance Anomaly

- Computing the FP product of all elements is exceptionally slow
- Very large speedup when accumulating in a temporary
- Caused by a quirk of IA32 floating point
  - Memory uses a 64-bit format, registers use an 80-bit format
  - Benchmark data caused overflow of 64 bits, but not 80

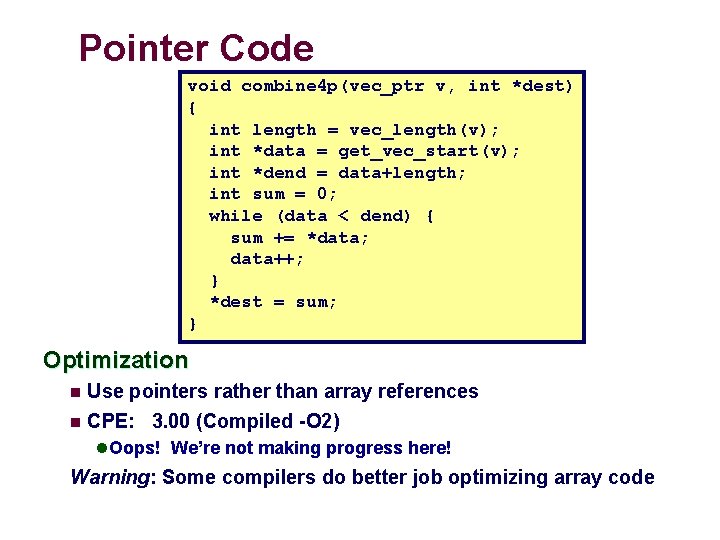

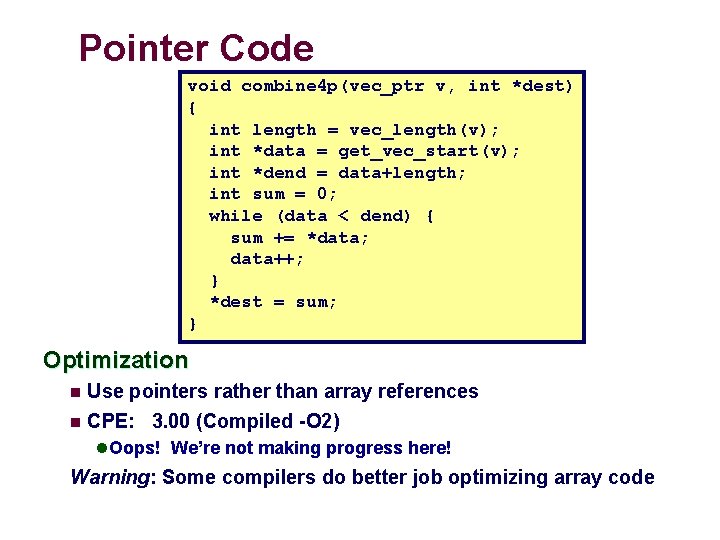

Pointer Code

    void combine4p(vec_ptr v, int *dest)
    {
        int length = vec_length(v);
        int *data = get_vec_start(v);
        int *dend = data + length;
        int sum = 0;
        while (data < dend) {
            sum += *data;
            data++;
        }
        *dest = sum;
    }

Optimization

- Use pointers rather than array references
- CPE: 3.00 (compiled with -O2)
  - Oops! We're not making progress here!

Warning: Some compilers do a better job optimizing array code

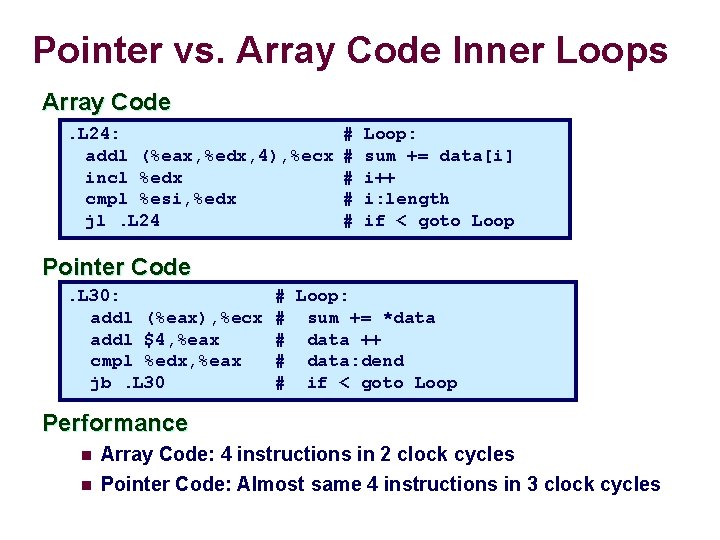

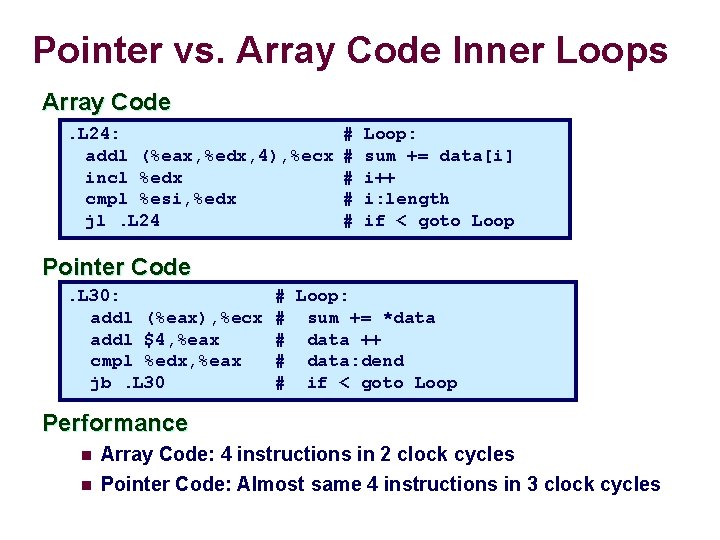

Pointer vs. Array Code Inner Loops

Array Code

    .L24:                            # Loop:
        addl (%eax,%edx,4),%ecx      #   sum += data[i]
        incl %edx                    #   i++
        cmpl %esi,%edx               #   i:length
        jl .L24                      #   if < goto Loop

Pointer Code

    .L30:                            # Loop:
        addl (%eax),%ecx             #   sum += *data
        addl $4,%eax                 #   data++
        cmpl %edx,%eax               #   data:dend
        jb .L30                      #   if < goto Loop

Performance

- Array Code: 4 instructions in 2 clock cycles
- Pointer Code: Almost the same 4 instructions in 3 clock cycles

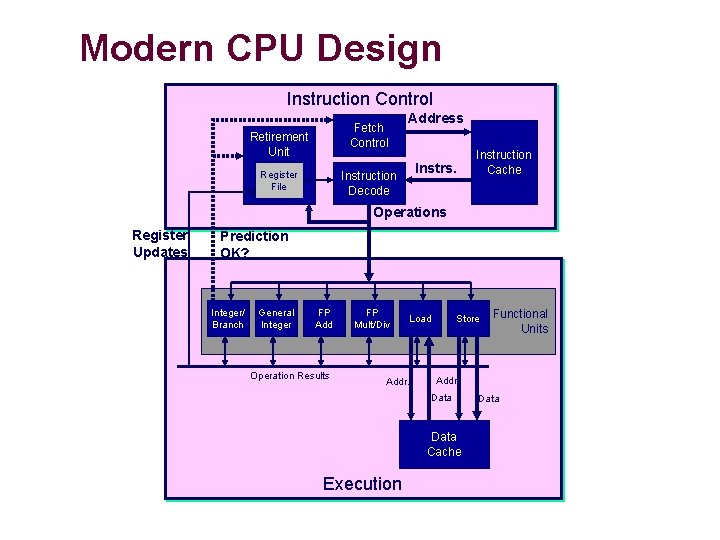

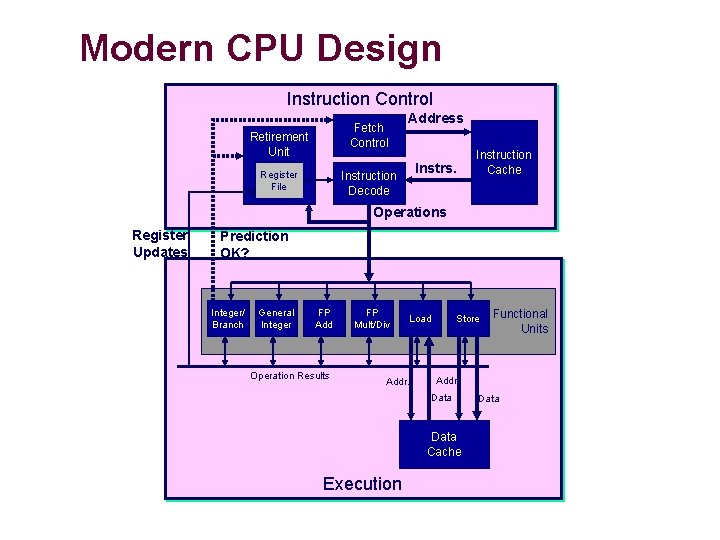

Modern CPU Design

(Block diagram. Instruction Control: fetch control, instruction cache, instruction decode, retirement unit, register file. Execution: functional units for integer/branch, general integer, FP add, FP mult/div, load, and store, plus the data cache. Instruction Control sends operations to Execution; operation results, register updates, and the "Prediction OK?" signal flow back.)

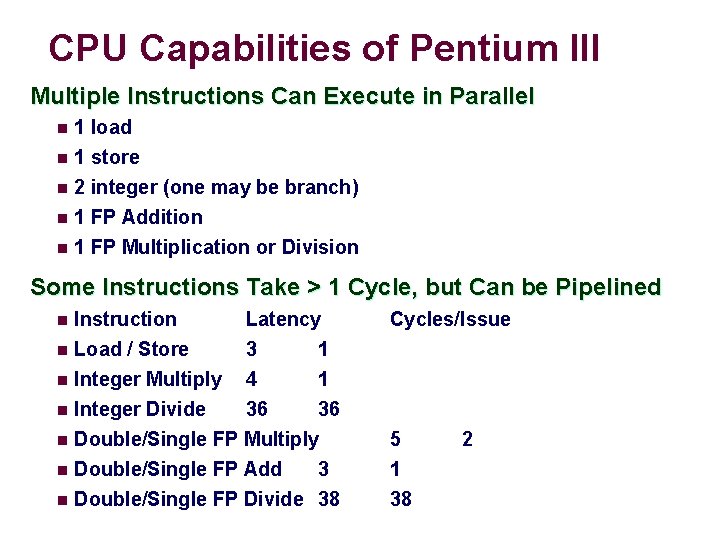

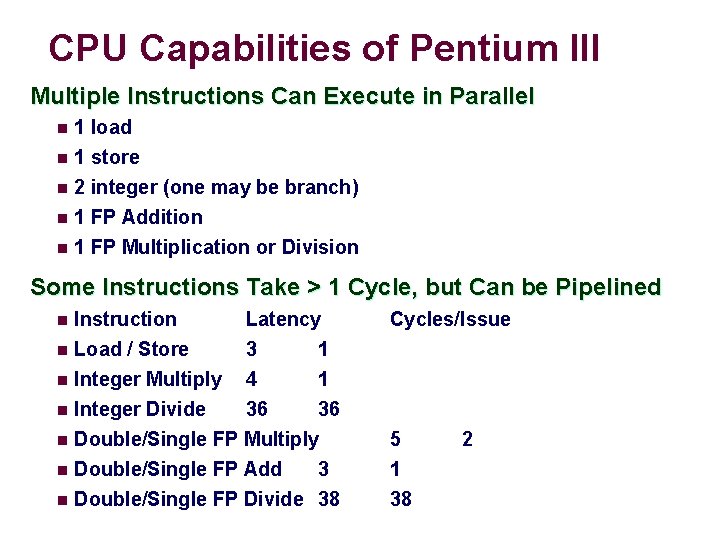

CPU Capabilities of Pentium III

Multiple Instructions Can Execute in Parallel

- 1 load
- 1 store
- 2 integer (one may be branch)
- 1 FP Addition
- 1 FP Multiplication or Division

Some Instructions Take > 1 Cycle, but Can be Pipelined

| Instruction | Latency | Cycles/Issue |
|---|---|---|
| Load / Store | 3 | 1 |
| Integer Multiply | 4 | 1 |
| Integer Divide | 36 | 36 |
| Double/Single FP Multiply | 5 | 2 |
| Double/Single FP Add | 3 | 1 |
| Double/Single FP Divide | 38 | 38 |
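To see why the latency and issue columns both matter, the sketch below compares a chain of dependent operations with a stream of independent ones on a single functional unit. It is an idealized model under stated assumptions (no other resource limits, no stalls). The type `op_timing` and both function names are hypothetical.

```c
/* Latency and issue time, in cycles, for one kind of operation. */
typedef struct {
    int latency;  /* cycles from start to result */
    int issue;    /* minimum cycles between starting two operations */
} op_timing;

/* n operations where each needs the previous result:
   nothing overlaps, so the cost is n full latencies. */
long dependent_cycles(op_timing t, long n)
{
    return n * (long)t.latency;
}

/* n independent operations on one pipelined unit: a new operation
   starts every `issue` cycles and the last one still needs `latency`
   cycles to finish. */
long pipelined_cycles(op_timing t, long n)
{
    if (n <= 0)
        return 0;
    return (long)t.latency + (n - 1) * (long)t.issue;
}
```

With the integer multiply figures from the table (latency 4, issue 1), 100 dependent multiplies cost 400 cycles in this model, while 100 independent ones cost 103. Integer divide (36/36) gains nothing from independence because it is not pipelined.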

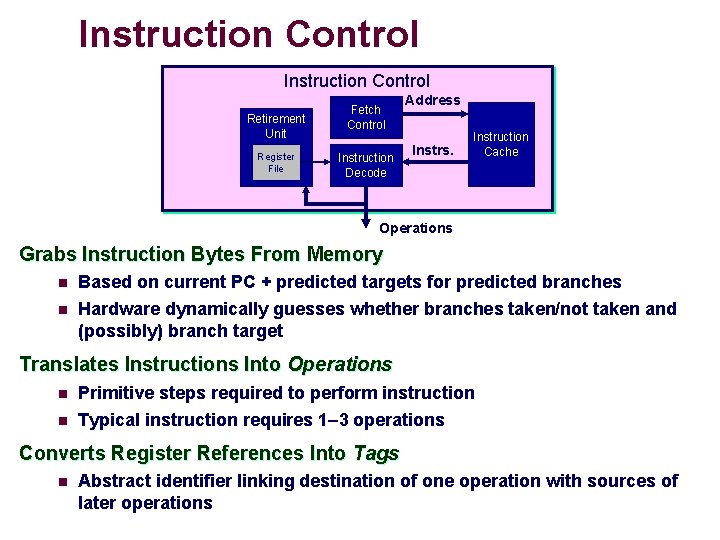

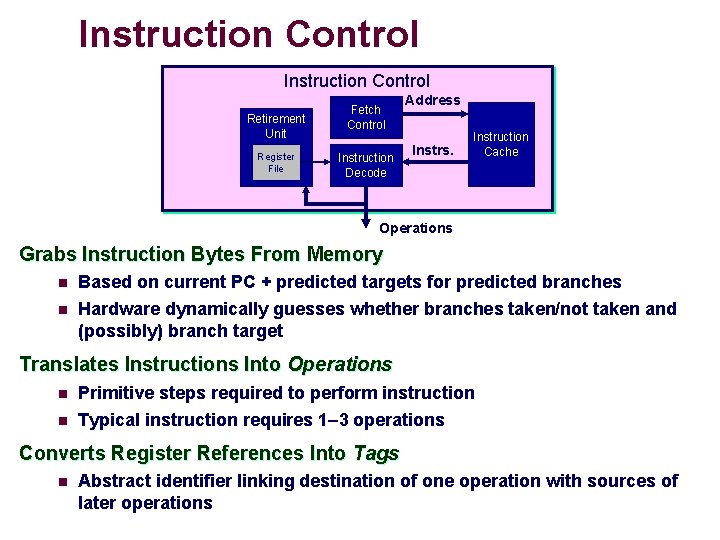

Instruction Control

(Diagram: fetch control, instruction cache, instruction decode, retirement unit, register file; addresses go to the instruction cache, instructions go to decode, operations leave the unit.)

Grabs Instruction Bytes From Memory

- Based on current PC + predicted targets for predicted branches
- Hardware dynamically guesses whether branches are taken/not taken and (possibly) the branch target

Translates Instructions Into Operations

- Primitive steps required to perform an instruction
- Typical instruction requires 1-3 operations

Converts Register References Into Tags

- Abstract identifier linking the destination of one operation with the sources of later operations

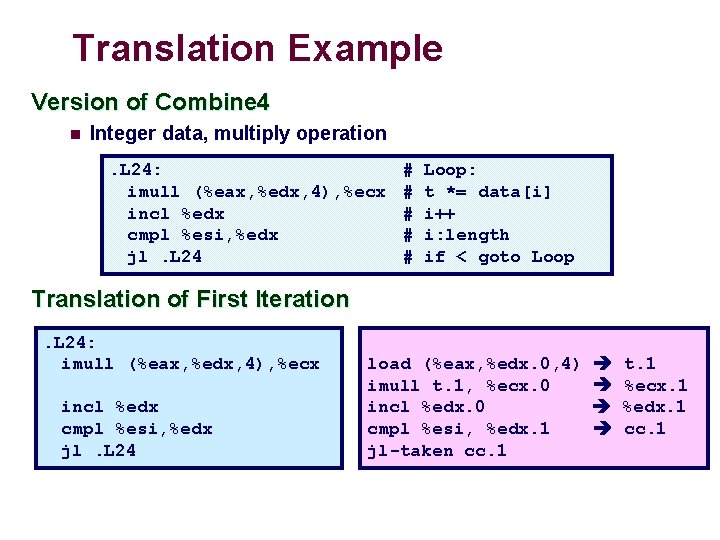

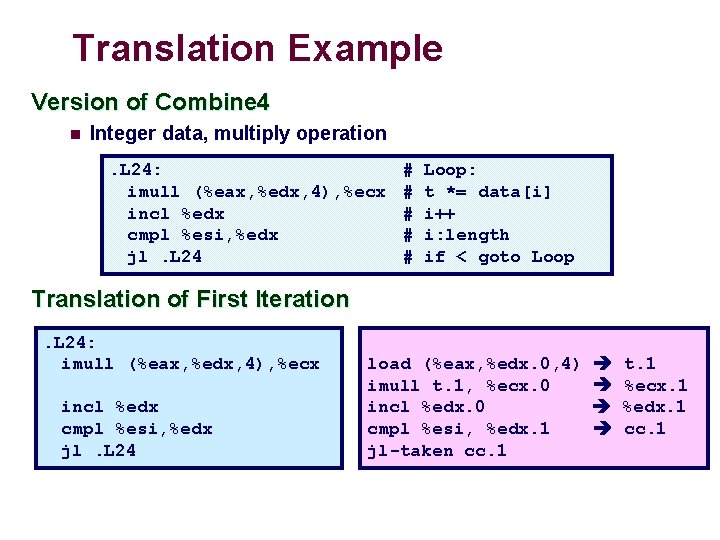

Translation Example

Version of Combine4

- Integer data, multiply operation

    .L24:                            # Loop:
        imull (%eax,%edx,4),%ecx     #   t *= data[i]
        incl %edx                    #   i++
        cmpl %esi,%edx               #   i:length
        jl .L24                      #   if < goto Loop

Translation of First Iteration

    load (%eax,%edx.0,4)   ->  t.1
    imull t.1, %ecx.0      ->  %ecx.1
    incl %edx.0            ->  %edx.1
    cmpl %esi, %edx.1      ->  cc.1
    jl-taken cc.1

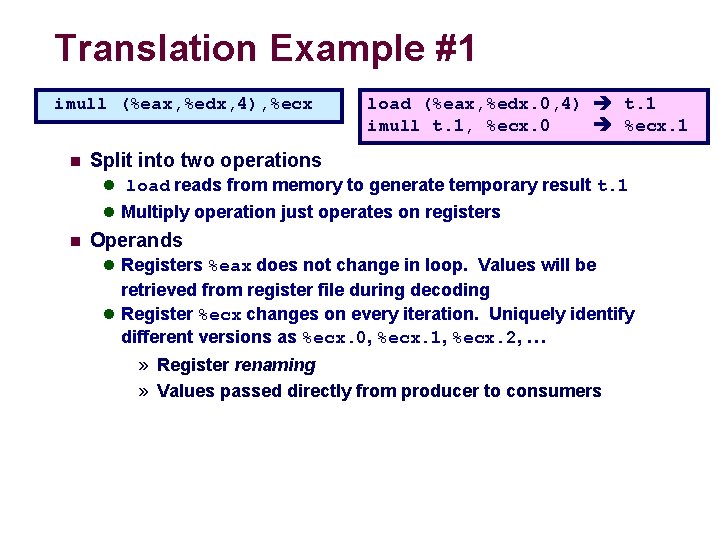

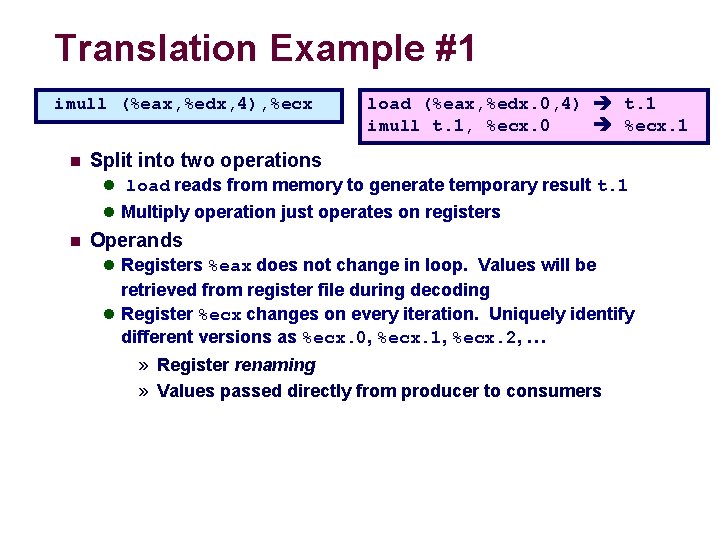

Translation Example #1

    imull (%eax,%edx,4),%ecx

    load (%eax,%edx.0,4)   ->  t.1
    imull t.1, %ecx.0      ->  %ecx.1

- Split into two operations
  - load reads from memory to generate temporary result t.1
  - Multiply operation just operates on registers
- Operands
  - Register %eax does not change in the loop. Its value is retrieved from the register file during decoding
  - Register %ecx changes on every iteration. Uniquely identify different versions as %ecx.0, %ecx.1, %ecx.2, …
    - Register renaming
    - Values passed directly from producer to consumers

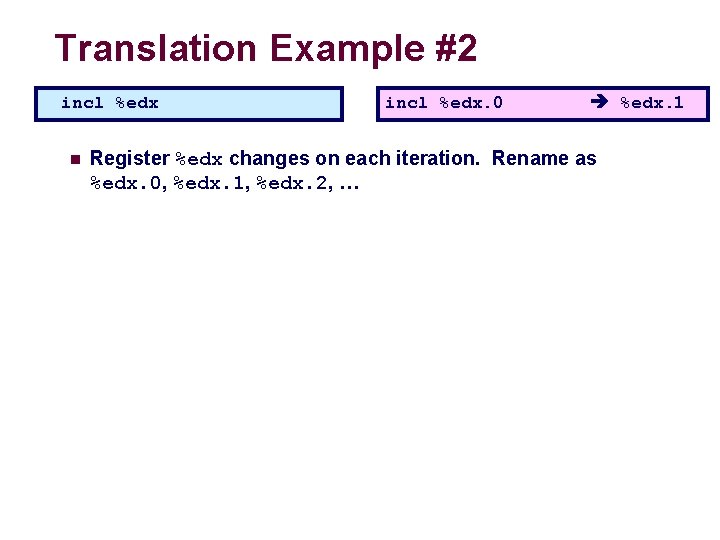

Translation Example #2

    incl %edx

    incl %edx.0   ->  %edx.1

- Register %edx changes on each iteration. Rename as %edx.0, %edx.1, %edx.2, …

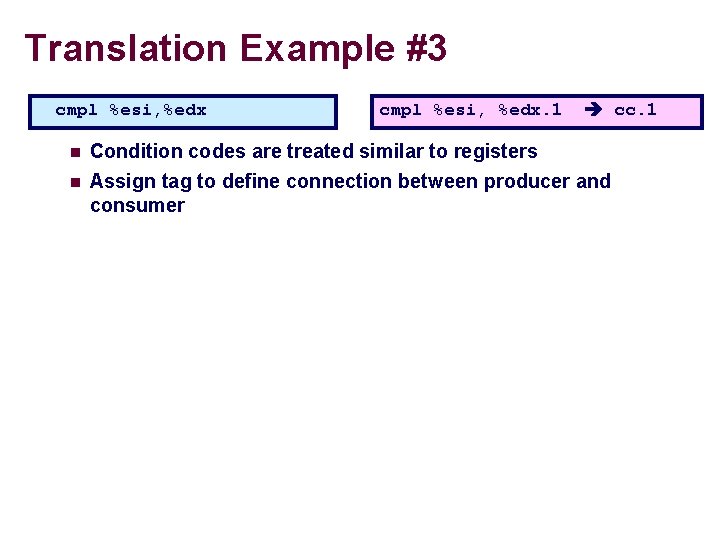

Translation Example #3

    cmpl %esi, %edx.1   ->  cc.1

- Condition codes are treated similarly to registers
- Assign a tag to define the connection between producer and consumer

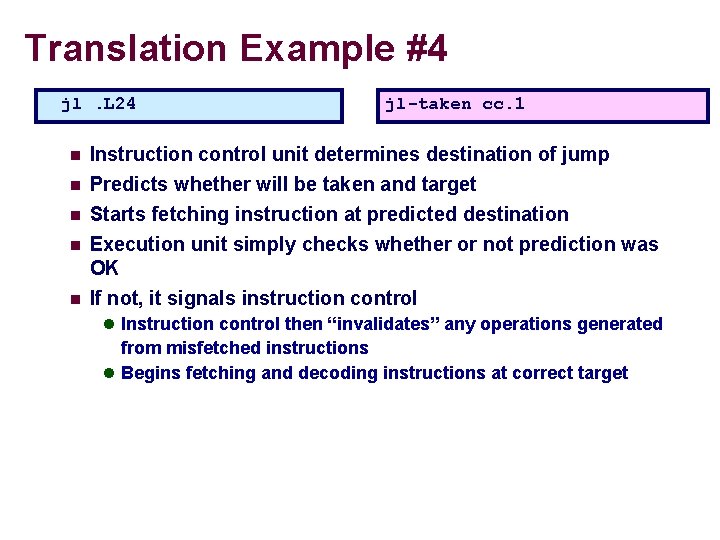

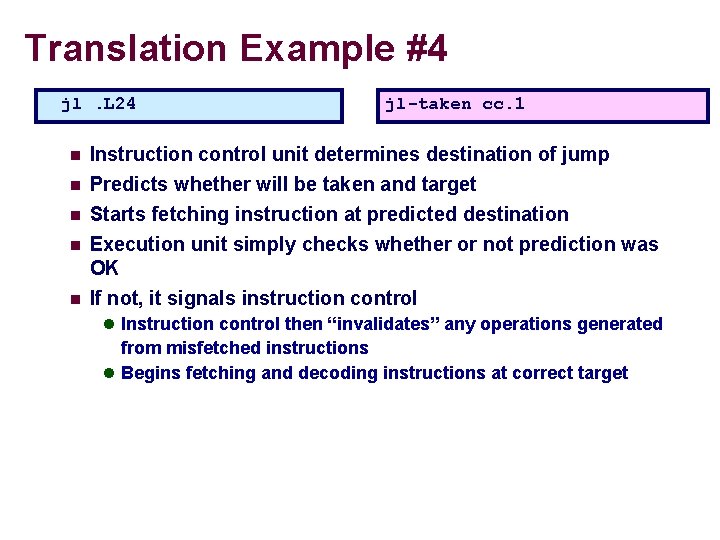

Translation Example #4

    jl .L24

    jl-taken cc.1

- Instruction control unit determines destination of jump
- Predicts whether it will be taken, and the target
- Starts fetching instructions at the predicted destination
- Execution unit simply checks whether or not the prediction was OK
- If not, it signals instruction control
  - Instruction control then "invalidates" any operations generated from misfetched instructions
  - Begins fetching and decoding instructions at the correct target

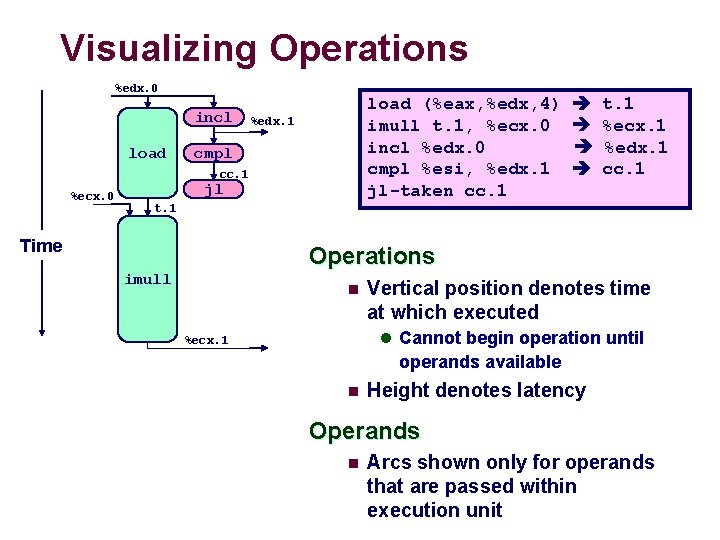

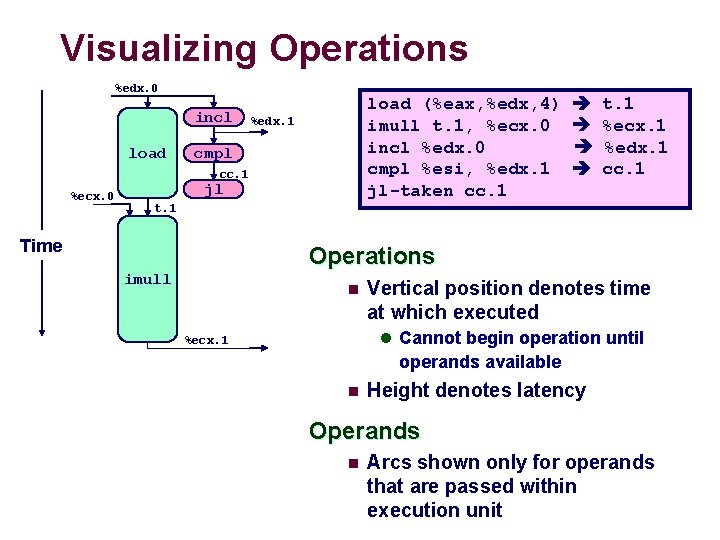

Visualizing Operations

    load (%eax,%edx,4)   ->  t.1
    imull t.1, %ecx.0    ->  %ecx.1
    incl %edx.0          ->  %edx.1
    cmpl %esi, %edx.1    ->  cc.1
    jl-taken cc.1

(Diagram: the load, imull, incl, cmpl, and jl operations drawn against a vertical time axis.)

Operations

- Vertical position denotes the time at which an operation is executed
  - Cannot begin an operation until its operands are available
- Height denotes latency

Operands

- Arcs shown only for operands that are passed within the execution unit

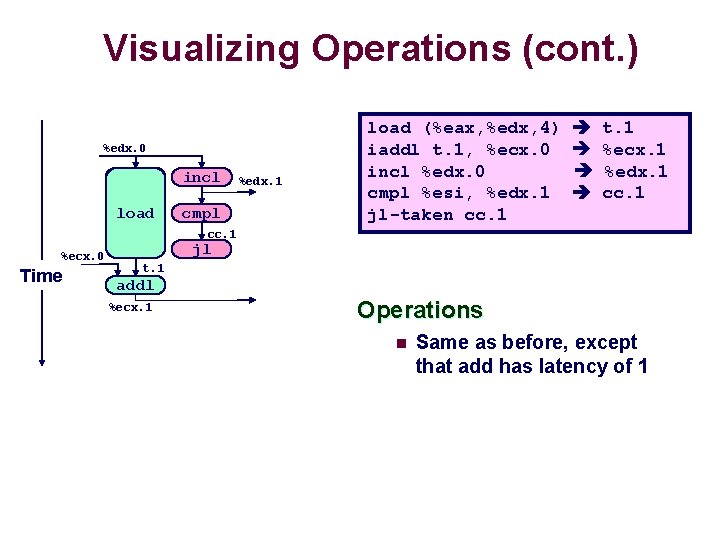

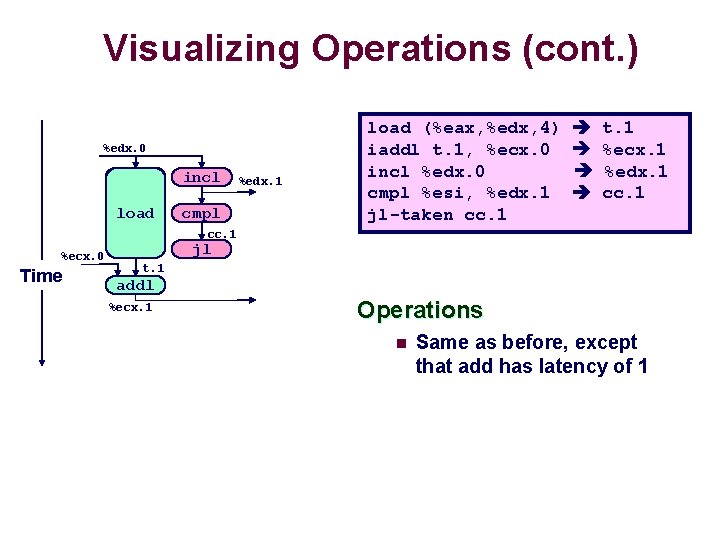

Visualizing Operations (cont.)

    load (%eax,%edx,4)   ->  t.1
    iaddl t.1, %ecx.0    ->  %ecx.1
    incl %edx.0          ->  %edx.1
    cmpl %esi, %edx.1    ->  cc.1
    jl-taken cc.1

Operations

- Same as before, except that add has a latency of 1

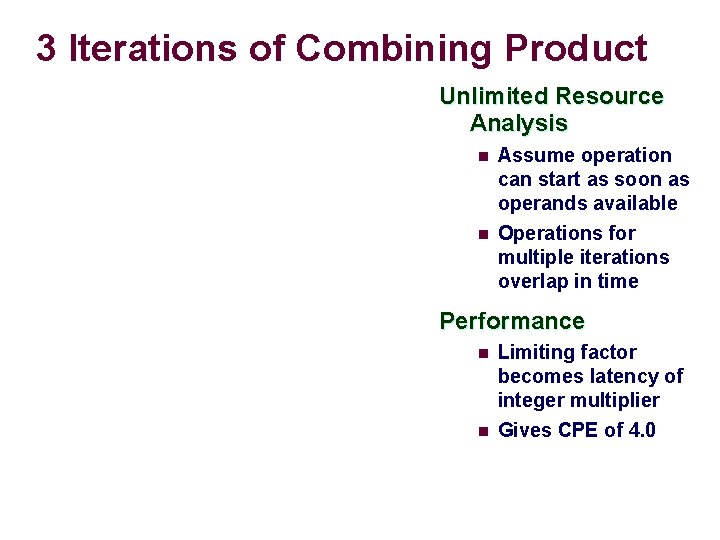

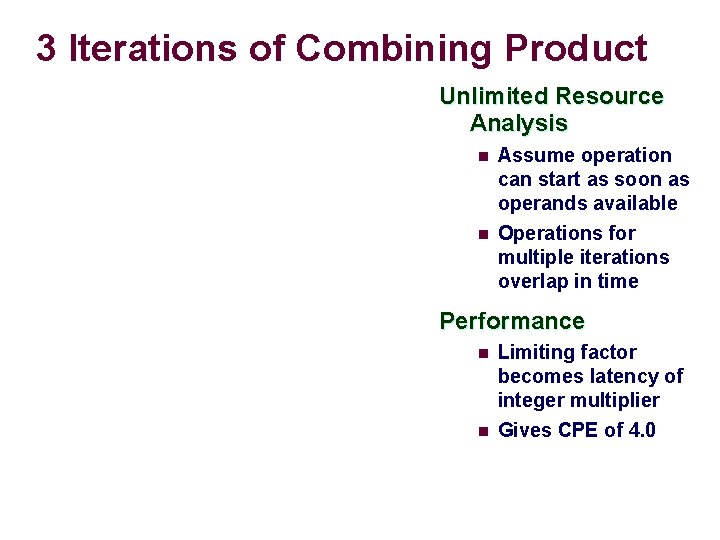

3 Iterations of Combining Product

Unlimited Resource Analysis

- Assume an operation can start as soon as its operands are available
- Operations for multiple iterations overlap in time

Performance

- Limiting factor becomes latency of integer multiplier
- Gives CPE of 4.0

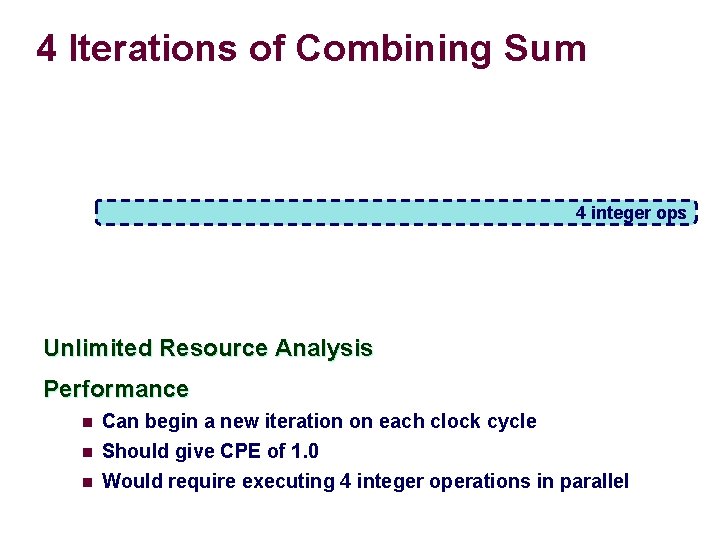

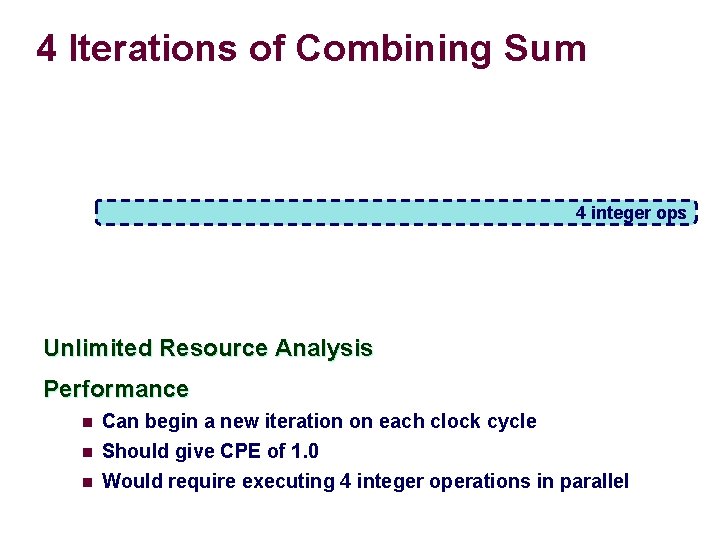

4 Iterations of Combining Sum

Unlimited Resource Analysis (figure label: 4 integer ops)

Performance

- Can begin a new iteration on each clock cycle
- Should give CPE of 1.0
- Would require executing 4 integer operations in parallel

Combining Sum: Resource Constraints

- Only have two integer functional units
- Some operations delayed even though operands available
- Set priority based on program order

Performance

- Sustain CPE of 2.0
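The two analyses above can be folded into one back-of-the-envelope bound: a loop can go no faster than its longest dependency chain per iteration, nor faster than its integer operations divided among the available integer units. The sketch below is a simplification under that assumption, not a model of the real scheduler. The name `cpe_bound` and its parameters are hypothetical.

```c
/* Idealized lower bound on cycles per element for one loop.
   chain_latency  : cycles of the loop-carried dependency per iteration
   int_ops        : integer operations issued per iteration
   int_units      : integer functional units available
   elems_per_iter : elements consumed per iteration */
double cpe_bound(int chain_latency, int int_ops, int int_units,
                 int elems_per_iter)
{
    double latency_bound = (double)chain_latency;
    double resource_bound = (double)int_ops / int_units;
    double cycles = latency_bound > resource_bound ? latency_bound
                                                   : resource_bound;
    return cycles / elems_per_iter;
}
```

For the integer sum (1-cycle add, 4 integer ops, 2 units) this gives 2.0, and for the integer product (4-cycle multiply) it gives 4.0, matching the CPE figures quoted on these slides.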

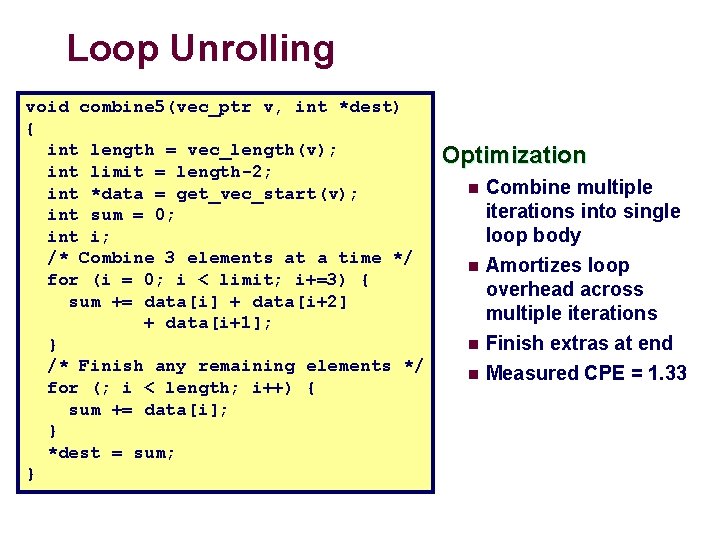

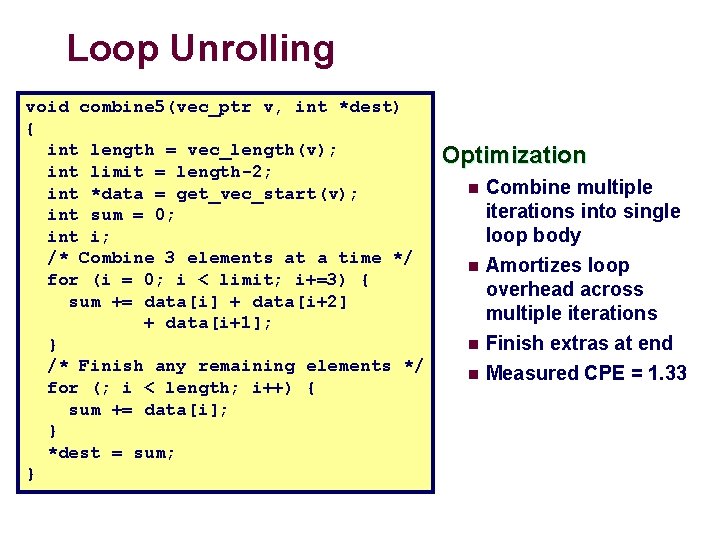

Loop Unrolling

    void combine5(vec_ptr v, int *dest)
    {
        int length = vec_length(v);
        int limit = length - 2;
        int *data = get_vec_start(v);
        int sum = 0;
        int i;
        /* Combine 3 elements at a time */
        for (i = 0; i < limit; i += 3) {
            sum += data[i] + data[i+2] + data[i+1];
        }
        /* Finish any remaining elements */
        for (; i < length; i++) {
            sum += data[i];
        }
        *dest = sum;
    }

Optimization

- Combine multiple iterations into a single loop body
- Amortizes loop overhead across multiple iterations
- Finish extras at end
- Measured CPE = 1.33
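A quick way to gain confidence in an unrolled loop is to compare it against the straightforward loop for every short length, since the cleanup loop is where off-by-one mistakes tend to hide. The sketch below uses plain arrays rather than `vec_ptr`. The names `sum_simple` and `sum_unrolled3` are hypothetical.

```c
#include <stddef.h>

/* Reference version: one element per iteration. */
long sum_simple(const int *data, size_t length)
{
    long sum = 0;
    for (size_t i = 0; i < length; i++)
        sum += data[i];
    return sum;
}

/* Unrolled by 3, in the style of combine5. The bound is written as
   i + 2 < length because length - 2 would wrap around for an
   unsigned size_t when length < 2. */
long sum_unrolled3(const int *data, size_t length)
{
    long sum = 0;
    size_t i = 0;
    for (; i + 2 < length; i += 3)
        sum += (long)data[i] + data[i + 1] + data[i + 2];
    for (; i < length; i++)      /* finish any remaining elements */
        sum += data[i];
    return sum;
}
```

Checking lengths 0 through 7 covers every remainder modulo 3 at least twice.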

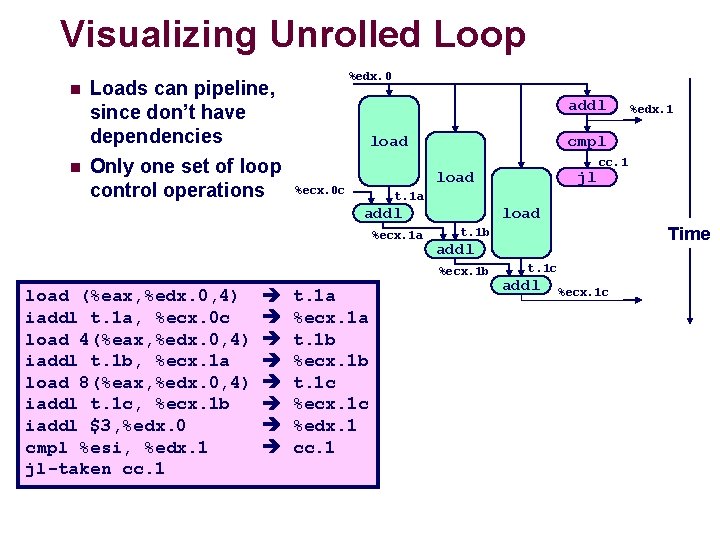

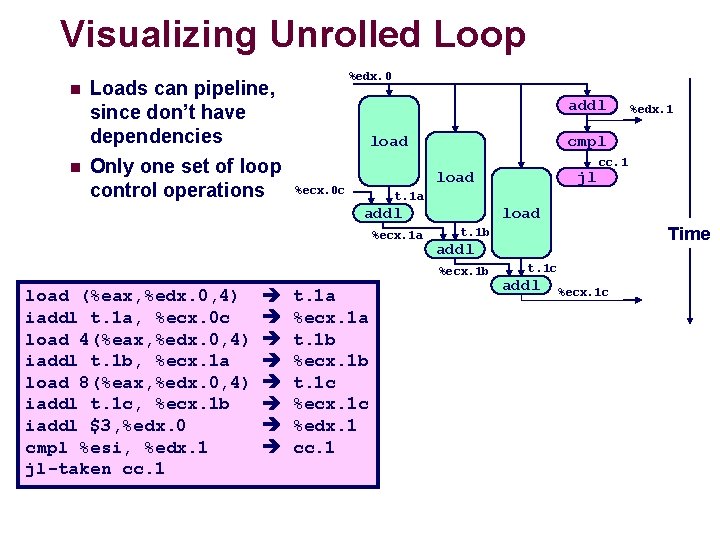

Visualizing Unrolled Loop

- Loads can pipeline, since they have no dependencies
- Only one set of loop control operations

    load (%eax,%edx.0,4)    ->  t.1a
    iaddl t.1a, %ecx.0c     ->  %ecx.1a
    load 4(%eax,%edx.0,4)   ->  t.1b
    iaddl t.1b, %ecx.1a     ->  %ecx.1b
    load 8(%eax,%edx.0,4)   ->  t.1c
    iaddl t.1c, %ecx.1b     ->  %ecx.1c
    iaddl $3, %edx.0        ->  %edx.1
    cmpl %esi, %edx.1       ->  cc.1
    jl-taken cc.1

Executing with Loop Unrolling

- Predicted Performance
  - Can complete iteration in 3 cycles
  - Should give CPE of 1.0
- Measured Performance
  - CPE of 1.33
  - One iteration every 4 cycles

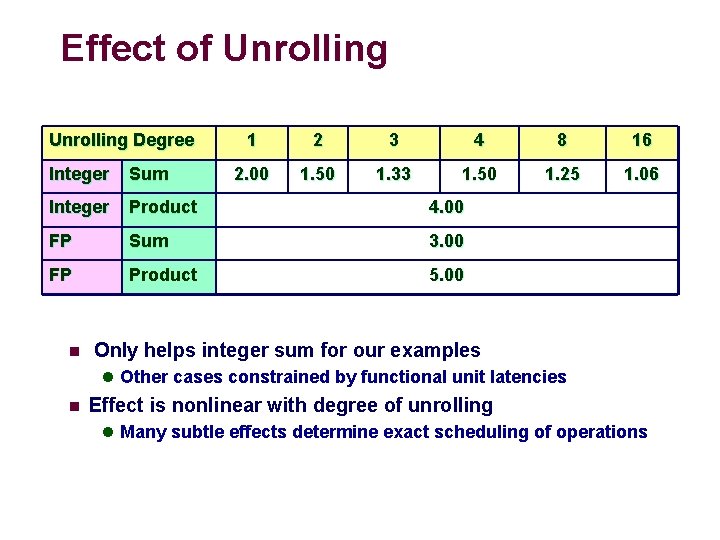

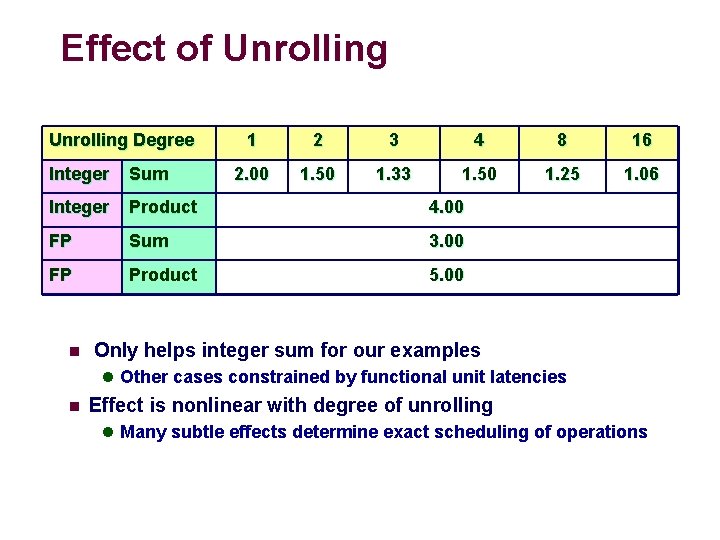

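Effect of Unrolling

CPE for integer sum by unrolling degree:

| Unrolling Degree | 1 | 2 | 3 | 4 | 8 | 16 |
|---|---|---|---|---|---|---|
| Integer Sum | 2.00 | 1.50 | 1.33 | 1.50 | 1.25 | 1.06 |

A single CPE value is shown for each of the other operations:

| Operation | CPE |
|---|---|
| Integer Product | 4.00 |
| FP Sum | 3.00 |
| FP Product | 5.00 |

- Only helps integer sum for our examples
  - Other cases constrained by functional unit latencies
- Effect is nonlinear with degree of unrolling
  - Many subtle effects determine exact scheduling of operations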

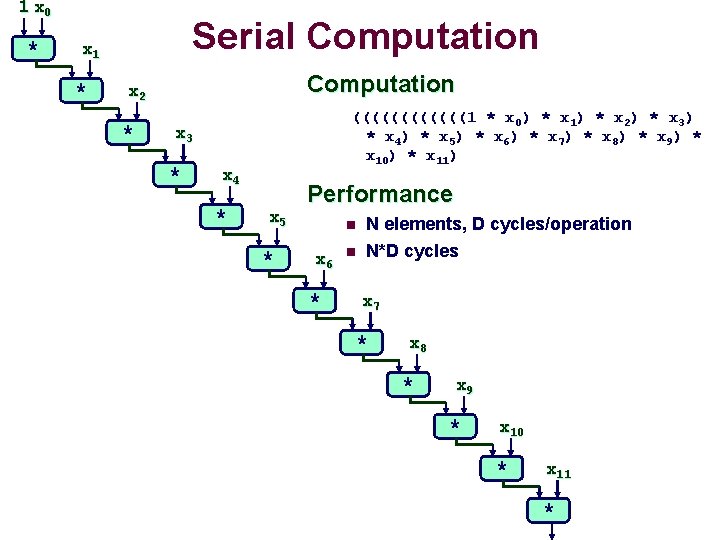

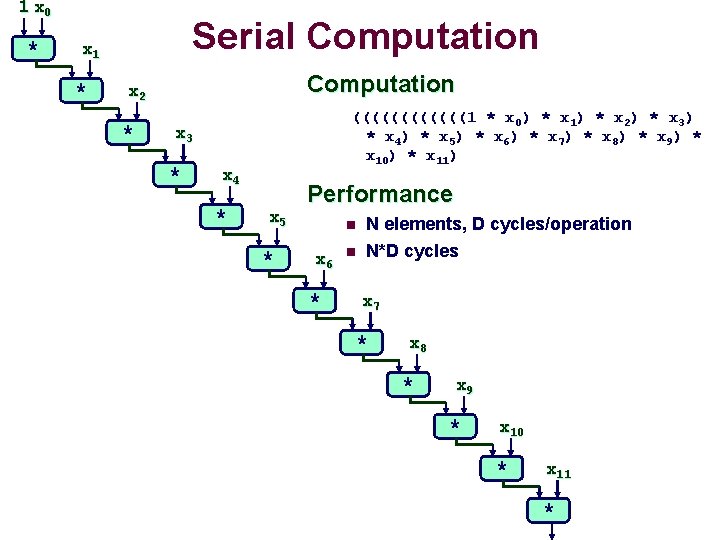

Serial Computation

Computation

    ((((((((((((1 * x0) * x1) * x2) * x3) * x4) * x5) * x6) * x7) * x8) * x9) * x10) * x11)

(Diagram: a single chain of twelve multiplies, starting from 1 and x0 and consuming x1 through x11 one after another.)

Performance

- N elements, D cycles/operation
- N*D cycles

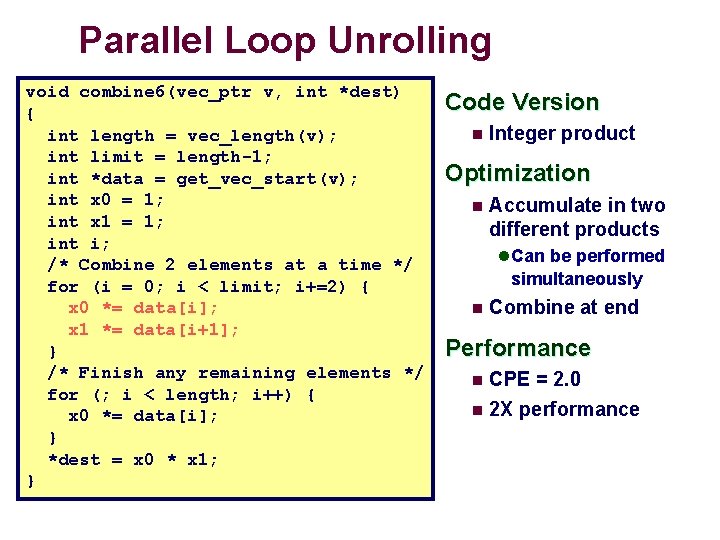

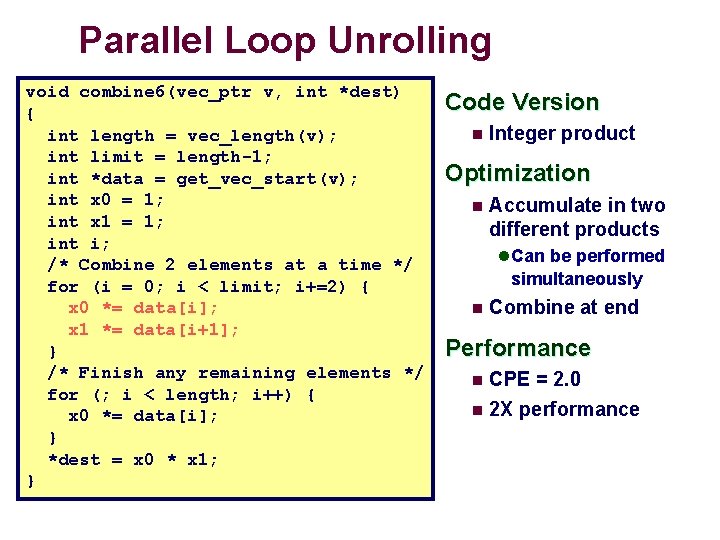

Parallel Loop Unrolling

    void combine6(vec_ptr v, int *dest)
    {
        int length = vec_length(v);
        int limit = length - 1;
        int *data = get_vec_start(v);
        int x0 = 1;
        int x1 = 1;
        int i;
        /* Combine 2 elements at a time */
        for (i = 0; i < limit; i += 2) {
            x0 *= data[i];
            x1 *= data[i+1];
        }
        /* Finish any remaining elements */
        for (; i < length; i++) {
            x0 *= data[i];
        }
        *dest = x0 * x1;
    }

Code Version

- Integer product

Optimization

- Accumulate in two different products
  - Can be performed simultaneously
- Combine at end

Performance

- CPE = 2.0
- 2X performance
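The same two-accumulator pattern can be tried outside the vector ADT. The sketch below is an array-based variant written under that assumption. The name `product_two_acc` is hypothetical, and the inputs are assumed small enough that the `int` product does not overflow.

```c
#include <stddef.h>

/* Two independent accumulators: x0 takes even-indexed elements,
   x1 takes odd-indexed ones, so the two multiply chains do not
   depend on each other inside the loop. */
int product_two_acc(const int *data, size_t length)
{
    int x0 = 1;
    int x1 = 1;
    size_t i = 0;
    for (; i + 1 < length; i += 2) {   /* 2 elements at a time */
        x0 *= data[i];
        x1 *= data[i + 1];
    }
    for (; i < length; i++)            /* finish any remaining element */
        x0 *= data[i];
    return x0 * x1;                    /* combine at end */
}
```

Because integer multiplication is associative and commutative, splitting the elements between the two accumulators does not change the result.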

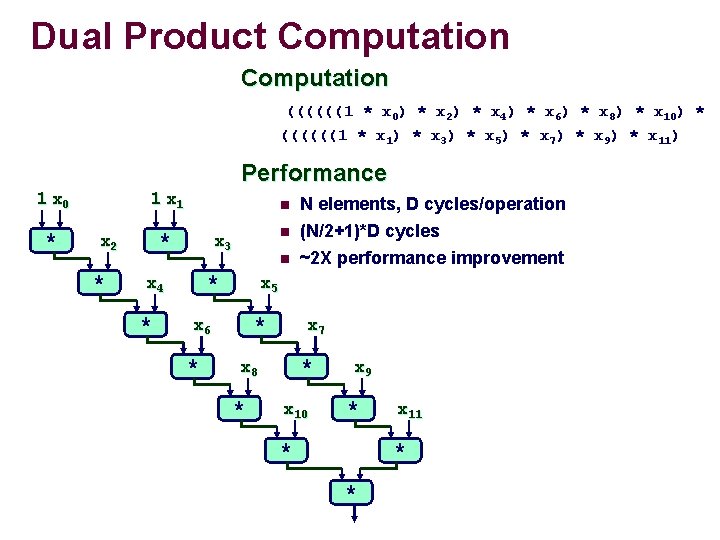

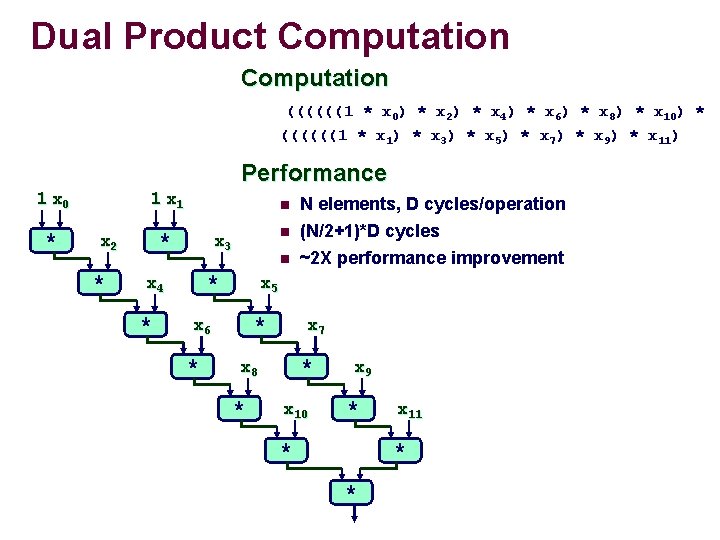

Dual Product Computation

Computation

    ((((((1 * x0) * x2) * x4) * x6) * x8) * x10) *
    ((((((1 * x1) * x3) * x5) * x7) * x9) * x11)

(Diagram: two independent chains of six multiplies, one over the even-indexed elements and one over the odd-indexed elements, joined by a final multiply.)

Performance

- N elements, D cycles/operation
- (N/2+1)*D cycles
- ~2X performance improvement
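The "~2X" figure follows directly from the two cycle counts. As a worked check, using the slide's N and D and taking D = 4 (the integer multiply latency quoted earlier) and N = 12 (the number of elements drawn) as illustrative values:

```latex
\begin{align*}
T_{\text{serial}} &= N \cdot D = 12 \cdot 4 = 48 \\
T_{\text{dual}}   &= \left(\tfrac{N}{2} + 1\right) D = 7 \cdot 4 = 28 \\
\frac{T_{\text{serial}}}{T_{\text{dual}}}
                  &= \frac{N}{N/2 + 1} = \frac{2N}{N + 2}
                     \;\longrightarrow\; 2 \quad (N \to \infty)
\end{align*}
```

For large N the cost per element approaches D/2, which is 2.0 cycles when D = 4.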

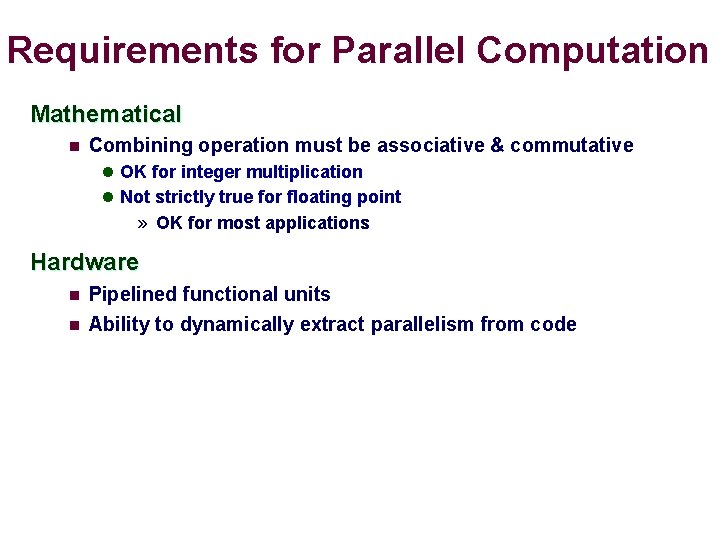

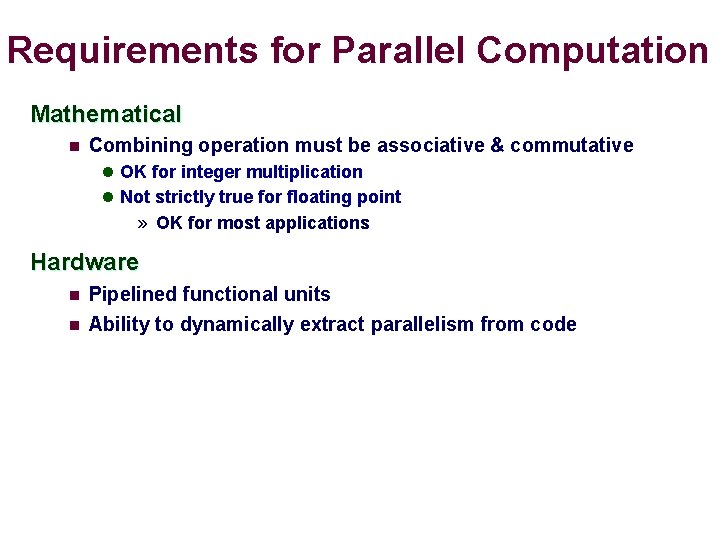

Requirements for Parallel Computation

Mathematical

- Combining operation must be associative & commutative
  - OK for integer multiplication
  - Not strictly true for floating point
    - OK for most applications

Hardware

- Pipelined functional units
- Ability to dynamically extract parallelism from code
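A small case shows what "not strictly true for floating point" can look like in practice. The sketch below uses addition rather than multiplication because the effect is easy to reproduce with three values; the function names and the inputs are illustrative assumptions.

```c
/* Same three operands, two groupings. With exact arithmetic both
   return a + b + c; with doubles they can differ. */
double add_left(double a, double b, double c)
{
    return (a + b) + c;
}

double add_right(double a, double b, double c)
{
    return a + (b + c);
}
```

With a = 1e30, b = -1e30, c = 1.0, the left grouping cancels the large values first and returns 1.0. The right grouping adds 1.0 to -1e30 first, where it is lost to rounding, and returns 0.0. This assumes the compiler is not allowed to reassociate floating-point operations (for example, no fast-math style options).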

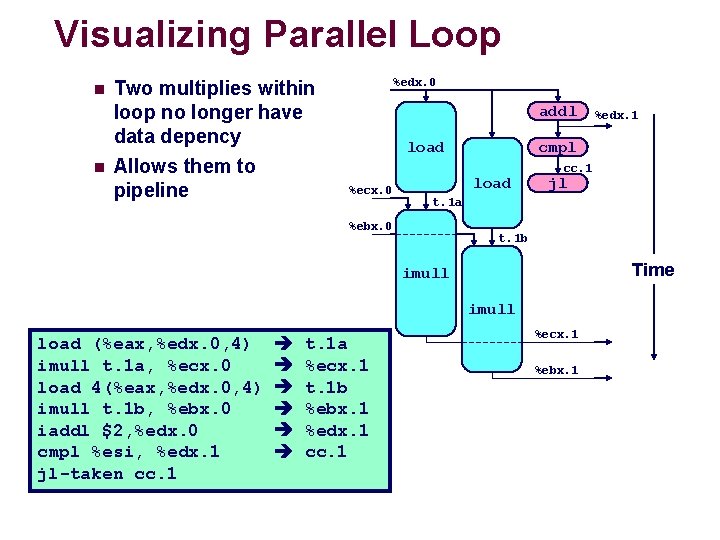

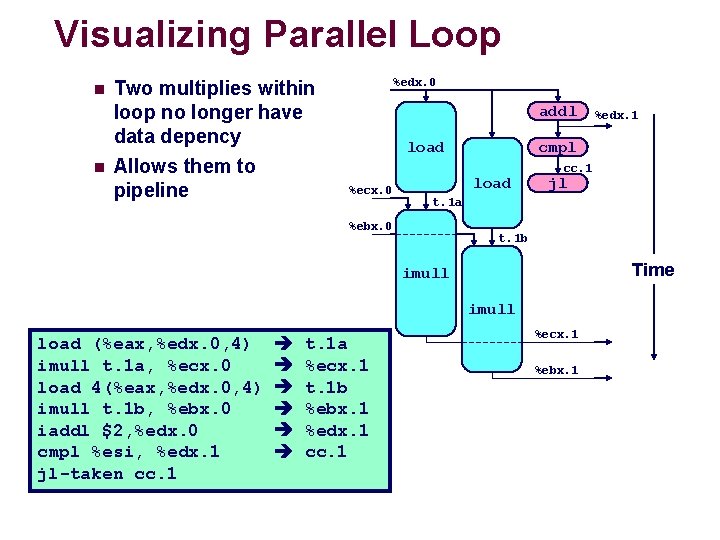

Visualizing Parallel Loop

- Two multiplies within the loop no longer have a data dependency
- Allows them to pipeline

    load (%eax,%edx.0,4)    ->  t.1a
    imull t.1a, %ecx.0      ->  %ecx.1
    load 4(%eax,%edx.0,4)   ->  t.1b
    imull t.1b, %ebx.0      ->  %ebx.1
    iaddl $2, %edx.0        ->  %edx.1
    cmpl %esi, %edx.1       ->  cc.1
    jl-taken cc.1

Executing with Parallel Loop

- Predicted Performance
  - Can keep the 4-cycle multiplier busy performing two simultaneous multiplications
  - Gives CPE of 2.0

Optimization Results for Combining

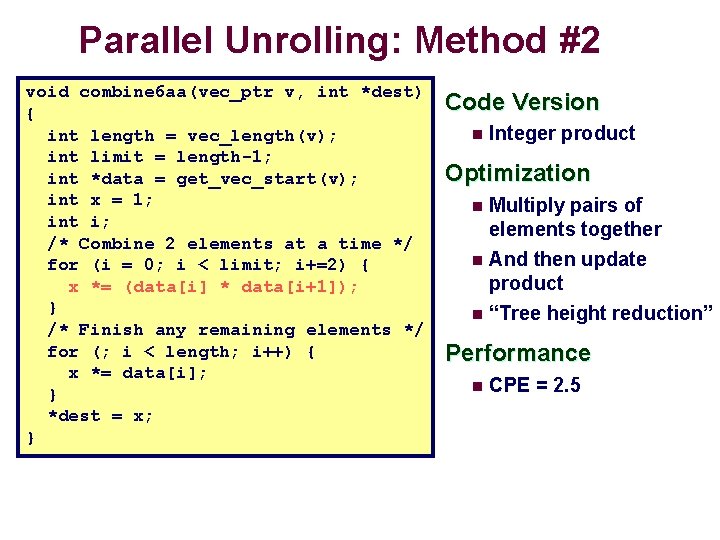

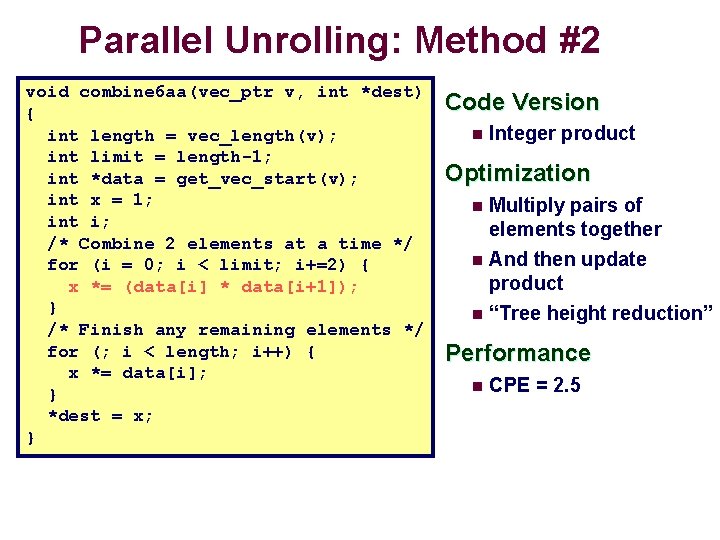

Parallel Unrolling: Method #2

    void combine6aa(vec_ptr v, int *dest)
    {
        int length = vec_length(v);
        int limit = length - 1;
        int *data = get_vec_start(v);
        int x = 1;
        int i;
        /* Combine 2 elements at a time */
        for (i = 0; i < limit; i += 2) {
            x *= (data[i] * data[i+1]);
        }
        /* Finish any remaining elements */
        for (; i < length; i++) {
            x *= data[i];
        }
        *dest = x;
    }

Code Version

- Integer product

Optimization

- Multiply pairs of elements together
- And then update product
- "Tree height reduction"

Performance

- CPE = 2.5

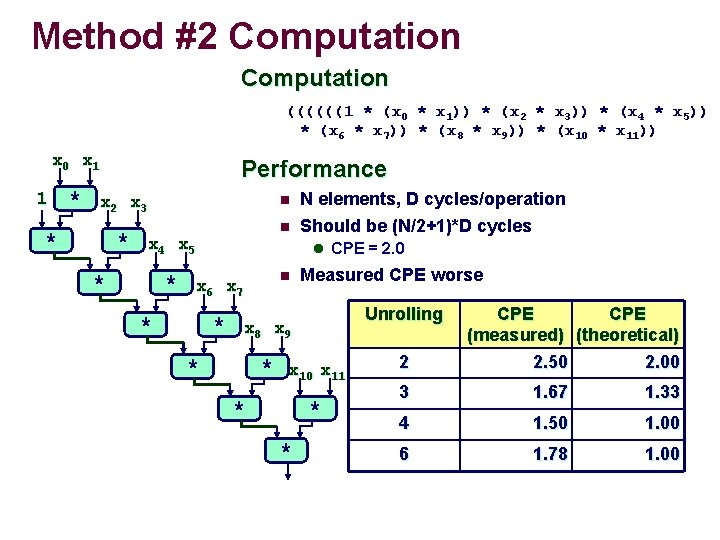

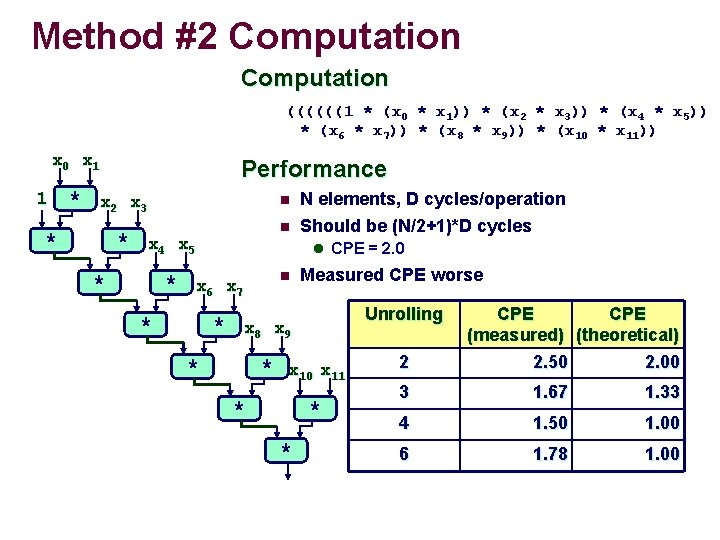

Method #2 Computation

Computation

    ((((((1 * (x0 * x1)) * (x2 * x3)) * (x4 * x5)) * (x6 * x7)) * (x8 * x9)) * (x10 * x11))

(Diagram: each pair of elements is multiplied first; the pair products then feed a single chain of six multiplies.)

Performance

- N elements, D cycles/operation
- Should be (N/2+1)*D cycles
  - CPE = 2.0
- Measured CPE worse

| Unrolling | CPE (measured) | CPE (theoretical) |
|---|---|---|
| 2 | 2.50 | 2.00 |
| 3 | 1.67 | 1.33 |
| 4 | 1.50 | 1.00 |
| 6 | 1.78 | 1.00 |

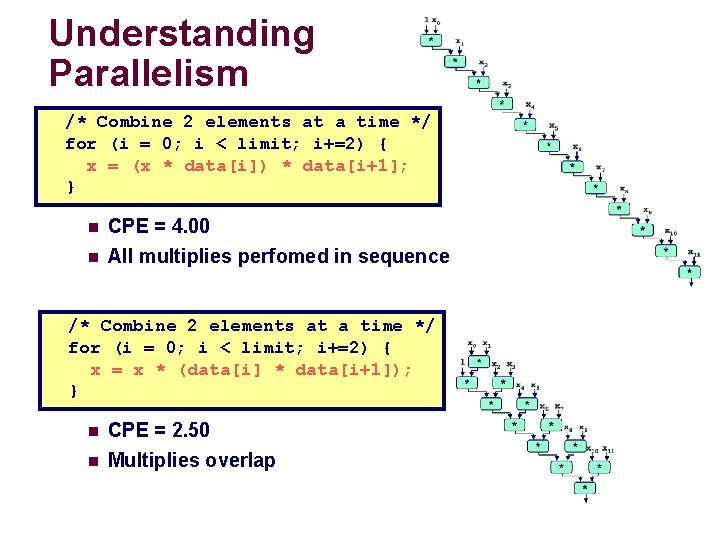

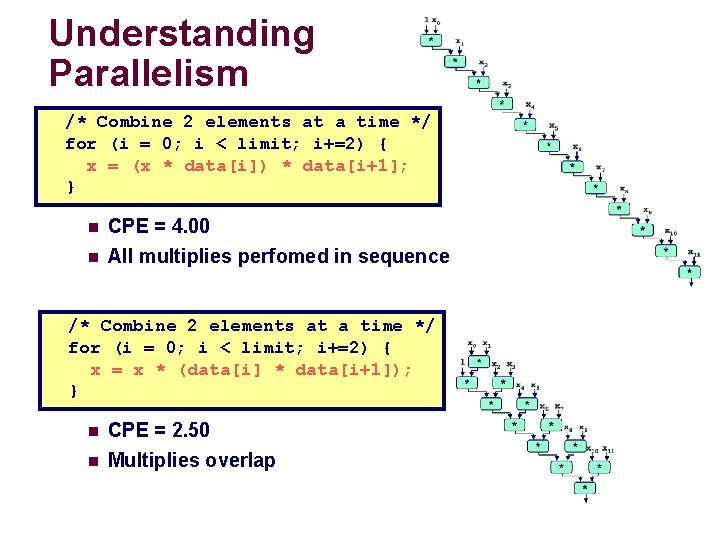

Understanding Parallelism

    /* Combine 2 elements at a time */
    for (i = 0; i < limit; i += 2) {
        x = (x * data[i]) * data[i+1];
    }

- CPE = 4.00
- All multiplies performed in sequence

    /* Combine 2 elements at a time */
    for (i = 0; i < limit; i += 2) {
        x = x * (data[i] * data[i+1]);
    }

- CPE = 2.50
- Multiplies overlap
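The two loop bodies differ only in where the parentheses sit, so for integers they compute the same value; what changes is which multiplies depend on `x`. The sketch below wraps each form in a complete function so the equivalence can be checked. The names `product_serial` and `product_reassoc` are hypothetical, and the inputs are assumed small enough to avoid `int` overflow.

```c
#include <stddef.h>

/* Both multiplies in an iteration depend on x: one long chain. */
int product_serial(const int *data, size_t length)
{
    int x = 1;
    size_t i = 0;
    for (; i + 1 < length; i += 2)
        x = (x * data[i]) * data[i + 1];
    for (; i < length; i++)
        x *= data[i];
    return x;
}

/* data[i] * data[i+1] does not depend on x, so it can start before
   the previous iteration's update of x has finished. */
int product_reassoc(const int *data, size_t length)
{
    int x = 1;
    size_t i = 0;
    for (; i + 1 < length; i += 2)
        x = x * (data[i] * data[i + 1]);
    for (; i < length; i++)
        x *= data[i];
    return x;
}
```

Only one multiply per iteration sits on the chain through `x` in the second form, which is what lets the multiplies overlap.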

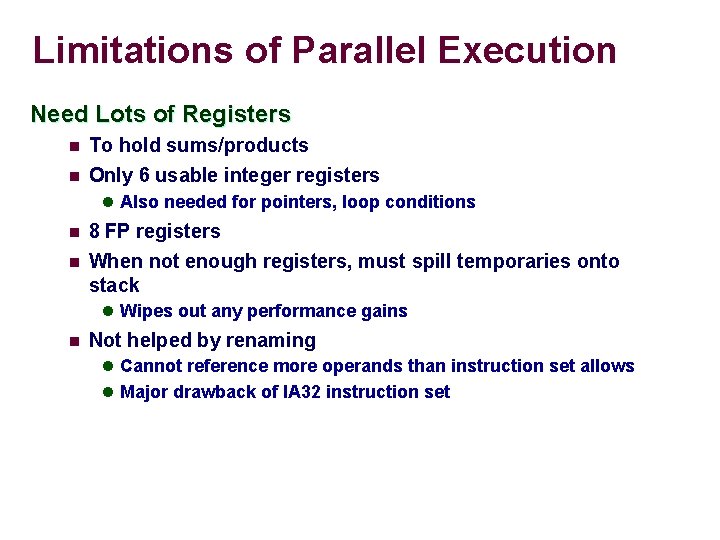

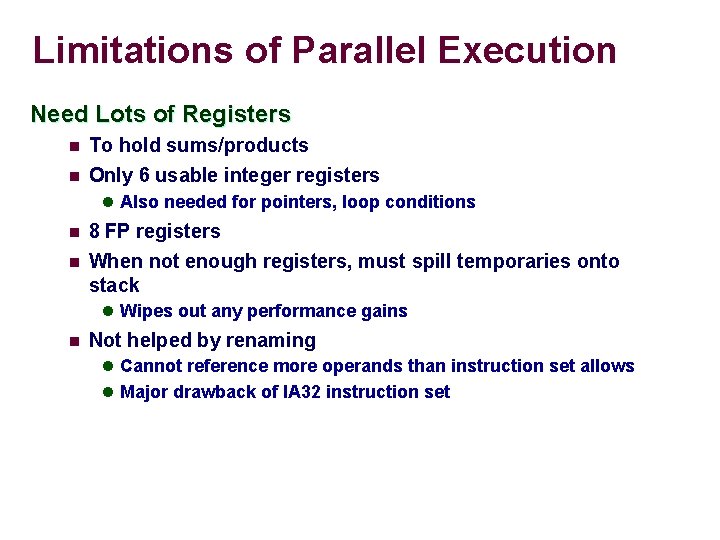

Limitations of Parallel Execution

Need Lots of Registers

- To hold sums/products
- Only 6 usable integer registers
  - Also needed for pointers, loop conditions
- 8 FP registers
- When not enough registers, must spill temporaries onto stack
  - Wipes out any performance gains
- Not helped by renaming
  - Cannot reference more operands than the instruction set allows
  - Major drawback of IA32 instruction set

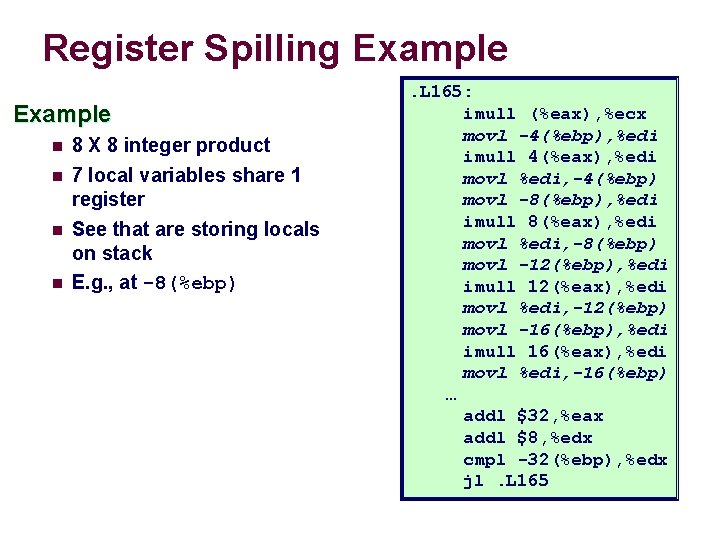

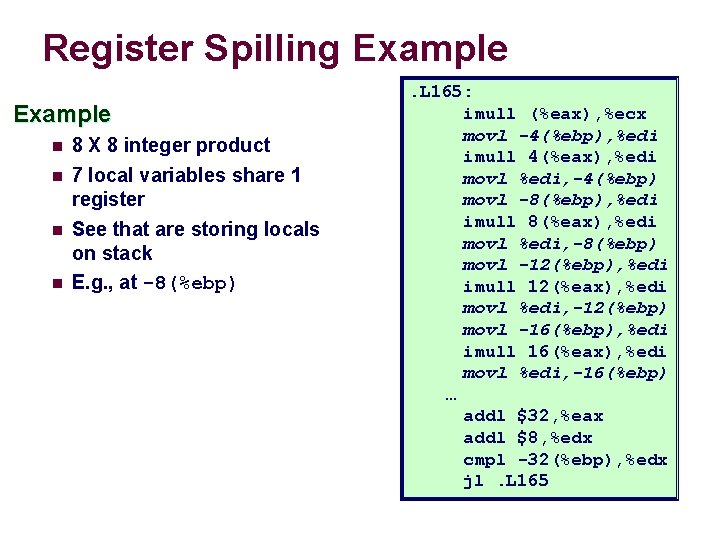

Register Spilling Example

- 8 X 8 integer product
- 7 local variables share 1 register
- The listing shows locals being stored on the stack
  - E.g., at -8(%ebp)

    .L165:
        imull (%eax),%ecx
        movl -4(%ebp),%edi
        imull 4(%eax),%edi
        movl %edi,-4(%ebp)
        movl -8(%ebp),%edi
        imull 8(%eax),%edi
        movl %edi,-8(%ebp)
        movl -12(%ebp),%edi
        imull 12(%eax),%edi
        movl %edi,-12(%ebp)
        movl -16(%ebp),%edi
        imull 16(%eax),%edi
        movl %edi,-16(%ebp)
        …
        addl $32,%eax
        addl $8,%edx
        cmpl -32(%ebp),%edx
        jl .L165

Summary: Results for Pentium III

- Biggest gain comes from doing basic optimizations
- But the last little bit helps

Results for Alpha Processor

- Overall trends very similar to those for Pentium III
- Even though very different architecture and compiler

Results for Pentium 4

- Higher latencies (int * = 14, fp + = 5.0, fp * = 7.0)
  - Clock runs at 2.0 GHz
  - Not an improvement over 1.0 GHz P3 for integer *
- Avoids FP multiplication anomaly

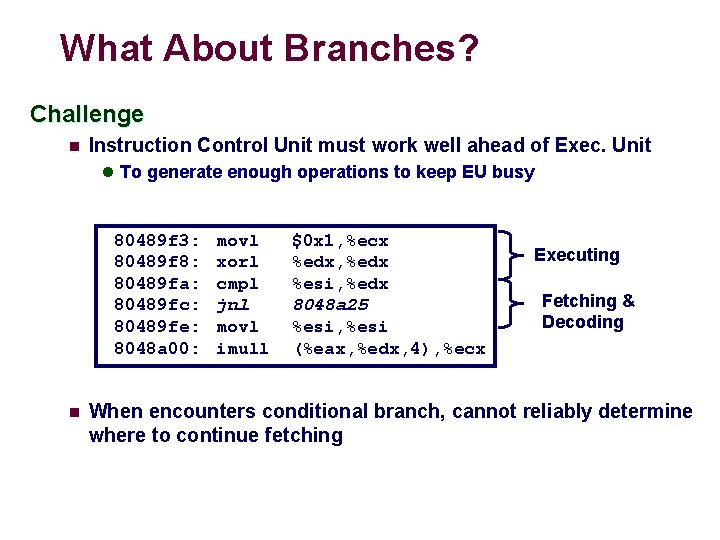

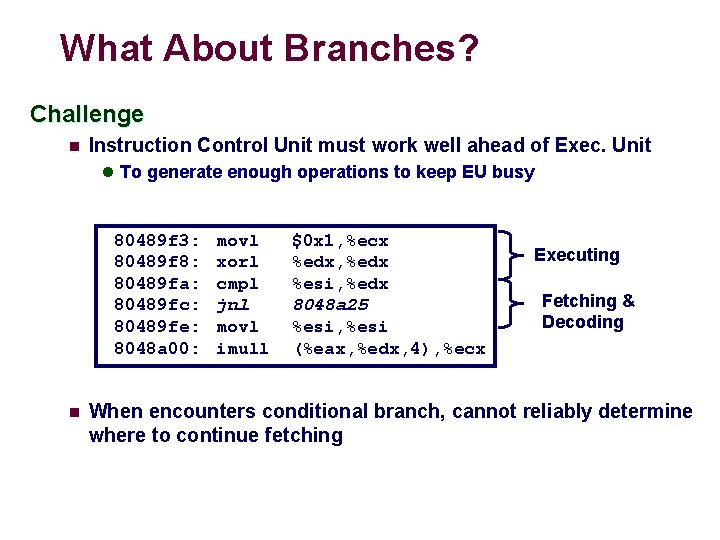

What About Branches?

Challenge

- Instruction Control Unit must work well ahead of Exec. Unit
  - To generate enough operations to keep EU busy

    80489f3:  movl   $0x1,%ecx
    80489f8:  xorl   %edx,%edx
    80489fa:  cmpl   %esi,%edx
    80489fc:  jnl    8048a25
    80489fe:  movl   %esi,%esi
    8048a00:  imull  (%eax,%edx,4),%ecx

(Figure labels: earlier instructions are marked "Executing", later ones "Fetching & Decoding".)

- When it encounters a conditional branch, it cannot reliably determine where to continue fetching

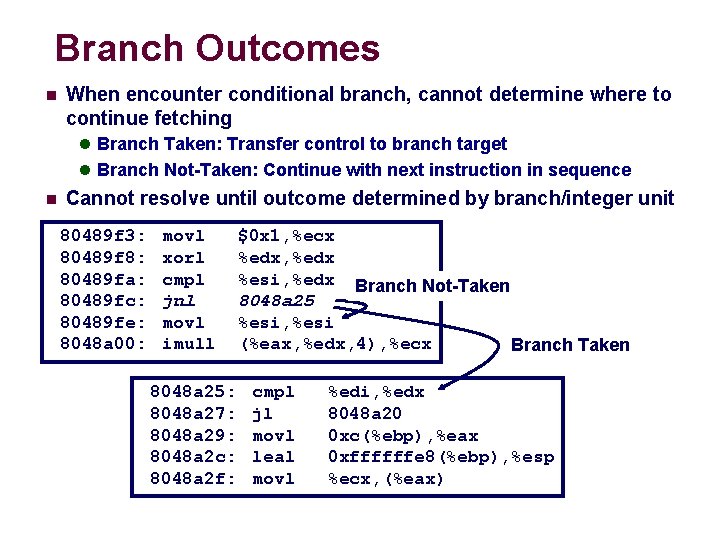

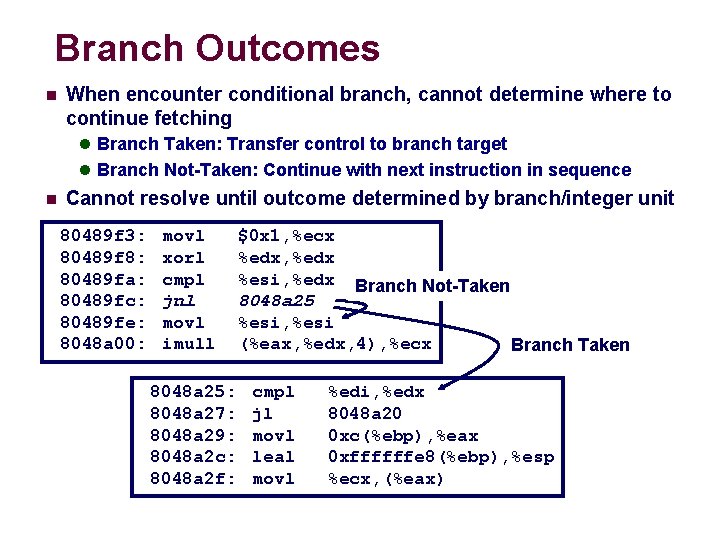

Branch Outcomes

- When it encounters a conditional branch, it cannot determine where to continue fetching
  - Branch Taken: Transfer control to branch target
  - Branch Not-Taken: Continue with next instruction in sequence
- Cannot resolve until outcome determined by branch/integer unit

    80489f3:  movl   $0x1,%ecx
    80489f8:  xorl   %edx,%edx
    80489fa:  cmpl   %esi,%edx
    80489fc:  jnl    8048a25
    80489fe:  movl   %esi,%esi                  # Branch Not-Taken
    8048a00:  imull  (%eax,%edx,4),%ecx

    8048a25:  cmpl   %edi,%edx                  # Branch Taken
    8048a27:  jl     8048a20
    8048a29:  movl   0xc(%ebp),%eax
    8048a2c:  leal   0xffffffe8(%ebp),%esp
    8048a2f:  movl   %ecx,(%eax)

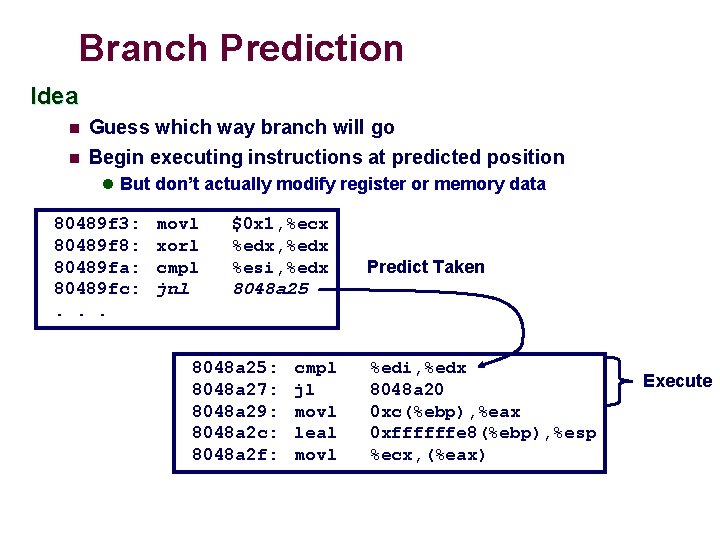

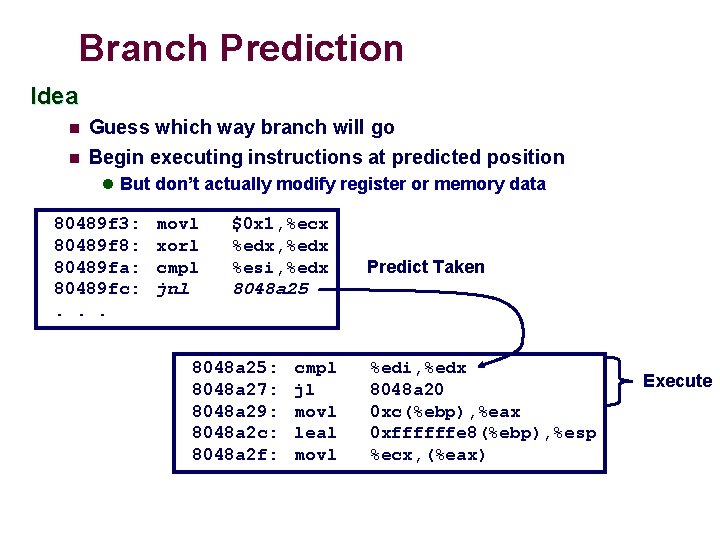

Branch Prediction

Idea

- Guess which way the branch will go
- Begin executing instructions at the predicted position
  - But don't actually modify register or memory data

    80489f3:  movl   $0x1,%ecx
    80489f8:  xorl   %edx,%edx
    80489fa:  cmpl   %esi,%edx
    80489fc:  jnl    8048a25                    # Predict Taken
    . . .
    8048a25:  cmpl   %edi,%edx                  # Execute
    8048a27:  jl     8048a20
    8048a29:  movl   0xc(%ebp),%eax
    8048a2c:  leal   0xffffffe8(%ebp),%esp
    8048a2f:  movl   %ecx,(%eax)

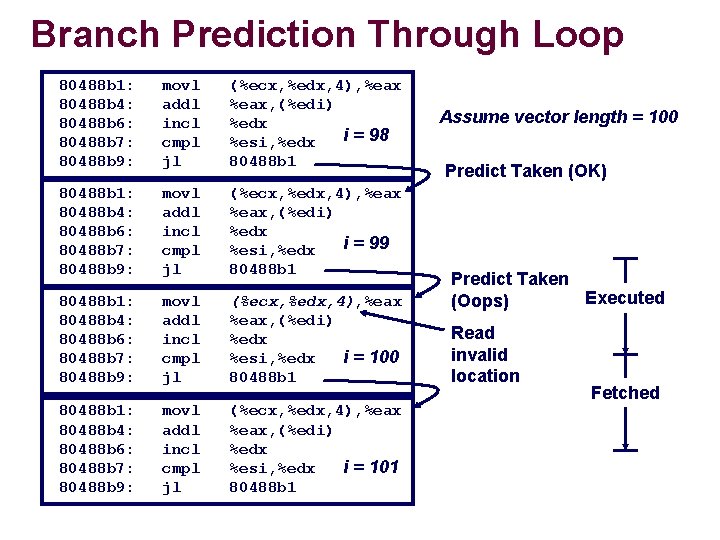

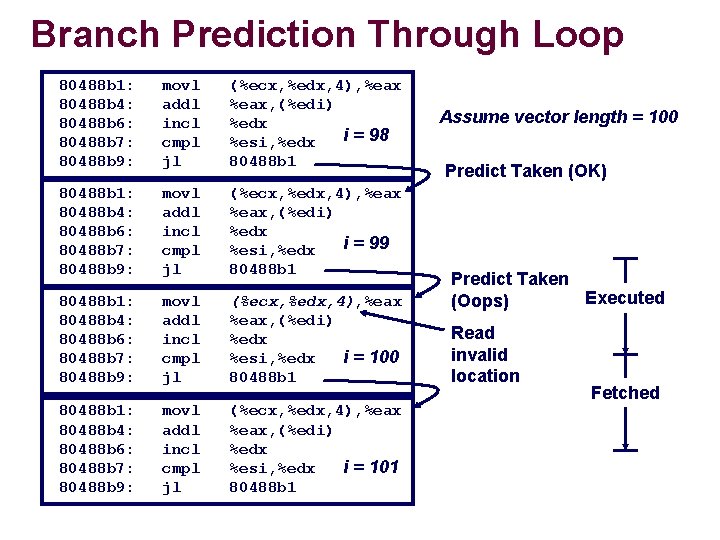

Branch Prediction Through Loop

Assume vector length = 100

The same loop body is shown for four consecutive iterations:

    80488b1:  movl   (%ecx,%edx,4),%eax
    80488b4:  addl   %eax,(%edi)
    80488b6:  incl   %edx
    80488b7:  cmpl   %esi,%edx
    80488b9:  jl     80488b1

- i = 98: Predict Taken (OK)
- i = 99: Predict Taken (Oops)
- i = 100: Executed; reads an invalid location
- i = 101: Fetched

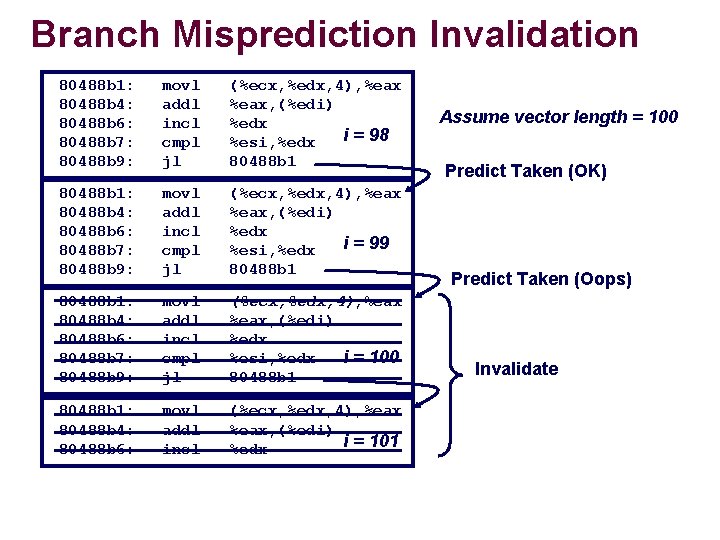

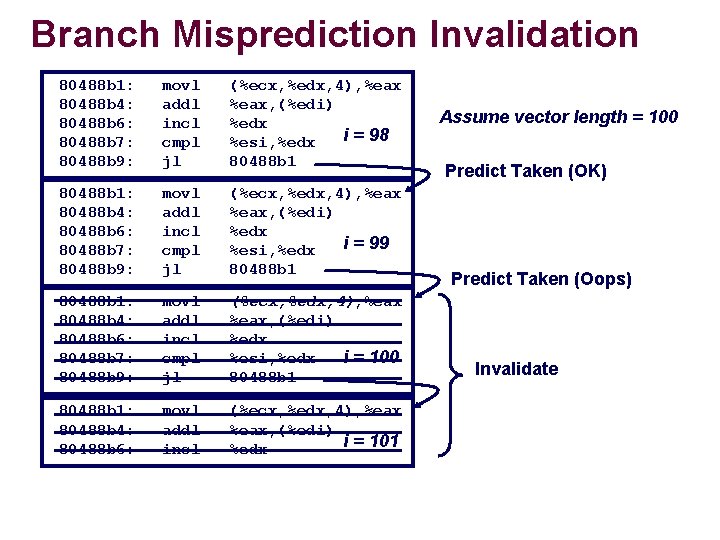

Branch Misprediction Invalidation

Assume vector length = 100

    80488b1:  movl   (%ecx,%edx,4),%eax
    80488b4:  addl   %eax,(%edi)
    80488b6:  incl   %edx
    80488b7:  cmpl   %esi,%edx
    80488b9:  jl     80488b1

- i = 98: Predict Taken (OK)
- i = 99: Predict Taken (Oops)
- i = 100: Invalidate
- i = 101: Invalidate (only the movl, addl, and incl had been fetched)

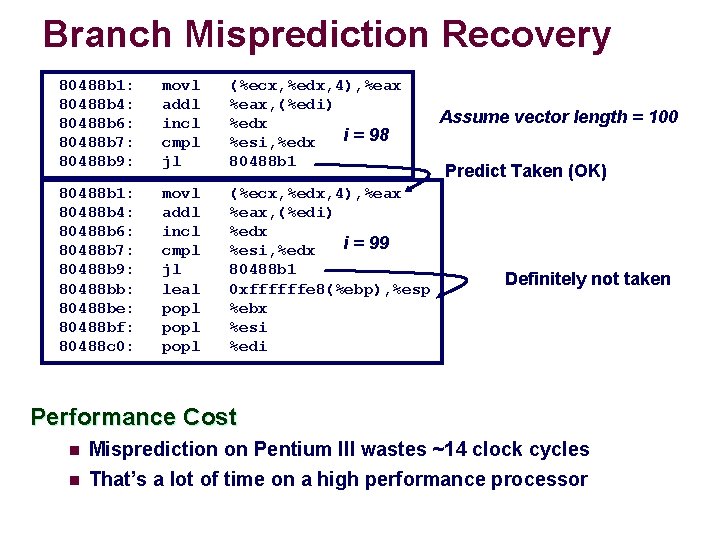

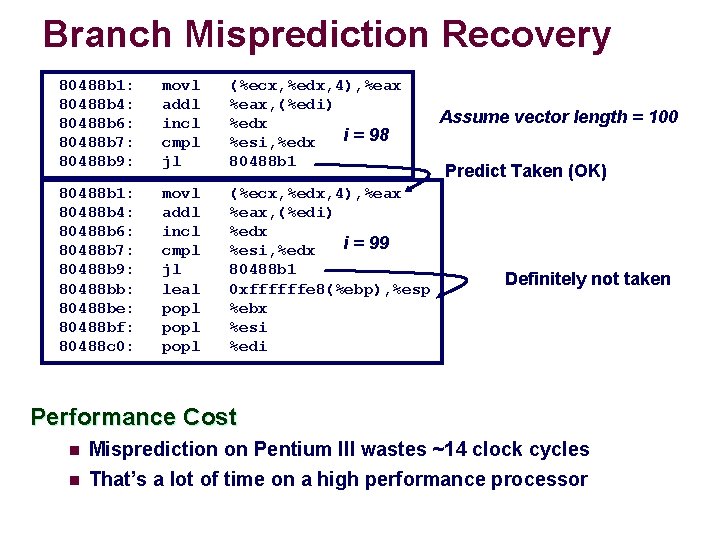

Branch Misprediction Recovery

Assume vector length = 100

    80488b1:  movl   (%ecx,%edx,4),%eax         # i = 98
    80488b4:  addl   %eax,(%edi)
    80488b6:  incl   %edx
    80488b7:  cmpl   %esi,%edx
    80488b9:  jl     80488b1                    # Predict Taken (OK)

    80488b1:  movl   (%ecx,%edx,4),%eax         # i = 99
    80488b4:  addl   %eax,(%edi)
    80488b6:  incl   %edx
    80488b7:  cmpl   %esi,%edx
    80488b9:  jl     80488b1                    # Definitely not taken
    80488bb:  leal   0xffffffe8(%ebp),%esp
    80488be:  popl   %ebx
    80488bf:  popl   %esi
    80488c0:  popl   %edi

Performance Cost

- Misprediction on Pentium III wastes ~14 clock cycles
- That's a lot of time on a high performance processor

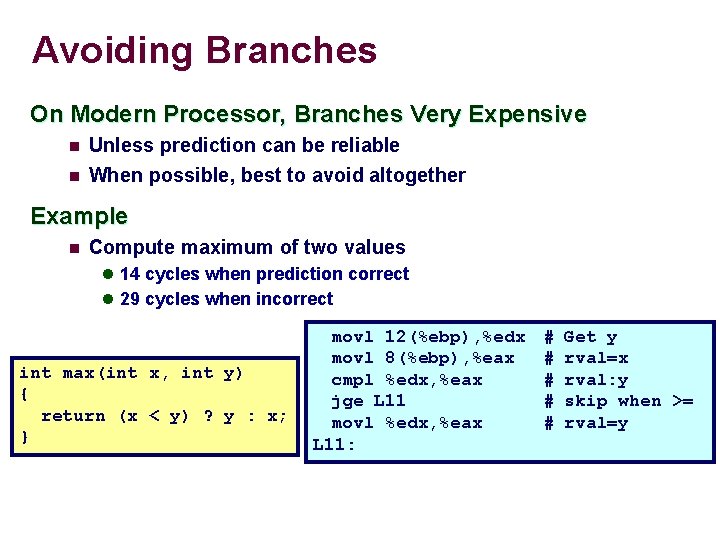

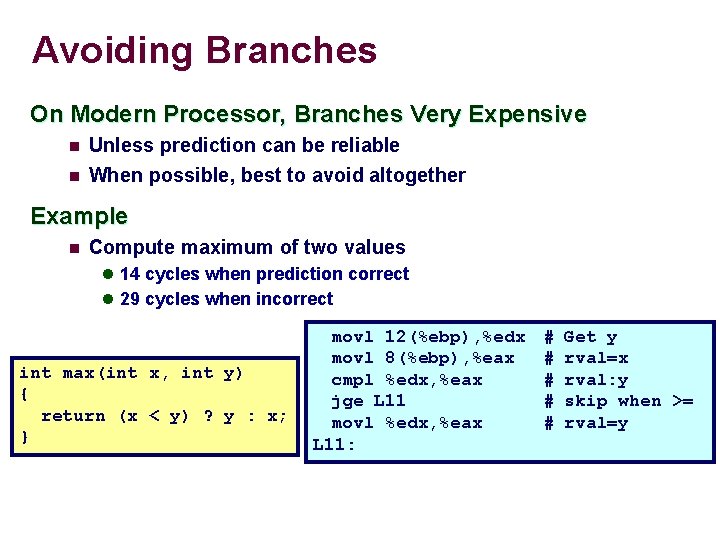

Avoiding Branches

On Modern Processor, Branches Very Expensive

- Unless prediction can be reliable
- When possible, best to avoid altogether

Example

- Compute maximum of two values
  - 14 cycles when prediction correct
  - 29 cycles when incorrect

    int max(int x, int y)
    {
        return (x < y) ? y : x;
    }

    movl 12(%ebp),%edx       # Get y
    movl 8(%ebp),%eax        # rval=x
    cmpl %edx,%eax           # rval:y
    jge L11                  # skip when >=
    movl %edx,%eax           # rval=y
    L11:
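The two cycle counts can be combined into an average cost once a prediction rate is assumed. The sketch below is a simple weighted average, not a measurement. The function name `expected_cycles` and the choice of prediction rates are illustrative assumptions.

```c
/* Average cycles per call when a fraction p_correct of the branches
   is predicted correctly. */
double expected_cycles(double correct, double mispredicted,
                       double p_correct)
{
    return p_correct * correct + (1.0 - p_correct) * mispredicted;
}
```

With the figures above (14 and 29 cycles), a branch that is predicted correctly half the time, as might happen on unpredictable data, averages 21.5 cycles per call under this model.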

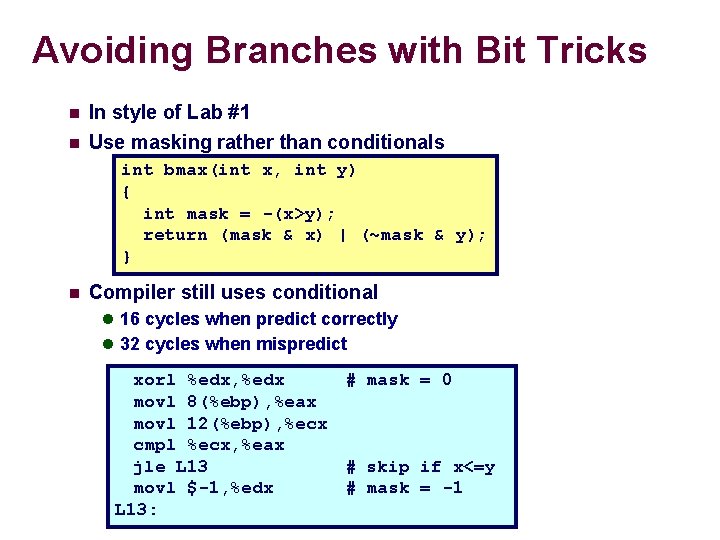

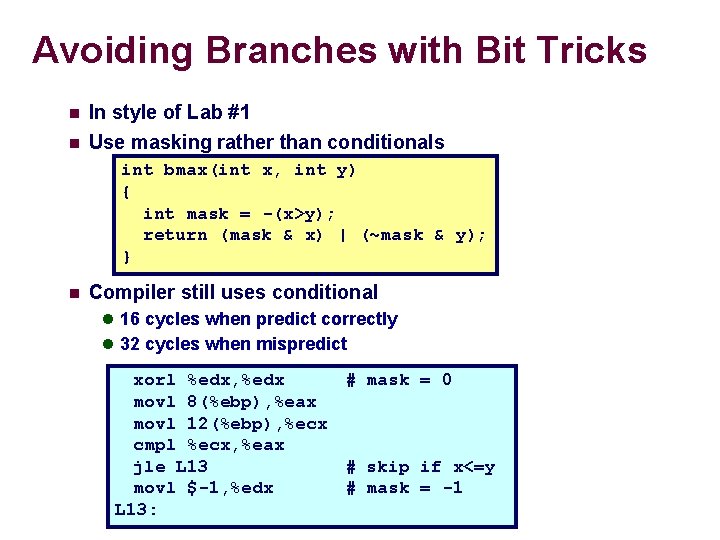

Avoiding Branches with Bit Tricks

- In style of Lab #1
- Use masking rather than conditionals

    int bmax(int x, int y)
    {
        int mask = -(x > y);
        return (mask & x) | (~mask & y);
    }

- Compiler still uses conditional
  - 16 cycles when predicted correctly
  - 32 cycles when mispredicted

    xorl %edx,%edx           # mask = 0
    movl 8(%ebp),%eax
    movl 12(%ebp),%ecx
    cmpl %ecx,%eax
    jle L13                  # skip if x<=y
    movl $-1,%edx            # mask = -1
    L13:

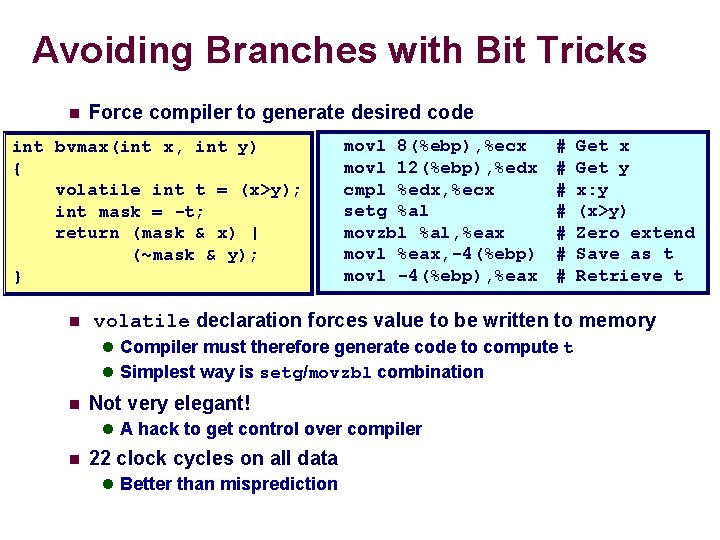

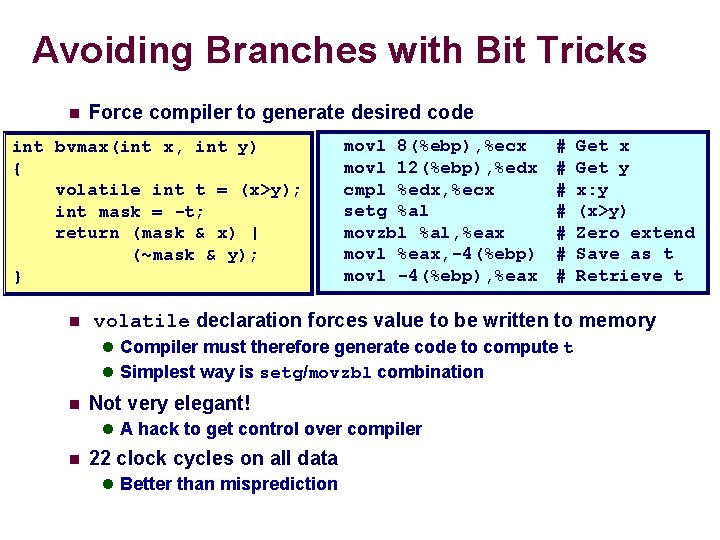

Avoiding Branches with Bit Tricks

- Force compiler to generate desired code

    int bvmax(int x, int y)
    {
        volatile int t = (x > y);
        int mask = -t;
        return (mask & x) | (~mask & y);
    }

    movl 8(%ebp),%ecx        # Get x
    movl 12(%ebp),%edx       # Get y
    cmpl %edx,%ecx           # x:y
    setg %al                 # (x>y)
    movzbl %al,%eax          # Zero extend
    movl %eax,-4(%ebp)       # Save as t
    movl -4(%ebp),%eax       # Retrieve t

- volatile declaration forces value to be written to memory
  - Compiler must therefore generate code to compute t
  - Simplest way is setg/movzbl combination
- Not very elegant!
  - A hack to get control over compiler
- 22 clock cycles on all data
  - Better than misprediction
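Since the three versions of max are meant to be interchangeable, a brute-force comparison over a small range is a cheap sanity check before timing them. The sketch below restates the three functions under different names and adds a checker. The names `max_branch`, `max_mask`, `max_volatile`, and `max_variants_agree` are hypothetical, and the mask trick assumes a two's-complement `int`, where -1 has all bits set.

```c
/* Conditional-expression version (same logic as max on the slides). */
int max_branch(int x, int y)
{
    return (x < y) ? y : x;
}

/* Mask version (same logic as bmax): mask is -1 when x > y, else 0. */
int max_mask(int x, int y)
{
    int mask = -(x > y);
    return (mask & x) | (~mask & y);
}

/* Volatile version (same logic as bvmax). */
int max_volatile(int x, int y)
{
    volatile int t = (x > y);
    int mask = -t;
    return (mask & x) | (~mask & y);
}

/* Returns 1 if all three versions agree for every pair (x, y)
   with lo <= x, y <= hi, and 0 otherwise. */
int max_variants_agree(int lo, int hi)
{
    for (int x = lo; x <= hi; x++) {
        for (int y = lo; y <= hi; y++) {
            int expected = max_branch(x, y);
            if (max_mask(x, y) != expected ||
                max_volatile(x, y) != expected)
                return 0;
        }
    }
    return 1;
}
```

A range that spans negative values, zero, and positive values exercises both mask states and the equal-operands case.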

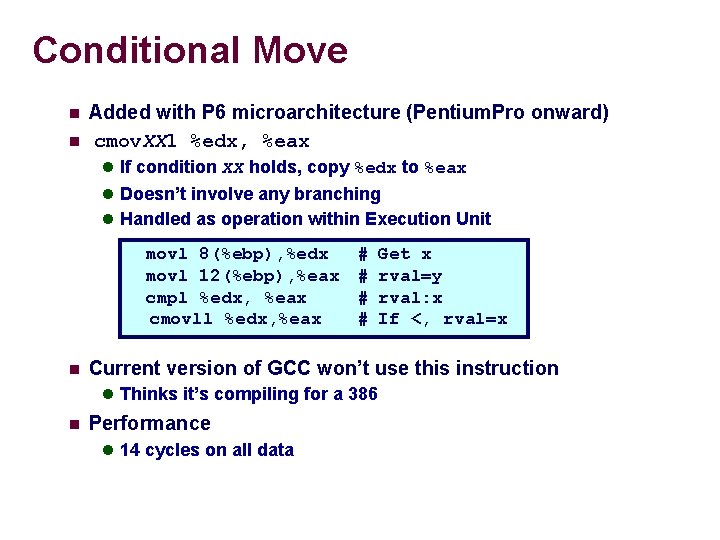

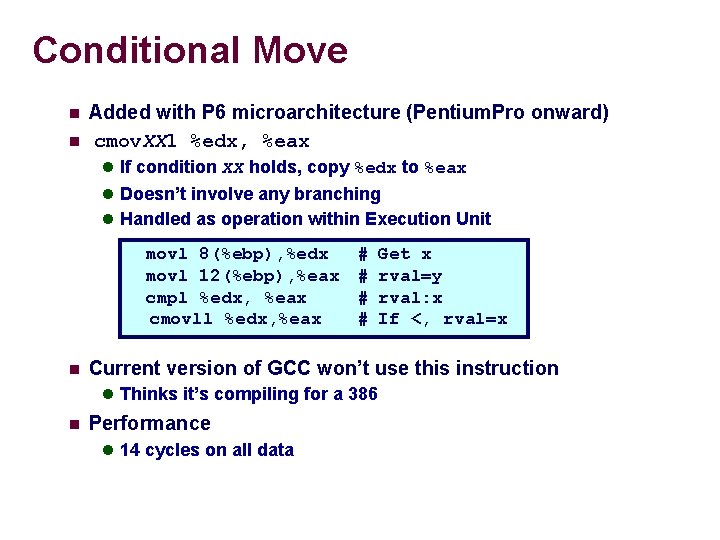

Conditional Move

- Added with P6 microarchitecture (PentiumPro onward)
- cmovXXl %edx, %eax
  - If condition XX holds, copy %edx to %eax
  - Doesn't involve any branching
  - Handled as operation within Execution Unit

    movl 8(%ebp),%edx        # Get x
    movl 12(%ebp),%eax       # rval=y
    cmpl %edx,%eax           # rval:x
    cmovll %edx,%eax         # If <, rval=x

- Current version of GCC won't use this instruction
  - Thinks it's compiling for a 386
- Performance
  - 14 cycles on all data

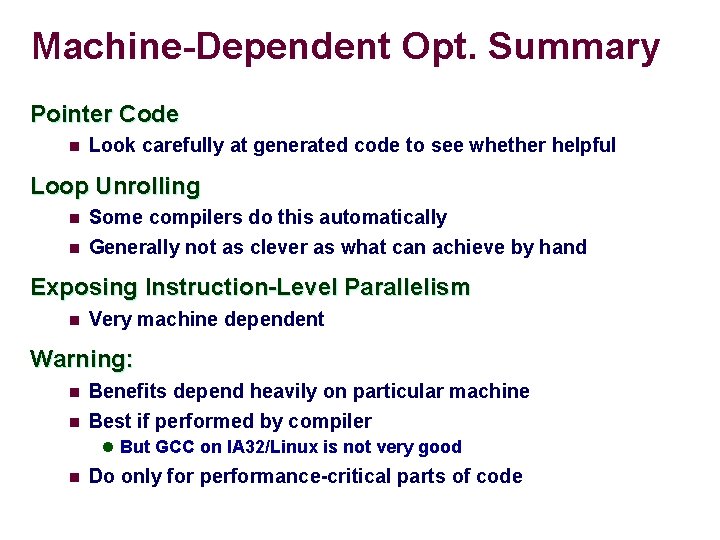

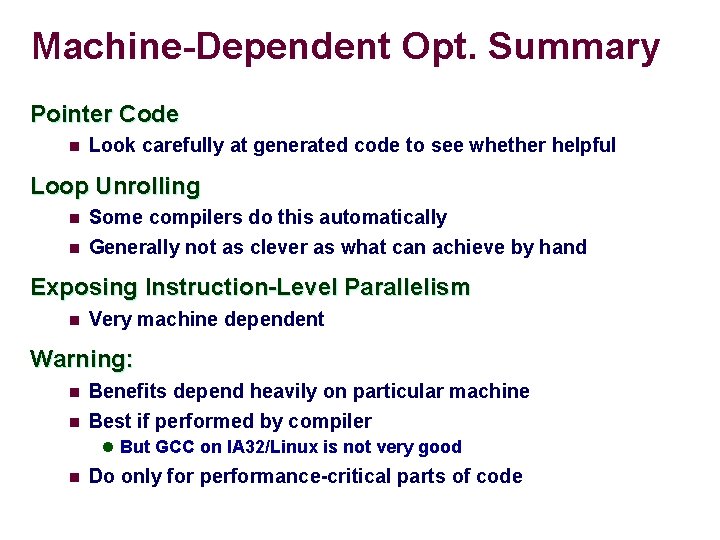

Machine-Dependent Opt. Summary

Pointer Code

- Look carefully at generated code to see whether helpful

Loop Unrolling

- Some compilers do this automatically
- Generally not as clever as what can be achieved by hand

Exposing Instruction-Level Parallelism

- Very machine dependent

Warning:

- Benefits depend heavily on particular machine
- Best if performed by compiler
  - But GCC on IA32/Linux is not very good
- Do only for performance-critical parts of code

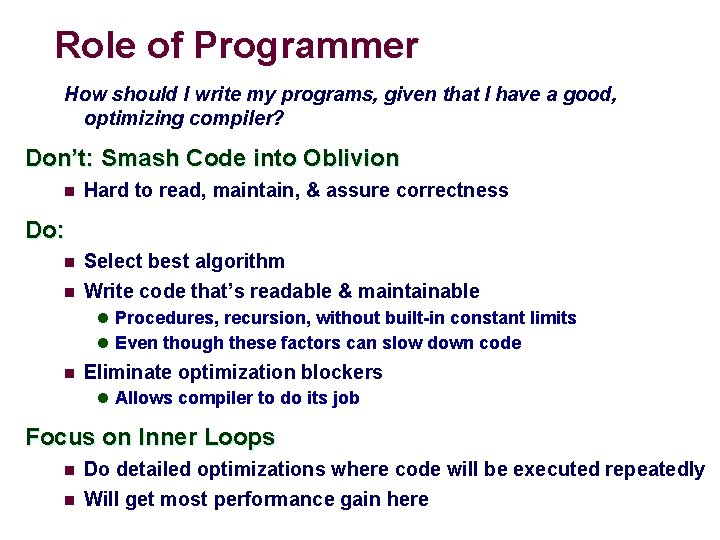

Role of Programmer

How should I write my programs, given that I have a good, optimizing compiler?

Don't: Smash Code into Oblivion

- Hard to read, maintain, & assure correctness

Do:

- Select best algorithm
- Write code that's readable & maintainable
  - Procedures, recursion, without built-in constant limits
  - Even though these factors can slow down code
- Eliminate optimization blockers
  - Allows compiler to do its job

Focus on Inner Loops

- Do detailed optimizations where code will be executed repeatedly
- Will get most performance gain here