BIOINFORMATICS Lecture 5 Hidden Markov Model Dr Aladdin

BIOINFORMATICS
Lecture 5: Hidden Markov Model
Dr. Aladdin Hamwieh, Khalid Al-shamaa, Abdulqader Jighly
Aleppo University, Faculty of Technical Engineering, Department of Biotechnology
2010-2011

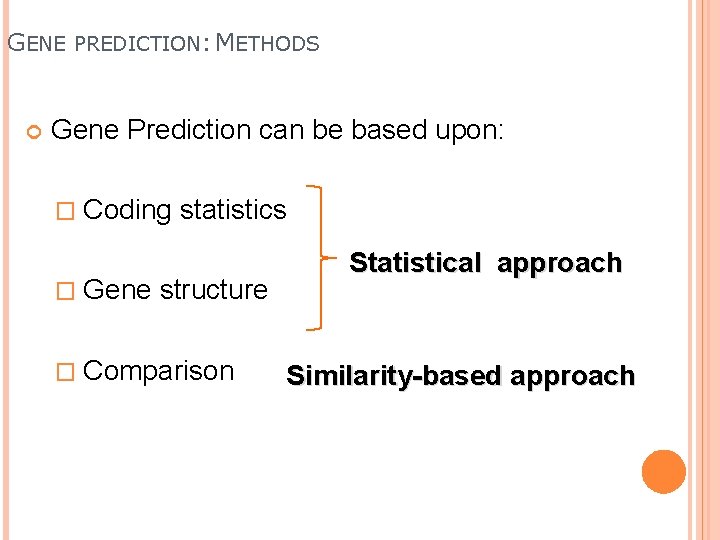

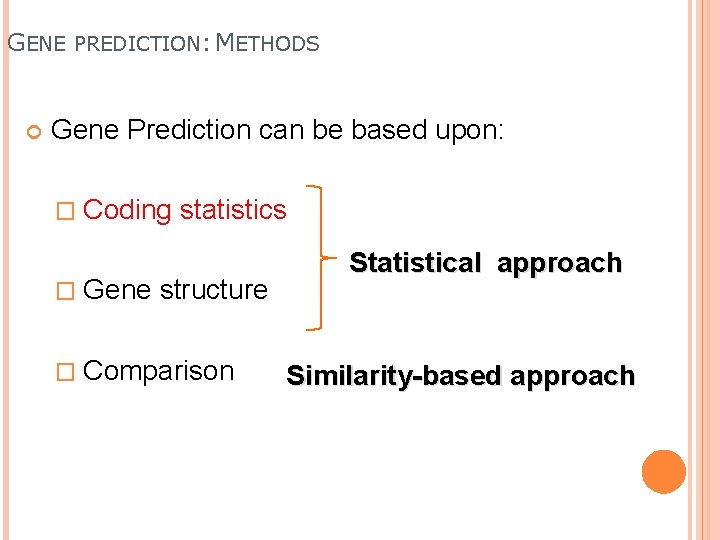

GENE PREDICTION: METHODS
Gene prediction can be based upon:
- Coding statistics (statistical approach)
- Gene structure (statistical approach)
- Comparison (similarity-based approach)

GENE PREDICTION: CODING STATISTICS
Coding regions of a sequence have different properties from non-coding regions; in particular, coding regions show non-random properties:
- CG content
- Codon bias (codon usage)
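As a minimal sketch of the two coding statistics named above, the following Python computes the CG content of a sequence and counts its codons in one reading frame. The function names and the example sequence are made up for illustration; real gene finders compare such statistics against values learned from known coding and non-coding regions.

```python
from collections import Counter


def cg_content(seq):
    """Return the fraction of bases in seq that are C or G."""
    seq = seq.upper()
    if not seq:
        return 0.0
    return (seq.count("C") + seq.count("G")) / len(seq)


def codon_usage(seq):
    """Count non-overlapping codons in reading frame 0.

    A trailing partial codon (1 or 2 bases) is ignored.
    """
    seq = seq.upper()
    usable = len(seq) - len(seq) % 3
    return Counter(seq[i:i + 3] for i in range(0, usable, 3))


# Hypothetical example sequence, not taken from the lecture.
example = "ATGGCGGCGTTTTAG"
print(cg_content(example))
print(codon_usage(example))
```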

MARKOV MODEL

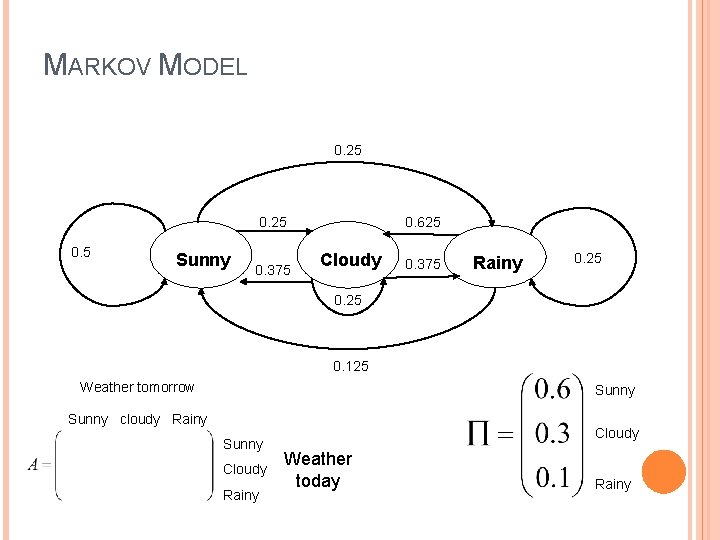

MARKOV MODEL
A Markov model is a process that moves from state to state, where the next state depends only on the previous n states. For example, we can calculate the probability of observing this sequence of weather states during one week in March: Sunny, Cloudy, Rainy, Sunny, Cloudy.
- If today is Cloudy, Rainy is the more likely weather for tomorrow.
- In March, a week is more likely to start with a Sunny day than with any other weather.
- And so on.

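MARKOV MODEL
(Figure: state-transition diagram and table for the weather model, with the states Sunny, Cloudy and Rainy; the table gives "weather tomorrow" against "weather today". Probabilities legible in the figure: 0.25, 0.5, 0.375, 0.625, 0.375, 0.25, 0.125.)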

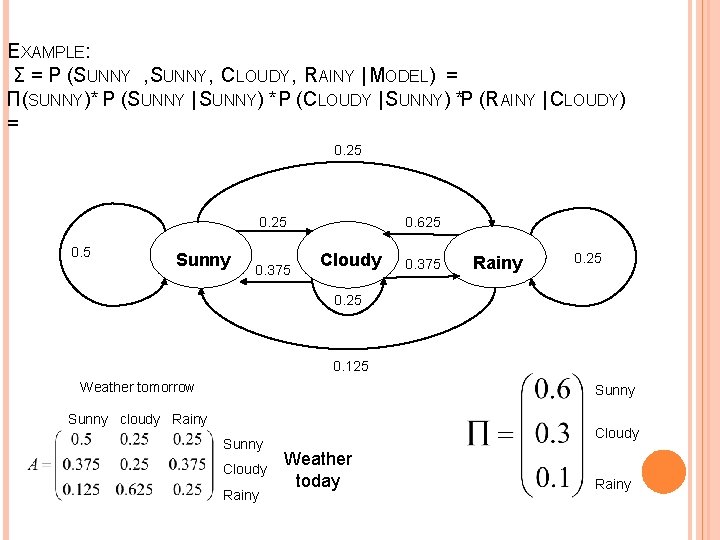

EXAMPLE: for the sequence Σ = (Sunny, Sunny, Cloudy, Rainy),

$$P(\Sigma \mid \text{Model}) = \Pi(\text{Sunny}) \cdot P(\text{Sunny} \mid \text{Sunny}) \cdot P(\text{Cloudy} \mid \text{Sunny}) \cdot P(\text{Rainy} \mid \text{Cloudy}) = 0.6 \times 0.5 \times 0.25 \times 0.375 \approx 0.0281$$
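The product in the weather example can be checked with a short sketch. Only the probabilities quoted in the example are used; the rest of the transition table is left out rather than guessed, and the function name is an illustrative choice.

```python
# Probabilities quoted in the example; other entries are deliberately omitted.
initial = {"Sunny": 0.6}
transition = {
    ("Sunny", "Sunny"): 0.5,    # P(Sunny tomorrow | Sunny today)
    ("Sunny", "Cloudy"): 0.25,  # P(Cloudy tomorrow | Sunny today)
    ("Cloudy", "Rainy"): 0.375, # P(Rainy tomorrow | Cloudy today)
}


def sequence_probability(states, initial, transition):
    """Probability of a state sequence under a first-order Markov model."""
    prob = initial[states[0]]
    for today, tomorrow in zip(states, states[1:]):
        prob *= transition[(today, tomorrow)]
    return prob


p = sequence_probability(["Sunny", "Sunny", "Cloudy", "Rainy"], initial, transition)
print(round(p, 4))
```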

HIDDEN MARKOV MODELS
- States are not observable.
- Observations are probabilistic functions of the state.
- State transitions are still probabilistic.

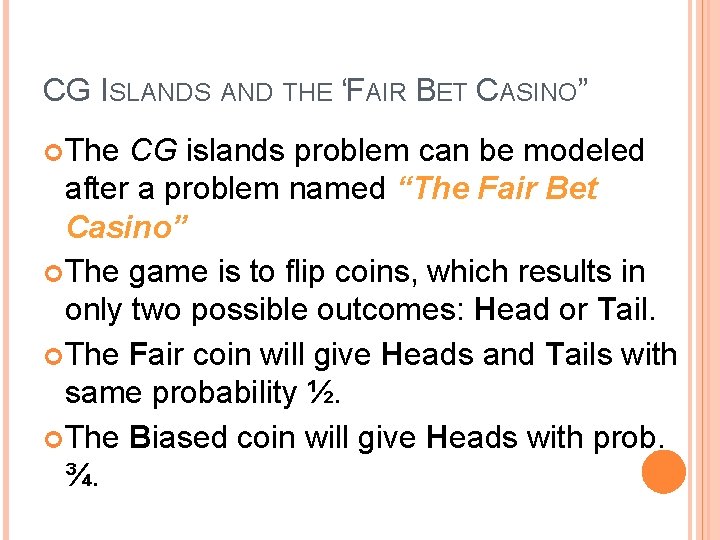

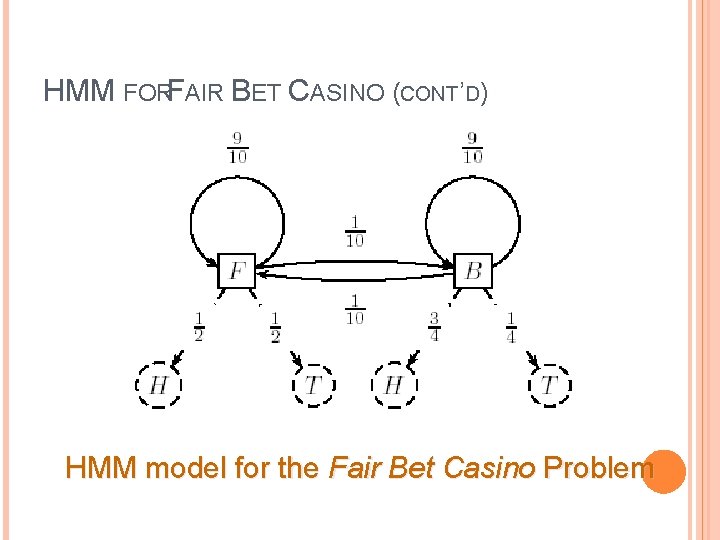

CG ISLANDS AND THE “FAIR BET CASINO”
- The CG islands problem can be modeled after a problem named “The Fair Bet Casino”.
- The game is to flip coins, which results in only two possible outcomes: Head or Tail.
- The Fair coin gives Heads and Tails with the same probability ½.
- The Biased coin gives Heads with probability ¾.

THE “FAIR BET CASINO” (CONT’D)
Thus, we define the probabilities:
- P(H|F) = P(T|F) = ½
- P(H|B) = ¾, P(T|B) = ¼
- The crooked dealer changes between the Fair and Biased coins with probability 10%.

HMM FOR FAIR BET CASINO (CONT’D)
HMM model for the Fair Bet Casino Problem

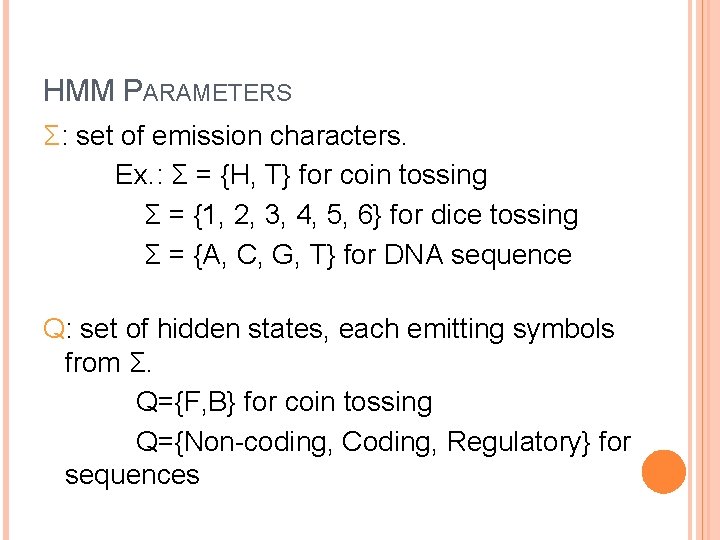

HMM PARAMETERS
Σ: set of emission characters. Examples:
- Σ = {H, T} for coin tossing
- Σ = {1, 2, 3, 4, 5, 6} for dice tossing
- Σ = {A, C, G, T} for DNA sequence

Q: set of hidden states, each emitting symbols from Σ. Examples:
- Q = {F, B} for coin tossing
- Q = {Non-coding, Coding, Regulatory} for sequences

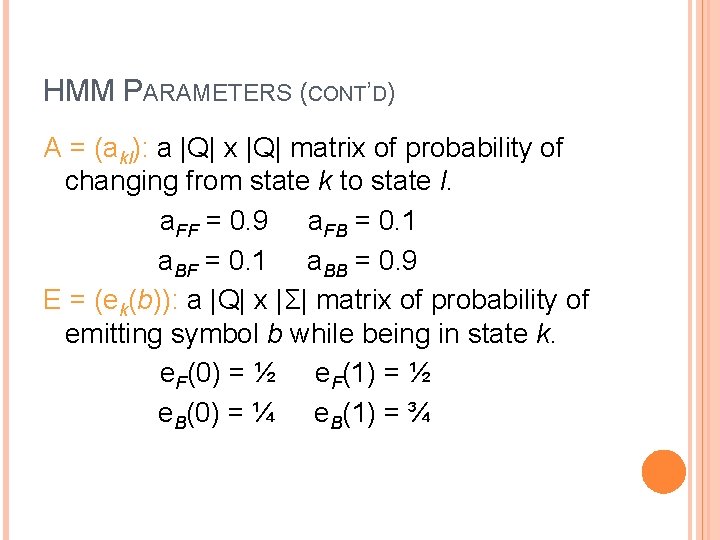

HMM PARAMETERS (CONT’D)
$A = (a_{kl})$: a $|Q| \times |Q|$ matrix giving the probability of changing from state k to state l.
- $a_{FF} = 0.9$, $a_{FB} = 0.1$
- $a_{BF} = 0.1$, $a_{BB} = 0.9$

$E = (e_k(b))$: a $|Q| \times |\Sigma|$ matrix giving the probability of emitting symbol b while in state k. Here the symbols 0 and 1 stand for Tails and Heads, which matches P(H|B) = ¾.
- $e_F(0) = ½$, $e_F(1) = ½$
- $e_B(0) = ¼$, $e_B(1) = ¾$
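The Fair Bet Casino parameters can be written down directly as Python dictionaries. The sketch below also computes the joint probability of a sequence of tosses together with one particular sequence of coins. The initial distribution is not given on the slides, so a 50/50 start is assumed here purely for illustration, and the function name is hypothetical.

```python
STATES = ("F", "B")  # Fair coin, Biased coin

# ASSUMPTION: the slides do not state the initial distribution; 50/50 is a guess.
START = {"F": 0.5, "B": 0.5}

# Transition matrix A: TRANS[k][l] = probability of moving from state k to state l.
TRANS = {"F": {"F": 0.9, "B": 0.1},
         "B": {"F": 0.1, "B": 0.9}}

# Emission matrix E: EMIT[k][b] = probability of emitting b in state k (0 = Tails, 1 = Heads).
EMIT = {"F": {0: 0.5, 1: 0.5},
        "B": {0: 0.25, 1: 0.75}}


def joint_probability(obs, path):
    """P(obs, path | model) for one specific hidden-state path."""
    prob = START[path[0]] * EMIT[path[0]][obs[0]]
    for t in range(1, len(obs)):
        prob *= TRANS[path[t - 1]][path[t]] * EMIT[path[t]][obs[t]]
    return prob


# Two Heads in a row, both tossed with the Biased coin.
print(joint_probability([1, 1], ["B", "B"]))
```

Each row of `TRANS` and `EMIT` sums to 1, which is a quick sanity check worth running whenever the parameters are edited.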

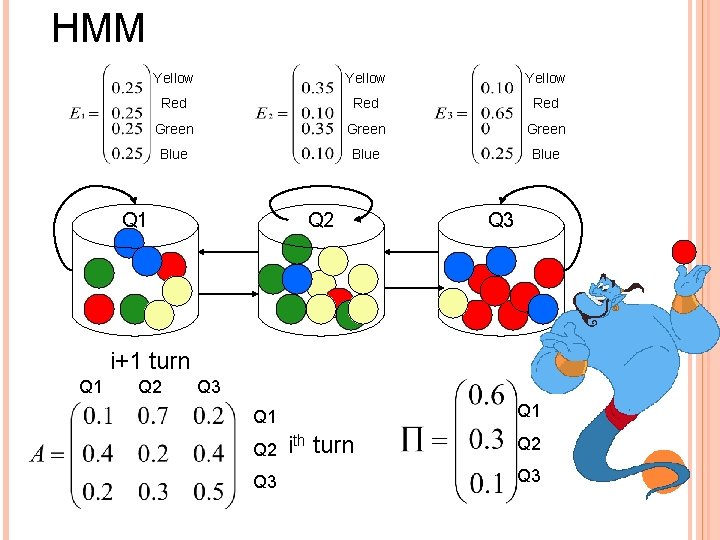

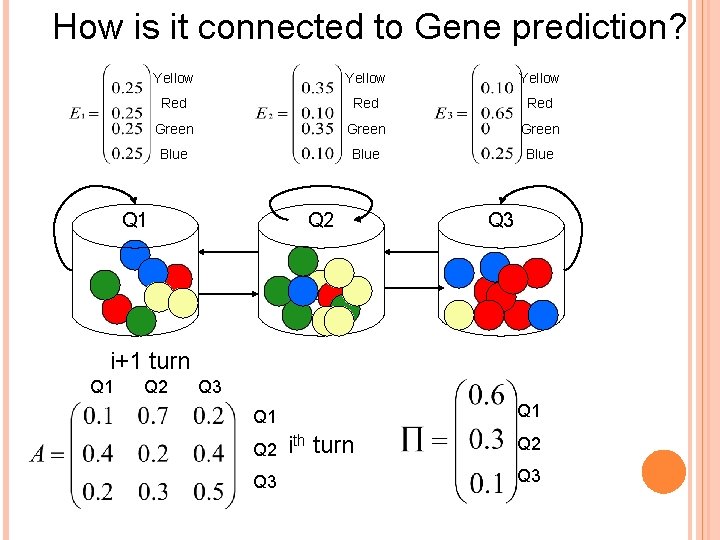

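HMM
(Figure: example HMM with three hidden states Q1, Q2 and Q3 and the observed colours Yellow, Red, Green and Blue, together with a table of transitions from the state at the i-th turn to the state at the (i+1)-th turn.)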

The three Basic problems of HMMs
- Problem 1: Given an observation sequence $\Sigma = O_1 O_2 \dots O_T$ and a model $M = (\Pi, A, E)$, compute $P(\Sigma \mid M)$.
- Problem 2: Given an observation sequence $\Sigma = O_1 O_2 \dots O_T$ and a model $M = (\Pi, A, E)$, how do we choose a corresponding state sequence $Q = q_1 q_2 \dots q_T$ that best “explains” the observations?
- Problem 3: How do we adjust the model parameters $\Pi$, $A$, $E$ to maximize $P(\Sigma \mid \{\Pi, A, E\})$?

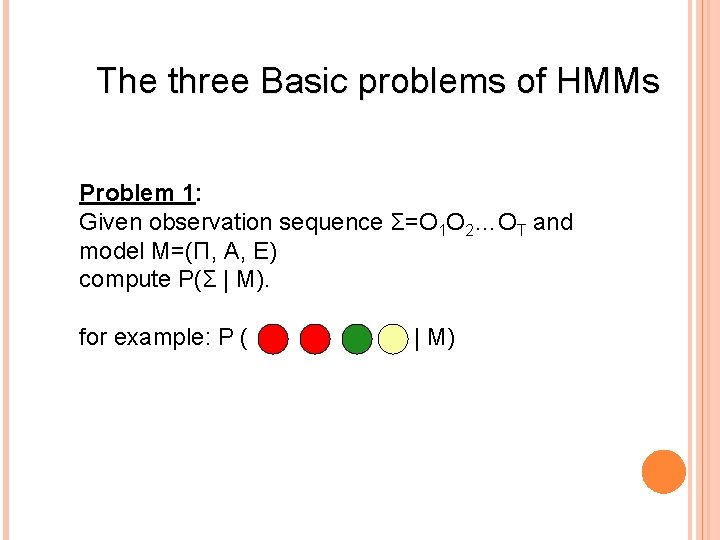

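The three Basic problems of HMMs
Problem 1: Given an observation sequence $\Sigma = O_1 O_2 \dots O_T$ and a model $M = (\Pi, A, E)$, compute $P(\Sigma \mid M)$. For example: P(observed sequence | M), with the observed sequence given in the figure.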

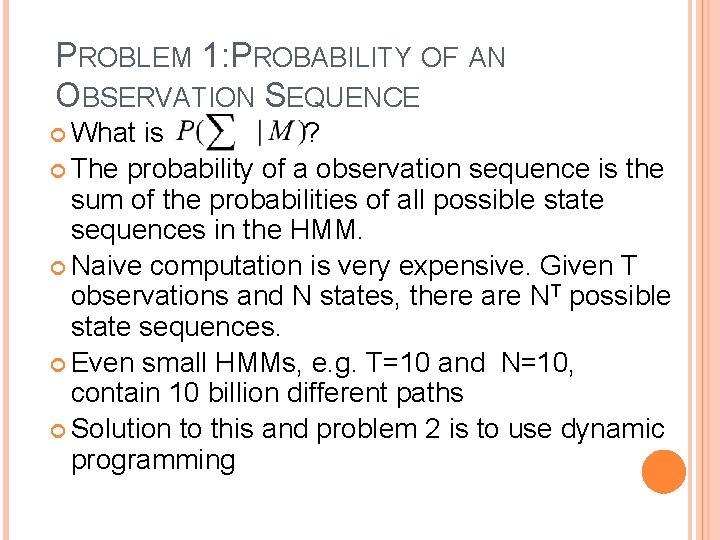

PROBLEM 1: PROBABILITY OF AN OBSERVATION SEQUENCE
- What is $P(\Sigma \mid M)$?
- The probability of an observation sequence is the sum, over all possible state sequences in the HMM, of the probability of producing the observations along that state sequence.
- Naive computation is very expensive. Given T observations and N states, there are $N^T$ possible state sequences.
- Even small HMMs, e.g. T = 10 and N = 10, contain 10 billion different paths.
- The solution to this problem and to Problem 2 is to use dynamic programming.
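To make the cost argument concrete, the sketch below computes the probability of an observation sequence twice for the Fair Bet Casino model: once by enumerating all $N^T$ state paths, and once with the forward recursion, a standard dynamic-programming solution that needs on the order of $N^2 T$ operations. The initial distribution is an assumption (the slides do not give one), and the function names are illustrative.

```python
from itertools import product

STATES = ("F", "B")
START = {"F": 0.5, "B": 0.5}  # ASSUMPTION: not given on the slides
TRANS = {"F": {"F": 0.9, "B": 0.1}, "B": {"F": 0.1, "B": 0.9}}
EMIT = {"F": {0: 0.5, 1: 0.5}, "B": {0: 0.25, 1: 0.75}}  # 0 = Tails, 1 = Heads


def brute_force(obs):
    """Sum the joint probability over every one of the N**T state paths."""
    total = 0.0
    for path in product(STATES, repeat=len(obs)):
        p = START[path[0]] * EMIT[path[0]][obs[0]]
        for t in range(1, len(obs)):
            p *= TRANS[path[t - 1]][path[t]] * EMIT[path[t]][obs[t]]
        total += p
    return total


def forward(obs):
    """Forward recursion: alpha[l] = P(observations so far, current state = l)."""
    alpha = {k: START[k] * EMIT[k][obs[0]] for k in STATES}
    for x in obs[1:]:
        alpha = {l: EMIT[l][x] * sum(alpha[k] * TRANS[k][l] for k in STATES)
                 for l in STATES}
    return sum(alpha.values())


obs = [1, 1, 0, 1, 1, 1]  # hypothetical tosses
print(brute_force(obs), forward(obs))
```

Both functions return the same value up to floating-point rounding; only the amount of work differs, and the gap grows exponentially with the length of the observation sequence.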

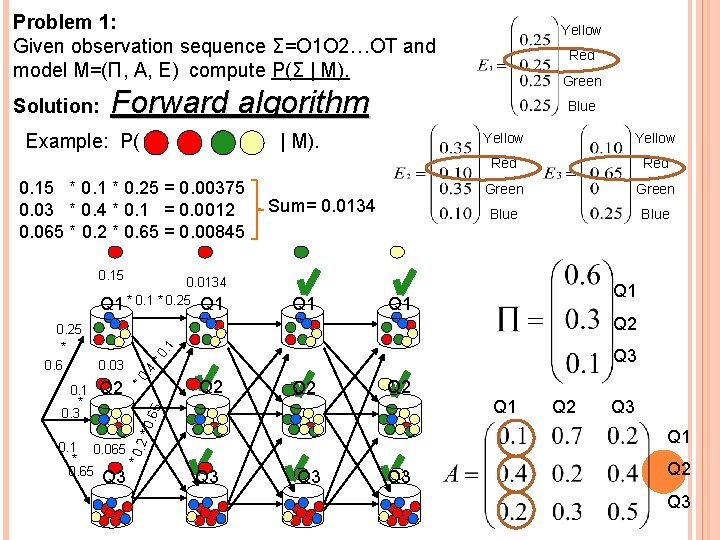

Problem 1: Given an observation sequence $\Sigma = O_1 O_2 \dots O_T$ and a model $M = (\Pi, A, E)$, compute $P(\Sigma \mid M)$.
Solution: Forward algorithm.
Example: P(Yellow, Red, Green, Blue | M). The three terms are summed:
- 0.15 * 0.1 * 0.25 = 0.00375
- 0.03 * 0.4 * 0.1 = 0.0012
- 0.065 * 0.2 * 0.65 = 0.00845

Sum = 0.0134

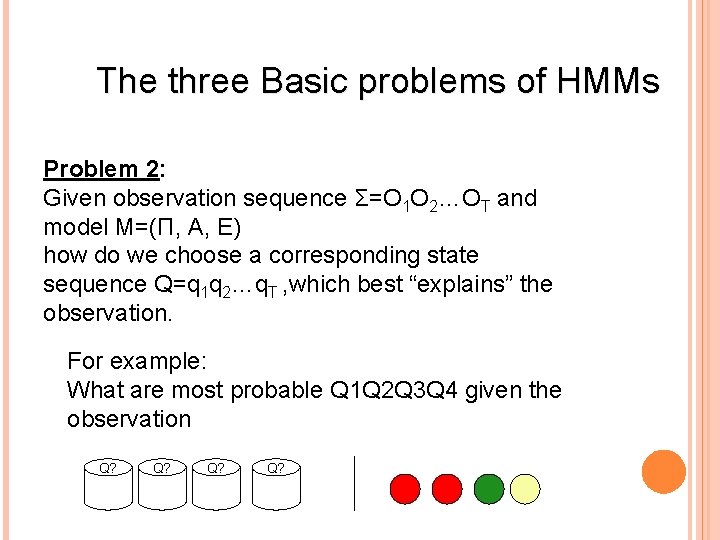

The three Basic problems of HMMs
Problem 2: Given an observation sequence $\Sigma = O_1 O_2 \dots O_T$ and a model $M = (\Pi, A, E)$, how do we choose a corresponding state sequence $Q = q_1 q_2 \dots q_T$ that best “explains” the observations? For example: what are the most probable states $q_1 q_2 q_3 q_4$ given the observation?

PROBLEM 2: DECODING The solution to Problem 1 gives us the sum of all paths through an HMM efficiently. For Problem 2, we want to find the path with the highest probability.
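Decoding is commonly solved with the Viterbi algorithm, which has the same structure as the forward recursion but replaces the sum with a maximum and remembers which predecessor gave it. The slides do not name the algorithm, so treat the following as a sketch on the Fair Bet Casino model, again with an assumed 50/50 initial distribution.

```python
STATES = ("F", "B")
START = {"F": 0.5, "B": 0.5}  # ASSUMPTION: not given on the slides
TRANS = {"F": {"F": 0.9, "B": 0.1}, "B": {"F": 0.1, "B": 0.9}}
EMIT = {"F": {0: 0.5, 1: 0.5}, "B": {0: 0.25, 1: 0.75}}  # 0 = Tails, 1 = Heads


def viterbi(obs):
    """Return (most probable state path, its joint probability with obs)."""
    # best[k] = probability of the best path that ends in state k so far.
    best = {k: START[k] * EMIT[k][obs[0]] for k in STATES}
    backpointers = []
    for x in obs[1:]:
        step, new_best = {}, {}
        for l in STATES:
            prev = max(STATES, key=lambda k: best[k] * TRANS[k][l])
            step[l] = prev
            new_best[l] = best[prev] * TRANS[prev][l] * EMIT[l][x]
        backpointers.append(step)
        best = new_best
    # Trace back from the best final state.
    last = max(STATES, key=lambda k: best[k])
    path = [last]
    for step in reversed(backpointers):
        path.append(step[path[-1]])
    path.reverse()
    return path, best[last]


print(viterbi([1, 1, 1, 1]))  # a run of Heads
print(viterbi([0, 0, 0, 0]))  # a run of Tails
```

For long sequences the products underflow, so practical implementations usually work with sums of log-probabilities instead.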

Example: P(Yellow, Red, Green, Blue | M). Instead of summing the three terms, keep the largest one:
- 0.15 * 0.1 * 0.25 = 0.00375
- 0.03 * 0.4 * 0.1 = 0.0012
- 0.065 * 0.2 * 0.65 = 0.00845 (THE LARGEST)

HIDDEN MARKOV MODEL AND GENE PREDICTION

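How is it connected to Gene prediction?
(Figure: the colour example again, with hidden states Q1, Q2 and Q3, the observed colours Yellow, Red, Green and Blue, and the transition table from the i-th turn to the (i+1)-th turn.)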

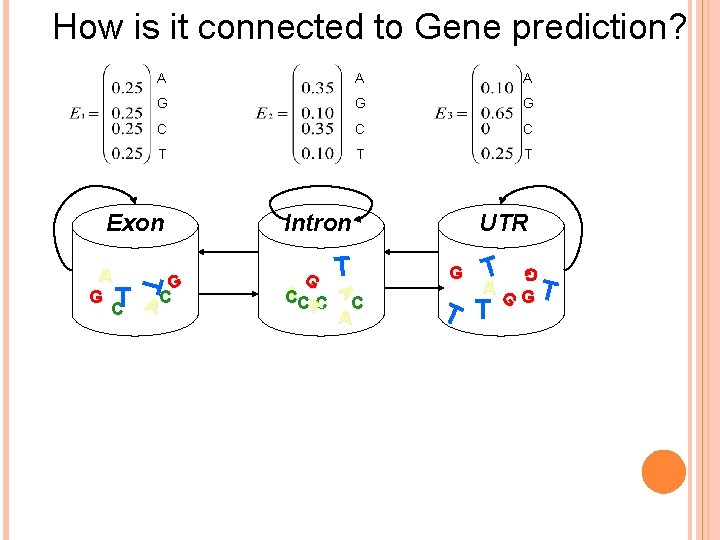

How is it connected to Gene prediction?
(Figure: the same kind of model drawn for DNA. The hidden states are the regions UTR, Exon and Intron, and the observed symbols are the nucleotides A, C, G and T.)

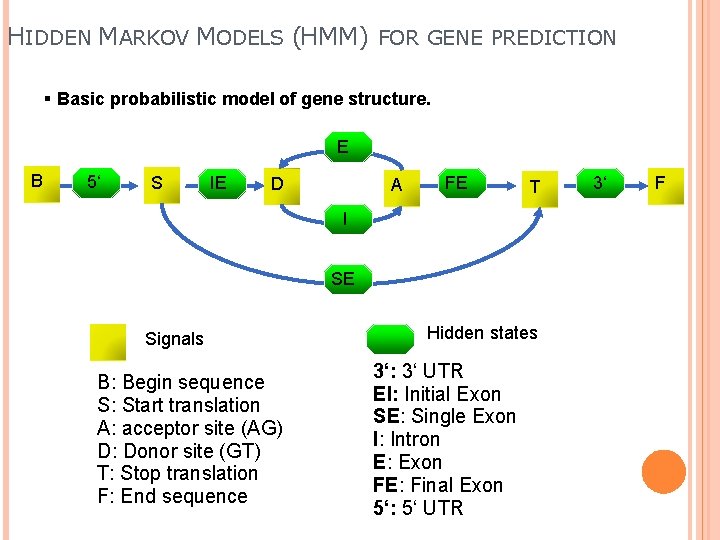

HIDDEN MARKOV MODELS (HMM) FOR GENE PREDICTION
Basic probabilistic model of gene structure.

Signals:
- B: Begin sequence
- S: Start translation
- A: Acceptor site (AG)
- D: Donor site (GT)
- T: Stop translation
- F: End sequence

Hidden states:
- 5': 5' UTR
- EI: Initial Exon (labelled IE in the diagram)
- E: Exon
- I: Intron
- FE: Final Exon
- SE: Single Exon
- 3': 3' UTR

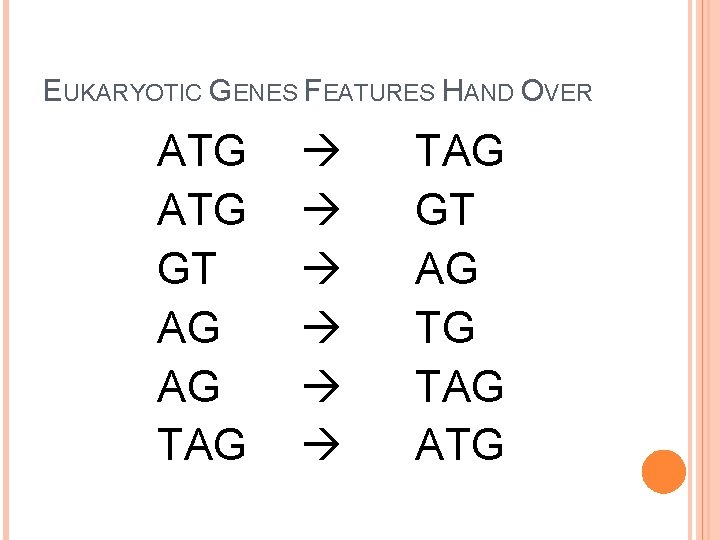

EUKARYOTIC GENES FEATURES HAND OVER
(Figure: eukaryotic gene structure annotated with the sequence signals ATG, GT, AG and TAG.)

THANK YOU
