Bioinformatics Hidden Markov Models Markov Random Processes n

Bioinformatics Hidden Markov Models

Markov Random Processes n n n A random sequence has the Markov property if its distribution is determined solely by its current state. Any random process having this property is called a Markov random process. For observable state sequences (state is known from data), this leads to a Markov chain model. For non-observable states, this leads to a Hidden Markov Model (HMM).

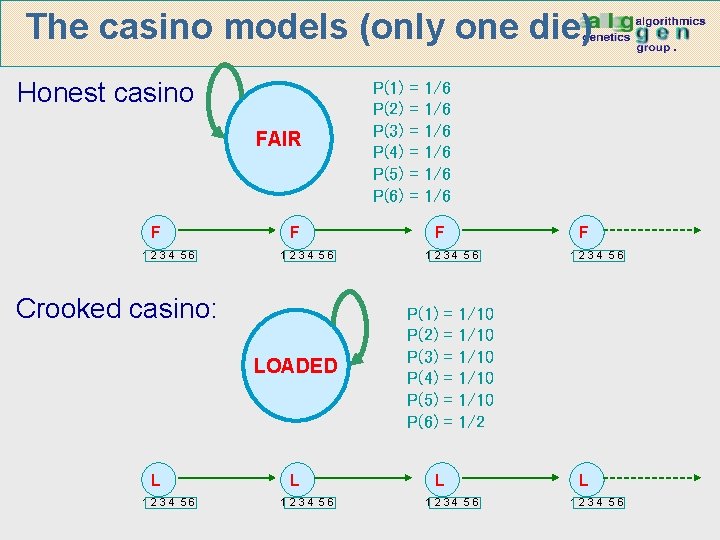

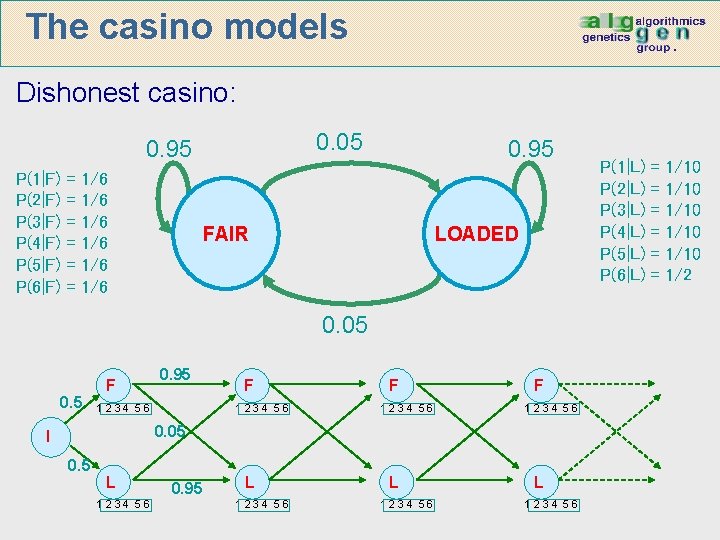

The casino models Game: You bet $1 You roll (always with a fair die) Casino player rolls (maybe with fair die, maybe with loaded die) Highest number wins $1 Honest casino: it has one dice: n Fair die: P(1) = P(2) = P(3) = P(5) =P(6) = 1/6 Crooked casino: it has one dice: n Loaded die: P(1) = P(2) = … = P(5) = 1/10 P(6) = 1/2 Dishonest casino: it has two dice: n Fair die: P(1) = P(2) = P(3) = P(5) =P(6) = 1/6 n Loaded die: P(1) = P(2) = … = P(5) = 1/10 P(6) = 1/2 Casino player approximately switches back-&-forth between fair and loaded die once every 20 turns

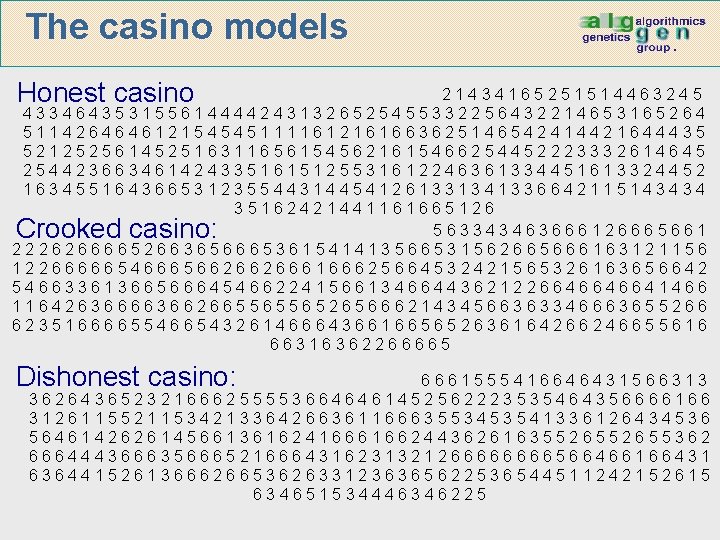

The casino models Honest casino 21434165251514463245 4334643531556144442431326525455332256432214653165264 5114264646121545451111612161663625146542414421644435 5212525614525163116561545621615466254452223332614645 2544236634614243351615125531612246361334451613324452 1634551643665312355443144541261331341336642115143434 35162421441161665126 563343463666126665661 22262666652663656665361541413566531562665666163121156 12266666546665662662666166625664532421565326163656642 54663361366566645466224156613466443621226646646641466 11642636666366266556556526566621434566363346663655266 62351666655466543261466643661665652636164266246655616 66316362266665 Crooked casino: Dishonest casino: 6661555416646431566313 3626436523216662555536646461452562223535464356666166 3126115521153421336426636116663553453541336126434536 564614262614566136162416661662443626163552655362 666444366635666521666431623132126666566466166431 6364415261366626653626331236365622536544511242152615 634651534446346225

The casino models (only one die) Honest casino FAIR F 1234 56 Crooked casino: LOADED L 1234 56 P(1) P(2) P(3) P(4) P(5) P(6) = = = 1/6 1/6 1/6 F F 1234 56 P(1) P(2) P(3) P(4) P(5) P(6) = = = 1234 56 1/10 1/10 1/2 L 1234 56

The casino models Dishonest casino: 0. 05 0. 95 P(1|F) P(2|F) P(3|F) P(4|F) P(5|F) P(6|F) = = = 1/6 1/6 1/6 0. 95 FAIR LOADED 0. 05 0. 5 F 0. 95 1234 56 F 1234 56 0. 05 I 0. 5 L 1234 56 0. 95 L 1234 56 P(1|L) P(2|L) P(3|L) P(4|L) P(5|L) P(6|L) = = = 1/10 1/10 1/2

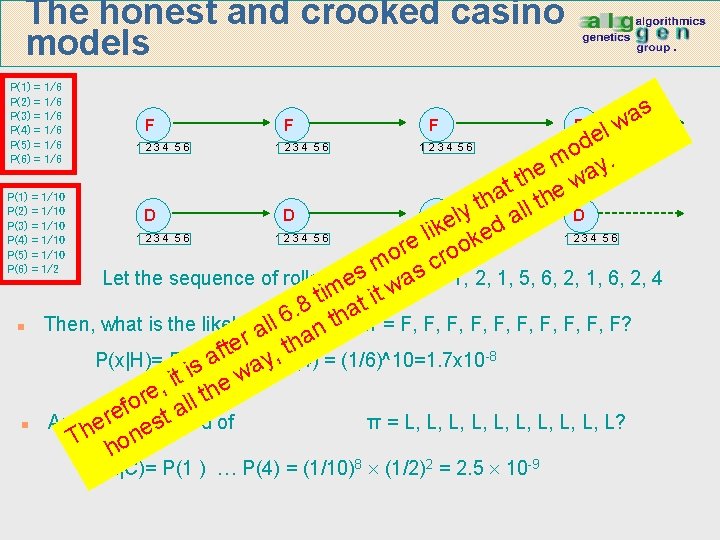

The honest and crooked casino models P(1) P(2) P(3) P(4) P(5) P(6) = = = 1/6 1/6 1/6 F F s a w l de o m y. e a th w t a the P(1) = 1/10 h t P(2) = 1/10 l l D D D ly D a e P(3) = 1/10 d k 1234 56 1 l 2 i 3 4 5 6 e 1234 56 P(4) = 1/10 k e o r P(5) = 1/10 o cro m P(6) = 1/2 sx = 1, 2, 1, 5, 6, 2, 1, 6, 2, 4 Let the sequence of rolls be: es a w im t it t. 8 tha 6 n Then, what is the likelyhood ll of n π = F, F, F, F? a er , tha t f a P(x|H)= P(1) P(2) a …y P(4) = (1/6)^10=1. 7 x 10 -8 s i w t i e , e ll th r o f ta e r n And hthe of π = L, L, L, L? s e likelyhood e T hon 8 2 -9 1234 56 P(x|C)= P(1 ) … P(4) = (1/10) (1/2) = 2. 5 10 1234 56

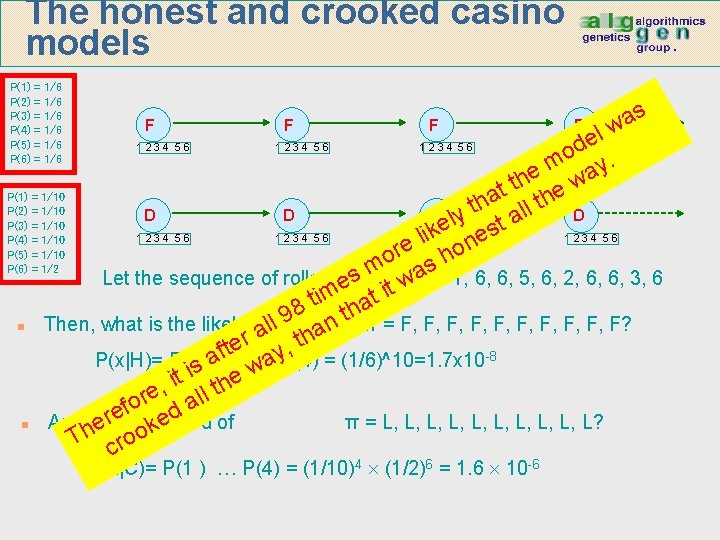

The honest and crooked casino models P(1) P(2) P(3) P(4) P(5) P(6) = = = 1/6 1/6 1/6 F F s a w el d o. m y e a h w t t e P(1) = 1/10 a l th h t P(2) = 1/10 l D D D ly D a P(3) = 1/10 t e 1234 56 1 li 2 k 3 4 5 6 es 1234 56 P(4) = 1/10 n e o r P(5) = 1/10 h o P(6) = 1/2 m axs= 1, 6, 6, 5, 6, 2, 6, 6, 3, 6 s Let the sequence of rolls be: w e it m at it 8 h t 9 l n Then, what is the likelyhood of π = F, F, F, F? n l a a er y, th t f a P(x|H)= P(1) P(2) w …a P(4) = (1/6)^10=1. 7 x 10 -8 s i t e i h , t re all o f ed of n And hthe π = L, L, L, L? erelikelyhood k o T cro 1234 56 P(x|C)= P(1 ) … P(4) = (1/10)4 (1/2)6 = 1. 6 10 -6 1234 56

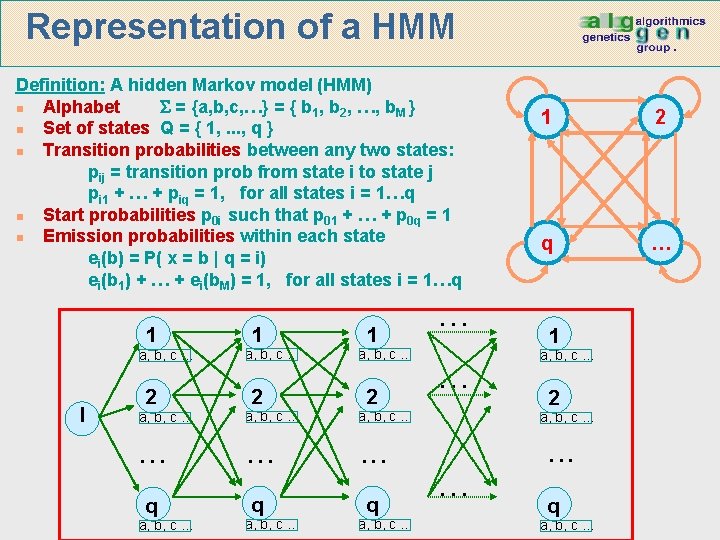

Representation of a HMM Definition: A hidden Markov model (HMM) n Alphabet = {a, b, c, …} = { b 1, b 2, …, b. M } n Set of states Q = { 1, . . . , q } n Transition probabilities between any two states: pij = transition prob from state i to state j pi 1 + … + piq = 1, for all states i = 1…q n Start probabilities p 0 i such that p 01 + … + p 0 q = 1 n Emission probabilities within each state ei(b) = P( x = b | q = i) ei(b 1) + … + ei(b. M) = 1, for all states i = 1…q 1 a, b, c … I 2 1 a, b, c … 2 a, b, c … . . q q q a, b, c … … … 1 2 q … 1 a, b, c … 2 a, b, c … … . . . q a, b, c …

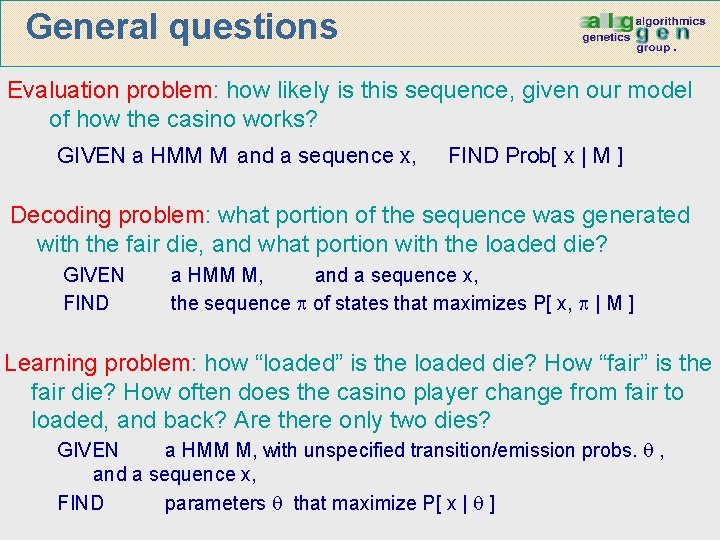

General questions Evaluation problem: how likely is this sequence, given our model of how the casino works? GIVEN a HMM M and a sequence x, FIND Prob[ x | M ] Decoding problem: what portion of the sequence was generated with the fair die, and what portion with the loaded die? GIVEN FIND a HMM M, and a sequence x, the sequence of states that maximizes P[ x, | M ] Learning problem: how “loaded” is the loaded die? How “fair” is the fair die? How often does the casino player change from fair to loaded, and back? Are there only two dies? GIVEN a HMM M, with unspecified transition/emission probs. , and a sequence x, FIND parameters that maximize P[ x | ]

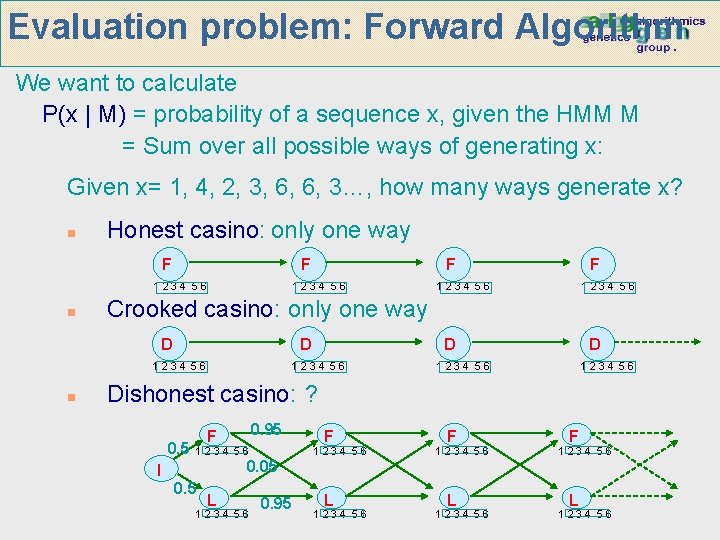

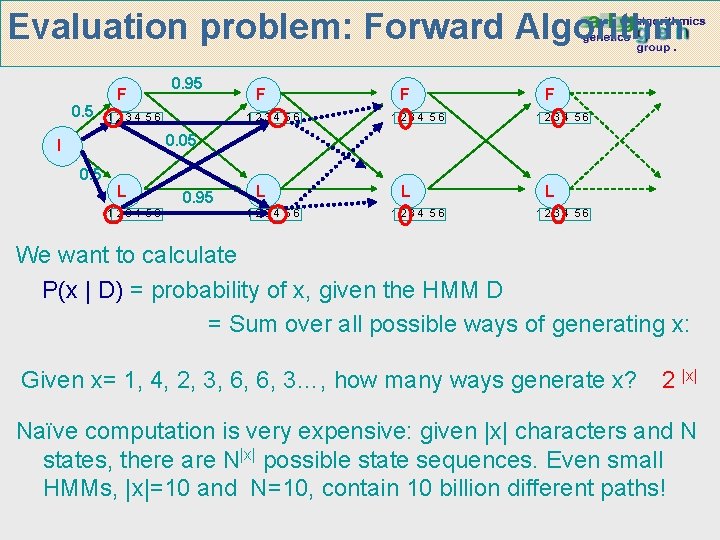

Evaluation problem: Forward Algorithm We want to calculate P(x | M) = probability of a sequence x, given the HMM M = Sum over all possible ways of generating x: Given x= 1, 4, 2, 3, 6, 6, 3…, how many ways generate x? n Honest casino: only one way F F 1234 56 n 1234 56 F 1234 56 Crooked casino: only one way D D 1234 56 n F D 1234 56 Dishonest casino: ? 0. 5 0. 95 F 1234 56 0. 05 I 0. 5 L 1234 56 0. 95 L 1234 56

Evaluation problem: Forward Algorithm 0. 5 F 0. 95 1234 56 F 1234 56 0. 05 I 0. 5 L 1234 56 0. 95 L 1234 56 We want to calculate P(x | D) = probability of x, given the HMM D = Sum over all possible ways of generating x: Given x= 1, 4, 2, 3, 6, 6, 3…, how many ways generate x? 2 |x| Naïve computation is very expensive: given |x| characters and N states, there are N|x| possible state sequences. Even small HMMs, |x|=10 and N=10, contain 10 billion different paths!

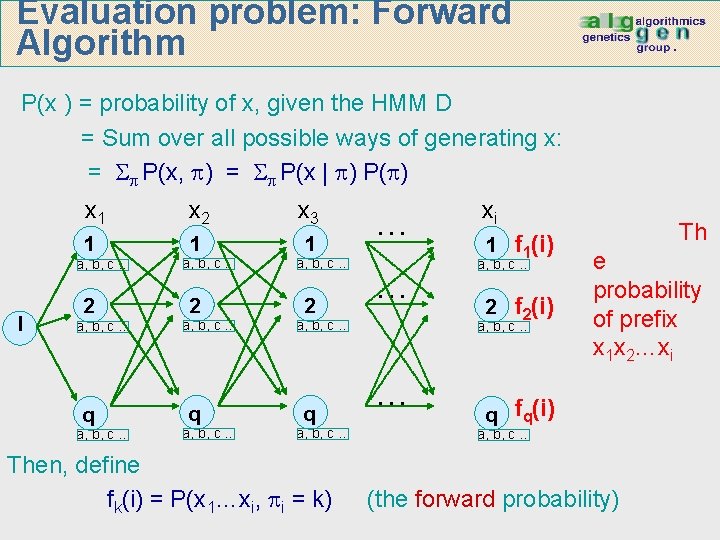

Evaluation problem: Forward Algorithm P(x ) = probability of x, given the HMM D = Sum over all possible ways of generating x: = P(x, ) = P(x | ) P( ) x 1 x 2 x 3 1 1 1 a, b, c … I 2 a, b, c … q a, b, c … 2 … … a, b, c … q a, b, c … Then, define fk(i) = P(x 1…xi, i = k) xi 1 f 1(i) a, b, c … 2 f 2(i) a, b, c … … Th e probability of prefix x 1 x 2…xi q fq(i) a, b, c … (the forward probability)

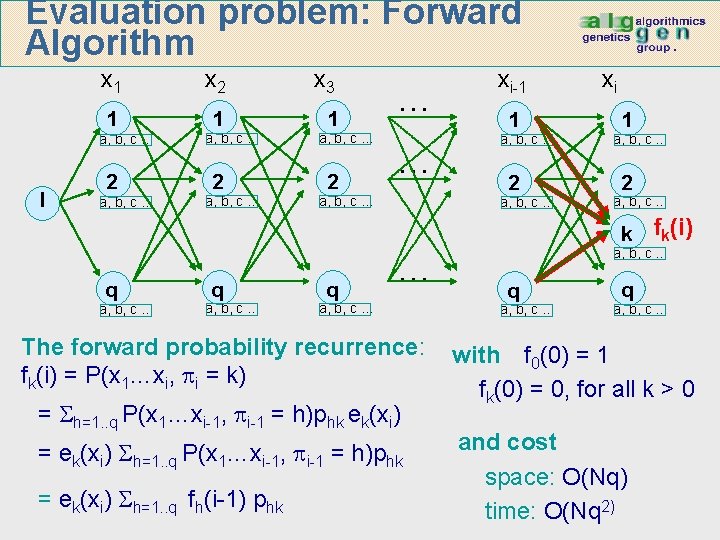

Evaluation problem: Forward Algorithm x 1 x 2 x 3 1 1 1 a, b, c … I 2 a, b, c … 2 … … a, b, c … xi-1 1 a, b, c … 2 a, b, c … xi 1 a, b, c … 2 a, b, c … k fk(i) q a, b, c … q … a, b, c … The forward probability recurrence: fk(i) = P(x 1…xi, i = k) = h=1. . q P(x 1…xi-1, i-1 = h)phk ek(xi) = ek(xi) h=1. . q P(x 1…xi-1, i-1 = h)phk = ek(xi) h=1. . q fh(i-1) phk a, b, c … q a, b, c … with f 0(0) = 1 fk(0) = 0, for all k > 0 and cost space: O(Nq) time: O(Nq 2)

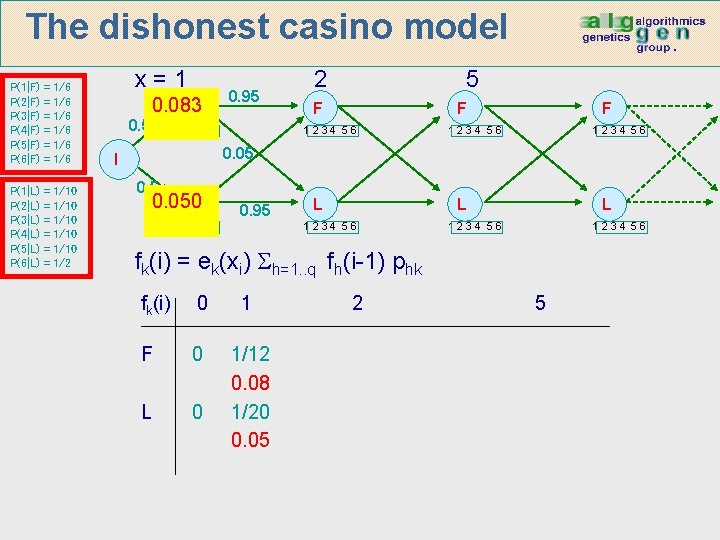

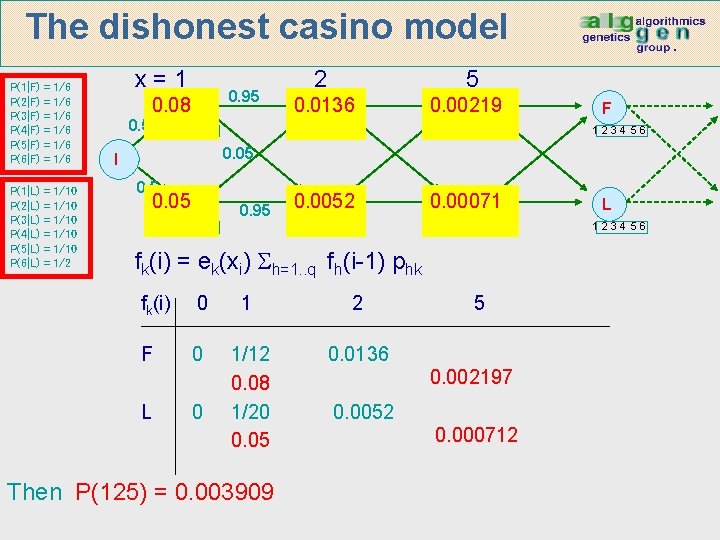

The dishonest casino model P(1|F) P(2|F) P(3|F) P(4|F) P(5|F) P(6|F) = = = 1/6 1/6 1/6 P(1|L) P(2|L) P(3|L) P(4|L) P(5|L) P(6|L) = = = 1/10 1/10 1/2 x=1 0. 5 0. 083 F 0. 95 1234 56 2 5 F F 1234 56 0. 05 I 0. 5 0. 050 L 0. 95 1234 56 L L 1234 56 fk(i) = ek(xi) h=1. . q fh(i-1) phk fk(i) 0 1 F 0 L 0 1/12 0. 08 1/20 0. 05 2 5

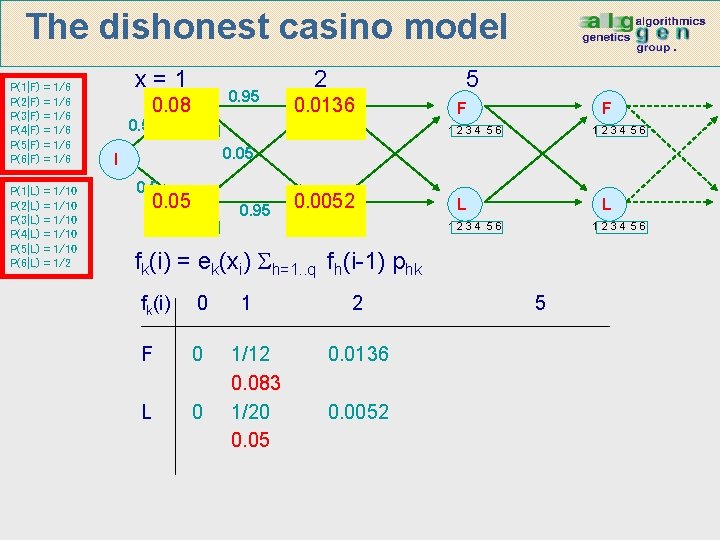

The dishonest casino model P(1|F) P(2|F) P(3|F) P(4|F) P(5|F) P(6|F) = = = 1/6 1/6 1/6 P(1|L) P(2|L) P(3|L) P(4|L) P(5|L) P(6|L) = = = 1/10 1/10 1/2 x=1 0. 5 0. 95 0. 08 F 1234 56 2 5 0. 0136 F 1234 56 F F 1234 56 0. 05 I 0. 5 0. 05 L 0. 95 1234 56 0. 0052 L 1234 56 L L 1234 56 fk(i) = ek(xi) h=1. . q fh(i-1) phk fk(i) 0 F 0 L 0 1 1/12 0. 083 1/20 0. 05 2 0. 0136 0. 0052 5

The dishonest casino model P(1|F) P(2|F) P(3|F) P(4|F) P(5|F) P(6|F) = = = 1/6 1/6 1/6 P(1|L) P(2|L) P(3|L) P(4|L) P(5|L) P(6|L) = = = 1/10 1/10 1/2 x=1 0. 5 0. 95 0. 08 F 1234 56 2 5 0. 0136 F 0. 00219 F 1234 56 0. 0052 L 0. 00071 L 1234 56 F 1234 56 0. 05 I 0. 5 0. 05 L 0. 95 1234 56 fk(i) = ek(xi) h=1. . q fh(i-1) phk fk(i) 0 1 2 F 0 1/12 0. 08 1/20 0. 05 0. 0136 L 0 Then P(125) = 0. 003909 5 0. 002197 0. 0052 0. 000712 L 1234 56

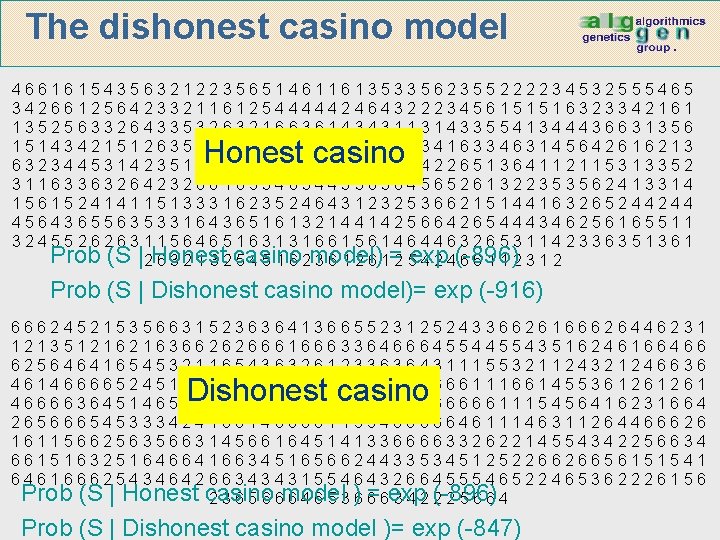

The dishonest casino model 4661615435632122356514611613533562355222234532555465 3426612564233211612544444246432223456151516323342161 1352563326433532632166361434311314335541344436631356 1514342151263564162655455222254341633463145642616213 6323445314235141433263263226345422651364112115313352 3116336326423266165346344336364565261322353562413314 1561524141151333162352464312325366215144163265244244 4564365563533164365161321441425664265444346256165511 3245526263115646516313166156146446326531142336351361 Prob (S |2 Honest 6 3 2 1 3 2 casino 5 4 5 1 6 2 model) 3 6 1 2 6 1= 2 5 exp 4 2 4(-896) 66112312 Honest casino Prob (S | Dishonest casino model)= exp (-916) 66624521535663152363641366552312524336626166626446231 1213512162163662626661666336466645543516246166466 62564641654532116543632612336364311155321124321246636 46146666524515231621511661443165666111661455361261261 46666364514656556636366614625656666661115456416231664 26566654533342416614666611554666664611146311264466626 16115662563566314566164514133666633262214554342256634 66151632516466416634516566244335345125226626656151541 64616662543464266343431554643266455546522465362226156 Prob (S | Honest casino =6 exp (-896) 2 3 6 5 6 6 model 6 4 6 5 3 )6 6 3422 25664 Dishonest casino Prob (S | Dishonest casino model )= exp (-847)

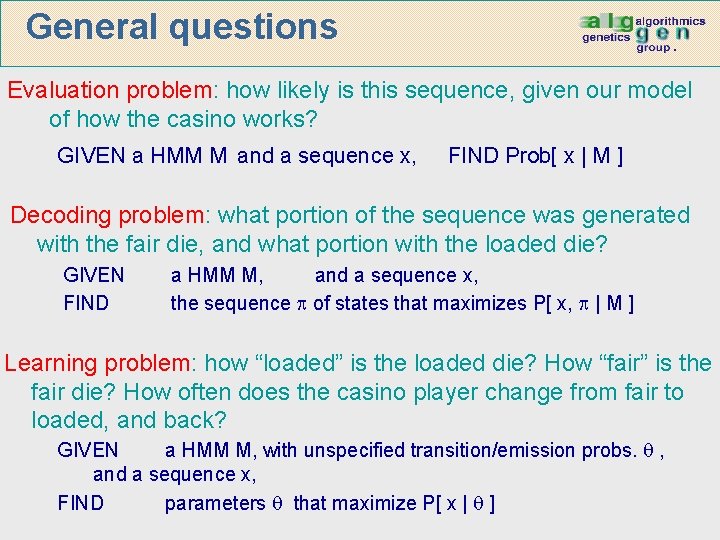

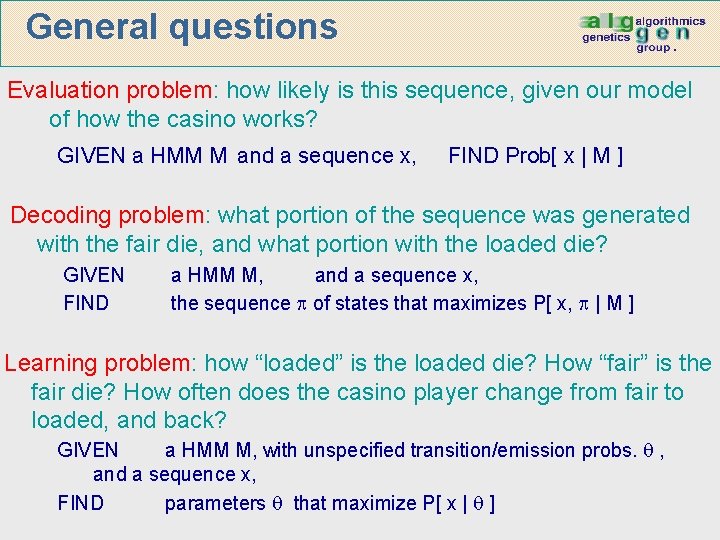

General questions Evaluation problem: how likely is this sequence, given our model of how the casino works? GIVEN a HMM M and a sequence x, FIND Prob[ x | M ] Decoding problem: what portion of the sequence was generated with the fair die, and what portion with the loaded die? GIVEN FIND a HMM M, and a sequence x, the sequence of states that maximizes P[ x, | M ] Learning problem: how “loaded” is the loaded die? How “fair” is the fair die? How often does the casino player change from fair to loaded, and back? GIVEN a HMM M, with unspecified transition/emission probs. , and a sequence x, FIND parameters that maximize P[ x | ]

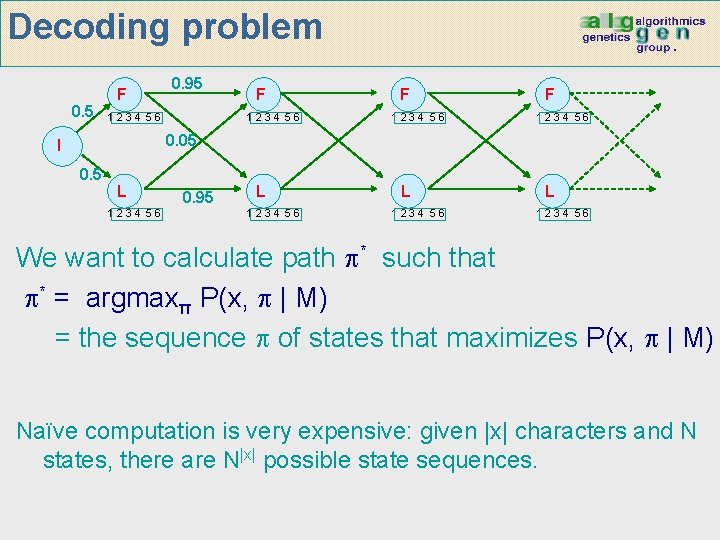

Decoding problem 0. 5 F 0. 95 1234 56 F 1234 56 0. 05 I 0. 5 L 1234 56 0. 95 L 1234 56 We want to calculate path * such that * = argmaxπ P(x, | M) = the sequence of states that maximizes P(x, | M) Naïve computation is very expensive: given |x| characters and N states, there are N|x| possible state sequences.

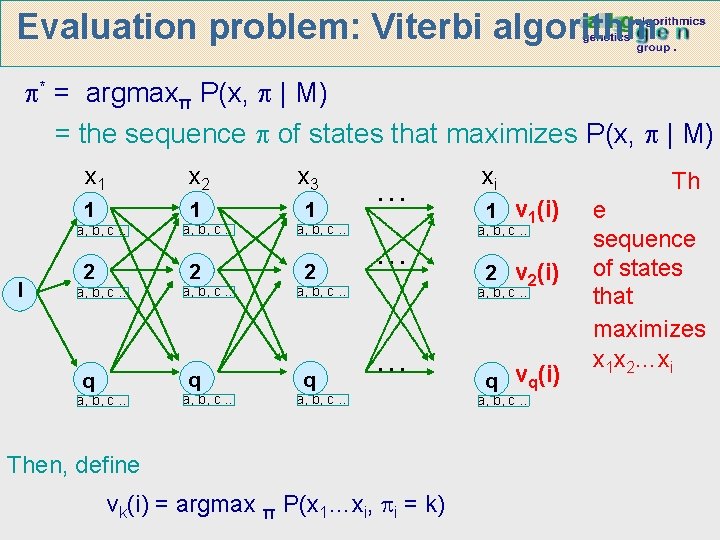

Evaluation problem: Viterbi algorithm * = argmaxπ P(x, | M) = the sequence of states that maximizes P(x, | M) x 1 x 2 x 3 1 1 1 a, b, c … I 2 a, b, c … q q a, b, c … 2 … … a, b, c … q xi 1 v 1(i) a, b, c … 2 v 2(i) a, b, c … … a, b, c … Then, define vk(i) = argmax π P(x 1…xi, i = k) q vq(i) a, b, c … Th e sequence of states that maximizes x 1 x 2…xi

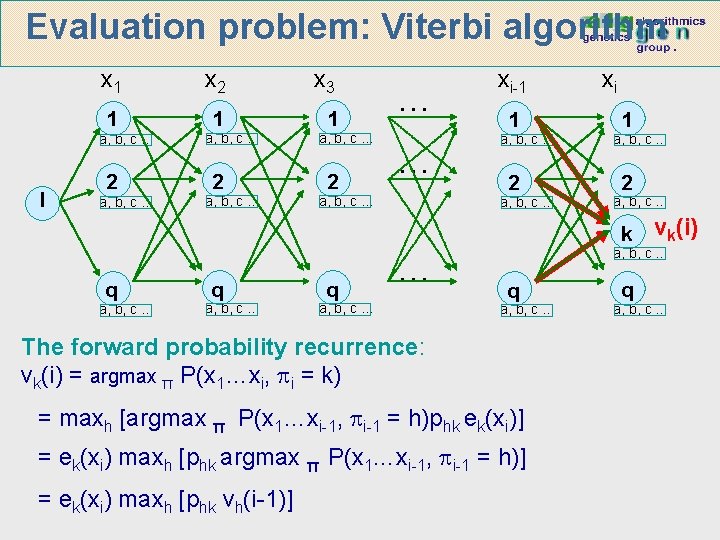

Evaluation problem: Viterbi algorithm x 1 x 2 x 3 1 1 1 a, b, c … I 2 a, b, c … 2 … … a, b, c … xi-1 1 a, b, c … 2 a, b, c … xi 1 a, b, c … 2 a, b, c … k vk(i) q a, b, c … q … a, b, c … q a, b, c … The forward probability recurrence: vk(i) = argmax π P(x 1…xi, i = k) = maxh [argmax π P(x 1…xi-1, i-1 = h)phk ek(xi)] = ek(xi) maxh [phk argmax π P(x 1…xi-1, i-1 = h)] = ek(xi) maxh [phk vh(i-1)] q a, b, c …

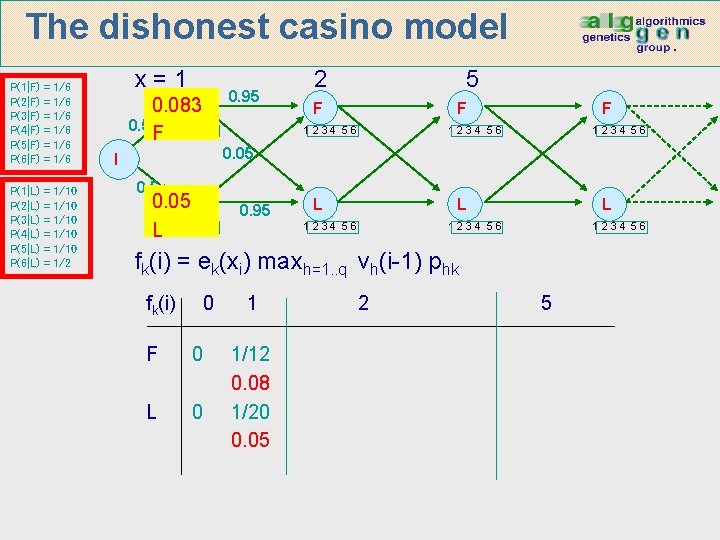

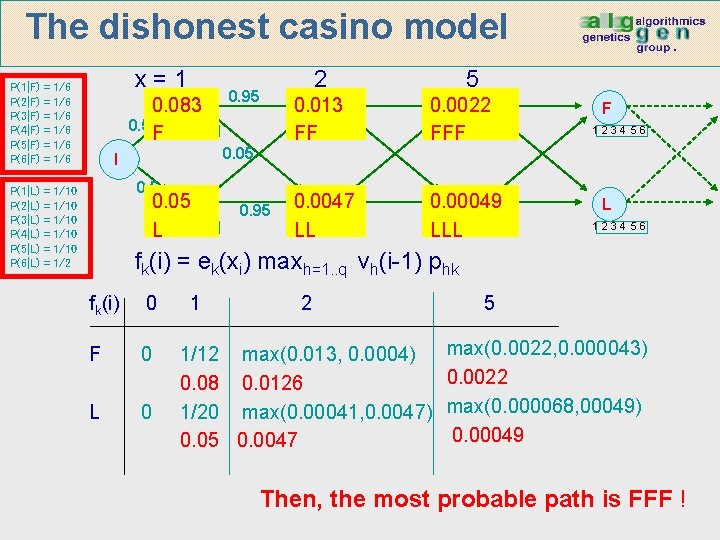

The dishonest casino model P(1|F) P(2|F) P(3|F) P(4|F) P(5|F) P(6|F) = = = 1/6 1/6 1/6 P(1|L) P(2|L) P(3|L) P(4|L) P(5|L) P(6|L) = = = 1/10 1/10 1/2 x=1 0. 083 F 0. 5 1 2 3 4 5 6 F I 0. 95 5 F F 1234 56 0. 05 L L 1234 56 2 0. 95 L L 1234 56 fk(i) = ek(xi) maxh=1. . q vh(i-1) phk 0 fk(i) F 0 L 0 1 1/12 0. 08 1/20 0. 05 2 5

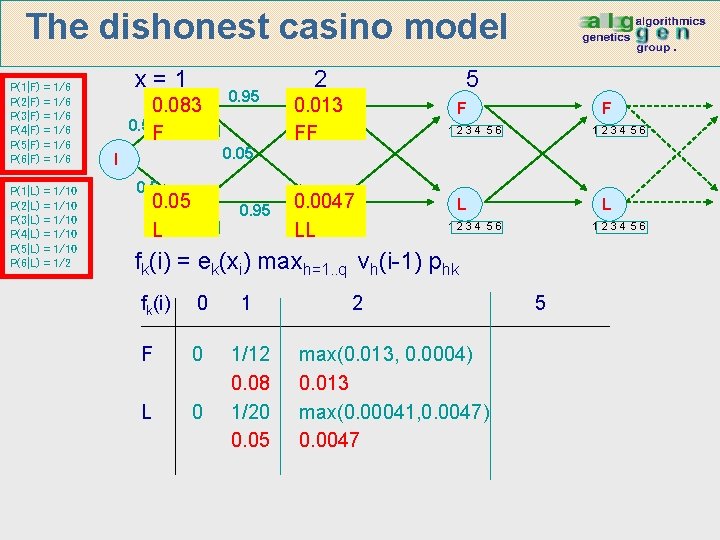

The dishonest casino model P(1|F) P(2|F) P(3|F) P(4|F) P(5|F) P(6|F) = = = 1/6 1/6 1/6 P(1|L) P(2|L) P(3|L) P(4|L) P(5|L) P(6|L) = = = 1/10 1/10 1/2 x=1 0. 083 F 0. 5 1 2 3 4 5 6 F I 0. 95 0. 05 L L 1234 56 0. 95 2 5 0. 013 F 1234 56 FF 0. 0047 L 1234 56 LL F F 1234 56 L L 1234 56 fk(i) = ek(xi) maxh=1. . q vh(i-1) phk fk(i) 0 1 F 0 L 0 1/12 0. 08 1/20 0. 05 2 max(0. 013, 0. 0004) 0. 013 max(0. 00041, 0. 0047) 0. 0047 5

The dishonest casino model P(1|F) P(2|F) P(3|F) P(4|F) P(5|F) P(6|F) = = = 1/6 1/6 1/6 P(1|L) P(2|L) P(3|L) P(4|L) P(5|L) P(6|L) = = = 1/10 1/10 1/2 x=1 0. 083 F 0. 5 1 2 3 4 5 6 F I 2 0. 95 0. 05 L L 1234 56 0. 95 5 0. 013 F 1234 56 FF 0. 0022 F 1234 56 FFF 0. 0047 L 1234 56 LL 0. 00049 L 1234 56 LLL F 1234 56 L 1234 56 fk(i) = ek(xi) maxh=1. . q vh(i-1) phk fk(i) 0 F 0 L 0 1 2 1/12 max(0. 013, 0. 0004) 0. 08 0. 0126 1/20 max(0. 00041, 0. 0047) 0. 05 0. 0047 5 max(0. 0022, 0. 000043) 0. 0022 max(0. 000068, 00049) 0. 00049 Then, the most probable path is FFF !

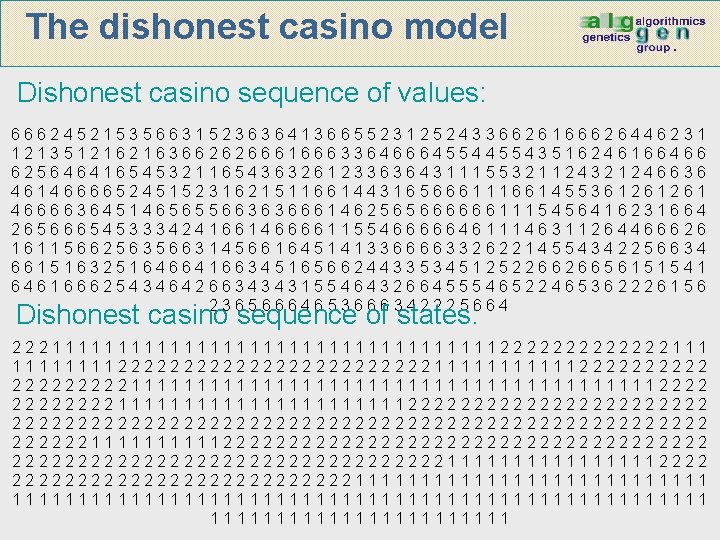

The dishonest casino model Dishonest casino sequence of values: 66624521535663152363641366552312524336626166626446231 1213512162163662626661666336466645543516246166466 62564641654532116543632612336364311155321124321246636 46146666524515231621511661443165666111661455361261261 46666364514656556636366614625656666661115456416231664 26566654533342416614666611554666664611146311264466626 16115662563566314566164514133666633262214554342256634 66151632516466416634516566244335345125226626656151541 64616662543464266343431554643266455546522465362226156 23656664653666342225664 Dishonest casino sequence of states: 222111111111111111112222222111 11112222222222221111112222211111111111111111111222211111111111222222222222222222222222222111112222222222222222222111111112222222222222111111111111111111111111111

General questions Evaluation problem: how likely is this sequence, given our model of how the casino works? GIVEN a HMM M and a sequence x, FIND Prob[ x | M ] Decoding problem: what portion of the sequence was generated with the fair die, and what portion with the loaded die? GIVEN FIND a HMM M, and a sequence x, the sequence of states that maximizes P[ x, | M ] Learning problem: how “loaded” is the loaded die? How “fair” is the fair die? How often does the casino player change from fair to loaded, and back? GIVEN a HMM M, with unspecified transition/emission probs. , and a sequence x, FIND parameters that maximize P[ x | ]

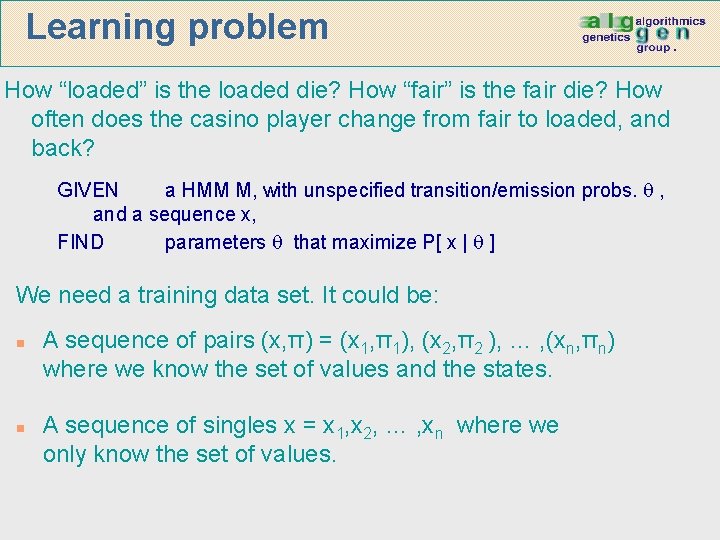

Learning problem How “loaded” is the loaded die? How “fair” is the fair die? How often does the casino player change from fair to loaded, and back? GIVEN a HMM M, with unspecified transition/emission probs. , and a sequence x, FIND parameters that maximize P[ x | ] We need a training data set. It could be: n n A sequence of pairs (x, π) = (x 1, π1), (x 2, π2 ), … , (xn, πn) where we know the set of values and the states. A sequence of singles x = x 1, x 2, … , xn where we only know the set of values.

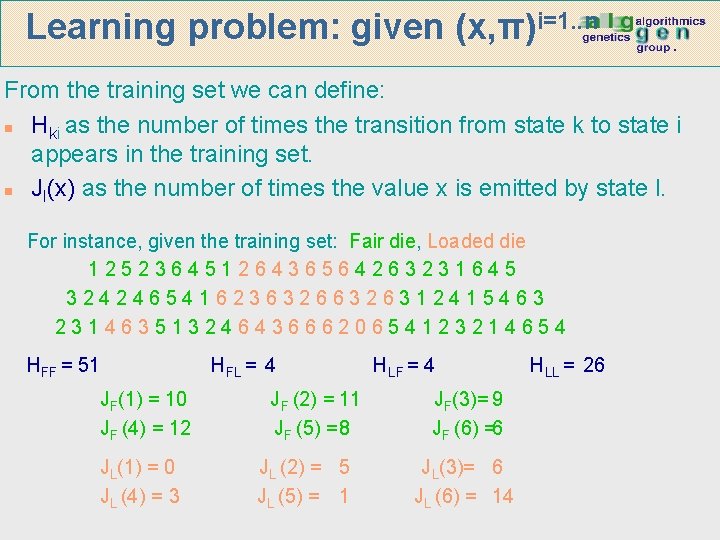

Learning problem: given (x, π)i=1. . n From the training set we can define: n Hki as the number of times the transition from state k to state i appears in the training set. n Jl(x) as the number of times the value x is emitted by state l. For instance, given the training set: Fair die, Loaded die 12523645126436564263231645 3242465416236326312415463 2314635132464366620654123214654 HFF = 51 JF(1) = 10 JF (4) = 12 JL(1) = 0 JL (4) = 3 HFL = 4 JF (2) = 11 JF (5) = 8 JL (2) = 5 JL (5) = 1 HLF = 4 JF(3)= 9 JF (6) =6 JL(3)= 6 JL (6) = 14 HLL = 26

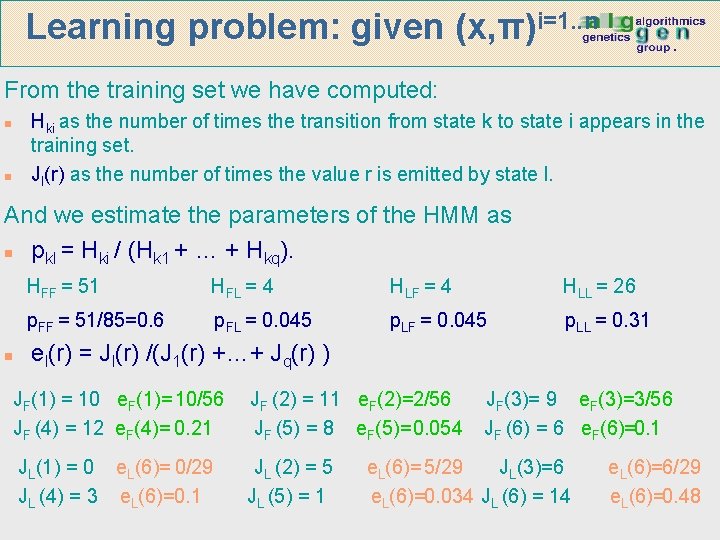

Learning problem: given (x, π)i=1. . n From the training set we have computed: Hki as the number of times the transition from state k to state i appears in the training set. Jl(r) as the number of times the value r is emitted by state l. n n And we estimate the parameters of the HMM as n pkl = Hki / (Hk 1 + … + Hkq). n HFF = 51 HFL = 4 HLF = 4 HLL = 26 p. FF = 51/85=0. 6 p. FL = 0. 045 p. LF = 0. 045 p. LL = 0. 31 el(r) = Jl(r) /(J 1(r) +…+ Jq(r) ) JF(1) = 10 e. F(1)= 10/56 JF (4) = 12 e. F(4)= 0. 21 JF (2) = 11 e. F(2)=2/56 JF (5) = 8 e. F(5)= 0. 054 JL(1) = 0 e. L(6)= 0/29 JL (4) = 3 e. L(6)=0. 1 JL (2) = 5 JL (5) = 1 JF(3)= 9 e. F(3)=3/56 JF (6) = 6 e. F(6)=0. 1 e. L(6)= 5/29 JL(3)=6 e. L(6)=0. 034 JL (6) = 14 e. L(6)=6/29 e. L(6)=0. 48

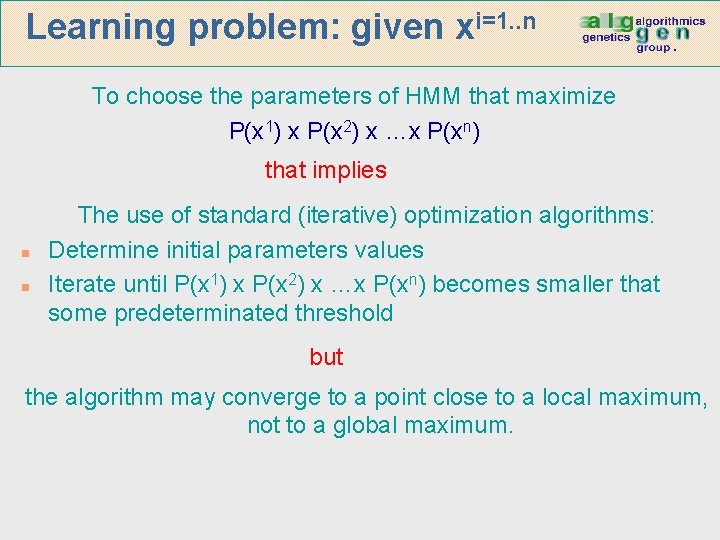

Learning problem: given xi=1. . n To choose the parameters of HMM that maximize P(x 1) x P(x 2) x …x P(xn) that implies n n The use of standard (iterative) optimization algorithms: Determine initial parameters values Iterate until P(x 1) x P(x 2) x …x P(xn) becomes smaller that some predeterminated threshold but the algorithm may converge to a point close to a local maximum, not to a global maximum.

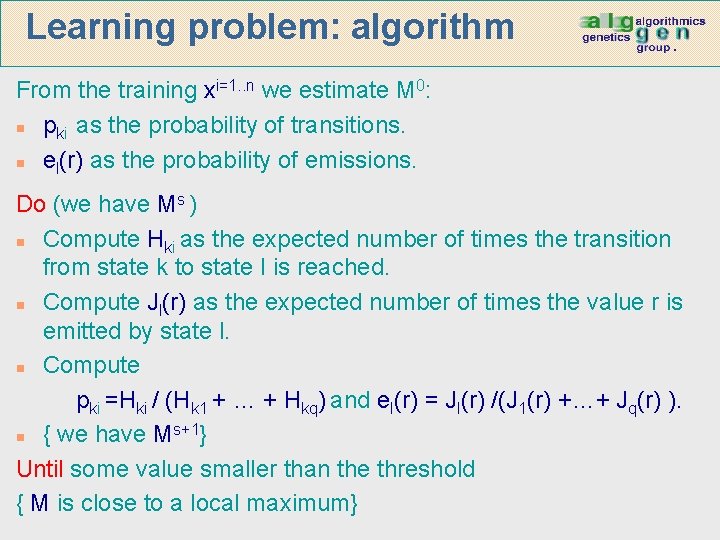

Learning problem: algorithm From the training xi=1. . n we estimate M 0: n pki as the probability of transitions. n el(r) as the probability of emissions. Do (we have Ms ) n Compute Hki as the expected number of times the transition from state k to state I is reached. n Compute Jl(r) as the expected number of times the value r is emitted by state l. n Compute pki =Hki / (Hk 1 + … + Hkq) and el(r) = Jl(r) /(J 1(r) +…+ Jq(r) ). s+1} n { we have M Until some value smaller than the threshold { M is close to a local maximum}

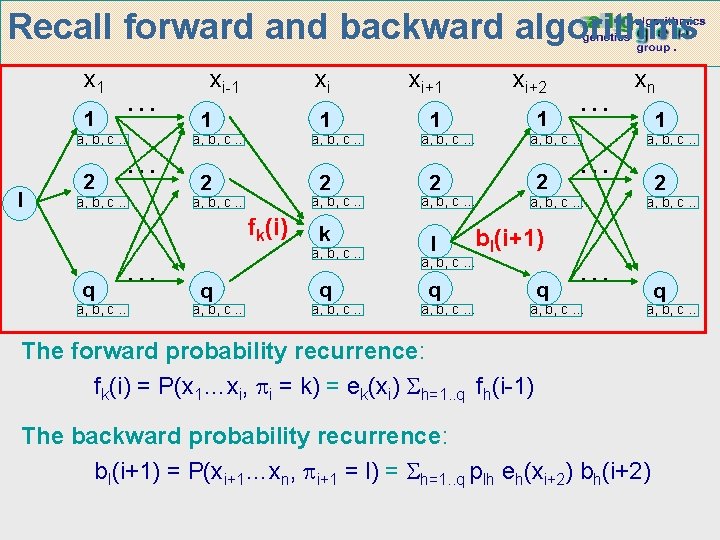

Recall forward and backward algorithms x 1 1 … a, b, c … I 2 … a, b, c … xi-1 1 a, b, c … 2 a, b, c … fk(i) q … a, b, c … xi xi+1 xi+2 1 1 1 a, b, c … 2 a, b, c … k a, b, c … q a, b, c … 2 2 a, b, c … … a, b, c … l bl(i+1) q q a, b, c … … xn 1 a, b, c … 2 a, b, c … … a, b, c … q a, b, c … The forward probability recurrence: fk(i) = P(x 1…xi, i = k) = ek(xi) h=1. . q fh(i-1) The backward probability recurrence: bl(i+1) = P(xi+1…xn, i+1 = l) = h=1. . q plh eh(xi+2) bh(i+2)

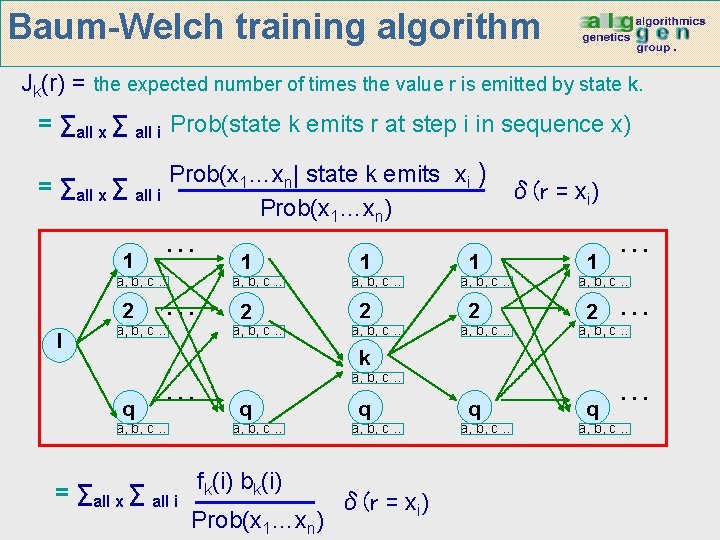

Baum-Welch training algorithm Jk(r) = the expected number of times the value r is emitted by state k. = ∑all x ∑ all i Prob(state k emits r at step i in sequence x) = ∑all x ∑ all i 1 Prob(x 1…xn| state k emits xi ) δ(r = xi) Prob(x 1…xn) … a, b, c … 2 I … a, b, c … q … a, b, c … 1 a, b, c … 2 a, b, c … q a, b, c … = ∑all x ∑ all i fk(i) bk(i) δ(r = xi) Prob(x 1…xn) q a, b, c … … a, b, c … k q … a, b, c …

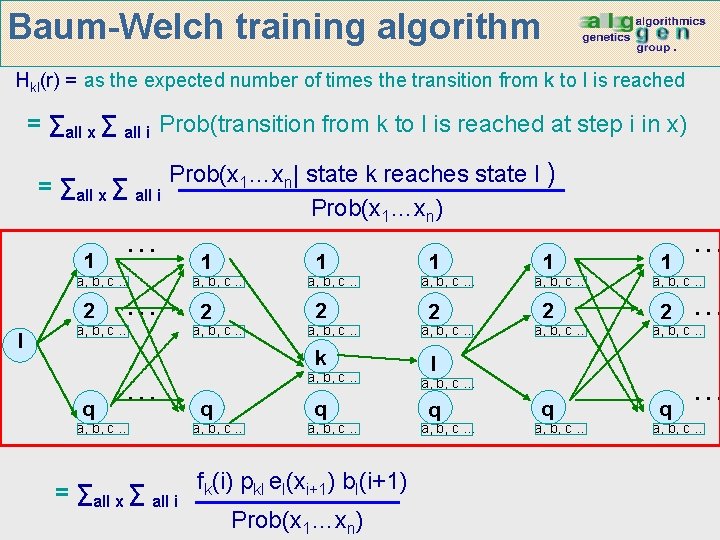

Baum-Welch training algorithm Hkl(r) = as the expected number of times the transition from k to I is reached = ∑all x ∑ all i Prob(transition from k to l is reached at step i in x) = ∑all x ∑ all i 1 … a, b, c … 2 I … a, b, c … q … a, b, c … Prob(x 1…xn| state k reaches state l ) Prob(x 1…xn) 1 a, b, c … 2 a, b, c … k a, b, c … q a, b, c … = ∑all x ∑ all i fk(i) pkl el(xi+1) bl(i+1) Prob(x 1…xn) 1 a, b, c … 2 a, b, c … q a, b, c … … a, b, c … l q … a, b, c …

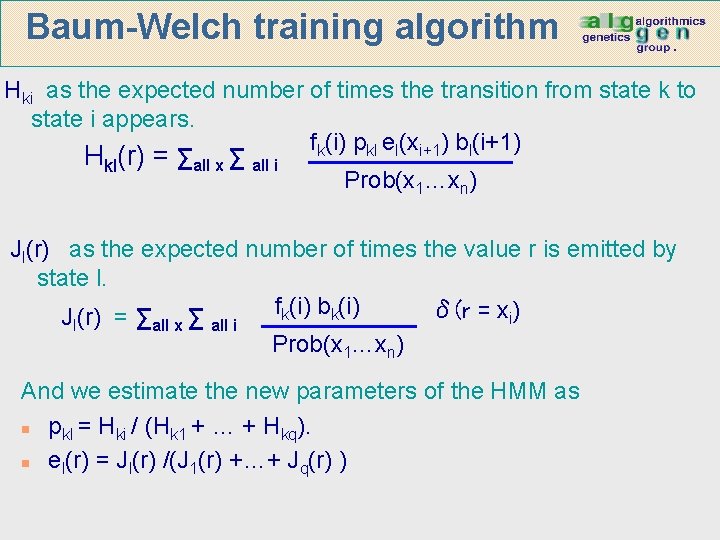

Baum-Welch training algorithm Hki as the expected number of times the transition from state k to state i appears. fk(i) pkl el(xi+1) bl(i+1) Hkl(r) = ∑all x ∑ all i Prob(x 1…xn) Jl(r) as the expected number of times the value r is emitted by state l. fk(i) bk(i) δ(r = xi) Jl(r) = ∑all x ∑ all i Prob(x 1…xn) And we estimate the new parameters of the HMM as n pkl = Hki / (Hk 1 + … + Hkq). n el(r) = Jl(r) /(J 1(r) +…+ Jq(r) )

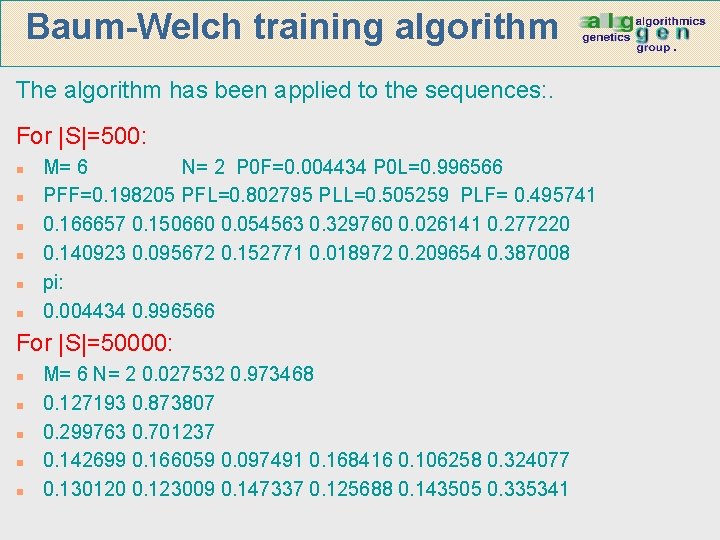

Baum-Welch training algorithm The algorithm has been applied to the sequences: . For |S|=500: n n n M= 6 N= 2 P 0 F=0. 004434 P 0 L=0. 996566 PFF=0. 198205 PFL=0. 802795 PLL=0. 505259 PLF= 0. 495741 0. 166657 0. 150660 0. 054563 0. 329760 0. 026141 0. 277220 0. 140923 0. 095672 0. 152771 0. 018972 0. 209654 0. 387008 pi: 0. 004434 0. 996566 For |S|=50000: n n n M= 6 N= 2 0. 027532 0. 973468 0. 127193 0. 873807 0. 299763 0. 701237 0. 142699 0. 166059 0. 097491 0. 168416 0. 106258 0. 324077 0. 130120 0. 123009 0. 147337 0. 125688 0. 143505 0. 335341

- Slides: 37