Advances in Random Matrix Theory: Let there be tools
Alan Edelman
Brian Sutton, Plamen Koev, Ioana Dumitriu, Raj Rao and others
MIT: Dept of Mathematics, Computer Science AI Laboratories
World Congress, Bernoulli Society
Barcelona, Spain
9/25/2021
Wednesday July 28, 2004

Tools
• So many applications …
• Random matrix theory: catalyst for 21st century special functions, analytical techniques, statistical techniques
• In addition to mathematics and papers
• Need tools for the novice!
• Need tools for the engineers!
• Need tools for the specialists!

Themes of this talk
• Tools for general beta
• What is beta? Think of it as a measure of (inverse) volatility in “classical” random matrices.
• Tools for complicated derived random matrices
• Tools for numerical computation and simulation

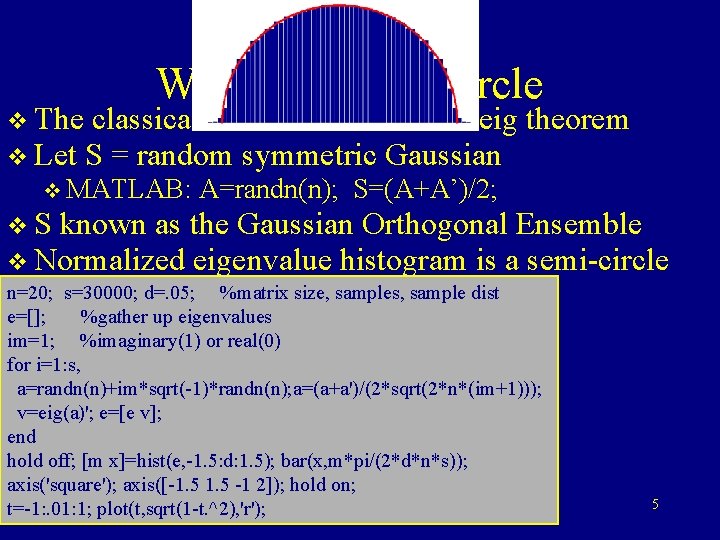

Wigner’s Semi-Circle
• The classical & most famous rand eig theorem
• Let S = random symmetric Gaussian
• MATLAB: A=randn(n); S=(A+A')/2;
• S known as the Gaussian Orthogonal Ensemble
• Normalized eigenvalue histogram is a semi-circle
• Precise statements require n → ∞ etc.

    n=20; s=30000; d=.05;     %matrix size, samples, sample dist
    e=[];                     %gather up eigenvalues
    im=1;                     %imaginary(1) or real(0)
    for i=1:s,
      a=randn(n)+im*sqrt(-1)*randn(n);
      a=(a+a')/(2*sqrt(2*n*(im+1)));
      v=eig(a)'; e=[e v];
    end
    hold off; [m x]=hist(e,-1.5:d:1.5);
    bar(x,m*pi/(2*d*n*s));
    axis('square'); axis([-1.5 1.5 -1 2]);
    hold on; t=-1:.01:1; plot(t,sqrt(1-t.^2),'r');

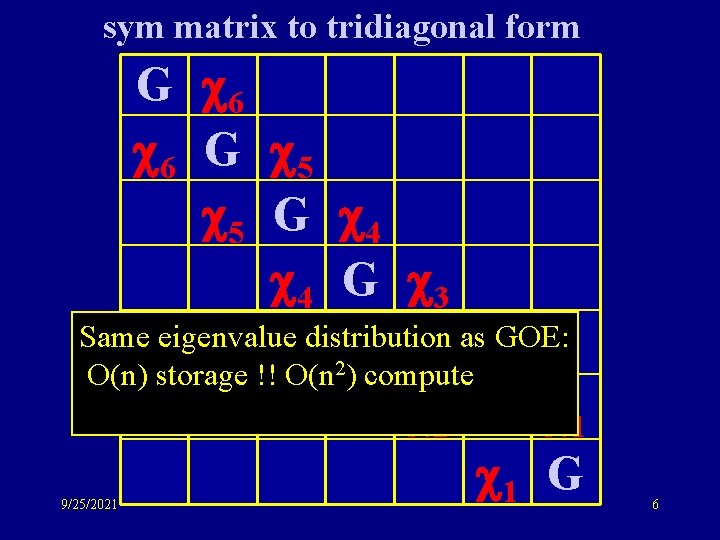

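Sym matrix to tridiagonal form

$$
\begin{pmatrix}
G & \chi_6 \\
\chi_6 & G & \chi_5 \\
 & \chi_5 & G & \chi_4 \\
 & & \chi_4 & G & \chi_3 \\
 & & & \chi_3 & G & \chi_2 \\
 & & & & \chi_2 & G & \chi_1 \\
 & & & & & \chi_1 & G
\end{pmatrix}
$$

Same eigenvalue distribution as GOE: O(n) storage !! O(n²) compute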
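The tridiagonal form can be sampled directly and compared with a dense symmetric Gaussian matrix. The following is a minimal sketch, not the talk's own code: it assumes the normalization that matches S=(A+A')/2 (diagonal N(0,1), off-diagonals χ_k/√2 for k = n−1, …, 1), and the helper name `chi` is hypothetical. The matrix T is stored densely here for simplicity, although only its 2n−1 distinct entries are needed.

```matlab
% Sketch: tridiagonal model vs. dense S=(A+A')/2 (beta = 1).
% Assumed normalization: diagonal N(0,1), off-diagonals chi_k/sqrt(2), k = n-1,...,1.
n = 200;
chi = @(k) sqrt(sum(randn(k,1).^2));            % chi_k sample, integer k, no toolbox needed
offd = arrayfun(chi, (n-1:-1:1)') / sqrt(2);    % chi_{n-1},...,chi_1, scaled
T = diag(randn(n,1)) + diag(offd,1) + diag(offd,-1);
A = randn(n); S = (A+A')/2;                     % dense sample for comparison
eT = eig(T)/sqrt(2*n);                          % rescale both spectra to roughly [-1,1]
eS = eig(S)/sqrt(2*n);
fprintf('largest eig: tridiagonal %.3f, dense %.3f\n', max(eT), max(eS));
```

The two samples are independent, so individual eigenvalues differ; what should agree is the distribution, for example histograms of `eT` and `eS` over many repetitions.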

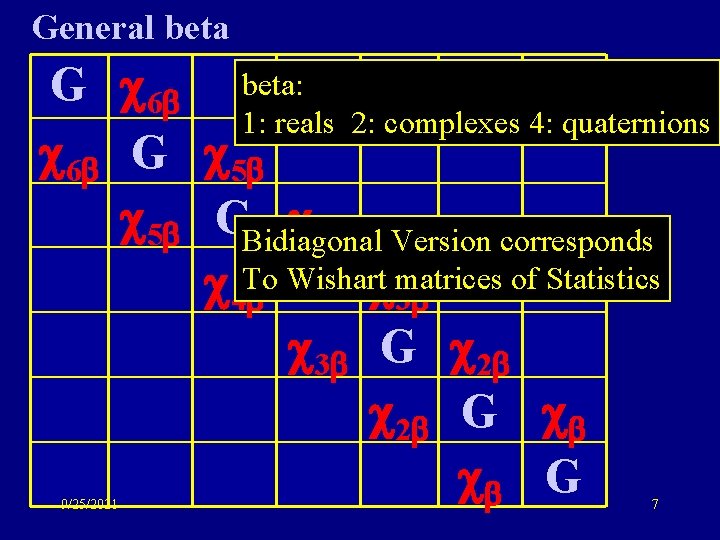

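General beta

beta: 1: reals, 2: complexes, 4: quaternions

$$
\begin{pmatrix}
G & \chi_{6\beta} \\
\chi_{6\beta} & G & \chi_{5\beta} \\
 & \chi_{5\beta} & G & \chi_{4\beta} \\
 & & \chi_{4\beta} & G & \chi_{3\beta} \\
 & & & \chi_{3\beta} & G & \chi_{2\beta} \\
 & & & & \chi_{2\beta} & G & \chi_{\beta} \\
 & & & & & \chi_{\beta} & G
\end{pmatrix}
$$

Bidiagonal Version corresponds To Wishart matrices of Statistics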
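For reference, the general-beta tridiagonal model and the eigenvalue density it produces can be written out explicitly. The normalization below (a 1/√2 prefactor with an N(0,2) diagonal) follows the Dumitriu-Edelman convention and is an assumption here, since the slide shows only the pattern of G and χ entries.

```latex
H_\beta \sim \frac{1}{\sqrt{2}}
\begin{pmatrix}
N(0,2) & \chi_{(n-1)\beta} \\
\chi_{(n-1)\beta} & N(0,2) & \chi_{(n-2)\beta} \\
 & \ddots & \ddots & \ddots \\
 & & \chi_{2\beta} & N(0,2) & \chi_{\beta} \\
 & & & \chi_{\beta} & N(0,2)
\end{pmatrix},
\qquad
f_\beta(\lambda_1,\dots,\lambda_n) \;\propto\;
\prod_{i<j} |\lambda_i-\lambda_j|^{\beta}\,
\exp\Big(-\tfrac{1}{2}\sum_{i=1}^{n}\lambda_i^{2}\Big).
```

In this form beta enters only as the exponent of the eigenvalue repulsion term, so it need not be restricted to 1, 2 or 4.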

Tools
• Motivation: A condition number problem
• Jack & Hypergeometric of Matrix Argument
• MOPS: Ioana Dumitriu’s talk
• The Polynomial Method
• The tridiagonal numerical 10^9 trick

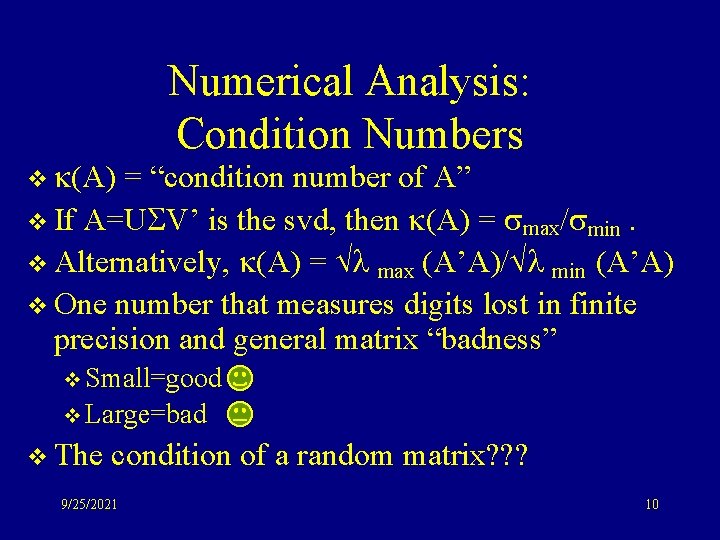

Numerical Analysis: Condition Numbers
• $\kappa(A)$ = “condition number of A”
• If $A = U\Sigma V'$ is the svd, then $\kappa(A) = \sigma_{\max}/\sigma_{\min}$.
• Alternatively, $\kappa(A) = \sqrt{\lambda_{\max}(A'A)}\,/\sqrt{\lambda_{\min}(A'A)}$
• One number that measures digits lost in finite precision and general matrix “badness”
• Small=good
• Large=bad
• The condition of a random matrix???
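The two definitions can be checked against each other numerically. This is a small illustrative sketch (variable names are hypothetical); it uses the square root of the eigenvalue ratio of A'A, since the eigenvalues of A'A are the squared singular values of A.

```matlab
% Sketch: the condition number computed three equivalent ways.
A = randn(5);
s = svd(A);
kappa_svd = max(s)/min(s);                 % sigma_max / sigma_min
ev = eig(A'*A);                            % eigenvalues of A'A = squared singular values
kappa_eig = sqrt(max(ev)/min(ev));         % square root of the eigenvalue ratio
kappa_builtin = cond(A);                   % MATLAB's 2-norm condition number
fprintf('%.6g  %.6g  %.6g\n', kappa_svd, kappa_eig, kappa_builtin);
```

Forming A'A squares the condition number, so the eigenvalue route loses accuracy for badly conditioned A; the svd route is the numerically preferable one.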

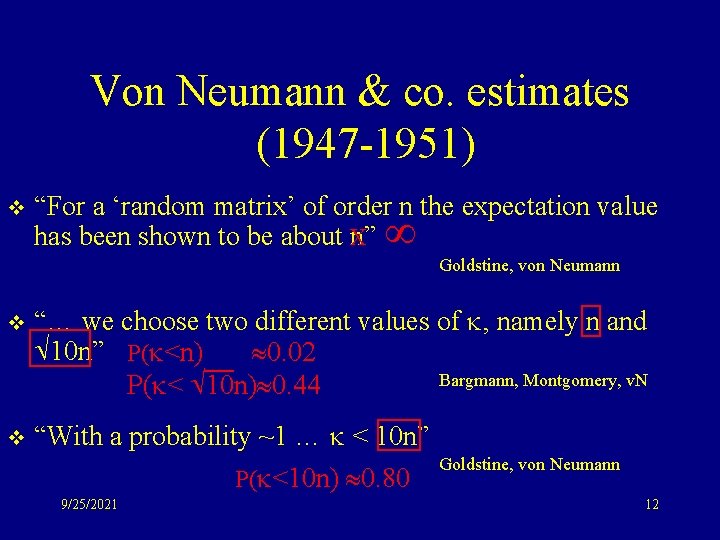

Von Neumann & co. estimates (1947-1951)
• “For a ‘random matrix’ of order n the expectation value has been shown to be about n” (Goldstine, von Neumann)
• “… we choose two different values of $\kappa$, namely $n$ and $\sqrt{10}\,n$” (Bargmann, Montgomery, v. N): $P(\kappa < n) \approx 0.02$, $P(\kappa < \sqrt{10}\,n) \approx 0.44$
• “With a probability ~1 … $\kappa < 10n$” (Goldstine, von Neumann): $P(\kappa < 10n) \approx 0.80$

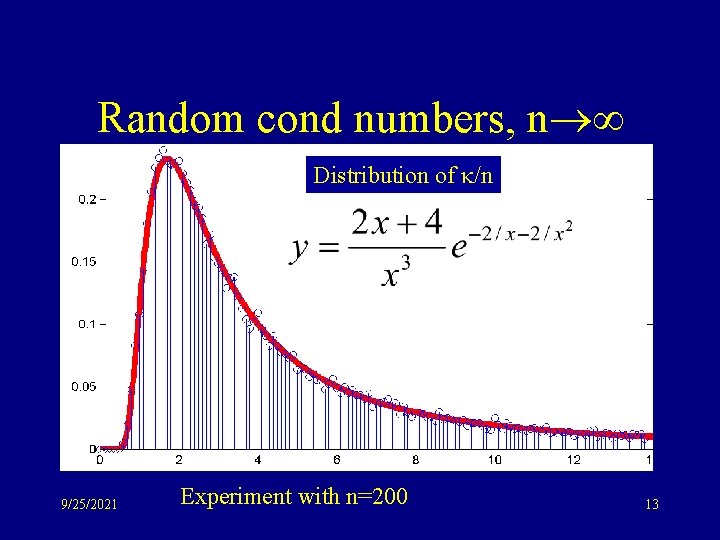

Random cond numbers, $n \to \infty$
Distribution of $\kappa/n$
Experiment with n=200
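An experiment of this kind can be sketched in a few lines. The code below is an illustration, not the talk's script: the number of trials is an arbitrary choice, and the comparison curve exp(−2/x − 2/x²) is the known large-n limit law for real Gaussian matrices, brought in here as an assumption because the slides do not state it. At x = 1, √10 and 10 that law gives roughly 0.02, 0.44 and 0.80.

```matlab
% Sketch: empirical distribution of kappa/n for real Gaussian matrices.
n = 200;                   % matrix size, as in the slide's experiment
trials = 300;              % number of samples (assumed; not from the talk)
r = zeros(trials,1);
for t = 1:trials
    r(t) = cond(randn(n))/n;                   % 2-norm condition number, scaled by n
end
x = [1 sqrt(10) 10];
empirical = arrayfun(@(xx) mean(r < xx), x);   % sampled P(kappa/n < x)
limitlaw  = exp(-2./x - 2./x.^2);              % assumed n -> infinity law
disp([x; empirical; limitlaw]);
```

With a few hundred trials the empirical fractions fluctuate by a few percent, so only rough agreement with the limit values should be expected.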

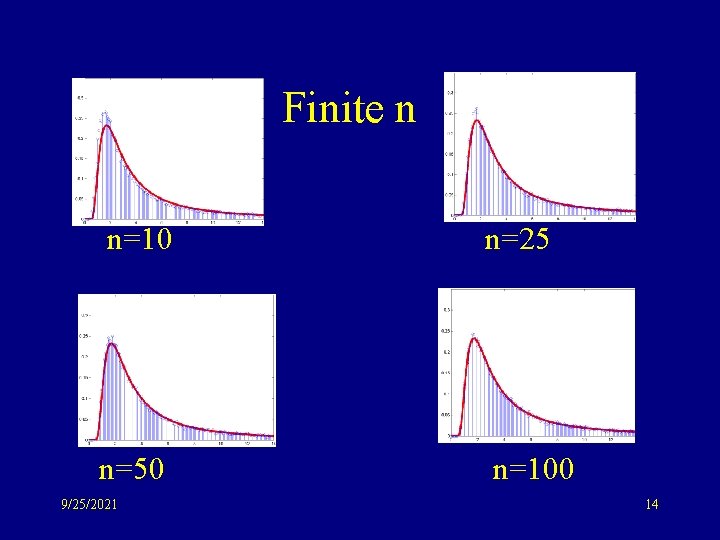

Finite n
Panels: n=10, n=25, n=50, n=100

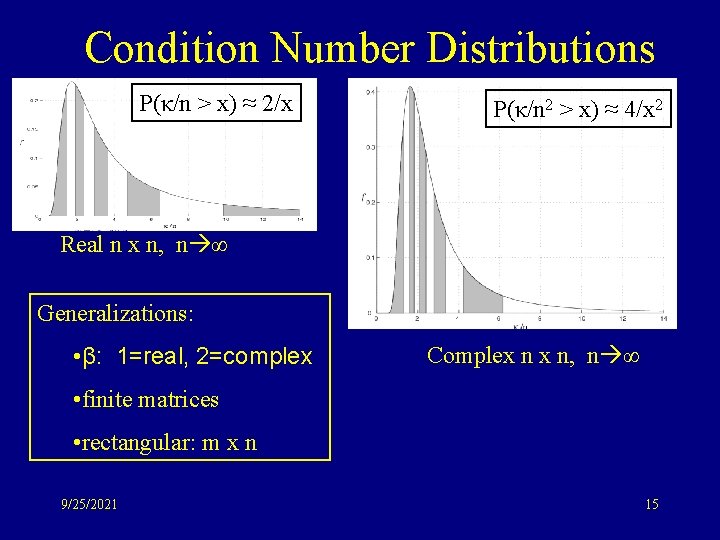

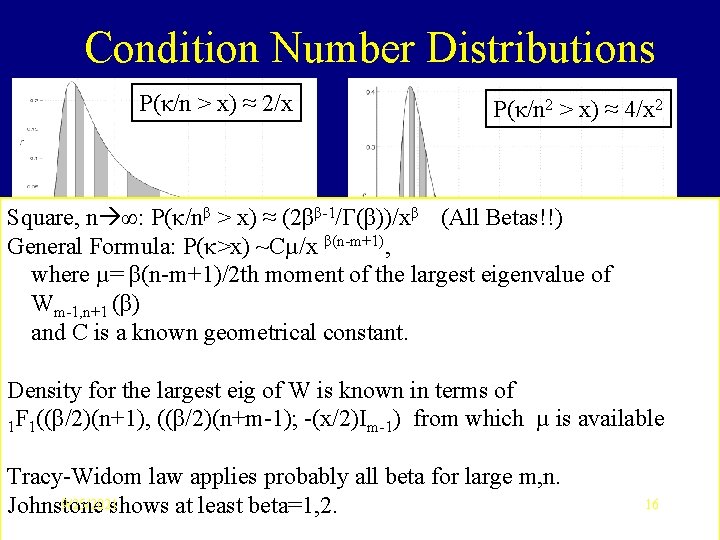

Condition Number Distributions
Real n × n, $n \to \infty$: $P(\kappa/n > x) \approx 2/x$
Complex n × n, $n \to \infty$: $P(\kappa/n > x) \approx 4/x^2$
Generalizations:
• β: 1=real, 2=complex
• finite matrices
• rectangular: m × n

Condition Number Distributions
Real n × n, $n \to \infty$: $P(\kappa/n > x) \approx 2/x$
Complex n × n, $n \to \infty$: $P(\kappa/n > x) \approx 4/x^2$
Square, $n \to \infty$: $P(\kappa/n > x) \approx \dfrac{2\beta^{\beta-1}/\Gamma(\beta)}{x^{\beta}}$ (All Betas!!)
General Formula: $P(\kappa > x) \sim C\mu/x^{\beta(n-m+1)}$, where $\mu$ is the $\beta(n-m+1)/2$-th moment of the largest eigenvalue of $W_{m-1,\,n+1}(\beta)$ and $C$ is a known geometrical constant.
Density for the largest eig of W is known in terms of ${}_1F_1\big(\tfrac{\beta}{2}(n+1),\ \tfrac{\beta}{2}(n+m-1);\ -\tfrac{x}{2} I_{m-1}\big)$, from which $\mu$ is available.
Tracy-Widom law applies probably all beta for large m, n. Johnstone shows at least beta=1, 2.
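As a quick consistency check, the constant in the square all-beta formula can be evaluated at β = 1 and β = 2, where it should reproduce the real and complex tails listed on the same slide. The β = 4 value is included only as an evaluation of the same expression.

```latex
\frac{2\beta^{\beta-1}}{\Gamma(\beta)}\bigg|_{\beta=1} = \frac{2\cdot 1^{0}}{\Gamma(1)} = 2,
\qquad
\frac{2\beta^{\beta-1}}{\Gamma(\beta)}\bigg|_{\beta=2} = \frac{2\cdot 2^{1}}{\Gamma(2)} = 4,
\qquad
\frac{2\beta^{\beta-1}}{\Gamma(\beta)}\bigg|_{\beta=4} = \frac{2\cdot 4^{3}}{\Gamma(4)} = \frac{128}{6} = \frac{64}{3}.
```

The first two values match the tails 2/x and 4/x², with the exponent of x equal to β in each case.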

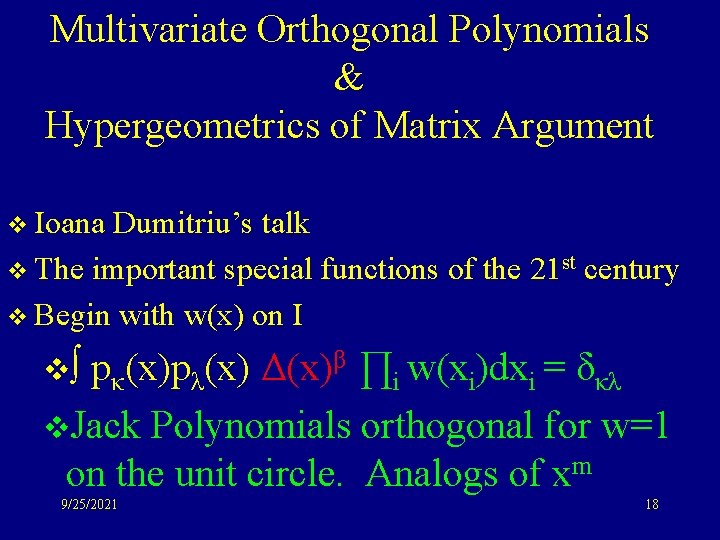

Multivariate Orthogonal Polynomials & Hypergeometrics of Matrix Argument
• Ioana Dumitriu’s talk
• The important special functions of the 21st century
• Begin with w(x) on I
• $\int p_\kappa(x)\, p_\lambda(x)\, \Delta(x)^{\beta} \prod_i w(x_i)\, dx_i = \delta_{\kappa\lambda}$
• Jack Polynomials orthogonal for w=1 on the unit circle. Analogs of $x^m$

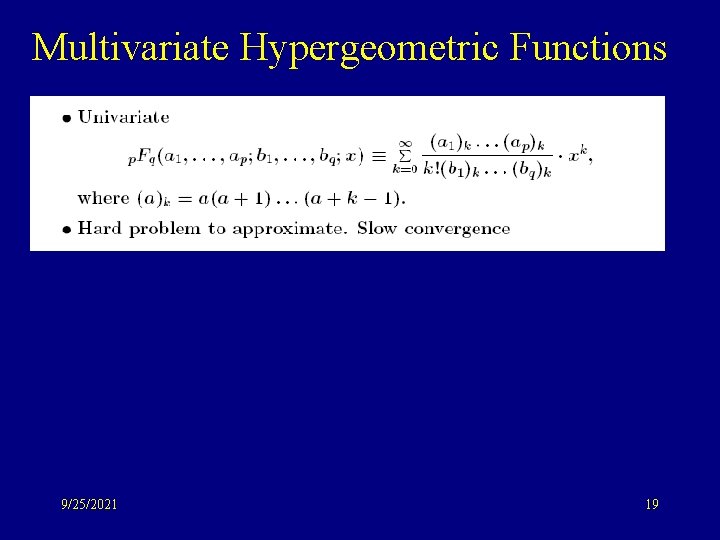

Multivariate Hypergeometric Functions

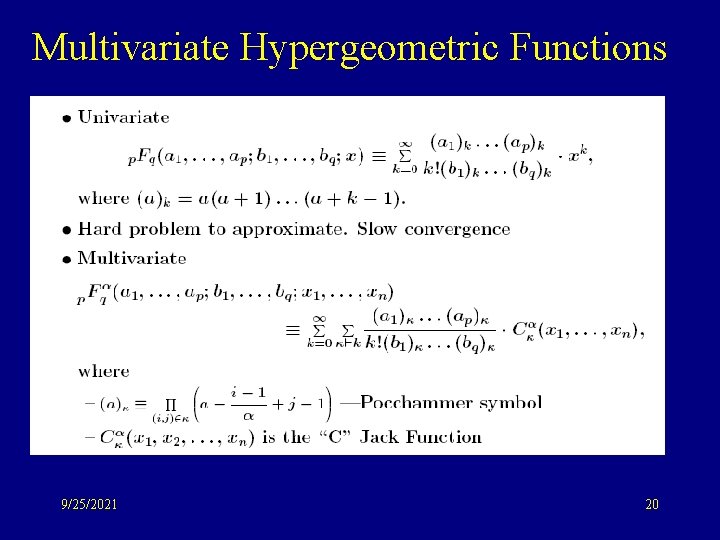

Multivariate Hypergeometric Functions

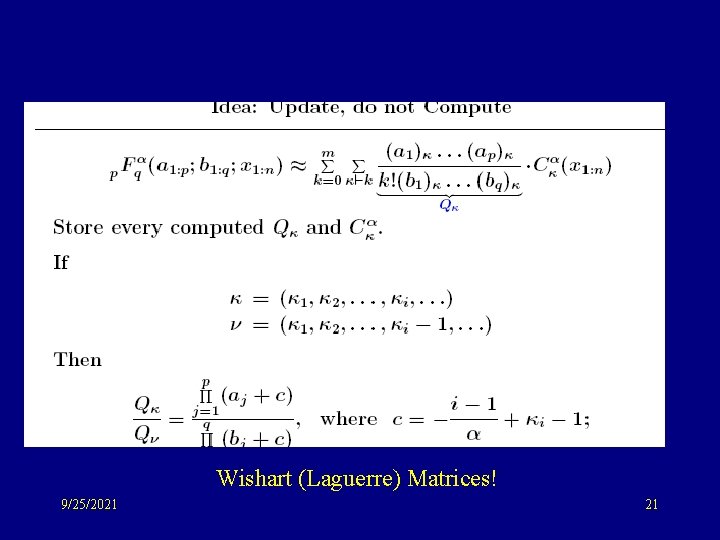

Wishart (Laguerre) Matrices!

Plamen’s clever idea

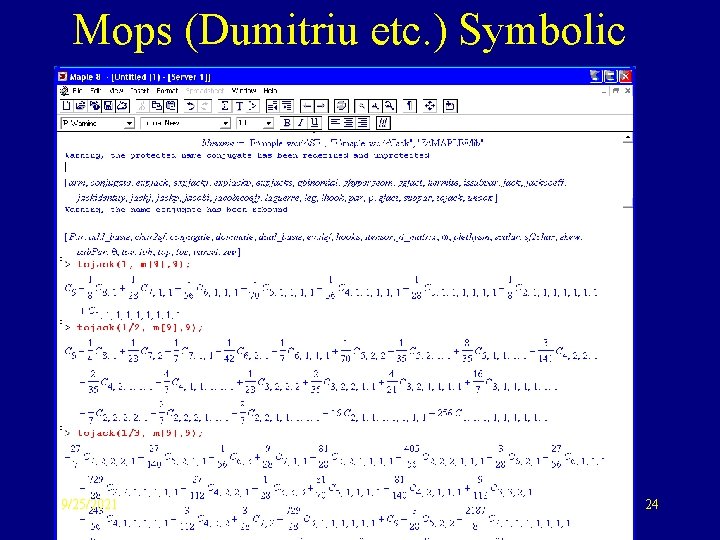

MOPS (Dumitriu etc.)
Symbolic

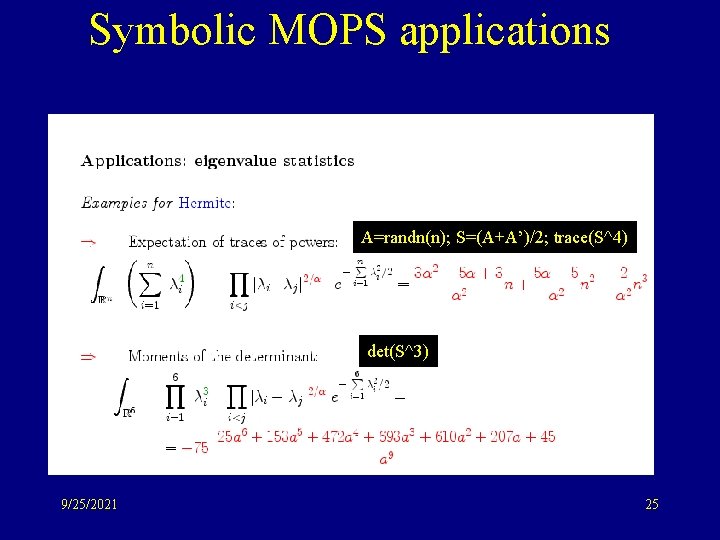

Symbolic MOPS applications
A=randn(n); S=(A+A')/2; trace(S^4) det(S^3)

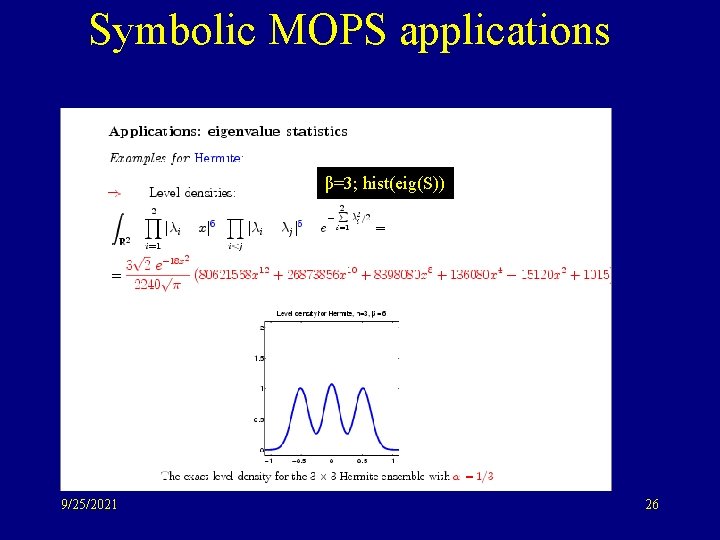

Symbolic MOPS applications
β=3; hist(eig(S))

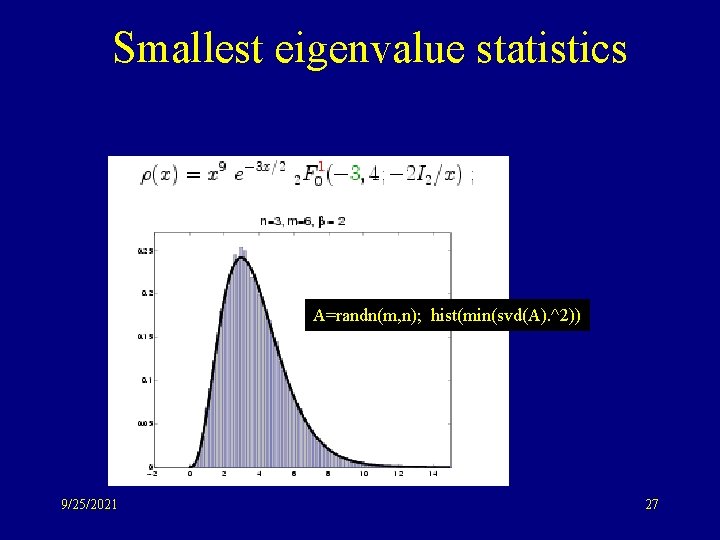

Smallest eigenvalue statistics
A=randn(m,n); hist(min(svd(A).^2))

Tools
• Motivation: A condition number problem
• Jack & Hypergeometric of Matrix Argument
• MOPS: Ioana Dumitriu’s talk
• The Polynomial Method -- Raj!
• The tridiagonal numerical 10^9 trick

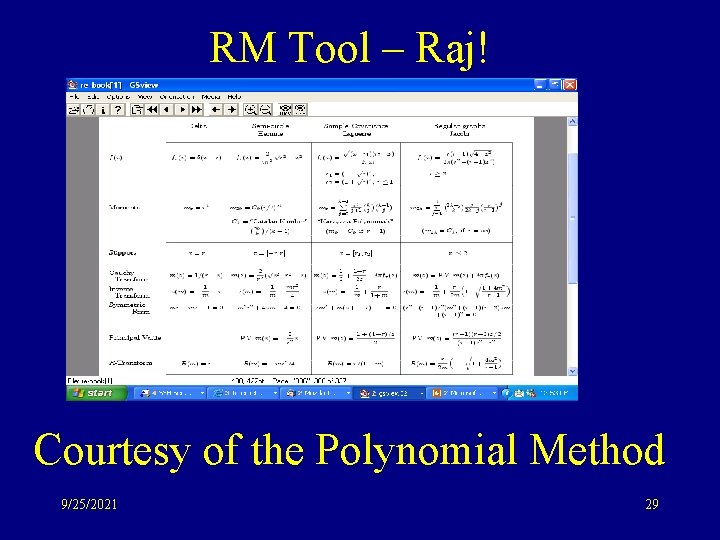

RM Tool – Raj!
Courtesy of the Polynomial Method

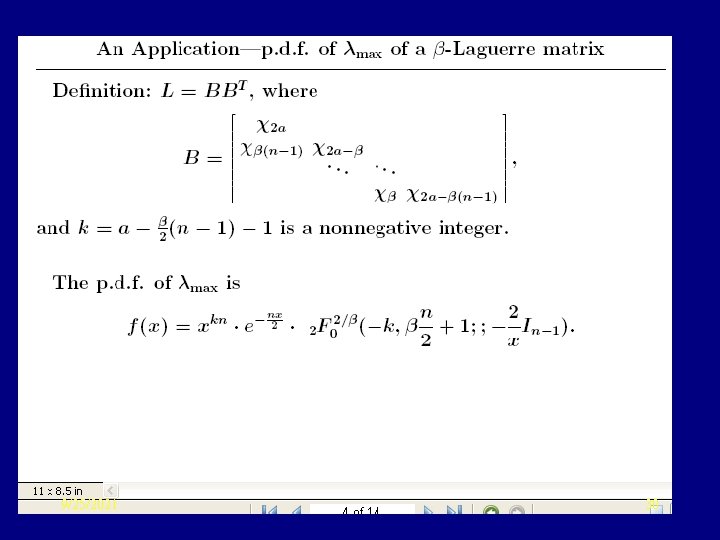

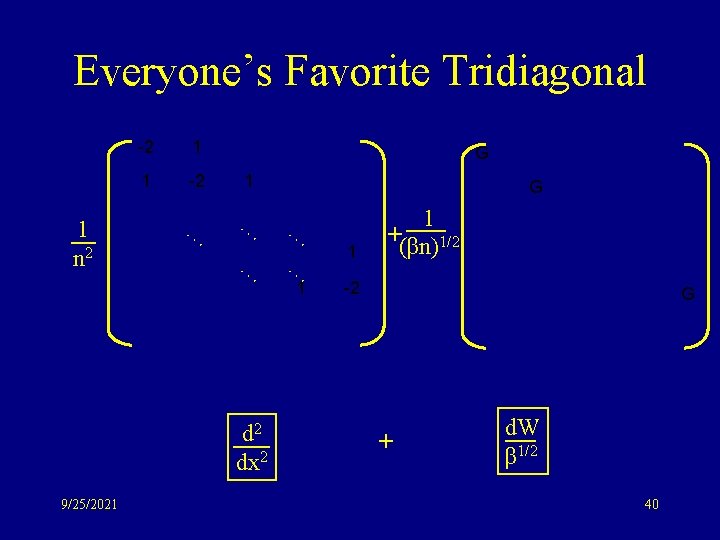

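Everyone’s Favorite Tridiagonal

$$
\frac{1}{n^2}
\begin{pmatrix}
-2 & 1 \\
1 & -2 & 1 \\
 & \ddots & \ddots & \ddots \\
 & & 1 & -2 & 1 \\
 & & & 1 & -2
\end{pmatrix}
\quad\longleftrightarrow\quad
\frac{d^2}{dx^2}
$$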

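Everyone’s Favorite Tridiagonal

$$
\frac{1}{n^2}
\begin{pmatrix}
-2 & 1 \\
1 & -2 & 1 \\
 & \ddots & \ddots & \ddots \\
 & & 1 & -2 & 1 \\
 & & & 1 & -2
\end{pmatrix}
+ \frac{1}{(\beta n)^{1/2}}
\begin{pmatrix}
G \\
 & G \\
 & & \ddots \\
 & & & G
\end{pmatrix}
\quad\longleftrightarrow\quad
\frac{d^2}{dx^2} + \frac{dW}{\beta^{1/2}}
$$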

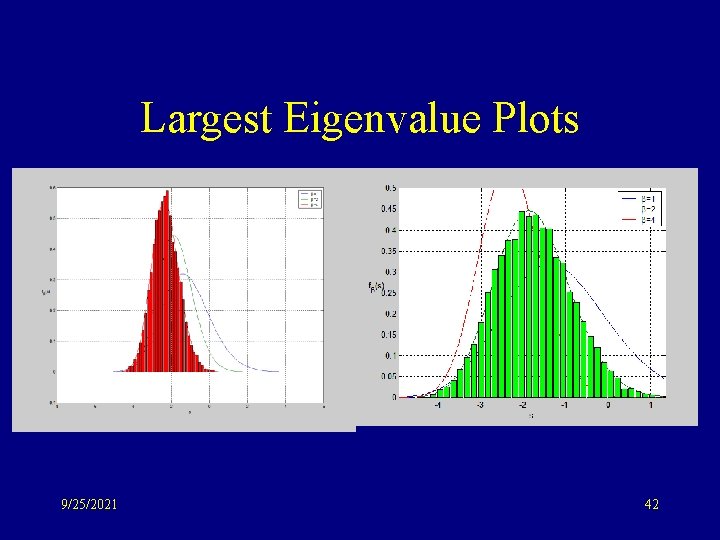

Largest Eigenvalue Plots

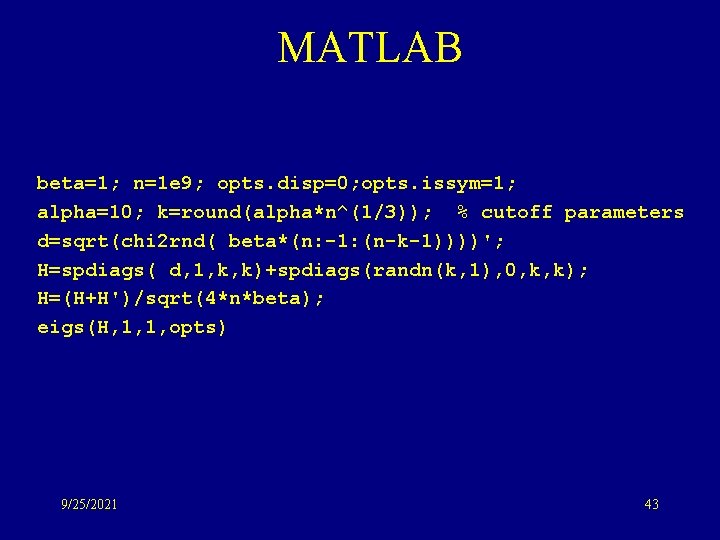

MATLAB

    beta=1; n=1e9; opts.disp=0; opts.issym=1;
    alpha=10; k=round(alpha*n^(1/3));   % cutoff parameters
    d=sqrt(chi2rnd(beta*(n:-1:(n-k-1))))';
    H=spdiags(d,1,k,k)+spdiags(randn(k,1),0,k,k);
    H=(H+H')/sqrt(4*n*beta);
    eigs(H,1,1,opts)

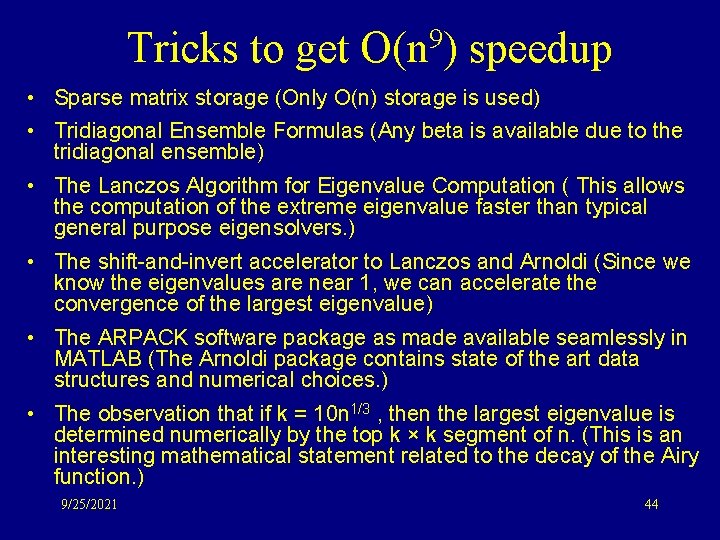

Tricks to get O(n^9) speedup
• Sparse matrix storage (Only O(n) storage is used)
• Tridiagonal Ensemble Formulas (Any beta is available due to the tridiagonal ensemble)
• The Lanczos Algorithm for Eigenvalue Computation (This allows the computation of the extreme eigenvalue faster than typical general purpose eigensolvers.)
• The shift-and-invert accelerator to Lanczos and Arnoldi (Since we know the eigenvalues are near 1, we can accelerate the convergence of the largest eigenvalue)
• The ARPACK software package as made available seamlessly in MATLAB (The Arnoldi package contains state of the art data structures and numerical choices.)
• The observation that if $k = 10\,n^{1/3}$, then the largest eigenvalue is determined numerically by the top k × k segment of n. (This is an interesting mathematical statement related to the decay of the Airy function.)
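The truncation observation in the last bullet can be checked at a size where the full matrix still fits comfortably in memory. The sketch below is an illustration under stated assumptions: n = 2000 is an arbitrary modest size, the matrices are stored densely and solved with `eig` rather than sparse `eigs`, beta is restricted to integers so that the χ samples can be built from sums of squared normals, and the helper name `chi` is hypothetical. The scaling by sqrt(4*n*beta) follows the MATLAB slide.

```matlab
% Sketch: largest eigenvalue of the full tridiagonal vs. its top k-by-k block.
beta = 1; n = 2000;
k = round(10*n^(1/3));                         % cutoff k = 10 n^(1/3), as on the slide
chi = @(df) sqrt(sum(randn(df,1).^2));         % chi sample, integer df only
d = arrayfun(chi, beta*(n-1:-1:1)');           % chi_{(n-1)beta},...,chi_beta
H = diag(2*randn(n,1)) + diag(d,1) + diag(d,-1);
H = H/sqrt(4*n*beta);                          % largest eigenvalue then sits near 1
lam_full = max(eig(H));
lam_top  = max(eig(H(1:k,1:k)));               % only the top-left block
fprintf('full %.12f, top block %.12f, diff %.2e\n', ...
        lam_full, lam_top, lam_full - lam_top);
```

Because the block is a principal submatrix, its largest eigenvalue can never exceed that of the full matrix; the point of the experiment is that the gap is negligible even though k is far smaller than n.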

Random matrix tools!
