1 Scalable Formal Dynamic Verification of MPI Programs through Distributed Causality Tracking

Dissertation Defense, Anh Vo

Committee: Prof. Ganesh Gopalakrishnan (co-advisor), Prof. Robert M. Kirby (co-advisor), Dr. Bronis R. de Supinski (LLNL), Prof. Mary Hall, and Prof. Matthew Might

2 Our computational ambitions are endless!

- Terascale
- Petascale (where we are now)
- Exascale
- Zettascale
- Correctness is important

Image credits: Jaguar, courtesy ORNL; protein folding, courtesy Wikipedia; computational astrophysics, courtesy LBL

3 Yet we are falling behind when it comes to correctness

- Concurrent software debugging is hard
- It gets harder as the degree of parallelism in applications increases
  - Node level: Message Passing Interface (MPI)
  - Core level: Threads, OpenMP, CUDA
- Hybrid programming will be the future
  - MPI + Threads
  - MPI + OpenMP
  - MPI + CUDA
- Yet tools are lagging behind!
  - Many tools cannot operate at scale

[Figure labels: MPI Apps, MPI Correctness Tools]

4 We focus on dynamic verification for MPI

- Lack of systematic verification tools for MPI
- We need to build verification tools for MPI first
  - Realistic MPI programs run at large scale
  - Downscaling might mask bugs
- MPI tools can be expanded to support hybrid programs

5 We choose MPI because of its ubiquity

- Born 1994 when the world had 600 internet sites, 700 nm lithography, 68 MHz CPUs
- Still the dominant API for HPC
  - Most widely supported and understood
  - High performance, flexible, portable

6 Thesis statement

Scalable, modular and usable dynamic verification of realistic MPI programs is feasible and novel.

7 Contributions

8 Agenda

- Motivation and Contributions
- Background
- MPI ordering based on Matches-Before
- The centralized approach: ISP
- The distributed approach: DAMPI
- Conclusions

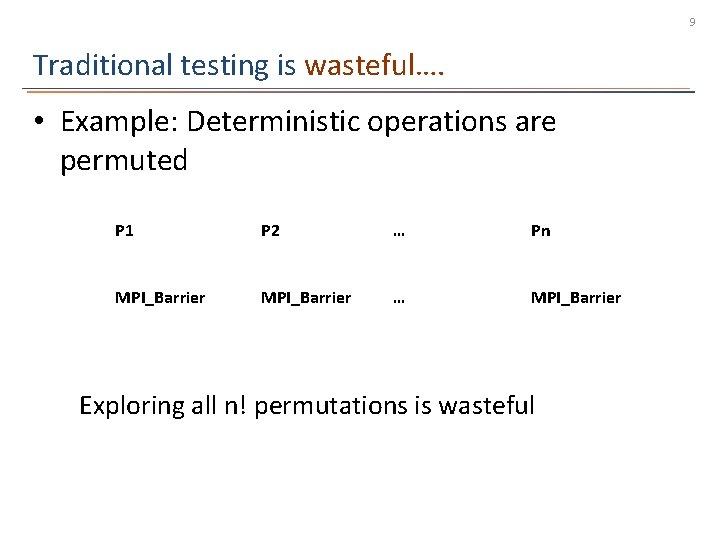

9 Traditional testing is wasteful

- Example: deterministic operations are permuted
  - P1, P2, …, Pn each call MPI_Barrier
  - Exploring all n! permutations is wasteful
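To make the cost concrete, here is a small sketch (an illustration, not part of the original slides) that counts the issue orders a naive tester would try when n processes each call MPI_Barrier once. The helper name `barrier_orders` is hypothetical.

```python
from itertools import permutations
from math import factorial

def barrier_orders(n):
    """Count the orders in which n processes could issue one barrier call each."""
    return sum(1 for _ in permutations(range(n)))

for n in (2, 4, 8):
    # Every one of these orders ends the same way: all processes leave the barrier.
    print(n, barrier_orders(n), factorial(n))
```

All of these orders are equivalent as far as the program's behavior is concerned, so a verifier only needs to explore one of them.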

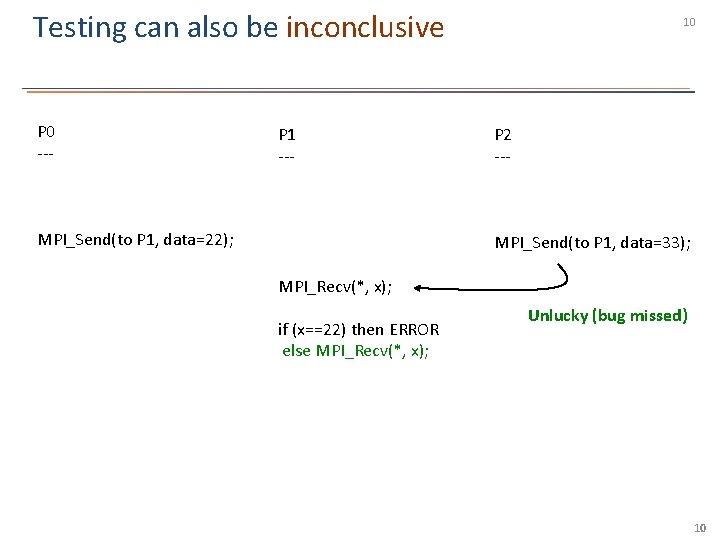

10 Testing can also be inconclusive; without nondeterminism coverage, we can miss bugs

P0 --- P1 --- P2 ---

MPI_Send(to P1…); MPI_Recv(from P0…); MPI_Send(to P1, data=22); MPI_Recv(from P2…); MPI_Send(to P1, data=33); MPI_Recv(*, x); if (x==22) then ERROR else MPI_Recv(*, x);

Unlucky (bug missed)

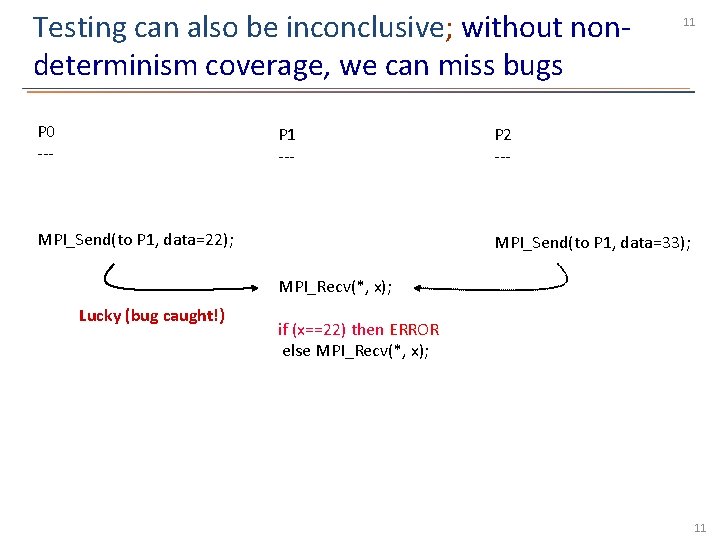

11 Testing can also be inconclusive; without nondeterminism coverage, we can miss bugs

P0 --- P1 --- P2 ---

MPI_Send(to P1…); MPI_Recv(from P0…); MPI_Send(to P1, data=22); MPI_Recv(from P2…); MPI_Send(to P1, data=33); MPI_Recv(*, x); if (x==22) then ERROR else MPI_Recv(*, x);

Lucky (bug caught!)

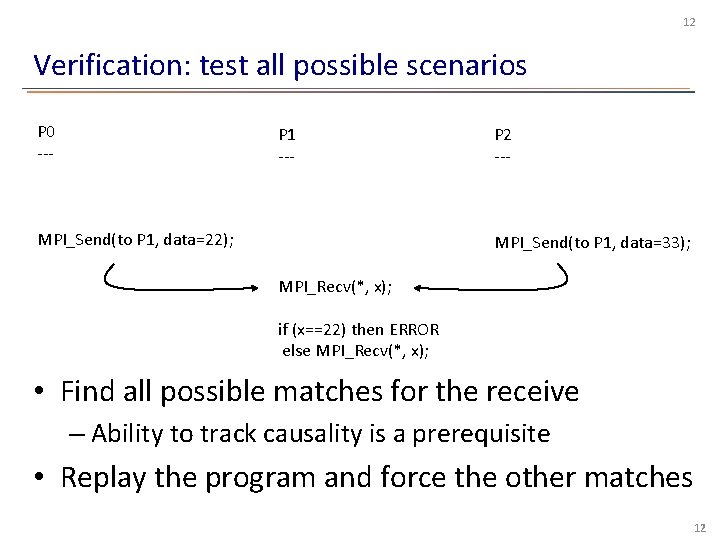

12 Verification: test all possible scenarios

P0 --- P1 --- P2 ---

MPI_Send(to P1…); MPI_Recv(from P0…); MPI_Send(to P1, data=22); MPI_Recv(from P2…); MPI_Send(to P1, data=33); MPI_Recv(*, x); if (x==22) then ERROR else MPI_Recv(*, x);

- Find all possible matches for the receive
  - Ability to track causality is a prerequisite
- Replay the program and force the other matches
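The "find all matches, then replay" idea can be sketched in a few lines. This is a toy model, not DAMPI's implementation: it assumes that when P1 posts MPI_Recv(*, x), one send carrying 22 from P0 and one carrying 33 from P2 are both eligible. The function names `run_p1` and `verify` are hypothetical.

```python
def run_p1(forced_sender, pending):
    """Replay P1 with its wildcard receive forced to match `forced_sender`."""
    x = pending[forced_sender]
    return "ERROR" if x == 22 else "ok"

def verify(pending):
    """Explore every sender that could match the wildcard receive."""
    return {sender: run_p1(sender, pending) for sender in sorted(pending)}

# Assumed sends that are eligible when P1 posts MPI_Recv(*, x).
pending = {"P0": 22, "P2": 33}
results = verify(pending)
print(results)
```

A single test run observes only one entry of `results`; forcing each alternative match in turn is what exposes the branch that raises the error.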

13 Dynamic verification of MPI

- Dynamic verification combines the strengths of formal methods and testing
  - Avoids generating false alarms
  - Finds bugs with respect to actual binaries
- Builds on the familiar approach of "testing"
- Guarantees coverage over nondeterminism

14 Overview of Message Passing Interface (MPI)

- An API specification for communication protocols between processes
- Allows developers to write high performance and portable parallel code
- Rich in features
  - Synchronous: easy to use and understand
  - Asynchronous: high performance
  - Nondeterministic constructs: reduce code complexity

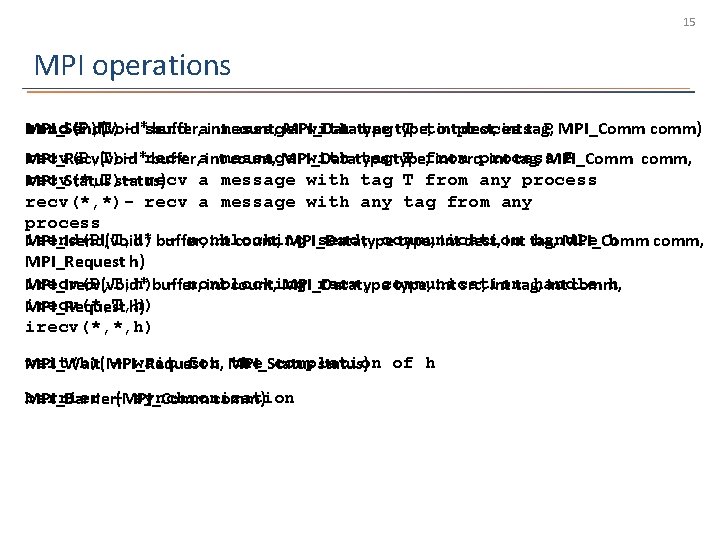

15 MPI operations

- send(P, T): send a message with tag T to P
  - MPI_Send(void* buffer, int count, MPI_Datatype type, int dest, int tag, MPI_Comm comm)
- recv(P, T): recv a message with tag T from P
  - MPI_Recv(void* buffer, int count, MPI_Datatype type, int src, int tag, MPI_Comm comm, MPI_Status* status)
- recv(*, T): recv a message with tag T from any process
- recv(*, *): recv a message with any tag from any process
- isend(P, T, h): nonblocking send, communication handle h
  - MPI_Isend(void* buffer, int count, MPI_Datatype type, int dest, int tag, MPI_Comm comm, MPI_Request* h)
- irecv(P, T, h): nonblocking recv, communication handle h
  - MPI_Irecv(void* buffer, int count, MPI_Datatype type, int src, int tag, MPI_Comm comm, MPI_Request* h)
- irecv(*, T, h) and irecv(*, *, h): nonblocking wildcard receives
- wait(h): wait for the completion of h
  - MPI_Wait(MPI_Request* h, MPI_Status* status)
- barrier: synchronization
  - MPI_Barrier(MPI_Comm comm)

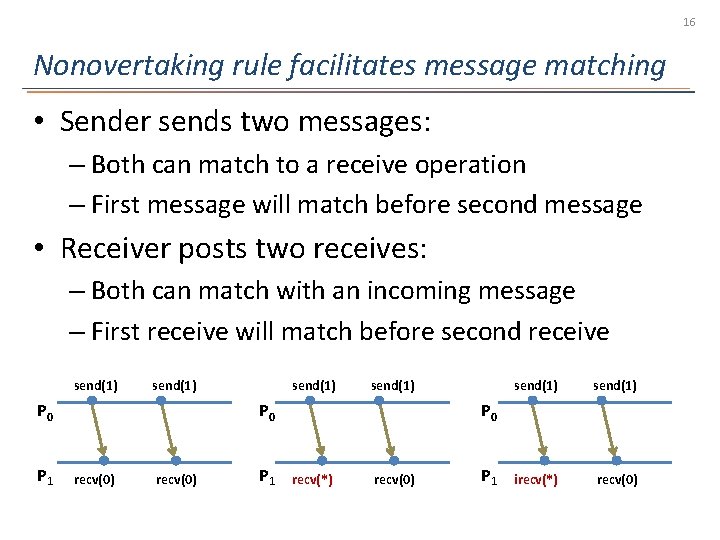

16 Nonovertaking rule facilitates message matching

- Sender sends two messages:
  - Both can match to a receive operation
  - First message will match before second message
- Receiver posts two receives:
  - Both can match with an incoming message
  - First receive will match before second receive

[Diagrams: P0 issues two send(1) calls; P1 posts recv(0), irecv(*), or recv(*) followed by recv(0)]
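One way to picture the non-overtaking rule is a mailbox that keeps one FIFO queue per sender, so only the oldest pending message from each sender is ever eligible. The sketch below is a simplified model (tags and communicators are ignored), and the `Mailbox` class is a hypothetical name introduced for illustration.

```python
from collections import deque

class Mailbox:
    """Per-receiver message store that keeps one FIFO queue per sender."""

    def __init__(self):
        self.queues = {}

    def deliver(self, sender, payload):
        self.queues.setdefault(sender, deque()).append(payload)

    def candidates(self, source=None):
        """Return the (sender, payload) pairs a receive may match right now.

        source=None models a wildcard receive. Only the oldest message from
        each sender is eligible, which is the non-overtaking rule.
        """
        return [(s, q[0]) for s, q in sorted(self.queues.items())
                if q and source in (None, s)]

    def recv(self, source=None):
        # This sketch picks the first candidate; a real MPI runtime may pick
        # any of them for a wildcard receive. Raises IndexError if none exist.
        sender, payload = self.candidates(source)[0]
        self.queues[sender].popleft()
        return sender, payload

box = Mailbox()
box.deliver(0, "m1")
box.deliver(0, "m2")
box.deliver(2, "m3")
print(box.candidates())        # wildcard: one candidate per sender
print(box.recv(0), box.recv(0))
```

The second message from sender 0 never appears as a candidate until the first has been matched, which is what lets a verifier narrow down the set of possible matches.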

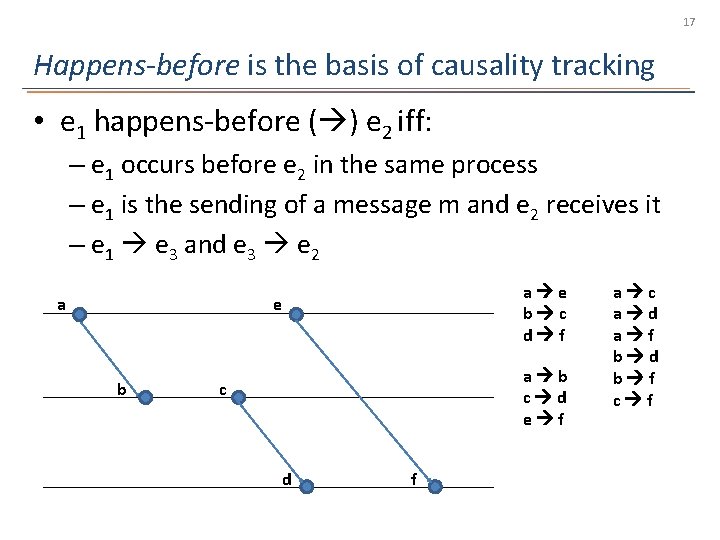

17 Happens-before is the basis of causality tracking

- e1 happens-before (→) e2 iff:
  - e1 occurs before e2 in the same process
  - e1 is the sending of a message m and e2 receives it
  - there is an event e3 with e1 → e3 and e3 → e2

[Diagram: events a to f across three processes; orderings shown include a → c, a → d, a → f, b → d, b → f, c → f]

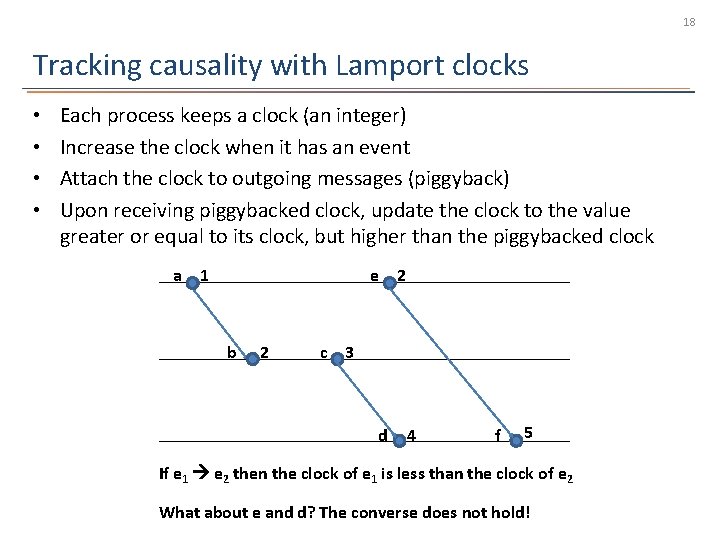

18 Tracking causality with Lamport clocks

- Each process keeps a clock (an integer)
- Increase the clock when it has an event
- Attach the clock to outgoing messages (piggyback)
- Upon receiving a piggybacked clock, update the local clock to a value that is at least the local clock and strictly higher than the piggybacked clock

[Diagram clock values: a = 1, b = 2, e = 2, c = 3, d = 4, f = 5]

If e1 → e2 then the clock of e1 is less than the clock of e2. What about e and d? The converse does not hold!
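The clock rules can be sketched as a short simulation. The event layout is an assumption inferred from the clock values on this slide: P0 performs a then e, P1 performs b then c, P2 performs d then f, with messages a to b, c to d, and e to f. The function name `lamport_run` is hypothetical.

```python
def lamport_run(events):
    """Assign Lamport clocks to events listed in an order consistent with causality.

    Each event is (name, process, kind, partner); kind is "send" or "recv",
    and partner names the matching send for a receive (None otherwise).
    """
    clock = {}   # current clock per process
    stamp = {}   # clock assigned to each event
    for name, proc, kind, partner in events:
        local = clock.get(proc, 0)
        if kind == "recv":
            # Move past both the local clock and the piggybacked clock.
            local = max(local, stamp[partner])
        clock[proc] = local + 1
        stamp[name] = clock[proc]
    return stamp

# Assumed layout: messages a->b, c->d, e->f across processes 0, 1, 2.
events = [
    ("a", 0, "send", None),
    ("b", 1, "recv", "a"),
    ("e", 0, "send", None),
    ("c", 1, "send", None),
    ("d", 2, "recv", "c"),
    ("f", 2, "recv", "e"),
]
stamps = lamport_run(events)
print(stamps)
```

Under this layout e gets clock 2 and d gets clock 4 even though neither event causally precedes the other, which illustrates why a smaller Lamport clock does not imply happens-before.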

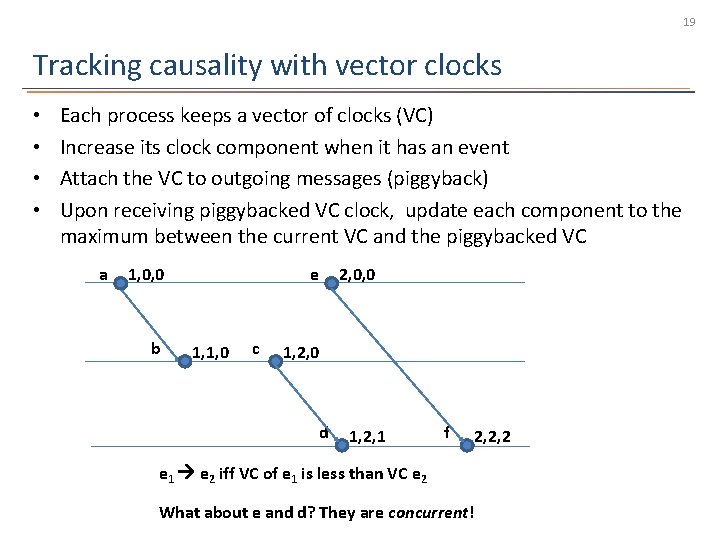

19 Tracking causality with vector clocks

- Each process keeps a vector of clocks (VC)
- Increase its own clock component when it has an event
- Attach the VC to outgoing messages (piggyback)
- Upon receiving a piggybacked VC, update each component to the maximum of the current VC and the piggybacked VC

[Diagram clock values: a = (1, 0, 0), b = (1, 1, 0), e = (2, 0, 0), c = (1, 2, 0), d = (1, 2, 1), f = (2, 2, 2)]

e1 → e2 iff the VC of e1 is less than the VC of e2. What about e and d? They are concurrent!
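A minimal vector-clock sketch follows, again assuming the event layout inferred from the slide's values (messages a to b, c to d, e to f across processes 0, 1, 2). The names `vc_run`, `happens_before`, and `concurrent` are hypothetical helpers.

```python
def vc_run(events, nprocs):
    """Assign vector clocks to events listed in an order consistent with causality."""
    clock = {p: [0] * nprocs for p in range(nprocs)}
    stamp = {}
    for name, proc, kind, partner in events:
        vc = list(clock[proc])
        if kind == "recv":
            # Component-wise maximum with the piggybacked vector.
            vc = [max(x, y) for x, y in zip(vc, stamp[partner])]
        vc[proc] += 1          # tick this process's own component
        clock[proc] = vc
        stamp[name] = tuple(vc)
    return stamp

def happens_before(u, v):
    """u < v: every component is <= and the vectors differ."""
    return u != v and all(x <= y for x, y in zip(u, v))

def concurrent(u, v):
    return not happens_before(u, v) and not happens_before(v, u)

# Assumed layout: messages a->b, c->d, e->f across processes 0, 1, 2.
events = [
    ("a", 0, "send", None),
    ("b", 1, "recv", "a"),
    ("e", 0, "send", None),
    ("c", 1, "send", None),
    ("d", 2, "recv", "c"),
    ("f", 2, "recv", "e"),
]
vcs = vc_run(events, 3)
print(vcs["e"], vcs["d"], concurrent(vcs["e"], vcs["d"]))
```

The price of this precision is that every message carries one integer per process, which is the scalability concern raised later in the talk.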

20 Agenda

- Motivation and Contributions
- Background
- Matches-Before for MPI
- The centralized approach: ISP
- The distributed approach: DAMPI
- Conclusions

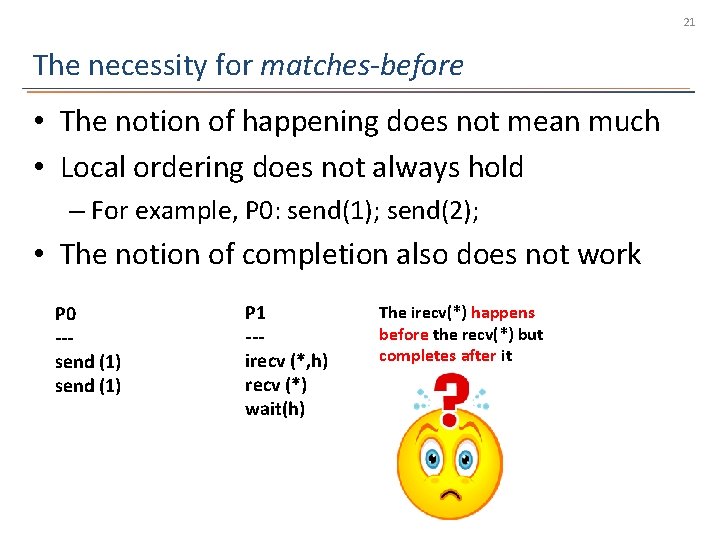

21 The necessity for matches-before

- The notion of happening does not mean much
- Local ordering does not always hold
  - For example, P0: send(1); send(2);
- The notion of completion also does not work
  - P0: send(1)
  - P1: irecv(*, h); recv(*); wait(h)
  - The irecv(*) happens before the recv(*) but completes after it

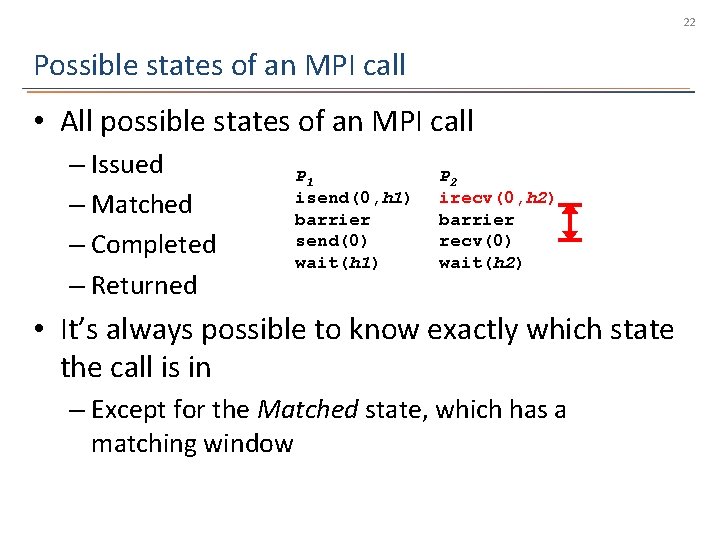

22 Possible states of an MPI call

- All possible states of an MPI call
  - Issued
  - Matched
  - Completed
  - Returned
- It's always possible to know exactly which state the call is in
  - Except for the Matched state, which has a matching window

Example:

- P1: isend(0, h1); barrier; send(0); wait(h1)
- P2: irecv(0, h2); barrier; recv(0); wait(h2)

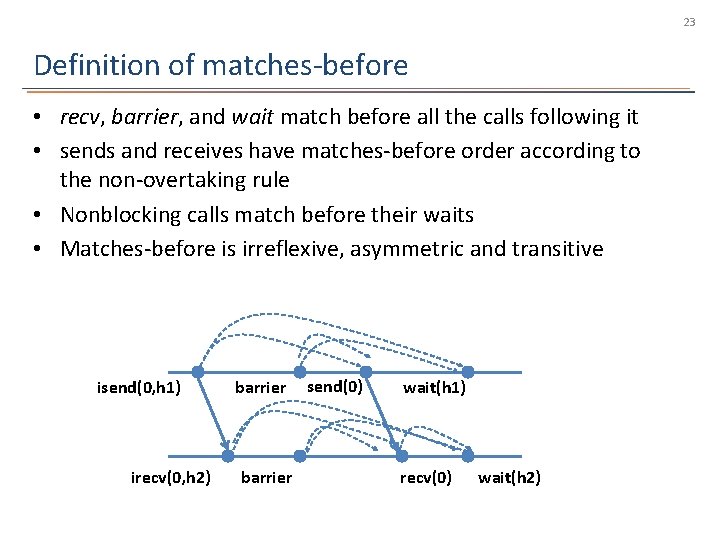

23 Definition of matches-before

- recv, barrier, and wait match before all the calls following them
- Sends and receives have matches-before order according to the non-overtaking rule
- Nonblocking calls match before their waits
- Matches-before is irreflexive, asymmetric and transitive

[Diagram: matches-before edges over isend(0, h1), barrier, send(0), wait(h1) and irecv(0, h2), barrier, recv(0), wait(h2)]
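The rules above can be read as a procedure for deriving the direct matches-before edges within one process. The sketch below is a simplified reading, not the thesis's formal definition: in particular, it assumes two receives are ordered when the earlier one is a wildcard or names the same source, and it does not compute the transitive closure. All names are hypothetical.

```python
BLOCKING = {"recv", "barrier", "wait"}

def is_send(op):
    return op["kind"] in {"send", "isend"}

def is_recv(op):
    return op["kind"] in {"recv", "irecv"}

def matches_before(ops):
    """Return direct intra-process matches-before edges as index pairs (i, j), i < j.

    Each op is a dict with "kind" plus optional "peer" (None = wildcard)
    and "handle" keys.
    """
    edges = set()
    for i, a in enumerate(ops):
        for j in range(i + 1, len(ops)):
            b = ops[j]
            if a["kind"] in BLOCKING:
                edges.add((i, j))      # recv, barrier, wait precede all later calls
            elif is_send(a) and is_send(b) and a.get("peer") == b.get("peer"):
                edges.add((i, j))      # non-overtaking: sends to the same peer
            elif is_recv(a) and is_recv(b) and a.get("peer") in (None, b.get("peer")):
                edges.add((i, j))      # assumed receive-side non-overtaking rule
            elif (b["kind"] == "wait" and a.get("handle") is not None
                  and a.get("handle") == b.get("handle")):
                edges.add((i, j))      # nonblocking call precedes its wait
    return edges

# P1 from the slide: isend(0, h1); barrier; send(0); wait(h1)
p1 = [
    {"kind": "isend", "peer": 0, "handle": "h1"},
    {"kind": "barrier"},
    {"kind": "send", "peer": 0},
    {"kind": "wait", "handle": "h1"},
]
print(sorted(matches_before(p1)))
```

Note that the isend is not ordered before the barrier: a nonblocking call may match after later calls have been issued, which is exactly why issue order alone is not enough.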

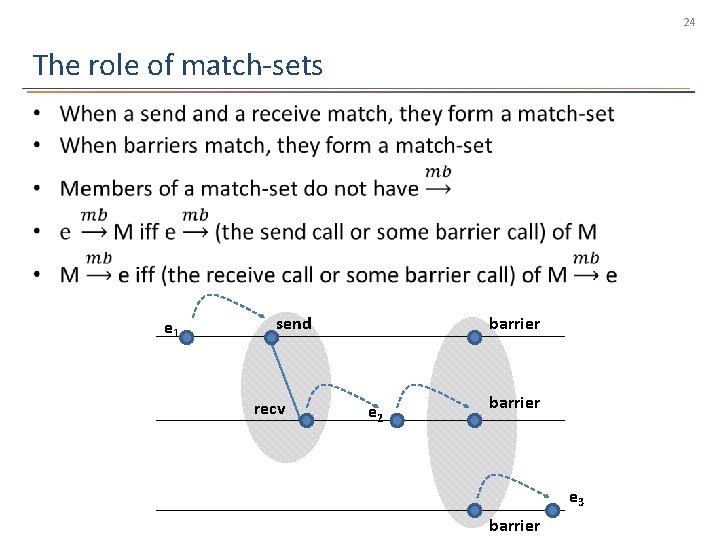

24 The role of match-sets

[Diagram labels: e1, e2, e3; send, recv; barrier, barrier, barrier]

25 Agenda

- Motivation and Contributions
- Background
- Matches-Before for MPI
- The centralized approach: ISP
- The distributed approach: DAMPI
- Conclusions

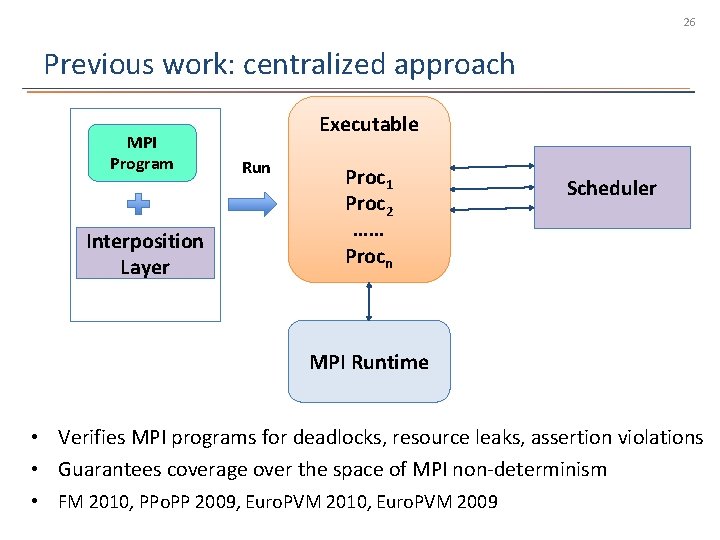

26 Previous work: centralized approach

[Diagram: the MPI program is built with an interposition layer into an executable; processes Proc 1, Proc 2, …, Proc n run under a scheduler on top of the MPI runtime]

- Verifies MPI programs for deadlocks, resource leaks, assertion violations
- Guarantees coverage over the space of MPI non-determinism
- FM 2010, PPoPP 2009, EuroPVM 2010, EuroPVM 2009

27 Drawbacks of ISP

- Scales only up to 32-64 processes
- Large programs (of 1000s of processes) often exhibit bugs that are not triggered at small scale
  - Index out of bounds
  - Buffer overflows
  - MPI implementation bugs
- Need a truly in-situ verification method for codes deployed on large-scale clusters!
  - Verify an MPI program as deployed on a cluster

28 Agenda

- Motivation and Contributions
- Background
- Matches-Before for MPI
- The centralized approach: ISP
- The distributed approach: DAMPI
- Conclusions

29 DAMPI

- Distributed Analyzer of MPI Programs
- Dynamic verification focusing on coverage over the space of MPI non-determinism
- Verification occurs on the actual deployed code
- DAMPI's features:
  - Detect and enforce alternative outcomes
  - Scalable
  - User-configurable coverage

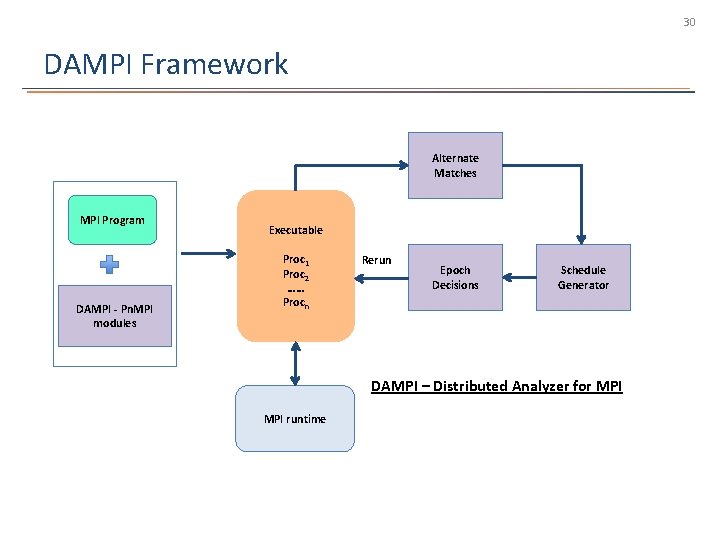

30 DAMPI Framework

[Diagram: the MPI program and the DAMPI PnMPI modules form an executable that runs as Proc 1, Proc 2, …, Proc n on the MPI runtime; alternate matches go to the schedule generator, which produces epoch decisions for a rerun]

DAMPI: Distributed Analyzer for MPI

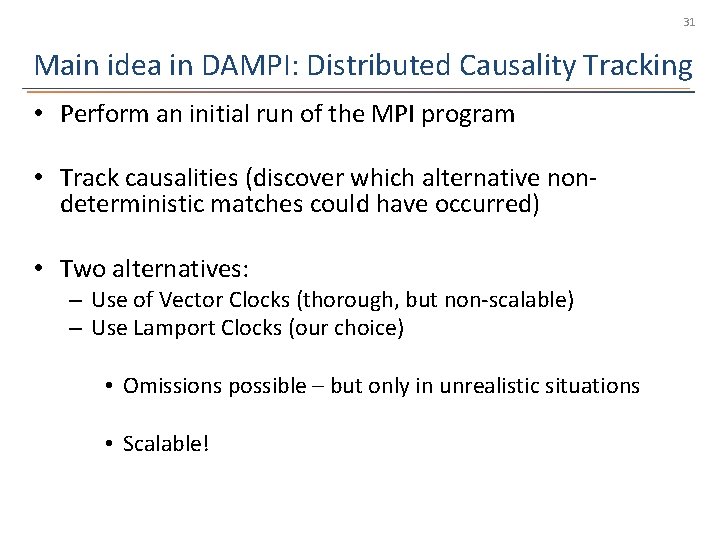

31 Main idea in DAMPI: Distributed Causality Tracking

- Perform an initial run of the MPI program
- Track causalities (discover which alternative nondeterministic matches could have occurred)
- Two alternatives:
  - Use Vector Clocks (thorough, but non-scalable)
  - Use Lamport Clocks (our choice)
    - Omissions possible, but only in unrealistic situations
    - Scalable!

![DAMPI uses Lamport clocks to maintain Matches-Before](http://slidetodoc.com/presentation_image/2d52f23e7bf1282ce5cb6a4d3feb1929/image-32.jpg)

32 DAMPI uses Lamport clocks to maintain Matches-Before

- Use Lamport clocks to track Matches-Before
  - Each process keeps a logical clock
  - Attaches the clock to each outgoing message
  - Increases it after a nondeterministic receive has matched
- Matches-Before (Mb) allows us to infer when irecvs match
  - Compare the incoming clock to detect potential matches

[Diagram labels, clock values in brackets. P0: barrier [0], send(1) [0]. P1: irecv(*) [0], barrier [0], recv(*) [1], wait [2]. P2: send(1) [0], barrier [0]. Callout: "Excuse me, why is the second send RED?"]
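The clock comparison can be sketched as follows. This is a hedged reading of the slide rather than DAMPI's actual code: it assumes each wildcard receive is recorded with the Lamport clock it was posted at, and that an incoming send whose piggybacked clock is less than or equal to that value could not have been caused by the receive's match, so it counts as a potential alternative. Tags, communicators, and the non-overtaking filter are ignored, and `potential_matches` is a hypothetical name.

```python
def potential_matches(wildcard_recvs, send_clock):
    """Return the wildcard receives that an incoming send could have matched.

    wildcard_recvs maps a receive's name to the Lamport clock it was posted at.
    Assumption: a send with piggybacked clock <= that value is a candidate.
    """
    return sorted(name for name, clock in wildcard_recvs.items()
                  if send_clock <= clock)

# Clock values taken from the slide: irecv(*) posted at 0, recv(*) posted at 1.
recvs = {"irecv(*)": 0, "recv(*)": 1}
print(potential_matches(recvs, 0))   # a send stamped 0 is a candidate for both
print(potential_matches(recvs, 1))   # a send stamped 1 only for the later receive
```

A send stamped with a clock higher than a receive's value must have been issued after observing that receive's match, so forcing it as an alternative would be an impossible schedule.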

![How we use Matches-Before relationship to detect alternative matches](http://slidetodoc.com/presentation_image/2d52f23e7bf1282ce5cb6a4d3feb1929/image-33.jpg)

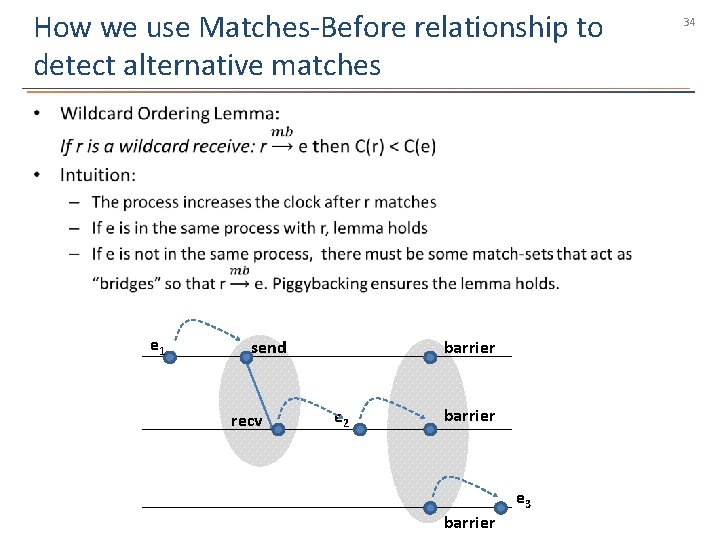

33 How we use Matches-Before relationship to detect alternative matches

[Diagram labels, clock values in brackets. P0: barrier [0], send(1) [0]. P1: irecv(*) [0], barrier [0], recv(*) [1], wait [2]. P2: send(1) [0], barrier [0].]

34 How we use Matches-Before relationship to detect alternative matches

[Diagram labels: e1, e2, e3; send, recv; barrier, barrier, barrier]

![How we use Matches-Before relationship to detect alternative matches](http://slidetodoc.com/presentation_image/2d52f23e7bf1282ce5cb6a4d3feb1929/image-35.jpg)

35 How we use Matches-Before relationship to detect alternative matches

[Diagram labels, clock values in brackets. P0: barrier [0], send(1) [0]. P1: R = irecv(*) [0], barrier [0], R' = recv(*) [1], wait [2]. P2: S = send(1) [0], barrier [0].]

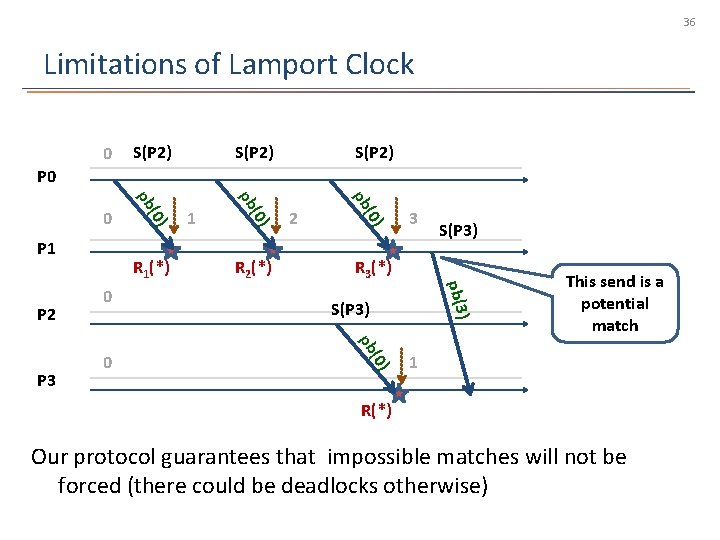

36 Limitations of Lamport Clock

[Diagram: processes P0 to P3 with sends S(P2), S(P3), S(P3) and wildcard receives R1(*), R2(*), R3(*), R(*); messages carry piggybacked clocks pb(0) and pb(3). Callout: "This send is a potential match".]

Our protocol guarantees that impossible matches will not be forced (there could be deadlocks otherwise)

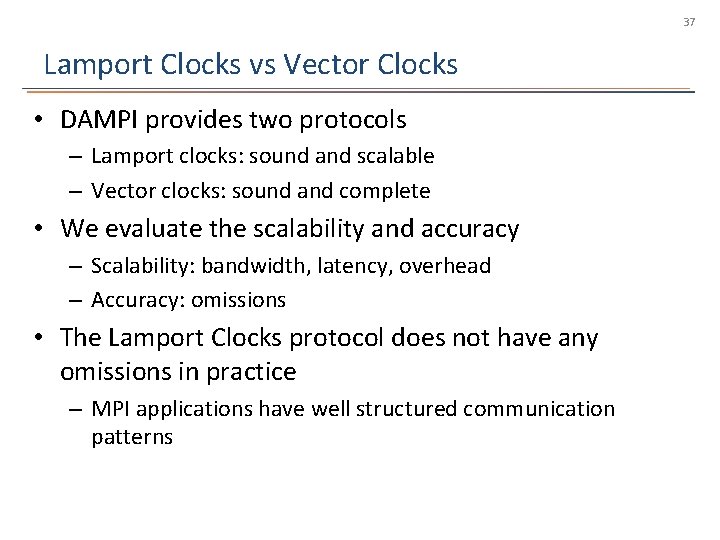

37 Lamport Clocks vs Vector Clocks

- DAMPI provides two protocols
  - Lamport clocks: sound and scalable
  - Vector clocks: sound and complete
- We evaluate the scalability and accuracy
  - Scalability: bandwidth, latency, overhead
  - Accuracy: omissions
- The Lamport Clocks protocol does not have any omissions in practice
  - MPI applications have well structured communication patterns

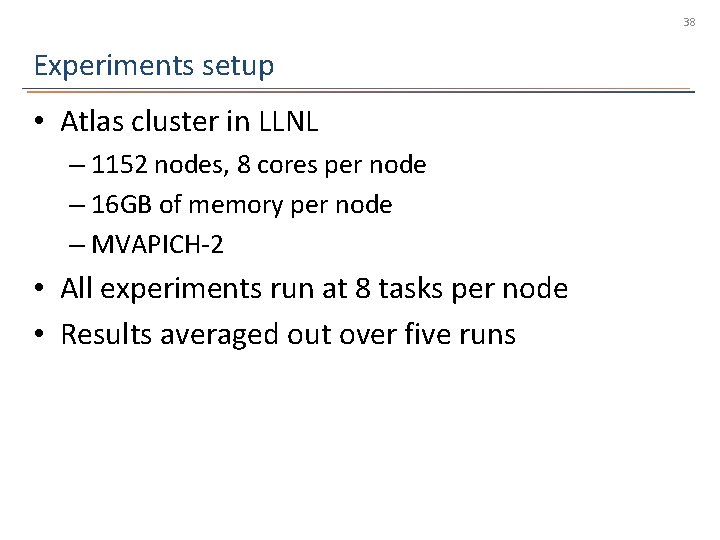

38 Experimental setup

- Atlas cluster at LLNL
  - 1152 nodes, 8 cores per node
  - 16 GB of memory per node
  - MVAPICH-2
- All experiments run at 8 tasks per node
- Results averaged over five runs

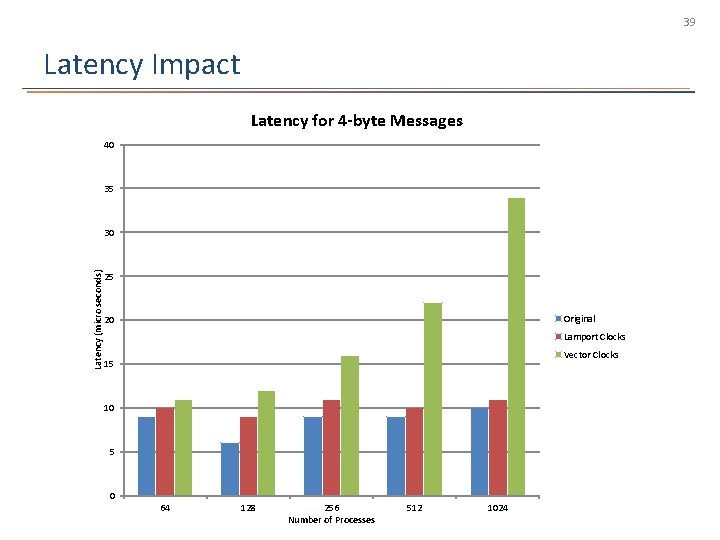

39 Latency Impact

[Chart: Latency for 4-byte Messages. Y axis: latency (microseconds), 0 to 40. X axis: number of processes (64, 128, 256, 512, 1024). Series: Original, Lamport Clocks, Vector Clocks.]

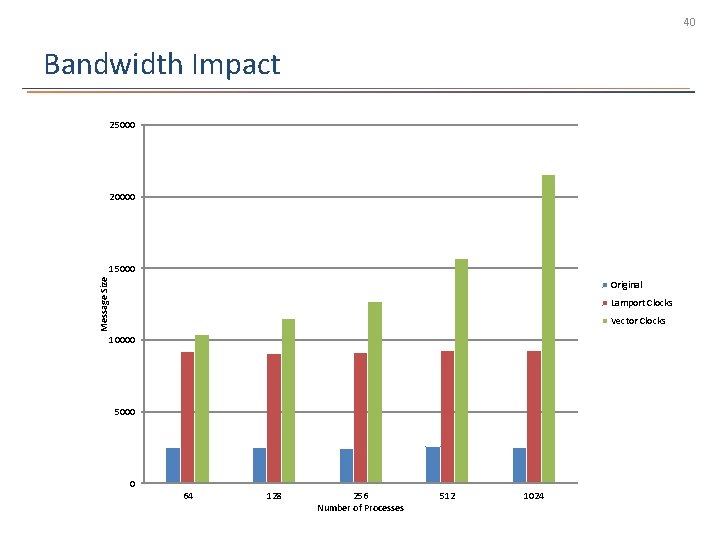

40 Bandwidth Impact

[Chart: Y axis labeled "Message Size", 0 to 25000. X axis: number of processes (64, 128, 256, 512, 1024). Series: Original, Lamport Clocks, Vector Clocks.]

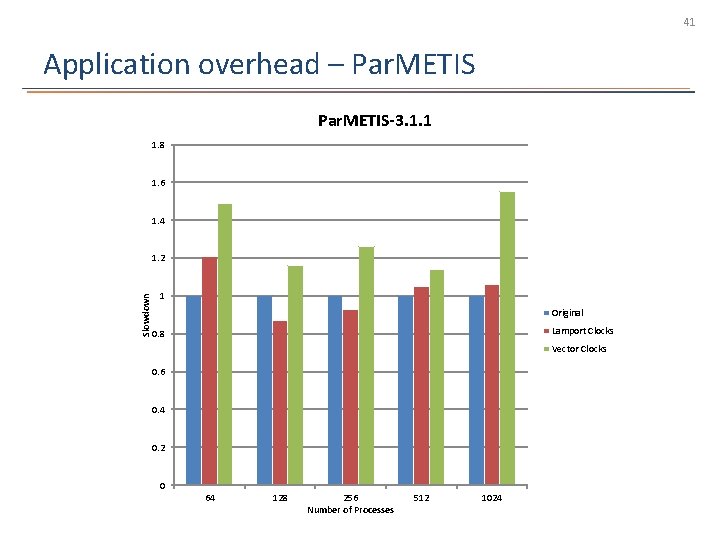

41 Application overhead – ParMETIS-3.1.1

[Chart: Y axis: slowdown, 0 to 1.8. X axis: number of processes (64, 128, 256, 512, 1024). Series: Original, Lamport Clocks, Vector Clocks.]

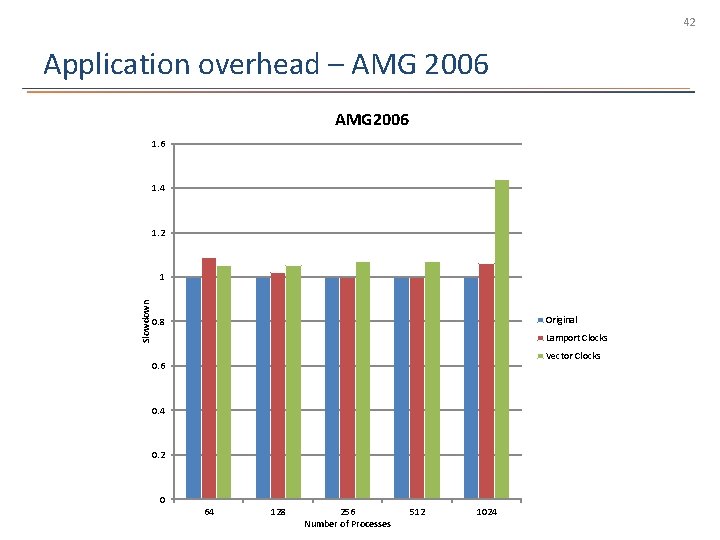

42 Application overhead – AMG2006

[Chart: Y axis: slowdown, 0 to 1.4. X axis: number of processes (64, 128, 256, 512, 1024). Series: Original, Lamport Clocks, Vector Clocks.]

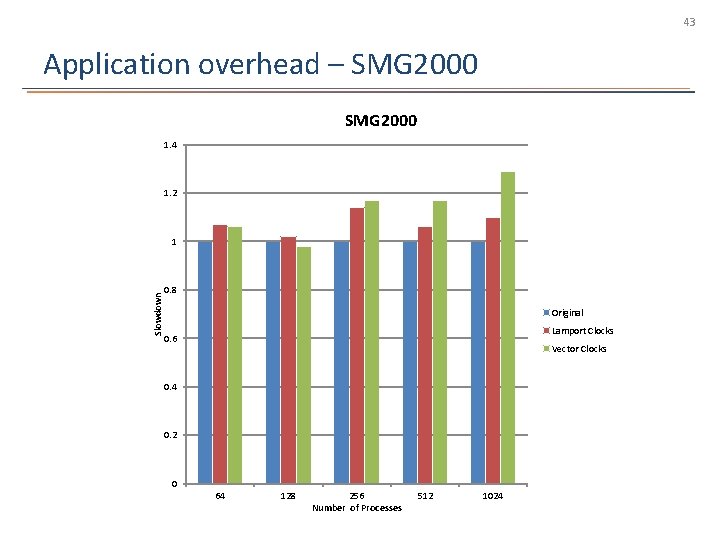

43 Application overhead – SMG2000

[Chart: Y axis: slowdown, 0 to 1.4. X axis: number of processes (64, 128, 256, 512, 1024). Series: Original, Lamport Clocks, Vector Clocks.]

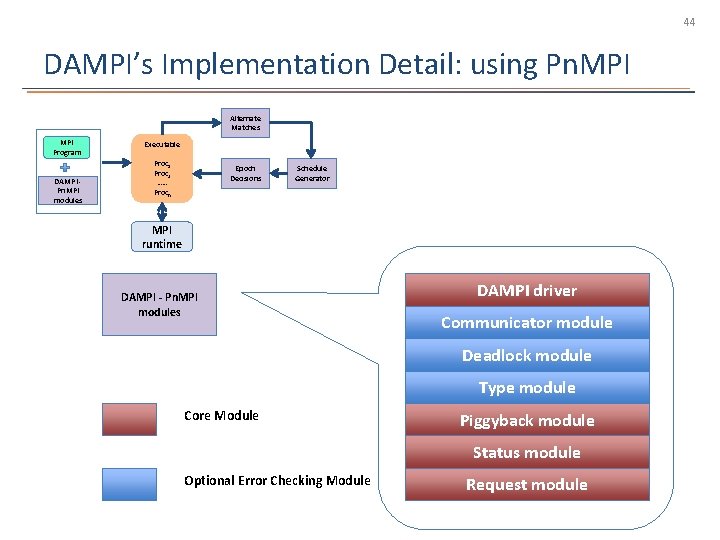

44 DAMPI's Implementation Detail: using PnMPI

[Diagram: the MPI program and the DAMPI PnMPI modules form an executable that runs as Proc 1, Proc 2, …, Proc n on the MPI runtime; alternate matches go to the schedule generator, which produces epoch decisions]

DAMPI PnMPI modules:

- DAMPI driver
- Core module
- Piggyback module
- Communicator module
- Type module
- Status module
- Request module
- Deadlock module
- Optional error checking module

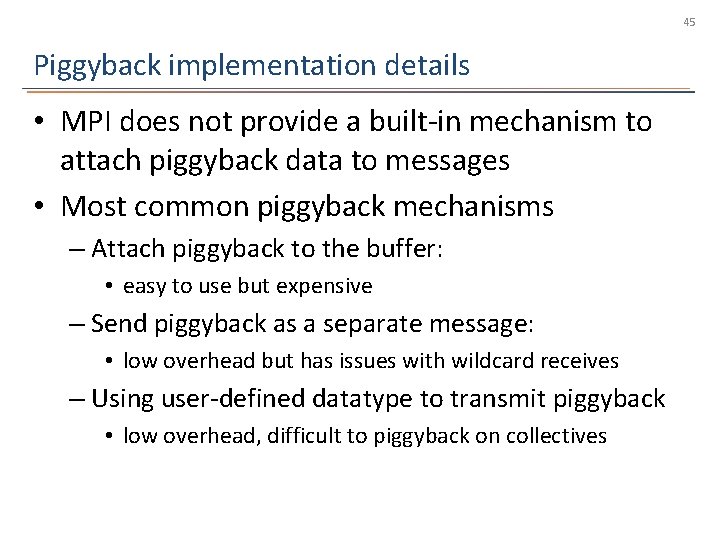

45 Piggyback implementation details

- MPI does not provide a built-in mechanism to attach piggyback data to messages
- Most common piggyback mechanisms
  - Attach piggyback to the buffer: easy to use but expensive
  - Send piggyback as a separate message: low overhead but has issues with wildcard receives
  - Use a user-defined datatype to transmit piggyback: low overhead, difficult to piggyback on collectives

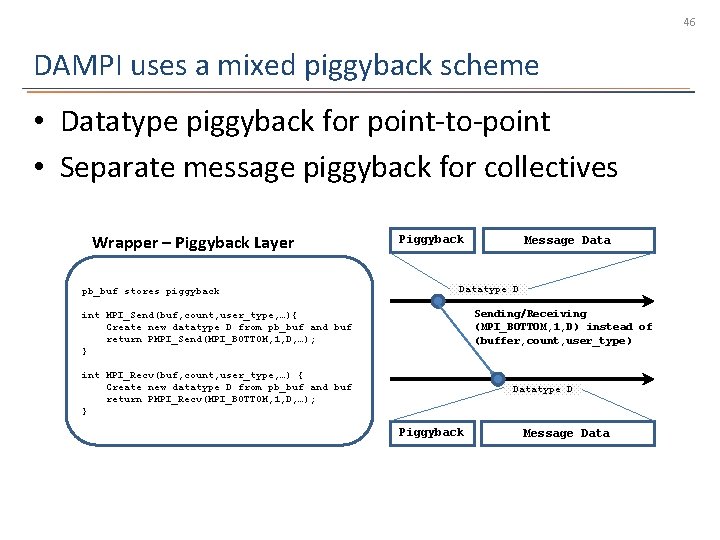

46 DAMPI uses a mixed piggyback scheme

- Datatype piggyback for point-to-point
- Separate message piggyback for collectives

Wrapper (piggyback layer): pb_buf stores the piggyback. Datatype D combines the piggyback and the message data, so the wrapper sends or receives (MPI_BOTTOM, 1, D) instead of (buffer, count, user_type).

    int MPI_Send(buf, count, user_type, …) {
        /* Create new datatype D from pb_buf and buf */
        return PMPI_Send(MPI_BOTTOM, 1, D, …);
    }

    int MPI_Recv(buf, count, user_type, …) {
        /* Create new datatype D from pb_buf and buf */
        return PMPI_Recv(MPI_BOTTOM, 1, D, …);
    }

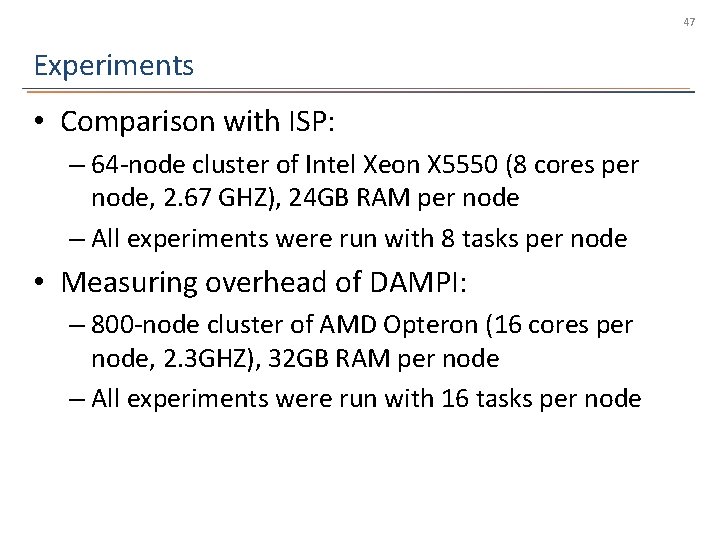

47 Experiments

- Comparison with ISP:
  - 64-node cluster of Intel Xeon X5550 (8 cores per node, 2.67 GHz), 24 GB RAM per node
  - All experiments were run with 8 tasks per node
- Measuring overhead of DAMPI:
  - 800-node cluster of AMD Opteron (16 cores per node, 2.3 GHz), 32 GB RAM per node
  - All experiments were run with 16 tasks per node

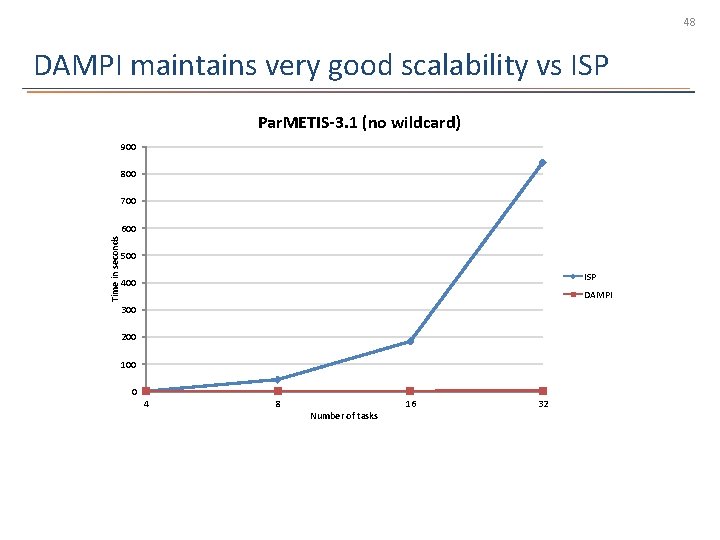

48 DAMPI maintains very good scalability vs ISP

[Chart: ParMETIS-3.1 (no wildcard). Y axis: time in seconds, 0 to 900. X axis: number of tasks (4, 8, 16, 32). Series: ISP, DAMPI.]

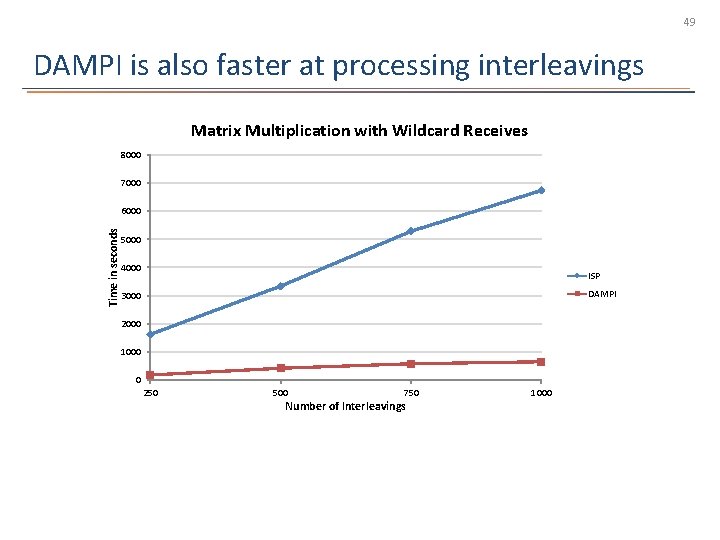

49 DAMPI is also faster at processing interleavings

[Chart: Matrix Multiplication with Wildcard Receives. Y axis: time in seconds, 0 to 8000. X axis: number of interleavings (250, 500, 750, 1000). Series: ISP, DAMPI.]

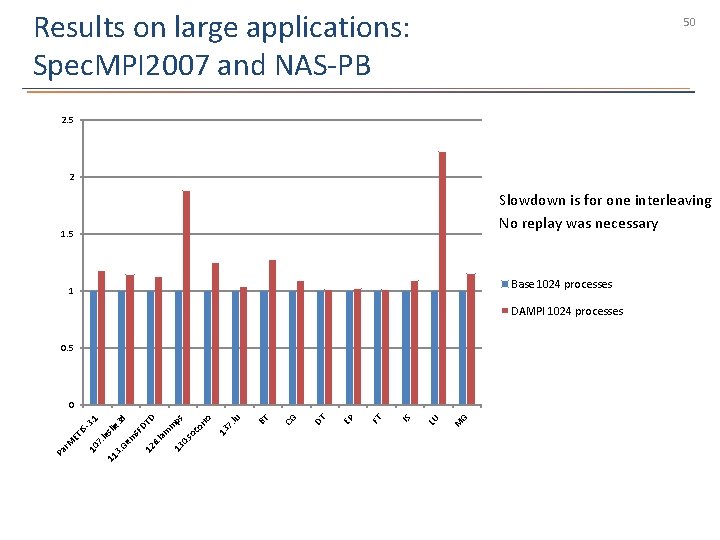

50 Results on large applications: SpecMPI2007 and NAS-PB

- Slowdown is for one interleaving
- No replay was necessary

[Chart: Y axis: slowdown, 0 to 2.5. Series: Base 1024 processes, DAMPI 1024 processes. Benchmarks: ParMETIS-3.1, 107.leslie3d, 113.GemsFDTD, 126.lammps, 130.socorro, 137.lu, BT, CG, DT, EP, FT, IS, LU, MG.]

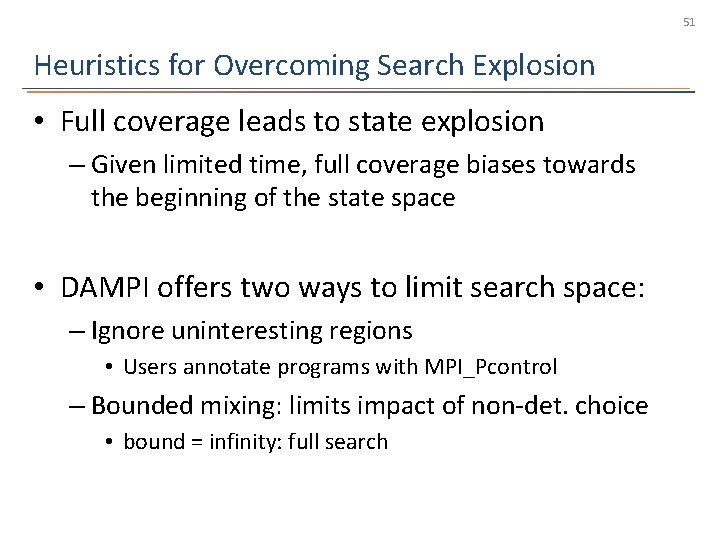

51 Heuristics for Overcoming Search Explosion

- Full coverage leads to state explosion
  - Given limited time, full coverage biases towards the beginning of the state space
- DAMPI offers two ways to limit search space:
  - Ignore uninteresting regions
    - Users annotate programs with MPI_Pcontrol
  - Bounded mixing: limits impact of non-det. choice
    - bound = infinity: full search

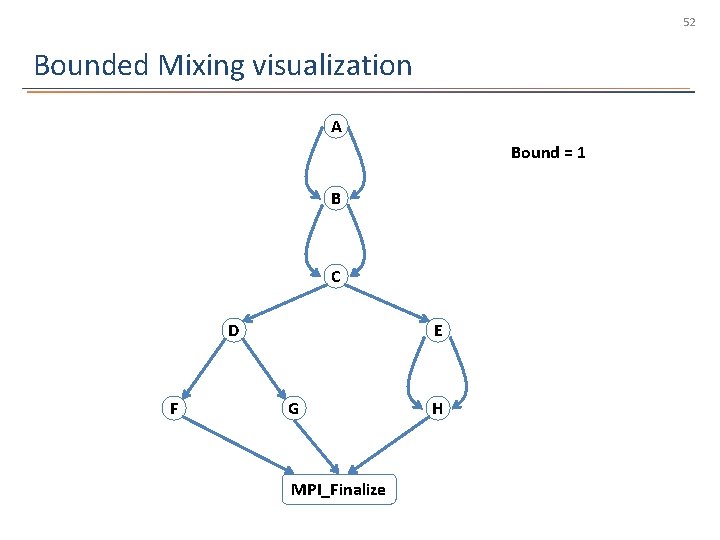

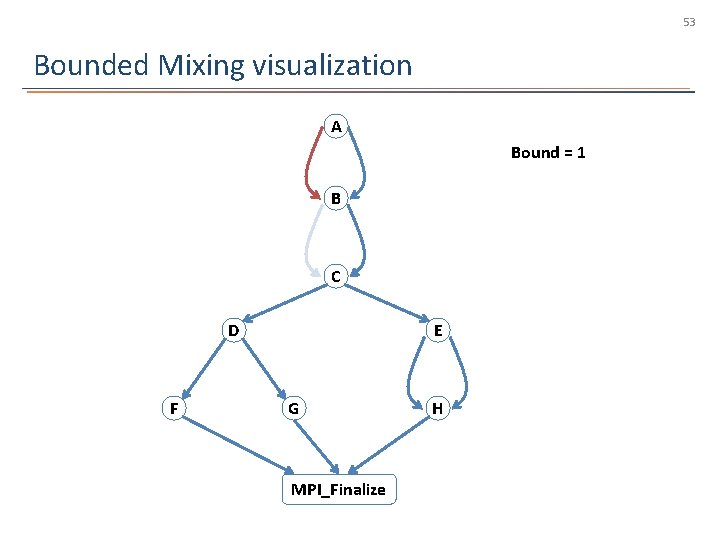

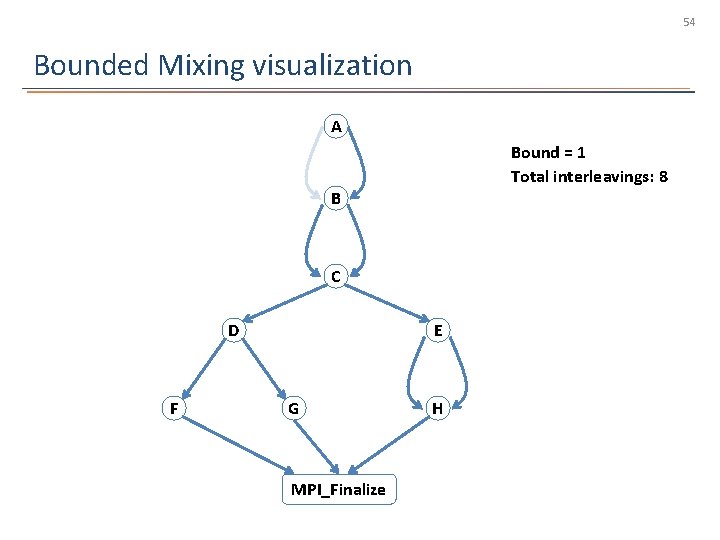

52-54 Bounded Mixing visualization

[Diagram: decision nodes A to H leading to MPI_Finalize, explored with Bound = 1. Total interleavings: 8]

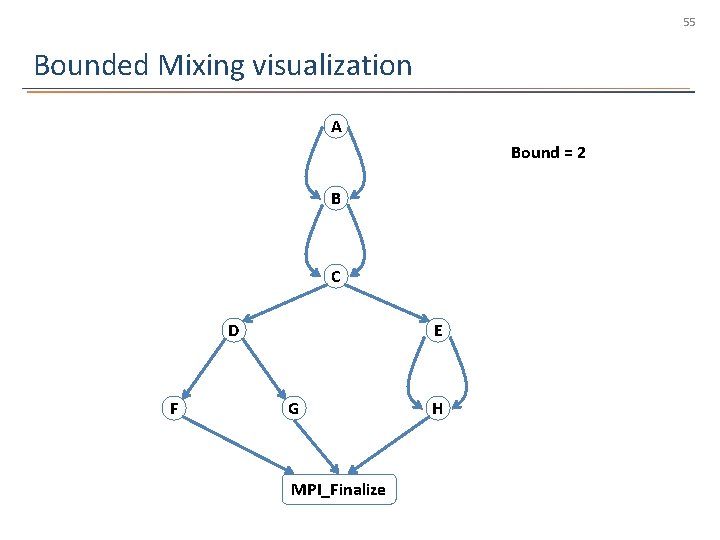

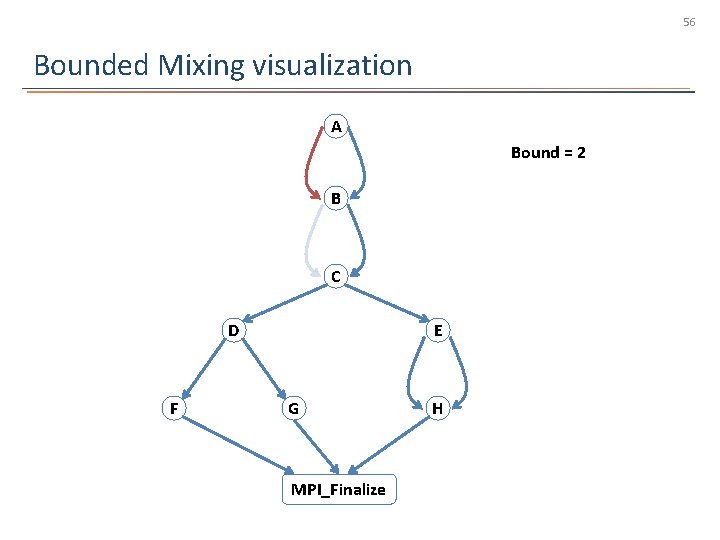

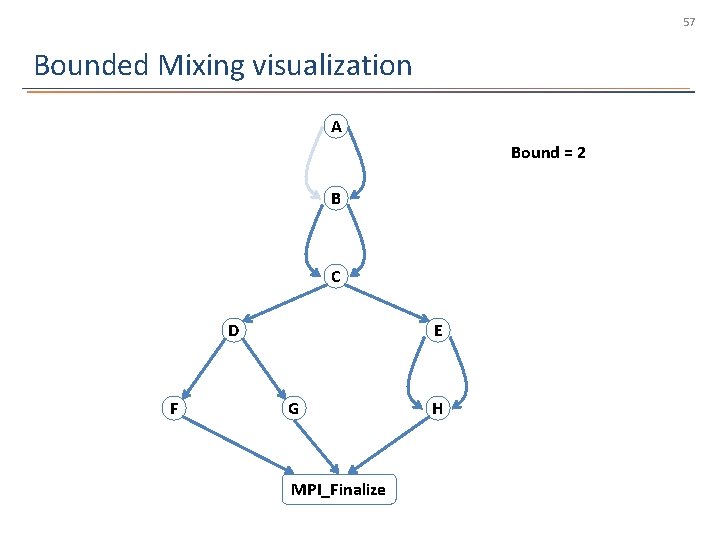

55-57 Bounded Mixing visualization

[Diagram: decision nodes A to H leading to MPI_Finalize, explored with Bound = 2]

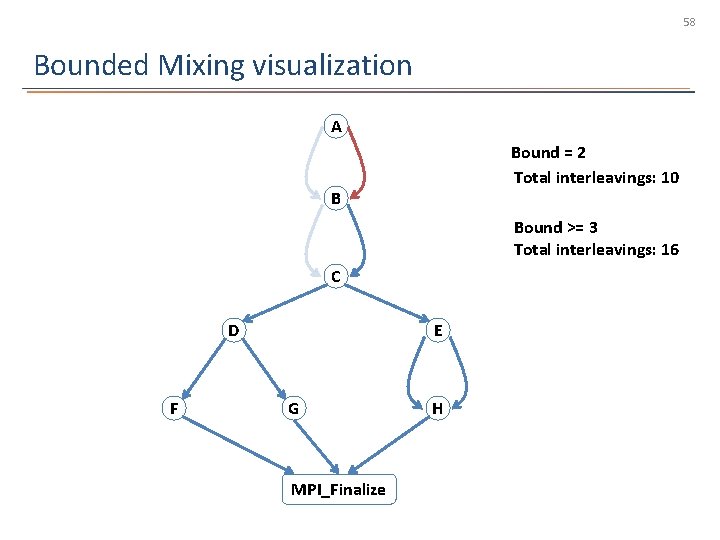

58 Bounded Mixing visualization

[Diagram: decision nodes A to H leading to MPI_Finalize]

- Bound = 2: total interleavings 10
- Bound >= 3: total interleavings 16

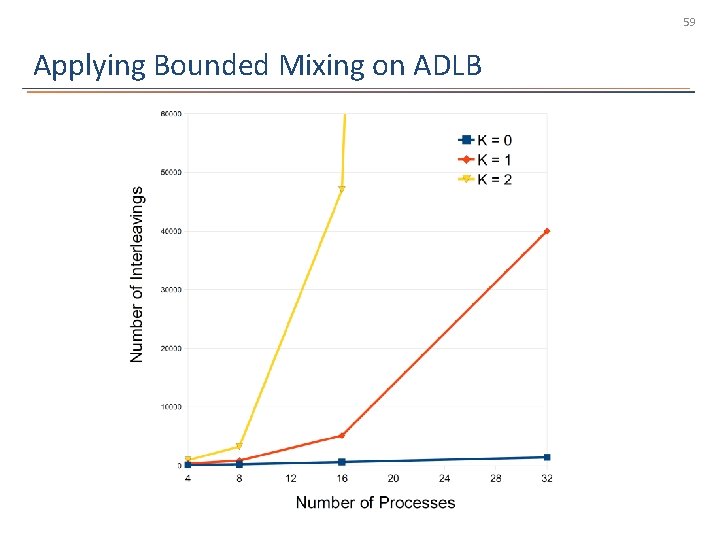

59 Applying Bounded Mixing on ADLB

60 How well did we do?

- DAMPI achieves scalable verification
  - Coverage over the space of nondeterminism
  - Works on realistic MPI programs at large scale
- Further correctness checking capabilities can be added as modules to DAMPI

61 Questions?

62 Concluding Remarks

- Scalable dynamic verification for MPI is feasible
  - Combines the strengths of testing and formal methods
  - Guarantees coverage over nondeterminism
- Matches-before ordering for MPI provides the basis for tracking causality in MPI
- DAMPI is the first MPI verifier that can scale beyond hundreds of processes

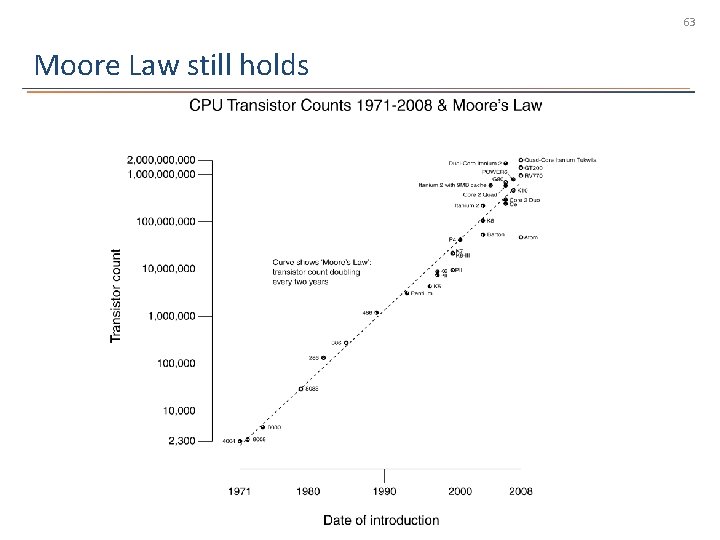

63 Moore's Law still holds

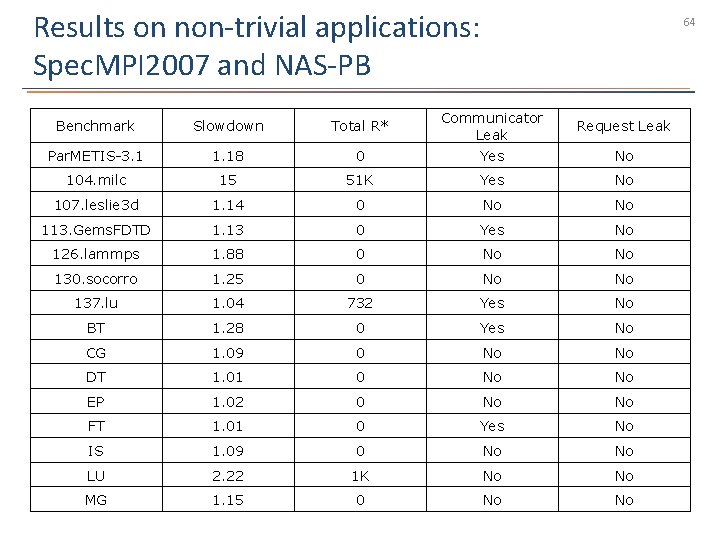

64 Results on non-trivial applications: SpecMPI2007 and NAS-PB

| Benchmark | Slowdown | Total R* | Communicator Leak | Request Leak |
| --- | --- | --- | --- | --- |
| ParMETIS-3.1 | 1.18 | 0 | Yes | No |
| 104.milc | 15 | 51K | Yes | No |
| 107.leslie3d | 1.14 | 0 | No | No |
| 113.GemsFDTD | 1.13 | 0 | Yes | No |
| 126.lammps | 1.88 | 0 | No | No |
| 130.socorro | 1.25 | 0 | No | No |
| 137.lu | 1.04 | 732 | Yes | No |
| BT | 1.28 | 0 | Yes | No |
| CG | 1.09 | 0 | No | No |
| DT | 1.01 | 0 | No | No |
| EP | 1.02 | 0 | No | No |
| FT | 1.01 | 0 | Yes | No |
| IS | 1.09 | 0 | No | No |
| LU | 2.22 | 1K | No | No |
| MG | 1.15 | 0 | No | No |

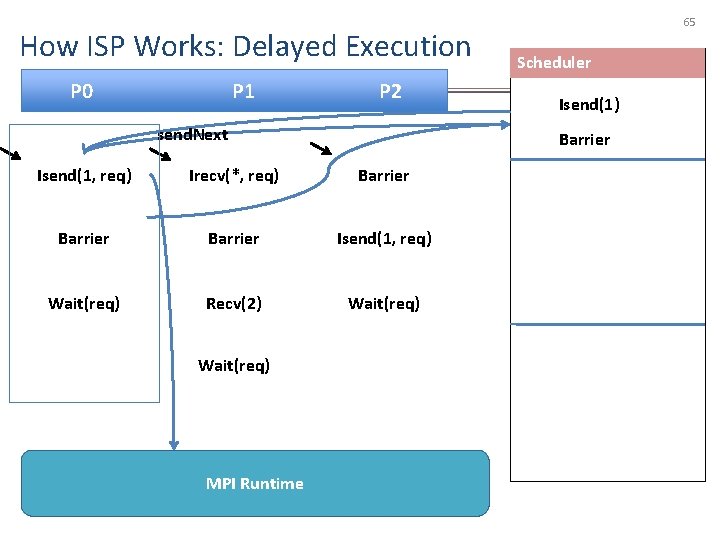

65 How ISP Works: Delayed Execution

[Diagram: processes P0, P1, P2 issue Isend(1, req), Irecv(*, req), Barrier, Recv(2), and Wait(req) calls to the scheduler, which sits between them and the MPI runtime and answers with sendNext; calls collected so far: Isend(1), Barrier]

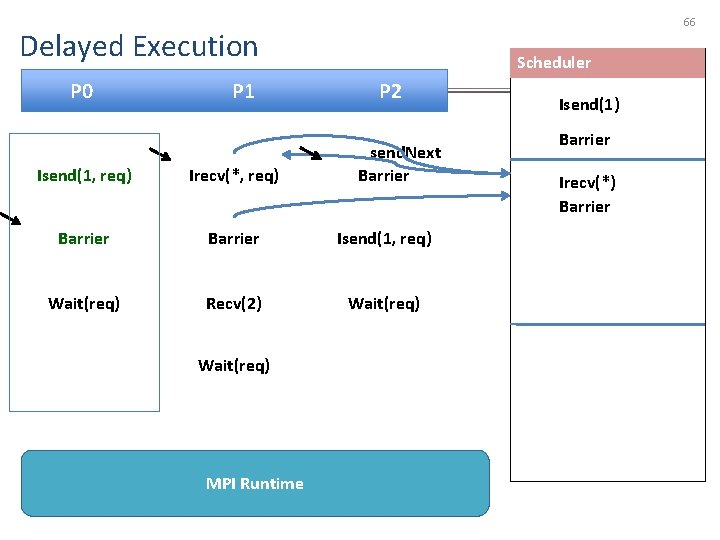

66 Delayed Execution

[Diagram: processes P0, P1, P2 issue Isend(1, req), Irecv(*, req), Barrier, Recv(2), and Wait(req) calls to the scheduler, which sits between them and the MPI runtime and answers with sendNext; calls collected so far: Isend(1), Barrier, Irecv(*), Barrier]

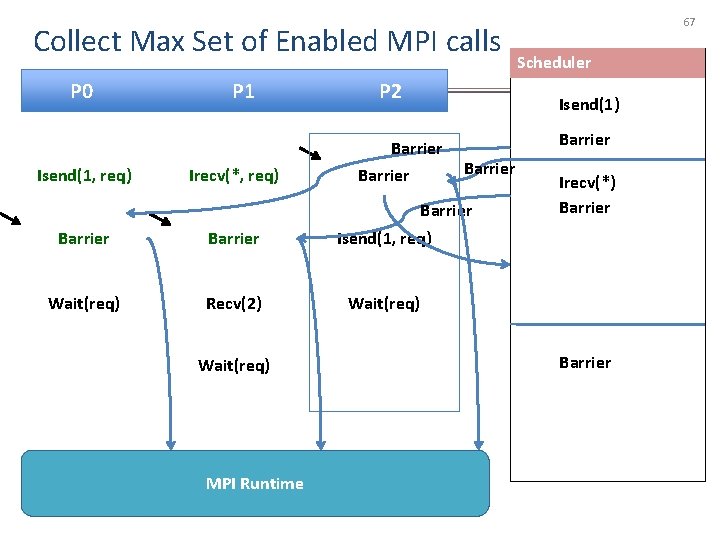

67 Collect Max Set of Enabled MPI calls

[Diagram: processes P0, P1, P2 with calls Isend(1, req), Irecv(*, req), Barrier, Recv(2), and Wait(req); the scheduler has collected Isend(1), Barrier, Wait(req), Irecv(*), Barrier before handing calls to the MPI runtime]

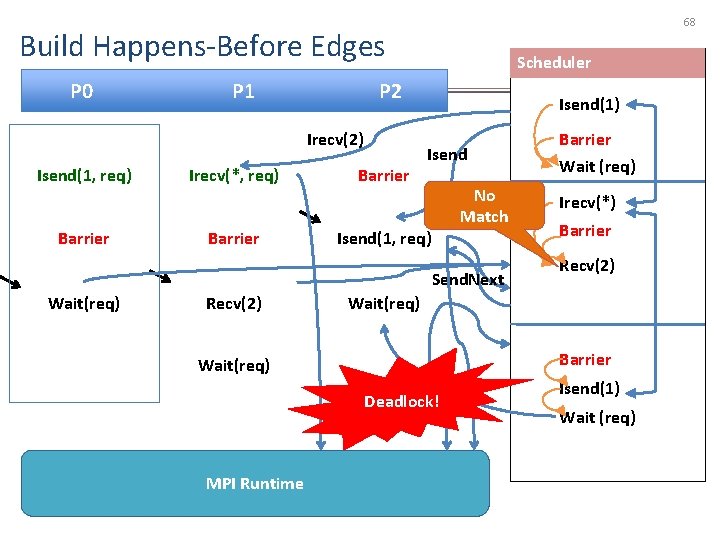

68 Build Happens-Before Edges

[Diagram: the scheduler builds happens-before edges among the collected calls of P0, P1, P2 (Isend(1, req), Irecv(*, req), Barrier, Recv(2), Wait(req)); labels include Irecv(2), sendNext, "No Match", and "Deadlock!"]
