Speeding Up Algorithms for Hidden Markov Models by

Speeding Up Algorithms for Hidden Markov Models by Exploiting Repetitions Shay Mozes Oren Weimann (MIT) Michal Ziv-Ukelson (Tel-Aviv U. )

Shortly: • Hidden Markov Models are extensively used to model processes in many fields • The runtime of HMM algorithms is usually linear in the length of the input • We show to exploit repetitions to obtain speedup • First provable speedup of Viterbi’s algorithm • Can use different compression schemes • Applies to several decoding and training algorithms 2

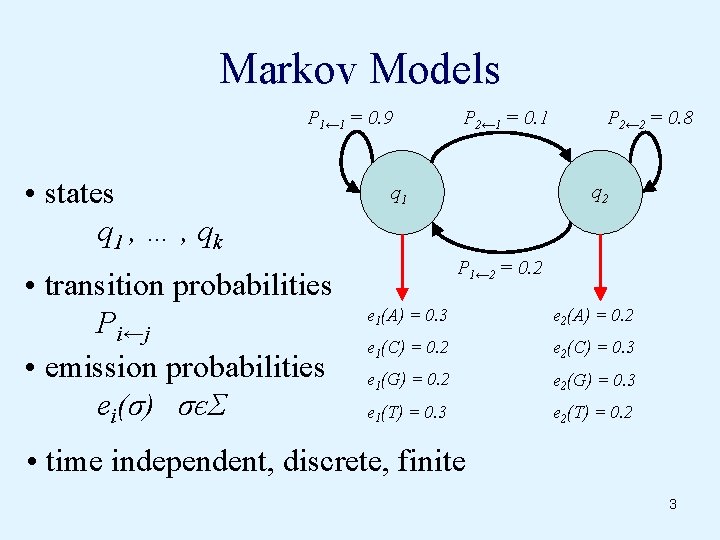

Markov Models P 1← 1 = 0. 9 • states q 1 , … , qk • transition probabilities Pi←j • emission probabilities ei(σ) σєΣ P 2← 1 = 0. 1 P 2← 2 = 0. 8 q 2 q 1 P 1← 2 = 0. 2 e 1(A) = 0. 3 e 2(A) = 0. 2 e 1(C) = 0. 2 e 2(C) = 0. 3 e 1(G) = 0. 2 e 2(G) = 0. 3 e 1(T) = 0. 3 e 2(T) = 0. 2 • time independent, discrete, finite 3

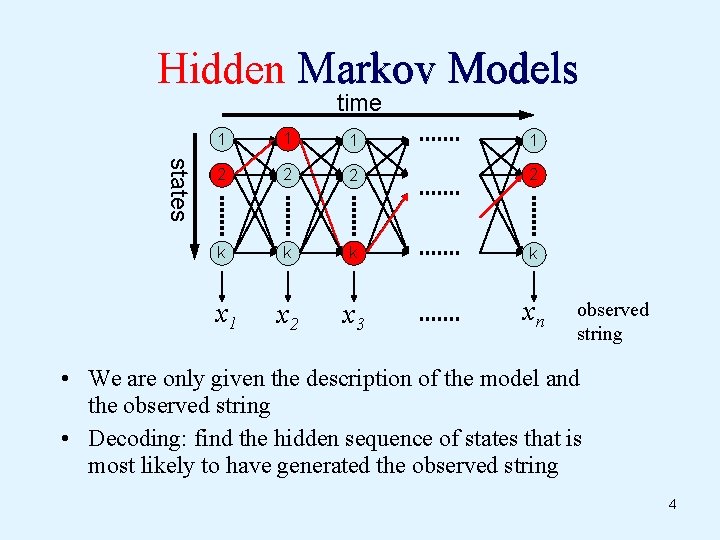

Hidden Markov Models time states 1 1 2 2 k k x 2 x 3 xn x 1 observed string • We are only given the description of the model and the observed string • Decoding: find the hidden sequence of states that is most likely to have generated the observed string 4

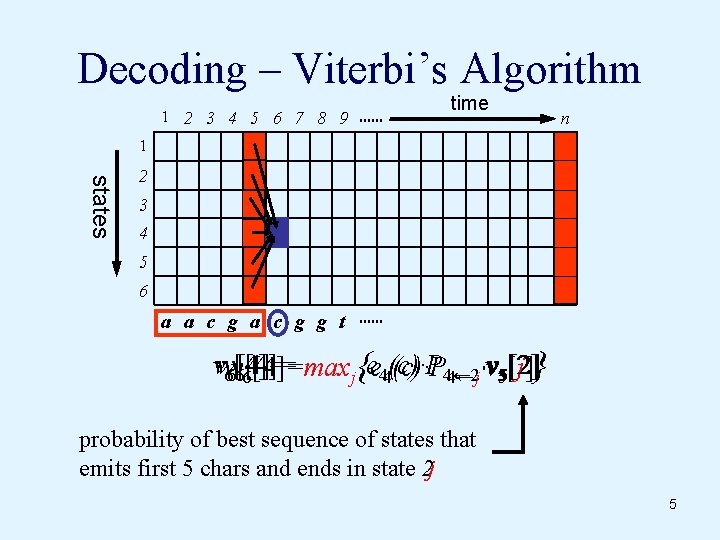

Decoding – Viterbi’s Algorithm 1 2 3 4 5 6 7 8 9 time n 1 states 2 3 4 5 6 a a c g g t vvv 66 v[4]= P 4← 2 [4]=maxj{ee 44(c)·P [j]} 6[4]= 4←j·v 55[2] probability of best sequence of states that emits first 5 chars and ends in state 2 j 5

Outline • • • Overview Exploiting repetitions Using LZ 78 Using Run-Length Encoding Summary of results 6

![VA in Matrix Notation v 1[i]=maxj{ei(x 1)·Pi←j · v 0[j]} v 1[i]=maxj{ Mij(x 1) VA in Matrix Notation v 1[i]=maxj{ei(x 1)·Pi←j · v 0[j]} v 1[i]=maxj{ Mij(x 1)](http://slidetodoc.com/presentation_image_h/d640b96371b15efdcc15527fa22b8549/image-7.jpg)

VA in Matrix Notation v 1[i]=maxj{ei(x 1)·Pi←j · v 0[j]} v 1[i]=maxj{ Mij(x 1) · v 0[j]} Mij(σ) = ei (σ)·Pi←j (A⊗B)ij= maxk{Aik ·Bkj } Viterbi’s algorithm: O(k 2 n) ⊗ ⊗ vn=M(xn) ⊗ M(xn-1) ⊗ ··· v 1⊗=M(x 1)1) v 0 v 0 O(k 3 n) v 2 = M(x 2) ⊗ M(x 1) ⊗ v 0 7

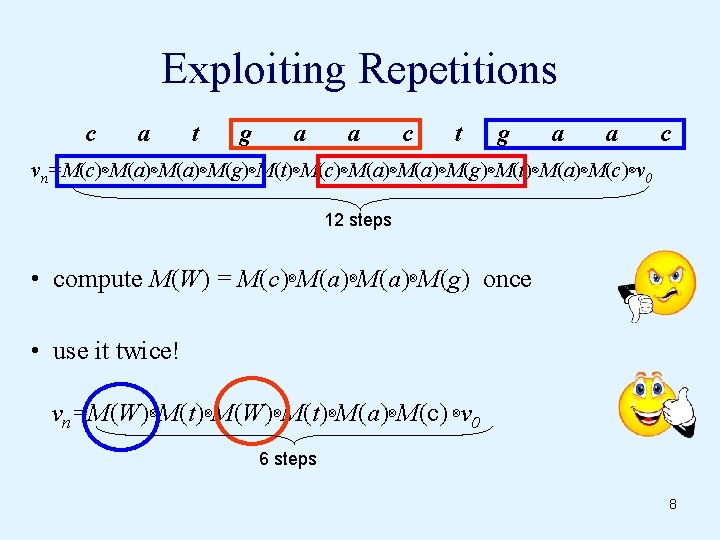

Exploiting Repetitions c a t g a a c vn=M(c)⊗M(a)⊗M(a)⊗M(g)⊗M(t)⊗M(a)⊗M(c)⊗v 0 12 steps • compute M(W) = M(c)⊗M(a)⊗M(g) once • use it twice! vn=M(W)⊗M(t)⊗M(a)⊗M(c) ⊗v 0 6 steps 8

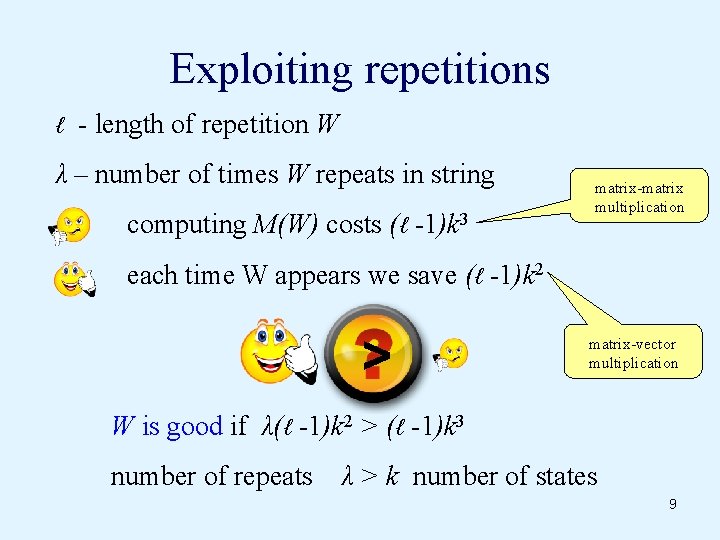

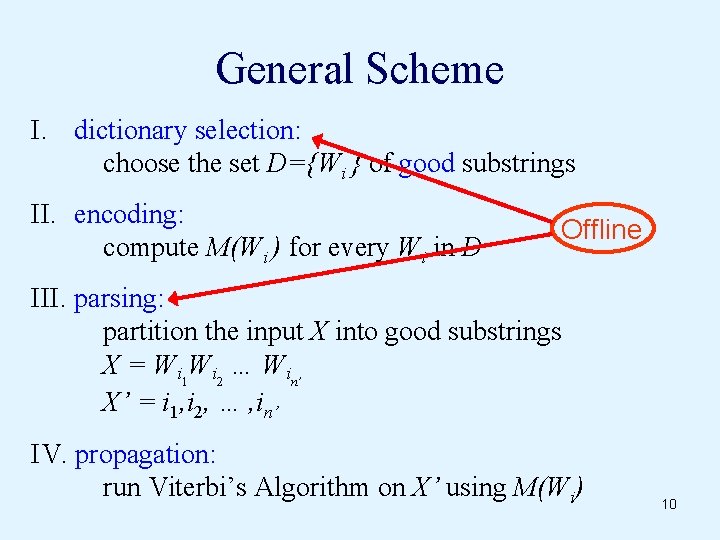

Exploiting repetitions ℓ - length of repetition W λ – number of times W repeats in string computing M(W) costs (ℓ -1)k 3 matrix-matrix multiplication each time W appears we save (ℓ -1)k 2 > matrix-vector multiplication W is good if λ(ℓ -1)k 2 > (ℓ -1)k 3 number of repeats λ > k number of states 9

General Scheme I. dictionary selection: choose the set D={Wi } of good substrings II. encoding: compute M(Wi ) for every Wi in D Offline III. parsing: partition the input X into good substrings X = Wi 1 Wi 2 … Win’ X’ = i 1, i 2, … , in’ IV. propagation: run Viterbi’s Algorithm on X’ using M(Wi) 10

Outline • • • Overview Exploiting repetitions Using LZ 78 Using Run-Length Encoding Summary of results 11

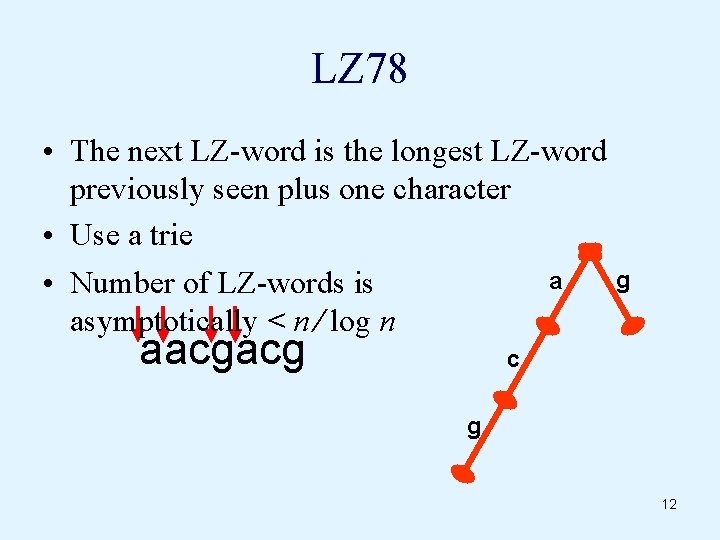

LZ 78 • The next LZ-word is the longest LZ-word previously seen plus one character • Use a trie g a • Number of LZ-words is asymptotically < n ∕ log n aacgacg c g 12

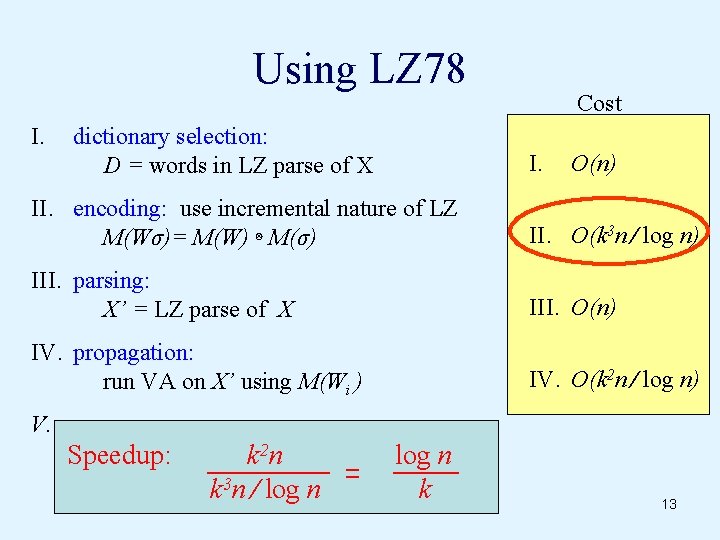

Using LZ 78 I. dictionary selection: D = words in LZ parse of X Cost I. O(n) II. encoding: use incremental nature of LZ M(Wσ)= M(W) ⊗ M(σ) II. O(k 3 n ∕ log n) III. parsing: X’ = LZ parse of X III. O(n) IV. propagation: run VA on X’ using M(Wi ) IV. O(k 2 n ∕ log n) V. Speedup: k 2 n k 3 n ∕ log n k 13

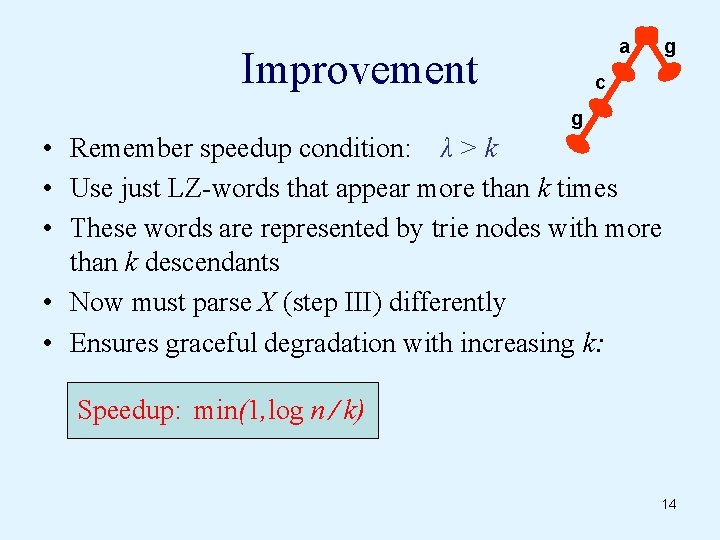

a Improvement g c g • Remember speedup condition: λ > k • Use just LZ-words that appear more than k times • These words are represented by trie nodes with more than k descendants • Now must parse X (step III) differently • Ensures graceful degradation with increasing k: Speedup: min(1, log n ∕ k) 14

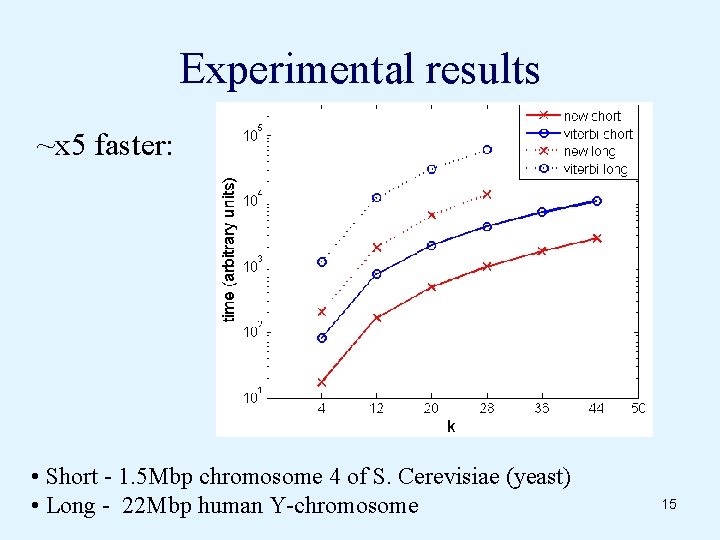

Experimental results ~x 5 faster: • Short - 1. 5 Mbp chromosome 4 of S. Cerevisiae (yeast) • Long - 22 Mbp human Y-chromosome 15

Outline • • • Overview Exploiting repetitions Using LZ 78 Using Run-Length Encoding Summary of results 16

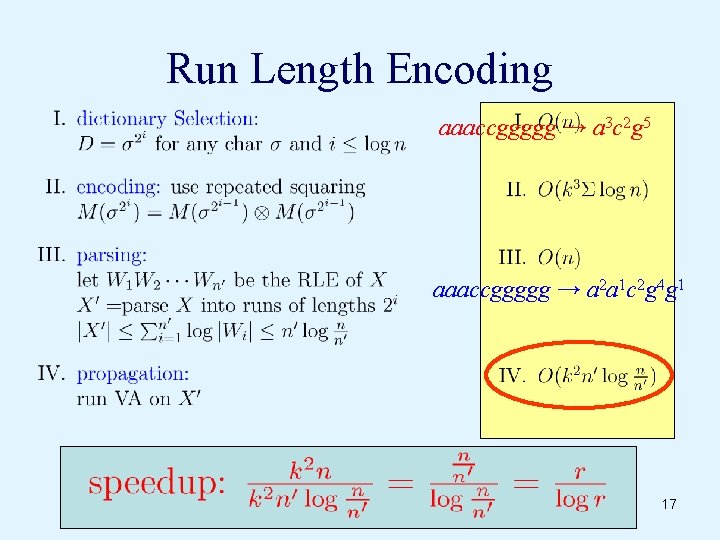

Run Length Encoding aaaccggggg → a 3 c 2 g 5 aaaccggggg → a 2 a 1 c 2 g 4 g 1 17

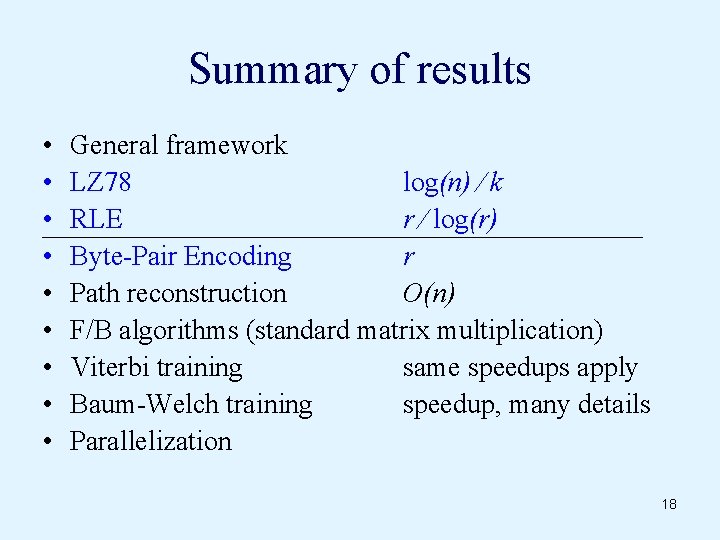

Summary of results • • • General framework LZ 78 log(n) ∕ k RLE r ∕ log(r) Byte-Pair Encoding r Path reconstruction O(n) F/B algorithms (standard matrix multiplication) Viterbi training same speedups apply Baum-Welch training speedup, many details Parallelization 18

Thank you! Any questions? 19

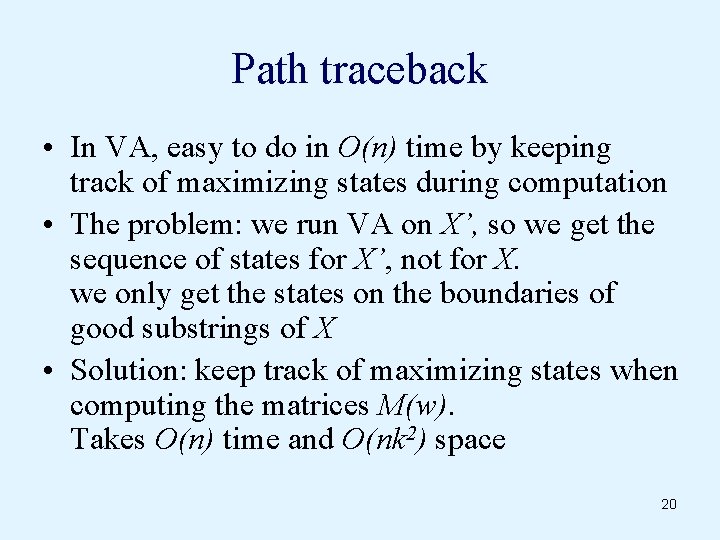

Path traceback • In VA, easy to do in O(n) time by keeping track of maximizing states during computation • The problem: we run VA on X’, so we get the sequence of states for X’, not for X. we only get the states on the boundaries of good substrings of X • Solution: keep track of maximizing states when computing the matrices M(w). Takes O(n) time and O(nk 2) space 20

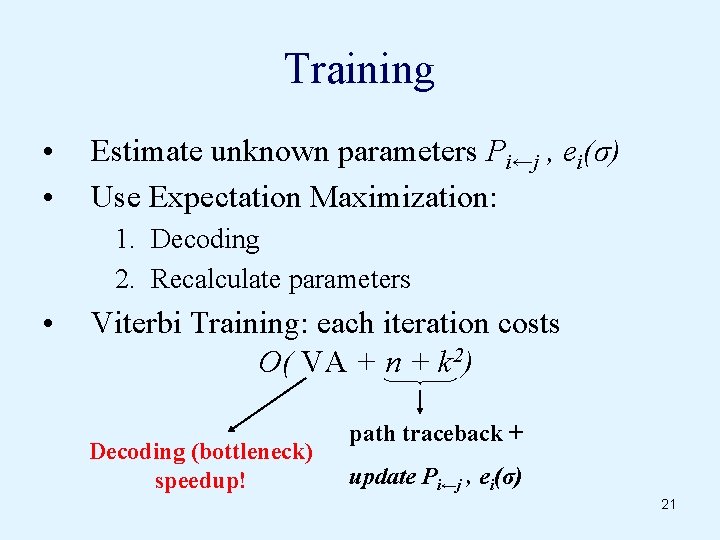

Training • • Estimate unknown parameters Pi←j , ei(σ) Use Expectation Maximization: 1. Decoding 2. Recalculate parameters • Viterbi Training: each iteration costs O( VA + n + k 2) Decoding (bottleneck) speedup! path traceback + update Pi←j , ei(σ) 21

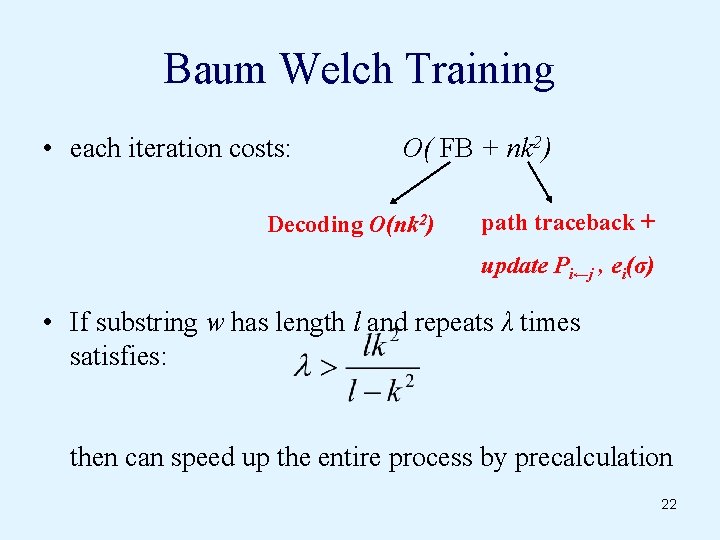

Baum Welch Training • each iteration costs: O( FB + nk 2) Decoding O(nk 2) path traceback + update Pi←j , ei(σ) • If substring w has length l and repeats λ times satisfies: then can speed up the entire process by precalculation 22

- Slides: 22