Software Testing, Fault Injection, and Black Balls and Urns
Jeffrey Voas, Ph.D., FIEEE, FAAAS
Computer Scientist
Jeff.Voas@nist.gov
J.voas@ieee.org

Part 1: Why Software Testing According to Operational Profiles is Not Sufficient

Terminology
1. Error
2. Fault, Defect, Bug, Flaw
3. Failure
4. Execution
5. Infection
6. Propagation

Dispel Myth #1: Models
That software reliability models are capable of guaranteeing that software will always behave with some level of pre-specified, high integrity (e.g., safety-critical, mission-critical, business-critical, etc.).

Dispel Myth #2: Testing
That traditional operational reliability testing (prior to software release) is sufficient to determine that the software will always behave with high integrity.

Myth # 1: Software Reliability Models

Problem 1
[Figure: failure intensity versus time; reliability versus time]
• Software does not wear out over time. If it is logically incorrect today, it will be logically incorrect tomorrow.
• Testing some systems for 10,000 hours means a lot; for other systems, it means little.
• Models need to consider the quality of the test cases and the complexity of the software (e.g., 1 LOC versus 1M LOC).

Problem 2: Mass-Marketed Software Operational Profile
• Established definition: Operational profile is:
  1. The set of input events that the software will receive during execution, along with the probability that the events will occur.
• The probability density function is simply the derivative of the cumulative distribution function.
• Modified definition: Operational profile (usage) is:
  1. The set of input events that the software will receive during execution, along with the probability that the events will occur.
  2. The set of context-sensitive input events generated by external hardware and software systems that the software can interact with during execution. This is the configuration (C) and machine (M).

Problem 3: Conflicting Results
R1(r, y) = 0.99
R2(r, y) = 0.9
R3(r, y) = 0.94

Three United States Regulatory Positions
• U.S. Nuclear Regulatory Commission is not for, not against; it is up to the reviewer
• U.S. Food and Drug Administration 510(k) never mentions it
• U.S. Federal Aviation Administration
  • Standard DO-178B: “… currently available methods do not provide results in which confidence can be placed …” [Section 12.3.4]

The overall problem with software reliability models is that, while they are quantitative, their results should probably be trusted only in a qualitative manner, such as for deciding when to halt testing (MTTF). They are great for trend analysis over time.

Myth # 2: Operational Reliability Testing – Legal Inputs

Operational Reliability Testing Repeated Trials

Operational testing would be sufficient if exhaustive testing under every possible operational input scenario (legal or anomalous) could be performed.

Legal vs. Anomalous Scenarios
Input requirements: x > 4 and y < 300 and z = true
Legal: x = 5 and y = 200 and z = true
Anomalous: x = 0 and y = 299 and z = true
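The legal/anomalous split above can be written as a predicate over the input vector. The following is a minimal sketch; the function names `is_legal` and `classify` are illustrative assumptions, not part of the talk.

```python
def is_legal(x: int, y: int, z: bool) -> bool:
    """True when the input meets the stated requirements: x > 4, y < 300, z is true."""
    return x > 4 and y < 300 and z is True


def classify(x: int, y: int, z: bool) -> str:
    """Label an input vector as 'legal' or 'anomalous' (hypothetical helper)."""
    return "legal" if is_legal(x, y, z) else "anomalous"


print(classify(5, 200, True))  # the legal example from the slide
print(classify(0, 299, True))  # the anomalous example: x = 0 violates x > 4
```

A single violated clause is enough to make an input anomalous, which is one reason the anomalous space tends to be larger than the legal one.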

Difficulty
Exhaustive testing with only legal inputs is generally infeasible (e.g., 2^32 x 2^32 = 2^64 input combinations), and thus we are forced to rely on predictors such as software reliability models to quantify “reliability,” MTBF, when to halt testing, etc. This infeasibility problem is the same for anomalous inputs, and possibly worse!

Large vs. Larger
[Figure: the legal input space (large) compared with the anomalous input space (larger)]
The anomalous space is almost always ignored during specification and V&V.

Question: Why Then Have Software Reliability Models and Perform Reliability Testing?

The “Culprit” Phenomenon
Software Behavior’s Unpredictability
It is more difficult to directly measure software quality than to achieve it.

Reasons for Unpredictability
Software systems are inherently unpredictable due to:
• Rare inputs
• Unanticipated events (faults and bad input data) that corrupt internal program states during execution
• Design oversights
• Non-exhaustive testing
• These 4 issues collapse down to the problem of COMPLEXITY, both of the software and of the input domain

And in Software …
• The ability to pinpoint precisely how software will behave in the future requires, at a minimum, knowledge of the consequences of each fault in the software.
• This requires knowing about each fault, and knowing about each fault requires selecting the appropriate inputs that detect those faults during test. Not practical.
• Further, reliability testing can encourage faults of severe consequence to hide. Why? Because the most likely input events are the ones employed during testing.

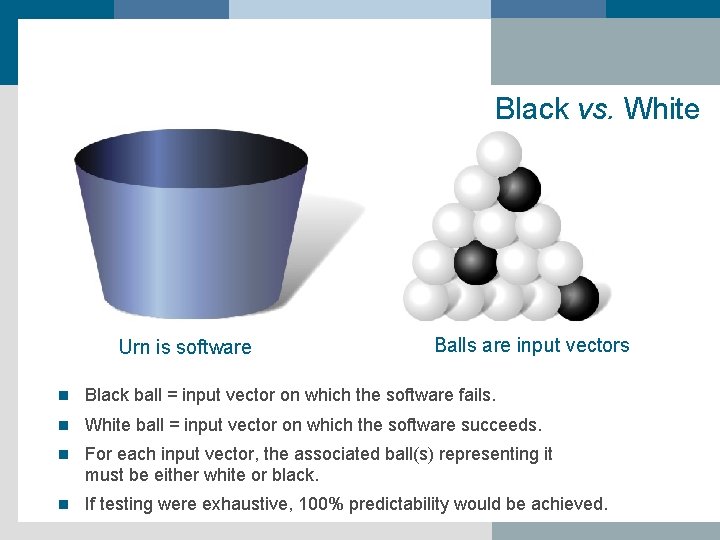

Black vs. White
Urn is software
Balls are input vectors
• Black ball = input vector on which the software fails.
• White ball = input vector on which the software succeeds.
• For each input vector, the associated ball(s) representing it must be either white or black.
• If testing were exhaustive, 100% predictability would be achieved.

Scenario 1: Software That Always Fails
• This urn represents a software system that fails on every possible input.

Scenario 2: Correct Code
• This urn represents a software system that succeeds on every possible input.

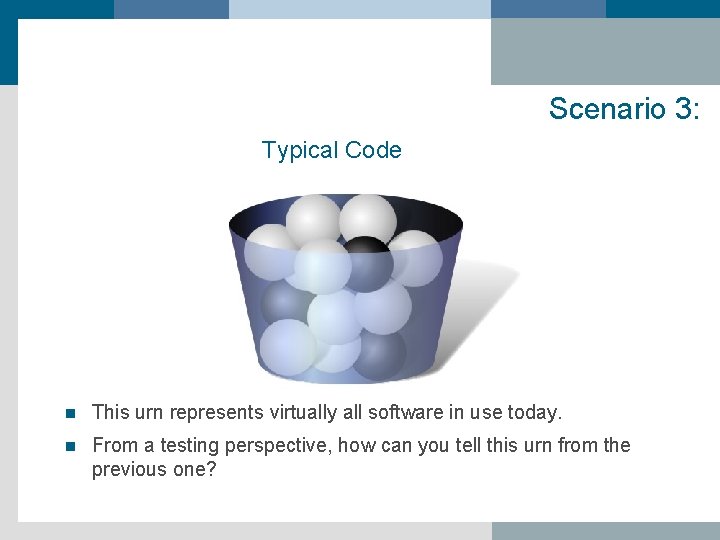

Scenario 3: Typical Code
• This urn represents virtually all software in use today.
• From a testing perspective, how can you tell this urn from the previous one?

And thus the real problem from a testing perspective is …

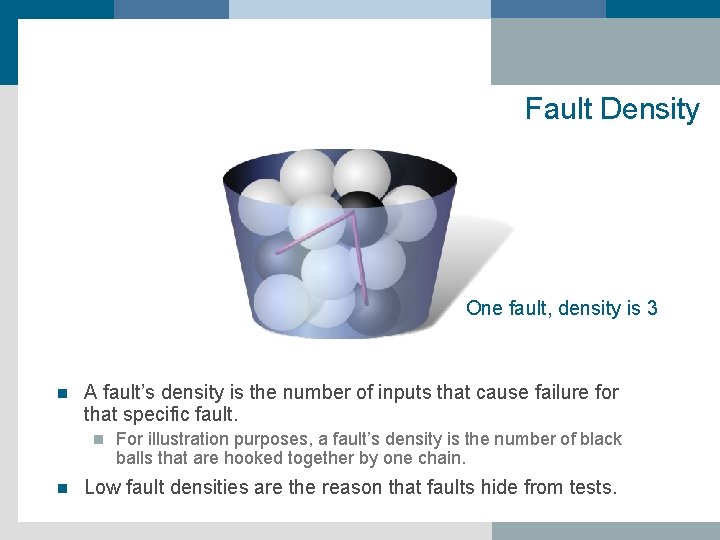

Fault Density
One fault, density is 3
• A fault’s density is the number of inputs that cause failure for that specific fault.
• For illustration purposes, a fault’s density is the number of black balls that are hooked together by one chain.
• Low fault densities are the reason that faults hide from tests.

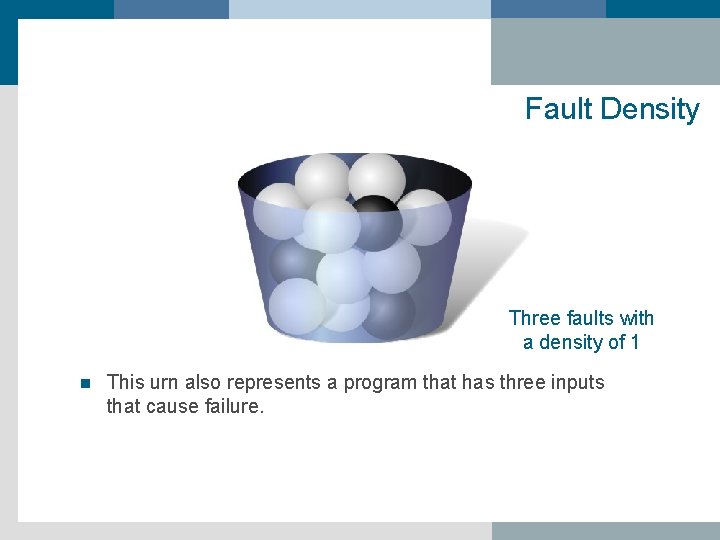

Fault Density
Three faults with a density of 1
• This urn also represents a program that has three inputs that cause failure.
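Why low densities let faults hide can be made concrete with a small calculation. This is a sketch under assumptions the slides do not state: balls are drawn uniformly at random with replacement, and the function name `detection_probability` is hypothetical. Under those assumptions, a fault with density d in an urn of N balls is hit at least once in T draws with probability 1 - (1 - d/N)^T.

```python
def detection_probability(density: int, domain_size: int, tests: int) -> float:
    """Chance that at least one of `tests` uniform random draws (with
    replacement) lands on one of the `density` black balls chained to a fault."""
    if not 0 <= density <= domain_size:
        raise ValueError("density must lie between 0 and domain_size")
    miss_one_draw = 1.0 - density / domain_size
    return 1.0 - miss_one_draw ** tests


# Illustrative numbers only: an urn of 1,000,000 balls and 1,000 tests.
for d in (1, 3, 1000):
    print(d, detection_probability(d, 1_000_000, 1_000))
```

Under the same assumptions, a fault of density 3 and three faults of density 1 give the same chance of seeing some failure, but each density-1 fault on its own is harder to find.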

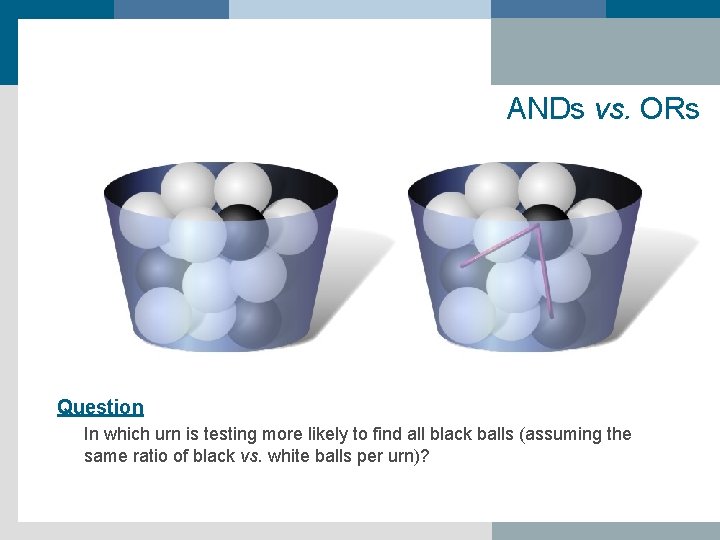

ANDs vs. ORs
Question: In which urn is testing more likely to find all black balls (assuming the same ratio of black vs. white balls per urn)?

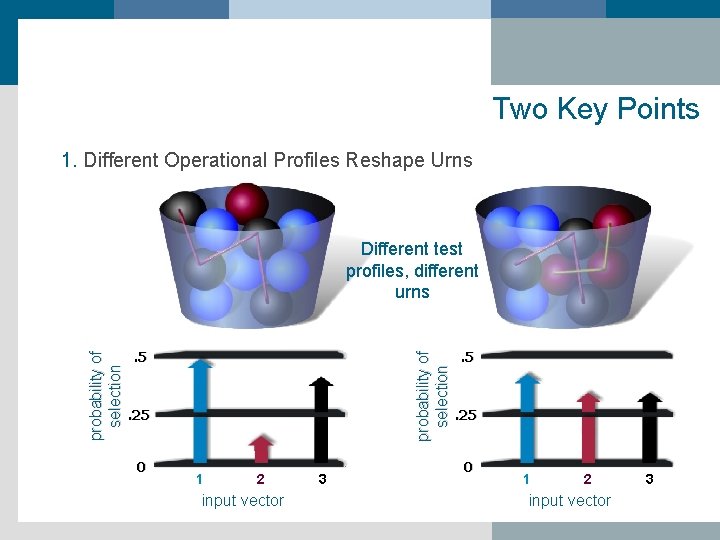

Two Key Points
1. Different Operational Profiles Reshape Urns
Different test profiles, different urns
[Figure: two bar charts, one per test profile, each plotting probability of selection (0 to .5) against input vector (0 to 3)]

Key Point
The Number of Faults May or May Not Reshape the Urn
• i.e., the number of faults does not necessarily impact the reliability of the software.

![One fault = 50% Pr[failure]](http://slidetodoc.com/presentation_image/de17c021924967ef20d29711385646ab/image-33.jpg)

One fault = 50% Pr[failure]
[Figure: probability of selection (0 to .5) against input vector (0 to 3)]

![Two faults = 50% Pr[failure]](http://slidetodoc.com/presentation_image/de17c021924967ef20d29711385646ab/image-34.jpg)

Two faults = 50% Pr[failure]
[Figure: probability of selection (0 to .5) against input vector (0 to 3)]
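Both key points can be checked with a few lines of arithmetic. The sketch below is illustrative: the four-vector profiles and the fault-to-input assignments are assumed values, not read off the original figures, and `failure_probability` is a hypothetical helper.

```python
from typing import Dict, Iterable, Set

Profile = Dict[int, float]  # input vector -> probability of selection


def failure_probability(profile: Profile, faults: Iterable[Set[int]]) -> float:
    """Pr[failure] = total selection probability of every input vector
    that triggers at least one fault."""
    failing = set().union(*faults)
    return sum(p for vector, p in profile.items() if vector in failing)


uniform = {0: 0.25, 1: 0.25, 2: 0.25, 3: 0.25}
skewed = {0: 0.5, 1: 0.25, 2: 0.25, 3: 0.0}

one_fault = [{0, 1}]     # a single fault triggered by two input vectors
two_faults = [{0}, {1}]  # two faults, one input vector each

print(failure_probability(uniform, one_fault))   # 0.5
print(failure_probability(uniform, two_faults))  # 0.5
print(failure_probability(skewed, one_fault))    # 0.75
```

The fault count changes between the first two calls while Pr[failure] does not; changing only the profile, as in the third call, does change it.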

Voas Position Statement
Unfortunately, software engineering’s “current wisdom” is geared towards lowering the number of faults instead of increasing the size of the faults to magnify their detectability. This is the goal of software design-for-testability (DFT).

And So the Question Becomes
What can be done to more accurately predict how a software component/application will behave in the future?

Answer
Attempt to flush out rarely occurring behaviors that operational reliability testing is likely to overlook.

Recommendations
1. Test with respect to complete environment using the broader definition of operational profile
2. Perform off-nominal (rare input) testing: mangle and/or invert the “assumed” operational profile
3. Perform software fault injection
4. Combine ALL THREE ABOVE
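One possible reading of “invert the assumed operational profile” in recommendation 2 is to reweight each event by the reciprocal of its assumed probability, so that rare events dominate test selection. The slides do not define the inversion; the reciprocal rule, the `floor` parameter, and the event names below are all assumptions made for illustration.

```python
from typing import Dict


def invert_profile(profile: Dict[str, float], floor: float = 1e-6) -> Dict[str, float]:
    """Return a test profile in which each event's weight is proportional to
    1 / (its assumed probability). `floor` avoids division by zero for events
    the assumed profile says never happen."""
    weights = {event: 1.0 / max(p, floor) for event, p in profile.items()}
    total = sum(weights.values())
    return {event: w / total for event, w in weights.items()}


# Hypothetical assumed profile for illustration.
assumed = {"login": 0.90, "search": 0.09, "admin_reset": 0.01}
inverted = invert_profile(assumed)
for event, p in sorted(inverted.items(), key=lambda kv: -kv[1]):
    print(f"{event}: {p:.3f}")
```

Tests drawn from `inverted` spend most of their effort on `admin_reset`, the event that operational testing would rarely exercise.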

Summary
• Reliability testing is necessary but not sufficient. Do not read more into the results of this form of testing than you should.
• Every software testing profile, including operational profiles, can encourage faults of severe (but infrequent) consequence to hide by decreasing densities. Therefore, not only is reliability testing insufficient, but its results can be misleading.
• Reliability testing is only one technique in the “bag of tricks” for assessing software integrity. Other techniques should be applied if the costs are tolerable.

Part 2: Fault Injection
Introduce:
1. An alternative form of dynamic software testing, contrasted with traditional reliability testing (along with several key applications).
2. An interesting problem that occurs when test cases fail to reveal faults, why this occurs, and what, if anything, fault injection can do towards addressing this problem.
3. How this technique applies to the interoperability (non-interference) problem and the testing stoppage criteria problem.

Software Fault Injection
• Is a form of dynamic software testing
• Is like “crash-testing” software
• Code-based or interface-based
• Demonstrates the consequences of incorrect code or data
• Is a “what if” game
• The more you play “what if,” the more confident you become that your software can deal with anomalous and unanticipated events

Reliability Testing vs. Fault Injection
• Reliability testing executes the software under expected scenarios, and it tests for correct behavior.
• Fault injection executes the software under anomalous scenarios, and tests to see “if this bad thing happens, what are the consequences?”
• Fault injection fills a gap in predicting software behaviors.

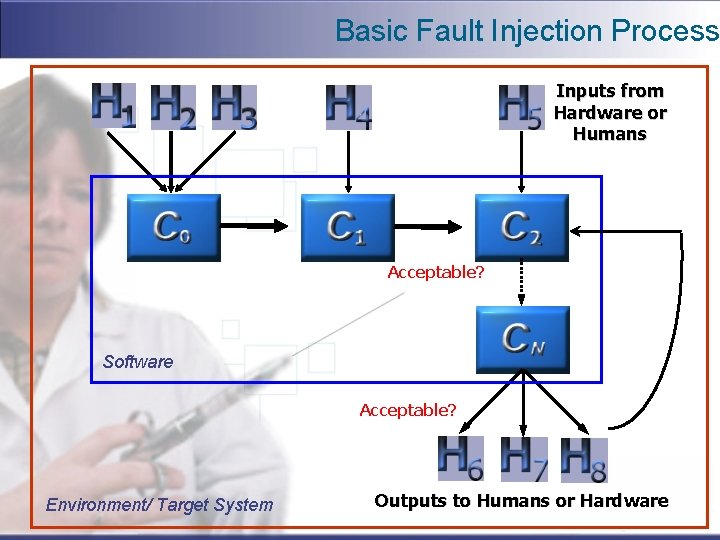

Basic Fault Injection Process
[Figure: inputs from hardware or humans (acceptable?) enter the software; outputs (acceptable?) go to humans or hardware; the software runs inside the environment/target system]

Acceptable refers to output or internal behaviors that do not violate pre-defined definitions or predicates

Environment/Target System Is Our Modified Definition
Operational profile should be:
1. Above definition +
2. The set of context-sensitive input events generated by external hardware and software systems that the software can interact with during execution. This includes the configuration (C) and machine (M) and human (H).

Usage profile: Features Used? Keystrokes? Files Loaded?

The “real” profile also includes the input signals from the “Invisible Users.” So the “real” profile is a mixture of these two spaces.

Two Basic Ways to Implement Software FI
• Code/Syntax Mutation
  e.g., replace x+1 with x+2
• Data Mutation
  e.g., replace x with perturb(x)
State anomaly, e.g., corrupting programmer variable, memory, time, etc.
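The two styles can be contrasted in a few lines. This is a minimal sketch: the slide names `perturb(x)` but does not define it, so the multiplicative perturbation, the `spread` parameter, and the `increment*` function names are assumptions.

```python
import random
from typing import Optional


def perturb(x: float, spread: float = 0.5, rng: Optional[random.Random] = None) -> float:
    """Data mutation (assumed form): scale x by a random factor
    drawn from [1 - spread, 1 + spread]."""
    if rng is None:
        rng = random.Random()
    return x * rng.uniform(1.0 - spread, 1.0 + spread)


def increment(x: float) -> float:
    """Original code under test."""
    return x + 1


def increment_code_mutant(x: float) -> float:
    """Code/syntax mutation from the slide: x+1 replaced with x+2."""
    return x + 2


def increment_data_mutant(x: float, rng: random.Random) -> float:
    """Data mutation: the code is unchanged, but its input is corrupted first."""
    return increment(perturb(x, rng=rng))


rng = random.Random(0)  # fixed seed so a run can be repeated
print(increment(10.0), increment_code_mutant(10.0), increment_data_mutant(10.0, rng))
```

Code mutation changes the program text once; data mutation leaves the text alone and corrupts state at run time, so it can be repeated with many different corruptions.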

Two Key Decisions
• What anomalies should be injected?
  • Depends on the application
• What should be observed for?
  • Acceptable

Anomaly
Definition: Corrupted internal state information that exists during software execution for only a snapshot in time. Anomalies are the final ingredients that precipitate software failure.

Key Point
Any injected anomaly has the potential to reveal unknown internal or output behaviors, some of which may be highly unacceptable.

Key Applications
A. Demonstrate that the software is unlikely to do what it shouldn’t.
B. Search for new output states that have never before been observed or contemplated.
C. Testing stoppage criteria
D. Safety case formulation

A. Test Against Unacceptable Output States
1. Begin with a list of unacceptable outputs (and under what conditions).
2. Execute the software while corrupting internal variables or data traveling through interfaces.
3. Determine whether the software exhibited any unacceptable behavior. If so, determine if this would be possible in real use.

UVA Prototype Magneto Stereotaxis System

• There was concern about three software-induced hazards:
  1. Coil Status flag - device shutdown flag
  2. Current update limit threshold [±15 amps / time step]
  3. Current Bounds threshold [-100 amps, +100 amps]
• Result: By perturbing the electric current value after it was computed, we were able to force a software-induced hazard to occur. That led us to a defective software safety monitor.
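The experiment described above can be sketched as a small harness: corrupt the current after it is computed, then check whether any hazardous value slips past the monitor. Only the two thresholds come from the slide. The monitors, the function names, and the sample perturbations are invented for illustration and are not the UVA system's code.

```python
from typing import Callable, List

CURRENT_BOUNDS = (-100.0, 100.0)  # amps, from the slide
MAX_STEP = 15.0                   # amps per time step, from the slide


def is_hazardous(previous: float, current: float) -> bool:
    """Hazard predicate built from the two thresholds on the slide."""
    low, high = CURRENT_BOUNDS
    return not (low <= current <= high) or abs(current - previous) > MAX_STEP


def sound_monitor(previous: float, current: float) -> bool:
    """Hypothetical monitor that trips on every hazardous value."""
    return is_hazardous(previous, current)


def defective_monitor(previous: float, current: float) -> bool:
    """Hypothetical defective monitor: it only checks the upper bound."""
    return current > CURRENT_BOUNDS[1]


def inject_and_observe(previous: float, computed: float,
                       perturbations: List[float],
                       monitor: Callable[[float, float], bool]) -> List[float]:
    """Perturb the computed current and return every corrupted value that is
    hazardous yet does NOT trip the monitor."""
    escaped = []
    for delta in perturbations:
        corrupted = computed + delta
        if is_hazardous(previous, corrupted) and not monitor(previous, corrupted):
            escaped.append(corrupted)
    return escaped


deltas = [0.0, 20.0, -200.0, 150.0]
print(inject_and_observe(0.0, 5.0, deltas, sound_monitor))      # []
print(inject_and_observe(0.0, 5.0, deltas, defective_monitor))  # [25.0, -195.0]
```

A non-empty result points at the monitor rather than at the code that computed the current, which mirrors how the perturbation led to a defective safety monitor.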

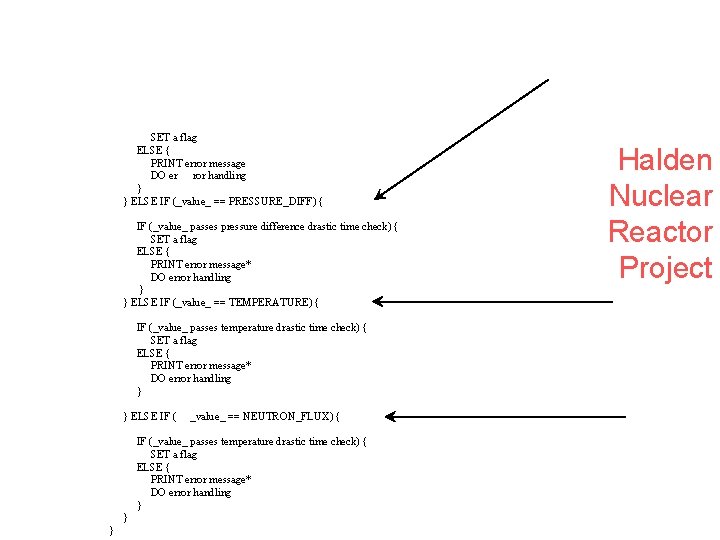

Halden Nuclear Reactor Project

FUNCTION: _drastic_time_check_
INPUTS: int _value_, int _type_
{
    IF (_type_ == PRESSURE) {
        IF (_value_ passes pressure drastic time check) {
            SET a flag
        } ELSE {
            PRINT error message
            DO error handling
        }
    } ELSE IF (_value_ == PRESSURE_DIFF) {
        IF (_value_ passes pressure difference drastic time check) {
            SET a flag
        } ELSE {
            PRINT error message*
            DO error handling
        }
    } ELSE IF (_value_ == TEMPERATURE) {
        IF (_value_ passes temperature drastic time check) {
            SET a flag
        } ELSE {
            PRINT error message*
            DO error handling
        }
    } ELSE IF (_value_ == NEUTRON_FLUX) {
        IF (_value_ passes temperature drastic time check) {
            SET a flag
        } ELSE {
            PRINT error message*
            DO error handling
        }
    }
}

B. Hazard Mining
I. Pre-defined unacceptable outputs: H = {h1, h2, h3, …}
II. Collect each acceptable output observed during fault injection into a set: {o1, o2, o3, …}.
III. Allow system experts to sift through this “acceptable” set and augment H when warranted: H = H ∪ {o344, o1020, …}
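The three hazard-mining steps map directly onto set operations. In this sketch the expert review is stood in for by a callback; `mine_hazards`, `expert_flags`, and the sample outputs are hypothetical names and values.

```python
from typing import Callable, Iterable, Set


def mine_hazards(known_hazards: Set[str],
                 observed_outputs: Iterable[str],
                 expert_flags: Callable[[str], bool]) -> Set[str]:
    """Step II: collect observed outputs not already in H.
    Step III: let the expert callback decide which of them join H."""
    acceptable = {o for o in observed_outputs if o not in known_hazards}
    newly_flagged = {o for o in acceptable if expert_flags(o)}
    return known_hazards | newly_flagged  # H = H ∪ {newly flagged outputs}


H = {"h1", "h2"}
observed = ["o1", "o344", "h1", "o1020", "o2"]
H = mine_hazards(H, observed, lambda o: o in {"o344", "o1020"})
print(sorted(H))
```

H only grows: outputs the experts do not flag stay in the acceptable set and can be reviewed again in a later round.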

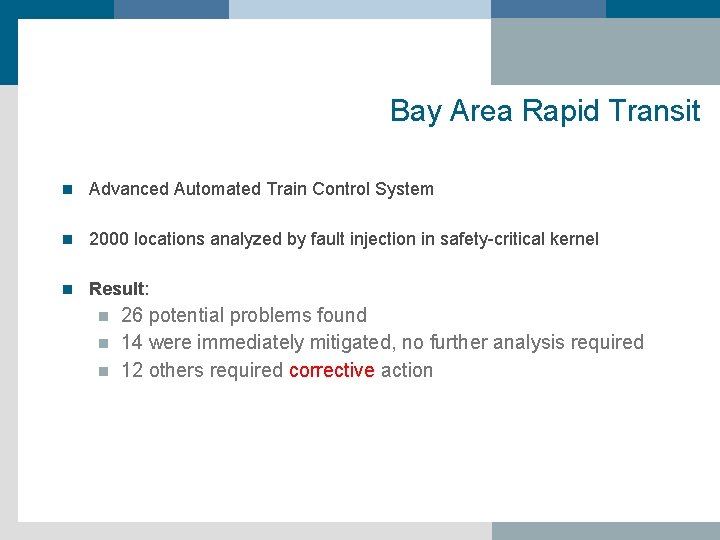

Bay Area Rapid Transit
• Advanced Automated Train Control System
• 2000 locations analyzed by fault injection in safety-critical kernel
• Result: 26 potential problems found
  • 14 were immediately mitigated, no further analysis required
  • 12 others required corrective action

C. Test Stoppage Criteria

![All Software Has a POF in [0, 1]](http://slidetodoc.com/presentation_image/de17c021924967ef20d29711385646ab/image-60.jpg)

All Software Has a POF in [0, 1]
[Figure: axis from 0.0 to 1.0 labeled Software Probability of Failure (POF)]

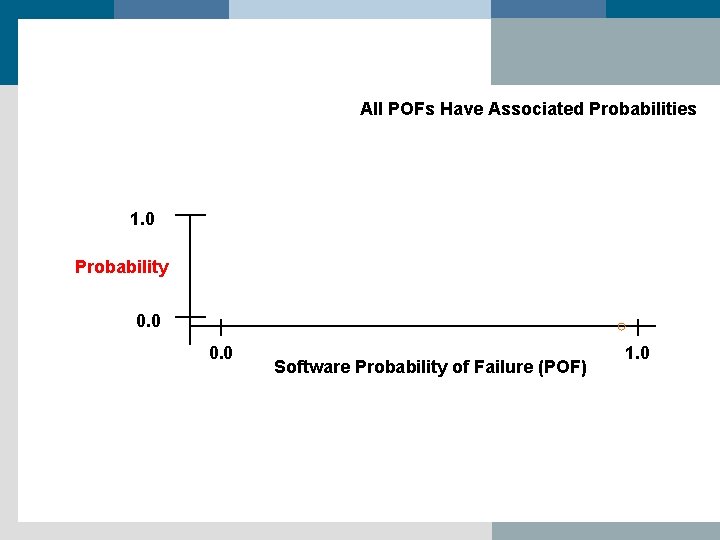

All POFs Have Associated Probabilities
[Figure: probability (0.0 to 1.0) plotted against Software Probability of Failure (POF) (0.0 to 1.0)]

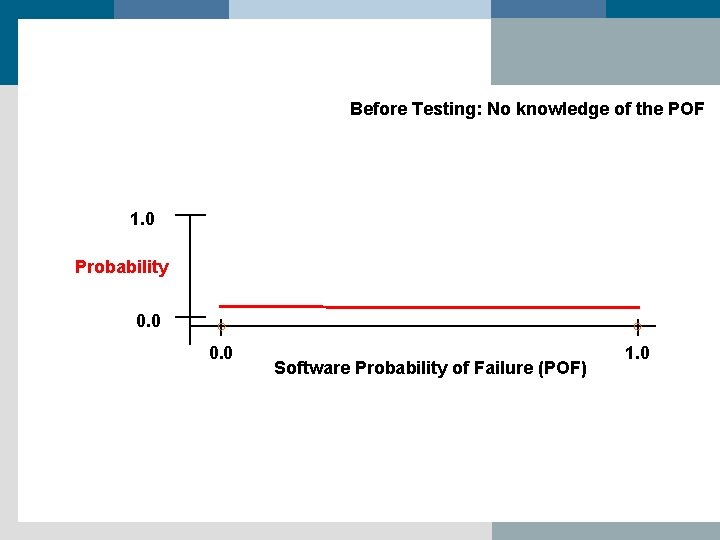

Before Testing: No knowledge of the POF
[Figure: probability (0.0 to 1.0) plotted against Software Probability of Failure (POF) (0.0 to 1.0)]

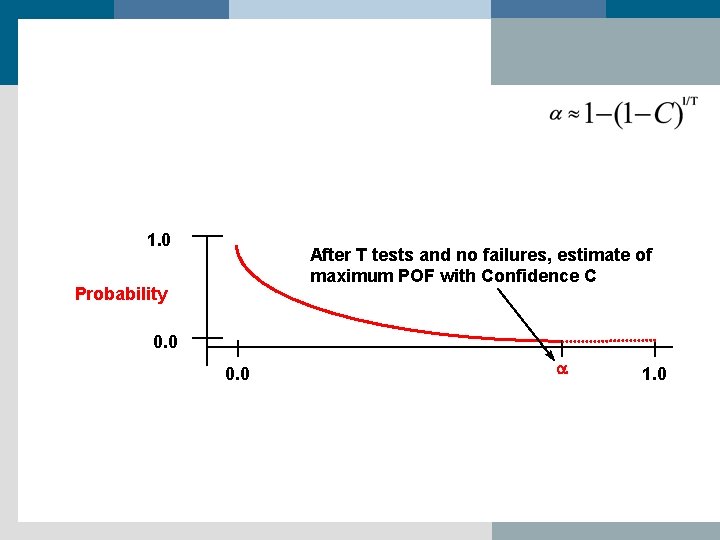

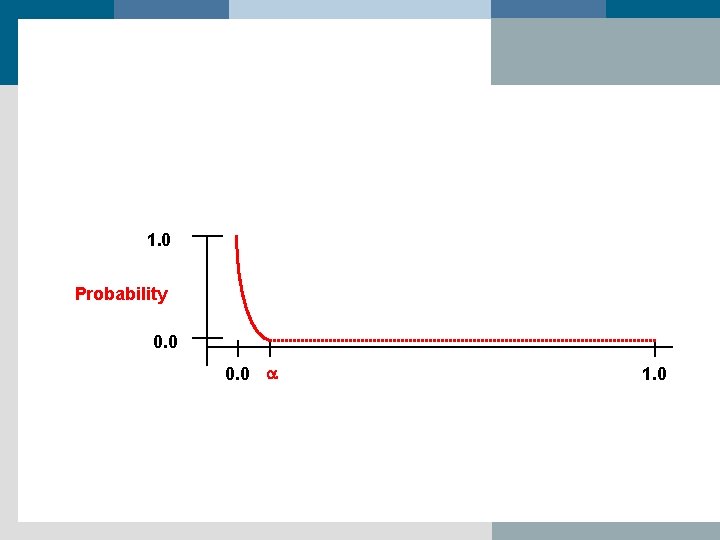

After T tests and no failures, estimate of maximum POF with Confidence C
[Figure: probability (0.0 to 1.0) plotted against POF (0.0 to 1.0), with the estimated maximum POF marked a]

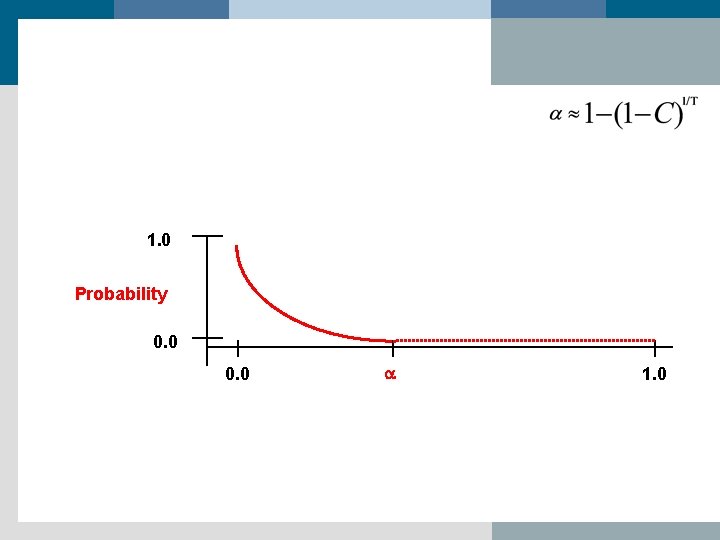

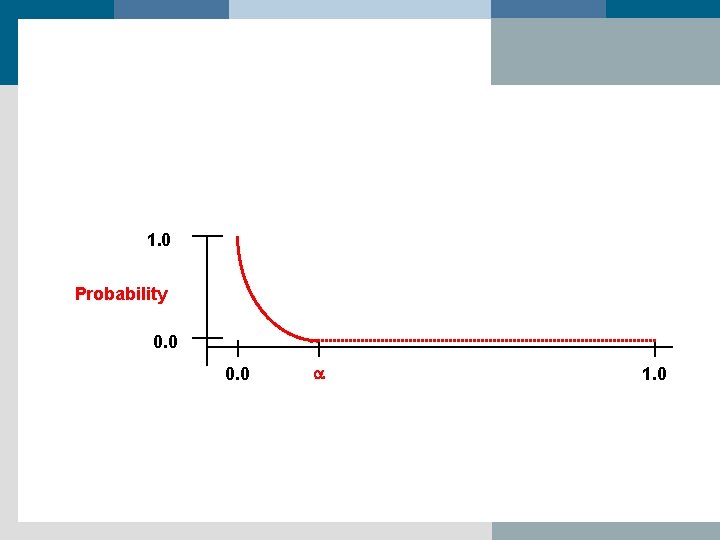


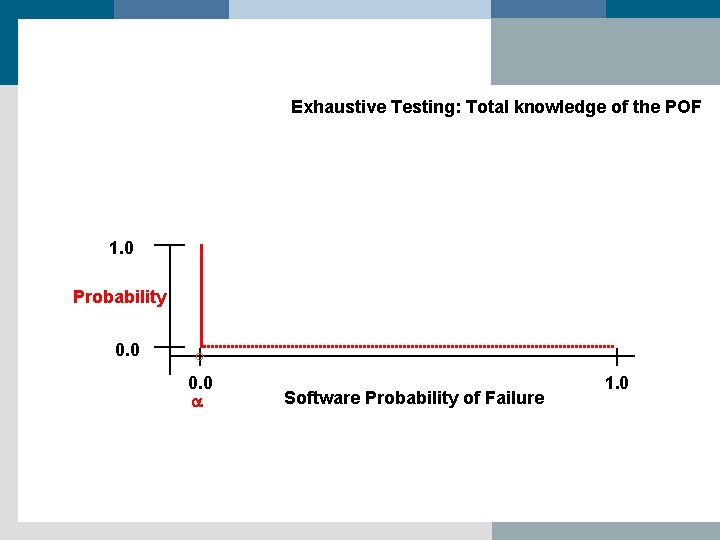

Exhaustive Testing: Total knowledge of the POF
[Figure: probability (0.0 to 1.0) plotted against Software Probability of Failure (0.0 to 1.0), with a marked on the POF axis]

Fault Injection and POFs
• Fault injection can be used to predict fault densities.
• Fault density impacts POFs; faults of large size create high POFs.
• This allows testing to be halted when enough testing has occurred to demonstrate that faults of certain densities are unlikely to be resident in the code.

D. Making A Safety Case
1. Most software safety/reliability standards are vague and offer little guidance with respect to what tools or technologies to use in order to attain certification.
2. All safety-related standards require some demonstration of safety against fire, loss of life, loss of mission, electric shock, etc.
3. But demonstrating that the actual risk reduction achieved is equivalent to the necessary risk reduction in order to meet the tolerable risk is difficult to do without very convincing arguments and convincing evidence.

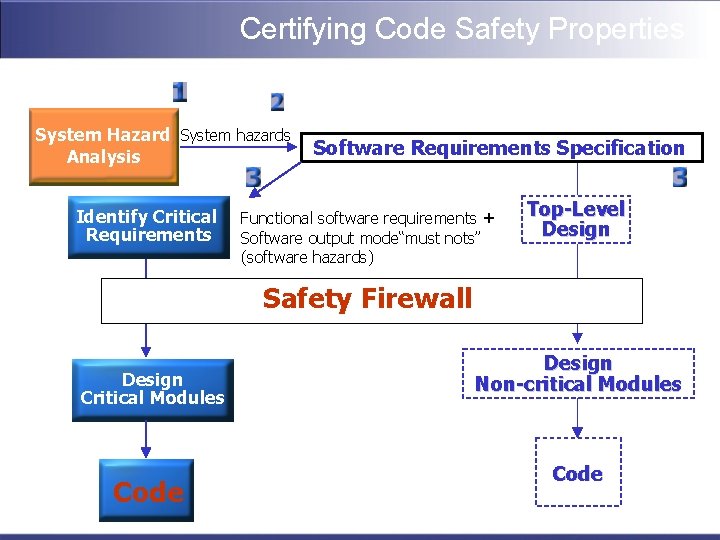

Certifying Code Safety Properties System Hazard System hazards Analysis Identify Critical Requirements Software Requirements Specification Functional software requirements + Software output mode“must nots” (software hazards) Top-Level Design Safety Firewall Design Critical Modules Code Design Non-critical Modules Code

Certifying Firewall Properties System Hazard System hazards Analysis Identify Critical Requirements Software Requirements Specification Functional software requirements + Software output mode“must nots” (software hazards) Top-Level Design Safety Firewall Design Critical Modules Code Design Non-critical Modules Code

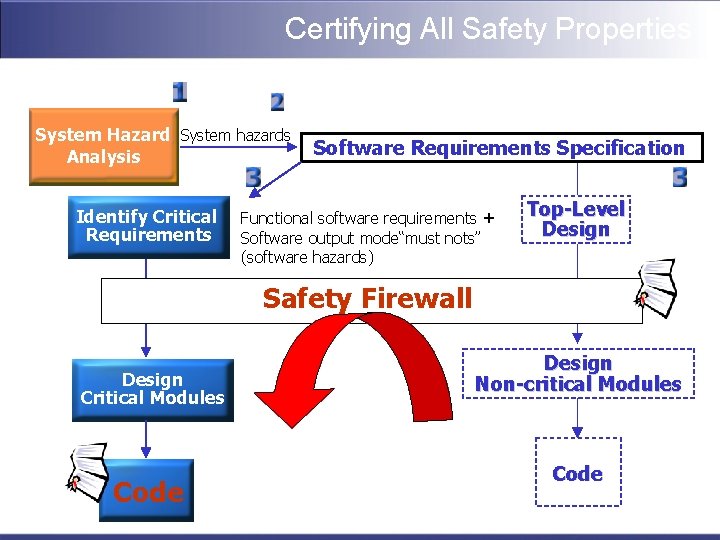

Certifying All Safety Properties System Hazard System hazards Analysis Identify Critical Requirements Software Requirements Specification Functional software requirements + Software output mode“must nots” (software hazards) Top-Level Design Safety Firewall Design Critical Modules Code Design Non-critical Modules Code

Part 3: Interoperability and the “ilities”: Design in, Build in, Glue on?

Premise #1
To achieve and measure interoperability requires standards.

Premise #2
Standards and Certification are inseparable.

What is Interoperability?
“Ability of two or more systems or components to exchange information and to use the information that has been exchanged.”
Source: IEEE Standard Computer Dictionary: A Compilation of IEEE Standard Computer Glossaries, IEEE, 1990

“Functional” vs. “Semantic” Interoperability
Functional Interoperability:
• Shared Architectures
• Shared Methods
• Shared Frameworks
Semantic Interoperability:
• Shared Datatypes
• Shared Terminologies
• Shared Codings
Source: IEEE 1073/ISO TC 215 WG 2.1/CEN TC 251 WGIV Point of Care Medical Device Communications

What are Standards?
Simply “lines in the sand” from which a certificate of compliance or non-compliance can occur.

Are Standards the Way to Go?

Pros
Any bar or hurdle is better than no bar or hurdle.

Cons
Possibly the developers would have done more to improve quality but now feel they have a license to do less.

Premise #3
Third party software should be tagged with some guarantee (or at least a “warm fuzzy”) as to how “good” the software is, i.e., a certificate.
Problem: Software Of Unknown Pedigree (SOUP)
Goal of Certification: Software of Known Pedigree
Problem: What is “good enough” software?

Three Key Messages That Certification Can Convey
• Compliance with development process standards
vs.
• Fitness for purpose
vs.
• Compliance with the requirements

Data to Support Certification
Information to support the creation of certificates should be based on something similar to the claims-evidence-arguments framework (Adelard, U.K.), much as is done in courts of law. See also Goal Structuring Notation (U. of York, U.K.).

Arguments and Evidence
• Supporting Evidence: Results from observing, analyzing, testing, simulating and estimating the properties of a system that provide the fundamental information from which a claim (i.e., certificate) can be made
• High Level Argument: Explanation of how the available evidence can be reasonably interpreted as indicating acceptability for use (Fitness for Purpose), usually by demonstrating Compliance with Requirements
• Argument without Evidence is unfounded
• Evidence without Argument is unexplained

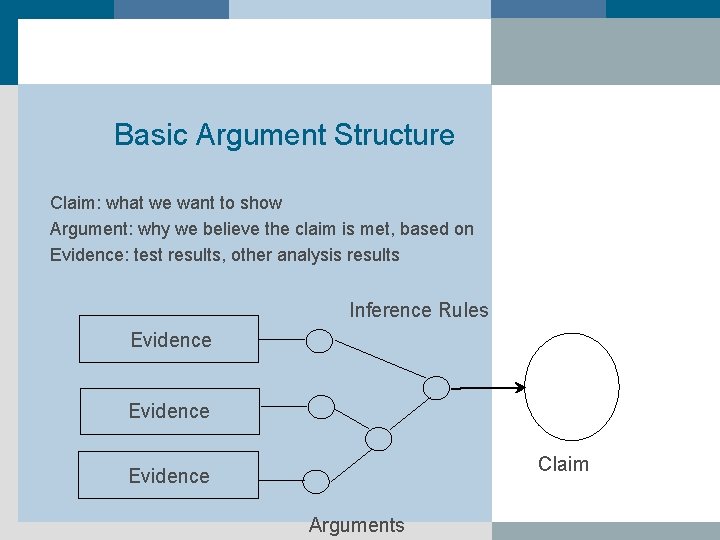

Basic Argument Structure
Claim: what we want to show
Argument: why we believe the claim is met, based on
Evidence: test results, other analysis results
[Figure: evidence feeds arguments (inference rules), which support the claim]

Goal Structuring Notation
• Purpose of a goal structure: To show how goals are broken down into sub-goals, and eventually supported by evidence, while making clear the strategies adopted, the rationale for the approach (assumptions, justifications), and the context in which the goals are stated.
• Similar to a process flow chart
• Useful for defining all processes that must be performed during development prior to contract award; thus useful for certification as well as acquisition.

Standards are Not Perfect
• Vague: Develop software that only does "good" things
  • Common sense "dos" and "don'ts" - Very watered down by voting time
• Disclaimers by publishing organizations
  • Profitable to organization that publishes them
• Used only if mandated
• Return-on-investment is un-quantified
• Thwart intellectual creativity
  • "Protectionist" legislation
• Paperwork
  • 2167A: ~400 English words per Ada code statement
• "Old news" before being ratified
• Relating one to another is very hard
  • Hundreds in existence

Standards are Not Perfect (cont)
• Different interpretations
• Process certifications are just documentation checks unless personnel remain on site during the project
• Re-certification
  • Client: over 300 mods to a safety-critical medical device, and never requested re-certification for any of those mods.
• Cannot be easily tested for compliance
  • Mis-certifications are common
• Lack of fairness during certification judgment
  • FDA Center for Devices and Radiological Health (CDRH)
• So much legacy functionality exists that complies with no standards yet still gets integrated, making its impact on the system unknown.
  • WAAS

![State-of-the-Practice/Art](http://slidetodoc.com/presentation_image/de17c021924967ef20d29711385646ab/image-92.jpg)

State-of-the-Practice/Art
“A consumer [patient] may not be able to assess accurately whether a particular drug is safe, but [they] can be reasonably confident that drugs obtained from approved sources have the endorsement of the U.S. Food and Drug Administration (FDA) which confers important safety information. Computer system trustworthiness has nothing comparable to the FDA. The problem is both the absence of standard metrics and a generally accepted organization that could conduct such assessments. There is no Consumer Reports for Trustworthiness.”
[Source: “Trust in Cyberspace,” National Academy of Sciences report, National Academy Press, 1998.]

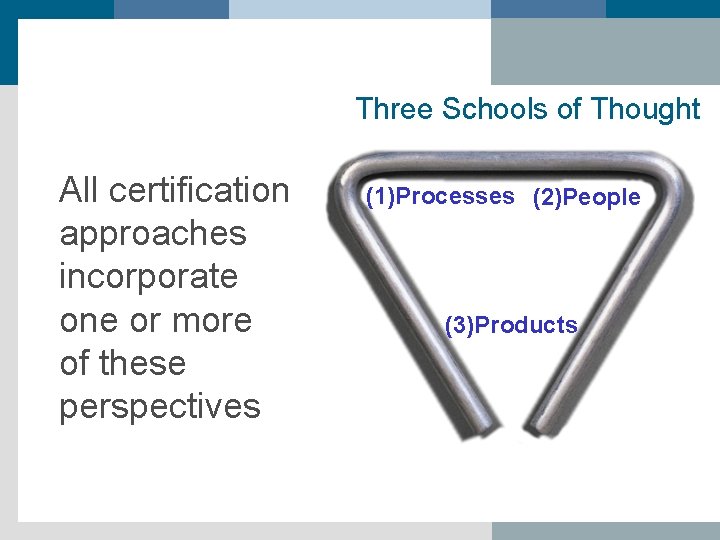

Three Schools of Thought
All certification approaches incorporate one or more of these perspectives:
(1) Processes
(2) People
(3) Products

1. Process: Clean Pipes, Dirty Water?
Certifying that you know how to do things correctly does not mean that you do them correctly!

On a positive note, process improvement schemes at least, from an acquisition standpoint, alleviate some of the concerns associated with SOUP.

2. People
The IEEE Computer Society has developed a program to certify software engineering professionals. This program provides formal recognition of professionals who have successfully achieved a level of proficiency commonly accepted and valued by the industry.

Serious Question
What do process maturity and personnel accreditation say specifically about how the software will behave in the future?

3. Product: The Software Itself
[Figure: spectrum from 0% confidence to 100% confidence]
Spectrum of possibilities as to what a certificate proclaiming that some “quantified” level of quality has been built in could state: it could say anything in the range between “Nothing” (e.g., “here is a piece of software”) and “This software will always work perfectly under all conditions” (i.e., a 100% guarantee of perfection).

And So How Should a Certification Standard Be Created?

What Attribute is Being Certified? n n n Reliability? n RTCA’s DO 178 B (FAA) That the degree of testing done was appropriate? n RTCA’s DO 178 B (FAA) Safety? n System (process) vs. component (product) safety n IEC 61508 vs. UL 1998 Security? , Availability? , Fault Tolerance? Performance? , etc. That certain development procedures were followed? n SEI Capability Maturity Model n ISO 900 x n CMMI

Qo. S Interoperability

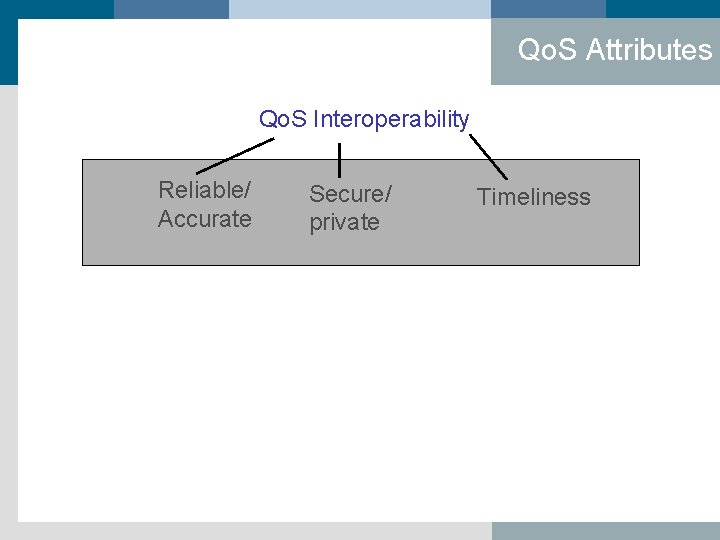

Qo. S Attributes Qo. S Interoperability Reliable/ Accurate Secure/ private Timeliness

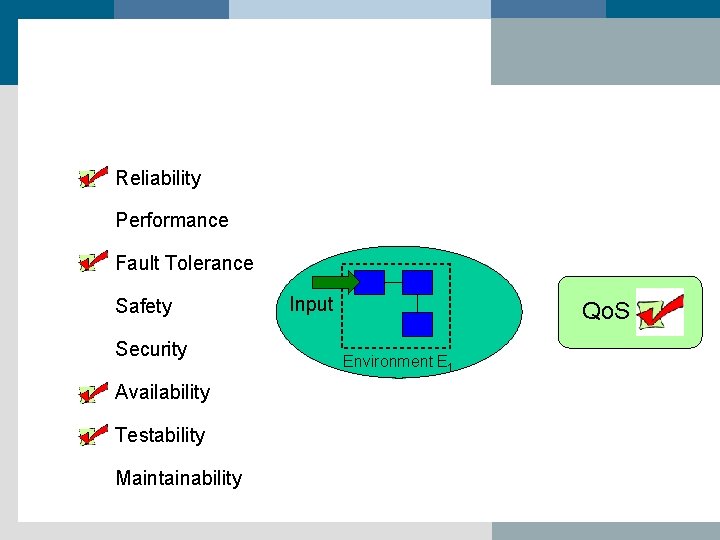

Qo. S Attributes Qo. S Interoperability Reliable/ Accurate reliability Secure/ private Timeliness privacy security availability confidentiality fault tolerance testability performance intrusion tolerance fault tolerance Qo. S attributes (“ilities”)

Position Statement Software’s Qo. S interoperability is some combination of: (1) the degree to which the functional requirements are met, as well as, (2) the degree to which the non-functional requirements are met.

Two Components x y

With Attributes
[Figure: components x and y]
x has the following properties: (aR, bP, cF, dSa, eSe, fA, gT, hM)
y has the following properties: (iR, jP, kF, lSa, mSe, nA, oT, pM)

What Have You Got?
[Figure: components x and y combined]
Then F(x y) will inherit some level of Quality of Service (QoS) from the individual components. Is that level of quality an integer? Probability? An n-tuple of values? Color coded (green, red, yellow)?
Key Point: The composite QoS must represent something from which predictions of future behavior can be made.

Difficult Because …
QoS attributes have little meaning in terms of their ability to be measured and traded off until they are defined in the context of the target system, i.e., their environment.

[Figure: Reliability, Performance, Fault Tolerance, Safety, Security, Availability, Testability, Maintainability; labels: Input, QoS, Environment E1]

[Figure: QoS in Environment E1 versus QoS (?) in Environments E’1, E’2, and E’3]

Bottom Line for Certifying Software Interoperability
The following 8 characteristics must be considered: (1) composability, (2) predictability, (3) attribute measurement, (4) QoS attribute trade-off analysis (technical and economic), (5) fault tolerance and non-interference analysis, (6) requirements traceability, (7) access to pre-qualified components, and (8) precise bounding of software’s mission, environment, and threat space.

Mis-certification Risks
1. Certifying at the incorrect Certification Integrity Level (CIL)
2. Incorrect understanding of system influences (operational environment and threat space)
3. Failure to certify for the correct QoS attribute
4. Employing incomplete or incorrect evidence and arguments
5. Failure to re-certify when system influences change
6. Reusing certificates for a single component in a totally different environment without re-certification
7. Substituting process certificates for product assessments

Other Issues
• De-certification
• Re-certification

“Designing in” the “ilities”

Attributes Need to Be Pre-Defined
• Requirements should prescribe, at some level of granularity, what the weights are for the various “ilities,” as well as how much of each “ility” is desired.
• But HOW?
• Ignoring the attributes is not an option for high assurance and trustworthy systems! Make an attempt to discuss them with the client even if quantification is not possible. Just get the issue on the table!

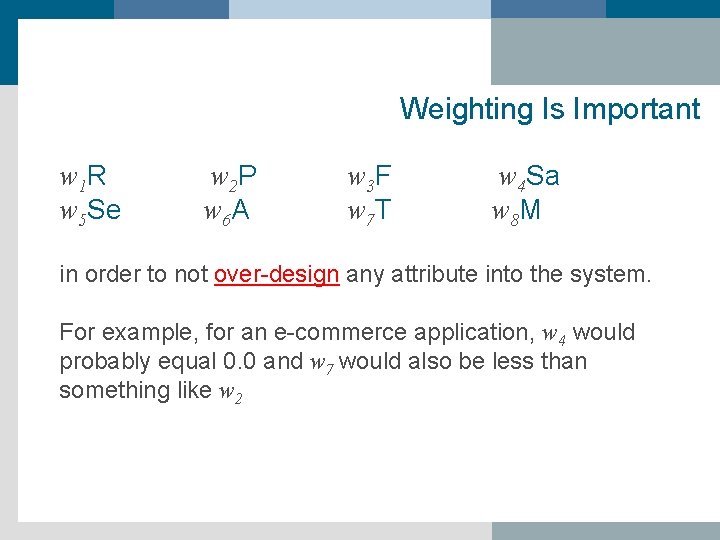

Weighting Is Important
w1 R    w2 P    w3 F    w4 Sa    w5 Se    w6 A    w7 T    w8 M
Weighting is important in order to not over-design any attribute into the system. For example, for an e-commerce application, w4 would probably equal 0.0, and w7 would also be less than something like w2.
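The slide pairs each attribute with a weight but does not say how the weighted attributes are combined. One simple possibility, shown purely as an assumption, is a normalized weighted sum. The weight values below are invented; they only respect the two constraints stated for the e-commerce example (w4 = 0.0 for safety, and w7 for testability below w2 for performance).

```python
from typing import Dict

ATTRIBUTES = ("R", "P", "F", "Sa", "Se", "A", "T", "M")


def weighted_qos(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Normalized weighted sum of attribute scores (each score in [0, 1]).
    This combining rule is an assumption, not something the slides prescribe."""
    total_weight = sum(weights[a] for a in ATTRIBUTES)
    if total_weight <= 0:
        raise ValueError("at least one weight must be positive")
    return sum(weights[a] * scores[a] for a in ATTRIBUTES) / total_weight


# Hypothetical e-commerce weighting: safety (Sa) carries no weight.
ecommerce_weights = {"R": 0.20, "P": 0.20, "F": 0.10, "Sa": 0.0,
                     "Se": 0.25, "A": 0.15, "T": 0.05, "M": 0.05}
scores = {"R": 0.9, "P": 0.8, "F": 0.6, "Sa": 0.1,
          "Se": 0.7, "A": 0.95, "T": 0.5, "M": 0.5}
print(weighted_qos(scores, ecommerce_weights))
```

With w4 at 0.0, effort spent raising the safety score does not move the result, which is the over-design the slide warns against.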

Tradeoffs
How much will you spend for increased reliability knowing that doing so will take needed financial resources away from security or performance or …?

• Security vs. Performance
• Fault tolerance vs. Testability
• Fault tolerance vs. Performance
• etc.

Counterintuitive Realities
• 100% safety and 0% reliability
• 100% reliability and 0% safety
• 0% functionality/reliability and 100% security
• 100% availability and 0% reliability
• 100% availability and 0% performance
• 0% performance and 100% safety

Closing Thoughts
1. Standards and certification are inseparable in order to achieve the goal of interoperable and safe behavior.
2. Product certification is distinct from process certification and personnel accreditation.
3. The blending of existing standards, collecting quantifiable metrics, defining precisely what QoS attributes are warranted, and defining what a certificate implies or does not imply is pivotal to believable certificates.
4. “You cannot improve what you cannot measure” – Lord Kelvin

Additional Last Thoughts
• Information integrity is some combination of: software integrity + data integrity
• Requirements should define the functional characteristics and non-functional attributes
• Testing must incorporate the nominal test cases (high probability according to the operational profile) and the off-nominal (rarely experienced) test cases
• Misuse cases (malicious and non-malicious) should be defined for testing
• Software fault injection, a form of testing, can flush out hidden behaviors and force hard-to-reach code to be executed.
• SOA appears to be the next big architectural idea for IT.
• The fundamentals concerning how to define, measure, and balance the QoS attributes remain a key difficulty in composing subsystems and services.

Talk Summary
• Off-nominal testing, inverted profile testing, and fault injection analysis are implemented differently according to the goal of the results, but the fundamental techniques for doing so change little.
• Most major corporations today use software fault injection as a means of testing code robustness, and most have “homegrown” tools for doing so.
• Standards and certification are inseparable.
• Interoperability requires non-interference, and fault injection is a means for detecting the likelihood of non-interference.
