Performance best Practices for a Successful Dynamics 365

Performance best Practices for a Successful Dynamics 365 Finance & Operations Implementation (Fast. Track for Dynamics 365)

Performance Best Practices 1. Design Principles 2. Test for Performance 3. Key Patterns and Anti-patterns 4. Tools for Performance

Design Principles

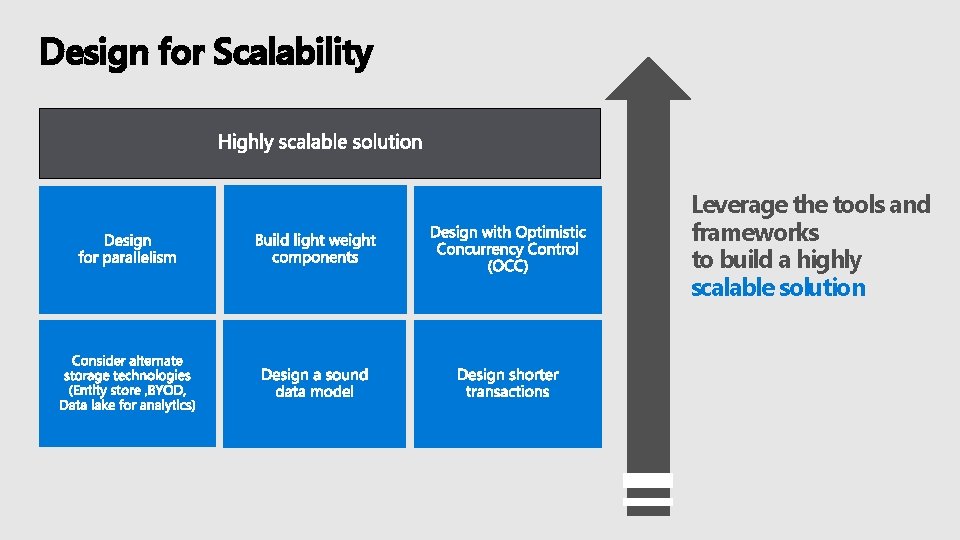

Design for Scalability Leverage the tools and frameworks to build a highly scalable solution

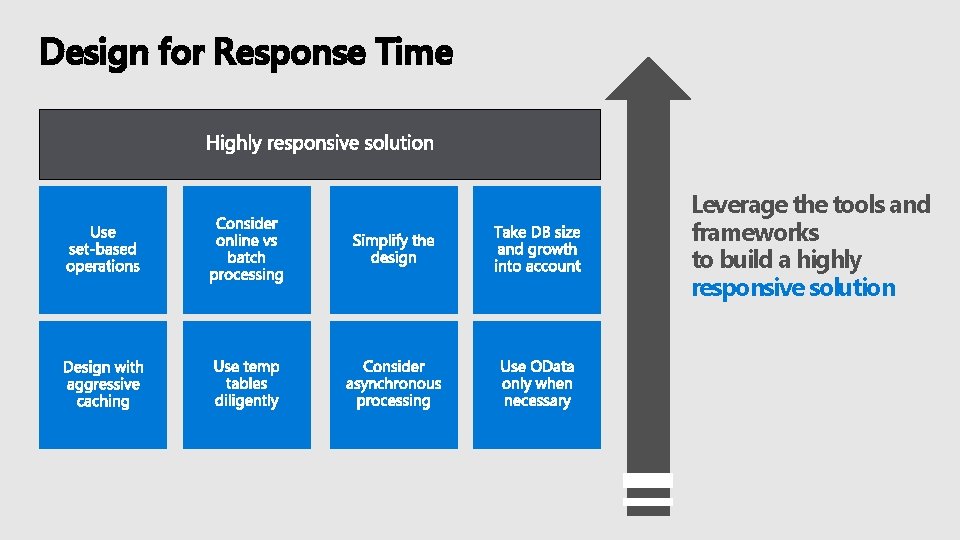

Design for Response Time Leverage the tools and frameworks to build a highly responsive solution

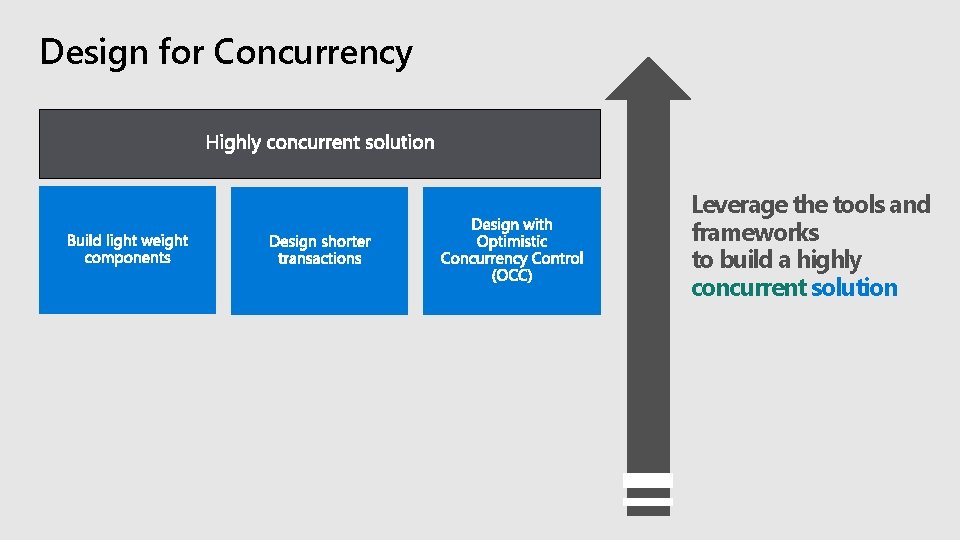

Design for Concurrency Leverage the tools and frameworks to build a highly concurrent solution

Test for Performance

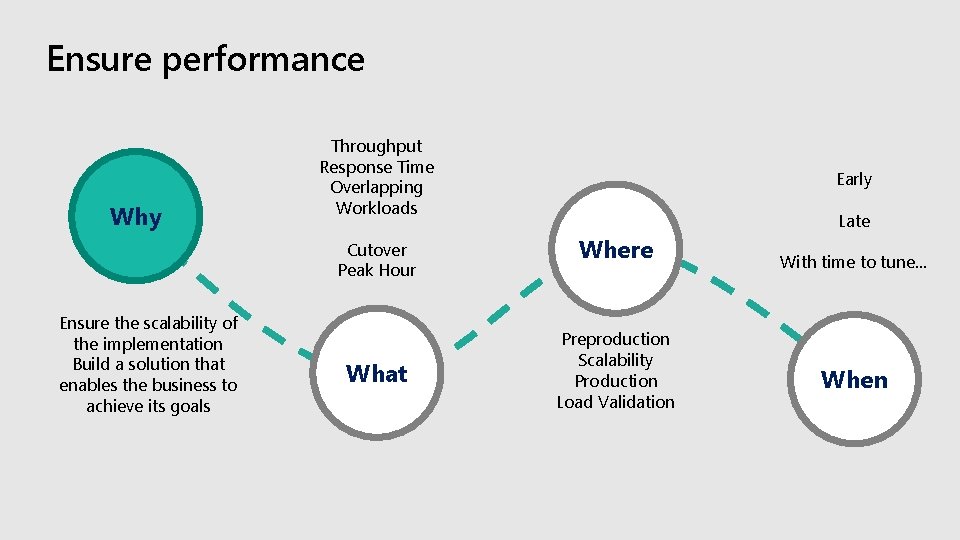

Ensure performance Why Throughput Response Time Overlapping Workloads Cutover Peak Hour Ensure the scalability of the implementation Build a solution that enables the business to achieve its goals What Early Late Where Preproduction Scalability Production Load Validation With time to tune… When

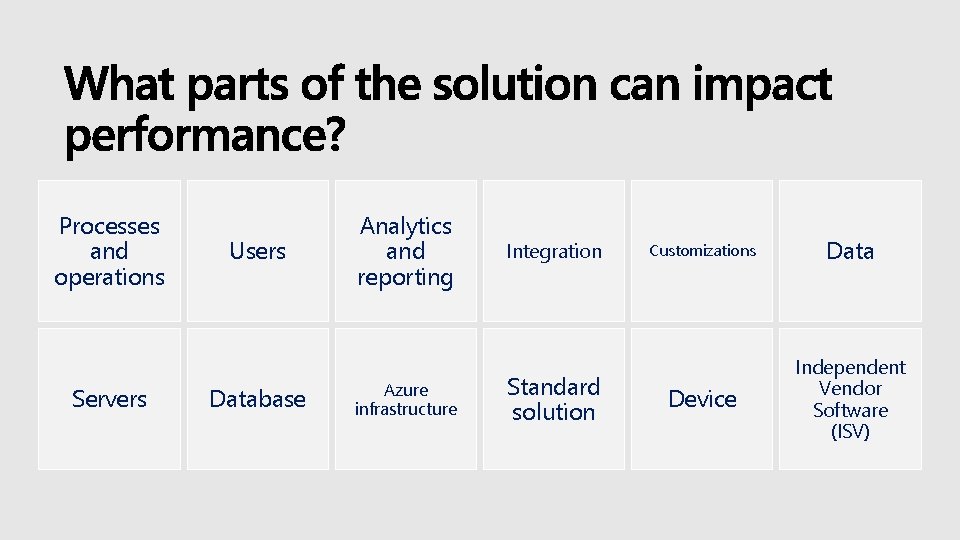

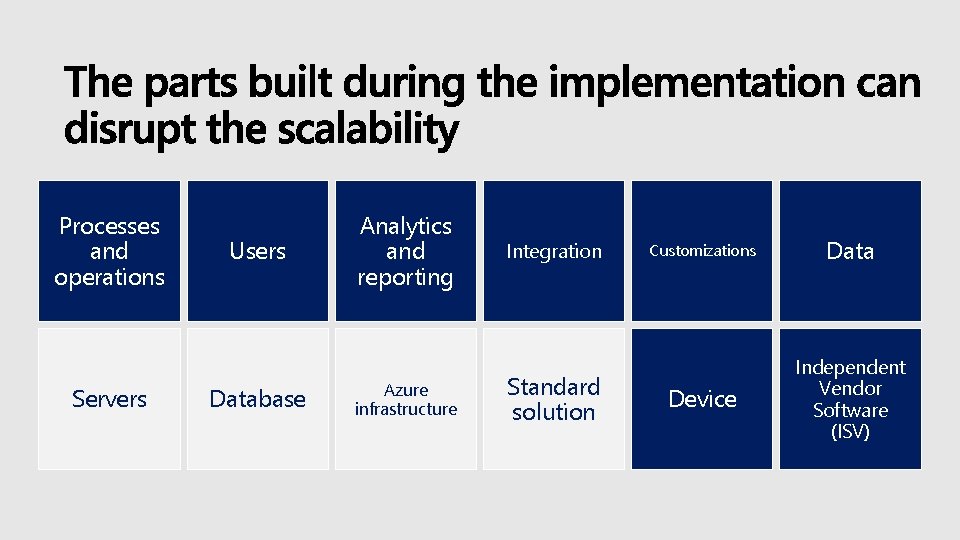

Processes and operations Servers Users Database Analytics and reporting Azure infrastructure Integration Standard solution Customizations Data Device Independent Vendor Software (ISV)

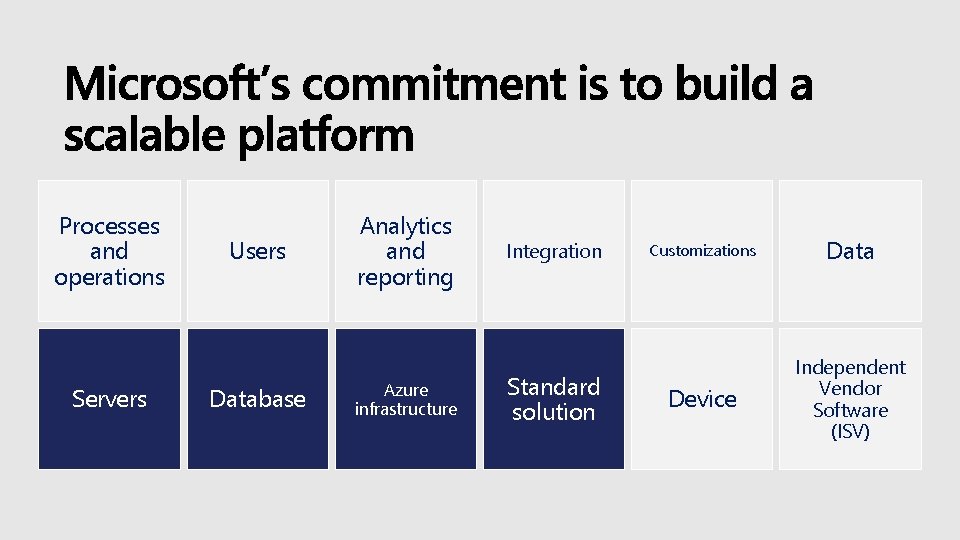

Processes and operations Servers Users Database Analytics and reporting Azure infrastructure Integration Standard solution Customizations Data Device Independent Vendor Software (ISV)

Processes and operations Servers Users Database Analytics and reporting Azure infrastructure Integration Standard solution Customizations Data Device Independent Vendor Software (ISV)

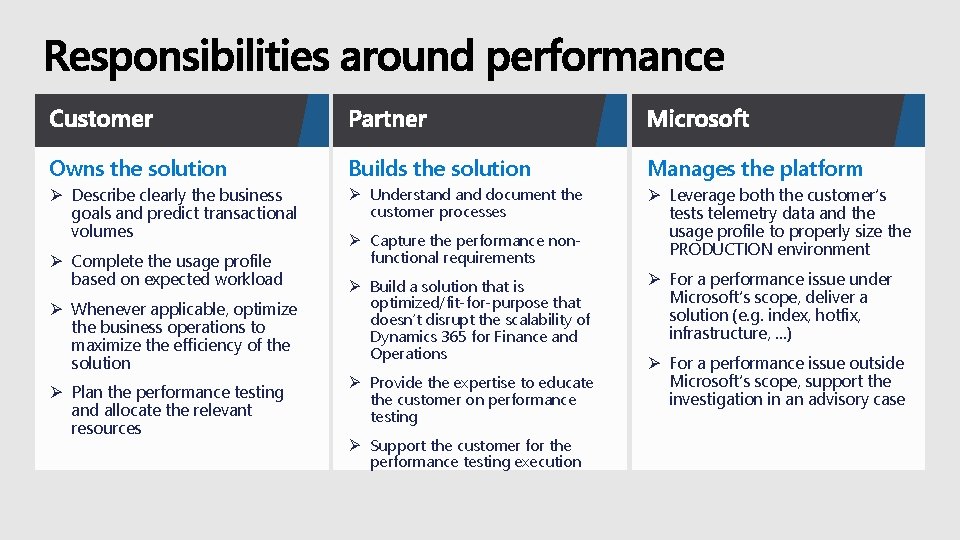

Owns the solution Builds the solution Manages the platform Ø Describe clearly the business goals and predict transactional volumes Ø Understand document the customer processes Ø Leverage both the customer’s tests telemetry data and the usage profile to properly size the PRODUCTION environment Ø Complete the usage profile based on expected workload Ø Whenever applicable, optimize the business operations to maximize the efficiency of the solution Ø Plan the performance testing and allocate the relevant resources Ø Capture the performance nonfunctional requirements Ø Build a solution that is optimized/fit-for-purpose that doesn’t disrupt the scalability of Dynamics 365 for Finance and Operations Ø Provide the expertise to educate the customer on performance testing Ø Support the customer for the performance testing execution Ø For a performance issue under Microsoft’s scope, deliver a solution (e. g. index, hotfix, infrastructure, …) Ø For a performance issue outside Microsoft’s scope, support the investigation in an advisory case

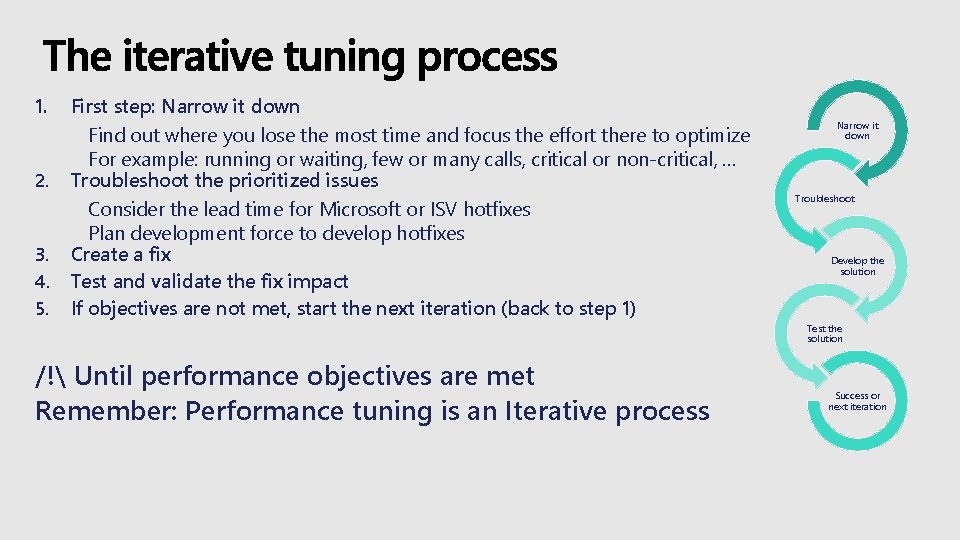

1. 2. 3. 4. 5. First step: Narrow it down Find out where you lose the most time and focus the effort there to optimize For example: running or waiting, few or many calls, critical or non-critical, … Troubleshoot the prioritized issues Consider the lead time for Microsoft or ISV hotfixes Plan development force to develop hotfixes Create a fix Test and validate the fix impact If objectives are not met, start the next iteration (back to step 1) Narrow it down Troubleshoot Develop the solution Test the solution /! Until performance objectives are met Remember: Performance tuning is an Iterative process Success or next iteration

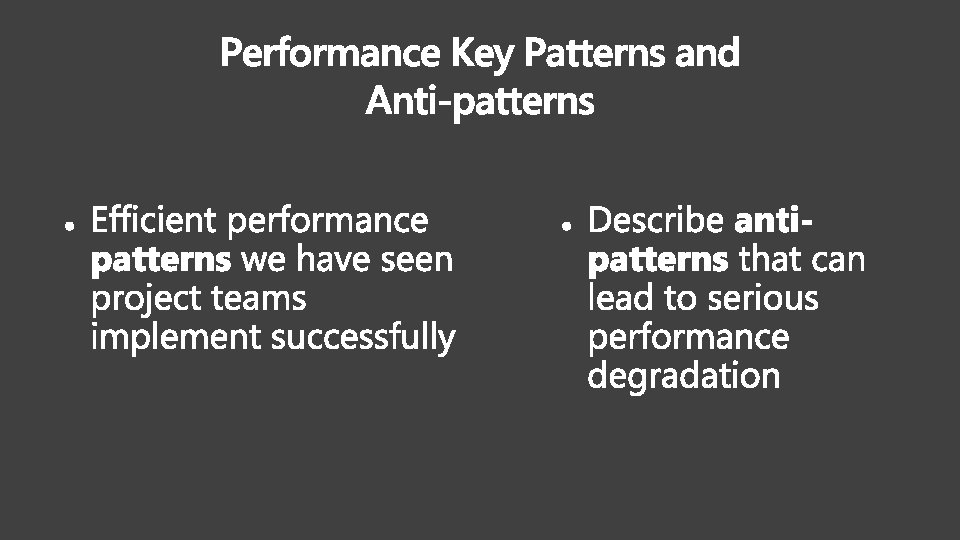

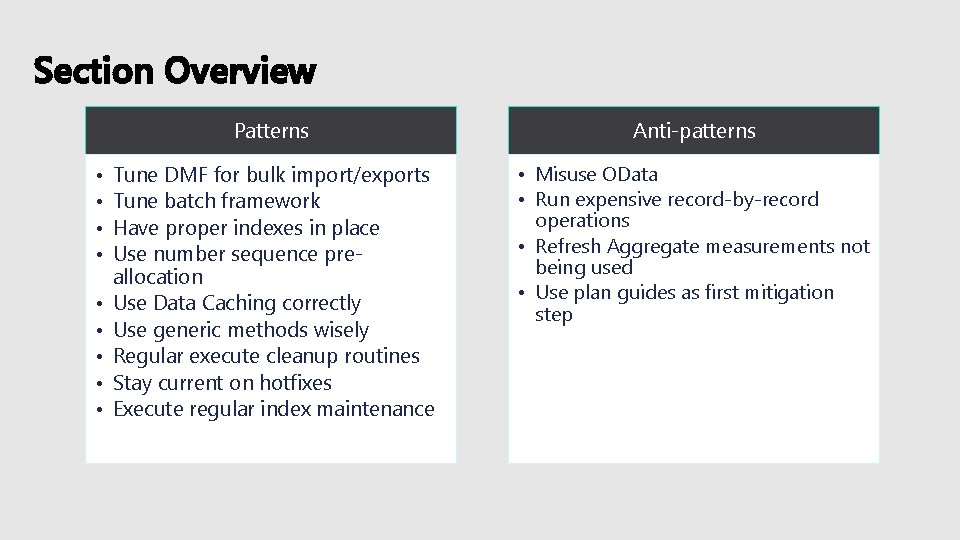

Section Overview • • • Patterns Anti-patterns Tune DMF for bulk import/exports Tune batch framework Have proper indexes in place Use number sequence preallocation Use Data Caching correctly Use generic methods wisely Regular execute cleanup routines Stay current on hotfixes Execute regular index maintenance • Misuse OData • Run expensive record-by-record operations • Refresh Aggregate measurements not being used • Use plan guides as first mitigation step

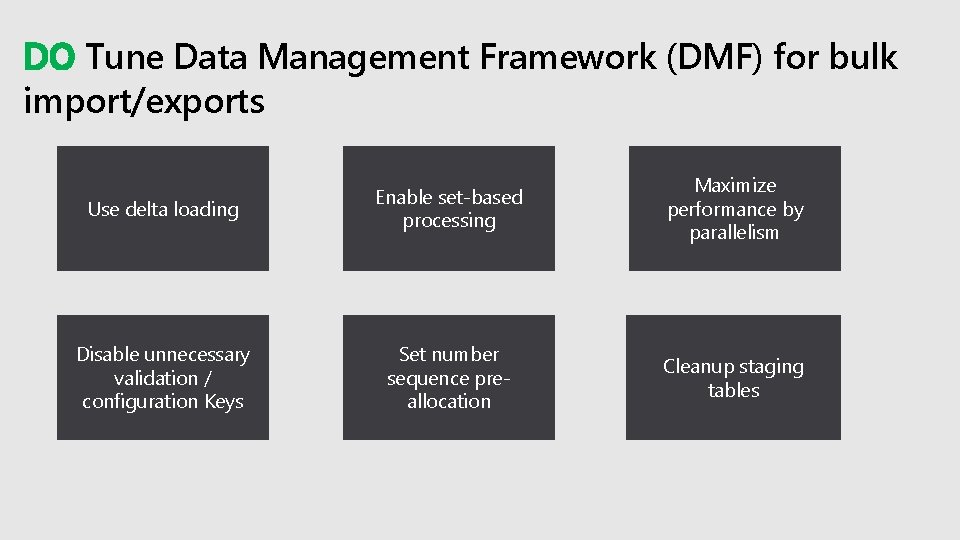

DO Tune Data Management Framework (DMF) for bulk import/exports Use delta loading Enable set-based processing Maximize performance by parallelism Disable unnecessary validation / configuration Keys Set number sequence preallocation Cleanup staging tables

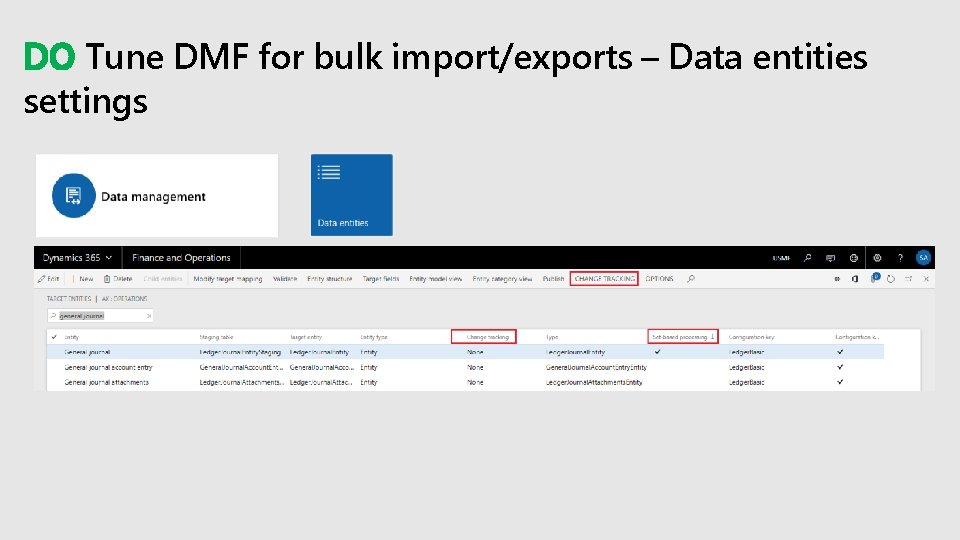

DO Tune DMF for bulk import/exports – Data entities settings

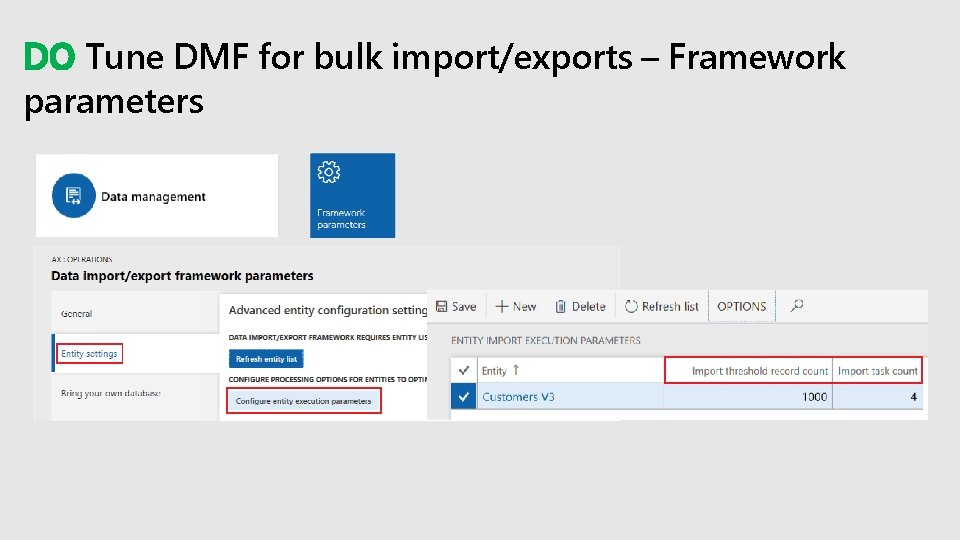

DO Tune DMF for bulk import/exports – Framework parameters

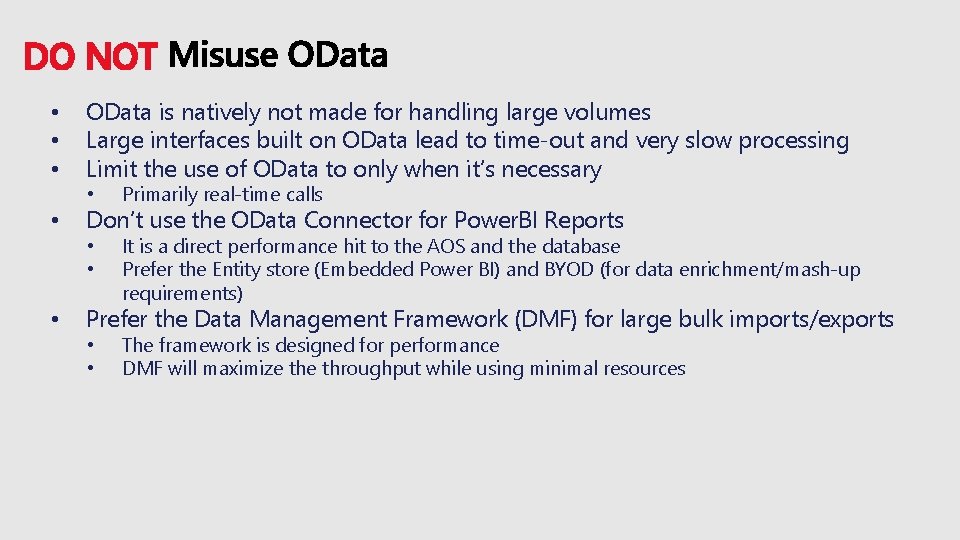

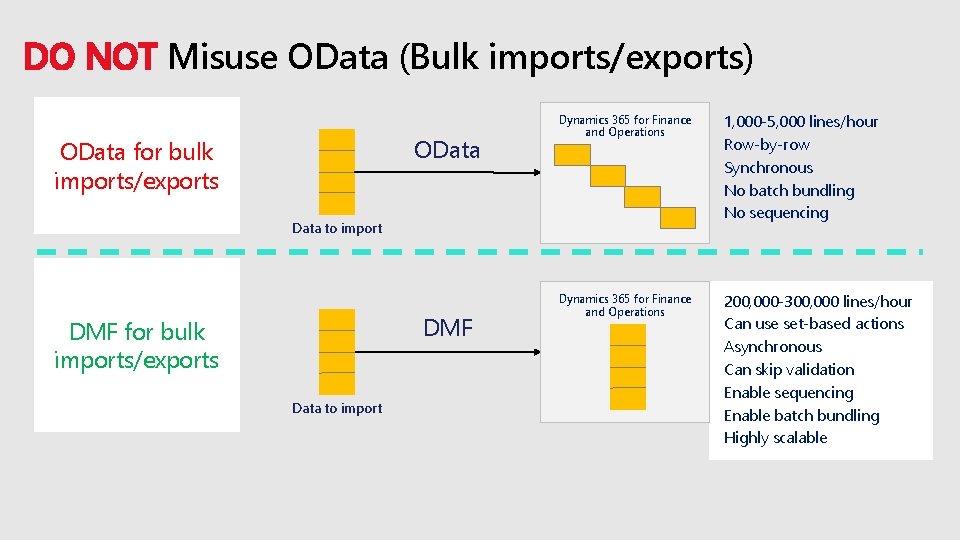

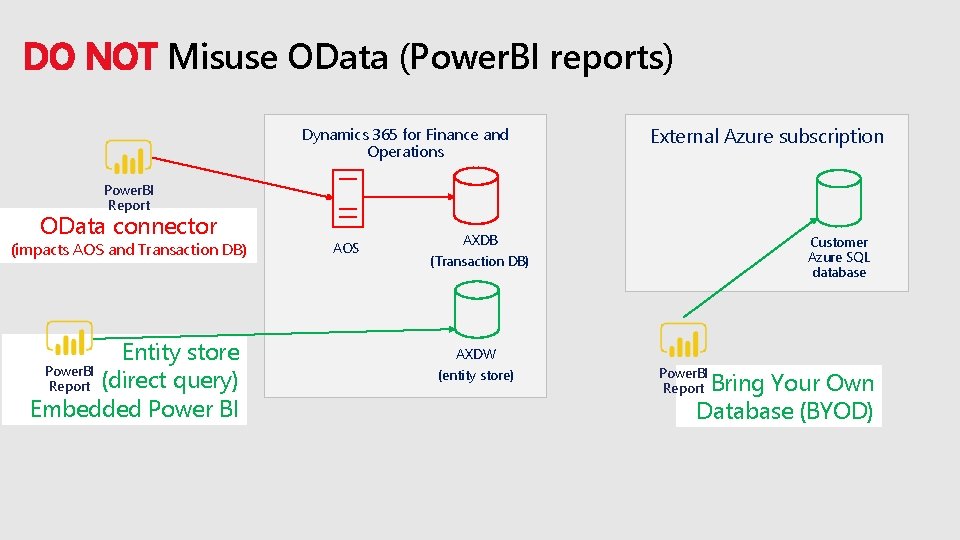

DO NOT • • • OData is natively not made for handling large volumes Large interfaces built on OData lead to time-out and very slow processing Limit the use of OData to only when it’s necessary • Primarily real-time calls • • It is a direct performance hit to the AOS and the database Prefer the Entity store (Embedded Power BI) and BYOD (for data enrichment/mash-up requirements) Don’t use the OData Connector for Power. BI Reports Prefer the Data Management Framework (DMF) for large bulk imports/exports • • The framework is designed for performance DMF will maximize throughput while using minimal resources

DO NOT Misuse OData (Bulk imports/exports) OData for bulk imports/exports Dynamics 365 for Finance and Operations 1, 000 -5, 000 lines/hour Row-by-row Synchronous No batch bundling No sequencing Dynamics 365 for Finance and Operations 200, 000 -300, 000 lines/hour Can use set-based actions Asynchronous Can skip validation Enable sequencing Enable batch bundling Highly scalable Data to import DMF for bulk imports/exports Data to import

DO NOT Misuse OData (Power. BI reports) Dynamics 365 for Finance and Operations External Azure subscription Power. BI Report OData connector (impacts AOS and Transaction DB) Entity store Power. BI Report (direct query) Embedded Power BI AOS AXDB Customer Azure SQL database (Transaction DB) AXDW (entity store) Power. BI Report Bring Your Own Database (BYOD)

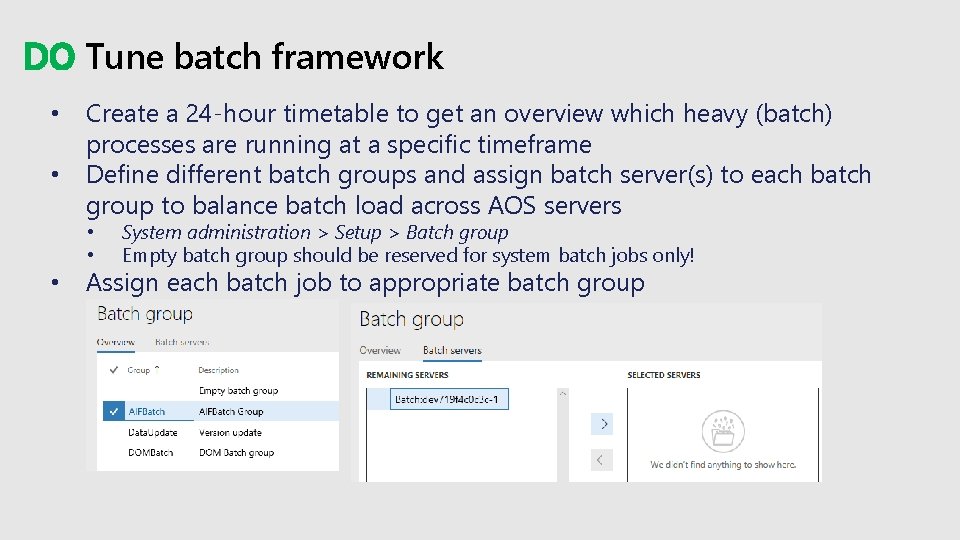

DO Tune batch framework • • Create a 24 -hour timetable to get an overview which heavy (batch) processes are running at a specific timeframe Define different batch groups and assign batch server(s) to each batch group to balance batch load across AOS servers • • • System administration > Setup > Batch group Empty batch group should be reserved for system batch jobs only! Assign each batch job to appropriate batch group

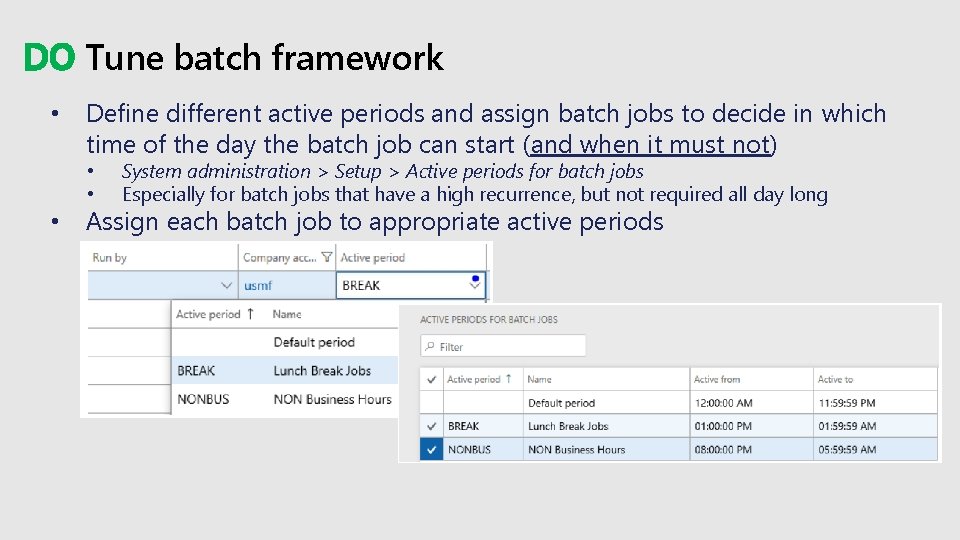

DO Tune batch framework • Define different active periods and assign batch jobs to decide in which time of the day the batch job can start (and when it must not) • • • System administration > Setup > Active periods for batch jobs Especially for batch jobs that have a high recurrence, but not required all day long Assign each batch job to appropriate active periods

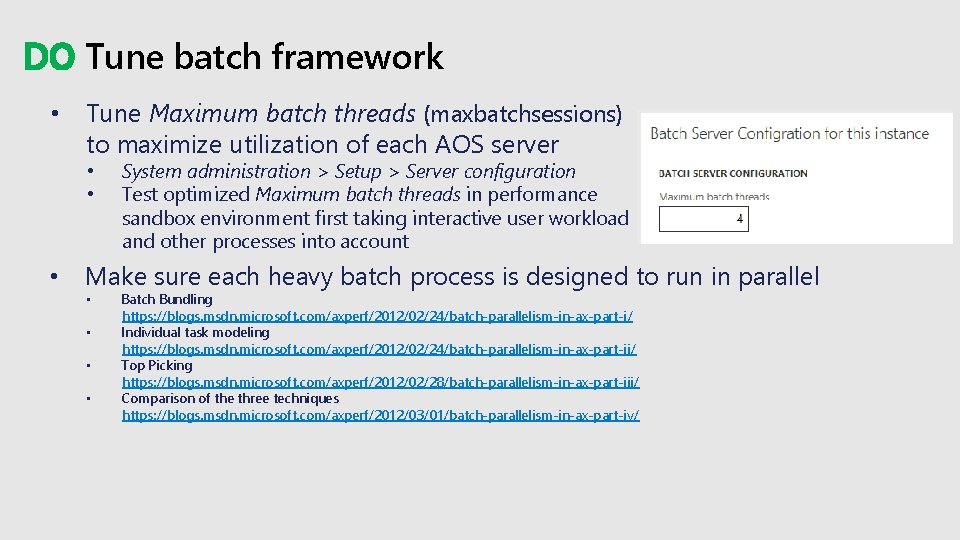

DO Tune batch framework • Tune Maximum batch threads (maxbatchsessions) to maximize utilization of each AOS server • • • System administration > Setup > Server configuration Test optimized Maximum batch threads in performance sandbox environment first taking interactive user workload and other processes into account Make sure each heavy batch process is designed to run in parallel • • Batch Bundling https: //blogs. msdn. microsoft. com/axperf/2012/02/24/batch-parallelism-in-ax-part-i/ Individual task modeling https: //blogs. msdn. microsoft. com/axperf/2012/02/24/batch-parallelism-in-ax-part-ii/ Top Picking https: //blogs. msdn. microsoft. com/axperf/2012/02/28/batch-parallelism-in-ax-part-iii/ Comparison of the three techniques https: //blogs. msdn. microsoft. com/axperf/2012/03/01/batch-parallelism-in-ax-part-iv/

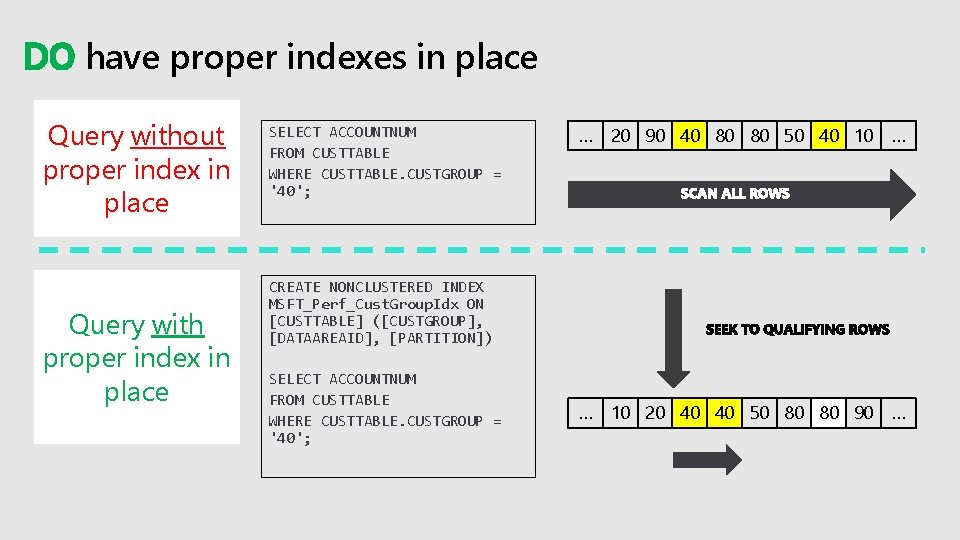

DO have proper indexes in place Query without proper index in place Query with proper index in place SELECT ACCOUNTNUM FROM CUSTTABLE WHERE CUSTTABLE. CUSTGROUP = '40'; … 20 90 40 80 80 50 40 10 … … 10 20 40 40 50 80 80 90 … CREATE NONCLUSTERED INDEX MSFT_Perf_Cust. Group. Idx ON [CUSTTABLE] ([CUSTGROUP], [DATAAREAID], [PARTITION]) SELECT ACCOUNTNUM FROM CUSTTABLE WHERE CUSTTABLE. CUSTGROUP = '40';

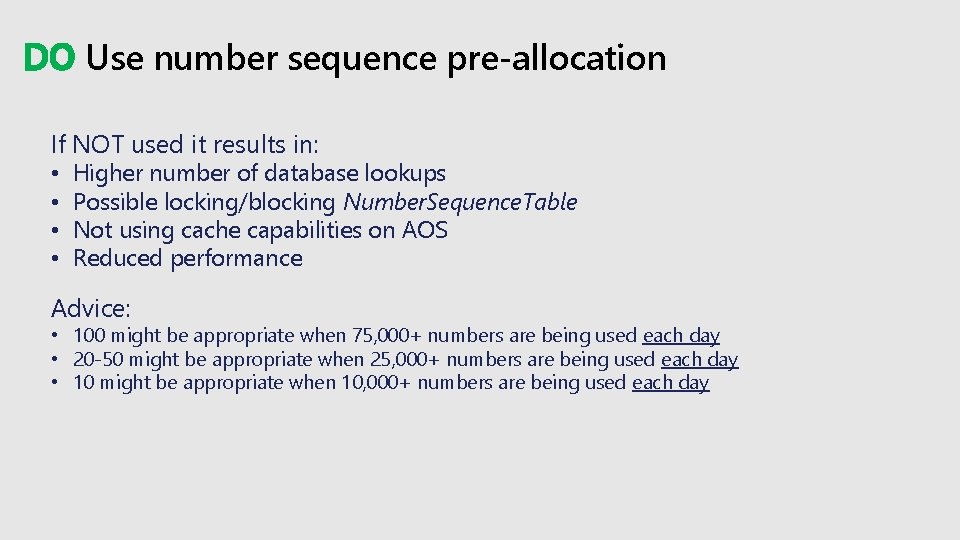

DO Use number sequence pre-allocation If NOT used it results in: • • Higher number of database lookups Possible locking/blocking Number. Sequence. Table Not using cache capabilities on AOS Reduced performance Advice: • 100 might be appropriate when 75, 000+ numbers are being used each day • 20 -50 might be appropriate when 25, 000+ numbers are being used each day • 10 might be appropriate when 10, 000+ numbers are being used each day

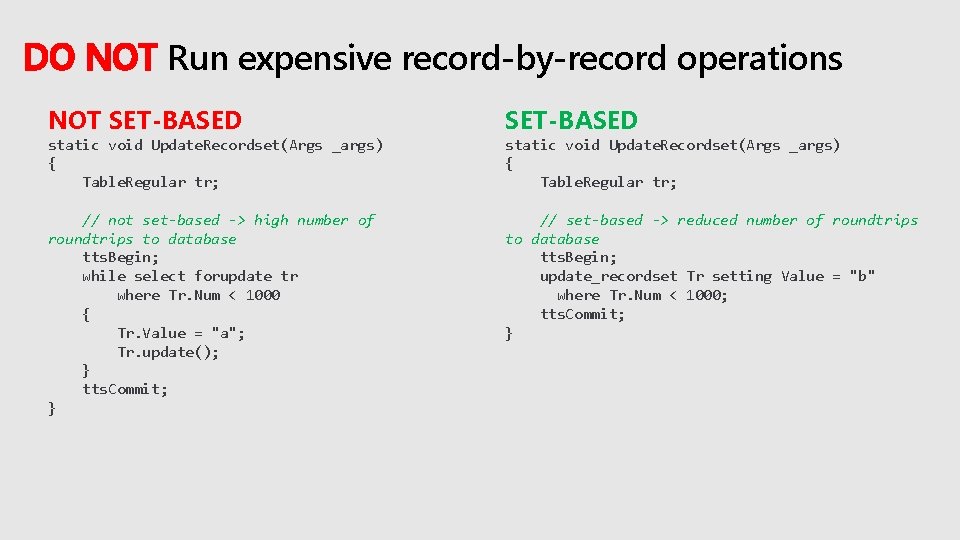

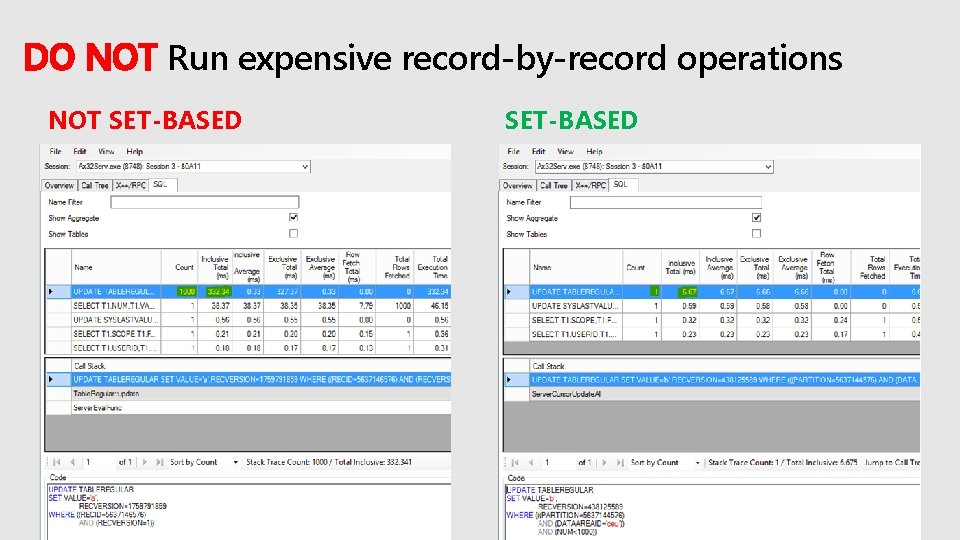

DO NOT Run expensive record-by-record operations NOT SET-BASED // not set-based -> high number of roundtrips to database tts. Begin; while select forupdate tr where Tr. Num < 1000 { Tr. Value = "a"; Tr. update(); } tts. Commit; } // set-based -> reduced number of roundtrips to database tts. Begin; update_recordset Tr setting Value = "b" where Tr. Num < 1000; tts. Commit; } static void Update. Recordset(Args _args) { Table. Regular tr;

DO NOT Run expensive record-by-record operations NOT SET-BASED

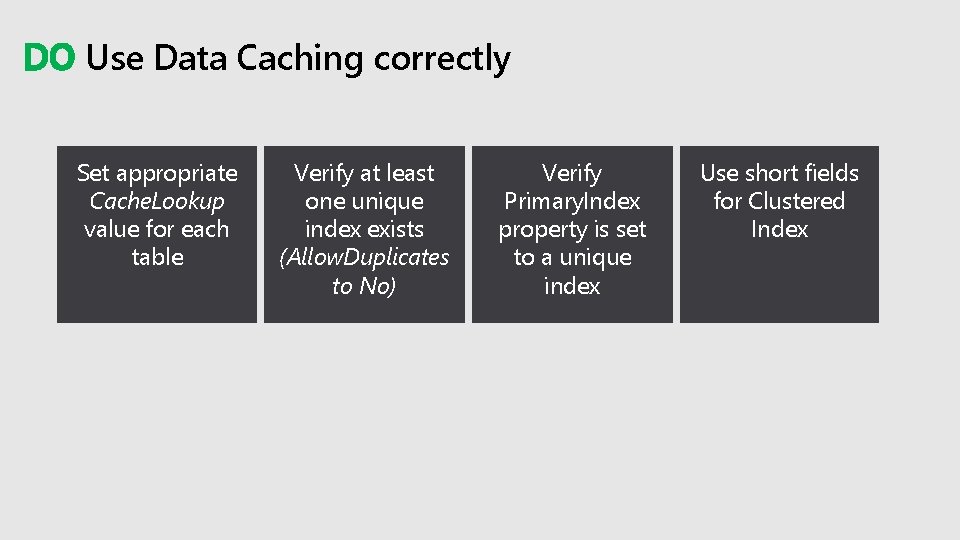

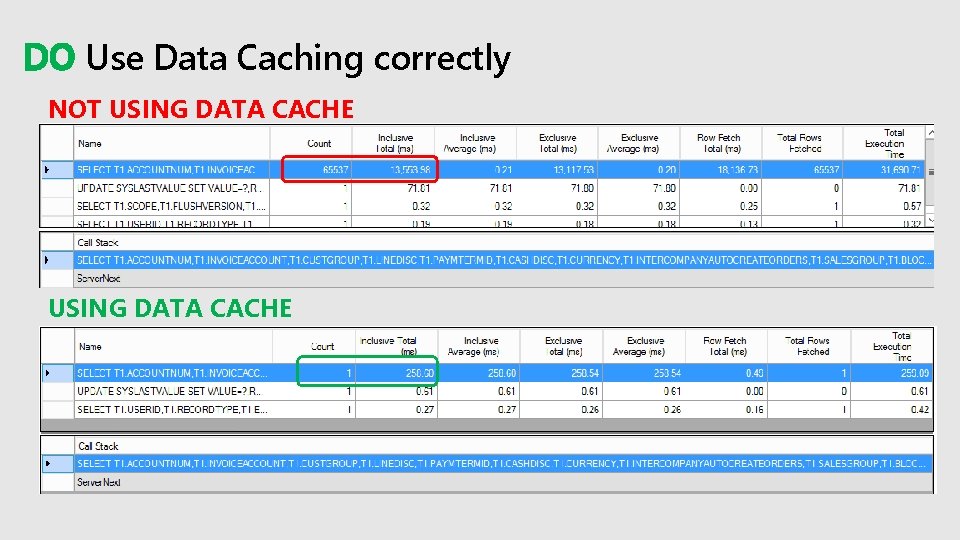

DO Use Data Caching correctly Set appropriate Cache. Lookup value for each table Verify at least one unique index exists (Allow. Duplicates to No) Verify Primary. Index property is set to a unique index Use short fields for Clustered Index

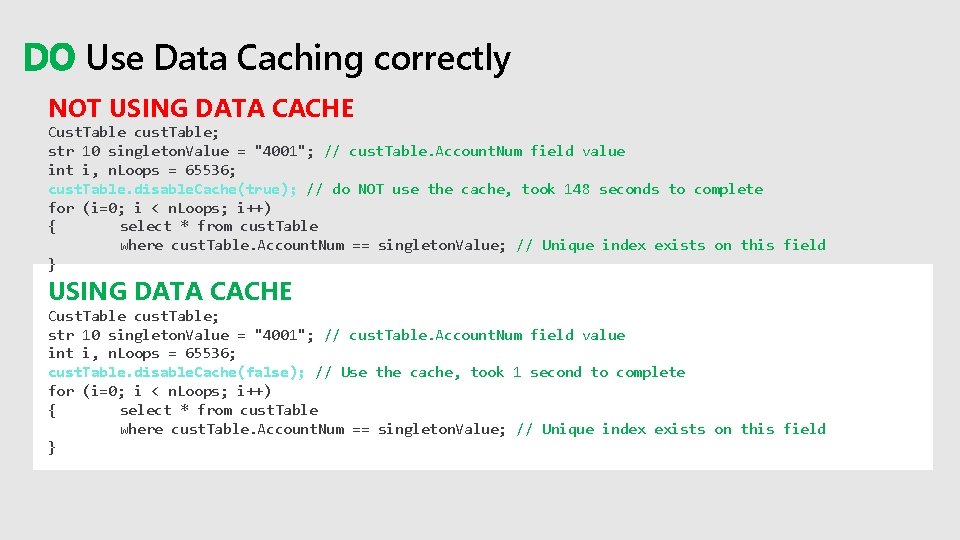

DO Use Data Caching correctly NOT USING DATA CACHE Cust. Table cust. Table; str 10 singleton. Value = "4001"; // cust. Table. Account. Num field value int i, n. Loops = 65536; cust. Table. disable. Cache(true); // do NOT use the cache, took 148 seconds to complete for (i=0; i < n. Loops; i++) { select * from cust. Table where cust. Table. Account. Num == singleton. Value; // Unique index exists on this field } USING DATA CACHE Cust. Table cust. Table; str 10 singleton. Value = "4001"; // cust. Table. Account. Num field value int i, n. Loops = 65536; cust. Table. disable. Cache(false); // Use the cache, took 1 second to complete for (i=0; i < n. Loops; i++) { select * from cust. Table where cust. Table. Account. Num == singleton. Value; // Unique index exists on this field }

DO Use Data Caching correctly NOT USING DATA CACHE

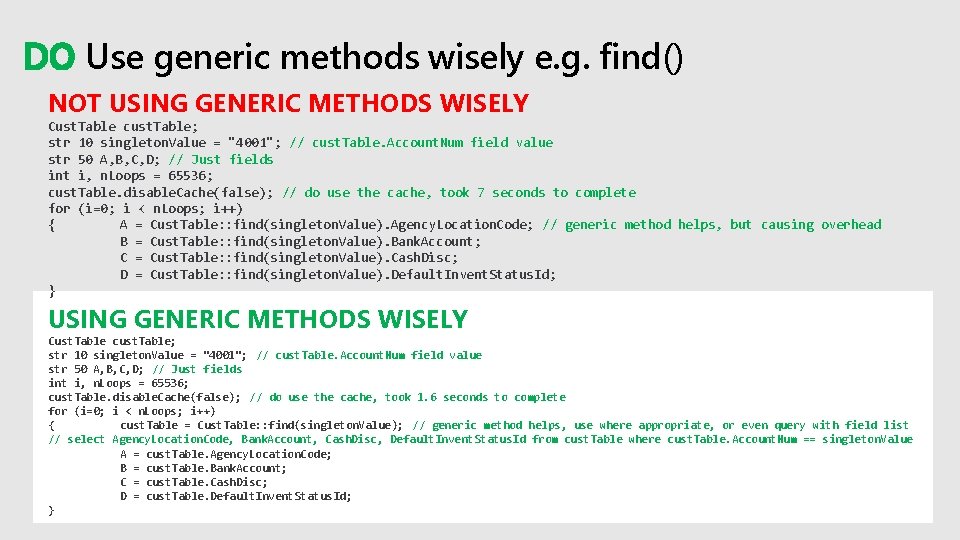

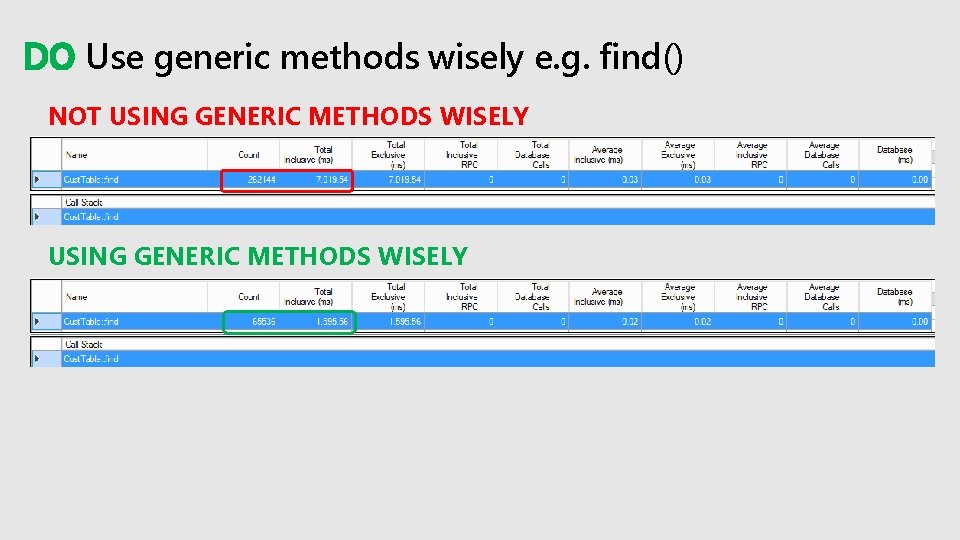

DO Use generic methods wisely e. g. find() NOT USING GENERIC METHODS WISELY Cust. Table cust. Table; str 10 singleton. Value = "4001"; // cust. Table. Account. Num field value str 50 A, B, C, D; // Just fields int i, n. Loops = 65536; cust. Table. disable. Cache(false); // do use the cache, took 7 seconds to complete for (i=0; i < n. Loops; i++) { A = Cust. Table: : find(singleton. Value). Agency. Location. Code; // generic method helps, but causing overhead B = Cust. Table: : find(singleton. Value). Bank. Account; C = Cust. Table: : find(singleton. Value). Cash. Disc; D = Cust. Table: : find(singleton. Value). Default. Invent. Status. Id; } USING GENERIC METHODS WISELY Cust. Table cust. Table; str 10 singleton. Value = "4001"; // cust. Table. Account. Num field value str 50 A, B, C, D; // Just fields int i, n. Loops = 65536; cust. Table. disable. Cache(false); // do use the cache, took 1. 6 seconds to complete for (i=0; i < n. Loops; i++) { cust. Table = Cust. Table: : find(singleton. Value); // generic method helps, use where appropriate, or even query with field list // select Agency. Location. Code, Bank. Account, Cash. Disc, Default. Invent. Status. Id from cust. Table where cust. Table. Account. Num == singleton. Value A = cust. Table. Agency. Location. Code; B = cust. Table. Bank. Account; C = cust. Table. Cash. Disc; D = cust. Table. Default. Invent. Status. Id; }

DO Use generic methods wisely e. g. find() NOT USING GENERIC METHODS WISELY

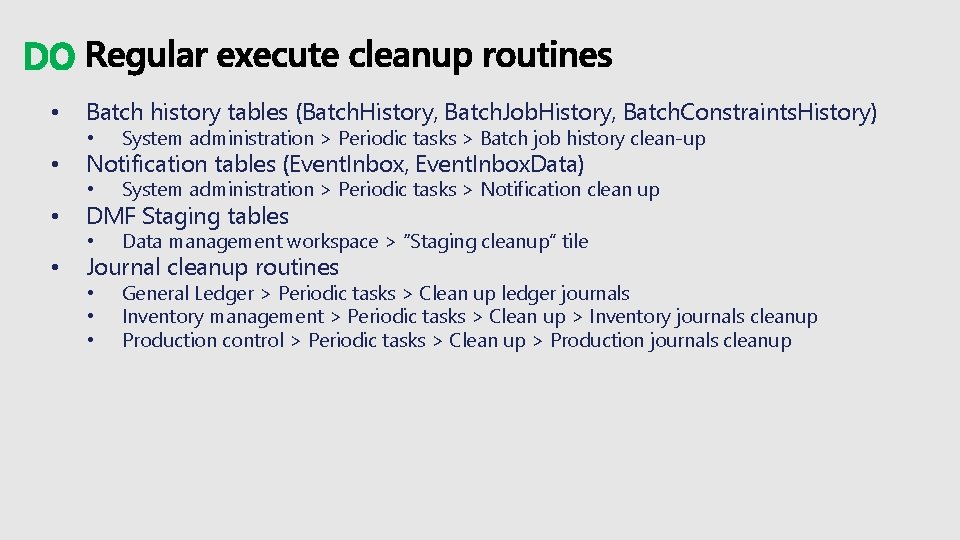

DO • • Batch history tables (Batch. History, Batch. Job. History, Batch. Constraints. History) • System administration > Periodic tasks > Batch job history clean-up • System administration > Periodic tasks > Notification clean up • Data management workspace > “Staging cleanup” tile • • • General Ledger > Periodic tasks > Clean up ledger journals Inventory management > Periodic tasks > Clean up > Inventory journals cleanup Production control > Periodic tasks > Clean up > Production journals cleanup Notification tables (Event. Inbox, Event. Inbox. Data) DMF Staging tables Journal cleanup routines

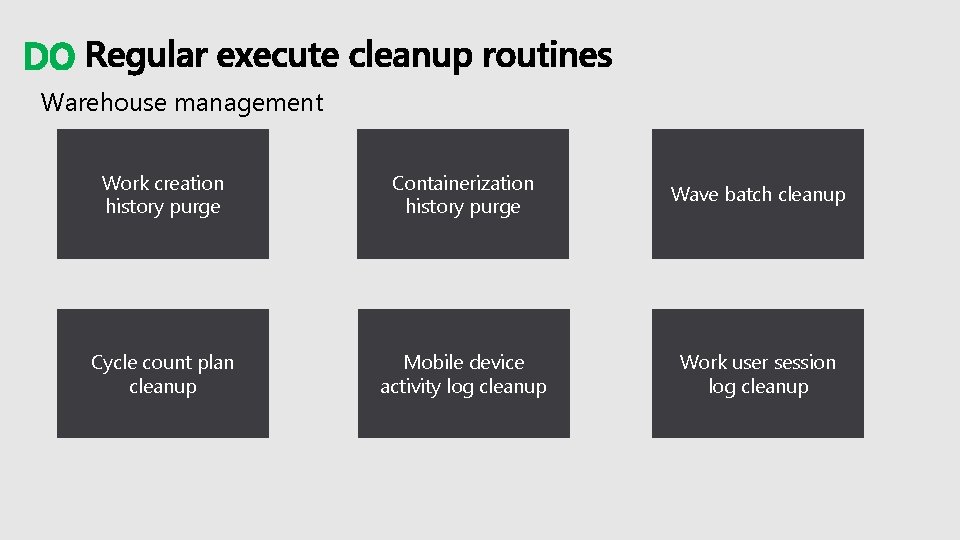

DO Warehouse management Work creation history purge Containerization history purge Wave batch cleanup Cycle count plan cleanup Mobile device activity log cleanup Work user session log cleanup

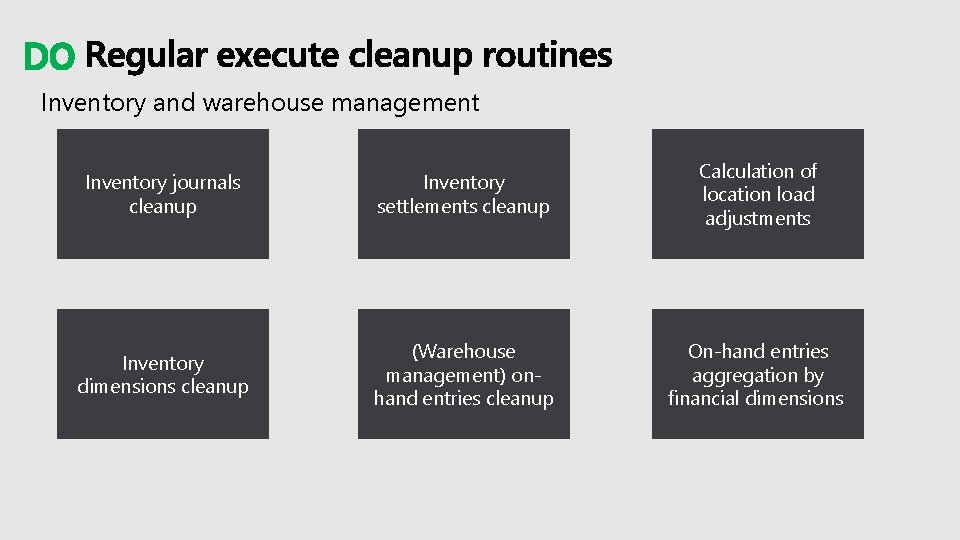

DO Inventory and warehouse management Inventory journals cleanup Inventory settlements cleanup Calculation of location load adjustments Inventory dimensions cleanup (Warehouse management) onhand entries cleanup On-hand entries aggregation by financial dimensions

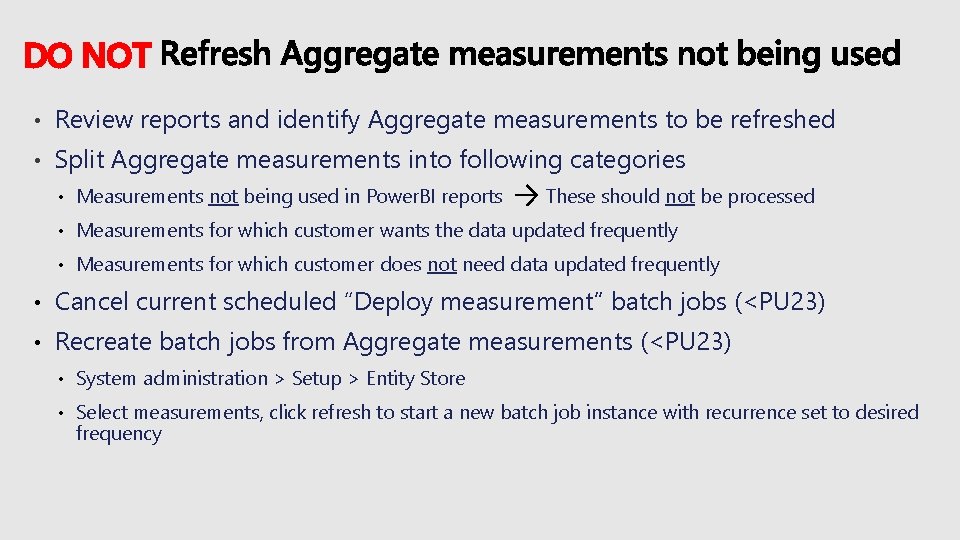

DO NOT • Review reports and identify Aggregate measurements to be refreshed • Split Aggregate measurements into following categories • Measurements not being used in Power. BI reports These should not be processed • Measurements for which customer wants the data updated frequently • Measurements for which customer does not need data updated frequently • Cancel current scheduled “Deploy measurement” batch jobs (<PU 23) • Recreate batch jobs from Aggregate measurements (<PU 23) • System administration > Setup > Entity Store • Select measurements, click refresh to start a new batch job instance with recurrence set to desired frequency

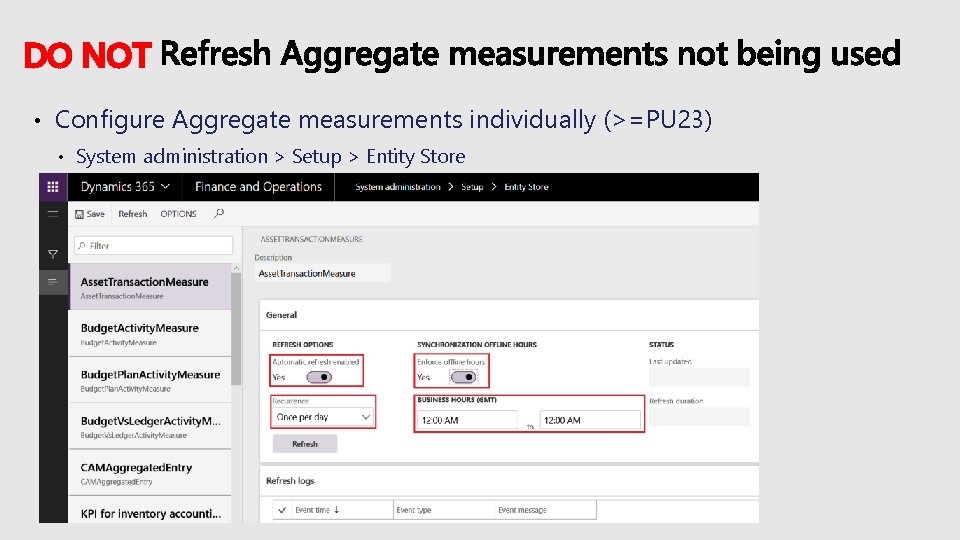

DO NOT • Configure Aggregate measurements individually (>=PU 23) • System administration > Setup > Entity Store

DO • • Hotfixes (X++ and Binary updates) delivered by Microsoft • Fix standard product bugs • Improve performance/stability • Improve customizability (e. g. new extension points) Define a periodic process to include them in releases • • Staying current on hotfixes will limit the risk of facing • • Include Binary and Critical X++ updates (visible in LCS environment detail page) Standard bugs, Crashes, Performance issues Enforced by One version

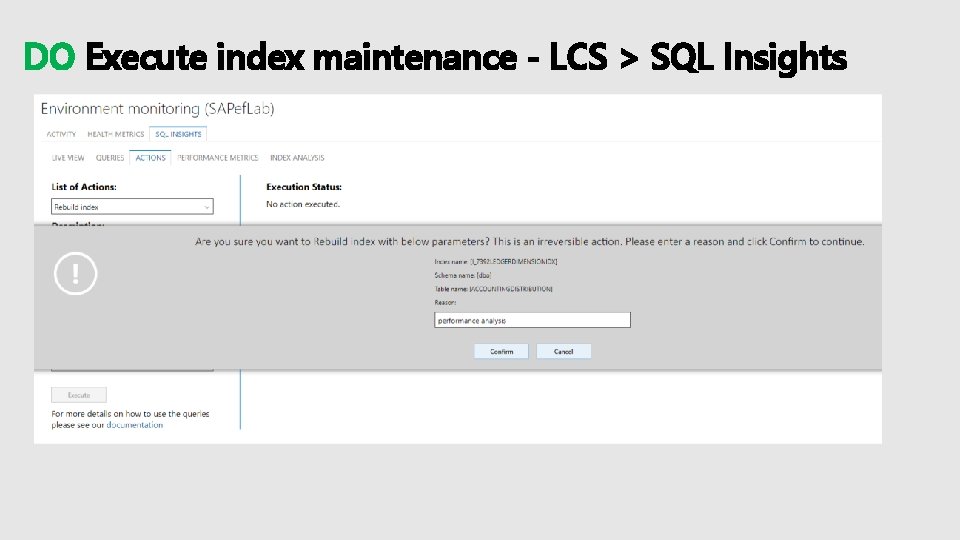

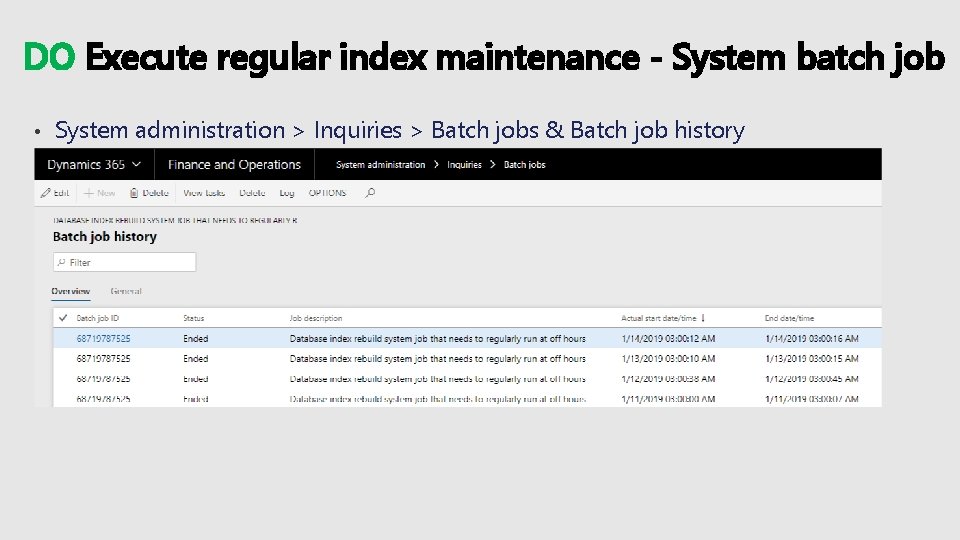

DO • Index fragmentation has not same impact as past due to improvements in storage technologies • For some index's fragmentation is still impactful • These indexes are difficult to rebuild automatically by Microsoft as it can impact critical workloads • Current options for doing index maintenance • LCS > SQL Insights (ad-hoc troubleshooting, Self-service) • System batch job (>=PU 22) • Scheduling capability based on business rhythm; index maintenance based on parameter settings

DO Execute index maintenance - LCS > SQL Insights

DO Execute regular index maintenance - System batch job • System administration > Inquiries > Batch jobs & Batch job history

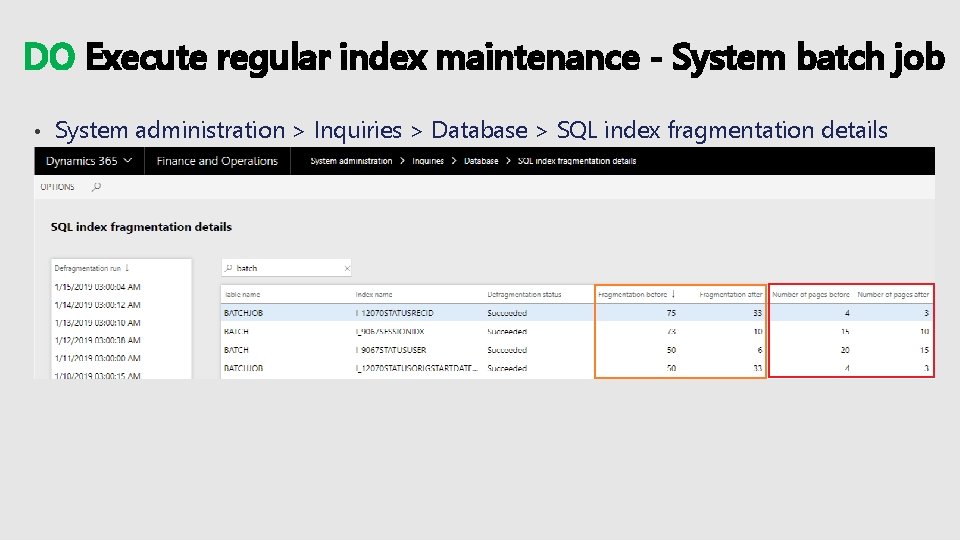

DO Execute regular index maintenance - System batch job • System administration > Inquiries > Database > SQL index fragmentation details

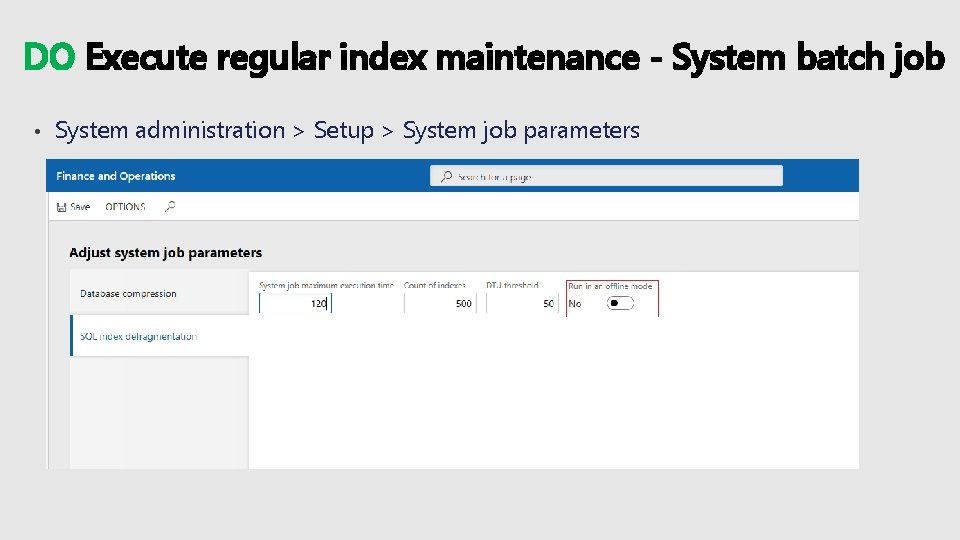

DO Execute regular index maintenance - System batch job • System administration > Setup > System job parameters

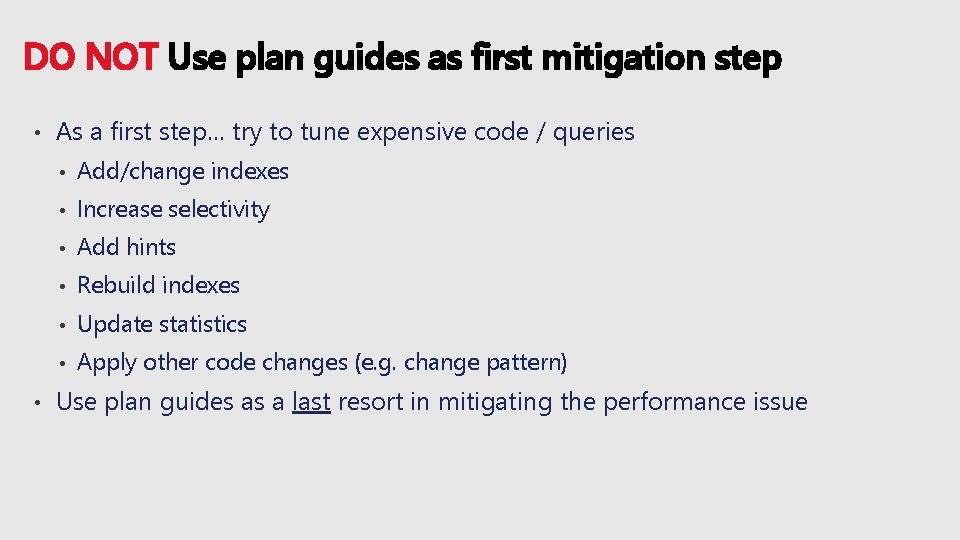

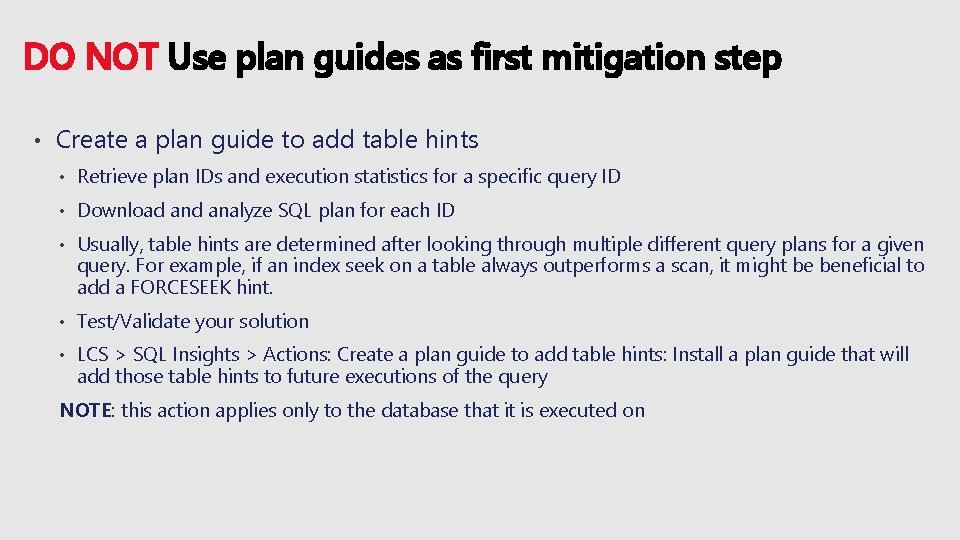

DO NOT Use plan guides as first mitigation step • • As a first step… try to tune expensive code / queries • Add/change indexes • Increase selectivity • Add hints • Rebuild indexes • Update statistics • Apply other code changes (e. g. change pattern) Use plan guides as a last resort in mitigating the performance issue

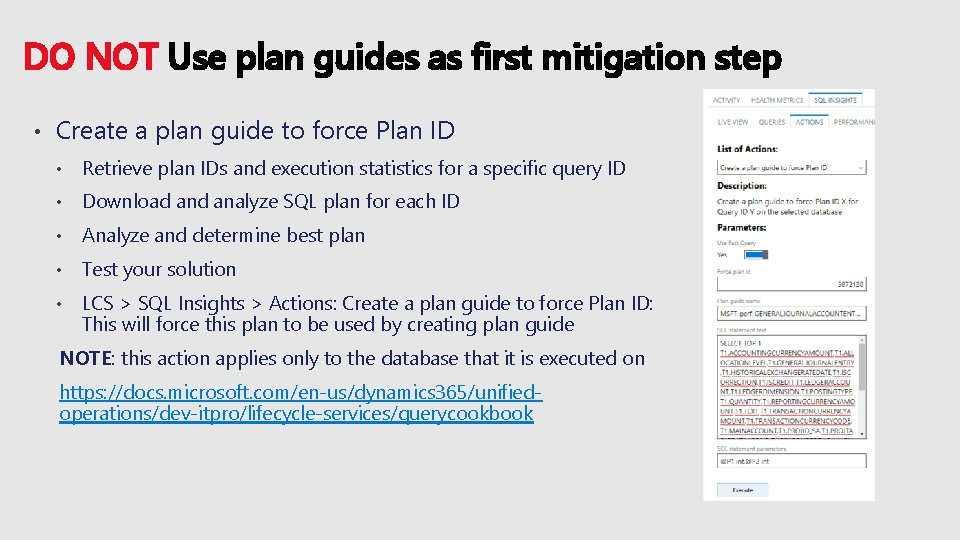

DO NOT Use plan guides as first mitigation step • Create a plan guide to force Plan ID • Retrieve plan IDs and execution statistics for a specific query ID • Download analyze SQL plan for each ID • Analyze and determine best plan • Test your solution • LCS > SQL Insights > Actions: Create a plan guide to force Plan ID: This will force this plan to be used by creating plan guide NOTE: this action applies only to the database that it is executed on https: //docs. microsoft. com/en-us/dynamics 365/unifiedoperations/dev-itpro/lifecycle-services/querycookbook

DO NOT Use plan guides as first mitigation step • Create a plan guide to add table hints • Retrieve plan IDs and execution statistics for a specific query ID • Download analyze SQL plan for each ID • Usually, table hints are determined after looking through multiple different query plans for a given query. For example, if an index seek on a table always outperforms a scan, it might be beneficial to add a FORCESEEK hint. • Test/Validate your solution • LCS > SQL Insights > Actions: Create a plan guide to add table hints: Install a plan guide that will add those table hints to future executions of the query NOTE: this action applies only to the database that it is executed on

Case study After go live customer was trying to post FA Journals of 200 K lines, which was taking over ~20 hours. After reviewing the all setup and telemetry, we suggested following changes that reduced FA journal posting time form ~20 hours to ~4 hours. • Ensure that the batch group being used has multiple AOSs assigned. • Ensure they are posting via batch. • Ensure all the appropriate number sequences are set to non-continuous with pre-allocation. • Stop using “Synchronous” batch transfer rule • Stop using one voucher for all 200 k journals lines. If same voucher is shared for all journal lines, then batch bundling doesn’t work. Journal posting happens in single threaded. • Specify a value for Lines limit at General ledger > Journal setup > Journal names > Lines limit, if the journals has huge line numbers then suggestion is to split in several small journals to speed up posting.

Tools for Performance and Troubleshooting

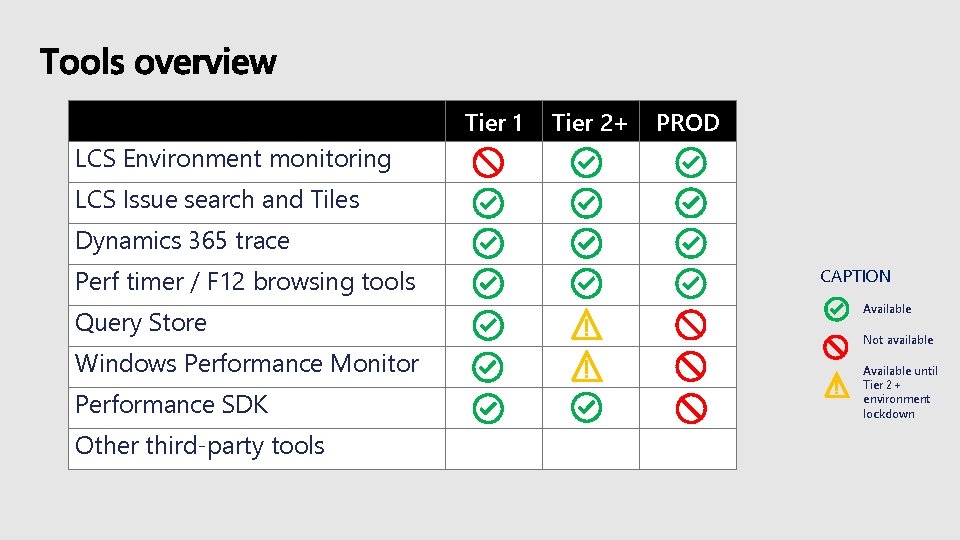

Tier 1 Tier 2+ PROD LCS Environment monitoring LCS Issue search and Tiles Dynamics 365 trace Perf timer / F 12 browsing tools Query Store Windows Performance Monitor Performance SDK Other third-party tools CAPTION Available Not available Available until Tier 2+ environment lockdown

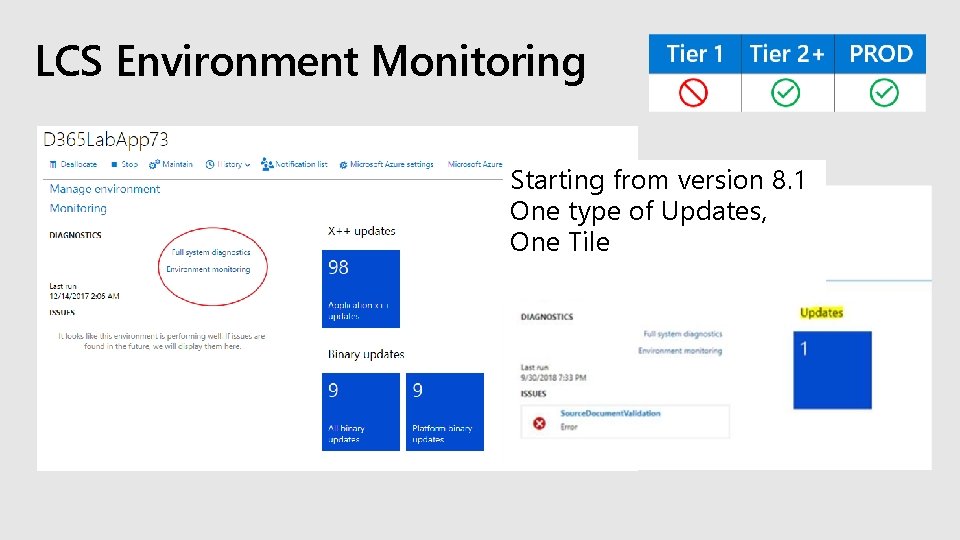

LCS Environment Monitoring Starting from version 8. 1 One type of Updates, One Tile

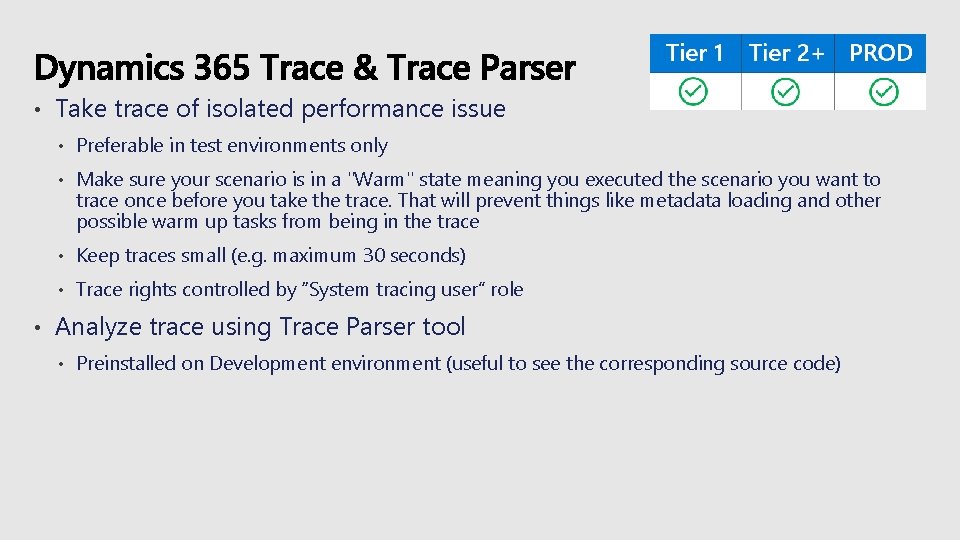

• • Take trace of isolated performance issue • Preferable in test environments only • Make sure your scenario is in a "Warm" state meaning you executed the scenario you want to trace once before you take the trace. That will prevent things like metadata loading and other possible warm up tasks from being in the trace • Keep traces small (e. g. maximum 30 seconds) • Trace rights controlled by “System tracing user” role Analyze trace using Trace Parser tool • Preinstalled on Development environment (useful to see the corresponding source code)

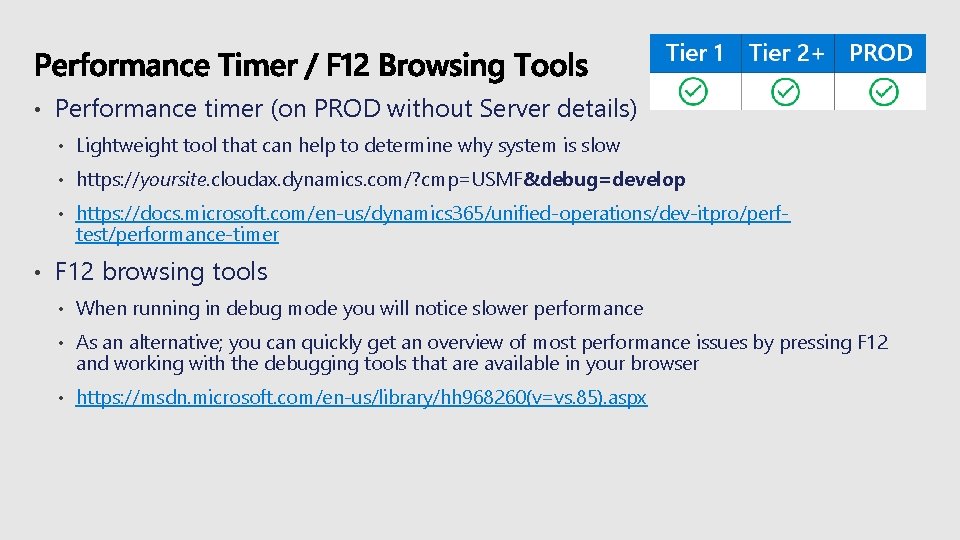

• • Performance timer (on PROD without Server details) • Lightweight tool that can help to determine why system is slow • https: //yoursite. cloudax. dynamics. com/? cmp=USMF&debug=develop • https: //docs. microsoft. com/en-us/dynamics 365/unified-operations/dev-itpro/perftest/performance-timer F 12 browsing tools • When running in debug mode you will notice slower performance • As an alternative; you can quickly get an overview of most performance issues by pressing F 12 and working with the debugging tools that are available in your browser • https: //msdn. microsoft. com/en-us/library/hh 968260(v=vs. 85). aspx

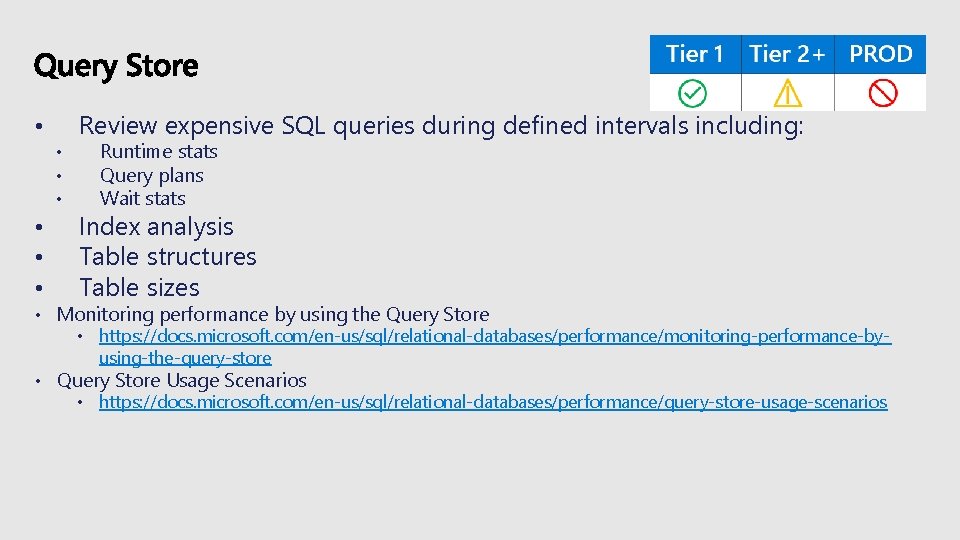

Review expensive SQL queries during defined intervals including: • • Runtime stats Query plans Wait stats Index analysis Table structures Table sizes • Monitoring performance by using the Query Store • https: //docs. microsoft. com/en-us/sql/relational-databases/performance/monitoring-performance-byusing-the-query-store • Query Store Usage Scenarios • https: //docs. microsoft. com/en-us/sql/relational-databases/performance/query-store-usage-scenarios

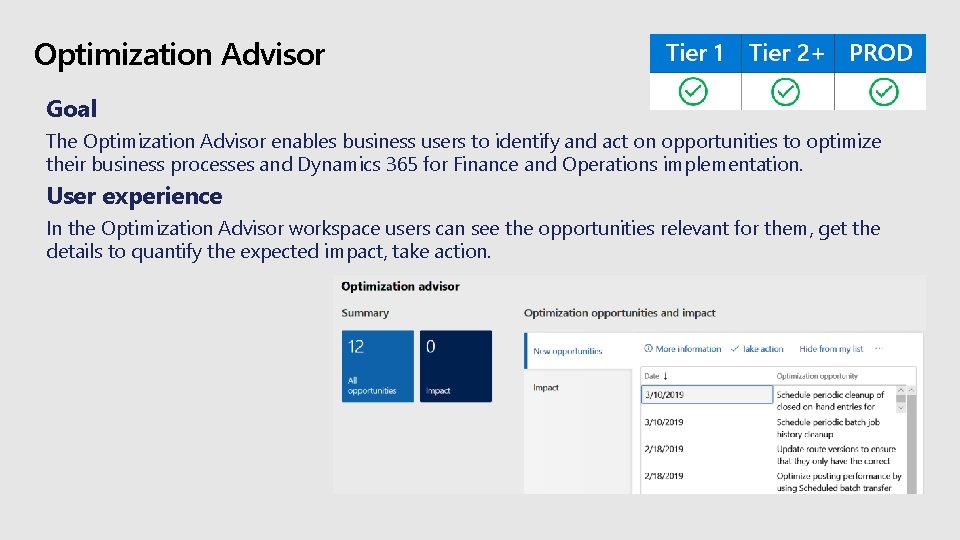

Optimization Advisor Goal The Optimization Advisor enables business users to identify and act on opportunities to optimize their business processes and Dynamics 365 for Finance and Operations implementation. User experience In the Optimization Advisor workspace users can see the opportunities relevant for them, get the details to quantify the expected impact, take action.

Q&A

Evaluate this session https: //aka. ms/MBASeventapp

- Slides: 59