
Part III Hierarchical Bayesian Models

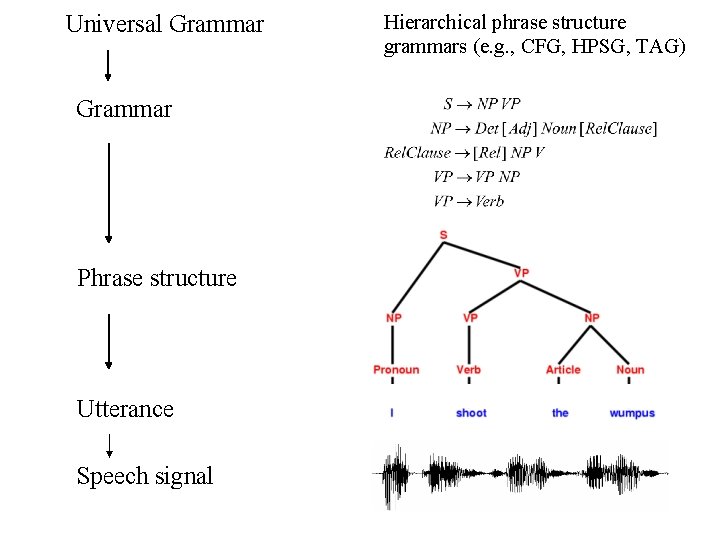

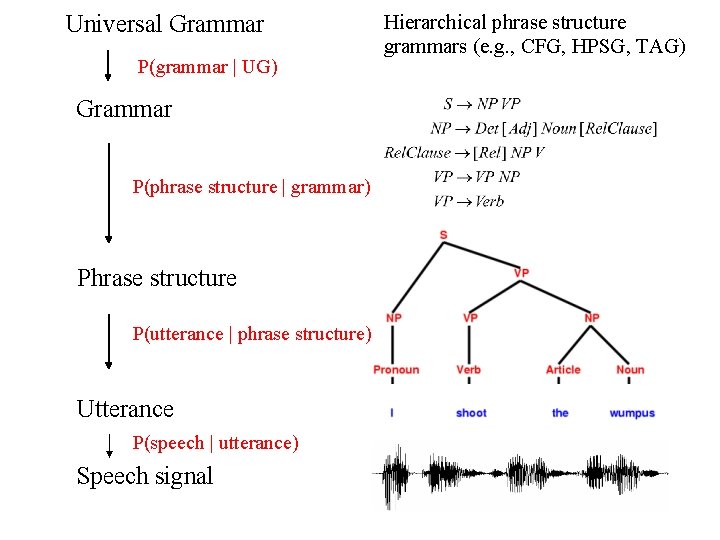

Universal Grammar → Phrase structure → Utterance → Speech signal
Hierarchical phrase structure grammars (e.g., CFG, HPSG, TAG)

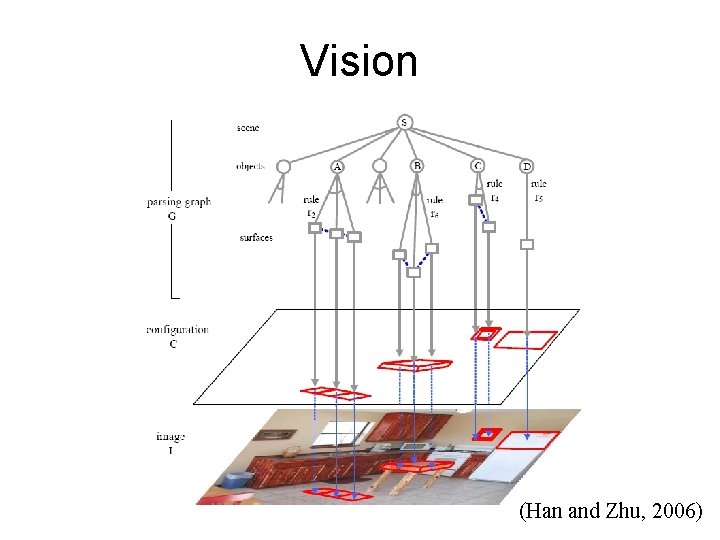

Vision (Han and Zhu, 2006)

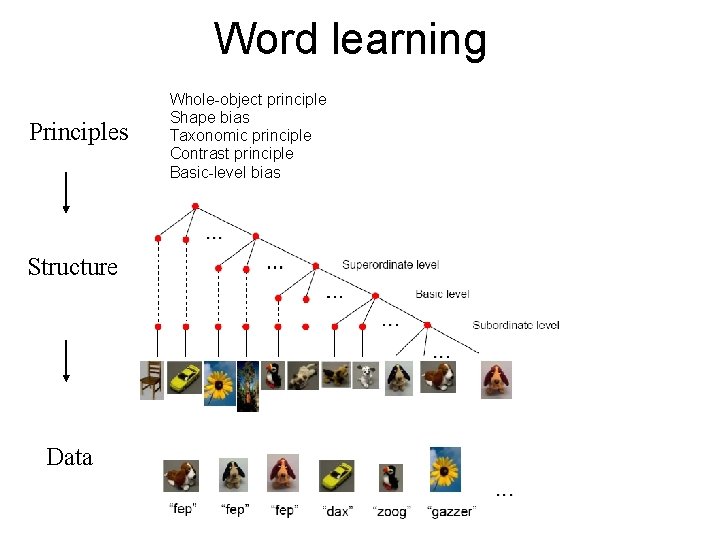

Word learning
Principles → Structure → Data
Principles: whole-object principle, shape bias, taxonomic principle, contrast principle, basic-level bias


Hierarchical Bayesian models
• Can represent and reason about knowledge at multiple levels of abstraction.
• Have been used by statisticians for many years.
• Have been applied to many cognitive problems:
  – causal reasoning (Mansinghka et al, 06)
  – language (Chater and Manning, 06)
  – vision (Fei-Fei, Fergus, Perona, 03)
  – word learning (Kemp, Perfors, Tenenbaum, 06)
  – decision making (Lee, 06)

Outline • A high-level view of HBMs • A case study – Semantic knowledge

Universal Grammar
  ↓ P(grammar | UG)
Grammar: hierarchical phrase structure grammars (e.g., CFG, HPSG, TAG)
  ↓ P(phrase structure | grammar)
Phrase structure
  ↓ P(utterance | phrase structure)
Utterance
  ↓ P(speech | utterance)
Speech signal

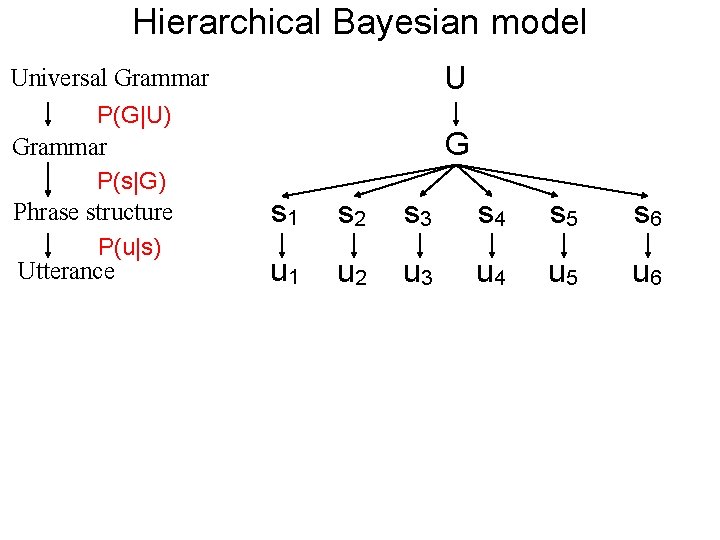

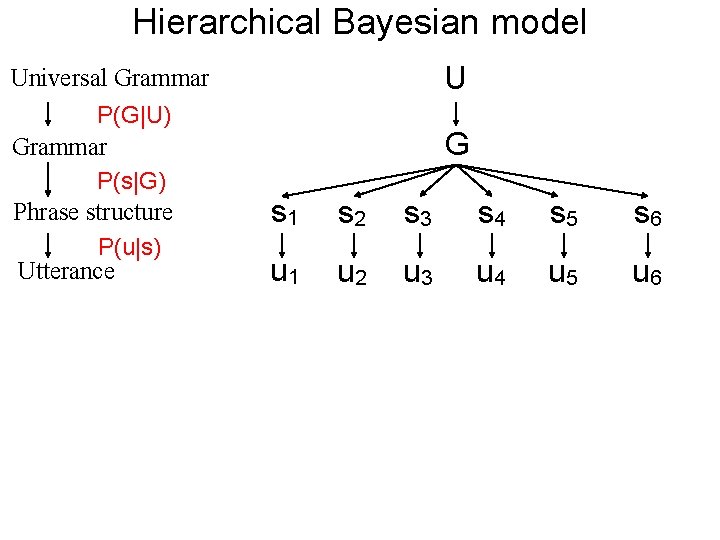


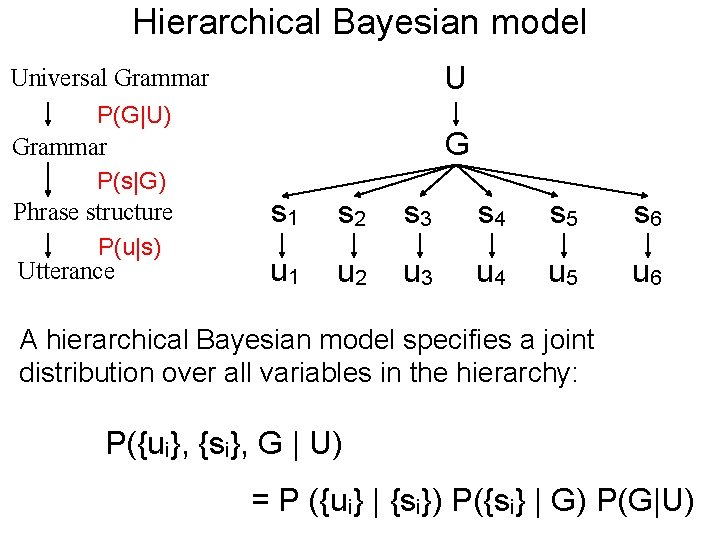

Hierarchical Bayesian model
Universal Grammar (U) → Grammar (G), via P(G|U)
Grammar (G) → Phrase structures (s1, …, s6), via P(s|G)
Phrase structures → Utterances (u1, …, u6), via P(u|s)

A hierarchical Bayesian model specifies a joint distribution over all variables in the hierarchy:
P({ui}, {si}, G | U) = P({ui} | {si}) P({si} | G) P(G | U)
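The diagram suggests, although the slide does not state it, that each phrase structure is drawn independently from the grammar and that each utterance depends only on its own phrase structure. Under that assumption, with n utterances, the joint distribution factorizes further:

```latex
P(\{u_i\}, \{s_i\}, G \mid U)
  = P(G \mid U) \prod_{i=1}^{n} P(s_i \mid G)\, P(u_i \mid s_i)
```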

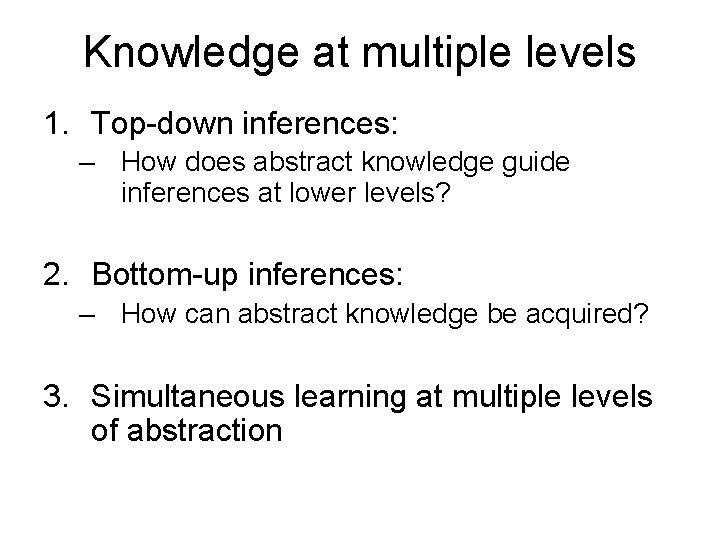

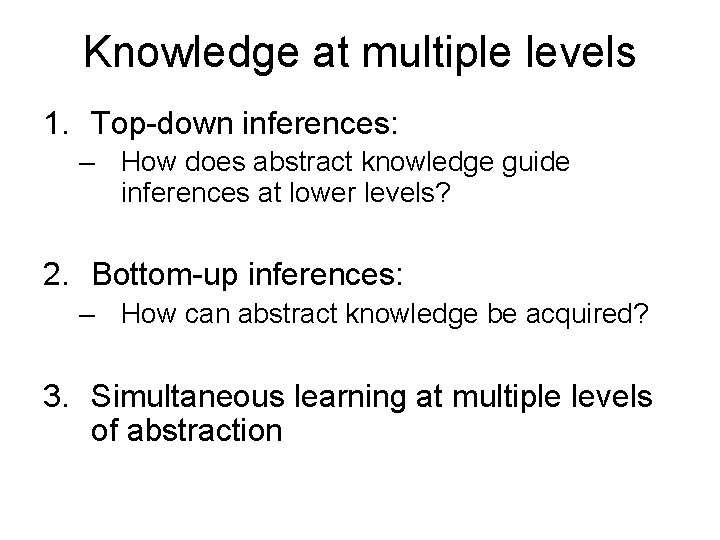

Knowledge at multiple levels
1. Top-down inferences: How does abstract knowledge guide inferences at lower levels?
2. Bottom-up inferences: How can abstract knowledge be acquired?
3. Simultaneous learning at multiple levels of abstraction

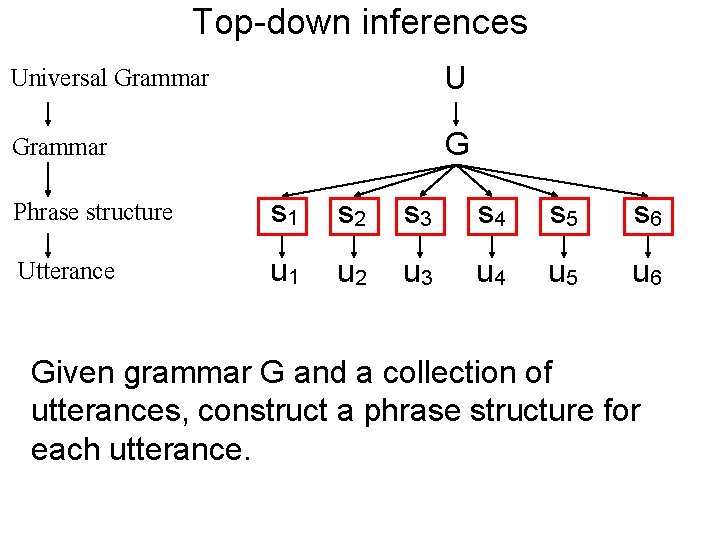

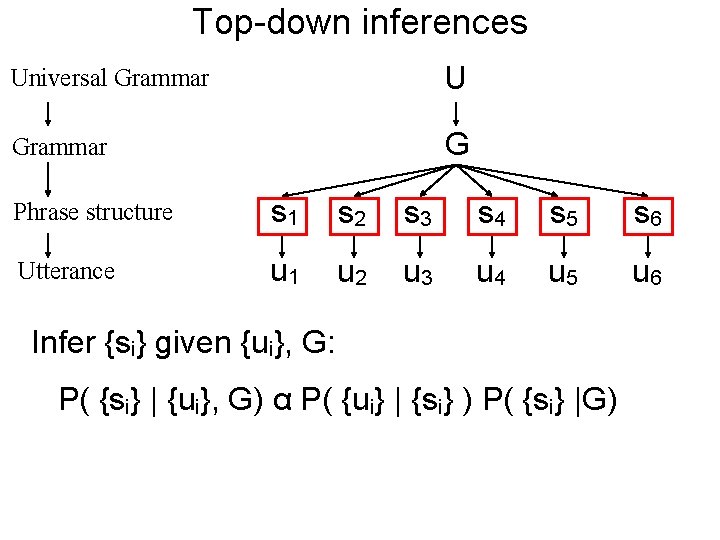

Top-down inferences
Levels: Universal Grammar (U) → Grammar (G) → Phrase structures (s1, …, s6) → Utterances (u1, …, u6)
Given grammar G and a collection of utterances, construct a phrase structure for each utterance.
Infer {si} given {ui}, G:
P({si} | {ui}, G) ∝ P({ui} | {si}) P({si} | G)

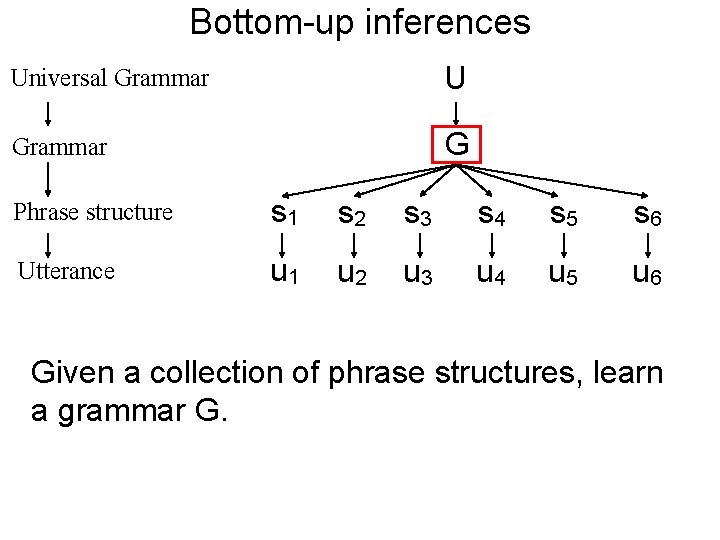

Bottom-up inferences Universal Grammar U Grammar G Phrase structure s 1 s 2 s 3 s 4 s 5 s 6 Utterance u 1 u 2 u 3 u 4 u 5 u 6 Given a collection of phrase structures, learn a grammar G.

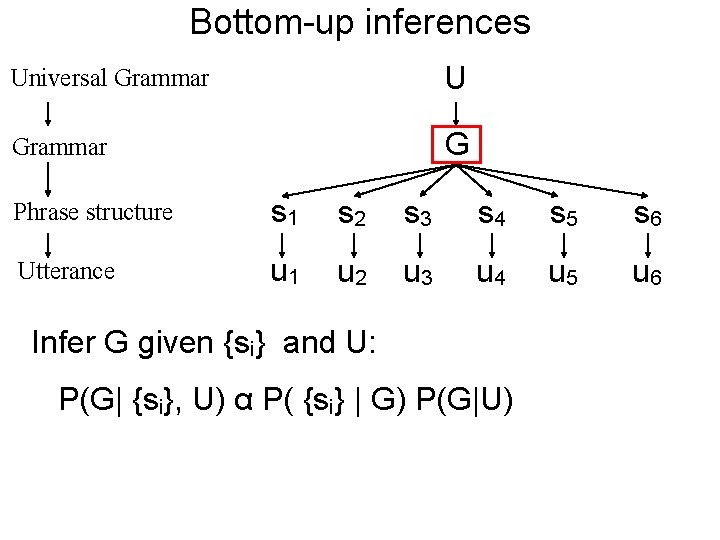

Bottom-up inferences Universal Grammar U Grammar G Phrase structure s 1 s 2 s 3 s 4 s 5 s 6 Utterance u 1 u 2 u 3 u 4 u 5 u 6 Infer G given {si} and U: P(G| {si}, U) α P( {si} | G) P(G|U)

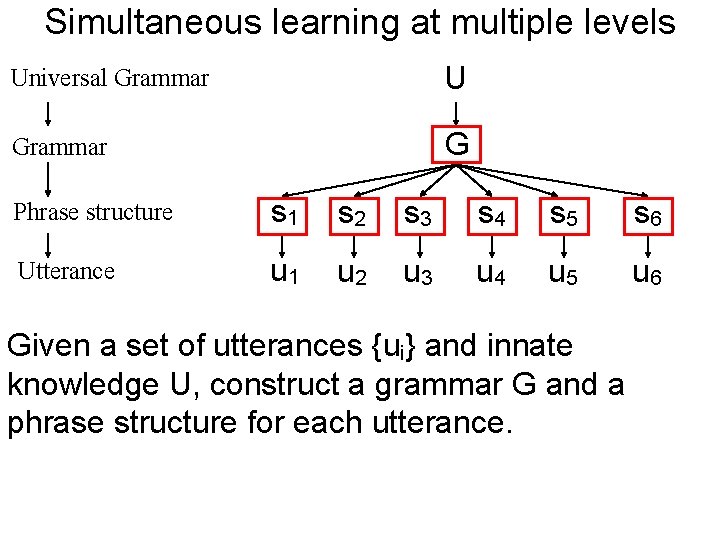

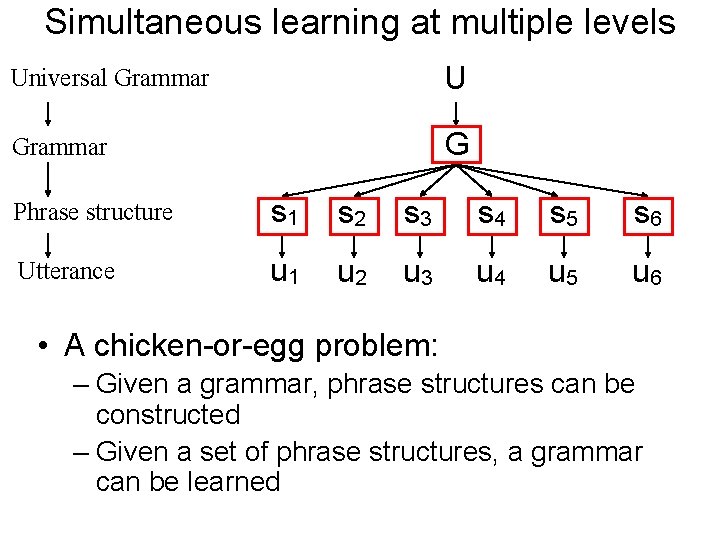

Simultaneous learning at multiple levels Universal Grammar U Grammar G Phrase structure s 1 s 2 s 3 s 4 s 5 s 6 Utterance u 1 u 2 u 3 u 4 u 5 u 6 Given a set of utterances {ui} and innate knowledge U, construct a grammar G and a phrase structure for each utterance.

Simultaneous learning at multiple levels Universal Grammar U Grammar G Phrase structure s 1 s 2 s 3 s 4 s 5 s 6 Utterance u 1 u 2 u 3 u 4 u 5 u 6 • A chicken-or-egg problem: – Given a grammar, phrase structures can be constructed – Given a set of phrase structures, a grammar can be learned

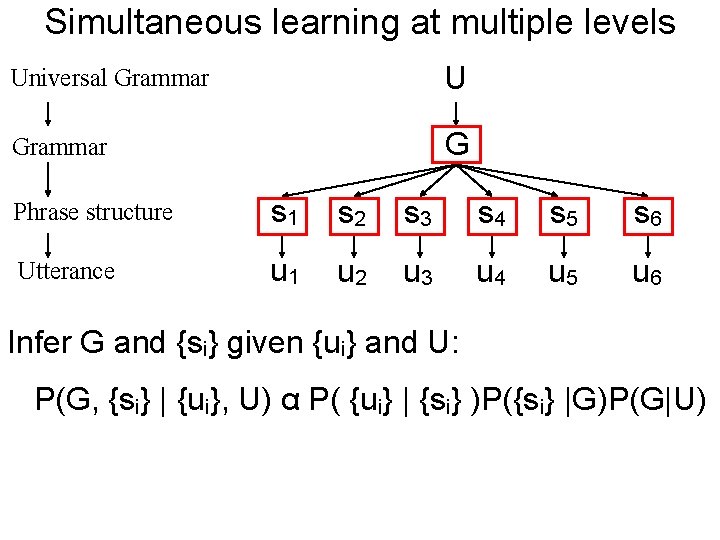

Simultaneous learning at multiple levels Universal Grammar U Grammar G Phrase structure s 1 s 2 s 3 s 4 s 5 s 6 Utterance u 1 u 2 u 3 u 4 u 5 u 6 Infer G and {si} given {ui} and U: P(G, {si} | {ui}, U) α P( {ui} | {si} )P({si} |G)P(G|U)
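The three inference patterns (top-down, bottom-up, simultaneous) can be tried out on a toy discrete model by brute-force enumeration. This is only a sketch: the two grammars, two phrase-structure types, two utterance types and every probability below are made up for illustration, and the code assumes the pairs (si, ui) are independent given G.

```python
from itertools import product

# All numbers are hypothetical.
P_G = {"G1": 0.5, "G2": 0.5}                       # P(G | U)
P_S = {"G1": {"A": 0.9, "B": 0.1},                 # P(s | G)
       "G2": {"A": 0.2, "B": 0.8}}
P_U = {"A": {"x": 0.7, "y": 0.3},                  # P(u | s)
       "B": {"x": 0.1, "y": 0.9}}


def joint(us, ss, g):
    """P({u_i}, {s_i}, G | U), assuming independence across i given G."""
    p = P_G[g]
    for u, s in zip(us, ss):
        p *= P_S[g][s] * P_U[s][u]
    return p


def normalise(scores):
    total = sum(scores.values())
    return {k: v / total for k, v in scores.items()}


def top_down(us, g):
    """P({s_i} | {u_i}, G): parse utterances with a known grammar."""
    return normalise({ss: joint(us, ss, g)
                      for ss in product("AB", repeat=len(us))})


def bottom_up(ss):
    """P(G | {s_i}, U): learn a grammar from observed phrase structures."""
    scores = {}
    for g in P_G:
        p = P_G[g]
        for s in ss:
            p *= P_S[g][s]
        scores[g] = p
    return normalise(scores)


def simultaneous(us):
    """P(G, {s_i} | {u_i}, U): infer the grammar and the parses together."""
    return normalise({(g, ss): joint(us, ss, g)
                      for g in P_G
                      for ss in product("AB", repeat=len(us))})


utterances = ("y", "y", "x")
posterior = simultaneous(utterances)
best_g, best_ss = max(posterior, key=posterior.get)
```

Enumeration is only feasible for tiny spaces like this one; the point is that all three inferences are different normalisations of the same joint distribution.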




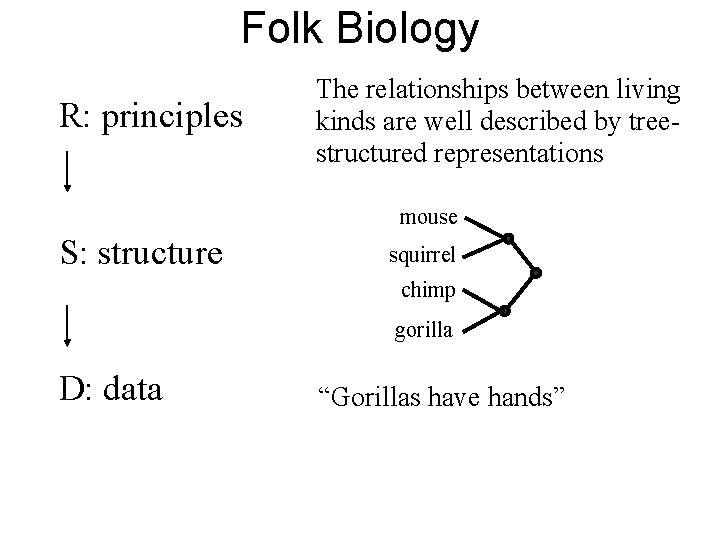

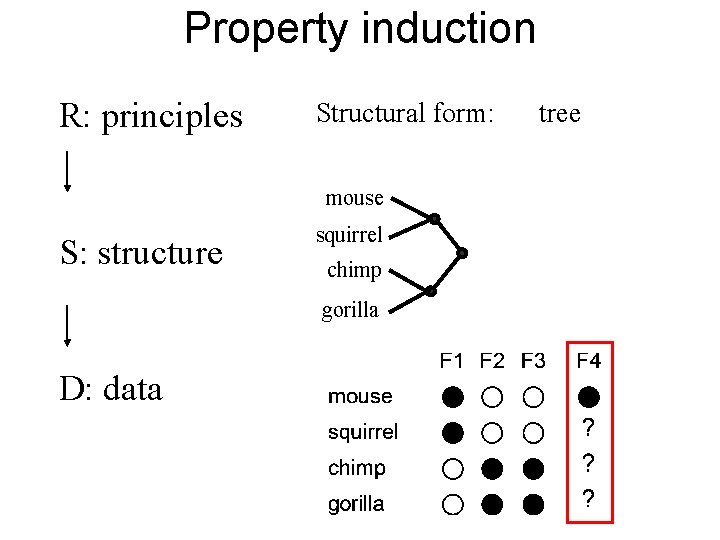

Folk Biology
• R: principles: The relationships between living kinds are well described by tree-structured representations
• S: structure: a tree over mouse, squirrel, chimp, gorilla
• D: data: “Gorillas have hands”

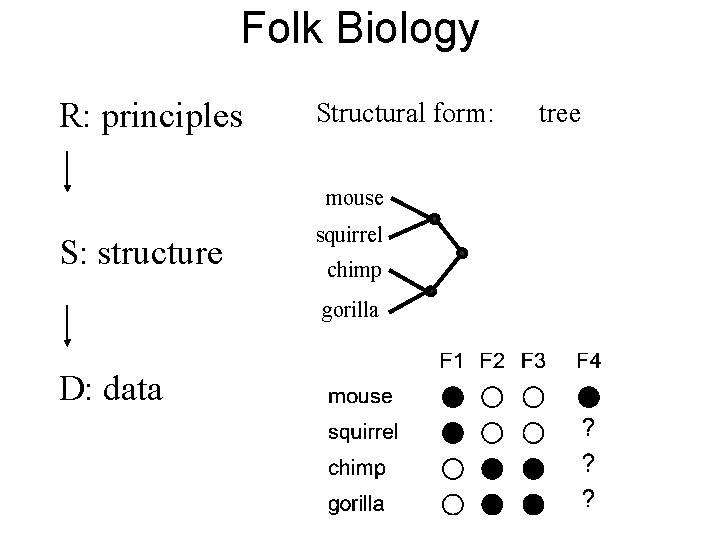

Folk Biology
• R: principles
  – Structural form: tree
• S: structure (mouse, squirrel, chimp, gorilla)
• D: data

Outline
• A high-level view of HBMs
• A case study: Semantic knowledge
  – Property induction
  – Learning structured representations
  – Learning the abstract organizing principles of a domain

Property induction
• R: principles
  – Structural form: tree
• S: structure (mouse, squirrel, chimp, gorilla)
• D: data

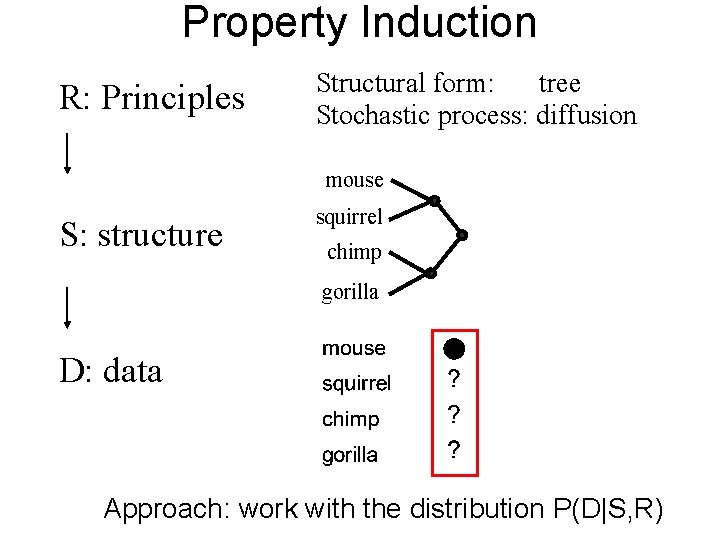

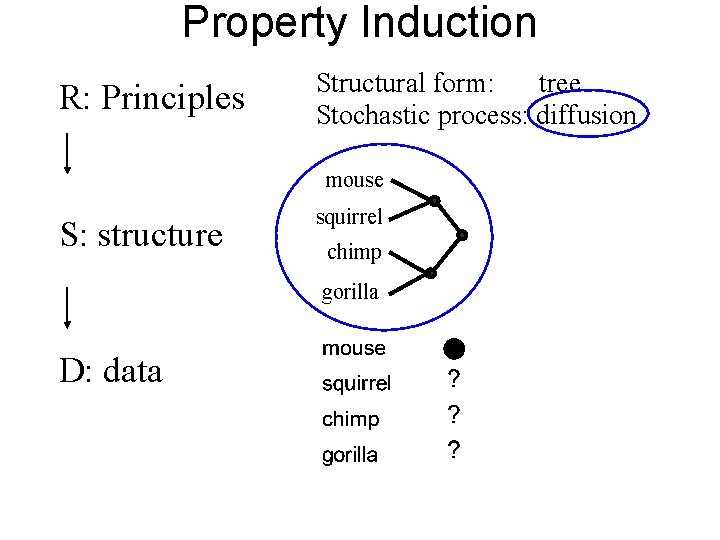

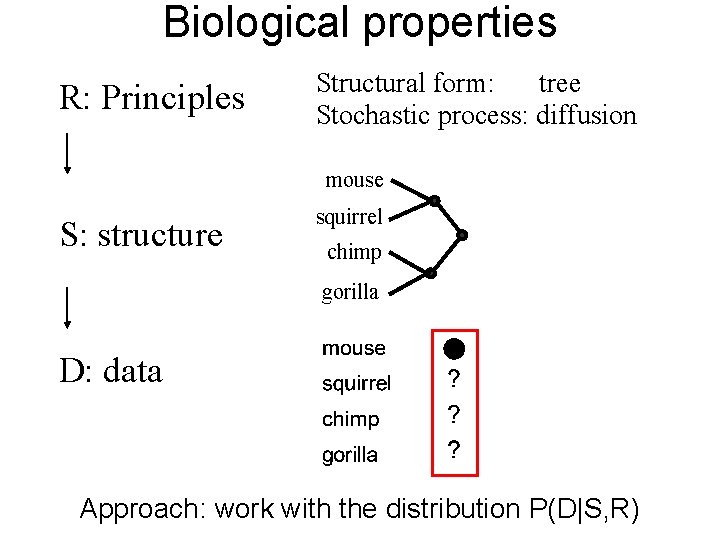

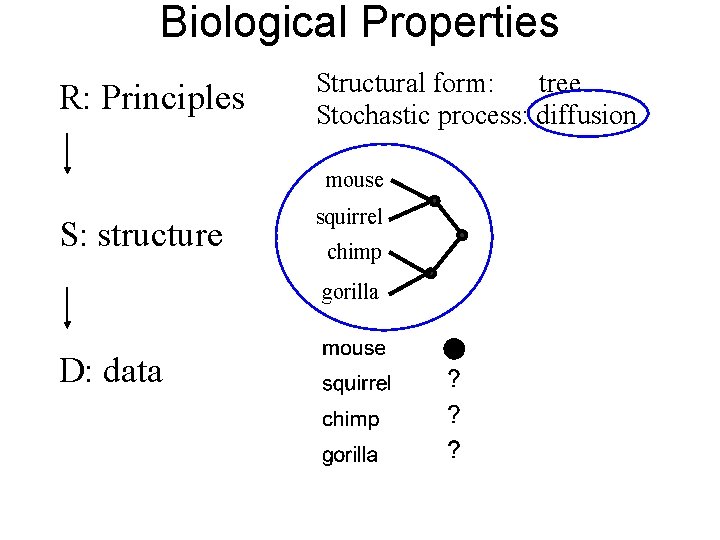

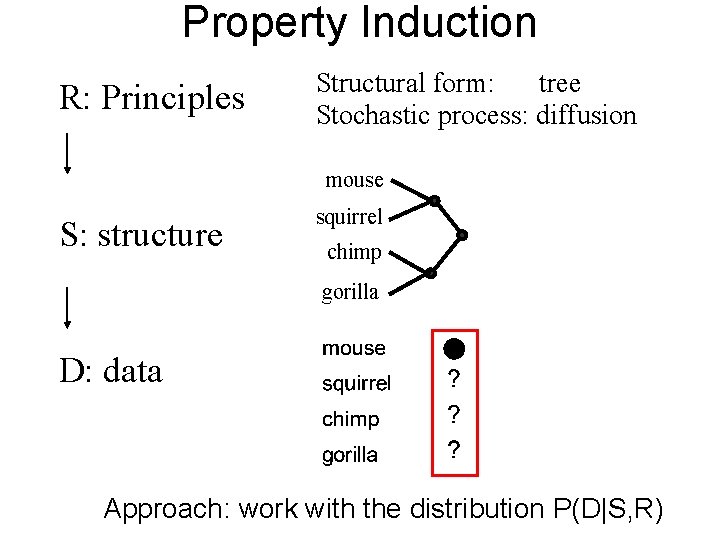

Property Induction
• R: principles
  – Structural form: tree
  – Stochastic process: diffusion
• S: structure (mouse, squirrel, chimp, gorilla)
• D: data
Approach: work with the distribution P(D|S, R)

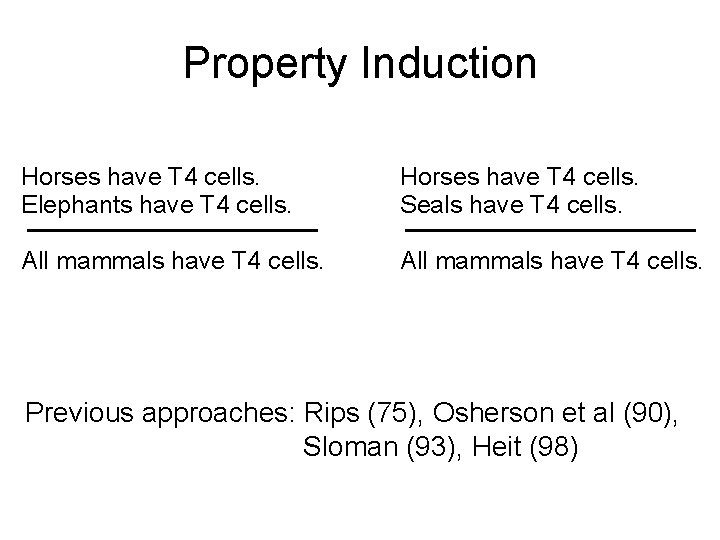

Property Induction
Horses have T4 cells. Elephants have T4 cells. Horses have T4 cells. Seals have T4 cells. All mammals have T4 cells.
Previous approaches: Rips (75), Osherson et al (90), Sloman (93), Heit (98)

Bayesian Property Induction Hypotheses


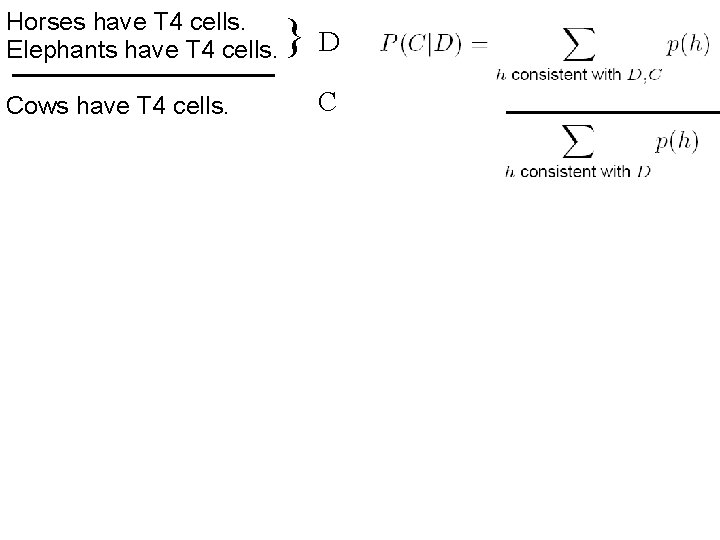

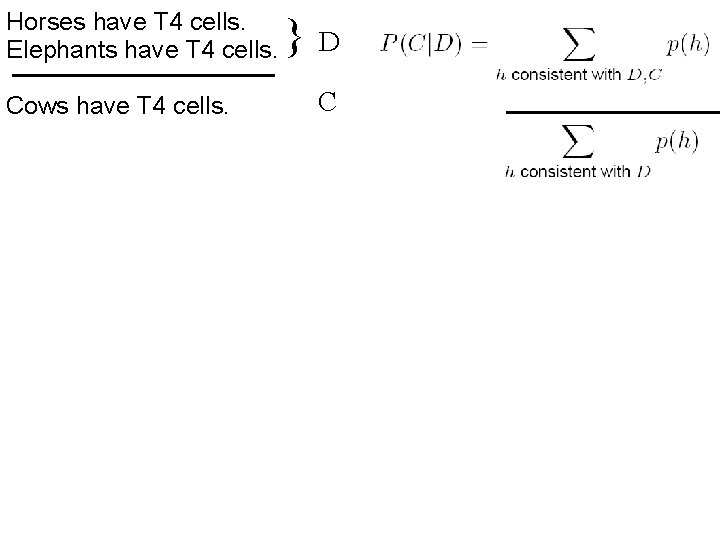

Premises (D): Horses have T4 cells. Elephants have T4 cells.
Conclusion (C): Cows have T4 cells.

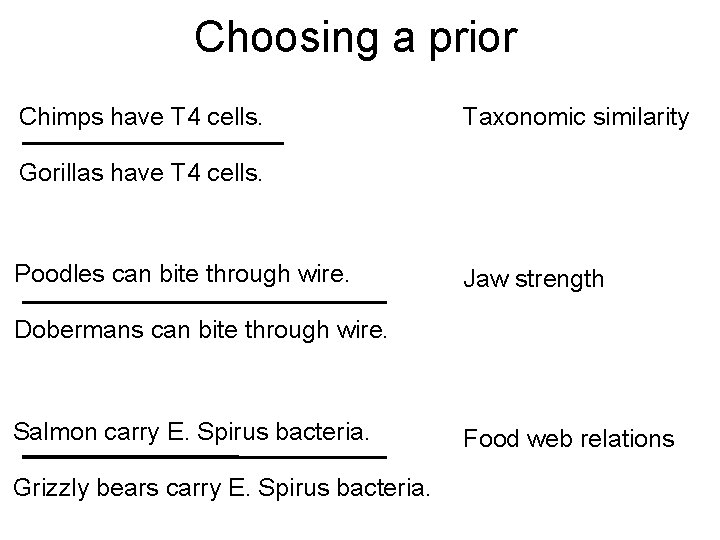

Choosing a prior
• Chimps have T4 cells. → Gorillas have T4 cells. (Taxonomic similarity)
• Poodles can bite through wire. → Dobermans can bite through wire. (Jaw strength)
• Salmon carry E. Spirus bacteria. → Grizzly bears carry E. Spirus bacteria. (Food web relations)

Bayesian Property Induction
• A challenge:
  – We have to specify the prior, which typically includes many numbers
• An opportunity:
  – The prior can capture knowledge about the problem.
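A minimal sketch of Bayesian property induction by hypothesis averaging, to make the role of the prior concrete. It assumes a simple consistency likelihood (a hypothesis gets likelihood 1 if it contains every premise species and 0 otherwise); that likelihood, the four-species domain and the function names are illustrative assumptions, not taken from the slides.

```python
from itertools import combinations

SPECIES = ["horse", "elephant", "cow", "seal"]


def all_hypotheses(species):
    """Every non-empty subset of species is a candidate extension of the property."""
    return [frozenset(c)
            for r in range(1, len(species) + 1)
            for c in combinations(species, r)]


def generalisation(prior, premises, conclusion):
    """P(conclusion species has the property | premise species have it).

    With the assumed consistency likelihood, the posterior over hypotheses
    is the prior renormalised over hypotheses that contain all premises.
    """
    consistent = {h: p for h, p in prior.items() if premises <= h}
    total = sum(consistent.values())
    return sum(p for h, p in consistent.items() if conclusion in h) / total


hypotheses = all_hypotheses(SPECIES)
uniform_prior = {h: 1.0 / len(hypotheses) for h in hypotheses}
p_cow = generalisation(uniform_prior, frozenset({"horse", "elephant"}), "cow")
```

Under the uniform prior the premises say nothing special about cows, so the answer is driven entirely by counting subsets. A prior that favours some subsets over others is what lets the model distinguish strong arguments from weak ones, which is why the choice of prior matters so much here.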


Biological properties • Structure: – Living kinds are organized into a tree • Stochastic process: – Nearby species in the tree tend to share properties

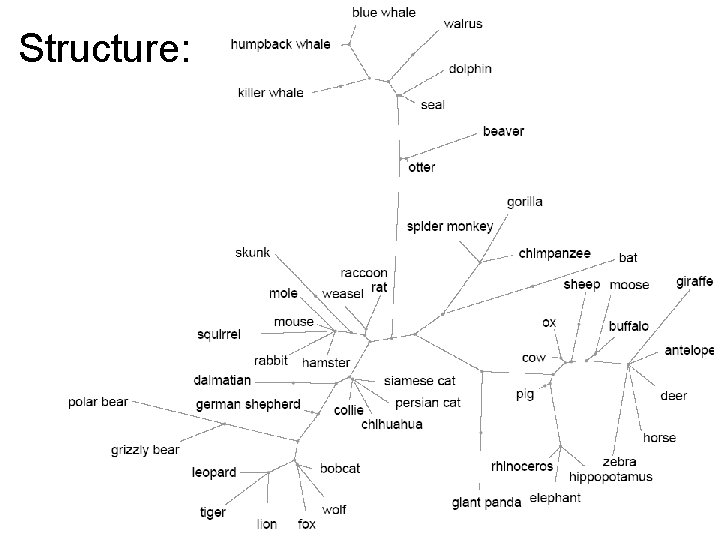

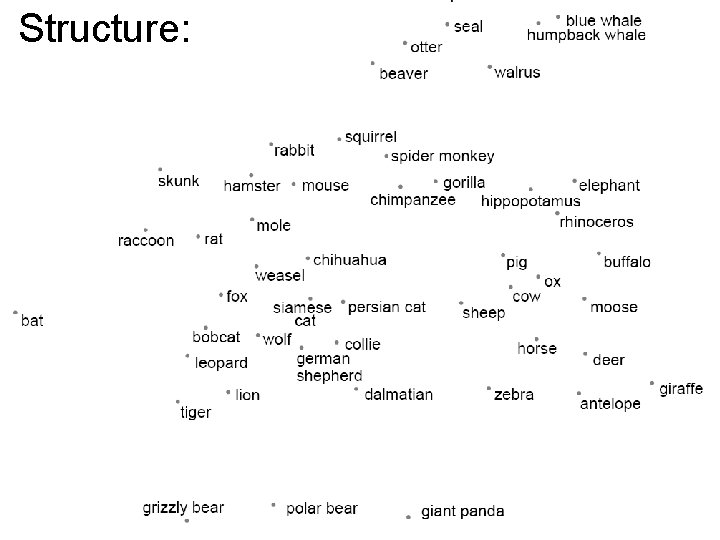

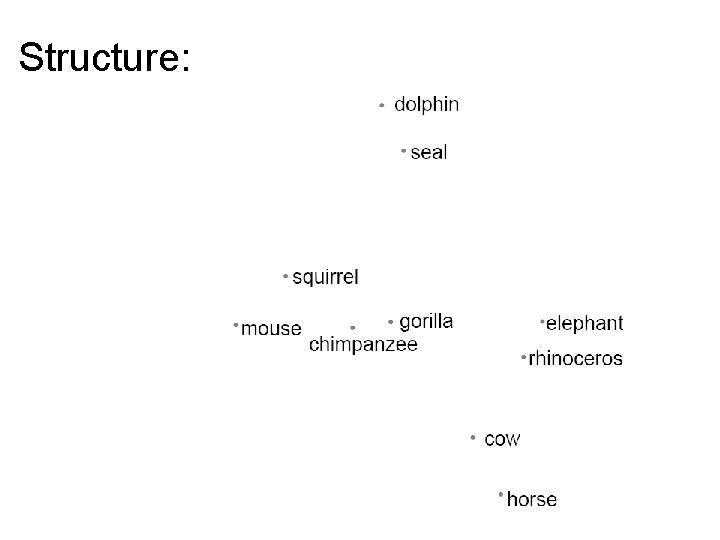

Structure:

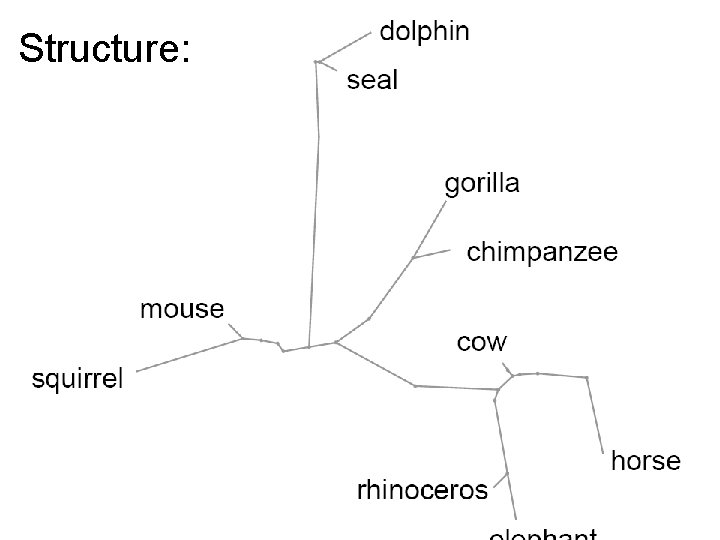

Structure:

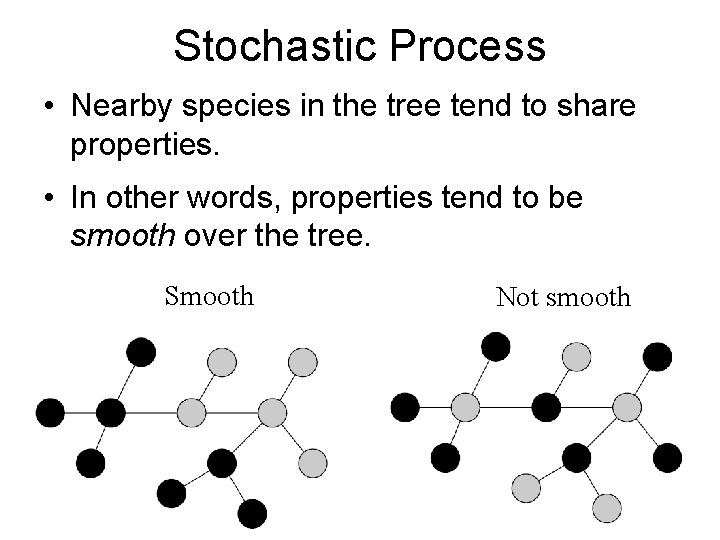

Stochastic Process
• Nearby species in the tree tend to share properties.
• In other words, properties tend to be smooth over the tree.
(Figure panels: Smooth, Not smooth)

Stochastic process Hypotheses

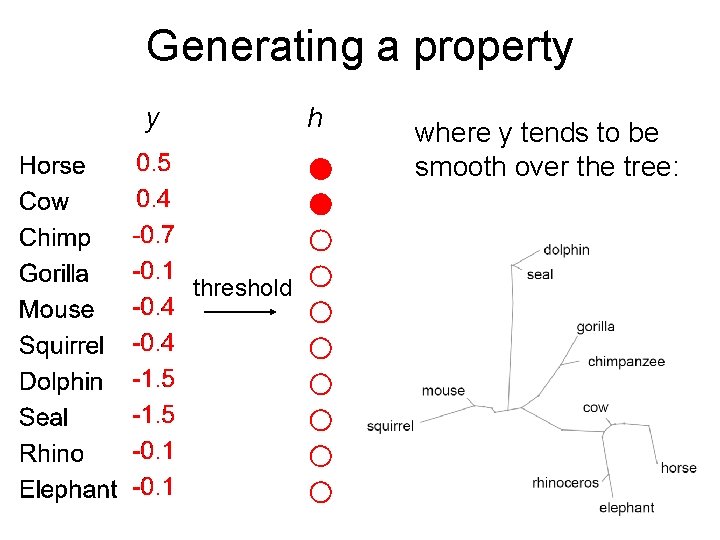

Generating a property: y → (threshold) → h, where y tends to be smooth over the tree:

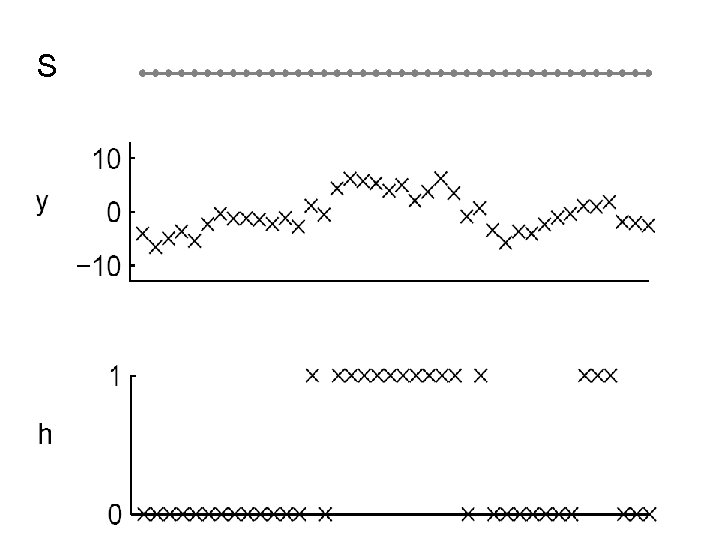


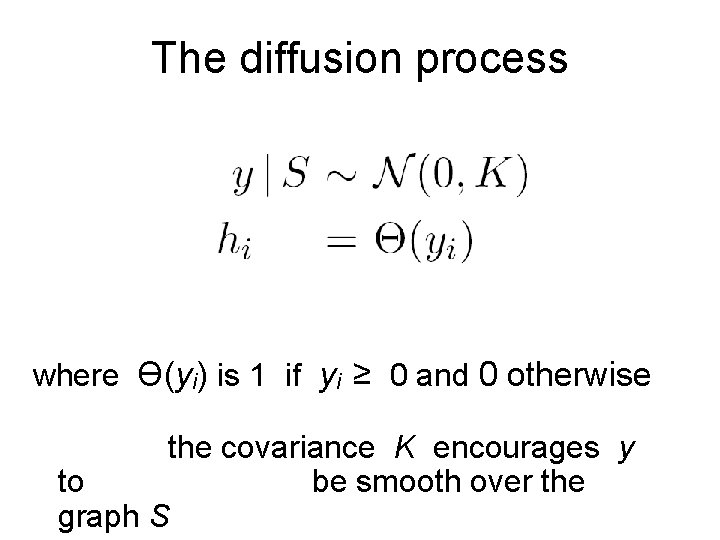

The diffusion process (equations shown in the slide images), where:
• Θ(yi) is 1 if yi ≥ 0 and 0 otherwise
• The covariance K encourages y to be smooth over the graph S

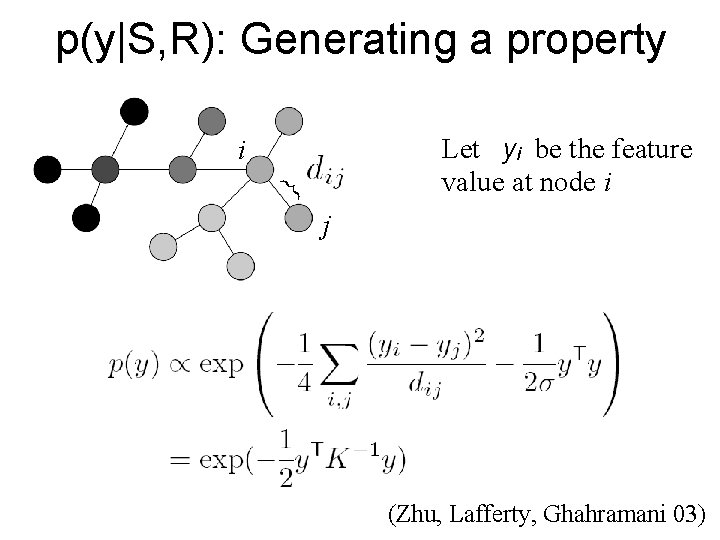

p(y|S, R): Generating a property
Let yi be the feature value at node i. (Zhu, Lafferty, Ghahramani 03)
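A minimal sketch of how a smooth property could be generated over a tree. The slide's equations are in images and are not reproduced here, so this code makes its own assumption: y is Gaussian with precision matrix equal to the graph Laplacian plus I/σ², in the spirit of the cited Gaussian-field construction. The seven-node tree, `sigma`, and all function names are illustrative.

```python
import math
import random

# Hypothetical tree: four leaf species plus three internal nodes.
NODES = ["mouse", "squirrel", "chimp", "gorilla", "rodents", "primates", "root"]
EDGES = [(0, 4), (1, 4), (2, 5), (3, 5), (4, 6), (5, 6)]


def precision_matrix(n, edges, sigma=1.0):
    """Graph Laplacian plus I / sigma^2 (assumed form of the precision)."""
    p = [[0.0] * n for _ in range(n)]
    for i in range(n):
        p[i][i] = 1.0 / sigma ** 2
    for i, j in edges:
        p[i][i] += 1.0
        p[j][j] += 1.0
        p[i][j] -= 1.0
        p[j][i] -= 1.0
    return p


def invert(m):
    """Gauss-Jordan inverse with partial pivoting."""
    n = len(m)
    a = [row[:] + [float(i == j) for j in range(n)] for i, row in enumerate(m)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        scale = a[col][col]
        a[col] = [v / scale for v in a[col]]
        for r in range(n):
            if r != col:
                factor = a[r][col]
                a[r] = [v - factor * w for v, w in zip(a[r], a[col])]
    return [row[n:] for row in a]


def cholesky(k):
    """Lower-triangular L with L L^T = k (k must be positive definite)."""
    n = len(k)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(low[i][t] * low[j][t] for t in range(j))
            if i == j:
                low[i][j] = math.sqrt(k[i][i] - s)
            else:
                low[i][j] = (k[i][j] - s) / low[j][j]
    return low


def sample_property(k, rng):
    """Draw y ~ N(0, K), then threshold: h_i = 1 if y_i >= 0, else 0."""
    low = cholesky(k)
    z = [rng.gauss(0.0, 1.0) for _ in k]
    y = [sum(low[i][t] * z[t] for t in range(i + 1)) for i in range(len(k))]
    return [1 if v >= 0 else 0 for v in y]


K = invert(precision_matrix(len(NODES), EDGES))
h = sample_property(K, random.Random(0))
```

In this sketch the covariance between mouse and squirrel (siblings in the tree) is larger than between mouse and chimp, which is the sense in which K encourages y, and hence the thresholded property h, to be smooth over the structure.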

Biological properties
• R: principles
  – Structural form: tree
  – Stochastic process: diffusion
• S: structure (mouse, squirrel, chimp, gorilla)
• D: data
Approach: work with the distribution P(D|S, R)


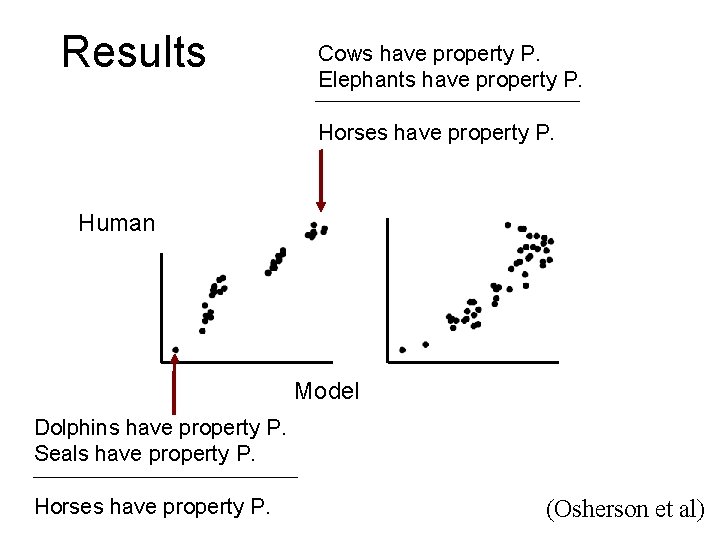

Results (human vs. model; Osherson et al)
• Cows have property P. Elephants have property P. Horses have property P.
• Dolphins have property P. Seals have property P. Horses have property P.

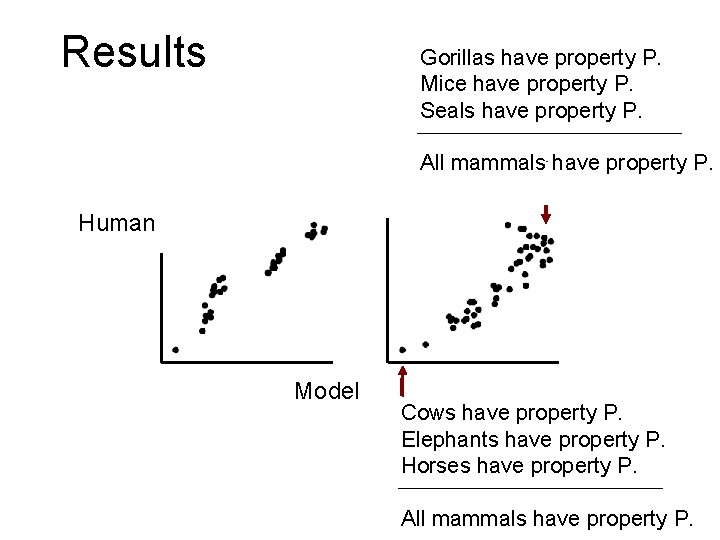

Results (human vs. model)
• Gorillas have property P. Mice have property P. Seals have property P. All mammals have property P.
• Cows have property P. Elephants have property P. Horses have property P. All mammals have property P.

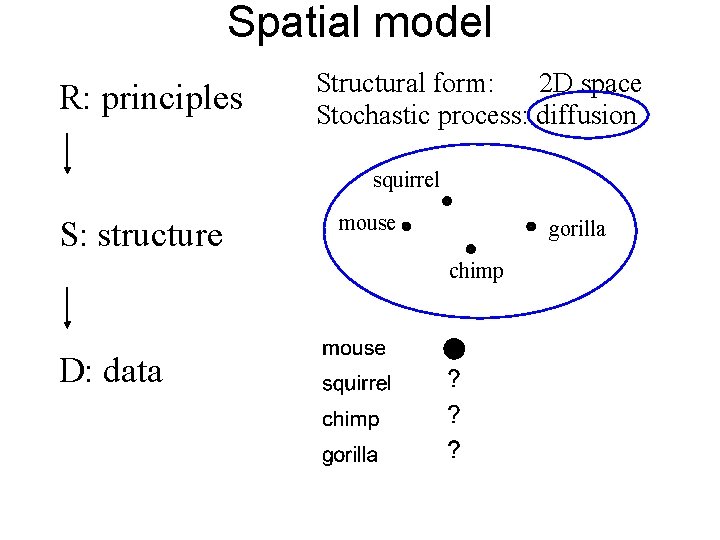

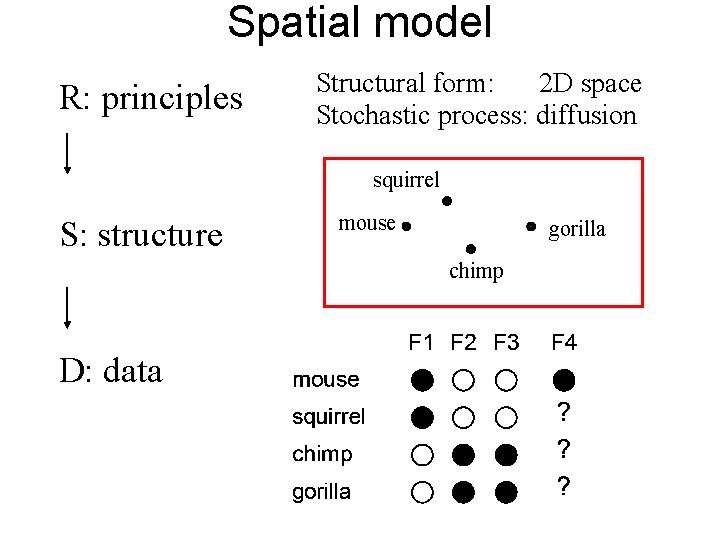

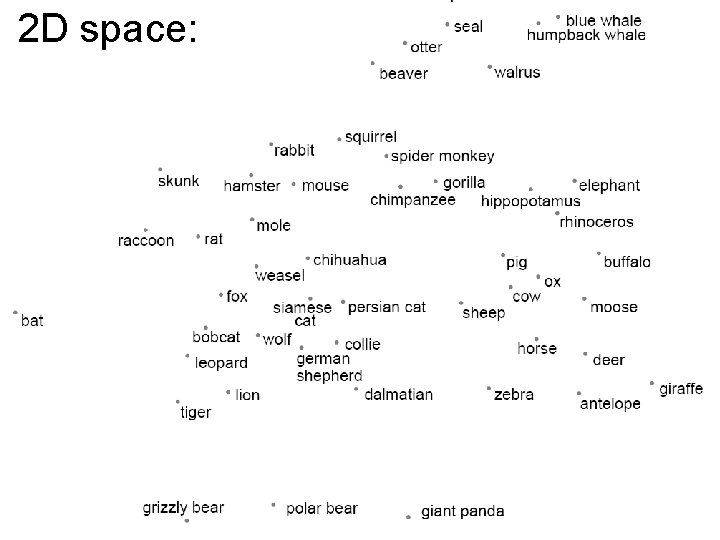

Spatial model
• R: principles
  – Structural form: 2D space
  – Stochastic process: diffusion
• S: structure (squirrel, mouse, gorilla, chimp)
• D: data

Structure:


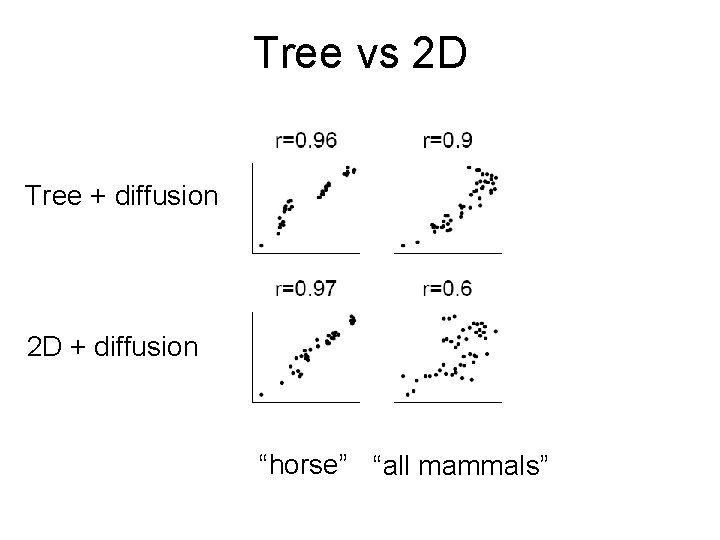

Tree vs 2D
• Models compared: tree + diffusion, 2D + diffusion
• Argument sets: “horse”, “all mammals”


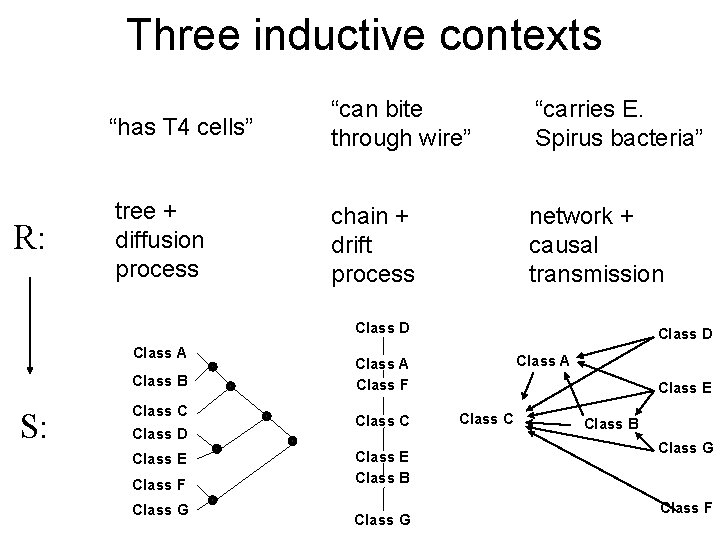

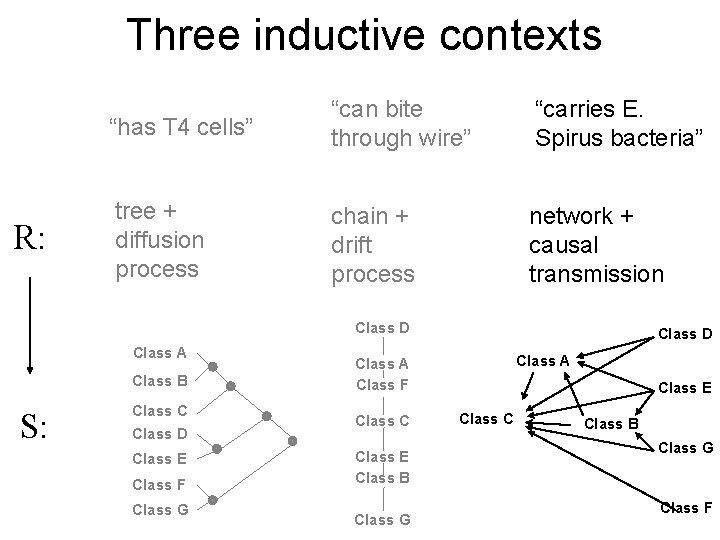

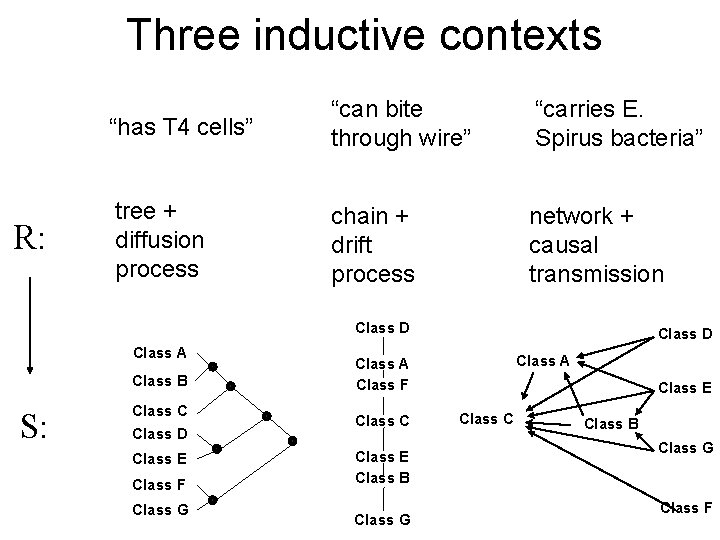

Three inductive contexts
R:
• “has T4 cells”: tree + diffusion process
• “can bite through wire”: chain + drift process
• “carries E. Spirus bacteria”: network + causal transmission
S: a different structure over Classes A–G in each context (see figure)

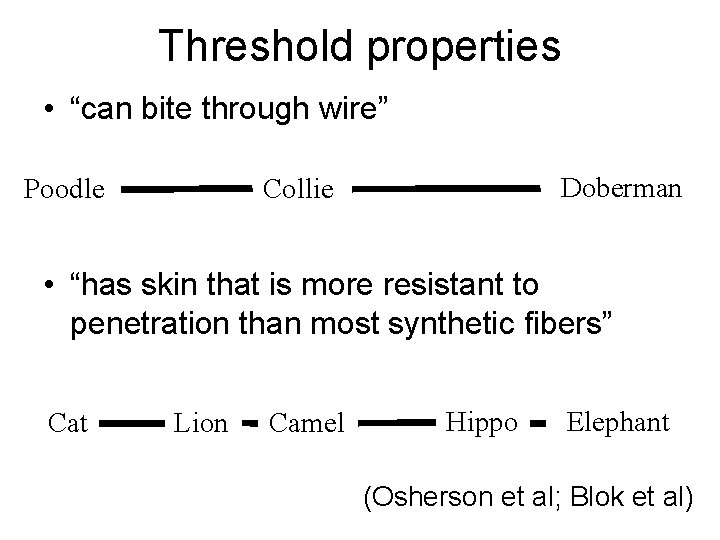

Threshold properties
• “can bite through wire”: Poodle, Doberman, Collie
• “has skin that is more resistant to penetration than most synthetic fibers”: Cat, Lion, Camel, Hippo, Elephant
(Osherson et al; Blok et al)

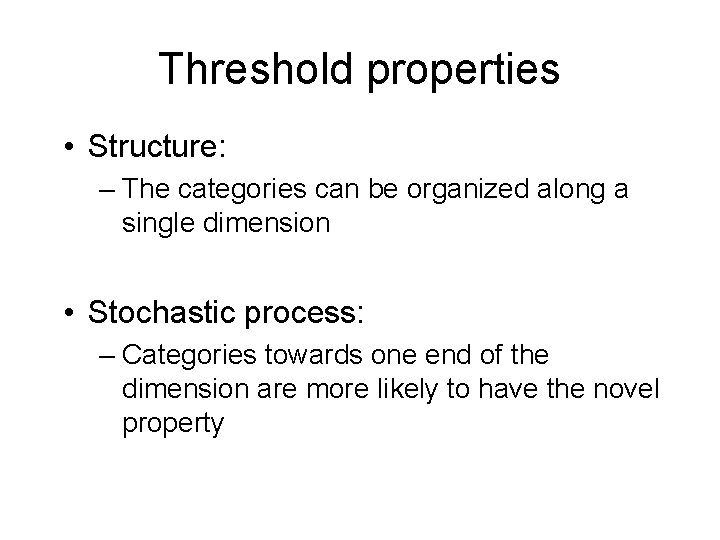

Threshold properties • Structure: – The categories can be organized along a single dimension • Stochastic process: – Categories towards one end of the dimension are more likely to have the novel property

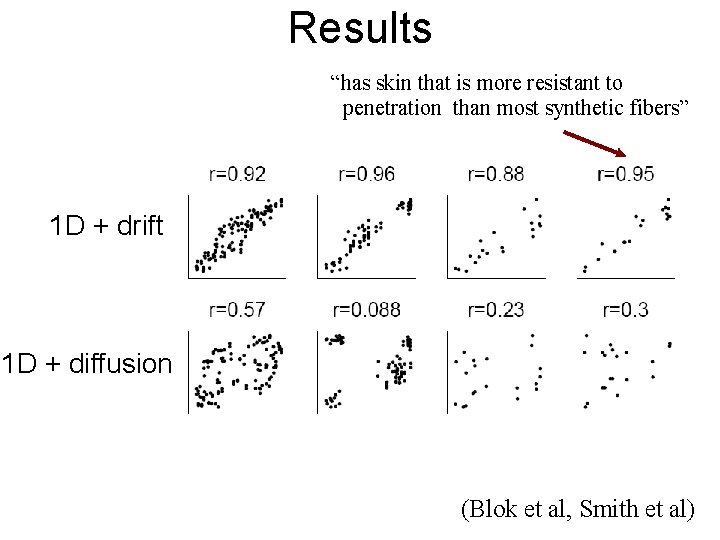

Results
“has skin that is more resistant to penetration than most synthetic fibers”
Models compared: 1D + drift, 1D + diffusion (Blok et al, Smith et al)


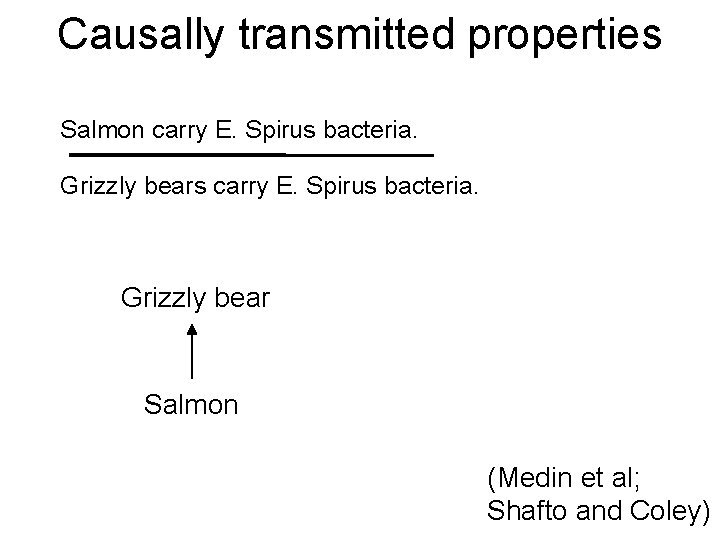

Causally transmitted properties
Salmon carry E. Spirus bacteria. Grizzly bears carry E. Spirus bacteria.
(Figure: Grizzly bear, Salmon) (Medin et al; Shafto and Coley)

Causally transmitted properties • Structure: – The categories can be organized into a directed network • Stochastic process: – Properties are generated by a noisy transmission process
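One common way to formalise a noisy transmission process over a directed network is a noisy-OR: a species acquires the property either from a background cause or from any prey species that already has it. The sketch below takes that route as an assumption; the background rate `b`, transmission rate `t`, the two-species food web and the function names are all hypothetical and not taken from the slides.

```python
from itertools import product

# Hypothetical food web: each species maps to the species it eats.
FOOD_WEB = {"salmon": [], "grizzly": ["salmon"]}


def joint_prob(h, parents, b, t):
    """Probability of a full assignment h (species -> 0/1) under noisy-OR."""
    p = 1.0
    for node, prey in parents.items():
        n_active = sum(h[q] for q in prey)
        p_on = 1.0 - (1.0 - b) * (1.0 - t) ** n_active
        p *= p_on if h[node] else 1.0 - p_on
    return p


def conditional(target, given, parents, b, t):
    """P(target has the property | given has the property), by enumeration."""
    nodes = list(parents)
    num = den = 0.0
    for values in product([0, 1], repeat=len(nodes)):
        h = dict(zip(nodes, values))
        if h[given] != 1:
            continue
        p = joint_prob(h, parents, b, t)
        den += p
        num += p * h[target]
    return num / den


p_up = conditional("grizzly", "salmon", FOOD_WEB, b=0.1, t=0.8)
p_down = conditional("salmon", "grizzly", FOOD_WEB, b=0.1, t=0.8)
```

With these made-up rates, inference from prey to predator (`p_up`) comes out stronger than inference from predator to prey (`p_down`), an asymmetry that a symmetric similarity measure cannot produce.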

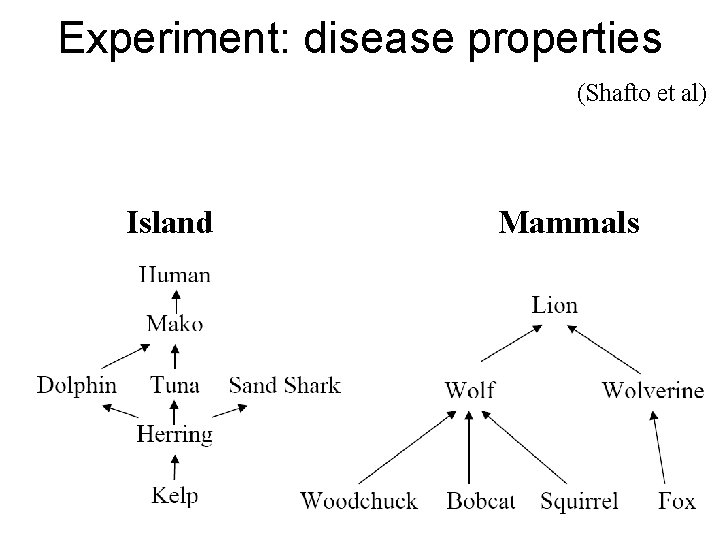

Experiment: disease properties (Shafto et al)
(Figure panels: Island, Mammals)

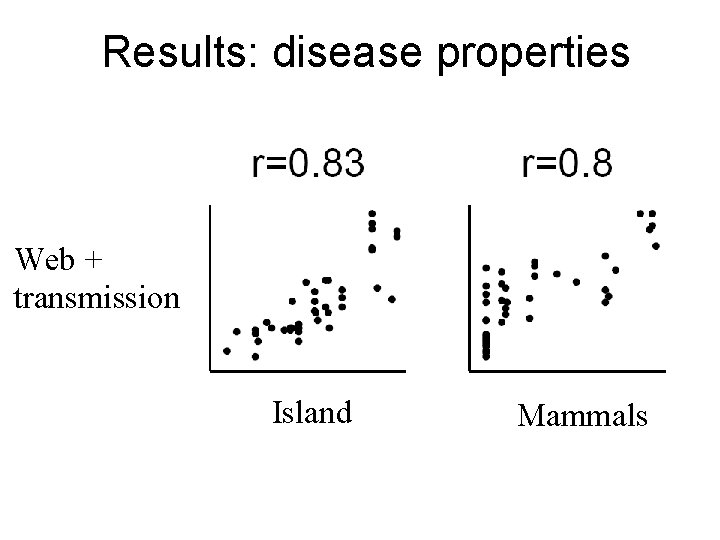

Results: disease properties
Model: web + transmission
(Figure panels: Island, Mammals)



Conclusions: property induction
• Hierarchical Bayesian models help to explain how abstract knowledge can be used for induction


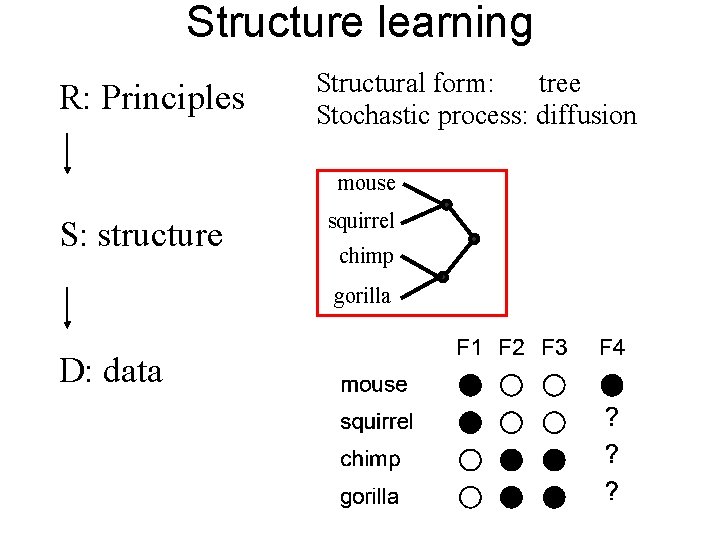

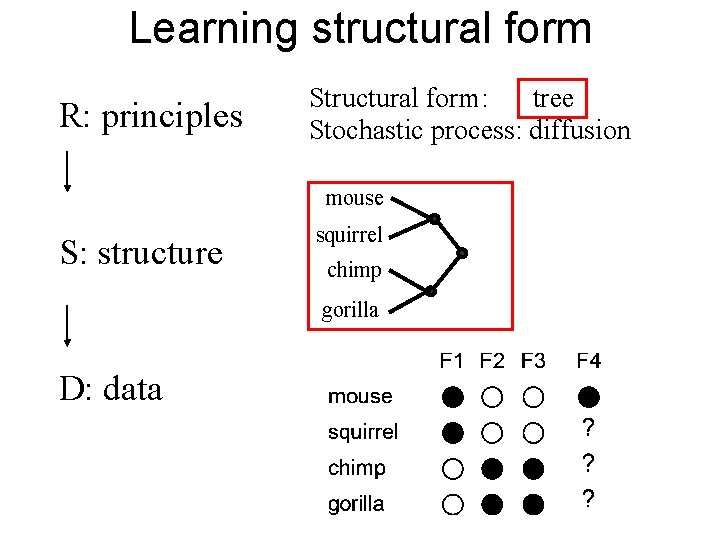

Structure learning
• R: principles
  – Structural form: tree
  – Stochastic process: diffusion
• S: structure (mouse, squirrel, chimp, gorilla)
• D: data

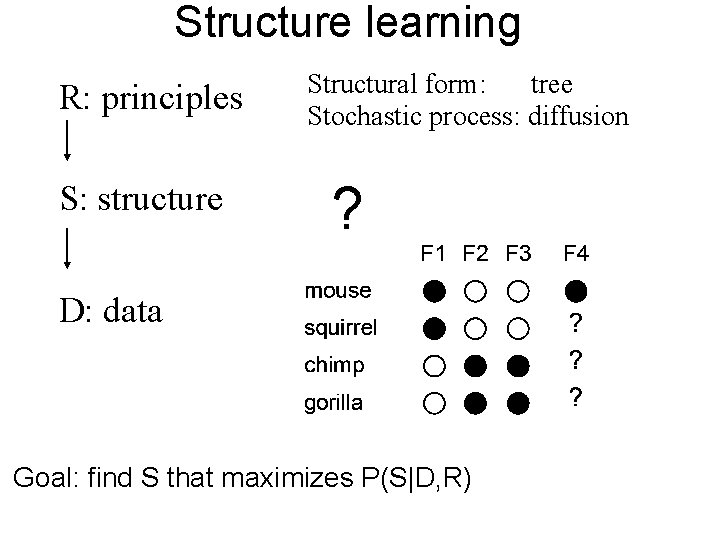

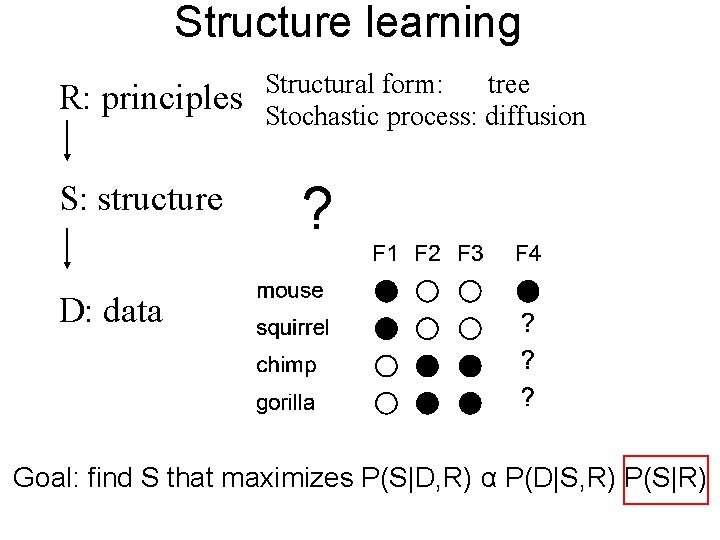

Structure learning R: principles S: structure Structural form: tree Stochastic process: diffusion ? D: data Goal: find S that maximizes P(S|D, R)

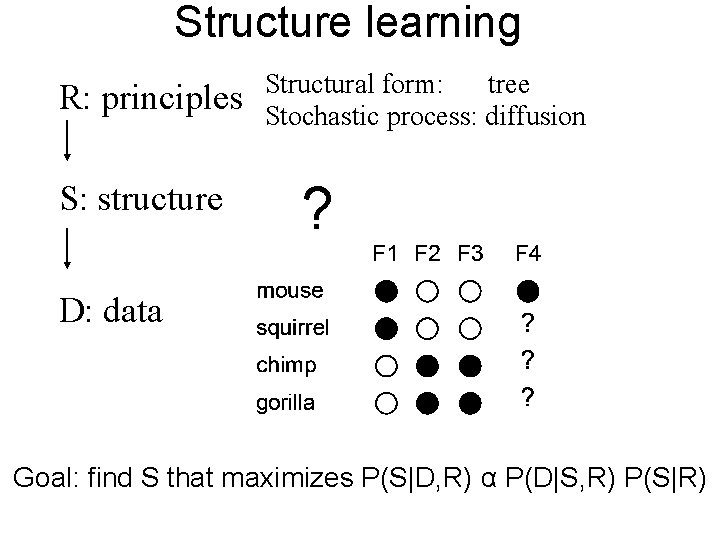

Structure learning R: principles S: structure Structural form: tree Stochastic process: diffusion ? D: data Goal: find S that maximizes P(S|D, R) α P(D|S, R) P(S|R)

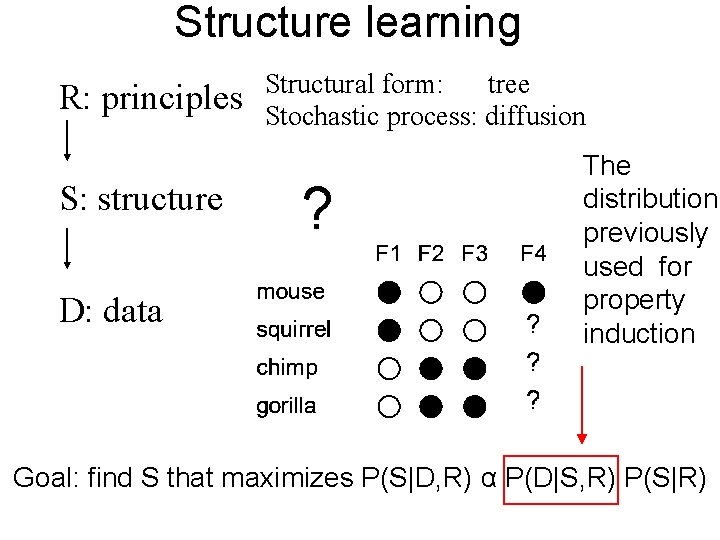

Structure learning R: principles S: structure D: data Structural form: tree Stochastic process: diffusion ? The distribution previously used for property induction Goal: find S that maximizes P(S|D, R) α P(D|S, R) P(S|R)

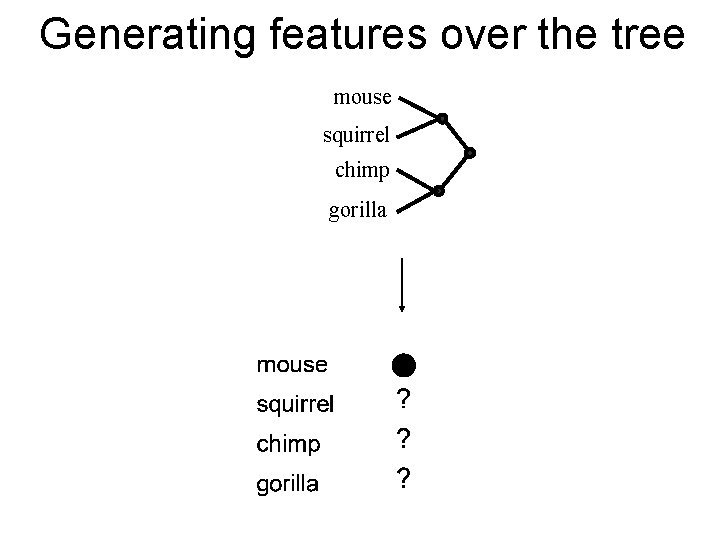

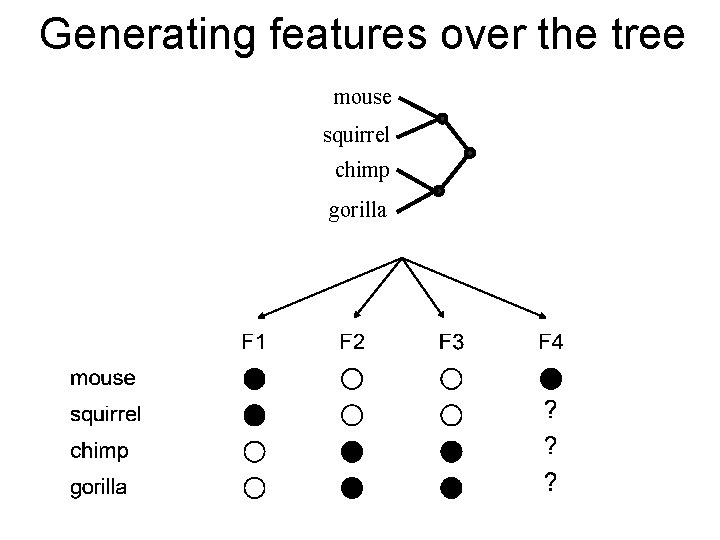

Generating features over the tree mouse squirrel chimp gorilla



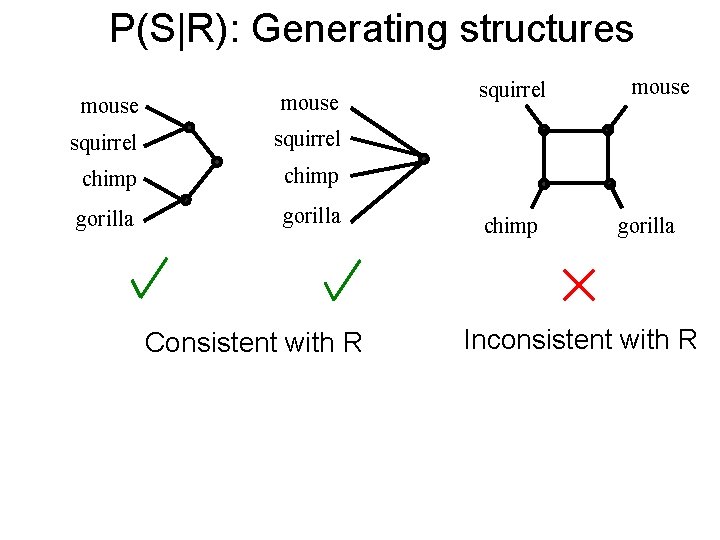

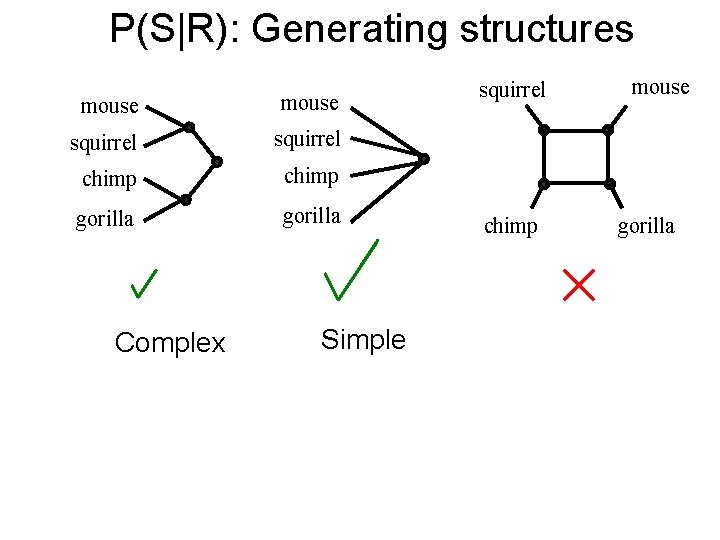

P(S|R): Generating structures
(Figure panels: one structure over mouse, squirrel, chimp, gorilla that is consistent with R, and one that is inconsistent with R)

P(S|R): Generating structures
(Figure panels: a complex and a simple structure over mouse, squirrel, chimp, gorilla)

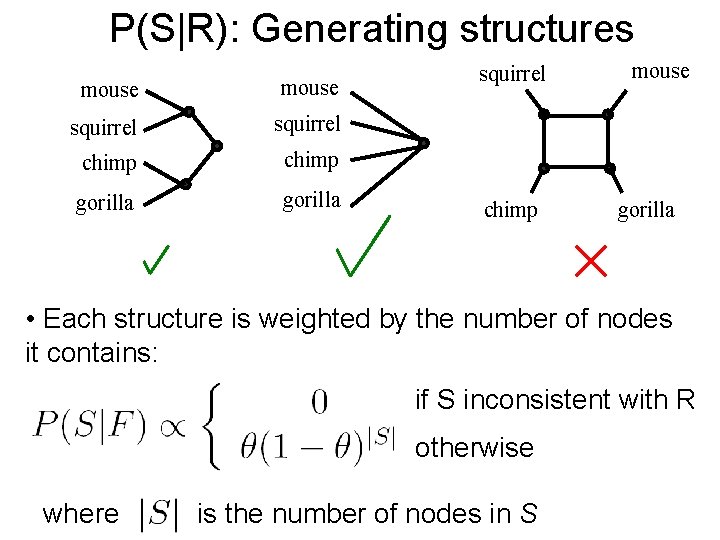

P(S|R): Generating structures
• Each structure is weighted by the number of nodes it contains:
  P(S|R) ∝ 0 if S is inconsistent with R, and θ^|S| otherwise,
  where |S| is the number of nodes in S

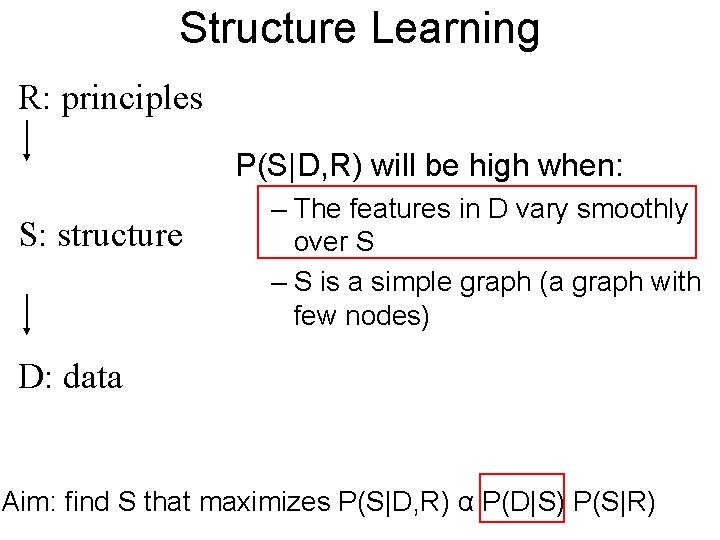

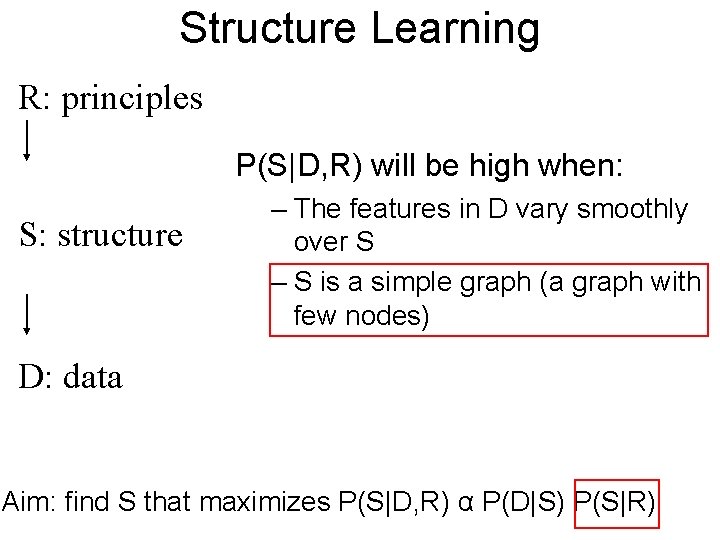

Structure Learning
Levels: R (principles) → S (structure) → D (data)
P(S|D, R) will be high when:
– The features in D vary smoothly over S
– S is a simple graph (a graph with few nodes)
Aim: find S that maximizes P(S|D, R) ∝ P(D|S, R) P(S|R)
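Taking logs makes the trade-off between fit and simplicity explicit. The expression below is a sketch under two assumptions not stated on this slide: the node-count prior has the form θ^|S| with 0 < θ < 1, and the m features in D are generated independently given S. It applies to structures S that are consistent with R.

```latex
\log P(S \mid D, R)
  = \sum_{k=1}^{m} \log P\bigl(d^{(k)} \mid S, R\bigr)
  + |S| \log \theta
  + \text{const}
```

Because log θ is negative, every extra node lowers the score, so a node is only worth adding if it raises the summed log-likelihood of the features by more than that cost.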


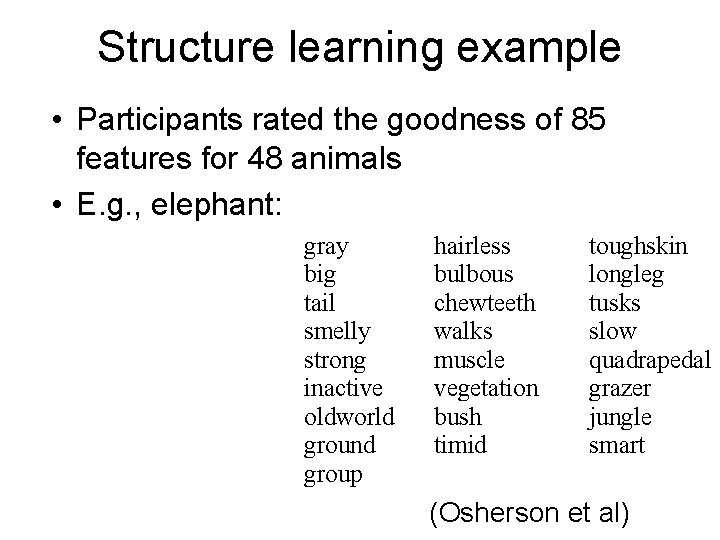

Structure learning example
• Participants rated the goodness of 85 features for 48 animals
• E.g., elephant: gray, big, tail, smelly, strong, inactive, oldworld, ground, group, hairless, bulbous, chewteeth, walks, muscle, vegetation, bush, timid, toughskin, longleg, tusks, slow, quadrapedal, grazer, jungle, smart
(Osherson et al)

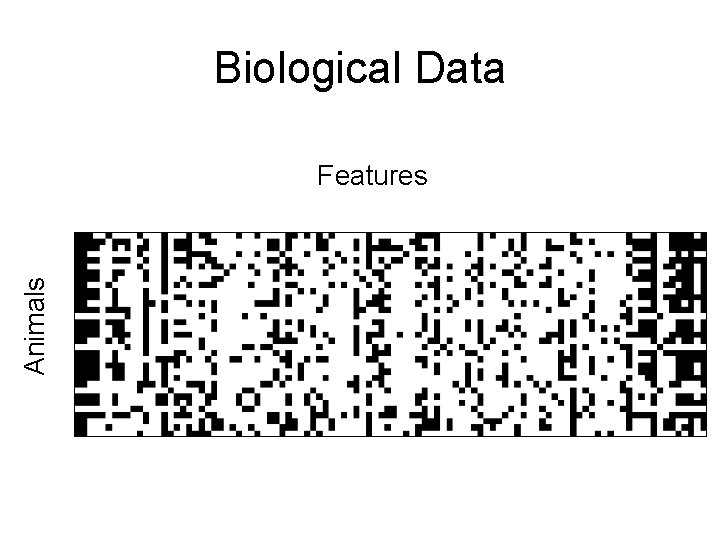

Biological Data (figure: animals × features matrix)

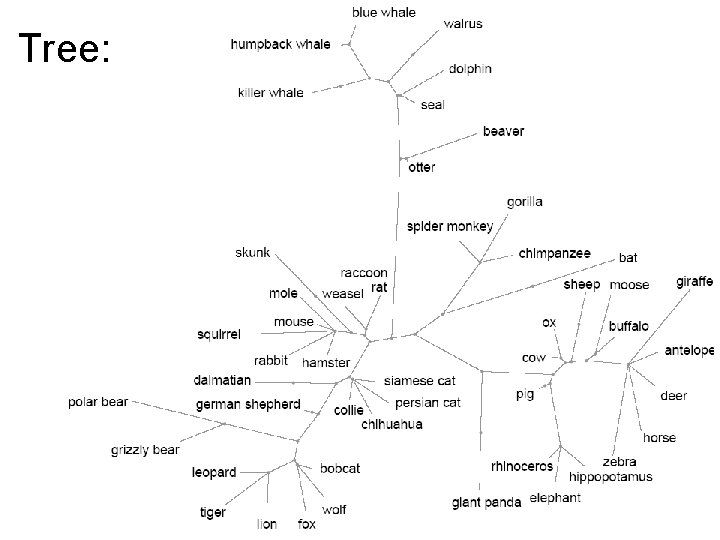

Tree:


2D space:

Conclusions: structure learning • Hierarchical Bayesian models provide a unified framework for the acquisition and use of structured representations


Learning structural form
• R: principles
  – Structural form: tree
  – Stochastic process: diffusion
• S: structure (mouse, squirrel, chimp, gorilla)
• D: data

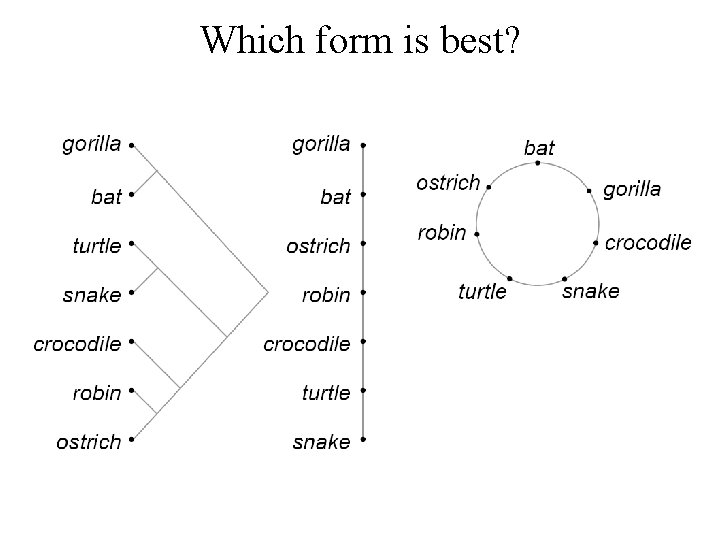

Which form is best?
(Figure labels: Snake, Turtle, Crocodile, Robin, Ostrich, Bat, Orangutan)

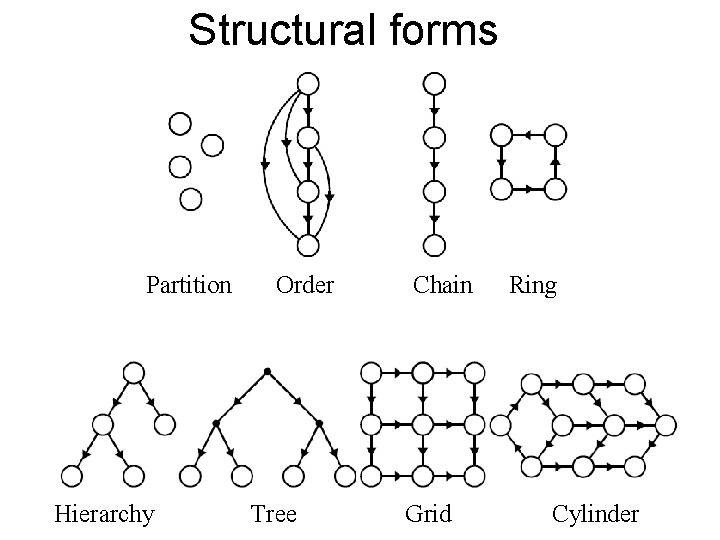

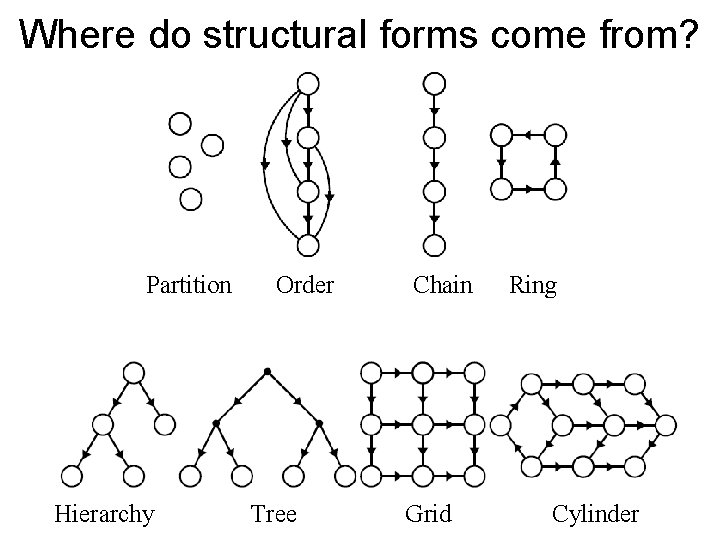

Structural forms: Partition, Hierarchy, Order, Tree, Chain, Grid, Ring, Cylinder

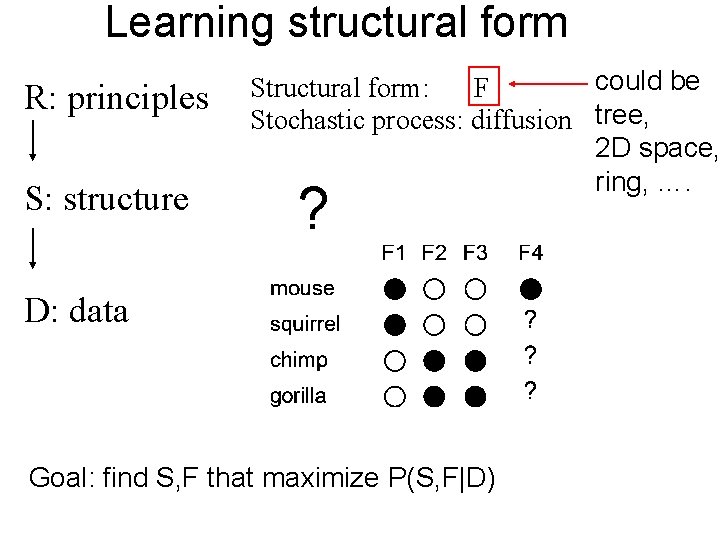

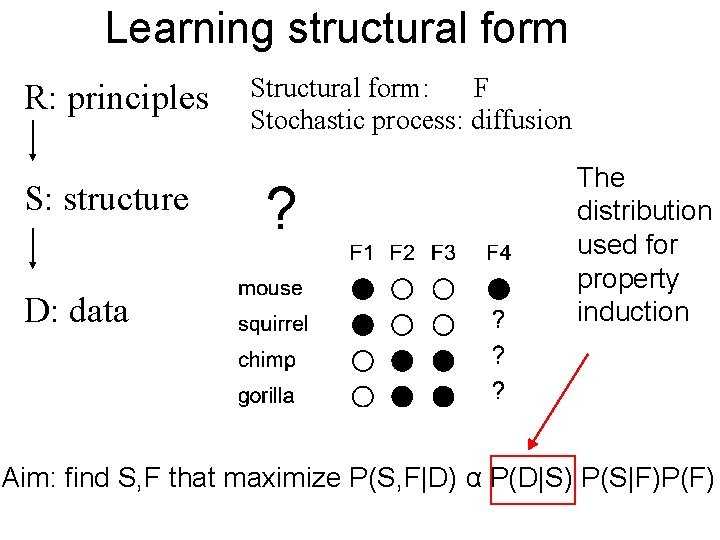

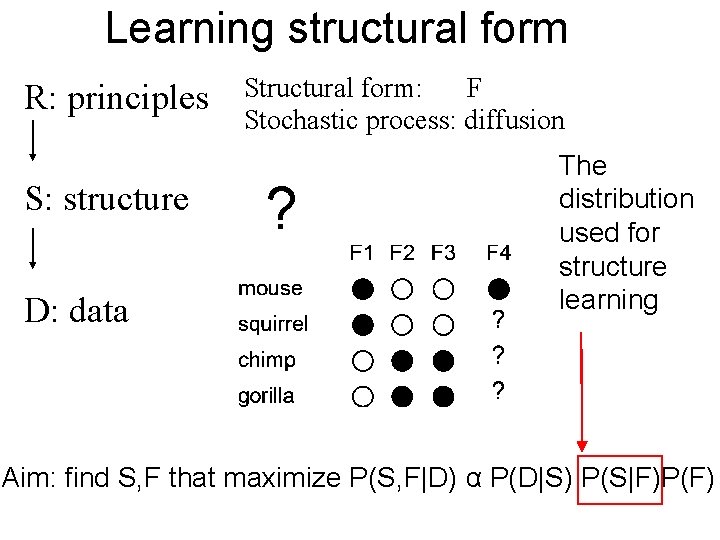

Learning structural form
• R: principles
  – Structural form: F (could be tree, 2D space, ring, …)
  – Stochastic process: diffusion
• S: structure (unknown)
• D: data
Goal: find S, F that maximize P(S, F|D)

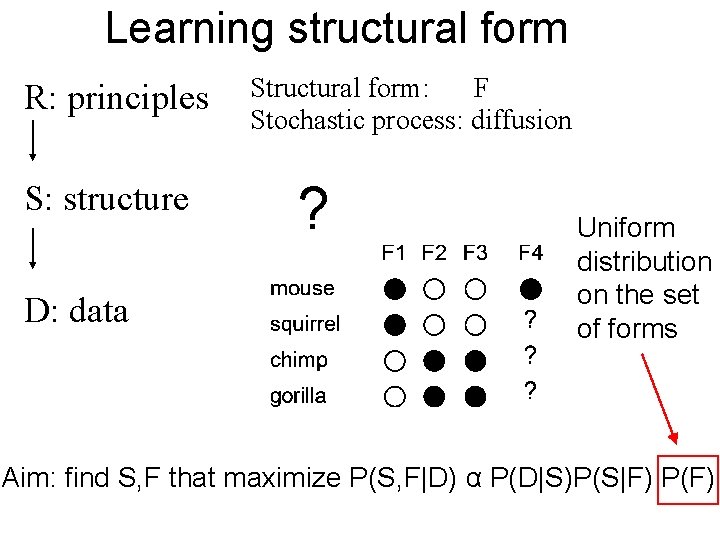

Learning structural form
• R: principles
  – Structural form: F
  – Stochastic process: diffusion
• S: structure (unknown)
• D: data
Aim: find S, F that maximize P(S, F|D) ∝ P(D|S) P(S|F) P(F)
– P(F): uniform distribution on the set of forms
– P(D|S): the distribution used for property induction
– P(S|F): the distribution used for structure learning

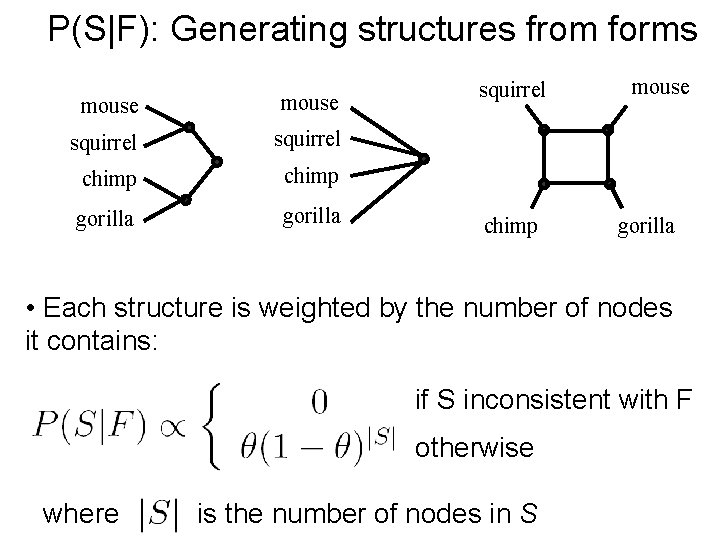

P(S|F): Generating structures from forms
• Each structure is weighted by the number of nodes it contains:
  P(S|F) ∝ 0 if S is inconsistent with F, and θ^|S| otherwise,
  where |S| is the number of nodes in S

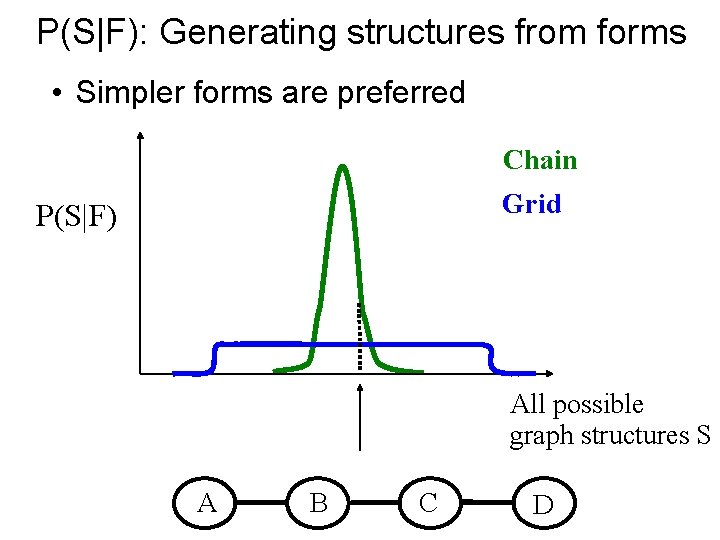

P(S|F): Generating structures from forms
• Simpler forms are preferred
(Figure: P(S|F) for the chain and grid forms, plotted over all possible graph structures S, with example structures A, B, C, D)

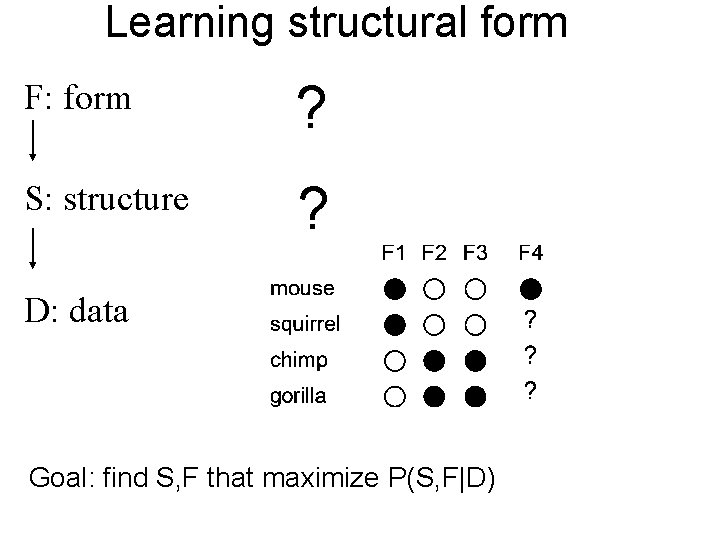

Learning structural form
F: form (unknown) → S: structure (unknown) → D: data
Goal: find S, F that maximize P(S, F|D)

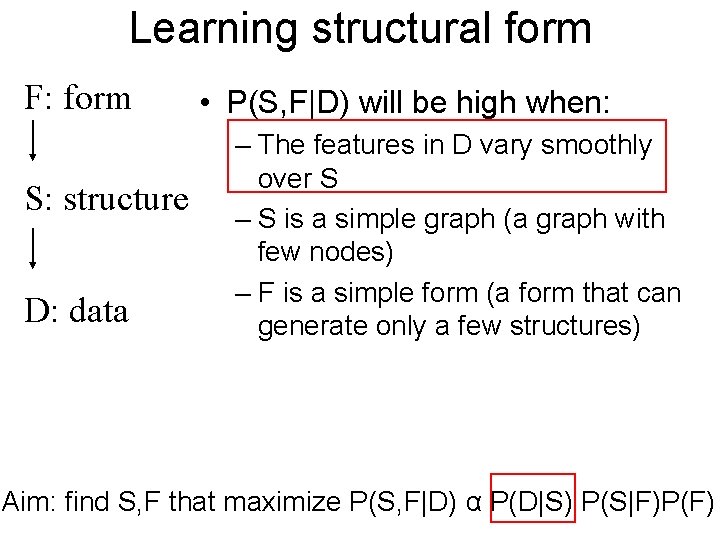

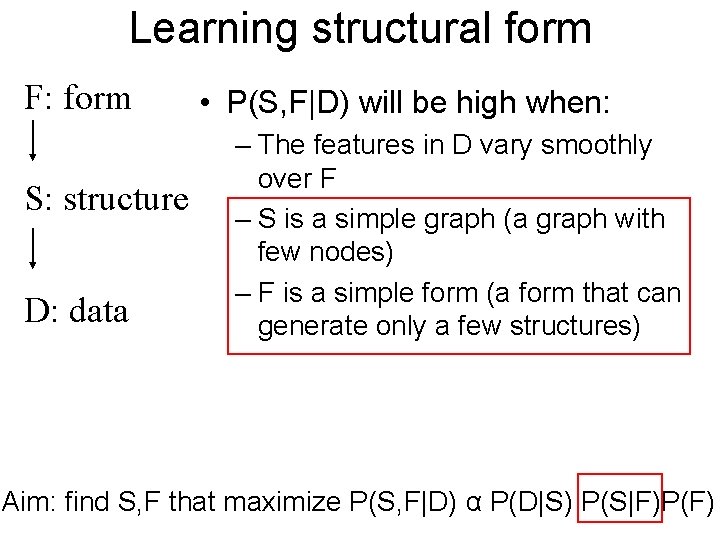

Learning structural form
F: form → S: structure → D: data
• P(S, F|D) will be high when:
  – The features in D vary smoothly over S
  – S is a simple graph (a graph with few nodes)
  – F is a simple form (a form that can generate only a few structures)
Aim: find S, F that maximize P(S, F|D) ∝ P(D|S) P(S|F) P(F)
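A toy sketch of why a form that generates fewer structures is preferred, and how the three terms combine. It assumes P(S|F) is θ^|S| normalised over the structures a form can generate; the four structures, their node counts, the two forms, `THETA`, and the log-likelihood values are all hypothetical.

```python
import math

THETA = 0.5  # assumed per-node weight, 0 < THETA < 1

# Hypothetical structures (name -> number of nodes) and forms
# (name -> set of structures the form can generate).
NODE_COUNT = {"A": 2, "B": 3, "C": 3, "D": 4}
FORMS = {"chain": {"A", "B"}, "grid": {"A", "B", "C", "D"}}


def p_structure_given_form(s, form):
    """P(S|F): 0 if S is inconsistent with F, else theta^|S| normalised over F."""
    allowed = FORMS[form]
    if s not in allowed:
        return 0.0
    z = sum(THETA ** NODE_COUNT[t] for t in allowed)
    return THETA ** NODE_COUNT[s] / z


def log_score(s, form, log_lik):
    """log P(D|S) + log P(S|F) + log P(F), with a uniform P(F)."""
    prior = p_structure_given_form(s, form)
    if prior == 0.0:
        return float("-inf")
    return log_lik[s] + math.log(prior) - math.log(len(FORMS))


def best_pair(log_lik):
    """Return the (S, F) pair with the highest score."""
    pairs = [(s, f) for f in FORMS for s in NODE_COUNT]
    return max(pairs, key=lambda sf: log_score(sf[0], sf[1], log_lik))


# Made-up values of log P(D|S), for illustration only.
LOG_LIK = {"A": -12.0, "B": -10.0, "C": -10.5, "D": -9.8}
best_s, best_f = best_pair(LOG_LIK)
```

Structure A receives more prior mass under the chain form than under the grid form because the chain spreads its probability over fewer structures. That is the sense in which a simple form is rewarded even when a more flexible form could generate the same structure.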


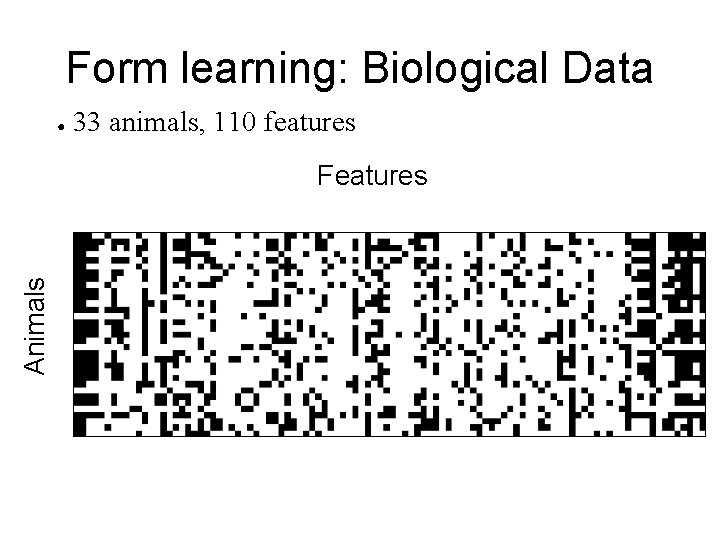

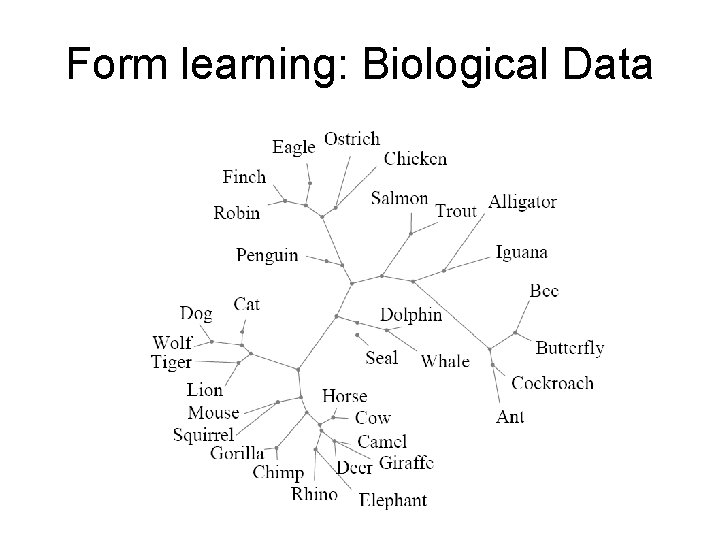

Form learning: Biological Data
● 33 animals, 110 features
(Figure: animals × features matrix)

Form learning: Biological Data

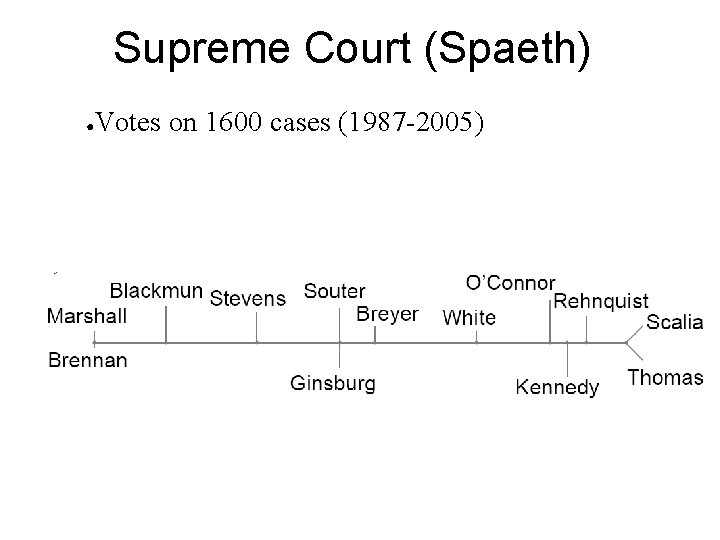

Supreme Court (Spaeth)
● Votes on 1600 cases (1987–2005)

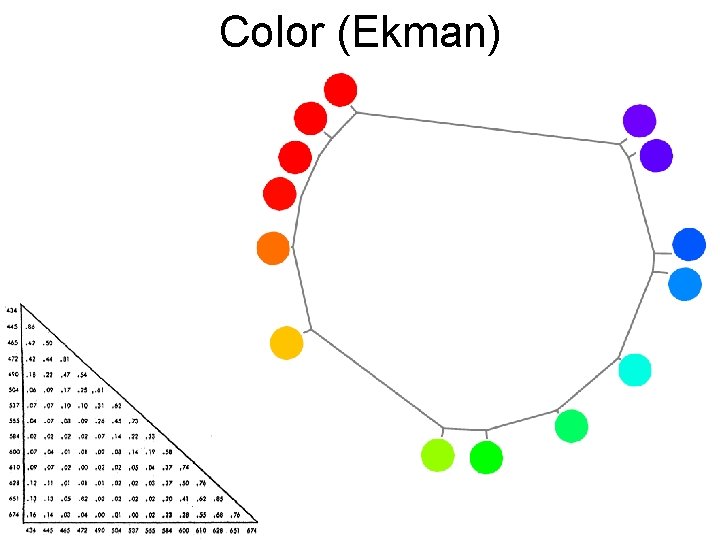

Color (Ekman)


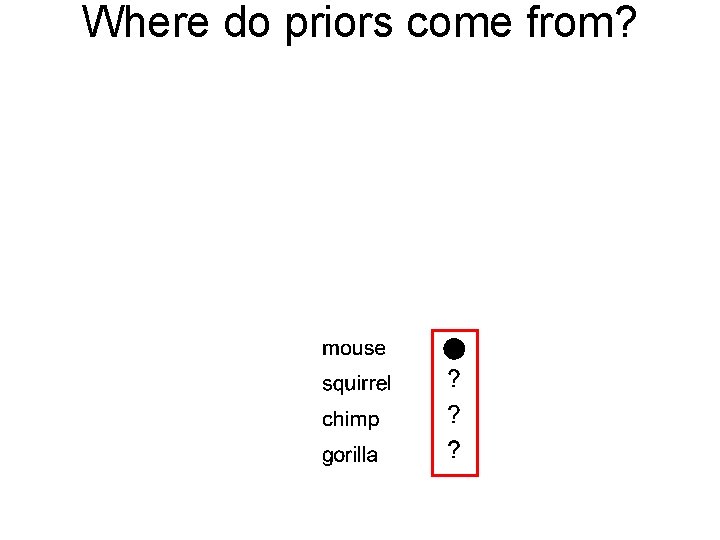

Where do priors come from?

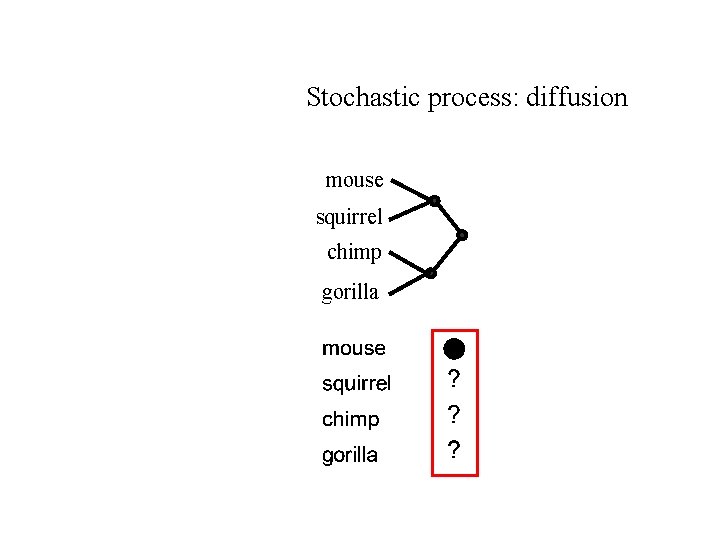

Stochastic process: diffusion mouse squirrel chimp gorilla

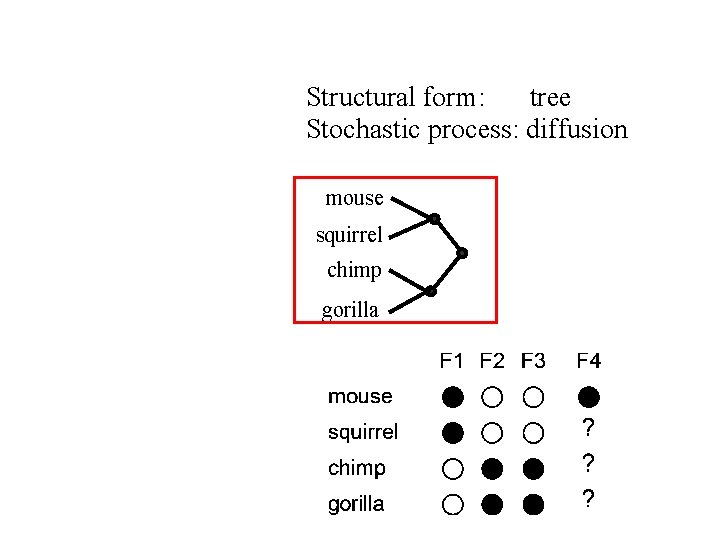

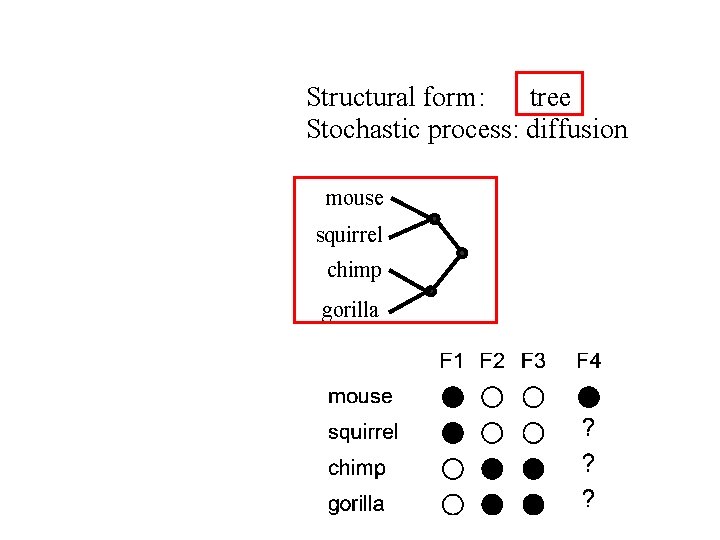

Structural form: tree Stochastic process: diffusion mouse squirrel chimp gorilla


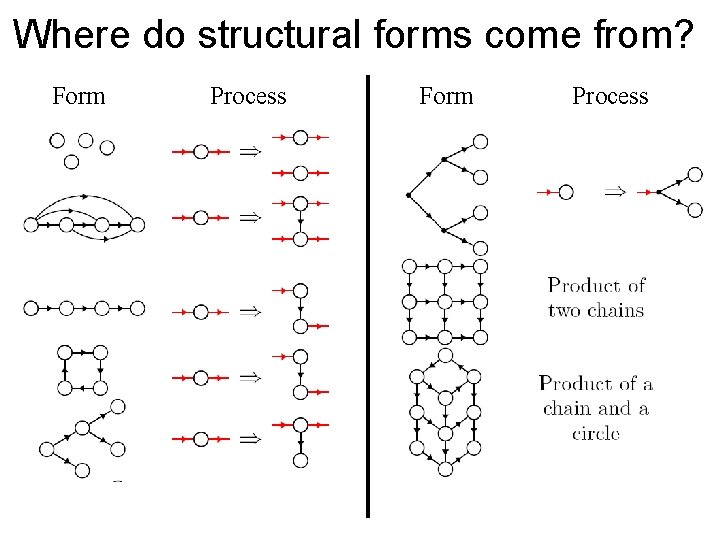

Where do structural forms come from?
Partition, Hierarchy, Order, Tree, Chain, Grid, Ring, Cylinder

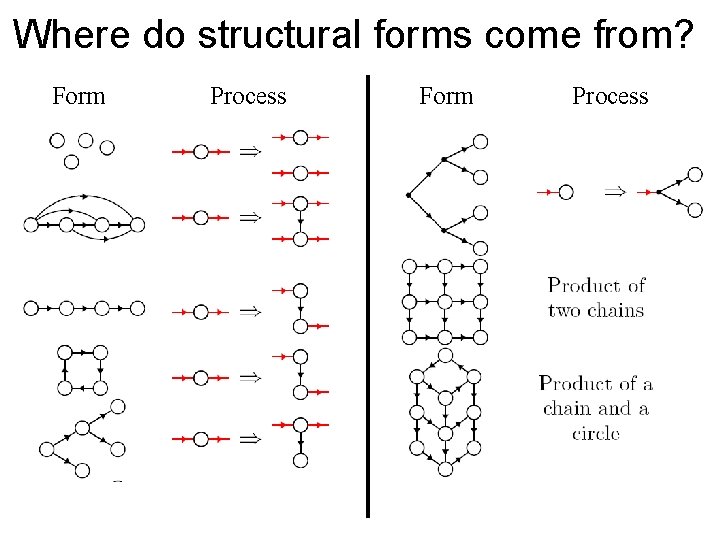

Where do structural forms come from? Form Process

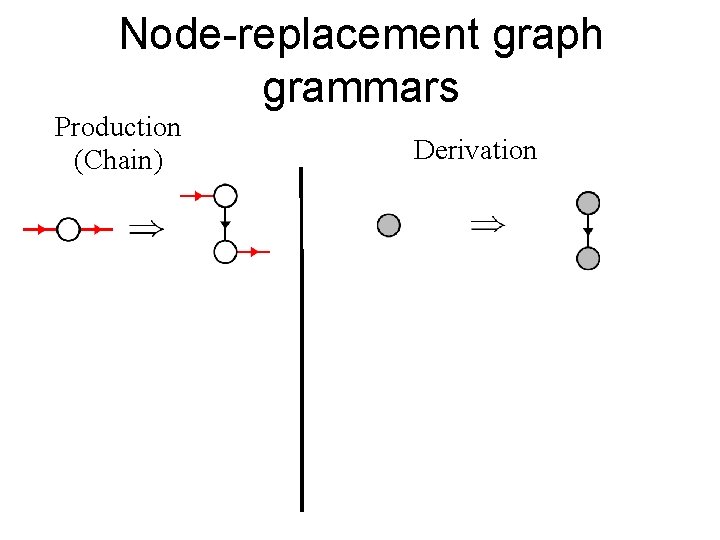

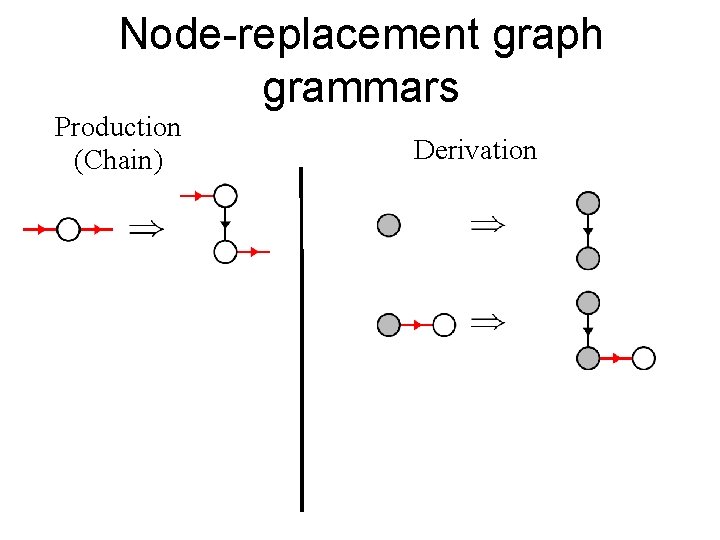

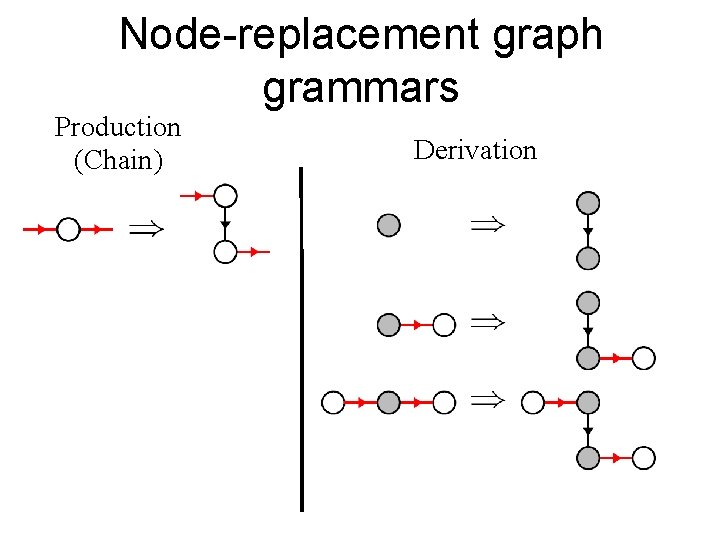

Node-replacement graph grammars Production (Chain) Derivation



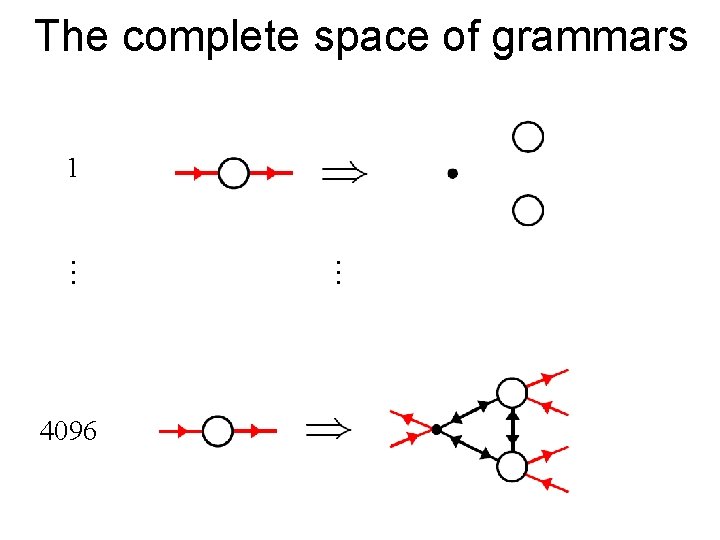

The complete space of grammars: 1 … 4096

When can we stop adding levels? • When the knowledge at the top level is simple or general enough that it can be plausibly assumed to be innate.

Conclusions • Hierarchical Bayesian models provide a unified framework which can – Explain how abstract knowledge is used for induction – Explain how abstract knowledge can be acquired

Learning abstract knowledge
Applications of hierarchical Bayesian models at this conference:
1. Semantic knowledge: Schmidt et al.
   – Learning the M-constraint
2. Syntax: Perfors et al.
   – Learning that language is hierarchically organized
3. Word learning: Kemp et al.
   – Learning the shape bias
