Parallel Computing Overview Johnnie Baker

References • Michael Quinn, “Parallel Programming in C with MPI and OpenMP”, Chapters 1–2, McGraw-Hill, 2004. • Selim Akl, “Parallel Computation: Models and Methods”, Chapter 1, Prentice Hall, 1997. – Updated version available online.

Outline • Why use parallel computing • Moore’s Law • Flynn’s Taxonomy • Basic Types of Parallel Computers • Different Types of Parallel Computation • Three Computer Interconnection Network Examples • Performance Evaluation • Amdahl’s Law

Why Use Parallel Computers • Solve compute-intensive problems faster – Make infeasible problems feasible – Reduce design time • Solve larger problems in the same amount of time – Improve the answer’s precision – Reduce design time • Increase memory size – More data can be kept in memory – Dramatically reduces the slowdown caused by accessing external storage, which increases computation time • Gain competitive advantage

Weather Prediction • Atmosphere is divided into 3-D cells • Data includes temperature, pressure, humidity, wind speed and direction, etc. – Recorded at regular time intervals in each cell • There are about 5 × 10^8 cells, each a 1-mile cube. • A modern computer would need more than 10 days to perform the calculations needed for a 10-day forecast (100 days in 2003)


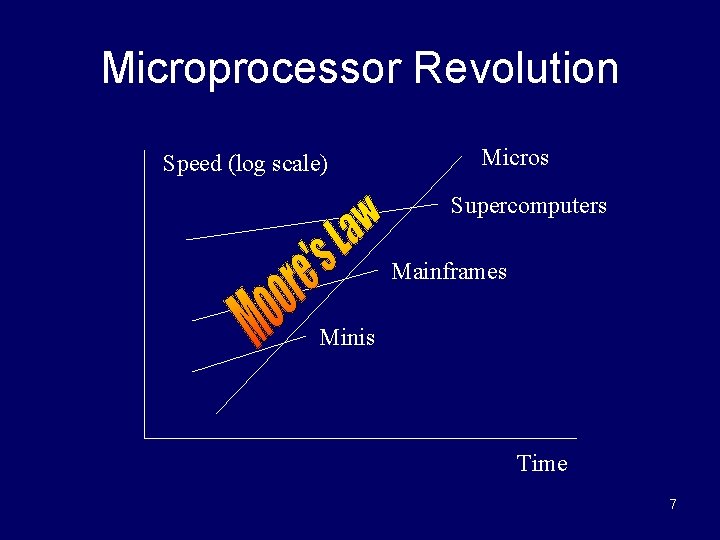

Moore’s Law • In 1965, Gordon Moore [87] observed that the density of transistors on a chip doubled every year. – That is, the chip area needed per transistor was being halved yearly. – This is an exponential rate of increase. • By the late 1980s, the doubling period had slowed to 18 months. • Reducing the silicon area allows processor speed to increase. • Moore’s law is sometimes stated as: “Processor speed doubles every 18 months.”
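A quick way to see what a fixed doubling period implies is to compute the growth factor 2^(t/T) for elapsed time t and doubling period T. This is a minimal Python sketch; `growth_factor` is a hypothetical helper, and real density trends are not as regular as the formula assumes.

```python
def growth_factor(years, doubling_period_years=1.5):
    """Multiplicative growth after `years` if a quantity doubles every
    `doubling_period_years` (1.5 years is the 18-month figure)."""
    return 2 ** (years / doubling_period_years)

# 15 years at an 18-month doubling period is 10 doublings.
print(growth_factor(15))        # 1024.0
# Moore's original 1965 rate: doubling every year, over 10 years.
print(growth_factor(10, 1.0))   # 1024.0
```

Either way, ten doublings multiply density by about a thousand, which is why the slide calls the rate exponential.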

Microprocessor Revolution • [Figure: processor speed (log scale) over time for supercomputers, mainframes, minis, and micros]

Some Definitions • Concurrent – Sequential events or processes which seem to occur or progress at the same time. • Parallel – Events or processes which occur or progress at the same time. • Parallel computing provides simultaneous execution of operations within a single parallel computer. • Distributed computing provides simultaneous execution of operations across a number of systems.

Flynn’s Taxonomy • Best-known classification scheme for parallel computers. • Classifies a computer by the parallelism it exhibits in its – Flow of instructions to processors (instruction streams) – Flow of data to processors (data streams) • Each instruction stream applies a sequence of operations to one or more data streams. • The instruction stream (I) and the data stream (D) can each be either single (S) or multiple (M). • Four combinations: SISD, SIMD, MISD, MIMD

SISD Model • Single Instruction, Single Data • The primary example: sequential computers – i.e., uniprocessors – Note: co-processors don’t count as additional processors • Concurrent processing allowed – Instruction prefetching – Pipelined execution of instructions – Independent concurrent tasks can execute different sequences of operations.

SIMD • Single Instruction, Multiple Data • One instruction stream is broadcast to all processors. • Each processor, also called a processing element (or PE), is very simple and is essentially an ALU; – PEs do not store a copy of the program, nor do they have a program control unit. • Only selected processors execute a given block of instructions – The selection is handled using a data test.

SIMD (cont.) • All active processors execute the same instruction synchronously, but on different data. • On a memory access, all active processors must access the same location in their local memory. • The data items at any time can be viewed as a vector, and an instruction can act on the entire vector in one cycle.
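The masked, lockstep execution described on these SIMD slides can be modeled sequentially. In this sketch (hypothetical names `simd_step` and `mask`, made-up data), a list stands in for the PEs' local memories, and the Python loop stands in for what real SIMD hardware does in a single synchronous step.

```python
def simd_step(local_data, active, instruction):
    """Broadcast one instruction: every PE whose mask bit is set applies it
    to its own data item; inactive PEs keep their value unchanged."""
    return [instruction(x) if on else x for x, on in zip(local_data, active)]

data = [3, 8, 5, 12, 7, 4]            # one data item per PE (hypothetical values)
mask = [x % 2 == 0 for x in data]     # data test: PEs holding even values become active
data = simd_step(data, mask, lambda x: x // 2)   # "halve" is sent to the active PEs only
print(data)                           # [3, 4, 5, 6, 7, 2]
```

Note that the PEs hold no program of their own; the instruction arrives as an argument, just as it arrives from the control unit.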

SIMD (cont.) • Quinn calls this architecture a processor array. • Examples include – The STARAN and MPP (Dr. Batcher, architect) – The Connection Machine CM-2 (built by Thinking Machines)

How to View a SIMD Machine • Think of soldiers all in a unit. • The commander selects certain soldiers as active. – For example, every even-numbered row. • The commander shouts an order to all the active soldiers, who execute the order synchronously.

MISD • Multiple instruction streams, single data stream • Not considered to be an important type of computer, so we won’t discuss it further.

MIMD • Multiple Instruction, Multiple Data • Processors are asynchronous and can independently execute different programs on different data sets. • Communications are handled either – through shared memory (multiprocessors) – or by use of message passing (multicomputers) • MIMDs are currently the primary type of parallel computer built.

MIMD (cont. 2) • Have major communication costs – When compared to SIMDs – Internal “housekeeping activities” are often overlooked • Maintaining distributed memory & distributed databases • Synchronization or scheduling of tasks • Balancing workloads between different processors • The SPMD method of programming MIMDs – All processors execute the same program. – SPMD stands for Single Program, Multiple Data. – An easy programming method to use when the number of processors is large. – While processors have the same code, each can be executing a different part of it at any point in time.
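An SPMD program is one function run by every processor, parameterized by the processor's rank. This sketch uses a thread pool as a stand-in for separate processors (on a real multicomputer each rank would be a separate process, for example under MPI); `spmd_program` is a hypothetical name. The last rank takes a different path through the same code by also picking up any leftover items.

```python
from concurrent.futures import ThreadPoolExecutor

def spmd_program(rank, size, data):
    """The same code runs on every 'processor'; only `rank` differs."""
    block = len(data) // size
    start = rank * block
    # The last rank follows a different path: it also takes the leftover items.
    end = len(data) if rank == size - 1 else start + block
    return sum(data[start:end])

size = 4
data = list(range(1, 11))
with ThreadPoolExecutor(max_workers=size) as pool:
    partials = list(pool.map(spmd_program, range(size), [size] * size, [data] * size))
print(partials, sum(partials))   # [3, 7, 11, 34] 55
```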

MIMD (cont. 3) • Currently, a more common technique for programming MIMDs is the use of multitasking – The problem solution is broken up into various tasks. – Tasks are initially distributed among processors. – If new tasks are produced during execution, these may be handled by the parent processor or distributed. – All processors execute their collection of assigned tasks concurrently. • If some of its tasks must wait for results from other tasks or for new data, the processor will focus on its remaining tasks.
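A shared task queue is one way to realize this on a shared-memory MIMD machine. The sketch below is illustrative (the names and the range-summing task are hypothetical): idle workers pull the next task, and a task that is too large spawns two subtasks back into the queue, mirroring new tasks produced during execution. Python threads only show the structure; the interpreter lock keeps them from speeding up CPU-bound work.

```python
import queue
import threading

def run_task_pool(initial_tasks, num_workers=4, cutoff=4):
    """Each task is a half-open range (lo, hi) whose integers must be summed."""
    tasks = queue.Queue()
    for t in initial_tasks:
        tasks.put(t)
    total = 0
    lock = threading.Lock()

    def worker():
        nonlocal total
        while True:
            lo, hi = tasks.get()
            if hi - lo > cutoff:
                mid = (lo + hi) // 2
                tasks.put((lo, mid))   # new tasks produced during execution
                tasks.put((mid, hi))
            else:
                with lock:             # protect the shared result
                    total += sum(range(lo, hi))
            tasks.task_done()          # subtasks were queued first, so join() can't return early

    for _ in range(num_workers):
        threading.Thread(target=worker, daemon=True).start()
    tasks.join()                       # wait until every task, including spawned ones, is done
    return total

print(run_task_pool([(0, 50), (50, 100)]))   # 4950
```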

MIMD (cont. 4) – MIMD programs often require (especially if real-time) a load balancing algorithm to rebalance tasks between processors during execution • A static load balancing algorithm distributes tasks to processors using fixed preset rules. • A dynamic load balancing algorithm monitors and adjusts the distribution of tasks in real time to keep processor loads reasonably balanced (NP-hard for optimal performance). – MIMD programs often require (especially if real-time) a scheduling algorithm to determine the order in which each processor executes its tasks • Static scheduling algorithms are those in which scheduling decisions are based on complete knowledge of the task set and its constraints, such as computation times, deadlines, and precedence constraints. • Dynamic scheduling algorithms are those in which scheduling decisions are based on dynamic task constraints that can change; also, unexpected new tasks may arrive in the future (NP-hard).
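The difference between a fixed rule and run-time balancing shows up even in a tiny example. Both functions are illustrative sketches: the static rule is round-robin assignment, and the "dynamic" one is a greedy heuristic that gives each arriving task to the currently least-loaded processor. The greedy version assumes task durations are known on arrival, which a real monitor could only estimate. It is not optimal either (here the best split has a maximum load of 18), which is consistent with optimal balancing being NP-hard.

```python
import heapq

def static_round_robin(task_times, p):
    """Fixed preset rule: task i always goes to processor i mod p."""
    loads = [0] * p
    for i, t in enumerate(task_times):
        loads[i % p] += t
    return loads

def dynamic_greedy(task_times, p):
    """Greedy heuristic: each task goes to whichever processor is least loaded now."""
    heap = [(0, proc) for proc in range(p)]   # (current load, processor id)
    loads = [0] * p
    for t in task_times:
        load, proc = heapq.heappop(heap)
        loads[proc] = load + t
        heapq.heappush(heap, (loads[proc], proc))
    return loads

times = [9, 1, 9, 1, 9, 1]              # hypothetical task durations
print(static_round_robin(times, 2))     # [27, 3]
print(dynamic_greedy(times, 2))         # [19, 11]
```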

MIMD (cont. 5) • Recall, there are two principal types of MIMD computers: – Multiprocessors (use shared memory) – Multicomputers (use message passing) • Both are important and will be covered next.

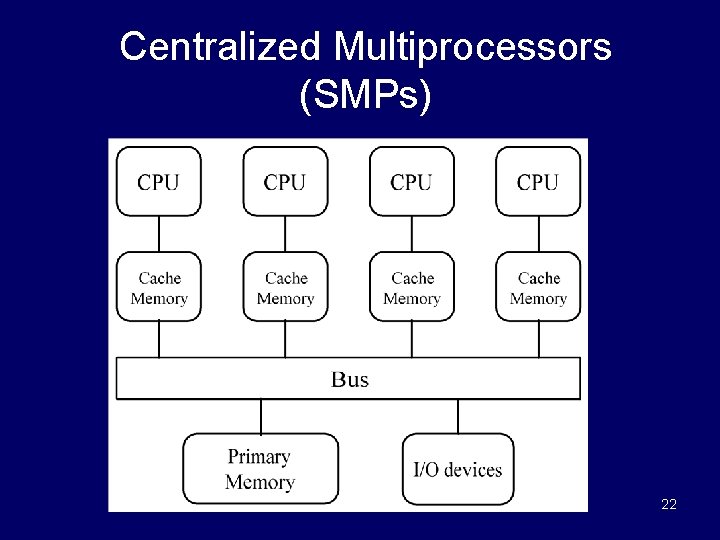

Multiprocessors (Shared Memory MIMDs) • Consist of two types – Centralized multiprocessors • Also called UMA (Uniform Memory Access) • Also called Symmetric Multiprocessors (i.e., SMPs) – Distributed multiprocessors • Also called NUMA (Nonuniform Memory Access)

Centralized Multiprocessors (SMPs)

Centralized Multiprocessors (SMPs) • Consist of identical CPUs connected by a bus to a common block of memory. • Each processor requires the same amount of time to access memory. • Usually limited to a few dozen processors due to memory bandwidth. • Each processor uses a cache to minimize the number of memory accesses – Creates a cache coherence problem: the multiple cached copies of the same variable must be kept identical.

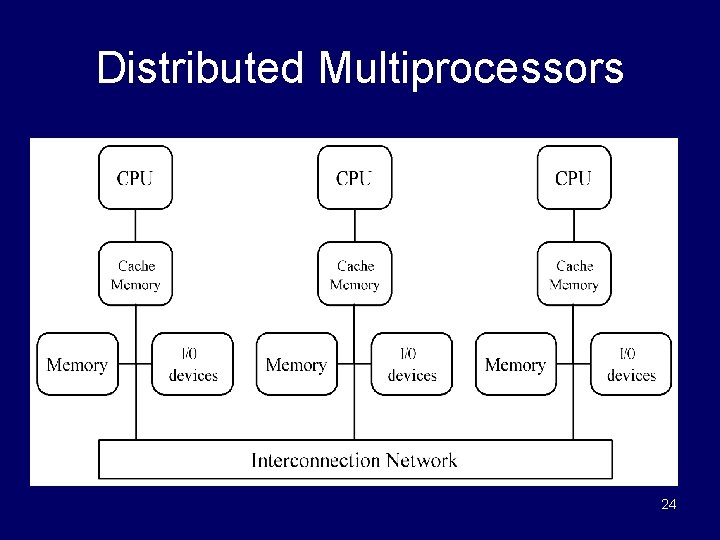

Distributed Multiprocessors

Distributed Multiprocessors (or NUMA) • Have a distributed memory system • Each memory location has the same address for all processors. – Access time to a given memory location varies considerably for different CPUs. • Normally use fast caches to reduce the problem of different memory access times for processors. – Creates the problem of ensuring that all copies of the same data in different memory locations are identical.

Multicomputers (Message-Passing MIMDs) • Processors are connected by a network – Normally an interconnection network • Connects processors to each other • A common example is the 2-D mesh network • Each processor has a local memory and can only access its own local memory. • Data is passed between processors using messages, when specified by the program.

Multicomputers (cont) • Message passing between processors is controlled by a message-passing library – e.g., MPI, PVM • The problem is divided into processes or tasks that can be executed concurrently on individual processors. • Each processor is normally assigned multiple tasks.
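The explicit send/receive style can be mimicked with queues. This is not MPI: threads and `queue.Queue` merely stand in for processors and the network, and all names are hypothetical. Each rank sums only its own chunk, and ranks 1 through p−1 send their partial sums to rank 0, which receives and combines them.

```python
import queue
import threading

def node(rank, root_inbox, local_data, results, p):
    """One 'processor': it touches only its own local_data and talks by messages."""
    partial = sum(local_data)                  # computation on local memory only
    if rank != 0:
        root_inbox.put((rank, partial))        # explicit send to rank 0
    else:
        total = partial
        for _ in range(p - 1):
            _sender, value = root_inbox.get()  # explicit receive; blocks until a message arrives
            total += value
        results.append(total)                  # only used to hand the answer back to the caller

p = 4
root_inbox = queue.Queue()                     # message channel into rank 0
chunks = [[1, 2], [3, 4], [5, 6], [7, 8]]      # data already distributed, one chunk per rank
results = []
threads = [threading.Thread(target=node, args=(r, root_inbox, chunks[r], results, p))
           for r in range(p)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(results[0])   # 36
```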

Multiprocessors vs Multicomputers • Programming disadvantages of message passing – Programmers must make explicit message-passing calls in the code. – This is low-level programming and is error-prone. – Data is not shared but copied into private memories, increasing the total data size. – Data integrity: it is difficult to maintain the correctness of multiple copies of a data item.

Multiprocessors vs Multicomputers (cont) • Programming advantages of message passing – No problem with simultaneous access to data. – Allows different PCs to operate on the same data independently. – Allows PCs on a network to be easily upgraded when faster processors become available.

Types of Parallel Execution • Data parallelism • Job parallelism – Sometimes called control parallelism – Also called functional parallelism by Quinn • Pipelining • Virtual parallelism

Data Parallelism • All tasks (or processors) apply the same set of operations to different data. • Example: for i ← 0 to 99 do a[i] ← b[i] + c[i] endfor • Operations may be executed concurrently • Accomplished on SIMDs by having all active processors execute the operations synchronously. • Can be accomplished on MIMDs by assigning 100/p tasks to each processor and having each processor calculate its share asynchronously.
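The MIMD version of the example loop splits the 100 iterations into p blocks, one per worker, and each block is computed independently. Here is a minimal sketch with a thread pool, where p = 4 and the input values are assumptions. Python threads illustrate the decomposition, not real speedup.

```python
from concurrent.futures import ThreadPoolExecutor

def add_block(a, b, c, lo, hi):
    """One worker's share of the loop: a[i] <- b[i] + c[i] for lo <= i < hi."""
    for i in range(lo, hi):
        a[i] = b[i] + c[i]

n, p = 100, 4                   # 100 loop iterations, p hypothetical workers
b = list(range(n))
c = [2 * i for i in range(n)]
a = [0] * n
block = (n + p - 1) // p        # ceil(n/p) iterations per worker
with ThreadPoolExecutor(max_workers=p) as pool:
    futures = [pool.submit(add_block, a, b, c, k * block, min(n, (k + 1) * block))
               for k in range(p)]
    for f in futures:
        f.result()              # wait, and re-raise any worker exception
assert a == [3 * i for i in range(n)]
```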

Supporting MIMD Data Parallelism • SPMD (single program, multiple data) programming is not really data parallel execution, as processors typically execute different sections of the program concurrently. • Data parallel programming can be strictly enforced when using SPMD as follows: – Processors execute the same block of instructions concurrently but asynchronously. – No communication or synchronization occurs within these concurrent instruction blocks. – Each instruction block is normally followed by a synchronization and communication block of steps.
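The compute, synchronize, communicate pattern can be sketched with a barrier. In this sketch (illustrative names; threads stand in for processors, and shared lists stand in for the communication step), each rank sums its own block, waits at `threading.Barrier`, and only then reads the other ranks' partial sums to form a prefix sum.

```python
import threading

p = 4
data = list(range(16))
partial = [0] * p               # one slot per processor
prefix = [0] * p
barrier = threading.Barrier(p)

def spmd(rank):
    size = len(data) // p
    # Computation block: identical instructions on this rank's own block; no communication.
    partial[rank] = sum(data[rank * size:(rank + 1) * size])
    # Synchronization: no rank continues until every partial sum has been written.
    barrier.wait()
    # Communication block: now it is safe to read the other ranks' results.
    prefix[rank] = sum(partial[:rank + 1])

threads = [threading.Thread(target=spmd, args=(r,)) for r in range(p)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(partial, prefix)   # [6, 22, 38, 54] [6, 28, 66, 120]
```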

MIMD Data Parallelism (cont.) • Strict data parallel programming is unusual for MIMDs, as it forces all processors to complete each portion of the program before any can move to the next part of the program. • It is fairly common to force some parts of a program to be completed by all processors before any can proceed to the next part of the program.

Job Parallelism Features • Also called control parallelism • Problem is divided into different nonidentical tasks • Tasks are divided between the processors so that their workload is roughly balanced – Division of tasks occurs initially or by use of a scheduling algorithm.

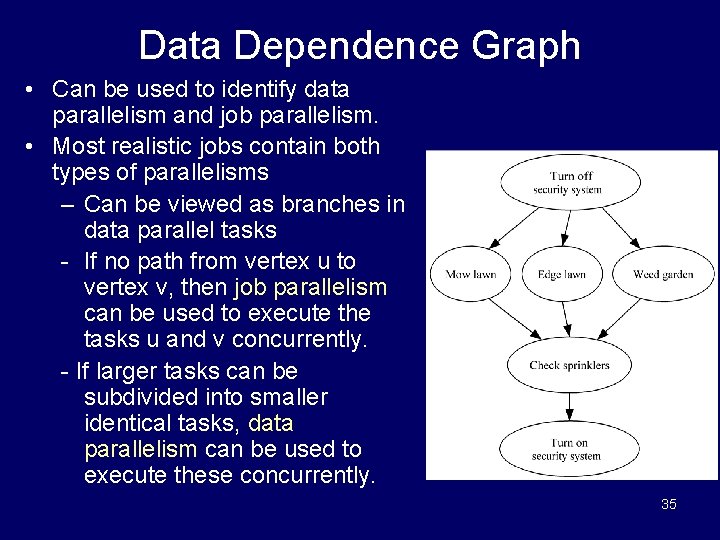

Data Dependence Graph • Can be used to identify data parallelism and job parallelism. • Most realistic jobs contain both types of parallelism – Can be viewed as branches in data parallel tasks – If there is no path between vertices u and v (in either direction), then job parallelism can be used to execute the tasks u and v concurrently. – If larger tasks can be subdivided into smaller identical tasks, data parallelism can be used to execute these concurrently.
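The path test on the dependence graph is a plain reachability check. In this sketch, the graph is a hypothetical one loosely modeled on the lawn example: three chores that all precede an "inspect" task. Two tasks may run concurrently when neither can reach the other.

```python
def has_path(graph, u, v):
    """Depth-first search: is there a directed path from u to v?"""
    stack, seen = [u], {u}
    while stack:
        x = stack.pop()
        if x == v:
            return True
        for y in graph.get(x, []):
            if y not in seen:
                seen.add(y)
                stack.append(y)
    return False

def can_run_concurrently(graph, u, v):
    # Job parallelism applies only if neither task depends, directly or indirectly, on the other.
    return not has_path(graph, u, v) and not has_path(graph, v, u)

# Hypothetical dependence graph: edges point from a task to the tasks that need its result.
g = {"mow lawn": ["inspect"], "edge lawn": ["inspect"], "weed garden": ["inspect"], "inspect": []}
print(can_run_concurrently(g, "mow lawn", "weed garden"))   # True
print(can_run_concurrently(g, "mow lawn", "inspect"))       # False
```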

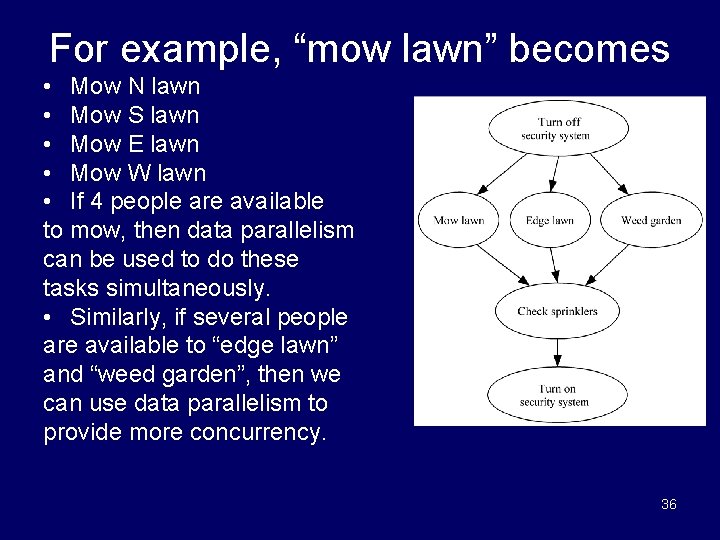

For example, “mow lawn” becomes • Mow N lawn • Mow S lawn • Mow E lawn • Mow W lawn • If 4 people are available to mow, then data parallelism can be used to do these tasks simultaneously. • Similarly, if several people are available to “edge lawn” and “weed garden”, then we can use data parallelism to provide more concurrency.

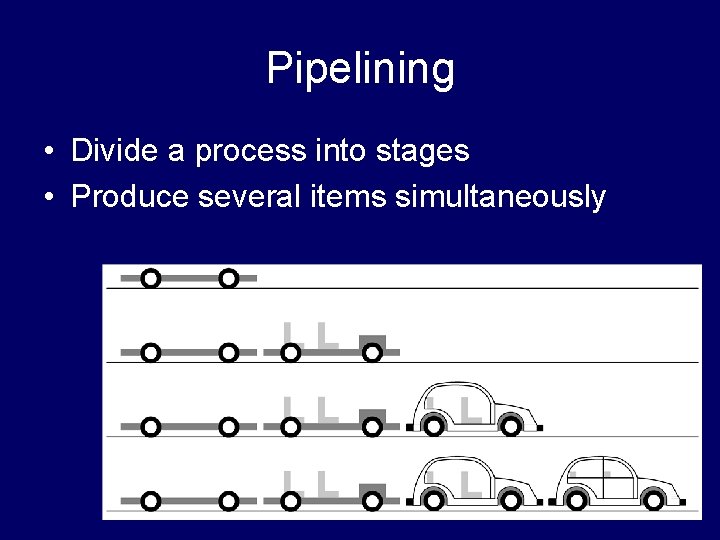

Pipelining • Divide a process into stages • Produce several items simultaneously, with each stage working on a different item


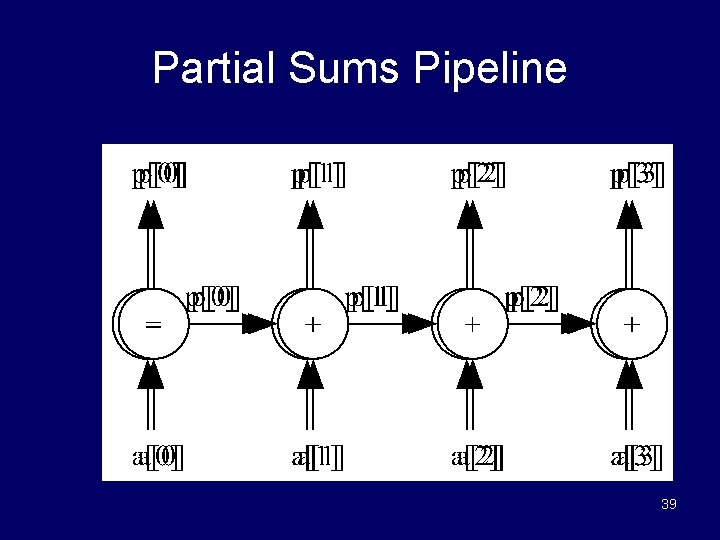

Compute Partial Sums • Consider the for loop: p[0] ← a[0]; for i ← 1 to 3 do p[i] ← p[i-1] + a[i] endfor • This computes the partial sums: p[0] = a[0], p[1] = a[0] + a[1], p[2] = a[0] + a[1] + a[2], p[3] = a[0] + a[1] + a[2] + a[3] • The loop is not data parallel, because each iteration depends on the result of the previous one. • However, we can stage the calculations in order to obtain parallelism.
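Here is a direct Python translation of the loop, generalized to any length (the function name is illustrative):

```python
def partial_sums(a):
    """p[0] = a[0]; p[i] = p[i-1] + a[i] for i >= 1."""
    if not a:
        return []
    p = [0] * len(a)
    p[0] = a[0]
    for i in range(1, len(a)):
        p[i] = p[i - 1] + a[i]   # needs the previous iteration's result: a loop-carried dependence
    return p

print(partial_sums([3, 1, 4, 1]))   # [3, 4, 8, 9]
```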

Partial Sums Pipeline

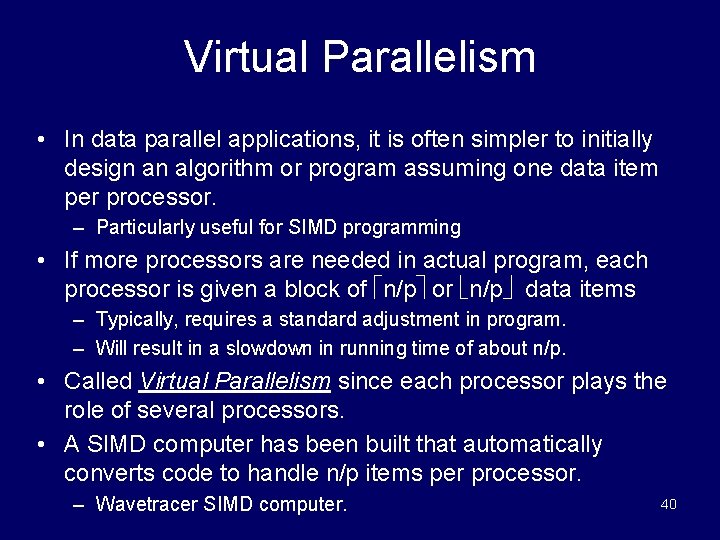
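One way to see where the parallelism comes from is to stream several input vectors through the four stages. At each clock tick, stage s adds element s for a different vector. This Python simulation is a sketch under that assumption (the function name and the multi-vector input are illustrative). With k stages and m vectors, it finishes in k + m − 1 ticks instead of the k·m steps of running the loop once per vector.

```python
def pipelined_partial_sums(vectors):
    """Simulate a k-stage partial-sums pipeline fed with m vectors of length k.
    Stage s adds element s to the running sum handed over by stage s-1."""
    k, m = len(vectors[0]), len(vectors)
    sums = [[0] * k for _ in range(m)]
    ticks = k + m - 1                  # time to fill and then drain the pipeline
    for t in range(ticks):
        for s in range(k):             # in hardware, all stages act during the same tick
            j = t - s                  # index of the vector currently in stage s
            if 0 <= j < m:
                incoming = sums[j][s - 1] if s > 0 else 0
                sums[j][s] = incoming + vectors[j][s]
    return sums, ticks

sums, ticks = pipelined_partial_sums([[1, 2, 3, 4], [10, 20, 30, 40], [5, 5, 5, 5]])
print(sums)    # [[1, 3, 6, 10], [10, 30, 60, 100], [5, 10, 15, 20]]
print(ticks)   # 6
```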

Virtual Parallelism • In data parallel applications, it is often simpler to initially design an algorithm or program assuming one data item per processor. – Particularly useful for SIMD programming • If the actual machine has fewer processors (p) than data items (n), each processor is given a block of ⌊n/p⌋ or ⌈n/p⌉ data items – Typically requires a standard adjustment in the program. – Will slow the running time by a factor of about n/p. • Called virtual parallelism since each processor plays the role of several processors. • A SIMD computer has been built that automatically converts code to handle n/p items per processor: – the Wavetracer SIMD computer.
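One common way to hand each of p processors a contiguous block of ⌊n/p⌋ or ⌈n/p⌉ items is to give processor r the index range [⌊r·n/p⌋, ⌊(r+1)·n/p⌋). This is a sketch of that scheme; `block_range` is a hypothetical helper name.

```python
def block_range(rank, p, n):
    """Half-open index range [lo, hi) of the items handled by processor `rank`
    when n items are spread over p processors in contiguous blocks."""
    lo = rank * n // p
    hi = (rank + 1) * n // p
    return lo, hi

n, p = 10, 4
print([block_range(r, p, n) for r in range(p)])   # [(0, 2), (2, 5), (5, 7), (7, 10)]
```

Each block here has 2 or 3 items, that is, ⌊10/4⌋ or ⌈10/4⌉. A processor then loops over its block, doing the work of about n/p virtual processors.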

Interconnection Networks • Uses of interconnection networks – Connect processors to shared memory – Connect processors to each other • Different interconnection networks define different parallel machines. • An interconnection network’s properties influence its effectiveness in moving data.

Terminology for Evaluating Switch Topologies • We will discuss 4 characteristics of a network in order to help us understand their effectiveness • These are – The diameter – The bisection width – The edges per node – The constant edge length • Afterwards, we will introduce several different interconnection networks.

Terminology for Evaluating Switch Topologies • Diameter – Largest distance between two network nodes. – A low diameter is desirable. – It puts a lower bound on the complexity of parallel algorithms that require communication between arbitrary pairs of nodes.

Terminology for Evaluating Switch Topologies • Bisection width – The minimum number of edges between switch nodes that must be removed in order to divide the network into two halves. – Or within 1 node of one-half if the number of processors is odd. • High bisection width is desirable. • In algorithms requiring large amounts of data movement, the size of the data set divided by the bisection width puts a lower bound on the running time of an algorithm.

Terminology for Evaluating Switch Topologies • Number of edges per node – It is best if the maximum number of edges/node is a constant independent of network size, as this allows the processor organization to scale more easily to a larger number of nodes. – Degree is the maximum number of edges per node. • Constant edge length? (yes/no) – Again, for scalability, it is best if the nodes and edges can be laid out in 3 D space so that the maximum edge length is a constant independent of network size.

Three Important Interconnection Networks • We will consider the following three well-known interconnection networks: – 2-D mesh – linear network – hypercube • All three of these networks have been used to build many commercial parallel computers.

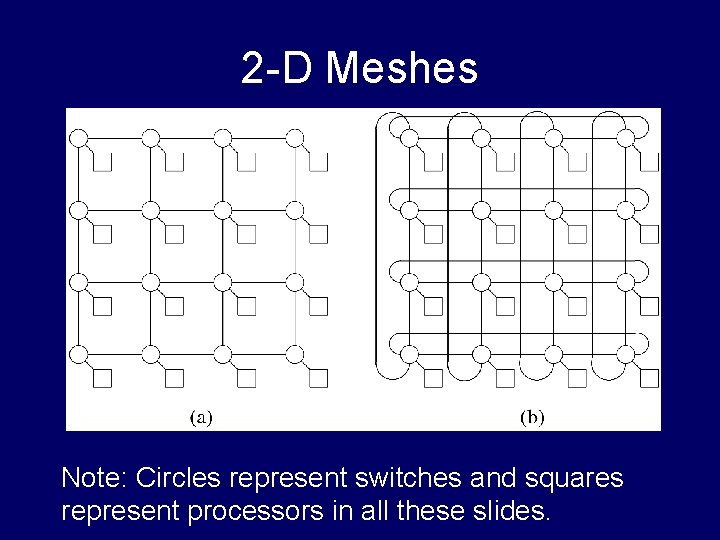

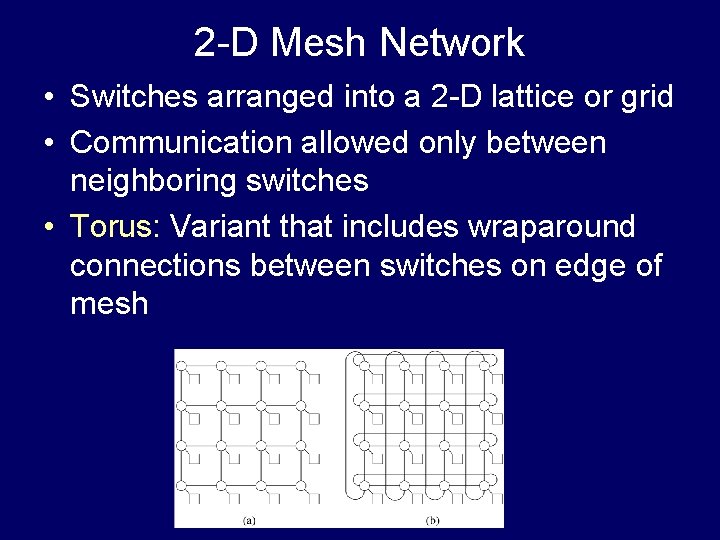

2-D Meshes • Note: Circles represent switches and squares represent processors in all these slides.

2-D Mesh Network • Switches arranged into a 2-D lattice or grid • Communication allowed only between neighboring switches • Torus: variant that includes wraparound connections between switches on the edge of the mesh

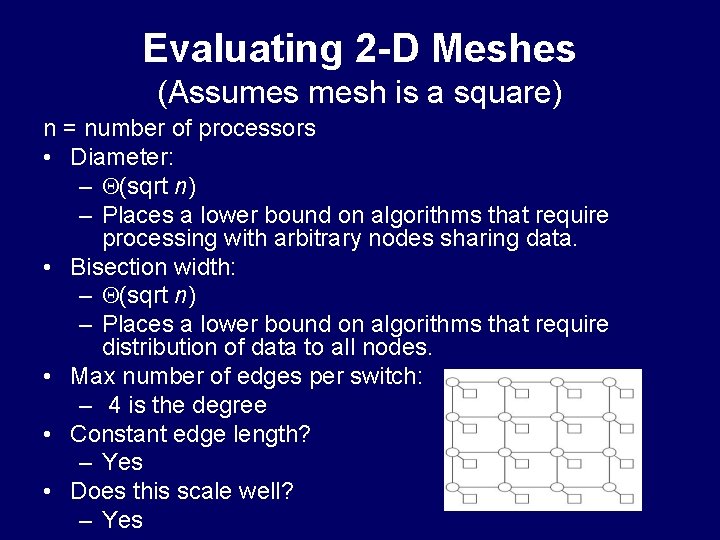

Evaluating 2-D Meshes (assumes the mesh is square) • n = number of processors • Diameter: Θ(√n) – Places a lower bound on algorithms that require arbitrary nodes to share data. • Bisection width: Θ(√n) – Places a lower bound on algorithms that require distribution of data to all nodes. • Max number of edges per switch: 4 (the degree) • Constant edge length? Yes • Does this scale well? Yes
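The Θ(√n) diameter can be checked by brute force: run a breadth-first search from every switch and take the largest distance found. The sketch assumes a side × side square mesh with switches addressed (row, col); `mesh_diameter` and the other names are illustrative. For side = √n, the exact diameter of the mesh is 2(√n − 1), and the torus's wraparound links shorten it to 2⌊√n/2⌋.

```python
from collections import deque

def mesh_neighbors(node, side, torus=False):
    """Switches adjacent to `node` in a side x side mesh (or torus)."""
    r, c = node
    for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nr, nc = r + dr, c + dc
        if torus:
            yield nr % side, nc % side          # wraparound links on the edges
        elif 0 <= nr < side and 0 <= nc < side:
            yield nr, nc

def eccentricity(start, neighbors):
    """Largest BFS distance from `start` to any reachable switch."""
    dist = {start: 0}
    q = deque([start])
    while q:
        x = q.popleft()
        for y in neighbors(x):
            if y not in dist:
                dist[y] = dist[x] + 1
                q.append(y)
    return max(dist.values())

def mesh_diameter(side, torus=False):
    nodes = [(r, c) for r in range(side) for c in range(side)]
    return max(eccentricity(v, lambda x: mesh_neighbors(x, side, torus)) for v in nodes)

print(mesh_diameter(4))               # 6 = 2 * (sqrt(16) - 1)
print(mesh_diameter(4, torus=True))   # 4
```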

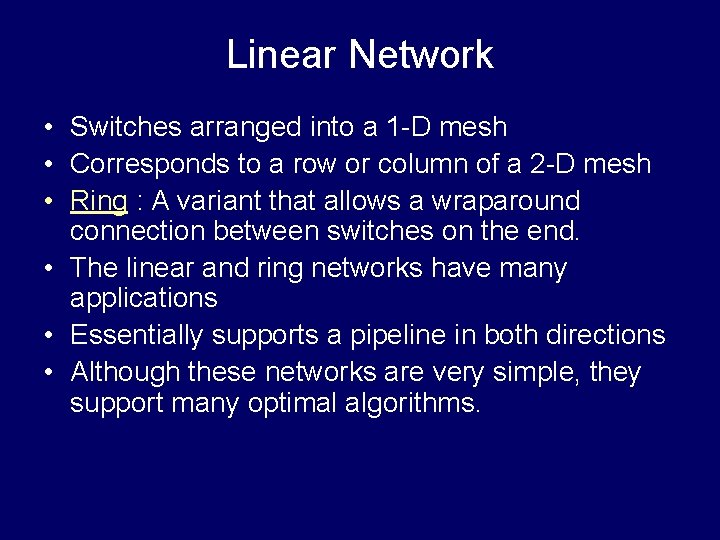

Linear Network • Switches arranged into a 1-D mesh • Corresponds to a row or column of a 2-D mesh • Ring: a variant that allows a wraparound connection between the switches on the ends • The linear and ring networks have many applications • Essentially supports a pipeline in both directions • Although these networks are very simple, they support many optimal algorithms.

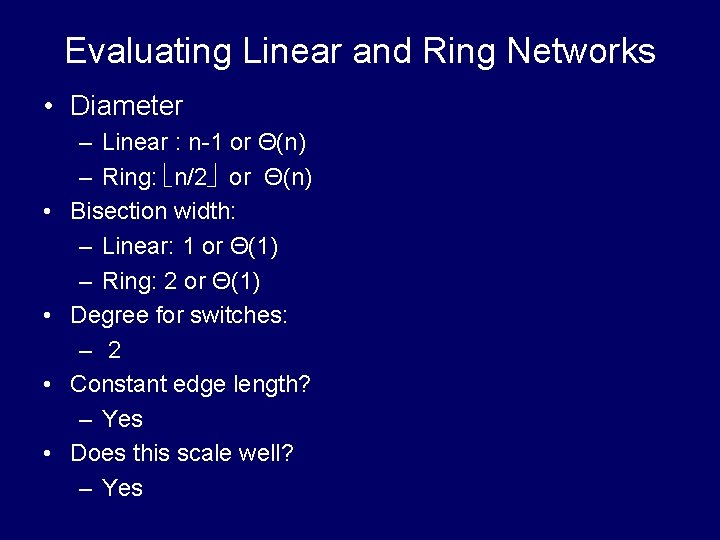

Evaluating Linear and Ring Networks • Diameter – Linear: n-1 or Θ(n) – Ring: n/2 or Θ(n) • Bisection width – Linear: 1 or Θ(1) – Ring: 2 or Θ(1) • Degree for switches: 2 • Constant edge length? Yes • Does this scale well? Yes
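On a linear network, the distance between switches i and j is just |i − j|. The ring's wraparound link lets a message go the shorter way around. Here is a small sketch (illustrative helper names) confirming the diameters for n = 8, where the ring's diameter is ⌊n/2⌋:

```python
def linear_distance(i, j):
    """Hops between switches i and j on a linear network."""
    return abs(i - j)

def ring_distance(i, j, n):
    """Hops on an n-switch ring: take the shorter of the two directions."""
    d = abs(i - j)
    return min(d, n - d)

n = 8
linear_diameter = max(linear_distance(i, j) for i in range(n) for j in range(n))
ring_diameter = max(ring_distance(i, j, n) for i in range(n) for j in range(n))
print(linear_diameter, ring_diameter)   # 7 4
```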

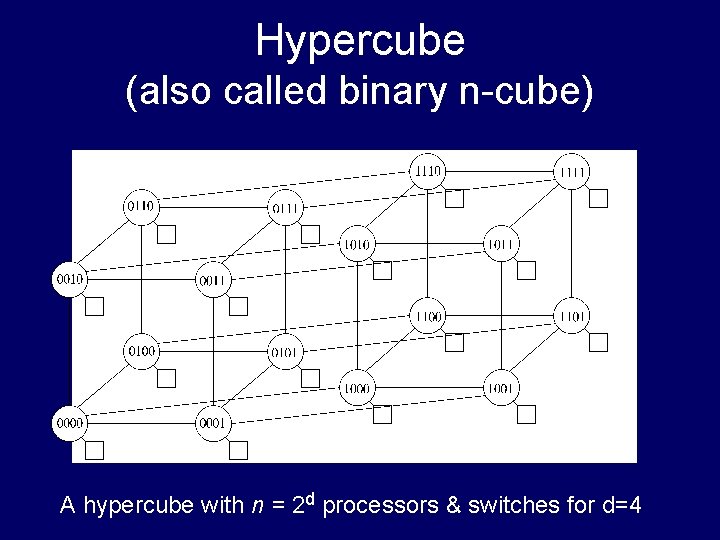

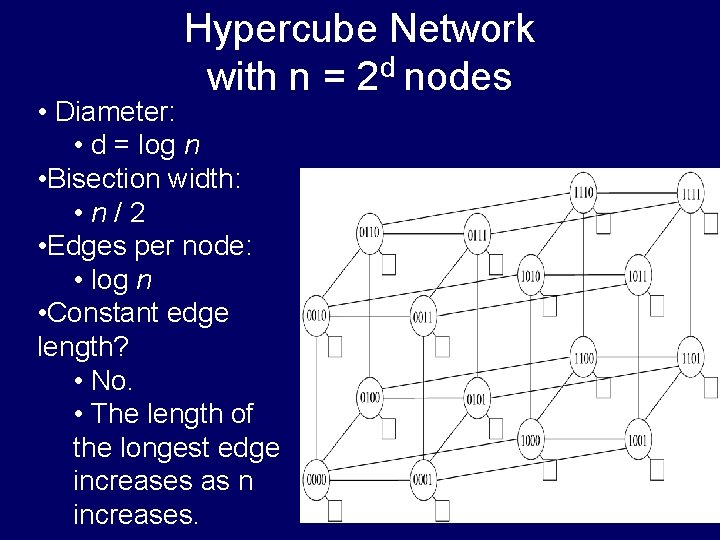

Hypercube (also called binary n-cube) • A hypercube with n = 2^d processors and switches, for d = 4

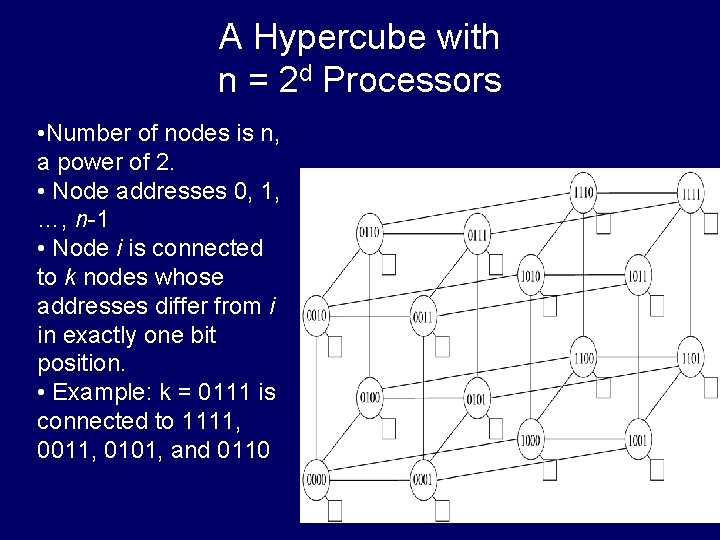

A Hypercube with n = 2^d Processors • Number of nodes is n, a power of 2. • Node addresses 0, 1, …, n-1 • Node i is connected to the d nodes whose addresses differ from i in exactly one bit position. • Example: node 0111 is connected to 1111, 0011, 0101, and 0110
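Because neighbors differ in exactly one bit, a node's neighbor list is obtained by XOR-ing its address with each power of two below n. Here is a minimal sketch (the helper name is illustrative) reproducing the example for node 0111 with d = 4:

```python
def hypercube_neighbors(node, d):
    """Flip each of the d address bits in turn: node XOR 2**k for k = 0..d-1."""
    return [node ^ (1 << k) for k in range(d)]

print([format(x, "04b") for x in hypercube_neighbors(0b0111, 4)])
# ['0110', '0101', '0011', '1111']
```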

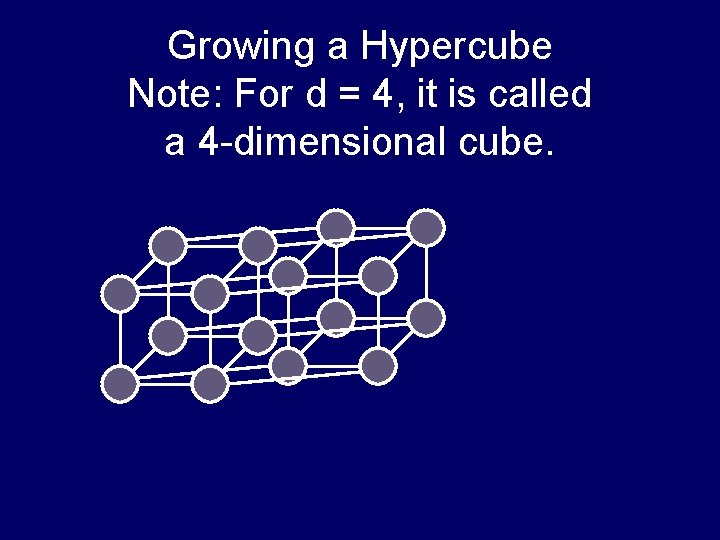

Growing a Hypercube • Note: For d = 4, it is called a 4-dimensional cube.

Hypercube Network with n = 2^d nodes • Diameter: d = log n • Bisection width: n/2 • Edges per node: log n • Constant edge length? No – The length of the longest edge increases as n increases.
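These hypercube figures can be checked for a small cube. The distance between two nodes is the number of bit positions in which their addresses differ (the Hamming distance), so the diameter is d. The sketch also counts the links crossing the cut made by the highest address bit. This exhibits one bisection with n/2 edges, though on its own it does not prove that no smaller bisection exists.

```python
d = 4
n = 2 ** d

def hamming_distance(u, v):
    """Hops needed between nodes u and v: fix one differing address bit per hop."""
    return bin(u ^ v).count("1")

diameter = max(hamming_distance(u, v) for u in range(n) for v in range(n))

# Every edge joins two addresses that differ in exactly one bit.
edges = {(min(u, u ^ (1 << k)), max(u, u ^ (1 << k))) for u in range(n) for k in range(d)}
top = 1 << (d - 1)
# Split the cube by its highest address bit and count the links that cross the cut.
crossing = sum(1 for u, v in edges if (u & top) != (v & top))
print(diameter, len(edges), crossing)   # 4 32 8
```

With 32 edges and 16 nodes, each node has 2 · 32 / 16 = 4 = log n edges.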

Performance Analysis • Used in evaluating the effectiveness of parallel algorithms & software

Speedup • Speedup measures the increase in speed (that is, the reduction in running time) due to parallelism. The number of PEs is given by n. • S(n) = ts/tp, where – ts is the running time on a single processor, using the fastest known sequential algorithm – tp is the running time of the parallel algorithm or program. • In simplest terms, speedup is the sequential running time divided by the parallel running time.

Linear Speedup is Usually Optimal • Speedup is linear if S(n) = Θ(n) • Claim: The maximum possible speedup for parallel computers with n PEs is n. • Usual argument (assume ideal conditions): – Assume the computation can be partitioned perfectly into n processes of equal duration. – Assume no overhead is incurred as a result of this partitioning of the computation (e.g., the partitioning process, information passing, coordination of processes, etc.). – Under these ideal conditions, the parallel computation will execute n times faster than the sequential computation, and • the parallel running time will be ts/n. – Then the parallel speedup in this “ideal situation” is S(n) = ts/tp = ts/(ts/n) = n

Linear Speedup Usually Optimal (cont) • This argument shows that, for typical problems, linear speedup is optimal. • This argument is valid for traditional problems, but is invalid for some types of nontraditional problems.

Speedup Usually Smaller Than Linear • Unfortunately, the best speedup possible for most applications is considerably smaller than n – The “ideal conditions” assumed in the earlier argument are usually unattainable. – Normally, some parts of programs are sequential and allow only one PE to be active. – Sometimes several processors are idle for portions of the program. For example, • some PEs may be waiting to receive or to send data during parts of the program; • congestion may occur during message passing.

Cost • The cost of a parallel algorithm (or program) is Cost = parallel running time × number of processors • The cost of a parallel algorithm should be compared to the running time of a sequential algorithm. – Cost removes the advantage of parallelism by charging for each additional processor. – A parallel algorithm whose cost is O(running time of an optimal sequential algorithm) is called cost-optimal.
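A standard illustration, not taken from these slides, is adding n numbers. Assume a rough cost model: about n/p local additions per processor, followed by log2 p combining steps. Under that model, p = n processors cost about n log n, which is not cost-optimal against the n − 1 additions of the sequential algorithm. Choosing p ≈ n / log n keeps the cost within a constant factor of n. `parallel_sum_cost` is a hypothetical helper.

```python
import math

def parallel_sum_cost(n, p):
    """Rough cost model for adding n numbers on p processors: about n/p local
    additions per processor, then log2(p) tree-combining steps."""
    parallel_time = n / p + math.log2(p)
    return parallel_time * p          # cost = parallel running time x number of processors

n = 1024
print(parallel_sum_cost(n, n))        # 11264.0, about n log n: not cost-optimal
p = n // int(math.log2(n))            # p = n / log2(n) = 102 processors
print(parallel_sum_cost(n, p))        # stays within a small constant factor of n
```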

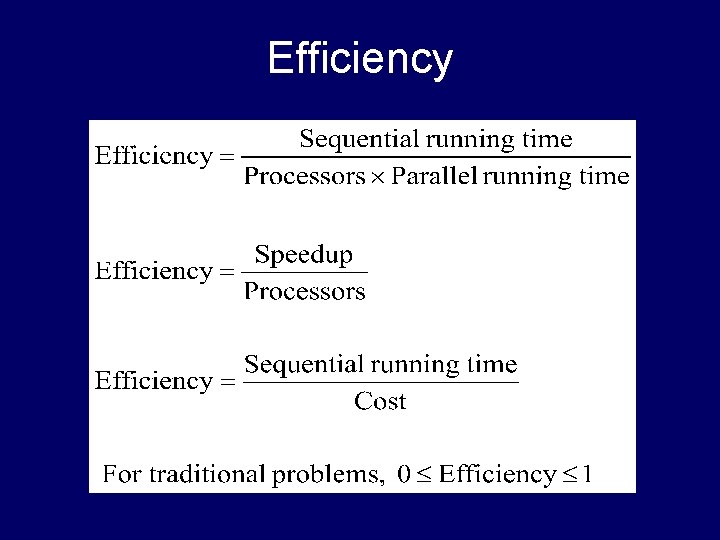

Efficiency
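Efficiency is commonly defined as speedup divided by the number of PEs: E(n) = S(n)/n = ts/(n · tp). Equivalently, it is ts divided by the cost. Here is a minimal sketch with made-up timings (the helper names are illustrative):

```python
def speedup(ts, tp):
    """S(n) = ts / tp."""
    return ts / tp

def efficiency(ts, tp, n):
    """E(n) = S(n) / n: the fraction of the n PEs' combined time spent on useful work."""
    return speedup(ts, tp) / n

# Hypothetical timings: 120 s sequentially, 20 s on 8 PEs.
print(speedup(120, 20), efficiency(120, 20, 8))   # 6.0 0.75
```

Linear speedup corresponds to an efficiency of 1 (or a constant), and a cost-optimal algorithm keeps efficiency bounded away from 0 as n grows.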

Amdahl’s Law • Having a detailed understanding of Amdahl’s law is not essential for this course. • However, having a brief, non-technical introduction to this important law may be useful.

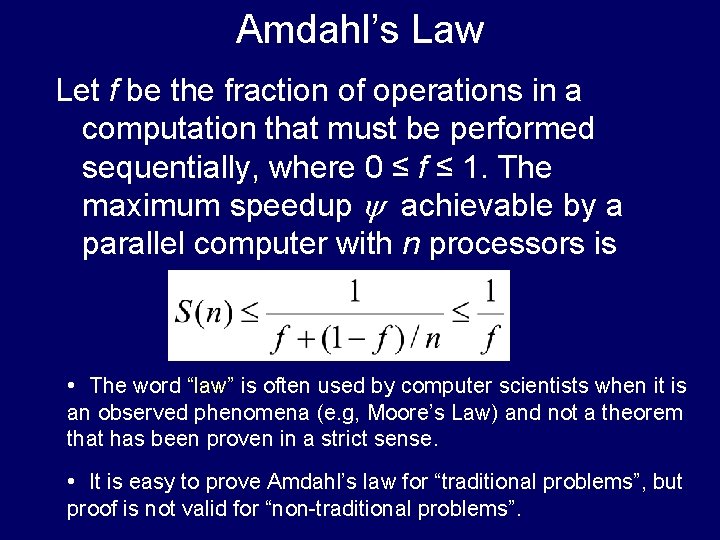

Amdahl’s Law • Let f be the fraction of operations in a computation that must be performed sequentially, where 0 ≤ f ≤ 1. The speedup achievable by a parallel computer with n processors satisfies $S(n) \le \frac{1}{f + (1 - f)/n}$. • The word “law” is often used by computer scientists for an observed phenomenon (e.g., Moore’s Law) rather than a theorem that has been proven in a strict sense. • It is easy to prove Amdahl’s law for “traditional problems”, but the proof is not valid for “non-traditional problems”.
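Amdahl's bound, S(n) ≤ 1/(f + (1 − f)/n), is easy to tabulate. The sketch below (hypothetical helper `amdahl_bound`) shows how quickly the bound flattens for f = 0.05 as n grows: adding processors helps less and less, and the bound never exceeds 1/f.

```python
def amdahl_bound(f, n):
    """Upper bound on speedup with sequential fraction f on n processors."""
    if not 0 <= f <= 1:
        raise ValueError("f must lie in [0, 1]")
    return 1 / (f + (1 - f) / n)

for n in (1, 8, 64, 1024):
    print(n, round(amdahl_bound(0.05, n), 2))
```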

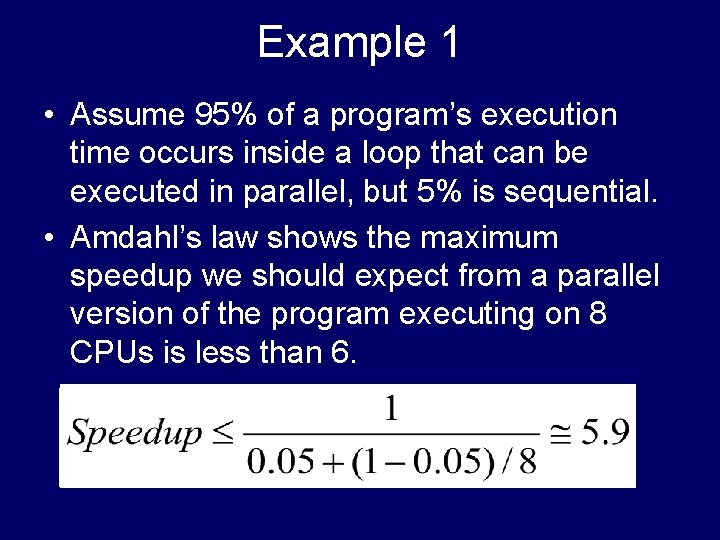

Example 1 • Assume 95% of a program’s execution time occurs inside a loop that can be executed in parallel, but 5% is sequential. • Amdahl’s law shows the maximum speedup we should expect from a parallel version of the program executing on 8 CPUs is less than 6.
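Plugging f = 0.05 and n = 8 into Amdahl's bound gives the figure stated in this example:

$$S(8) \le \frac{1}{0.05 + (1 - 0.05)/8} = \frac{1}{0.05 + 0.11875} = \frac{1}{0.16875} \approx 5.93 < 6$$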

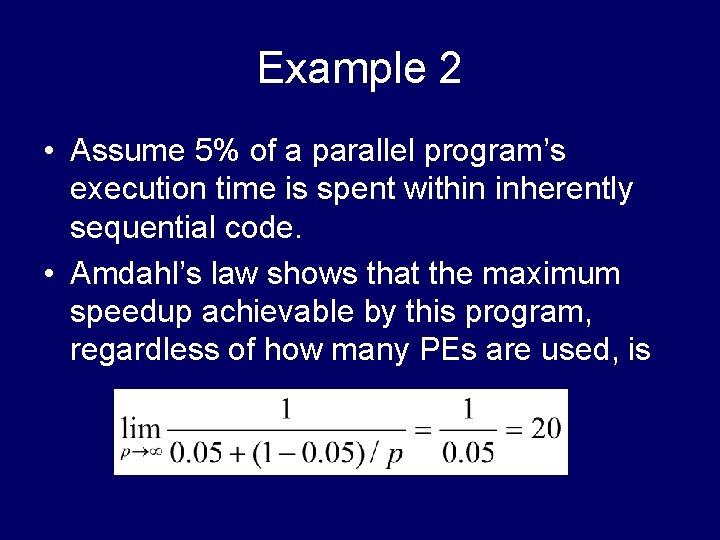

Example 2 • Assume 5% of a parallel program’s execution time is spent within inherently sequential code. • Amdahl’s law shows that the maximum speedup achievable by this program, regardless of how many PEs are used, is $\lim_{n \to \infty} \frac{1}{0.05 + (1 - 0.05)/n} = \frac{1}{0.05} = 20$.
