Object recognition BY: KARAM ABU ALHIJA IBRAHEM IGBARIA LECTURER: HAGIT HEL-OR

Outline
• Introduction: What is object recognition? What does object recognition involve? Classification vs. detection
• Bag of words: bag of words, bag of features
• Part-based models: problem with bag of words, Pascal challenge

What is object recognition?

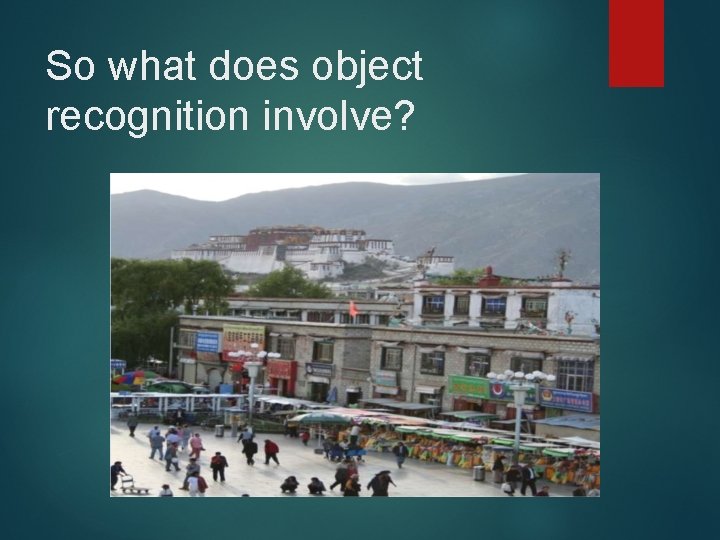

So what does object recognition involve?

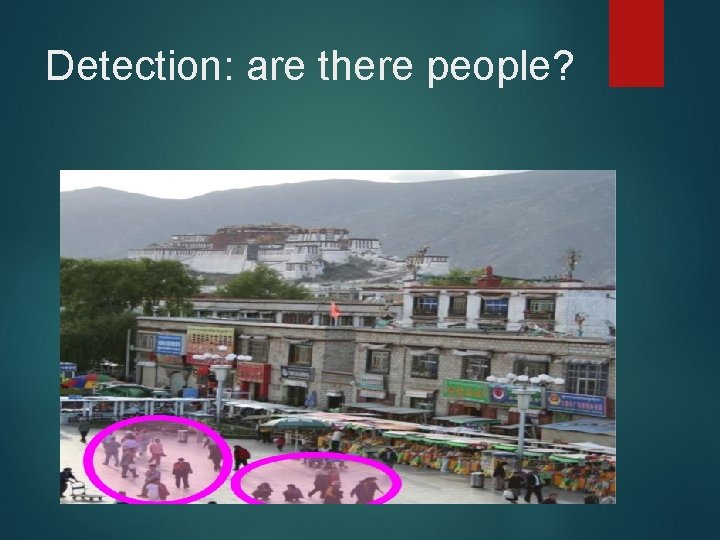

Detection: are there people?

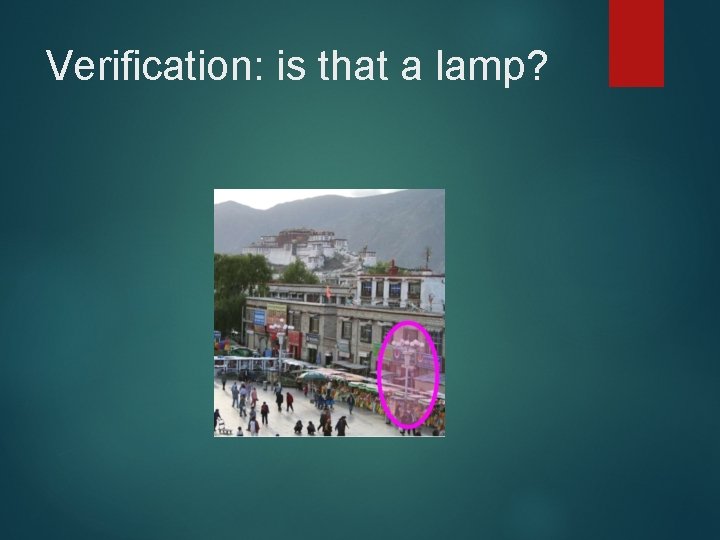

Verification: is that a lamp?

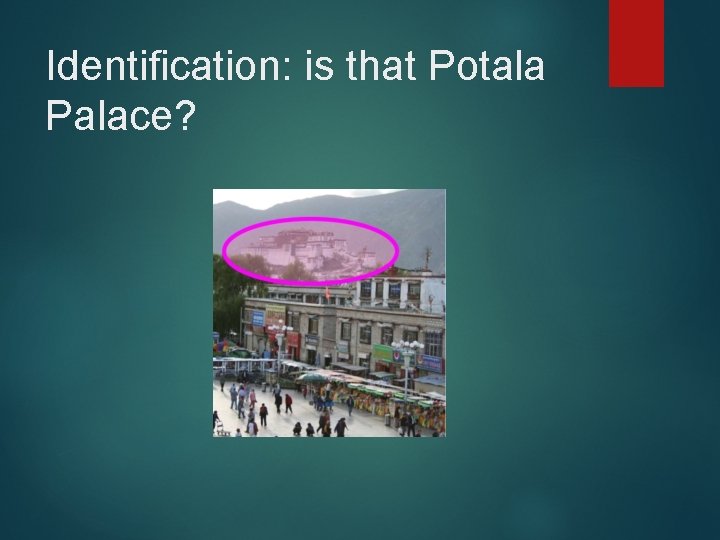

Identification: is that Potala Palace?

Image classification: assigning a class label to the image. People: present; buildings: present; cars: not present; …

Application

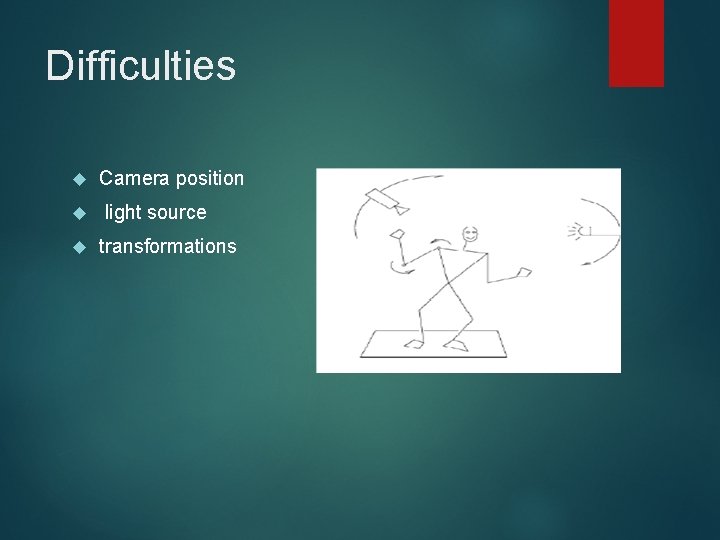

Difficulties: camera position, light source, transformations

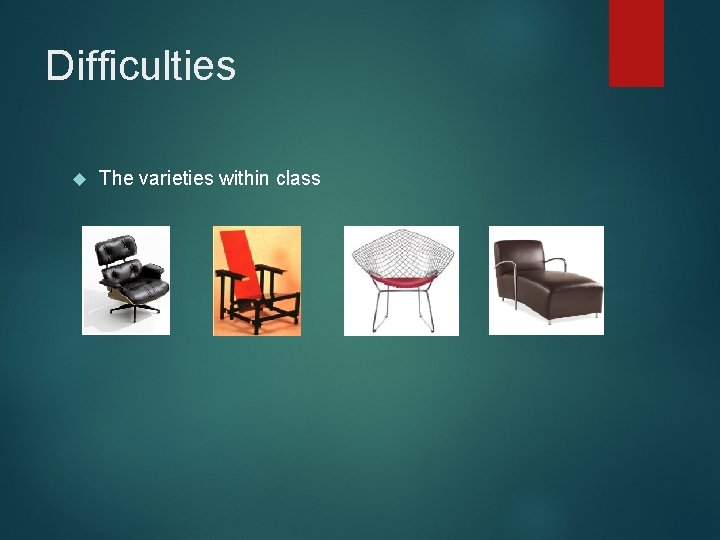

Difficulties: the variety within a class

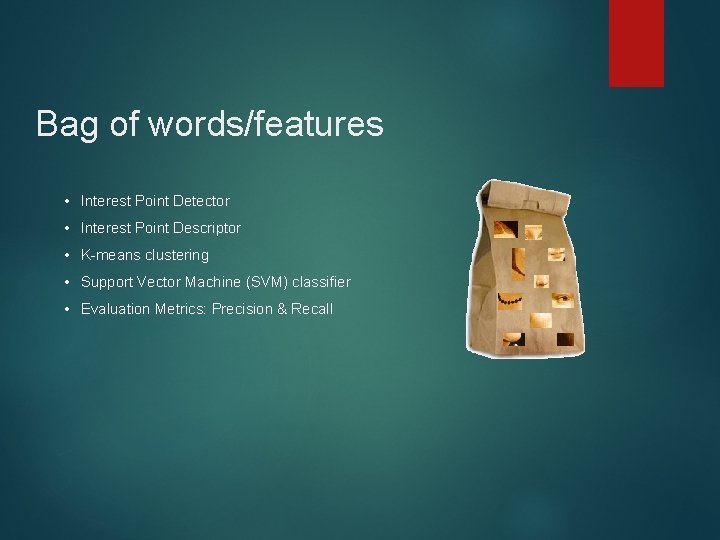

Bag of words/features • Interest Point Detector • Interest Point Descriptor • K-means clustering • Support Vector Machine (SVM) classifier • Evaluation Metrics: Precision & Recall

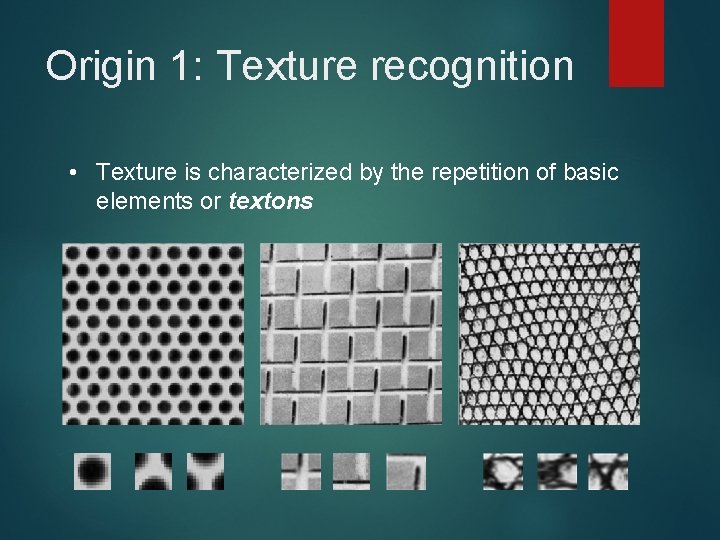

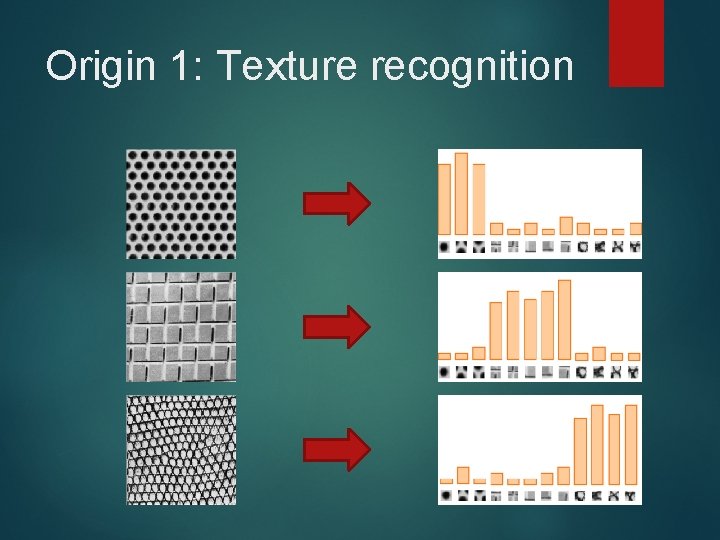

Origin 1: Texture recognition • Texture is characterized by the repetition of basic elements or textons

Origin 1: Texture recognition

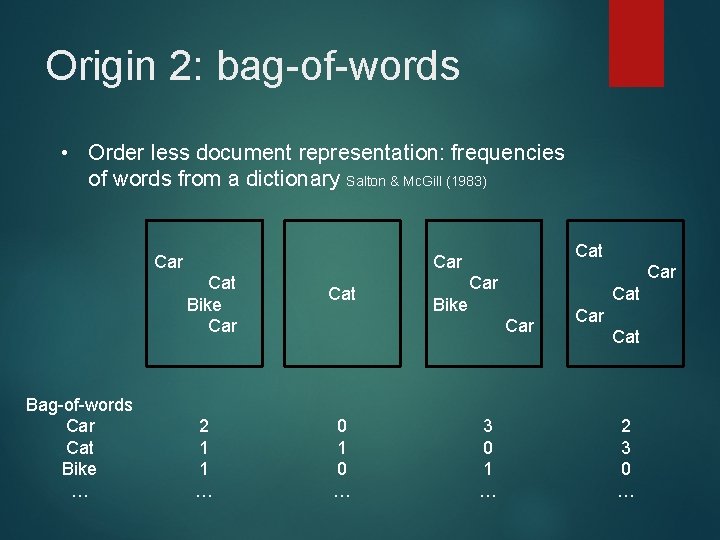

Origin 2: bag-of-words • Orderless document representation: frequencies of words from a dictionary. Salton & McGill (1983)

[Figure: example documents and their bag-of-words count vectors over the dictionary (Car, Cat, Bike, …)]
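The orderless representation can be sketched in a few lines. This is only an illustration: the function name `bag_of_words`, the lowercasing, and the three-word dictionary are assumptions made for the example, not part of the slides.

```python
from collections import Counter

def bag_of_words(document, dictionary):
    """Count how often each dictionary word occurs in the document, ignoring word order."""
    counts = Counter(document.lower().split())
    return [counts[word] for word in dictionary]

dictionary = ["car", "cat", "bike"]  # toy dictionary, assumed for illustration

print(bag_of_words("Car Cat Bike Car", dictionary))  # [2, 1, 1]
print(bag_of_words("Bike Car Car Cat", dictionary))  # [2, 1, 1], since order is discarded
```

Two documents with the same words in a different order get the same vector, which is exactly the property the bag-of-features model inherits.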

Bags of features People, ball, grass

Bag of features: outline 1. Extract features

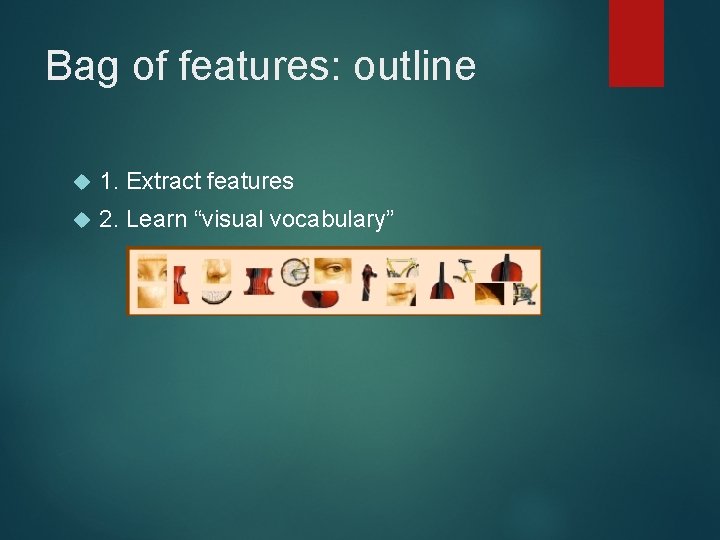

Bag of features: outline
1. Extract features
2. Learn “visual vocabulary”
3. Quantize features using visual vocabulary
4. Represent images by frequencies of “visual words”

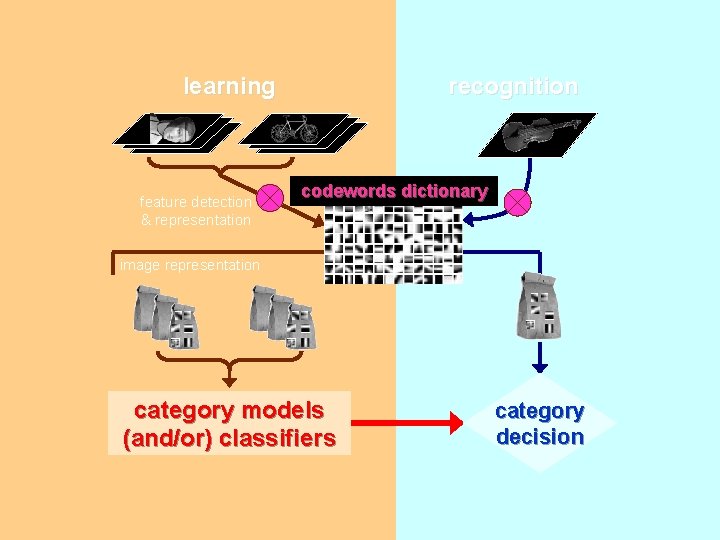

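[Diagram: learning and recognition pipelines. Both start with feature detection & representation, followed by the codewords dictionary and the image representation; learning produces category models (and/or) classifiers, recognition produces the category decision.]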

Feature detection: regular grid; points of interest (Harris, SIFT)

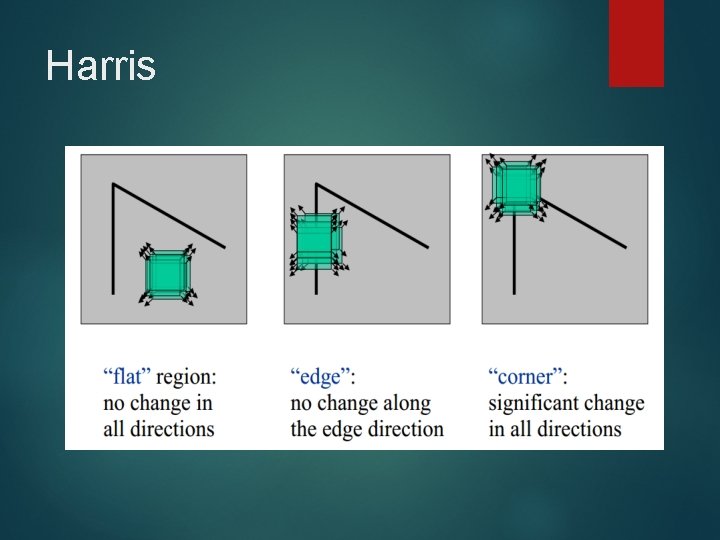

Harris

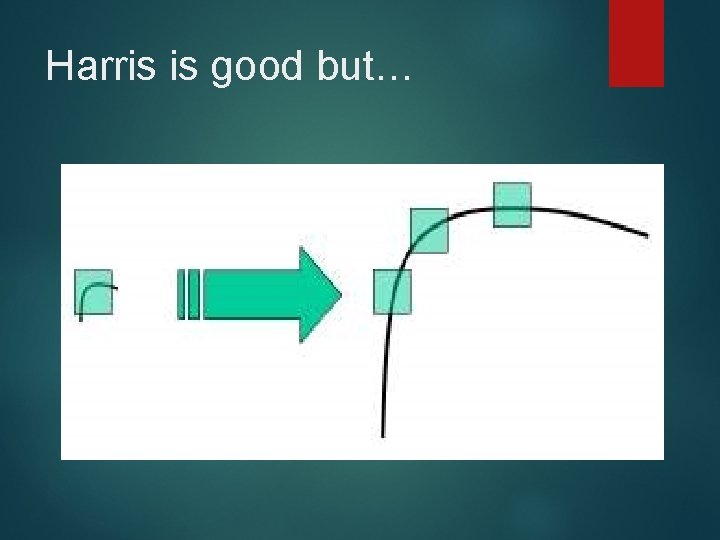

Harris is good but…

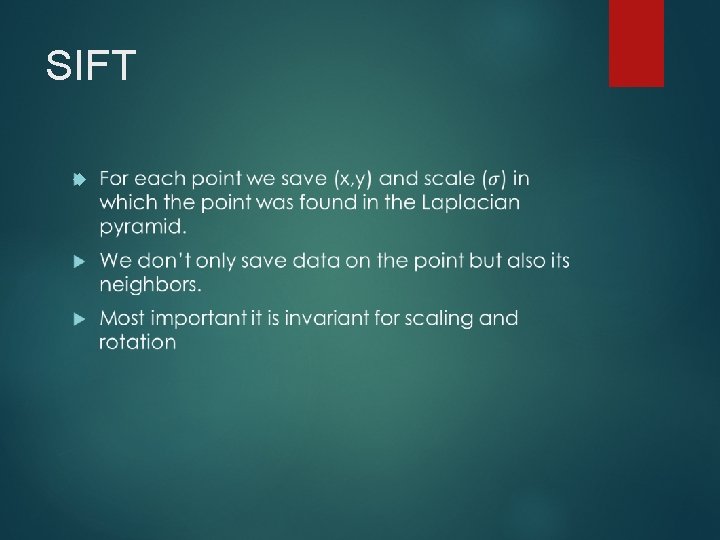

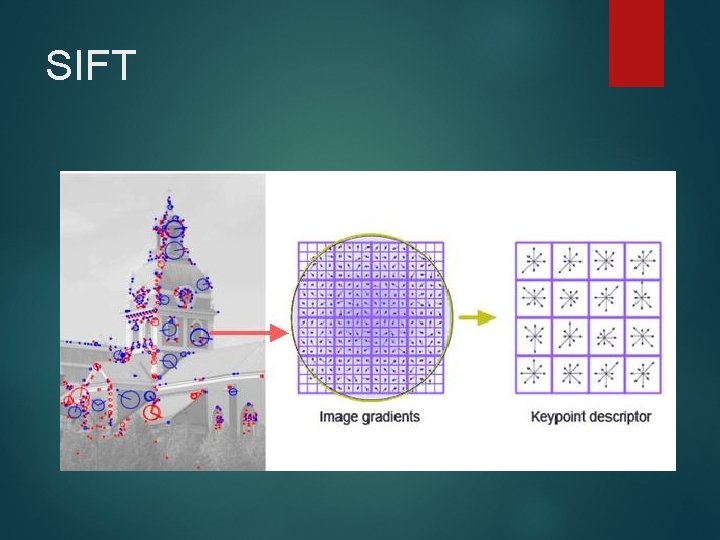

SIFT

![Representation of the features](http://slidetodoc.com/presentation_image_h/217bef0c79247be16a6d81ddf51b08bb/image-28.jpg)

Representation of the features: detect patches, normalize each patch, compute the SIFT descriptor [Lowe ’99]

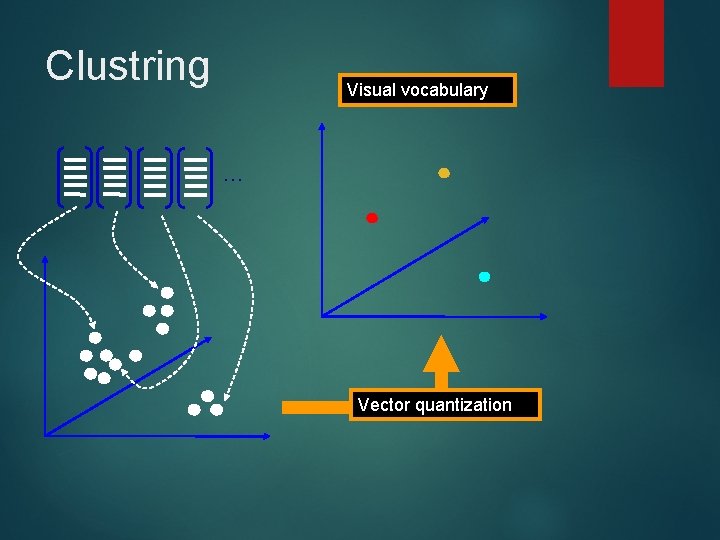

Clustering: visual vocabulary … vector quantization

Why cluster?
• Clustering is a common method for learning a visual vocabulary or codebook
• Unsupervised learning process
• Each cluster center produced by k-means becomes a codevector
• Codebook can be learned on a separate training set
• Provided the training set is sufficiently representative, the codebook will be “universal”

The codebook is used for quantizing features. A vector quantizer takes a feature vector and maps it to the index of the nearest codevector in a codebook.
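A vector quantizer of this kind could look roughly like the sketch below. The squared Euclidean distance, the name `quantize`, and the three 2-D codevectors are assumptions for illustration; real descriptors such as SIFT have many more dimensions.

```python
def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def quantize(feature, codebook):
    """Return the index of the codevector nearest to the feature vector."""
    return min(range(len(codebook)), key=lambda i: squared_distance(feature, codebook[i]))

codebook = [(0.0, 0.0), (1.0, 1.0), (5.0, 5.0)]  # toy codebook, made up for the example

print(quantize((0.9, 1.2), codebook))  # 1
```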

K-means
1. Randomly choose k points as the initial cluster centers.
2. Assign every point to the center closest to it.
3. Recompute each center as the mean of the points assigned to it, then go back to step 2.
Repeat until the centers no longer change.
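The k-means loop can be sketched in plain Python as follows. This is a minimal illustration, not the presenters' code: the fixed `seed`, the `max_iter` cap, and keeping the old center when a cluster ends up empty are all assumptions added to make the sketch deterministic and safe to run.

```python
import random

def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def mean(points):
    """Coordinate-wise mean of a non-empty list of points."""
    n = len(points)
    return tuple(sum(coords) / n for coords in zip(*points))

def kmeans(points, k, seed=0, max_iter=100):
    rng = random.Random(seed)
    centers = rng.sample(points, k)  # pick k of the points as initial centers
    for _ in range(max_iter):
        clusters = [[] for _ in range(k)]
        for p in points:  # assign each point to its closest center
            nearest = min(range(k), key=lambda i: squared_distance(p, centers[i]))
            clusters[nearest].append(p)
        # recompute the means; an empty cluster keeps its old center (assumption)
        new_centers = [mean(c) if c else centers[i] for i, c in enumerate(clusters)]
        if new_centers == centers:  # centers unchanged: converged
            break
        centers = new_centers
    return centers

points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]  # two toy blobs
print(sorted(kmeans(points, k=2)))
```

For a visual vocabulary, `points` would be the descriptors collected from the training images and the returned centers would be the codevectors.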

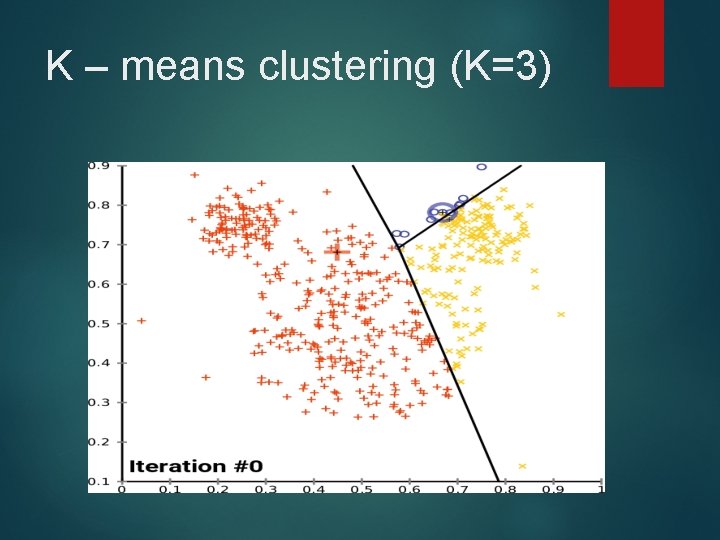

K – means clustering (K=3)

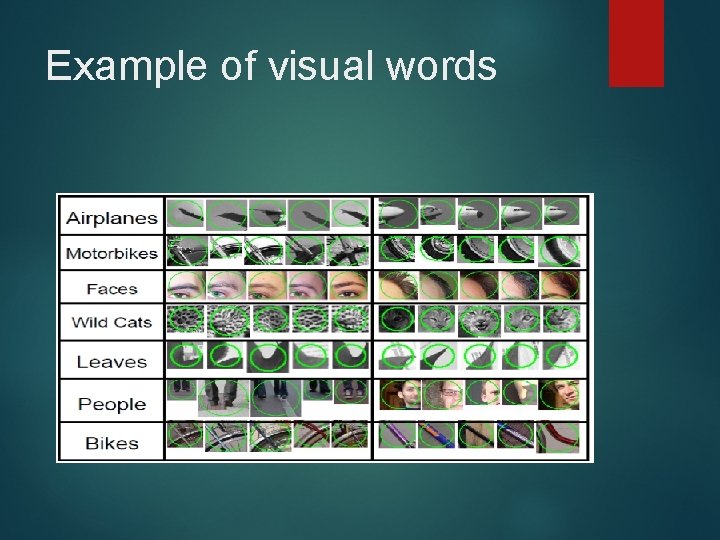

Example of visual words

Visual vocabularies: issues
• How to choose vocabulary size?
• Too small: visual words not representative of all patches
• Too large: quantization artifacts, overfitting

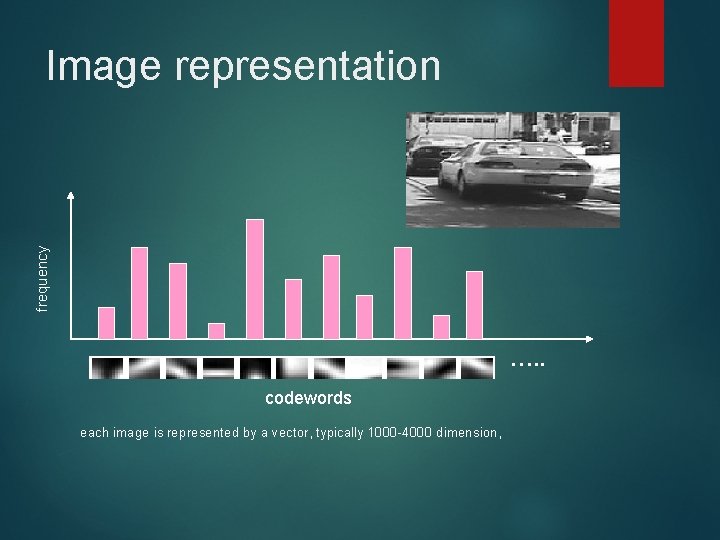

Image representation: a histogram of frequency over codewords. Each image is represented by a vector, typically of 1000-4000 dimensions.

Classification: learn a decision rule (classifier) assigning bag-of-features representations of images to different classes, e.g. motorcycle vs. not motorcycle.

Classifier: the classifier must be trained using a set of negative and positive examples. The classifier “learns” the regularities in the data. If training was successful, the classifier can classify an unknown example with a high degree of accuracy.

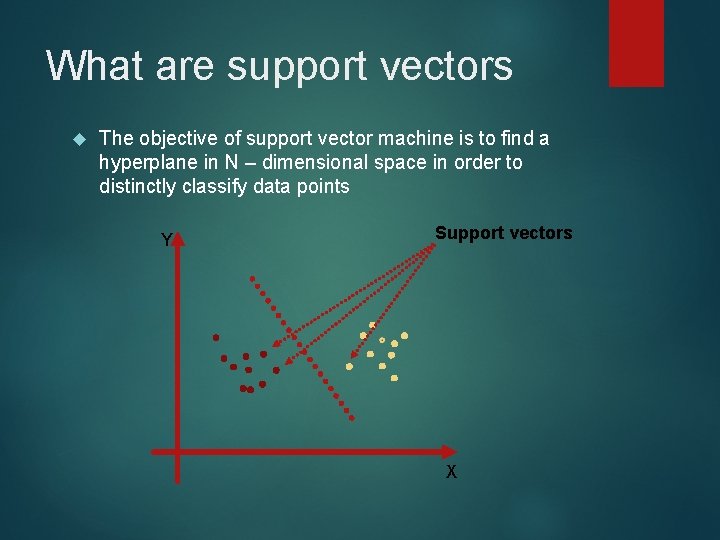

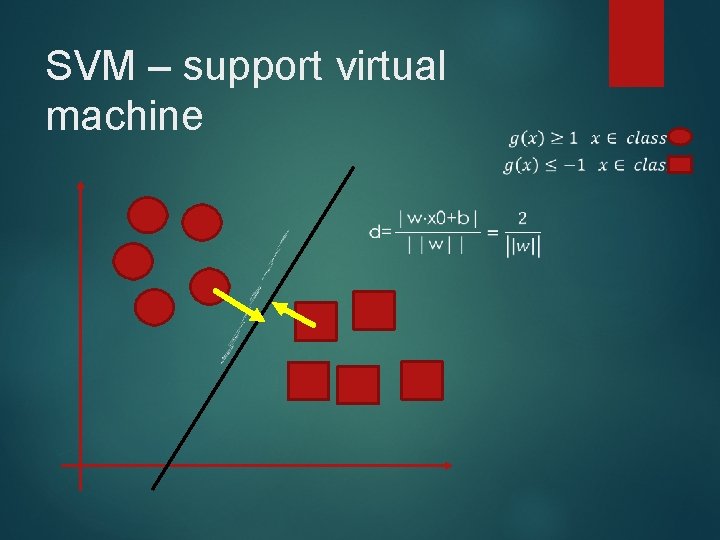

What are support vectors? The objective of a support vector machine is to find a hyperplane in N-dimensional space that distinctly classifies the data points. The support vectors are the data points closest to that hyperplane.

SVM – support vector machine

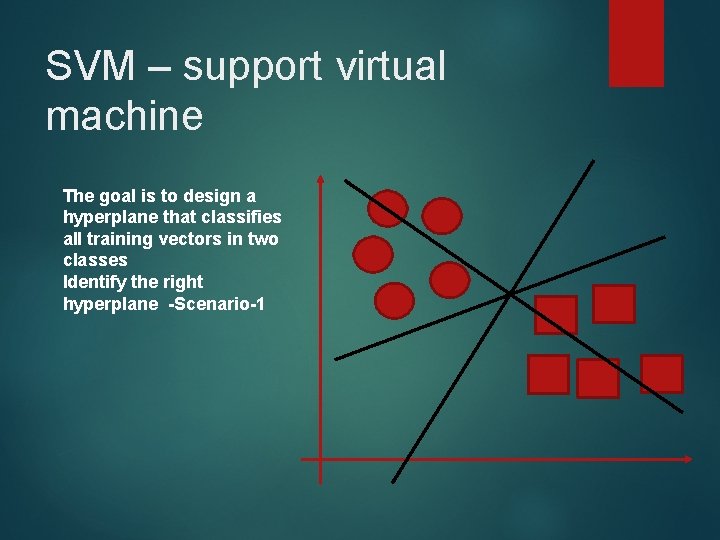

SVM – support vector machine: the goal is to design a hyperplane that classifies all training vectors into two classes. Identify the right hyperplane, scenario 1.

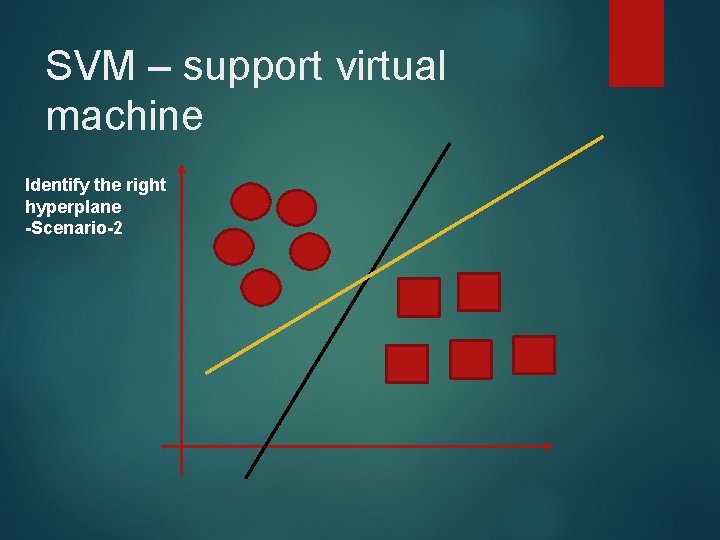

SVM – support vector machine: identify the right hyperplane, scenario 2.

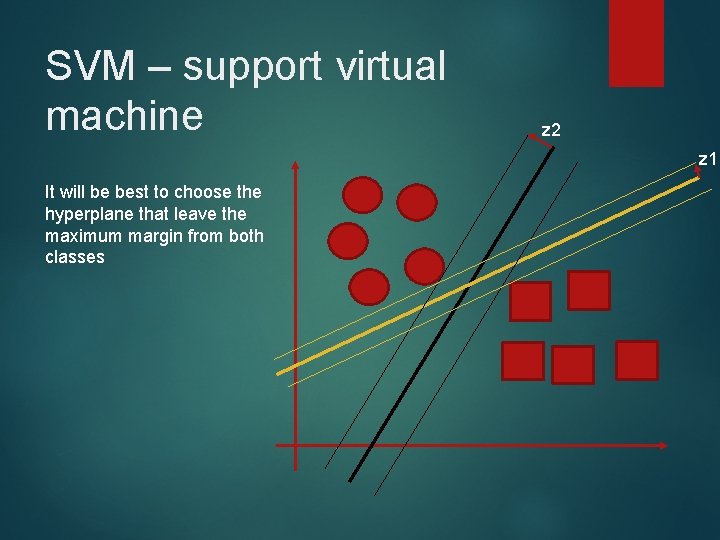

SVM – support vector machine: it is best to choose the hyperplane that leaves the maximum margin from both classes (the figure shows margins z1 and z2).

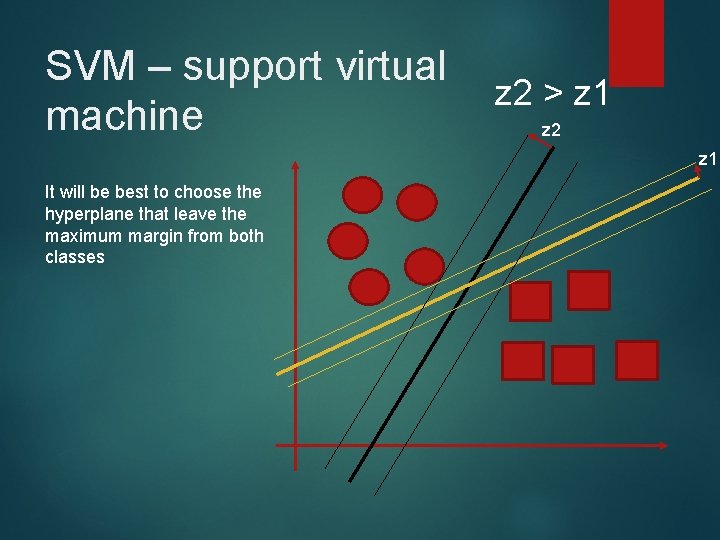

SVM – support vector machine: z2 > z1. It is best to choose the hyperplane that leaves the maximum margin from both classes.

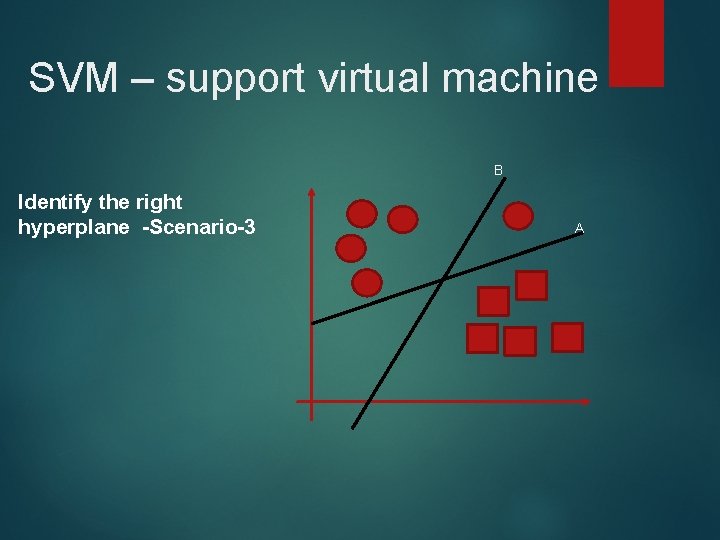

SVM – support vector machine: identify the right hyperplane, scenario 3 (candidates A and B).

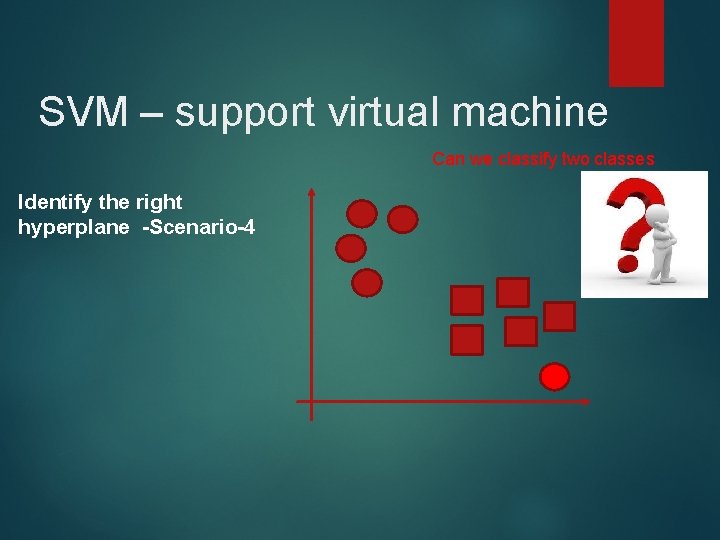

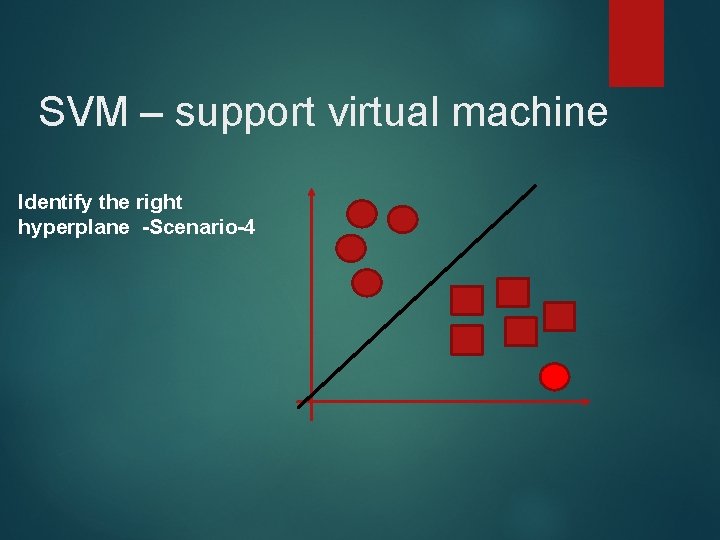

SVM – support vector machine: identify the right hyperplane, scenario 4. Can we classify the two classes?

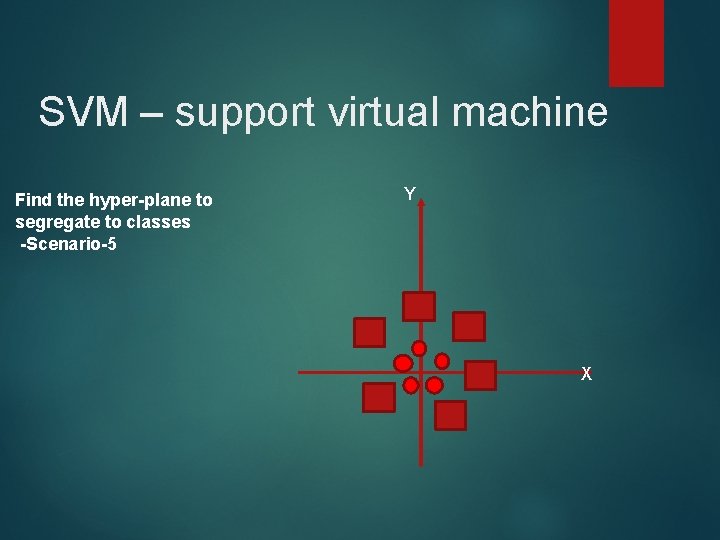

SVM – support vector machine: find the hyperplane to segregate the two classes, scenario 5 (axes X and Y).

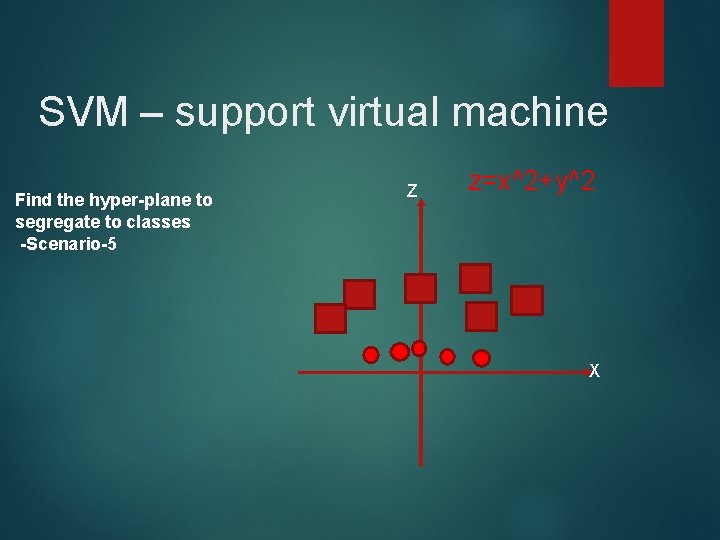

SVM – support vector machine: find the hyperplane to segregate the two classes, scenario 5. Add the feature $z = x^2 + y^2$ and plot Z against X.
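The effect of the extra feature $z = x^2 + y^2$ can be checked on a toy example. The points, the class names, and the hand-picked threshold below are all made up for illustration; an SVM would learn the separating plane rather than have it given.

```python
def lift(point):
    """Map a 2-D point (x, y) to 3-D by adding the feature z = x^2 + y^2."""
    x, y = point
    return (x, y, x * x + y * y)

inner = [(0.5, 0.0), (0.0, -0.5), (-0.3, 0.4)]  # made-up class near the origin
outer = [(2.0, 0.0), (0.0, -2.0), (-1.5, 1.5)]  # made-up class surrounding it

threshold = 1.0  # the plane z = 1, chosen by hand for this toy data

def classify(point):
    """A linear rule in the lifted space: compare z with the threshold."""
    return "outer" if lift(point)[2] > threshold else "inner"

print([classify(p) for p in inner + outer])
```

No straight line in the X-Y plane separates the two classes, but in the lifted space the flat plane z = 1 does.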

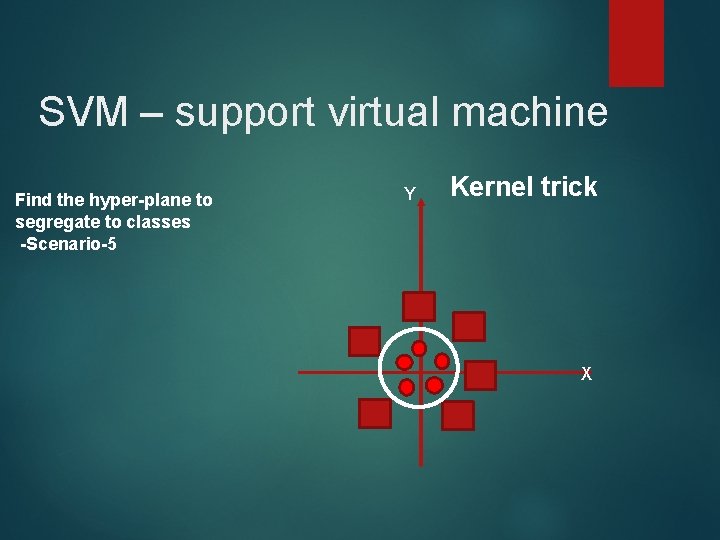

SVM – support vector machine: find the hyperplane to segregate the two classes, scenario 5. Kernel trick (axes X and Y).

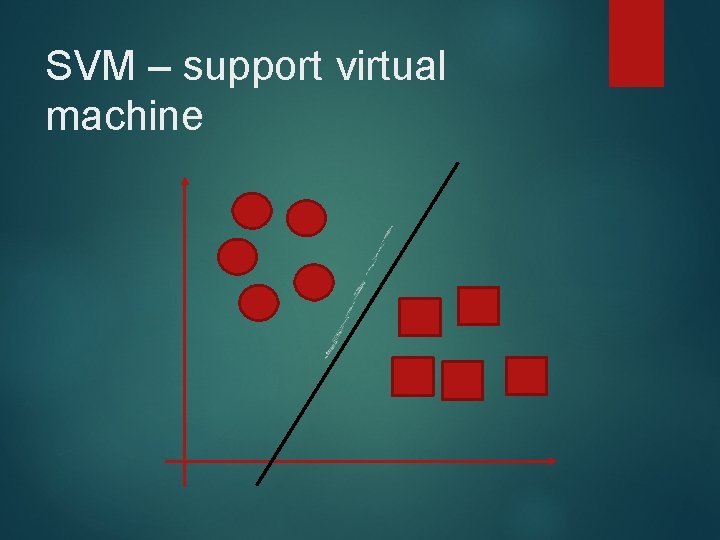

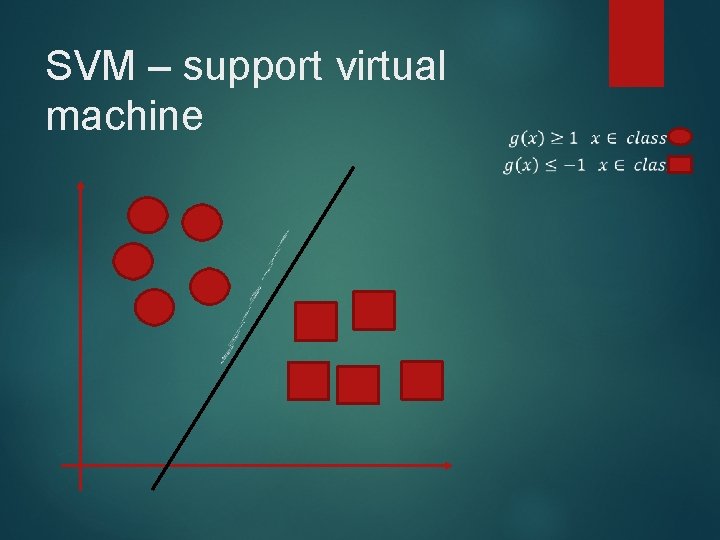

SVM – support vector machine

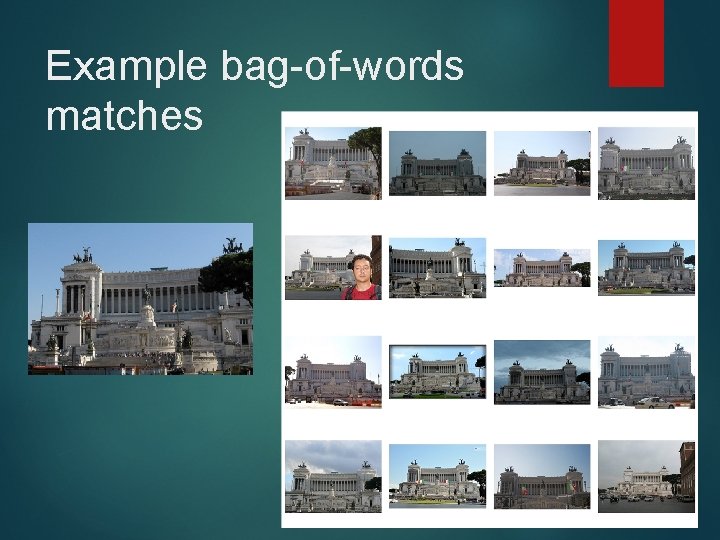

Example bag-of-words matches

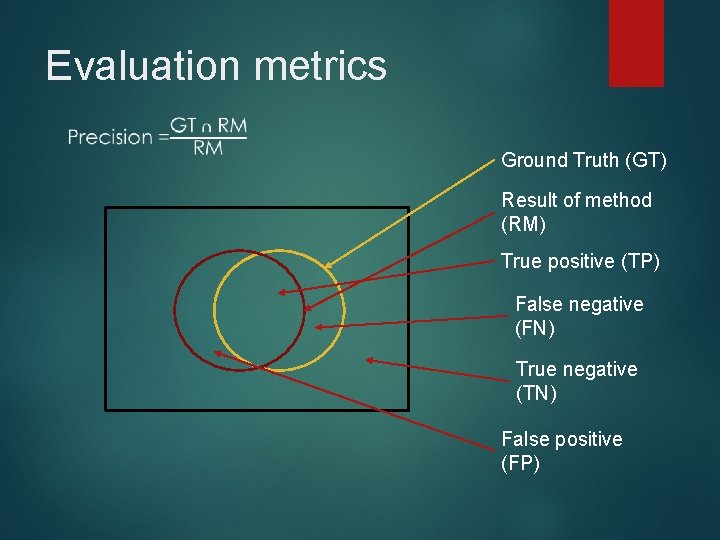

Evaluation metrics
• Ground Truth (GT)
• Result of method (RM)
• True positive (TP)
• False negative (FN)
• True negative (TN)
• False positive (FP)
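These four counts are what precision and recall are computed from: precision = TP / (TP + FP) and recall = TP / (TP + FN). A minimal sketch, assuming the ground truth and the method's result are given as lists of booleans (the function names and the sample data are illustrative):

```python
def confusion_counts(ground_truth, predicted):
    """Return (TP, FP, FN, TN) for two equal-length lists of booleans."""
    pairs = list(zip(ground_truth, predicted))
    tp = sum(1 for g, p in pairs if g and p)
    fp = sum(1 for g, p in pairs if not g and p)
    fn = sum(1 for g, p in pairs if g and not p)
    tn = sum(1 for g, p in pairs if not g and not p)
    return tp, fp, fn, tn

def precision_recall(ground_truth, predicted):
    tp, fp, fn, _ = confusion_counts(ground_truth, predicted)
    precision = tp / (tp + fp) if tp + fp else 0.0  # share of detections that are correct
    recall = tp / (tp + fn) if tp + fn else 0.0     # share of true objects that were found
    return precision, recall

gt = [True, True, True, False, False, False]  # made-up ground truth (GT)
rm = [True, True, False, True, False, False]  # made-up result of method (RM)

print(confusion_counts(gt, rm))  # (2, 1, 1, 2)
print(precision_recall(gt, rm))
```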

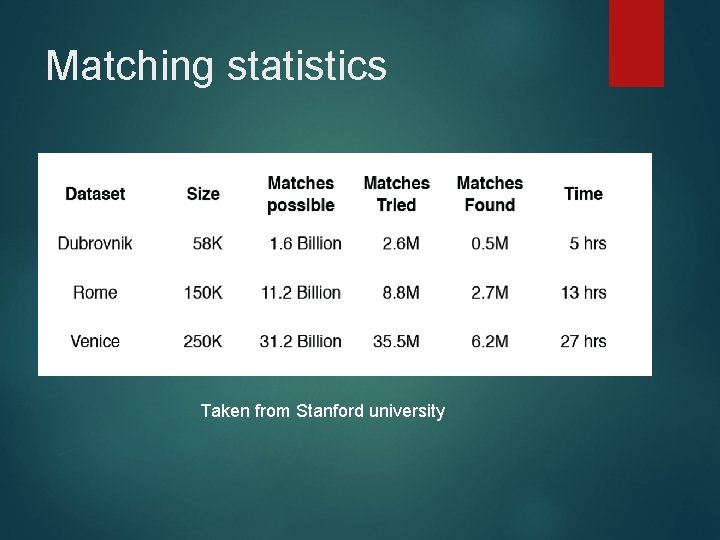

Matching statistics (taken from Stanford University)

So what happens when we get a new image?
1. Extract features.
2. For each feature, find the closest visual word in the dictionary.
3. Build a histogram of the visual words.
4. Use the histogram to classify which objects are in the image.
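The histogram-building part of this procedure can be sketched as below. Feature extraction and the classifier are left out; the 2-D "features", the toy dictionary, and the name `bag_of_features` are assumptions for illustration only.

```python
def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def bag_of_features(features, dictionary):
    """Count, for each visual word, how many features have it as their closest word."""
    histogram = [0] * len(dictionary)
    for feature in features:
        nearest = min(range(len(dictionary)),
                      key=lambda i: squared_distance(feature, dictionary[i]))
        histogram[nearest] += 1
    return histogram

dictionary = [(0.0, 0.0), (1.0, 1.0), (5.0, 5.0)]               # toy visual words
features = [(0.1, 0.0), (0.9, 1.1), (1.2, 0.8), (4.8, 5.1)]     # toy image features

print(bag_of_features(features, dictionary))  # [1, 2, 1]
```

The resulting vector is what would be handed to the trained classifier; dividing it by the number of features would turn the counts into frequencies.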

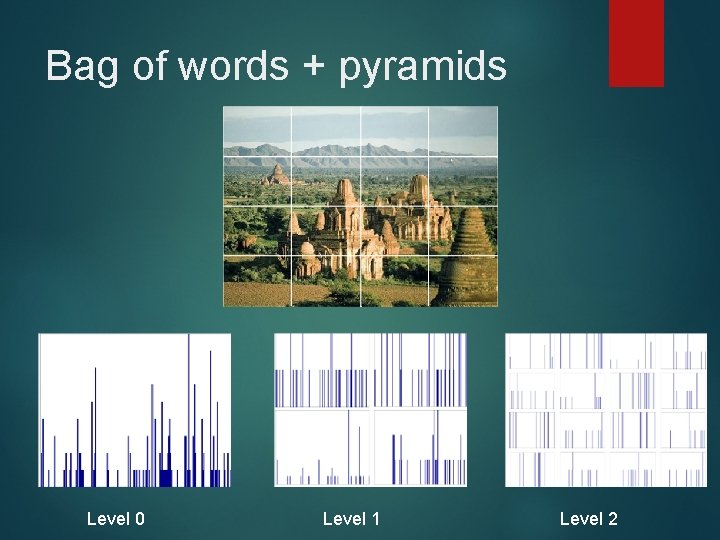

What about spatial info? Spatial pyramids matching

Pyramids Very useful for representing images. Pyramid is built by using multiple copies of image. Each level in the pyramid is 1/4 of the size of previous level. The lowest level is of the highest resolution. The highest level is of the lowest resolution.
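A pyramid of this kind can be sketched by halving the width and height at each level, so that each level has 1/4 of the pixels of the one below. The sketch assumes a grayscale image stored as a list of rows with even dimensions, and it uses plain 2x2 averaging as a stand-in for the smoothing filter that a real pyramid would normally apply before subsampling.

```python
def downsample(image):
    """Halve width and height by averaging each 2x2 block (assumes even dimensions)."""
    rows, cols = len(image), len(image[0])
    return [
        [
            (image[r][c] + image[r][c + 1] + image[r + 1][c] + image[r + 1][c + 1]) / 4
            for c in range(0, cols - 1, 2)
        ]
        for r in range(0, rows - 1, 2)
    ]

def build_pyramid(image, levels):
    """Level 0 is the full-resolution image; each later level has 1/4 of the pixels."""
    pyramid = [image]
    for _ in range(levels - 1):
        pyramid.append(downsample(pyramid[-1]))
    return pyramid

image = [[4 * r + c for c in range(4)] for r in range(4)]  # toy 4x4 image, values 0..15
for level in build_pyramid(image, 3):
    print(len(level), "x", len(level[0]))
```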

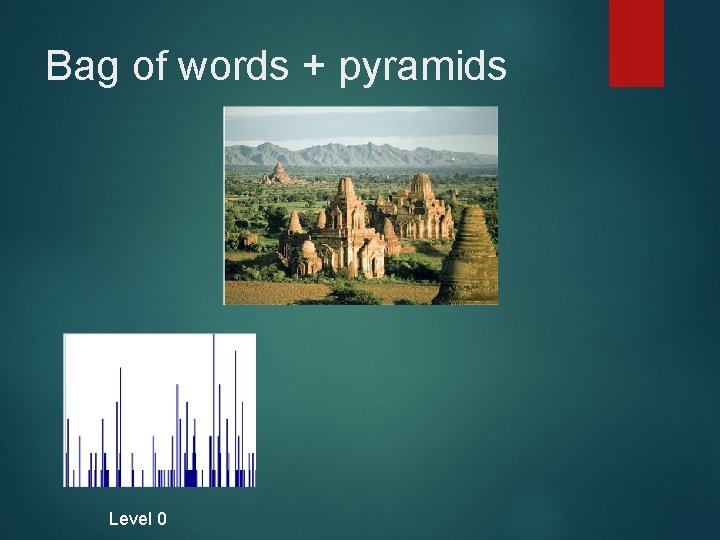

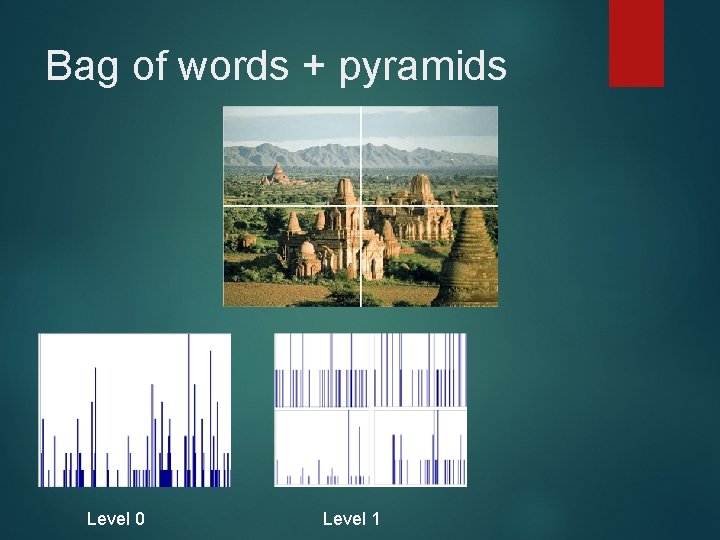

Bag of words + pyramids: level 0, level 1, level 2
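One common way to combine the two ideas, assumed here because the slides only show the levels, is to split the image into a 2^l x 2^l grid at level l, build one visual-word histogram per cell, and concatenate everything. The sketch below takes already-quantized features as `(x, y, word)` triples with coordinates in [0, 1); the per-level weights used in spatial pyramid matching are left out.

```python
def spatial_pyramid(words, vocab_size, levels):
    """Concatenate per-cell visual-word histograms over grids of 1x1, 2x2, 4x4, ... cells."""
    descriptor = []
    for level in range(levels):
        cells = 2 ** level  # the grid is cells x cells at this level
        histograms = [[0] * vocab_size for _ in range(cells * cells)]
        for x, y, word in words:
            col = min(int(x * cells), cells - 1)
            row = min(int(y * cells), cells - 1)
            histograms[row * cells + col][word] += 1
        for histogram in histograms:
            descriptor.extend(histogram)
    return descriptor

# three toy features: (x, y, visual word index)
words = [(0.1, 0.1, 0), (0.9, 0.1, 1), (0.9, 0.9, 1)]
print(spatial_pyramid(words, vocab_size=2, levels=2))
# [1, 2, 1, 0, 0, 1, 0, 0, 0, 1]
```

Level 0 is the ordinary bag of words; the finer levels record roughly where in the image each visual word occurred.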

Scene category dataset

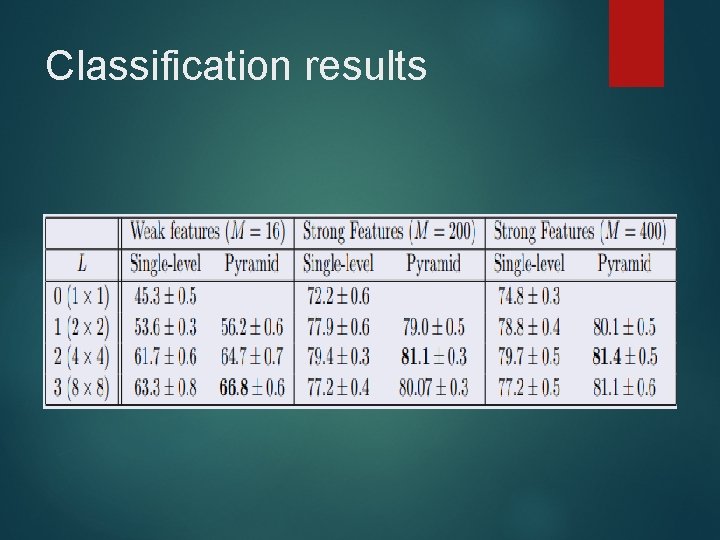

Classification results

Part 2: part-based models

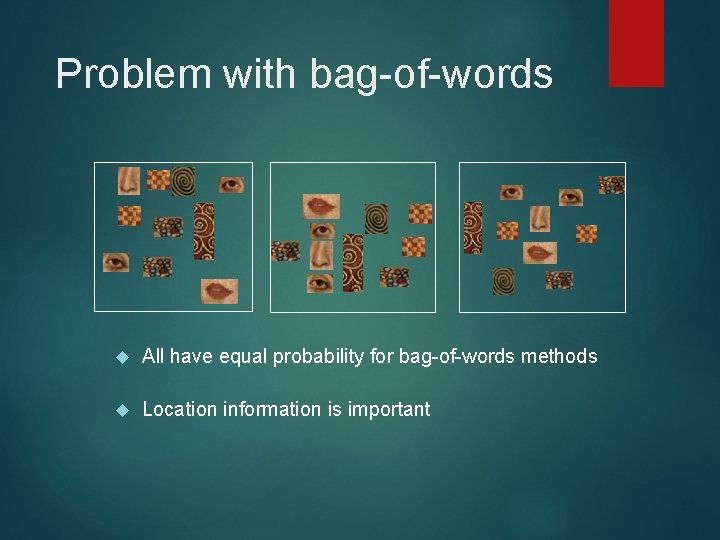

Problem with bag-of-words: all of the arrangements shown have equal probability for bag-of-words methods, yet location information is important.

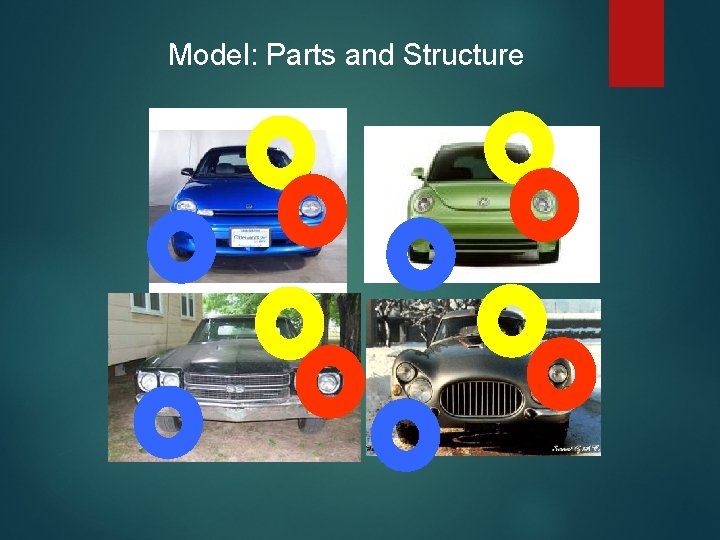

Model: Parts and Structure

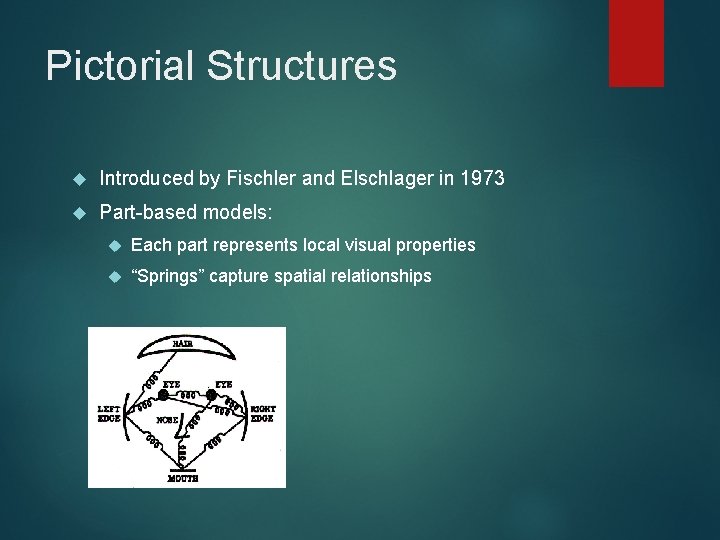

Pictorial Structures
• Introduced by Fischler and Elschlager in 1973
• Part-based models: each part represents local visual properties
• “Springs” capture spatial relationships

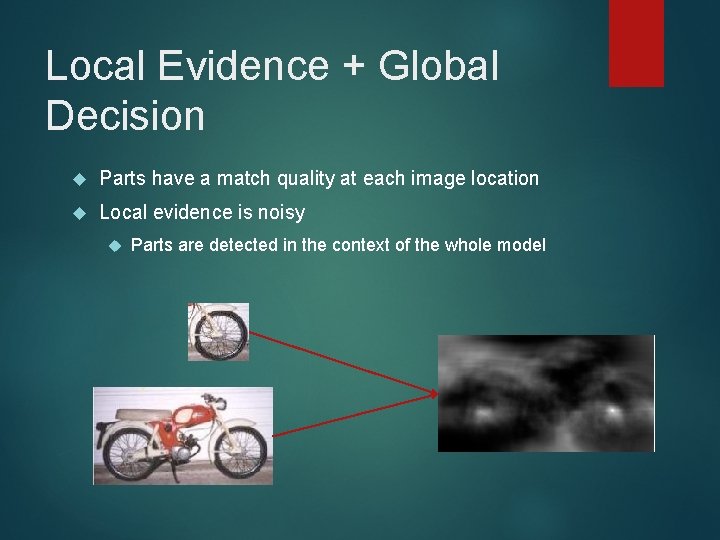

Local Evidence + Global Decision
• Parts have a match quality at each image location
• Local evidence is noisy
• Parts are detected in the context of the whole model

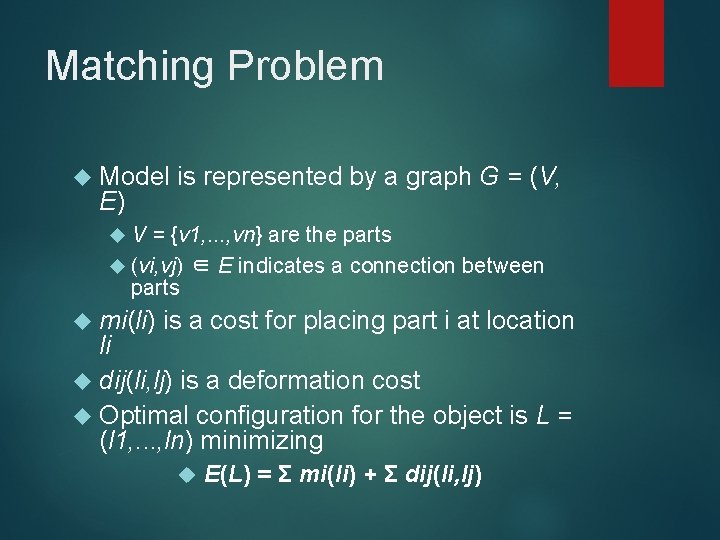

Matching Problem
Model is represented by a graph $G = (V, E)$
• $V = \{v_1, \dots, v_n\}$ are the parts
• $(v_i, v_j) \in E$ indicates a connection between parts
• $m_i(l_i)$ is a cost for placing part $i$ at location $l_i$
• $d_{ij}(l_i, l_j)$ is a deformation cost
Optimal configuration for the object is $L = (l_1, \dots, l_n)$ minimizing

$$E(L) = \sum_{i=1}^{n} m_i(l_i) + \sum_{(v_i, v_j) \in E} d_{ij}(l_i, l_j)$$

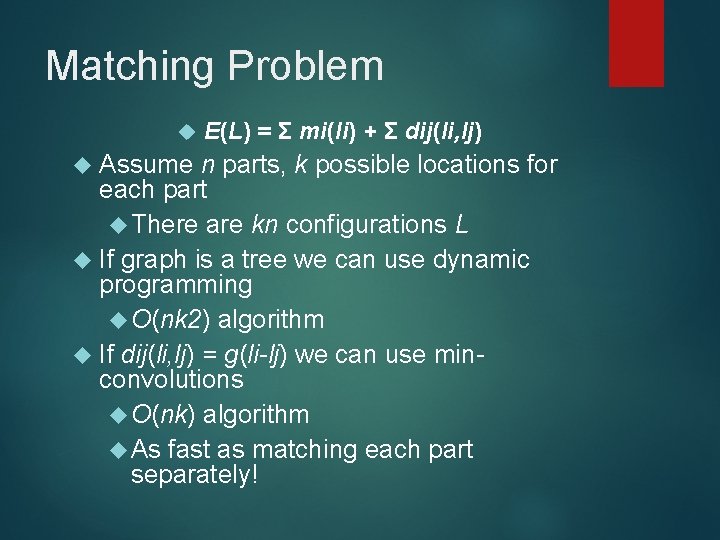

Matching Problem

$$E(L) = \sum_{i=1}^{n} m_i(l_i) + \sum_{(v_i, v_j) \in E} d_{ij}(l_i, l_j)$$

• Assume $n$ parts, $k$ possible locations for each part
• There are $k^n$ configurations $L$
• If the graph is a tree we can use dynamic programming: $O(nk^2)$ algorithm
• If $d_{ij}(l_i, l_j) = g(l_i - l_j)$ we can use min-convolutions: $O(nk)$ algorithm
• As fast as matching each part separately!

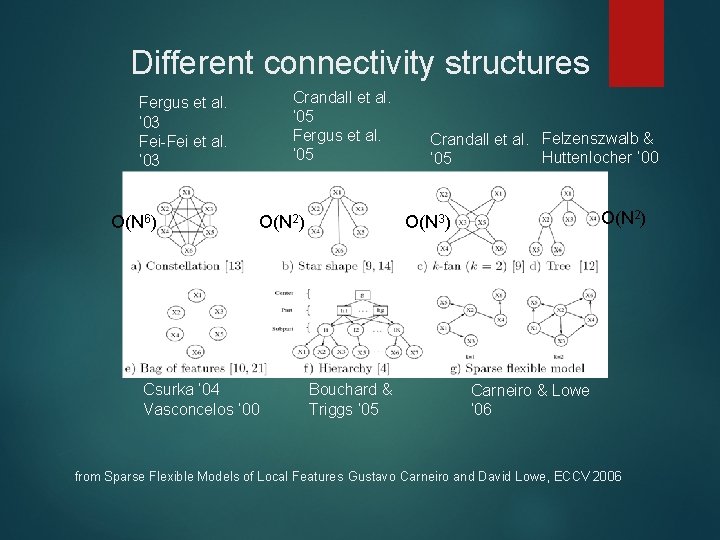

Different connectivity structures

[Figure: connectivity structures of part-based models with their matching complexities (values shown include $O(N^6)$, $O(N^3)$ and $O(N^2)$). Models cited: Fergus et al. ’03, Fei-Fei et al. ’03, Crandall et al. ’05, Csurka ’04, Vasconcelos ’00, Felzenszwalb & Huttenlocher ’00, Bouchard & Triggs ’05, Carneiro & Lowe ’06.]

From “Sparse Flexible Models of Local Features”, Gustavo Carneiro and David Lowe, ECCV 2006

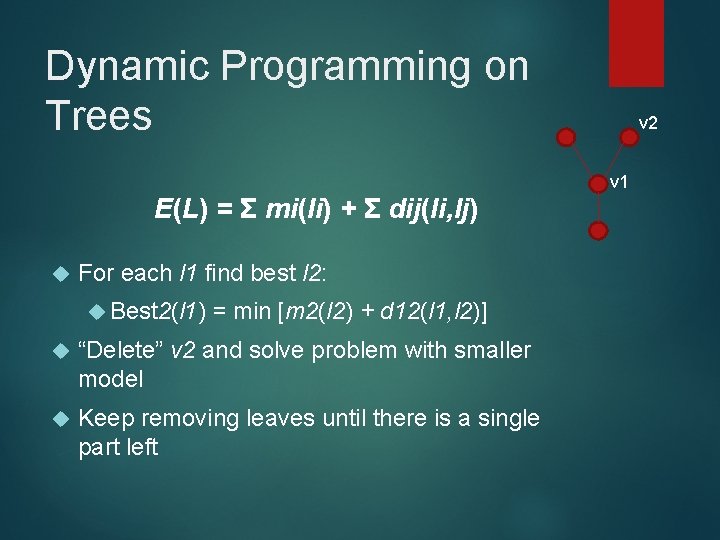

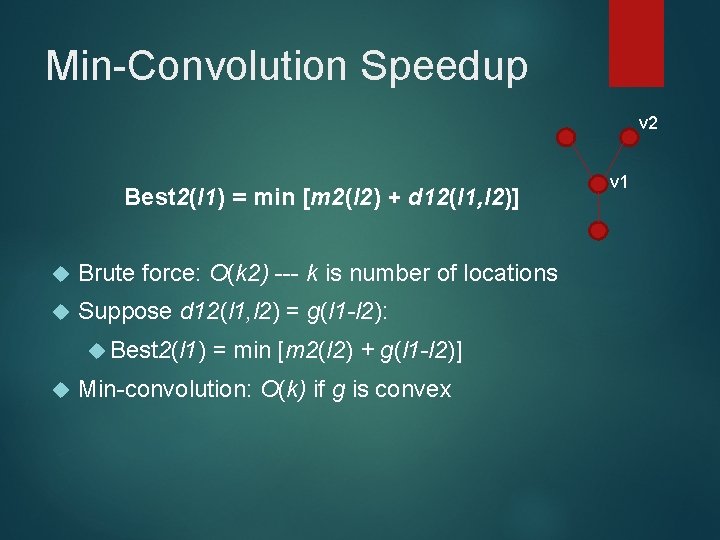

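Dynamic Programming on Trees

$$E(L) = \sum_{i=1}^{n} m_i(l_i) + \sum_{(v_i, v_j) \in E} d_{ij}(l_i, l_j)$$

• For each $l_1$ find the best $l_2$, where $v_2$ is a leaf connected to $v_1$: $\mathrm{Best}_2(l_1) = \min_{l_2} \left[ m_2(l_2) + d_{12}(l_1, l_2) \right]$
• “Delete” $v_2$ and solve the problem with the smaller model
• Keep removing leaves until there is a single part left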
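The leaf-removal procedure can be sketched as follows. The data layout is an assumption made for the example: parts are numbered so that part 0 is the root and every part has a larger index than its parent, `match_cost[i][l]` stores $m_i(l)$, and `deform_cost(i, j, li, lj)` returns $d_{ij}(l_i, l_j)$. The running time is $O(nk^2)$.

```python
def match_tree(parents, match_cost, deform_cost, k):
    """Minimize E(L) over a tree of parts; returns (minimum energy, best locations)."""
    n = len(parents)
    cost = [list(match_cost[i]) for i in range(n)]  # m_i plus already-removed subtrees
    best_child = [[0] * k for _ in range(n)]        # best location of part j given its parent's
    for j in range(n - 1, 0, -1):                   # remove leaves first
        i = parents[j]
        for li in range(k):
            options = [cost[j][lj] + deform_cost(i, j, li, lj) for lj in range(k)]
            best_child[j][li] = min(range(k), key=options.__getitem__)
            cost[i][li] += options[best_child[j][li]]  # "delete" part j into its parent
    locations = [0] * n
    locations[0] = min(range(k), key=cost[0].__getitem__)
    for j in range(1, n):                           # read back the choices, parents first
        locations[j] = best_child[j][locations[parents[j]]]
    return cost[0][locations[0]], locations

# toy chain v0 - v1 - v2 with 3 candidate locations per part (made-up costs)
parents = [None, 0, 1]
match_cost = [[5, 1, 5], [1, 5, 5], [5, 5, 1]]
deform = lambda i, j, li, lj: abs(li - lj)

print(match_tree(parents, match_cost, deform, k=3))  # (6, [1, 0, 2])
```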

Min-Convolution Speedup

$$\mathrm{Best}_2(l_1) = \min_{l_2} \left[ m_2(l_2) + d_{12}(l_1, l_2) \right]$$

• Brute force: $O(k^2)$, where $k$ is the number of locations
• Suppose $d_{12}(l_1, l_2) = g(l_1 - l_2)$: then $\mathrm{Best}_2(l_1) = \min_{l_2} \left[ m_2(l_2) + g(l_1 - l_2) \right]$
• Min-convolution: $O(k)$ if $g$ is convex
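As an illustration of the speedup, the sketch below handles one specific convex choice, $g(d) = c\,|d|$ with $c \ge 0$ on a 1-D grid of locations, which is an assumption made here; other convex functions such as a quadratic need a different linear-time algorithm. Two sweeps over the array give the same result as the $O(k^2)$ brute force.

```python
def min_convolution_abs(m, c):
    """Best(l1) = min over l2 of m[l2] + c * |l1 - l2|, in O(k) using two sweeps."""
    best = list(m)
    for l in range(1, len(best)):            # forward sweep covers l2 <= l1
        best[l] = min(best[l], best[l - 1] + c)
    for l in range(len(best) - 2, -1, -1):   # backward sweep covers l2 >= l1
        best[l] = min(best[l], best[l + 1] + c)
    return best

def min_convolution_brute(m, c):
    """The same quantity computed directly in O(k^2), for comparison."""
    k = len(m)
    return [min(m[l2] + c * abs(l1 - l2) for l2 in range(k)) for l1 in range(k)]

m = [4, 0, 7, 3, 9, 1]  # made-up match costs m_2(l_2)
print(min_convolution_abs(m, c=2))    # [2, 0, 2, 3, 3, 1]
print(min_convolution_brute(m, c=2))  # [2, 0, 2, 3, 3, 1]
```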

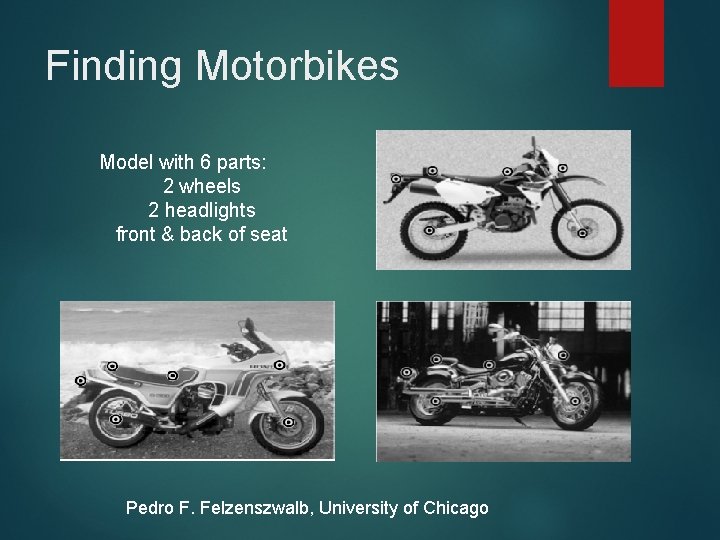

Finding Motorbikes: model with 6 parts: 2 wheels, 2 headlights, front & back of seat. Pedro F. Felzenszwalb, University of Chicago

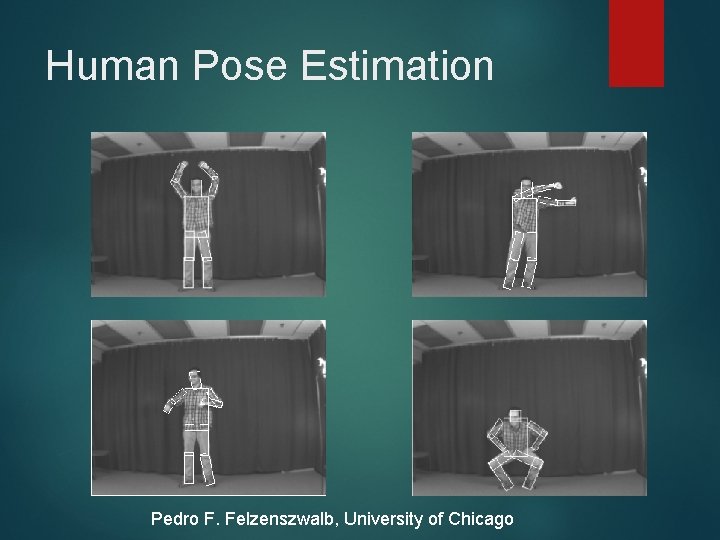

Human Pose Estimation Pedro F. Felzenszwalb, University of Chicago

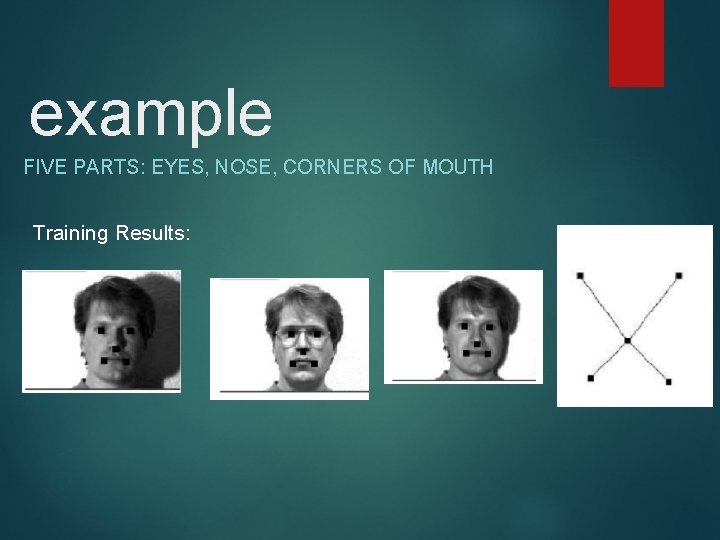

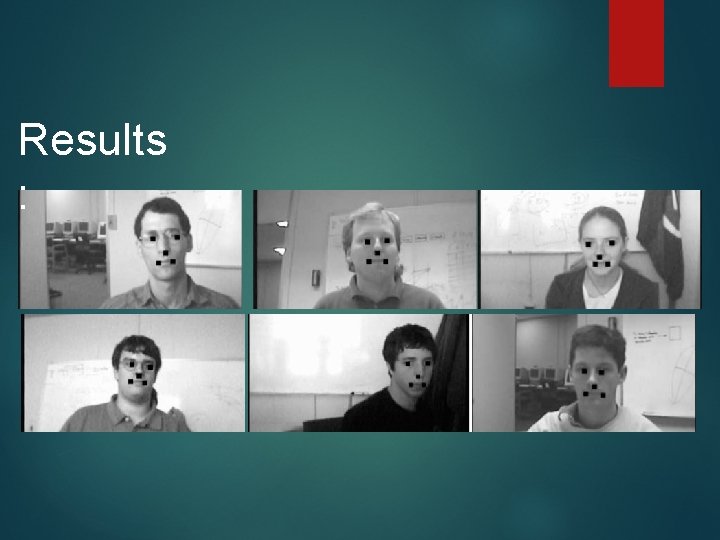

Example: five parts (eyes, nose, corners of mouth). Training results:

Results

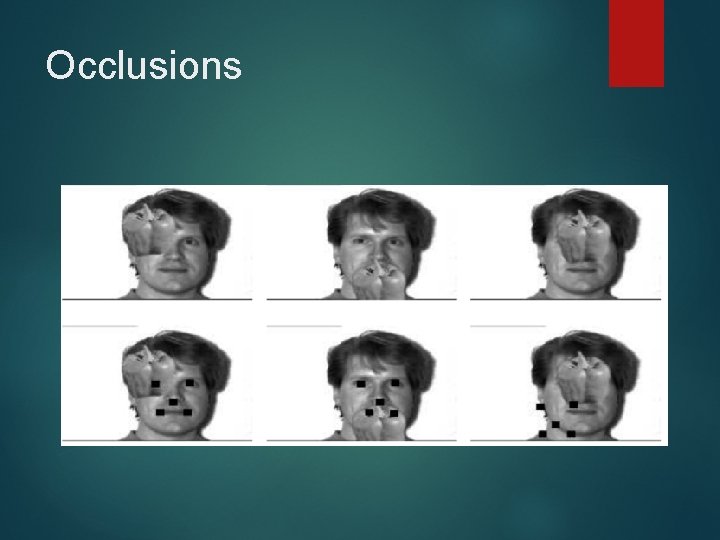

Occlusions

Pascal challenge: recognize objects from a few visual object classes in realistic scenes. Twenty object classes have been selected. There are two ways to participate: (1) participants may use systems built or trained using any methods or data, excluding the provided test sets; (2) systems are to be built or trained using only the provided training/validation data.

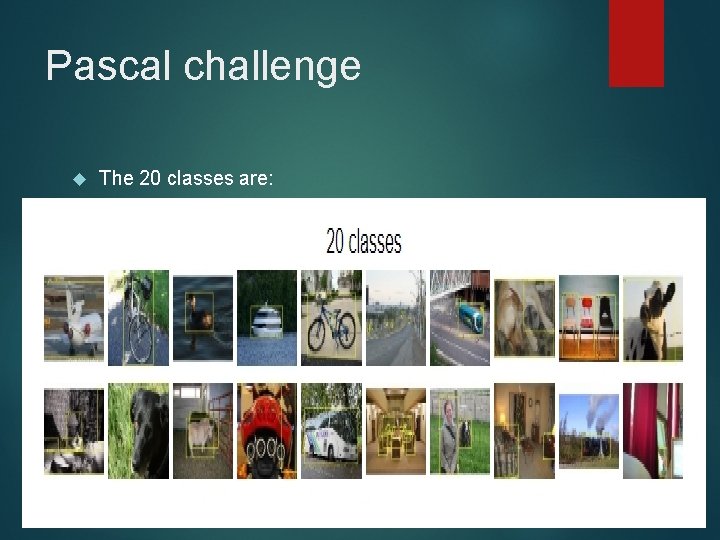

Pascal challenge The 20 classes are:

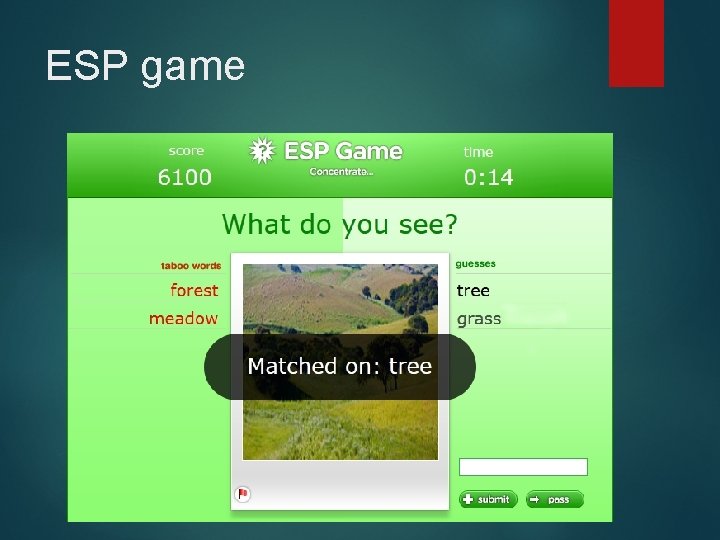

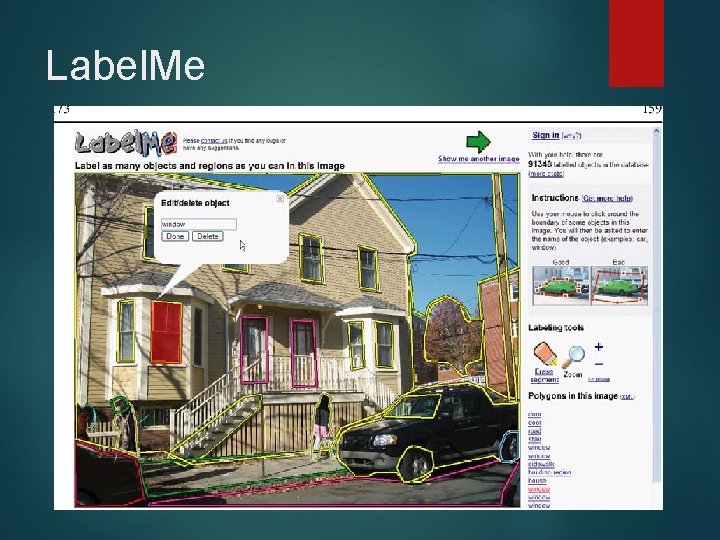

Other datasets: web-based annotation, i.e. building large annotated databases by relying on the collaborative effort of a large population of users: ESP and Peekaboom internet games (“bored human intelligence”), LabelMe

ESP game

LabelMe

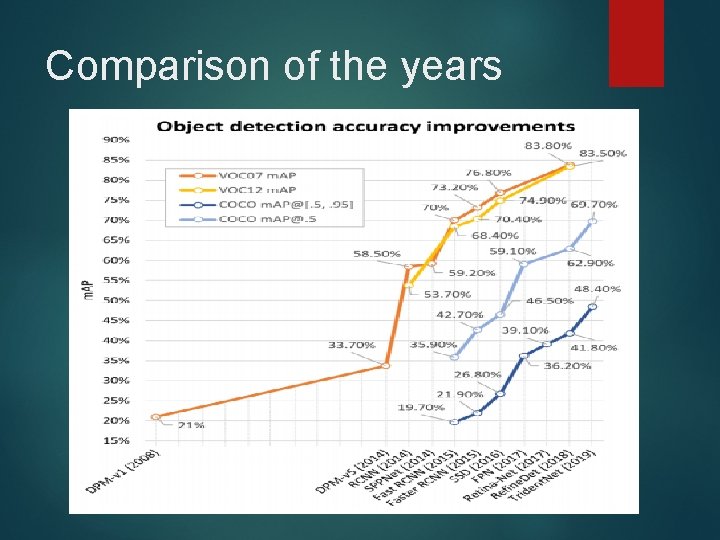

Comparison of the years

References
• Computer Vision: Algorithms and Applications, by Richard Szeliski: http://szeliski.org/Book/drafts/SzeliskiBook_20100903_draft.pdf
• Pedro F. Felzenszwalb, University of Chicago
• Juan Carlos Niebles and Ranjay Krishna, Stanford University
• http://host.robots.ox.ac.uk/pascal/VOC/voc2012/index.html
• Wikipedia, bag of words

Thanks for listening
