Measuring Computer Performance A Practitioners Guide David J

- Slides: 39

Measuring Computer Performance: A Practitioner’s Guide David J. Lilja Electrical and Computer Engineering University of Minnesota Minneapolis (lilja@ece. umn. edu) Copyright 2004 David J. Lilja 1

Course Goals l l l Understand the inherent trade-offs involved in using simulation, measurement, and analytical modeling. Rigorously compare the performance of computer systems in the presence of measurement noise. Determine whether a change made to a system has a statistically significant impact on performance. Copyright 2004 David J. Lilja 2

Course Goals l l Use statistical tools to reduce the number of simulations that need to be performed of a computer system. Design a set of experiments to obtain the most information for a given level of effort. Copyright 2004 David J. Lilja 3

Course Goals Provide intuitive conceptual background for some standard statistical tools. l • • Draw meaningful conclusions in presence of noisy measurements. Allow you to correctly and intelligently apply techniques in new situations. → Don’t simply plug and crank from a formula. Copyright 2004 David J. Lilja 4

Course Goals Present techniques for aggregating large quantities of data. l • • Obtain a big-picture view of your results. Obtain new insights from complex measurement and simulation results. → E. g. How does a new feature impact the overall system? Copyright 2004 David J. Lilja 5

Agenda l Introduction l Solution techniques l l l Measurement Simulation Analytical modeling Goals of performance measurement Rules of thumb Amdahl’s Law Copyright 2004 David J. Lilja 6

Agenda (cont. ) l Performance metrics l l l Characteristics of good metrics Standard processor and system metrics Speedup and relative change Copyright 2004 David J. Lilja 7

Agenda (cont. ) l Measurement tools and techniques l l l Fundamental strategies Interval timers Program profiling Tracing Indirect measurement Copyright 2004 David J. Lilja 8

Agenda (cont. ) l Statistical interpretation of measured data l l Arithmetic, harmonic, geometric means Sources of measurement error Confidence intervals Statistically comparing alternatives Copyright 2004 David J. Lilja 9

Agenda (cont. ) l Design of experiments l l l Terminology One-factor analysis of variance (ANOVA) Two-factor ANOVA Generalized m-factor experiments Fractional factorial designs Multifactorial designs (Plackett and Berman) Copyright 2004 David J. Lilja 10

Agenda (cont. ) l Simulation l l l Types of simulations Random number generation Verification and validation Copyright 2004 David J. Lilja 11

Let’s Begin… Copyright 2004 David J. Lilja 12

Introduction l Solution techniques l l l Measurement Simulation Analytical modeling Goals of performance measurement Rules of thumb Copyright 2004 David J. Lilja 13

Performance Evaluation == Decision-Making Process 1. 2. 3. 4. Recognition of need. Problem formulation/identification Model building Data collection, model parameterization Copyright 2004 David J. Lilja 14

Performance Evaluation == Decision-Making Process Model solution 5. 1. 2. 3. 6. 7. 8. Analytic Measurement Simulation Model validation and analysis Results interpretation Decision making Copyright 2004 David J. Lilja 15

Common Goals of Performance Measurement l Compare alternatives l l l Should I add this feature? Which system is better? Find “optimal” parameter values l System tuning Copyright 2004 David J. Lilja 16

Common Goals of Performance Measurement l Identify good/bad performance relative to l l l Peak Expectations History (e. g. previous generations) Competition Performance debugging l l l Fix performance problems Find bottlenecks System errors Copyright 2004 David J. Lilja 17

Common Goals of Performance Measurement l Enhance performance l l Compiler optimizations Larger memory, cache More processors Set expectations l Tell a customer what is reasonable Copyright 2004 David J. Lilja 18

Common Goals of Performance Measurement l Measure execution time for your program l l l Will it meet requirements? Should I buy it? Compare relative performance l Which one is faster? Copyright 2004 David J. Lilja 19

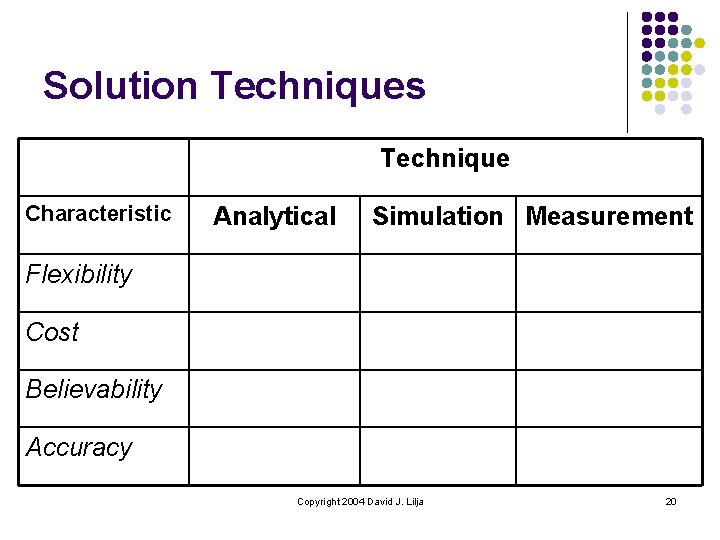

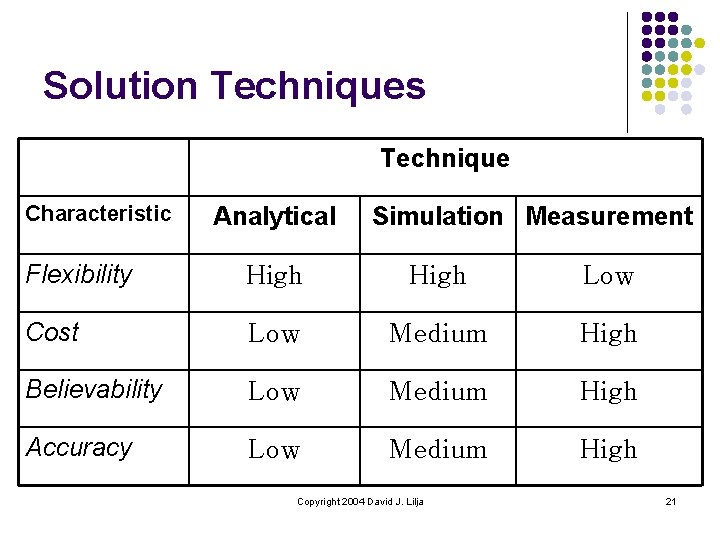

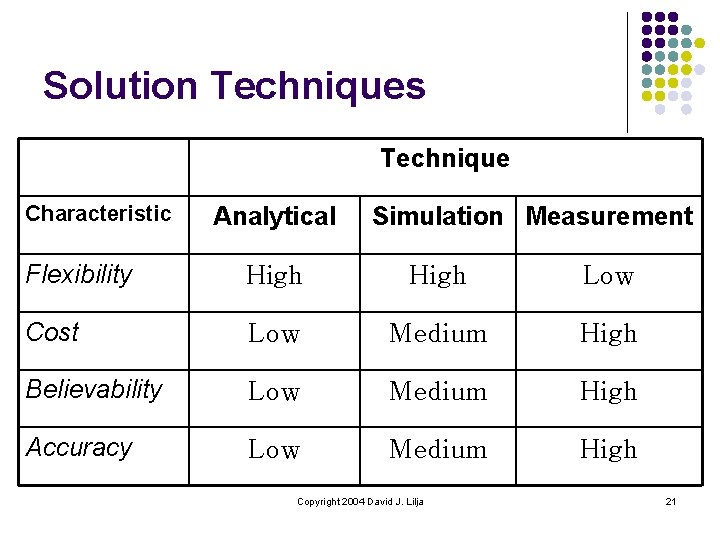

Solution Techniques Technique Characteristic Analytical Simulation Measurement Flexibility Cost Believability Accuracy Copyright 2004 David J. Lilja 20

Solution Techniques Technique Characteristic Analytical Simulation Measurement Flexibility High Low Cost Low Medium High Believability Low Medium High Accuracy Low Medium High Copyright 2004 David J. Lilja 21

Solution Techniques l Never trust results until validated with second solution technique l l l Analytical model l Good model? l Correct solution technique? Simulation l Programming errors? l Untested corner cases? l Are the random numbers really random? Measurement l Measuring perturbs system l → Not really the system we want to measure! Copyright 2004 David J. Lilja 22

Solution Techniques l Sometimes just intuition l Measure memory BW l l 1 Mbyte/sec Clock = 40 ns, 32 -bit bus Clock → 100 Mbytes/sec Is the measurement reasonable? Copyright 2004 David J. Lilja 23

Performance Measurement “Rules of Thumb” l Performance is limited by slowest component l l l Amdahl’s Law of diminishing returns (More later) Copyright 2004 David J. Lilja 24

Performance Measurement “Rules of Thumb” l Know what you are saying/reading l l l Paper design? Simulation results only? Elapsed time versus CPU time? Time-sharing? l l l “My Mac is faster than your Cray. ” Compiler options, OS release, hardware configuration? Slowest component eliminated? l No I/O? Copyright 2004 David J. Lilja 25

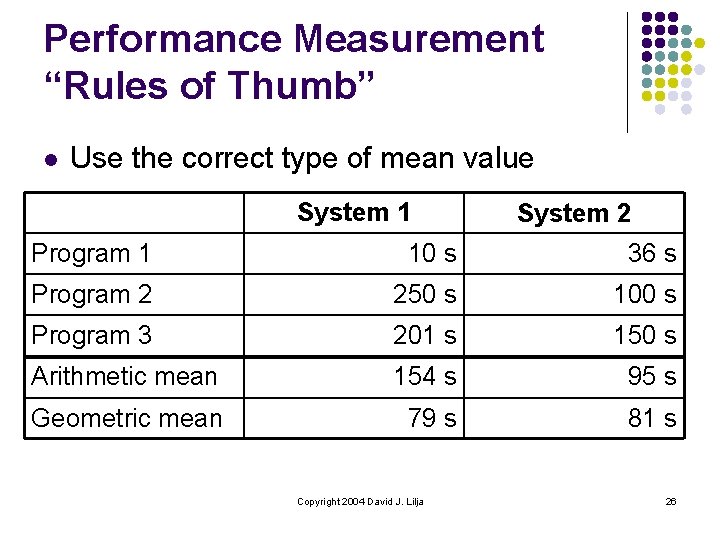

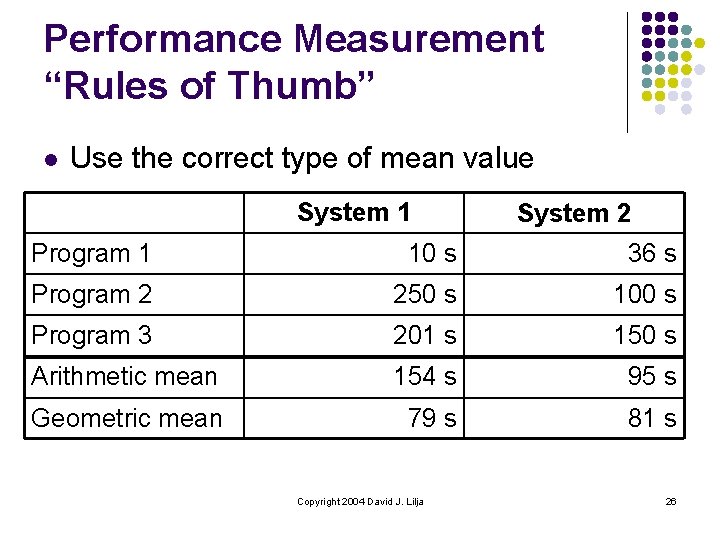

Performance Measurement “Rules of Thumb” l Use the correct type of mean value System 1 System 2 Program 1 10 s 36 s Program 2 250 s 100 s Program 3 201 s 150 s Arithmetic mean 154 s 95 s Geometric mean 79 s 81 s Copyright 2004 David J. Lilja 26

Performance Measurement “Rules of Thumb” l Know the program being tested l Real program? l l l Kernel program? l l Includes all I/O? Real inputs? Exclude I/O, loops, subroutines, … Benchmark program? l l Scaled-down application? Synthetic behavior? Copyright 2004 David J. Lilja 27

Performance Measurement “Rules of Thumb” l Define all terms l E. g. “% parallelized” means l l l % of all loops parallelized? % of execution time spent in parallel code? % of instructions executed in parallel? Parallel speedup / number of processors? Specify all hardware and software features l Options, release numbers, etc. Copyright 2004 David J. Lilja 28

Performance Measurement “Rules of Thumb” l Performance is always relative to something else, such as … l l l Previous machines Expectations Peak performance Competition “Performance may be poor, but can it ever be wrong? ” Jim Kohn, Cray, Inc. Copyright 2004 David J. Lilja 29

Performance Measurement “Rules of Thumb” l Know your system’s hardware and software l Peak capabilities l l Is your system balanced? l l l Are your measurements reasonable? Enough memory, I/O BW, network BW, …? Are there operating modes, strange regimes of behavior? Deviations from language standards? Copyright 2004 David J. Lilja 30

Performance Measurement “Rules of Thumb” l Know your tools l l Timer resolution, accuracy, precision Background noise Compiler does fewer/more optimizations on instrumented code? Perturbations due to measurement l Computer performance measurement uncertainty principle – “Accuracy is inversely proportional to resolution. ” Copyright 2004 David J. Lilja 31

Performance Measurement “Rules of Thumb” l Bottom line Are the results reproducible by someone else using only the information you provided? Copyright 2004 David J. Lilja 32

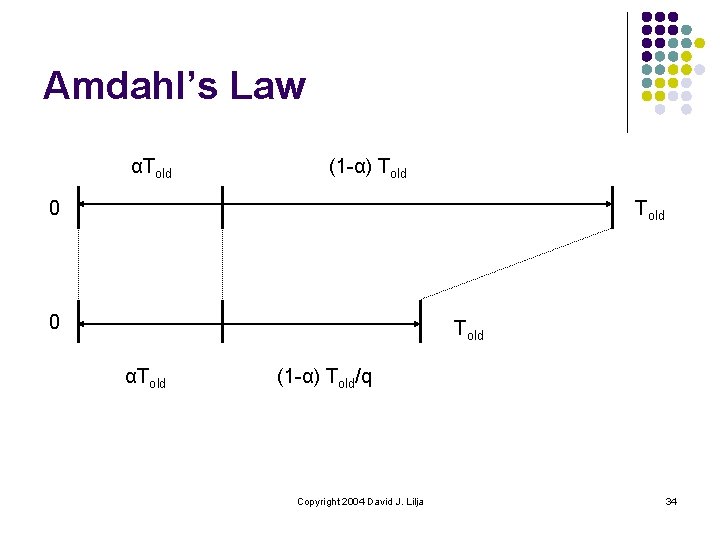

Amdahl’s Law l l Suggested by Gene Amdahl in 1967 Inherent performance limitations from adding more processors Generalized law of diminishing returns “The overall performance improvement observed in an application program is limited by that portion of the application that is unaffected by the change made to the system. ” Copyright 2004 David J. Lilja 33

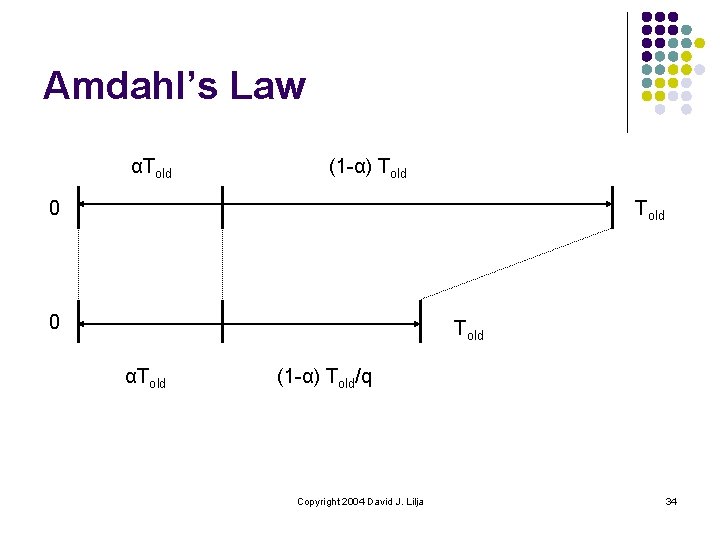

Amdahl’s Law αTold (1 -α) Told 0 Told αTold (1 -α) Told/q Copyright 2004 David J. Lilja 34

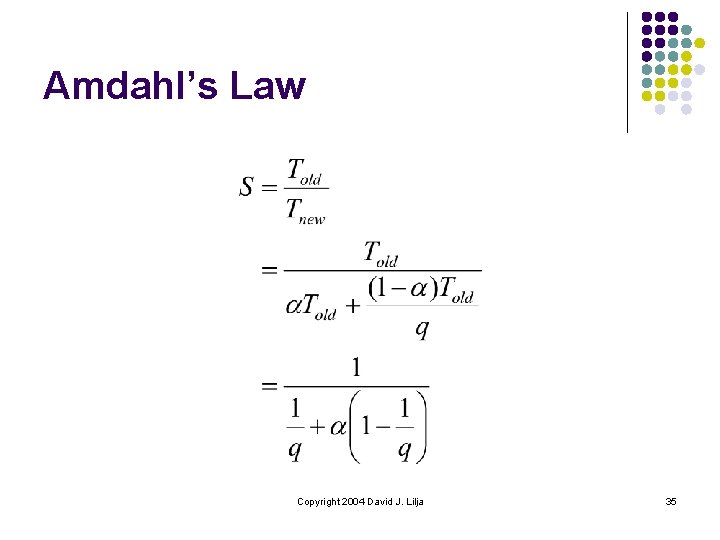

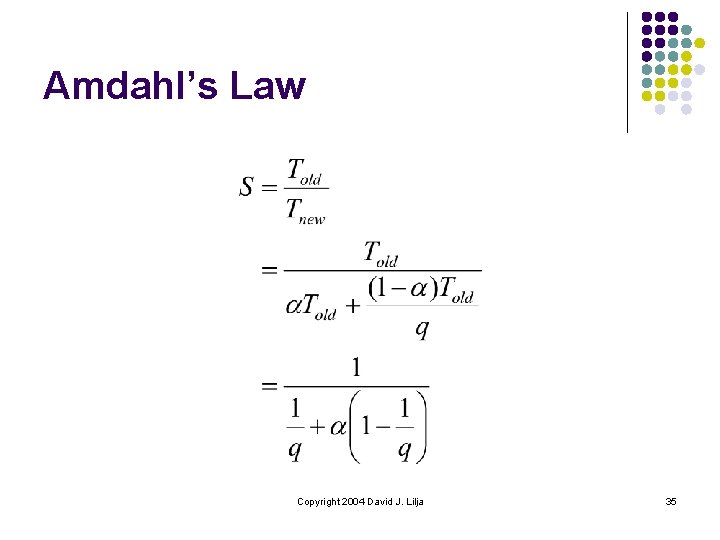

Amdahl’s Law Copyright 2004 David J. Lilja 35

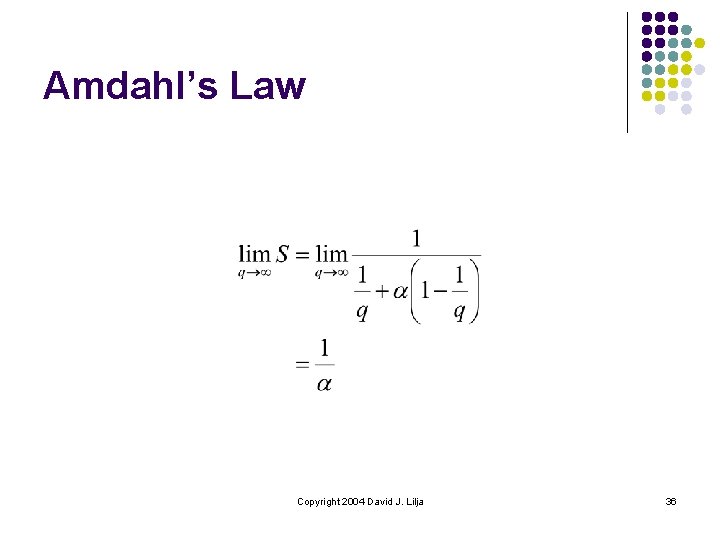

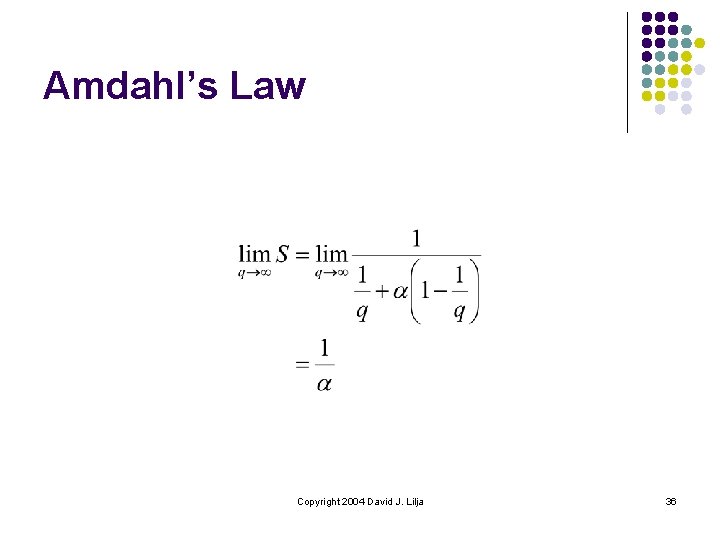

Amdahl’s Law Copyright 2004 David J. Lilja 36

Amdahl’s Law l l → Speedup is limited by part unaffected by a change in the system, α Example l l 10% of program is not parallelizable → maximum speedup < 1/0. 1 = 10 x Copyright 2004 David J. Lilja 37

Important Points l l Performance measurement == decisionmaking process Common goals l l l Performance debugging Set expectations Etc. Copyright 2004 David J. Lilja 38

Important Points l Fundamental solution techniques l l Rules of thumb l l Simulation Measurement Analytical Modeling Bottom line → reproducibility Amdhal’s Law l Performance limited by unaffected part of program Copyright 2004 David J. Lilja 39