
Hetero-Labeled LDA: A partially supervised topic model with heterogeneous label information
Dongyeop Kang (1), Youngja Park (2), Suresh Chari (2)
(1) IT Convergence Laboratory, KAIST Institute, Korea
(2) IBM T. J. Watson Research Center, NY, USA
© 2009 IBM Corporation

Topic Discovery – Supervised
§ Topic classification
  – Learn decision boundaries between classes from labeled data
  – Accurate topic classification for general domains
§ Very hard to build a model for business applications because labeled data is the bottleneck

Topic Discovery – Unsupervised
§ Probabilistic topic modeling
  – Learn a topic distribution for each class from unlabeled data, and assign new data to the topic with the most similar distribution
  – e.g., Latent Dirichlet Allocation (LDA)
§ Not sufficiently accurate or interpretable
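As a point of reference, the standard LDA generative process can be sketched in a few lines. This is an illustrative toy sampler using only the Python standard library, not code from the slides; the function names, the hyperparameter defaults, and the fixed document length are assumptions made for the example.

```python
import random


def sample_dirichlet(alpha, rng):
    """Draw one sample from Dirichlet(alpha) via normalized Gamma draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [d / total for d in draws]


def sample_categorical(probs, rng):
    """Draw an index i with probability probs[i]."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


def generate_corpus(num_docs, doc_len, num_topics, vocab_size,
                    alpha=0.5, beta=0.1, seed=0):
    """Toy LDA sampler: returns (docs, thetas, phi) with words as integer ids."""
    rng = random.Random(seed)
    # phi[k] is the word distribution of topic k.
    phi = [sample_dirichlet([beta] * vocab_size, rng)
           for _ in range(num_topics)]
    docs, thetas = [], []
    for _ in range(num_docs):
        # theta is the topic mixture of this document.
        theta = sample_dirichlet([alpha] * num_topics, rng)
        words = []
        for _ in range(doc_len):
            z = sample_categorical(theta, rng)              # topic of this token
            words.append(sample_categorical(phi[z], rng))   # word given topic
        docs.append(words)
        thetas.append(theta)
    return docs, thetas, phi


docs, thetas, phi = generate_corpus(num_docs=3, doc_len=20,
                                    num_topics=4, vocab_size=30)
```

No label information enters this process anywhere, which is why the learned topics need not line up with the classes a user cares about.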

Topic Discovery – Semi-supervised
§ Supervised topic modeling methods
  – Supervised LDA [Blei & McAuliffe, 2007], Labeled LDA [Ramage, 2009]: document labels provided
§ Semi-supervised topic modeling methods
  – Seeded LDA [Jagarlamudi, 2012], zLDA [Andrzejewski, 2009]: word labels/constraints provided
§ Limitations
  1. Only one kind of domain knowledge is supported
  2. The labels must cover the entire topic space: |L| = |T|
  3. All documents in the training data must be labeled: Dunlabeled = ∅
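To make limitations 2 and 3 concrete: Labeled LDA restricts each document's topic mixture to the topics named by its labels. The sketch below illustrates that restriction only. It assumes topics are indexed 0..K-1 and a symmetric Dirichlet prior, and `restricted_theta` is a hypothetical helper, not code from the cited papers.

```python
import random


def restricted_theta(label_set, num_topics, alpha=0.5, rng=None):
    """Sample a topic mixture that puts mass only on the labeled topics."""
    rng = rng or random.Random(0)
    if not label_set:
        # An unlabeled document has no admissible topics under this scheme.
        raise ValueError("document has no labels")
    if any(k < 0 or k >= num_topics for k in label_set):
        raise ValueError("label outside the topic space")
    allowed = sorted(label_set)
    # Dirichlet draw over the allowed topics via normalized Gamma draws.
    draws = [rng.gammavariate(alpha, 1.0) for _ in allowed]
    total = sum(draws)
    theta = [0.0] * num_topics
    for k, g in zip(allowed, draws):
        theta[k] = g / total
    return theta


theta = restricted_theta({1, 3}, num_topics=5)
```

Topics 0, 2, and 4 receive exactly zero probability here, so any topic that never appears as a label can never be used, and a document with an empty label set cannot be modeled at all.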

Partially Semi-supervised Topic Modeling with Heterogeneous Labels
§ Generating labeled training samples is much more challenging for real-world applications
§ In most large companies, data are generated and managed independently by many different divisions
§ Different types of domain knowledge are available in different divisions
Can we discover accurate and meaningful topics with a small amount of various types of domain knowledge?

Hetero-Labeled LDA: Main Contributions
§ Heterogeneity
  – Domain knowledge (labels) comes in different forms
  – e.g., document labels, topic-indicative features, a partial taxonomy
§ Partialness
  – Only a small number of labels are given
  – We address two kinds of partialness
    • Partially labeled documents: |L| << |T|
    • Partially labeled corpus: |Dlabeled| << |Dunlabeled|
§ Three levels of domain information
  – Group Information:
  – Label Information:
  – Topic Distribution:

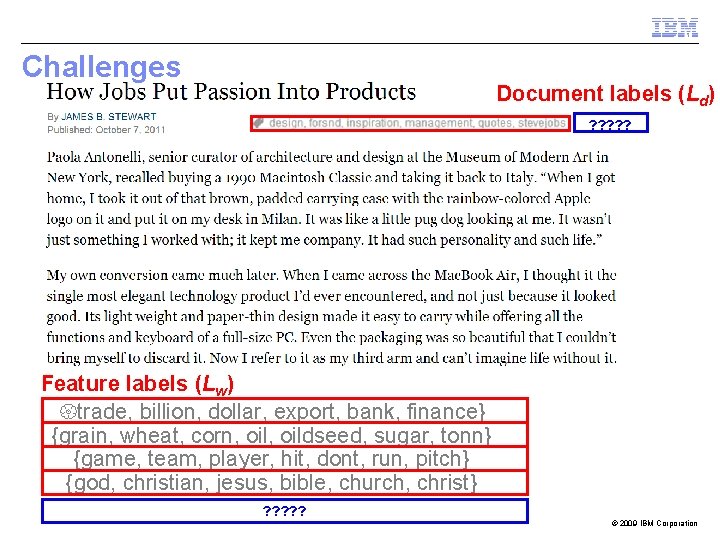

Challenges
Document labels (Ld): ? ? ?
Feature labels (Lw):
{trade, billion, dollar, export, bank, finance}
{grain, wheat, corn, oilseed, sugar, tonn}
{game, team, player, hit, dont, run, pitch}
{god, christian, jesus, bible, church, christ}
? ? ?
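One common way to use feature labels like these is to turn each word set into an asymmetric Dirichlet prior that boosts the seed words of one topic. The sketch below shows that idea in the spirit of seeded topic models. It is an assumption for illustration, not the mechanism HLLDA itself uses, and `build_seeded_prior`, `base`, and `boost` are hypothetical names.

```python
def build_seeded_prior(feature_labels, vocab, base=0.01, boost=1.0):
    """Return one word-prior vector per seeded topic.

    Every word starts at `base`; each seed word of a topic gets `boost`
    added in that topic's vector. Seed words missing from `vocab` are skipped.
    """
    index = {word: i for i, word in enumerate(vocab)}
    priors = []
    for seeds in feature_labels:
        prior = [base] * len(vocab)
        for word in seeds:
            if word in index:
                prior[index[word]] += boost
        priors.append(prior)
    return priors


# Word sets taken from the slide; the vocabulary is just their union here.
feature_labels = [
    ["trade", "billion", "dollar", "export", "bank", "finance"],
    ["grain", "wheat", "corn", "oilseed", "sugar", "tonn"],
    ["game", "team", "player", "hit", "dont", "run", "pitch"],
    ["god", "christian", "jesus", "bible", "church", "christ"],
]
vocab = sorted({w for seeds in feature_labels for w in seeds})
priors = build_seeded_prior(feature_labels, vocab)
```

With only four seeded word sets, any topics beyond those four have no prior knowledge attached, which is the partialness the slide's question marks point at.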

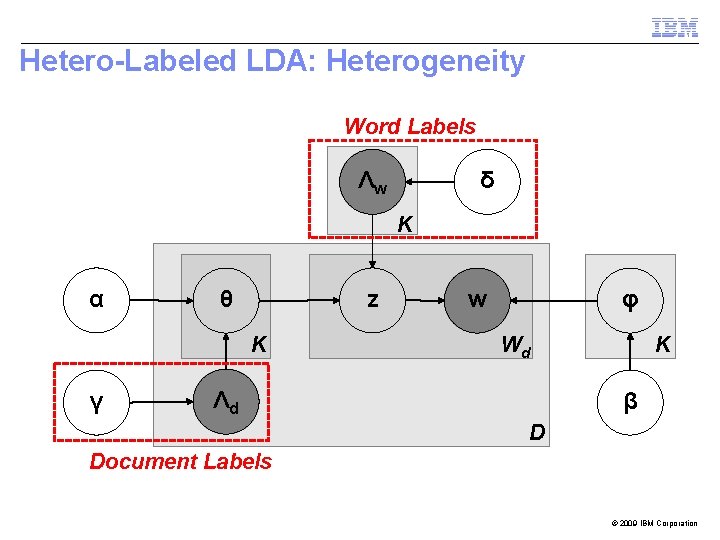

Hetero-Labeled LDA: Heterogeneity
[Figure: plate diagram of the graphical model with word labels (Λw) and document labels (Λd); variables δ, α, γ, β, θ, z, w, φ; plates K, Wd, D]

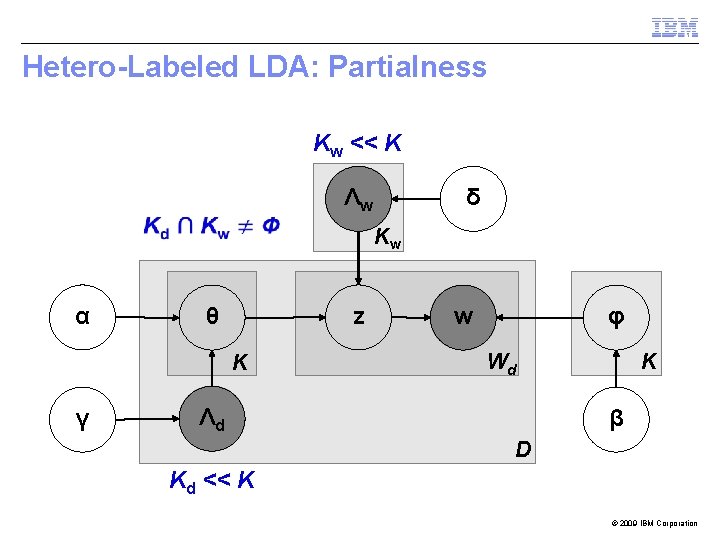

Hetero-Labeled LDA: Partialness
[Figure: plate diagram of the graphical model annotated with Kw << K and Kd << K; variables Λw, Λd, δ, α, γ, β, θ, z, w, φ; plates Kw, K, Wd, D]

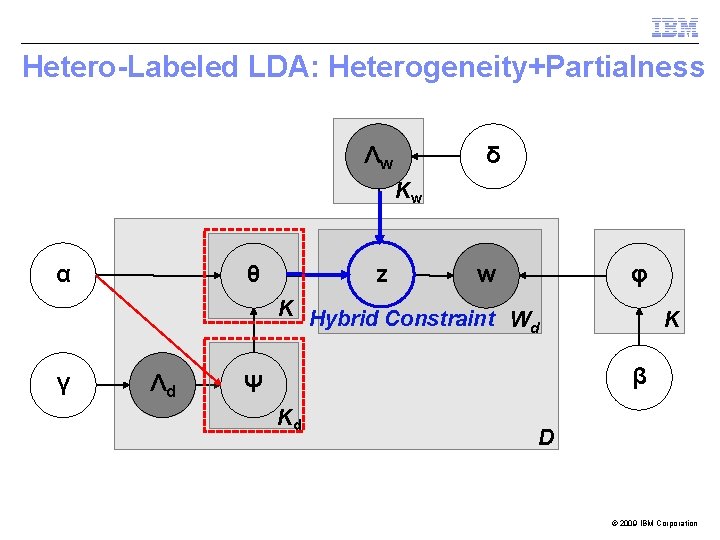

Hetero-Labeled LDA: Heterogeneity + Partialness
[Figure: plate diagram of the graphical model with a hybrid constraint; variables Λw, Λd, δ, α, γ, β, Ψ, θ, z, w, φ; plates Kw, Kd, K, Wd, D]

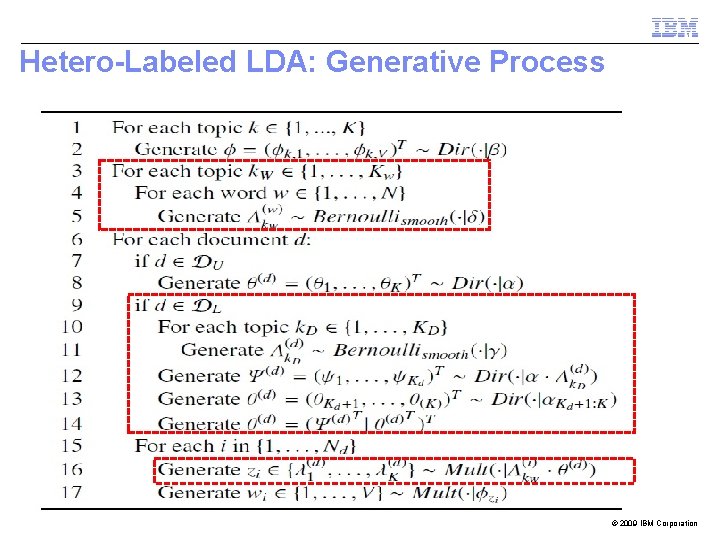

Hetero-Labeled LDA: Generative Process

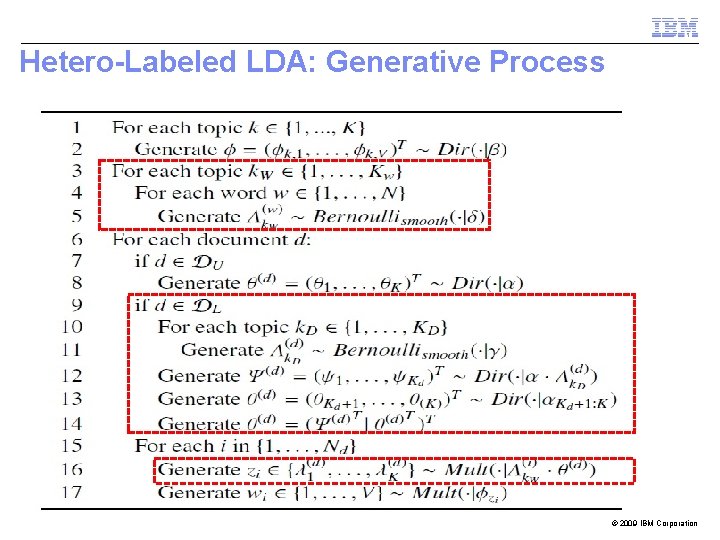

Hetero-Labeled LDA: Generative Process

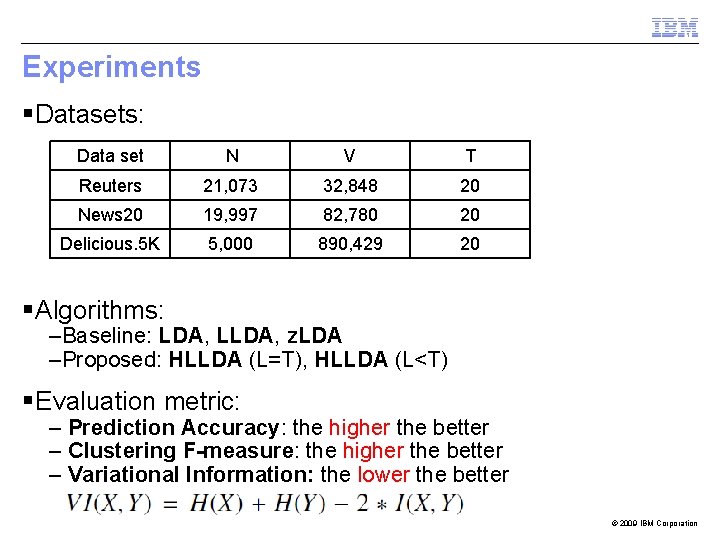

Experiments
§ Datasets:

| Data set | N | V | T |
|---|---|---|---|
| Reuters | 21,073 | 32,848 | 20 |
| News-20 | 19,997 | 82,780 | 20 |
| Delicious.5K | 5,000 | 890,429 | 20 |

§ Algorithms:
  – Baseline: LDA, LLDA, zLDA
  – Proposed: HLLDA (L=T), HLLDA (L<T)
§ Evaluation metrics:
  – Prediction accuracy: the higher the better
  – Clustering F-measure: the higher the better
  – Variation of Information: the lower the better
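The slides name the clustering metrics without defining them. As a rough sketch, the information-based metric is taken here to be Variation of Information, VI(A, B) = H(A|B) + H(B|A), and the F-measure is assumed to be the pairwise variant computed over document pairs. The slides do not say which F-measure definition was used, so that choice and the function names are assumptions.

```python
import math
from collections import Counter
from itertools import combinations


def variation_of_information(labels_a, labels_b):
    """VI between two clusterings of the same items (natural log); 0 is best."""
    n = len(labels_a)
    if n == 0 or n != len(labels_b):
        raise ValueError("clusterings must be non-empty and of equal length")
    count_a = Counter(labels_a)
    count_b = Counter(labels_b)
    joint = Counter(zip(labels_a, labels_b))
    vi = 0.0
    for (a, b), n_ab in joint.items():
        p_ab = n_ab / n
        p_a = count_a[a] / n
        p_b = count_b[b] / n
        # Each cell contributes to H(B|A) and to H(A|B).
        vi -= p_ab * (math.log(p_ab / p_a) + math.log(p_ab / p_b))
    return vi


def pairwise_f_measure(true_labels, pred_clusters):
    """F1 over item pairs: a pair is positive if both items share a cluster."""
    tp = fp = fn = 0
    for i, j in combinations(range(len(true_labels)), 2):
        same_true = true_labels[i] == true_labels[j]
        same_pred = pred_clusters[i] == pred_clusters[j]
        if same_pred and same_true:
            tp += 1
        elif same_pred:
            fp += 1
        elif same_true:
            fn += 1
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)
```

Both functions compare a predicted clustering against reference labels item by item, so they are insensitive to how the cluster ids themselves are numbered.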

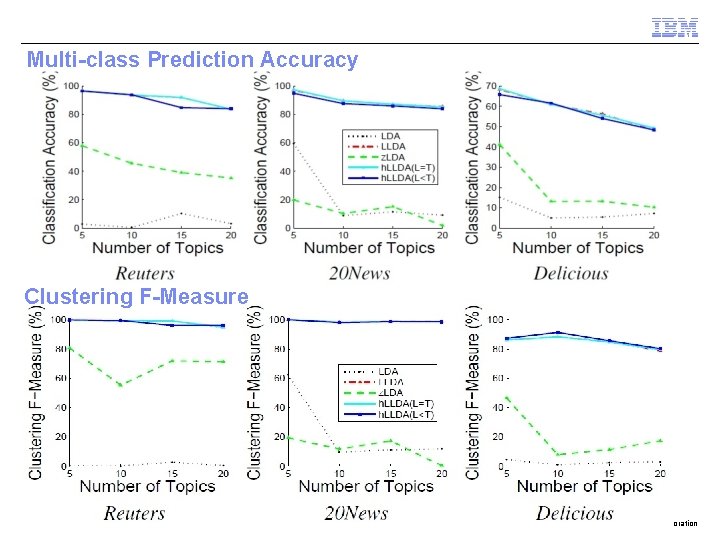

Experiment: Questions
§ Q1. How does a mixture of heterogeneous label information improve classification and clustering performance?

Multi-class Prediction Accuracy
Clustering F-Measure

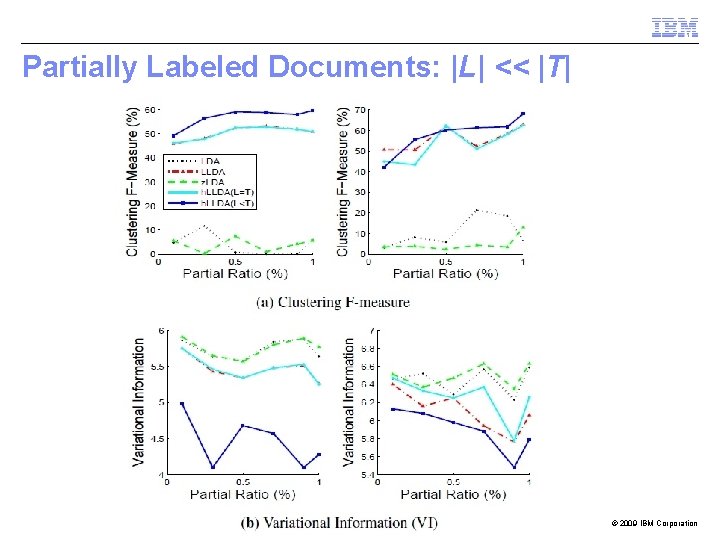

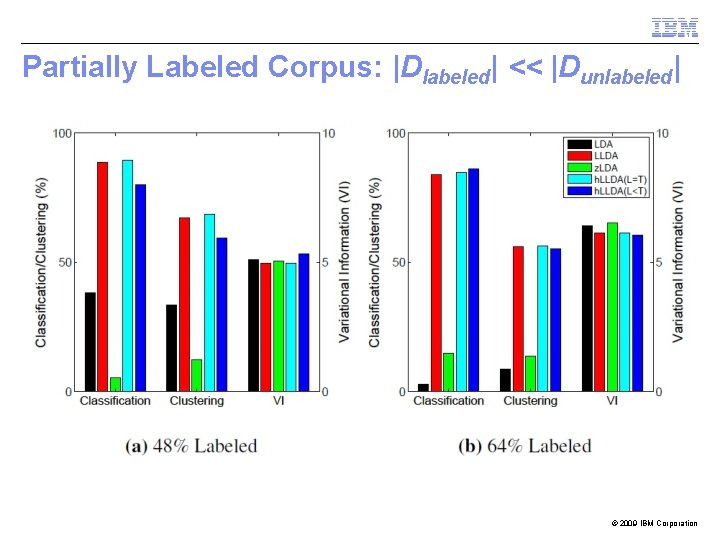

Experiment: Questions
§ Q2. How does HLLDA improve performance on partially labeled documents?
  – Partially labeled corpus: |Dlabeled| << |Dunlabeled|
  – Partially labeled document: |L| << |T|
    For a document, the provided label set covers only a subset of the topics the document belongs to. Our goal is to predict the full set of topics for each document.
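The two kinds of partialness can be simulated from a fully labeled corpus by hiding labels. The slides do not describe how the partial labels were produced, so the following is only a hypothetical setup: `hide_labels`, `keep_doc_fraction`, and `keep_label_fraction` are illustrative names, and the random sampling scheme is an assumption.

```python
import random


def hide_labels(doc_labels, keep_doc_fraction, keep_label_fraction, seed=0):
    """Return a partially labeled copy of `doc_labels` (a list of label sets).

    keep_doc_fraction   -- chance that a document keeps any labels at all
                           (partially labeled corpus)
    keep_label_fraction -- share of labels kept within a labeled document,
                           with at least one kept (partially labeled document)
    """
    rng = random.Random(seed)
    partial = []
    for labels in doc_labels:
        if not labels or rng.random() >= keep_doc_fraction:
            partial.append(set())  # this document becomes unlabeled
            continue
        ordered = sorted(labels)
        n_keep = max(1, round(keep_label_fraction * len(ordered)))
        partial.append(set(rng.sample(ordered, n_keep)))
    return partial


full = [{0, 1, 2, 3}, {1, 2}, {4}] * 10
partial = hide_labels(full, keep_doc_fraction=0.5, keep_label_fraction=0.5)
```

A model would then be trained on `partial` and evaluated on how well it recovers the hidden part of `full`, which matches the stated goal of predicting the full topic set per document.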

Partially Labeled Documents: |L| << |T|

Partially Labeled Corpus: |Dlabeled| << |Dunlabeled|

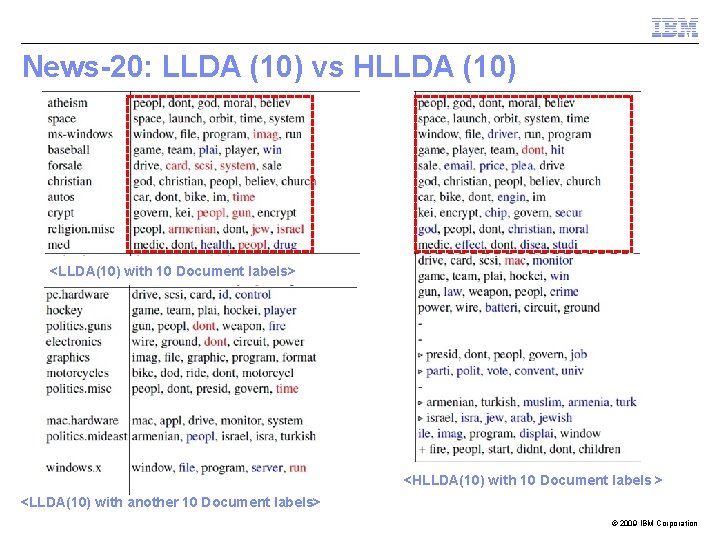

Experiment: Questions
§ Q3. How interpretable are the generated topics?
  – Comparison between LLDA and HLLDA
  – User study for topic quality

News-20: LLDA (10) vs HLLDA (10)
<LLDA(10) with 10 Document labels>
<HLLDA(10) with 10 Document labels>
<LLDA(10) with another 10 Document labels>

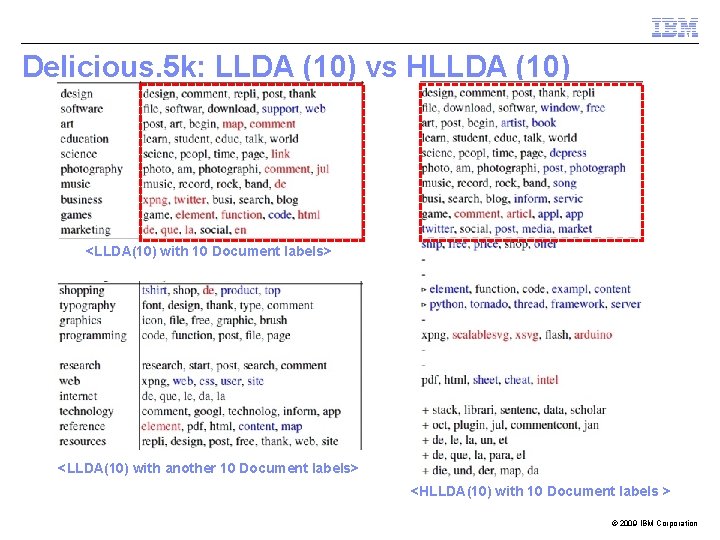

Delicious.5K: LLDA (10) vs HLLDA (10)
<LLDA(10) with 10 Document labels>
<LLDA(10) with another 10 Document labels>
<HLLDA(10) with 10 Document labels>

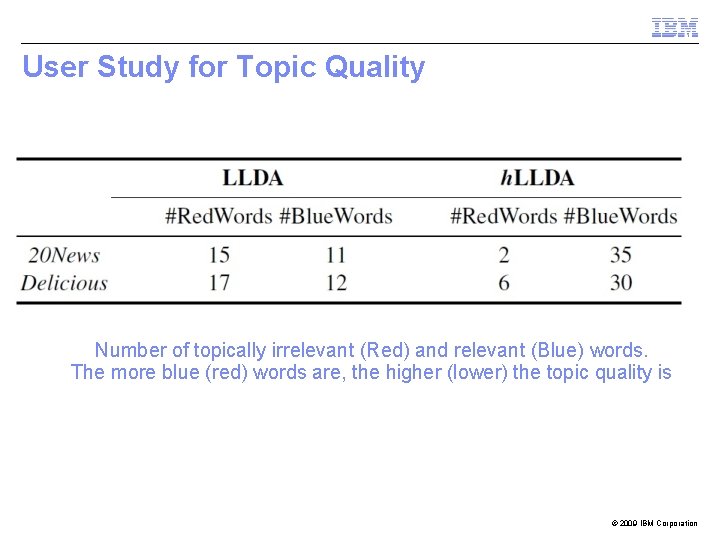

User Study for Topic Quality
Number of topically irrelevant (red) and relevant (blue) words. The more blue words a topic has, the higher its quality; the more red words, the lower.

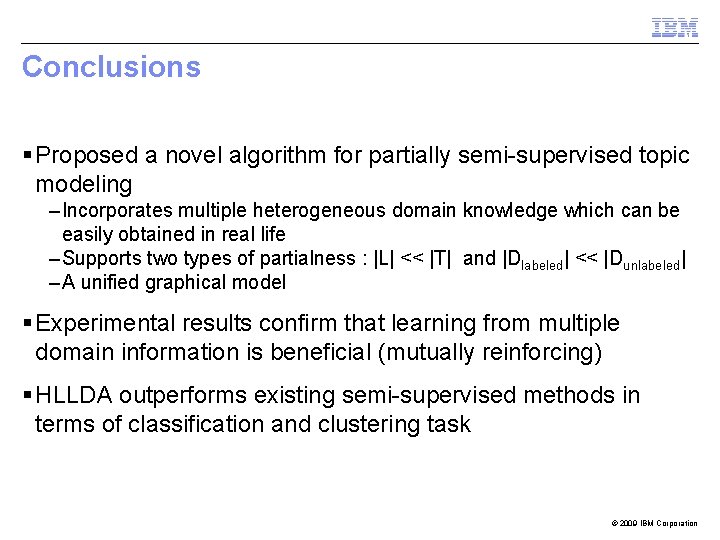

Conclusions
§ Proposed a novel algorithm for partially semi-supervised topic modeling
  – Incorporates multiple heterogeneous kinds of domain knowledge that can be easily obtained in real life
  – Supports two types of partialness: |L| << |T| and |Dlabeled| << |Dunlabeled|
  – A unified graphical model
§ Experimental results confirm that learning from multiple kinds of domain information is beneficial (mutually reinforcing)
§ HLLDA outperforms existing semi-supervised methods on classification and clustering tasks

THANK YOU
contact: young_park@us.ibm.com
