Partially Supervised Classification of Text Documents
by Bing Liu, Philip Yu, and Xiaoli Li
Presented by: Rick Knowles, 7 April 2005

Agenda

- Problem Statement
- Related Work
- Theoretical Foundations
- Proposed Technique
- Evaluation
- Conclusions

Problem Statement: Common Approach

- Text categorization: the automated assignment of text documents to pre-defined classes
- Common approach: supervised learning
  - Manually label a set of documents with the pre-defined classes
  - Use a learning algorithm to build a classifier

(Figure: a scatter of labeled positive (+) and negative (_) documents.)

Problem Statement: Common Approach (cont.)

- Problem: the large number of labeled training documents needed to build the classifier is a bottleneck
- Nigam et al. have shown that using a large dose of unlabeled data can help

(Figure: a few labeled positive (+) and negative (_) documents among many unlabeled documents (.).)

A different approach: Partially supervised classification

- Two-class problem: positive and unlabeled
- Key feature: there are no labeled negative documents
- Can be posed as a constrained optimization problem
  - Develop a function that correctly classifies all positive docs and minimizes the number of mixed docs classified as positive
  - Such a function will have an expected error rate of no more than $\epsilon$
- Exemplar: finding matching (i.e., positive) documents in a large collection such as the Web
  - Matching documents are positive
  - All others are negative

(Figure: a few positive (+) documents among many unlabeled documents (.).)

Related Work

- Text classification techniques
  - Naïve Bayesian
  - K-nearest neighbor
  - Support vector machines
  - Each requires labeled data for all classes
- Problem is similar to traditional information retrieval
  - Rank-orders documents according to their similarity to the query document
  - Does not perform document classification

Theoretical Foundations

- Some discussion regarding theoretical foundations, focused primarily on
  - Minimization of the probability of error
  - Expected recall and precision of functions

$$
\Pr[f(X) \neq Y] = \Pr[f(X)=1] - \Pr[Y=1] + 2\,\Pr[f(X)=0 \mid Y=1]\,\Pr[Y=1] \tag{1}
$$

- Painful, painful… but it did show that you can build accurate classifiers with high probability when sufficient documents in P (the positive document set) and M (the unlabeled set) are available.
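For anyone who wants the step behind the error identity (1), here is a short derivation. It is ordinary probability bookkeeping written out for this summary, not a quotation from the paper; $f$ is the classifier and $Y$ the true label.

$$
\begin{aligned}
\Pr[f(X) \neq Y] &= \Pr[f(X)=1,\, Y=0] + \Pr[f(X)=0,\, Y=1] \\
\Pr[f(X)=1,\, Y=0] &= \Pr[f(X)=1] - \Pr[f(X)=1,\, Y=1] \\
&= \Pr[f(X)=1] - \bigl(\Pr[Y=1] - \Pr[f(X)=0,\, Y=1]\bigr) \\
\Rightarrow\ \Pr[f(X) \neq Y] &= \Pr[f(X)=1] - \Pr[Y=1] + 2\,\Pr[f(X)=0,\, Y=1] \\
&= \Pr[f(X)=1] - \Pr[Y=1] + 2\,\Pr[f(X)=0 \mid Y=1]\,\Pr[Y=1]
\end{aligned}
$$

Since $\Pr[Y=1]$ does not depend on $f$, holding $\Pr[f(X)=0 \mid Y=1]$ small (high recall on the positives) while minimizing $\Pr[f(X)=1]$ keeps the error small, which is why the problem can be posed without any labeled negatives.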

Theoretical Foundations (cont.)

- Two serious practical drawbacks to the theoretical method
  - The constrained optimization problem may not be easy to solve for the function class in which we are interested
  - It is not easy to choose a desired recall level that will give a good classifier using the function class we are using

Proposed Technique

- Theory be darned!
- The paper introduces a practical technique based on the naïve Bayes classifier and the Expectation-Maximization (EM) algorithm
- After introducing a general technique, the authors offer an enhancement using spies

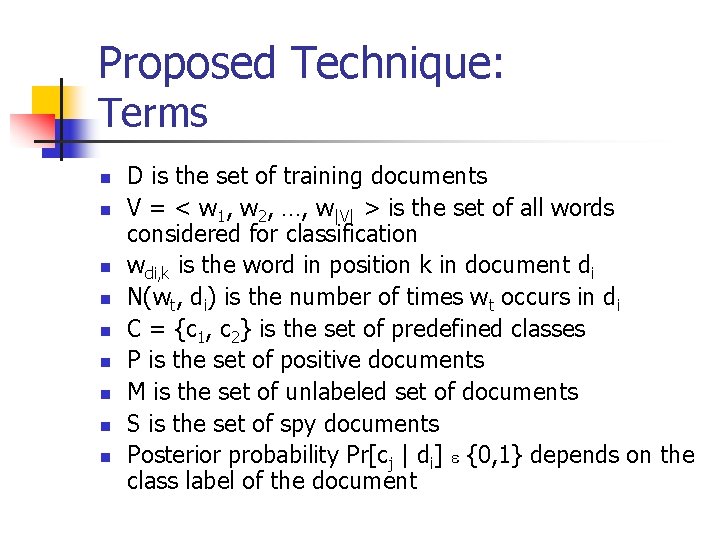

Proposed Technique: Terms

- D is the set of training documents
- $V = \langle w_1, w_2, \ldots, w_{|V|} \rangle$ is the set of all words considered for classification
- $w_{d_i,k}$ is the word in position k in document $d_i$
- $N(w_t, d_i)$ is the number of times $w_t$ occurs in $d_i$
- $C = \{c_1, c_2\}$ is the set of predefined classes
- P is the set of positive documents
- M is the set of unlabeled documents
- S is the set of spy documents
- The posterior probability $\Pr[c_j \mid d_i] \in \{0, 1\}$ depends on the class label of the document

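Proposed Technique: naïve Bayesian classifier (NB-C)

$$
\Pr[c_j] = \frac{\sum_{i=1}^{|D|} \Pr[c_j \mid d_i]}{|D|} \tag{2}
$$

$$
\Pr[w_t \mid c_j] = \frac{1 + \sum_{i=1}^{|D|} N(w_t, d_i)\,\Pr[c_j \mid d_i]}{|V| + \sum_{s=1}^{|V|} \sum_{i=1}^{|D|} N(w_s, d_i)\,\Pr[c_j \mid d_i]} \tag{3}
$$

and, assuming the words are independent given the class,

$$
\Pr[c_j \mid d_i] = \frac{\Pr[c_j] \prod_{k=1}^{|d_i|} \Pr[w_{d_i,k} \mid c_j]}{\sum_{r=1}^{|C|} \Pr[c_r] \prod_{k=1}^{|d_i|} \Pr[w_{d_i,k} \mid c_r]} \tag{4}
$$

The class with the highest $\Pr[c_j \mid d_i]$ is assigned as the class of the doc.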
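To make equations (2) to (4) concrete, here is a minimal pure-Python sketch for the two-class case. The function names `train_nb` and `posterior_c1`, the word-id encoding of documents, and the toy corpus are assumptions made for illustration; none of them come from the paper. The posterior is computed in log space, which is a numerical convenience rather than part of the equations.

```python
import math

def train_nb(docs, post_c1, vocab_size):
    """Estimate NB-C parameters from (possibly soft) class labels.

    docs: list of documents, each a list of word ids in range(vocab_size)
    post_c1: Pr[c1|d_i] for each document; Pr[c2|d_i] is 1 - Pr[c1|d_i]
    Returns (priors, word_probs), each indexed 0 for c1 and 1 for c2.
    """
    priors, word_probs = [], []
    for j in range(2):
        weights = [p if j == 0 else 1.0 - p for p in post_c1]
        priors.append(sum(weights) / len(docs))          # equation (2)
        counts = [0.0] * vocab_size
        for doc, w in zip(docs, weights):
            for t in doc:
                counts[t] += w                           # N(w_t, d_i) * Pr[c_j|d_i]
        total = sum(counts)
        # Equation (3): add-one smoothing over the vocabulary.
        word_probs.append([(1.0 + c) / (vocab_size + total) for c in counts])
    return priors, word_probs

def posterior_c1(doc, priors, word_probs):
    """Pr[c1|doc] from equation (4), using logs to avoid underflow."""
    scores = []
    for j in range(2):
        if priors[j] == 0.0:
            scores.append(float("-inf"))
            continue
        scores.append(math.log(priors[j])
                      + sum(math.log(word_probs[j][t]) for t in doc))
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    return exps[0] / sum(exps)

# Toy corpus (hypothetical): word ids 0-1 appear in c1 docs, 2-3 in c2 docs.
docs = [[0, 1, 0], [1, 1, 0], [2, 3, 2], [3, 3, 2]]
priors, word_probs = train_nb(docs, [1.0, 1.0, 0.0, 0.0], vocab_size=4)
p = posterior_c1([0, 1], priors, word_probs)
label = "c1" if p >= 0.5 else "c2"
```

Passing soft labels in `post_c1` rather than hard 0/1 labels is what lets the same training routine be reused inside EM.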

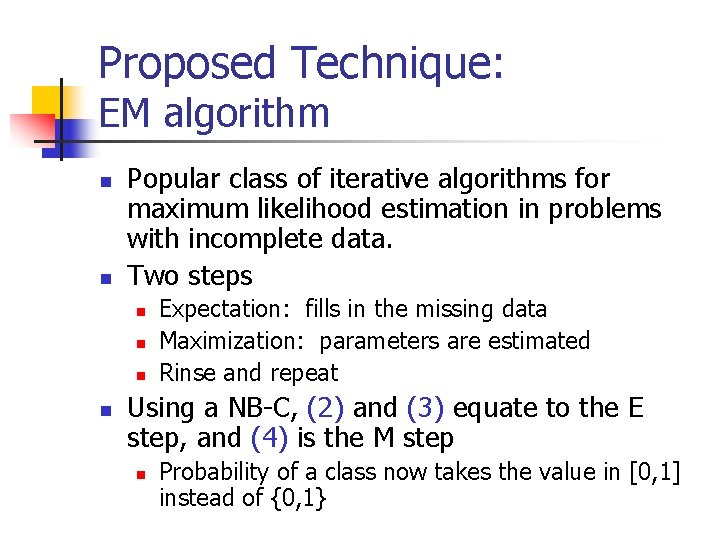

Proposed Technique: EM algorithm

- Popular class of iterative algorithms for maximum likelihood estimation in problems with incomplete data
- Two steps
  - Expectation: fills in the missing data
  - Maximization: parameters are estimated
  - Rinse and repeat
- Using an NB-C, (4) fills in the class labels and so corresponds to the E step, while (2) and (3) estimate the parameters and so correspond to the M step
  - The probability of a class now takes a value in [0, 1] instead of {0, 1}

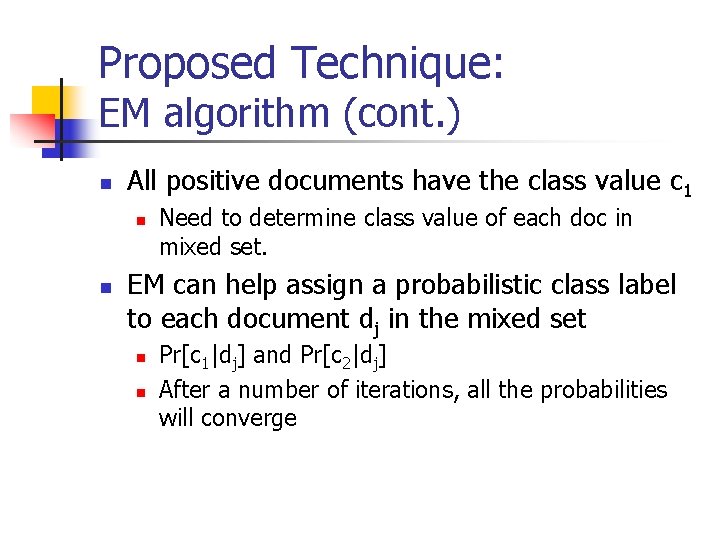

Proposed Technique: EM algorithm (cont.)

- All positive documents have the class value $c_1$
- Need to determine the class value of each doc in the mixed set
  - EM can help assign a probabilistic class label to each document $d_j$ in the mixed set: $\Pr[c_1 \mid d_j]$ and $\Pr[c_2 \mid d_j]$
  - After a number of iterations, all the probabilities will converge

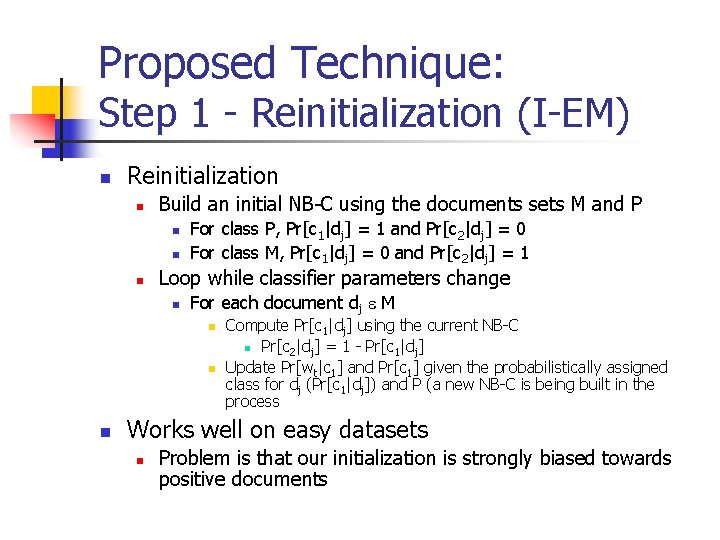

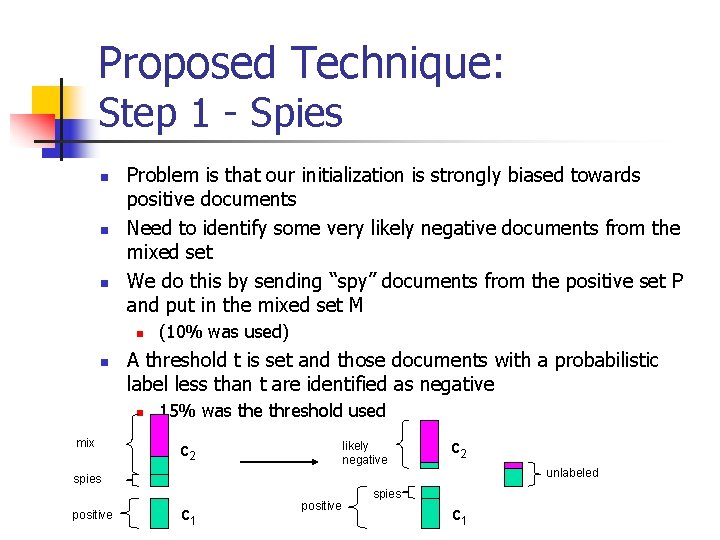

Proposed Technique: Step 1 - Reinitialization (I-EM)

- Reinitialization
  - Build an initial NB-C using the document sets M and P
    - For each document $d_j$ in P, $\Pr[c_1 \mid d_j] = 1$ and $\Pr[c_2 \mid d_j] = 0$
    - For each document $d_j$ in M, $\Pr[c_1 \mid d_j] = 0$ and $\Pr[c_2 \mid d_j] = 1$
  - Loop while the classifier parameters change
    - For each document $d_j \in M$
      - Compute $\Pr[c_1 \mid d_j]$ using the current NB-C
      - $\Pr[c_2 \mid d_j] = 1 - \Pr[c_1 \mid d_j]$
    - Update $\Pr[w_t \mid c_1]$ and $\Pr[c_1]$ given the probabilistically assigned class for $d_j$ ($\Pr[c_1 \mid d_j]$) and P (a new NB-C is being built in the process)
- Works well on easy datasets
- Problem is that our initialization is strongly biased towards positive documents
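The I-EM loop above can be sketched in pure Python as follows. This is an illustrative sketch, not the authors' implementation: the names `train_nb`, `posterior_c1` and `i_em`, the word-id document encoding, the stopping rule (largest change in the posteriors of M, standing in for "parameters change"), and the `max_iter` and `tol` values are all assumptions.

```python
import math

def train_nb(docs, post_c1, vocab_size):
    """M step: estimate class priors and smoothed word probabilities."""
    priors, word_probs = [], []
    for j in range(2):
        weights = [p if j == 0 else 1.0 - p for p in post_c1]
        priors.append(sum(weights) / len(docs))
        counts = [0.0] * vocab_size
        for doc, w in zip(docs, weights):
            for t in doc:
                counts[t] += w
        total = sum(counts)
        word_probs.append([(1.0 + c) / (vocab_size + total) for c in counts])
    return priors, word_probs

def posterior_c1(doc, priors, word_probs):
    """E step for one document: Pr[c1|doc] under the current NB-C."""
    scores = []
    for j in range(2):
        if priors[j] == 0.0:
            scores.append(float("-inf"))
            continue
        scores.append(math.log(priors[j])
                      + sum(math.log(word_probs[j][t]) for t in doc))
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    return exps[0] / sum(exps)

def i_em(pos_docs, mixed_docs, vocab_size, max_iter=50, tol=1e-6):
    """Run I-EM: P stays fixed at c1, documents in M are relabelled softly."""
    docs = pos_docs + mixed_docs
    n_pos = len(pos_docs)
    # Initial labels: every doc in P is c1, every doc in M is c2.
    post = [1.0] * n_pos + [0.0] * len(mixed_docs)
    model = train_nb(docs, post, vocab_size)
    for _ in range(max_iter):
        new_mixed = [posterior_c1(d, *model) for d in mixed_docs]
        change = max((abs(a - b) for a, b in zip(new_mixed, post[n_pos:])),
                     default=0.0)
        post = [1.0] * n_pos + new_mixed
        model = train_nb(docs, post, vocab_size)  # a new NB-C each round
        if change < tol:
            break
    return model, post[n_pos:]

# Toy data (hypothetical): the first mixed doc resembles the positives.
pos_docs = [[0, 1, 0], [1, 1, 0]]
mixed_docs = [[0, 1, 1], [2, 3, 2], [3, 3, 2], [2, 2, 3]]
model, mixed_post = i_em(pos_docs, mixed_docs, vocab_size=4)
```

On this toy data the hidden positive in the mixed set ends up with a high `Pr[c1|d]` and the other three with low values, even though all four started out labelled c2.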

Proposed Technique: Step 1 - Spies

- Problem is that our initialization is strongly biased towards positive documents
- Need to identify some very likely negative documents from the mixed set
- We do this by sending "spy" documents from the positive set P into the mixed set M (10% was used)
  - A threshold t is set, and those documents with a probabilistic label less than t are identified as negative
  - 15% was the threshold used

(Figure labels: mix, spies, positive ($c_1$), likely negative ($c_2$), unlabeled.)

Proposed Technique: Step 1 - Spies (cont.)

- N (most likely negative docs) = U (unlabeled docs) = ∅
- S = sample(P, s%)
- MS = M ∪ S
- P = P − S
- Assign every document $d_i$ in P the class $c_1$
- Assign every document $d_j$ in MS the class $c_2$
- Run I-EM(MS, P)
- Classify each document $d_j$ in MS
- Determine the probability threshold t using S
- For each document $d_j$ in M
  - If its probability $\Pr[c_1 \mid d_j] < t$, then N = N ∪ {$d_j$}
  - Else U = U ∪ {$d_j$}
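The sampling and thresholding parts of the spy procedure can be sketched on their own, taking the posteriors produced by I-EM as given. The slides do not say how t is derived from S, so the rule used here (pick t so that at most a `noise` fraction of spies fall below it, with 15% as the default) is an assumption, as are the function names `sample_spies`, `spy_threshold` and `split_mixed` and the example numbers. `sample_spies` assumes a non-empty positive set.

```python
import random

def sample_spies(pos_ids, s=0.10, seed=0):
    """Split positive document ids into (spies S, remaining P - S)."""
    rng = random.Random(seed)
    k = max(1, round(len(pos_ids) * s))
    chosen = set(rng.sample(pos_ids, k))
    spies = [i for i in pos_ids if i in chosen]
    rest = [i for i in pos_ids if i not in chosen]
    return spies, rest

def spy_threshold(spy_probs, noise=0.15):
    """Pick t so that at most a `noise` fraction of spies score below t.

    spy_probs: Pr[c1|d] for each spy after running I-EM(MS, P).
    """
    ranked = sorted(spy_probs)
    k = int(len(ranked) * noise)  # spies tolerated below the threshold
    return ranked[k]

def split_mixed(mixed_probs, t):
    """Partition M into likely negatives N and still-unlabeled U by index."""
    likely_neg = [j for j, p in enumerate(mixed_probs) if p < t]
    unlabeled = [j for j, p in enumerate(mixed_probs) if p >= t]
    return likely_neg, unlabeled

# Hypothetical posteriors after I-EM: ten spies and four mixed documents.
spies, rest = sample_spies(list(range(20)))
spy_probs = [0.9, 0.8, 0.95, 0.2, 0.85, 0.7, 0.99, 0.6, 0.75, 0.88]
t = spy_threshold(spy_probs)
N, U = split_mixed([0.1, 0.65, 0.59, 0.6], t)
```

The spies behave like the unknown positives hidden in M, so a document in M that scores below almost every spy is unlikely to be positive; tolerating a small fraction of low-scoring spies guards against outliers among the spies themselves.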

Proposed Technique: Step 2 - Building the final classifier

- Using P, N and U as developed in the previous step
  - Put all the spy documents S back in P
  - Assign $\Pr[c_1 \mid d_i] = 1$ for all documents in P
  - Assign $\Pr[c_2 \mid d_i] = 1$ for all documents in N. This will change with each iteration of EM
  - Each doc $d_k$ in U is not assigned a label initially. At the end of the first iteration, it will have a probabilistic label $\Pr[c_1 \mid d_k]$
  - Run EM using the document sets P, N and U until it converges
- When EM stops, the final classifier has been produced
- This two-step technique is called S-EM (Spy EM)

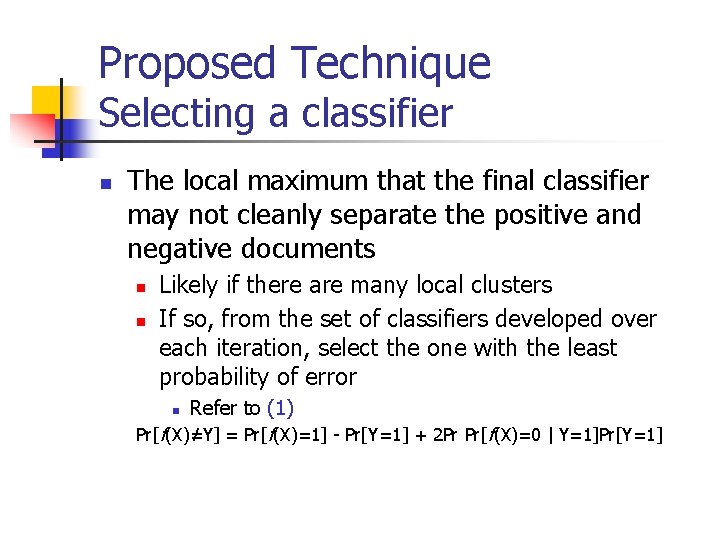

Proposed Technique: Selecting a classifier

- The local maximum that the final classifier reaches may not cleanly separate the positive and negative documents
  - Likely if there are many local clusters
  - If so, from the set of classifiers developed over each iteration, select the one with the least probability of error
  - Refer to (1):

$$
\Pr[f(X) \neq Y] = \Pr[f(X)=1] - \Pr[Y=1] + 2\,\Pr[f(X)=0 \mid Y=1]\,\Pr[Y=1]
$$
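As a sanity check on the error identity (1), the following sketch compares the directly counted error rate with the right-hand side of the identity on a small labelled example. It only verifies the identity; it does not show how the paper estimates these quantities when no negative labels are available. The function name `error_two_ways` and the toy predictions are made up for illustration, and the truth list is assumed to contain at least one positive.

```python
def error_two_ways(pred, truth):
    """Return (direct error rate, error rate via identity (1))."""
    n = len(truth)
    pr_f1 = sum(pred) / n                       # Pr[f(X) = 1]
    pr_y1 = sum(truth) / n                      # Pr[Y = 1]
    pos_preds = [p for p, y in zip(pred, truth) if y == 1]
    miss = pos_preds.count(0) / len(pos_preds)  # Pr[f(X) = 0 | Y = 1]
    direct = sum(1 for p, y in zip(pred, truth) if p != y) / n
    via_identity = pr_f1 - pr_y1 + 2 * miss * pr_y1
    return direct, via_identity

# Hypothetical predictions and true labels (1 = positive, 0 = negative).
pred = [1, 1, 0, 0, 1, 0, 0, 1]
truth = [1, 0, 0, 1, 1, 0, 0, 0]
direct, via_identity = error_two_ways(pred, truth)
```

Both values come out to 3/8 here: three of the eight predictions are wrong, and the identity gives 0.5 - 0.375 + 2 * (1/3) * 0.375.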

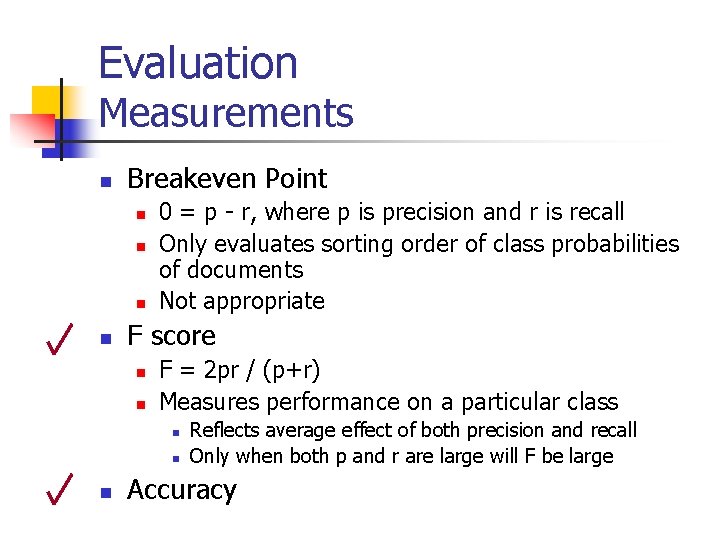

Evaluation Measurements

- Breakeven point
  - 0 = p - r, where p is precision and r is recall
  - Only evaluates the sorting order of the class probabilities of documents
  - Not appropriate
- F score
  - F = 2pr / (p + r)
  - Measures performance on a particular class
  - Reflects the average effect of both precision and recall
  - Only when both p and r are large will F be large
- Accuracy
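A small sketch of how the F score and accuracy are computed from hard predictions, with the positive class as the class of interest. The function name `evaluate` and the example labels are hypothetical; returning 0.0 when a denominator is zero is a convention chosen here, not something the slides specify.

```python
def evaluate(pred, truth):
    """Return (precision, recall, F score, accuracy) for the positive class."""
    tp = sum(1 for p, y in zip(pred, truth) if p == 1 and y == 1)
    fp = sum(1 for p, y in zip(pred, truth) if p == 1 and y == 0)
    fn = sum(1 for p, y in zip(pred, truth) if p == 0 and y == 1)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f_score = (2 * precision * recall / (precision + recall)
               if precision + recall else 0.0)
    accuracy = sum(1 for p, y in zip(pred, truth) if p == y) / len(truth)
    return precision, recall, f_score, accuracy

# Hypothetical predictions and true labels (1 = positive, 0 = negative).
pred = [1, 1, 0, 0, 1, 0, 0, 1]
truth = [1, 0, 0, 1, 1, 0, 0, 0]
p, r, f, acc = evaluate(pred, truth)
```

Here precision is 0.5 and recall is 2/3, so F is 4/7 (about 0.57) while accuracy is 0.625. F stays low whenever either precision or recall is low, whereas accuracy can look respectable simply because negatives dominate.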

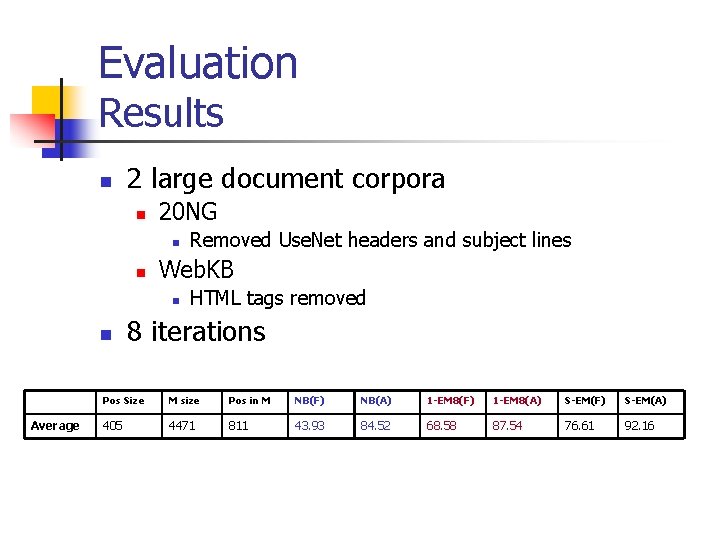

Evaluation Results

- 2 large document corpora
  - 20NG: removed UseNet headers and subject lines
  - WebKB: HTML tags removed
- 8 iterations

| | Pos size | M size | Pos in M | NB (F) | NB (A) | I-EM8 (F) | I-EM8 (A) | S-EM (F) | S-EM (A) |
|---|---|---|---|---|---|---|---|---|---|
| Average | 405 | 4471 | 811 | 43.93 | 84.52 | 68.58 | 87.54 | 76.61 | 92.16 |

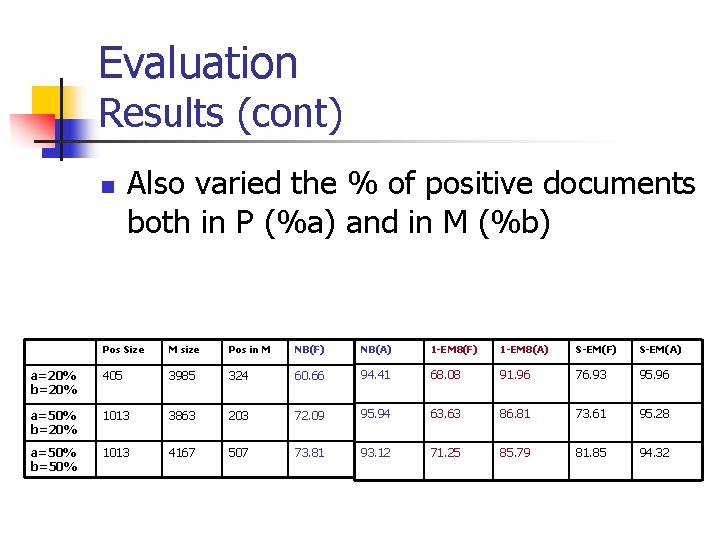

Evaluation Results (cont.)

- Also varied the % of positive documents both in P (%a) and in M (%b)

| | Pos size | M size | Pos in M | NB (F) | NB (A) | I-EM8 (F) | I-EM8 (A) | S-EM (F) | S-EM (A) |
|---|---|---|---|---|---|---|---|---|---|
| a=20%, b=20% | 405 | 3985 | 324 | 60.66 | 94.41 | 68.08 | 91.96 | 76.93 | 95.96 |
| a=50%, b=20% | 1013 | 3863 | 203 | 72.09 | 95.94 | 63.63 | 86.81 | 73.61 | 95.28 |
| a=50%, b=50% | 1013 | 4167 | 507 | 73.81 | 93.12 | 71.25 | 85.79 | 81.85 | 94.32 |

Conclusions

- This paper studied the problem of classification with only partial information: one class and a set of mixed documents
- Technique
  - Naïve Bayes classifier
  - Expectation-Maximization algorithm
    - Reinitialized using the positive documents and the most likely negative documents to compensate for bias
    - Uses an estimate of classification error to select a good classifier
- Accurate results: in the averaged results, S-EM scored higher than both NB and I-EM on F score and on accuracy
