


ENVISION. ACCELERATE. ARRIVE.
Real Acceleration for Mathematica®
Simon McIntosh-Smith, VP of Applications, ClearSpeed Technology, simon@clearspeed.com
Copyright © 2006 ClearSpeed Technology plc. All rights reserved.
Wolfram Technology Conference, 12th October 2007
www.clearspeed.com

Agenda
• Introduction
• Accelerators
• ClearSpeed math acceleration technology
• Accelerating Mathematica
• Summary

Introduction

Introduction
• Mathematica® is being used to solve increasingly computationally intensive problems
• General-purpose CPUs keep getting faster, but a new wave of application accelerators is emerging that could deliver much greater performance
  – Much as GPUs have done for graphics
• ClearSpeed has been developing hardware accelerators focused specifically on scientific computing, which accelerate the low-level math libraries used by Mathematica

Accelerators

Accelerator technologies
• Visualization and media processing
  – Good for graphics, video, game physics, speech, …
  – Graphics Processing Units (GPUs) are well established in the mainstream
  – But not so long ago your PC still did all its graphics in software on the main CPU…
  – GPUs can be applied to some 32-bit applications today (64-bit support is coming, at much lower speed), but they are currently fairly hard to program and very power hungry: 200 W!
• Embedded content processing
  – Data mining, encryption, XML, compression
  – Field Programmable Gate Arrays (FPGAs) are often used here, mainly to accelerate integer-intensive codes
  – Poor at floating point, especially 64-bit, and they cut corners on precision, so they don't deliver good accuracy
  – Very hard to program and to get good performance from

Accelerator technologies continued
• Math accelerators
  – Mostly floating point: 64-bit performance is crucial, with high precision and support for true IEEE 754 floating point
  – Can accelerate numerically intensive applications in:
    • Finance
    • Oil and gas
    • Economics
    • Electromagnetics
    • Bioinformatics
    • And many, many more
  – This is what ClearSpeed has developed
• To accelerate Mathematica, a true math accelerator is needed…

The other benefit of accelerators: low power
• Running 1 watt for 1 year costs about $1
• Modern CPUs can consume around 100 W
  – $100/year running cost for the CPU alone if used 24/7
  – Significant associated CO2 emissions
• Accelerators typically bring significant gains in performance per watt
  – Examples later in this presentation show 1 CPU plus a 25 W ClearSpeed board running as fast as a 4-CPU (8-core) machine
  – This power reduction of around 275 W, if applied 24/7, is a $275 per year energy cost saving
  – Not to mention how much smaller and quieter the accelerated system can be…
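The cost figures on this slide follow from simple arithmetic, worked through below. This sketch assumes an electricity price of about $0.11 per kWh, which the slide does not state, and counts only CPU and accelerator board power, not the rest of each machine.

```latex
\begin{aligned}
E_{\text{1 W, 1 yr}} &= 1\,\mathrm{W} \times 8760\,\mathrm{h} = 8.76\,\mathrm{kWh}
  \;\Rightarrow\; 8.76 \times \$0.11 \approx \$0.96 \approx \$1 \\
P_{\text{saved}} &= \underbrace{4 \times 100\,\mathrm{W}}_{\text{4-CPU machine}}
  - \underbrace{(1 \times 100\,\mathrm{W} + 25\,\mathrm{W})}_{\text{1 CPU + ClearSpeed board}}
  = 275\,\mathrm{W} \;\Rightarrow\; \approx 275 \times \$1 = \$275 \text{ per year}
\end{aligned}
```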

ClearSpeed’s Math Acceleration Technology

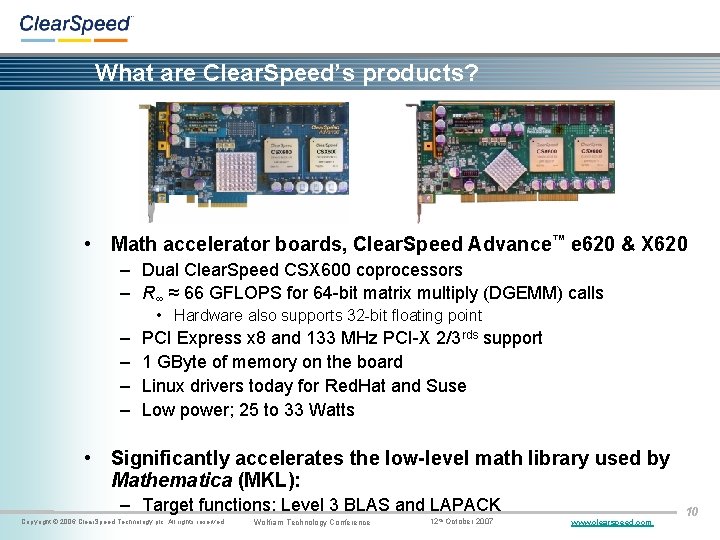

What are ClearSpeed’s products?
• Math accelerator boards: ClearSpeed Advance™ e620 & X620
  – Dual ClearSpeed CSX600 coprocessors
  – R∞ ≈ 66 GFLOPS for 64-bit matrix multiply (DGEMM) calls
    • Hardware also supports 32-bit floating point
  – PCI Express x8 and 133 MHz PCI-X 2/3rds support
  – 1 GByte of memory on the board
  – Linux drivers today for RedHat and SUSE
  – Low power: 25 to 33 W
• Significantly accelerates the low-level math library used by Mathematica (MKL):
  – Target functions: Level 3 BLAS and LAPACK
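As a rough guide to what R∞ ≈ 66 GFLOPS means in practice: a DGEMM on n × n matrices performs about 2n³ floating-point operations (one multiply and one add per inner-product term), so the best-case time is 2n³/R∞. The size n = 4,000 below is an illustrative choice, not a figure from the slides; because R∞ is the asymptotic rate for very large matrices, real calls will typically take somewhat longer than this estimate.

```latex
\begin{aligned}
\text{flops}_{\text{DGEMM}} &\approx 2n^3, \qquad t \approx \frac{2n^3}{R_\infty} \\
n = 4000:\quad t &\approx \frac{2 \times 4000^3}{66 \times 10^{9}\,\mathrm{flop/s}}
  = \frac{1.28 \times 10^{11}}{6.6 \times 10^{10}} \approx 1.9\,\mathrm{s}
\end{aligned}
```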

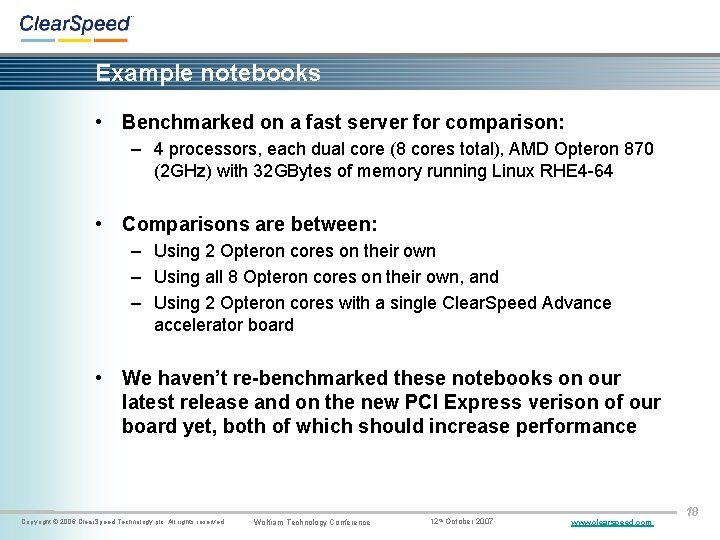

Which MKL functions can ClearSpeed accelerate?
Previous release: CSXL 2.51 and before
• L3 BLAS: large matrix arithmetic (preferably at least 1,000 on a side):
  – DGEMM: real matrix multiply
• LAPACK: factorize and solve large systems of linear equations
  – LU factorization (DGETRF)
New release: CSXL 2.52
• L3 BLAS:
  – ZGEMM: complex matrix multiply
  – DTRSM: triangular solve
  – Future release: DTRMM, DSYRK and others
• LAPACK:
  – LU solve (DGETRS)
  – QR (DGEQRF, DORGQR & DORMQR)
  – Cholesky (DPOTRF & DPOTRS)
  – Future release: complex versions of the above

Software development kit (SDK)
• C compiler with vector extensions (ANSI C-based commercial compiler), assembler, libraries, ddd/gdb-based debugger, newlib-based C run-time library, etc.
• ClearSpeed Advance development boards
• Available for Linux and Windows

Accelerating Mathematica

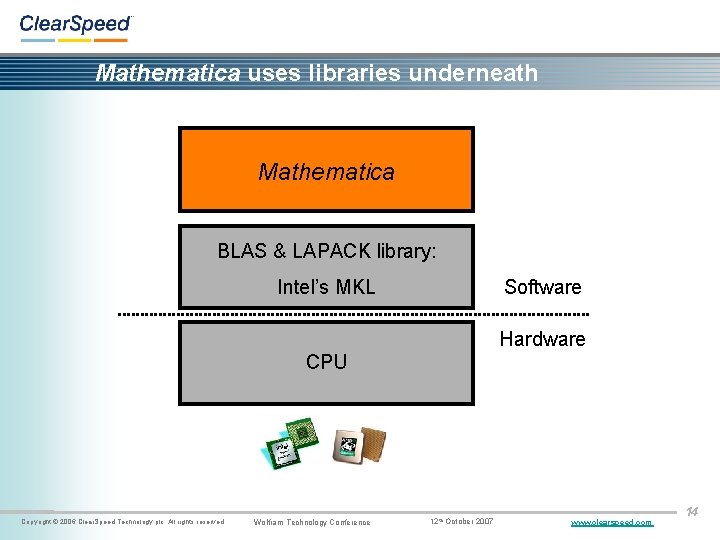

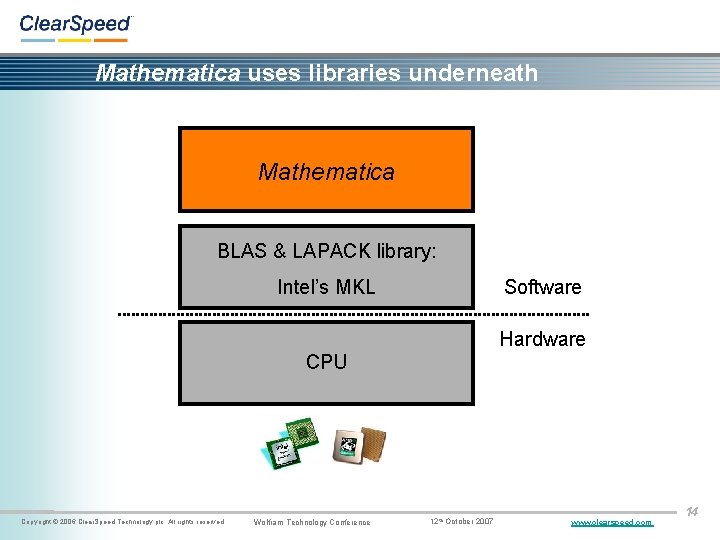

Mathematica uses libraries underneath
• Software: Mathematica, on top of the BLAS & LAPACK library (Intel’s MKL)
• Hardware: CPU

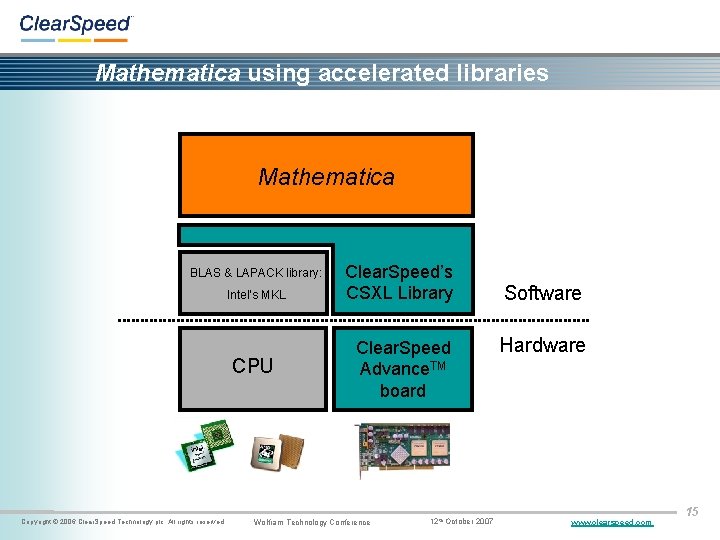

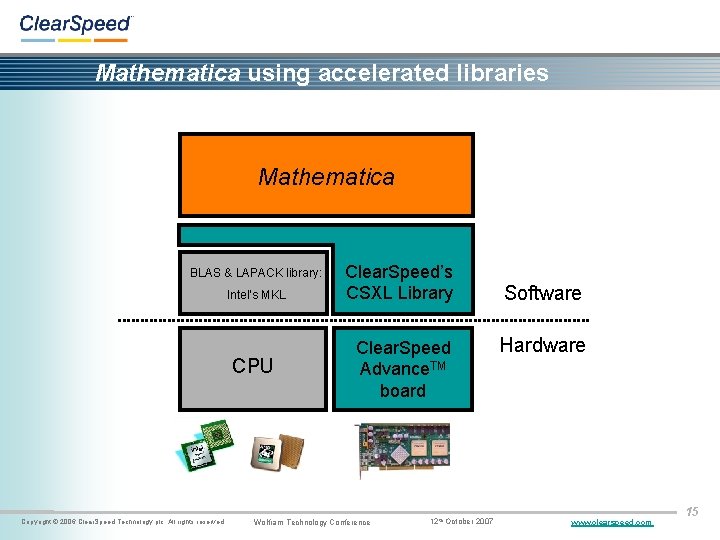

Mathematica using accelerated libraries
• Software: Mathematica, on top of the BLAS & LAPACK libraries (Intel’s MKL plus ClearSpeed’s CSXL library)
• Hardware: CPU plus ClearSpeed Advance™ board

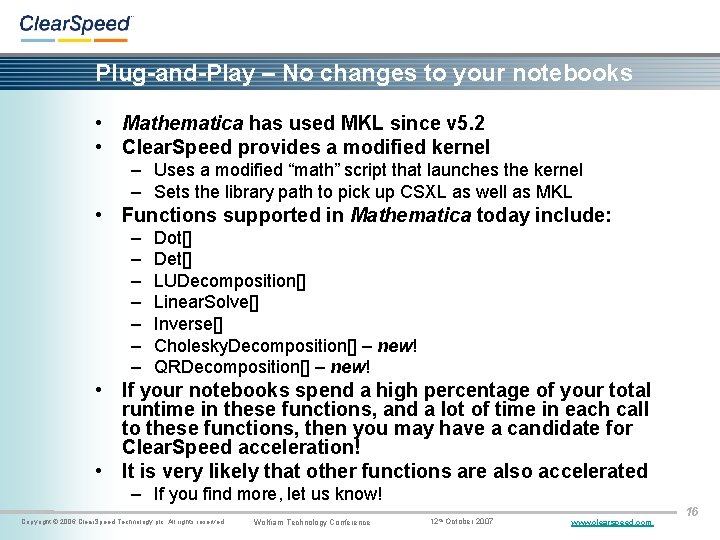

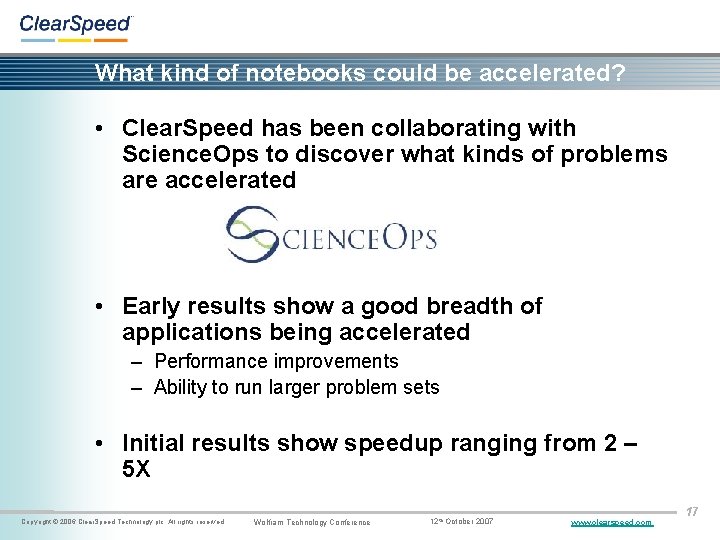

Plug-and-play: no changes to your notebooks
• Mathematica has used MKL since v5.2
• ClearSpeed provides a modified kernel
  – Uses a modified “math” script to launch the kernel
  – Sets the library path to pick up CSXL as well as MKL
• Functions supported in Mathematica today include:
  – Dot[]
  – Det[]
  – LUDecomposition[]
  – LinearSolve[]
  – Inverse[]
  – CholeskyDecomposition[] (new!)
  – QRDecomposition[] (new!)
• If your notebooks spend a high percentage of their total runtime in these functions, and a lot of time in each call, they may be good candidates for ClearSpeed acceleration!
• It is very likely that other functions are also accelerated
  – If you find more, let us know!
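One way to check the “high percentage of total runtime” condition for your own notebook is to accumulate the time spent inside the candidate calls while the whole computation runs. This is a minimal sketch: `timedDot`, the matrix size and the “other work” step are hypothetical stand-ins for your own code, and it relies only on the standard AbsoluteTiming[] function.

```mathematica
(* Hypothetical wrapper: accumulates wall-clock time spent inside Dot[] calls. *)
tDot = 0.;
timedDot[a_, b_] := Module[{t, r},
  {t, r} = AbsoluteTiming[a . b];
  tDot += t;
  r]

n = 1000;                                  (* illustrative size *)
m = RandomReal[1, {n, n}];

(* Time the whole workload; tDot collects the Dot[] share of it. *)
{tTotal, result} = AbsoluteTiming[
   Module[{p},
    p = timedDot[m, m];                    (* candidate for BLAS (DGEMM) acceleration *)
    Total[Sin[p], 2]                       (* stand-in for other, non-BLAS work *)
    ]];

fraction = tDot/tTotal                     (* share of runtime spent in Dot[] *)
```

A fraction close to 1, with each call taking a substantial time, matches the profile the slide describes.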

What kind of notebooks could be accelerated?
• ClearSpeed has been collaborating with ScienceOps to discover what kinds of problems can be accelerated
• Early results show a good breadth of applications being accelerated
  – Performance improvements
  – Ability to run larger problem sets
• Initial results show speedups ranging from 2X to 5X

Example notebooks
• Benchmarked on a fast server for comparison:
  – 4 processors, each dual core (8 cores total), AMD Opteron 870 (2 GHz) with 32 GBytes of memory running 64-bit Linux RHEL 4
• Comparisons are between:
  – Using 2 Opteron cores on their own
  – Using all 8 Opteron cores on their own, and
  – Using 2 Opteron cores with a single ClearSpeed Advance accelerator board
• We haven't yet re-benchmarked these notebooks on our latest release or on the new PCI Express version of our board, both of which should increase performance
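Comparisons like these are easier to reproduce with a repeatable timing helper. The sketch below is an assumption about good practice, not the method used for the slides' benchmarks: `bestTime` is a hypothetical name, and it simply runs an expression several times and keeps the fastest wall-clock time to reduce noise from caches and other processes.

```mathematica
(* Hypothetical helper: best-of-reps wall-clock time for an unevaluated expression. *)
SetAttributes[bestTime, HoldFirst];
bestTime[expr_, reps_Integer : 3] :=
  Min[Table[First[AbsoluteTiming[expr;]], {reps}]]

m = RandomReal[1, {1000, 1000}];           (* illustrative size *)
t = bestTime[m . m, 5]
```

A speedup figure is then the ratio of two such times, for example the 2-core time divided by the 2-core-plus-board time for the same expression.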

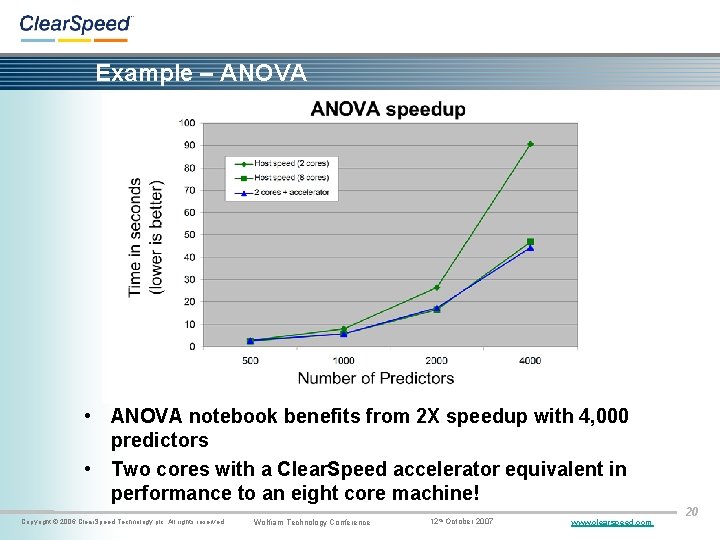

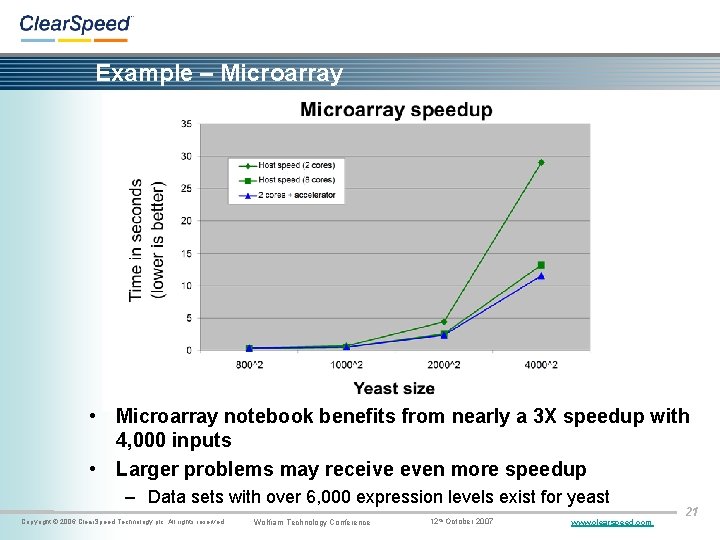

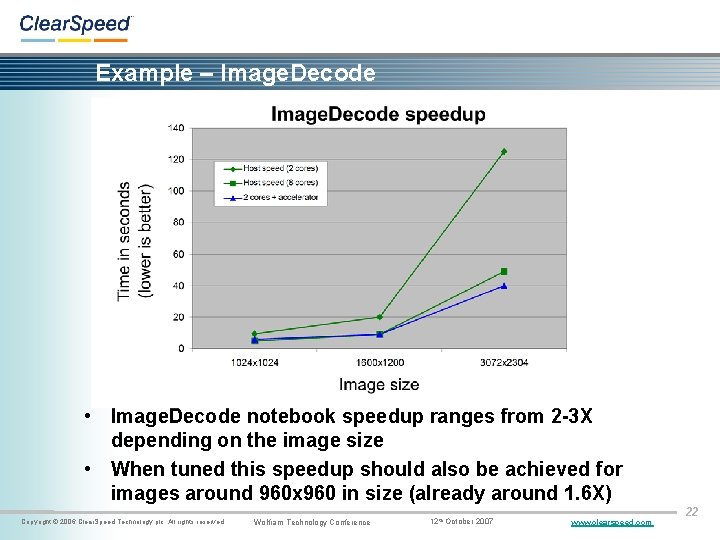

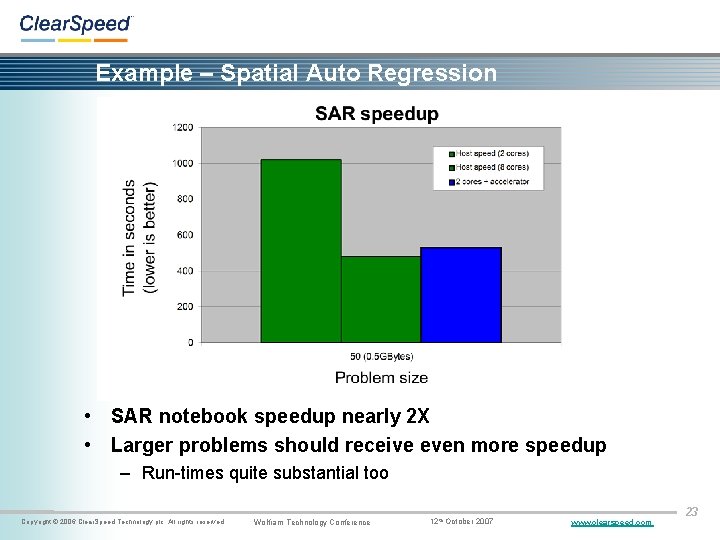

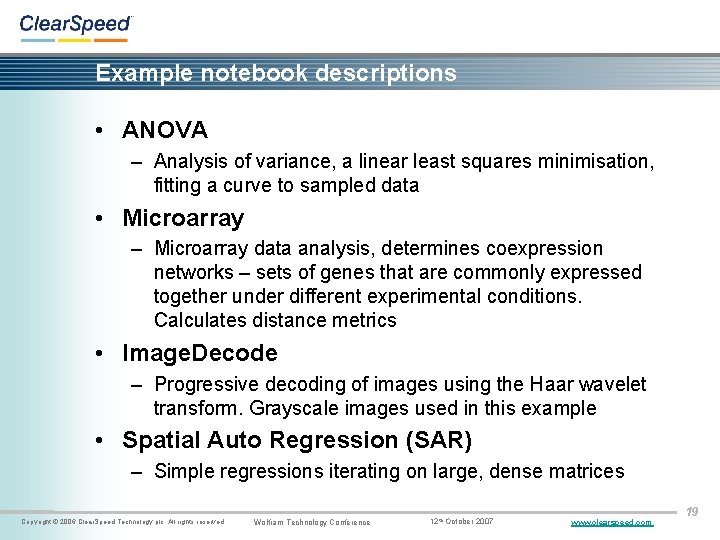

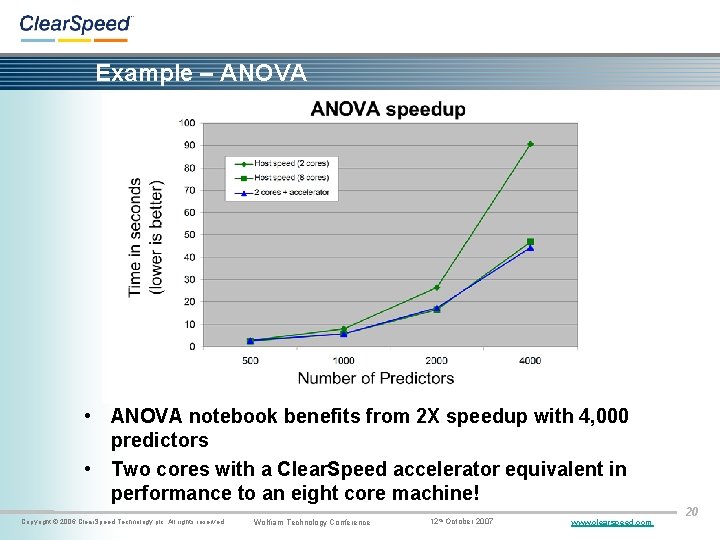

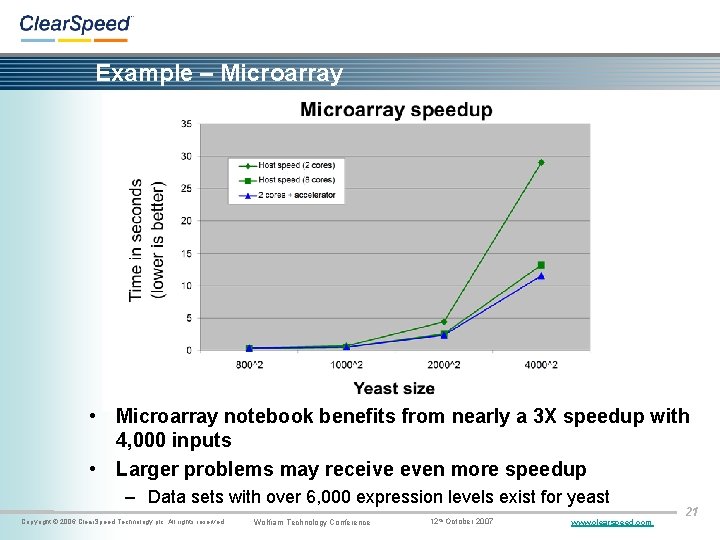

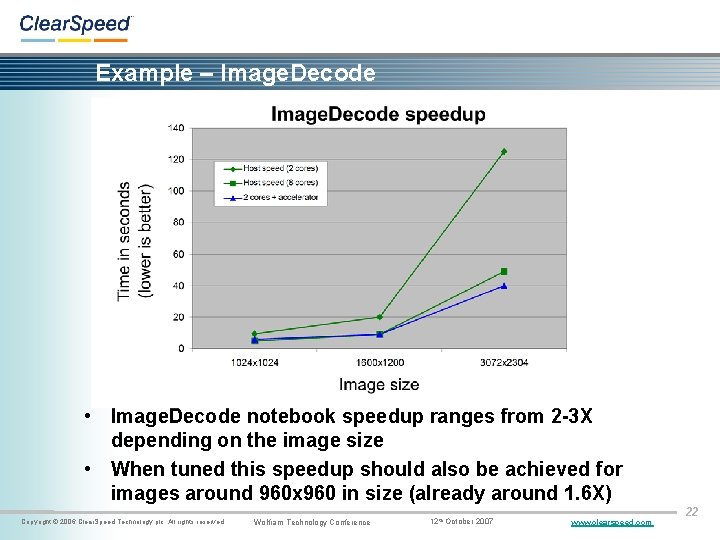

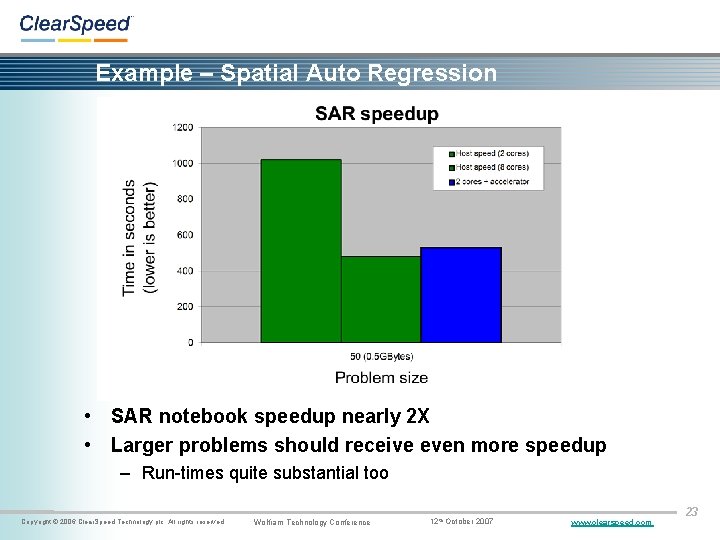

Example notebook descriptions
• ANOVA: analysis of variance, a linear least-squares minimisation that fits a curve to sampled data
• Microarray: microarray data analysis that determines coexpression networks, i.e. sets of genes that are commonly expressed together under different experimental conditions; calculates distance metrics
• ImageDecode: progressive decoding of images using the Haar wavelet transform; grayscale images are used in this example
• Spatial Auto Regression (SAR): simple regressions iterating on large, dense matrices

Example: ANOVA
• The ANOVA notebook gets a 2X speedup with 4,000 predictors
• Two cores with a ClearSpeed accelerator perform as well as an eight-core machine!
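To see why a least-squares fit can land in the accelerated routines, here is one common formulation: the normal equations, which reduce the fit to a large Dot[] followed by LinearSolve[]. This is a minimal sketch with synthetic data and smaller, hypothetical sizes; it is not the ANOVA notebook itself, whose internals are not shown in the slides.

```mathematica
(* Synthetic regression problem; sizes are illustrative only. *)
nObs = 2000; nPred = 200;
x = RandomReal[{-1, 1}, {nObs, nPred}];
trueBeta = RandomReal[{-1, 1}, nPred];
y = x . trueBeta + RandomReal[{-0.01, 0.01}, nObs];

(* Normal equations: (X^T X) beta = X^T y. *)
xtx = Transpose[x] . x;                    (* dense matrix multiply *)
xty = Transpose[x] . y;
beta = LinearSolve[xtx, xty];              (* dense linear solve *)

Max[Abs[beta - trueBeta]]                  (* small, limited by the added noise *)
```

Forming X^T X squares the condition number, so a QR-based fit is numerically safer for ill-conditioned data; the normal-equations form is used here because it maps most directly onto Dot[] and LinearSolve[].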

Example: Microarray
• The Microarray notebook gets nearly a 3X speedup with 4,000 inputs
• Larger problems may see even greater speedups
  – Data sets with over 6,000 expression levels exist for yeast
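Distance metrics between many expression profiles can be expressed as one large matrix multiply, which is why this kind of notebook may benefit. A minimal sketch, assuming squared Euclidean distance as the metric (the notebook's actual metric is not stated) and using made-up sizes and data; it relies on the identity |x_i − x_j|² = |x_i|² + |x_j|² − 2 x_i·x_j.

```mathematica
nGenes = 1000; nConditions = 50;           (* illustrative sizes *)
profiles = RandomReal[1, {nGenes, nConditions}];

gram = profiles . Transpose[profiles];     (* nGenes x nGenes Dot[] call *)
sq = Diagonal[gram];                       (* |x_i|^2 for each gene *)
d2 = Outer[Plus, sq, sq] - 2 gram;         (* all-pairs squared distances *)
d2 = (d2 + Abs[d2])/2;                     (* clamp tiny negative round-off to 0 *)
```

Almost all of the arithmetic is in the single Gram-matrix product, so the cost grows like nGenes² × nConditions and is concentrated in one BLAS call.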

Example: ImageDecode
• ImageDecode notebook speedup ranges from 2X to 3X depending on image size
• Once tuned, this speedup should also be achieved for images of around 960 x 960 (currently around 1.6X)
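A Haar wavelet step can be written as matrix products, which is one plausible route into Dot[]. This minimal sketch assumes an orthonormal single-level Haar transform in matrix form, with `haarMatrix` as a hypothetical helper and random data standing in for a grayscale image; the notebook's actual implementation is not shown in the slides.

```mathematica
(* Hypothetical helper: orthonormal single-level Haar matrix for even n.
   First n/2 rows form pairwise averages, last n/2 rows pairwise differences. *)
haarMatrix[n_?EvenQ] := Module[{s = 1/Sqrt[2.], avg, dif},
  avg = Table[If[j == 2 i - 1 || j == 2 i, s, 0.], {i, n/2}, {j, n}];
  dif = Table[Which[j == 2 i - 1, s, j == 2 i, -s, True, 0.], {i, n/2}, {j, n}];
  Join[avg, dif]]

n = 512;                                   (* illustrative image size *)
h = haarMatrix[n];
img = RandomReal[1, {n, n}];               (* stand-in for a grayscale image *)

coeffs = h . img . Transpose[h];           (* forward 2-D transform: two Dot[] calls *)
restored = Transpose[h] . coeffs . h;      (* inverse, since h is orthogonal *)
Max[Abs[restored - img]]
```

A direct pairwise implementation needs only O(n²) work per level; the dense matrix form costs O(n³) but turns the transform into large matrix multiplies.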

Example: Spatial Auto Regression
• The SAR notebook speedup is nearly 2X
• Larger problems should see even greater speedups
  – Their run-times are substantial too

![New CholeskyDecomposition[] performance](https://slidetodoc.com/presentation_image_h/24df296822a50a6faa527cf45115c08c/image-24.jpg)

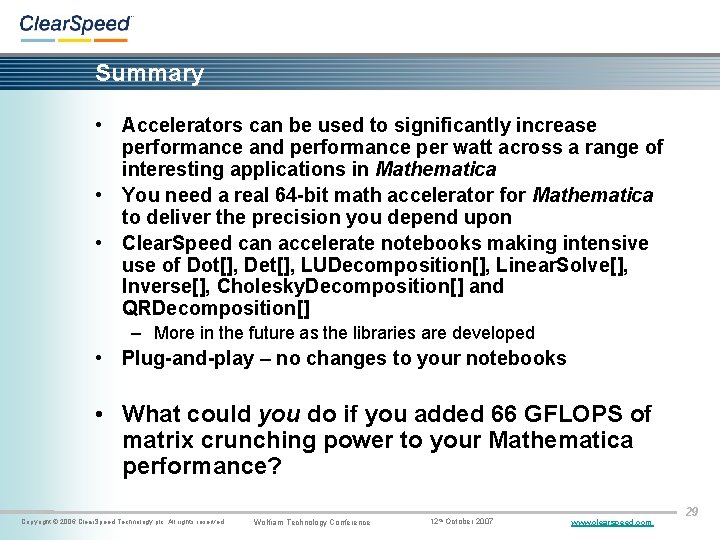

New CholeskyDecomposition[] performance
A = Table[Random[], {n}, {n}]; B = Dot[Transpose[A], A]; Clear[A]; AbsoluteTiming[CholeskyDecomposition[B];]
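The benchmark above times a single size n. A minimal sketch that sweeps a few arbitrarily chosen sizes and also checks each factor, which matters when verifying that an accelerated library returns correct 64-bit results. It relies on the documented convention that CholeskyDecomposition[] returns an upper-triangular u with ConjugateTranspose[u] . u == b; `choleskyRun` is a hypothetical helper name.

```mathematica
(* Hypothetical helper: time one Cholesky factorization and report its residual. *)
choleskyRun[n_Integer] := Module[{a, b, t, u},
  a = RandomReal[1, {n, n}];
  b = Transpose[a] . a;                    (* symmetric positive definite (almost surely) *)
  {t, u} = AbsoluteTiming[CholeskyDecomposition[b]];
  {n, t, Max[Abs[Transpose[u] . u - b]]}]  (* real case: Transpose = ConjugateTranspose *)

results = choleskyRun /@ {250, 500, 1000};
TableForm[results, TableHeadings -> {None, {"n", "seconds", "max residual"}}]
```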

![New QRDecomposition[] performance](https://slidetodoc.com/presentation_image_h/24df296822a50a6faa527cf45115c08c/image-25.jpg)

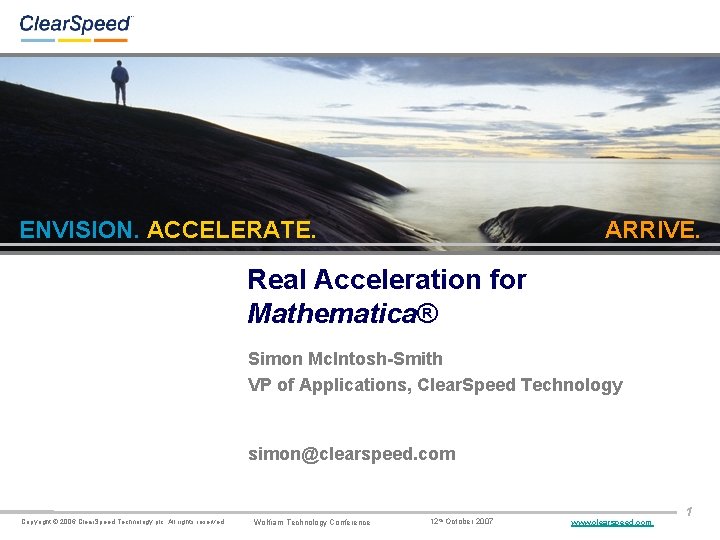

New QRDecomposition[] performance
A = Table[Random[], {n}, {n}]; B = Dot[Transpose[A], A]; Clear[A]; AbsoluteTiming[QRDecomposition[B];]
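The QR result can be checked in the same way. In Mathematica, {q, r} = QRDecomposition[m] satisfies ConjugateTranspose[q] . r == m, with the rows of q orthonormal and r upper triangular. This minimal sketch reuses the benchmark's matrix setup with an arbitrary n = 500.

```mathematica
n = 500;                                   (* illustrative size *)
a = RandomReal[1, {n, n}];
b = Transpose[a] . a;
{t, {q, r}} = AbsoluteTiming[QRDecomposition[b]];

residual = Max[Abs[Transpose[q] . r - b]]; (* reconstruction error *)
orthErr = Max[Abs[q . Transpose[q] - IdentityMatrix[n]]]; (* orthogonality of q *)
{t, residual, orthErr}
```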

![New complex Dot[] performance](https://slidetodoc.com/presentation_image_h/24df296822a50a6faa527cf45115c08c/image-26.jpg)

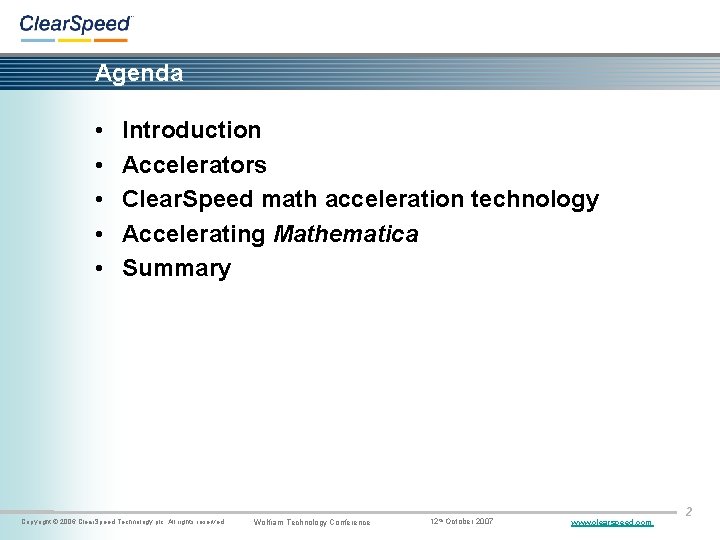

New complex Dot[] performance
A = Table[Complex[1.5, 1.5], {n}, {n}]; AbsoluteTiming[Dot[Transpose[A], A];]
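To compare a complex Dot[] timing with a GFLOPS figure, it helps to convert it into an effective rate. A complex n × n matrix multiply takes about 8n³ real floating-point operations, because each complex multiply-add costs 4 real multiplies and 4 real adds. This minimal sketch uses the benchmark's matrix with an arbitrary n = 1000.

```mathematica
n = 1000;                                  (* illustrative size *)
a = Table[Complex[1.5, 1.5], {n}, {n}];
{t, c} = AbsoluteTiming[Transpose[a] . a]; (* complex matrix multiply *)

(* Every entry equals n (1.5 + 1.5 I)^2 = 4.5 n I, a quick correctness check. *)
gflops = 8. n^3/t/10^9
```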

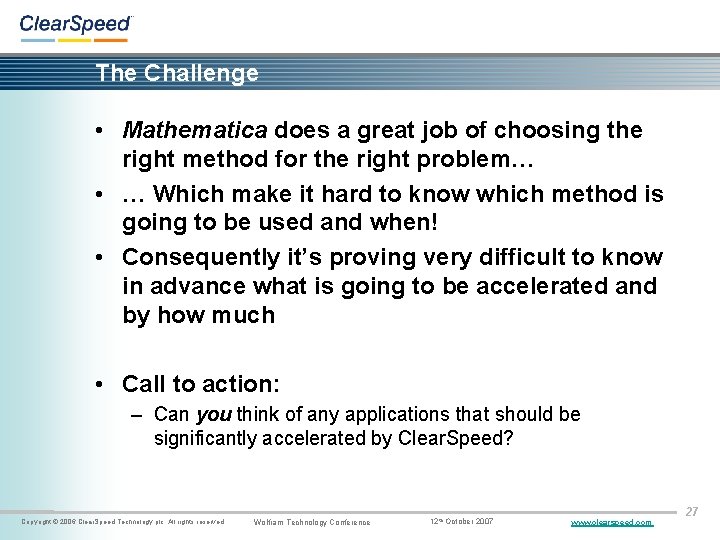

The Challenge
• Mathematica does a great job of choosing the right method for the right problem…
• …which makes it hard to know which method is going to be used, and when!
• Consequently, it is proving very difficult to know in advance what will be accelerated, and by how much
• Call to action:
  – Can you think of any applications that should be significantly accelerated by ClearSpeed?
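Since the method choice is hidden, a quick input check can at least rule out obvious non-candidates. The function below is a hypothetical heuristic, not ClearSpeed's or Wolfram's actual dispatch logic. It assumes that machine-precision packed numeric matrices are what tend to reach the BLAS/LAPACK path (exact, arbitrary-precision and SparseArray inputs generally do not), and applies the CSXL slide's guideline of at least 1,000 on a side.

```mathematica
(* Hypothetical heuristic: is this matrix a plausible input for accelerated BLAS/LAPACK? *)
acceleratorCandidateQ[m_] :=
  MatrixQ[m, NumberQ] &&
   Developer`PackedArrayQ[m] &&
   Precision[m] === MachinePrecision &&
   Min[Dimensions[m]] >= 1000

acceleratorCandidateQ[RandomReal[1, {1200, 1200}]]     (* True: packed machine reals *)
acceleratorCandidateQ[RandomInteger[9, {1200, 1200}]]  (* False: exact integers *)
```

A True result does not guarantee acceleration; it only indicates the input has the shape and numeric type that the accelerated routines are designed for.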

Summary

Summary
• Accelerators can significantly increase performance, and performance per watt, across a range of interesting applications in Mathematica
• You need a real 64-bit math accelerator for Mathematica to deliver the precision you depend upon
• ClearSpeed can accelerate notebooks that make intensive use of Dot[], Det[], LUDecomposition[], LinearSolve[], Inverse[], CholeskyDecomposition[] and QRDecomposition[]
  – More in the future as the libraries are developed
• Plug-and-play: no changes to your notebooks
• What could you do if you added 66 GFLOPS of matrix-crunching power to your Mathematica performance?