COSC 121 Computer Systems Basic Architecture and Performance

Jeremy Bolton, Ph.D., Assistant Teaching Professor
Constructed using materials:
- Patt and Patel, Introduction to Computing Systems (2nd ed.)
- Patterson and Hennessy, Computer Organization and Design (4th ed.)
Special thanks to Eric Roberts and Mary Jane Irwin

Notes
• Project 3 assigned; due soon.
• Read PH.1

Outline
• Review: From Electron to Programming Languages
• Basic Computer Architecture
• How can we improve our computing model? (Improve with respect to efficiency)
  – Performance measures
    • Speed
    • Cost
    • Power consumption
    • Memory
  – Hardware is fast, software is slow
    • CISC
    • RISC
  – Parallelism
    • ILP
    • DLP
    • MLP
  – Going to memory is slow
    • Caching schemes
  – Interrupt-based communication: polling can be slow
  – More and more parallelism
    • Multi-threading, multi-cores, distributed computing … and more

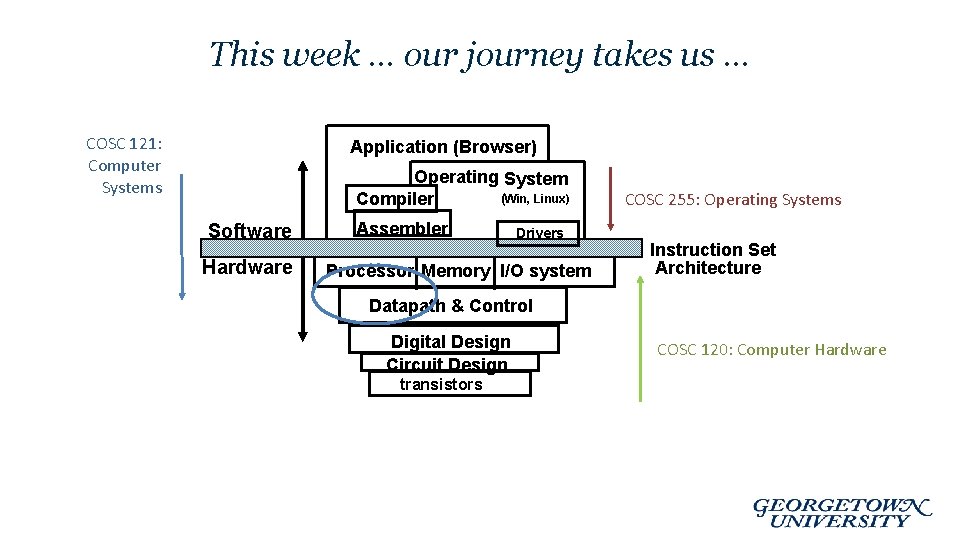

This week … our journey takes us …
Layers of a computing system, from top to bottom:
• Software: Application (Browser); Operating System (Win, Linux); Compiler; Assembler; Drivers
• Instruction Set Architecture
• Hardware: Processor, Memory, I/O system; Datapath & Control; Digital Design; Circuit Design; transistors
Related courses: COSC 121: Computer Systems; COSC 255: Operating Systems; COSC 120: Computer Hardware

Assessing the performance of a Computer • Consider – Computers are defined by and limited by their hardware / ISAs – Many different computing models

Classes of Computers
• Personal computers
  – Designed to deliver good performance to a single user at low cost, usually executing third-party software and usually incorporating a graphics display, a keyboard, and a mouse
• Servers
  – Used to run larger programs for multiple, simultaneous users; typically accessed only via a network, with a greater emphasis on dependability and (often) security
• Supercomputers
  – A high-performance, high-cost class of servers with hundreds to thousands of processors, terabytes of memory, and petabytes of storage, used for high-end scientific and engineering applications
• Embedded computers (processors)
  – A computer inside another device, used for running one predetermined application

How do we define “better”: Performance Metrics
• Purchasing perspective
  – given a collection of machines, which has the
    • best performance/cost?
    • least cost?
    • best cost/performance?
• Design perspective
  – faced with design options, which has the
    • best performance improvement?
    • least cost?
    • best cost/performance?
• Both require
  1. a basis for comparison
  2. a metric for evaluation
• Our goal is to understand what factors in the architecture contribute to overall system performance, and the relative importance (and cost) of these factors

Throughput versus Response Time
• Response time (execution time): the time between the start and the completion of a task
  – Important to individual users
• Throughput (bandwidth): the total amount of work done in a given time
  – Important to data center managers
• We will need different performance metrics, as well as a different set of applications, to benchmark embedded and desktop computers (which are more focused on response time) versus servers (which are more focused on throughput)

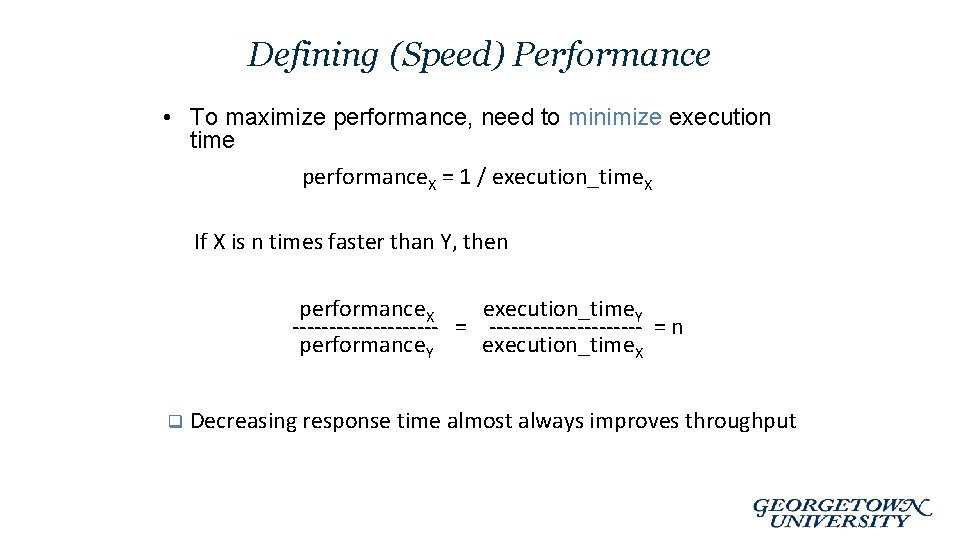

Defining (Speed) Performance
• To maximize performance, we need to minimize execution time:

$$\text{performance}_X = \frac{1}{\text{execution\_time}_X}$$

• If X is n times faster than Y, then

$$\frac{\text{performance}_X}{\text{performance}_Y} = \frac{\text{execution\_time}_Y}{\text{execution\_time}_X} = n$$

• Decreasing response time almost always improves throughput

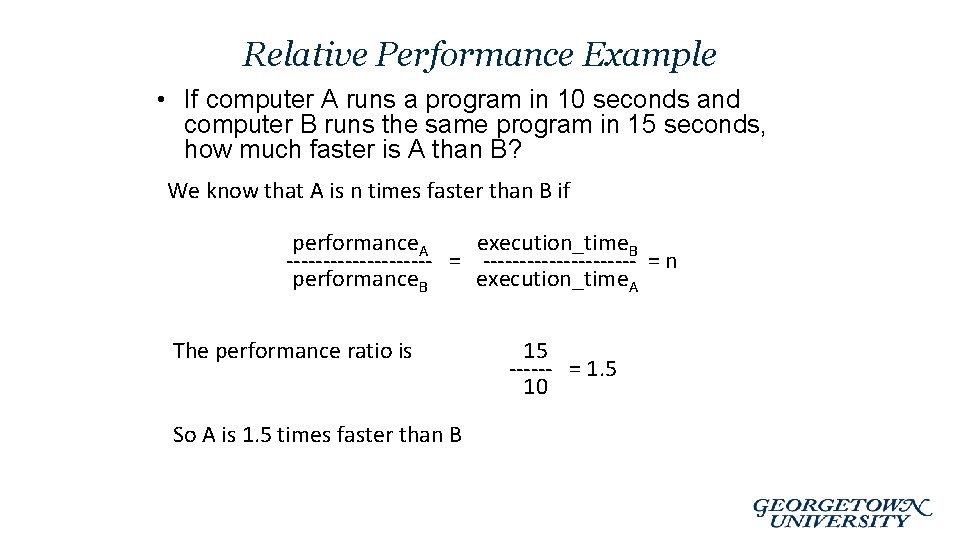

Relative Performance Example
• If computer A runs a program in 10 seconds and computer B runs the same program in 15 seconds, how much faster is A than B?
• We know that A is n times faster than B if

$$\frac{\text{performance}_A}{\text{performance}_B} = \frac{\text{execution\_time}_B}{\text{execution\_time}_A} = n$$

• The performance ratio is

$$\frac{15}{10} = 1.5$$

• So A is 1.5 times faster than B
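The ratio in this example can be checked with a minimal Python sketch. The function name `relative_performance` is an illustrative choice and does not come from the course materials.

```python
def relative_performance(time_x: float, time_y: float) -> float:
    """Return n such that machine X is n times faster than machine Y.

    Performance is 1 / execution_time, so the performance ratio
    performance_X / performance_Y equals time_y / time_x.
    """
    return time_y / time_x


# Computer A takes 10 s, computer B takes 15 s for the same program.
n = relative_performance(time_x=10.0, time_y=15.0)
print(f"A is {n:.1f} times faster than B")
```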

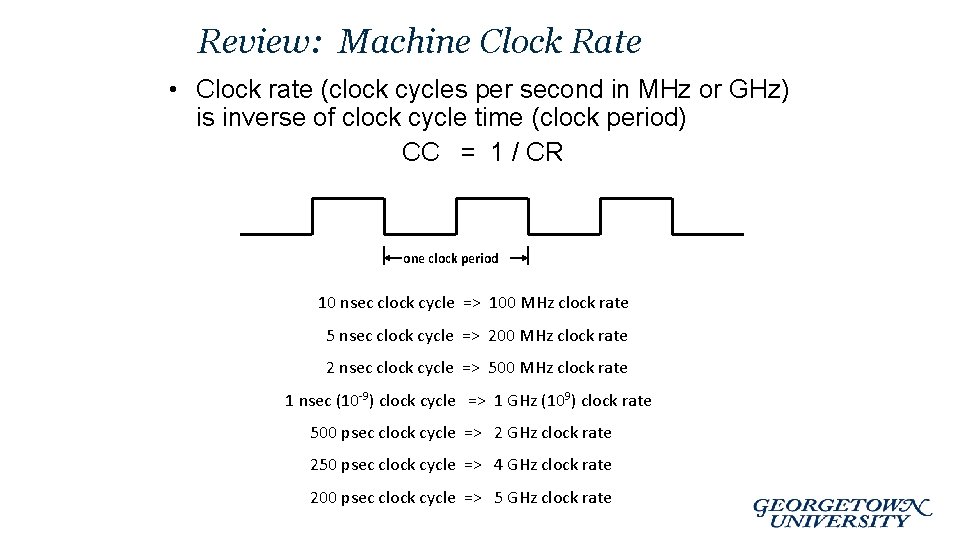

Review: Machine Clock Rate
• Clock rate (clock cycles per second, in MHz or GHz) is the inverse of clock cycle time (clock period):

$$CC = \frac{1}{CR}$$

  – 10 nsec clock cycle => 100 MHz clock rate
  – 5 nsec clock cycle => 200 MHz clock rate
  – 2 nsec clock cycle => 500 MHz clock rate
  – 1 nsec (10^-9 sec) clock cycle => 1 GHz (10^9 Hz) clock rate
  – 500 psec clock cycle => 2 GHz clock rate
  – 250 psec clock cycle => 4 GHz clock rate
  – 200 psec clock cycle => 5 GHz clock rate

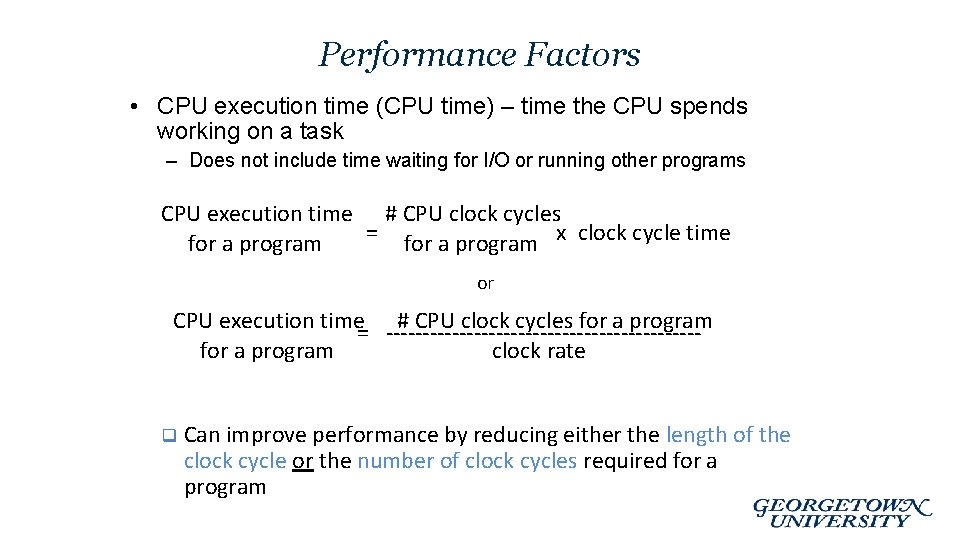

Performance Factors
• CPU execution time (CPU time): the time the CPU spends working on a task
  – Does not include time waiting for I/O or running other programs

$$\text{CPU execution time for a program} = \text{\# CPU clock cycles for a program} \times \text{clock cycle time}$$

or

$$\text{CPU execution time for a program} = \frac{\text{\# CPU clock cycles for a program}}{\text{clock rate}}$$

• Can improve performance by reducing either the length of the clock cycle or the number of clock cycles required for a program

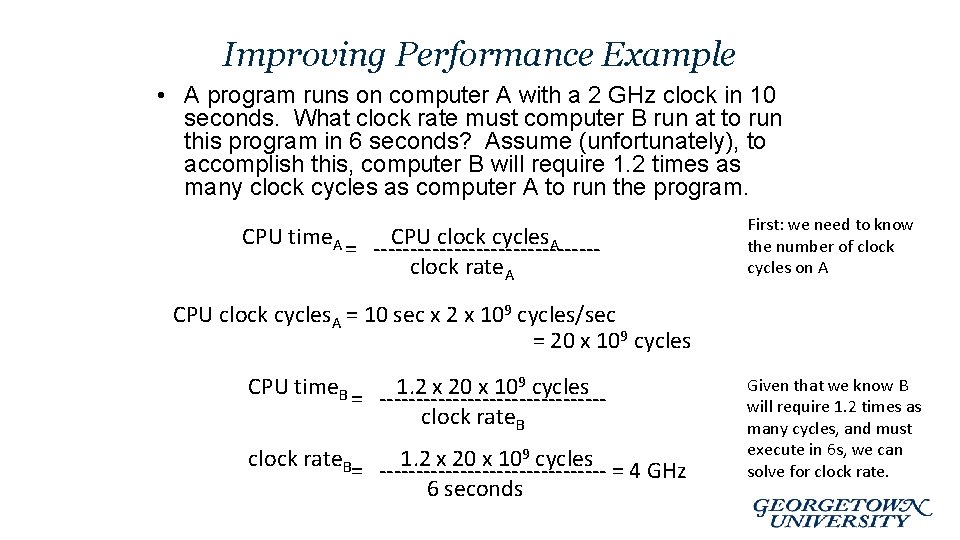

Improving Performance Example
• A program runs on computer A with a 2 GHz clock in 10 seconds. What clock rate must computer B run at to run this program in 6 seconds? Assume (unfortunately) that, to accomplish this, computer B will require 1.2 times as many clock cycles as computer A to run the program.
• First, we need to know the number of clock cycles on A:

$$\text{CPU time}_A = \frac{\text{CPU clock cycles}_A}{\text{clock rate}_A}$$

$$\text{CPU clock cycles}_A = 10\ \text{sec} \times 2 \times 10^9\ \text{cycles/sec} = 20 \times 10^9\ \text{cycles}$$

• Given that B will require 1.2 times as many cycles and must execute in 6 seconds, we can solve for its clock rate:

$$\text{CPU time}_B = \frac{1.2 \times 20 \times 10^9\ \text{cycles}}{\text{clock rate}_B}$$

$$\text{clock rate}_B = \frac{1.2 \times 20 \times 10^9\ \text{cycles}}{6\ \text{seconds}} = 4\ \text{GHz}$$
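The same two-step calculation can be written as a short Python sketch. The variable and function names are illustrative assumptions; the numbers are the ones given in the example.

```python
def required_clock_rate(cycles: float, target_seconds: float) -> float:
    """Clock rate (Hz) needed to finish `cycles` clock cycles in `target_seconds`."""
    return cycles / target_seconds


clock_rate_a = 2e9   # 2 GHz
cpu_time_a = 10.0    # seconds

# Step 1: cycles on A = CPU time x clock rate.
cycles_a = cpu_time_a * clock_rate_a

# Step 2: B needs 1.2x as many cycles and must finish in 6 seconds.
cycles_b = 1.2 * cycles_a
clock_rate_b = required_clock_rate(cycles_b, target_seconds=6.0)

print(f"Computer B needs a {clock_rate_b / 1e9:.1f} GHz clock")
```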

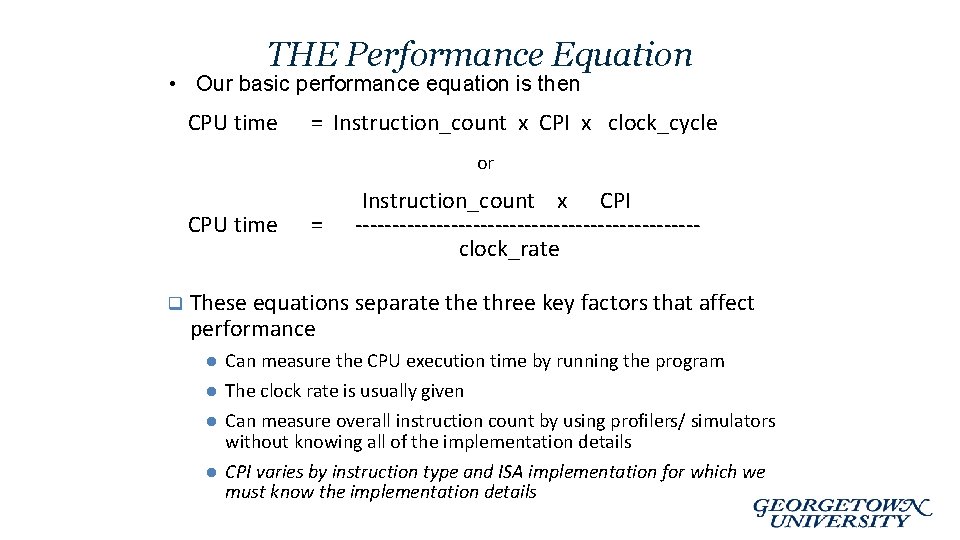

THE Performance Equation
• Our basic performance equation is then

$$\text{CPU time} = \text{Instruction\_count} \times \text{CPI} \times \text{clock\_cycle}$$

or

$$\text{CPU time} = \frac{\text{Instruction\_count} \times \text{CPI}}{\text{clock\_rate}}$$

• These equations separate the three key factors that affect performance
  – Can measure the CPU execution time by running the program
  – The clock rate is usually given
  – Can measure overall instruction count by using profilers/simulators without knowing all of the implementation details
  – CPI varies by instruction type and ISA implementation, for which we must know the implementation details

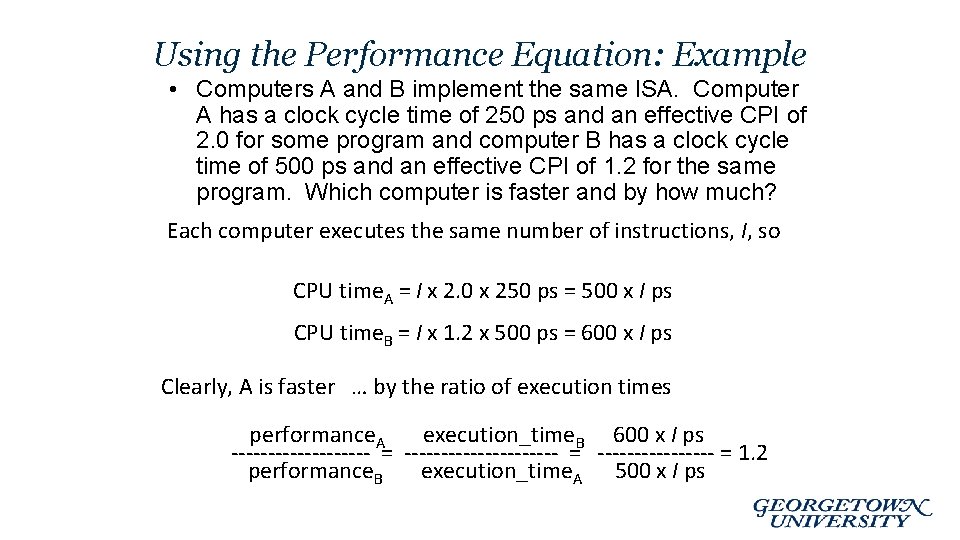

Using the Performance Equation: Example
• Computers A and B implement the same ISA. Computer A has a clock cycle time of 250 ps and an effective CPI of 2.0 for some program, and computer B has a clock cycle time of 500 ps and an effective CPI of 1.2 for the same program. Which computer is faster, and by how much?
• Each computer executes the same number of instructions, I, so

$$\text{CPU time}_A = I \times 2.0 \times 250\ \text{ps} = 500 \times I\ \text{ps}$$

$$\text{CPU time}_B = I \times 1.2 \times 500\ \text{ps} = 600 \times I\ \text{ps}$$

• Clearly, A is faster, by the ratio of execution times:

$$\frac{\text{performance}_A}{\text{performance}_B} = \frac{\text{execution\_time}_B}{\text{execution\_time}_A} = \frac{600 \times I\ \text{ps}}{500 \times I\ \text{ps}} = 1.2$$
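A minimal Python sketch of the performance equation applied to this example. The instruction count of one million is an arbitrary assumption, since it cancels in the ratio; the function name `cpu_time` is illustrative.

```python
def cpu_time(instruction_count: float, cpi: float, clock_cycle_s: float) -> float:
    """CPU time = Instruction_count x CPI x clock_cycle (in seconds)."""
    return instruction_count * cpi * clock_cycle_s


I = 1_000_000  # assumed instruction count; any positive value gives the same ratio

time_a = cpu_time(I, cpi=2.0, clock_cycle_s=250e-12)  # 250 ps cycle
time_b = cpu_time(I, cpi=1.2, clock_cycle_s=500e-12)  # 500 ps cycle

ratio = time_b / time_a
print(f"A is {ratio:.1f} times faster than B")
```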

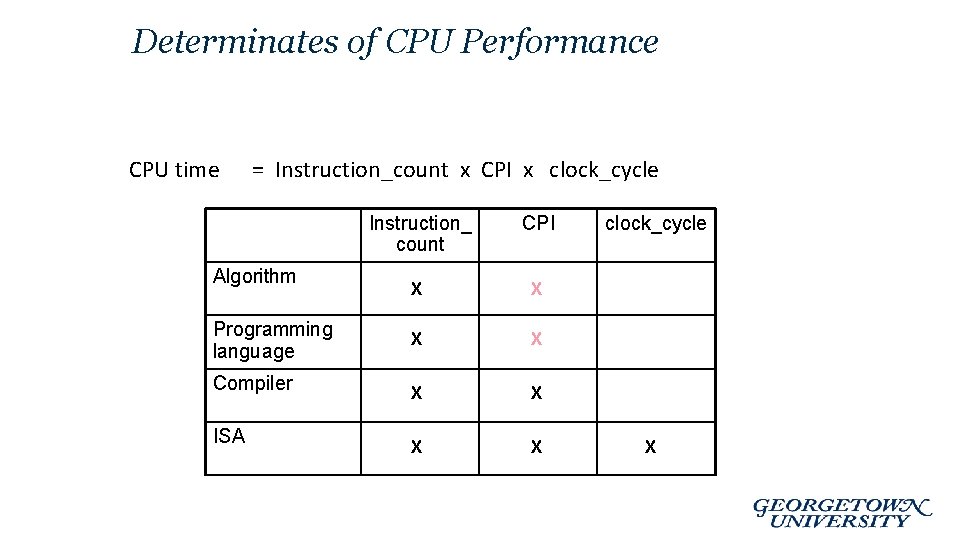

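Determinants of CPU Performance

$$\text{CPU time} = \text{Instruction\_count} \times \text{CPI} \times \text{clock\_cycle}$$

| | Instruction_count | CPI | clock_cycle |
|---|---|---|---|
| Algorithm | X | X | |
| Programming language | X | X | |
| Compiler | X | X | |
| ISA | X | X | X |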

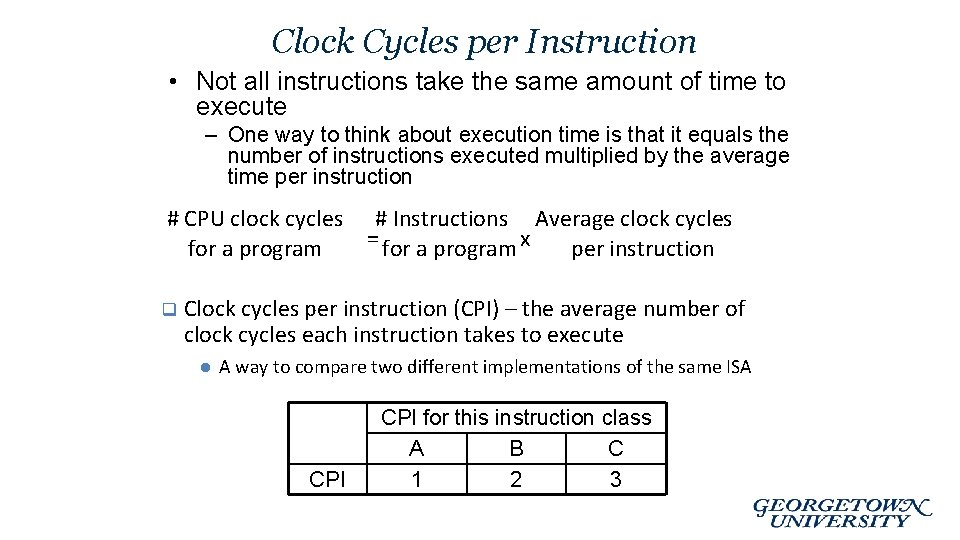

Clock Cycles per Instruction
• Not all instructions take the same amount of time to execute
  – One way to think about execution time is that it equals the number of instructions executed multiplied by the average time per instruction

$$\text{\# CPU clock cycles for a program} = \text{\# Instructions for a program} \times \text{Average clock cycles per instruction}$$

• Clock cycles per instruction (CPI): the average number of clock cycles each instruction takes to execute
  – A way to compare two different implementations of the same ISA

| Instruction class | A | B | C |
|---|---|---|---|
| CPI for this instruction class | 1 | 2 | 3 |

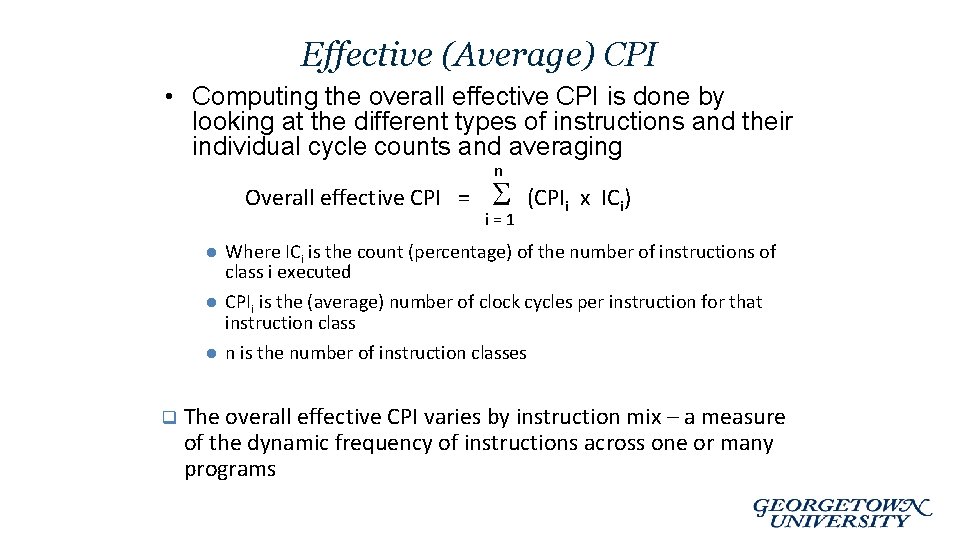

Effective (Average) CPI
• Computing the overall effective CPI is done by looking at the different types of instructions and their individual cycle counts and averaging

$$\text{Overall effective CPI} = \sum_{i=1}^{n} (\text{CPI}_i \times \text{IC}_i)$$

  – IC_i is the count (percentage) of the number of instructions of class i executed
  – CPI_i is the (average) number of clock cycles per instruction for that instruction class
  – n is the number of instruction classes
• The overall effective CPI varies by instruction mix, a measure of the dynamic frequency of instructions across one or many programs

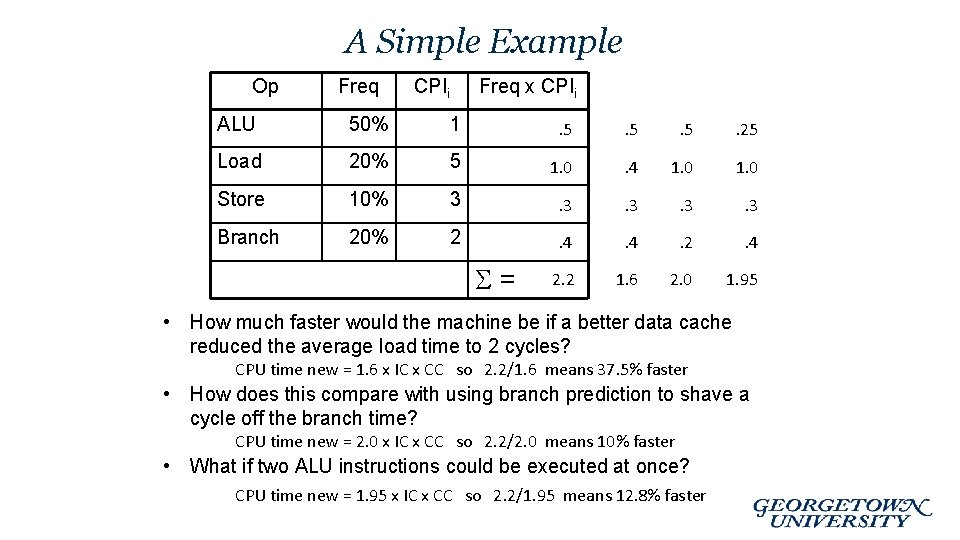

A Simple Example

| Op | Freq | CPI_i | Freq × CPI_i | With Load CPI = 2 | With Branch CPI = 1 | With ALU CPI = 0.5 |
|---|---|---|---|---|---|---|
| ALU | 50% | 1 | 0.5 | 0.5 | 0.5 | 0.25 |
| Load | 20% | 5 | 1.0 | 0.4 | 1.0 | 1.0 |
| Store | 10% | 3 | 0.3 | 0.3 | 0.3 | 0.3 |
| Branch | 20% | 2 | 0.4 | 0.4 | 0.2 | 0.4 |
| Σ = | | | 2.2 | 1.6 | 2.0 | 1.95 |

• How much faster would the machine be if a better data cache reduced the average load time to 2 cycles?
  – CPU time new = 1.6 × IC × CC, so 2.2/1.6 means 37.5% faster
• How does this compare with using branch prediction to shave a cycle off the branch time?
  – CPU time new = 2.0 × IC × CC, so 2.2/2.0 means 10% faster
• What if two ALU instructions could be executed at once?
  – CPU time new = 1.95 × IC × CC, so 2.2/1.95 means 12.8% faster
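The three what-if scenarios above can be reproduced with a small Python sketch of the weighted-average CPI. The dictionary layout and the scenario labels are illustrative assumptions; the frequencies and cycle counts are those of the example.

```python
def effective_cpi(mix: dict) -> float:
    """Weighted-average CPI for a mix of {op: (frequency, cpi)}."""
    return sum(freq * cpi for freq, cpi in mix.values())


base = {"ALU": (0.5, 1), "Load": (0.2, 5), "Store": (0.1, 3), "Branch": (0.2, 2)}

# Each scenario changes the CPI of exactly one instruction class.
scenarios = {
    "better data cache (Load CPI 2)": {**base, "Load": (0.2, 2)},
    "branch prediction (Branch CPI 1)": {**base, "Branch": (0.2, 1)},
    "two ALU ops at once (ALU CPI 0.5)": {**base, "ALU": (0.5, 0.5)},
}

base_cpi = effective_cpi(base)
for name, mix in scenarios.items():
    new_cpi = effective_cpi(mix)
    # IC and CC are unchanged, so the speedup is the ratio of CPIs.
    gain = (base_cpi / new_cpi - 1) * 100
    print(f"{name}: CPI {new_cpi:.2f}, {gain:.1f}% faster")
```

Because instruction count and clock cycle time are held fixed, the CPU time ratio reduces to the CPI ratio, which is why the slide compares 2.2 against each new CPI.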

Workloads and Benchmarks
• Benchmarks: a set of programs that form a “workload” specifically chosen to measure performance
• SPEC (System Performance Evaluation Cooperative) creates standard sets of benchmarks, starting with SPEC89. The latest is SPEC CPU2006, which consists of 12 integer benchmarks (CINT2006) and 17 floating-point benchmarks (CFP2006). www.spec.org
• There are also benchmark collections for power workloads (SPECpower_ssj2008), for mail workloads (SPECmail2008), for multimedia workloads (mediabench), …

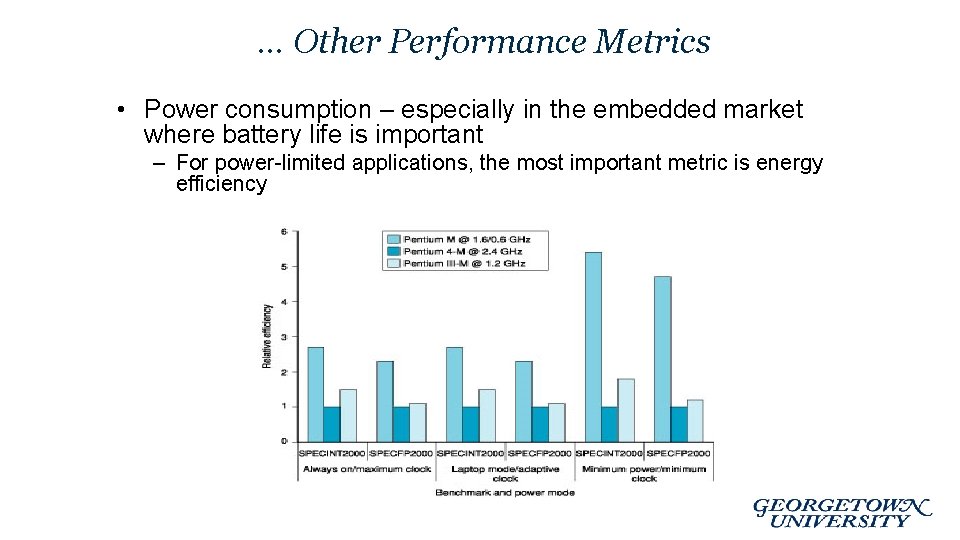

… Other Performance Metrics • Power consumption – especially in the embedded market where battery life is important – For power-limited applications, the most important metric is energy efficiency

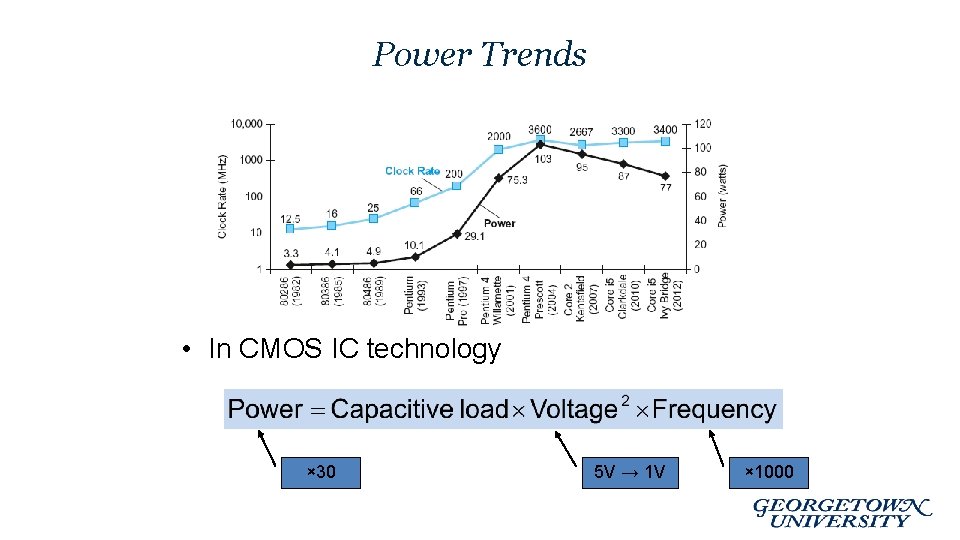

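Power Trends
• In CMOS IC technology

$$\text{Power} = \text{Capacitive load} \times \text{Voltage}^2 \times \text{Frequency}$$

  – Power: ×30
  – Voltage: 5 V → 1 V
  – Frequency: ×1000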

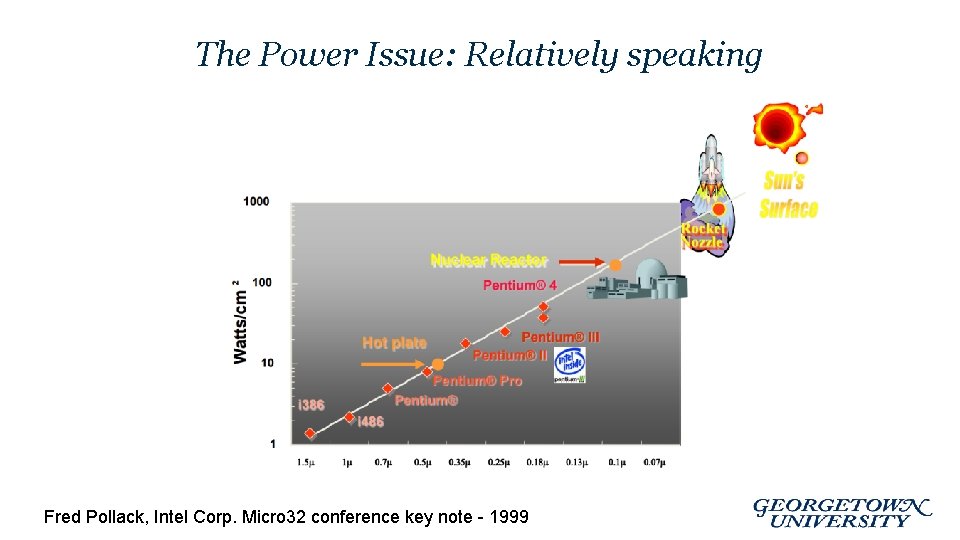

The Power Issue: Relatively speaking
Source: Fred Pollack, Intel Corp., Micro32 conference keynote, 1999

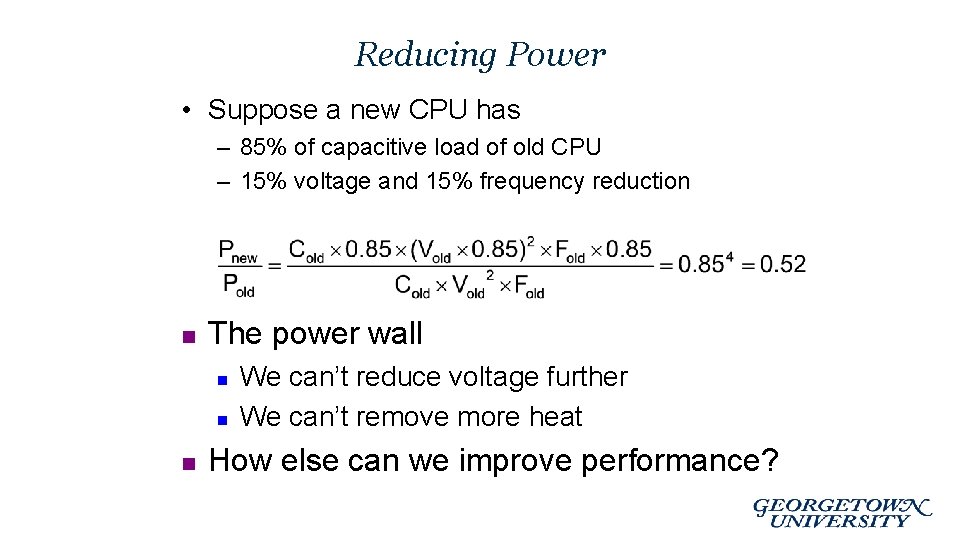

Reducing Power
• Suppose a new CPU has
  – 85% of the capacitive load of the old CPU
  – 15% voltage and 15% frequency reduction
• The power wall
  – We can’t reduce voltage further
  – We can’t remove more heat
• How else can we improve performance?
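The effect of these reductions can be estimated with a short Python sketch. It assumes the standard CMOS dynamic power relation, Power = Capacitive load × Voltage² × Frequency, which is not spelled out in the text of this slide; the function name `dynamic_power_ratio` is illustrative.

```python
def dynamic_power_ratio(cap_scale: float, volt_scale: float, freq_scale: float) -> float:
    """Ratio P_new / P_old, assuming Power = C x V^2 x f (an assumption here)."""
    return cap_scale * volt_scale ** 2 * freq_scale


# 85% of the capacitive load; 15% reduction in both voltage and frequency.
ratio = dynamic_power_ratio(cap_scale=0.85, volt_scale=0.85, freq_scale=0.85)
print(f"New CPU uses about {ratio:.2f} of the old CPU's power")
```

Under that assumption the ratio is 0.85 to the fourth power, so the new CPU draws roughly half the power of the old one.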

Parallelism for improved performance
• Given physical constraints on
  – Clock speeds (power)
  – Transistor size (Moore’s Law is coming to an end … ?)
  most current improvements have come from parallelism
• Parallelism in both hardware and software

Parallelism
• Classes of parallelism in applications:
  – Data-Level Parallelism (DLP)
  – Task-Level Parallelism (TLP)
• Classes of architectural parallelism:
  – Instruction-Level Parallelism (ILP)
  – Vector architectures/Graphic Processor Units (GPUs)
  – Thread-Level Parallelism
  – Request-Level Parallelism

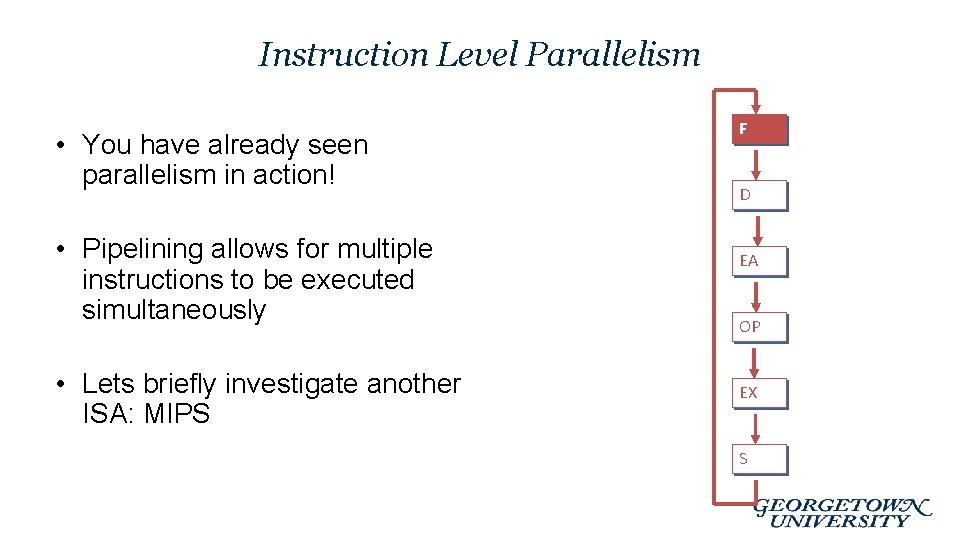

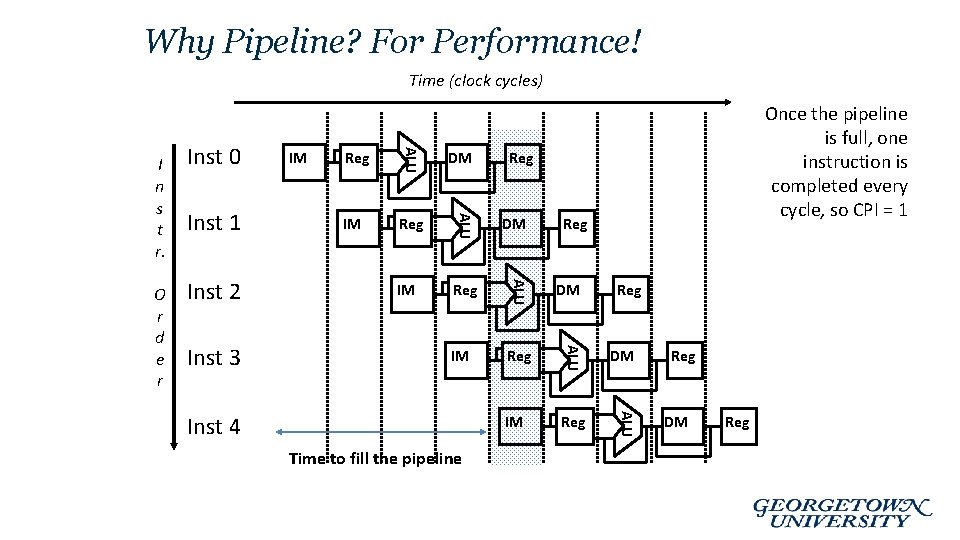

Instruction Level Parallelism
• You have already seen parallelism in action!
  – Instruction cycle phases: F, D, EA, OP, EX, S
• Pipelining allows multiple instructions to be executed simultaneously
• Let’s briefly investigate another ISA: MIPS

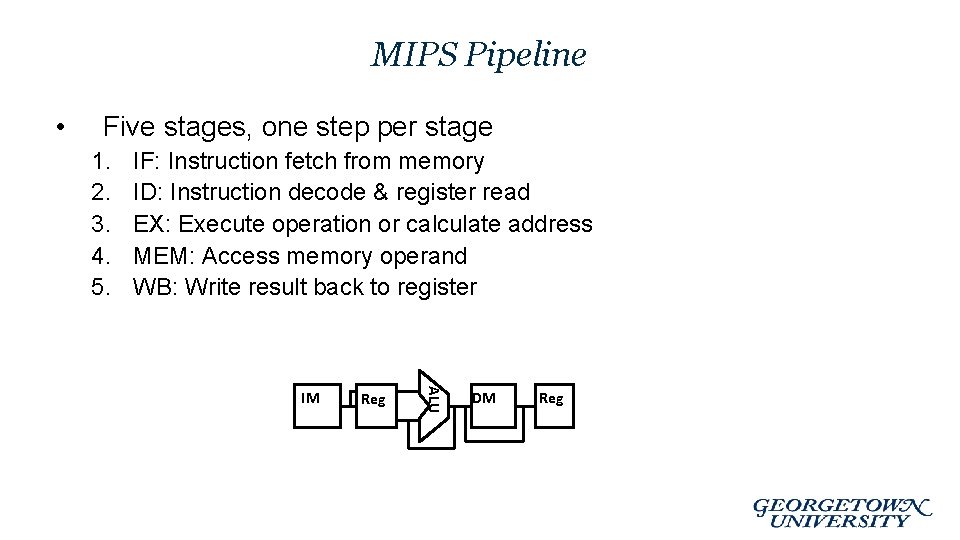

MIPS Pipeline
• Five stages, one step per stage
  1. IF: Instruction fetch from memory
  2. ID: Instruction decode & register read
  3. EX: Execute operation or calculate address
  4. MEM: Access memory operand
  5. WB: Write result back to register
[Figure: datapath blocks for the five stages: IM, Reg, ALU, DM, Reg]

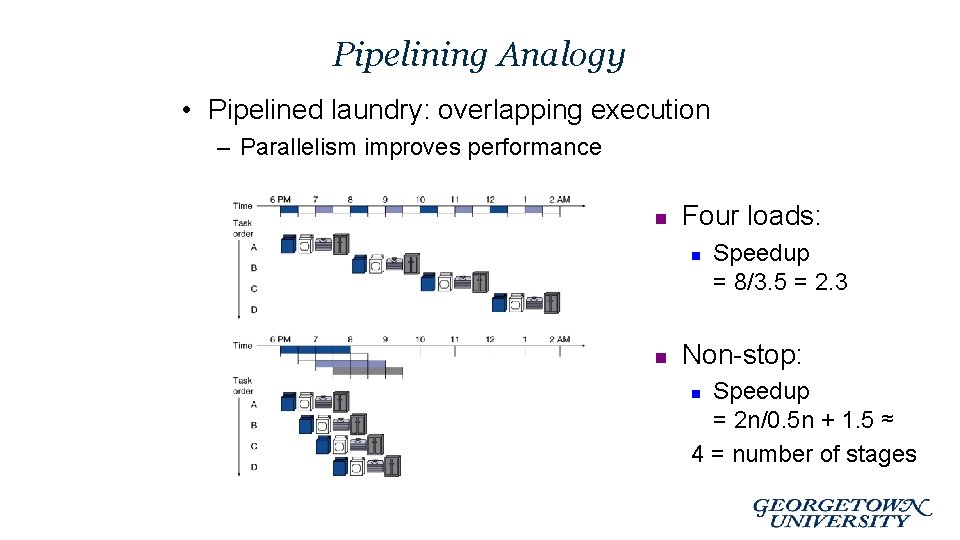

Pipelining Analogy
• Pipelined laundry: overlapping execution
  – Parallelism improves performance
• Four loads: Speedup = 8/3.5 = 2.3
• Non-stop: Speedup = 2n/(0.5n + 1.5) ≈ 4 = number of stages
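The two speedup figures can be reproduced with a minimal Python sketch. It assumes four laundry stages of 0.5 hours each, which is what the formula 2n/(0.5n + 1.5) implies; the function name `laundry_speedup` is illustrative.

```python
def laundry_speedup(loads: int, stages: int = 4, stage_hours: float = 0.5) -> float:
    """Speedup of pipelined over sequential laundry for `loads` loads.

    Sequential: each load runs all stages before the next starts.
    Pipelined: the first load takes `stages` steps, every later load adds one step.
    """
    sequential = loads * stages * stage_hours
    pipelined = (stages + loads - 1) * stage_hours
    return sequential / pipelined


print(f"Four loads: {laundry_speedup(4):.1f}x")
print(f"One million loads: {laundry_speedup(1_000_000):.3f}x")
```

As the number of loads grows, the fill time becomes negligible and the speedup approaches the number of stages.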

Why Pipeline? For Performance!
[Figure: pipeline diagram of Inst 0 to Inst 4 (instruction order) against time in clock cycles, each passing through IM, Reg, ALU, DM, Reg; the first cycles are the time to fill the pipeline]
• Once the pipeline is full, one instruction is completed every cycle, so CPI = 1

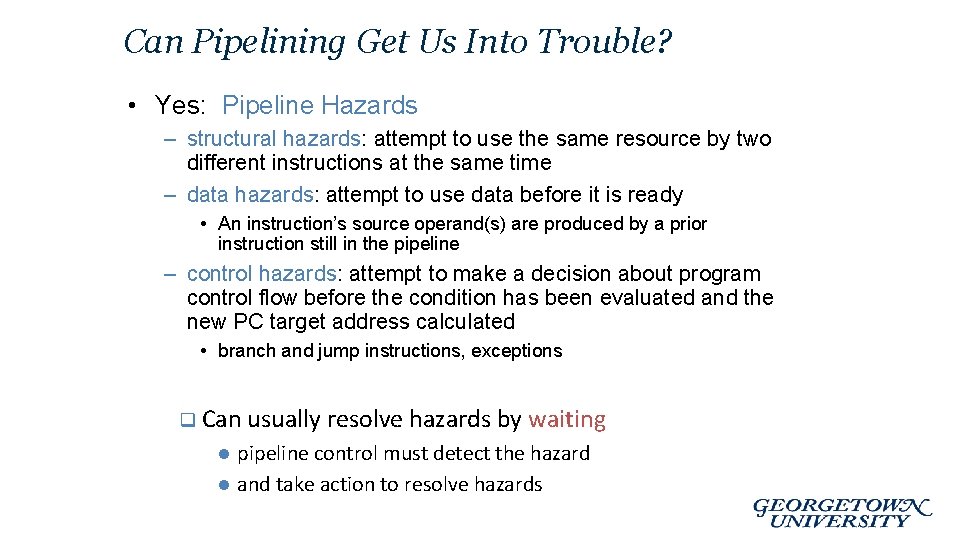

Can Pipelining Get Us Into Trouble?
• Yes: Pipeline Hazards
  – structural hazards: attempt to use the same resource by two different instructions at the same time
  – data hazards: attempt to use data before it is ready
    • An instruction’s source operand(s) are produced by a prior instruction still in the pipeline
  – control hazards: attempt to make a decision about program control flow before the condition has been evaluated and the new PC target address calculated
    • branch and jump instructions, exceptions
• Can usually resolve hazards by waiting
  – pipeline control must detect the hazard
  – and take action to resolve hazards

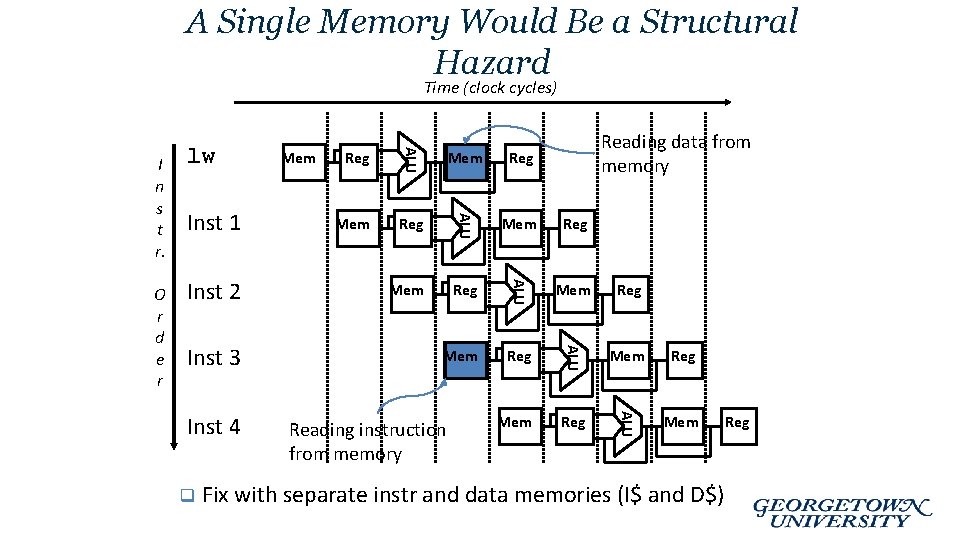

A Single Memory Would Be a Structural Hazard
[Figure: pipeline diagram over time (clock cycles) for lw followed by Inst 1 to Inst 4, all sharing a single Mem; lw is reading data from memory in the same cycle that a later instruction is reading its instruction from memory]
• Fix with separate instr and data memories (I$ and D$)

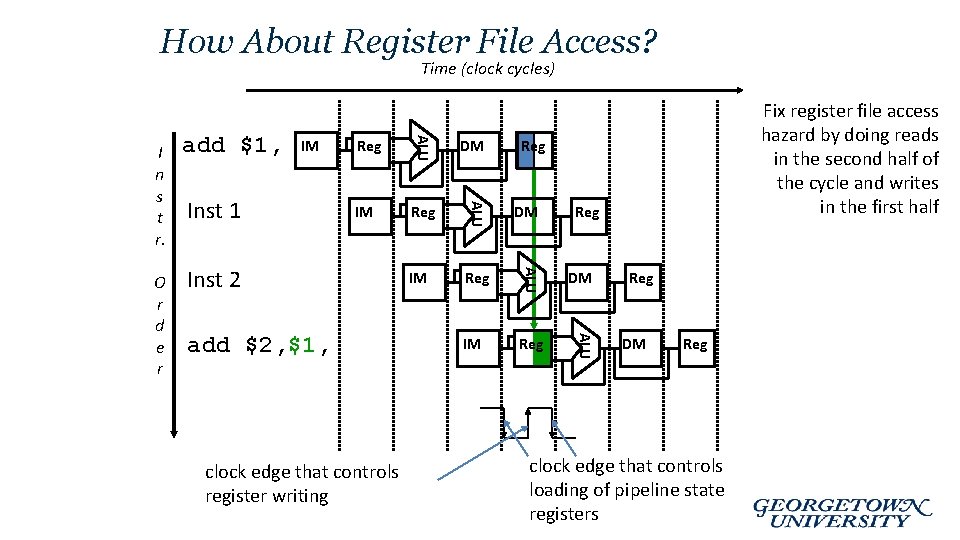

How About Register File Access?
[Figure: pipeline diagram over time (clock cycles) for the sequence add $1, / Inst 1 / Inst 2 / add $2, $1, marking the clock edge that controls register writing and the clock edge that controls loading of pipeline state registers]
• Fix register file access hazard by doing reads in the second half of the cycle and writes in the first half

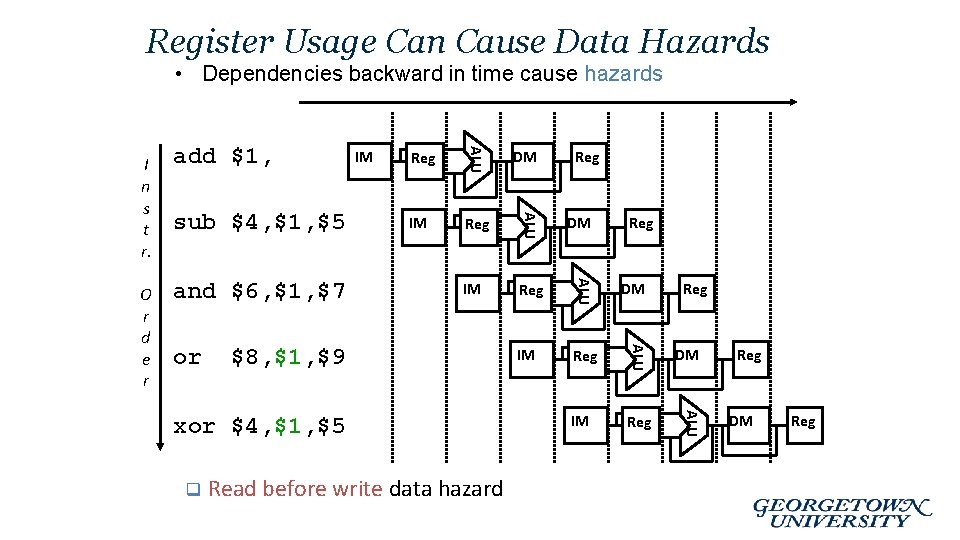

Register Usage Can Cause Data Hazards
• Dependencies backward in time cause hazards
[Figure: pipeline diagram (IM, Reg, ALU, DM, Reg) for the following instructions, in program order]
  add $1,
  sub $4, $1, $5
  and $6, $1, $7
  or $8, $1, $9
  xor $4, $1, $5
• Read before write data hazard
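A rough Python sketch of how such dependencies could be flagged in the sequence above. It assumes a five-stage pipeline with no forwarding and a register file that writes in the first half of a cycle and reads in the second half, so only the two instructions immediately after a producer read a stale value. The `Instr` type, the `raw_hazards` function, and the window of 2 are illustrative assumptions, and the sketch ignores the case where an intervening instruction overwrites the register.

```python
from typing import NamedTuple


class Instr(NamedTuple):
    op: str
    dest: str
    srcs: tuple


def raw_hazards(program, window=2):
    """List (producer, consumer, register) triples where the consumer reads
    a register written by a producer at most `window` instructions earlier."""
    hazards = []
    for i, producer in enumerate(program):
        for consumer in program[i + 1 : i + 1 + window]:
            if producer.dest in consumer.srcs:
                hazards.append((producer.op, consumer.op, producer.dest))
    return hazards


program = [
    Instr("add", "$1", ()),  # source operands are not given on the slide
    Instr("sub", "$4", ("$1", "$5")),
    Instr("and", "$6", ("$1", "$7")),
    Instr("or", "$8", ("$1", "$9")),
    Instr("xor", "$4", ("$1", "$5")),
]

for producer, consumer, reg in raw_hazards(program):
    print(f"{consumer} reads {reg} before {producer} has written it")
```

Under these assumptions only sub and and are flagged; or reads $1 in the same cycle that add writes it, and xor reads it afterwards.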

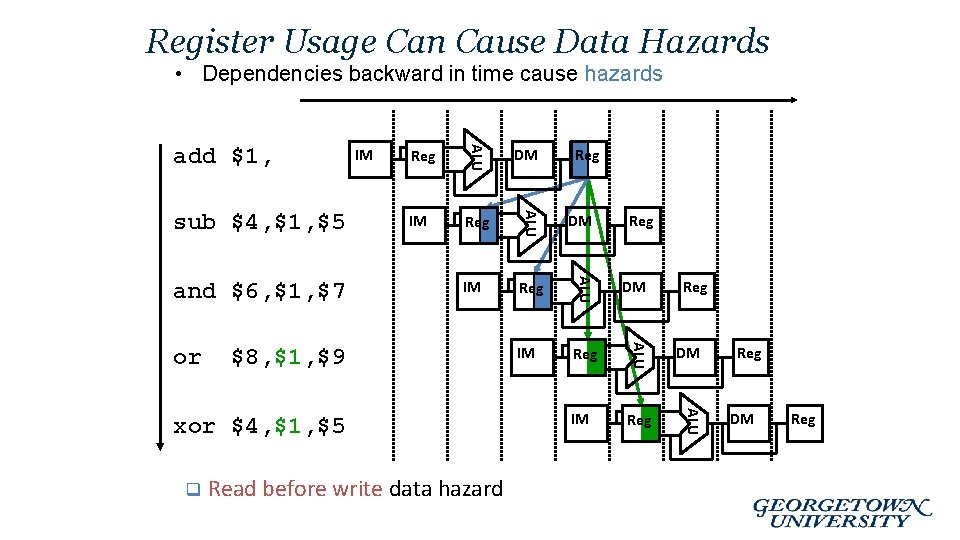

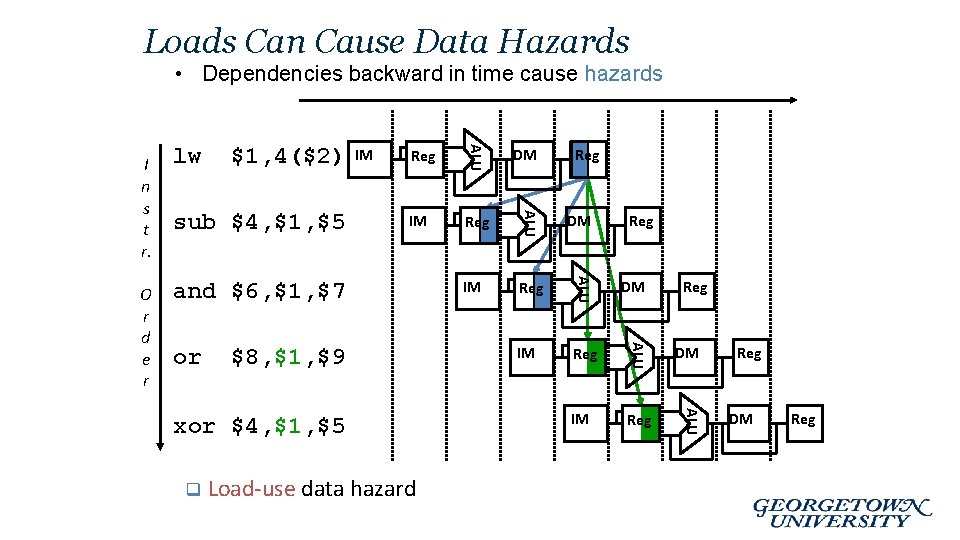

Loads Can Cause Data Hazards
• Dependencies backward in time cause hazards
[Figure: pipeline diagram (IM, Reg, ALU, DM, Reg) for the following instructions, in program order]
  lw $1, 4($2)
  sub $4, $1, $5
  and $6, $1, $7
  or $8, $1, $9
  xor $4, $1, $5
• Load-use data hazard

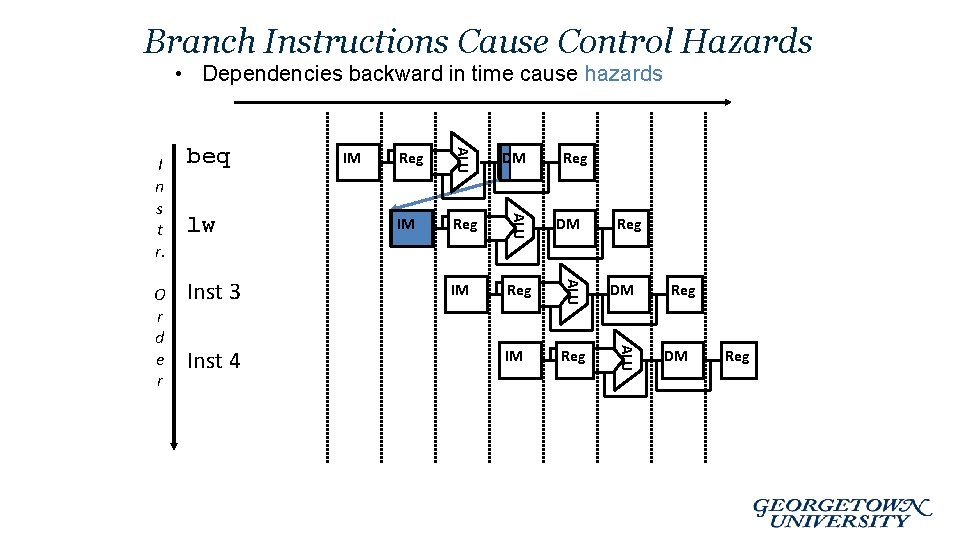

Branch Instructions Cause Control Hazards
• Dependencies backward in time cause hazards
[Figure: pipeline diagram (IM, Reg, ALU, DM, Reg) for beq, lw, Inst 3, Inst 4, in program order]

Even with all the hazards … • Pipelining is worth the overhead! • We will discuss the different hazards in more detail …

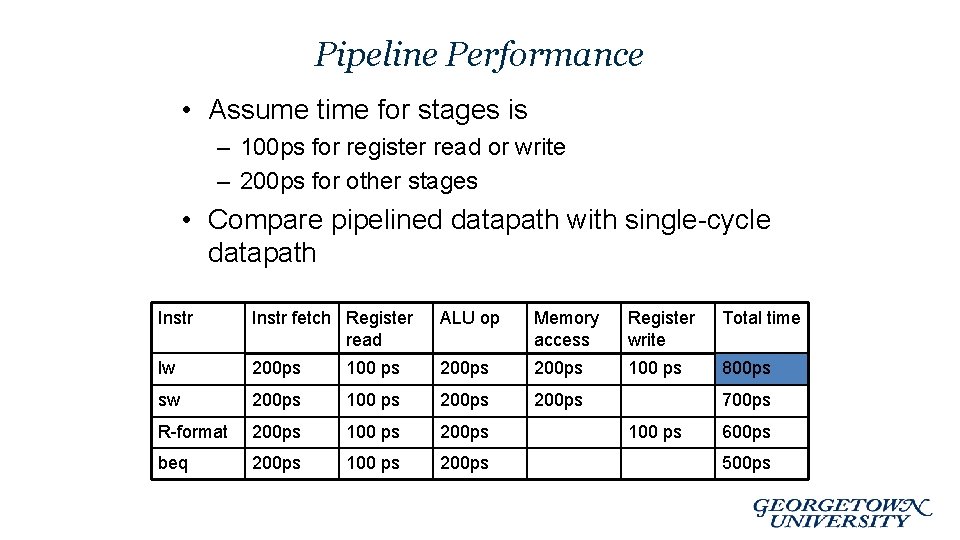

Pipeline Performance
• Assume time for stages is
  – 100 ps for register read or write
  – 200 ps for other stages
• Compare pipelined datapath with single-cycle datapath

| Instr | Instr fetch | Register read | ALU op | Memory access | Register write | Total time |
|---|---|---|---|---|---|---|
| lw | 200 ps | 100 ps | 200 ps | 200 ps | 100 ps | 800 ps |
| sw | 200 ps | 100 ps | 200 ps | 200 ps | | 700 ps |
| R-format | 200 ps | 100 ps | 200 ps | | 100 ps | 600 ps |
| beq | 200 ps | 100 ps | 200 ps | | | 500 ps |

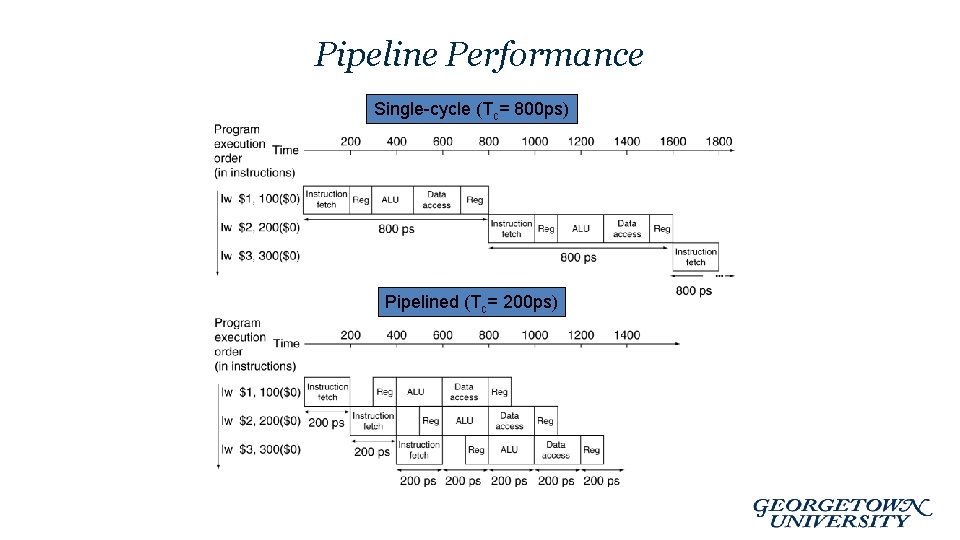

Pipeline Performance
[Figure: timing diagrams comparing single-cycle execution (Tc = 800 ps) with pipelined execution (Tc = 200 ps)]

Pipeline Speedup
• If all stages are balanced (i.e., all take the same time):

$$\text{Time between instructions}_{\text{pipelined}} = \frac{\text{Time between instructions}_{\text{nonpipelined}}}{\text{Number of stages}}$$

• If not balanced, speedup is less!
• Speedup is due to increased throughput
  – Latency (time for each instruction) does not decrease
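A minimal Python sketch of the unbalanced case, using the cycle times from the preceding slides (Tc = 800 ps single-cycle, Tc = 200 ps pipelined). It assumes an ideal five-stage pipeline with no stalls; the function names are illustrative.

```python
def single_cycle_time_ps(n_instr: int, tc_ps: int = 800) -> int:
    """Total time when each instruction takes one long clock cycle."""
    return n_instr * tc_ps


def pipelined_time_ps(n_instr: int, stages: int = 5, tc_ps: int = 200) -> int:
    """Total time for an ideal pipeline: fill it once, then one instruction per cycle."""
    return (stages + n_instr - 1) * tc_ps


for n in (3, 1_000_000):
    speedup = single_cycle_time_ps(n) / pipelined_time_ps(n)
    print(f"{n} instructions: speedup {speedup:.2f}")
```

With unbalanced stages the long-run speedup approaches 800/200 = 4 rather than the stage count of 5, and for very short runs the fill time pulls it lower still.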

Pipelining and ISA Design
• MIPS ISA designed for pipelining
  – All instructions are 32 bits
    • Easier to fetch and decode in one cycle
    • cf. x86: 1- to 17-byte instructions
  – Few and regular instruction formats
    • Can decode and read registers in one step
  – Load/store addressing
    • Can calculate address in 3rd stage, access memory in 4th stage
  – Alignment of memory operands
    • Memory access takes only one cycle

Current Trends in Architecture • Benefits reaped from Instruction-Level parallelism (ILP) have largely been maxed out. • New models for performance: – Data-level parallelism (DLP) – Thread-level parallelism (TLP) • These require explicit restructuring of the application

Summary
• All modern-day processors use pipelining
• Pipelining doesn’t help the latency of a single task; it helps the throughput of the entire workload
• Potential speedup: a CPI of 1 and a fast CC
• Pipeline rate is limited by the slowest pipeline stage
  – Unbalanced pipe stages make for inefficiencies
  – The time to “fill” the pipeline and the time to “drain” it can impact speedup for deep pipelines and short code runs
• Must detect and resolve hazards
  – Stalling negatively affects CPI (makes CPI greater than the ideal of 1)

Future Topics (time permitting) • Parallel Computing • Distributed and Cloud Computing • GPUs and Vectored Architectures

Appendix

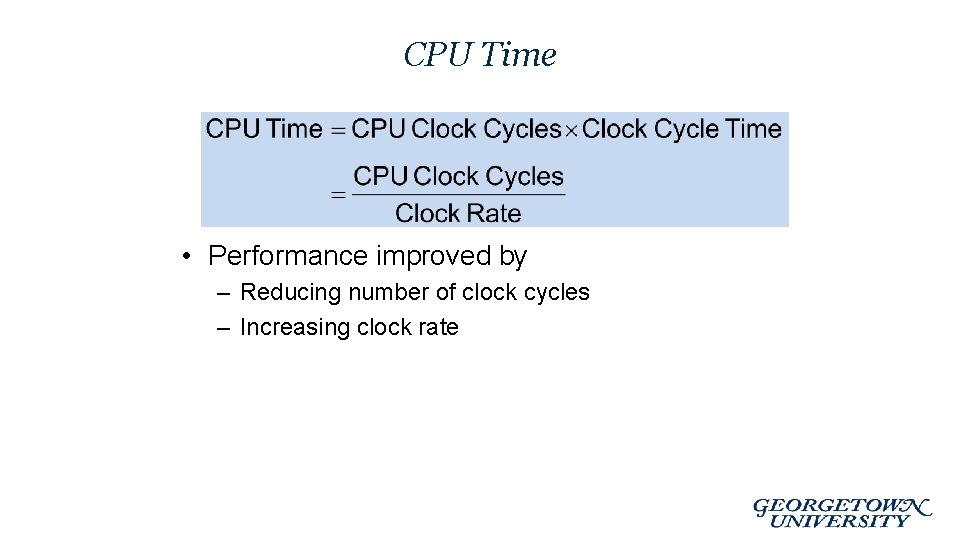

CPU Time • Performance improved by – Reducing number of clock cycles – Increasing clock rate

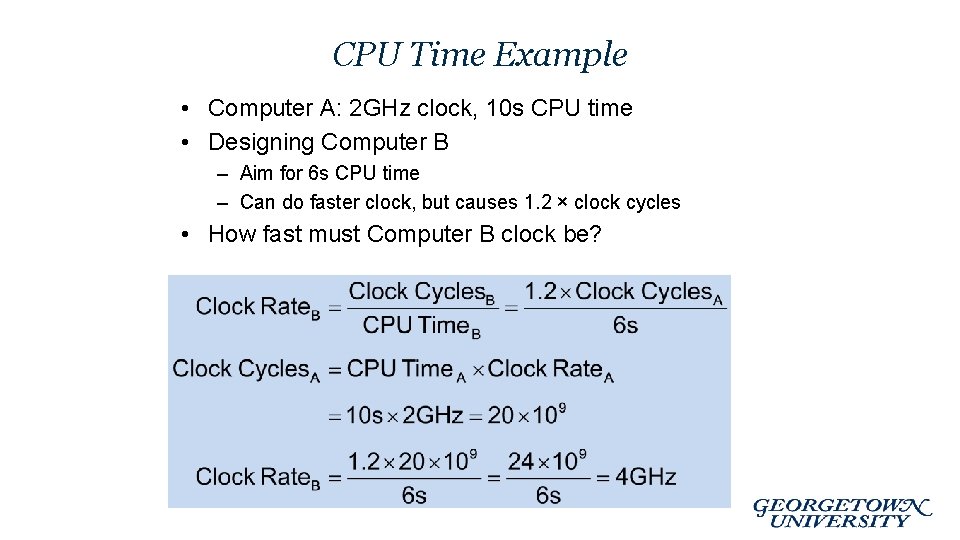

CPU Time Example
• Computer A: 2 GHz clock, 10 s CPU time
• Designing Computer B
  – Aim for 6 s CPU time
  – Can do faster clock, but causes 1.2× clock cycles
• How fast must Computer B clock be?

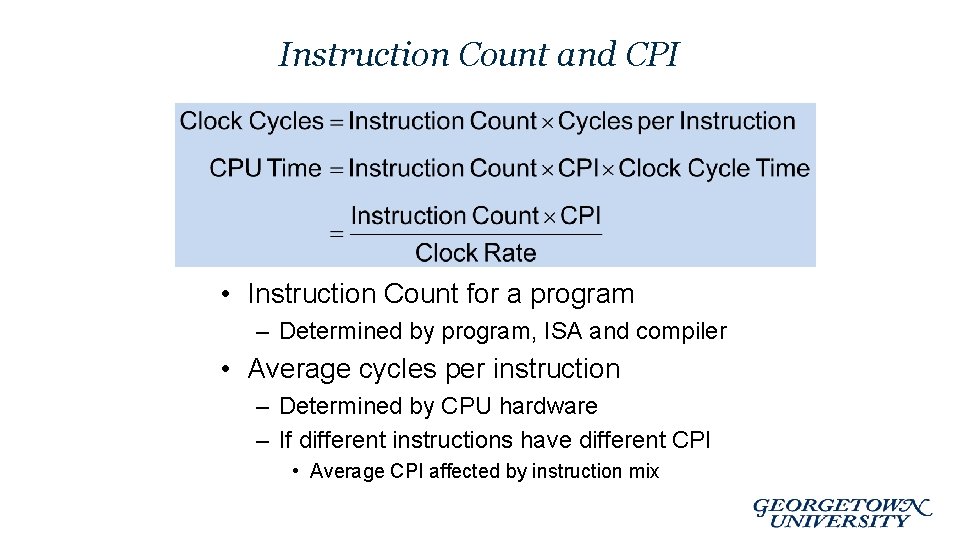

Instruction Count and CPI • Instruction Count for a program – Determined by program, ISA and compiler • Average cycles per instruction – Determined by CPU hardware – If different instructions have different CPI • Average CPI affected by instruction mix

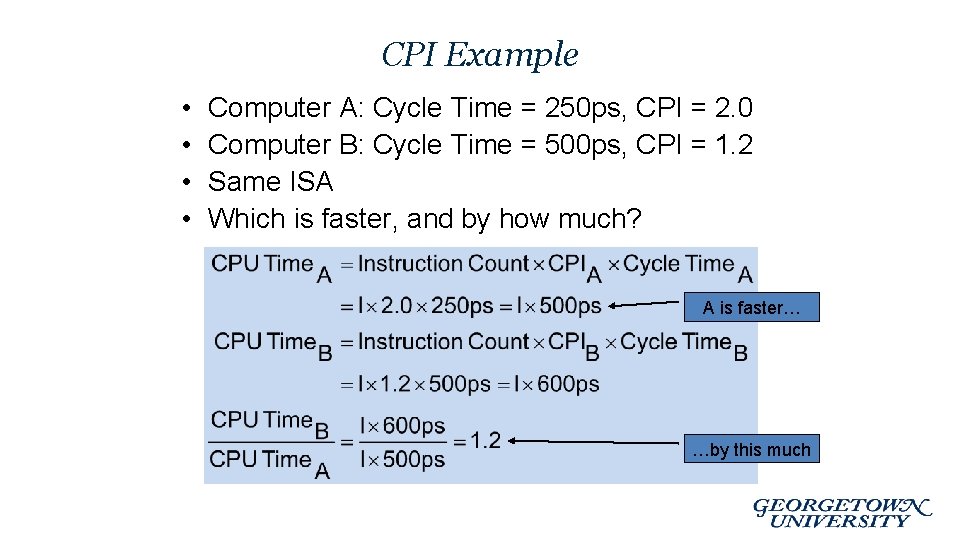

CPI Example
• Computer A: Cycle Time = 250 ps, CPI = 2.0
• Computer B: Cycle Time = 500 ps, CPI = 1.2
• Same ISA
• Which is faster, and by how much?
  – A is faster…
  – …by this much

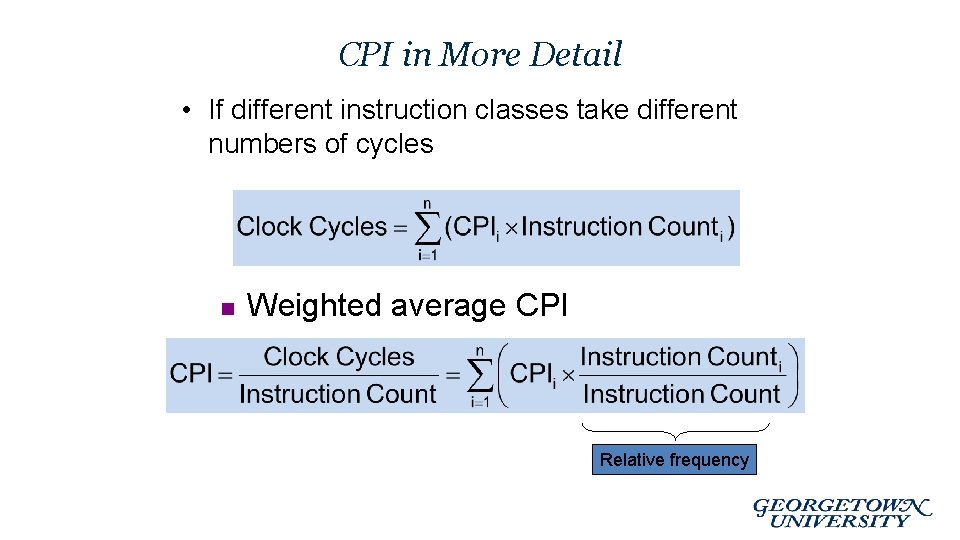

CPI in More Detail
• If different instruction classes take different numbers of cycles
  – Weighted average CPI, with each class weighted by its relative frequency

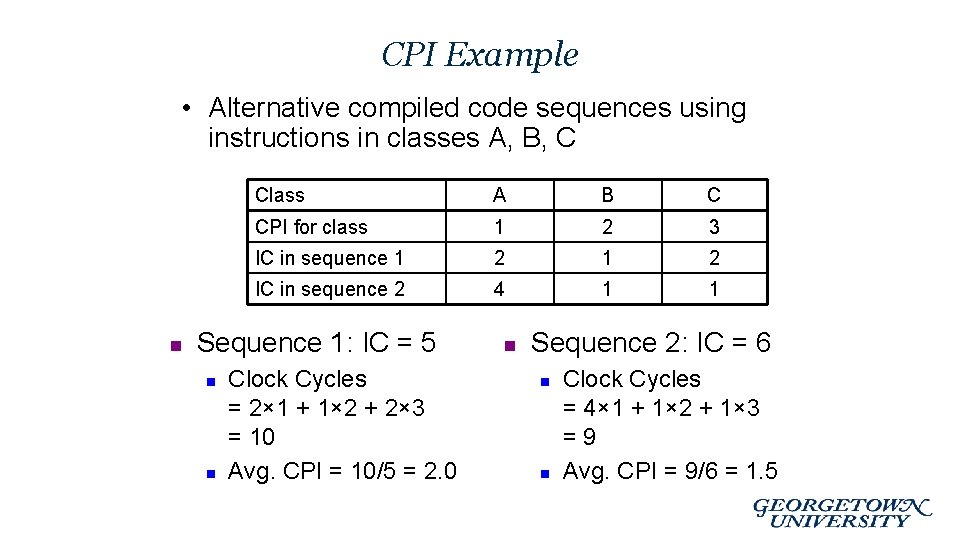

CPI Example
• Alternative compiled code sequences using instructions in classes A, B, C

| Class | A | B | C |
|---|---|---|---|
| CPI for class | 1 | 2 | 3 |
| IC in sequence 1 | 2 | 1 | 2 |
| IC in sequence 2 | 4 | 1 | 1 |

• Sequence 1: IC = 5
  – Clock Cycles = 2×1 + 1×2 + 2×3 = 10
  – Avg. CPI = 10/5 = 2.0
• Sequence 2: IC = 6
  – Clock Cycles = 4×1 + 1×2 + 1×3 = 9
  – Avg. CPI = 9/6 = 1.5
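The comparison of the two code sequences can be checked with a short Python sketch. The names `CPI_BY_CLASS` and `summarize` are illustrative; the per-class CPIs and instruction counts are those of the example.

```python
CPI_BY_CLASS = {"A": 1, "B": 2, "C": 3}


def summarize(counts: dict) -> tuple:
    """Return (instruction count, clock cycles, average CPI) for a code sequence."""
    ic = sum(counts.values())
    cycles = sum(n * CPI_BY_CLASS[cls] for cls, n in counts.items())
    return ic, cycles, cycles / ic


seq1 = {"A": 2, "B": 1, "C": 2}
seq2 = {"A": 4, "B": 1, "C": 1}

for name, seq in (("Sequence 1", seq1), ("Sequence 2", seq2)):
    ic, cycles, avg_cpi = summarize(seq)
    print(f"{name}: IC = {ic}, cycles = {cycles}, avg CPI = {avg_cpi:.1f}")
```

Sequence 2 executes more instructions yet needs fewer clock cycles, so instruction count alone is not a reliable performance measure.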

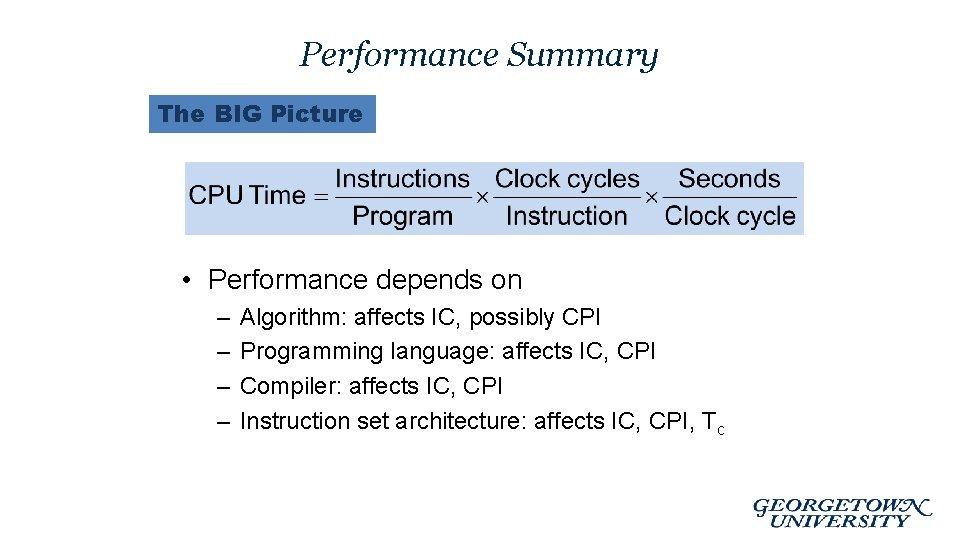

Performance Summary: The BIG Picture
• Performance depends on
  – Algorithm: affects IC, possibly CPI
  – Programming language: affects IC, CPI
  – Compiler: affects IC, CPI
  – Instruction set architecture: affects IC, CPI, Tc

Flynn’s Taxonomy
• Single instruction stream, single data stream (SISD)
• Single instruction stream, multiple data streams (SIMD)
  – Vector architectures
  – Multimedia extensions
  – Graphics processor units
• Multiple instruction streams, single data stream (MISD)
  – No commercial implementation
• Multiple instruction streams, multiple data streams (MIMD)
  – Tightly-coupled MIMD
  – Loosely-coupled MIMD
