Chapter 2 BY RAUF UR RAHIM

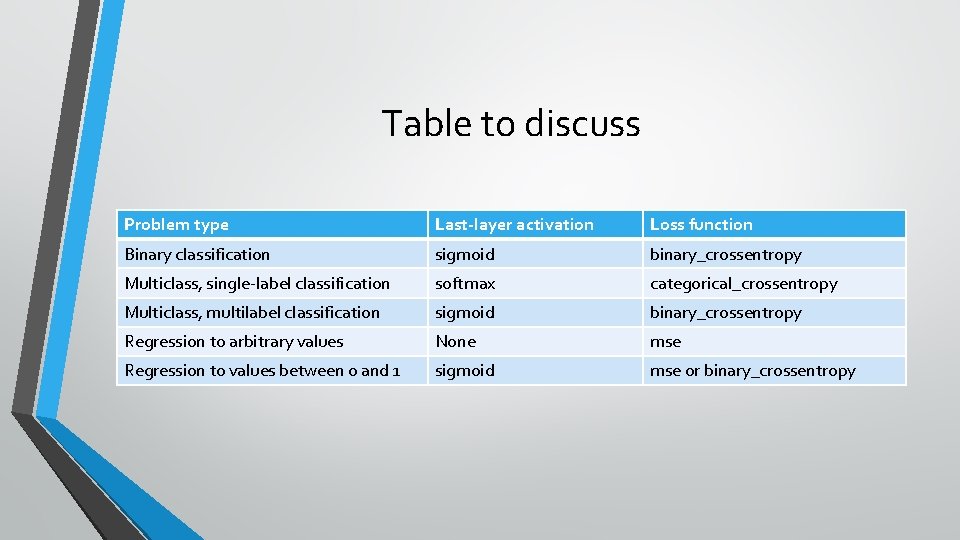

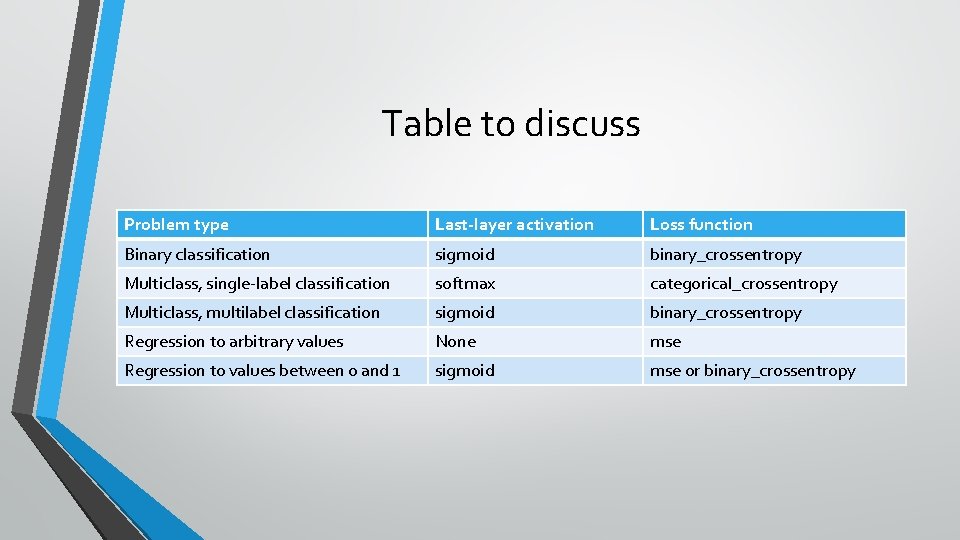

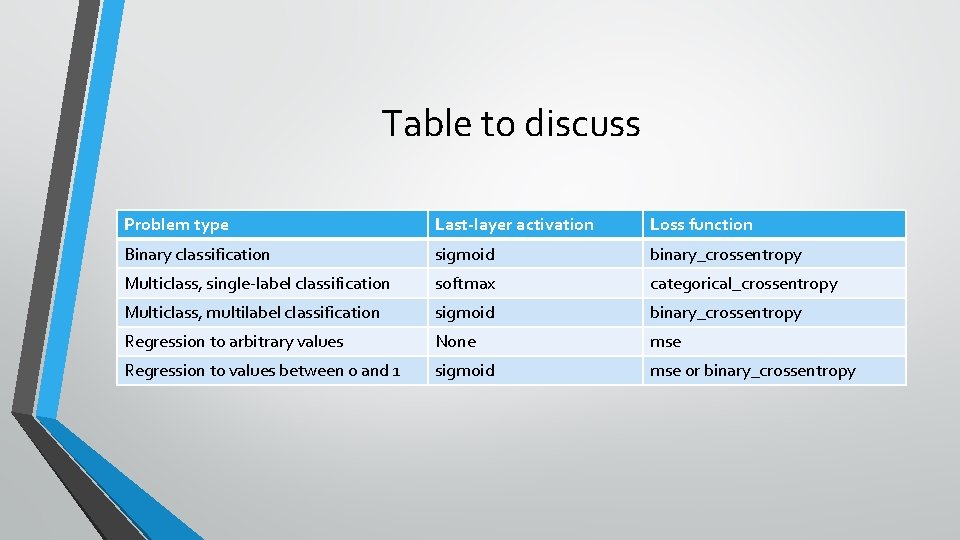

Table to discuss

| Problem type | Last-layer activation | Loss function |
|---|---|---|
| Binary classification | sigmoid | binary_crossentropy |
| Multiclass, single-label classification | softmax | categorical_crossentropy |
| Multiclass, multilabel classification | sigmoid | binary_crossentropy |
| Regression to arbitrary values | None | mse |
| Regression to values between 0 and 1 | sigmoid | mse or binary_crossentropy |

Binary Classification

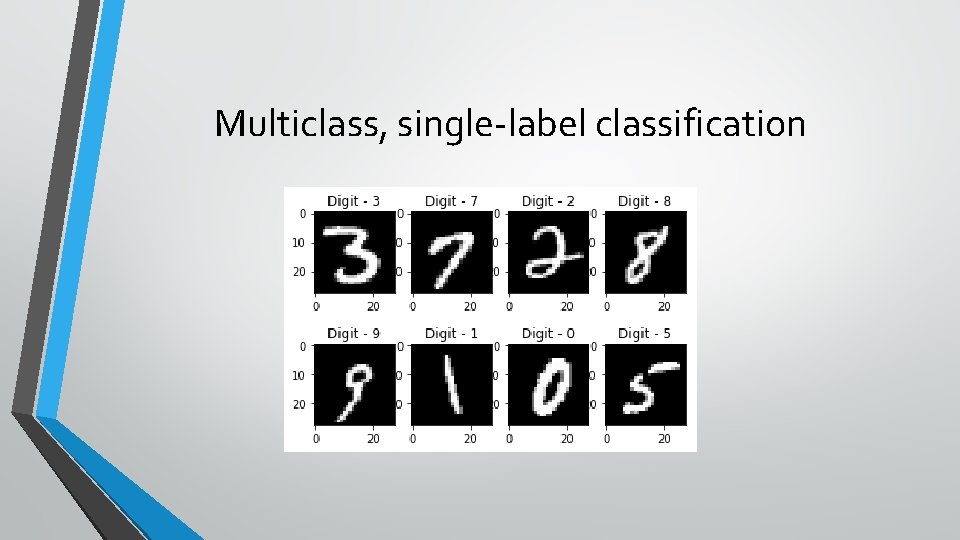

Multiclass, single-label classification

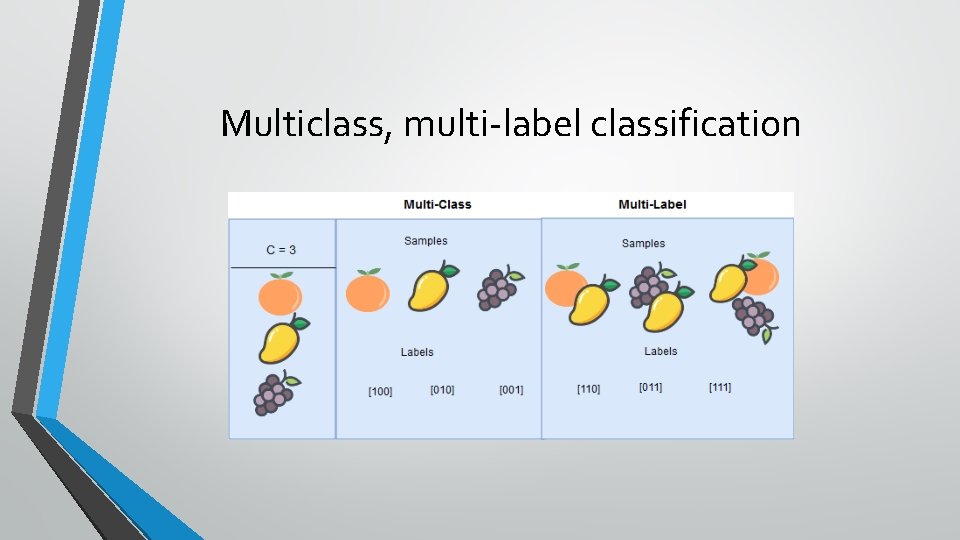

Multiclass, multi-label classification

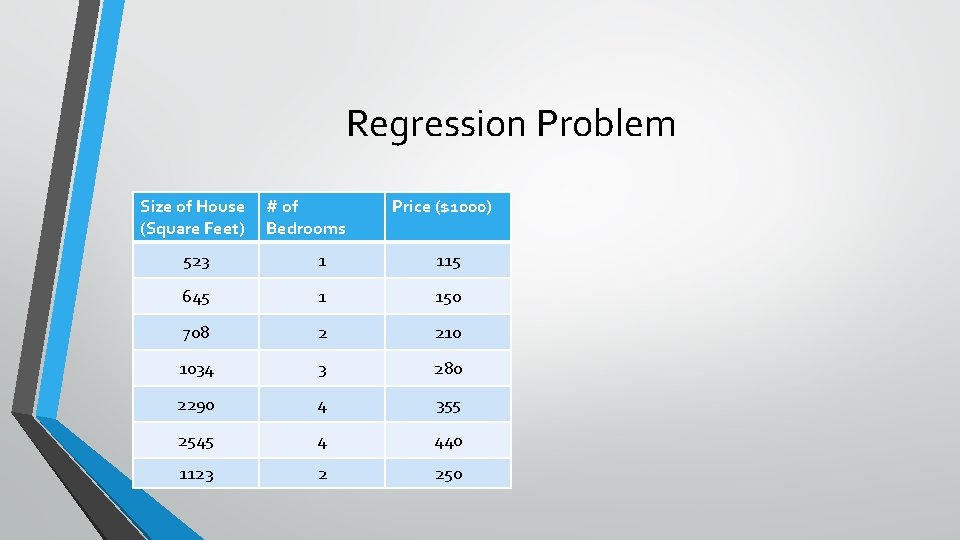

Regression Problem

| Size of House (Square Feet) | # of Bedrooms | Price ($1000) |
|---|---|---|
| 523 | 1 | 115 |
| 645 | 1 | 150 |
| 708 | 2 | 210 |
| 1034 | 3 | 280 |
| 2290 | 4 | 355 |
| 2545 | 4 | 440 |
| 1123 | 2 | 250 |

Activation function

• Which talk to listen to?

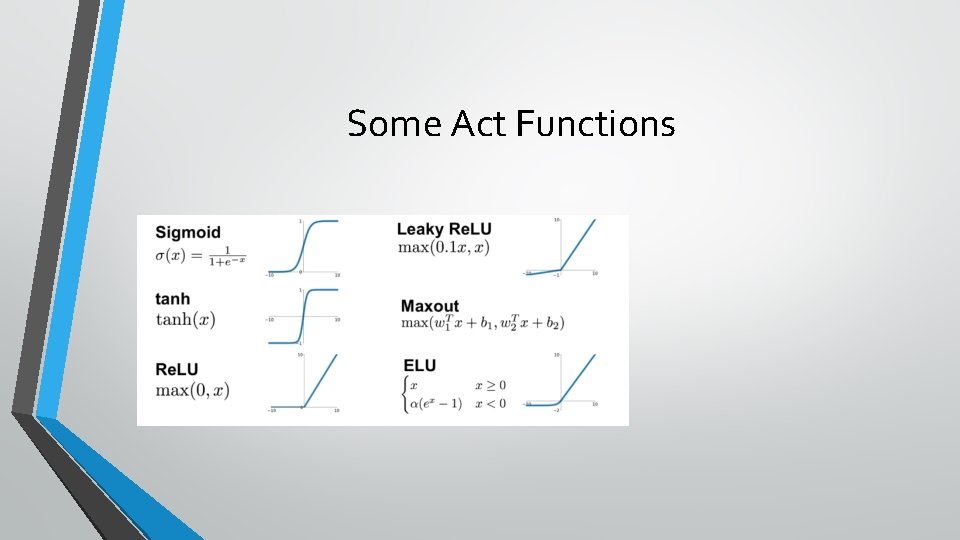

Some Activation Functions

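sigmoid vs softmax

• Sigmoid returns the probability of the positive class in a binary (yes/no) decision; each output is scored independently
• Softmax returns a probability for each class, and the probabilities sum to 1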
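The difference between the two activations can be seen on a few numbers. The sketch below is a plain-Python illustration, not any library's implementation; the scores are made-up last-layer outputs.

```python
import math

def sigmoid(z):
    """Squash one score into (0, 1): probability of the positive class."""
    return 1.0 / (1.0 + math.exp(-z))

def softmax(scores):
    """Turn a list of scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

scores = [2.0, 1.0, 0.1]  # made-up raw outputs of a last layer (assumption)

print(sigmoid(0.0))                  # 0.5: a score of zero means "undecided"
print(softmax(scores))               # one probability per class, summing to 1
print([sigmoid(s) for s in scores])  # independent probabilities, need not sum to 1
```

Softmax makes the classes compete for a total probability of 1, which suits single-label problems. Applying sigmoid to each output separately lets several labels be "on" at once, which is why it appears in the multilabel row of the table.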

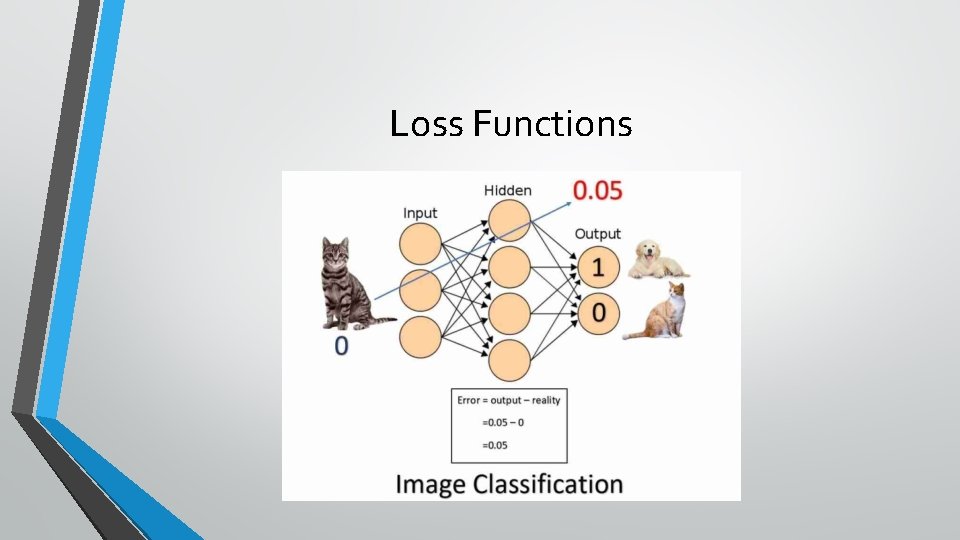

Loss Functions

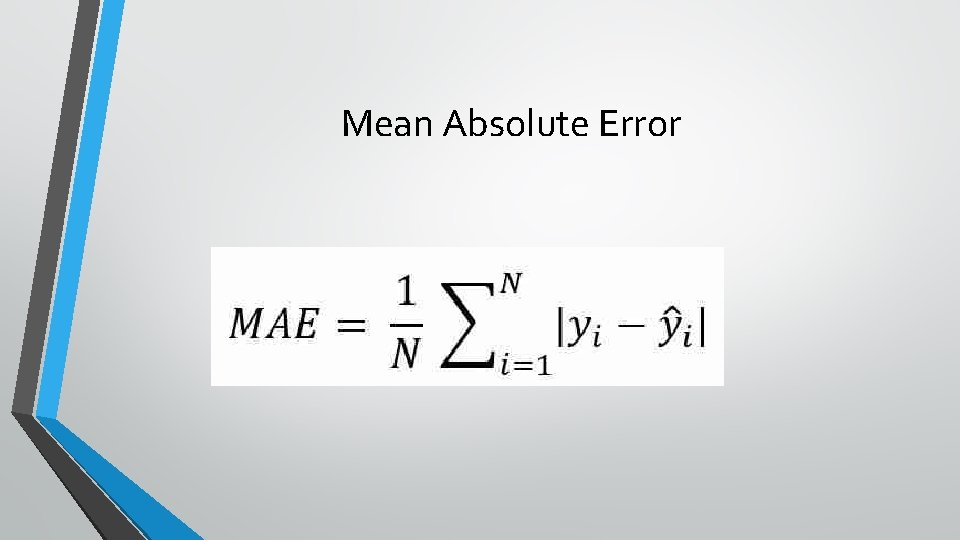

Mean Absolute Error

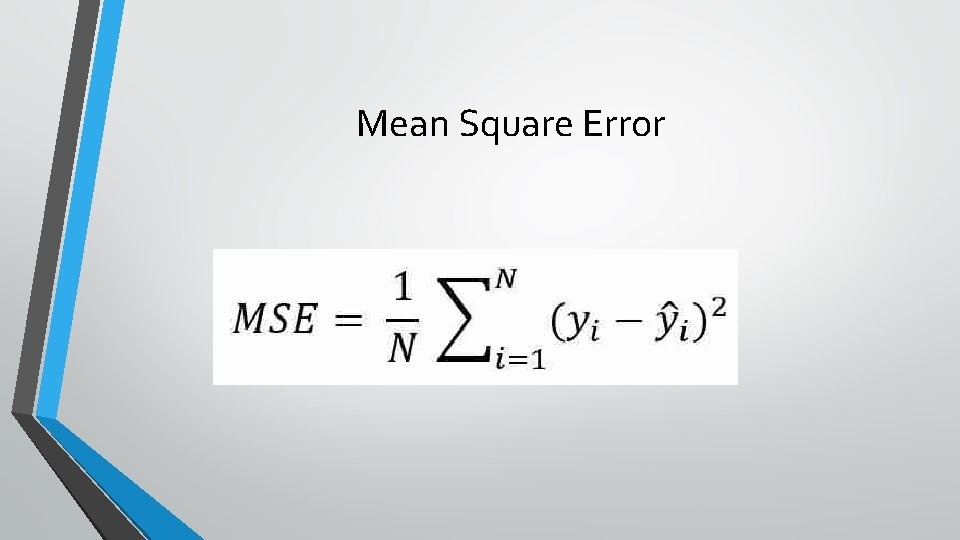

Mean Square Error

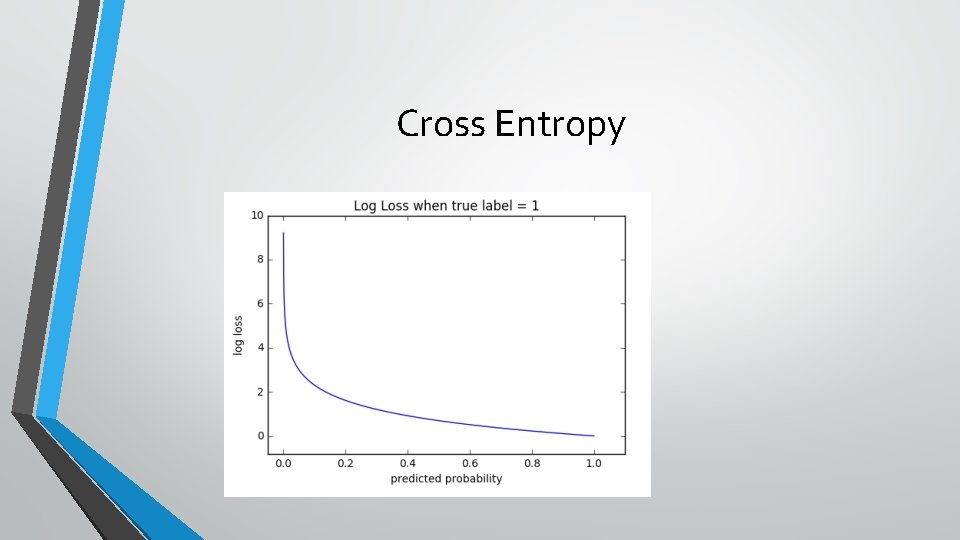
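Both regression losses are short enough to write by hand. The following is a minimal sketch in plain Python; the true prices are the first three rows of the house-price example, and the predictions are invented purely for illustration.

```python
def mean_absolute_error(y_true, y_pred):
    """Average of |target - prediction|."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def mean_squared_error(y_true, y_pred):
    """Average of (target - prediction)^2; large errors are punished more."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

y_true = [115, 150, 210]  # prices in $1000 from the regression example
y_pred = [120, 140, 240]  # hypothetical model outputs (assumption)

print(mean_absolute_error(y_true, y_pred))  # (5 + 10 + 30) / 3 = 15.0
print(mean_squared_error(y_true, y_pred))   # (25 + 100 + 900) / 3
```

The single error of 30 contributes 900 of the 1025 total in the squared loss, but only 30 of 45 in the absolute loss, so MSE is the more sensitive of the two to outliers.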

Cross Entropy

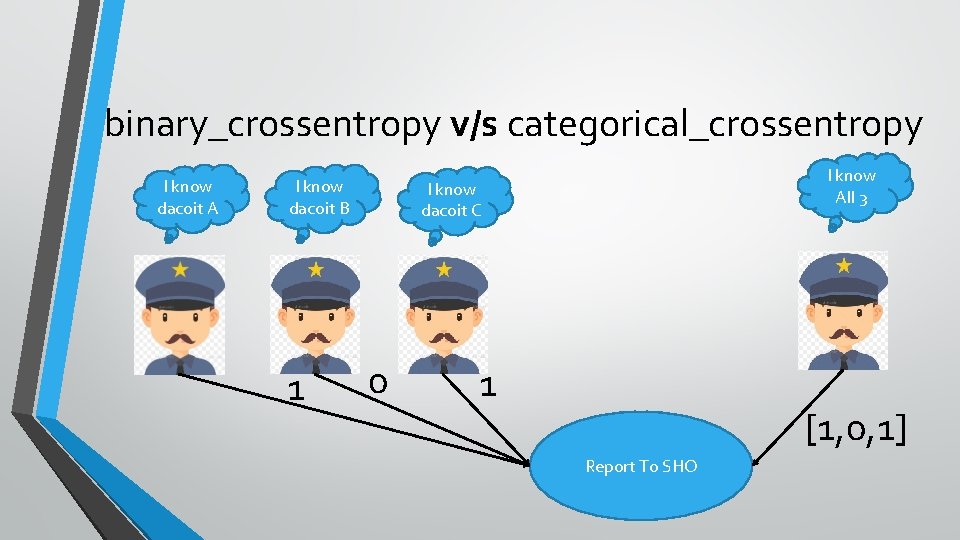

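binary_crossentropy v/s categorical_crossentropy

[Diagram: witnesses reporting to the SHO. Statements: "I know dacoit A", "I know dacoit B", "I know dacoit C", "I know all 3". Individual answers 1, 0, 1 are combined into the report [1, 0, 1].]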

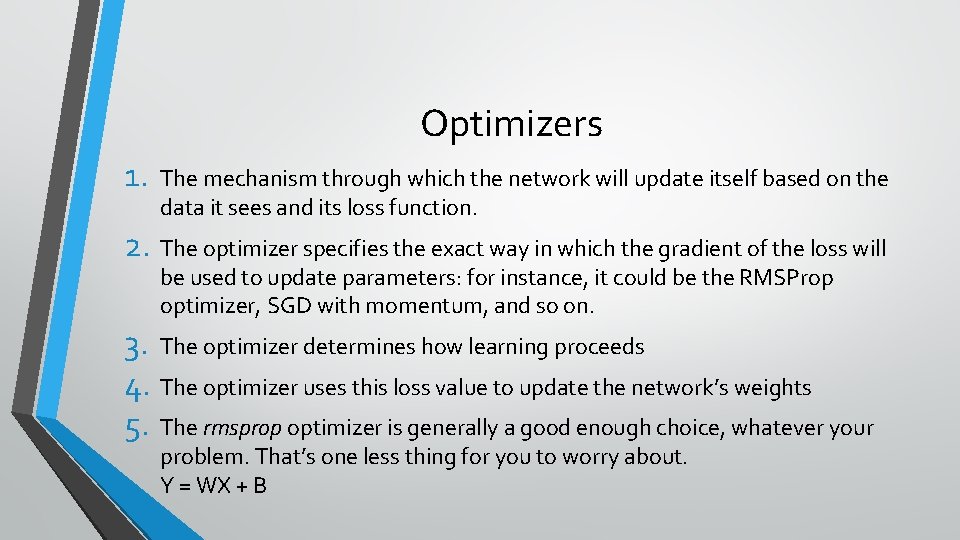

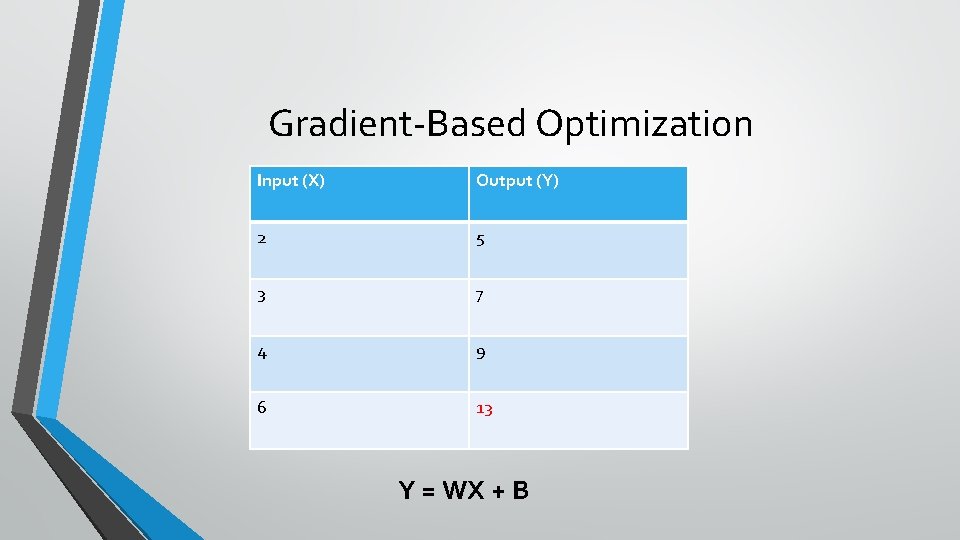
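To make the binary_crossentropy v/s categorical_crossentropy contrast concrete, here is a hedged plain-Python sketch of both losses. It is an illustration of the formulas, not Keras's implementation; the predicted probabilities are made up, and the `eps` clipping value is an assumption added to avoid taking the log of zero.

```python
import math

def binary_crossentropy(y_true, y_pred, eps=1e-7):
    """Average of independent yes/no losses, one per output."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1 - eps)  # keep p away from 0 and 1
        total += -(t * math.log(p) + (1 - t) * math.log(1 - p))
    return total / len(y_true)

def categorical_crossentropy(y_true, y_pred, eps=1e-7):
    """Loss for a one-hot target: only the true class's probability counts."""
    return -sum(t * math.log(max(p, eps)) for t, p in zip(y_true, y_pred))

# Multi-label target such as the report [1, 0, 1]: each entry is its own yes/no.
multi_label_target = [1, 0, 1]
sigmoid_outputs = [0.9, 0.2, 0.8]  # hypothetical per-label probabilities
print(binary_crossentropy(multi_label_target, sigmoid_outputs))

# Single-label target: exactly one class is correct (one-hot).
one_hot_target = [0, 0, 1]
softmax_outputs = [0.1, 0.2, 0.7]  # hypothetical class probabilities, sum to 1
print(categorical_crossentropy(one_hot_target, softmax_outputs))
```

The binary version scores every output separately, so any number of labels can be 1 at the same time. The categorical version looks only at the probability given to the one correct class.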

Optimizers

1. The mechanism through which the network will update itself based on the data it sees and its loss function.
2. The optimizer specifies the exact way in which the gradient of the loss will be used to update parameters: for instance, it could be the RMSProp optimizer, SGD with momentum, and so on.
3. The optimizer determines how learning proceeds.
4. The optimizer uses the loss value to update the network's weights.
5. The rmsprop optimizer is generally a good enough choice, whatever your problem. That's one less thing for you to worry about.

Y = WX + B

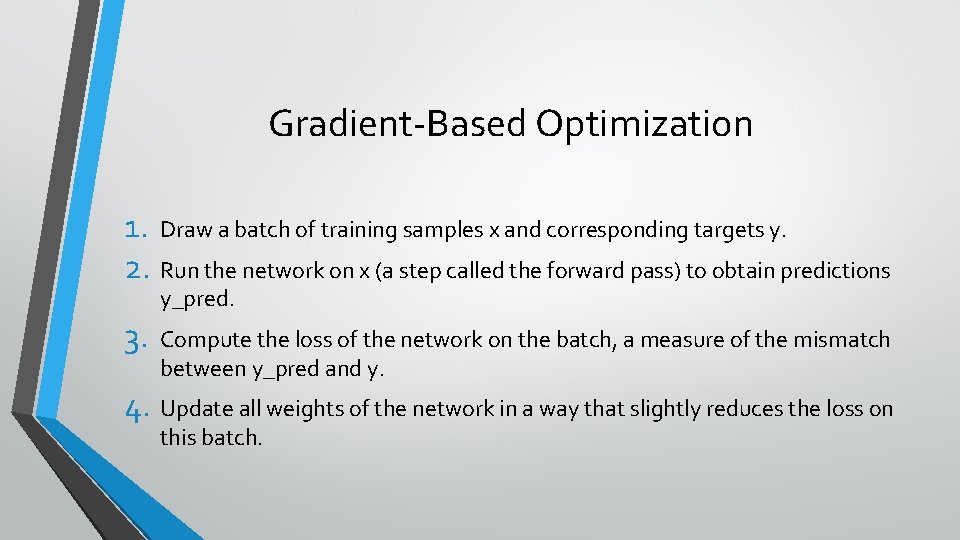

Gradient-Based Optimization

1. Draw a batch of training samples x and corresponding targets y.
2. Run the network on x (a step called the forward pass) to obtain predictions y_pred.
3. Compute the loss of the network on the batch, a measure of the mismatch between y_pred and y.
4. Update all weights of the network in a way that slightly reduces the loss on this batch.

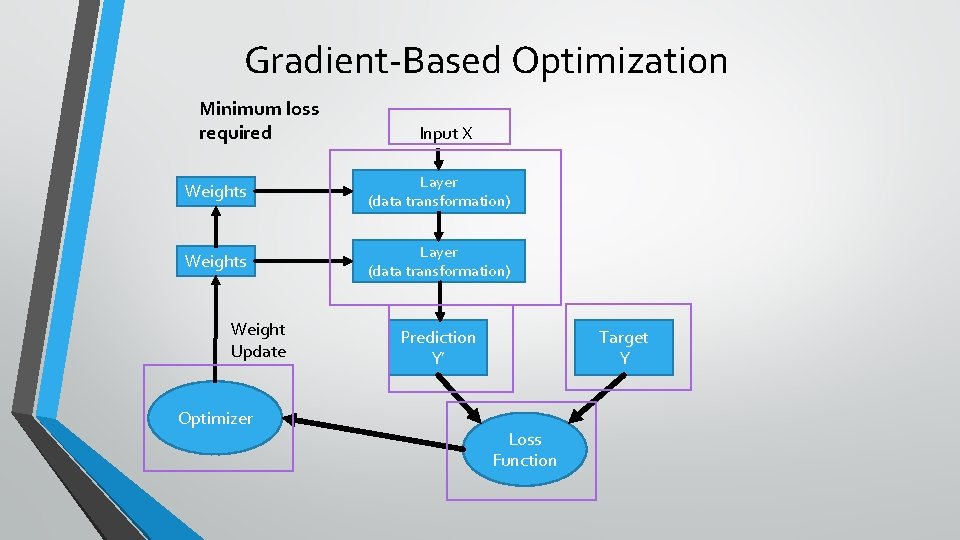
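The four steps of gradient-based optimization can be sketched as a small training loop. This is a minimal illustration under stated assumptions: the model is a single line y = w*x + b, the toy data follows the rule y = 2x + 1, the loss is mean squared error, and the learning rate, batch size and step count are arbitrary choices.

```python
import random

# Toy data; the rule y = 2x + 1 is an assumption made for this sketch.
data = [(float(x), 2.0 * x + 1.0) for x in range(1, 9)]
w, b = 0.0, 0.0        # weights to learn
learning_rate = 0.01   # arbitrary small step size
rng = random.Random(0)

def train_step(w, b, batch_size=4):
    # 1. Draw a batch of training samples x and targets y.
    batch = rng.sample(data, batch_size)
    # 2. Forward pass: run the model on x to get y_pred.
    preds = [w * x + b for x, _ in batch]
    # 3. Compute the loss (mean squared error) on the batch.
    loss = sum((p - y) ** 2 for p, (_, y) in zip(preds, batch)) / batch_size
    # 4. Update the weights slightly in the direction that reduces the loss.
    grad_w = sum(2 * (p - y) * x for p, (x, y) in zip(preds, batch)) / batch_size
    grad_b = sum(2 * (p - y) for p, (_, y) in zip(preds, batch)) / batch_size
    return w - learning_rate * grad_w, b - learning_rate * grad_b, loss

losses = []
for _ in range(5000):
    w, b, loss = train_step(w, b)
    losses.append(loss)

print(losses[0], losses[-1])  # the loss shrinks as training proceeds
print(w, b)                   # should end up close to 2 and 1
```

Each pass through `train_step` is one repetition of steps 1 to 4; training is simply that step repeated until the loss is small enough.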

Gradient-Based Optimization

[Diagram of the training loop. Labels: Input X, Layer (data transformation) with Weights, Prediction Y', Target Y, Loss Function, Optimizer, Weight Update. Goal: minimum loss required.]

Gradient-Based Optimization

| Input (X) | Output (Y) |
|---|---|
| 2 | 5 |
| 3 | 7 |
| 4 | 9 |
| 6 | 13 |

Y = WX + B

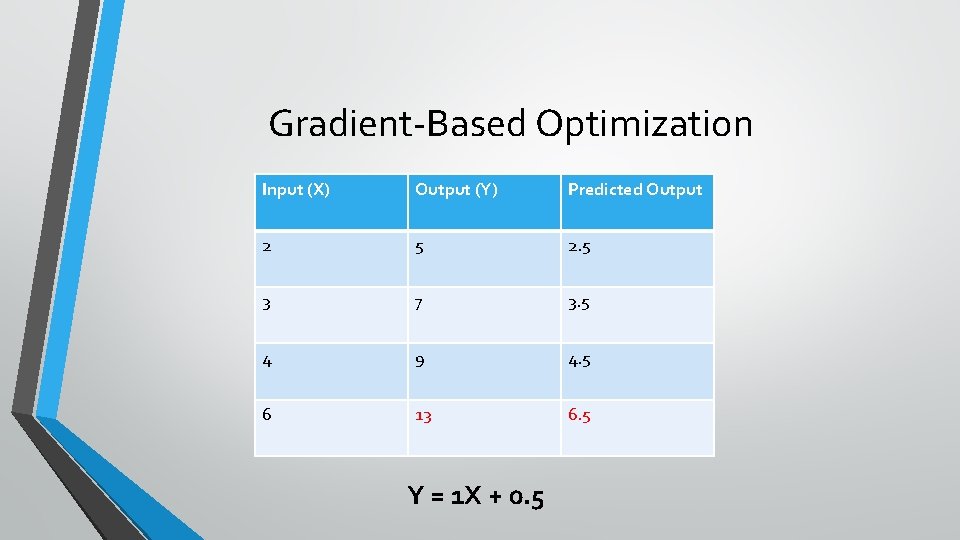

Gradient-Based Optimization

| Input (X) | Output (Y) | Predicted Output |
|---|---|---|
| 2 | 5 | 2.5 |
| 3 | 7 | 3.5 |
| 4 | 9 | 4.5 |
| 6 | 13 | 6.5 |

Y = 1X + 0.5

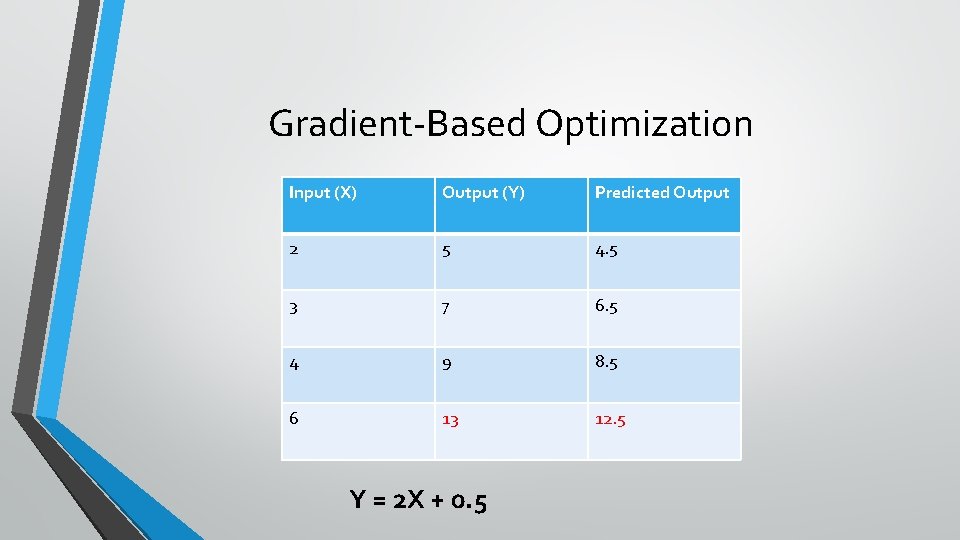

Gradient-Based Optimization

| Input (X) | Output (Y) | Predicted Output |
|---|---|---|
| 2 | 5 | 4.5 |
| 3 | 7 | 6.5 |
| 4 | 9 | 8.5 |
| 6 | 13 | 12.5 |

Y = 2X + 0.5

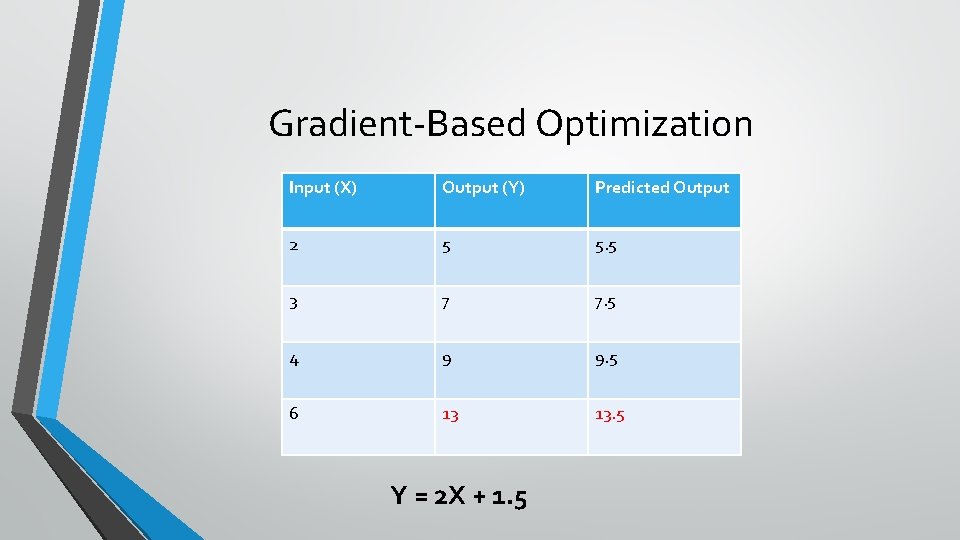

Gradient-Based Optimization

| Input (X) | Output (Y) | Predicted Output |
|---|---|---|
| 2 | 5 | 5.5 |
| 3 | 7 | 7.5 |
| 4 | 9 | 9.5 |
| 6 | 13 | 13.5 |

Y = 2X + 1.5

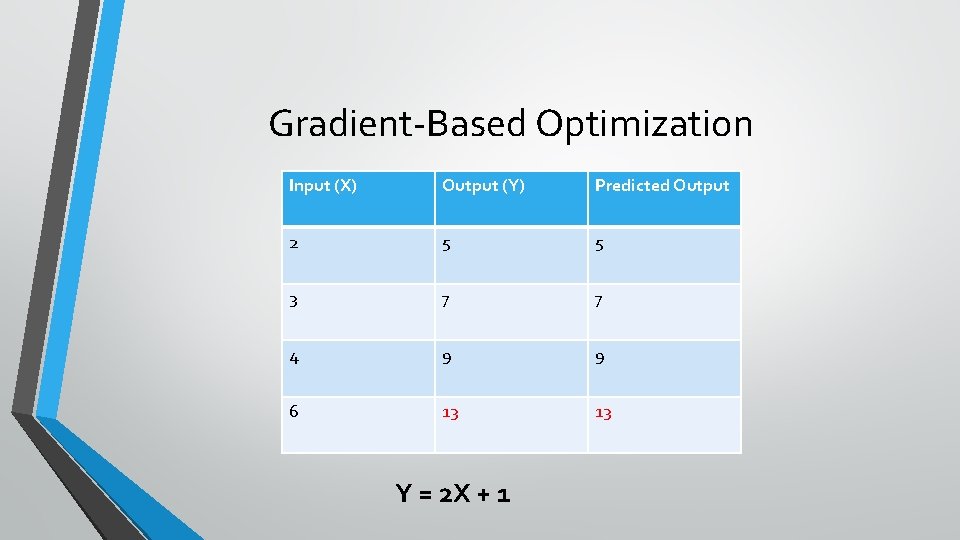

Gradient-Based Optimization

| Input (X) | Output (Y) | Predicted Output |
|---|---|---|
| 2 | 5 | 5 |
| 3 | 7 | 7 |
| 4 | 9 | 9 |
| 6 | 13 | 13 |

Y = 2X + 1
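The four guesses for W and B tried above can be compared by putting a number on each one. The sketch below uses mean squared error as the loss, which is an assumption; the slides do not say which loss was used for this example.

```python
xs = [2, 3, 4, 6]    # Input (X)
ys = [5, 7, 9, 13]   # Output (Y)

def mse(w, b):
    """Mean squared error of the line Y = w*X + b on the four data points."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

# The four (W, B) guesses tried in the tables.
for w, b in [(1, 0.5), (2, 0.5), (2, 1.5), (2, 1)]:
    print(f"Y = {w}X + {b}: loss = {mse(w, b)}")

# Y = 1X + 0.5: loss = 20.25
# Y = 2X + 0.5: loss = 0.25
# Y = 2X + 1.5: loss = 0.25
# Y = 2X + 1: loss = 0.0
```

The loss falls from 20.25 to 0 as the guesses improve, which is exactly the signal an optimizer uses in place of guessing by hand.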

Gradient-Based Optimization

• Do you think this is an efficient approach?
• Hit and trial
• Who will decide
  • The magnitude of change
  • The direction of change
• Congrats, you have the derivative and the gradient

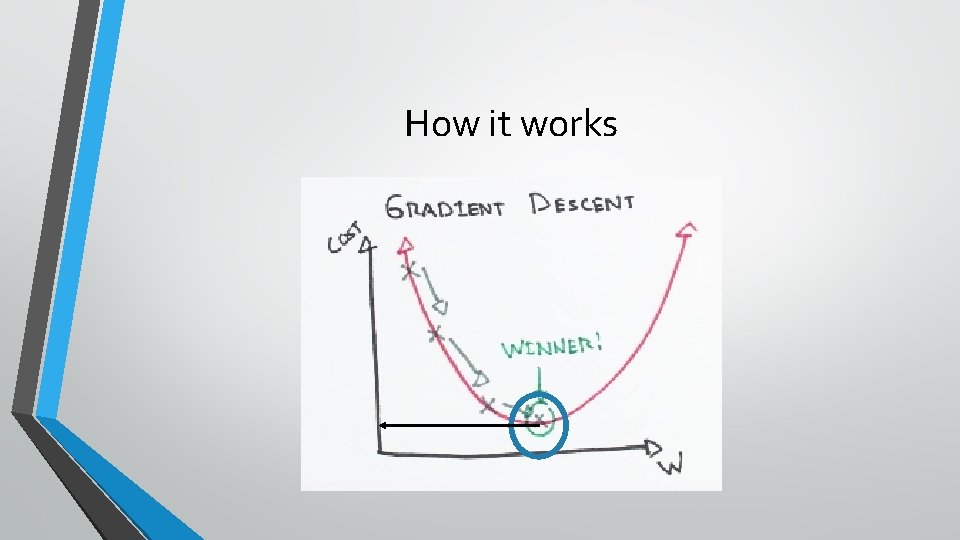

How it works

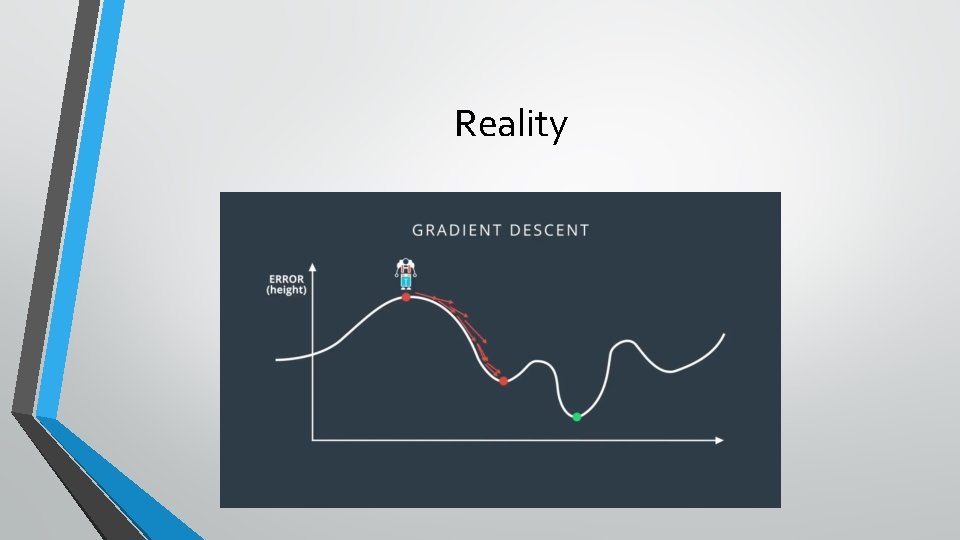

Reality

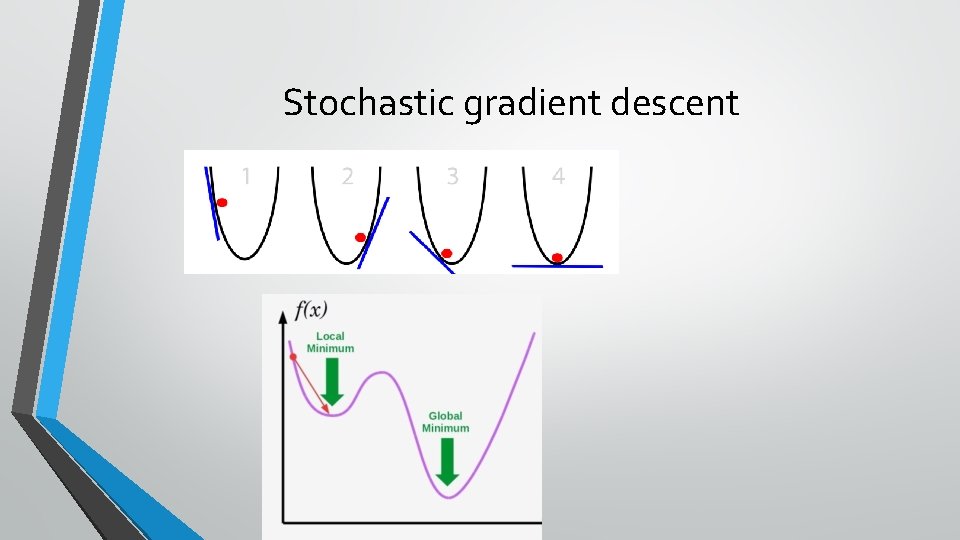

Stochastic gradient descent

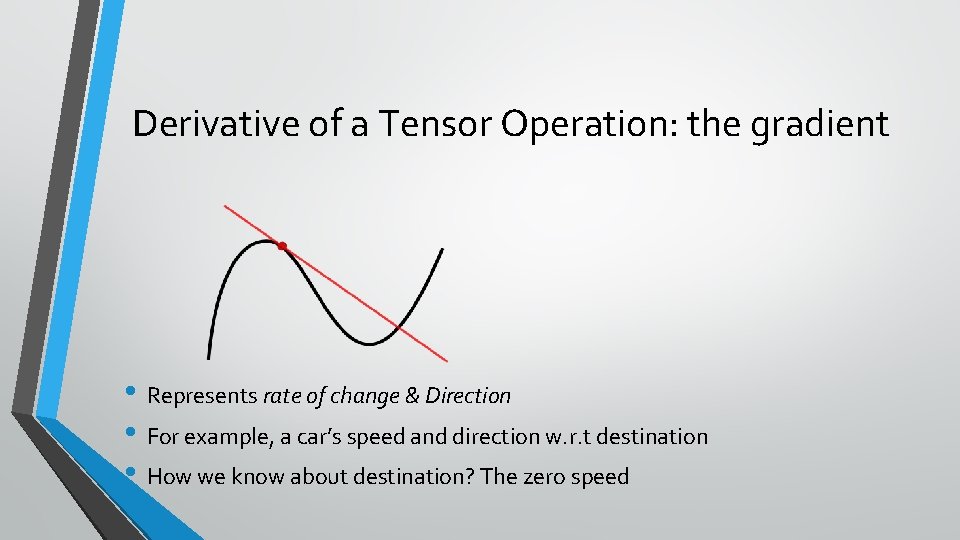

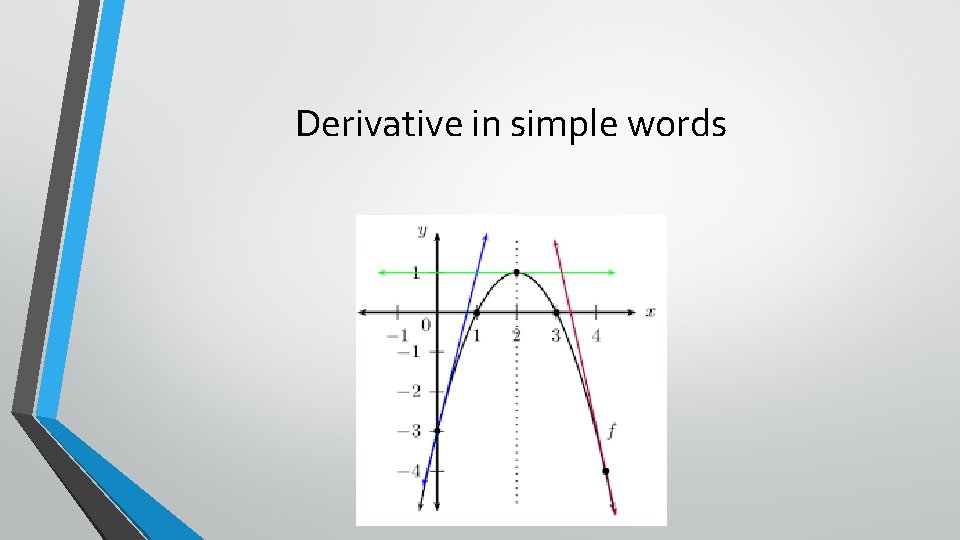

Derivative of a Tensor Operation: the gradient

• Represents the rate of change and the direction
• For example, a car's speed and direction w.r.t. its destination
• How do we know we have reached the destination? The speed is zero

Derivative in simple words

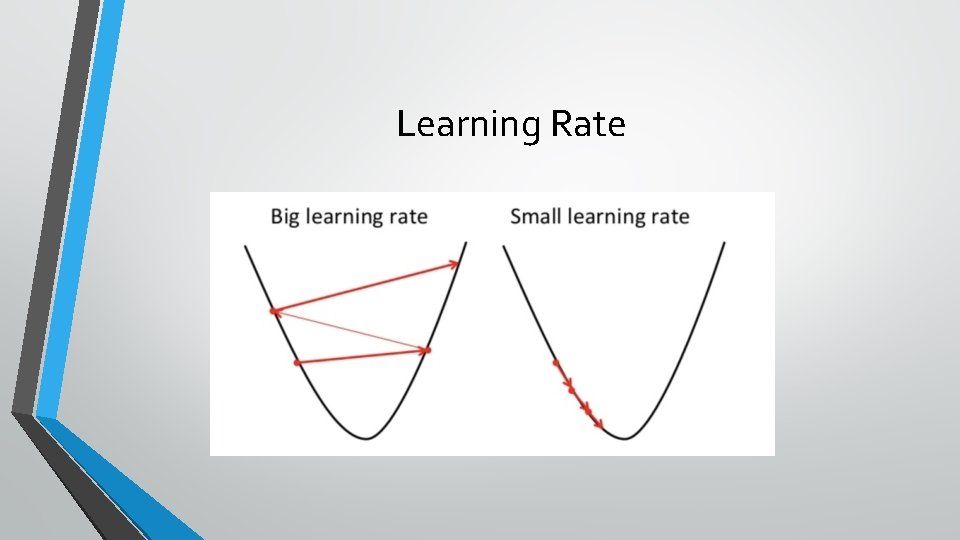
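The idea that the derivative gives both a rate and a direction, and becomes zero at the destination, can be checked numerically. This sketch reuses the points (2, 5), (3, 7), (4, 9), (6, 13) from the Y = WX + B example; fixing the bias at 1 and using mean squared error are simplifying assumptions, and the derivative is estimated with a central difference rather than computed analytically.

```python
def loss(w):
    # Mean squared error of Y = w*X + 1 on the four example points.
    # The bias is held at 1 to keep the sketch one-dimensional (assumption).
    points = [(2, 5), (3, 7), (4, 9), (6, 13)]
    return sum((w * x + 1 - y) ** 2 for x, y in points) / len(points)

def derivative(f, w, h=1e-6):
    """Central-difference estimate of df/dw at the point w."""
    return (f(w + h) - f(w - h)) / (2 * h)

for w in [1.0, 2.0, 3.0]:
    print(w, derivative(loss, w))
```

At W = 1 the derivative is negative, so the loss falls if W increases. At W = 3 it is positive, so W should decrease. At W = 2 it is approximately zero: the destination has been reached.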

Learning Rate

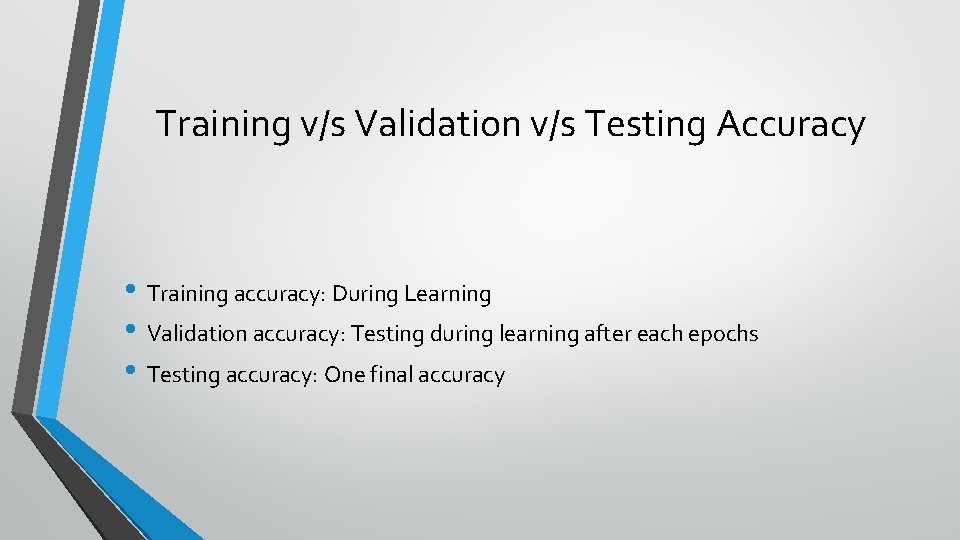

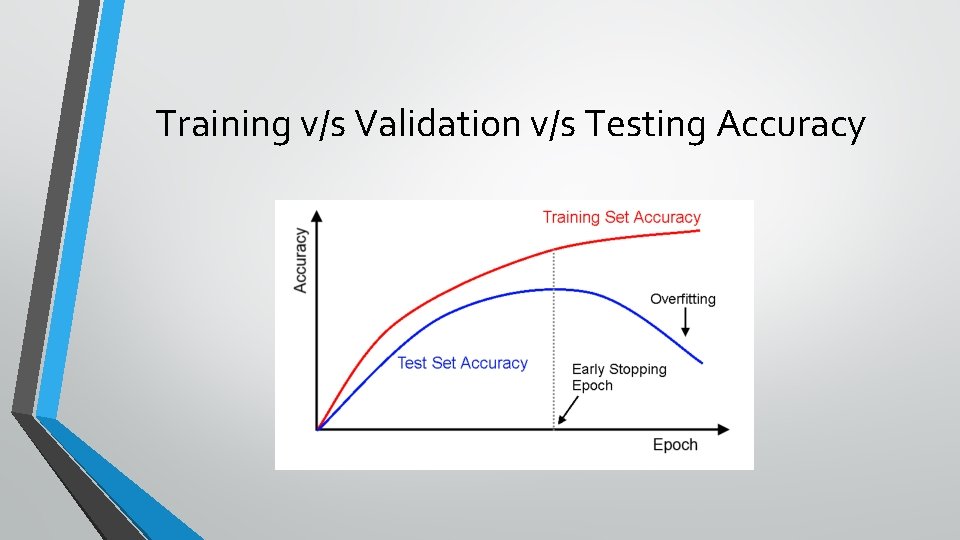
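The effect of the learning rate can be tried out on the Y = WX + B data used earlier. This is a rough sketch with plain gradient descent on a mean squared error loss; the three learning rates and the step count are arbitrary values picked to show a too-small, a reasonable, and a too-large setting.

```python
xs = [2, 3, 4, 6]
ys = [5, 7, 9, 13]

def final_loss(learning_rate, steps=100):
    """Run gradient descent from W = 0, B = 0 and return the final loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        grad_w = sum(2 * e * x for e, x in zip(errors, xs)) / n
        grad_b = sum(2 * e for e in errors) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / n

for lr in [0.0001, 0.02, 0.1]:
    print(lr, final_loss(lr))
```

With the smallest rate the loss is still large after 100 steps because each update is tiny. With the middle rate it is close to zero. With the largest rate every update overshoots the minimum and the loss grows instead of shrinking.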

Training v/s Validation v/s Testing Accuracy

• Training accuracy: measured on the training data during learning
• Validation accuracy: measured on held-out data after each epoch, i.e. testing during learning
• Testing accuracy: one final accuracy, measured once after training

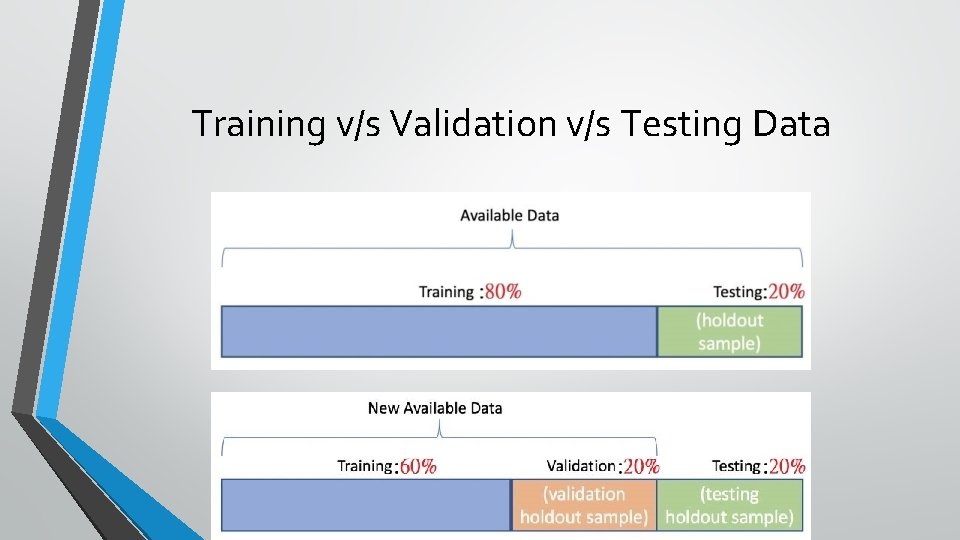

Training v/s Validation v/s Testing Data

Training v/s Validation v/s Testing Accuracy

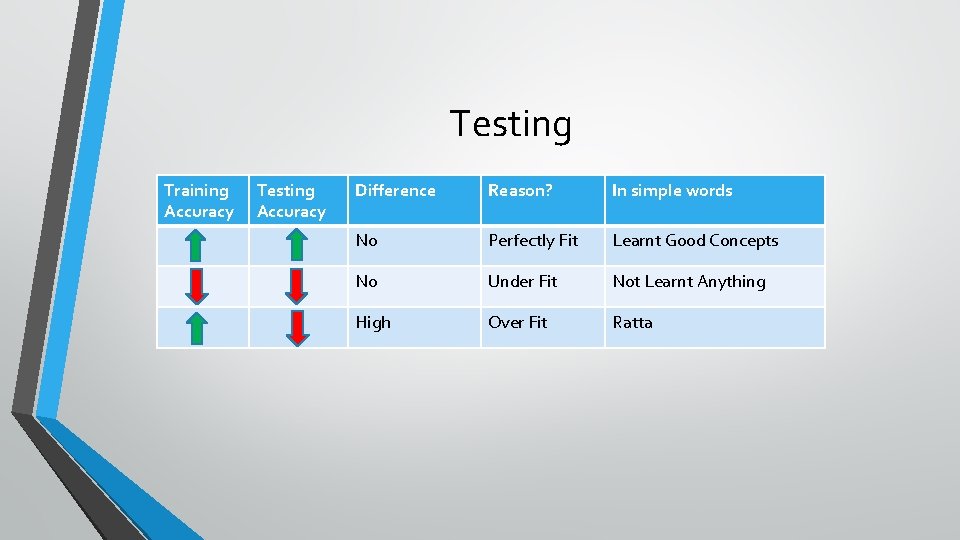

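Testing

| Training Accuracy | Testing Accuracy | Difference | Reason? | In simple words |
|---|---|---|---|---|
| High | High | No | Perfectly Fit | Learnt good concepts |
| Low | Low | No | Under Fit | Not learnt anything |
| High | Low | High | Over Fit | Ratta (rote memorization) |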
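The reasoning in this table can be written as a small rule of thumb. The helper below is hypothetical: the function name and the thresholds `good` and `max_gap` are arbitrary values chosen for illustration, not standard cut-offs, and telling "perfectly fit" from "under fit" by the level of training accuracy is an assumption.

```python
def diagnose_fit(train_acc, test_acc, good=0.9, max_gap=0.05):
    """Label a model from its training and testing accuracy.

    `good` and `max_gap` are illustrative thresholds, not standard values.
    """
    gap = train_acc - test_acc
    if gap > max_gap:
        return "over fit"       # high difference: memorised the training data
    if train_acc >= good:
        return "perfectly fit"  # no real difference, and accuracy is high
    return "under fit"          # no real difference, but accuracy is low

print(diagnose_fit(0.97, 0.96))  # perfectly fit
print(diagnose_fit(0.55, 0.54))  # under fit
print(diagnose_fit(0.99, 0.70))  # over fit
```

In practice the acceptable gap depends on the problem, so the numbers here should be read as placeholders.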

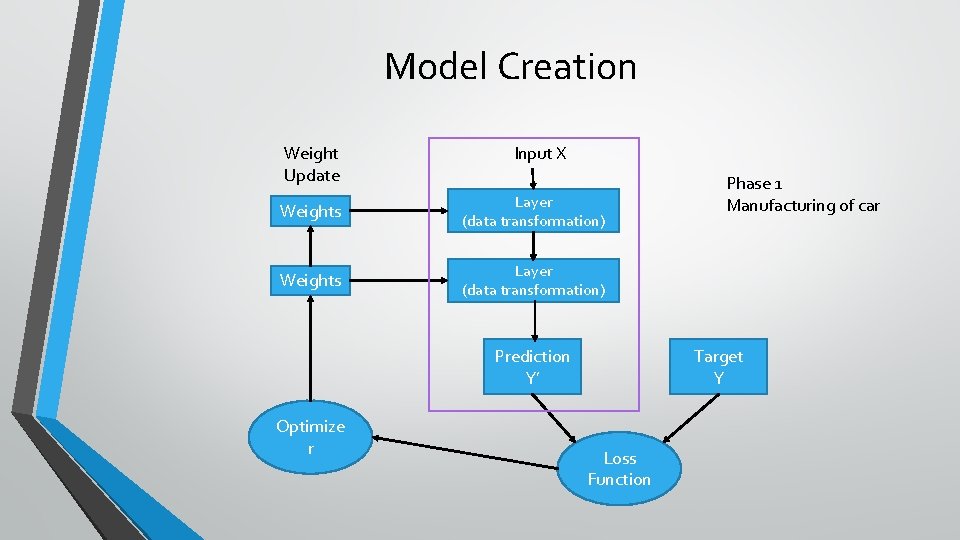

Model Creation

[Diagram: Phase 1, "Manufacturing of car". Labels: Input X, Layer (data transformation) with Weights, Prediction Y', Target Y, Loss Function, Optimizer, Weight Update.]

- Slides: 36