Autoregressive dynamical models: continuous form of Markov process


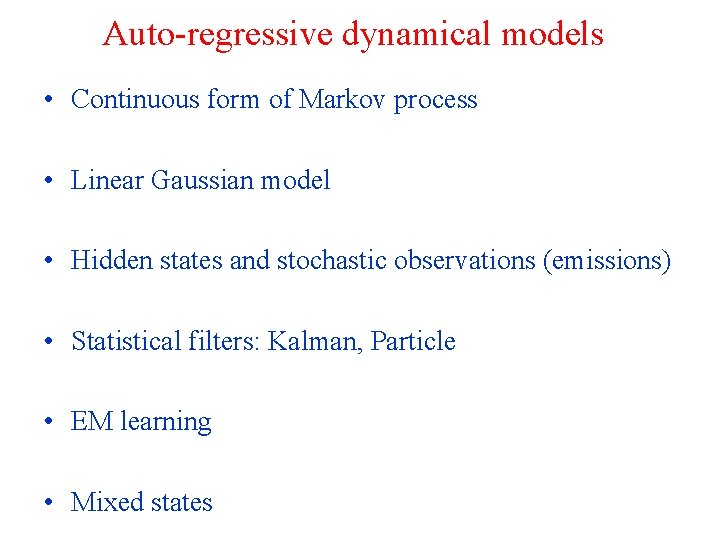

Auto-regressive dynamical models

- Continuous form of Markov process
- Linear Gaussian model
- Hidden states and stochastic observations (emissions)
- Statistical filters: Kalman, particle
- EM learning
- Mixed states

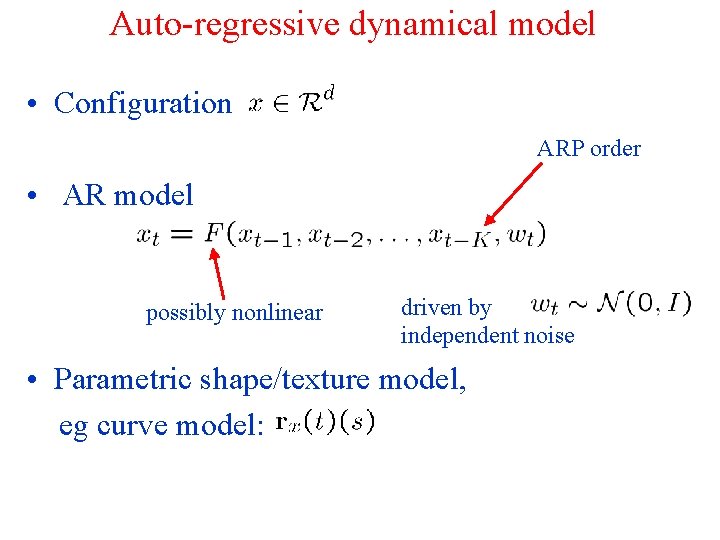

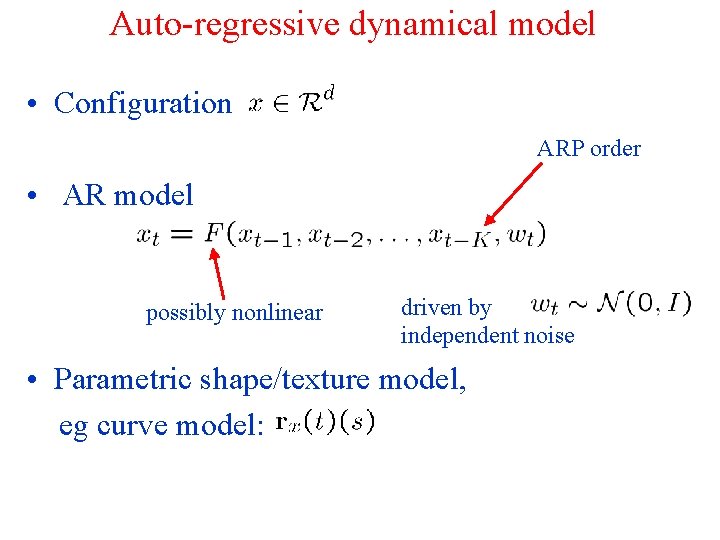

Auto-regressive dynamical model

- Configuration and ARP order
- AR model, possibly nonlinear, driven by independent noise
- Parametric shape/texture model, e.g. a curve model:

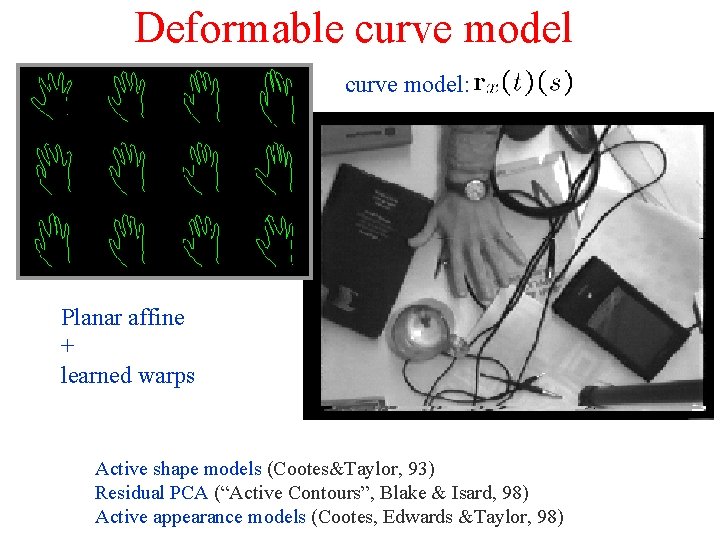

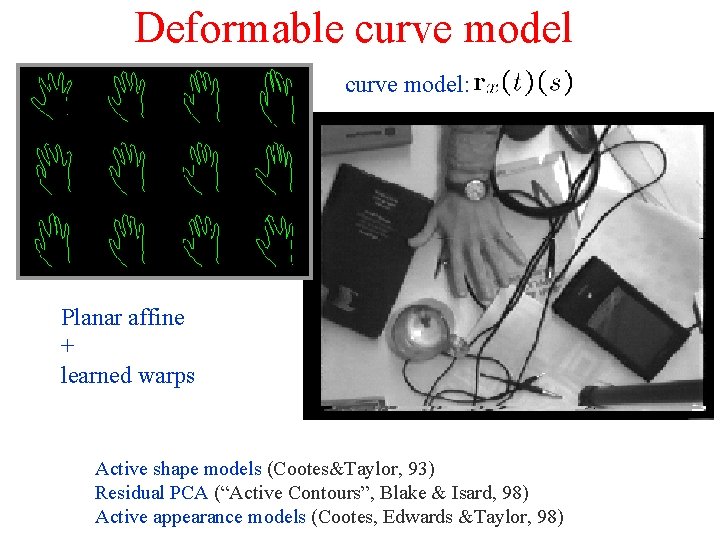
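
An AR process of order p, possibly nonlinear, can be simulated directly from its definition `x_t = f(x_{t-1}, ..., x_{t-p}) + w_t`. The sketch below assumes i.i.d. Gaussian noise `w_t` and a user-supplied function `f`; the name `simulate_ar` and its arguments are hypothetical, chosen only for illustration.

```python
import numpy as np

def simulate_ar(f, x_init, n_steps, noise_std, seed=None):
    """Simulate x_t = f(x_{t-1}, ..., x_{t-p}) + w_t, w_t ~ N(0, noise_std^2 I).

    f      : callable receiving the p most recent states, most recent first
             (it may be nonlinear)
    x_init : sequence of p initial states, oldest first
    """
    rng = np.random.default_rng(seed)
    xs = [np.asarray(x, dtype=float) for x in x_init]
    p = len(xs)
    for _ in range(n_steps):
        history = xs[-p:][::-1]                        # x_{t-1}, ..., x_{t-p}
        shape = xs[-1].shape
        w = noise_std * rng.standard_normal(xs[-1].size).reshape(shape)
        xs.append(np.asarray(f(*history), dtype=float) + w)
    return np.array(xs)


# Linear second-order example: a damped oscillation (noise-free here).
traj = simulate_ar(lambda x1, x2: 1.8 * x1 - 0.9 * x2, [0.0, 1.0], 2, 0.0)

# Nonlinear first-order example driven by noise.
noisy = simulate_ar(lambda x1: np.tanh(2.0 * x1), [0.1], 100, 0.2, seed=0)
```

Passing the history most-recent-first mirrors the usual way AR coefficients are indexed; vector-valued configurations work unchanged because the noise is reshaped to the state's shape.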

Deformable curve model: planar affine + learned warps

- Active shape models (Cootes & Taylor, 93)
- Residual PCA (“Active Contours”, Blake & Isard, 98)
- Active appearance models (Cootes, Edwards & Taylor, 98)

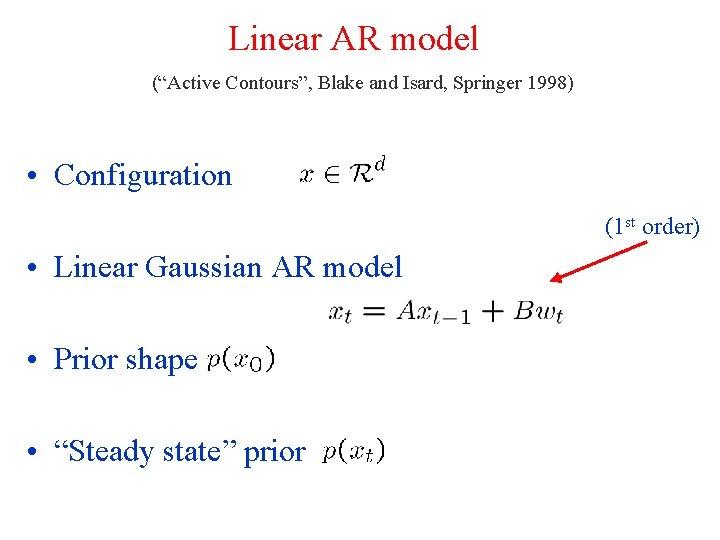

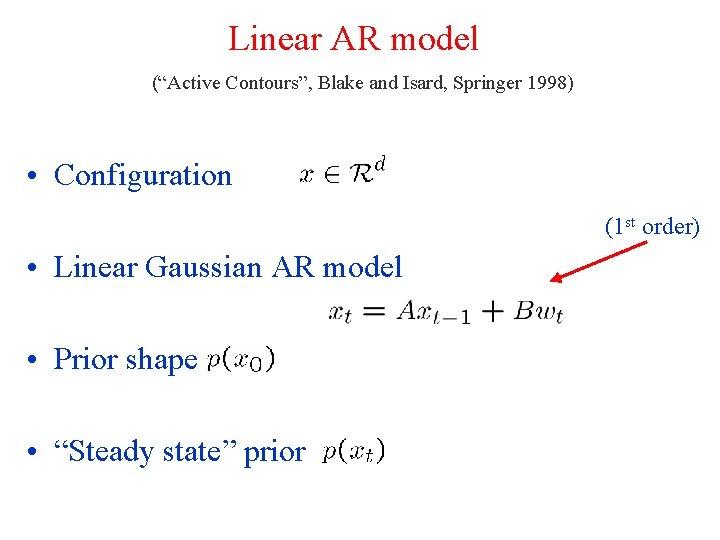

Linear AR model (“Active Contours”, Blake and Isard, Springer 1998)

- Configuration (1st order)
- Linear Gaussian AR model
- Prior shape
- “Steady state” prior

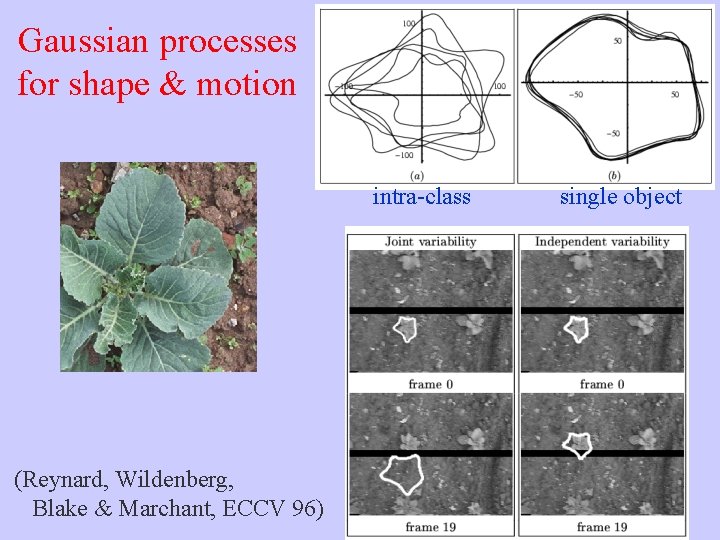

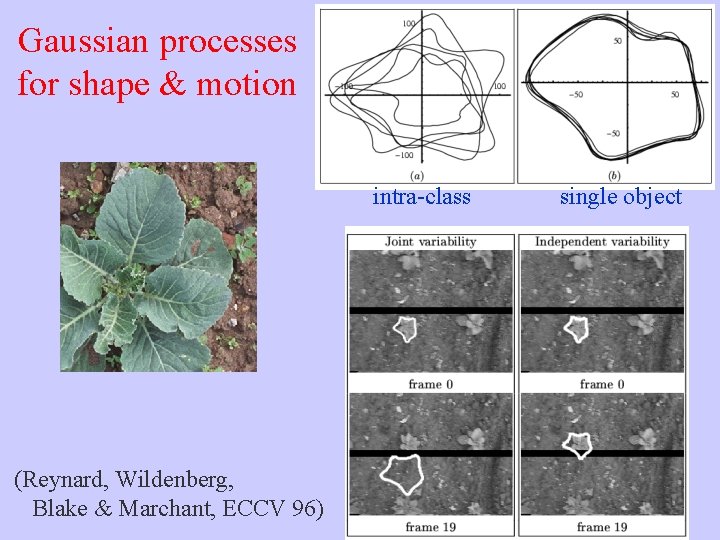
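
If the first-order model is written as `x_t - x̄ = A (x_{t-1} - x̄) + B w_t` with `w_t ~ N(0, I)` and `x̄` the prior shape (our notational assumption, since the slide equations survive only as images), a natural reading of the steady-state prior is the stationary Gaussian `N(x̄, P)`, whose covariance solves `P = A P Aᵀ + B Bᵀ`. A minimal NumPy sketch that solves this equation exactly via the identity `vec(A P Aᵀ) = (A ⊗ A) vec(P)`:

```python
import numpy as np

def steady_state_covariance(A, B):
    """Covariance P of the stationary distribution of x_t = A x_{t-1} + B w_t, w_t ~ N(0, I).

    P solves the discrete Lyapunov equation P = A P A^T + B B^T; a unique
    solution exists when all eigenvalues of A lie strictly inside the unit circle.
    """
    A = np.atleast_2d(np.asarray(A, dtype=float))
    B = np.atleast_2d(np.asarray(B, dtype=float))
    if np.max(np.abs(np.linalg.eigvals(A))) >= 1.0:
        raise ValueError("A is not stable, so there is no steady state")
    n = A.shape[0]
    Q = B @ B.T
    # vec(A P A^T) = (A kron A) vec(P), hence (I - A kron A) vec(P) = vec(Q).
    vec_P = np.linalg.solve(np.eye(n * n) - np.kron(A, A), Q.ravel())
    return vec_P.reshape(n, n)


P_scalar = steady_state_covariance([[0.5]], [[1.0]])   # 1 / (1 - 0.25) = 4/3
A2 = np.array([[1.6, -0.8], [1.0, 0.0]])                # second-order AR in state-space form
B2 = np.array([[0.1], [0.0]])
P2 = steady_state_covariance(A2, B2)
```

The Kronecker solve scales as O(d⁶) in the state dimension d, which is fine for small shape spaces; for larger ones a dedicated solver such as SciPy's `solve_discrete_lyapunov` is the more sensible choice.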

Gaussian processes for shape & motion (Reynard, Wildenberg, Blake & Marchant, ECCV 96): intra-class; single object

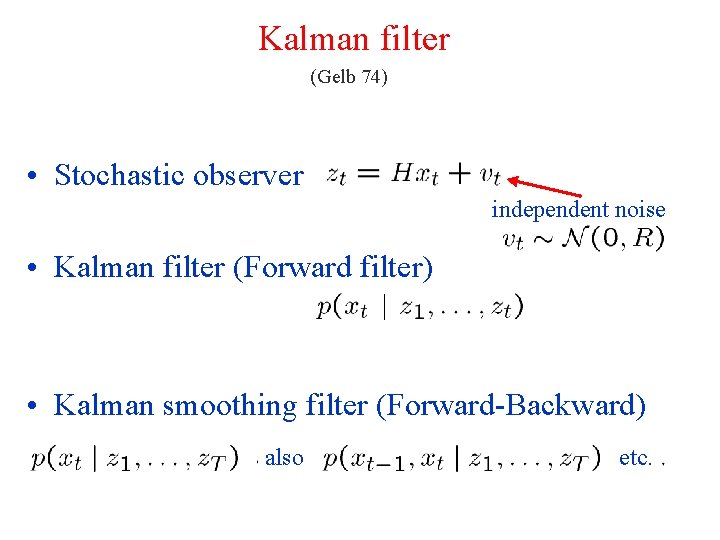

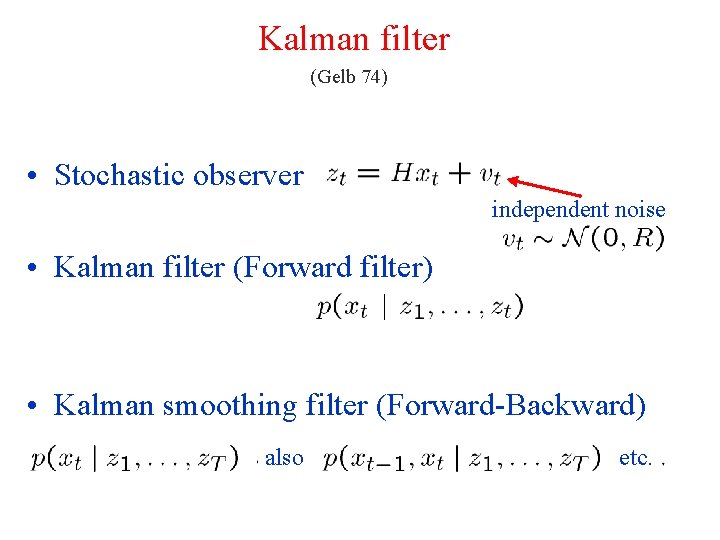

Kalman filter (Gelb 74)

- Stochastic observer with independent noise
- Kalman filter (forward filter)
- Kalman smoothing filter (forward-backward), etc.

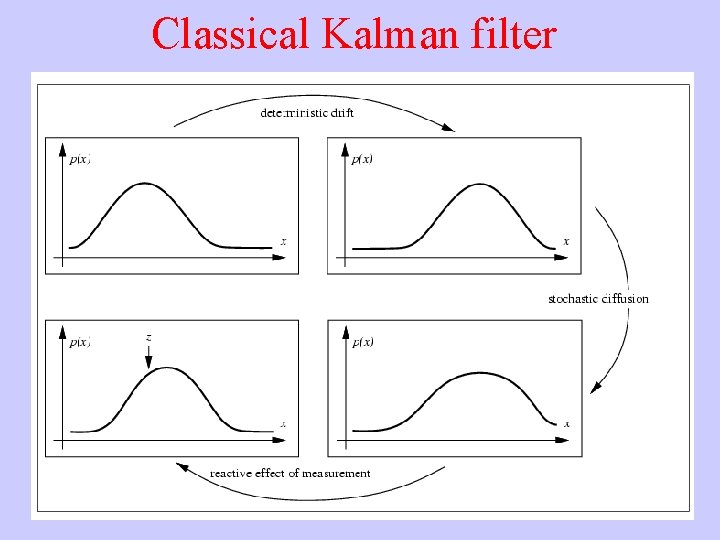

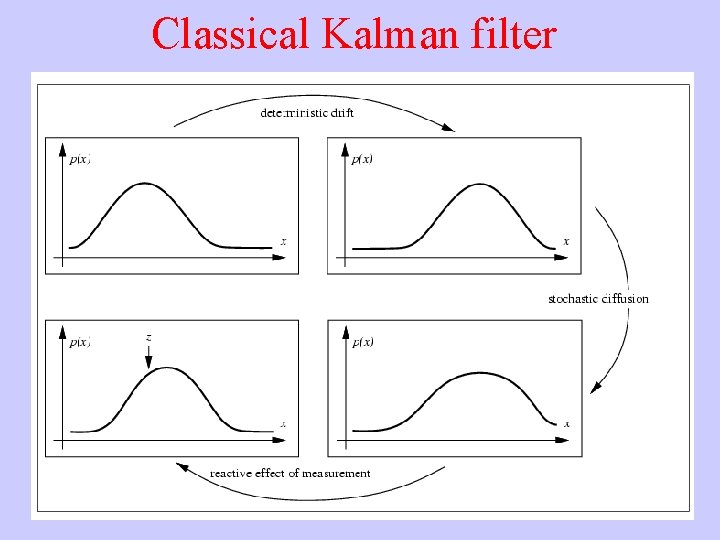

Classical Kalman filter

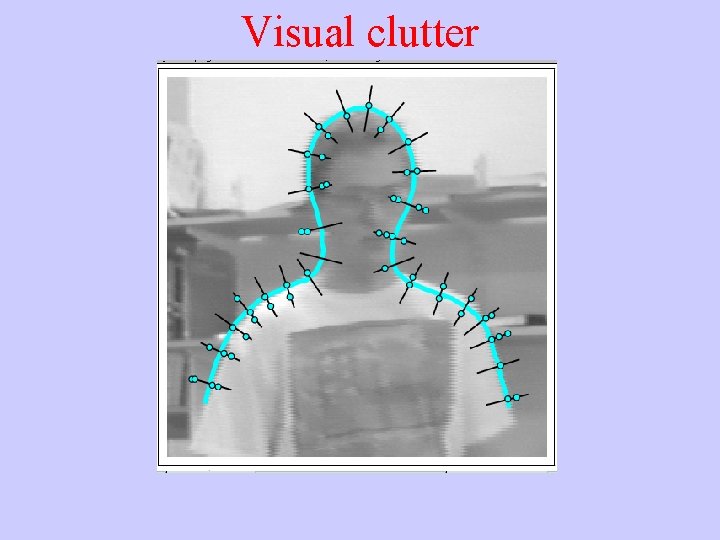

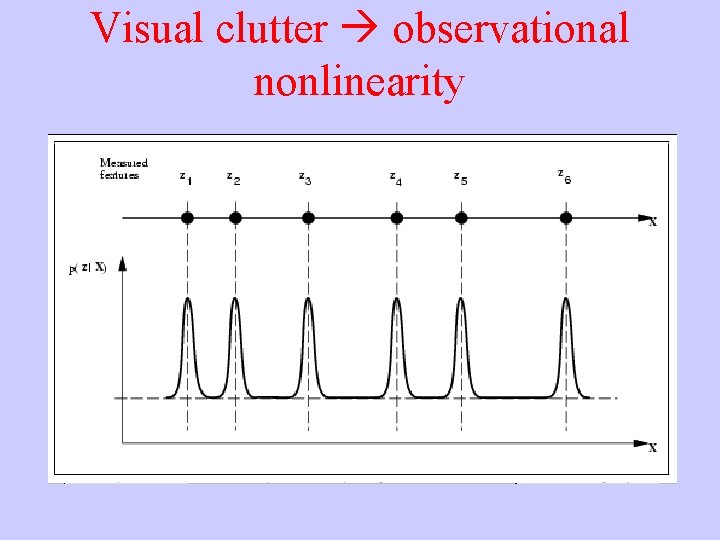

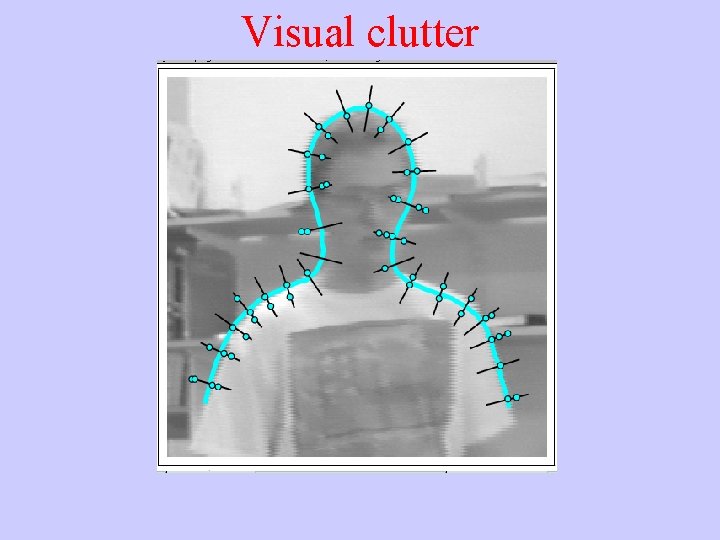
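
A minimal sketch of the forward (predict/update) recursion, assuming linear dynamics `x_t = A x_{t-1} + w_t` and observations `z_t = H x_t + v_t` with independent Gaussian noises `w_t ~ N(0, Q)` and `v_t ~ N(0, R)`; the function name and argument order are ours, not the slides'.

```python
import numpy as np

def kalman_filter(zs, A, Q, H, R, x0, P0):
    """Forward Kalman filter.

    Model: x_t = A x_{t-1} + w_t,  z_t = H x_t + v_t,
           w_t ~ N(0, Q), v_t ~ N(0, R), x_0 ~ N(x0, P0).
    Returns filtered means E[x_t | z_1..z_t] and covariances, t = 1..T.
    """
    A, Q, H, R = (np.atleast_2d(np.asarray(M, dtype=float)) for M in (A, Q, H, R))
    x = np.atleast_1d(np.asarray(x0, dtype=float))
    P = np.atleast_2d(np.asarray(P0, dtype=float))
    I = np.eye(len(x))
    means, covs = [], []
    for z in zs:
        # Predict: push mean and covariance through the dynamics.
        x = A @ x
        P = A @ P @ A.T + Q
        # Update: blend the prediction with observation z_t.
        S = H @ P @ H.T + R                 # innovation covariance
        K = P @ H.T @ np.linalg.inv(S)      # Kalman gain
        x = x + K @ (np.atleast_1d(z) - H @ x)
        P = (I - K @ H) @ P
        means.append(x)
        covs.append(P)
    return np.array(means), np.array(covs)


# Static scalar state, nearly uninformative prior: the filter averages the data.
means, covs = kalman_filter([1.0, 2.0, 3.0], A=1, Q=0, H=1, R=1, x0=0, P0=1e6)
```

With `Q = 0` and a very broad prior the filtered mean is simply the running average of the observations, a quick sanity check on the update equations.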

Visual clutter

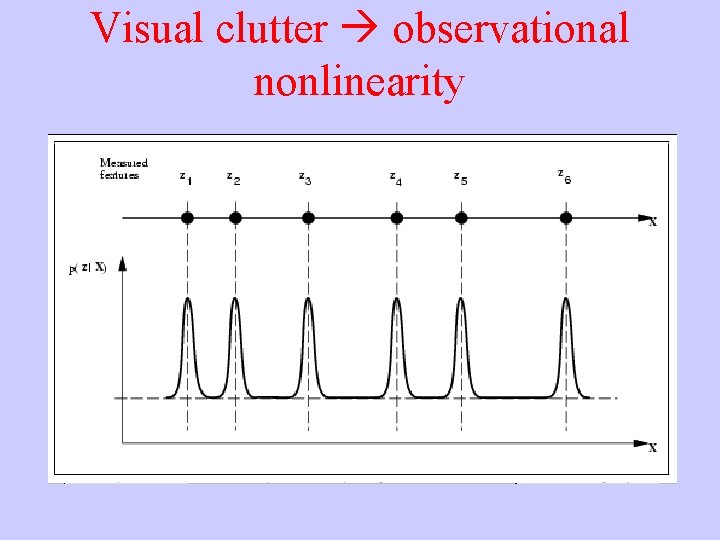

Visual clutter: observational nonlinearity

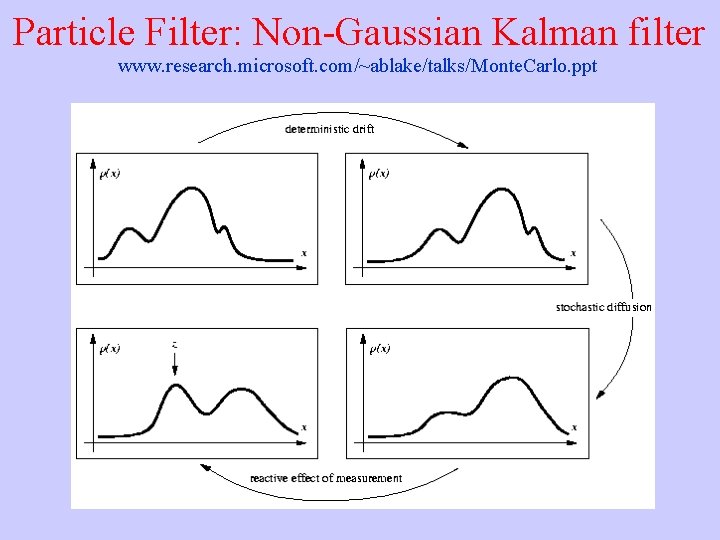

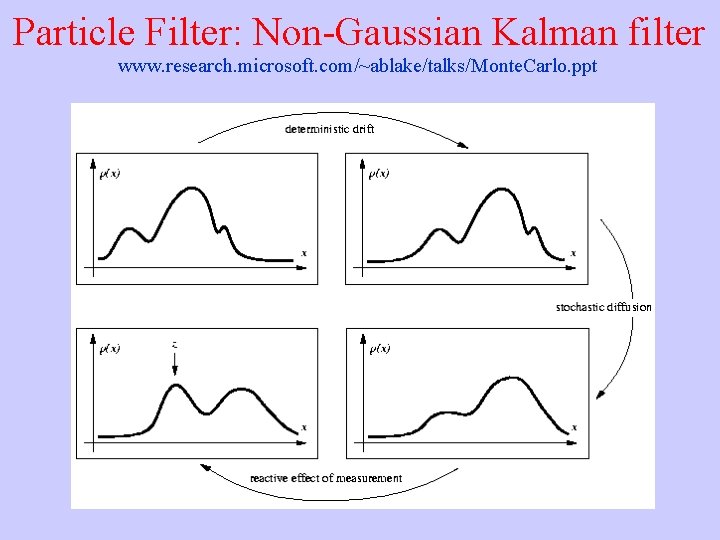
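
Clutter makes the observation density multi-modal, which is exactly what a single Gaussian (Kalman) update cannot represent. To illustrate, the sketch assumes a one-dimensional measurement line with detected features `z_1..z_M` and a likelihood of the form `p(z | x) ∝ 1 + Σ_m exp(-(z_m - x)² / (2σ²)) / (√(2π) σ α)`, modelled on the CONDENSATION contour-tracking likelihood; `sigma`, `alpha` and the feature positions are made-up values.

```python
import numpy as np

def clutter_likelihood(x, features, sigma, alpha):
    """Unnormalised 1D likelihood p(z | x) for a contour point at position x.

    features : positions of all edge features detected on the measurement line
    sigma    : localisation noise of the true feature
    alpha    : clutter / non-detection constant (assumed, hypothetical tuning)
    """
    x = np.asarray(x, dtype=float)[..., None]          # broadcast over features
    d2 = (np.asarray(features, dtype=float) - x) ** 2
    bumps = np.exp(-d2 / (2.0 * sigma**2)).sum(axis=-1)
    return 1.0 + bumps / (np.sqrt(2.0 * np.pi) * sigma * alpha)


grid = np.linspace(-5.0, 6.0, 1101)
lik = clutter_likelihood(grid, features=[-2.0, 3.0], sigma=0.5, alpha=0.1)
# Interior local maxima of the likelihood along the grid.
peaks = grid[1:-1][(lik[1:-1] > lik[:-2]) & (lik[1:-1] > lik[2:])]
```

Two edge features produce two likelihood peaks, and far from any feature the likelihood falls back to the constant clutter floor rather than to zero.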

Particle Filter: Non-Gaussian Kalman filter (www.research.microsoft.com/~ablake/talks/MonteCarlo.ppt)

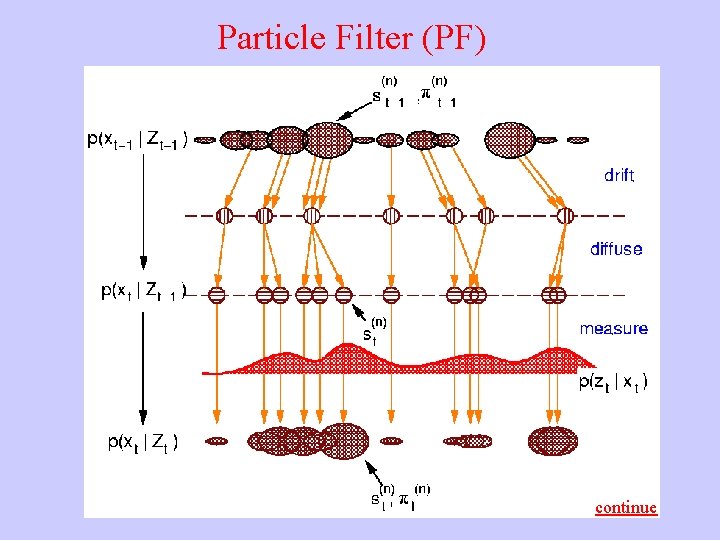

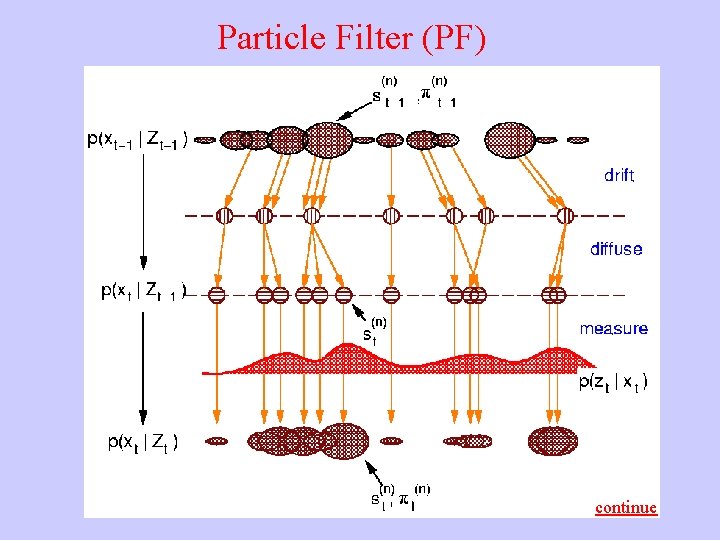

Particle Filter (PF), continued

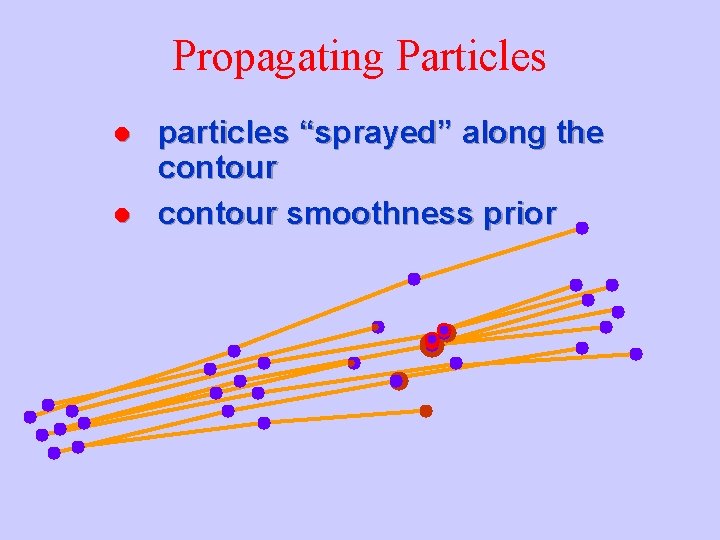

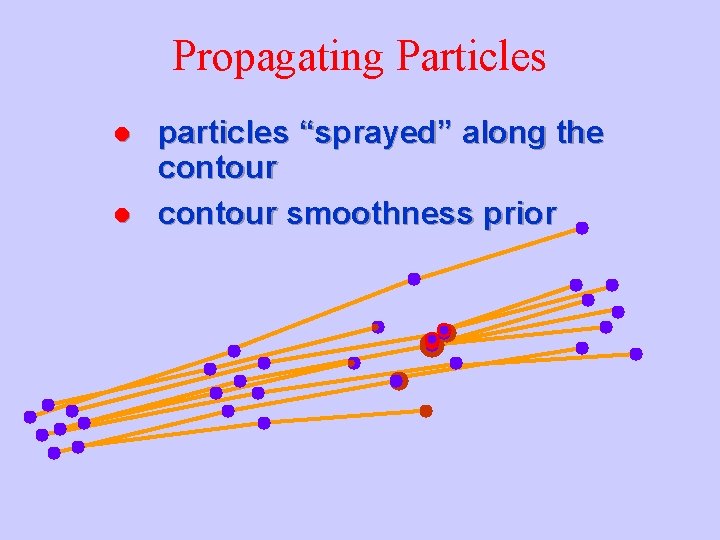
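
The particle filter replaces the Kalman filter's Gaussian with a weighted sample set. Below is a minimal sketch of a bootstrap (sampling-importance-resampling) filter for a scalar state, assuming the caller supplies a prior sampler, a stochastic dynamics step and a log-likelihood; these three callables and the choice of systematic resampling are our assumptions, not details taken from the slides.

```python
import numpy as np

def systematic_resample(weights, rng):
    """Indices drawn by systematic resampling (one uniform offset, n strata)."""
    n = len(weights)
    positions = (rng.random() + np.arange(n)) / n
    cumulative = np.cumsum(weights)
    cumulative[-1] = 1.0                       # guard against round-off
    return np.searchsorted(cumulative, positions)


def particle_filter(zs, n_particles, sample_prior, propagate, log_lik, seed=None):
    """Bootstrap particle filter for a scalar state; returns posterior means."""
    rng = np.random.default_rng(seed)
    particles = sample_prior(n_particles, rng)
    means = []
    for z in zs:
        particles = propagate(particles, rng)          # predict: sample dynamics
        logw = log_lik(z, particles)                   # weight by observation z_t
        w = np.exp(logw - logw.max())                  # stabilised exponentiation
        w /= w.sum()
        means.append(np.sum(w * particles))
        particles = particles[systematic_resample(w, rng)]
    return np.array(means)


# Random-walk state observed in Gaussian noise (illustrative numbers only).
means = particle_filter(
    zs=[2.0] * 20,
    n_particles=1000,
    sample_prior=lambda n, rng: rng.normal(0.0, 5.0, n),
    propagate=lambda p, rng: p + rng.normal(0.0, 0.1, p.shape),
    log_lik=lambda z, p: -0.5 * ((z - p) / 0.5) ** 2,
    seed=0,
)
```

Nothing in the loop assumes Gaussianity: replacing `log_lik` with the log of a multi-modal, clutter-style likelihood leaves the algorithm unchanged.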

“JetStream”: cut-and-paste by particle filtering

- Particles “sprayed” along the contour

Propagating particles

- Particles “sprayed” along the contour
- Smoothness prior

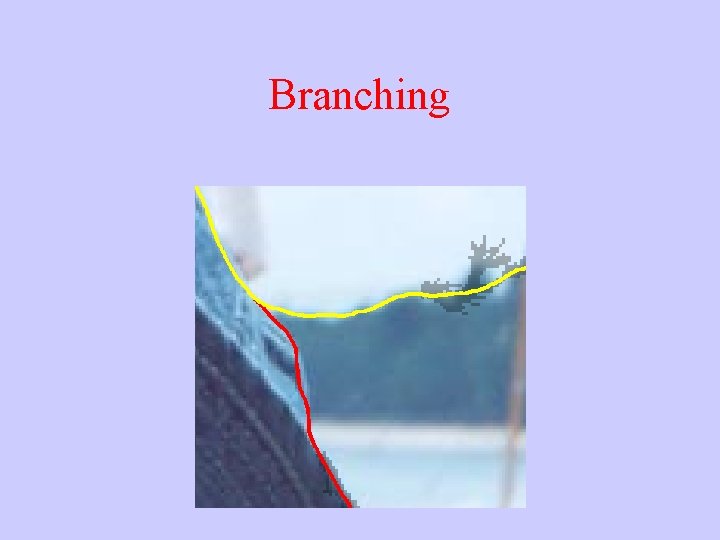
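
A much-simplified sketch of the propagation idea, not the JetStream algorithm itself: each particle is a contour tip `(x, y, heading)`, propagation advances it by a fixed step while the heading performs a small random walk (playing the role of the smoothness prior), and particles are reweighted by an image score and resampled. The score function `edge_strength`, the step length and the heading noise are hypothetical stand-ins.

```python
import numpy as np

def spray_particles(edge_strength, start, heading, n_steps, n_particles=500,
                    step=1.0, heading_std=0.1, seed=None):
    """Grow a contour from `start` by propagating (x, y, theta) particles.

    edge_strength(x, y) -> nonnegative score per particle (hypothetical image term).
    Returns the mean tip position after each step.
    """
    rng = np.random.default_rng(seed)
    xy = np.tile(np.asarray(start, dtype=float), (n_particles, 1))
    theta = np.full(n_particles, float(heading))
    path = []
    for _ in range(n_steps):
        theta = theta + rng.normal(0.0, heading_std, n_particles)   # smoothness prior
        xy = xy + step * np.column_stack([np.cos(theta), np.sin(theta)])
        w = edge_strength(xy[:, 0], xy[:, 1]) + 1e-300             # avoid all-zero weights
        w /= w.sum()
        idx = rng.choice(n_particles, size=n_particles, p=w)        # resample
        xy, theta = xy[idx], theta[idx]
        path.append(xy.mean(axis=0))
    return np.array(path)


# Hypothetical image: a straight horizontal edge along y = 0.
path = spray_particles(lambda x, y: np.exp(-0.5 * (y / 0.5) ** 2),
                       start=(0.0, 0.0), heading=0.0, n_steps=20, seed=0)
```

A small `heading_std` favours smooth contours; raising it lets particles follow sharper bends at the cost of more drift away from the edge.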

Branching

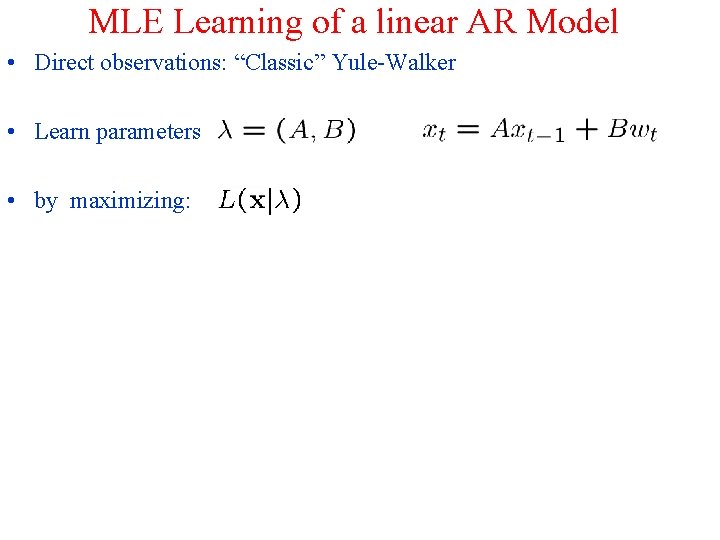

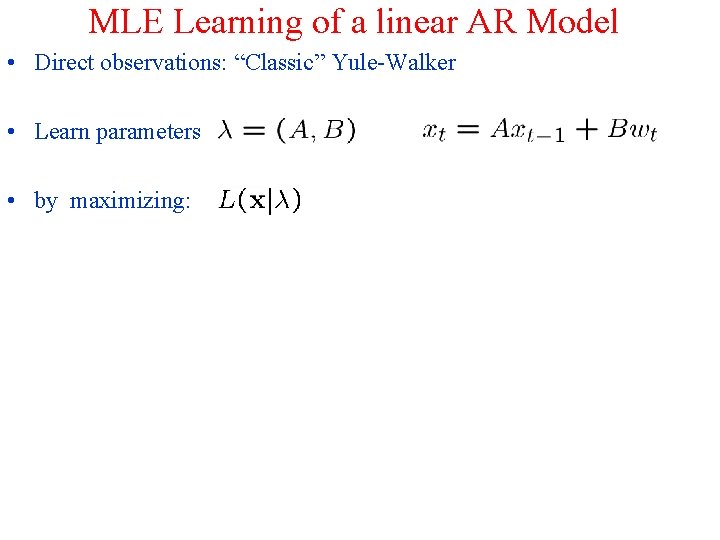

MLE learning of a linear AR model

- Direct observations: “classic” Yule-Walker
- Learn parameters by maximizing:
- which, for a linear AR process, amounts to minimizing:
- Finally, solve:
- where the “sufficient statistics” are:

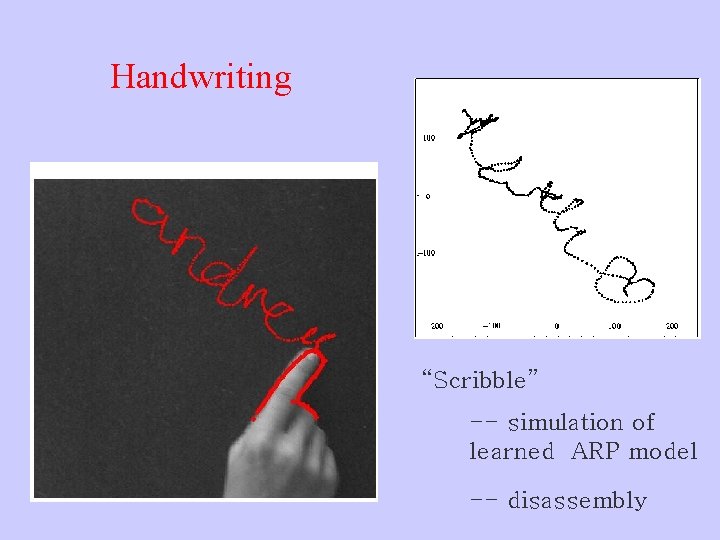

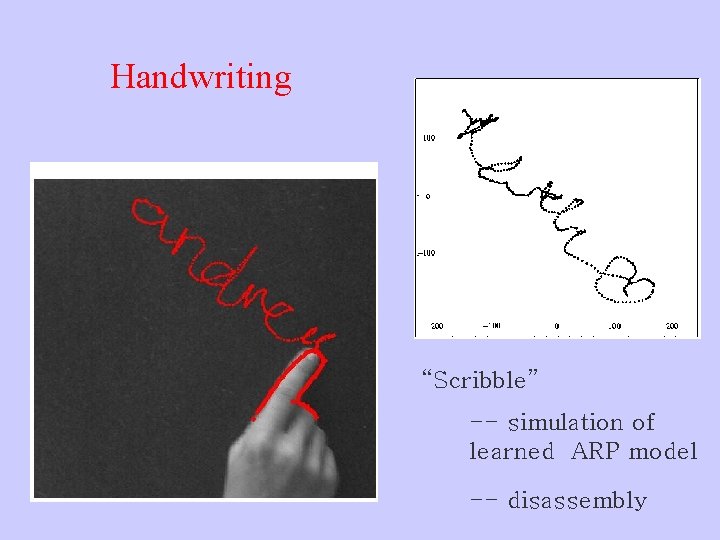
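
For a zero-mean first-order model `x_t = A x_{t-1} + B w_t` with directly observed states, maximizing the likelihood over `A` reduces to least squares, and the answer depends on the data only through the sufficient statistics `R_ij = Σ_t x_{t-i} x_{t-j}ᵀ`: `A = R_01 R_11⁻¹`, with noise covariance `B Bᵀ = (R_00 - A R_10) / (T - 1)`. A NumPy sketch of that first-order case only; any offset or second-order terms in the slides' own parameterisation are not reproduced.

```python
import numpy as np

def fit_ar1(X):
    """ML estimate of A and C = B B^T for x_t = A x_{t-1} + B w_t, w_t ~ N(0, I).

    X : array (T, d) of directly observed states, one row per time step.
    """
    X = np.asarray(X, dtype=float)
    if X.ndim == 1:
        X = X[:, None]
    cur, prev = X[1:], X[:-1]
    # Sufficient statistics R_ij = sum_t x_{t-i} x_{t-j}^T over t = 2..T.
    R00 = cur.T @ cur
    R01 = cur.T @ prev
    R11 = prev.T @ prev
    A = np.linalg.solve(R11, R01.T).T          # A = R01 R11^{-1} (R11 symmetric)
    C = (R00 - A @ R01.T) / (len(X) - 1)       # residual (noise) covariance
    return A, C


# Check on synthetic data from a known stable model.
rng = np.random.default_rng(0)
A_true = np.array([[0.9, 0.1], [-0.2, 0.7]])
X = np.zeros((20000, 2))
for t in range(1, len(X)):
    X[t] = A_true @ X[t - 1] + 0.1 * rng.standard_normal(2)
A_hat, C_hat = fit_ar1(X)
```

A p-th order model fits the same mould once consecutive states are stacked into one vector, so the normal equations keep this form.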

Handwriting “scribble”: simulation of a learned ARP model; disassembly

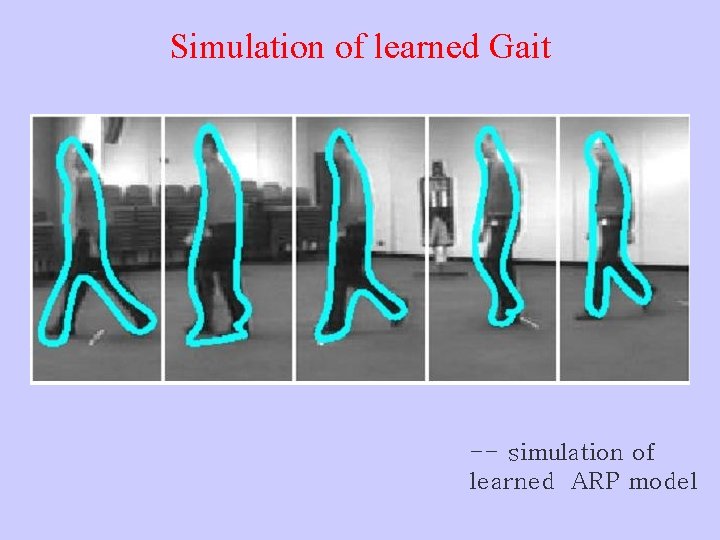

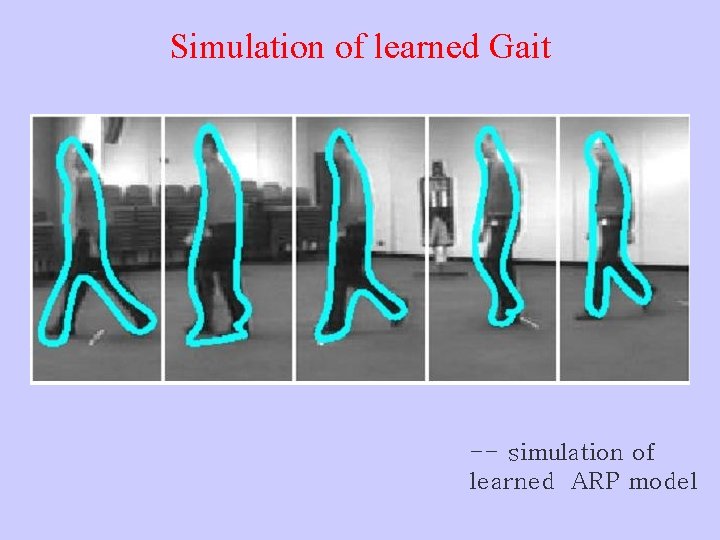

Simulation of a learned gait (learned ARP model)

Walking Simulation (ARP)

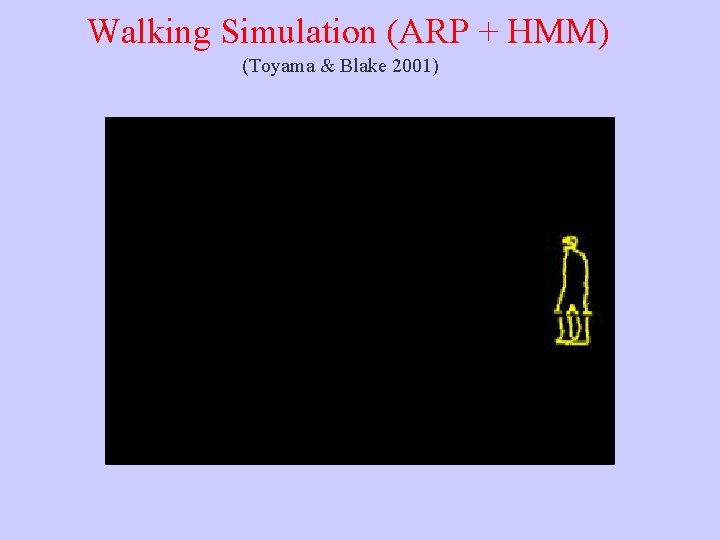

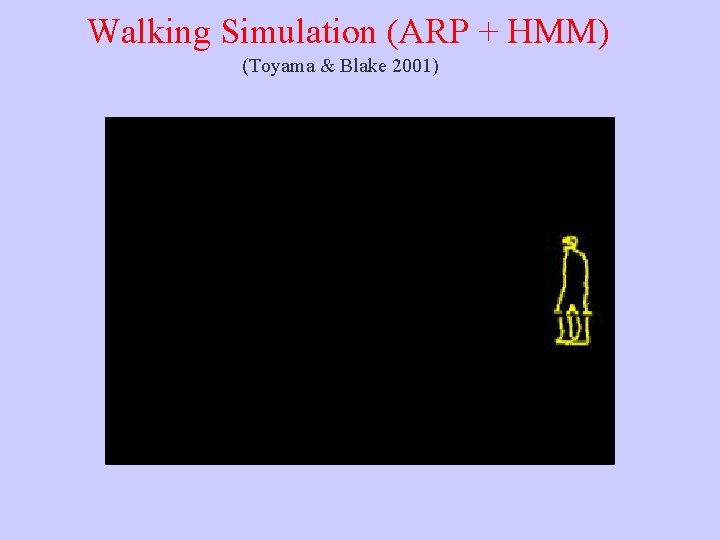

Walking Simulation (ARP + HMM) (Toyama & Blake 2001)

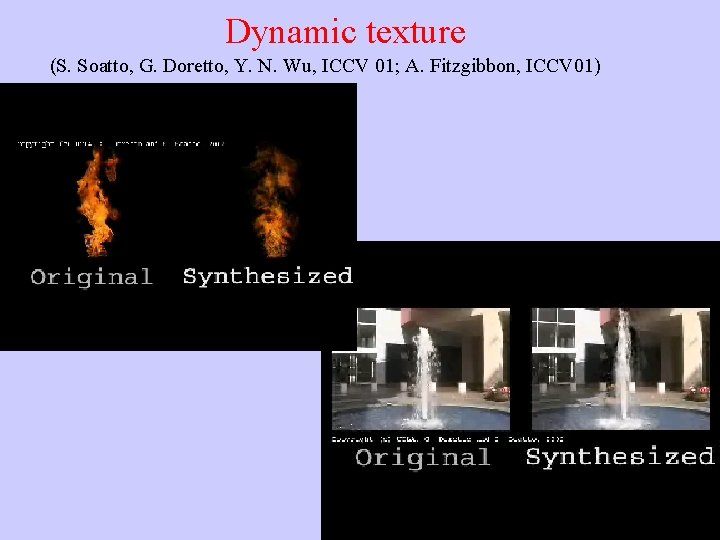

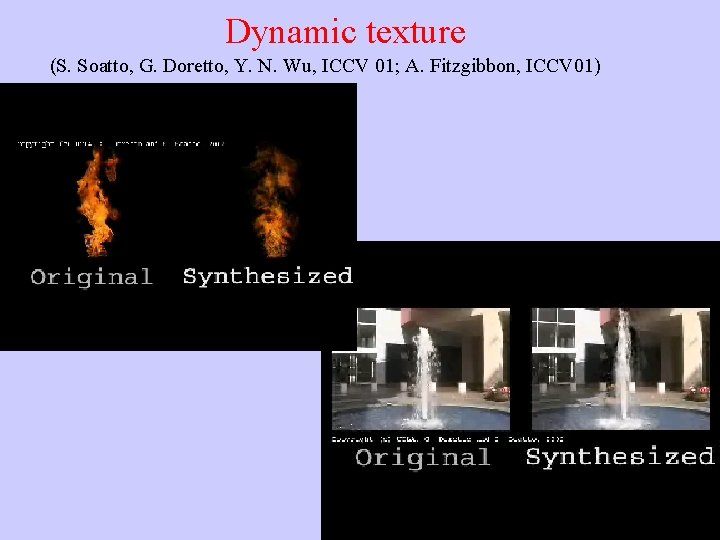
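
Combining a discrete Markov chain with the continuous AR process gives each discrete state its own dynamics. A sketch of simulating such a mixed-state model, assuming first-order dynamics `x_t = A[s_t] x_{t-1} + B[s_t] w_t` and a transition matrix `T[i, j] = P(s_t = j | s_{t-1} = i)`; the function and argument names are ours.

```python
import numpy as np

def simulate_mixed_state(T, A_list, B_list, s0, x0, n_steps, seed=None):
    """Simulate a switching linear AR model driven by a discrete Markov chain."""
    rng = np.random.default_rng(seed)
    T = np.asarray(T, dtype=float)
    states = [s0]
    xs = [np.atleast_1d(np.asarray(x0, dtype=float))]
    for _ in range(n_steps):
        s = rng.choice(len(T), p=T[states[-1]])        # discrete (HMM) transition
        A = np.atleast_2d(A_list[s])
        B = np.atleast_2d(B_list[s])
        w = rng.standard_normal(B.shape[1])            # independent driving noise
        xs.append(A @ xs[-1] + B @ w)                  # state-specific AR dynamics
        states.append(int(s))
    return np.array(states), np.array(xs)


# Two regimes that alternate deterministically, without noise.
states, xs = simulate_mixed_state(
    T=[[0.0, 1.0], [1.0, 0.0]],
    A_list=[[[2.0]], [[0.5]]],
    B_list=[[[0.0]], [[0.0]]],
    s0=0, x0=1.0, n_steps=4, seed=0,
)
```

With realistic (non-deterministic) transition matrices the discrete chain decides when, for example, a gait switches between movement phases, while each phase keeps its own learned AR dynamics.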

Dynamic texture (S. Soatto, G. Doretto, Y. N. Wu, ICCV 01; A. Fitzgibbon, ICCV 01)

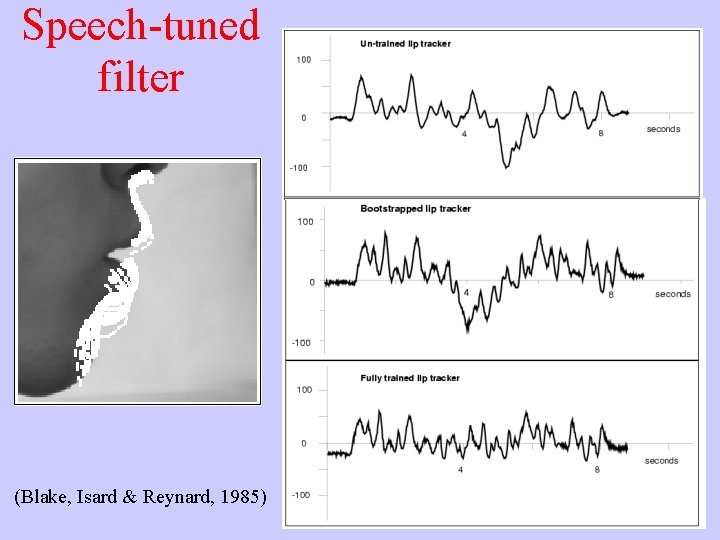

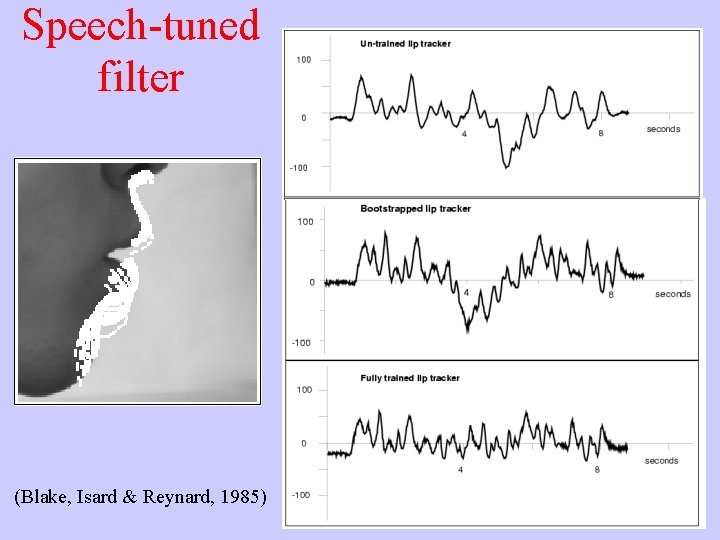
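
One closed-form way to learn a dynamic texture, in the spirit of Soatto, Doretto & Wu, is to reduce the frames with PCA and fit a linear AR model to the PCA coefficients. A sketch assuming the frames are vectorised into the columns of `Y` and that the number of states `n_states` is picked by hand:

```python
import numpy as np

def learn_dynamic_texture(Y, n_states):
    """Fit y_t = mean + C x_t, x_{t+1} = A x_t + v_t to frames Y (n_pixels, n_frames)."""
    Y = np.asarray(Y, dtype=float)
    mean = Y.mean(axis=1, keepdims=True)
    U, S, Vt = np.linalg.svd(Y - mean, full_matrices=False)
    C = U[:, :n_states]                          # PCA appearance basis
    X = S[:n_states, None] * Vt[:n_states]       # state trajectory (n_states, n_frames)
    A = X[:, 1:] @ np.linalg.pinv(X[:, :-1])     # least-squares transition matrix
    V = X[:, 1:] - A @ X[:, :-1]
    Q = V @ V.T / (X.shape[1] - 1)               # driving-noise covariance
    return mean, C, A, Q, X


# Synthetic "texture": 50 pixels driven by a 2D rotation, 5 full periods.
omega = 2 * np.pi / 20
t = np.arange(100)
states = np.vstack([np.cos(omega * t), np.sin(omega * t)])
Y = np.random.default_rng(0).standard_normal((50, 2)) @ states
mean, C, A, Q, X = learn_dynamic_texture(Y, n_states=2)
```

The learned `A` is only defined up to a change of basis of the state space, so the check compares its eigenvalues, which should be the rotation's `exp(±iω)`.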

Speech-tuned filter (Blake, Isard & Reynard, 1995)

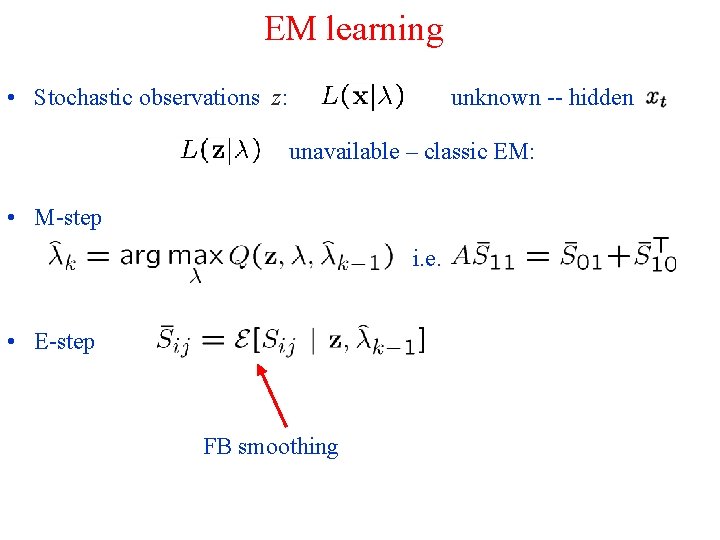

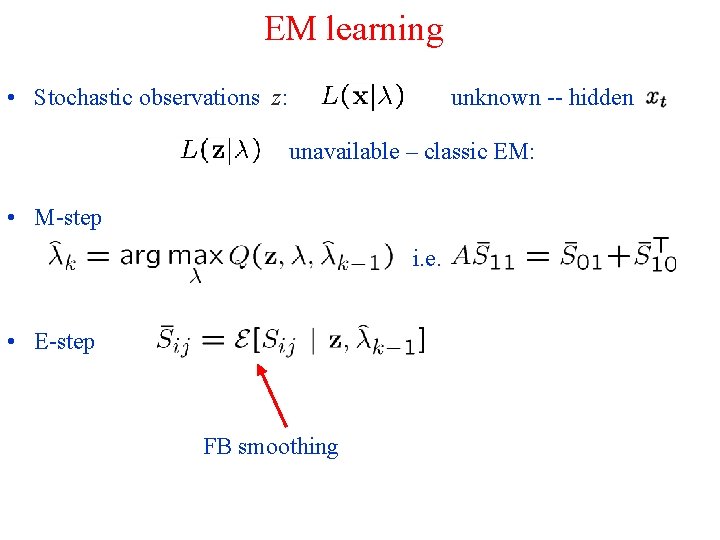

EM learning

- Stochastic observations z: the states are unknown (hidden and unavailable), so use classic EM:
- M-step, i.e.:
- E-step: forward-backward (FB) smoothing

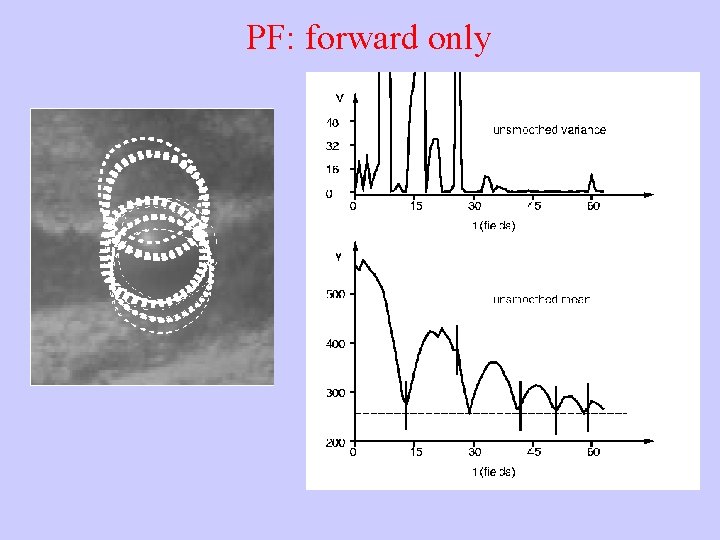

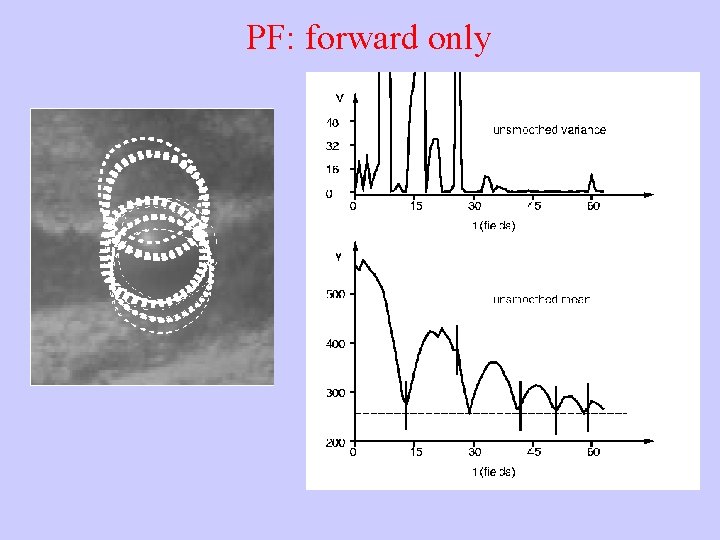
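
When the states are hidden, the E-step replaces the unavailable sufficient statistics with their expectations under the smoothed posterior. For a linear Gaussian model the forward-backward smoother is the Rauch-Tung-Striebel recursion. The sketch below (our own naming, assuming `x_t = A x_{t-1} + w_t`, `z_t = H x_t + v_t`, `w ~ N(0, Q)`, `v ~ N(0, R)`) returns smoothed means, covariances and lag-one cross-covariances, then re-estimates `A` from the expected statistics as in the direct-observation case. Only the update of `A` is shown; the other parameters would be re-estimated analogously.

```python
import numpy as np

def rts_smoother(zs, A, Q, H, R, x0, P0):
    """E-step: forward Kalman filter followed by a backward (RTS) pass.

    Model: x_t = A x_{t-1} + w_t, z_t = H x_t + v_t, w_t ~ N(0, Q), v_t ~ N(0, R).
    Returns smoothed means, covariances, and lag-one cross-covariances
    Cov(x_{t+1}, x_t | z_1..z_T).
    """
    A, Q, H, R = (np.atleast_2d(np.asarray(M, dtype=float)) for M in (A, Q, H, R))
    x = np.atleast_1d(np.asarray(x0, dtype=float))
    P = np.atleast_2d(np.asarray(P0, dtype=float))
    xp, Pp, xf, Pf = [], [], [], []
    for z in zs:                                        # forward pass
        x, P = A @ x, A @ P @ A.T + Q
        xp.append(x)
        Pp.append(P)
        K = P @ H.T @ np.linalg.inv(H @ P @ H.T + R)
        x = x + K @ (np.atleast_1d(z) - H @ x)
        P = (np.eye(len(x)) - K @ H) @ P
        xf.append(x)
        Pf.append(P)
    n = len(zs)
    xs, Ps, cross = list(xf), list(Pf), [None] * (n - 1)
    for t in range(n - 2, -1, -1):                      # backward pass
        J = Pf[t] @ A.T @ np.linalg.inv(Pp[t + 1])      # smoother gain
        xs[t] = xf[t] + J @ (xs[t + 1] - xp[t + 1])
        Ps[t] = Pf[t] + J @ (Ps[t + 1] - Pp[t + 1]) @ J.T
        cross[t] = Ps[t + 1] @ J.T
    return np.array(xs), np.array(Ps), np.array(cross)


def m_step_A(xs, Ps, cross):
    """Re-estimate A from expected sufficient statistics, E[x x^T] = P + m m^T."""
    R01 = sum(cross[t] + np.outer(xs[t + 1], xs[t]) for t in range(len(xs) - 1))
    R11 = sum(Ps[t] + np.outer(xs[t], xs[t]) for t in range(len(xs) - 1))
    return R01 @ np.linalg.inv(R11)


xs, Ps, cross = rts_smoother([1.0, 2.0, 3.0], A=1, Q=0, H=1, R=1, x0=0, P0=1e6)
A_new = m_step_A(xs, Ps, cross)
```

For a static state (`Q = 0`) every smoothed estimate equals the mean of all observations, which makes the backward pass easy to check by hand.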

PF: forward only

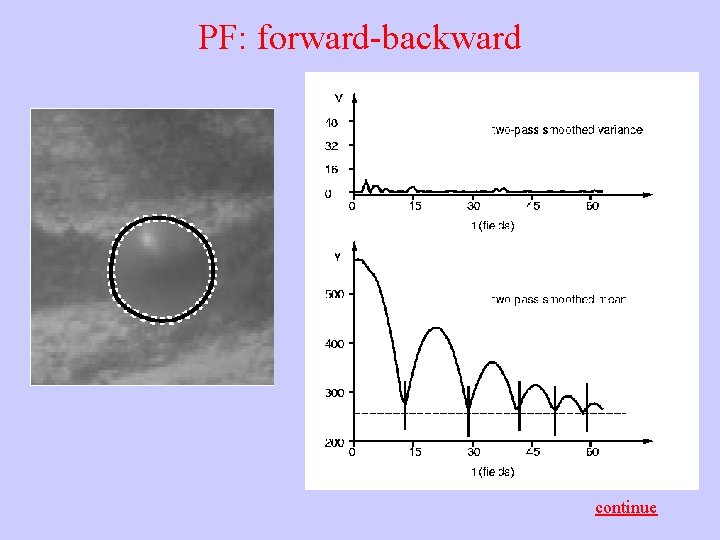

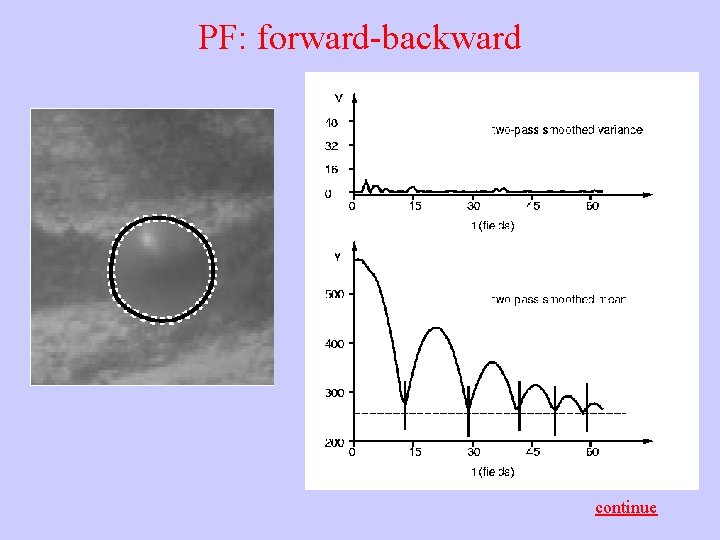

PF: forward-backward, continued

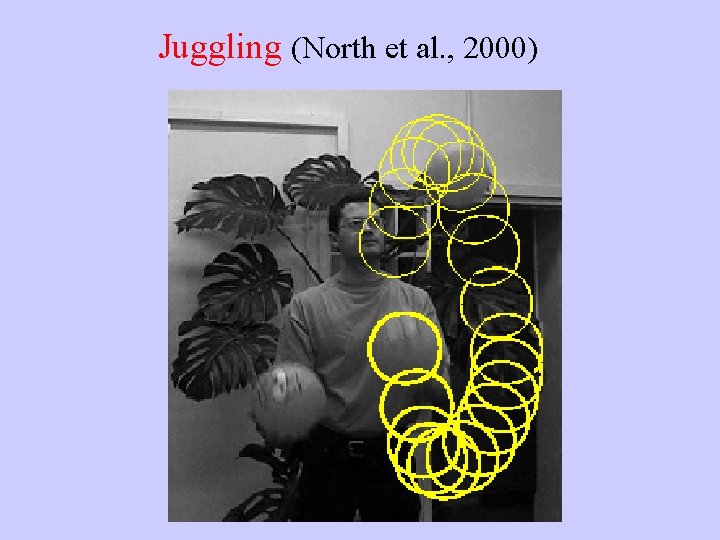

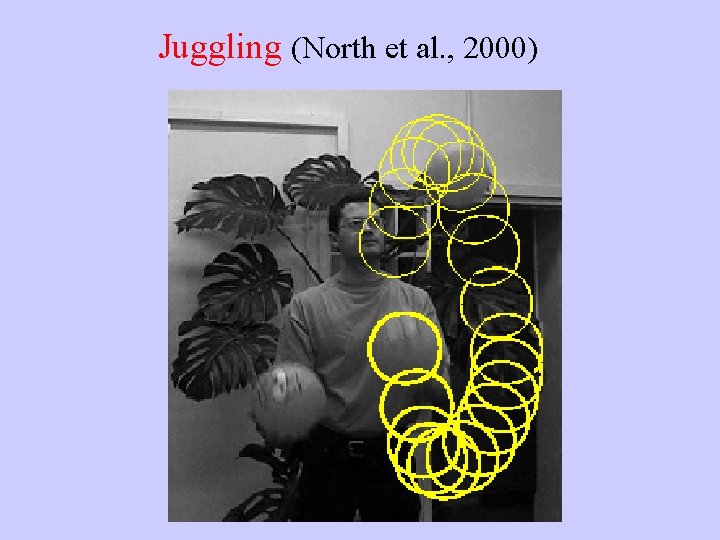
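
A forward-backward particle smoother reuses the weighted particle sets stored by a forward filter and reweights them backwards in time. The sketch implements one standard variant (forward filtering, then backward reweighting of the marginals at O(N²) cost per step) for a hypothetical scalar model `x_t = a x_{t-1} + noise`, `z_t = x_t + noise`; it is not necessarily the exact scheme used in the slides.

```python
import numpy as np

def gauss_pdf(x, mean, std):
    return np.exp(-0.5 * ((x - mean) / std) ** 2) / (np.sqrt(2.0 * np.pi) * std)


def particle_smoother(zs, n, a, q_std, r_std, prior_std=5.0, seed=None):
    """Forward particle filter plus backward reweighting of the stored particles.

    Hypothetical scalar model: x_t = a x_{t-1} + N(0, q_std^2), z_t = x_t + N(0, r_std^2).
    Returns particles X (T, n), filtered weights W and smoothed weights Ws.
    """
    rng = np.random.default_rng(seed)
    T = len(zs)
    X, W = np.empty((T, n)), np.empty((T, n))
    particles = rng.normal(0.0, prior_std, n)
    for t, z in enumerate(zs):                            # forward pass
        particles = a * particles + rng.normal(0.0, q_std, n)
        w = gauss_pdf(z, particles, r_std)
        w /= w.sum()
        X[t], W[t] = particles, w
        particles = particles[rng.choice(n, size=n, p=w)]  # multinomial resampling
    Ws = W.copy()                                         # at the last step the two agree
    for t in range(T - 2, -1, -1):                        # backward pass
        f = gauss_pdf(X[t + 1][:, None], a * X[t][None, :], q_std)  # f[j, i]
        denom = f @ W[t]                                  # predictive density at x_{t+1}^j
        Ws[t] = W[t] * ((Ws[t + 1] / denom) @ f)
        Ws[t] /= Ws[t].sum()                              # guard against round-off
    return X, W, Ws


X, W, Ws = particle_smoother([2.0] * 10, n=500, a=1.0, q_std=0.05, r_std=1.0, seed=0)
```

Because the smoothed weights at the first step also draw on all later observations, the smoothed spread there should be noticeably narrower than the filtered one.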

Juggling (North et al., 2000)

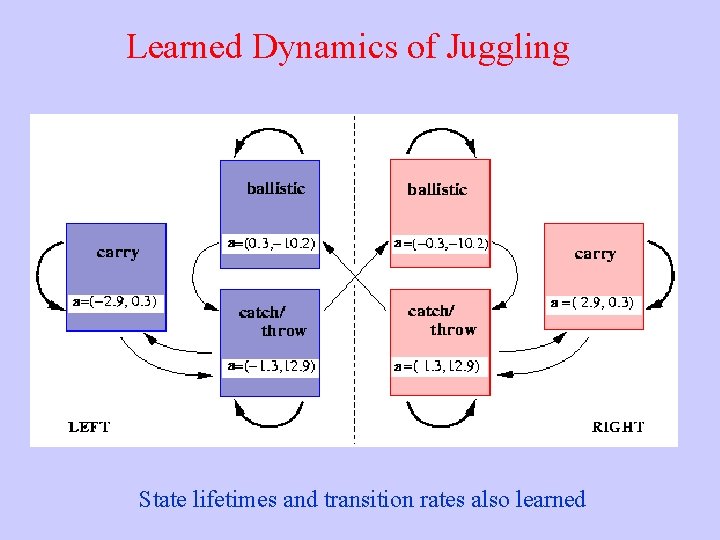

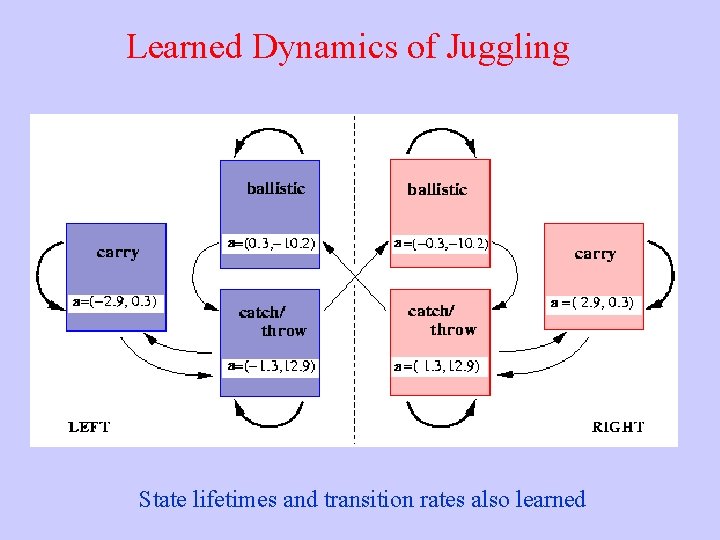

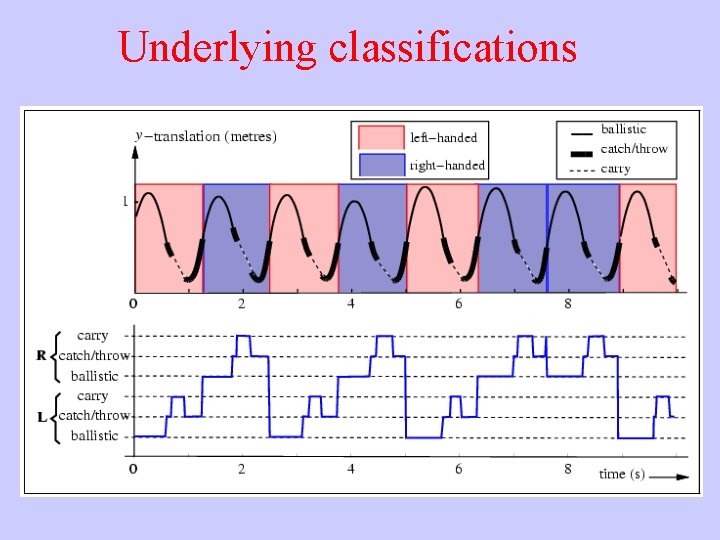

Learned dynamics of juggling: state lifetimes and transition rates are also learned

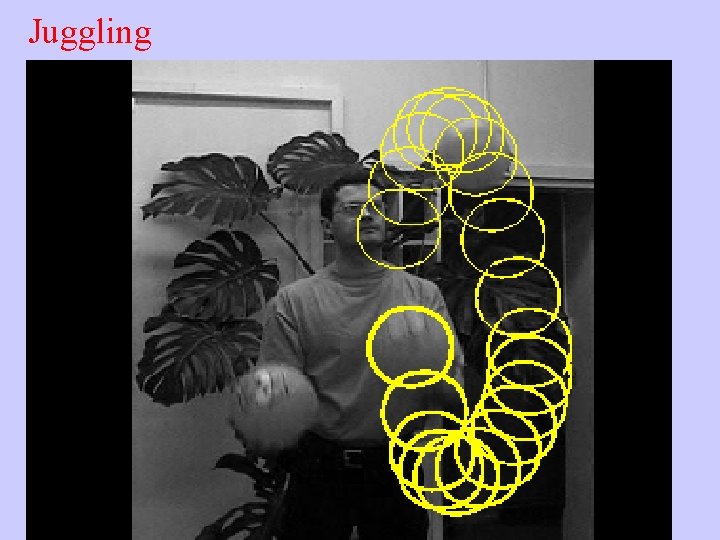

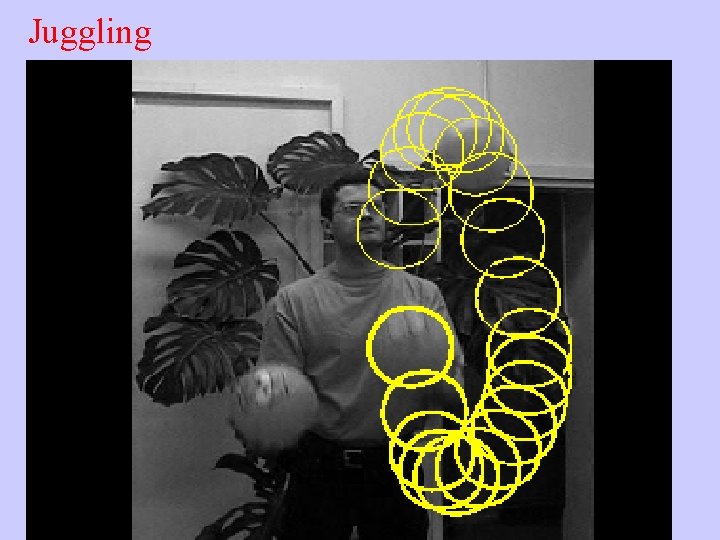
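
If the discrete states were observed (a simplifying assumption; under EM they are hidden and the counts become expected, weighted counts), transition rates follow from counting transitions, and the mean lifetime of state i follows from the geometric distribution of dwell times, `1 / (1 - T_ii)` steps. A sketch with a hypothetical helper `learn_transitions`:

```python
import numpy as np

def learn_transitions(labels, n_states):
    """Estimate T[i, j] = P(s_t = j | s_{t-1} = i) and mean state lifetimes.

    labels : sequence of integer state labels, one per time step
    """
    counts = np.zeros((n_states, n_states))
    for prev, cur in zip(labels[:-1], labels[1:]):
        counts[prev, cur] += 1
    T = counts / counts.sum(axis=1, keepdims=True)
    # Dwell times are geometric: a state with self-transition probability
    # T[i, i] lasts 1 / (1 - T[i, i]) steps on average.
    lifetimes = 1.0 / (1.0 - np.diag(T))
    return T, lifetimes


T, lifetimes = learn_transitions([0, 0, 0, 1, 1, 0, 0, 0, 1, 1], n_states=2)
```

States that are never left, or never visited, give infinite lifetimes or undefined rows, so in practice a small prior count per transition is often added before normalising.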

Juggling

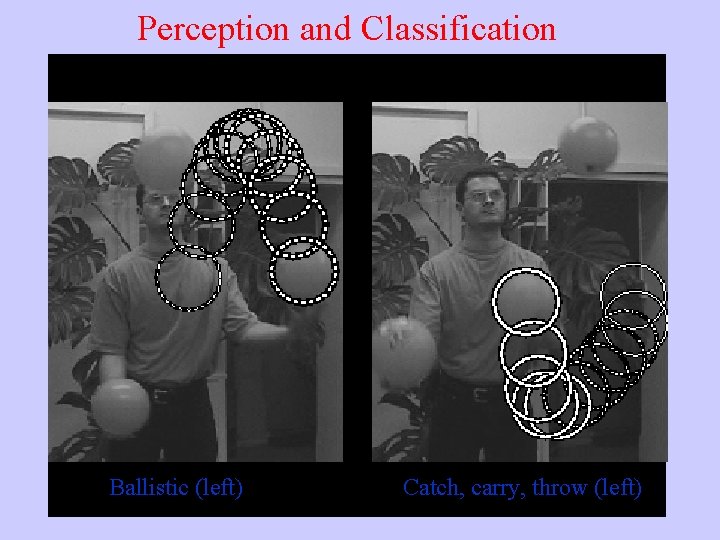

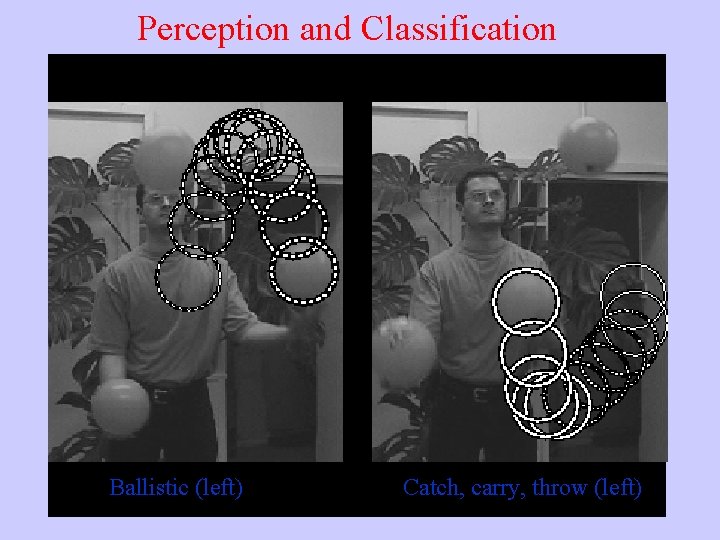

Perception and classification: ballistic (left); catch, carry, throw (left)

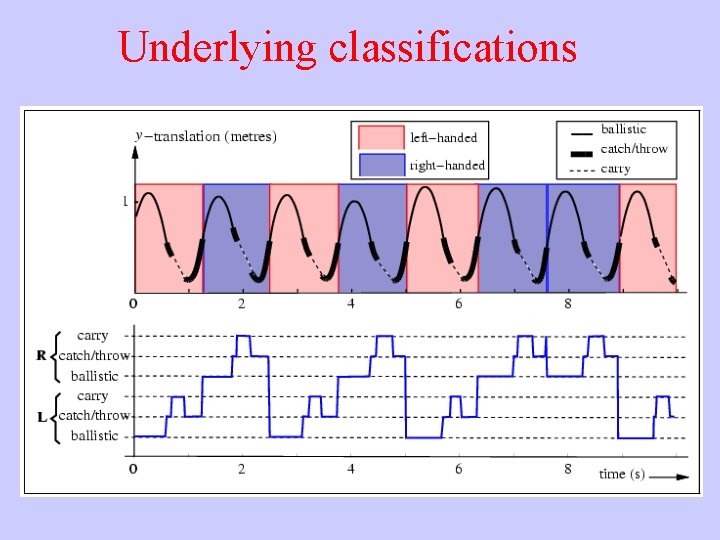

Underlying classifications

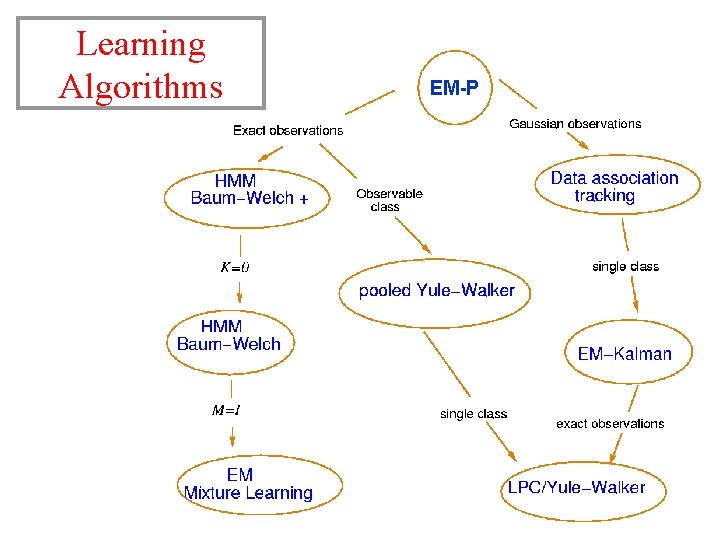

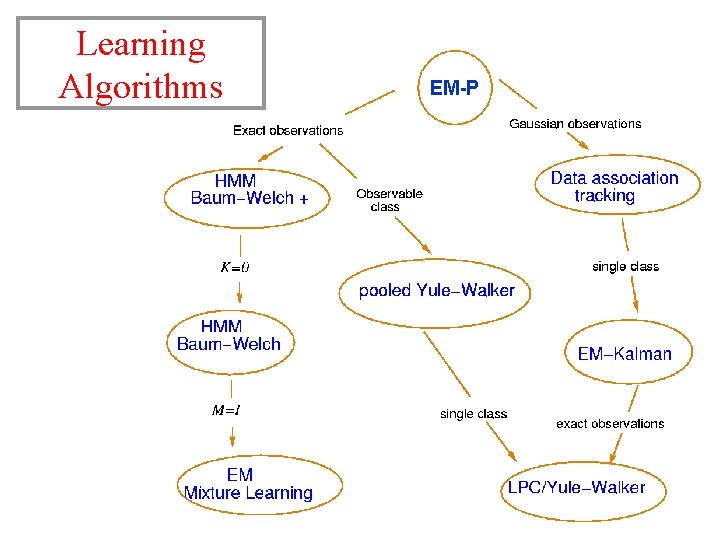

Learning algorithms: EM-P

- ✓ 1D Markov models
- 2D Markov models

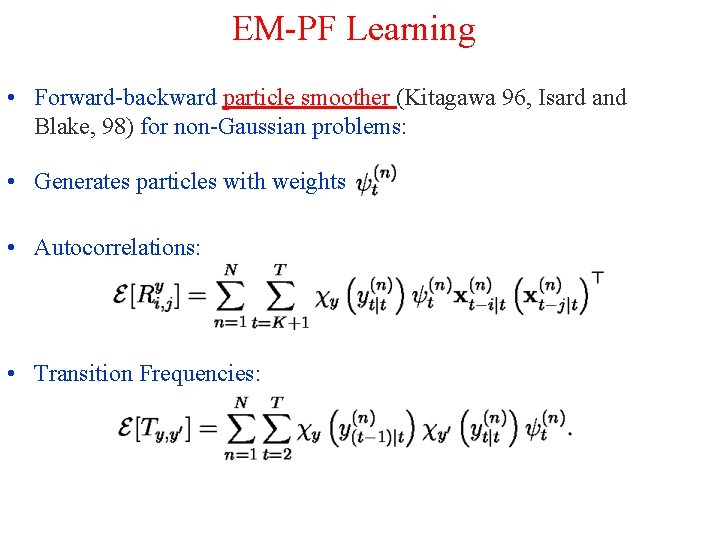

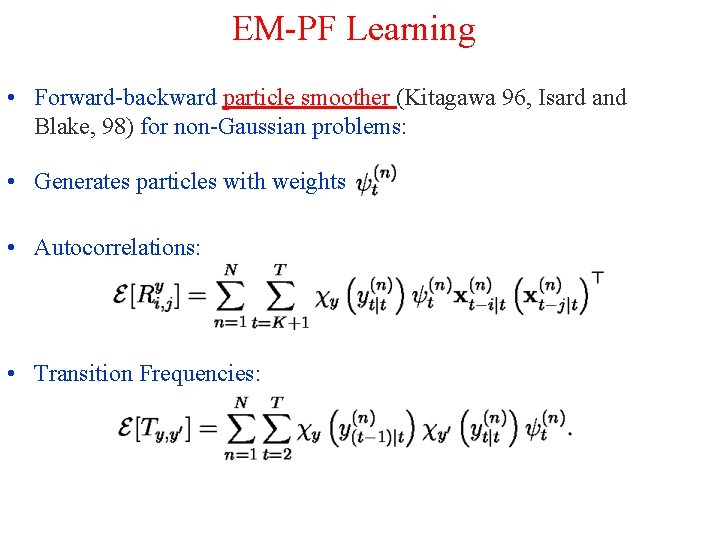

EM-PF learning

- Forward-backward particle smoother (Kitagawa 96; Isard and Blake 98) for non-Gaussian problems:
- Generates particles with weights
- Autocorrelations:
- Transition frequencies:
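
Once a smoother has produced weighted particles, the M-step statistics are weighted sums over them. A sketch assuming each particle is a whole smoothed trajectory carrying a single weight, with a scalar continuous state and an integer discrete label per step; the function name and array layouts are hypothetical.

```python
import numpy as np

def weighted_statistics(x_traj, s_traj, weights, n_states):
    """Weighted autocorrelations and transition frequencies from particle trajectories.

    x_traj  : (N, T) continuous states, one row per particle trajectory
    s_traj  : (N, T) integer discrete states
    weights : (N,) normalised particle weights
    """
    x = np.asarray(x_traj, dtype=float)
    w = np.asarray(weights, dtype=float)
    R00 = np.sum(w * np.sum(x[:, 1:] ** 2, axis=1))
    R01 = np.sum(w * np.sum(x[:, 1:] * x[:, :-1], axis=1))
    R11 = np.sum(w * np.sum(x[:, :-1] ** 2, axis=1))
    freq = np.zeros((n_states, n_states))
    s = np.asarray(s_traj)
    for i in range(len(w)):
        # np.add.at accumulates correctly when the same (prev, cur) pair repeats.
        np.add.at(freq, (s[i, :-1], s[i, 1:]), w[i])
    return (R00, R01, R11), freq


x_traj = [[1.0, 0.5, 0.25, 0.125], [2.0, 1.0, 0.5, 0.25]]
s_traj = [[0, 0, 1, 1], [1, 1, 1, 1]]
(R00, R01, R11), freq = weighted_statistics(x_traj, s_traj, [0.25, 0.75], n_states=2)
a_hat = R01 / R11        # scalar M-step, as in the direct-observation case
```

Dividing each row of `freq` by its sum turns the weighted transition frequencies into transition-rate estimates, exactly as with hard counts.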