
Planning to Maximize Reward: Markov Decision Processes
Brian C. Williams
16.412J/6.835J
October 23rd, 2002
Slides adapted from: Manuela Veloso, Reid Simmons, & Tom Mitchell, CMU
9/10/2020

Markov Decision Processes
• Motivation
• What are Markov Decision Processes?
• Computing Action Policies From a Model
• Summary

Reading and Assignments
• Markov Decision Processes
  • Read AIMA Chapter 17, Sections 1–4 (or the equivalent in the new edition)
• Reinforcement Learning
  • Read AIMA Chapter 20 (or the equivalent in the new edition)
• Next homework:
  • Involves coding MDPs, RL, and HMM belief update
Lecture based on the development in "Machine Learning" by Tom Mitchell, Chapter 13: Reinforcement Learning

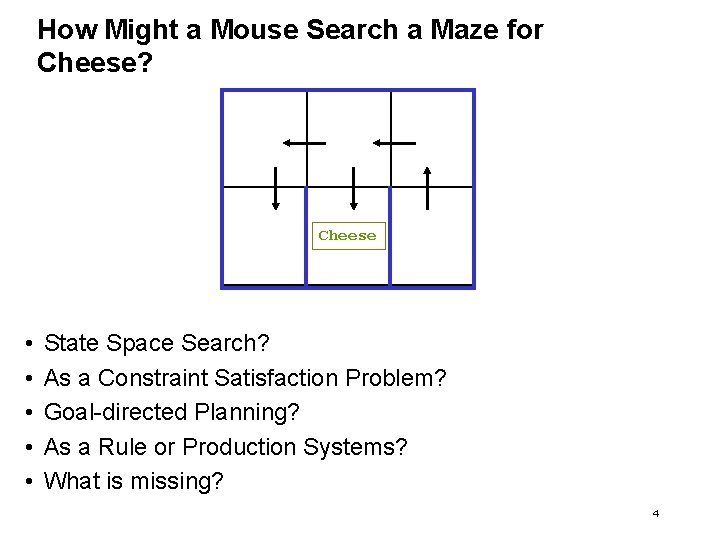

How Might a Mouse Search a Maze for Cheese?
• As State Space Search?
• As a Constraint Satisfaction Problem?
• As Goal-directed Planning?
• As a Rule or Production System?
• What is missing?

Ideas in this lecture
• The objective is to accumulate rewards, rather than to reach goal states.
• Objectives are achieved along the way, rather than at the end.
• The task is to generate policies for how to act in all situations, rather than a plan for a single starting situation.
• Policies fall out of value functions, which describe the greatest lifetime reward achievable from every state.
• Value functions are iteratively approximated.

Markov Decision Processes
• Motivation
• What are Markov Decision Processes (MDPs)?
  • Models
  • Lifetime Reward
  • Policies
• Computing Policies From a Model
• Summary

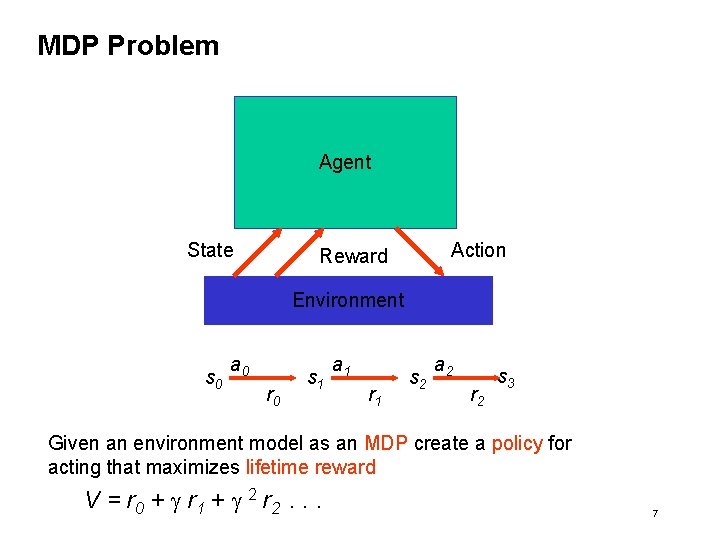

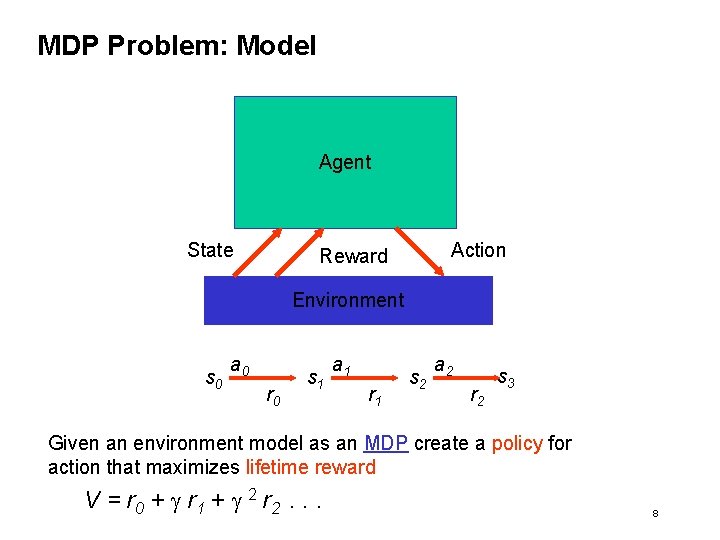

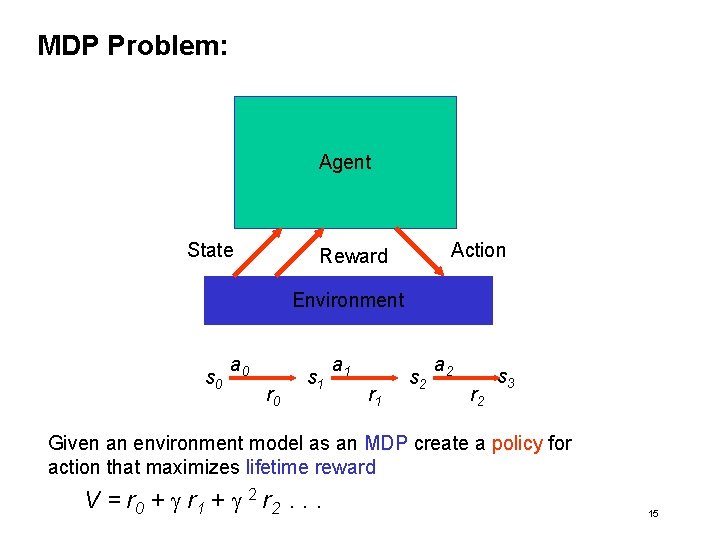

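MDP Problem
[Figure: agent–environment loop. The agent observes the state, takes an action, and receives a reward, producing the trajectory $s_0 \xrightarrow{a_0,\, r_0} s_1 \xrightarrow{a_1,\, r_1} s_2 \xrightarrow{a_2,\, r_2} s_3 \cdots$]
Given an environment model as an MDP, create a policy for acting that maximizes lifetime reward $V = r_0 + \gamma r_1 + \gamma^2 r_2 + \cdots$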

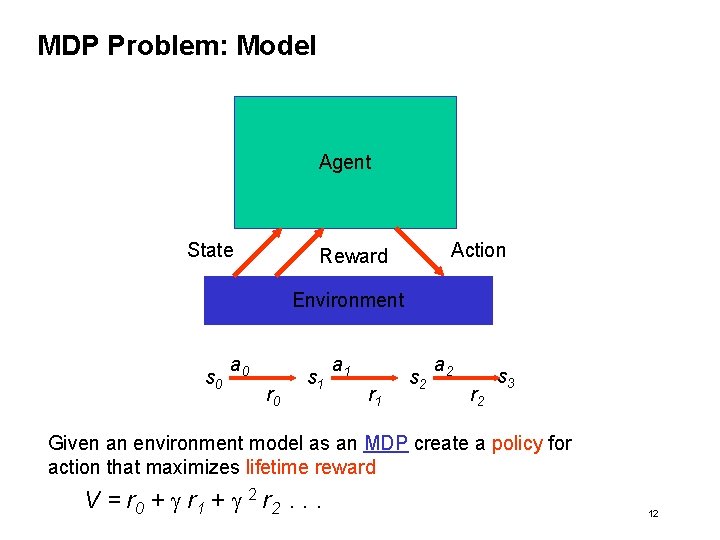

MDP Problem: Model

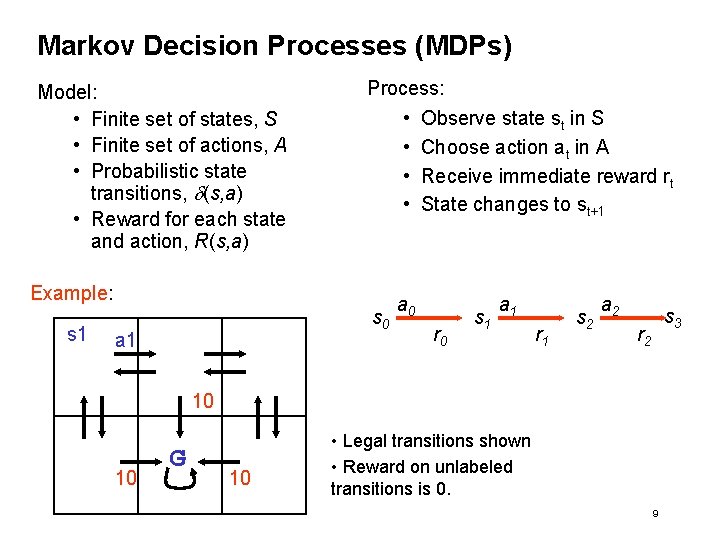

Markov Decision Processes (MDPs)
Model:
• Finite set of states, $S$
• Finite set of actions, $A$
• Probabilistic state transitions, $\delta(s, a)$
• Reward for each state and action, $r(s, a)$
Process:
• Observe state $s_t$ in $S$
• Choose action $a_t$ in $A$
• Receive immediate reward $r_t$
• State changes to $s_{t+1}$
Example: [Figure: the trajectory $s_0 \xrightarrow{a_0,\, r_0} s_1 \xrightarrow{a_1,\, r_1} s_2 \xrightarrow{a_2,\, r_2} s_3$, and a grid world with goal state G whose labeled transitions have reward 10]
• Only legal transitions are shown.
• Reward on unlabeled transitions is 0.
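As a minimal sketch (in Python, which the slides themselves do not use), the deterministic case that this lecture restricts itself to can be written down as plain functions for $\delta$ and $r$. The 2×3 grid layout (goal G in the top-right corner, moves up/down/left/right, reward 100 for entering G and 0 otherwise) is an assumption modeled on the grid world that the later examples appear to use, and the names `states`, `actions`, `delta`, `reward`, and `MOVES` are hypothetical.

```python
from typing import Dict, List, Tuple

# Hypothetical encoding: states are (row, col) cells of a 2x3 grid,
# with the goal G assumed to sit in the top-right corner.
State = Tuple[int, int]
Action = str

ROWS, COLS = 2, 3
GOAL: State = (0, 2)
GOAL_REWARD = 100.0  # assumed reward for entering G; 0 everywhere else
MOVES: Dict[Action, Tuple[int, int]] = {
    "up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1),
}


def states() -> List[State]:
    """The finite state set S."""
    return [(r, c) for r in range(ROWS) for c in range(COLS)]


def actions(s: State) -> List[Action]:
    """Legal actions in s; G is treated as absorbing (no actions)."""
    if s == GOAL:
        return []
    r, c = s
    return [a for a, (dr, dc) in MOVES.items()
            if 0 <= r + dr < ROWS and 0 <= c + dc < COLS]


def delta(s: State, a: Action) -> State:
    """Deterministic transition function delta(s, a) -> next state."""
    dr, dc = MOVES[a]
    return (s[0] + dr, s[1] + dc)


def reward(s: State, a: Action) -> float:
    """Immediate reward r(s, a): GOAL_REWARD for entering G, else 0."""
    return GOAL_REWARD if delta(s, a) == GOAL else 0.0
```

For instance, `actions((1, 2))` returns `["up", "left"]` and `reward((1, 2), "up")` returns `100.0`. Treating G as absorbing with no actions is a modeling choice made for this sketch, not something the slides specify.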

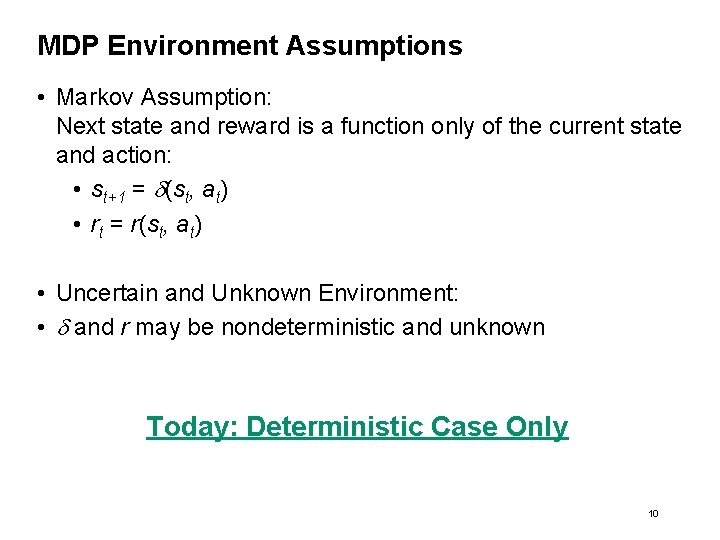

MDP Environment Assumptions
• Markov assumption: the next state and reward are functions only of the current state and action:
  • $s_{t+1} = \delta(s_t, a_t)$
  • $r_t = r(s_t, a_t)$
• Uncertain and unknown environment:
  • $\delta$ and $r$ may be nondeterministic and unknown
Today: deterministic case only


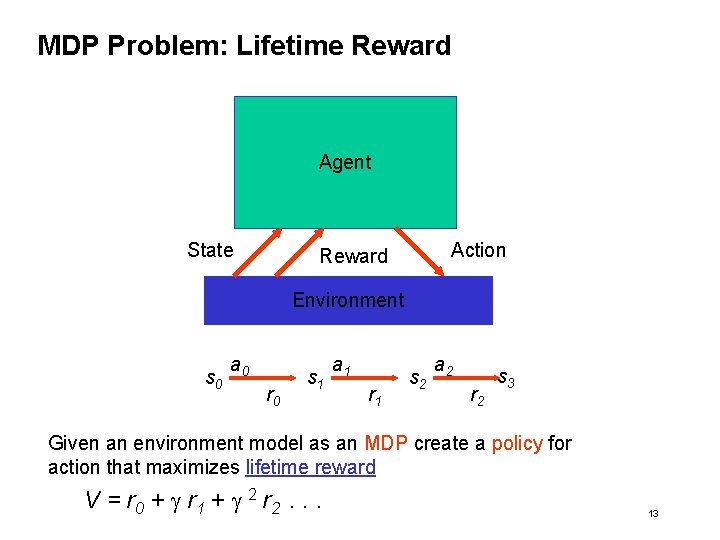

MDP Problem: Lifetime Reward

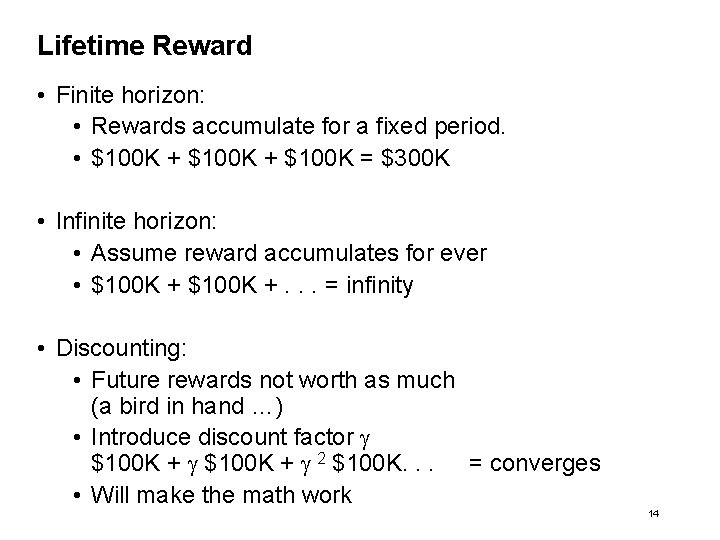

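Lifetime Reward
• Finite horizon:
  • Rewards accumulate for a fixed period.
  • $\$100\text{K} + \$100\text{K} + \$100\text{K} = \$300\text{K}$
• Infinite horizon:
  • Assume reward accumulates forever.
  • $\$100\text{K} + \$100\text{K} + \cdots = \infty$
• Discounting:
  • Future rewards are not worth as much (a bird in the hand …).
  • Introduce a discount factor $\gamma$ with $0 \le \gamma < 1$: $\$100\text{K} + \gamma\,\$100\text{K} + \gamma^2\,\$100\text{K} + \cdots = \frac{\$100\text{K}}{1 - \gamma}$, which converges.
  • This makes the math work.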
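The arithmetic behind these cases can be checked numerically. In this small sketch, `discounted_sum` is a hypothetical helper; with $\gamma = 1$ and three steps it reproduces the finite-horizon total of 300 (thousand dollars), and with $\gamma = 0.9$ the partial sums approach the closed form $100/(1 - 0.9) = 1000$.

```python
def discounted_sum(rewards, gamma):
    """Lifetime reward sum_i gamma**i * r_i for a finite reward sequence."""
    return sum((gamma ** i) * r for i, r in enumerate(rewards))


per_step = 100.0  # $100K per step, measured in thousands of dollars

# Finite horizon, no discounting (gamma = 1): 100 + 100 + 100.
print(discounted_sum([per_step] * 3, 1.0))  # prints 300.0

# Infinite horizon with gamma = 0.9: the partial sums level off.
gamma = 0.9
for horizon in (10, 100, 1000):
    print(horizon, discounted_sum([per_step] * horizon, gamma))
print("closed form:", per_step / (1 - gamma))
```

Without discounting, the same loop over longer horizons would grow without bound, which is the infinite-horizon problem the slide describes.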


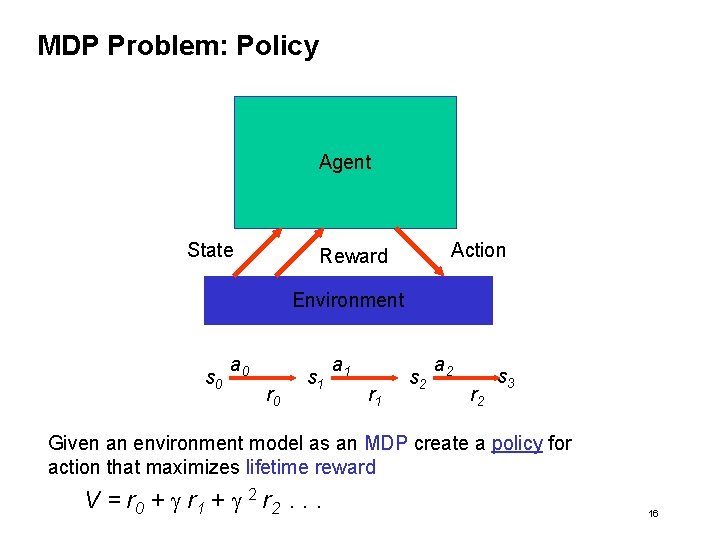

MDP Problem: Policy

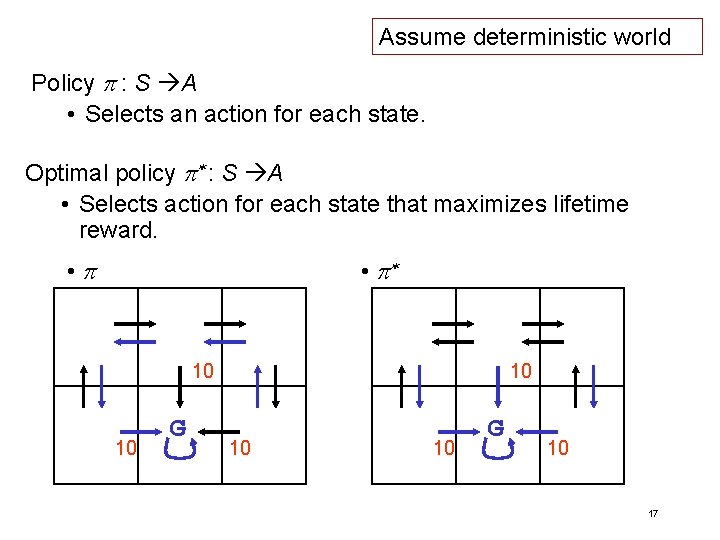

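Assume a deterministic world.
Policy $\pi : S \to A$
• Selects an action for each state.
Optimal policy $\pi^* : S \to A$
• Selects the action for each state that maximizes lifetime reward.
[Figure: an optimal policy $\pi^*$ on the example grid world with goal G; labeled transitions have reward 10]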

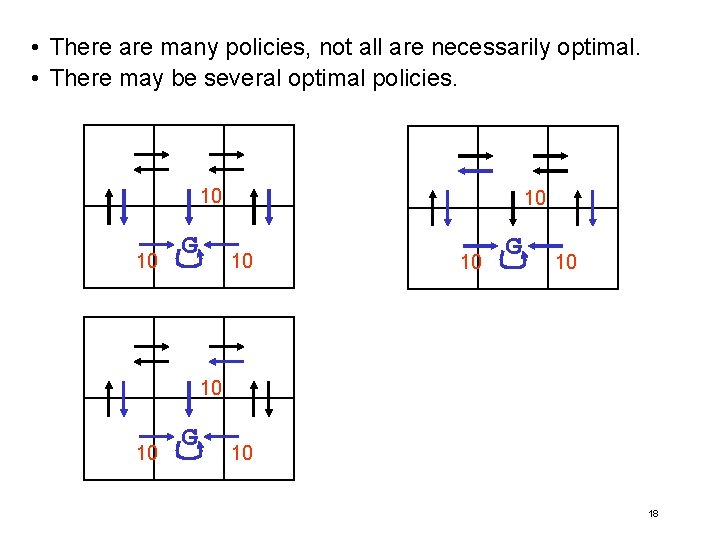

• There are many policies; not all of them are optimal.
• There may be several optimal policies.

Markov Decision Processes
• Motivation
• Markov Decision Processes
• Computing Policies From a Model
  • Value Functions
  • Mapping Value Functions to Policies
  • Computing Value Functions
• Summary

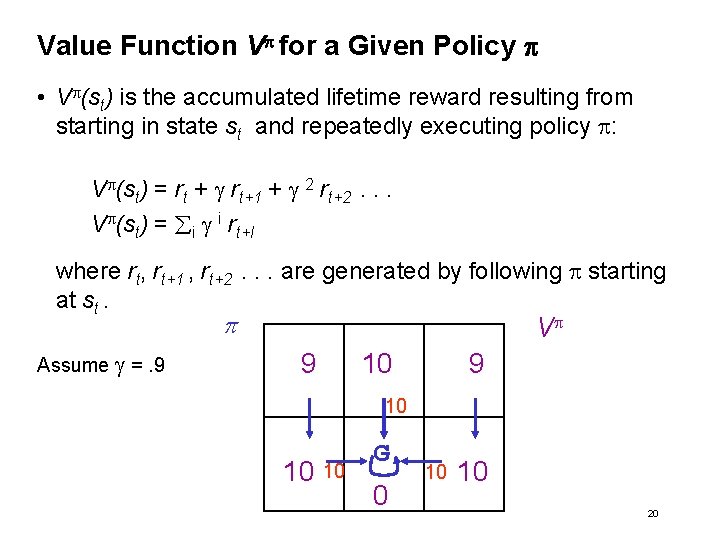

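Value Function $V^\pi$ for a Given Policy $\pi$
• $V^\pi(s_t)$ is the accumulated lifetime reward resulting from starting in state $s_t$ and repeatedly executing policy $\pi$:
  $V^\pi(s_t) = r_t + \gamma r_{t+1} + \gamma^2 r_{t+2} + \cdots = \sum_{i=0}^{\infty} \gamma^i r_{t+i}$
  where $r_t, r_{t+1}, r_{t+2}, \ldots$ are generated by following $\pi$ starting at $s_t$.
[Figure: a policy $\pi$ on the example grid world and the resulting $V^\pi$, assuming $\gamma = 0.9$; the values shown are 10, 9, and 0 at G]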
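For a deterministic model, $V^\pi(s_t)$ can be approximated by simply rolling the policy forward and accumulating discounted rewards. The sketch below assumes a hypothetical three-state chain s0 → s1 → G with reward 10 for entering G, chosen so that with $\gamma = 0.9$ it yields the values 10 and 9 that appear in the figure; `evaluate_policy` and the chain helpers are illustrative names.

```python
def evaluate_policy(s, policy, delta, reward, gamma, terminal, max_steps=10_000):
    """Approximate V^pi(s) = sum_i gamma**i * r_{t+i} by rolling out `policy`.

    The rollout stops at a terminal (absorbing, zero-reward) state or after
    max_steps, which truncates the infinite sum; for gamma < 1 the neglected
    tail is tiny.
    """
    value, discount = 0.0, 1.0
    for _ in range(max_steps):
        if s in terminal:
            break
        a = policy[s]
        value += discount * reward(s, a)
        discount *= gamma
        s = delta(s, a)
    return value


# Hypothetical chain s0 -> s1 -> G with reward 10 for entering G.
NEXT = {("s0", "go"): "s1", ("s1", "go"): "G"}


def chain_delta(s, a):
    return NEXT[(s, a)]


def chain_reward(s, a):
    return 10.0 if NEXT[(s, a)] == "G" else 0.0


policy = {"s0": "go", "s1": "go"}
for s in ("s0", "s1", "G"):
    print(s, evaluate_policy(s, policy, chain_delta, chain_reward, 0.9, {"G"}))
```

A rollout only works this directly because the model is deterministic; with stochastic transitions one would have to average over outcomes instead.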

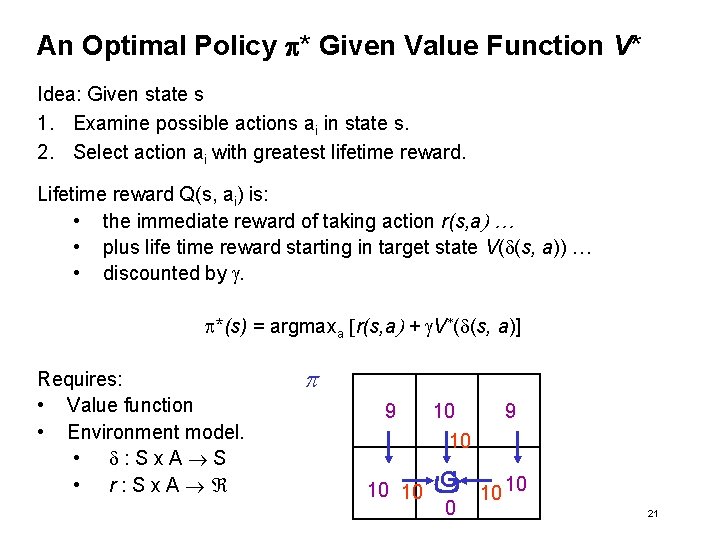

An Optimal Policy $\pi^*$ Given Value Function $V^*$
Idea: given state $s$,
1. Examine the possible actions $a_i$ in state $s$.
2. Select the action $a_i$ with the greatest lifetime reward.
The lifetime reward $Q(s, a_i)$ is:
• the immediate reward for taking the action, $r(s, a_i)$,
• plus the lifetime reward starting in the target state, $V^*(\delta(s, a_i))$, discounted by $\gamma$.
$\pi^*(s) = \arg\max_a [r(s, a) + \gamma V^*(\delta(s, a))]$
Requires:
• The value function
• An environment model:
  • $\delta : S \times A \to S$
  • $r : S \times A \to \mathbb{R}$
[Figure: $\pi^*$ and its values on the example grid world]
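The one-step lookahead can be sketched as a function that takes the model ($\delta$, $r$) and a value table $V$ and returns the greedy action for each state. Everything here, including the name `greedy_policy` and the tiny model with states s, x, and y, is hypothetical and only illustrates the argmax; ties go to whichever action `actions(s)` lists first.

```python
def greedy_policy(states, actions, delta, reward, V, gamma):
    """pi(s) = argmax_a [r(s, a) + gamma * V(delta(s, a))] for every state.

    States with no legal actions (e.g. an absorbing goal) get no entry.
    max() returns the first maximal action, so ties follow actions(s) order.
    """
    pi = {}
    for s in states:
        legal = actions(s)
        if legal:
            pi[s] = max(legal,
                        key=lambda a: reward(s, a) + gamma * V[delta(s, a)])
    return pi


# Hypothetical model: from s, "left" reaches x with reward 1 and "right"
# reaches y with reward 0; x and y have no outgoing actions.
MODEL = {("s", "left"): ("x", 1.0), ("s", "right"): ("y", 0.0)}
V = {"x": 5.0, "y": 10.0}
pi = greedy_policy(
    ["s", "x", "y"],
    lambda s: [a for (s0, a) in MODEL if s0 == s],  # legal actions
    lambda s, a: MODEL[(s, a)][0],                  # delta(s, a)
    lambda s, a: MODEL[(s, a)][1],                  # r(s, a)
    V,
    0.9,
)
print(pi)  # {'s': 'right'}
```

Here "left" scores 1 + 0.9 × 5 = 5.5 and "right" scores 0 + 0.9 × 10 = 9, so the larger immediate reward loses to the better successor value.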

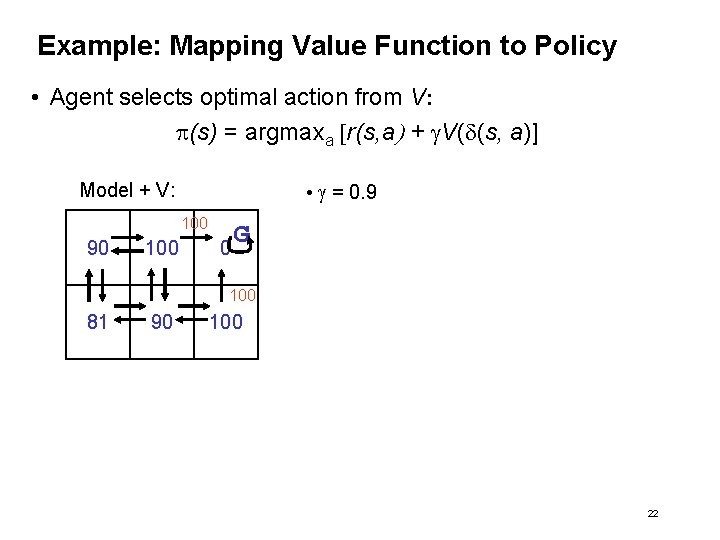

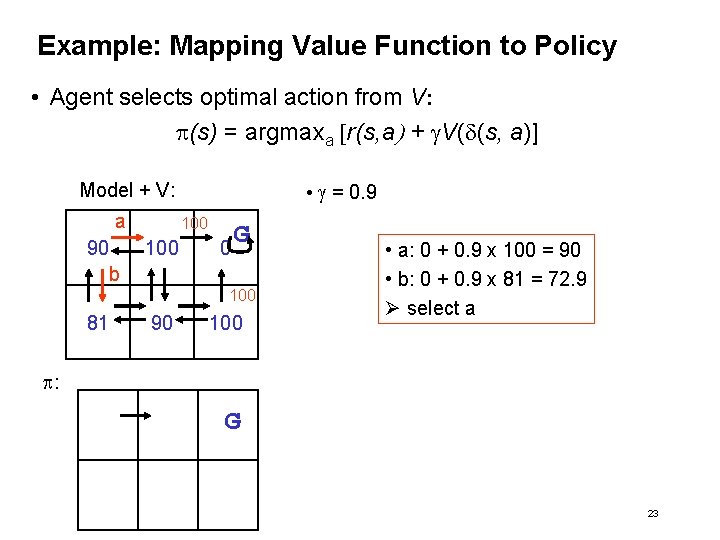

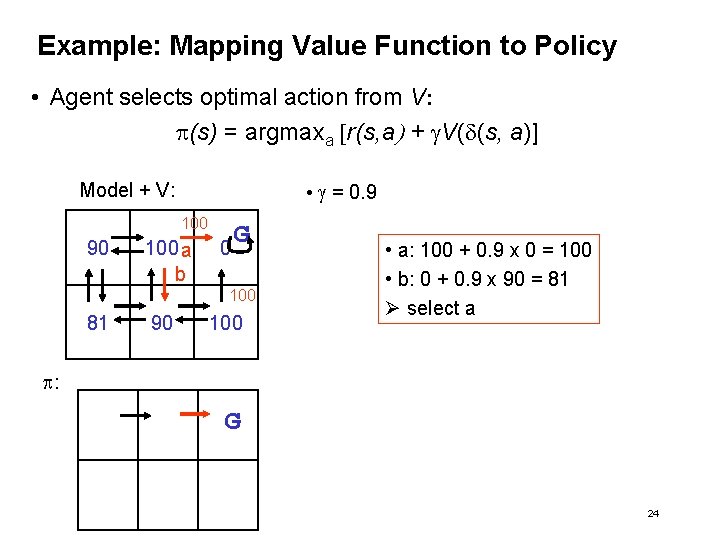

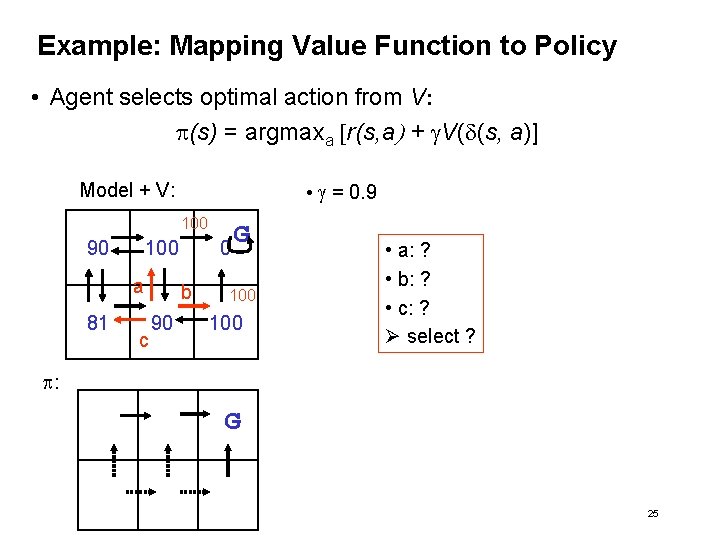

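Example: Mapping Value Function to Policy
• Agent selects the optimal action from $V$: $\pi(s) = \arg\max_a [r(s, a) + \gamma V(\delta(s, a))]$
• $\gamma = 0.9$
[Figure: model + V on the grid world; V is 90, 100, 0 (at G) in the top row and 81, 90, 100 in the bottom row; transitions into G have reward 100]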

Example: Mapping Value Function to Policy
[Figure: the state with V = 90; action a leads to the state with V = 100, action b to the state with V = 81]
• a: 0 + 0.9 × 100 = 90
• b: 0 + 0.9 × 81 = 72.9
→ select a
[Figure: the resulting policy $\pi$ toward G]

Example: Mapping Value Function to Policy
[Figure: the state with V = 100; action a enters G (reward 100, V(G) = 0), action b leads to the state with V = 90]
• a: 100 + 0.9 × 0 = 100
• b: 0 + 0.9 × 90 = 81
→ select a
[Figure: the resulting policy $\pi$ toward G]
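The two worked lookaheads can be reproduced with a few lines of arithmetic. The `lookahead` helper is hypothetical; its inputs are the immediate rewards and successor values read off the two examples above, with $\gamma = 0.9$.

```python
GAMMA = 0.9


def lookahead(options, gamma=GAMMA):
    """One-step lookahead for a single state.

    options maps each action to (r(s, a), V(delta(s, a))), i.e. the
    immediate reward and the value of the state the action leads to.
    Returns the best action and the Q-value of every action.
    """
    q = {a: r + gamma * v_next for a, (r, v_next) in options.items()}
    return max(q, key=q.get), q


# First example: a leads to the state with V = 100, b to the state with V = 81.
print(lookahead({"a": (0.0, 100.0), "b": (0.0, 81.0)}))
# Second example: a enters G (reward 100, V(G) = 0); b leads to V = 90.
print(lookahead({"a": (100.0, 0.0), "b": (0.0, 90.0)}))
```

The same helper can be used to check answers to the three-action exercise once the rewards and successor values are read off its figure.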

Example: Mapping Value Function to Policy
[Figure: exercise on the same grid world; a state with three actions a, b, and c]
• a: ?
• b: ?
• c: ?
→ select ?


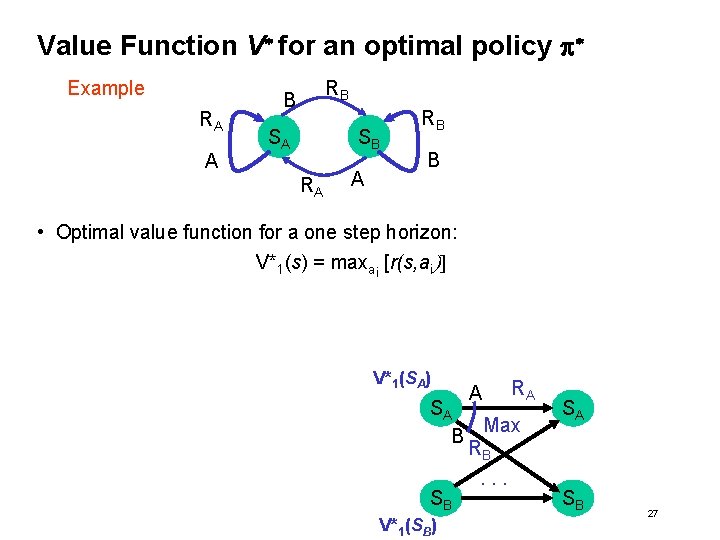

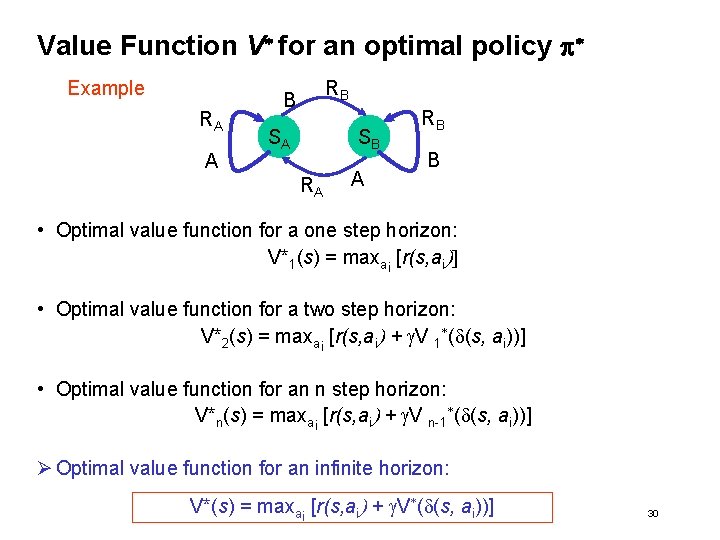

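Value Function $V^*$ for an Optimal Policy $\pi^*$
[Figure: two states SA and SB; from either state, action A yields reward RA and leads to SA, and action B yields reward RB and leads to SB. $V^*_1(S_A)$ and $V^*_1(S_B)$ are each a max over these two one-step branches.]
• Optimal value function for a one-step horizon:
  $V^*_1(s) = \max_{a_i} [r(s, a_i)]$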

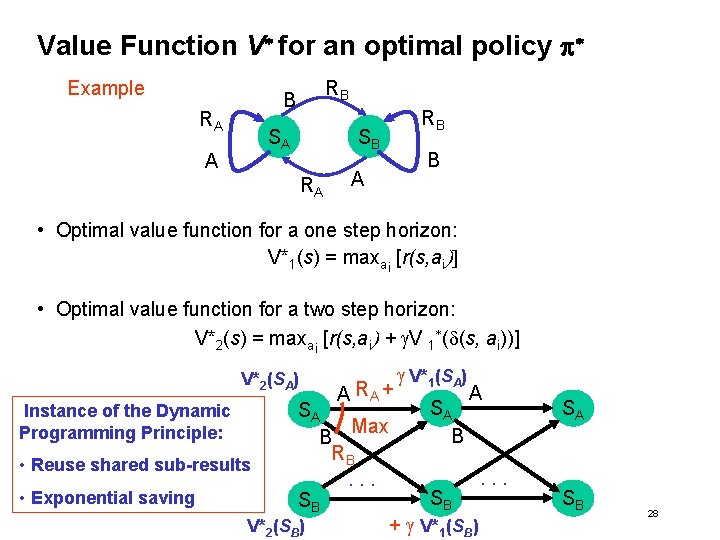

Value Function $V^*$ for an Optimal Policy $\pi^*$
• Optimal value function for a two-step horizon:
  $V^*_2(s) = \max_{a_i} [r(s, a_i) + \gamma V^*_1(\delta(s, a_i))]$
[Figure: $V^*_2(S_A)$ as a max over actions A and B, each branch adding its reward to $\gamma V^*_1$ of its successor; the subresults $V^*_1(S_A)$ and $V^*_1(S_B)$ are shared with the computation of $V^*_2(S_B)$]
Instance of the Dynamic Programming Principle:
• Reuse shared sub-results
• Exponential saving

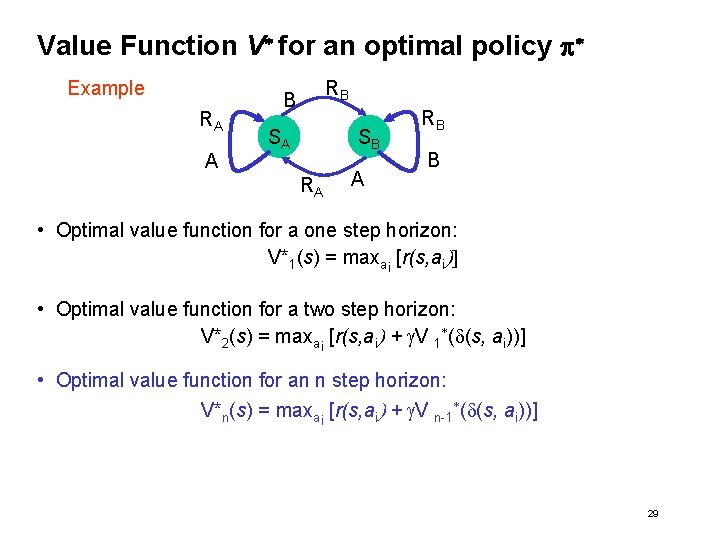


Value Function $V^*$ for an Optimal Policy $\pi^*$
[Figure: the two-state SA/SB example]
• Optimal value function for a one-step horizon:
  $V^*_1(s) = \max_{a_i} [r(s, a_i)]$
• Optimal value function for a two-step horizon:
  $V^*_2(s) = \max_{a_i} [r(s, a_i) + \gamma V^*_1(\delta(s, a_i))]$
• Optimal value function for an n-step horizon:
  $V^*_n(s) = \max_{a_i} [r(s, a_i) + \gamma V^*_{n-1}(\delta(s, a_i))]$
→ Optimal value function for an infinite horizon:
  $V^*(s) = \max_{a_i} [r(s, a_i) + \gamma V^*(\delta(s, a_i))]$
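The n-step recursion can be sketched as a loop that builds $V^*_k$ from the complete table $V^*_{k-1}$, which is the reuse of shared sub-results described by the dynamic programming principle. The sketch assumes the two-state SA/SB model as the figure appears to show it (from either state, A leads to SA with reward RA and B leads to SB with reward RB) and picks hypothetical values RA = 1 and RB = 2; every state is assumed to have at least one action.

```python
def finite_horizon_values(states, actions, delta, reward, gamma, n):
    """V*_n via V*_k(s) = max_a [r(s, a) + gamma * V*_{k-1}(delta(s, a))].

    V*_0 is taken to be 0 everywhere, so the first pass gives
    V*_1(s) = max_a r(s, a). Each pass reads the whole previous table
    instead of re-expanding the tree of action sequences.
    Assumes every state has at least one action.
    """
    V = {s: 0.0 for s in states}
    for _ in range(n):
        # The comprehension reads the old V; it is replaced only afterwards.
        V = {s: max(reward(s, a) + gamma * V[delta(s, a)] for a in actions(s))
             for s in states}
    return V


# Hypothetical reward values for the SA/SB example.
RA, RB = 1.0, 2.0


def sa_sb_delta(s, a):
    return "SA" if a == "A" else "SB"


def sa_sb_reward(s, a):
    return RA if a == "A" else RB


for n in (1, 2, 3):
    print(n, finite_horizon_values(["SA", "SB"], lambda s: ["A", "B"],
                                   sa_sb_delta, sa_sb_reward, 0.9, n))
```

With these numbers the values grow as 2, 3.8, 5.42, …, approaching $2/(1 - 0.9) = 20$ as the horizon grows, which is the infinite-horizon $V^*$.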

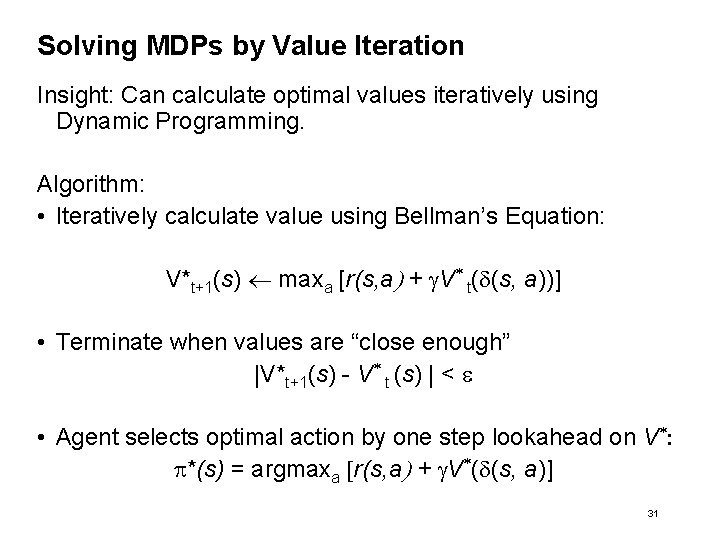

Solving MDPs by Value Iteration
Insight: optimal values can be calculated iteratively using dynamic programming.
Algorithm:
• Iteratively calculate values using Bellman’s equation:
  $V^*_{t+1}(s) \leftarrow \max_a [r(s, a) + \gamma V^*_t(\delta(s, a))]$
• Terminate when the values are “close enough”: $|V^*_{t+1}(s) - V^*_t(s)| < \epsilon$ for all $s$
• The agent selects the optimal action by one-step lookahead on $V^*$:
  $\pi^*(s) = \arg\max_a [r(s, a) + \gamma V^*(\delta(s, a))]$
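A minimal synchronous value-iteration sketch follows, run on an assumed 2×3 grid world (goal G in the top-right corner, reward 100 for entering G, $\gamma = 0.9$) modeled on the lecture's examples. It applies the Bellman update to every state using the previous table, stops when no value changes by $\epsilon$ or more, and then extracts $\pi^*$ by one-step lookahead. The grid layout, the tie-breaking order, and all names are assumptions.

```python
# Assumed 2x3 grid world: states are (row, col), G is absorbing.
ROWS, COLS = 2, 3
GOAL = (0, 2)
MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}
STATES = [(r, c) for r in range(ROWS) for c in range(COLS)]


def actions(s):
    """Legal moves in s; the goal G has no actions (its value stays 0)."""
    if s == GOAL:
        return []
    return [a for a, (dr, dc) in MOVES.items()
            if 0 <= s[0] + dr < ROWS and 0 <= s[1] + dc < COLS]


def delta(s, a):
    dr, dc = MOVES[a]
    return (s[0] + dr, s[1] + dc)


def reward(s, a):
    return 100.0 if delta(s, a) == GOAL else 0.0


def value_iteration(gamma=0.9, eps=1e-6):
    """Repeat V_{t+1}(s) = max_a [r(s, a) + gamma * V_t(delta(s, a))] until
    max_s |V_{t+1}(s) - V_t(s)| < eps, then extract the greedy policy."""
    V = {s: 0.0 for s in STATES}
    while True:
        new_V = {s: max((reward(s, a) + gamma * V[delta(s, a)]
                         for a in actions(s)), default=0.0)
                 for s in STATES}
        done = max(abs(new_V[s] - V[s]) for s in STATES) < eps
        V = new_V
        if done:
            break
    pi = {s: max(actions(s),
                 key=lambda a: reward(s, a) + gamma * V[delta(s, a)])
          for s in STATES if actions(s)}
    return V, pi


V, pi = value_iteration()
for r in range(ROWS):
    print([round(V[(r, c)], 3) for c in range(COLS)])
# prints [90.0, 100.0, 0.0] then [81.0, 90.0, 100.0]
```

Under these assumptions the converged values match the grid values used in the value-function-to-policy examples. Because the model is deterministic and G is absorbing, the values in this particular example become exact after a few sweeps rather than only approaching $V^*$ in the limit.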

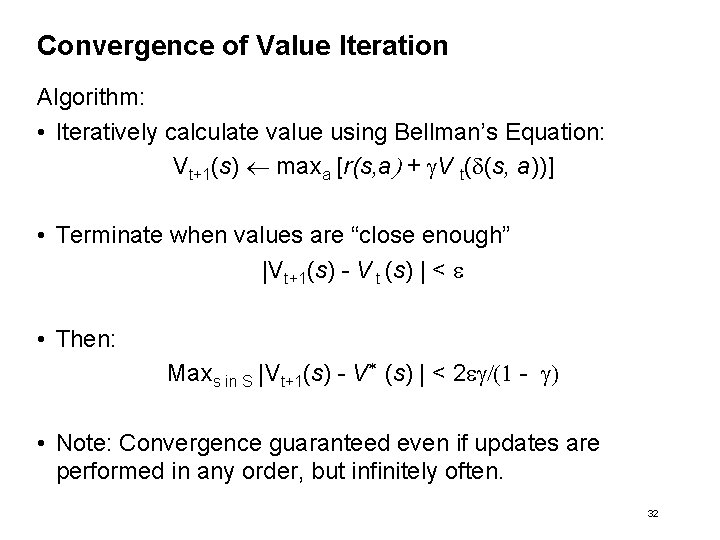

Convergence of Value Iteration
Algorithm:
• Iteratively calculate values using Bellman’s equation:
  $V_{t+1}(s) \leftarrow \max_a [r(s, a) + \gamma V_t(\delta(s, a))]$
• Terminate when the values are “close enough”: $|V_{t+1}(s) - V_t(s)| < \epsilon$ for all $s$
• Then: $\max_{s \in S} |V_{t+1}(s) - V^*(s)| < \frac{2\epsilon\gamma}{1 - \gamma}$
• Note: convergence is guaranteed even if updates are performed in any order, provided every state is updated infinitely often.
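The note about update order can be illustrated with in-place (asynchronous) updates: each state is backed up using the latest values of its neighbors, in a freshly shuffled order on every sweep. This is a sketch on the same assumed 2×3 grid world (goal G in the top-right corner, reward 100 for entering G), redefined so the block runs on its own; `async_value_iteration` and `successors` are hypothetical names. It only illustrates that different orders reach the same values; it does not check the error bound, which the slide states for the synchronous update.

```python
import random

# Assumed 2x3 grid world: states are (row, col), G is absorbing.
ROWS, COLS = 2, 3
GOAL = (0, 2)
MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}
STATES = [(r, c) for r in range(ROWS) for c in range(COLS)]


def successors(s):
    """(reward, next_state) for each legal move; G has none."""
    if s == GOAL:
        return []
    out = []
    for dr, dc in MOVES.values():
        nxt = (s[0] + dr, s[1] + dc)
        if 0 <= nxt[0] < ROWS and 0 <= nxt[1] < COLS:
            out.append((100.0 if nxt == GOAL else 0.0, nxt))
    return out


def async_value_iteration(gamma=0.9, eps=1e-6, seed=0):
    """In-place Bellman backups, one state at a time, in a random order.

    Each sweep visits every state once in a freshly shuffled order, so
    every state keeps being updated; stop after a sweep in which no value
    changed by eps or more.
    """
    rng = random.Random(seed)
    V = {s: 0.0 for s in STATES}
    while True:
        order = STATES[:]
        rng.shuffle(order)
        biggest_change = 0.0
        for s in order:
            new = max((r + gamma * V[nxt] for r, nxt in successors(s)),
                      default=0.0)
            biggest_change = max(biggest_change, abs(new - V[s]))
            V[s] = new  # in place: later updates in this sweep see it
        if biggest_change < eps:
            return V


for seed in (0, 1, 2):
    V = async_value_iteration(0.9, 1e-6, seed)
    print(seed, [round(V[s], 3) for s in STATES])
```

Every seed should print the same values; only the number of sweeps needed to get there may differ.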

![Example of Value Iteration](http://slidetodoc.com/presentation_image/137b96bf31da7b8768942bfce29d0e05/image-32.jpg)

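Example of Value Iteration
$V^*_{t+1}(s) \leftarrow \max_a [r(s, a) + \gamma V^*_t(\delta(s, a))]$, with $\gamma = 0.9$
[Figure: $V^*_t$ and $V^*_{t+1}$ on the grid world, with all values starting at 0 and reward 100 on transitions into G; the state being updated has actions a and b]
• a: 0 + 0.9 × 0 = 0
• b: 0 + 0.9 × 0 = 0
→ Max = 0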

![Example of Value Iteration](http://slidetodoc.com/presentation_image/137b96bf31da7b8768942bfce29d0e05/image-33.jpg)

Example of Value Iteration
[Figure: $V^*_t$ and $V^*_{t+1}$; the next state being updated has actions a (into G), b, and c]
• a: 100 + 0.9 × 0 = 100
• b: 0 + 0.9 × 0 = 0
• c: 0 + 0.9 × 0 = 0
→ Max = 100

![Example of Value Iteration](http://slidetodoc.com/presentation_image/137b96bf31da7b8768942bfce29d0e05/image-34.jpg)

Example of Value Iteration
[Figure: $V^*_t$ and $V^*_{t+1}$; the next state being updated has a single action a]
• a: 0 + 0.9 × 0 = 0
→ Max = 0

![Example of Value Iteration](http://slidetodoc.com/presentation_image/137b96bf31da7b8768942bfce29d0e05/image-35.jpg)

Example of Value Iteration
[Figure: $V^*_t$ and $V^*_{t+1}$ as the remaining states are updated]

![Example of Value Iteration](http://slidetodoc.com/presentation_image/137b96bf31da7b8768942bfce29d0e05/image-36.jpg)

Example of Value Iteration
[Figure: $V^*_t$ and $V^*_{t+1}$ as the remaining states are updated]

![Example of Value Iteration](http://slidetodoc.com/presentation_image/137b96bf31da7b8768942bfce29d0e05/image-37.jpg)

Example of Value Iteration
[Figure: $V^*_t$ and $V^*_{t+1}$ as the remaining states are updated]

![Example of Value Iteration](http://slidetodoc.com/presentation_image/137b96bf31da7b8768942bfce29d0e05/image-38.jpg)

Example of Value Iteration
[Figure: $V^*_t$ and $V^*_{t+1}$; values of 90 now appear in $V^*_{t+1}$]

![Example of Value Iteration](http://slidetodoc.com/presentation_image/137b96bf31da7b8768942bfce29d0e05/image-39.jpg)

Example of Value Iteration
[Figure: $V^*_t$ and $V^*_{t+1}$; $V^*_{t+1}$ now holds 90, 100, 0 (at G) in the top row and 81, 90, 100 in the bottom row]

![Example of Value Iteration](http://slidetodoc.com/presentation_image/137b96bf31da7b8768942bfce29d0e05/image-40.jpg)

Example of Value Iteration
[Figure: $V^*_t$ and $V^*_{t+1}$ are now identical (90, 100, 0 at G in the top row; 81, 90, 100 in the bottom row), so the values have converged]

Markov Decision Processes
• Motivation
• Markov Decision Processes
• Computing Policies From a Model
• Summary

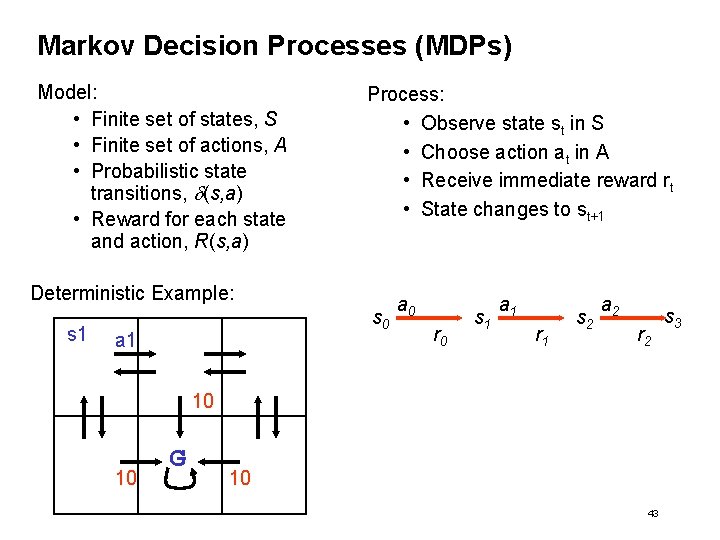

Markov Decision Processes (MDPs)
Model:
• Finite set of states, $S$
• Finite set of actions, $A$
• Probabilistic state transitions, $\delta(s, a)$
• Reward for each state and action, $r(s, a)$
Process:
• Observe state $s_t$ in $S$
• Choose action $a_t$ in $A$
• Receive immediate reward $r_t$
• State changes to $s_{t+1}$
Deterministic Example: [Figure: the trajectory $s_0 \xrightarrow{a_0,\, r_0} s_1 \xrightarrow{a_1,\, r_1} s_2 \xrightarrow{a_2,\, r_2} s_3$, and the grid world with goal state G whose labeled transitions have reward 10]

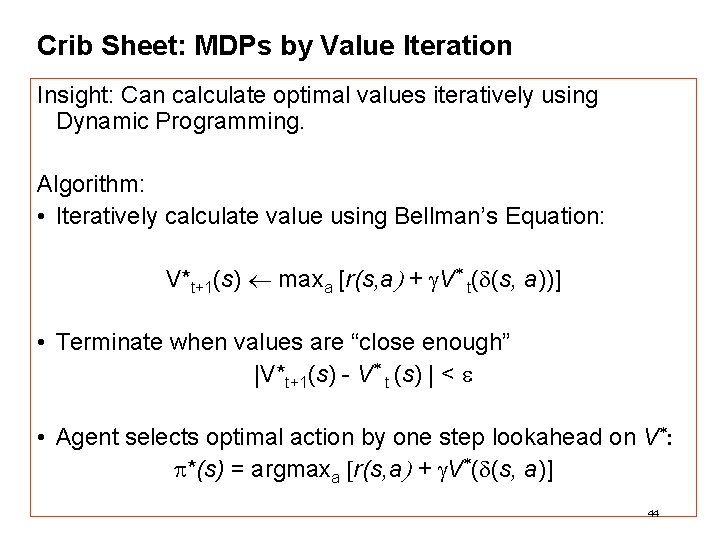

Crib Sheet: MDPs by Value Iteration
Insight: optimal values can be calculated iteratively using dynamic programming.
Algorithm:
• Iteratively calculate values using Bellman’s equation:
  $V^*_{t+1}(s) \leftarrow \max_a [r(s, a) + \gamma V^*_t(\delta(s, a))]$
• Terminate when the values are “close enough”: $|V^*_{t+1}(s) - V^*_t(s)| < \epsilon$ for all $s$
• The agent selects the optimal action by one-step lookahead on $V^*$:
  $\pi^*(s) = \arg\max_a [r(s, a) + \gamma V^*(\delta(s, a))]$

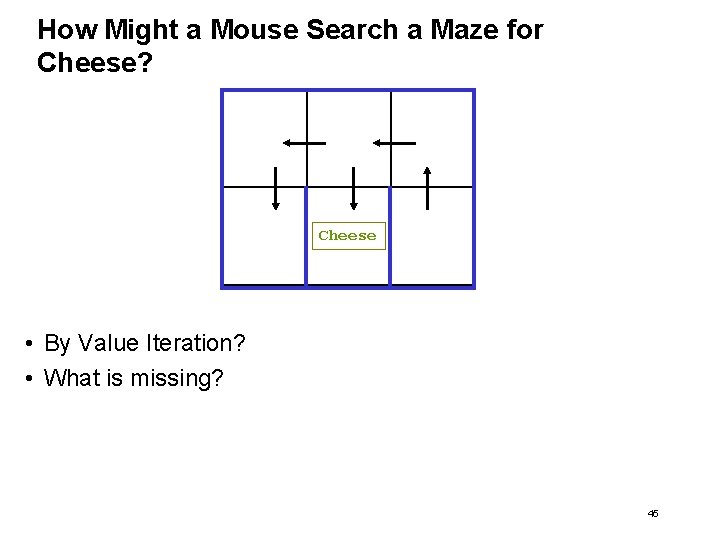

How Might a Mouse Search a Maze for Cheese?
• By Value Iteration?
• What is missing?
