
Markov Models (Basics)

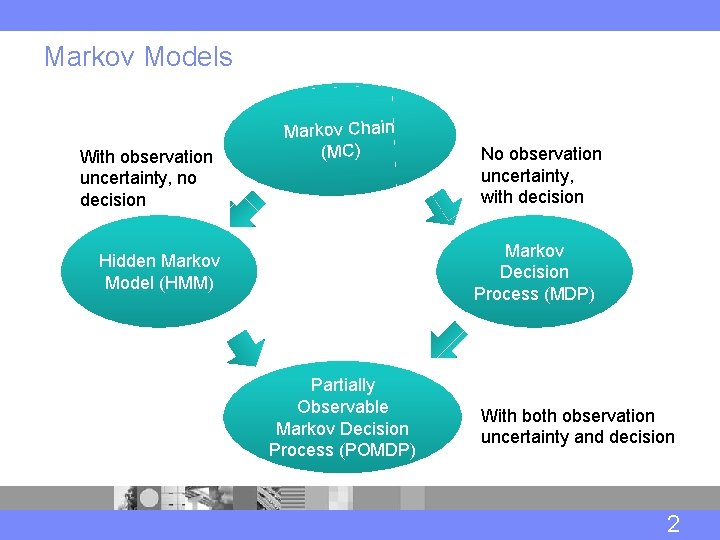

Markov Models
§ Markov Chain (MC): no observation uncertainty, no decision
§ Markov Decision Process (MDP): no observation uncertainty, with decision
§ Hidden Markov Model (HMM): with observation uncertainty, no decision
§ Partially Observable Markov Decision Process (POMDP): with both observation uncertainty and decision

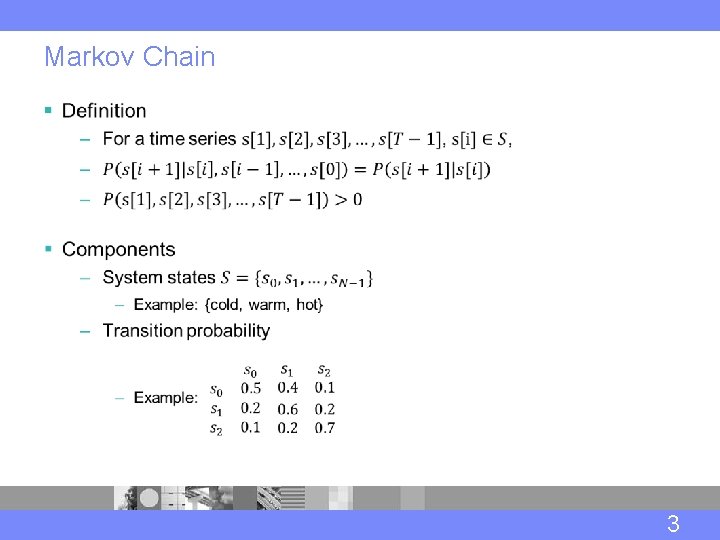

Markov Chain

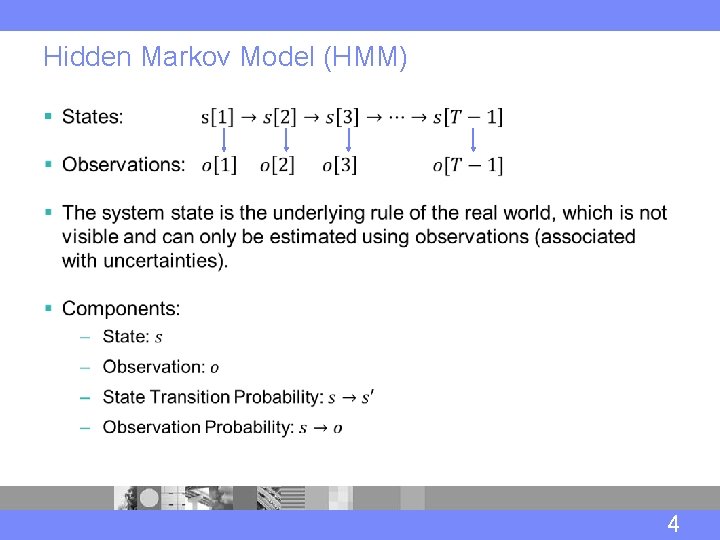

Hidden Markov Model (HMM)

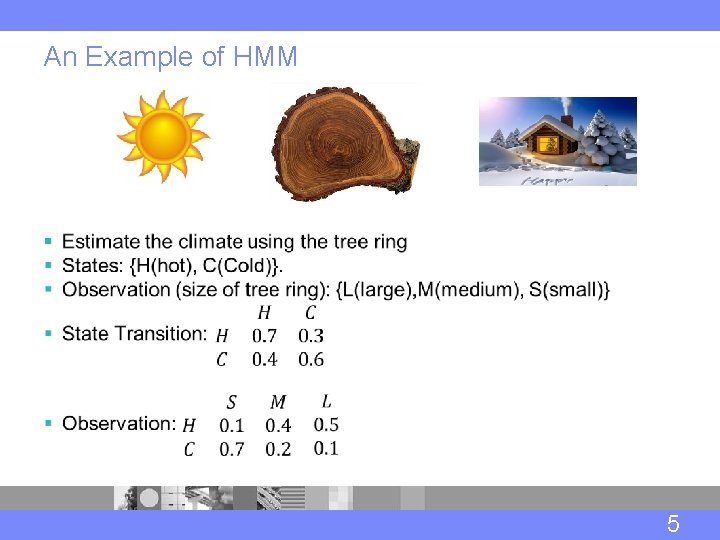

An Example of HMM

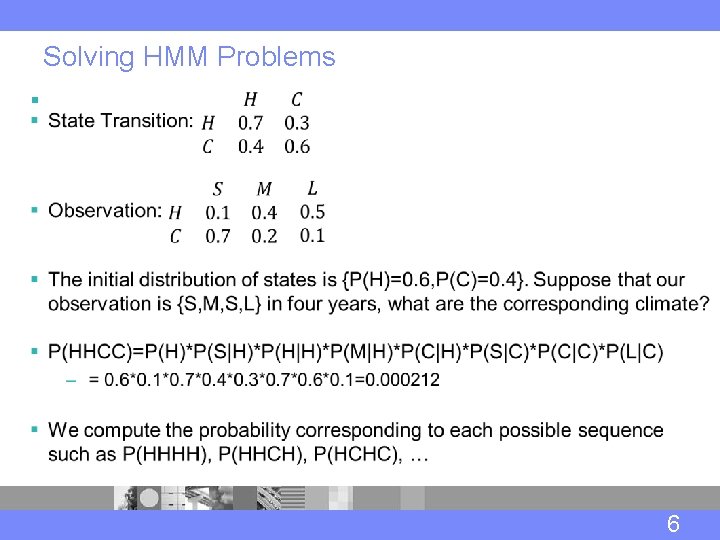

Solving HMM Problems

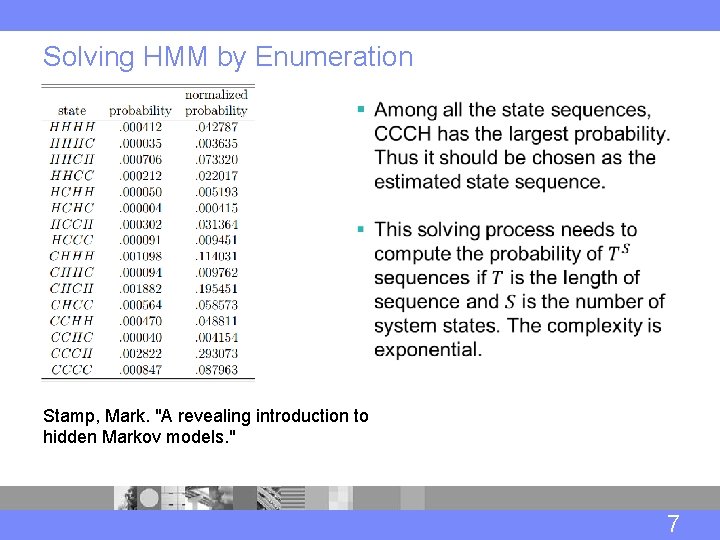

Solving HMM by Enumeration
§ Stamp, Mark. "A revealing introduction to hidden Markov models."

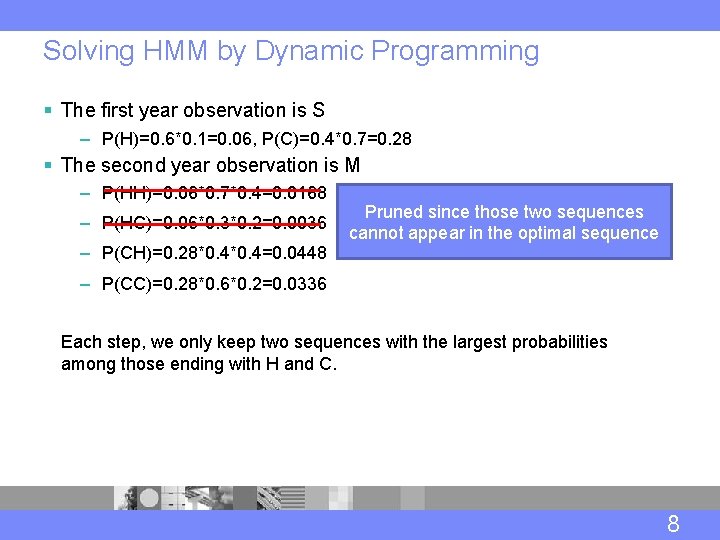

Solving HMM by Dynamic Programming
§ The first-year observation is S
  – P(H) = 0.6*0.1 = 0.06, P(C) = 0.4*0.7 = 0.28
§ The second-year observation is M
  – P(HH) = 0.06*0.7*0.4 = 0.0168
  – P(HC) = 0.06*0.3*0.2 = 0.0036
  – P(CH) = 0.28*0.4*0.4 = 0.0448
  – P(CC) = 0.28*0.6*0.2 = 0.0336
§ HH and HC are pruned, since those two sequences cannot appear in the optimal sequence: CH beats HH among sequences ending with H, and CC beats HC among those ending with C.
§ At each step, we keep only two sequences: the most probable one ending with H and the most probable one ending with C.
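The pruning step above can be sketched in a few lines of Python. This is a minimal illustration, not the slide author's code: the probabilities are read off the arithmetic on this slide (initial 0.6/0.4, transitions 0.7/0.3 and 0.4/0.6, emissions for S and M only), and the names `PI`, `A`, `B`, and `viterbi` are hypothetical.

```python
# Parameters inferred from the worked numbers on the slide (assumption:
# only the S and M emission entries are needed for this two-year example).
STATES = ("H", "C")
PI = {"H": 0.6, "C": 0.4}                                   # initial probabilities
A = {"H": {"H": 0.7, "C": 0.3}, "C": {"H": 0.4, "C": 0.6}}  # A[prev][next]
B = {"H": {"S": 0.1, "M": 0.4}, "C": {"S": 0.7, "M": 0.2}}  # B[state][observation]


def viterbi(observations):
    """Return {state: (probability, path)} for the best path ending in each state."""
    best = {s: (PI[s] * B[s][observations[0]], s) for s in STATES}
    for obs in observations[1:]:
        new_best = {}
        for s in STATES:
            # Keep only the most probable sequence ending in s; the rest are pruned.
            new_best[s] = max(
                (best[p][0] * A[p][s] * B[s][obs], best[p][1] + s) for p in STATES
            )
        best = new_best
    return best


result = viterbi(["S", "M"])
for state, (prob, path) in result.items():
    print(state, path, round(prob, 4))
```

After the second observation, the survivors are CH (ending with H) and CC (ending with C), matching the hand calculation.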

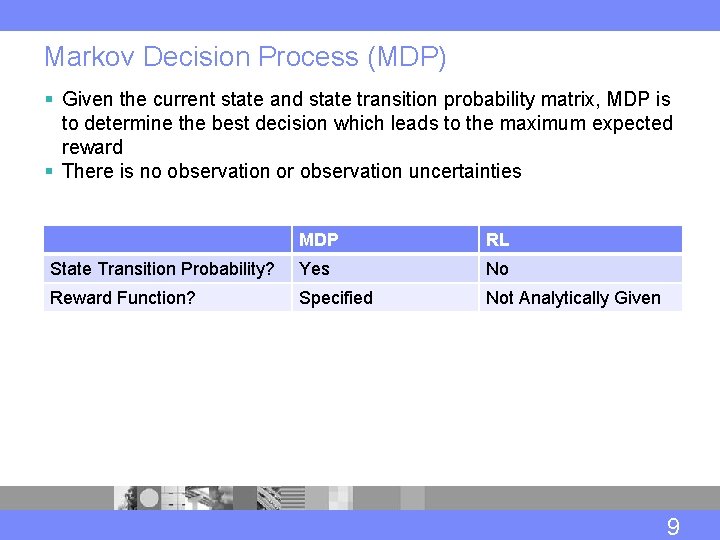

Markov Decision Process (MDP)
§ Given the current state and the state transition probability matrix, an MDP determines the decision that leads to the maximum expected reward
§ There is no observation and hence no observation uncertainty

| | MDP | RL |
|---|---|---|
| State Transition Probability? | Yes | No |
| Reward Function? | Specified | Not Analytically Given |
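As a rough illustration of "the decision that leads to the maximum expected reward", the sketch below runs value iteration on a toy MDP. Everything in it is an assumption made for illustration: the two states, the two actions (`stay`, `switch`), the transition and reward numbers, the discount factor 0.5, and the function name `value_iteration` do not come from the slides.

```python
def value_iteration(T, R, gamma, sweeps=200):
    """T[a][s][s2] = P(s2 | s, a); R[a][s] = immediate reward for action a in state s."""
    n_states = len(R[0])
    n_actions = len(T)

    def q(V, s, a):
        # Expected reward of taking action a in state s, then following V.
        return R[a][s] + gamma * sum(T[a][s][s2] * V[s2] for s2 in range(n_states))

    V = [0.0] * n_states
    for _ in range(sweeps):
        V = [max(q(V, s, a) for a in range(n_actions)) for s in range(n_states)]
    policy = [max(range(n_actions), key=lambda a: q(V, s, a)) for s in range(n_states)]
    return V, policy


# Hypothetical toy problem: action 0 = "stay", action 1 = "switch".
T = [
    [[0.9, 0.1], [0.1, 0.9]],  # stay: mostly remain in the same state
    [[0.1, 0.9], [0.9, 0.1]],  # switch: mostly move to the other state
]
R = [[0.0, 1.0], [0.0, 1.0]]   # being in state 1 pays 1, regardless of action

V, policy = value_iteration(T, R, gamma=0.5)
print(V, policy)
```

With these made-up numbers, the policy switches out of state 0 and stays in state 1, which is what one would expect when only state 1 pays a reward.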

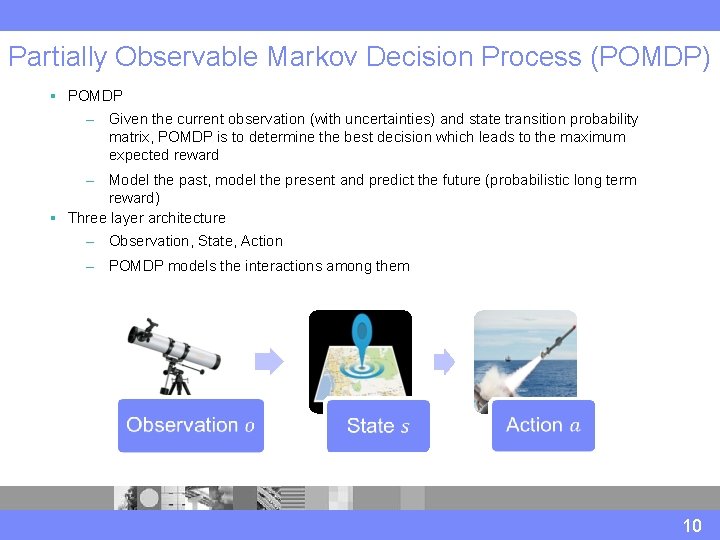

Partially Observable Markov Decision Process (POMDP)
§ POMDP
  – Given the current observation (with uncertainties) and the state transition probability matrix, a POMDP determines the decision that leads to the maximum expected reward
  – Model the past, model the present, and predict the future (probabilistic long-term reward)
§ Three-layer architecture
  – Observation, State, Action
  – POMDP models the interactions among them

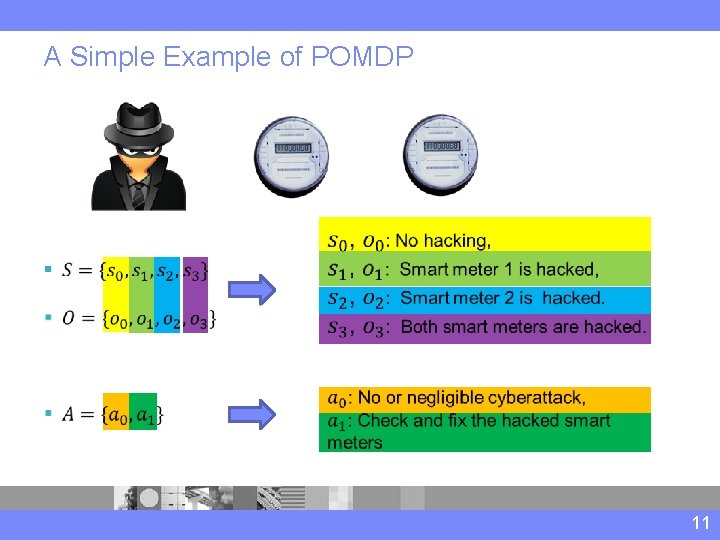

A Simple Example of POMDP

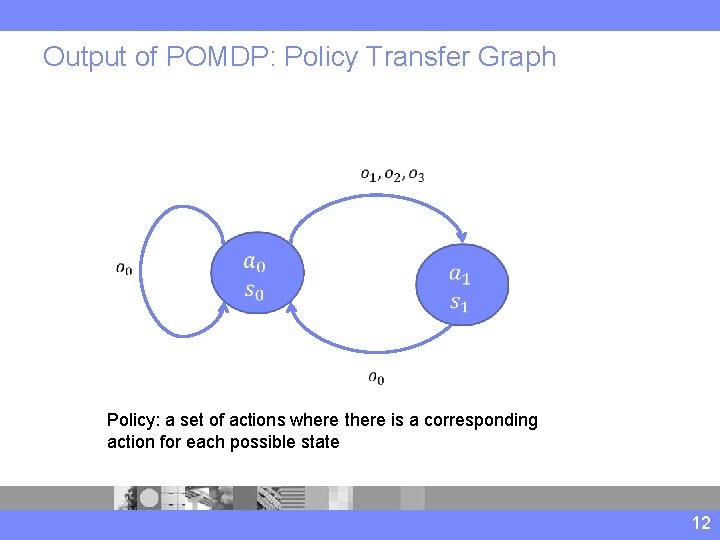

Output of POMDP: Policy Transfer Graph
§ Policy: a set of actions, with one corresponding action for each possible state

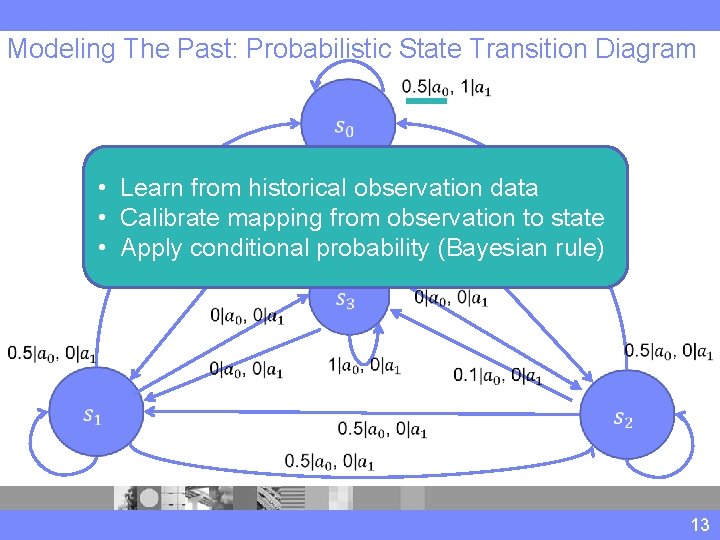

Modeling The Past: Probabilistic State Transition Diagram
• Learn from historical observation data
• Calibrate the mapping from observation to state
• Apply conditional probability (Bayes' rule)

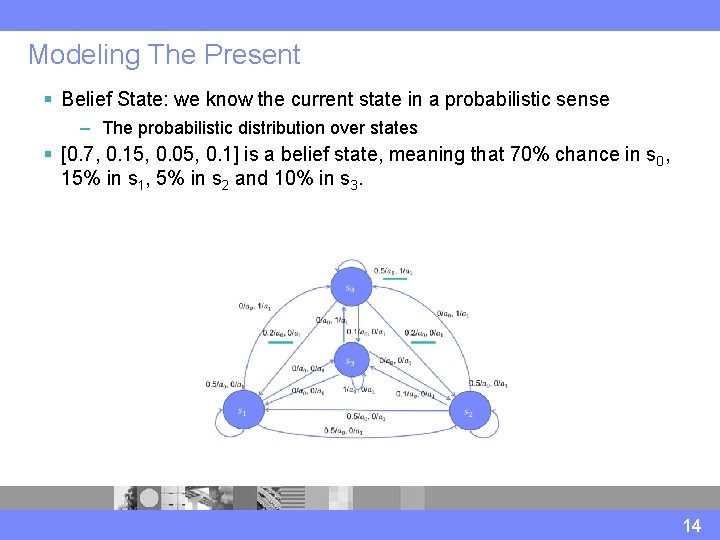

Modeling The Present
§ Belief State: we know the current state only in a probabilistic sense
  – It is the probability distribution over states
§ [0.7, 0.15, 0.05, 0.1] is a belief state, meaning a 70% chance of being in s0, 15% in s1, 5% in s2, and 10% in s3.
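A belief state is just a probability vector, so it can be checked and used like one. The short sketch below uses the belief from this slide; the per-state values in `state_value` and the helper names are hypothetical and only serve to show how an expectation under a belief is taken.

```python
belief = [0.7, 0.15, 0.05, 0.1]  # from the slide: P(s0), P(s1), P(s2), P(s3)


def is_valid_belief(b, tol=1e-9):
    """A belief must be non-negative and sum to 1."""
    return all(p >= 0 for p in b) and abs(sum(b) - 1.0) < tol


def most_likely_state(b):
    """Index of the state with the highest probability."""
    return max(range(len(b)), key=lambda s: b[s])


def expected_value(b, per_state_value):
    """Expectation of a per-state quantity under belief b."""
    return sum(p * v for p, v in zip(b, per_state_value))


# Hypothetical per-state rewards, for illustration only.
state_value = [0.0, -1.0, -5.0, -10.0]
print(is_valid_belief(belief), most_likely_state(belief), expected_value(belief, state_value))
```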

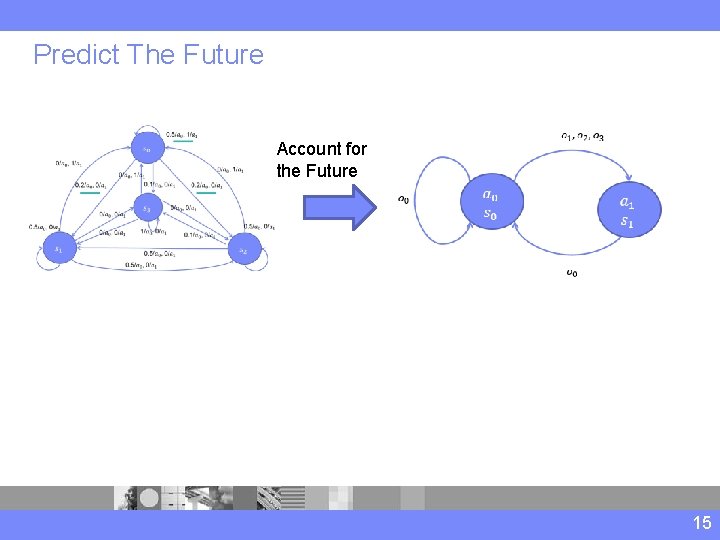

Predict The Future
Account for the Future

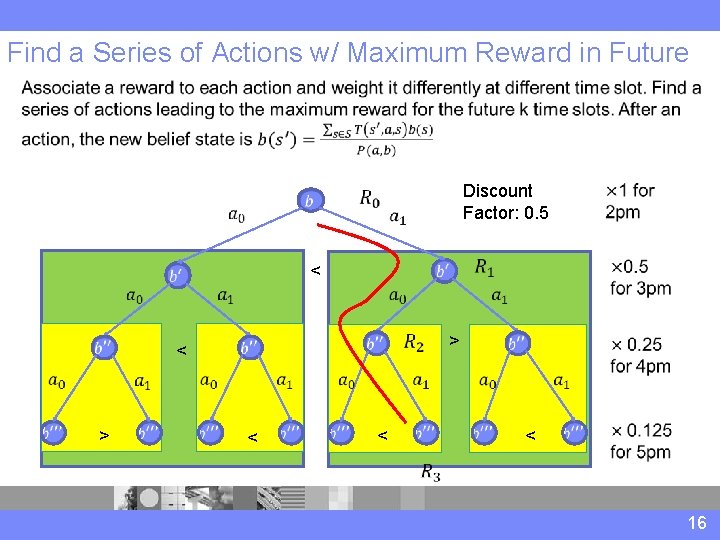

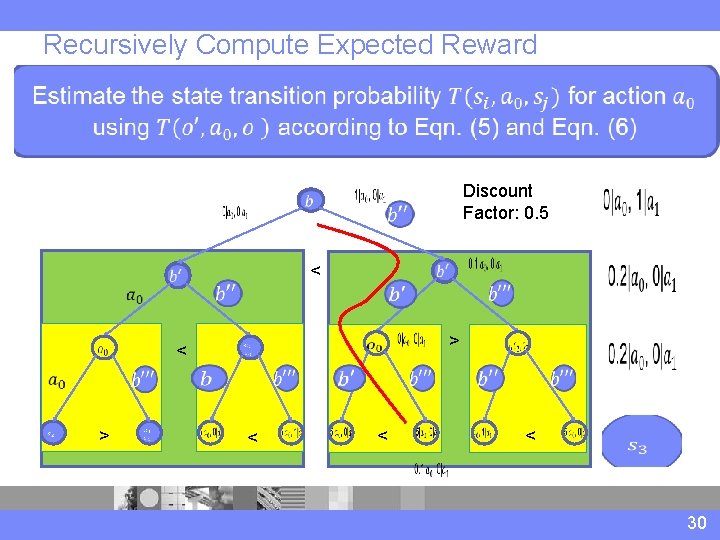

Find a Series of Actions w/ Maximum Reward in Future
Discount Factor: 0.5
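To make the role of the discount factor concrete, the sketch below scores candidate action sequences by their discounted reward and picks the best one. Only the discount factor 0.5 comes from the slide; the candidate sequences, their per-step rewards, and the function name `discounted_return` are assumptions for illustration.

```python
def discounted_return(rewards, gamma):
    """Sum of rewards where the reward at step t is weighted by gamma**t."""
    return sum(r * gamma**t for t, r in enumerate(rewards))


GAMMA = 0.5  # discount factor from the slide

# Hypothetical per-step rewards for two candidate action sequences.
candidates = {
    "a1, a1, a2": [1.0, 2.0, 4.0],
    "a2, a1, a1": [4.0, 0.0, 0.0],
}

scores = {name: discounted_return(r, GAMMA) for name, r in candidates.items()}
best = max(scores, key=scores.get)
print(scores, best)
```

Note how the second sequence wins even though its undiscounted total is smaller: rewards that arrive later count for less.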

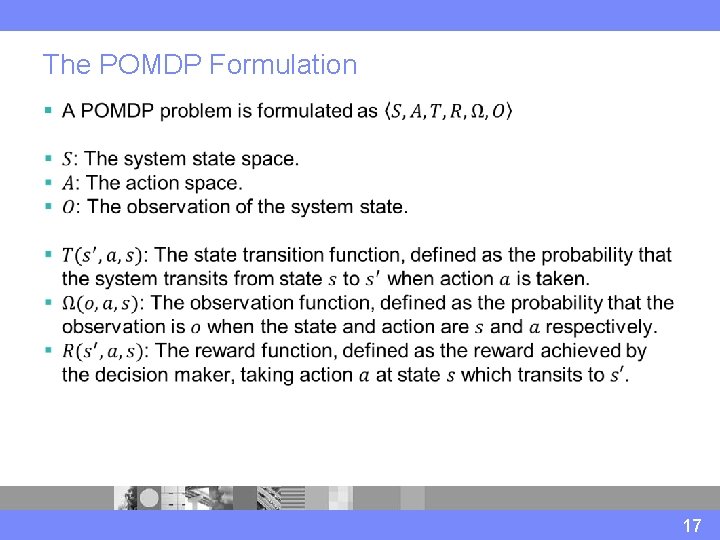

The POMDP Formulation

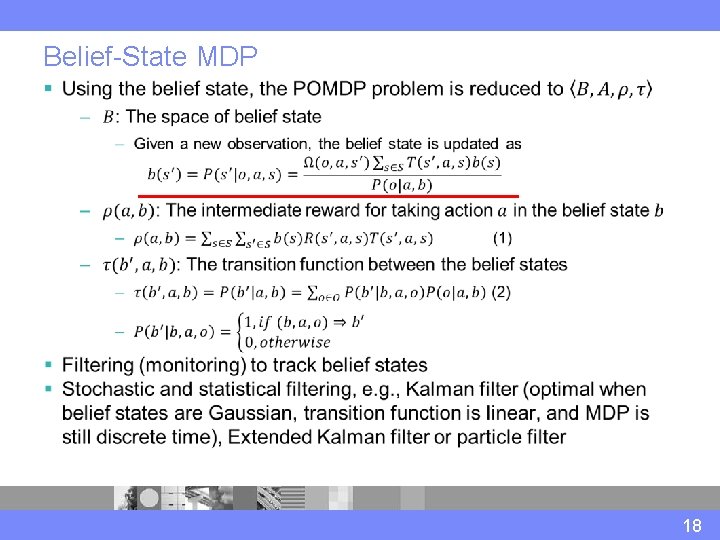

Belief-State MDP

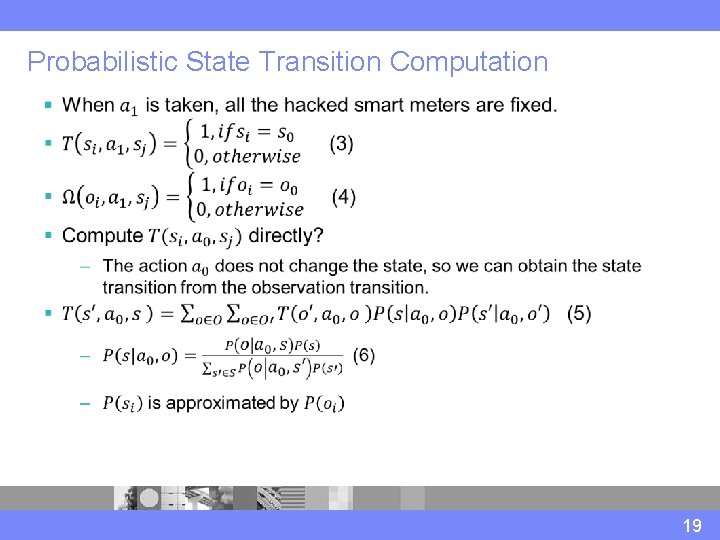

Probabilistic State Transition Computation

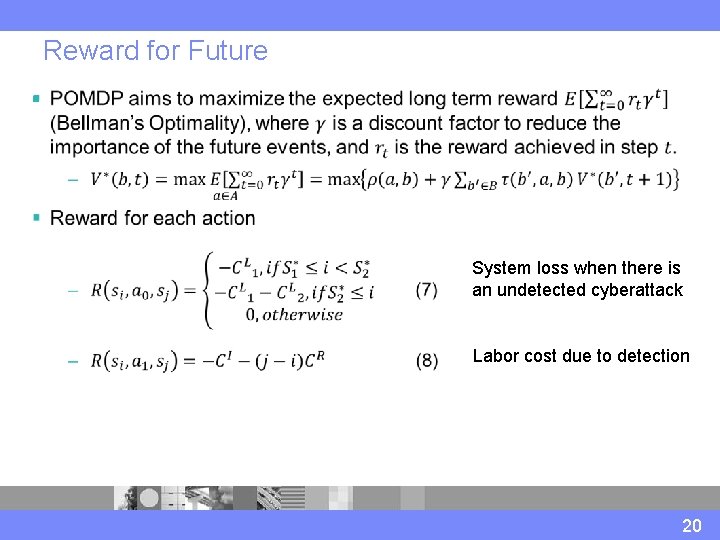

Reward for Future
§ System loss when there is an undetected cyberattack
§ Labor cost due to detection

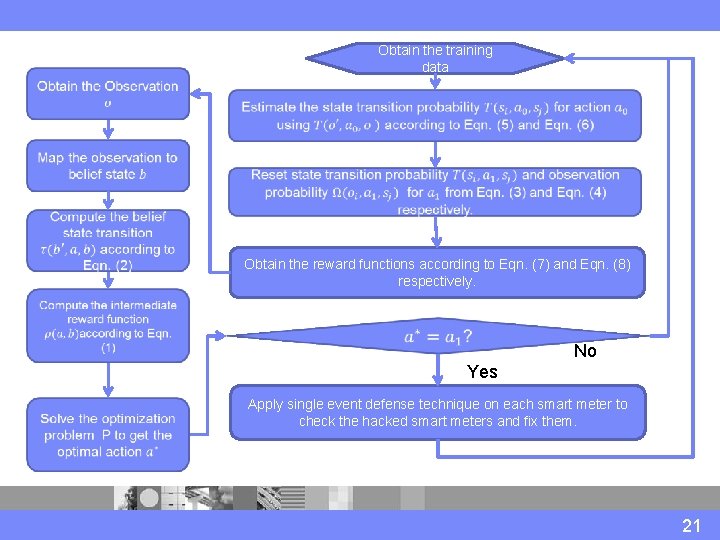

[Flowchart: obtain the training data; obtain the reward functions according to Eqn. (7) and Eqn. (8), respectively; decision branch (Yes/No); apply the single-event defense technique on each smart meter to check for hacked smart meters and fix them.]

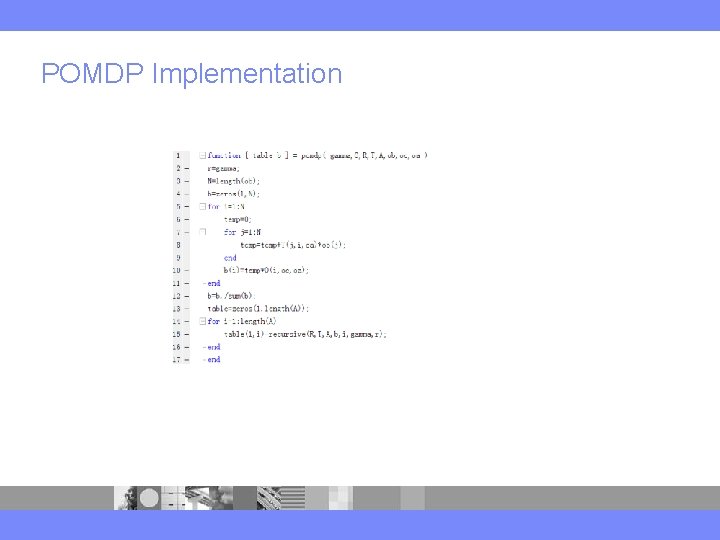

POMDP Implementation

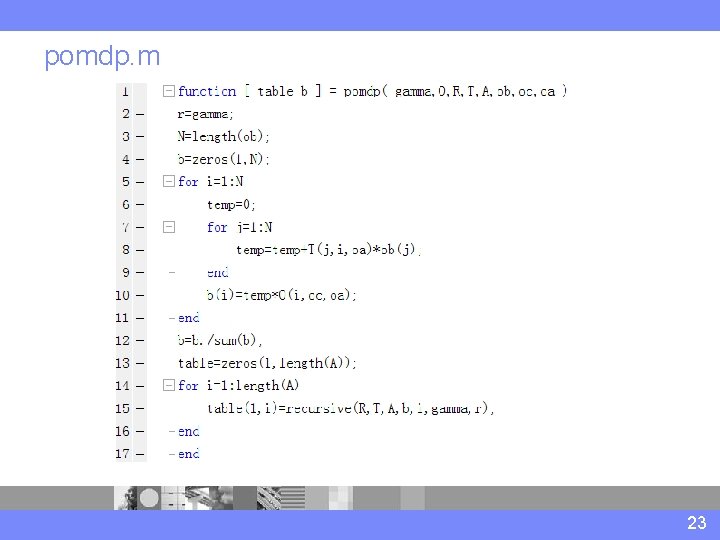

pomdp.m

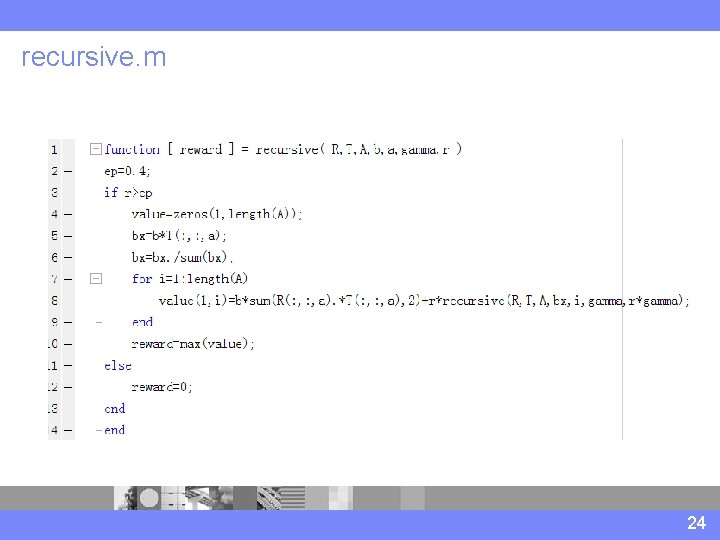

recursive.m

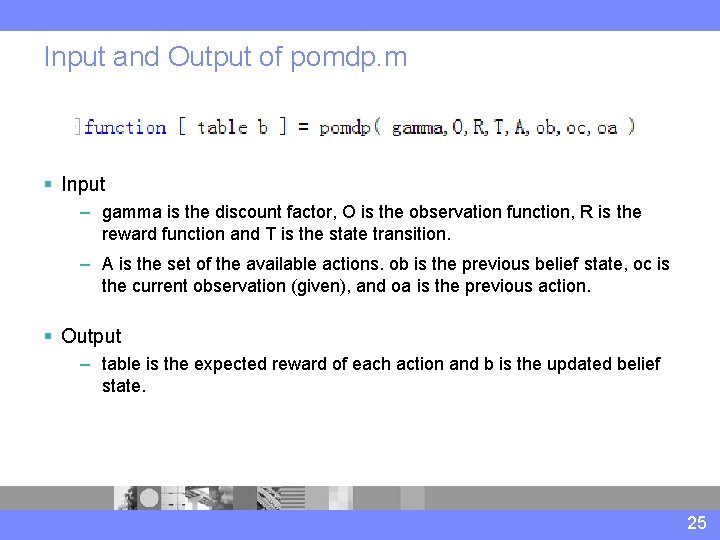

Input and Output of pomdp.m
§ Input
  – gamma is the discount factor, O is the observation function, R is the reward function, and T is the state transition function.
  – A is the set of available actions, ob is the previous belief state, oc is the current observation (given), and oa is the previous action.
§ Output
  – table is the expected reward of each action, and b is the updated belief state.

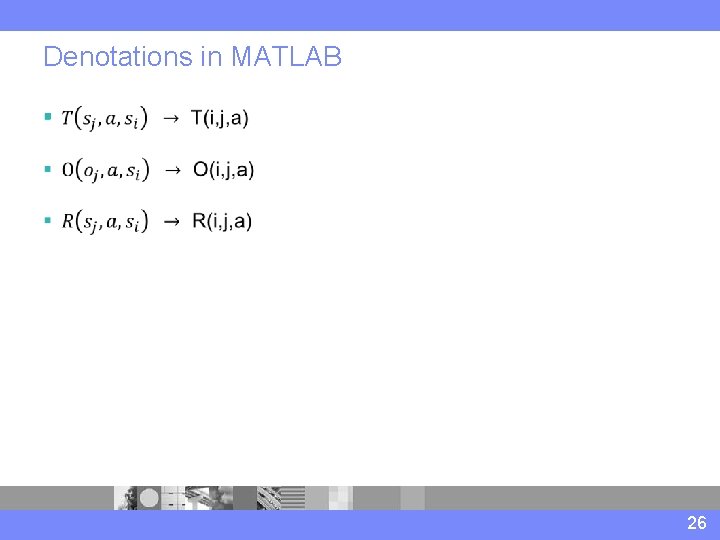

Notation in MATLAB

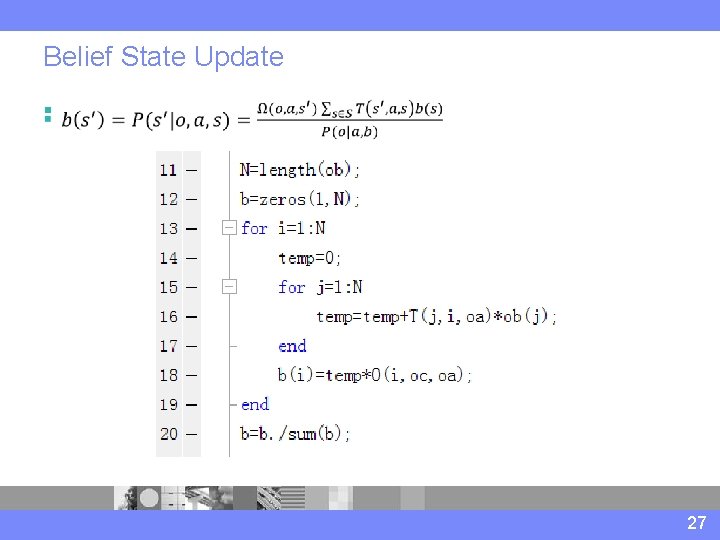

Belief State Update

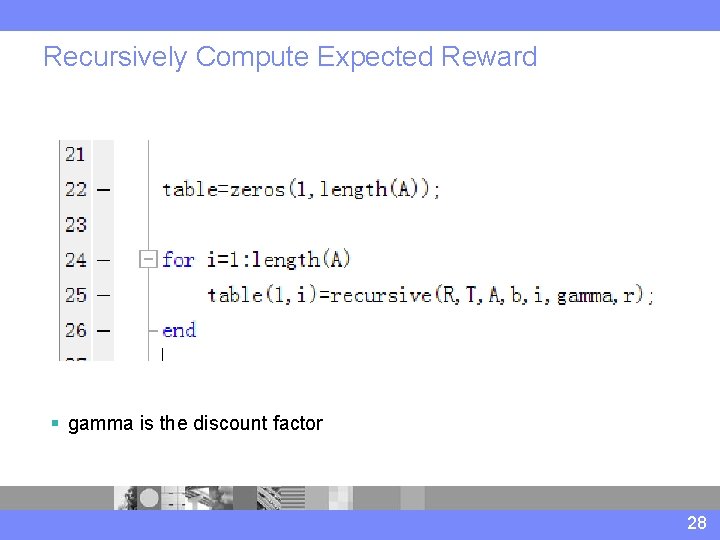
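The formula on this slide is only available as an image, so the sketch below uses the standard Bayesian belief update, b'(s') ∝ O(o | s', a) · Σ_s T(s' | s, a) · b(s), as an assumption about what it shows. The function name `update_belief`, the array layouts, and the example numbers are hypothetical and are not taken from pomdp.m.

```python
def update_belief(b, T_a, O_a, obs):
    """Standard Bayes-filter belief update (assumed form).

    b[s]        : previous belief
    T_a[s][s2]  : P(s2 | s, a) for the action a just taken
    O_a[s2][o]  : P(o | s2, a) for that same action
    obs         : index of the observation actually received
    """
    n = len(b)
    # Prediction: push the old belief through the transition model.
    predicted = [sum(b[s] * T_a[s][s2] for s in range(n)) for s2 in range(n)]
    # Correction: weight each predicted state by how well it explains obs.
    unnormalized = [O_a[s2][obs] * predicted[s2] for s2 in range(n)]
    total = sum(unnormalized)
    if total == 0:
        raise ValueError("observation has zero probability under this belief")
    return [u / total for u in unnormalized]


# Hypothetical two-state example: the state does not change, sensor is noisy.
T_a = [[1.0, 0.0], [0.0, 1.0]]
O_a = [[0.8, 0.2], [0.3, 0.7]]
new_belief = update_belief([0.5, 0.5], T_a, O_a, obs=0)
print(new_belief)
```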

Recursively Compute Expected Reward
§ gamma is the discount factor

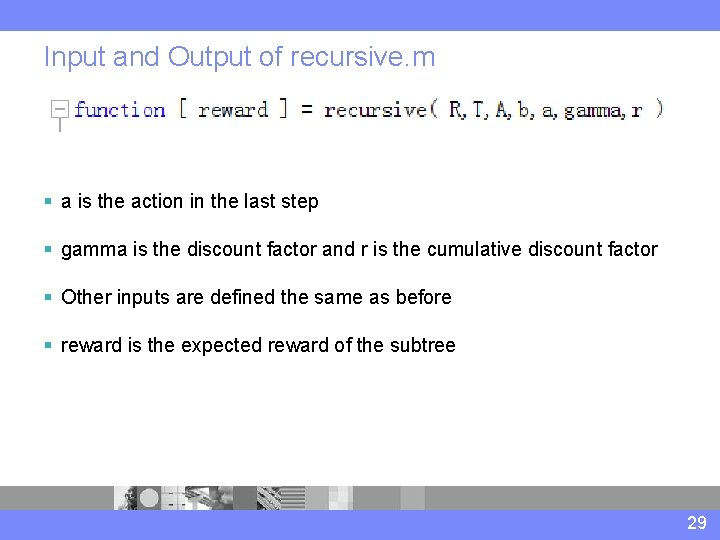

Input and Output of recursive.m
§ a is the action in the last step
§ gamma is the discount factor and r is the cumulative discount factor
§ Other inputs are defined the same as before
§ reward is the expected reward of the subtree

Recursively Compute Expected Reward
Discount Factor: 0.5

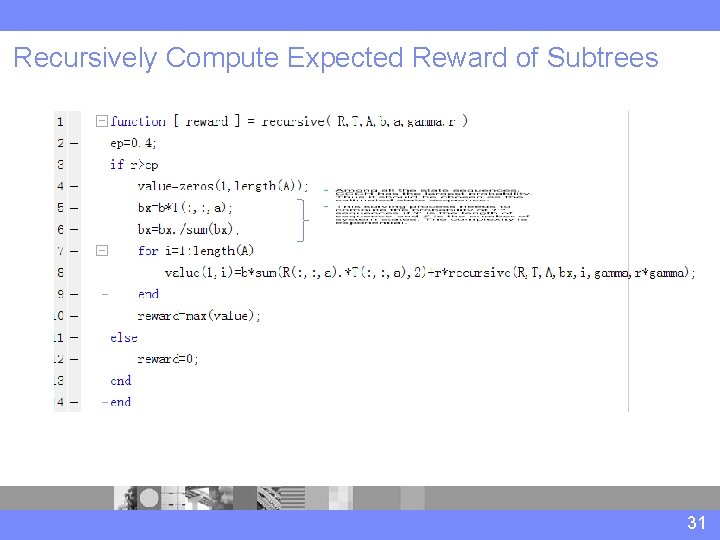

Recursively Compute Expected Reward of Subtrees

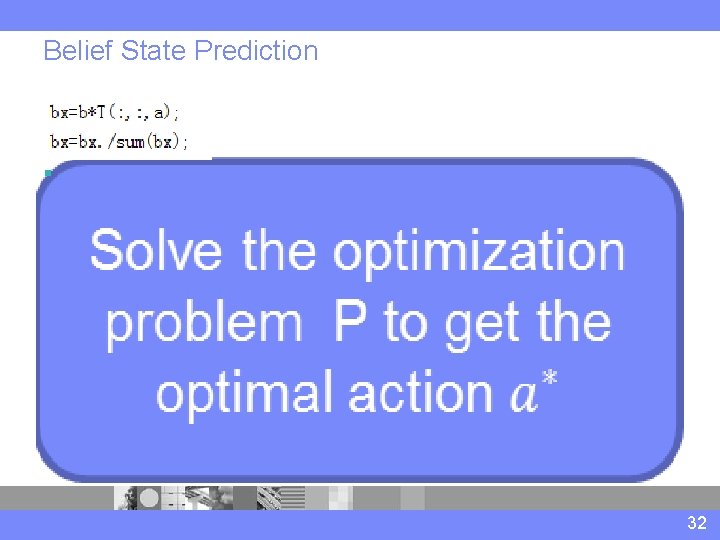

Belief State Prediction

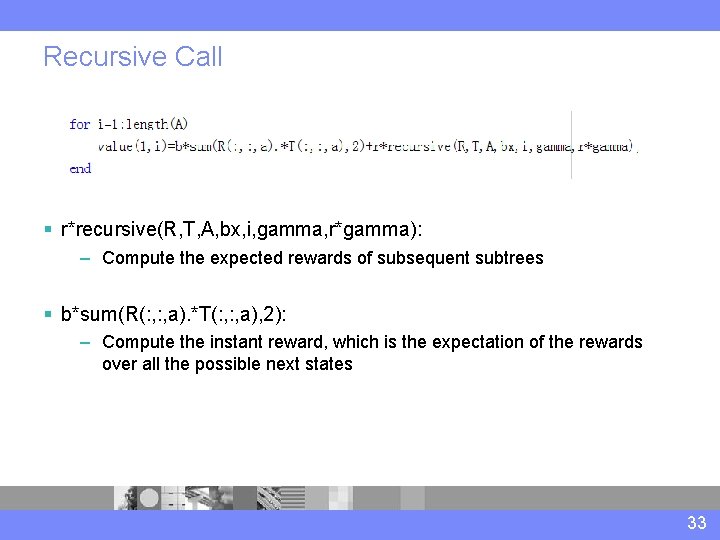

Recursive Call
§ r*recursive(R, T, A, bx, i, gamma, r*gamma):
  – Compute the expected rewards of subsequent subtrees
§ b*sum(R(:, a).*T(:, a), 2):
  – Compute the instant reward, which is the expectation of the rewards over all possible next states
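The two terms above (instant reward plus discounted reward of the subtrees) can be sketched in Python as follows. This is not a port of recursive.m, whose source is only shown as an image: the explicit `depth` cutoff, the `R[a][s][s2]` / `T[a][s][s2]` layouts, taking the maximum over the next action, and the example numbers are all assumptions made for illustration.

```python
def expected_reward(R, T, actions, b, a, gamma, depth):
    """Expected discounted reward of taking action a from belief b.

    R[a][s][s2] : reward for moving from s to s2 under action a (assumed layout)
    T[a][s][s2] : P(s2 | s, a)
    depth       : hypothetical cutoff on how many further steps to look ahead
    """
    n = len(b)
    # Instant reward: expectation over current states (b) and next states (T).
    instant = sum(
        b[s] * sum(R[a][s][s2] * T[a][s][s2] for s2 in range(n)) for s in range(n)
    )
    if depth == 0:
        return instant
    # Belief state prediction: where action a is expected to leave the system.
    bx = [sum(b[s] * T[a][s][s2] for s in range(n)) for s2 in range(n)]
    # Best subtree among the follow-up actions, discounted by gamma.
    future = max(expected_reward(R, T, actions, bx, i, gamma, depth - 1) for i in actions)
    return instant + gamma * future


# Hypothetical two-state example: action 0 stays, action 1 switches state;
# landing in state 1 pays a reward of 1.
T = [[[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]]]
R = [[[0.0, 1.0], [0.0, 1.0]], [[0.0, 1.0], [0.0, 1.0]]]
value = expected_reward(R, T, actions=[0, 1], b=[1.0, 0.0], a=1, gamma=0.5, depth=1)
print(value)
```

The number of recursive calls grows as the number of actions raised to the lookahead depth, which is one reason the later slides turn to approximation.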

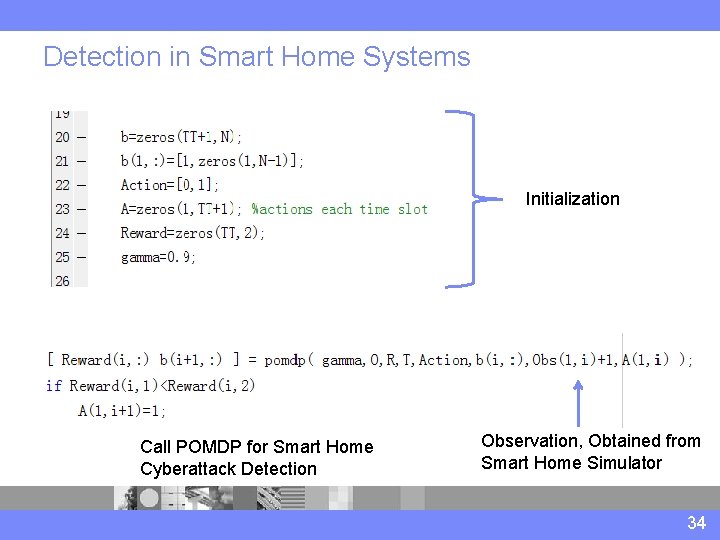

Detection in Smart Home Systems
§ Call POMDP for smart home cyberattack detection
§ Initialization
§ Observation, obtained from the smart home simulator

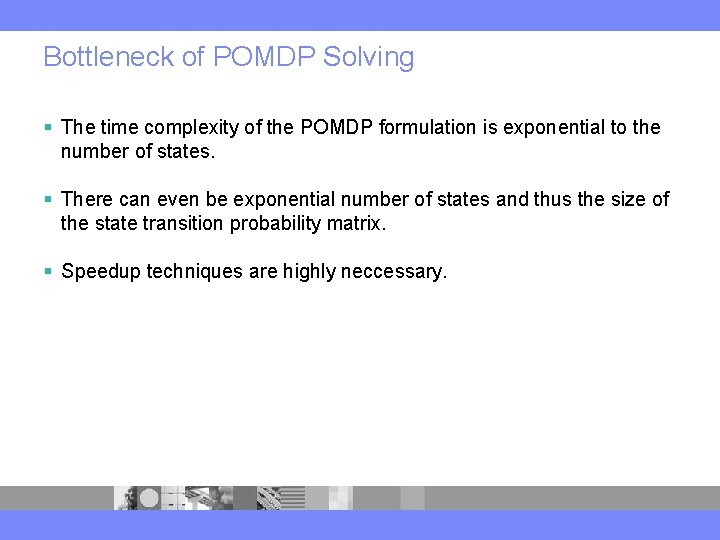

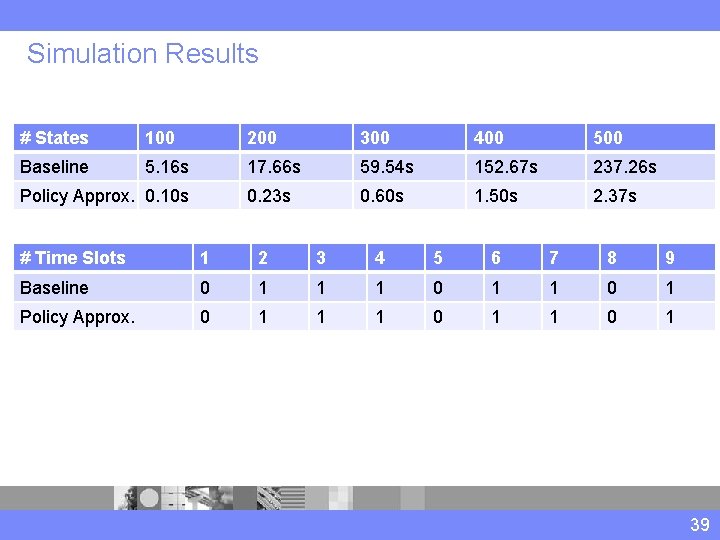

Bottleneck of POMDP Solving
§ The time complexity of the POMDP formulation is exponential in the number of states.
§ The number of states can itself be exponential, and so can the size of the state transition probability matrix.
§ Speedup techniques are therefore necessary.

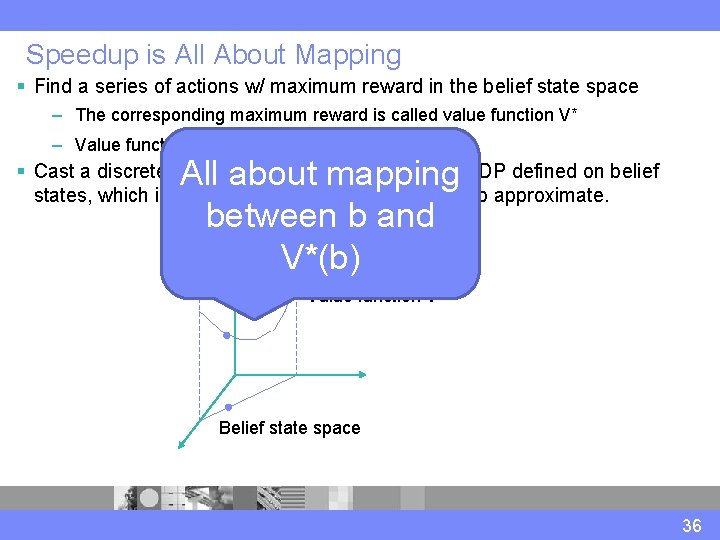

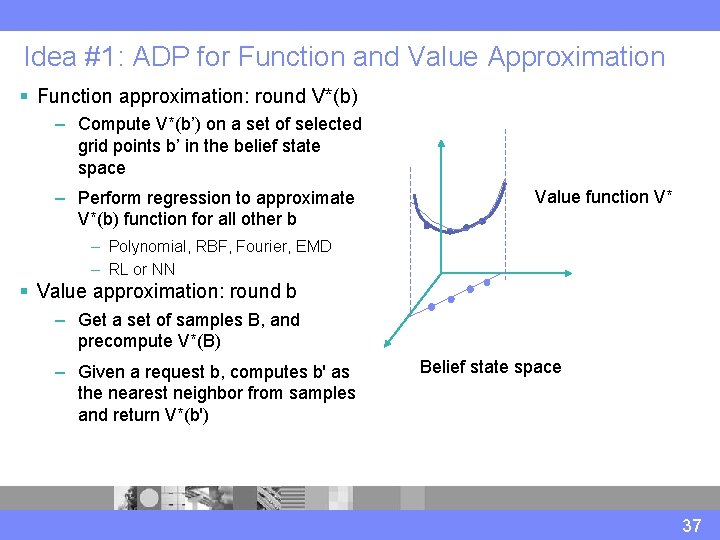

Speedup is All About Mapping
§ Find a series of actions w/ maximum reward in the belief state space
  – The corresponding maximum reward is called the value function V*
  – The value function is piecewise linear and convex. It is all about the mapping between b and V*(b)
§ Cast a discrete POMDP with uncertainty into an MDP defined on belief states, which is continuous and potentially easier to approximate.

[Figure: value function V* over the belief state space]

Idea #1: ADP for Function and Value Approximation
§ Function approximation: round V*(b)
  – Compute V*(b') on a set of selected grid points b' in the belief state space
  – Perform regression to approximate the V*(b) function for all other b
  – Polynomial, RBF, Fourier, EMD
  – RL or NN
§ Value approximation: round b
  – Get a set of samples B, and precompute V*(B)
  – Given a request b, compute b' as the nearest neighbor among the samples and return V*(b')

[Figure: value function V* over the belief state space]
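The "value approximation: round b" idea can be sketched as a nearest-neighbor lookup. The sample beliefs, their precomputed values, the use of Euclidean distance, and the function name `approximate_value` are assumptions for illustration; the slides do not say which distance or sampling scheme is used.

```python
import math


def approximate_value(b, samples, values):
    """Return (b', V*(b')) where b' is the sampled belief closest to b.

    Euclidean distance is an assumption; any metric on beliefs could be used.
    """
    idx = min(range(len(samples)), key=lambda k: math.dist(b, samples[k]))
    return samples[idx], values[idx]


# Hypothetical sampled beliefs B and their precomputed values V*(B).
samples = [(1.0, 0.0), (0.5, 0.5), (0.0, 1.0)]
values = [2.0, 1.0, 3.0]

nearest, v = approximate_value((0.9, 0.1), samples, values)
print(nearest, v)
```

The lookup replaces an exponential-time computation with a scan over the samples, at the cost of an approximation error that depends on how densely the belief space is sampled.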

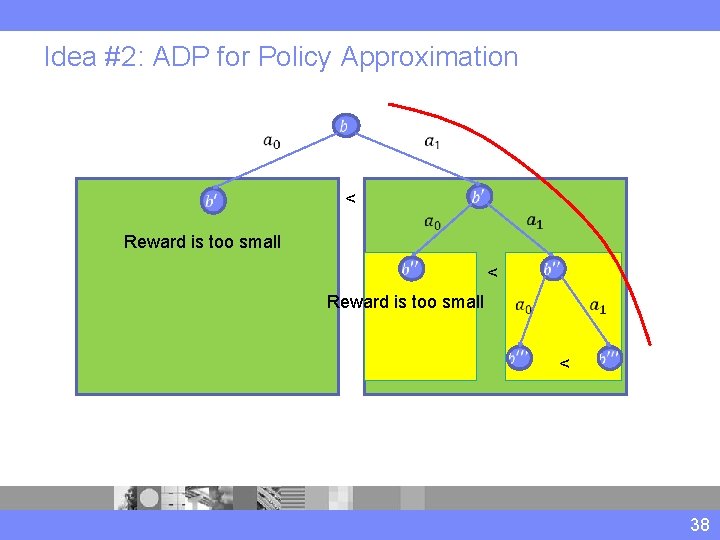

Idea #2: ADP for Policy Approximation

[Figure annotation, appearing on two branches: "Reward is too small"]

Simulation Results

| # States | 100 | 200 | 300 | 400 | 500 |
|---|---|---|---|---|---|
| Baseline | 5.16 s | 17.66 s | 59.54 s | 152.67 s | 237.26 s |
| Policy Approx. | 0.10 s | 0.23 s | 0.60 s | 1.50 s | 2.37 s |

# Time Slots: 1 2 3 4 5 6 7 8 9
Baseline: 0 1 1 1 0 1
Policy Approx.: 0 1 1 1 0 1
