Approximate Inference: Particle-Based Methods
Eran Segal, Weizmann Institute


Inference Complexity Summary
- NP-hard:
  - Exact inference
  - Approximate inference
    - with relative error
    - with absolute error < 0.5 (given evidence)
- Hopeless?
  - No. We will see many network structures that have provably efficient algorithms, and we will see cases where approximate inference works efficiently with high accuracy.

Approximate Inference
- Particle-based methods
  - Create instances that represent part of the probability mass
    - Random sampling
    - Deterministically search for high-probability assignments
- Global methods
  - Approximate the distribution in its entirety
    - Use exact inference on a simpler (but close) network
    - Perform inference in the original network but approximate some steps of the process (e.g., ignore certain computations or approximate some intermediate results)

Particle-Based Methods
- Particle generation process
  - Generate particles deterministically
  - Generate particles by sampling
- Particle definition
  - Full particles: complete assignments to all variables
  - Distributional particles: assignments to a subset of the variables
- General framework
  - Generate samples x[1], ..., x[M]
  - Estimate a function f by
    $$\hat{E}_{\mathcal{D}}(f) = \frac{1}{M}\sum_{m=1}^{M} f(x[m])$$

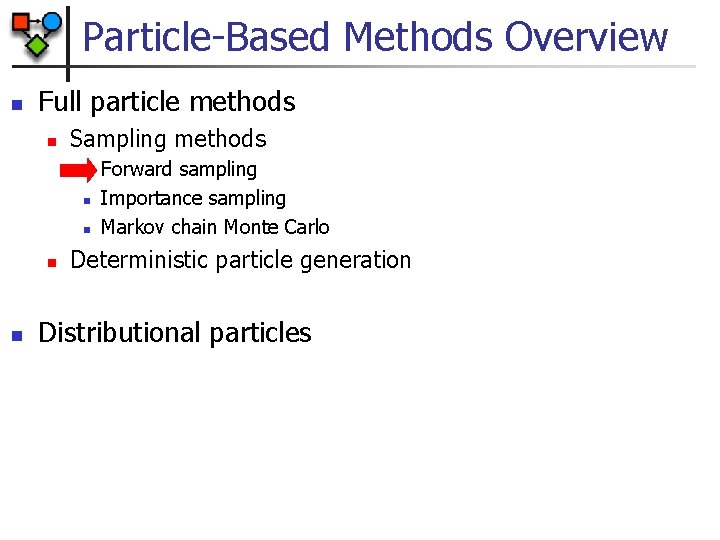

Particle-Based Methods Overview
- Full particle methods
  - Sampling methods
    - Forward sampling
    - Importance sampling
    - Markov chain Monte Carlo
  - Deterministic particle generation
- Distributional particles

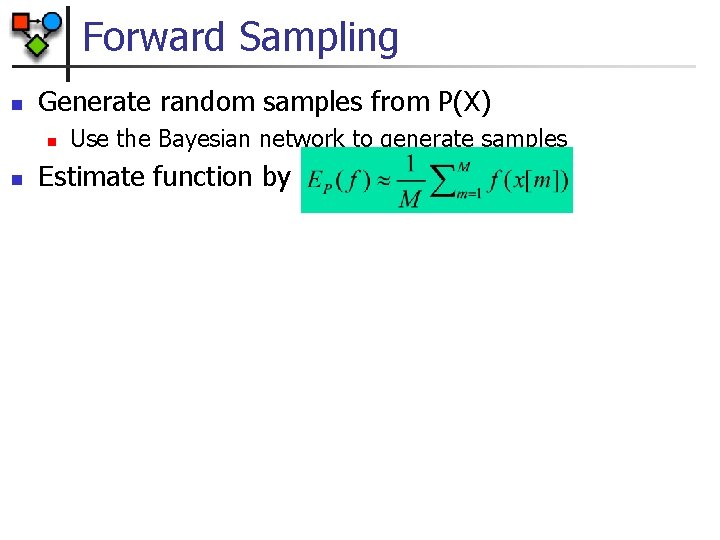

Forward Sampling
- Generate random samples from P(X)
  - Use the Bayesian network to generate samples
- Estimate a function f by
  $$\hat{E}_{\mathcal{D}}(f) = \frac{1}{M}\sum_{m=1}^{M} f(x[m])$$

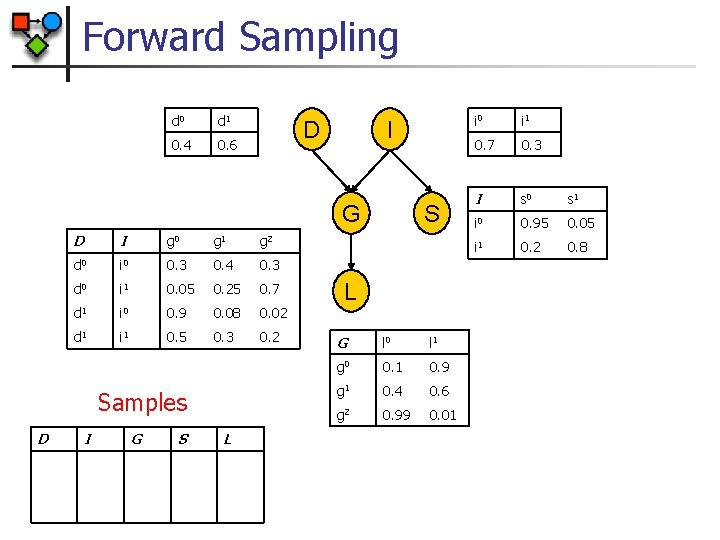

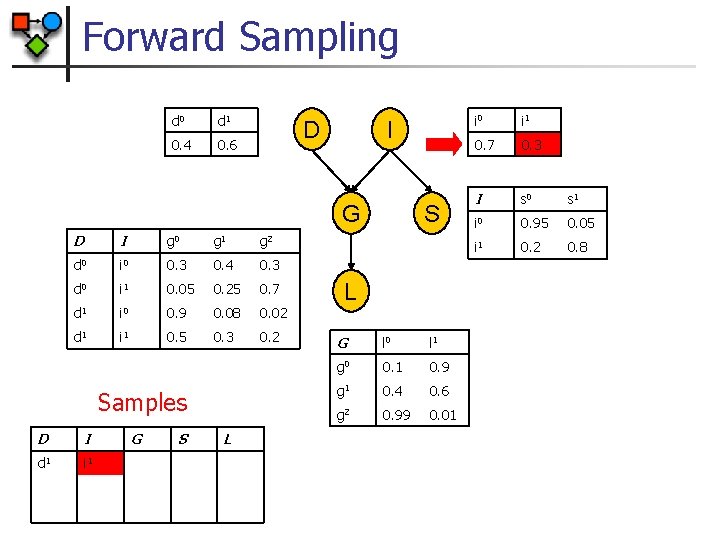

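Forward Sampling
Network (from the CPDs): D → G ← I, I → S, G → L

P(D):

| d0 | d1 |
|----|----|
| 0.4 | 0.6 |

P(I):

| i0 | i1 |
|----|----|
| 0.7 | 0.3 |

P(G | D, I):

| D | I | g0 | g1 | g2 |
|----|----|----|----|----|
| d0 | i0 | 0.3 | 0.4 | 0.3 |
| d0 | i1 | 0.05 | 0.25 | 0.7 |
| d1 | i0 | 0.9 | 0.08 | 0.02 |
| d1 | i1 | 0.5 | 0.3 | 0.2 |

P(S | I):

| I | s0 | s1 |
|----|----|----|
| i0 | 0.95 | 0.05 |
| i1 | 0.2 | 0.8 |

P(L | G):

| G | l0 | l1 |
|----|----|----|
| g0 | 0.1 | 0.9 |
| g1 | 0.4 | 0.6 |
| g2 | 0.99 | 0.01 |

Samples (D, I, G, S, L): none yet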

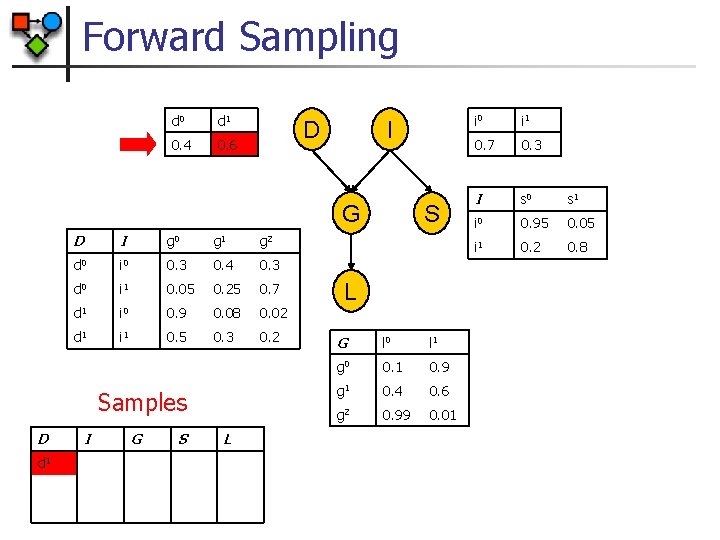

Forward Sampling, step 1: sample D from P(D); result d1. Sample so far (D, I, G, S, L): d1

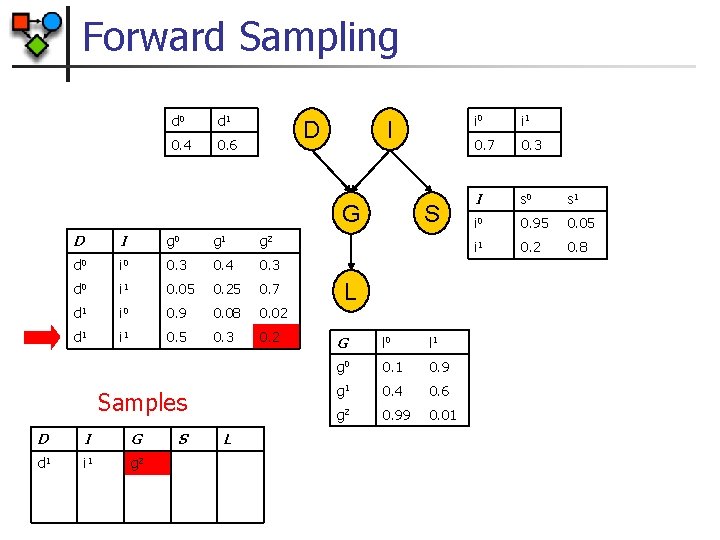

Forward Sampling, step 2: sample I from P(I); result i1. Sample so far (D, I, G, S, L): d1, i1

Forward Sampling, step 3: sample G from P(G | d1, i1); result g2. Sample so far (D, I, G, S, L): d1, i1, g2

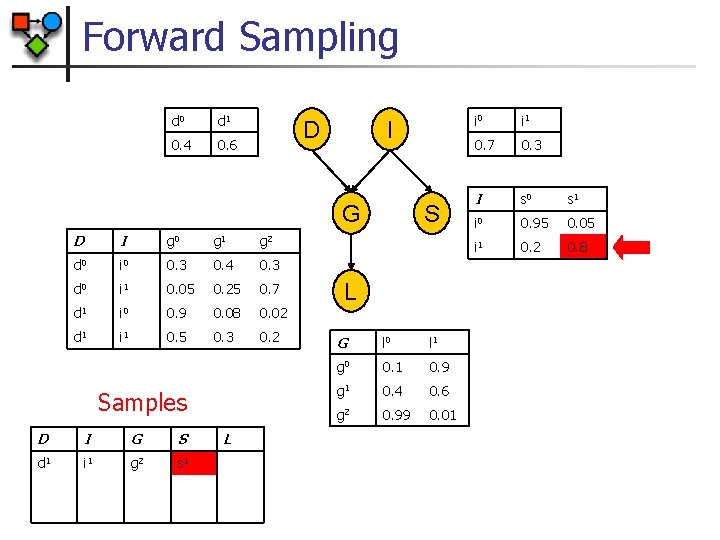

Forward Sampling, step 4: sample S from P(S | i1); result s1. Sample so far (D, I, G, S, L): d1, i1, g2, s1

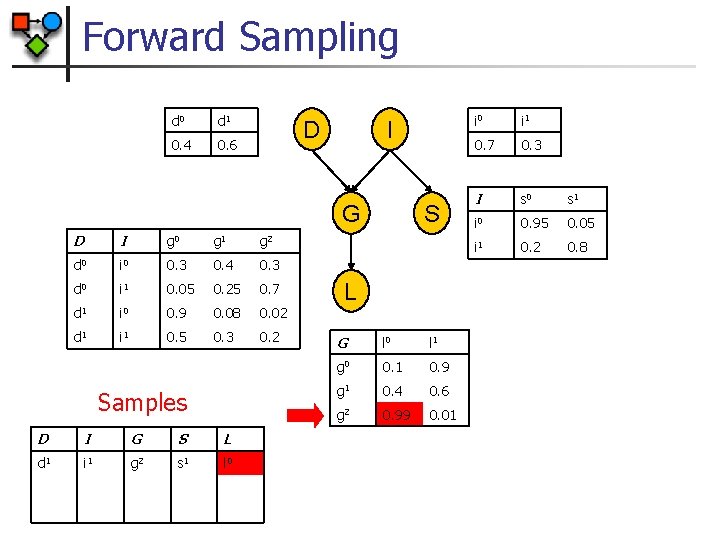

Forward Sampling, step 5: sample L from P(L | g2); result l0. Final sample (D, I, G, S, L): d1, i1, g2, s1, l0

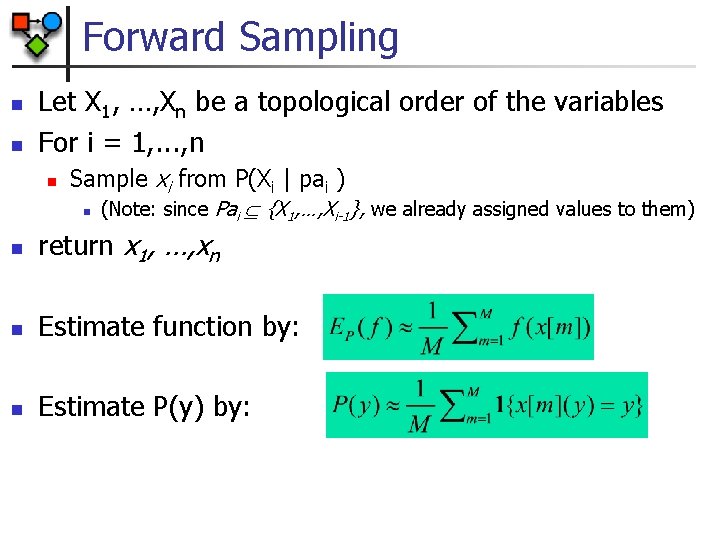

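Forward Sampling
- Let X1, …, Xn be a topological order of the variables
- For i = 1, ..., n
  - Sample xi from P(Xi | pai)
  - (Note: since Pai ⊆ {X1, …, Xi−1}, we have already assigned values to them)
- Return x1, …, xn
- Estimate a function f by:
  $$\hat{E}_{\mathcal{D}}(f) = \frac{1}{M}\sum_{m=1}^{M} f(x[m])$$
- Estimate P(y) by:
  $$\hat{P}_{\mathcal{D}}(y) = \frac{1}{M}\sum_{m=1}^{M} \mathbb{1}\{y[m] = y\}$$
  where y[m] is the assignment to Y in sample x[m]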

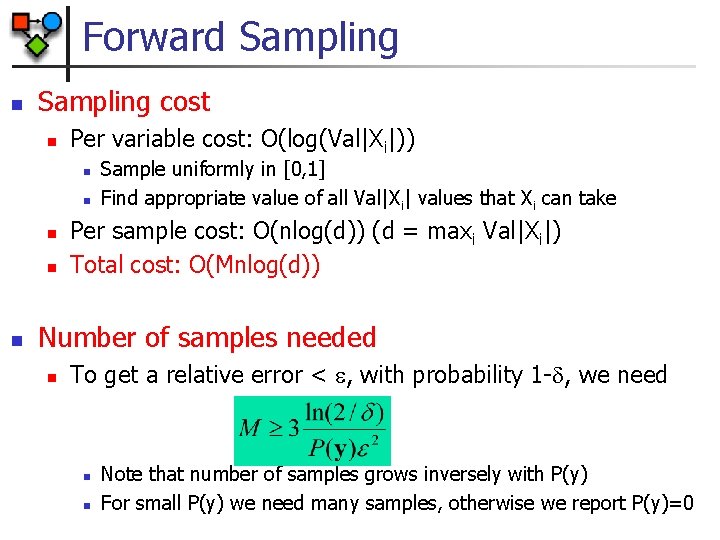
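The procedure above can be sketched in Python on the student network from the example slides (CPD values copied from those tables). The dictionary layout and the names `NETWORK`, `forward_sample`, and `estimate_prob` are our own illustrative choices, not part of the slides.

```python
import random

# Student network from the example slides: D -> G <- I, I -> S, G -> L.
# Layout (an assumption of this sketch): variable -> (parents, CPD), where the CPD
# maps a tuple of parent values to {value: probability}.
# Dict insertion order D, I, G, S, L is a topological order.
NETWORK = {
    "D": ((), {(): {"d0": 0.4, "d1": 0.6}}),
    "I": ((), {(): {"i0": 0.7, "i1": 0.3}}),
    "G": (("D", "I"), {
        ("d0", "i0"): {"g0": 0.3, "g1": 0.4, "g2": 0.3},
        ("d0", "i1"): {"g0": 0.05, "g1": 0.25, "g2": 0.7},
        ("d1", "i0"): {"g0": 0.9, "g1": 0.08, "g2": 0.02},
        ("d1", "i1"): {"g0": 0.5, "g1": 0.3, "g2": 0.2},
    }),
    "S": (("I",), {("i0",): {"s0": 0.95, "s1": 0.05},
                   ("i1",): {"s0": 0.2, "s1": 0.8}}),
    "L": (("G",), {("g0",): {"l0": 0.1, "l1": 0.9},
                   ("g1",): {"l0": 0.4, "l1": 0.6},
                   ("g2",): {"l0": 0.99, "l1": 0.01}}),
}


def forward_sample(rng):
    """Draw one full particle: sample each X_i from P(X_i | pa_i) in topological order."""
    x = {}
    for var, (parents, cpd) in NETWORK.items():
        dist = cpd[tuple(x[p] for p in parents)]  # parents were assigned earlier
        x[var] = rng.choices(list(dist), weights=list(dist.values()))[0]
    return x


def estimate_prob(var, value, num_samples, seed=0):
    """Estimate P(var = value) as the fraction of forward samples with that value."""
    rng = random.Random(seed)
    hits = sum(forward_sample(rng)[var] == value for _ in range(num_samples))
    return hits / num_samples


print(estimate_prob("L", "l1", 20000))  # should land near the exact value 0.642084
```

For these CPDs, summing out D, I, and G by hand gives P(l1) = 0.642084, so the estimate should agree to roughly two decimal places.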

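Forward Sampling
- Sampling cost
  - Per-variable cost: O(log |Val(Xi)|)
    - Sample u uniformly in [0, 1]
    - Find the value of Xi, among its |Val(Xi)| possible values, whose cumulative-probability interval contains u (binary search over the cumulative probabilities)
  - Per-sample cost: O(n log d), where d = max_i |Val(Xi)|
  - Total cost: O(M n log d)
- Number of samples needed
  - To get a relative error < ε with probability at least 1 − δ, we need
    $$M \ge 3\,\frac{\ln(2/\delta)}{P(y)\,\epsilon^2}$$
  - Note that the number of samples grows inversely with P(y)
  - For small P(y) we need many samples; otherwise we are likely to see no sample with Y = y and report P(y) = 0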
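A minimal sketch of the two quantities in this cost analysis, assuming each CPD row is given as a dictionary: a per-variable sampler that precomputes cumulative sums once (O(|Val(Xi)|) setup) and then draws with one uniform number plus a binary search (O(log |Val(Xi)|)), and the Chernoff-based sample count M ≥ 3 ln(2/δ) / (P(y) ε²). The helper names are hypothetical.

```python
import bisect
import itertools
import math
import random


def make_sampler(dist):
    """Build a sampler for a discrete distribution {value: probability}.

    Cumulative sums are computed once; each draw is one uniform number plus a
    binary search, i.e. O(log |Val(X)|).
    """
    values = list(dist)
    cumulative = list(itertools.accumulate(dist.values()))

    def sample(rng):
        u = rng.random() * cumulative[-1]  # uniform in [0, total mass)
        index = bisect.bisect_right(cumulative, u)
        return values[min(index, len(values) - 1)]  # guard against float round-off

    return sample


def samples_for_relative_error(p_y, eps, delta):
    """Samples needed so the estimate of P(y) has relative error below eps
    with probability at least 1 - delta (Chernoff bound)."""
    return math.ceil(3 * math.log(2 / delta) / (p_y * eps ** 2))


rng = random.Random(0)
sample_d = make_sampler({"d0": 0.4, "d1": 0.6})
draws = [sample_d(rng) for _ in range(10000)]
print(draws.count("d1") / len(draws))  # should be close to 0.6
print(samples_for_relative_error(0.01, 0.1, 0.05))  # 110667
```

The second call shows the inverse dependence on P(y): an event of probability 0.01 already needs over 10^5 samples for 10% relative error at 95% confidence.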

Rejection Sampling
- In general we need to compute P(Y | e)
- We can do so with rejection sampling
  - Generate samples as in forward sampling
  - Reject samples in which E ≠ e
  - Estimate the function from the accepted samples
- Problem: if the evidence is unlikely (e.g., P(e) = 0.001), then we generate many rejected samples (on average 999 of every 1000)
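A sketch of rejection sampling on the example network, reusing a forward sampler (repeated so the block runs on its own; names are illustrative). It estimates P(I = i1 | S = s1), whose exact value is 0.3·0.8 / (0.3·0.8 + 0.7·0.05) = 0.24 / 0.275 ≈ 0.873. Since P(s1) = 0.275, roughly 72.5% of the samples are thrown away.

```python
import random

# Example student network (hypothetical encoding): variable -> (parents, CPD).
NETWORK = {
    "D": ((), {(): {"d0": 0.4, "d1": 0.6}}),
    "I": ((), {(): {"i0": 0.7, "i1": 0.3}}),
    "G": (("D", "I"), {
        ("d0", "i0"): {"g0": 0.3, "g1": 0.4, "g2": 0.3},
        ("d0", "i1"): {"g0": 0.05, "g1": 0.25, "g2": 0.7},
        ("d1", "i0"): {"g0": 0.9, "g1": 0.08, "g2": 0.02},
        ("d1", "i1"): {"g0": 0.5, "g1": 0.3, "g2": 0.2},
    }),
    "S": (("I",), {("i0",): {"s0": 0.95, "s1": 0.05},
                   ("i1",): {"s0": 0.2, "s1": 0.8}}),
    "L": (("G",), {("g0",): {"l0": 0.1, "l1": 0.9},
                   ("g1",): {"l0": 0.4, "l1": 0.6},
                   ("g2",): {"l0": 0.99, "l1": 0.01}}),
}


def forward_sample(rng):
    """One full sample, drawing each variable given its already-sampled parents."""
    x = {}
    for var, (parents, cpd) in NETWORK.items():
        dist = cpd[tuple(x[p] for p in parents)]
        x[var] = rng.choices(list(dist), weights=list(dist.values()))[0]
    return x


def rejection_sample(query_var, query_val, evidence, num_samples, seed=0):
    """Estimate P(query_var = query_val | evidence) from samples that match the evidence."""
    rng = random.Random(seed)
    accepted = hits = 0
    for _ in range(num_samples):
        x = forward_sample(rng)
        if any(x[var] != val for var, val in evidence.items()):
            continue  # sample contradicts the evidence: reject it
        accepted += 1
        hits += x[query_var] == query_val
    if accepted == 0:
        raise ValueError("every sample was rejected; the evidence may be too unlikely")
    return hits / accepted, accepted


estimate, accepted = rejection_sample("I", "i1", {"S": "s1"}, 20000)
print(estimate, accepted)  # estimate near 0.873; about 5,500 of 20,000 samples kept
```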


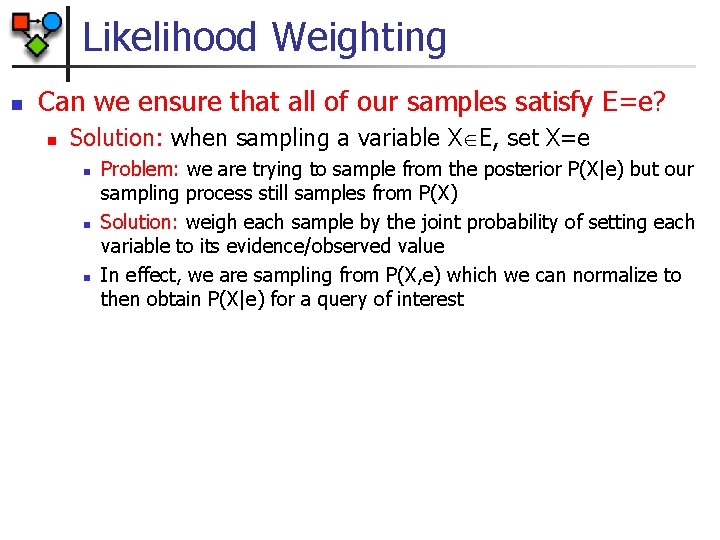

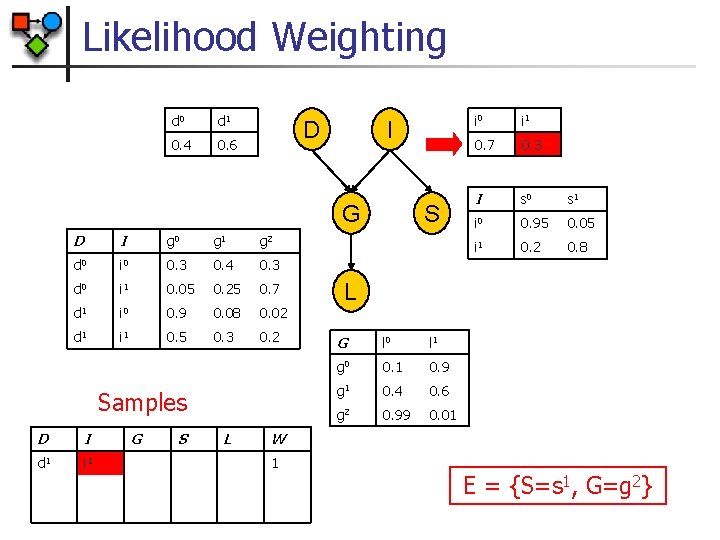

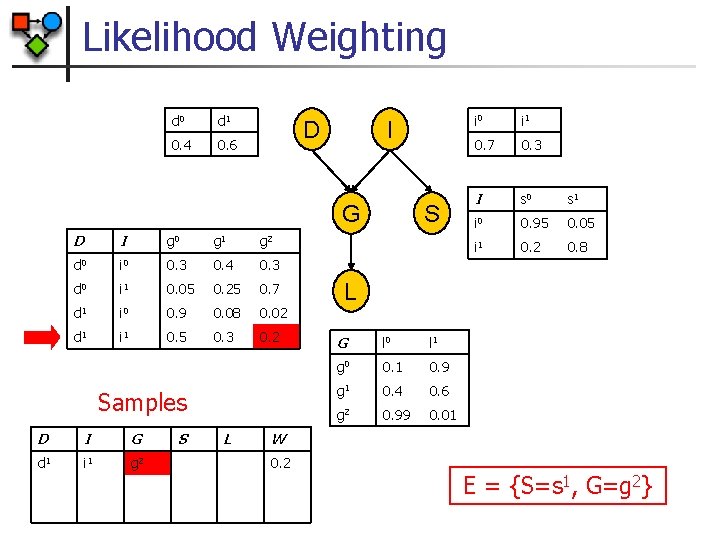

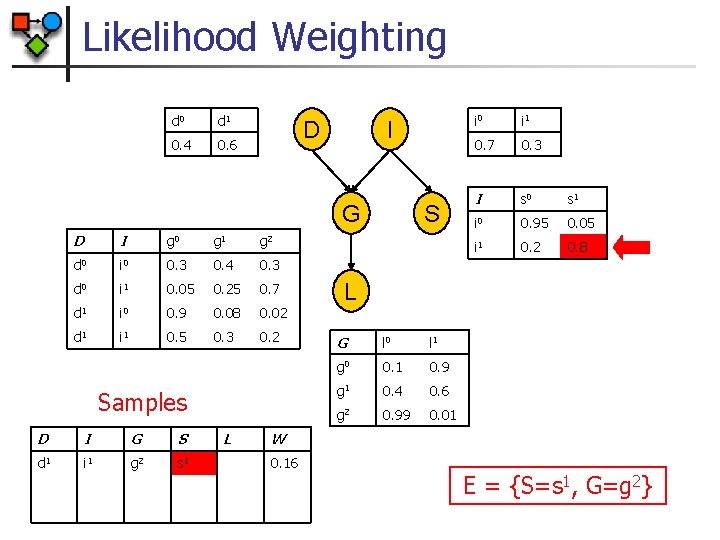

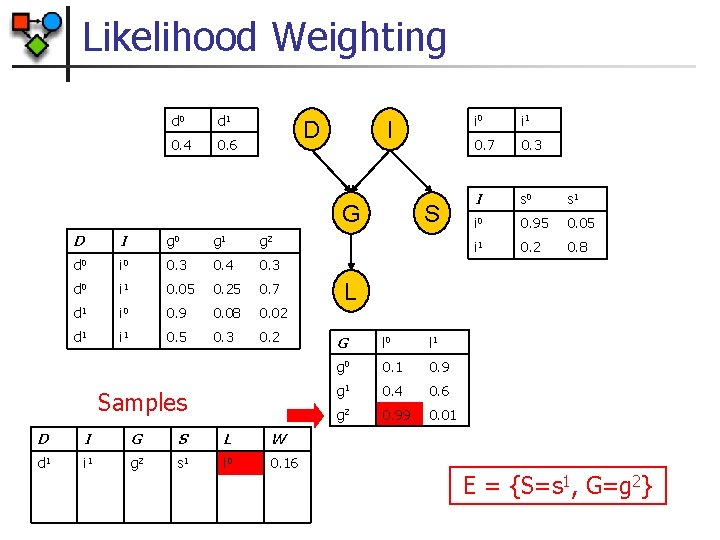

Likelihood Weighting
- Can we ensure that all of our samples satisfy E = e?
- Solution: when sampling a variable X ∈ E, set X to its observed value
  - Problem: we are trying to sample from the posterior P(X | e), but our sampling process still samples from P(X)
  - Solution: weight each sample by the product, over the evidence variables, of the probability of the observed value given the sampled values of its parents
  - In effect, the weighted samples represent P(X, e), which we can normalize to obtain P(X | e) for a query of interest

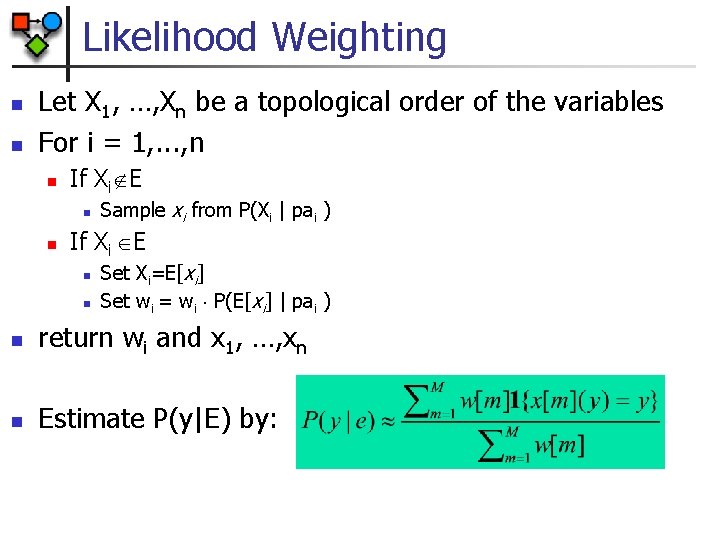

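Likelihood Weighting
- Let X1, …, Xn be a topological order of the variables
- Set w = 1
- For i = 1, ..., n
  - If Xi ∉ E: sample xi from P(Xi | pai)
  - If Xi ∈ E: set xi = E[Xi] and set w = w · P(E[Xi] | pai)
- Return w and x1, …, xn
- Estimate P(y | E) by:
  $$\hat{P}_{\mathcal{D}}(y \mid e) = \frac{\sum_{m=1}^{M} w[m]\,\mathbb{1}\{y[m] = y\}}{\sum_{m=1}^{M} w[m]}$$
  where w[m] is the weight returned for sample x[m]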

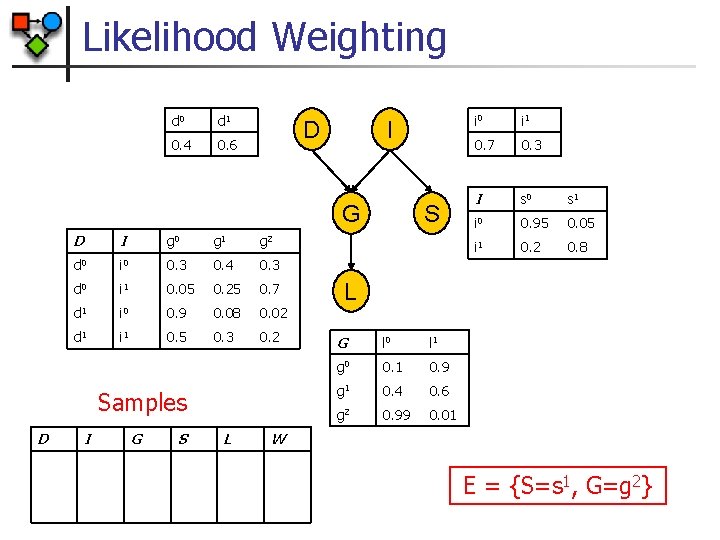

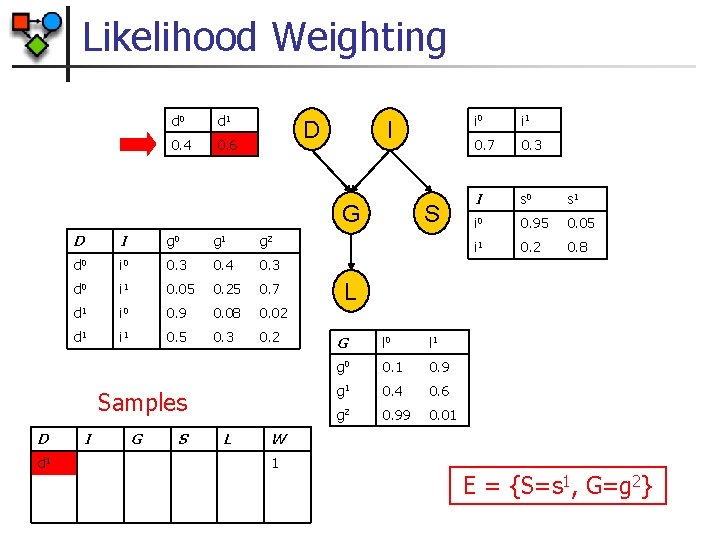
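A minimal likelihood-weighting sketch for the example evidence E = {S = s1, G = g2}, on the example network with illustrative names. Evidence variables are clamped and multiply the weight by P(E[Xi] | pai); the query P(I = i1 | e) is estimated by the weighted fraction. Summing out D and L by hand gives the exact value 0.096 / 0.10062 ≈ 0.954.

```python
import random

# Example student network (hypothetical encoding): variable -> (parents, CPD).
NETWORK = {
    "D": ((), {(): {"d0": 0.4, "d1": 0.6}}),
    "I": ((), {(): {"i0": 0.7, "i1": 0.3}}),
    "G": (("D", "I"), {
        ("d0", "i0"): {"g0": 0.3, "g1": 0.4, "g2": 0.3},
        ("d0", "i1"): {"g0": 0.05, "g1": 0.25, "g2": 0.7},
        ("d1", "i0"): {"g0": 0.9, "g1": 0.08, "g2": 0.02},
        ("d1", "i1"): {"g0": 0.5, "g1": 0.3, "g2": 0.2},
    }),
    "S": (("I",), {("i0",): {"s0": 0.95, "s1": 0.05},
                   ("i1",): {"s0": 0.2, "s1": 0.8}}),
    "L": (("G",), {("g0",): {"l0": 0.1, "l1": 0.9},
                   ("g1",): {"l0": 0.4, "l1": 0.6},
                   ("g2",): {"l0": 0.99, "l1": 0.01}}),
}


def weighted_sample(rng, evidence):
    """One particle: clamp evidence variables, sample the rest, accumulate the weight."""
    x, w = {}, 1.0
    for var, (parents, cpd) in NETWORK.items():  # topological order
        dist = cpd[tuple(x[p] for p in parents)]
        if var in evidence:
            x[var] = evidence[var]
            w *= dist[evidence[var]]  # w <- w * P(E[X_i] | pa_i)
        else:
            x[var] = rng.choices(list(dist), weights=list(dist.values()))[0]
    return x, w


def likelihood_weighting(query_var, query_val, evidence, num_samples, seed=0):
    """Weighted fraction of particles with query_var = query_val."""
    rng = random.Random(seed)
    numerator = denominator = 0.0
    for _ in range(num_samples):
        x, w = weighted_sample(rng, evidence)
        denominator += w
        if x[query_var] == query_val:
            numerator += w
    return numerator / denominator


print(likelihood_weighting("I", "i1", {"S": "s1", "G": "g2"}, 20000))  # near 0.954
```

Unlike rejection sampling, no particle is discarded; unlikely evidence instead shows up as small weights.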

Likelihood Weighting (example)
Same network and CPDs as in the forward sampling example; evidence E = {S = s1, G = g2}.
Samples (D, I, G, S, L) and weight W: none yet

Likelihood Weighting, step 1: D ∉ E, so sample D from P(D); result d1. Sample so far: d1; W = 1

Likelihood Weighting, step 2: I ∉ E, so sample I from P(I); result i1. Sample so far: d1, i1; W = 1

Likelihood Weighting, step 3: G ∈ E, so set G = g2 and multiply W by P(g2 | d1, i1) = 0.2. Sample so far: d1, i1, g2; W = 0.2

Likelihood Weighting, step 4: S ∈ E, so set S = s1 and multiply W by P(s1 | i1) = 0.8. Sample so far: d1, i1, g2, s1; W = 0.2 × 0.8 = 0.16

Likelihood Weighting, step 5: L ∉ E, so sample L from P(L | g2); result l0. Final sample: (d1, i1, g2, s1, l0) with W = 0.16

Importance Sampling
- Idea: to estimate a function relative to P, rather than sampling from P itself, sample from another distribution Q
  - P is called the target distribution
  - Q is called the proposal or sampling distribution
  - Requirement on Q: P(x) > 0 ⇒ Q(x) > 0
    - Q does not "ignore" any event that has non-zero probability under P
  - In practice, performance depends on how similar Q is to P

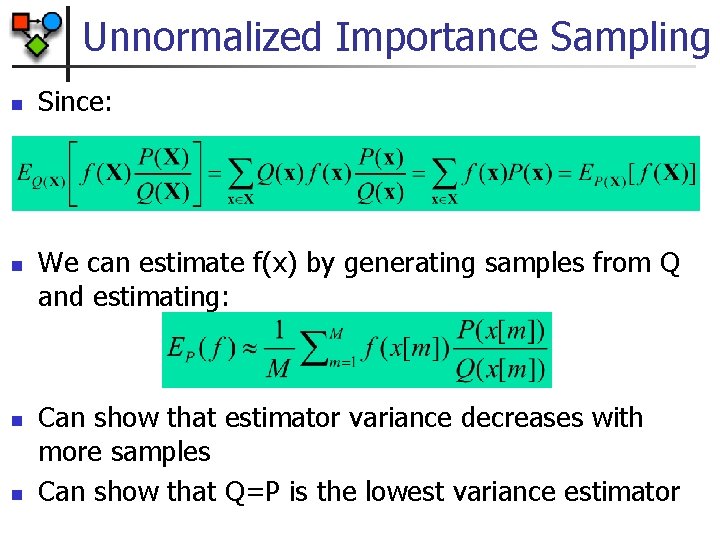

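Unnormalized Importance Sampling
- Since
  $$E_{P(X)}[f(X)] = E_{Q(X)}\left[f(X)\,\frac{P(X)}{Q(X)}\right]$$
- we can estimate E_P[f] by generating samples x[1], …, x[M] from Q and computing
  $$\hat{E}_{\mathcal{D}}(f) = \frac{1}{M}\sum_{m=1}^{M} f(x[m])\,\frac{P(x[m])}{Q(x[m])}$$
- One can show that the estimator is unbiased and that its variance decreases with more samples (as 1/M)
- One can show that the variance is minimized by Q(x) ∝ |f(x)| P(x); Q = P is therefore not the lowest-variance proposal in general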

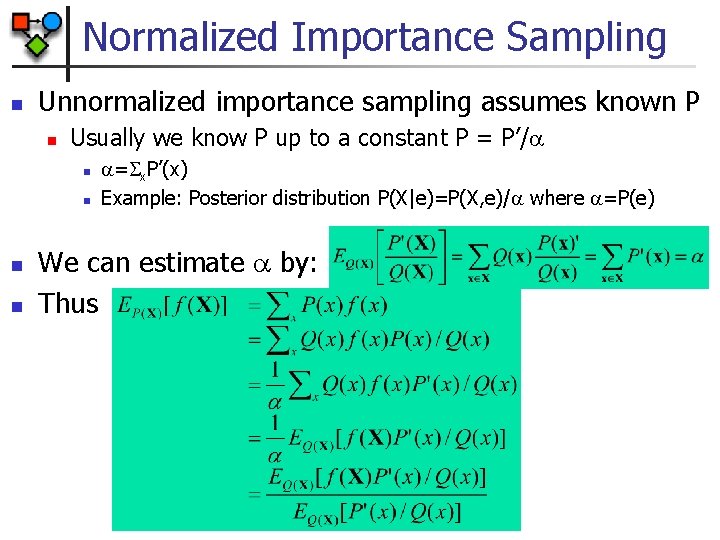
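To see the unnormalized estimator at work, here is a Python sketch on a made-up four-value target P with a uniform proposal Q; both distributions and the function name are purely illustrative.

```python
import random


def unnormalized_is(f, p, q, num_samples, seed=0):
    """Estimate E_P[f] as the average of f(x) * P(x) / Q(x) over samples x ~ Q."""
    rng = random.Random(seed)
    support = list(q)
    draws = rng.choices(support, weights=[q[v] for v in support], k=num_samples)
    return sum(f(x) * p.get(x, 0.0) / q[x] for x in draws) / num_samples


P = {0: 0.1, 1: 0.2, 2: 0.3, 3: 0.4}      # target distribution (illustrative)
Q = {0: 0.25, 1: 0.25, 2: 0.25, 3: 0.25}  # proposal: Q(x) > 0 wherever P(x) > 0
exact = sum(x * px for x, px in P.items())  # E_P[X] is 2
print(exact, unnormalized_is(lambda x: x, P, Q, 50000))
```

Each draw contributes f(x)·P(x)/Q(x), so values that Q under-samples relative to P (here x = 3) receive proportionally larger weights.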

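Normalized Importance Sampling
- Unnormalized importance sampling assumes that P is known
- Usually we know P only up to a constant: P = P'/α, where α = Σ_x P'(x)
  - Example: the posterior distribution P(X | e) = P(X, e)/α, where α = P(e)
- We can estimate α by:
  $$E_{Q(X)}\left[\frac{P'(X)}{Q(X)}\right] = \sum_x Q(x)\,\frac{P'(x)}{Q(x)} = \sum_x P'(x) = \alpha$$
- Thus, with w(X) = P'(X)/Q(X),
  $$E_{P(X)}[f(X)] = \frac{1}{\alpha}\,E_{Q(X)}\left[f(X)\,\frac{P'(X)}{Q(X)}\right] = \frac{E_{Q(X)}[f(X)\,w(X)]}{E_{Q(X)}[w(X)]}$$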

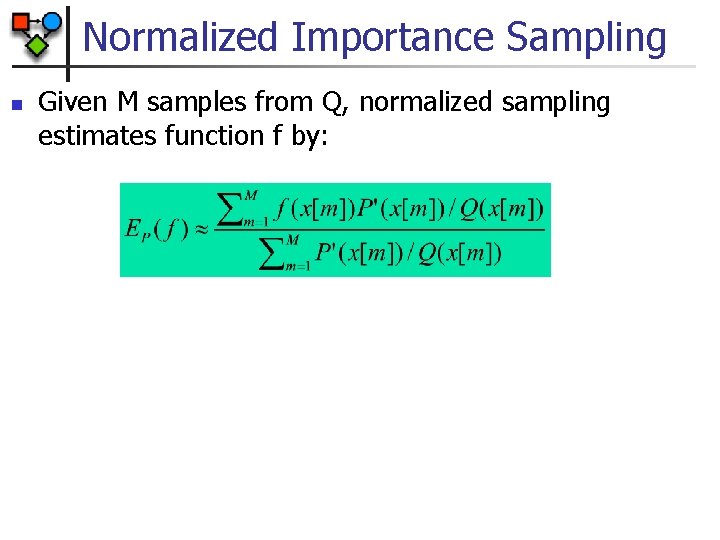

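Normalized Importance Sampling
- Given M samples x[1], …, x[M] from Q, normalized importance sampling estimates the function f by:
  $$\hat{E}_{\mathcal{D}}(f) = \frac{\sum_{m=1}^{M} f(x[m])\,w(x[m])}{\sum_{m=1}^{M} w(x[m])}, \qquad w(x) = \frac{P'(x)}{Q(x)}$$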

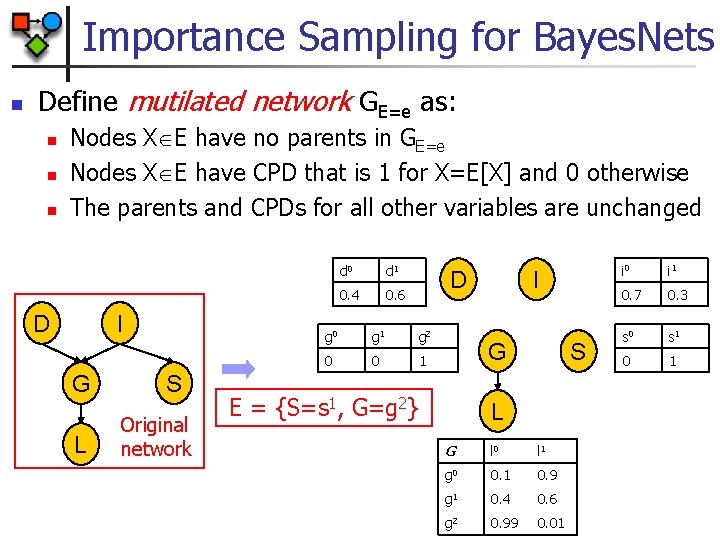
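A sketch of normalized importance sampling on a made-up distribution known only up to a constant: P'(x) = x + 1 for x ∈ {0, 1, 2, 3}, so α = 10 and P = (0.1, 0.2, 0.3, 0.4), with a uniform proposal. The estimator never uses α; the average weight recovers it as a by-product. Values and names are illustrative.

```python
import random


def normalized_is(f, p_unnorm, q, num_samples, seed=0):
    """Self-normalized estimate of E_P[f] when only P' = alpha * P is known.

    Returns (estimate of E_P[f], estimate of alpha).
    """
    rng = random.Random(seed)
    support = list(q)
    draws = rng.choices(support, weights=[q[v] for v in support], k=num_samples)
    weights = [p_unnorm.get(x, 0.0) / q[x] for x in draws]  # w(x) = P'(x) / Q(x)
    estimate = sum(f(x) * w for x, w in zip(draws, weights)) / sum(weights)
    alpha_hat = sum(weights) / num_samples  # E_Q[w] = alpha
    return estimate, alpha_hat


P_UNNORM = {0: 1.0, 1: 2.0, 2: 3.0, 3: 4.0}  # P'(x) = x + 1; normalizing constant 10
Q = {0: 0.25, 1: 0.25, 2: 0.25, 3: 0.25}
estimate, alpha_hat = normalized_is(lambda x: x, P_UNNORM, Q, 50000)
print(estimate, alpha_hat)  # near E_P[X] = 2 and alpha = 10
```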

Importance Sampling for Bayes Nets
- Define the mutilated network G_{E=e} as:
  - Nodes X ∈ E have no parents in G_{E=e}
  - Nodes X ∈ E have a CPD that is 1 for X = E[X] and 0 otherwise
  - The parents and CPDs of all other variables are unchanged
- Example: original network over D, I, G, S, L with E = {S = s1, G = g2}; the CPDs of G_{E=e} are
  - P(D): d0 0.4, d1 0.6 (unchanged)
  - P(I): i0 0.7, i1 0.3 (unchanged)
  - G (no parents): g0 0, g1 0, g2 1
  - S (no parents): s0 0, s1 1
  - P(L | G): g0: l0 0.1, l1 0.9; g1: l0 0.4, l1 0.6; g2: l0 0.99, l1 0.01 (unchanged)

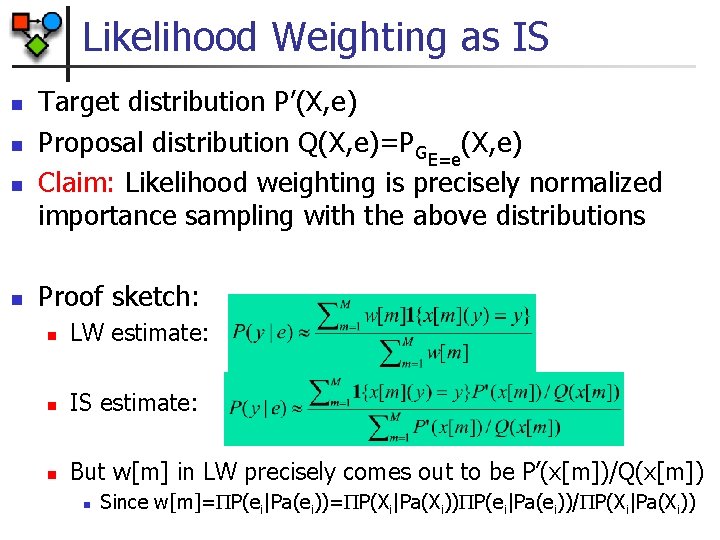
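The mutilated-network construction is a small transformation on the CPDs. A sketch, assuming a hypothetical dictionary encoding of the example network (variable → (parents, CPD)); the function name `mutilate` is our own.

```python
# Example student network (hypothetical encoding): variable -> (parents, CPD).
NETWORK = {
    "D": ((), {(): {"d0": 0.4, "d1": 0.6}}),
    "I": ((), {(): {"i0": 0.7, "i1": 0.3}}),
    "G": (("D", "I"), {
        ("d0", "i0"): {"g0": 0.3, "g1": 0.4, "g2": 0.3},
        ("d0", "i1"): {"g0": 0.05, "g1": 0.25, "g2": 0.7},
        ("d1", "i0"): {"g0": 0.9, "g1": 0.08, "g2": 0.02},
        ("d1", "i1"): {"g0": 0.5, "g1": 0.3, "g2": 0.2},
    }),
    "S": (("I",), {("i0",): {"s0": 0.95, "s1": 0.05},
                   ("i1",): {"s0": 0.2, "s1": 0.8}}),
    "L": (("G",), {("g0",): {"l0": 0.1, "l1": 0.9},
                   ("g1",): {"l0": 0.4, "l1": 0.6},
                   ("g2",): {"l0": 0.99, "l1": 0.01}}),
}


def mutilate(network, evidence):
    """Build G_{E=e}: each evidence node loses its parents and gets a point-mass CPD
    on its observed value; all other nodes keep their parents and CPDs."""
    mutilated = {}
    for var, (parents, cpd) in network.items():
        if var in evidence:
            values = next(iter(cpd.values()))  # any CPD row lists all values of var
            point_mass = {v: (1.0 if v == evidence[var] else 0.0) for v in values}
            mutilated[var] = ((), {(): point_mass})
        else:
            mutilated[var] = (parents, cpd)
    return mutilated


g_e = mutilate(NETWORK, {"S": "s1", "G": "g2"})
print(g_e["G"])  # ((), {(): {'g0': 0.0, 'g1': 0.0, 'g2': 1.0}})
```

Forward sampling from `g_e` always reproduces the evidence, and the distribution it samples from is the proposal Q used when viewing likelihood weighting as importance sampling.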

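Likelihood Weighting as IS
- Target distribution: P'(X, e) = P(X, e), the unnormalized form of P(X | e)
- Proposal distribution: Q(X, e) = P_{G_{E=e}}(X, e)
- Claim: likelihood weighting is precisely normalized importance sampling with the above distributions
- Proof sketch:
  - LW estimate:
    $$\hat{P}_{\mathcal{D}}(y \mid e) = \frac{\sum_{m=1}^{M} w[m]\,\mathbb{1}\{y[m] = y\}}{\sum_{m=1}^{M} w[m]}$$
  - IS estimate:
    $$\hat{P}_{\mathcal{D}}(y \mid e) = \frac{\sum_{m=1}^{M} \mathbb{1}\{y[m] = y\}\,P'(x[m])/Q(x[m])}{\sum_{m=1}^{M} P'(x[m])/Q(x[m])}$$
  - But w[m] in LW comes out to be exactly P'(x[m])/Q(x[m]), since
    $$w[m] = \prod_{X_i \in E} P(e_i \mid \mathrm{pa}_i) = \frac{\prod_{X_i \notin E} P(x_i \mid \mathrm{pa}_i)\,\prod_{X_i \in E} P(e_i \mid \mathrm{pa}_i)}{\prod_{X_i \notin E} P(x_i \mid \mathrm{pa}_i)} = \frac{P'(x[m])}{Q(x[m])}$$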
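A quick numeric check of the claim on the particle from the likelihood-weighting example, (d1, i1, g2, s1, l0): P'(x) multiplies all five CPD entries, while Q(x) under the mutilated network keeps only the non-evidence factors (evidence CPDs contribute 1), so their ratio should equal the LW weight 0.16. The encoding and function names are illustrative.

```python
# Example student network (hypothetical encoding): variable -> (parents, CPD).
NETWORK = {
    "D": ((), {(): {"d0": 0.4, "d1": 0.6}}),
    "I": ((), {(): {"i0": 0.7, "i1": 0.3}}),
    "G": (("D", "I"), {
        ("d0", "i0"): {"g0": 0.3, "g1": 0.4, "g2": 0.3},
        ("d0", "i1"): {"g0": 0.05, "g1": 0.25, "g2": 0.7},
        ("d1", "i0"): {"g0": 0.9, "g1": 0.08, "g2": 0.02},
        ("d1", "i1"): {"g0": 0.5, "g1": 0.3, "g2": 0.2},
    }),
    "S": (("I",), {("i0",): {"s0": 0.95, "s1": 0.05},
                   ("i1",): {"s0": 0.2, "s1": 0.8}}),
    "L": (("G",), {("g0",): {"l0": 0.1, "l1": 0.9},
                   ("g1",): {"l0": 0.4, "l1": 0.6},
                   ("g2",): {"l0": 0.99, "l1": 0.01}}),
}


def joint(x):
    """P'(x) = P(x, e) for a full assignment x consistent with the evidence."""
    p = 1.0
    for var, (parents, cpd) in NETWORK.items():
        p *= cpd[tuple(x[par] for par in parents)][x[var]]
    return p


def proposal(x, evidence):
    """Q(x) under the mutilated network: only the non-evidence CPD factors remain."""
    p = 1.0
    for var, (parents, cpd) in NETWORK.items():
        if var not in evidence:
            p *= cpd[tuple(x[par] for par in parents)][x[var]]
    return p


evidence = {"S": "s1", "G": "g2"}
x = {"D": "d1", "I": "i1", "G": "g2", "S": "s1", "L": "l0"}  # particle from the LW example
print(joint(x) / proposal(x, evidence))  # 0.16 up to float rounding
```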


Markov Chain Monte Carlo
- General idea:
  - Define a sampling process that is guaranteed to converge to taking samples from the posterior distribution of interest
  - Generate samples from the sampling process
  - Estimate f(X) from the samples

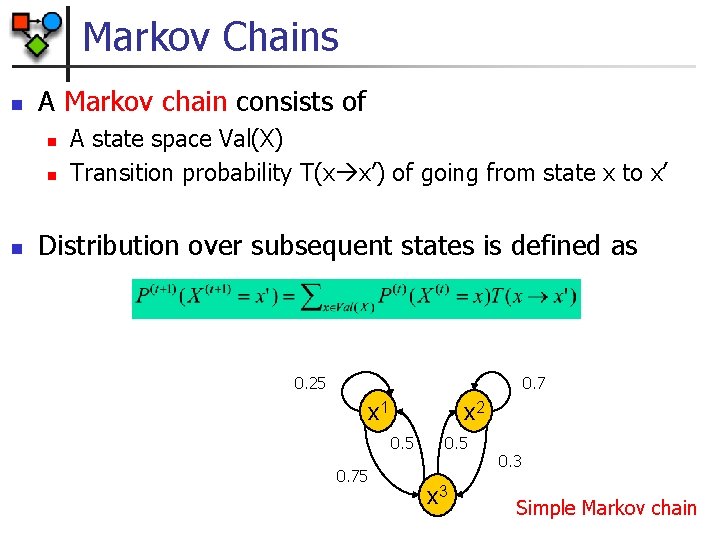

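Markov Chains
- A Markov chain consists of
  - A state space Val(X)
  - Transition probability T(x → x') of going from state x to x'
- The distribution over subsequent states is defined as
  $$P^{(t+1)}(X^{(t+1)} = x') = \sum_{x \in Val(X)} P^{(t)}(X^{(t)} = x)\,T(x \to x')$$
- (Figure: a simple Markov chain over states x1, x2, x3, with edge transition probabilities 0.25, 0.7, 0.5, 0.75, 0.5, 0.3)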

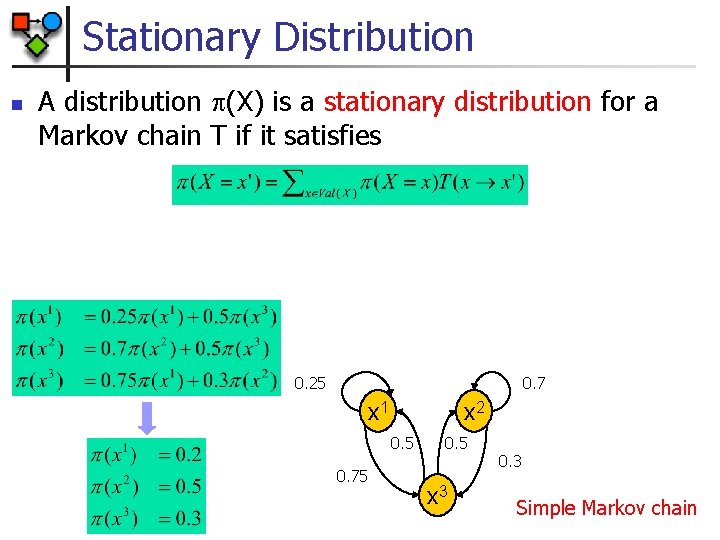
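A sketch of the update rule P^(t+1)(x') = Σ_x P^(t)(x) T(x → x') on the three-state example chain. The figure is not reproduced here, so the edge assignment below (x1 stays with 0.25 and moves to x3 with 0.75; x2 stays with 0.7 and moves to x3 with 0.3; x3 moves to x1 or x2 with 0.5 each) is our reading of it, chosen so that each row sums to 1.

```python
# Transition matrix T[x][x'] = T(x -> x') for states x1, x2, x3 (indices 0, 1, 2).
# Assumed reading of the example figure; each row sums to 1.
T = [
    [0.25, 0.0, 0.75],
    [0.0, 0.7, 0.3],
    [0.5, 0.5, 0.0],
]


def step(dist, T):
    """One transition: P^(t+1)(x') = sum over x of P^(t)(x) * T(x -> x')."""
    n = len(T)
    return [sum(dist[x] * T[x][x_next] for x in range(n)) for x_next in range(n)]


dist = [1.0, 0.0, 0.0]  # start in x1 with certainty
for _ in range(50):
    dist = step(dist, T)
print([round(p, 4) for p in dist])  # [0.2, 0.5, 0.3]
```

Starting from any other distribution leads to the same limit, which is the stationary distribution of this chain.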

Stationary Distribution
- A distribution π(X) is a stationary distribution for a Markov chain T if it satisfies
  $$\pi(X = x') = \sum_{x \in Val(X)} \pi(X = x)\,T(x \to x')$$
- (Figure: the same simple Markov chain over x1, x2, x3)
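Solving the stationarity equations for the example chain, under an assumed reading of the figure (x1 → x1: 0.25, x1 → x3: 0.75, x2 → x2: 0.7, x2 → x3: 0.3, x3 → x1: 0.5, x3 → x2: 0.5):

$$
\begin{aligned}
\pi(x_1) &= 0.25\,\pi(x_1) + 0.5\,\pi(x_3) &&\Longrightarrow\ \pi(x_1) = \tfrac{2}{3}\,\pi(x_3)\\
\pi(x_2) &= 0.7\,\pi(x_2) + 0.5\,\pi(x_3) &&\Longrightarrow\ \pi(x_2) = \tfrac{5}{3}\,\pi(x_3)\\
\pi(x_1) + \pi(x_2) + \pi(x_3) &= 1 &&\Longrightarrow\ \tfrac{10}{3}\,\pi(x_3) = 1,\ \ \pi(x_3) = 0.3
\end{aligned}
$$

so π = (0.2, 0.5, 0.3), and the remaining equation π(x3) = 0.75 π(x1) + 0.3 π(x2) = 0.15 + 0.15 = 0.3 is satisfied.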

Markov Chains & Stationary Distributions
- A Markov chain is regular if there is a k such that, for every x, x' ∈ Val(X), the probability of getting from x to x' in exactly k steps is greater than zero
- Theorem: if a finite-state Markov chain T is regular, then it has a unique stationary distribution
  - Regularity is sufficient but not necessary: a two-state chain that always switches states has the unique stationary distribution (0.5, 0.5) but is not regular
- Goal: define a Markov chain whose stationary distribution is P(X | e)
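A sketch of checking regularity numerically. By Wielandt's bound, a regular n-state chain has every entry of T^k positive for some k ≤ (n − 1)² + 1, so the search can stop there. The three-state matrix is our assumed reading of the example figure (rows in the comment); `FLIP` is the always-switching two-state chain, which is not regular. Function names are illustrative.

```python
def mat_mul(A, B):
    """Plain-Python matrix product."""
    n, m, p = len(A), len(B), len(B[0])
    return [[sum(A[i][k] * B[k][j] for k in range(m)) for j in range(p)] for i in range(n)]


def regularity_exponent(T):
    """Smallest k such that every entry of T^k is positive, or None if the chain
    is not regular. Checking k up to (n - 1)^2 + 1 suffices (Wielandt's bound)."""
    n = len(T)
    power = T
    for k in range(1, (n - 1) ** 2 + 2):
        if all(entry > 0 for row in power for entry in row):
            return k
        power = mat_mul(power, T)
    return None


# Assumed rows: x1 -> (0.25, 0, 0.75), x2 -> (0, 0.7, 0.3), x3 -> (0.5, 0.5, 0).
T = [[0.25, 0.0, 0.75], [0.0, 0.7, 0.3], [0.5, 0.5, 0.0]]
FLIP = [[0.0, 1.0], [1.0, 0.0]]  # deterministically alternates between two states
print(regularity_exponent(T))     # 2
print(regularity_exponent(FLIP))  # None
```

T itself has zero entries (x1 cannot reach x2 in one step), but every state reaches every other in exactly two steps.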

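Gibbs Sampling
- States
  - Legal (i.e., consistent with the evidence) assignments to the variables
- Transition probability
  - T = T1 ∘ … ∘ Tk: the chain applies one kernel Ti per non-evidence variable, in turn
  - Ti((ui, xi) → (ui, x'i)) = P(x'i | ui), where ui is the assignment to all variables other than Xi
- Claim: P(X | e) is a stationary distribution of the chain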

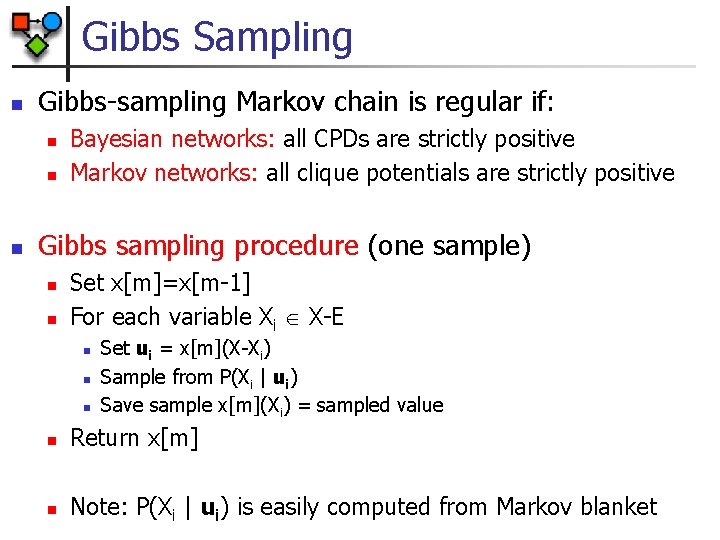

Gibbs Sampling
- The Gibbs-sampling Markov chain is regular if:
  - Bayesian networks: all CPDs are strictly positive
  - Markov networks: all clique potentials are strictly positive
- Gibbs sampling procedure (one sample)
  - Set x[m] = x[m−1]
  - For each variable Xi ∈ X − E
    - Set ui = x[m](X − Xi)
    - Sample from P(Xi | ui)
    - Save the sampled value: x[m](Xi) = sampled value
  - Return x[m]
- Note: P(Xi | ui) is easily computed from the Markov blanket of Xi

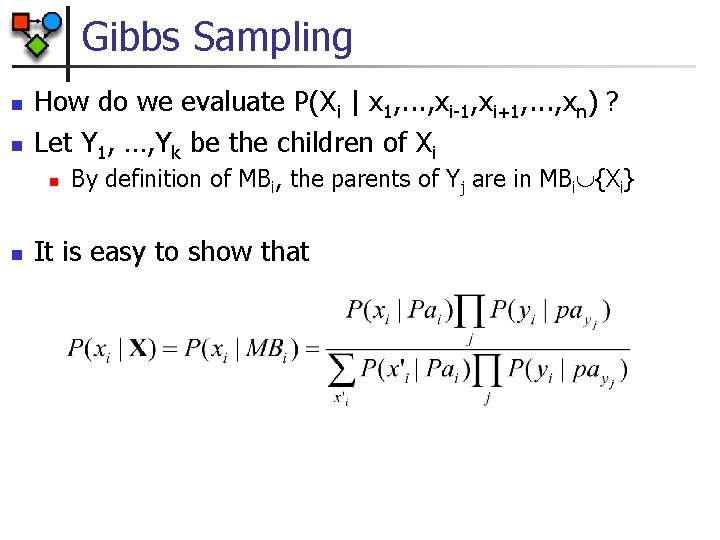
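A Gibbs sampling sketch on the example network with evidence E = {S = s1, G = g2}. For brevity it computes P(Xi | ui) by normalizing the full joint over the values of Xi, which is correct but does more work than needed (only the factors in Xi's Markov blanket change). All CPDs are strictly positive, so the chain is regular. The names, burn-in length, and starting state are our own assumptions; the exact answer for this query is 0.096 / 0.10062 ≈ 0.954.

```python
import random

# Example student network (hypothetical encoding): variable -> (parents, CPD).
NETWORK = {
    "D": ((), {(): {"d0": 0.4, "d1": 0.6}}),
    "I": ((), {(): {"i0": 0.7, "i1": 0.3}}),
    "G": (("D", "I"), {
        ("d0", "i0"): {"g0": 0.3, "g1": 0.4, "g2": 0.3},
        ("d0", "i1"): {"g0": 0.05, "g1": 0.25, "g2": 0.7},
        ("d1", "i0"): {"g0": 0.9, "g1": 0.08, "g2": 0.02},
        ("d1", "i1"): {"g0": 0.5, "g1": 0.3, "g2": 0.2},
    }),
    "S": (("I",), {("i0",): {"s0": 0.95, "s1": 0.05},
                   ("i1",): {"s0": 0.2, "s1": 0.8}}),
    "L": (("G",), {("g0",): {"l0": 0.1, "l1": 0.9},
                   ("g1",): {"l0": 0.4, "l1": 0.6},
                   ("g2",): {"l0": 0.99, "l1": 0.01}}),
}
VALUES = {var: list(next(iter(cpd.values()))) for var, (_, cpd) in NETWORK.items()}


def joint(x):
    """P(x) for a full assignment x, as the product of CPD entries."""
    p = 1.0
    for var, (parents, cpd) in NETWORK.items():
        p *= cpd[tuple(x[par] for par in parents)][x[var]]
    return p


def gibbs(query_var, query_val, evidence, num_samples, burn_in=1000, seed=0):
    """Estimate P(query_var = query_val | evidence) from one long Gibbs chain."""
    rng = random.Random(seed)
    x = {var: evidence.get(var, vals[0]) for var, vals in VALUES.items()}  # legal start
    free = [var for var in VALUES if var not in evidence]
    hits = 0
    for t in range(burn_in + num_samples):
        for var in free:  # one sweep applies T_1, ..., T_k in turn
            weights = []
            for val in VALUES[var]:
                x[var] = val
                weights.append(joint(x))  # proportional to P(var = val | rest)
            x[var] = rng.choices(VALUES[var], weights=weights)[0]
        if t >= burn_in:
            hits += x[query_var] == query_val
    return hits / num_samples


print(gibbs("I", "i1", {"S": "s1", "G": "g2"}, 20000))  # near 0.954
```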

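Gibbs Sampling
- How do we evaluate P(Xi | x1, ..., xi−1, xi+1, ..., xn)?
- Let Y1, …, Yk be the children of Xi
  - By definition of the Markov blanket MBi, the parents of each Yj are in MBi ∪ {Xi}
  - It is easy to show that
    $$P(x_i \mid x_1, \ldots, x_{i-1}, x_{i+1}, \ldots, x_n) = \frac{P(x_i \mid \mathrm{pa}_i)\prod_{j=1}^{k} P(y_j \mid \mathrm{pa}_{y_j})}{\sum_{x'_i} P(x'_i \mid \mathrm{pa}_i)\prod_{j=1}^{k} P(y_j \mid \mathrm{pa}_{y_j})}$$
    where, in the denominator, each pa_{y_j} is evaluated with Xi = x'i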

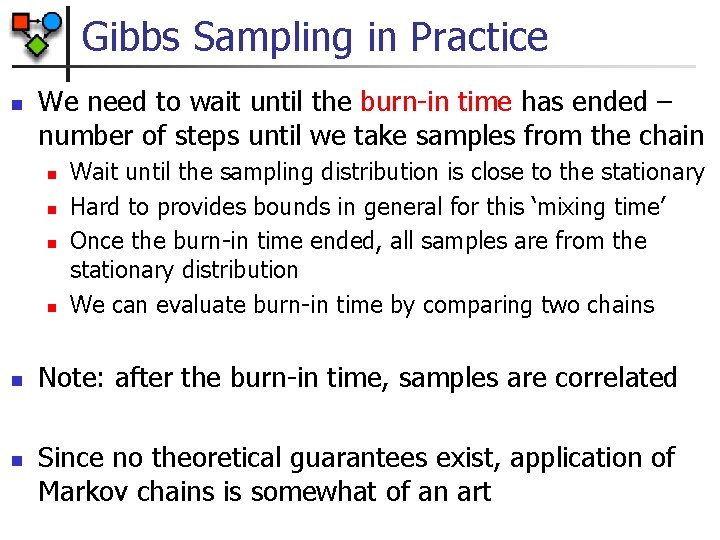
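The Markov-blanket formula can be sketched directly: multiply Xi's own CPD entry by its children's CPD entries and normalize over the values of Xi. The sketch below (example network, illustrative names) checks it against normalizing the full joint. For I with D = d1, G = g2, S = s1, the unnormalized scores are 0.3·0.2·0.8 = 0.048 for i1 and 0.7·0.02·0.05 = 0.0007 for i0.

```python
# Example student network (hypothetical encoding): variable -> (parents, CPD).
NETWORK = {
    "D": ((), {(): {"d0": 0.4, "d1": 0.6}}),
    "I": ((), {(): {"i0": 0.7, "i1": 0.3}}),
    "G": (("D", "I"), {
        ("d0", "i0"): {"g0": 0.3, "g1": 0.4, "g2": 0.3},
        ("d0", "i1"): {"g0": 0.05, "g1": 0.25, "g2": 0.7},
        ("d1", "i0"): {"g0": 0.9, "g1": 0.08, "g2": 0.02},
        ("d1", "i1"): {"g0": 0.5, "g1": 0.3, "g2": 0.2},
    }),
    "S": (("I",), {("i0",): {"s0": 0.95, "s1": 0.05},
                   ("i1",): {"s0": 0.2, "s1": 0.8}}),
    "L": (("G",), {("g0",): {"l0": 0.1, "l1": 0.9},
                   ("g1",): {"l0": 0.4, "l1": 0.6},
                   ("g2",): {"l0": 0.99, "l1": 0.01}}),
}
VALUES = {var: list(next(iter(cpd.values()))) for var, (_, cpd) in NETWORK.items()}


def normalize(scores):
    total = sum(scores.values())
    return {val: s / total for val, s in scores.items()}


def mb_conditional(var, x):
    """P(var | all other variables) from var's CPD and its children's CPDs only."""
    children = [c for c, (parents, _) in NETWORK.items() if var in parents]
    parents, cpd = NETWORK[var]
    scores = {}
    for val in VALUES[var]:
        y = {**x, var: val}
        score = cpd[tuple(y[p] for p in parents)][val]  # P(x_i | pa_i)
        for child in children:  # times P(y_j | pa_{y_j}) for each child
            c_parents, c_cpd = NETWORK[child]
            score *= c_cpd[tuple(y[p] for p in c_parents)][y[child]]
        scores[val] = score
    return normalize(scores)


def brute_force_conditional(var, x):
    """The same conditional obtained by normalizing the full joint (for checking)."""
    def joint(y):
        p = 1.0
        for v, (parents, cpd) in NETWORK.items():
            p *= cpd[tuple(y[q] for q in parents)][y[v]]
        return p
    return normalize({val: joint({**x, var: val}) for val in VALUES[var]})


x = {"D": "d1", "I": "i0", "G": "g2", "S": "s1", "L": "l0"}
print(mb_conditional("I", x))
print(brute_force_conditional("I", x))
```

Both print the same distribution, with P(i1 | rest) = 0.048 / 0.0487 ≈ 0.986; factors outside the Markov blanket cancel in the normalization.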

Gibbs Sampling in Practice
- We need to wait until the burn-in time has ended: the number of steps before we start taking samples from the chain
  - Wait until the sampling distribution is close to the stationary distribution
  - It is hard to provide general bounds on this "mixing time"
  - Once the burn-in time has ended, all samples are (approximately) from the stationary distribution
- We can evaluate the burn-in time by comparing two chains
- Note: after the burn-in time, samples are correlated
- Since no general theoretical guarantees exist, applying Markov chains is somewhat of an art

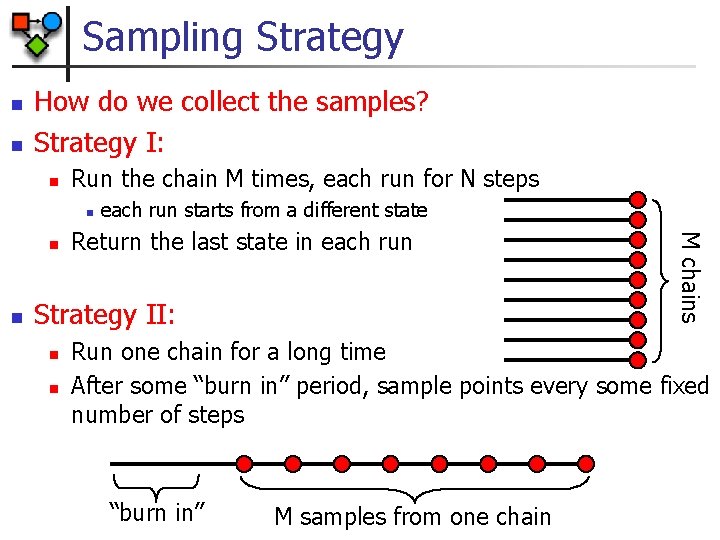
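The slides do not specify how to compare chains. One widely used diagnostic is the Gelman-Rubin potential scale reduction factor R-hat, which compares between-chain and within-chain variance; the sketch below is that swapped-in technique in a simplified form (no chain splitting), not the slides' method. It is applied to two runs of the three-state example chain, whose transition rows are our assumed reading of the figure.

```python
import random
import statistics

# Transition rows for states x1, x2, x3 (indices 0, 1, 2); assumed reading of the figure.
T = [[0.25, 0.0, 0.75], [0.0, 0.7, 0.3], [0.5, 0.5, 0.0]]


def run_chain(T, start, num_steps, rng):
    """Simulate num_steps transitions starting from state `start`; return visited states."""
    states, x = [], start
    for _ in range(num_steps):
        x = rng.choices(range(len(T)), weights=T[x])[0]
        states.append(x)
    return states


def gelman_rubin(chains):
    """Potential scale reduction factor R-hat for m equal-length chains of scalars.

    Values close to 1 suggest the chains have forgotten their starting points.
    """
    m, n = len(chains), len(chains[0])
    means = [statistics.mean(c) for c in chains]
    grand_mean = statistics.mean(means)
    between = n / (m - 1) * sum((mu - grand_mean) ** 2 for mu in means)
    within = statistics.mean([statistics.variance(c) for c in chains])
    pooled = (n - 1) / n * within + between / n
    return (pooled / within) ** 0.5


rng = random.Random(0)
# Two chains from different start states (x1 and x3), tracking the indicator of x2.
chains = [[float(s == 1) for s in run_chain(T, start, 5000, rng)] for start in (0, 2)]
print(gelman_rubin(chains))  # close to 1 for this fast-mixing chain
```

Chains stuck in different regions would give R-hat well above 1, signaling that the burn-in period is not yet over.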

Sampling Strategy
- How do we collect the samples?
- Strategy I:
  - Run the chain M times, each run for N steps
    - Each run starts from a different state
  - Return the last state of each run (M chains, one sample each)
- Strategy II:
  - Run one chain for a long time
  - After some "burn-in" period, take a sample every fixed number of steps (M samples from one chain)
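Both strategies, sketched on the three-state example chain (transition rows are our assumed reading of the figure). The parameter values (3000 samples; 30 steps per run for Strategy I; burn-in 100 and spacing 10 for Strategy II) and the function names are illustrative.

```python
import random

# Transition rows for states x1, x2, x3 (indices 0, 1, 2); assumed reading of the figure.
T = [[0.25, 0.0, 0.75], [0.0, 0.7, 0.3], [0.5, 0.5, 0.0]]


def advance(T, x, num_steps, rng):
    """Return the state reached after num_steps transitions from state x."""
    for _ in range(num_steps):
        x = rng.choices(range(len(T)), weights=T[x])[0]
    return x


def strategy_one(T, num_chains, steps_per_chain, rng):
    """Strategy I: independent runs from random start states; keep each run's last state."""
    return [advance(T, rng.randrange(len(T)), steps_per_chain, rng)
            for _ in range(num_chains)]


def strategy_two(T, num_samples, burn_in, spacing, rng):
    """Strategy II: one long run; after burn-in, keep every `spacing`-th state."""
    x = advance(T, rng.randrange(len(T)), burn_in, rng)
    samples = []
    for _ in range(num_samples):
        x = advance(T, x, spacing, rng)
        samples.append(x)
    return samples


def frequencies(samples, num_states):
    return [samples.count(s) / len(samples) for s in range(num_states)]


rng = random.Random(0)
print(frequencies(strategy_one(T, 3000, 30, rng), 3))       # Strategy I
print(frequencies(strategy_two(T, 3000, 100, 10, rng), 3))  # Strategy II
```

Both should approach (0.2, 0.5, 0.3), the stationary distribution under the assumed edges. Here Strategy I spends 90,000 transitions on 3000 samples, Strategy II about 30,100.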

Comparing Strategies
- Strategy I:
  - Better chance of "covering" the space of points, especially if the chain is slow to reach the stationary distribution
  - Has to perform burn-in steps for each chain
- Strategy II:
  - Performs burn-in only once
  - Samples might be correlated (although only weakly)
- Hybrid strategy:
  - Run several chains, and take a few samples from each
  - Combines the benefits of both strategies


Deterministic Search Methods
- Idea: if the distribution is dominated by a small set of instances, it suffices to consider only those instances when approximating the function
- For the instances we generate, x[1], ..., x[M], the estimate is:
  $$\hat{E}_{\mathcal{D}}(f) = \sum_{m=1}^{M} P(x[m])\,f(x[m])$$
- Note: we can obtain lower and upper bounds by examining how much of the probability mass is covered by the P(x[m])
- Key question: how can we enumerate highly probable instantiations?
  - Note: finding the single most probable instantiation is the MPE problem, which is itself NP-hard
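A sketch of the bounds on the example network for f = 1{L = l1}: listed instances contribute their exact mass to the lower bound, and the unlisted mass 1 − Σ_m P(x[m]) is added for the upper bound. Finding the top instances by enumerating all 48 assignments is only a stand-in for a real search procedure, feasible because the network is tiny; names are illustrative.

```python
import itertools

# Example student network (hypothetical encoding): variable -> (parents, CPD).
NETWORK = {
    "D": ((), {(): {"d0": 0.4, "d1": 0.6}}),
    "I": ((), {(): {"i0": 0.7, "i1": 0.3}}),
    "G": (("D", "I"), {
        ("d0", "i0"): {"g0": 0.3, "g1": 0.4, "g2": 0.3},
        ("d0", "i1"): {"g0": 0.05, "g1": 0.25, "g2": 0.7},
        ("d1", "i0"): {"g0": 0.9, "g1": 0.08, "g2": 0.02},
        ("d1", "i1"): {"g0": 0.5, "g1": 0.3, "g2": 0.2},
    }),
    "S": (("I",), {("i0",): {"s0": 0.95, "s1": 0.05},
                   ("i1",): {"s0": 0.2, "s1": 0.8}}),
    "L": (("G",), {("g0",): {"l0": 0.1, "l1": 0.9},
                   ("g1",): {"l0": 0.4, "l1": 0.6},
                   ("g2",): {"l0": 0.99, "l1": 0.01}}),
}
VALUES = {var: list(next(iter(cpd.values()))) for var, (_, cpd) in NETWORK.items()}


def joint(x):
    """P(x) for a full assignment x, as the product of CPD entries."""
    p = 1.0
    for var, (parents, cpd) in NETWORK.items():
        p *= cpd[tuple(x[par] for par in parents)][x[var]]
    return p


def top_instances(k):
    """The k most probable full assignments (exhaustive stand-in for a search)."""
    names = list(VALUES)
    everything = [dict(zip(names, combo)) for combo in itertools.product(*VALUES.values())]
    return sorted(everything, key=joint, reverse=True)[:k]


def bounds(query_var, query_val, particles):
    """Lower and upper bounds on P(query_var = query_val) from the listed instances."""
    covered = sum(joint(x) for x in particles)
    lower = sum(joint(x) for x in particles if x[query_var] == query_val)
    return lower, lower + (1.0 - covered)  # uncovered mass might all satisfy the query


for k in (1, 5, 20):
    print(k, bounds("L", "l1", top_instances(k)))
```

The interval shrinks as k grows and always contains the exact value P(l1) = 0.642084; how fast it shrinks depends on how concentrated the distribution is.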


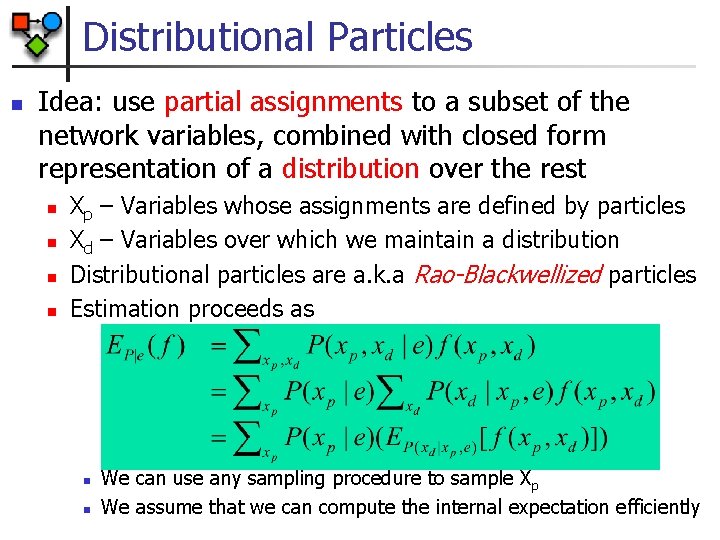

Distributional Particles
- Idea: use partial assignments to a subset of the network variables, combined with a closed-form representation of a distribution over the rest
  - Xp: variables whose assignments are defined by particles
  - Xd: variables over which we maintain a distribution
  - Distributional particles are also known as Rao-Blackwellized particles
- Estimation proceeds as
  $$E_P[f] = \sum_{x_p} P(x_p)\,E_{P(X_d \mid x_p)}\big[f(x_p, X_d)\big] \approx \frac{1}{M}\sum_{m=1}^{M} E_{P(X_d \mid x_p[m])}\big[f(x_p[m], X_d)\big]$$
  (the approximation shown is for particles x_p[m] sampled from P(Xp))
  - We can use any sampling procedure to sample Xp
  - We assume that we can compute the internal expectation efficiently

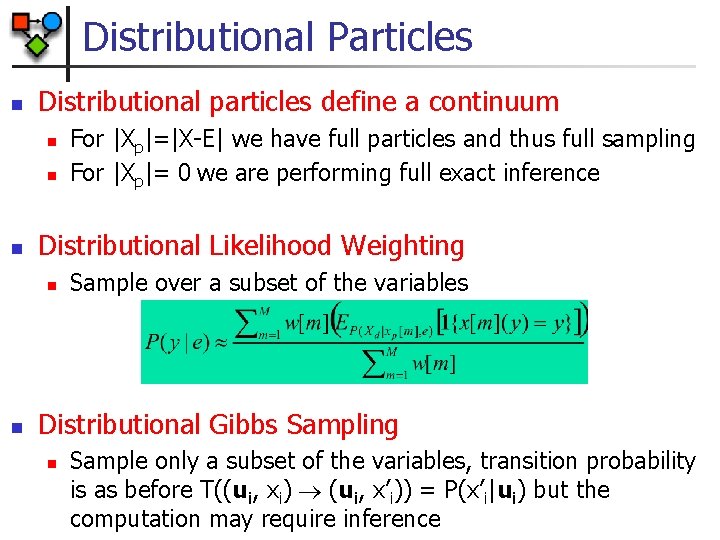
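A Rao-Blackwellized sketch on the example network for f = 1{L = l1}, with Xp = {D, I} sampled and Xd = {G, S, L} handled exactly: the inner expectation Σ_g P(g | d, i) P(l1 | g) is computed in closed form (S can be dropped, since L is independent of S given D and I). The choice of Xp and the names are our own assumptions.

```python
import random

P_D = {"d0": 0.4, "d1": 0.6}
P_I = {"i0": 0.7, "i1": 0.3}
P_G = {  # P(G | D, I)
    ("d0", "i0"): {"g0": 0.3, "g1": 0.4, "g2": 0.3},
    ("d0", "i1"): {"g0": 0.05, "g1": 0.25, "g2": 0.7},
    ("d1", "i0"): {"g0": 0.9, "g1": 0.08, "g2": 0.02},
    ("d1", "i1"): {"g0": 0.5, "g1": 0.3, "g2": 0.2},
}
P_L = {"g0": {"l0": 0.1, "l1": 0.9},  # P(L | G)
       "g1": {"l0": 0.4, "l1": 0.6},
       "g2": {"l0": 0.99, "l1": 0.01}}


def inner_expectation(d, i):
    """E[1{L = l1} | D = d, I = i], computed exactly by summing out G."""
    return sum(p_g * P_L[g]["l1"] for g, p_g in P_G[(d, i)].items())


def rao_blackwellized(num_samples, seed=0):
    """Average the exact inner expectation over sampled particles x_p = (d, i)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(num_samples):
        d = rng.choices(list(P_D), weights=list(P_D.values()))[0]
        i = rng.choices(list(P_I), weights=list(P_I.values()))[0]
        total += inner_expectation(d, i)
    return total / num_samples


print(rao_blackwellized(5000))  # near the exact value P(l1) = 0.642084
```

Each particle now contributes a number between 0 and 1 instead of a 0/1 indicator; for these CPDs the per-particle variance is about 0.048, versus about 0.230 for the indicator used by plain forward sampling.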

Distributional Particles
- Distributional particles define a continuum
  - For |Xp| = |X − E| we have full particles, and thus full sampling
  - For |Xp| = 0 we are performing full exact inference
- Distributional Likelihood Weighting
  - Sample over a subset of the variables
- Distributional Gibbs Sampling
  - Sample only a subset of the variables; the transition probability is as before, T((ui, xi) → (ui, x'i)) = P(x'i | ui), but computing it may require inference

- Slides: 46