TopicFlow Model: Modeling topic-specific global authority

Ramesh Nallapati, Natural Language Processing Group, Stanford University, 2/16/2010

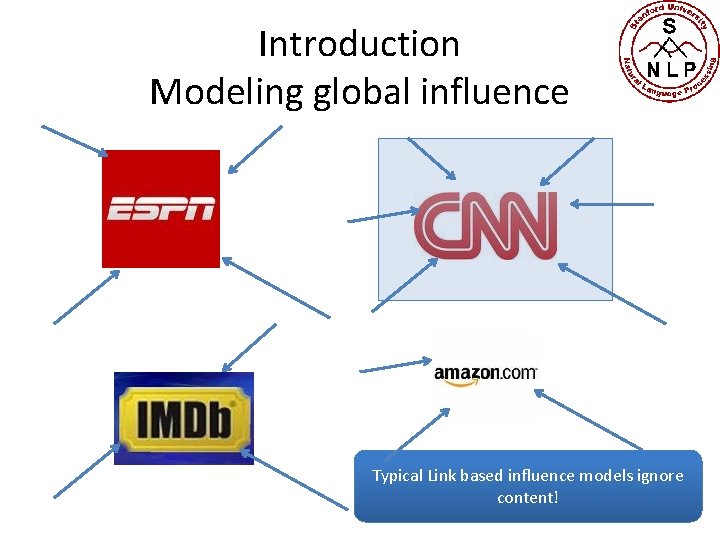

Introduction: Modeling global influence

Typical link-based influence models ignore content!

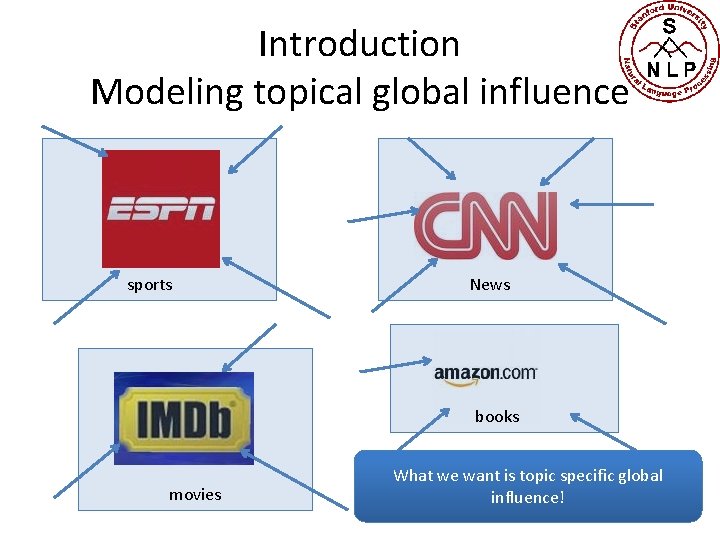

Introduction: Modeling topical global influence

Example topics: sports, news, books, movies.

What we want is topic-specific global influence!

Introduction

- Finding authoritative entities is an important problem in networked data
  - Web search
  - Academic papers
  - Friendship networks
- Popular algorithms exploit link structure but ignore text
  - PageRank [Page et al, 1998]
  - HITS [Kleinberg, 1999]
- Often, we want to find authoritative entities on a specific topic
  - An entity's authoritativeness is highly topic dependent
  - E.g.: Obama scores high in politics but is unknown in sports!

![Introduction: Topic Sensitive PageRank](http://slidetodoc.com/presentation_image/1c166ca52fd85575eff4d956949d4e67/image-5.jpg)

Introduction

- Candidate solution: Topic Sensitive PageRank [Haveliwala, 2003]
  - PageRank is run independently for each topic
    - Stationary distribution of a random web surfer
    - Has a teleportation probability to reach any document from any other document, which makes the chain ergodic (irreducible and aperiodic)
  - The 'teleportation' probability is assigned only to documents on that topic
  - However: requires topic labels to be pre-specified
    - Many document corpora are unlabeled
  - Question: can we learn both topics and topical authoritativeness simultaneously?
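
The topic-restricted teleportation idea can be illustrated with a minimal power-iteration sketch. This is not Haveliwala's implementation: the function name `topic_sensitive_pagerank`, the damping value 0.85, the handling of dangling documents, and the toy graph are all assumptions made for illustration.

```python
def topic_sensitive_pagerank(out_links, topic_docs, damping=0.85, iters=100):
    """Power iteration where teleportation lands only on documents of one topic.

    out_links: {doc: [docs it links to]}; every link target must also be a key.
    topic_docs: set of documents labeled with the topic (assumed given).
    """
    nodes = list(out_links)
    # Teleportation vector: uniform over on-topic documents, zero elsewhere.
    teleport = {n: (1.0 / len(topic_docs) if n in topic_docs else 0.0) for n in nodes}
    rank = dict(teleport)
    for _ in range(iters):
        new_rank = {n: (1.0 - damping) * teleport[n] for n in nodes}
        for n in nodes:
            targets = out_links[n]
            if targets:
                share = damping * rank[n] / len(targets)
                for t in targets:
                    new_rank[t] += share
            else:
                # Assumption: a document with no out-links teleports with probability 1.
                for m in nodes:
                    new_rank[m] += damping * rank[n] * teleport[m]
        rank = new_rank
    return rank


# Toy graph (hypothetical): a -> b -> c -> a, plus d -> a; only "a" is on-topic.
toy_links = {"a": ["b"], "b": ["c"], "c": ["a"], "d": ["a"]}
ranks = topic_sensitive_pagerank(toy_links, topic_docs={"a"})
```

In this toy graph, document `d` receives no links and no teleportation mass, so its topical score is zero even though it is part of the network. The scores depend entirely on the pre-specified `topic_docs` labels, which is the limitation the slide points out.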

![Introduction: Topic models](http://slidetodoc.com/presentation_image/1c166ca52fd85575eff4d956949d4e67/image-6.jpg)

Introduction

- One possible candidate family: Topic models [Blei et al, 2003]
  - Unsupervised generative models for text
  - Automatically learn 'topics' from a corpus
- Latent Dirichlet Allocation:

Past work

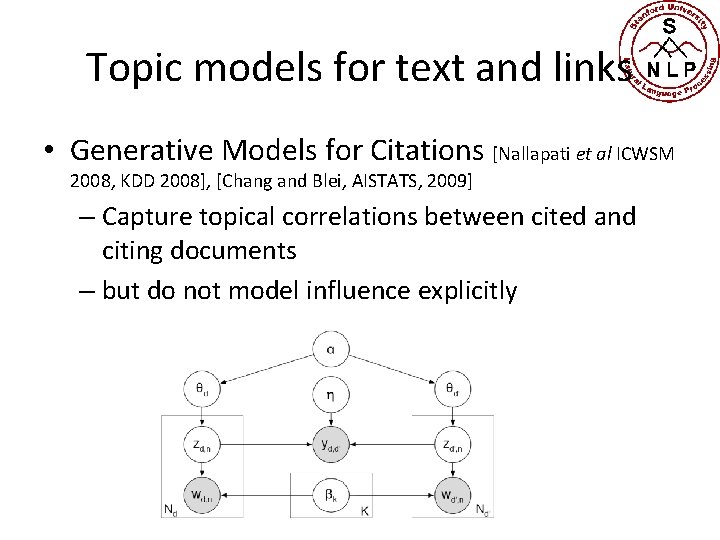

Topic models for text and links

- Generative models for citations [Nallapati et al, ICWSM 2008, KDD 2008], [Chang and Blei, AISTATS 2009]
  - Capture topical correlations between cited and citing documents
  - But do not model influence explicitly

![Topic models for text and links: Citation influence](http://slidetodoc.com/presentation_image/1c166ca52fd85575eff4d956949d4e67/image-9.jpg)

Topic models for text and links

- Citation influence [Dietz et al, ICML 2007]
- HTM [Sun et al, EMNLP 2009]
  - Only capture local influences, not global

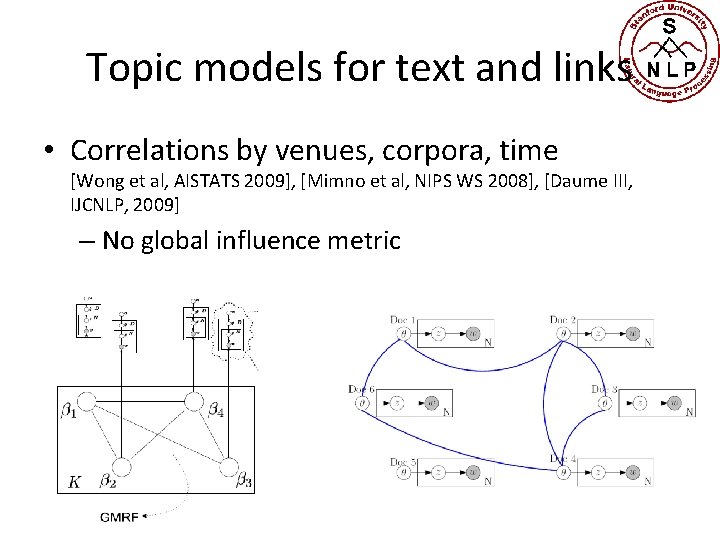

Topic models for text and links

- Correlations by venues, corpora, time [Wong et al, AISTATS 2009], [Mimno et al, NIPS WS 2008], [Daume III, IJCNLP 2009]
  - No global influence metric

TopicFlow model

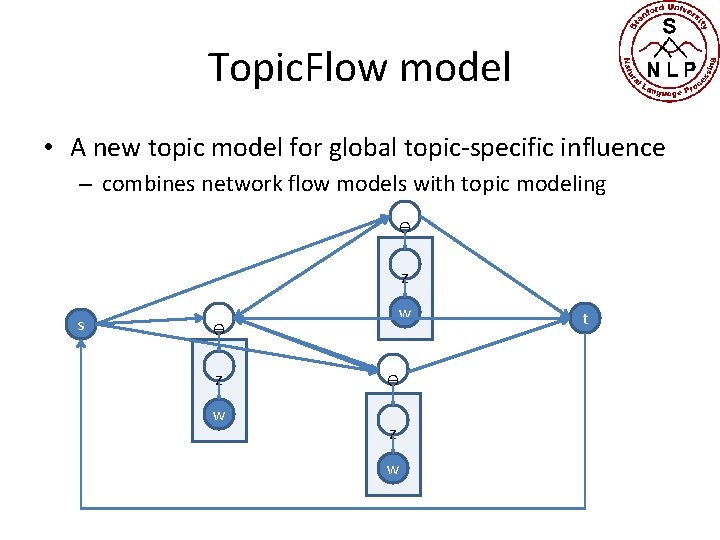

TopicFlow model

- A new topic model for global topic-specific influence
  - Combines network flow models with topic modeling

[Figure: flow network from a source s to a sink t through documents, each with θ, z, and w]

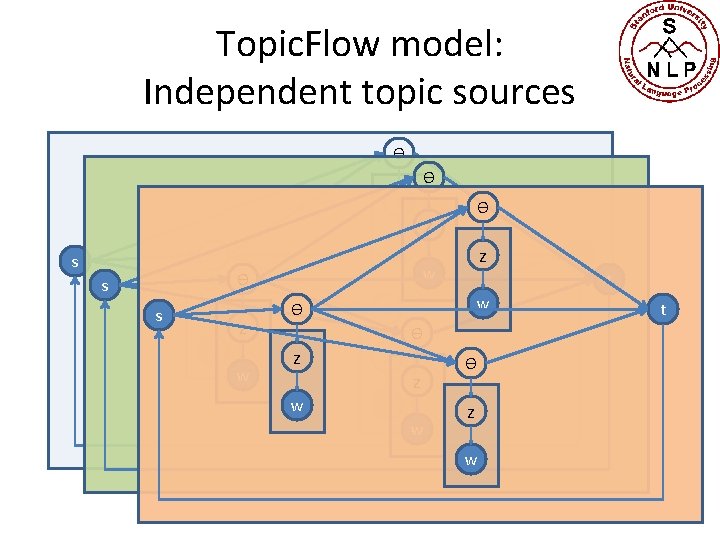

TopicFlow model: Independent topic sources

[Figure: flow network over documents (θ, z, w) with independent per-topic sources s and sinks t]

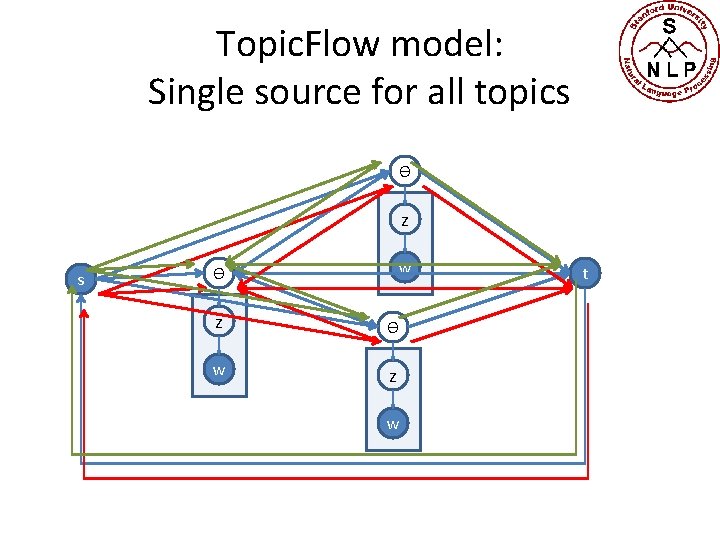

TopicFlow model: Single source for all topics

[Figure: flow network over documents (θ, z, w) with a single source s and a single sink t]

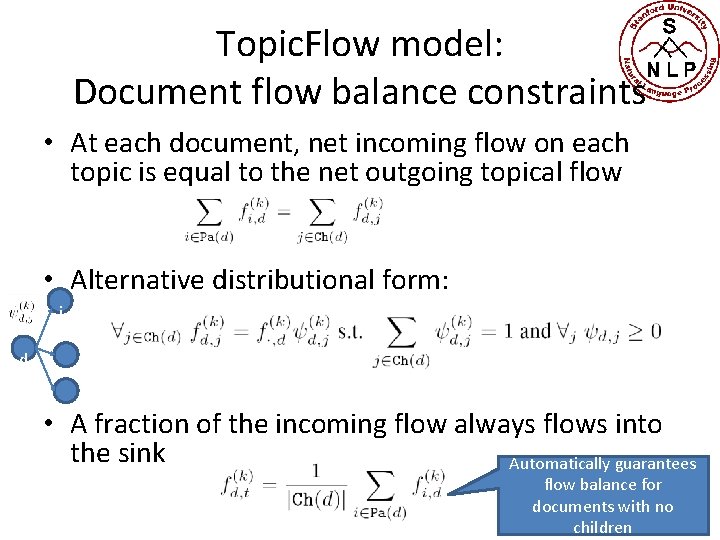

TopicFlow model: Document flow balance constraints

- At each document, the net incoming flow on each topic is equal to the net outgoing topical flow
- Alternative distributional form:
- A fraction of the incoming flow always flows into the sink
  - Automatically guarantees flow balance for documents with no children

[Figure: document d and a linked document j]
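
The distributional view of flow balance can be sketched for a single topic as follows. This is only an illustration under stated assumptions: the function `propagate_flow`, the `SINK` label, the toy fractions, and the convention that edges point in the direction of flow are hypothetical and not taken from the slides. Each document splits its incoming flow among its children and the sink according to a distribution that sums to one, so balance holds by construction.

```python
from collections import defaultdict

SINK = "sink"  # hypothetical label for the sink node


def propagate_flow(source_out, out_dist, order):
    """Push one topic's flow through an acyclic network.

    source_out: {doc: flow received directly from the source}
    out_dist:   {doc: {child_or_SINK: fraction}}, fractions sum to 1 per doc
    order:      documents in topological order (parents before children)
    Returns (inflow per node, flow per edge).
    """
    inflow = defaultdict(float)
    for doc, flow in source_out.items():
        inflow[doc] += flow
    edge_flow = {}
    for doc in order:
        for target, fraction in out_dist[doc].items():
            edge_flow[(doc, target)] = inflow[doc] * fraction
            inflow[target] += edge_flow[(doc, target)]
    return dict(inflow), edge_flow


# Toy network (hypothetical): a -> b, a -> c, b -> c; every document also feeds the sink.
source_out = {"a": 1.0}
out_dist = {
    "a": {"b": 0.5, "c": 0.3, SINK: 0.2},
    "b": {"c": 0.6, SINK: 0.4},
    "c": {SINK: 1.0},  # no children: all incoming flow goes to the sink
}
inflow, edge_flow = propagate_flow(source_out, out_dist, ["a", "b", "c"])

# Flow balance: what leaves each document equals what entered it.
for doc in out_dist:
    outflow = sum(f for (src, _), f in edge_flow.items() if src == doc)
    assert abs(outflow - inflow[doc]) < 1e-9
```

Document `c` has no children, yet it is still balanced because its whole inflow is routed to the sink, which matches the last bullet on the slide.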

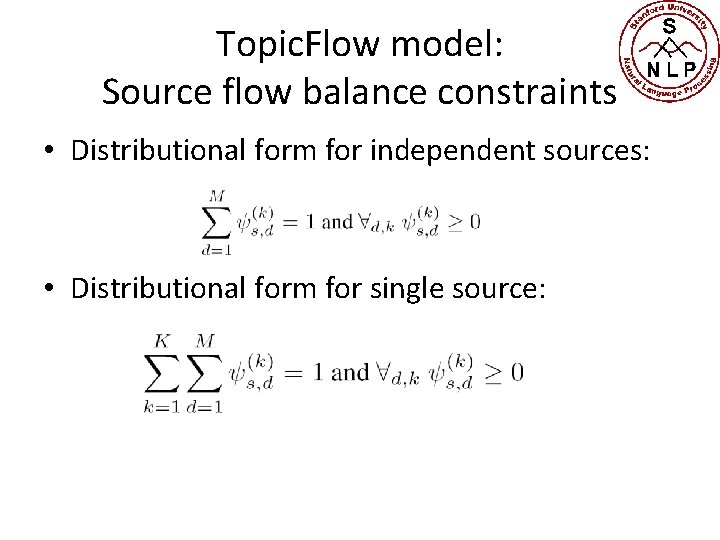

TopicFlow model: Source flow balance constraints

- Distributional form for independent sources:
- Distributional form for single source:

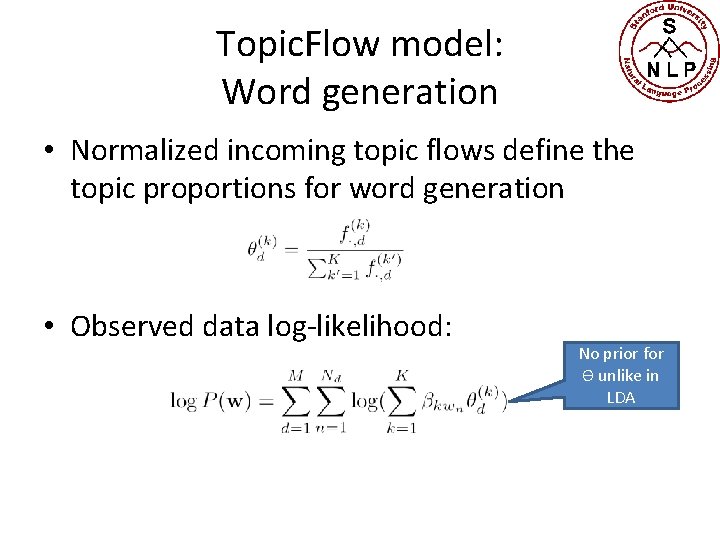

TopicFlow model: Word generation

- Normalized incoming topic flows define the topic proportions for word generation
- Observed data log-likelihood:
  - No prior for θ, unlike in LDA
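
A minimal sketch of how normalized inflows could act as topic proportions, assuming the usual mixture form in which each word is drawn from a topic chosen according to θ. The function names, the `beta` matrix of topic-word probabilities, and the toy numbers are illustrative assumptions, not the author's code.

```python
import math


def topic_proportions(inflow):
    """Normalize a document's per-topic incoming flow into proportions (theta)."""
    total = sum(inflow)
    return [f / total for f in inflow]


def doc_log_likelihood(word_ids, theta, beta):
    """Sum over words of log sum_k theta[k] * beta[k][w]; theta has no prior."""
    num_topics = len(theta)
    return sum(
        math.log(sum(theta[k] * beta[k][w] for k in range(num_topics)))
        for w in word_ids
    )


# Toy example (hypothetical): 2 topics, vocabulary of 2 words.
theta = topic_proportions([0.3, 0.1])   # -> proportions 0.75 and 0.25
beta = [[0.5, 0.5], [0.9, 0.1]]         # beta[k][w] = p(word w | topic k)
ll = doc_log_likelihood([0, 1], theta, beta)
```

Because θ is a deterministic function of the flows rather than a draw from a Dirichlet prior, the likelihood depends on the network only through the inflow values.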

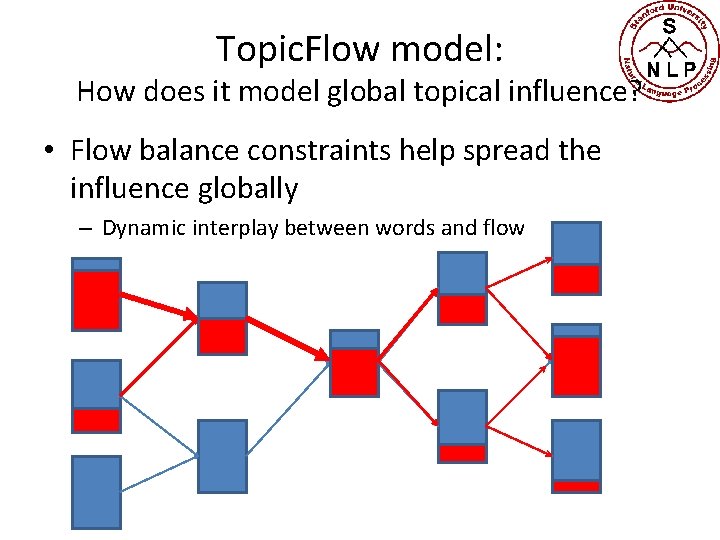

TopicFlow model: How does it model global topical influence?

- Flow balance constraints help spread the influence globally
  - Dynamic interplay between words and flow

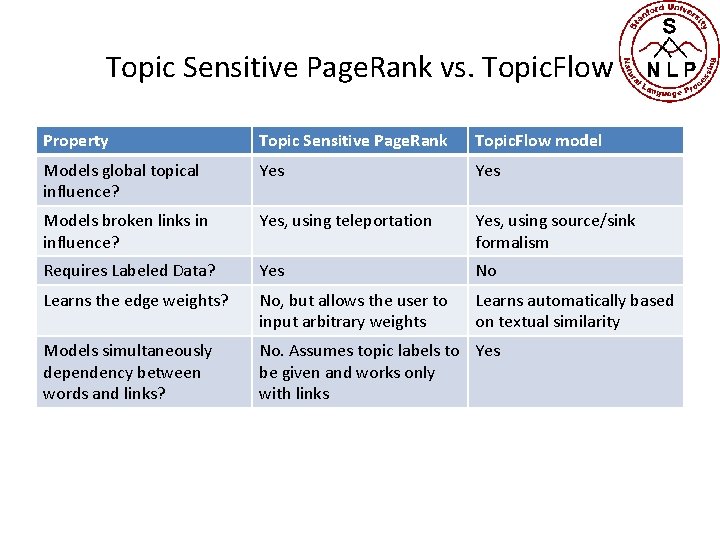

Topic Sensitive PageRank vs. TopicFlow

| Property | Topic Sensitive PageRank | TopicFlow model |
| --- | --- | --- |
| Models global topical influence? | Yes | Yes |
| Models broken links in influence? | Yes, using teleportation | Yes, using source/sink formalism |
| Requires labeled data? | Yes | No |
| Learns the edge weights? | No, but allows the user to input arbitrary weights | Learns automatically based on textual similarity |
| Models simultaneously dependency between words and links? | No. Assumes topic labels to be given and works only with links | Yes |

Learning and inference

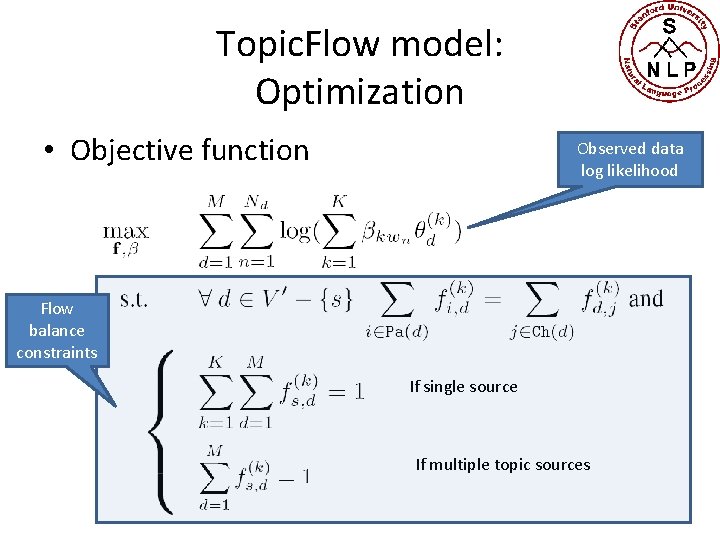

TopicFlow model: Optimization

- Objective function
  - Observed data log-likelihood
  - Flow balance constraints
    - If single source
    - If multiple topic sources

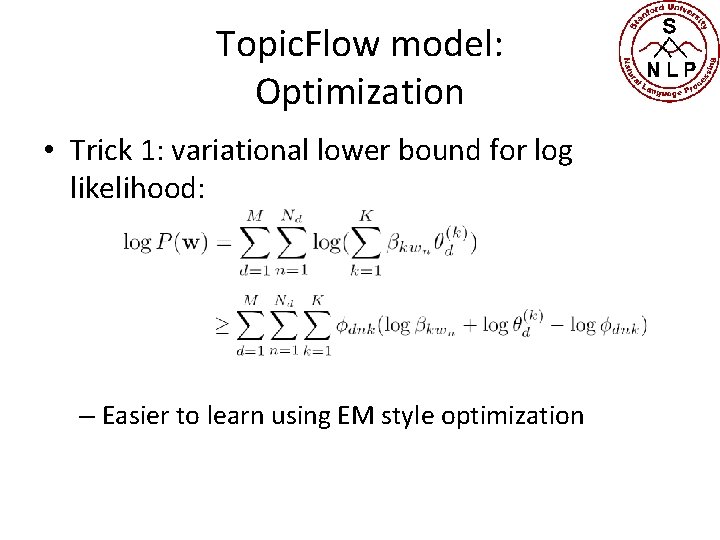

TopicFlow model: Optimization

- Trick 1: variational lower bound for the log-likelihood
  - Easier to learn using EM-style optimization

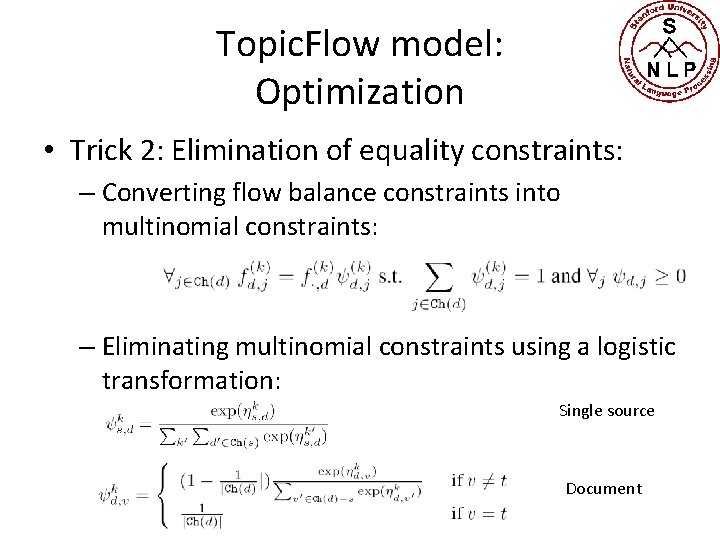

TopicFlow model: Optimization

- Trick 2: elimination of equality constraints
  - Converting flow balance constraints into multinomial constraints:
  - Eliminating multinomial constraints using a logistic transformation:
    - Single source
    - Document
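
One common way to remove a multinomial (sum-to-one) constraint is to reparameterize the distribution with unconstrained real variables and map them through a softmax. The sketch below assumes that this is the kind of logistic transformation meant here; the exact parameterization on the slide is not recoverable, and the function name is illustrative.

```python
import math


def softmax(x):
    """Map unconstrained reals to a distribution (positive entries summing to 1)."""
    m = max(x)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in x]
    z = sum(exps)
    return [e / z for e in exps]


# Any real-valued vector yields a valid outflow distribution, so an optimizer
# can update the logits freely without an explicit equality constraint.
logits = [0.0, 1.5, -2.0]  # hypothetical logistic variables for one document
outflow_dist = softmax(logits)
```

With this change of variables, gradient-based updates act on the logits, and the resulting distribution over children and the sink stays valid after every step.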

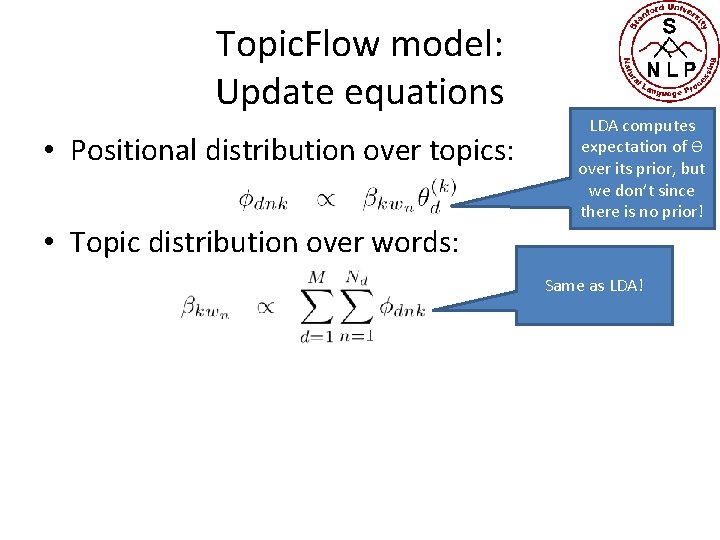

TopicFlow model: Update equations

- Positional distribution over topics:
  - LDA computes the expectation of θ over its prior, but we don't, since there is no prior!
- Topic distribution over words:
  - Same as LDA!
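
The update equations themselves are not recoverable from the slide, so the following is only a plausible EM-style sketch consistent with the two remarks above. It assumes the positional distribution for each word is proportional to θ times the topic-word probability (θ used directly, with no expectation over a prior), and that topic-word distributions are re-estimated from expected counts as in LDA without smoothing. All names and numbers are hypothetical.

```python
def e_step(word_ids, theta, beta):
    """Positional distribution over topics: phi[n][k] proportional to theta[k] * beta[k][w_n]."""
    phi = []
    for w in word_ids:
        weights = [theta[k] * beta[k][w] for k in range(len(theta))]
        total = sum(weights)
        phi.append([x / total for x in weights])
    return phi


def m_step_beta(docs, phis, num_topics, vocab_size):
    """Re-estimate topic-word distributions from expected counts."""
    counts = [[0.0] * vocab_size for _ in range(num_topics)]
    for word_ids, phi in zip(docs, phis):
        for w, row in zip(word_ids, phi):
            for k, p in enumerate(row):
                counts[k][w] += p
    return [[c / sum(row) for c in row] for row in counts]


# Toy example (hypothetical): one document with words [0, 1], two topics.
em_theta = [0.75, 0.25]
em_beta = [[0.5, 0.5], [0.9, 0.1]]
phi = e_step([0, 1], em_theta, em_beta)
new_beta = m_step_beta([[0, 1]], [phi], num_topics=2, vocab_size=2)
```

In the full model θ would itself be recomputed from the flows after each update of the outflow variables; that step is omitted here.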

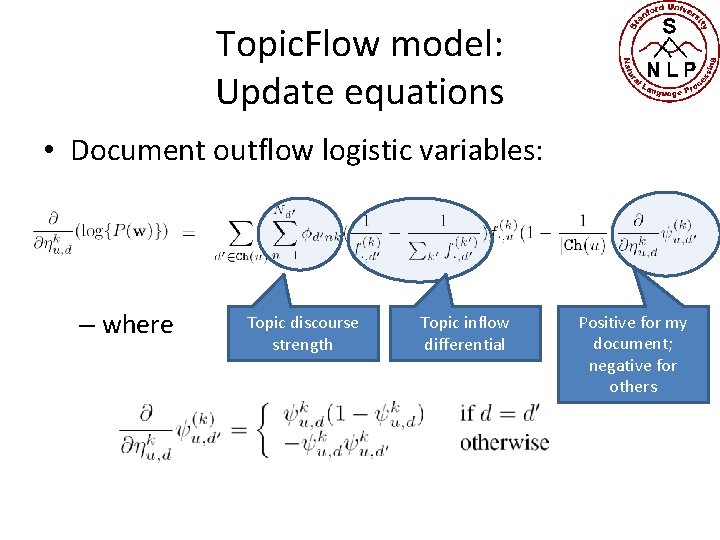

TopicFlow model: Update equations

- Document outflow logistic variables:
  - where
    - Topic discourse strength
    - Topic inflow differential
    - Positive for my document; negative for others

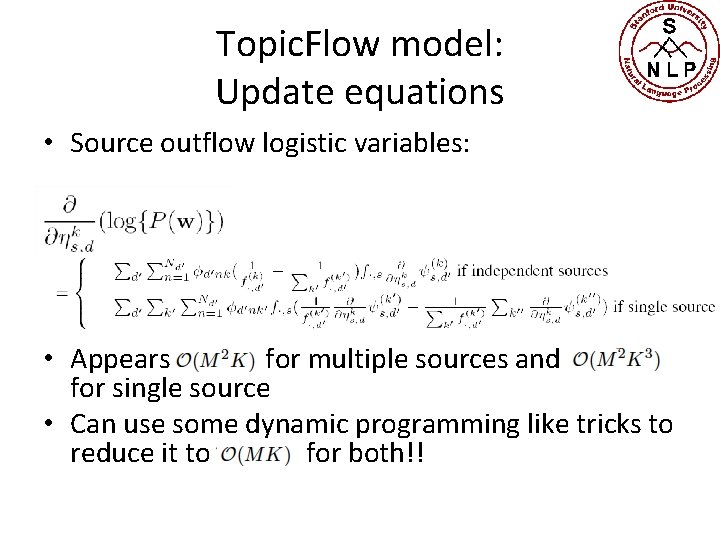

TopicFlow model: Update equations

- Source outflow logistic variables:
- Appears for multiple sources and for single source
- Can use some dynamic-programming-like tricks to reduce it to for both!!

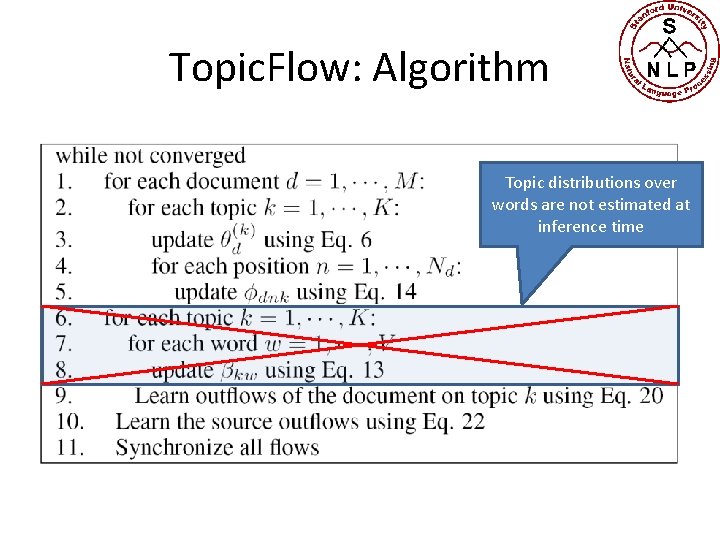

TopicFlow: Algorithm

Topic distributions over words are not estimated at inference time.

Experiments and results

Dataset 1: Cora

- Abstracts of research papers in CS
- Removed documents with no links
- Training set:
  - 11,442 documents
  - 24,582 links
- Test set:
  - 11,230 documents
  - 23,379 links
- 7,185 unique words after stopping and stemming

Dataset 2: ACL anthology

- Training set:
  - Full content of 9,824 papers published in or before 2005
  - 36,604 hyperlinks
- Test set:
  - Abstracts of 1,040 test documents published after 2005
  - No hyperlinks within the test set
  - Hyperlinks to the training documents used only for testing on the citation recommendation task
- 46,160 unique words after stopping and stemming

Qualitative results

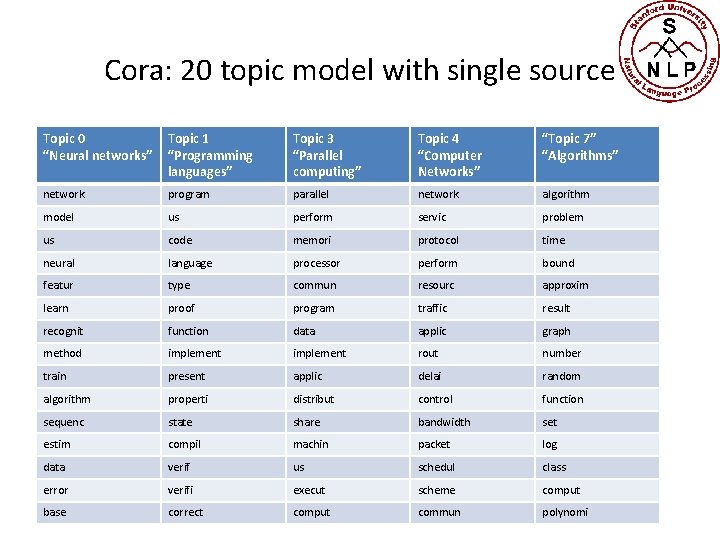

Cora: 20 topic model with single source

| Topic 0 "Neural networks" | Topic 1 "Programming languages" | Topic 3 "Parallel computing" | Topic 4 "Computer Networks" | Topic 7 "Algorithms" |
| --- | --- | --- | --- | --- |
| network | program | parallel | network | algorithm |
| model | us | perform | servic | problem |
| us | code | memori | protocol | time |
| neural | language | processor | perform | bound |
| featur | type | commun | resourc | approxim |
| learn | proof | program | traffic | result |
| recognit | function | data | applic | graph |
| method | implement | | rout | number |
| train | present | applic | delai | random |
| algorithm | properti | distribut | control | function |
| sequenc | state | share | bandwidth | set |
| estim | compil | machin | packet | log |
| data | verif | us | schedul | class |
| error | verifi | execut | scheme | comput |
| base | correct | comput | commun | polynomi |

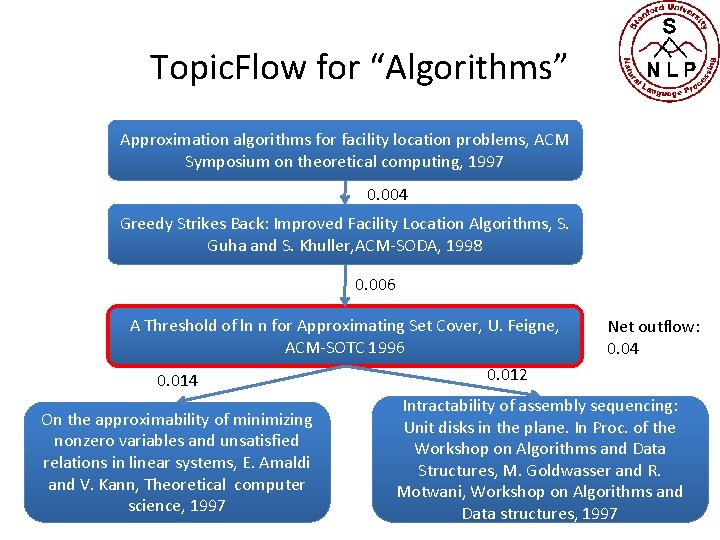

TopicFlow for "Algorithms"

[Figure: topic flow graph among the papers below. Net outflow: 0.04. Edge flows shown: 0.004, 0.006, 0.014, 0.012]

- Approximation Algorithms for Facility Location Problems, ACM Symposium on Theoretical Computing, 1997
- Greedy Strikes Back: Improved Facility Location Algorithms, S. Guha and S. Khuller, ACM-SODA, 1998
- A Threshold of ln n for Approximating Set Cover, U. Feige, ACM-STOC, 1996
- On the Approximability of Minimizing Nonzero Variables and Unsatisfied Relations in Linear Systems, E. Amaldi and V. Kann, Theoretical Computer Science, 1997
- Intractability of Assembly Sequencing: Unit Disks in the Plane, M. Goldwasser and R. Motwani, Workshop on Algorithms and Data Structures, 1997

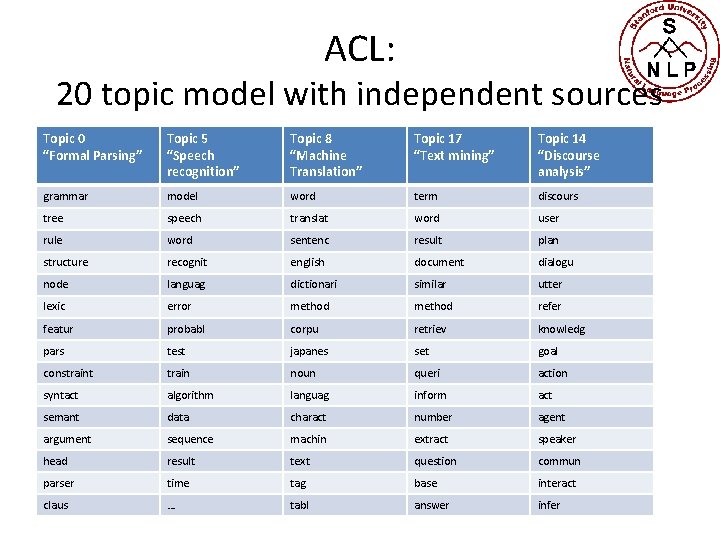

ACL: 20 topic model with independent sources

| Topic 0 "Formal Parsing" | Topic 5 "Speech recognition" | Topic 8 "Machine Translation" | Topic 17 "Text mining" | Topic 14 "Discourse analysis" |
| --- | --- | --- | --- | --- |
| grammar | model | word | term | discours |
| tree | speech | translat | word | user |
| rule | word | sentenc | result | plan |
| structure | recognit | english | document | dialogu |
| node | languag | dictionari | similar | utter |
| lexic | error | method | | refer |
| featur | probabl | corpu | retriev | knowledg |
| pars | test | japanes | set | goal |
| constraint | train | noun | queri | action |
| syntact | algorithm | languag | inform | act |
| semant | data | charact | number | agent |
| argument | sequence | machin | extract | speaker |
| head | result | text | question | commun |
| parser | time | tag | base | interact |
| claus | … | tabl | answer | infer |

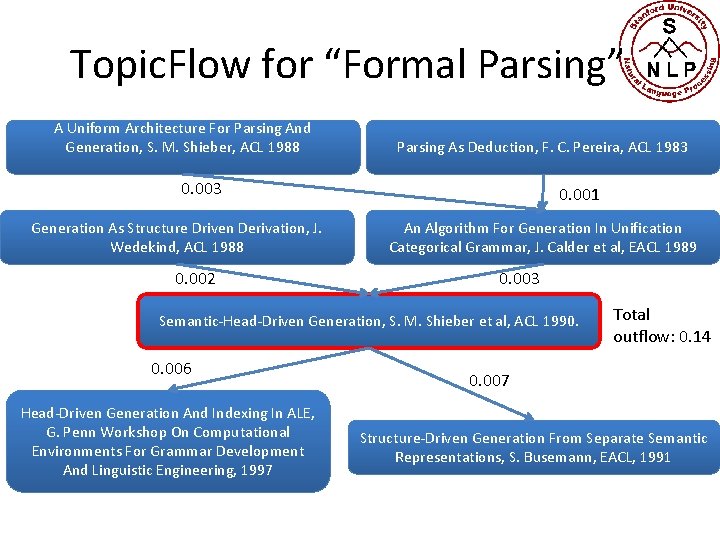

TopicFlow for "Formal Parsing"

[Figure: topic flow graph among the papers below. Total outflow: 0.14. Edge flows shown: 0.003, 0.002, 0.001, 0.003, 0.006, 0.007]

- A Uniform Architecture For Parsing And Generation, S. M. Shieber, ACL 1988
- Parsing As Deduction, F. C. Pereira, ACL 1983
- Generation As Structure Driven Derivation, J. Wedekind, ACL 1988
- An Algorithm For Generation In Unification Categorical Grammar, J. Calder et al, EACL 1989
- Semantic-Head-Driven Generation, S. M. Shieber et al, ACL 1990
- Head-Driven Generation And Indexing In ALE, G. Penn, Workshop On Computational Environments For Grammar Development And Linguistic Engineering, 1997
- Structure-Driven Generation From Separate Semantic Representations, S. Busemann, EACL 1991

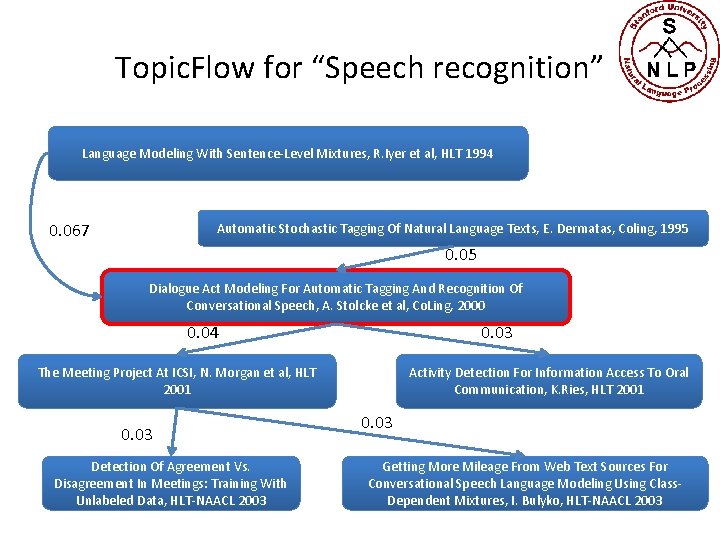

TopicFlow for "Speech recognition"

[Figure: topic flow graph among the papers below. Edge flows shown: 0.067, 0.05, 0.04, 0.03, 0.03, 0.03]

- Language Modeling With Sentence-Level Mixtures, R. Iyer et al, HLT 1994
- Automatic Stochastic Tagging Of Natural Language Texts, E. Dermatas, Coling 1995
- Dialogue Act Modeling For Automatic Tagging And Recognition Of Conversational Speech, A. Stolcke et al, Coling 2000
- The Meeting Project At ICSI, N. Morgan et al, HLT 2001
- Detection Of Agreement Vs. Disagreement In Meetings: Training With Unlabeled Data, HLT-NAACL 2003
- Activity Detection For Information Access To Oral Communication, K. Ries, HLT 2001
- Getting More Mileage From Web Text Sources For Conversational Speech Language Modeling Using Class-Dependent Mixtures, I. Bulyko, HLT-NAACL 2003

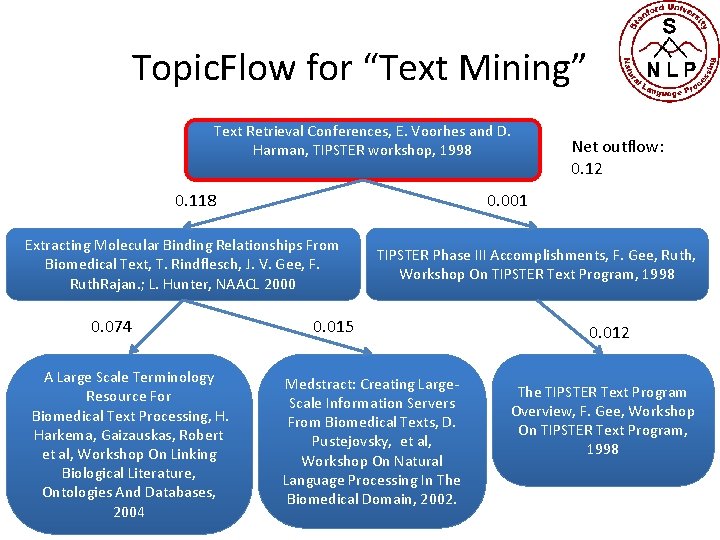

TopicFlow for "Text Mining"

[Figure: topic flow graph among the papers below. Net outflow: 0.12. Edge flows shown: 0.118, 0.001, 0.074, 0.015, 0.012]

- Text Retrieval Conferences, E. Voorhees and D. Harman, TIPSTER workshop, 1998
- Extracting Molecular Binding Relationships From Biomedical Text, T. Rindflesch, J. V. Gee, F. Ruth. Rajan, L. Hunter, NAACL 2000
- A Large Scale Terminology Resource For Biomedical Text Processing, H. Harkema, Gaizauskas, Robert et al, Workshop On Linking Biological Literature, Ontologies And Databases, 2004
- TIPSTER Phase III Accomplishments, F. Gee, Ruth, Workshop On TIPSTER Text Program, 1998
- Medstract: Creating Large-Scale Information Servers From Biomedical Texts, D. Pustejovsky et al, Workshop On Natural Language Processing In The Biomedical Domain, 2002
- The TIPSTER Text Program Overview, F. Gee, Workshop On TIPSTER Text Program, 1998

Quantitative results

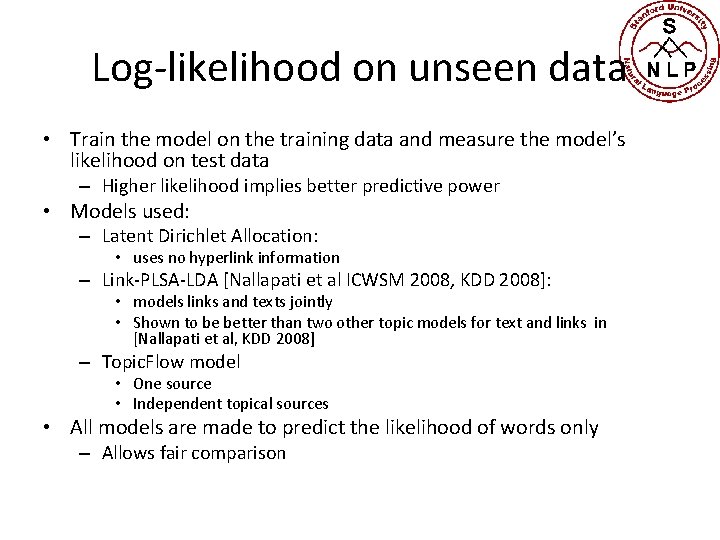

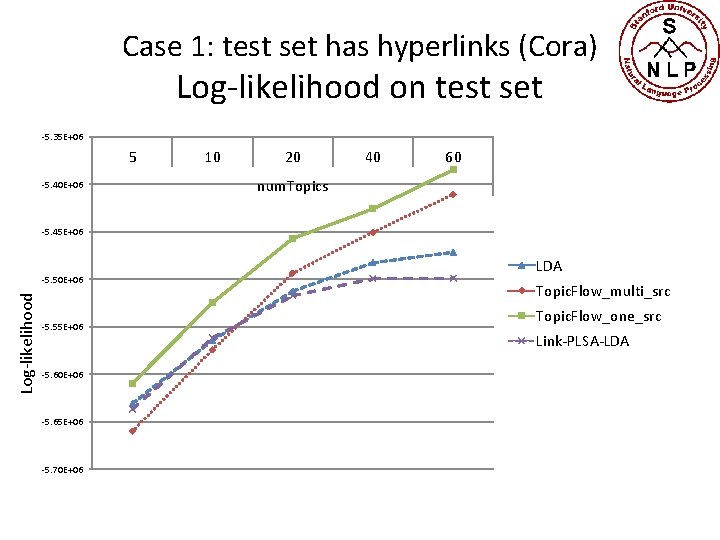

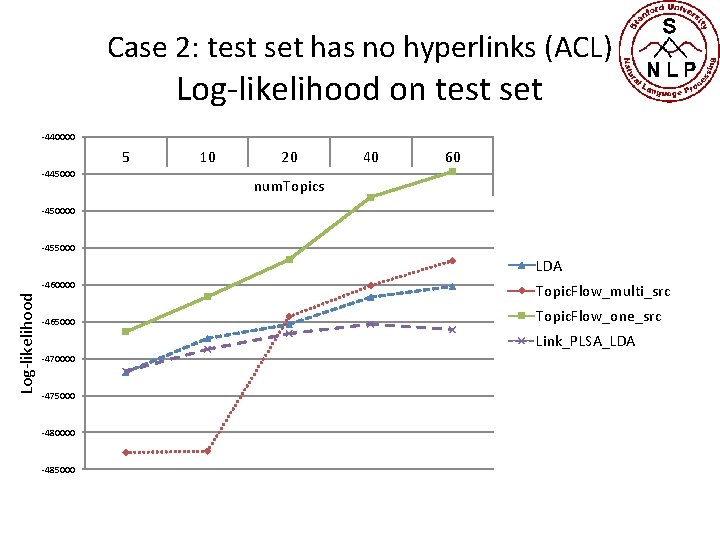

Log-likelihood on unseen data

- Train the model on the training data and measure the model's likelihood on test data
  - Higher likelihood implies better predictive power
- Models used:
  - Latent Dirichlet Allocation:
    - Uses no hyperlink information
  - Link-PLSA-LDA [Nallapati et al, ICWSM 2008, KDD 2008]:
    - Models links and texts jointly
    - Shown to be better than two other topic models for text and links in [Nallapati et al, KDD 2008]
  - TopicFlow model
    - One source
    - Independent topical sources
- All models are made to predict the likelihood of words only
  - Allows fair comparison

Case 1: test set has hyperlinks (Cora)

[Figure: log-likelihood on the test set (y-axis, from -5.70E+06 to -5.35E+06) vs. number of topics (5, 10, 20, 40, 60) for LDA, TopicFlow_multi_src, TopicFlow_one_src, and Link-PLSA-LDA]

Case 2: test set has no hyperlinks (ACL)

[Figure: log-likelihood on the test set (y-axis, from -485000 to -440000) vs. number of topics (5, 10, 20, 40, 60) for LDA, TopicFlow_multi_src, TopicFlow_one_src, and Link_PLSA_LDA]

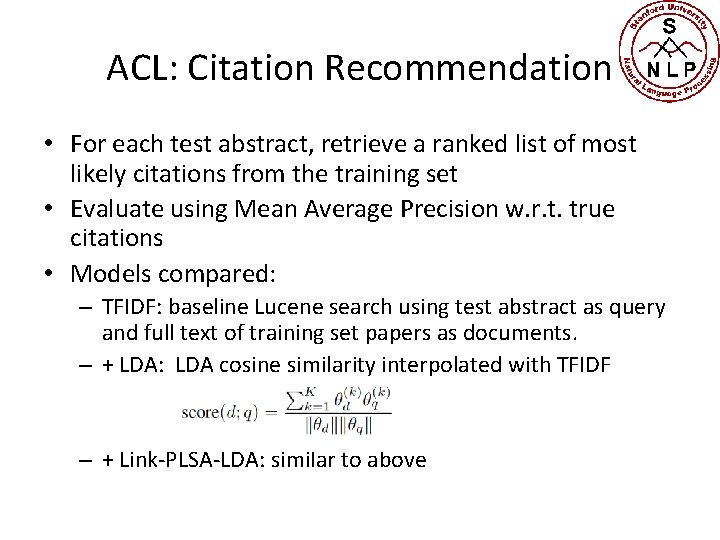

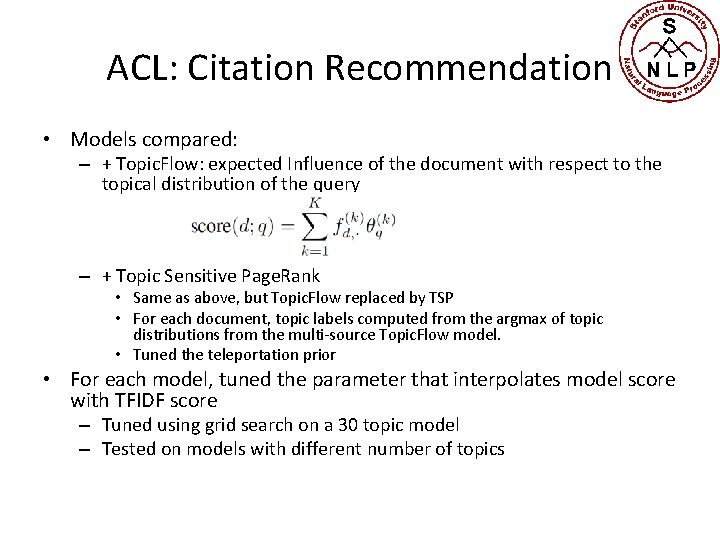

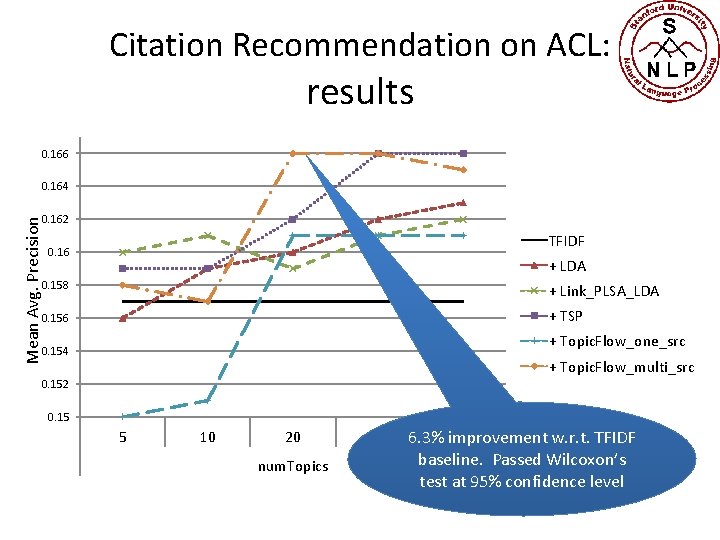

ACL: Citation Recommendation

- For each test abstract, retrieve a ranked list of most likely citations from the training set
- Evaluate using Mean Average Precision w.r.t. true citations
- Models compared:
  - TFIDF: baseline Lucene search using the test abstract as query and the full text of training set papers as documents
  - + LDA: LDA cosine similarity interpolated with TFIDF
  - + Link-PLSA-LDA: similar to above
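
For reference, Mean Average Precision over ranked citation lists can be computed as in the sketch below. It uses the standard definition (precision at each rank where a true citation appears, averaged over the true citations, then averaged over queries); the function names and toy data are illustrative, and this is not the evaluation code used for the experiments.

```python
def average_precision(ranked, relevant):
    """Average of precision@i over the ranks i at which a relevant item appears."""
    hits = 0
    total = 0.0
    for i, doc in enumerate(ranked, start=1):
        if doc in relevant:
            hits += 1
            total += hits / i
    return total / len(relevant) if relevant else 0.0


def mean_average_precision(rankings, truths):
    """Mean of average precision over all queries (here: test abstracts)."""
    aps = [average_precision(r, t) for r, t in zip(rankings, truths)]
    return sum(aps) / len(aps)


# Toy example (hypothetical): two test abstracts with their ranked candidates.
rankings = [["a", "b", "c", "d"], ["x", "y"]]
truths = [{"a", "c"}, {"y"}]
map_score = mean_average_precision(rankings, truths)
```

In the first query the true citations appear at ranks 1 and 3, giving an average precision of (1/1 + 2/3) / 2; in the second the single true citation is at rank 2, giving 1/2.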

ACL: Citation Recommendation

- Models compared:
  - + TopicFlow: expected influence of the document with respect to the topical distribution of the query
  - + Topic Sensitive PageRank (TSP)
    - Same as above, but TopicFlow replaced by TSP
    - For each document, topic labels computed from the argmax of topic distributions from the multi-source TopicFlow model
    - Tuned the teleportation prior
- For each model, tuned the parameter that interpolates the model score with the TFIDF score
  - Tuned using grid search on a 30 topic model
  - Tested on models with different numbers of topics
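
A small sketch of how the "+ TopicFlow" score might be formed, assuming that expected influence is the query's topic distribution dotted with a document's per-topic influence, and that interpolation with TFIDF is linear with a single weight `lam`. Both the linear form and every name below are assumptions; the slides do not give the formula.

```python
def expected_influence(query_topics, doc_influence):
    """Sum over topics of p(topic | query) * influence of the document on that topic."""
    return sum(q * f for q, f in zip(query_topics, doc_influence))


def interpolate(model_score, tfidf_score, lam):
    """Assumed linear interpolation; lam = 0 reduces to the TFIDF baseline."""
    return lam * model_score + (1.0 - lam) * tfidf_score


def rank_candidates(query_topics, candidates, lam):
    """candidates: {doc_id: (per-topic influence, tfidf score)}; best first."""
    scored = [
        (interpolate(expected_influence(query_topics, infl), tfidf, lam), doc_id)
        for doc_id, (infl, tfidf) in candidates.items()
    ]
    return [doc_id for _, doc_id in sorted(scored, reverse=True)]


# Toy example (hypothetical): the query is mostly about topic 0.
query_topics = [0.8, 0.2]
candidates = {"A": ([0.10, 0.00], 0.2), "B": ([0.00, 0.10], 0.3)}
baseline_order = rank_candidates(query_topics, candidates, lam=0.0)
influence_order = rank_candidates(query_topics, candidates, lam=1.0)
```

A grid search over `lam` would rerun the ranking for each candidate value and keep the one with the best Mean Average Precision on held-out queries. In practice the two scores may need rescaling before they are mixed, which this sketch ignores.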

Citation Recommendation on ACL: results

[Figure: Mean Average Precision (y-axis, from 0.15 to 0.166) vs. number of topics (5, 10, 20, 40, 60) for TFIDF, + LDA, + Link_PLSA_LDA, + TSP, + TopicFlow_one_src, and + TopicFlow_multi_src]

6.3% improvement w.r.t. the TFIDF baseline. Passed Wilcoxon's test at the 95% confidence level.

Conclusions

- New TopicFlow model automatically learns topic-specific global influence of entities
- Better model of text than LDA as well as several joint models of text and citations
- Is able to capture the notion of topical influence on par with TSP (as measured w.r.t. citation recommendation)
  - Unlike TSP, does not require topic labels to be pre-specified
- Future work
  - Model can be applied to a wide range of other textual networked data such as web, blogs, social media, etc.
  - Plan to build a graphical browser that allows users to track the propagation of influence on a specific topic

Resources

- Implementation available in JavaNLP under Research project as edu.stanford.nlp.topicmodeling.topicflow
  - Read Package.html for usage instructions
- A more detailed version of this presentation is available as a manuscript for svn-checkout at:
  - svn+ssh://username@jamie.stanford.edu/u/nlp/svnroot/trunk/papers/topicflow
- This presentation is available at
  - www.cs.stanford.edu/~nmramesh/TopicFlow.pptx
  - Link not visible from the web page
- This is unpublished work, so please do not share or distribute!!

Acknowledgments

- Dan Ramage
  - TSP implementation
  - Other important modeling suggestions
- David Vickrey and Rajat Raina
  - Suggestions on optimization
- Steve Bethard
  - Citation retrieval implementation
  - Wilcoxon test software
