Software Distributed Shared Memory (SDSM): MultiView

1. SDSM, false sharing
2. Solution: MultiView
3. Granularity adaptation
4. Integrated services

Ayal Itzkovitz, Assaf Schuster

DSM Innovations - MultiView

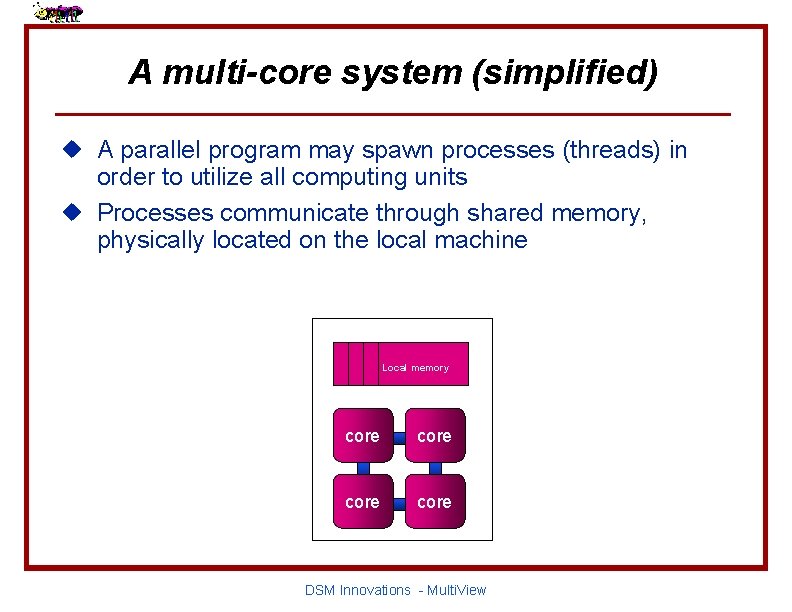

A multi-core system (simplified)

- A parallel program may spawn processes (threads) in order to utilize all computing units.
- Processes communicate through shared memory, physically located on the local machine.

Figure labels: local memory, core.

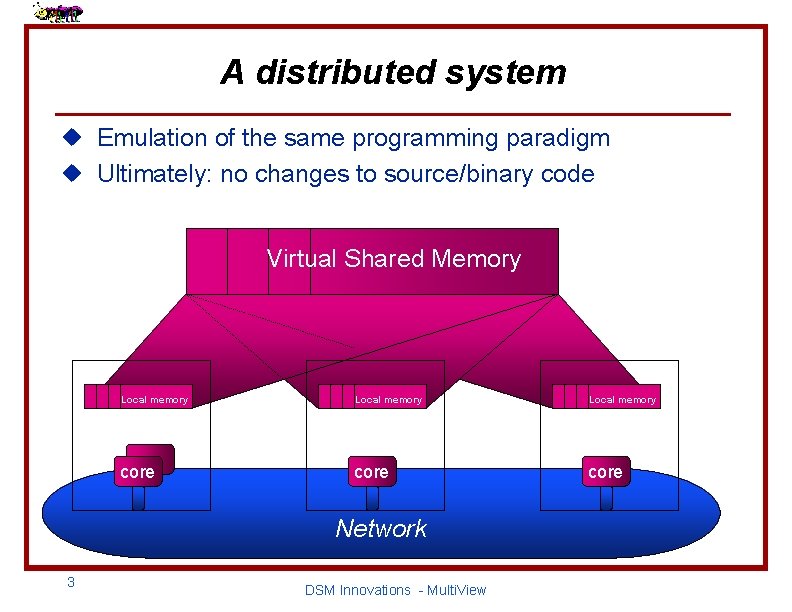

A distributed system

- Emulation of the same programming paradigm.
- Ultimately: no changes to source/binary code.

Figure labels: virtual shared memory, local memory, core, network.

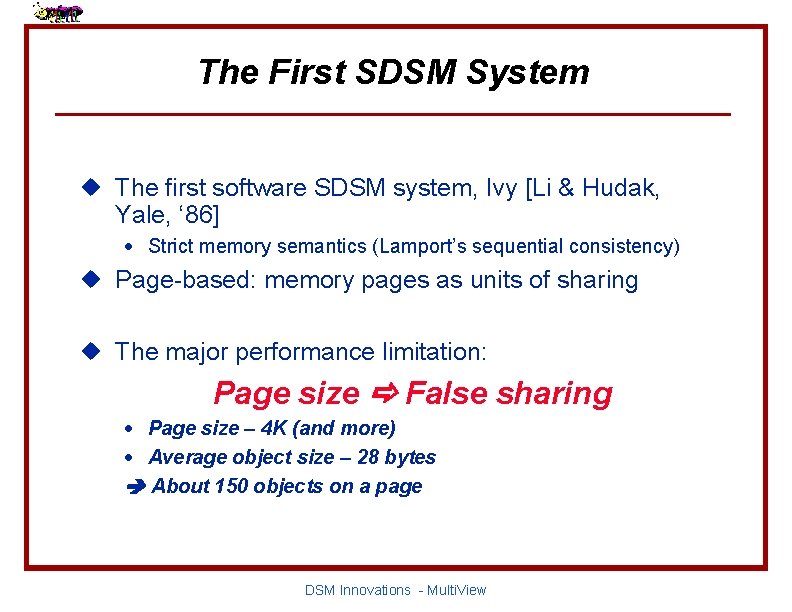

The First SDSM System

- The first software SDSM system: Ivy [Li & Hudak, Yale, '86]
  - Strict memory semantics (Lamport's sequential consistency)
- Page-based: memory pages as units of sharing
- The major performance limitation: page size, which causes false sharing
  - Page size: 4 KB (and more)
  - Average object size: 28 bytes
  - About 150 objects on a page
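The "about 150 objects on a page" figure follows from dividing the page size by the average object size. A minimal sketch of that arithmetic; the function name `objects_per_page` is made up for this illustration:

```c
#include <assert.h>
#include <stddef.h>

/* Number of whole objects of object_size bytes that fit in one page.
 * Hypothetical helper, used only to reproduce the slide's estimate. */
size_t objects_per_page(size_t page_size, size_t object_size)
{
    assert(object_size > 0);
    return page_size / object_size;
}
```

With a 4096-byte page and 28-byte objects this yields 146 objects, which the slide rounds to about 150. Any two of them that are written by different hosts make the whole page bounce between those hosts.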

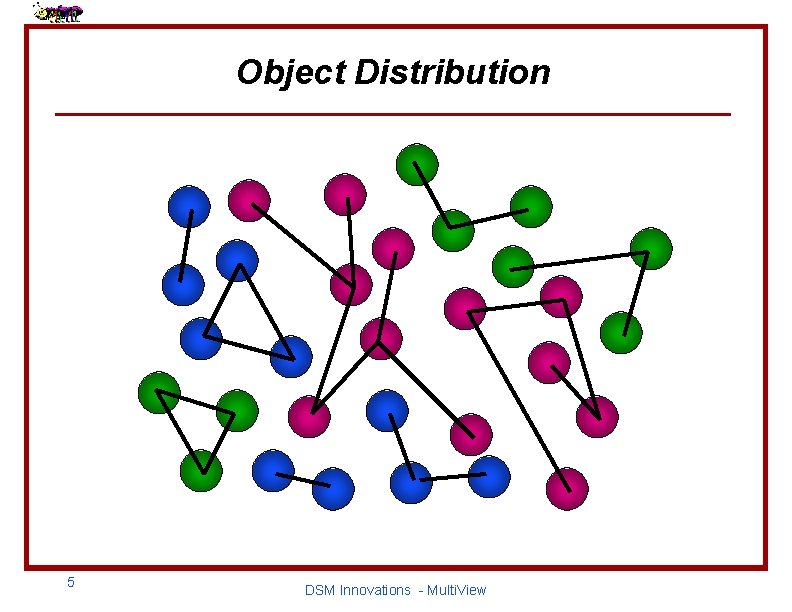

Object Distribution

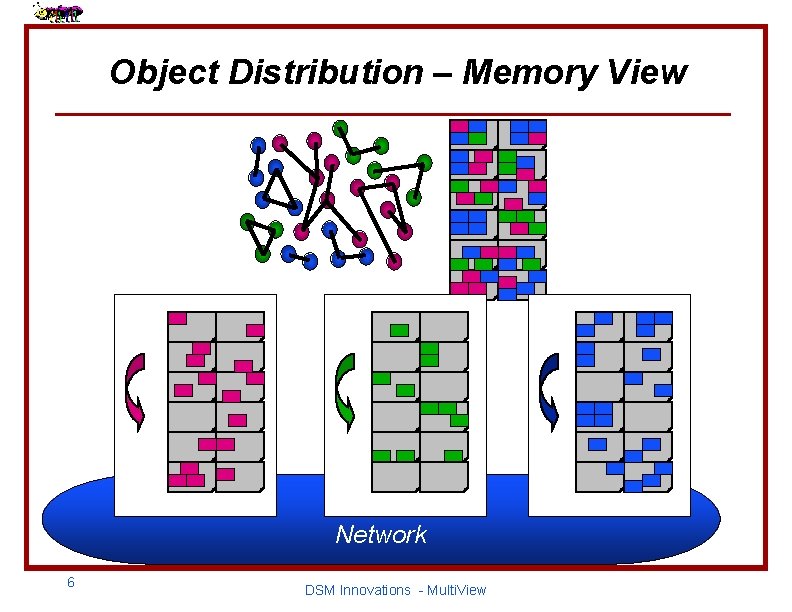

Object Distribution: Memory View

Figure label: network.

![False Sharing](http://slidetodoc.com/presentation_image_h/764ab6290ce24908510ec339d698657b/image-7.jpg)

False Sharing

- "…the conventional wisdom remains that the overhead of false sharing […] in page-based consistency protocols is the primary factor limiting the performance of software SDSM" [Amza, Cox, Rajamani, and Zwaenepoel, PPoPP '97]
- "[The] conventional wisdom holds that fine-grain performance and false sharing doom page-based approaches" [Buck and Keleher, IPPS '98]

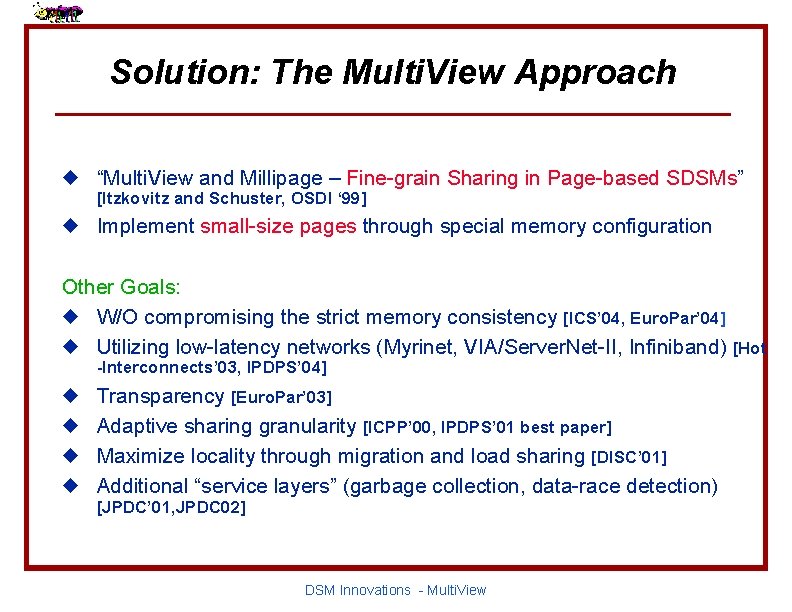

Solution: The MultiView Approach

- "MultiView and Millipage: Fine-grain Sharing in Page-based SDSMs" [Itzkovitz and Schuster, OSDI '99]
- Implement small-size pages through special memory configuration

Other goals:

- Without compromising the strict memory consistency [ICS '04, Euro-Par '04]
- Utilizing low-latency networks (Myrinet, VIA/ServerNet-II, InfiniBand) [Hot Interconnects '03, IPDPS '04]
- Transparency [Euro-Par '03]
- Adaptive sharing granularity [ICPP '00, IPDPS '01 best paper]
- Maximize locality through migration and load sharing [DISC '01]
- Additional "service layers" (garbage collection, data-race detection) [JPDC '01, JPDC '02]

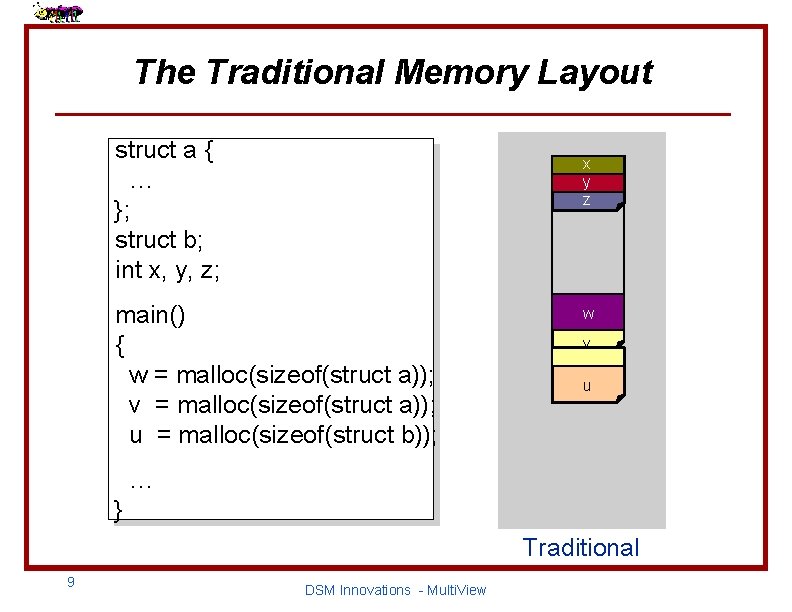

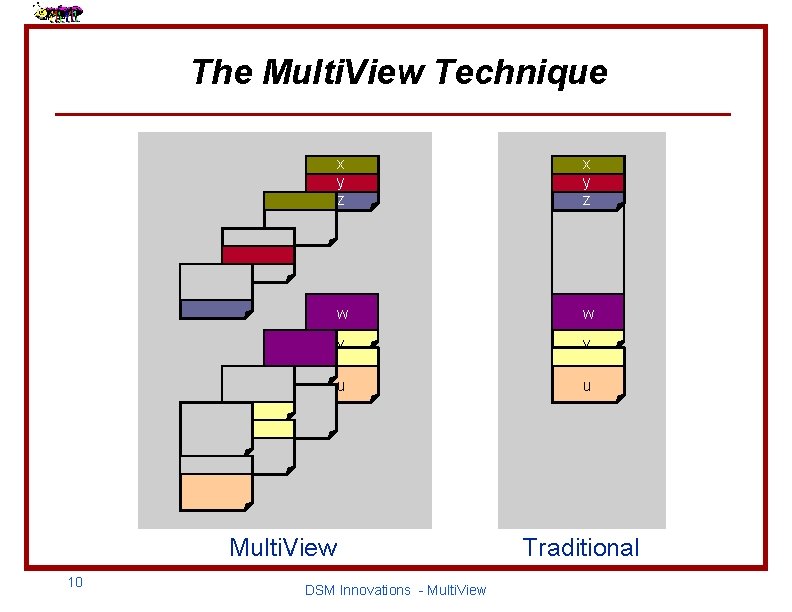

The Traditional Memory Layout

    struct a { … };
    struct b;
    int x, y, z;

    main() {
        w = malloc(sizeof(struct a));
        v = malloc(sizeof(struct a));
        u = malloc(sizeof(struct b));
        …
    }

Figure labels: x, y, z; w, v, u; panel: Traditional.

The MultiView Technique

Figure labels: x, y, z; w, v, u; panels: Traditional, MultiView.

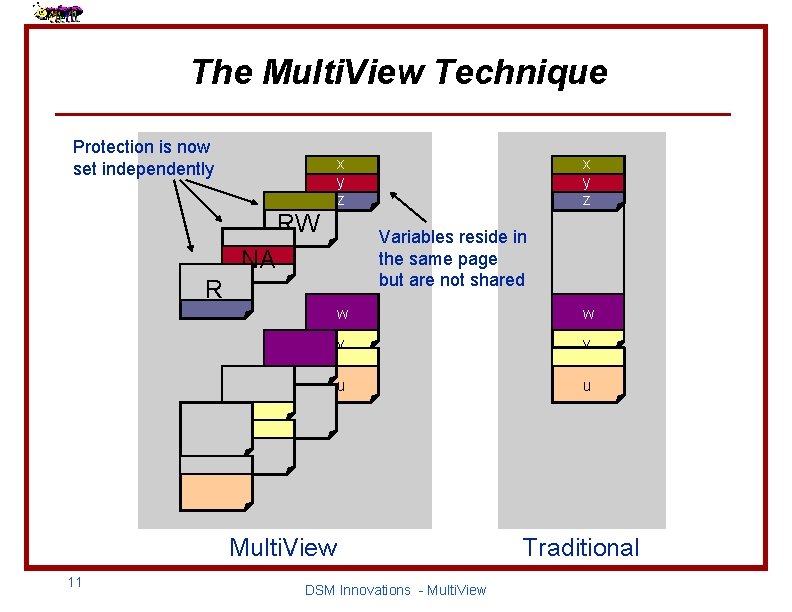

The MultiView Technique

- Protection is now set independently.
- Variables reside in the same page but are not shared.

Figure labels: x, y, z; protections RW, NA, R; w, v, u; panels: Traditional, MultiView.

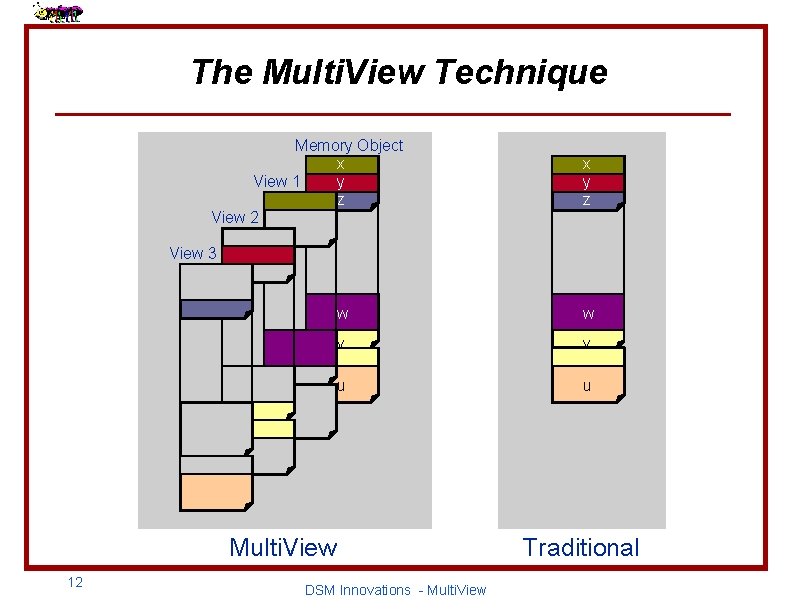

The MultiView Technique

Figure labels: memory object (x, y, z); View 1, View 2, View 3; w, v, u; panels: Traditional, MultiView.

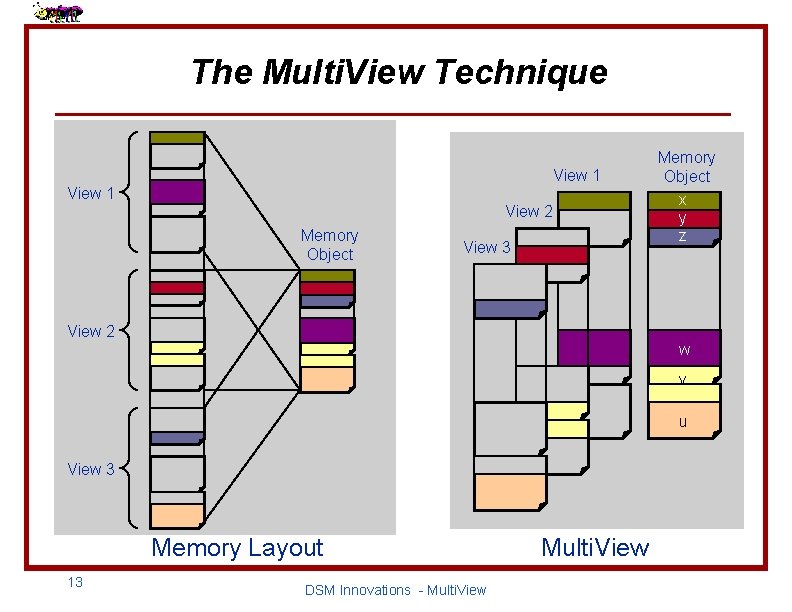

The MultiView Technique

Figure labels: View 1, View 2, View 3; memory object (x, y, z; w, v, u); memory layout.

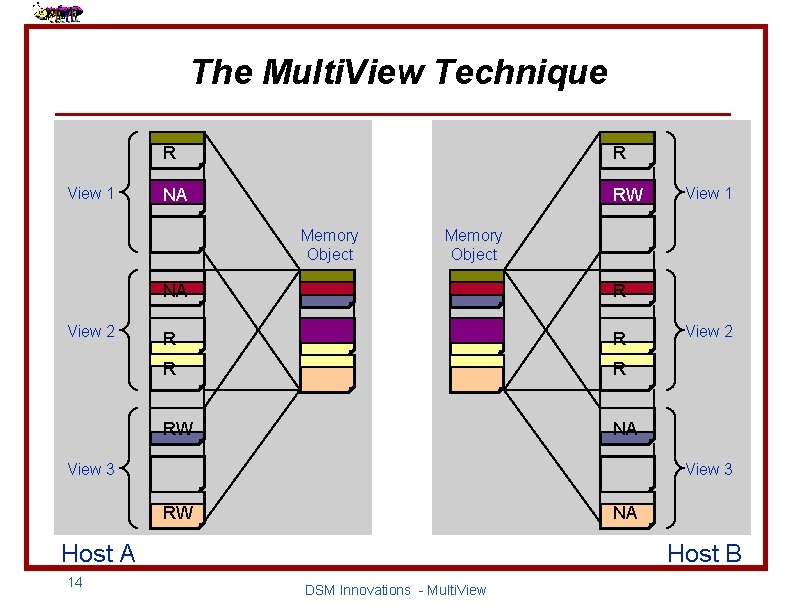

The MultiView Technique

Figure labels: Host A and Host B, each with View 1, View 2, and View 3 of the memory object; per-view protections R, RW, NA.

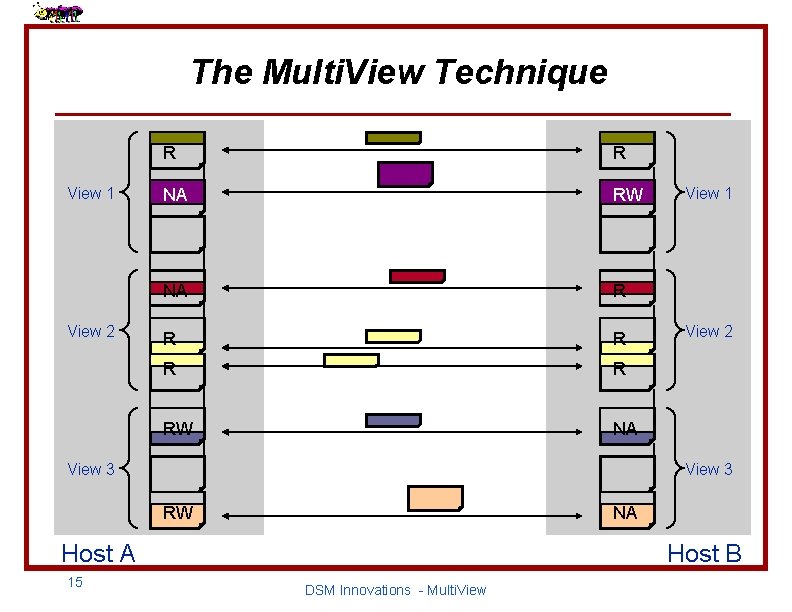

The MultiView Technique

Figure labels: Host A and Host B, each with View 1, View 2, and View 3; per-view protections R, RW, NA.

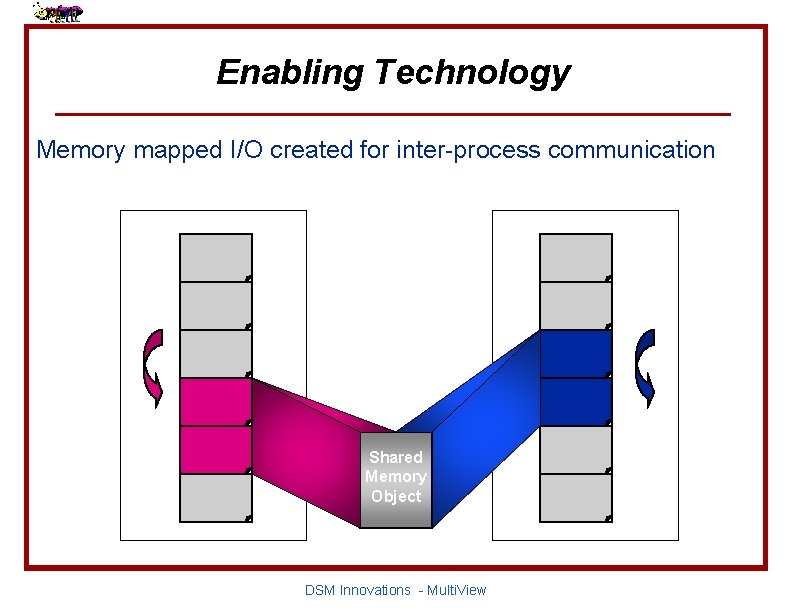

Enabling Technology

- Memory-mapped I/O, created for inter-process communication.

Figure label: shared memory object.

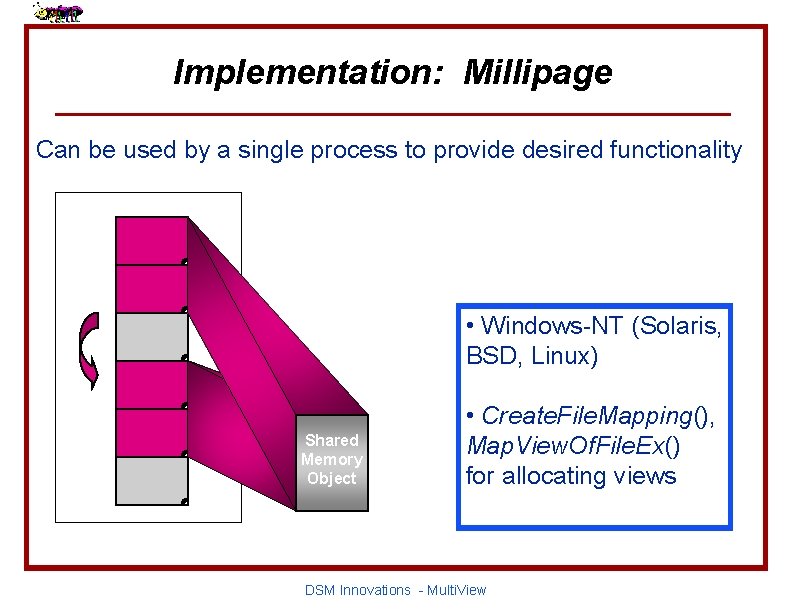

Implementation: Millipage

- Can be used by a single process to provide the desired functionality.
- Windows NT (Solaris, BSD, Linux).
- `CreateFileMapping()` and `MapViewOfFileEx()` for allocating views.

Figure label: shared memory object.
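The slide names the Windows calls; on POSIX systems a comparable effect can be sketched with `mmap()`. The following is an illustration under stated assumptions, not Millipage's code: the helper names (`mv_create_object`, `mv_map_view`, `mv_minipage_addr`) are hypothetical, and an unlinked temporary file stands in for the shared memory object.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <stddef.h>
#include <stdio.h>
#include <sys/mman.h>
#include <unistd.h>

/* Create a memory object of `size` bytes backed by an unlinked temporary
 * file. Returns a file descriptor, or -1 on failure. (Hypothetical helper.) */
int mv_create_object(size_t size)
{
    FILE *f = tmpfile();
    if (f == NULL)
        return -1;
    int fd = dup(fileno(f));      /* keep the object alive after fclose() */
    fclose(f);
    if (fd < 0)
        return -1;
    if (ftruncate(fd, (off_t)size) != 0) {
        close(fd);
        return -1;
    }
    return fd;
}

/* Map one more view of the whole object. Each call returns a different
 * virtual address range that aliases the same physical memory. */
void *mv_map_view(int fd, size_t size, int prot)
{
    void *p = mmap(NULL, size, prot, MAP_SHARED, fd, 0);
    return p == MAP_FAILED ? NULL : p;
}

/* Address the application would use for minipage `index`: the minipage is
 * reached through view `index`, at the offset it has inside the object. */
char *mv_minipage_addr(char *const *views, size_t index, size_t minipage_size)
{
    assert(views != NULL && views[index] != NULL);
    return views[index] + index * minipage_size;
}
```

Because every view is a separate range of virtual pages, `mprotect()` on one view leaves the others untouched, which is what lets protection be set per minipage even though the minipages share a physical page.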

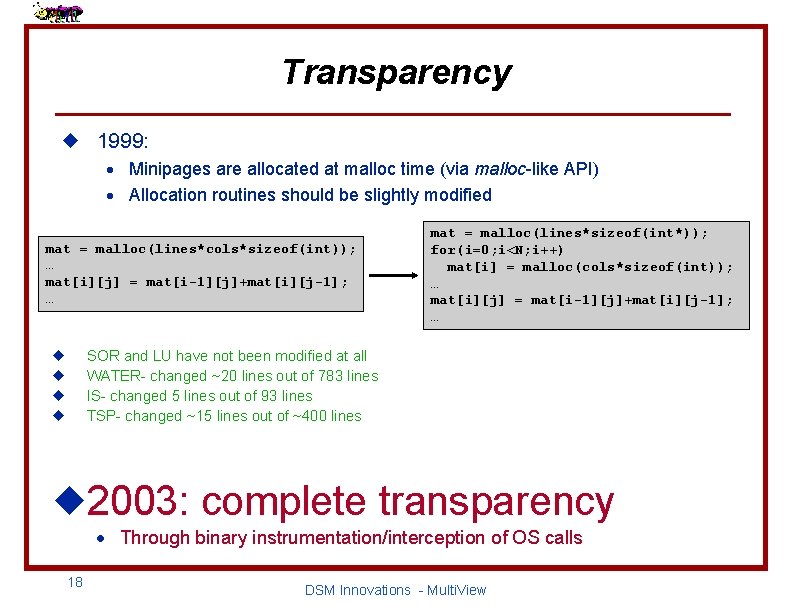

Transparency

- 1999:
  - Minipages are allocated at malloc time (via a malloc-like API).
  - Allocation routines should be slightly modified.
- SOR and LU have not been modified at all.
- WATER: changed ~20 lines out of 783 lines.
- IS: changed 5 lines out of 93 lines.
- TSP: changed ~15 lines out of ~400 lines.
- 2003: complete transparency
  - Through binary instrumentation/interception of OS calls.

Original allocation:

    mat = malloc(lines*cols*sizeof(int));
    …
    mat[i][j] = mat[i-1][j]+mat[i][j-1];
    …

Modified allocation (one allocation per matrix line):

    mat = malloc(lines*sizeof(int*));
    for(i=0; i<lines; i++)
        mat[i] = malloc(cols*sizeof(int));
    …
    mat[i][j] = mat[i-1][j]+mat[i][j-1];
    …
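The modified allocation pattern can be written as a self-contained helper. This is a sketch only: plain `calloc()` stands in for the malloc-like SDSM allocator, which the slide does not name, and `alloc_matrix_rows` / `free_matrix_rows` are hypothetical names.

```c
#include <assert.h>
#include <stdlib.h>

/* Allocate a lines x cols matrix with one allocation per line, so that a
 * MultiView-style allocator could place every line in its own minipage.
 * calloc() is a stand-in for that allocator (assumption). */
int **alloc_matrix_rows(size_t lines, size_t cols)
{
    assert(lines > 0 && cols > 0);
    int **mat = malloc(lines * sizeof *mat);
    if (mat == NULL)
        return NULL;
    for (size_t i = 0; i < lines; i++) {
        mat[i] = calloc(cols, sizeof **mat);
        if (mat[i] == NULL) {          /* undo the partial allocation */
            while (i > 0)
                free(mat[--i]);
            free(mat);
            return NULL;
        }
    }
    return mat;
}

/* Release a matrix obtained from alloc_matrix_rows(). */
void free_matrix_rows(int **mat, size_t lines)
{
    for (size_t i = 0; i < lines; i++)
        free(mat[i]);
    free(mat);
}
```

The access expression `mat[i][j]` stays the same as in the original program; only the allocation site changes, which matches the small line counts reported for the benchmarks.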

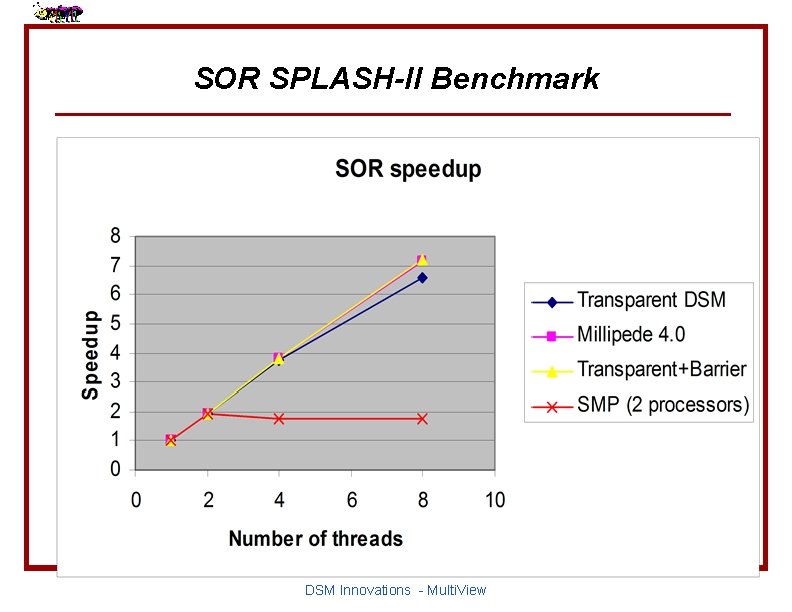

SOR SPLASH-II Benchmark

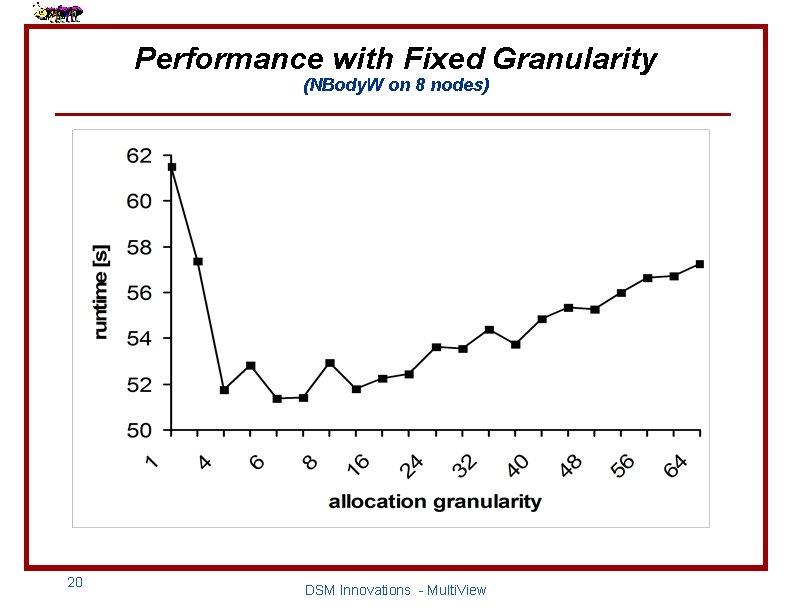

Performance with Fixed Granularity (NBodyW on 8 nodes)

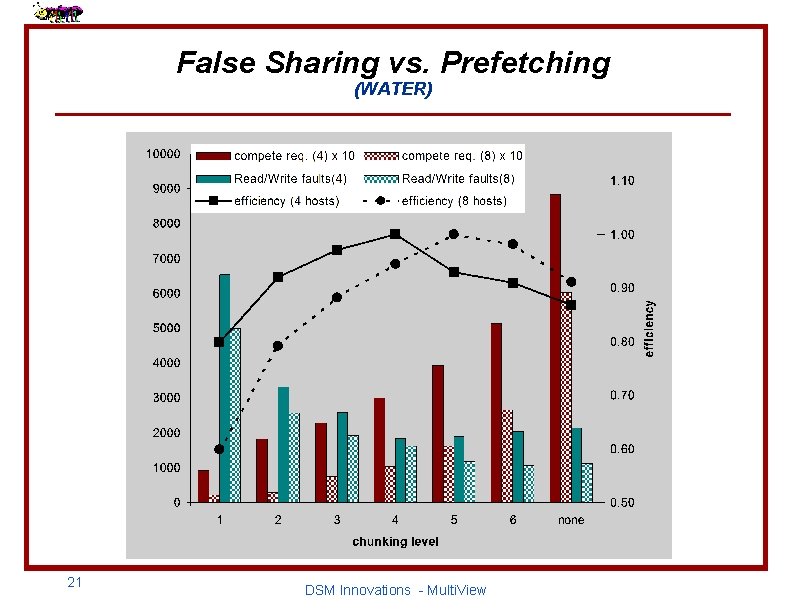

False Sharing vs. Prefetching (WATER)

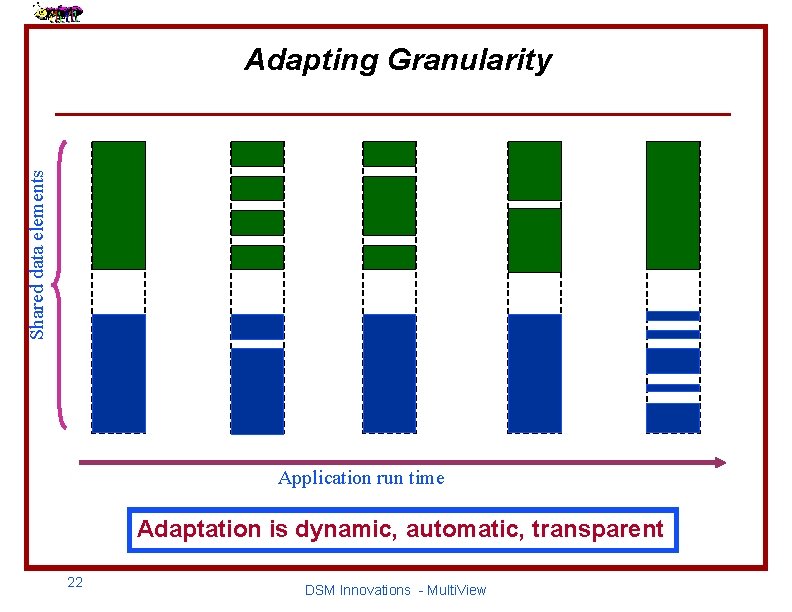

Adapting Granularity

- Adaptation is dynamic, automatic, transparent.

Figure axes: shared data elements, application run time.

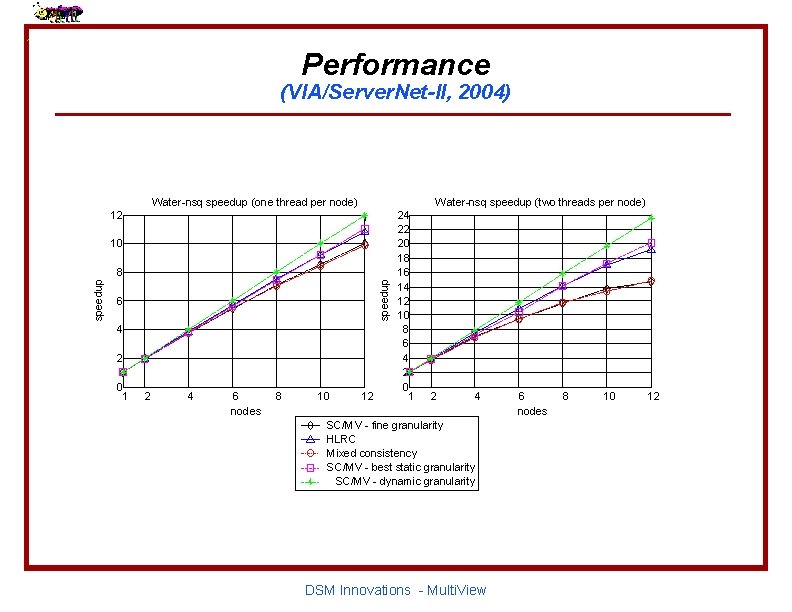

Performance (VIA/ServerNet-II, 2004)

Figure: Water-nsq speedup versus number of nodes (1 to 12), in two panels: one thread per node and two threads per node. Series: SC/MV fine granularity, HLRC, mixed consistency, SC/MV best static granularity, SC/MV dynamic granularity.

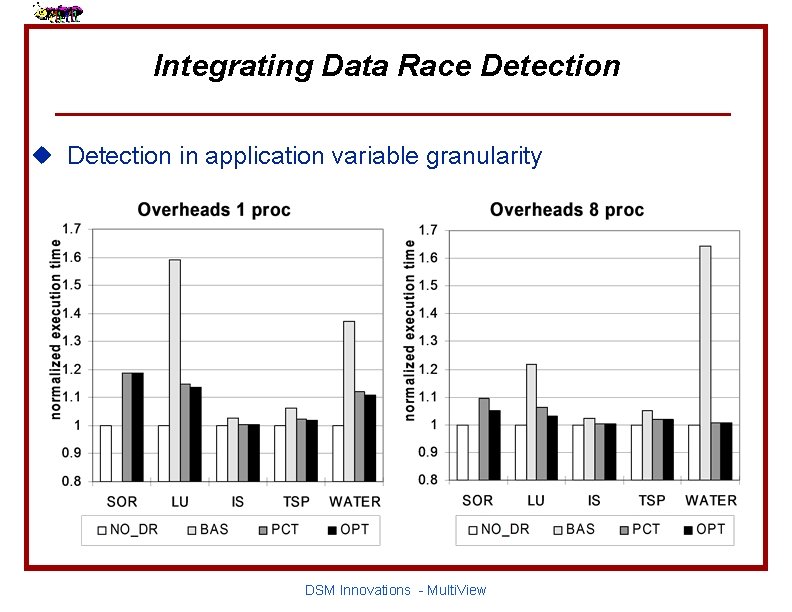

Integrating Data Race Detection

- Detection in application variable granularity.

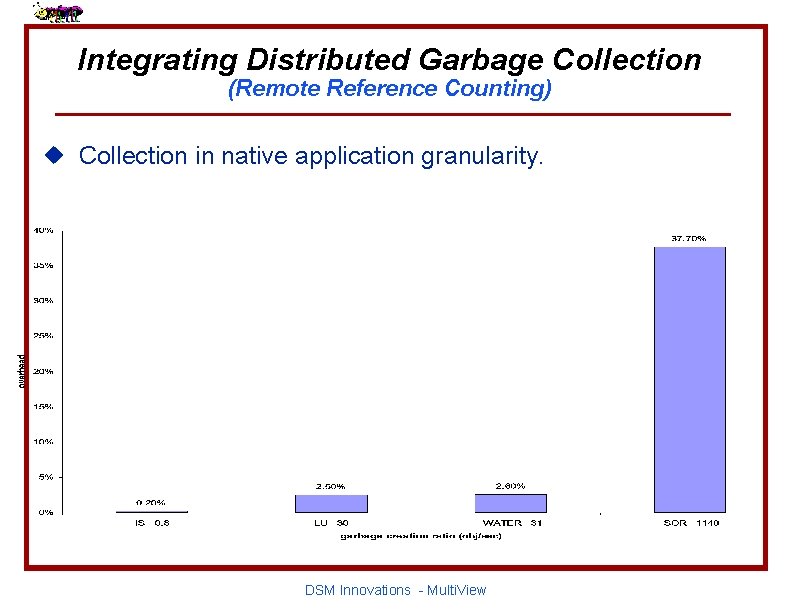

Integrating Distributed Garbage Collection (Remote Reference Counting)

- Collection in native application granularity.

Questions?

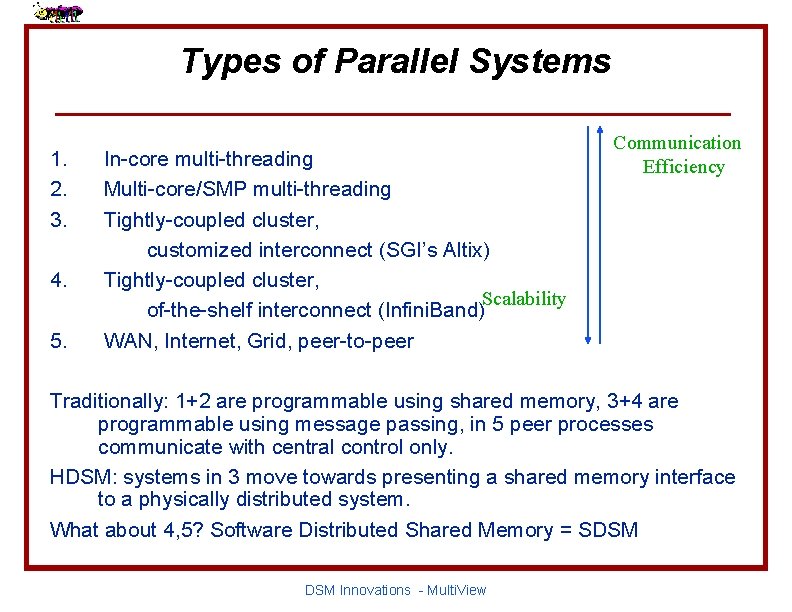

Types of Parallel Systems

1. In-core multi-threading
2. Multi-core/SMP multi-threading
3. Tightly-coupled cluster, customized interconnect (SGI's Altix)
4. Tightly-coupled cluster, off-the-shelf interconnect (InfiniBand)
5. WAN, Internet, Grid, peer-to-peer

Figure axes: scalability, communication efficiency.

- Traditionally: 1+2 are programmable using shared memory, 3+4 are programmable using message passing, and in 5 peer processes communicate with central control only.
- HDSM: systems in 3 move towards presenting a shared memory interface to a physically distributed system.
- What about 4, 5? Software Distributed Shared Memory = SDSM

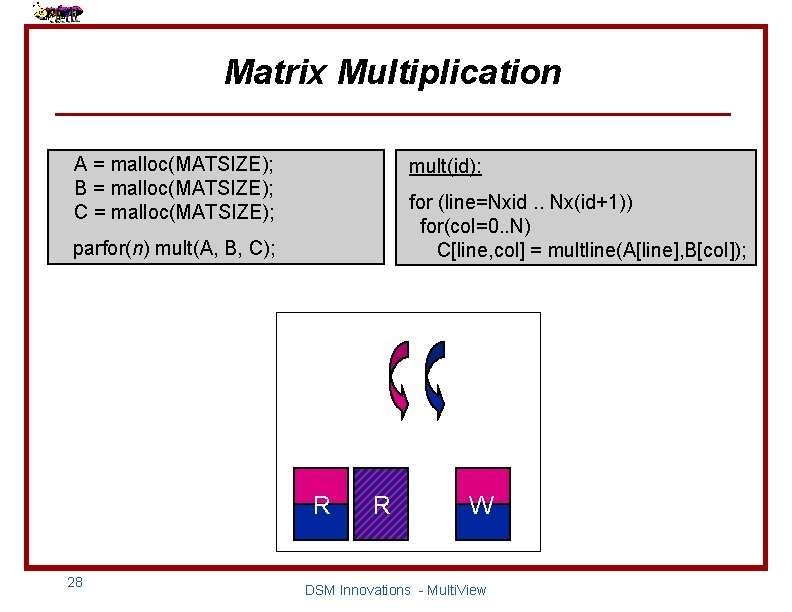

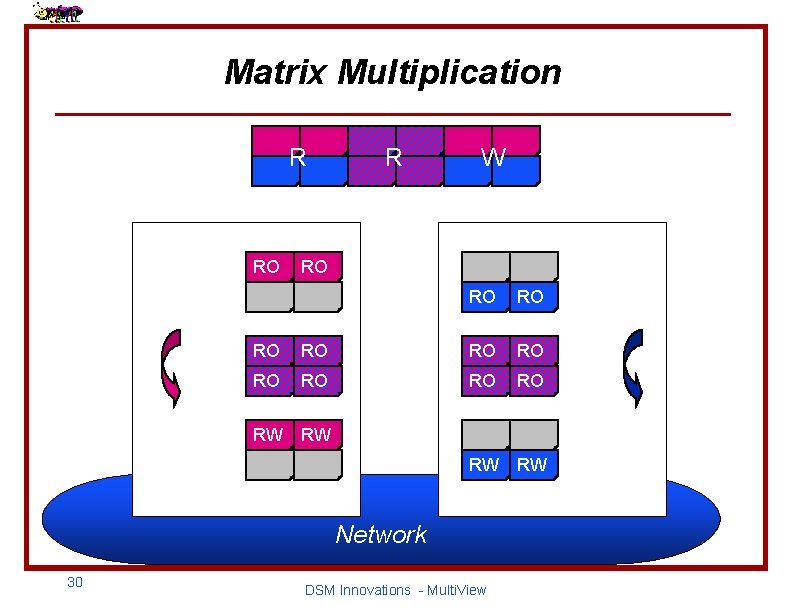

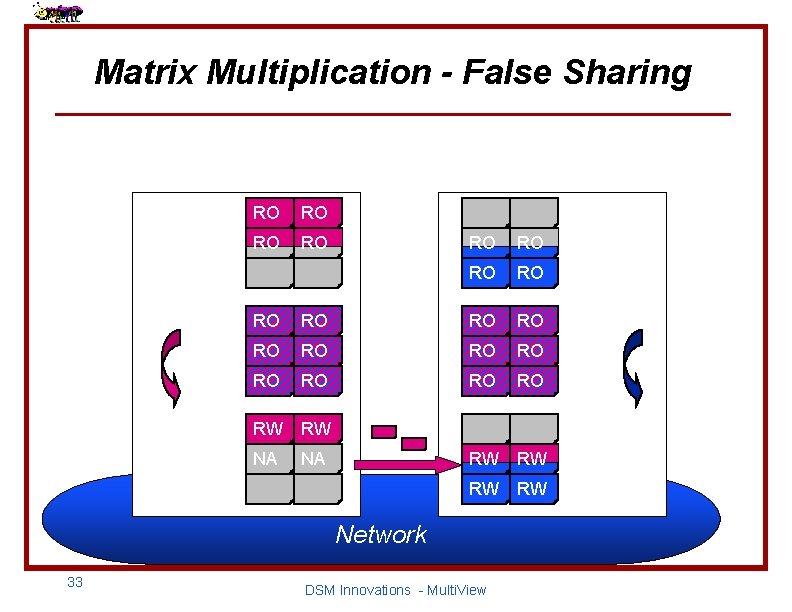

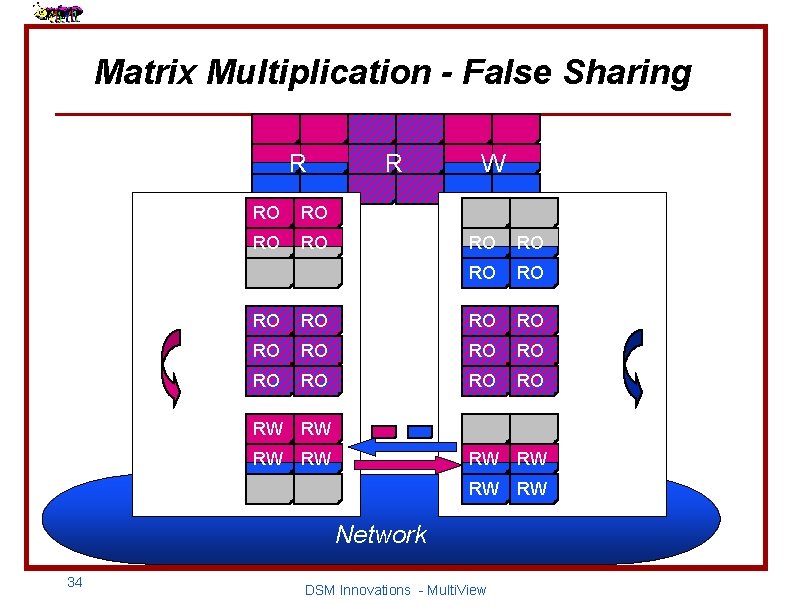

Matrix Multiplication

    A = malloc(MATSIZE);
    B = malloc(MATSIZE);
    C = malloc(MATSIZE);

    mult(id):
        for (line = N*id .. N*(id+1))
            for (col = 0 .. N)
                C[line, col] = multline(A[line], B[col]);

    parfor(n) mult(A, B, C);

Figure: two threads; A and B are read-only matrices (R), C is the write matrix (W).
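The slide's pseudocode can be turned into a small C sketch. The assumptions here are mine, not the slide's: matrices are square `n x n` arrays of `double` in row-major order, `Bt` holds B transposed so that a column of B is contiguous (matching the slide's `B[col]`), and each worker takes a contiguous band of lines. The parallel launch (`parfor`) is left out.

```c
#include <assert.h>
#include <stddef.h>

/* Dot product of two length-n vectors; plays the role of multline(). */
double multline(const double *row, const double *col, size_t n)
{
    double sum = 0.0;
    for (size_t k = 0; k < n; k++)
        sum += row[k] * col[k];
    return sum;
}

/* Worker `id` out of `nworkers` computes its band of lines of C = A x B.
 * A and Bt are only read; C is only written, and each worker writes a
 * disjoint set of lines. */
void mult(size_t id, size_t nworkers, size_t n,
          const double *A, const double *Bt, double *C)
{
    assert(nworkers > 0 && id < nworkers && n % nworkers == 0);
    size_t band = n / nworkers;               /* lines per worker */
    for (size_t line = band * id; line < band * (id + 1); line++)
        for (size_t col = 0; col < n; col++)
            C[line * n + col] = multline(&A[line * n], &Bt[col * n], n);
}
```

The workers never write the same element of C, so any page of C that moves between hosts does so only because lines owned by different workers happen to share that page.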

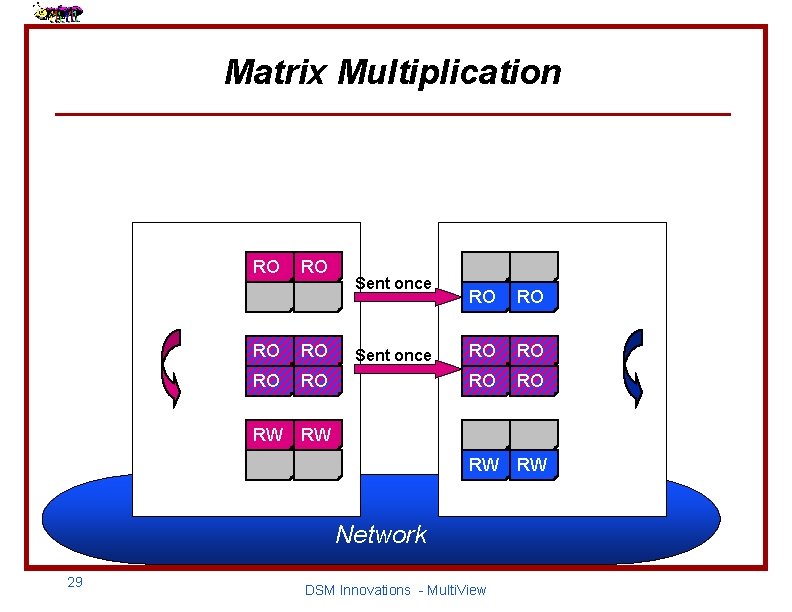

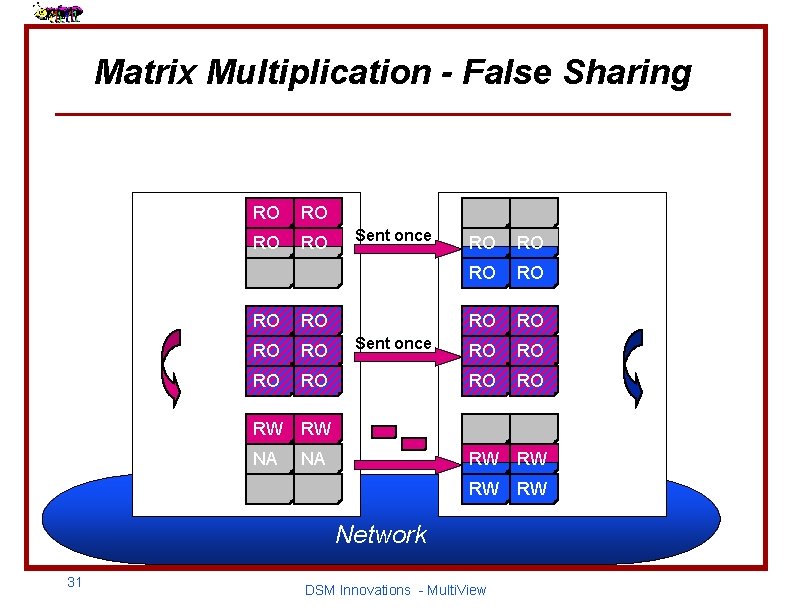

Matrix Multiplication

Figure: A x B = C on two hosts connected by a network. The pages of A and B are read-only (RO) and are sent once; the pages of C are read-write (RW). A second frame marks the read (R) and write (W) accesses.

Matrix Multiplication - False Sharing

Figure: A x B = C on two hosts connected by a network. The pages of A and B are read-only (RO) and are sent once; pages of C appear as RW and as NA.

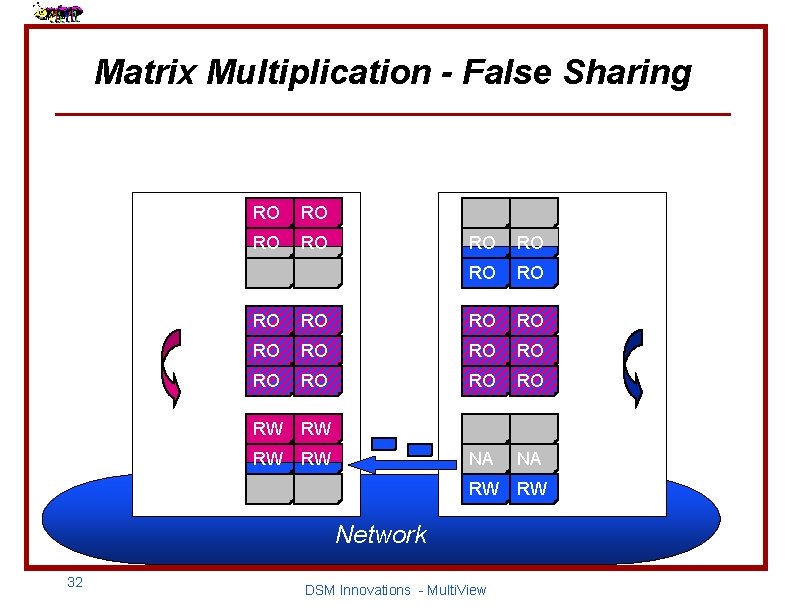

Matrix Multiplication - False Sharing

Figure (three successive frames): A x B = C on two hosts connected by a network. A and B stay read-only (RO); pages of C appear as RW and as NA, and the last frame marks the read (R) and write (W) accesses.

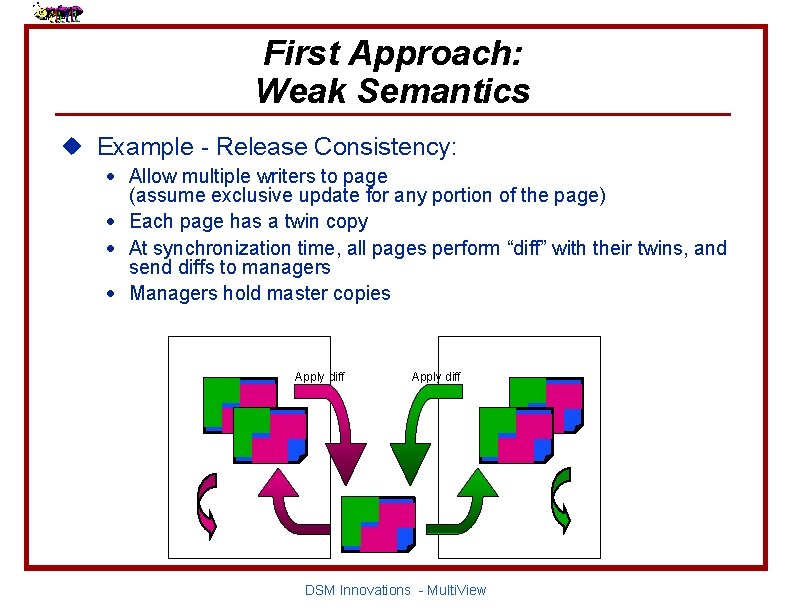

First Approach: Weak Semantics

- Example: release consistency
  - Allow multiple writers to a page (assume exclusive update for any portion of the page).
  - Each page has a twin copy.
  - At synchronization time, all pages perform a "diff" with their twins and send the diffs to managers.
  - Managers hold master copies.

Figure labels: twin, apply diff, RW.
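The twin/diff steps listed above can be sketched in a few functions. This is a simplified illustration, not any particular system's protocol: the diff is a plain list of changed bytes rather than a run-length encoding, and all names (`DSM_PAGE_SIZE`, `make_twin`, `compute_diff`, `apply_diff`) are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

enum { DSM_PAGE_SIZE = 4096 };

struct diff_entry {
    size_t offset;          /* byte offset inside the page */
    unsigned char value;    /* new value written by this host */
};

/* Save a pristine copy of the page before the first local write. */
void make_twin(unsigned char *twin, const unsigned char *page)
{
    memcpy(twin, page, DSM_PAGE_SIZE);
}

/* At synchronization time: record every byte that differs from the twin.
 * `out` must have room for DSM_PAGE_SIZE entries. Returns the entry count. */
size_t compute_diff(const unsigned char *page, const unsigned char *twin,
                    struct diff_entry *out)
{
    size_t n = 0;
    for (size_t i = 0; i < DSM_PAGE_SIZE; i++) {
        if (page[i] != twin[i]) {
            out[n].offset = i;
            out[n].value = page[i];
            n++;
        }
    }
    return n;
}

/* At the manager: merge one host's diff into the master copy. */
void apply_diff(unsigned char *master, const struct diff_entry *diff, size_t n)
{
    for (size_t i = 0; i < n; i++) {
        assert(diff[i].offset < DSM_PAGE_SIZE);
        master[diff[i].offset] = diff[i].value;
    }
}
```

Two hosts that write disjoint bytes of the same page produce diffs that merge cleanly into the master copy. Note that `compute_diff()` scans the whole page even when a single byte changed, which is the processing overhead the slides attribute to this approach.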

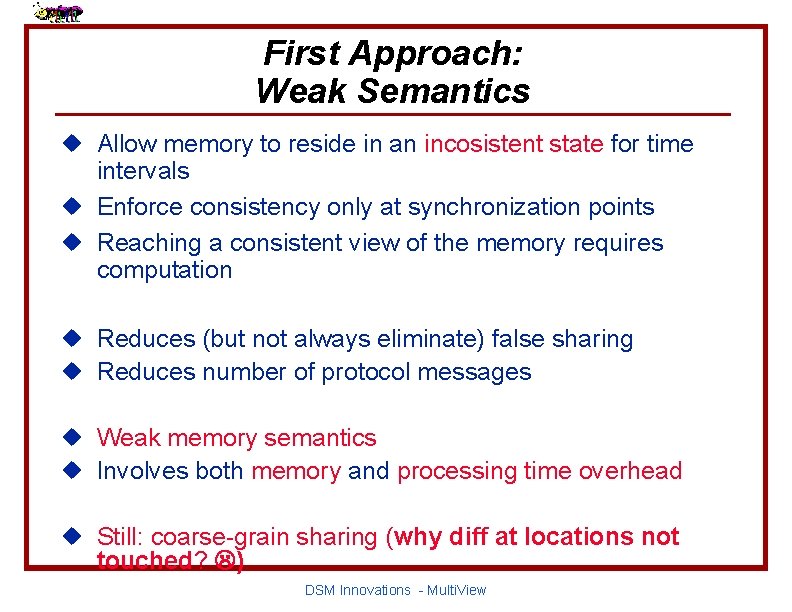

First Approach: Weak Semantics

- Allow memory to reside in an inconsistent state for time intervals.
- Enforce consistency only at synchronization points.
- Reaching a consistent view of the memory requires computation.
- Reduces (but does not always eliminate) false sharing.
- Reduces the number of protocol messages.
- Weak memory semantics.
- Involves both memory and processing-time overhead.
- Still coarse-grain sharing (why diff at locations not touched?).

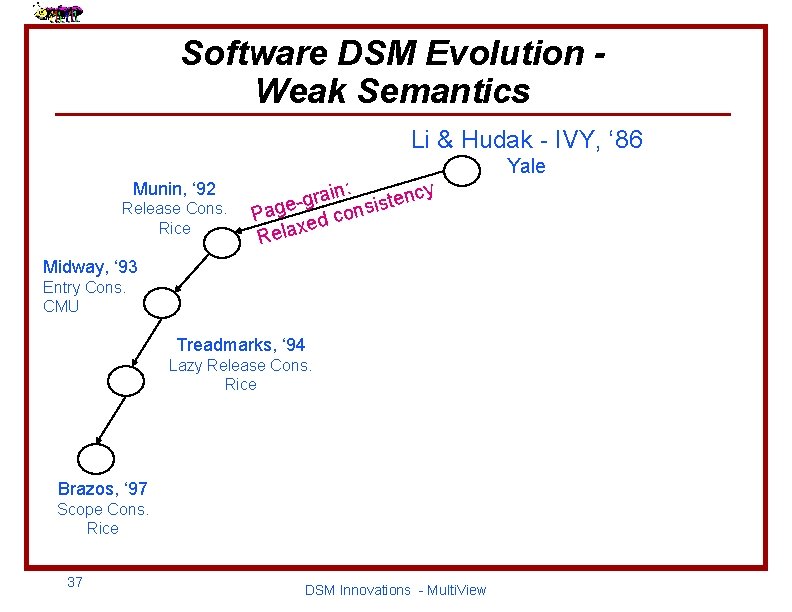

Software DSM Evolution: Weak Semantics

- IVY, '86 (Li & Hudak, Yale)
- Page-grain, relaxed consistency:
  - Munin, '92: release consistency (Rice)
  - Midway, '93: entry consistency (CMU)
  - TreadMarks, '94: lazy release consistency (Rice)
  - Brazos, '97: scope consistency (Rice)

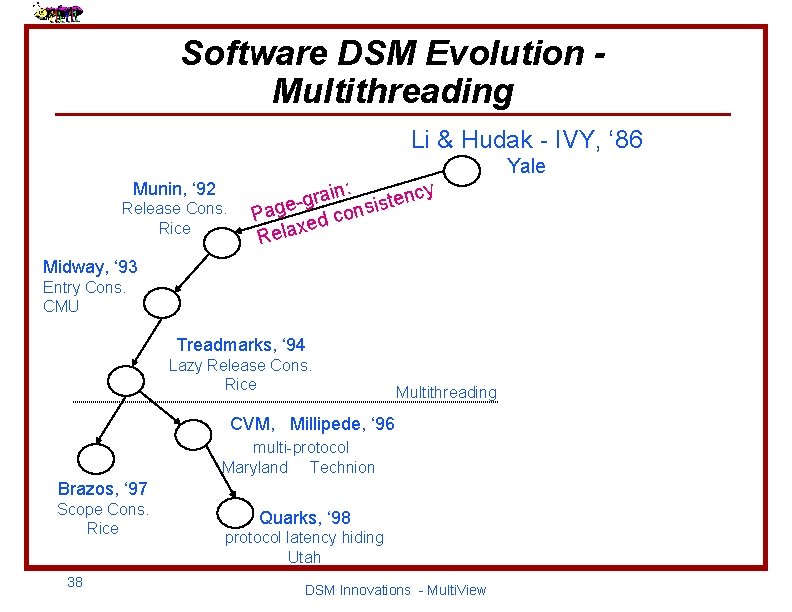

Software DSM Evolution: Multithreading

- IVY, '86 (Li & Hudak, Yale)
- Page-grain, relaxed consistency:
  - Munin, '92: release consistency (Rice)
  - Midway, '93: entry consistency (CMU)
  - TreadMarks, '94: lazy release consistency (Rice)
  - Brazos, '97: scope consistency (Rice)
- Multithreading:
  - CVM, Millipede, '96: multi-protocol (Maryland, Technion)
  - Quarks, '98: protocol latency hiding (Utah)

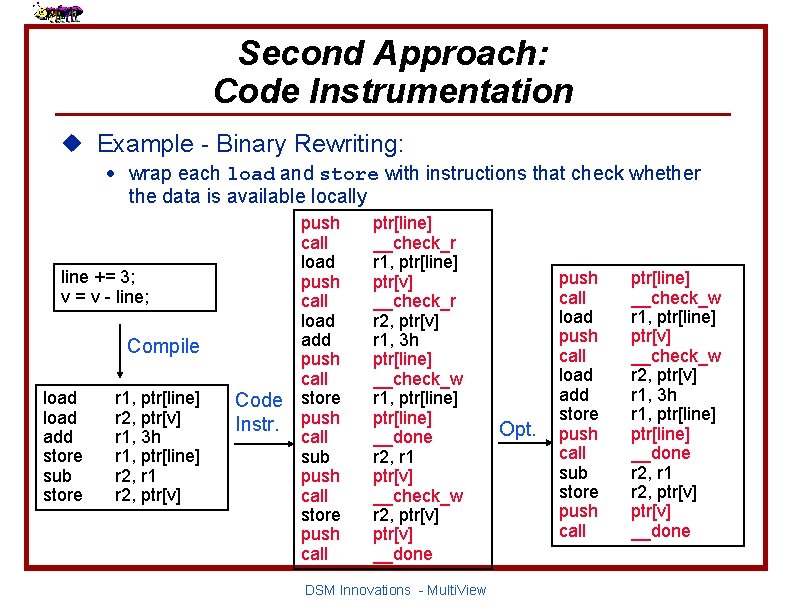

Second Approach: Code Instrumentation

- Example: binary rewriting
  - Wrap each load and store with instructions that check whether the data is available locally.

Source:

    line += 3;
    v = v - line;

Compiled:

    load   r1, ptr[line]
    load   r2, ptr[v]
    add    r1, 3h
    store  r1, ptr[line]
    sub    r2, r1
    store  r2, ptr[v]

After code instrumentation:

    push   ptr[line]
    call   __check_r
    load   r1, ptr[line]
    push   ptr[v]
    call   __check_r
    load   r2, ptr[v]
    add    r1, 3h
    push   ptr[line]
    call   __check_w
    store  r1, ptr[line]
    call   __done
    sub    r2, r1
    push   ptr[v]
    call   __check_w
    store  r2, ptr[v]
    call   __done

Optimized:

    push   ptr[line]
    call   __check_w
    load   r1, ptr[line]
    push   ptr[v]
    call   __check_w
    load   r2, ptr[v]
    add    r1, 3h
    store  r1, ptr[line]
    call   __done
    sub    r2, r1
    store  r2, ptr[v]
    call   __done
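The effect of the inserted `__check_r` / `__check_w` calls can be imitated in C with a software state table. The sketch below is illustrative only: the block size, the table layout, and `fetch_block()` (which merely counts fetches instead of talking to a remote host) are assumptions, and the names are not taken from any instrumentation tool.

```c
#include <assert.h>
#include <stddef.h>

enum acc_state { ACC_NONE, ACC_READ, ACC_WRITE };
enum { BLOCK_SIZE = 64, NUM_BLOCKS = 16 };   /* fixed granularity (assumed) */

static unsigned char shared_mem[BLOCK_SIZE * NUM_BLOCKS];
static enum acc_state block_state[NUM_BLOCKS];  /* all ACC_NONE at start */
unsigned long remote_fetches;                   /* counts simulated fetches */

/* Placeholder for the DSM protocol: a real system would fetch the block
 * (or upgrade its access rights) from a remote host here. */
static void fetch_block(size_t block, enum acc_state want)
{
    remote_fetches++;
    block_state[block] = want;
}

/* Check inserted before a load (the role of __check_r). */
void check_r(size_t offset)
{
    size_t b = offset / BLOCK_SIZE;
    assert(b < NUM_BLOCKS);
    if (block_state[b] == ACC_NONE)
        fetch_block(b, ACC_READ);
}

/* Check inserted before a store (the role of __check_w). */
void check_w(size_t offset)
{
    size_t b = offset / BLOCK_SIZE;
    assert(b < NUM_BLOCKS);
    if (block_state[b] != ACC_WRITE)
        fetch_block(b, ACC_WRITE);
}

unsigned char checked_load(size_t offset)
{
    check_r(offset);
    return shared_mem[offset];
}

void checked_store(size_t offset, unsigned char value)
{
    check_w(offset);
    shared_mem[offset] = value;
}
```

Every access pays for the table lookup even when the data is already local, which is the single-machine overhead noted for this approach. The optimized column of the slide reduces the number of checks by requesting write access up front for locations that are both read and written.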

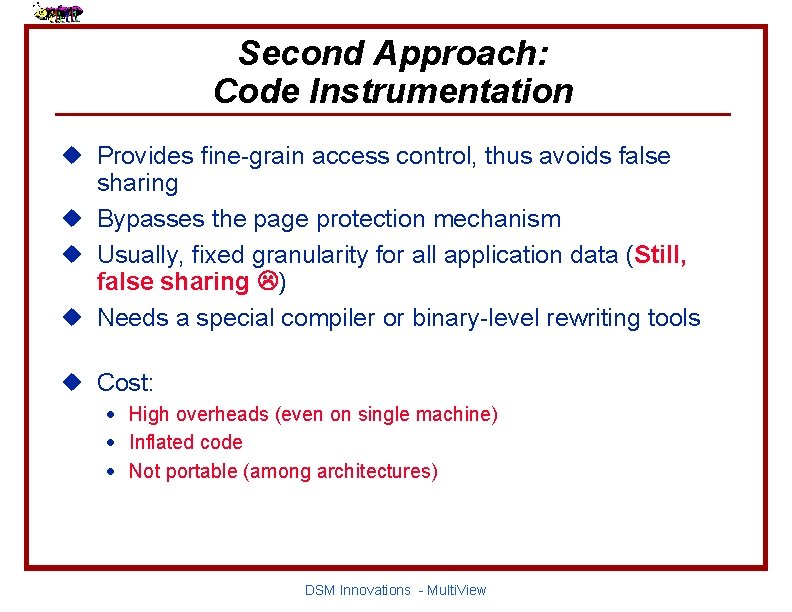

Second Approach: Code Instrumentation

- Provides fine-grain access control, thus avoids false sharing.
- Bypasses the page protection mechanism.
- Usually, fixed granularity for all application data (still, false sharing).
- Needs a special compiler or binary-level rewriting tools.
- Cost:
  - High overheads (even on a single machine)
  - Inflated code
  - Not portable (among architectures)

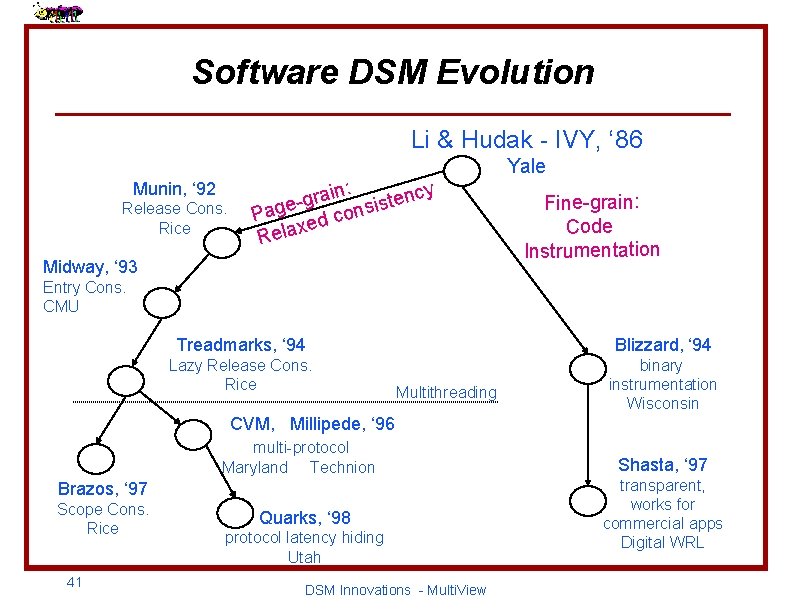

Software DSM Evolution

- IVY, '86 (Li & Hudak, Yale)
- Page-grain, relaxed consistency:
  - Munin, '92: release consistency (Rice)
  - Midway, '93: entry consistency (CMU)
  - TreadMarks, '94: lazy release consistency (Rice)
  - Brazos, '97: scope consistency (Rice)
- Multithreading:
  - CVM, Millipede, '96: multi-protocol (Maryland, Technion)
  - Quarks, '98: protocol latency hiding (Utah)
- Fine-grain, code instrumentation:
  - Blizzard, '94: binary instrumentation (Wisconsin)
  - Shasta, '97: transparent, works for commercial apps (Digital WRL)

MultiView - Overheads

- Application: traverse an array of integers, all packed into minipages.
- The number of minipages is derived from the value of max views in page.
- Limitations of the experiments:
  - 1.63 GB contiguous address space available
  - Up to 1664 views
  - Need 64 bits!
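The address-space limit can be checked with simple arithmetic: every view maps the whole memory object, so the virtual space consumed is the object size times the number of views. The sketch below assumes an object of about 1 MB, which the slide does not state; with that assumption, 1664 views occupy 1664 MB, roughly the 1.63 GB quoted. The function names are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* Virtual address space consumed when an object is mapped `views` times. */
uint64_t virtual_bytes(uint64_t object_bytes, uint64_t views)
{
    return object_bytes * views;
}

/* Largest number of views that fits into a contiguous address range. */
uint64_t max_views(uint64_t address_space_bytes, uint64_t object_bytes)
{
    assert(object_bytes > 0);
    return address_space_bytes / object_bytes;
}
```

Committed (physical) memory does not grow with the number of views, only the virtual range does, which is why a 32-bit address space becomes the limiting resource.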

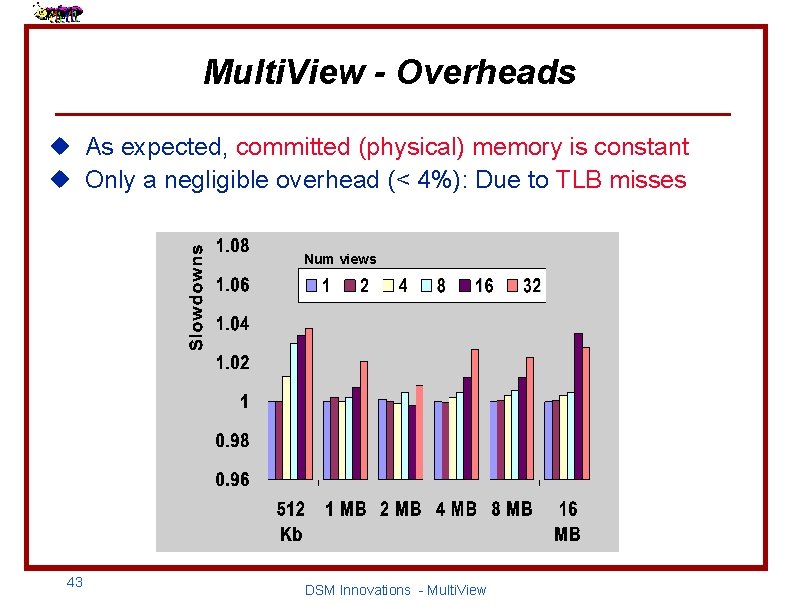

MultiView - Overheads

- As expected, committed (physical) memory is constant.
- Only a negligible overhead (< 4%), due to TLB misses.

Figure axis: number of views.

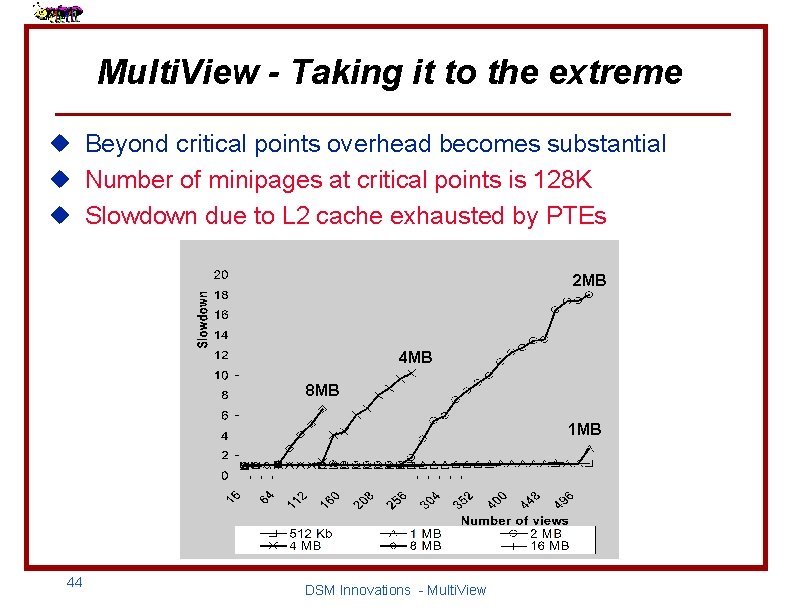

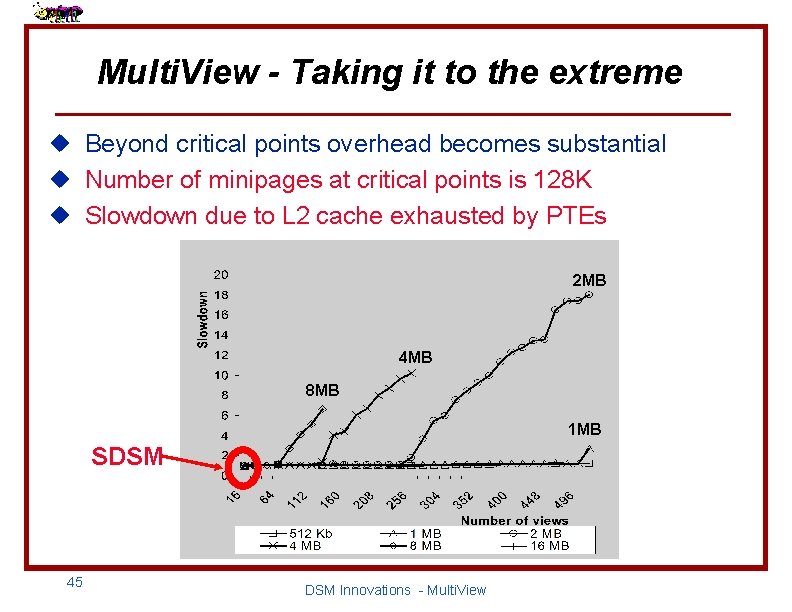

MultiView - Taking it to the extreme

- Beyond critical points, overhead becomes substantial.
- Number of minipages at critical points is 128K.
- Slowdown due to L2 cache exhausted by PTEs.

Figure labels: 1 MB, 2 MB, 4 MB, 8 MB. A second frame of the same slide adds the label "SDSM".

The Transparent DSM: System Initialization

- For most DSM systems, initialization is an almost trivial task.
- The transparent DSM system cannot use such a simple solution.
- In order to initialize a DSM system transparently, we have to inject the initialization code into the loaded application.

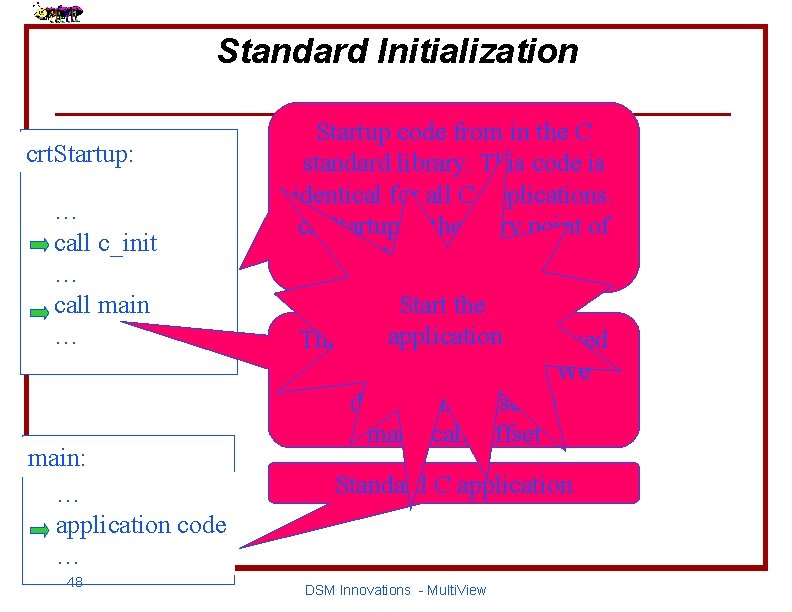

Standard Initialization

    crtStartup:
        …
        call c_init
        …
        call main
        …

    main:
        …
        application code
        …

- `crtStartup` is startup code from the C standard library. This code is identical for all C applications. `crtStartup` is the entry point of the executable.
- `call c_init`: initialize the C runtime library.
- `call main`: start the application. This instruction lies at a fixed offset from `crtStartup`. We denote this offset as `main_call_offset`.

Figure label: standard C application.

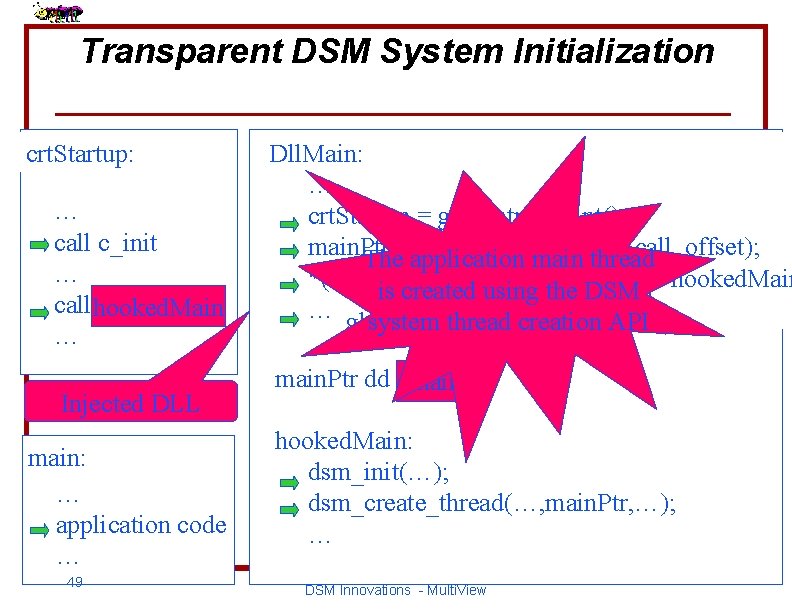

Transparent DSM System Initialization

    crtStartup:
        …
        call c_init
        …
        call hookedMain      ; originally: call main
        …

    main:
        …
        application code
        …

Injected DLL:

    DllMain:
        …
        crtStartup = get_entry_point();
        mainPtr = *(crtStartup + main_call_offset);
        *(crtStartup + main_call_offset) = hookedMain;
        …

    mainPtr dd NULL          ; later holds the address of main

    hookedMain:
        dsm_init(…);
        dsm_create_thread(…, mainPtr, …);
        …

- The OS passes control to `DllMain()` after the DLL has been loaded.
- The main thread is resumed.
- Initialize the C runtime library.
- Initialize the DSM system (the OS API is intercepted, globals are moved to the DSM).
- The main thread is created using the DSM system thread creation API.
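The redirection itself can be modeled in portable C with a function-pointer slot standing in for the patched `call main` instruction. This is a model of the idea only, under stated assumptions: a real implementation rewrites the call target inside the loaded executable image, and `dsm_init()`, `dsm_create_thread()`, and `app_main()` here are simplified stand-ins with made-up signatures.

```c
#include <assert.h>
#include <stddef.h>

typedef int (*main_fn)(void);

int dsm_initialized;            /* set by the stand-in dsm_init() */
static main_fn main_ptr;        /* plays the role of mainPtr */

/* Stand-in for the DSM start-up code. */
static void dsm_init(void)
{
    dsm_initialized = 1;
}

/* Stand-in for the DSM thread-creation API: runs the entry point inline. */
static int dsm_create_thread(main_fn entry)
{
    return entry();
}

/* Replacement entry point: bring up the DSM, then run the original main. */
int hooked_main(void)
{
    dsm_init();
    return dsm_create_thread(main_ptr);
}

/* Model of the patch done in DllMain: remember the original call target
 * and redirect the call slot to hooked_main. */
void install_hook(main_fn *call_slot)
{
    assert(call_slot != NULL && *call_slot != NULL);
    main_ptr = *call_slot;
    *call_slot = hooked_main;
}

/* Stand-in for the application's main: succeeds only if the DSM was
 * initialized before it started running. */
int app_main(void)
{
    return dsm_initialized ? 0 : 1;
}
```

The application code is untouched: it is still entered through the same call site, which now happens to reach `hooked_main` first.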

SDSMs on Emerging Fast Networks

- Fast networking is an emerging technology.
- MultiView provides only one aspect: reducing message sizes.
- The next magnitude of improvement shifts from the network layer to the system architectures and protocols that use those networks.
- Challenges:
  - Efficiently employ and integrate fast networks.
  - Provide a "thin" protocol layer: reduce protocol complexity, eliminate buffer copying, use home-based management, etc.

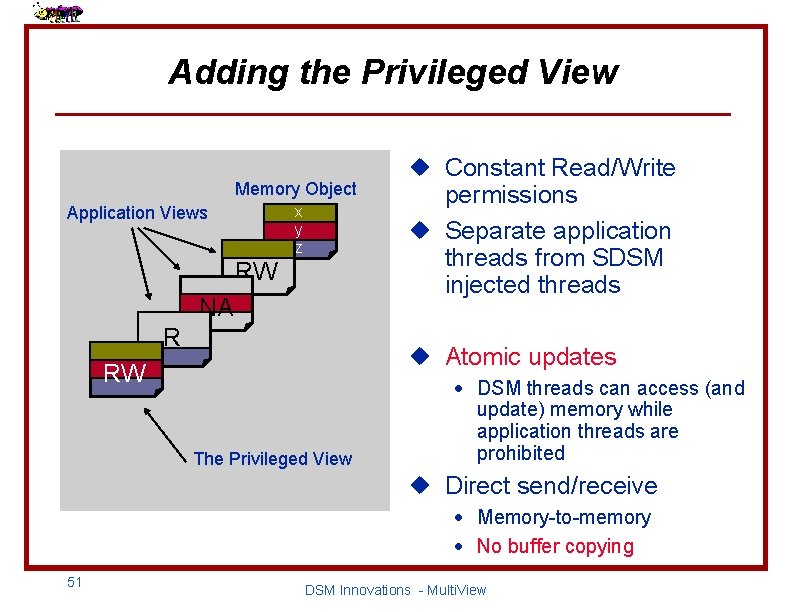

Adding the Privileged View

- Constant read/write permissions.
- Separate application threads from SDSM injected threads.
- Atomic updates:
  - DSM threads can access (and update) memory while application threads are prohibited.
- Direct send/receive:
  - Memory-to-memory
  - No buffer copying

Figure labels: memory object (x, y, z); application views with protections RW, NA, R; the privileged view with protection RW.
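A POSIX sketch of the privileged view, under the same assumptions as any `mmap()`-based illustration of MultiView: an unlinked temporary file stands in for the memory object, and the structure and function names (`dsm_region`, `dsm_region_init`, `dsm_install_readonly`) are hypothetical. For brevity the sketch does not clean up after a partial failure.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <stddef.h>
#include <stdio.h>
#include <string.h>
#include <sys/mman.h>
#include <unistd.h>

struct dsm_region {
    size_t size;
    char *app_view;    /* protection follows the coherence state */
    char *priv_view;   /* always read/write; used only by DSM threads */
};

/* Map the same memory object twice: an application view that starts with
 * no access, and a privileged view with constant read/write access. */
int dsm_region_init(struct dsm_region *r, size_t size)
{
    FILE *f = tmpfile();
    if (f == NULL)
        return -1;
    int fd = dup(fileno(f));
    fclose(f);
    if (fd < 0)
        return -1;
    if (ftruncate(fd, (off_t)size) != 0) {
        close(fd);
        return -1;
    }
    void *app = mmap(NULL, size, PROT_NONE, MAP_SHARED, fd, 0);
    void *priv = mmap(NULL, size, PROT_READ | PROT_WRITE, MAP_SHARED, fd, 0);
    close(fd);                     /* the mappings keep the object alive */
    if (app == MAP_FAILED || priv == MAP_FAILED)
        return -1;
    r->size = size;
    r->app_view = app;
    r->priv_view = priv;
    return 0;
}

/* A DSM thread installs received data through the privileged view while the
 * application view is still inaccessible, then opens it for reading. */
int dsm_install_readonly(struct dsm_region *r, const void *data, size_t len)
{
    assert(len <= r->size);
    memcpy(r->priv_view, data, len);
    return mprotect(r->app_view, r->size, PROT_READ);
}
```

Application threads never observe a half-written page, because their view stays protected until the update through the privileged view is complete. A network receive could target `priv_view` directly, which is one way to read the "no buffer copying" point.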

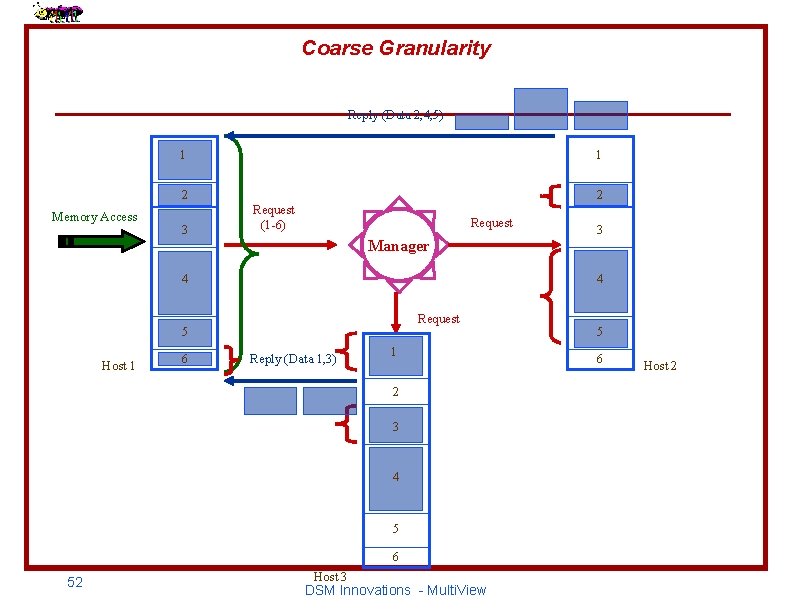

Coarse Granularity

Figure labels: memory access on Host 1; request (1-6) to the manager; requests forwarded by the manager; reply (data 1, 3); reply (data 2, 4, 5); minipages 1-6 on Host 1, Host 2, and Host 3.

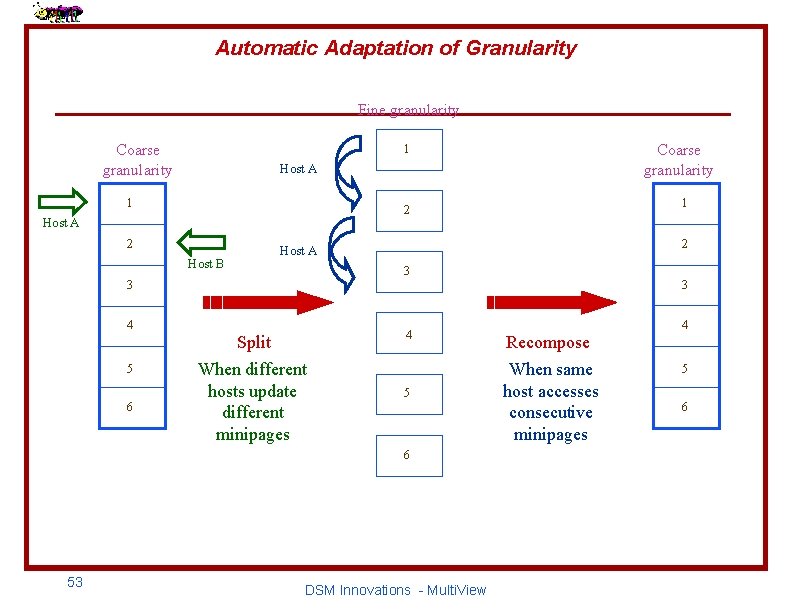

Automatic Adaptation of Granularity

- Split (coarse granularity to fine granularity): when different hosts update different minipages.
- Recompose (fine granularity to coarse granularity): when the same host accesses consecutive minipages.

Figure labels: minipages 1-6 on Host A and Host B under coarse and fine granularity.
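The two rules can be sketched as a small state machine over a group of minipages. The policy details below are assumptions made for illustration: the slide does not say how many consecutive accesses trigger a recompose, so the sketch uses "the whole group, in order", and all names (`mp_group`, `on_write`, `on_access`) are hypothetical.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

enum { GROUP_SIZE = 6 };   /* minipages per group, as in the figure's 1..6 */

struct mp_group {
    bool coarse;                   /* true: the group moves as one unit */
    int last_writer[GROUP_SIZE];   /* host that last wrote, or -1 */
    int run_host;                  /* host of the current consecutive run */
    size_t run_next;               /* index that would extend the run */
    size_t run_len;                /* length of the current run */
};

static void clear_writers(struct mp_group *g)
{
    for (size_t i = 0; i < (size_t)GROUP_SIZE; i++)
        g->last_writer[i] = -1;
}

void group_init(struct mp_group *g)
{
    g->coarse = true;
    clear_writers(g);
    g->run_host = -1;
    g->run_next = 0;
    g->run_len = 0;
}

/* Split rule: a coarse group is split once two different hosts have
 * written two different minipages of the group. */
void on_write(struct mp_group *g, size_t idx, int host)
{
    assert(idx < (size_t)GROUP_SIZE);
    g->last_writer[idx] = host;
    if (!g->coarse)
        return;
    for (size_t i = 0; i < (size_t)GROUP_SIZE; i++)
        if (i != idx && g->last_writer[i] != -1 && g->last_writer[i] != host)
            g->coarse = false;
}

/* Recompose rule (assumed threshold): a split group is recomposed when one
 * host accesses all of its minipages consecutively. */
void on_access(struct mp_group *g, size_t idx, int host)
{
    assert(idx < (size_t)GROUP_SIZE);
    if (host == g->run_host && idx == g->run_next)
        g->run_len++;
    else
        g->run_len = 1;
    g->run_host = host;
    g->run_next = idx + 1;
    if (!g->coarse && g->run_len == (size_t)GROUP_SIZE) {
        g->coarse = true;
        clear_writers(g);
    }
}
```

Splitting removes false sharing between the two writers, while recomposing lets a single sequential reader fetch the whole group with one request instead of one request per minipage.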

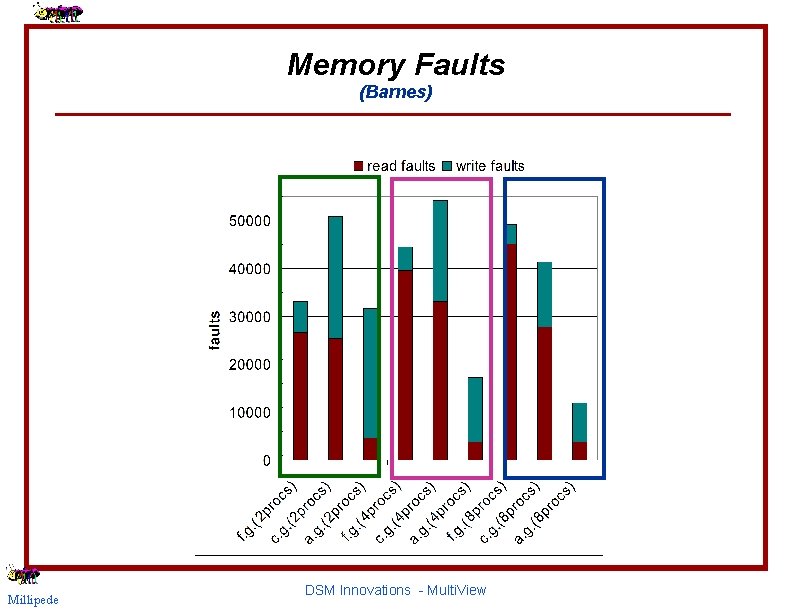

Memory Faults (Barnes)

Figure label: Millipede.

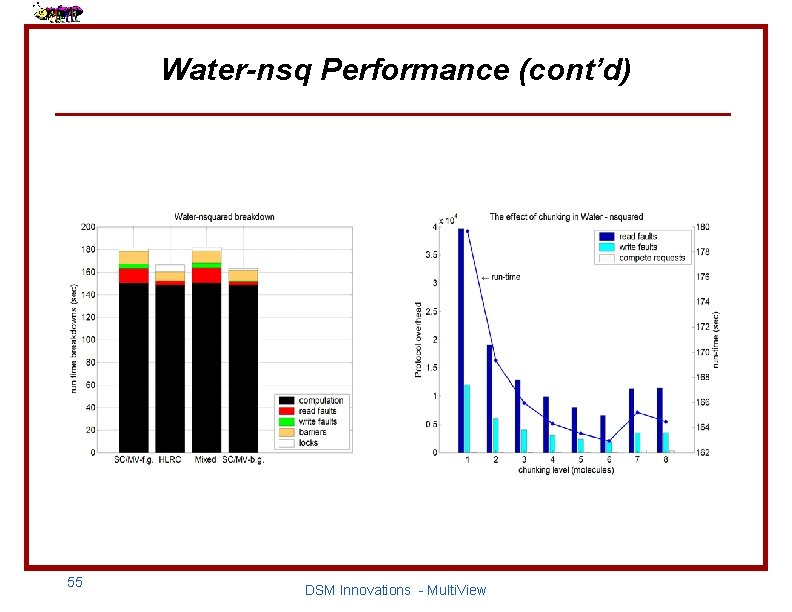

Water-nsq Performance (cont'd)

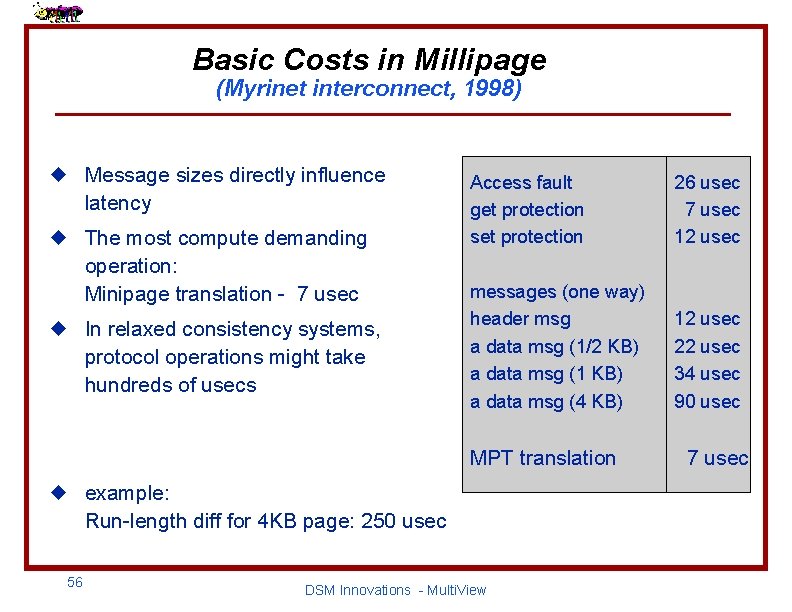

Basic Costs in Millipage (Myrinet interconnect, 1998)

- Message sizes directly influence latency.
- The most compute-demanding operation: minipage translation, 7 usec.
- In relaxed consistency systems, protocol operations might take hundreds of usecs. Example: run-length diff for a 4 KB page, 250 usec.

| Operation | Cost |
| --- | --- |
| Access fault | 26 usec |
| Get protection | 7 usec |
| Set protection | 12 usec |
| MPT translation | 7 usec |

| Message (one way) | Latency |
| --- | --- |
| Header message | 12 usec |
| Data message (1/2 KB) | 22 usec |
| Data message (1 KB) | 34 usec |
| Data message (4 KB) | 90 usec |
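The listed costs can be combined into a rough estimate of how message size affects a remote fetch. The path assumed here (one access fault, one header-only request, one data reply, one protection change) is a simplification chosen for this illustration and is not described on the slide; the names are hypothetical, and only the three listed payload sizes are supported.

```c
#include <assert.h>

enum {
    ACCESS_FAULT_USEC = 26,
    SET_PROTECTION_USEC = 12,
    HEADER_MSG_USEC = 12
};

/* One-way latency of a data message, taken from the listed figures.
 * Returns -1 for payload sizes that are not listed. */
int data_msg_usec(int payload_bytes)
{
    switch (payload_bytes) {
    case 512:  return 22;
    case 1024: return 34;
    case 4096: return 90;
    default:   return -1;
    }
}

/* Estimated cost of one remote fetch under the assumed path:
 * fault + header-only request + data reply + protection change. */
int remote_fetch_usec(int payload_bytes)
{
    int data = data_msg_usec(payload_bytes);
    assert(data >= 0);
    return ACCESS_FAULT_USEC + HEADER_MSG_USEC + data + SET_PROTECTION_USEC;
}
```

Under these assumptions a 1/2 KB minipage costs about 72 usec to fetch and a full 4 KB page about 140 usec, so the message size accounts for most of the difference between the two.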

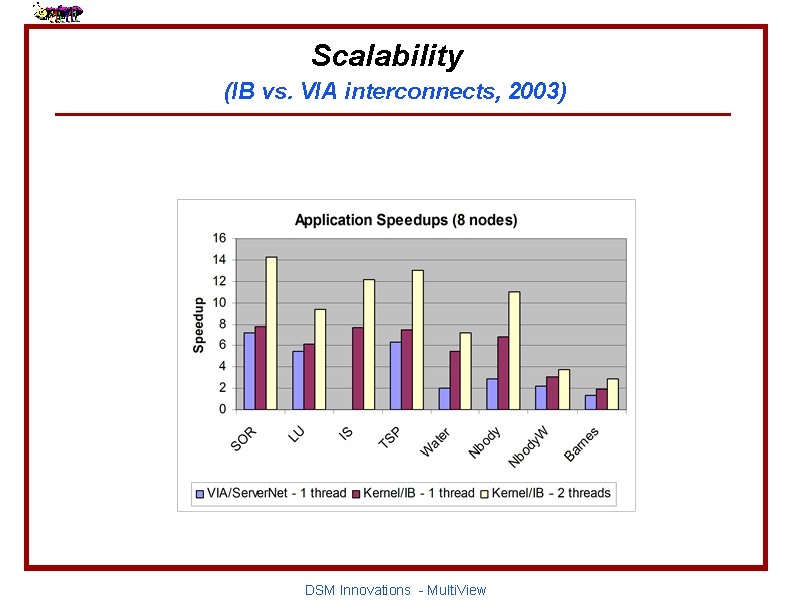

Scalability (IB vs. VIA interconnects, 2003)
