Programming Massively Parallel Processors
Lecture Slides for Chapter 3: CUDA Programming Model
© David Kirk/NVIDIA and Wen-mei W. Hwu, 2007-2010
ECE 498 AL, University of Illinois, Urbana-Champaign

What is (Historical) GPGPU?
• General-purpose computation using the GPU and a graphics API in applications other than 3D graphics
  – The GPU accelerates the critical path of the application
• Data-parallel algorithms leverage GPU attributes
  – Large data arrays, streaming throughput
  – Fine-grain SIMD parallelism
  – Low-latency floating-point (FP) computation
• Applications – see GPGPU.org
  – Game effects (FX) physics, image processing
  – Physical modeling, computational engineering, matrix algebra, convolution, correlation, sorting

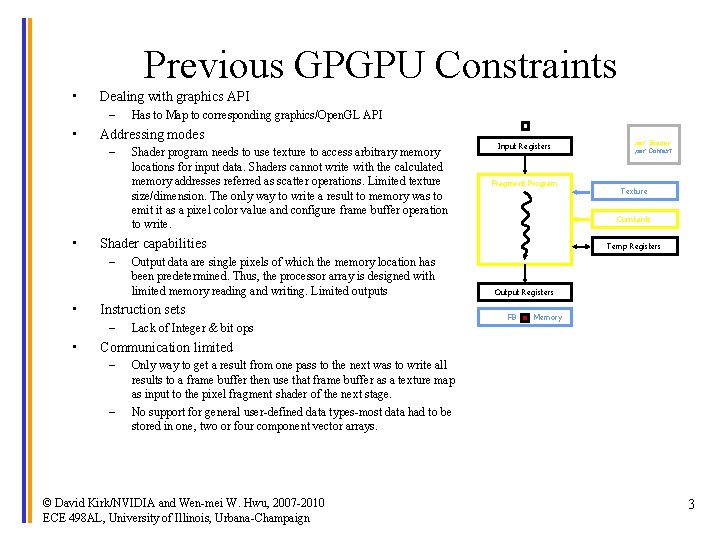

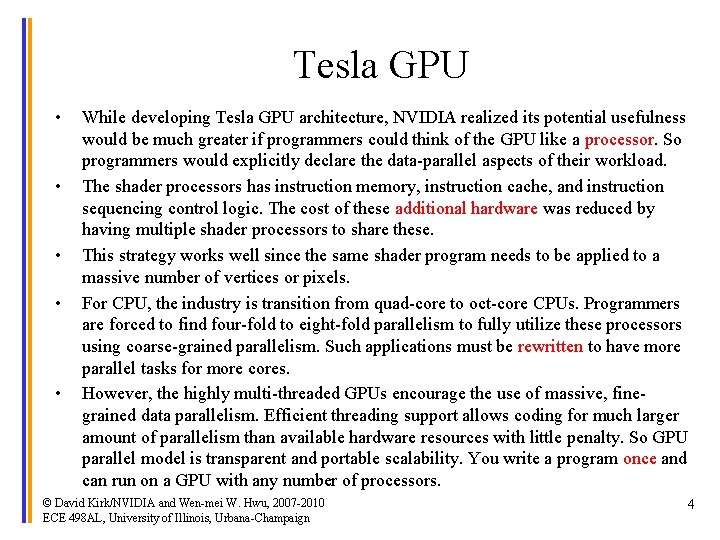

Previous GPGPU Constraints
• Dealing with the graphics API
  – Every computation has to be mapped onto the corresponding graphics (OpenGL) API
• Addressing modes
  – Limited texture size/dimension
  – A shader program must use textures to read input data from arbitrary memory locations
• Shader capabilities
  – Limited outputs: output data are single pixels whose memory locations are predetermined, so the processor array was designed with limited memory reading and writing
  – Shaders cannot write to calculated memory addresses (so-called scatter operations); the only way to write a result to memory was to emit it as a pixel color value and configure the frame-buffer operation to write it
• Instruction sets
  – Lack of integer and bit operations
• Communication limited
  – The only way to pass results from one pass to the next was to write all results to a frame buffer, then use that frame buffer as a texture map input to the pixel fragment shader of the next stage
  – No support for general user-defined data types; most data had to be stored in one-, two-, or four-component vector arrays
[Figure: fragment-program model – per-thread input, temp, and output registers; per-shader fragment program; per-context texture and constants; FB memory]

Tesla GPU
• While developing the Tesla GPU architecture, NVIDIA realized that its potential usefulness would be much greater if programmers could think of the GPU as a processor and explicitly declare the data-parallel aspects of their workload.
• The shader processors have instruction memory, an instruction cache, and instruction-sequencing control logic. The cost of this additional hardware was reduced by having multiple shader processors share it. This strategy works well because the same shader program is applied to a massive number of vertices or pixels.
• For CPUs, the industry is transitioning from quad-core to octa-core designs. Programmers must find four-fold to eight-fold coarse-grained parallelism to fully utilize these processors, and such applications must be rewritten to expose more parallel tasks as core counts grow. In contrast, highly multithreaded GPUs encourage massive, fine-grained data parallelism. Efficient threading support lets a program express far more parallelism than the hardware can execute at once, with little penalty. The GPU programming model therefore offers transparent, portable scalability: you write a program once, and it can run on a GPU with any number of processors.

CUDA
• "Compute Unified Device Architecture"
• General-purpose programming model
  – User kicks off batches of threads on the GPU
  – GPU = dedicated super-threaded, massively data-parallel co-processor
• Targeted software stack
  – Compute-oriented drivers, language, and tools
  – An extension to the popular C programming language, with new keywords and application programming interfaces that let programmers use both the CPU and the GPU
  – To a CUDA programmer, the computing system consists of a host and one or more devices with a massive number of arithmetic units
• Driver for loading computation programs onto the GPU
  – Standalone driver, optimized for computation
  – Interface designed for compute: graphics-free API
  – Data sharing with OpenGL buffer objects
  – Guaranteed maximum download and readback speeds
  – Explicit GPU memory management

Data Parallelism
• Many modern software applications have sections that exhibit a rich amount of data parallelism, a property that allows arithmetic operations to be performed safely on different parts of their data structures in parallel. CUDA devices accelerate these applications by applying their massive number of arithmetic units to those sections.
• In simulations of the physical world, different parts of the model capture simultaneous, independent physical events. For example, rigid-body physics and fluid dynamics model natural forces and movements that can be evaluated independently within small time steps. Such independent evaluation is the basis of data parallelism in these applications.
• Another important form of parallelism is task parallelism, in which many independent tasks are performed concurrently. For example, in molecular dynamics many tasks are independent, such as computing vibrational forces, rotational forces, neighborhood identification, and velocity and position updates.
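A minimal C sketch of the data-parallel pattern described above, assuming a hypothetical position/velocity update of the kind used in rigid-body simulation. Each iteration reads and writes only element i, so the iterations can run in any order, or concurrently with one GPU thread per element.

```c
/* Sketch: a data-parallel update. advance_positions() is a hypothetical
   example, not code from the slides. No iteration reads or writes any
   element other than its own, so the order of iterations does not matter. */
#include <stddef.h>

void advance_positions(float *pos, const float *vel, float dt, size_t n) {
    for (size_t i = 0; i < n; ++i)
        pos[i] += vel[i] * dt;   /* depends on no other element */
}
```

Updating the last element before the others yields the same array, which is exactly the independence a CUDA device exploits.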

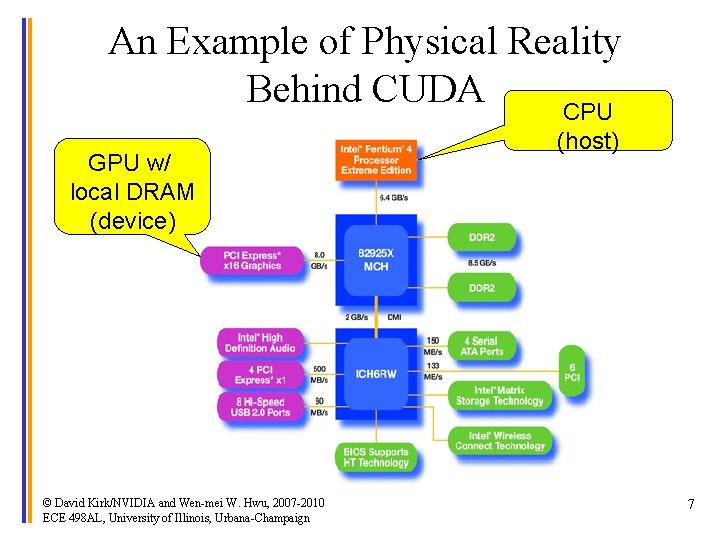

An Example of Physical Reality Behind CUDA
[Figure: a CPU (host) connected to a GPU with its own local DRAM (device)]

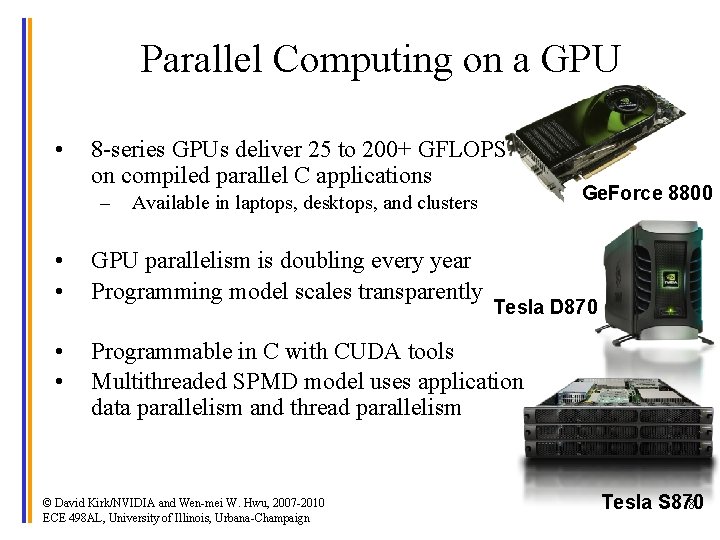

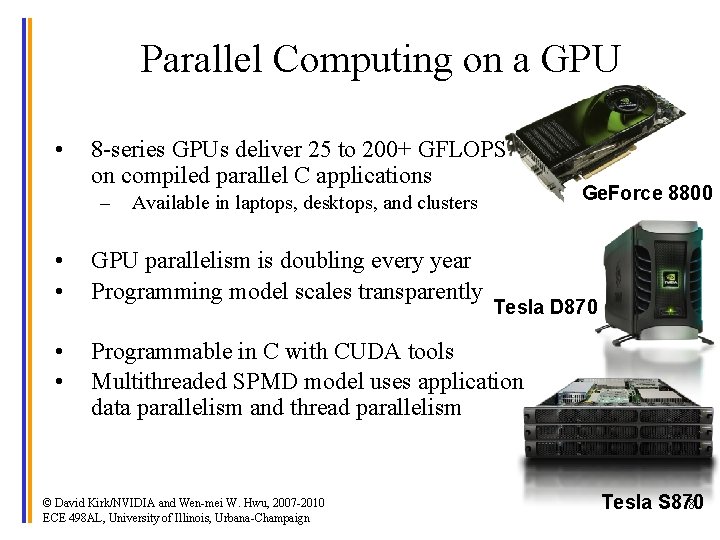

Parallel Computing on a GPU
• 8-series GPUs deliver 25 to 200+ GFLOPS on compiled parallel C applications
  – Available in laptops, desktops, and clusters
• GPU parallelism is doubling every year
• Programming model scales transparently
• Programmable in C with CUDA tools
• Multithreaded SPMD model uses application data parallelism and thread parallelism
[Figure: GeForce 8800, Tesla D870, Tesla S870]

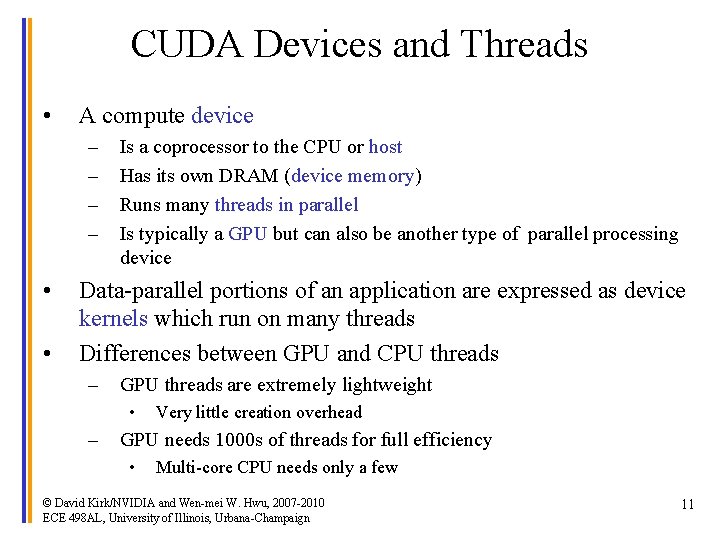

Overview
• CUDA programming model – basic concepts and data types
• CUDA application programming interface – basic
• Simple examples to illustrate basic concepts and functionalities
• Performance features will be covered later

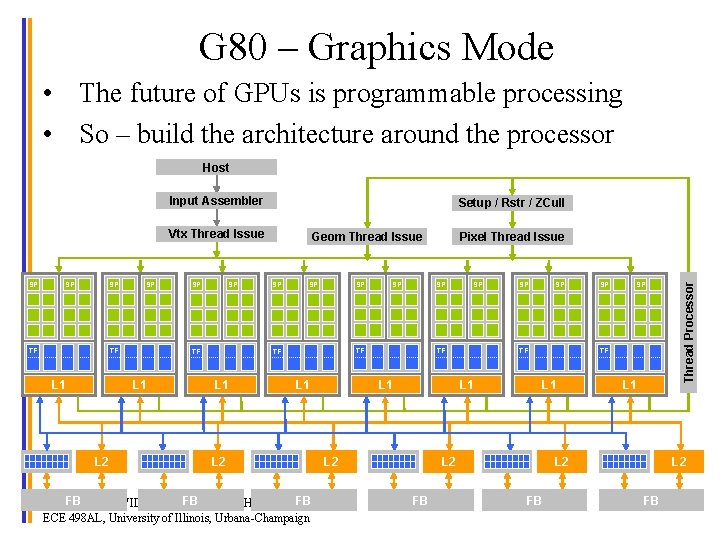

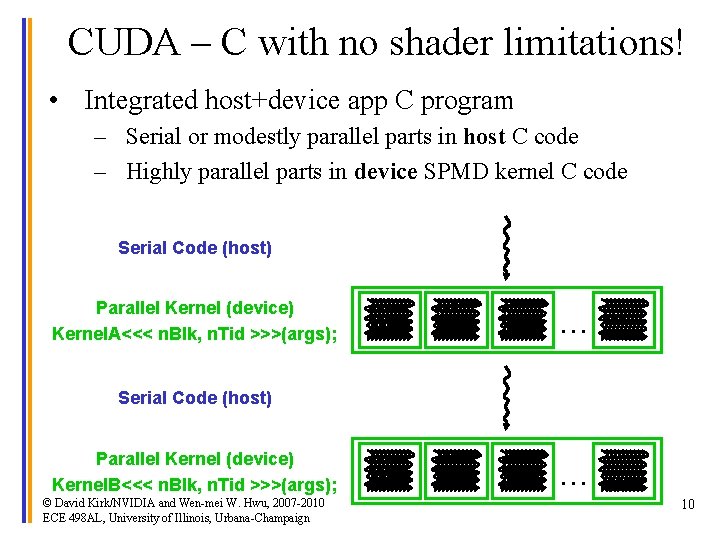

CUDA – C with no shader limitations!
• Integrated host+device application C program
  – Serial or modestly parallel parts in host C code
  – Highly parallel parts in device SPMD kernel C code

```cuda
// Serial code (host)
...
// Parallel kernel (device)
KernelA<<< nBlk, nTid >>>(args);
...
// Serial code (host)
...
// Parallel kernel (device)
KernelB<<< nBlk, nTid >>>(args);
...
```

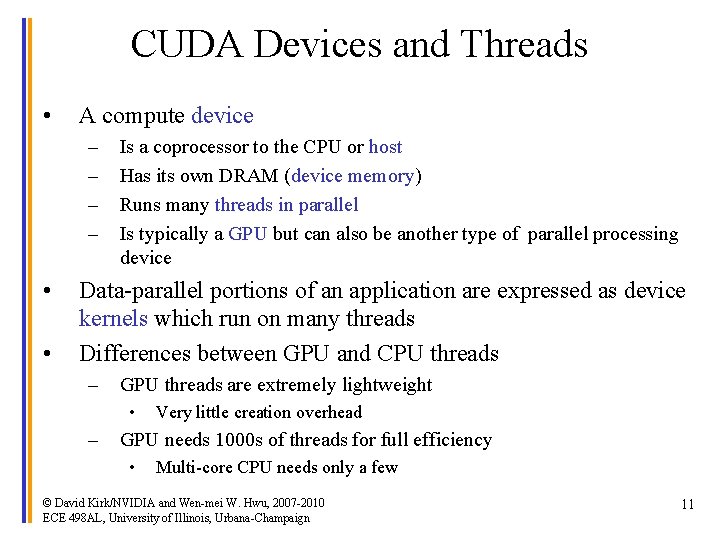

CUDA Devices and Threads
• A compute device
  – Is a coprocessor to the CPU or host
  – Has its own DRAM (device memory)
  – Runs many threads in parallel
  – Is typically a GPU but can also be another type of parallel processing device
• Data-parallel portions of an application are expressed as device kernels, which run on many threads
• Differences between GPU and CPU threads
  – GPU threads are extremely lightweight: very little creation overhead
  – A GPU needs 1000s of threads for full efficiency; a multi-core CPU needs only a few

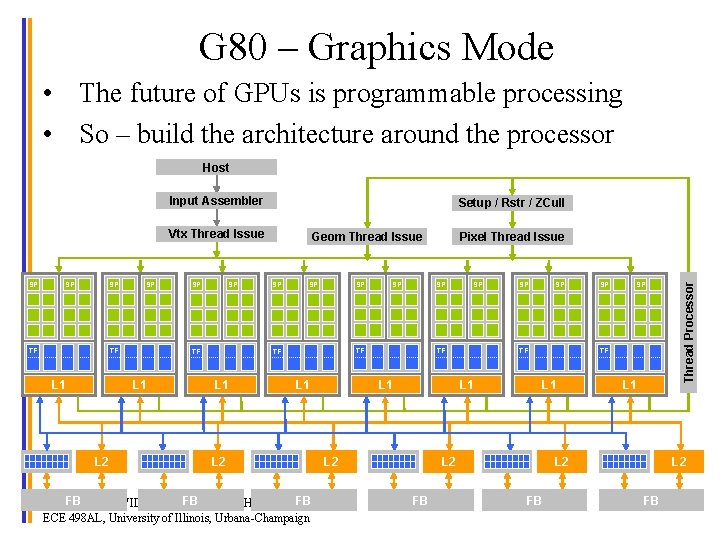

G80 – Graphics Mode
• The future of GPUs is programmable processing
• So – build the architecture around the processor
[Figure: G80 in graphics mode – host, input assembler, setup/raster/ZCull, vertex, geometry, and pixel thread issue, thread processor, arrays of SPs with texture filtering (TF) units and L1 caches, L2 caches, and frame buffer (FB) partitions]

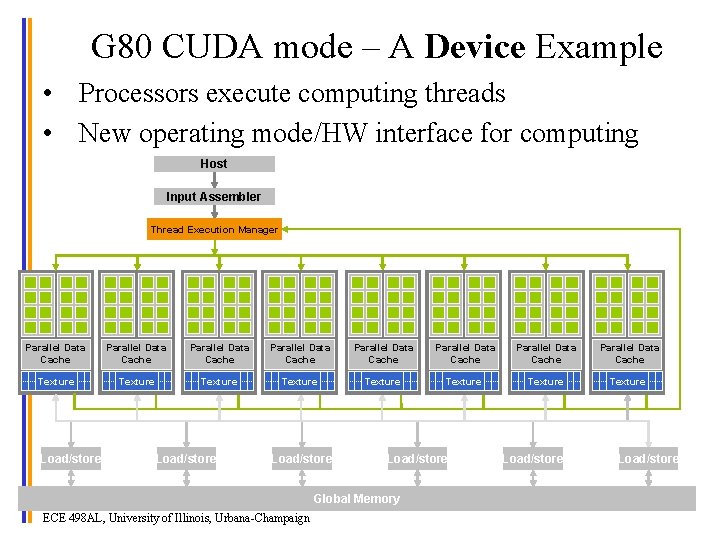

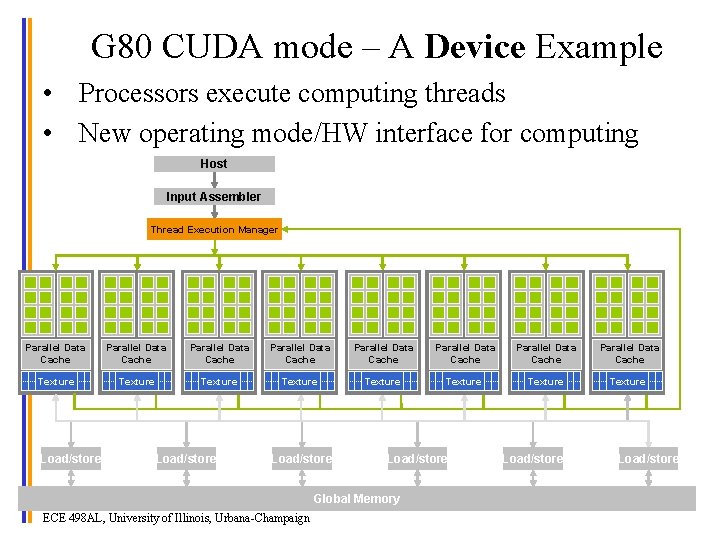

G80 CUDA Mode – A Device Example
• Processors execute computing threads
• New operating mode/HW interface for computing
[Figure: G80 in CUDA mode – host, input assembler, thread execution manager, processor groups each with a parallel data cache and texture unit, and load/store paths to global memory]

![Extended C Declspecs global device shared local constant device float filterN global Extended C • Declspecs – global, device, shared, local, constant __device__ float filter[N]; __global__](https://slidetodoc.com/presentation_image_h2/78fd8f1e956e15ae37ef62ee1f696007/image-14.jpg)

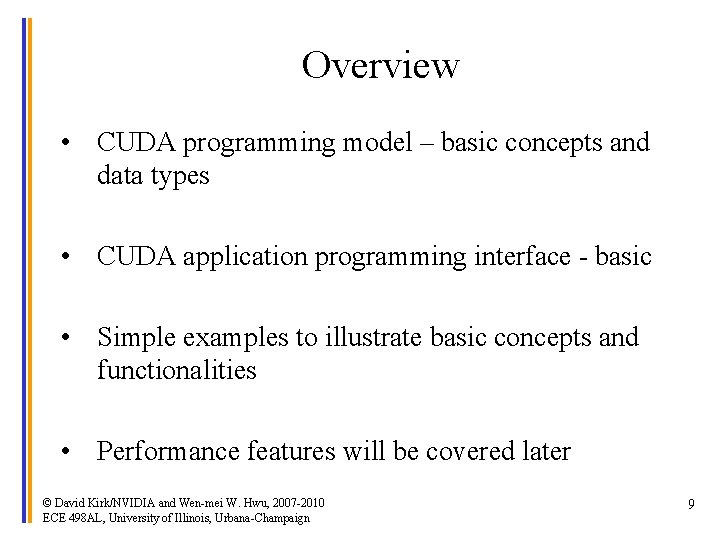

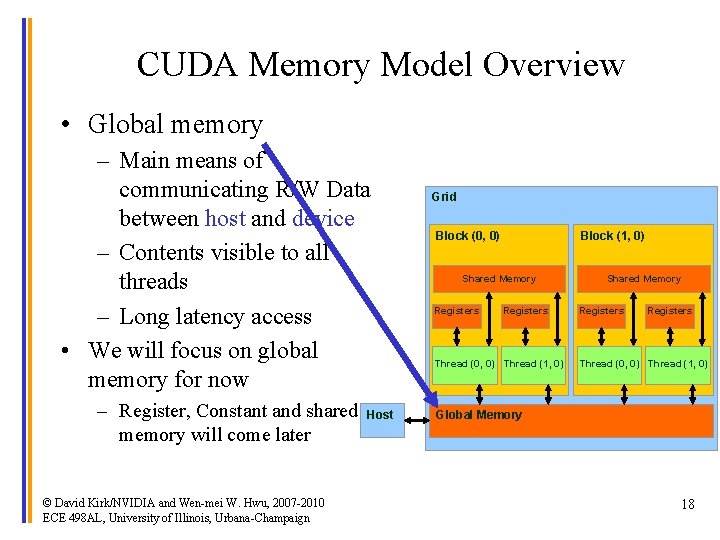

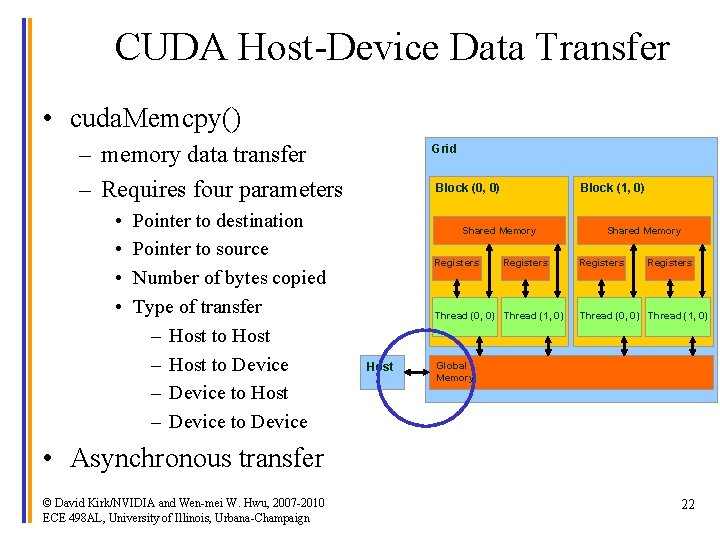

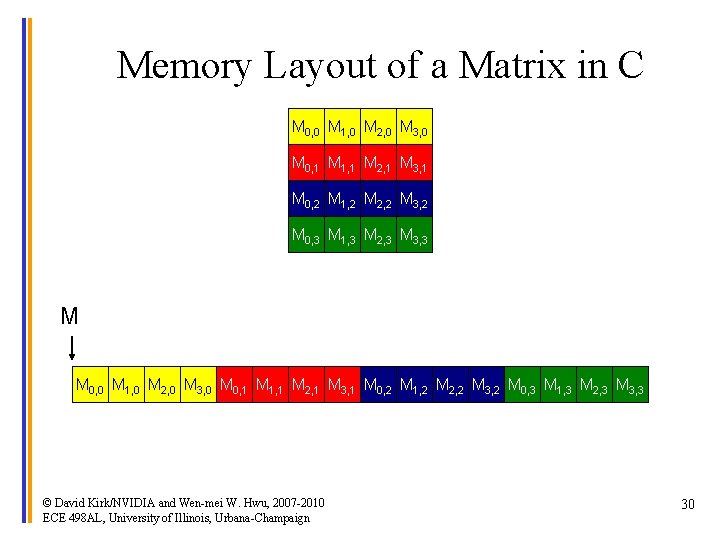

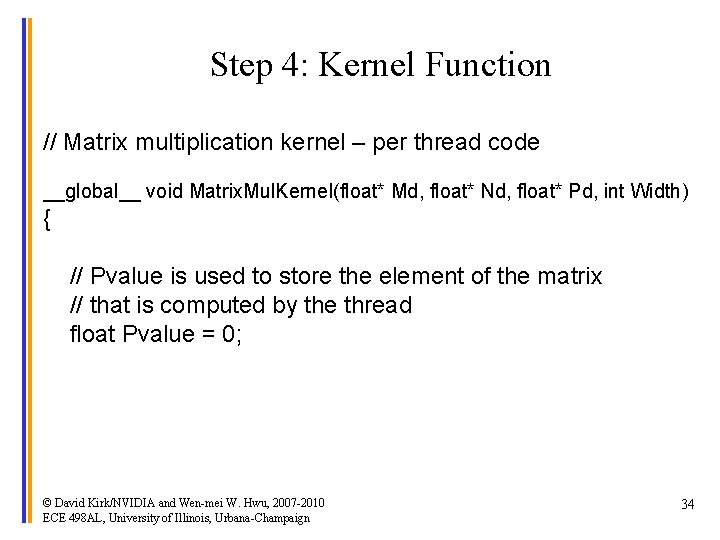

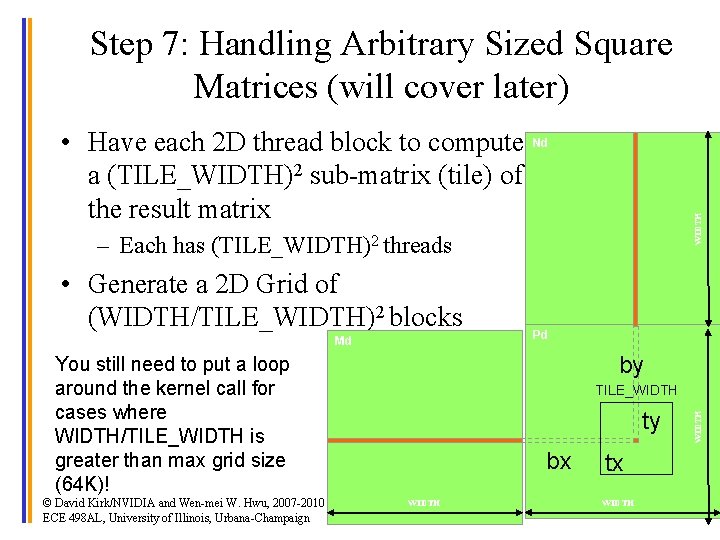

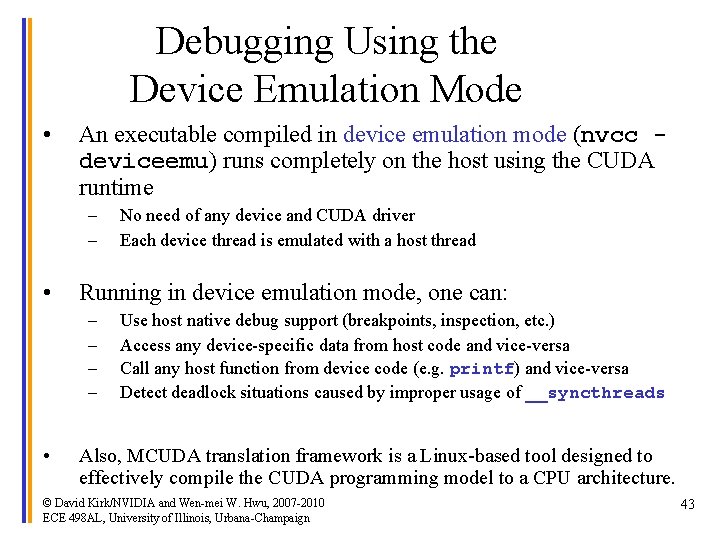

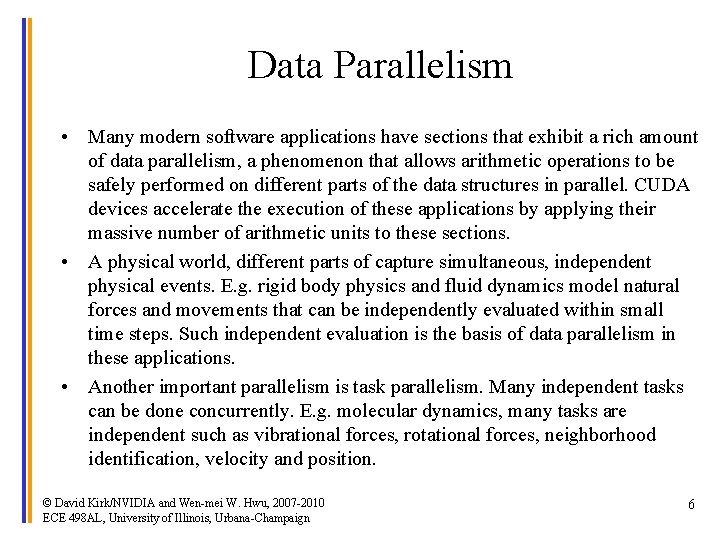

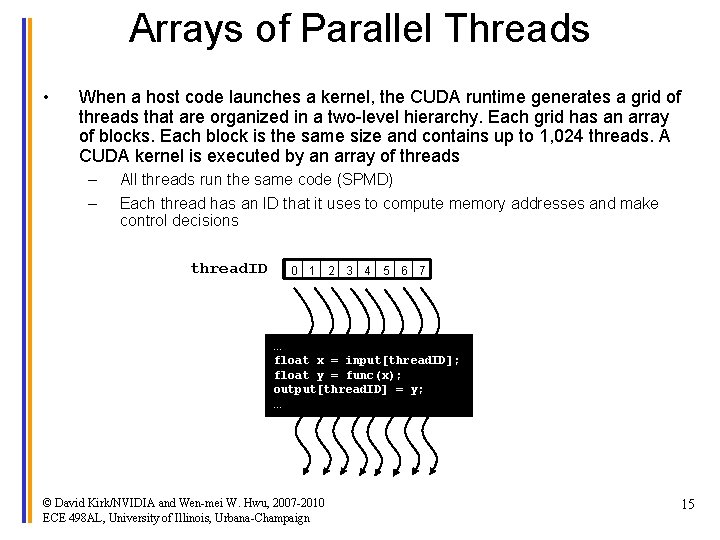

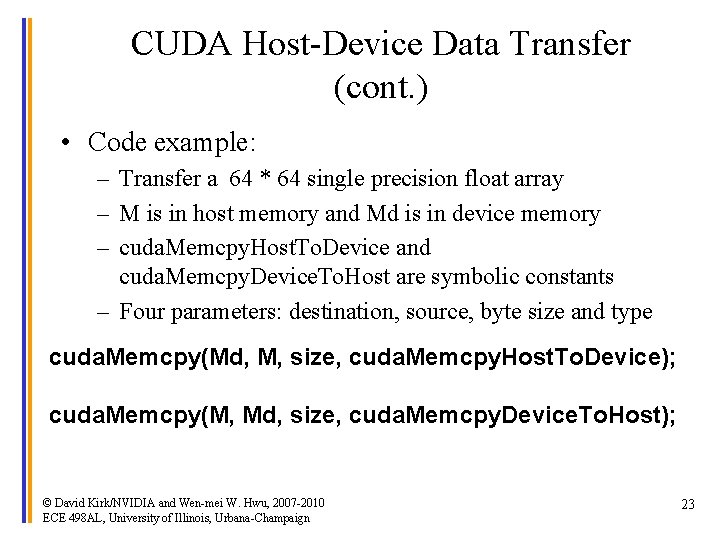

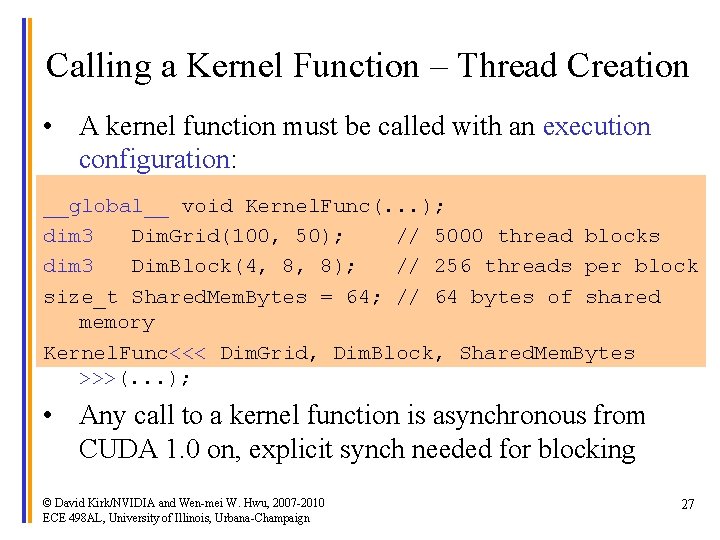

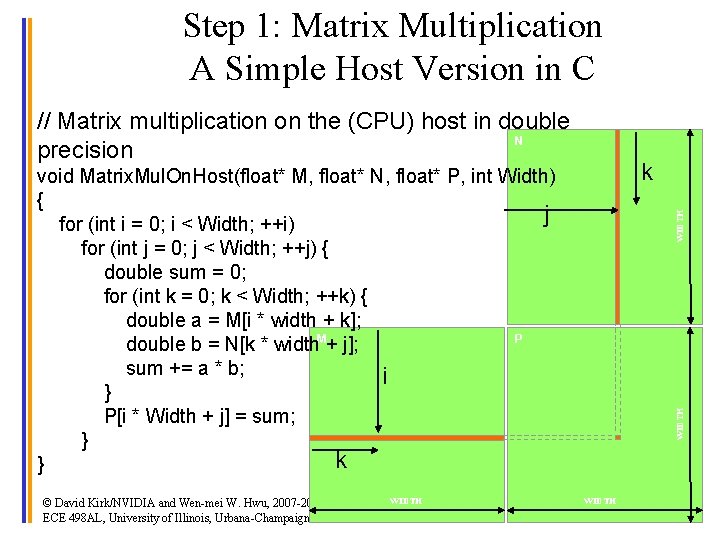

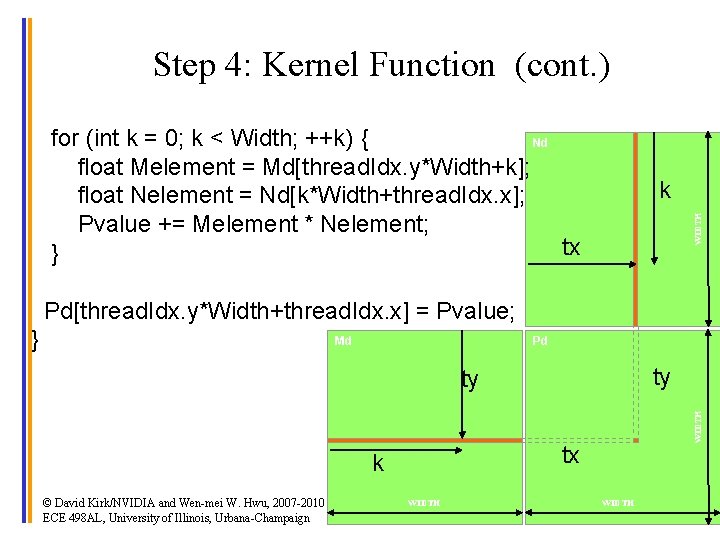

Extended C
• Declspecs
  – global, device, shared, local, constant
• Keywords
  – threadIdx, blockIdx
• Intrinsics
  – __syncthreads
• Runtime API
  – Memory, symbol, execution management
• Function launch

```cuda
__device__ float filter[N];

__global__ void convolve(float *image) {
    __shared__ float region[M];
    ...
    region[threadIdx.x] = image[i];

    __syncthreads();
    ...
    image[j] = result;
}

// Allocate GPU memory
float *myimage;
cudaMalloc((void**)&myimage, bytes);

// 100 blocks, 10 threads per block
convolve<<<100, 10>>>(myimage);
```

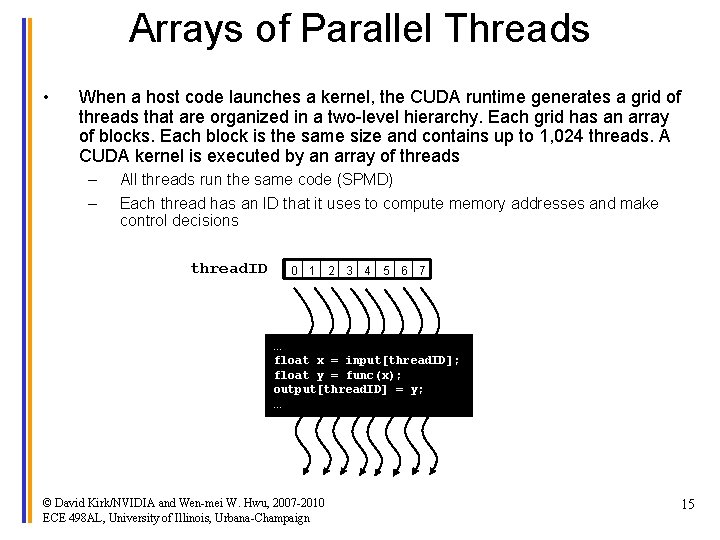

Arrays of Parallel Threads
• When host code launches a kernel, the CUDA runtime generates a grid of threads organized in a two-level hierarchy: each grid is an array of blocks, and every block has the same size and contains up to 1,024 threads (512 on the G80-generation devices these slides describe).
• A CUDA kernel is executed by an array of threads
  – All threads run the same code (SPMD)
  – Each thread has an ID that it uses to compute memory addresses and make control decisions

```cuda
// threadID: 0 1 2 3 4 5 6 7 ...
float x = input[threadID];
float y = func(x);
output[threadID] = y;
```
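The SPMD idea can be emulated sequentially on the host to see what each thread does. In this sketch, thread_body() plays the role of the kernel body, thread_id plays the role of threadID, and the loop in launch_threads() stands in for the hardware launching the threads; func() (squaring) is a hypothetical placeholder for the per-element work.

```c
/* Sketch: host emulation of a 1-D array of threads running the same code.
   All names are hypothetical; the loop runs "threads" one after another,
   whereas a GPU would run them in parallel. */
#include <stddef.h>

static float func(float x) { return x * x; }   /* placeholder per-element work */

static void thread_body(size_t thread_id, const float *input, float *output) {
    float x = input[thread_id];    /* each thread reads its own element */
    float y = func(x);
    output[thread_id] = y;         /* ...and writes its own element */
}

void launch_threads(size_t num_threads, const float *input, float *output) {
    for (size_t t = 0; t < num_threads; ++t)   /* sequential stand-in for parallel threads */
        thread_body(t, input, output);
}
```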

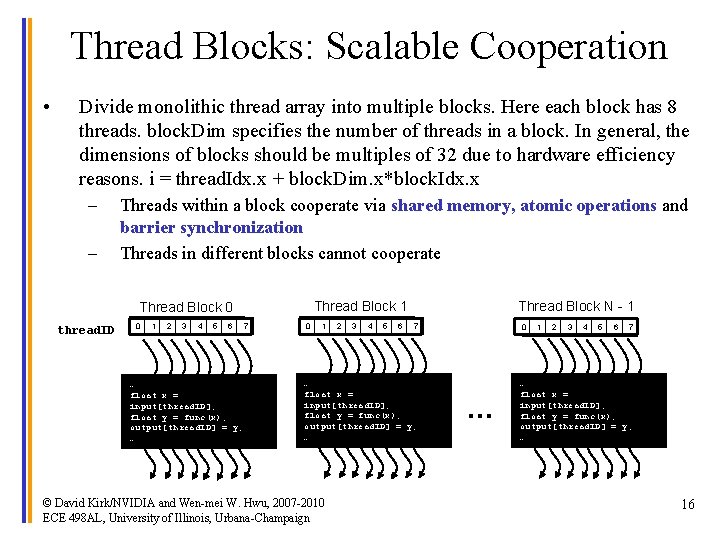

Thread Blocks: Scalable Cooperation
• Divide the monolithic thread array into multiple blocks. Here each block has 8 threads.
  – blockDim specifies the number of threads in a block. In general, block dimensions should be multiples of 32 for hardware efficiency.
  – Each thread combines its block and thread indices into a global index: i = threadIdx.x + blockDim.x * blockIdx.x
  – Threads within a block cooperate via shared memory, atomic operations, and barrier synchronization
  – Threads in different blocks cannot cooperate

```cuda
// Same code in Thread Block 0, Thread Block 1, ..., Thread Block N - 1
int i = threadIdx.x + blockDim.x * blockIdx.x;
float x = input[i];
float y = func(x);
output[i] = y;
```
[Figure: Thread Blocks 0, 1, ..., N - 1, each containing threads 0-7 that run the code above]
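A sequential C sketch of how i = threadIdx.x + blockDim.x * blockIdx.x covers an array split into blocks. The nested loops emulate a 1-D grid; blocks_needed(), emulate_grid(), and the bounds check i < n are illustrative assumptions (the check matters when n is not a multiple of the block size, since the last block then has surplus threads).

```c
/* Sketch: emulating a 1-D grid of blocks on the host. Names are hypothetical. */
#include <stddef.h>

/* Round-up division: enough blocks of block_dim threads to cover n elements. */
size_t blocks_needed(size_t n, size_t block_dim) {
    return (n + block_dim - 1) / block_dim;
}

/* Counts, in touched[], how often each element is visited; returns the
   number of emulated threads that did useful work. */
size_t emulate_grid(size_t n, size_t block_dim, int *touched) {
    size_t num_blocks = blocks_needed(n, block_dim);
    size_t active = 0;
    for (size_t block_idx = 0; block_idx < num_blocks; ++block_idx) {
        for (size_t thread_idx = 0; thread_idx < block_dim; ++thread_idx) {
            size_t i = thread_idx + block_dim * block_idx;   /* global index */
            if (i < n) {               /* surplus threads in the last block do nothing */
                touched[i] += 1;
                ++active;
            }
        }
    }
    return active;
}
```

With 20 elements and 8-thread blocks, three blocks (24 threads) are launched and the last 4 threads stay idle; every element is visited exactly once.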

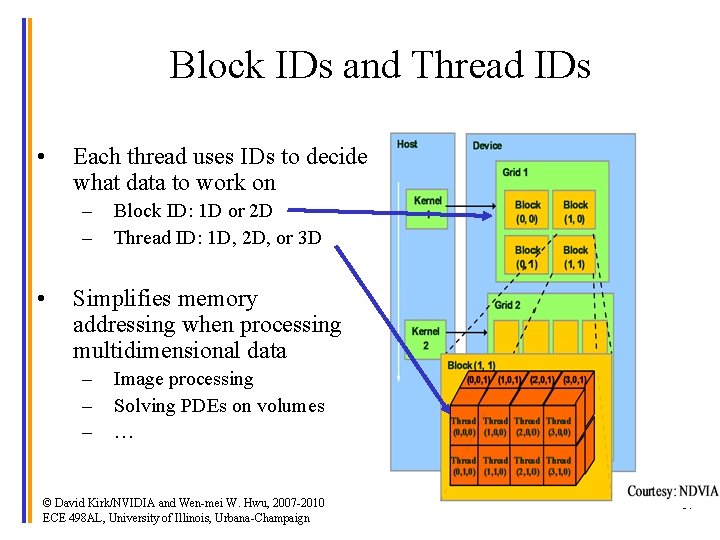

Block IDs and Thread IDs
• Each thread uses IDs to decide what data to work on
  – Block ID: 1D or 2D
  – Thread ID: 1D, 2D, or 3D
• Simplifies memory addressing when processing multidimensional data
  – Image processing
  – Solving PDEs on volumes
  – …
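The CUDA C Programming Guide defines how a multidimensional thread index maps to a single thread ID within a block: for a block of size (Dx, Dy, Dz), thread (x, y, z) has ID x + y·Dx + z·Dx·Dy, so x varies fastest. The sketch below restates that formula in C; idx3 and linear_thread_id() are hypothetical names, not CUDA API. 1D and 2D indices are the special cases z = 0, or y = z = 0.

```c
/* Sketch: flattening a 3-D thread index into a linear thread ID. */
typedef struct { int x, y, z; } idx3;   /* hypothetical stand-in for threadIdx/blockDim */

int linear_thread_id(idx3 t, idx3 block_dim) {
    /* x varies fastest, then y, then z */
    return t.x + t.y * block_dim.x + t.z * block_dim.x * block_dim.y;
}
```

For a 4 x 8 x 8 block, the last thread (3, 7, 7) gets ID 3 + 7·4 + 7·32 = 255, consistent with 256 threads per block.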

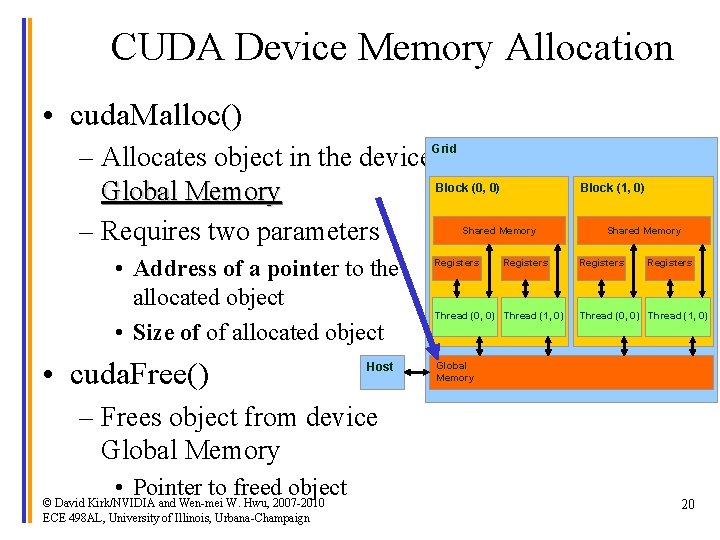

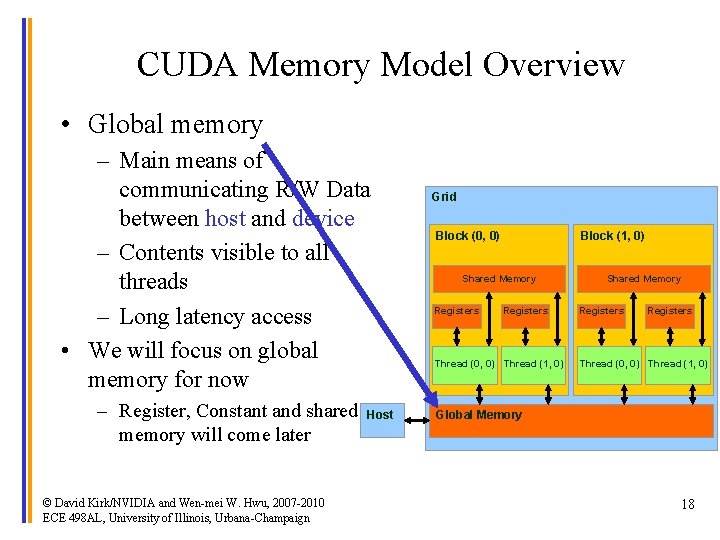

CUDA Memory Model Overview
• Global memory
  – Main means of communicating read/write data between host and device
  – Contents visible to all threads
  – Long-latency access
• We will focus on global memory for now
  – Register, constant, and shared memory will come later
[Figure: host and a grid of Block (0, 0) and Block (1, 0); each block has shared memory and per-thread registers for Thread (0, 0) and Thread (1, 0); all connect to global memory]

CUDA API Highlights: Easy and Lightweight
• The API is an extension to the ANSI C programming language
  – Low learning curve
• The hardware is designed to enable a lightweight runtime and driver
  – High performance

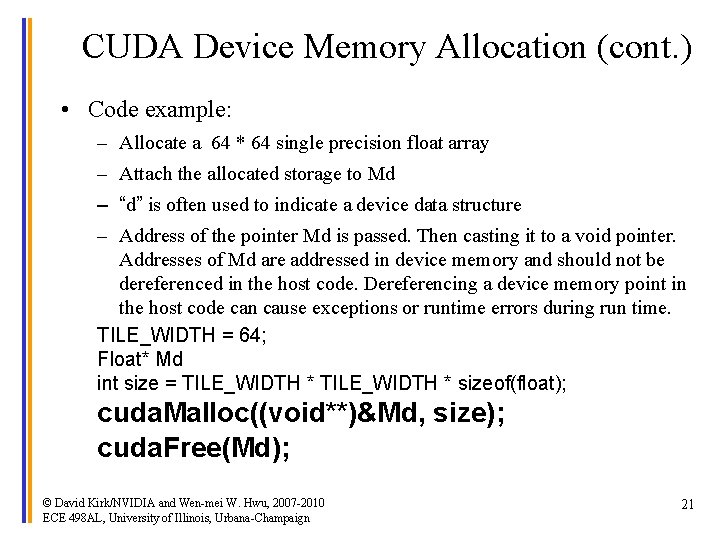

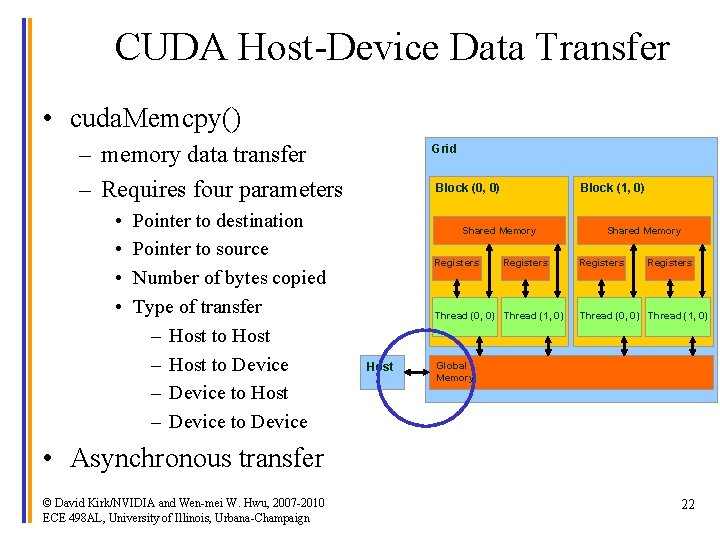

CUDA Device Memory Allocation
• cudaMalloc()
  – Allocates an object in device global memory
  – Requires two parameters: the address of a pointer to the allocated object, and the size of the allocated object in bytes
• cudaFree()
  – Frees an object from device global memory
  – Requires a pointer to the object to be freed
[Figure: CUDA memory model – host, grid with Block (0, 0) and Block (1, 0), shared memory, registers, and global memory]

CUDA Device Memory Allocation (cont.)
• Code example:
  – Allocate a 64 * 64 single-precision float array
  – Attach the allocated storage to Md; "d" is often used to indicate a device data structure
  – The address of the pointer Md is cast to (void**) and passed, so that cudaMalloc() can store the device address in Md
  – Md holds an address in device memory and must not be dereferenced in host code; doing so can cause exceptions or other runtime errors

```cuda
const int TILE_WIDTH = 64;
float* Md;
int size = TILE_WIDTH * TILE_WIDTH * sizeof(float);   // 64 x 64 floats

cudaMalloc((void**)&Md, size);
cudaFree(Md);
```
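The size argument of cudaMalloc() and cudaMemcpy() is a byte count for the whole matrix. A common slip is to pass only one row's worth (width * sizeof(float)). matrix_bytes() below is a hypothetical helper, not part of the CUDA API; it just makes the arithmetic explicit.

```c
/* Sketch: bytes needed for a width x width matrix of floats. */
#include <stddef.h>

size_t matrix_bytes(size_t width) {
    return width * width * sizeof(float);   /* every element, not just one row */
}
```

With 4-byte floats, a 64 x 64 matrix needs 16,384 bytes, whereas 64 * sizeof(float) would cover only 256 bytes (a single row).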

CUDA Host-Device Data Transfer
• cudaMemcpy()
  – Memory data transfer
  – Requires four parameters: pointer to destination, pointer to source, number of bytes copied, and type of transfer
  – Types of transfer: host to host, host to device, device to host, device to device
• Asynchronous transfer
  – cudaMemcpy() itself is synchronous with respect to the host in most cases; asynchronous copies use cudaMemcpyAsync()
[Figure: CUDA memory model – host, grid with blocks, shared memory, registers, and global memory]

CUDA Host-Device Data Transfer (cont.)
• Code example:
  – Transfer a 64 * 64 single-precision float array
  – M is in host memory and Md is in device memory
  – cudaMemcpyHostToDevice and cudaMemcpyDeviceToHost are symbolic constants
  – Four parameters: destination, source, byte size, and type

```cuda
cudaMemcpy(Md, M, size, cudaMemcpyHostToDevice);
cudaMemcpy(M, Md, size, cudaMemcpyDeviceToHost);
```

CUDA Keywords

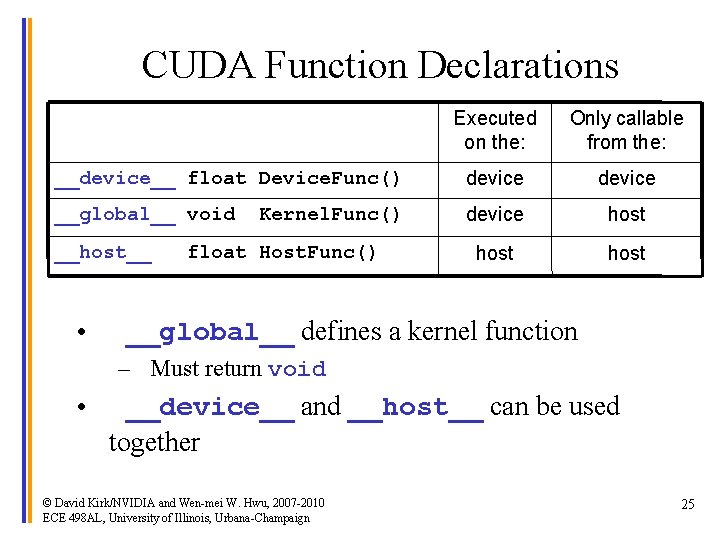

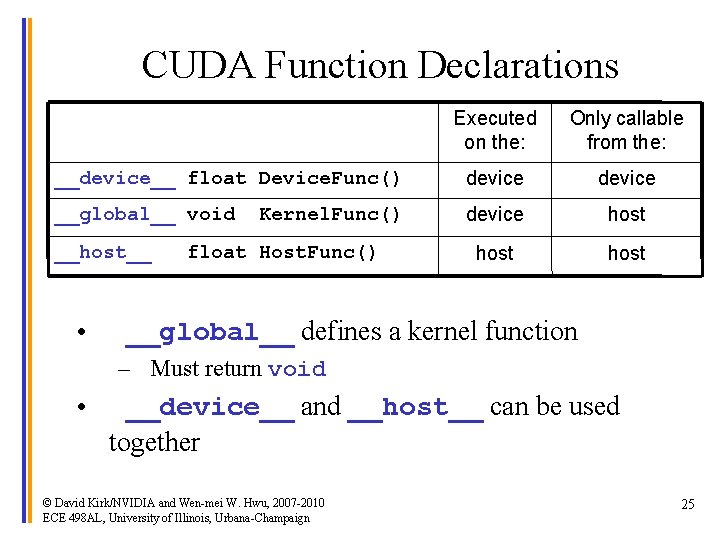

CUDA Function Declarations

| Declaration | Executed on the: | Only callable from the: |
|---|---|---|
| `__device__ float DeviceFunc()` | device | device |
| `__global__ void KernelFunc()` | device | host |
| `__host__ float HostFunc()` | host | host |

• __global__ defines a kernel function
  – Must return void
• __device__ and __host__ can be used together

CUDA Function Declarations (cont.)
• __device__ functions cannot have their address taken
• For functions executed on the device:
  – No recursion
  – No static variable declarations inside the function
  – No variable number of arguments
• These restrictions describe the early (compute capability 1.x) devices targeted here; later GPUs relax some of them, e.g. recursion and __device__ function pointers are supported from compute capability 2.0 on

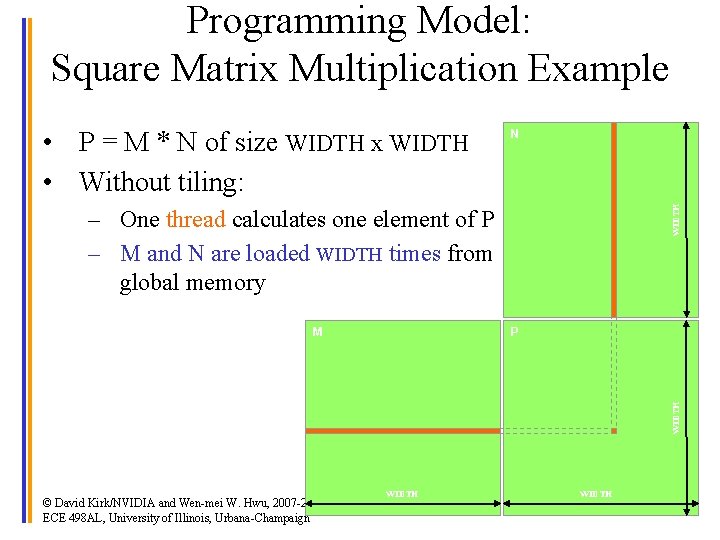

Calling a Kernel Function – Thread Creation
• A kernel function must be called with an execution configuration:

```cuda
__global__ void KernelFunc(...);
dim3 DimGrid(100, 50);        // 5000 thread blocks
dim3 DimBlock(4, 8, 8);       // 256 threads per block
size_t SharedMemBytes = 64;   // 64 bytes of shared memory
KernelFunc<<< DimGrid, DimBlock, SharedMemBytes >>>(...);
```

• Any call to a kernel function is asynchronous from CUDA 1.0 on; explicit synchronization (e.g. cudaDeviceSynchronize()) is needed for blocking
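The block and thread counts in the comments of the execution configuration follow from multiplying out the dimensions. dim3_t, make_dim3(), and dim3_volume() are hypothetical host-side stand-ins, not CUDA's dim3; as with dim3, an unused dimension is given as 1.

```c
/* Sketch: counting the blocks and threads an execution configuration creates. */
typedef struct { unsigned x, y, z; } dim3_t;   /* hypothetical stand-in for dim3 */

dim3_t make_dim3(unsigned x, unsigned y, unsigned z) {
    dim3_t d = { x, y, z };
    return d;
}

/* Number of blocks (for a grid) or threads (for a block) described by d. */
unsigned long dim3_volume(dim3_t d) {
    return (unsigned long)d.x * d.y * d.z;
}
```

For DimGrid(100, 50) and DimBlock(4, 8, 8) this gives 5,000 blocks of 256 threads, or 1,280,000 threads in total.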

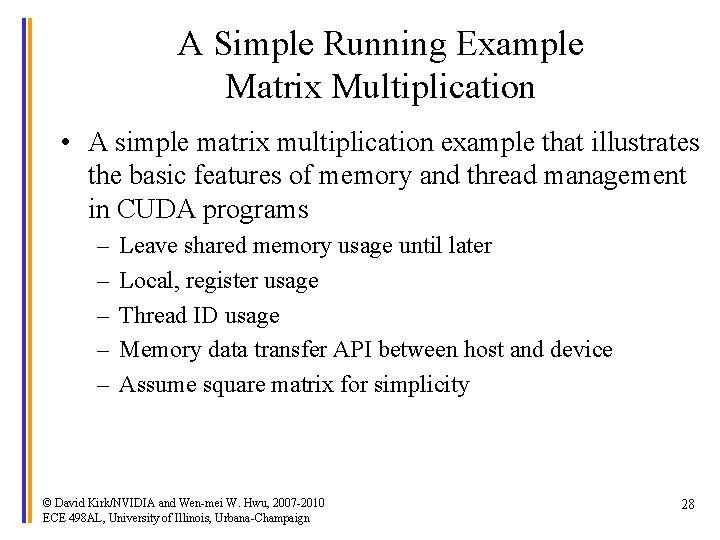

A Simple Running Example: Matrix Multiplication
• A simple matrix multiplication example that illustrates the basic features of memory and thread management in CUDA programs
  – Leave shared memory usage until later
  – Local, register usage
  – Thread ID usage
  – Memory data transfer API between host and device
  – Assume square matrices for simplicity

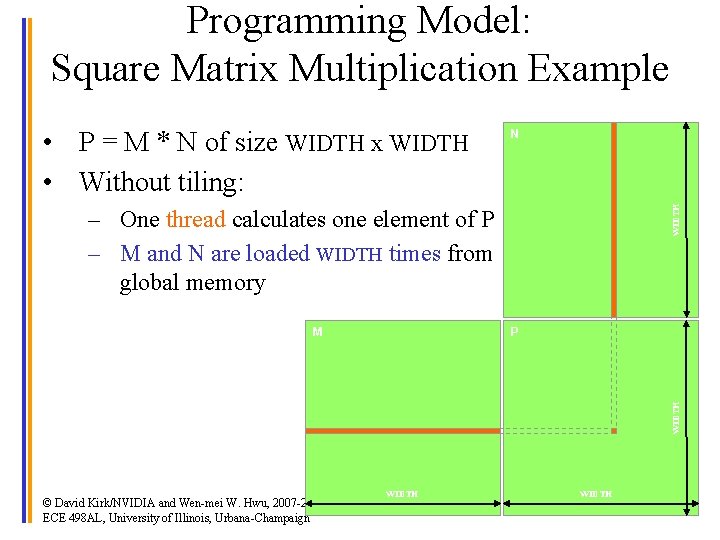

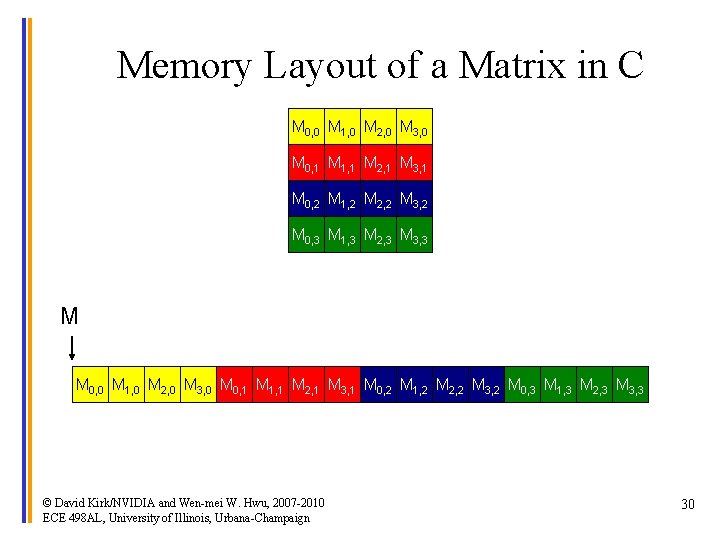

Programming Model: Square Matrix Multiplication Example
• P = M * N, all of size WIDTH x WIDTH
• Without tiling:
  – One thread calculates one element of P
  – M and N are loaded WIDTH times from global memory
[Figure: matrices M, N, and P, each WIDTH x WIDTH]
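The element formula and the load count behind "loaded WIDTH times" can be worked out as follows (a sketch, with i the row and j the column, as in the host code):

$$P_{i,j} = \sum_{k=0}^{\mathrm{WIDTH}-1} M_{i,k}\, N_{k,j}$$

Each of the $\mathrm{WIDTH}^2$ threads reads one row of M and one column of N, i.e. $2\,\mathrm{WIDTH}$ elements, so the kernel performs

$$\mathrm{WIDTH}^2 \cdot 2\,\mathrm{WIDTH} = 2\,\mathrm{WIDTH}^3$$

global-memory loads for only $2\,\mathrm{WIDTH}^2$ distinct input elements. Every element of M and N is therefore loaded $2\,\mathrm{WIDTH}^3 / (2\,\mathrm{WIDTH}^2) = \mathrm{WIDTH}$ times.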

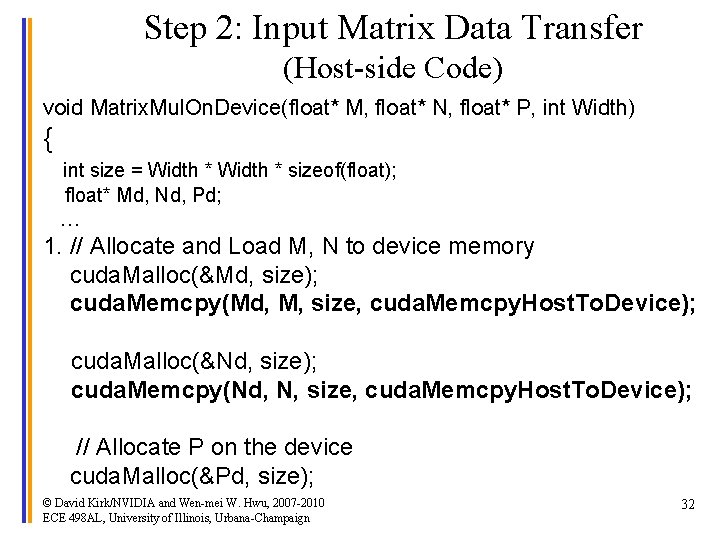

Memory Layout of a Matrix in C
• A 4 x 4 matrix M (element Mx,y is in column x, row y):

| | Column 0 | Column 1 | Column 2 | Column 3 |
|---|---|---|---|---|
| Row 0 | M0,0 | M1,0 | M2,0 | M3,0 |
| Row 1 | M0,1 | M1,1 | M2,1 | M3,1 |
| Row 2 | M0,2 | M1,2 | M2,2 | M3,2 |
| Row 3 | M0,3 | M1,3 | M2,3 | M3,3 |

• Linearized in memory, row after row:
M0,0 M1,0 M2,0 M3,0 M0,1 M1,1 M2,1 M3,1 M0,2 M1,2 M2,2 M3,2 M0,3 M1,3 M2,3 M3,3
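A short C sketch of the same row-major layout: copying a 2D C array byte for byte into a 1D buffer shows that element (row, col) lands at offset row * WIDTH + col, which is the indexing the kernels use on plain float pointers. In the slide's notation, Mx,y sits at offset y * 4 + x. WIDTH = 4 matches the figure; flatten() and linear_index() are illustrative helpers, not library functions.

```c
/* Sketch: C stores 2-D arrays row by row (row-major order). */
#include <string.h>

#define WIDTH 4   /* matches the 4 x 4 figure */

/* Copy a WIDTH x WIDTH C array, byte for byte, into a flat buffer. */
void flatten(float m[WIDTH][WIDTH], float flat[WIDTH * WIDTH]) {
    memcpy(flat, m, sizeof(float) * WIDTH * WIDTH);
}

/* Offset of element (row, col) in the flat, row-major buffer. */
int linear_index(int row, int col, int width) {
    return row * width + col;
}
```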

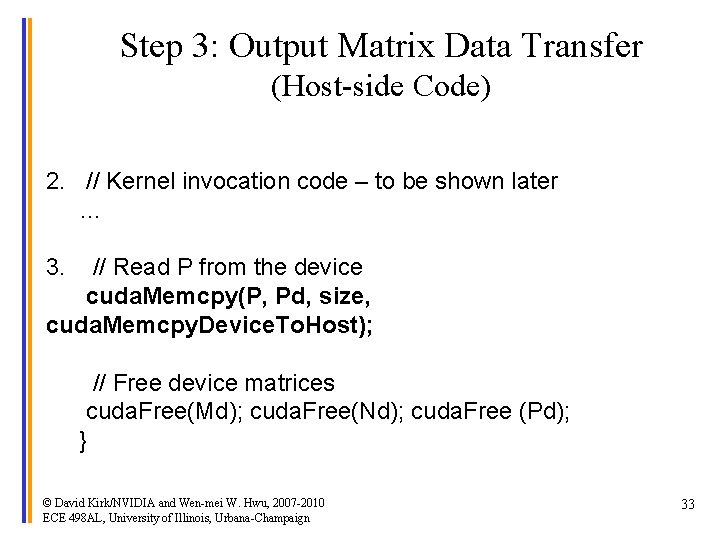

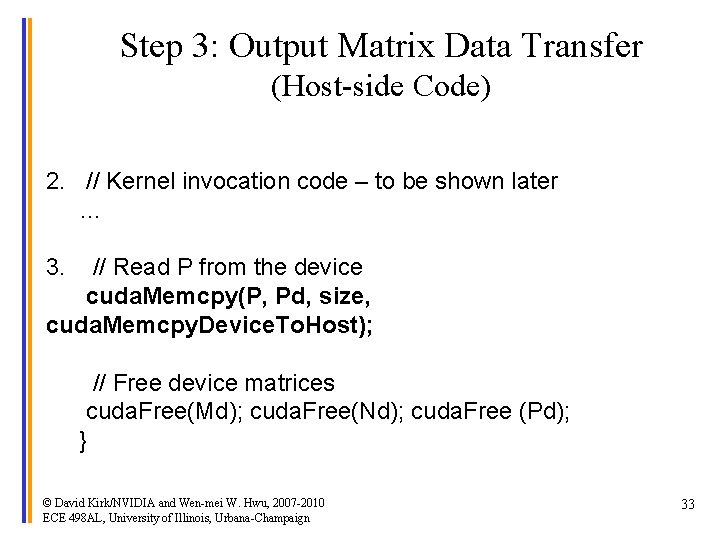

Step 1: Matrix Multiplication – A Simple Host Version in C

```c
// Matrix multiplication on the (CPU) host in double precision
void MatrixMulOnHost(float* M, float* N, float* P, int Width)
{
    for (int i = 0; i < Width; ++i)
        for (int j = 0; j < Width; ++j) {
            double sum = 0;
            for (int k = 0; k < Width; ++k) {
                double a = M[i * Width + k];   // row i of M
                double b = N[k * Width + j];   // column j of N
                sum += a * b;
            }
            P[i * Width + j] = (float)sum;     // round the double accumulator back to float
        }
}
```
[Figure: row i of M and column j of N, stepped through by k, combine into element (i, j) of P; all matrices are WIDTH x WIDTH]

Step 2: Input Matrix Data Transfer (Host-side Code)

```cuda
void MatrixMulOnDevice(float* M, float* N, float* P, int Width)
{
    int size = Width * Width * sizeof(float);
    float *Md, *Nd, *Pd;
    …
    // 1. Allocate and load M, N to device memory
    cudaMalloc((void**)&Md, size);
    cudaMemcpy(Md, M, size, cudaMemcpyHostToDevice);
    cudaMalloc((void**)&Nd, size);
    cudaMemcpy(Nd, N, size, cudaMemcpyHostToDevice);

    // Allocate P on the device
    cudaMalloc((void**)&Pd, size);
```

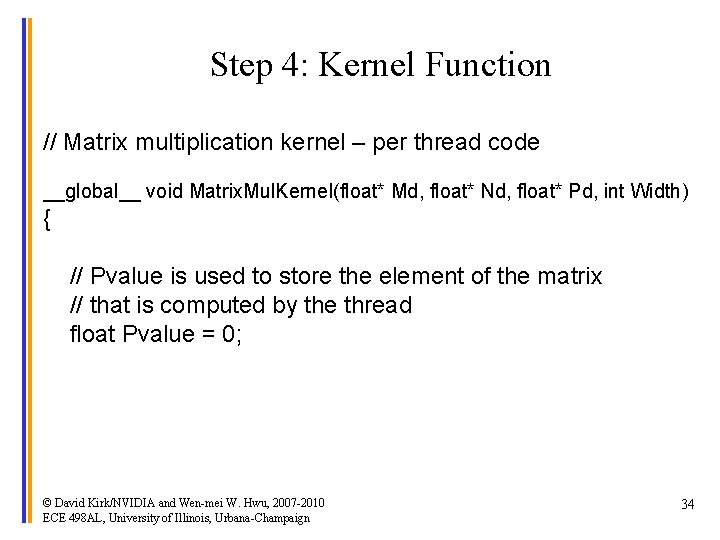

Step 3: Output Matrix Data Transfer (Host-side Code)

```cuda
    // 2. Kernel invocation code – to be shown later
    …
    // 3. Read P from the device
    cudaMemcpy(P, Pd, size, cudaMemcpyDeviceToHost);

    // Free device matrices
    cudaFree(Md); cudaFree(Nd); cudaFree(Pd);
}
```

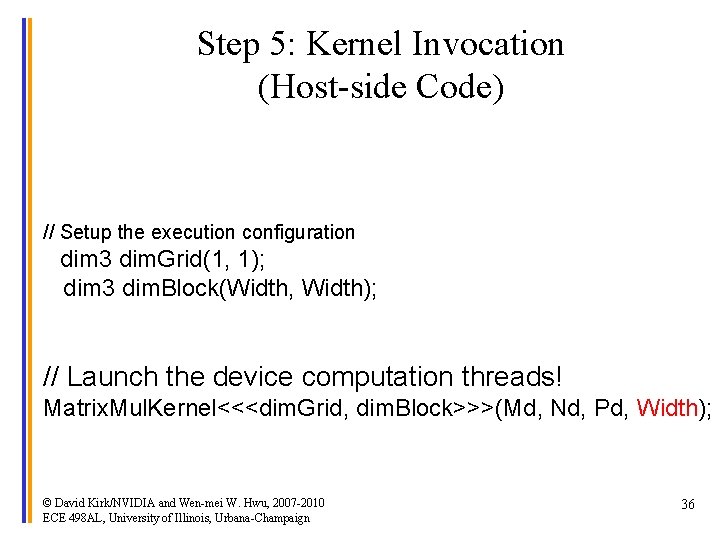

Step 4: Kernel Function

```cuda
// Matrix multiplication kernel – per thread code
__global__ void MatrixMulKernel(float* Md, float* Nd, float* Pd, int Width)
{
    // Pvalue is used to store the element of the matrix
    // that is computed by the thread
    float Pvalue = 0;
```

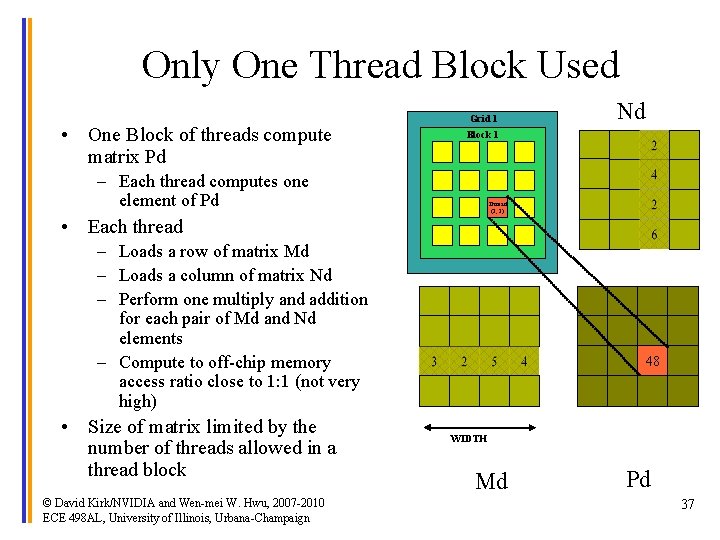

Step 4: Kernel Function (cont.)

```cuda
    for (int k = 0; k < Width; ++k) {
        float Melement = Md[threadIdx.y*Width+k];
        float Nelement = Nd[k*Width+threadIdx.x];
        Pvalue += Melement * Nelement;
    }

    Pd[threadIdx.y*Width+threadIdx.x] = Pvalue;
}
```
[Figure: thread (tx, ty) reads row ty of Md and column tx of Nd, stepping through k, and writes element (tx, ty) of Pd; all matrices are WIDTH x WIDTH]
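One way to check the per-thread code on the host is a sequential emulation of MatrixMulKernel launched as a single Width x Width block. In this sketch tx and ty stand in for threadIdx.x and threadIdx.y, and the two outer loops replace the hardware's parallel threads; the function names are hypothetical, and this is a testing aid, not how the GPU schedules threads.

```c
/* Sketch: host emulation of the single-block matrix multiplication kernel. */

/* The per-thread body from the slide, with threadIdx replaced by (tx, ty). */
static void matrix_mul_thread(const float *Md, const float *Nd, float *Pd,
                              int Width, int tx, int ty) {
    float Pvalue = 0;                          /* private to this "thread" */
    for (int k = 0; k < Width; ++k) {
        float Melement = Md[ty * Width + k];   /* row ty of Md    */
        float Nelement = Nd[k * Width + tx];   /* column tx of Nd */
        Pvalue += Melement * Nelement;
    }
    Pd[ty * Width + tx] = Pvalue;
}

/* Runs every (tx, ty) "thread" of a Width x Width block one after another. */
void emulate_matrix_mul_block(const float *Md, const float *Nd, float *Pd,
                              int Width) {
    for (int ty = 0; ty < Width; ++ty)
        for (int tx = 0; tx < Width; ++tx)
            matrix_mul_thread(Md, Nd, Pd, Width, tx, ty);
}
```

For M = [[1, 2], [3, 4]] and N = [[5, 6], [7, 8]] stored row-major, the emulation produces P = [[19, 22], [43, 50]].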

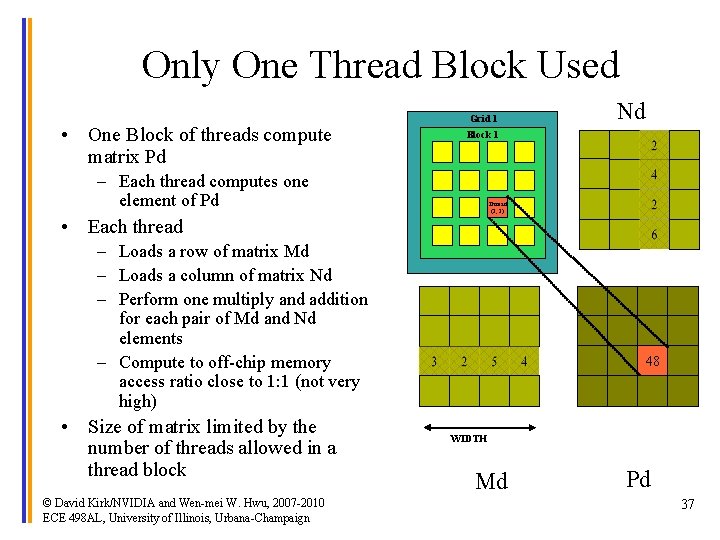

Step 5: Kernel Invocation (Host-side Code)

```cuda
// Setup the execution configuration
dim3 dimGrid(1, 1);
dim3 dimBlock(Width, Width);

// Launch the device computation threads!
MatrixMulKernel<<<dimGrid, dimBlock>>>(Md, Nd, Pd, Width);
```

Only One Thread Block Used
• One block of threads computes matrix Pd
  – Each thread computes one element of Pd
• Each thread
  – Loads a row of matrix Md
  – Loads a column of matrix Nd
  – Performs one multiply and one addition for each pair of Md and Nd elements
  – Compute to off-chip memory access ratio close to 1:1 (not very high)
• Size of matrix limited by the number of threads allowed in a thread block
[Figure: Grid 1 with a single Block 1; Thread (2, 2) computes one element of Pd from a row of Md and a column of Nd, all WIDTH x WIDTH]
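With dimBlock(Width, Width) and a single block, all Width * Width threads must fit in one block, so Width is capped at floor(sqrt(max threads per block)). The only hardware figures assumed in this sketch are 512 threads per block on G80-class (compute capability 1.x) GPUs and 1,024 on later ones; max_single_block_width() is a hypothetical helper.

```c
/* Sketch: largest Width such that Width * Width threads fit in one block. */
int max_single_block_width(int max_threads_per_block) {
    int w = 0;
    while ((w + 1) * (w + 1) <= max_threads_per_block)   /* integer search, no sqrt rounding */
        ++w;
    return w;
}
```

This gives Width ≤ 22 with 512 threads per block and Width ≤ 32 with 1,024. The 1:1 ratio follows the same kind of counting: each loop iteration loads two floats from global memory (one Md and one Nd element) and performs two floating-point operations (a multiply and an add).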

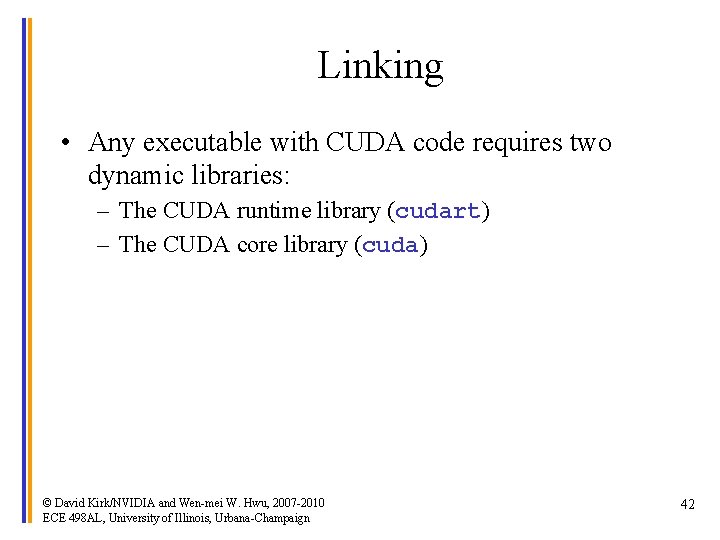

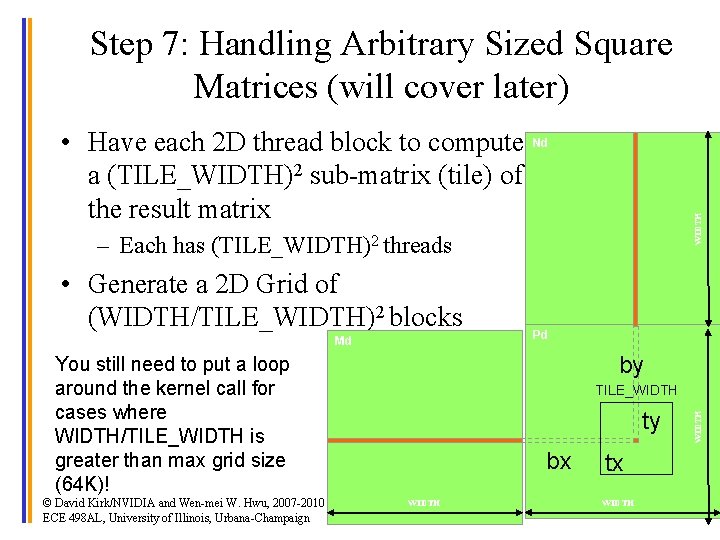

Step 7: Handling Arbitrary Sized Square Matrices (will cover later)
• Have each 2D thread block compute a (TILE_WIDTH)² sub-matrix (tile) of the result matrix
  – Each block has (TILE_WIDTH)² threads
• Generate a 2D grid of (WIDTH/TILE_WIDTH)² blocks
• You still need to put a loop around the kernel call for cases where WIDTH/TILE_WIDTH is greater than the maximum grid size (64K)!
[Figure: Md, Nd, and Pd of size WIDTH x WIDTH; Pd is divided into TILE_WIDTH x TILE_WIDTH tiles indexed by block (bx, by), with thread (tx, ty) inside each tile]
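A sequential C sketch of the tiled mapping from (block, thread) indices to elements of Pd, where bx, by, tx, ty stand in for blockIdx.x/.y and threadIdx.x/.y. It assumes WIDTH is a multiple of TILE_WIDTH, as the (WIDTH/TILE_WIDTH)² block count implies; the function name and the hit counter are illustrative only. The row/column formulas are an assumed tiling consistent with the figure, not code taken from the slides.

```c
/* Sketch: emulating a 2-D grid of TILE_WIDTH x TILE_WIDTH blocks.
   hits[] records how many times each element of the width x width result
   is assigned; the return value is the number of blocks in the grid. */
int emulate_tiled_grid(int width, int tile_width, int *hits) {
    int grid_dim = width / tile_width;              /* blocks per dimension */
    for (int by = 0; by < grid_dim; ++by)
        for (int bx = 0; bx < grid_dim; ++bx)
            for (int ty = 0; ty < tile_width; ++ty)
                for (int tx = 0; tx < tile_width; ++tx) {
                    int row = by * tile_width + ty;  /* row of Pd    */
                    int col = bx * tile_width + tx;  /* column of Pd */
                    hits[row * width + col] += 1;
                }
    return grid_dim * grid_dim;
}
```

For WIDTH = 8 and TILE_WIDTH = 2, the grid has 16 blocks and every element of Pd is assigned to exactly one thread.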

Some Useful Information on Tools

![Compiling a CUDA Program CC CUDA Application float 4 me gxgtid me x Compiling a CUDA Program C/C++ CUDA Application float 4 me = gx[gtid]; me. x](https://slidetodoc.com/presentation_image_h2/78fd8f1e956e15ae37ef62ee1f696007/image-40.jpg)

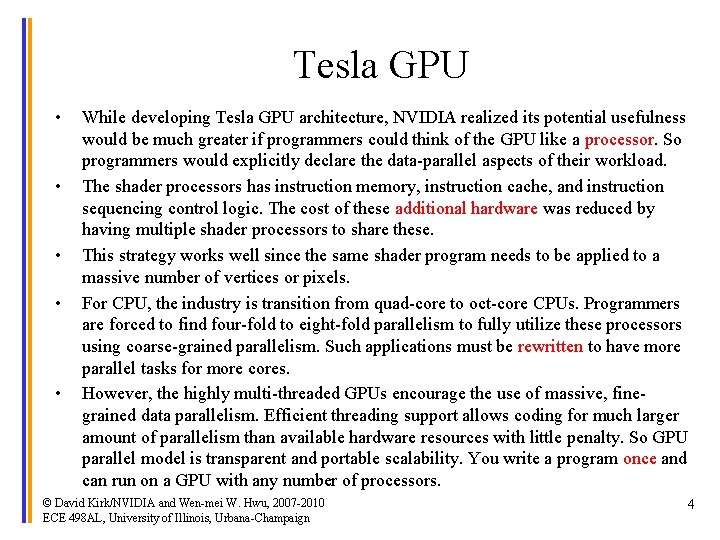

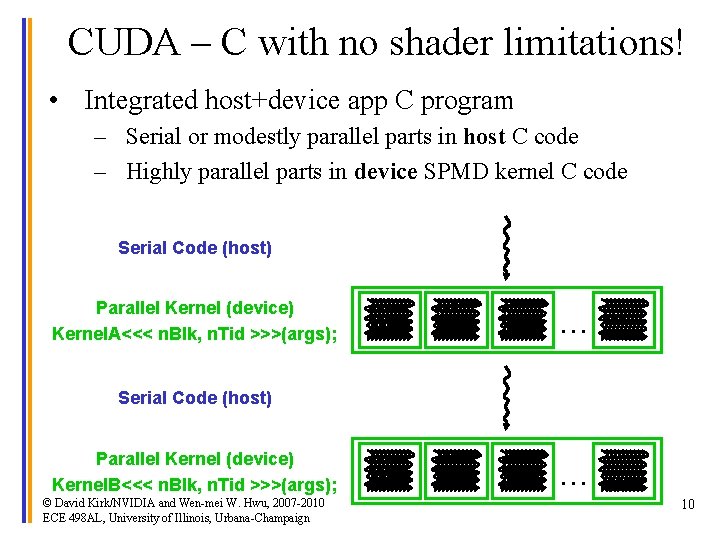

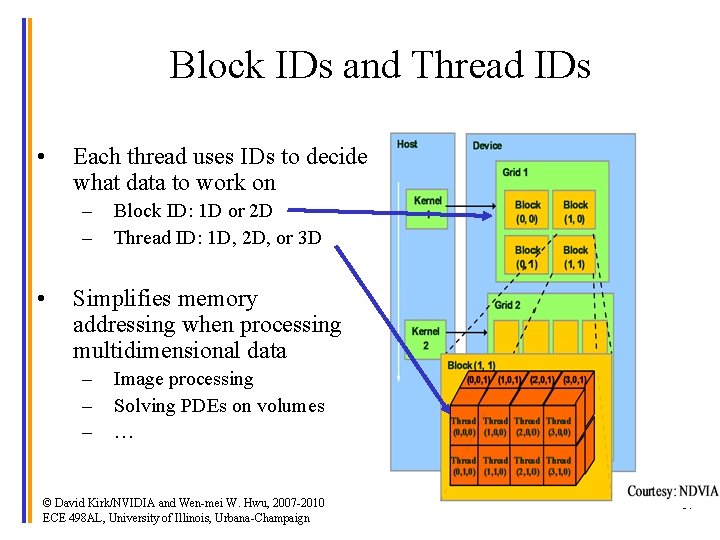

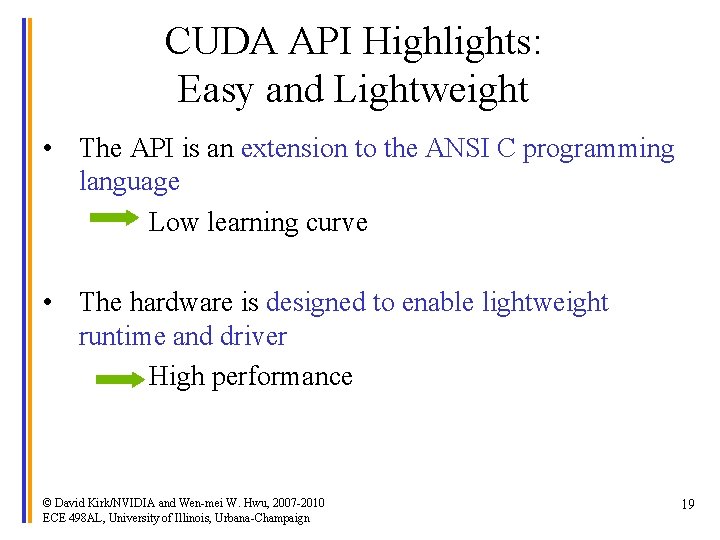

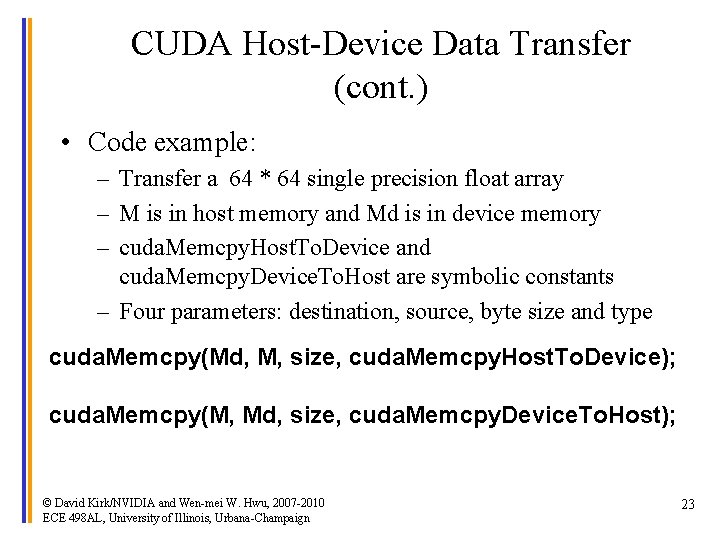

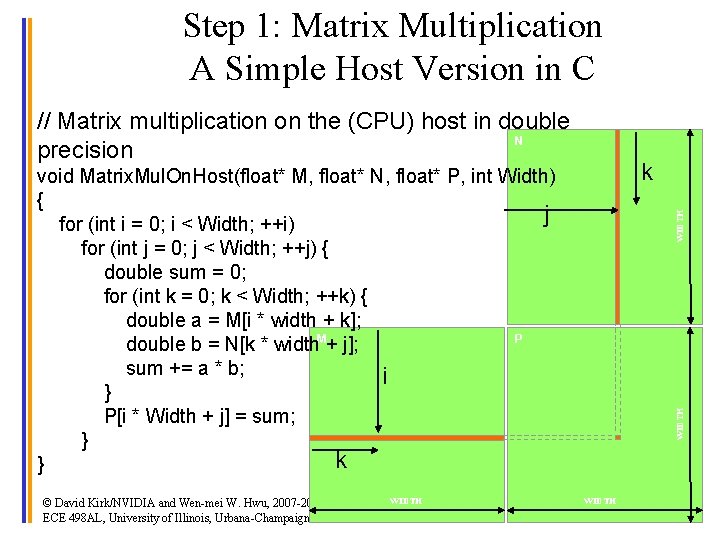

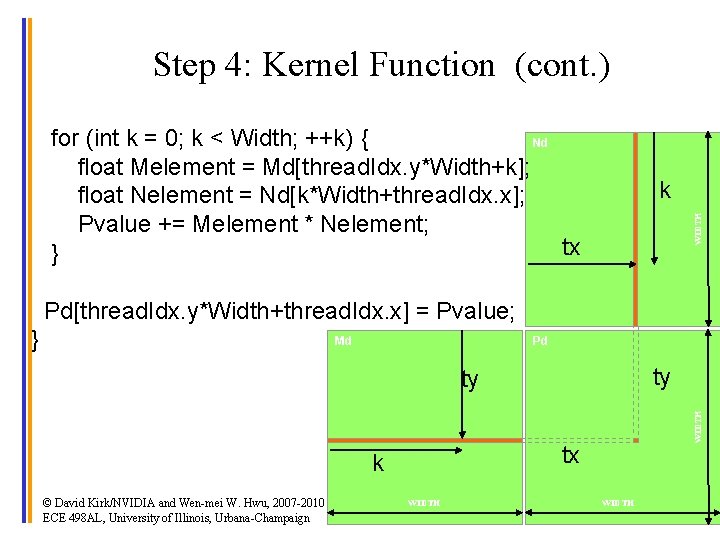

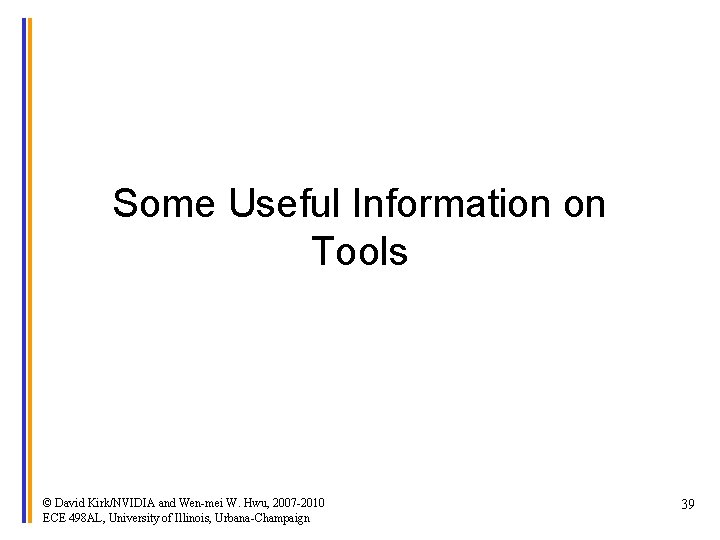

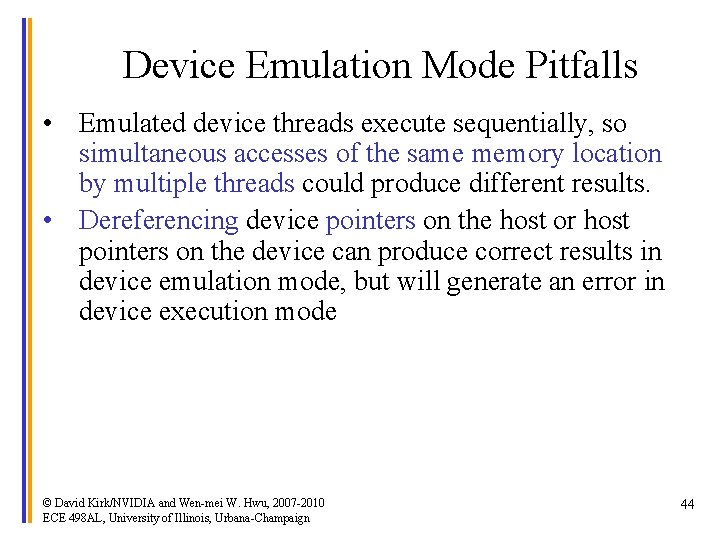

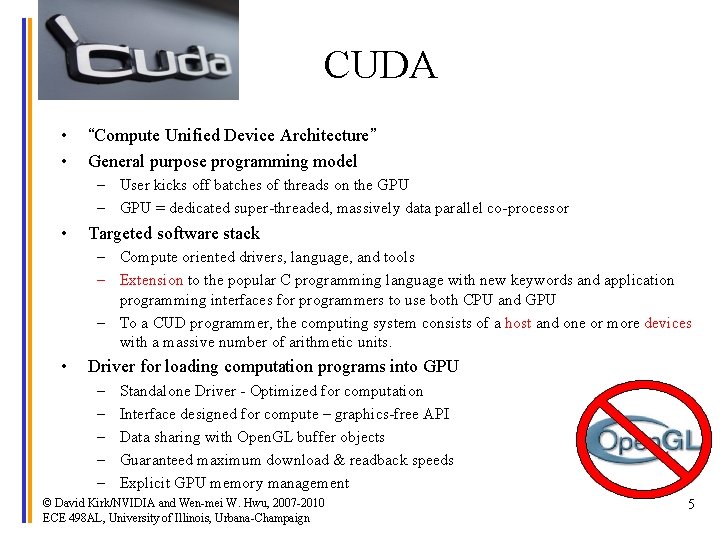

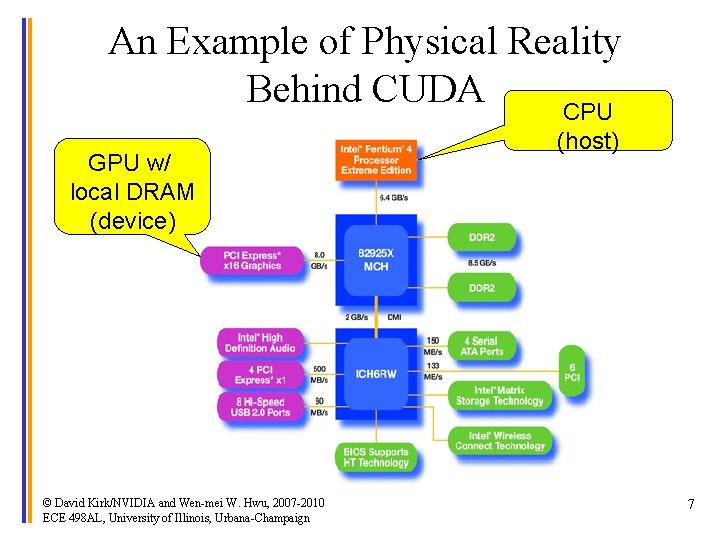

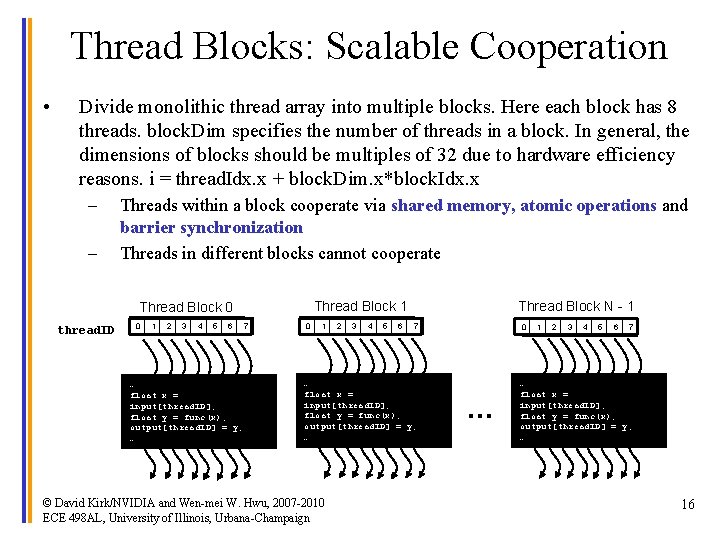

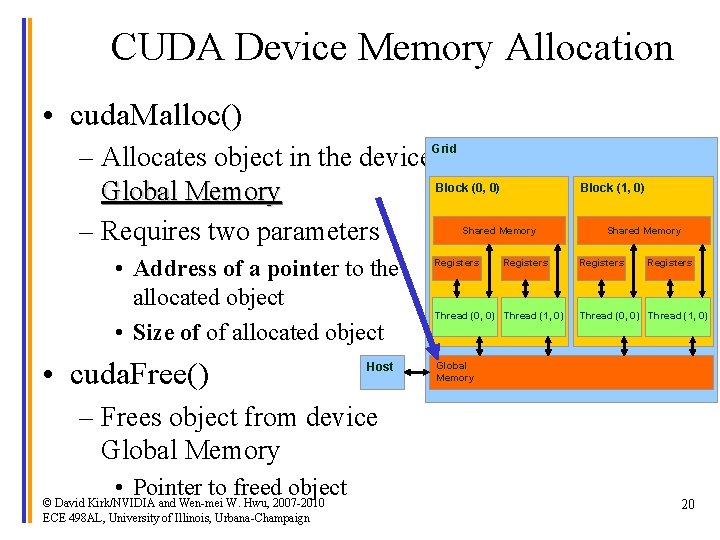

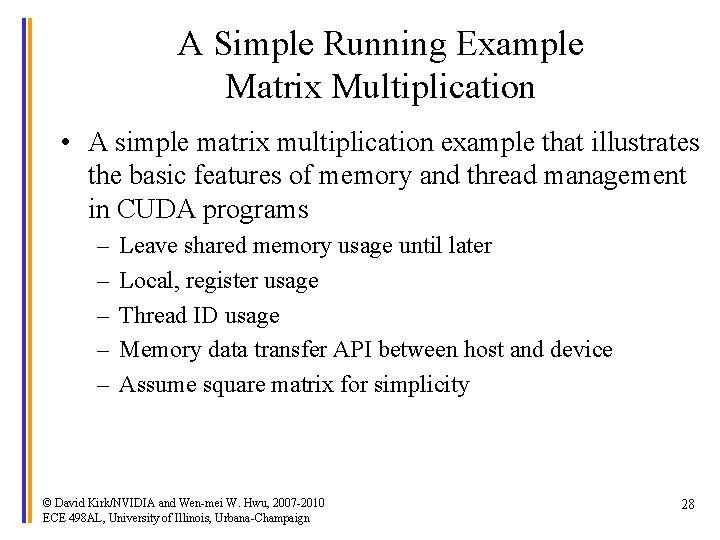

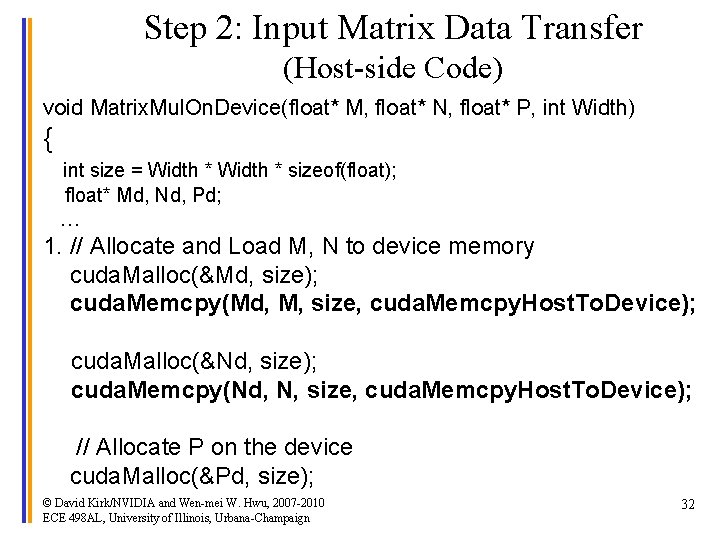

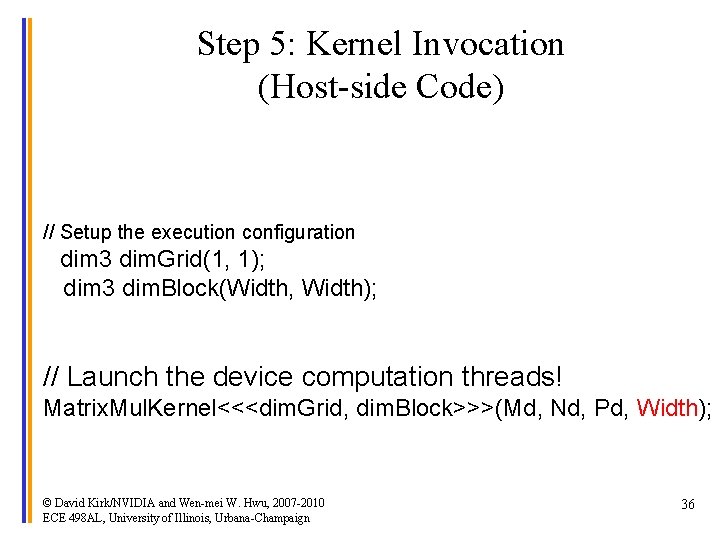

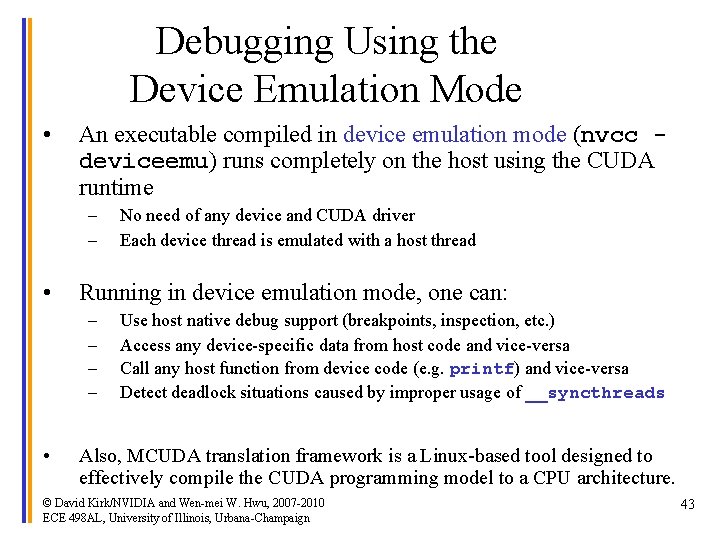

Compiling a CUDA Program
• Parallel Thread eXecution (PTX)
  – Virtual machine and ISA
  – Programming model
  – Execution resources and state
[Figure: NVCC splits a C/C++ CUDA application into CPU code and virtual PTX code; a PTX-to-target compiler then produces physical target code for a GPU such as G80]

CUDA source fragment from the figure:

```cuda
float4 me = gx[gtid];
me.x += me.y * me.z;
```

and the corresponding PTX:

```
…
ld.global.v4.f32  {$f1, $f3, $f5, $f7}, [$r9+0];
mad.f32           $f1, $f5, $f3, $f1;
```

Compilation
• Any source file containing CUDA language extensions must be compiled with NVCC
• NVCC is a compiler driver
  – Works by invoking all the necessary tools and compilers, like cudacc, g++, cl, ...
• NVCC outputs:
  – C code (host CPU code), which must then be compiled with the rest of the application using another tool
  – PTX: either object code directly, or PTX source that the driver compiles just-in-time at runtime

Linking
• Any executable with CUDA code requires two dynamic libraries:
  – The CUDA runtime library (cudart)
  – The CUDA core library (cuda)

Debugging Using the Device Emulation Mode
• An executable compiled in device emulation mode (nvcc -deviceemu) runs completely on the host using the CUDA runtime
  – No need for any device or CUDA driver
  – Each device thread is emulated with a host thread
• Running in device emulation mode, one can:
  – Use host native debug support (breakpoints, inspection, etc.)
  – Access any device-specific data from host code and vice versa
  – Call any host function from device code (e.g. printf) and vice versa
  – Detect deadlock situations caused by improper usage of __syncthreads
• Device emulation mode was removed in later CUDA toolkit releases
• Also, the MCUDA translation framework is a Linux-based tool designed to effectively compile the CUDA programming model to a CPU architecture

Device Emulation Mode Pitfalls
• Emulated device threads execute sequentially, so simultaneous accesses of the same memory location by multiple threads can produce different results in emulation than on the device
• Dereferencing device pointers on the host, or host pointers on the device, can produce correct results in device emulation mode but will generate an error in device execution mode
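A small C model of why sequential emulation can hide a race. Each "thread" performs the non-atomic read-modify-write counter = counter + 1. Both schedules below are hypothetical illustrations: emulation runs each thread to completion before the next starts, while on a real device (without atomic operations) many threads may read the old value before any of them writes; the worst case is modeled here.

```c
/* Sketch: two schedules of the same non-atomic increment. */
#define MAX_SKETCH_THREADS 256   /* bound for this sketch only */

/* Emulation-style schedule: thread t finishes before thread t + 1 starts. */
int increment_sequential(int num_threads) {
    int counter = 0;
    for (int t = 0; t < num_threads; ++t) {
        int old = counter;   /* read  */
        counter = old + 1;   /* write */
    }
    return counter;
}

/* Fully interleaved schedule: every thread reads before any thread writes. */
int increment_interleaved(int num_threads) {
    int counter = 0;
    int seen[MAX_SKETCH_THREADS];
    if (num_threads > MAX_SKETCH_THREADS)
        num_threads = MAX_SKETCH_THREADS;
    for (int t = 0; t < num_threads; ++t)
        seen[t] = counter;          /* every thread reads the old value (0) */
    for (int t = 0; t < num_threads; ++t)
        counter = seen[t] + 1;      /* every thread writes 0 + 1 */
    return counter;
}
```

Emulation reports 100 after 100 increments, while the interleaved schedule loses all but one update, so a program that looks correct in emulation can still fail on the device.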

Floating Point
• Results of floating-point computations may differ slightly between host and device because of:
  – Different compiler outputs and instruction sets
  – Use of extended precision for intermediate results
• There are various options to force strict single precision on the host
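A small C illustration of the second cause: the same sum computed with a float accumulator and with a double accumulator (a wider intermediate) gives different results. The term 0.1f and the count of one million are arbitrary choices for this sketch, and it assumes a host that evaluates float arithmetic in float (as typical x86-64 and ARM64 compilers do).

```c
/* Sketch: identical float terms, different accumulator precision. */
float sum_in_float(int n) {
    float s = 0.0f;
    for (int i = 0; i < n; ++i)
        s += 0.1f;     /* rounded to float after every addition */
    return s;
}

double sum_in_double(int n) {
    double s = 0.0;
    for (int i = 0; i < n; ++i)
        s += 0.1f;     /* same float term, wider accumulator */
    return s;
}
```

After a million additions the float accumulator drifts visibly away from the double one (by far more than 1), which is why host and device outputs should be compared with a tolerance rather than bit for bit.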