POSTECH Dialog-Based Computer-Assisted Language Learning System
Intelligent Software Lab., POSTECH
Prof. Gary Geunbae Lee

Contents
• Introduction
• Methods
  • DB-CALL System: Example-based Dialog Modeling, Feedback Generation, Translation Assistance, Comprehension Assistance
  • Language Learner Simulation: User Simulation, Grammar Error Simulation
• Discussion

RESEARCH BACKGROUND
• Globalization makes English more important as a world language
• Native-speaker tutors are extremely expensive
• Most language learning software is dedicated to pronunciation practice
• Dialog-Based Computer-Assisted Language Learning (DB-CALL) will be an excellent solution

ISSUES
• A DB-CALL system should be able to understand students’ poor and non-native expressions
• A DB-CALL system should have high domain scalability to support various practical scenarios
• A DB-CALL system should provide educational functions that help students improve their linguistic ability

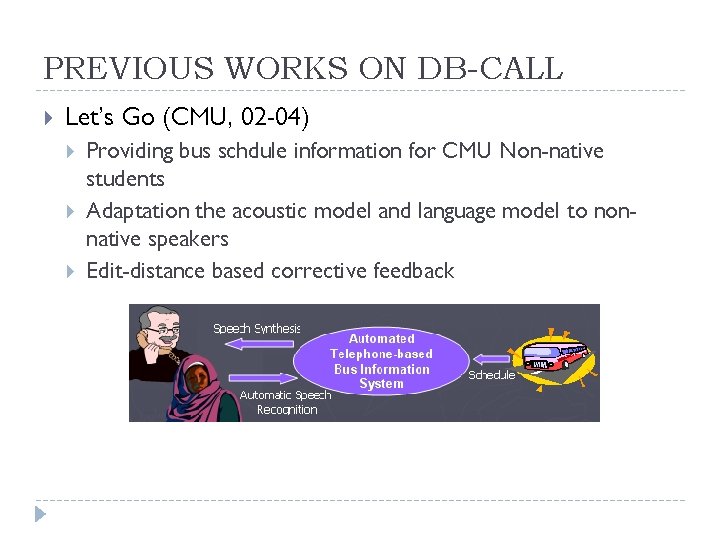

PREVIOUS WORKS ON DB-CALL
Let’s Go (CMU, 02-04)
• Provides bus schedule information for non-native students at CMU
• Adapts the acoustic model and language model to non-native speakers
• Edit-distance-based corrective feedback

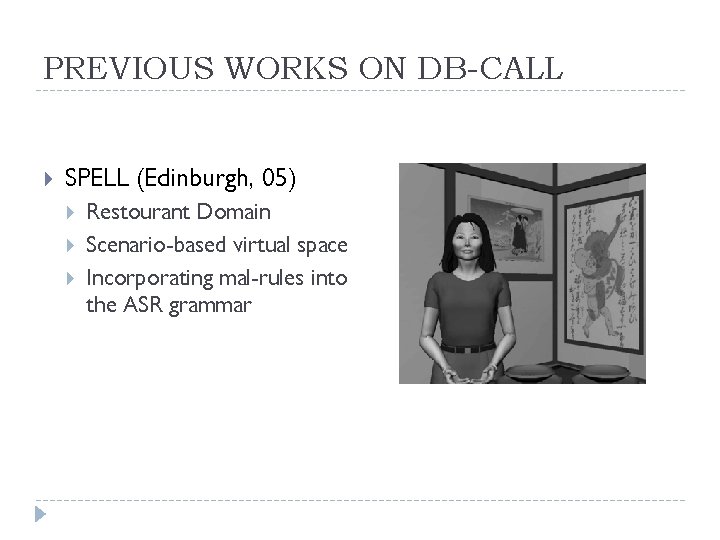

PREVIOUS WORKS ON DB-CALL
SPELL (Edinburgh, 05)
• Restaurant domain
• Scenario-based virtual space
• Incorporates mal-rules into the ASR grammar

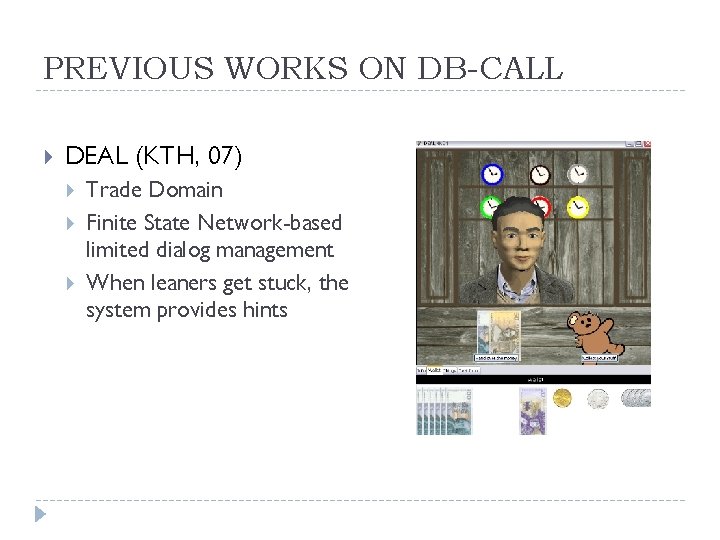

PREVIOUS WORKS ON DB-CALL
DEAL (KTH, 07)
• Trade domain
• Limited dialog management based on a finite state network
• When learners get stuck, the system provides hints

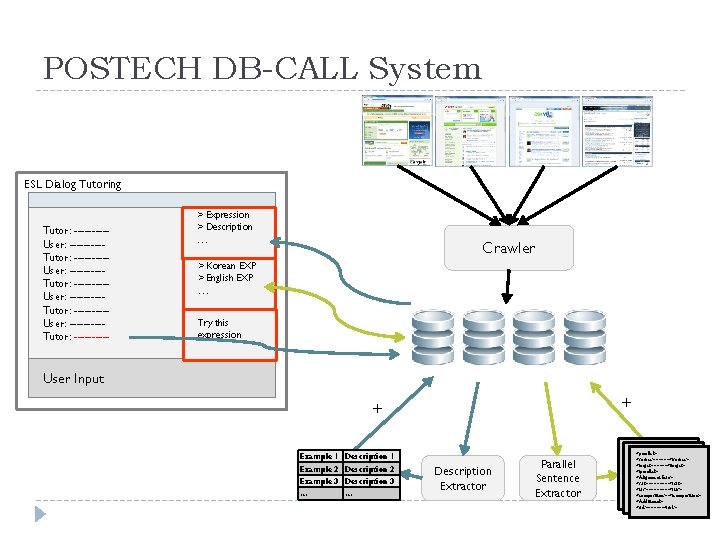

POSTECH DB-CALL System
[Figure: system overview. An ESL dialog tutoring window (Tutor/User turns) with user input and a “Try this expression” suggestion; expression/description and Korean/English expression panels; a crawler feeding a Description Extractor (example and description pairs) and a Parallel Sentence Extractor, whose output format includes <parallel> (<source>, <target>), alignment info (<s2t>, <t2s>, <composition>), and additional info (<url>).]

DB-CALL System

1. Example-based Dialog Modeling

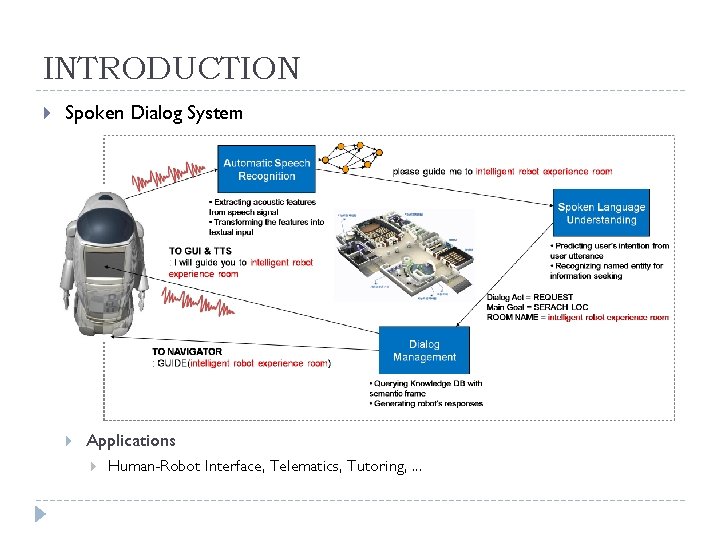

INTRODUCTION
Spoken Dialog System applications: Human-Robot Interface, Telematics, Tutoring, ...

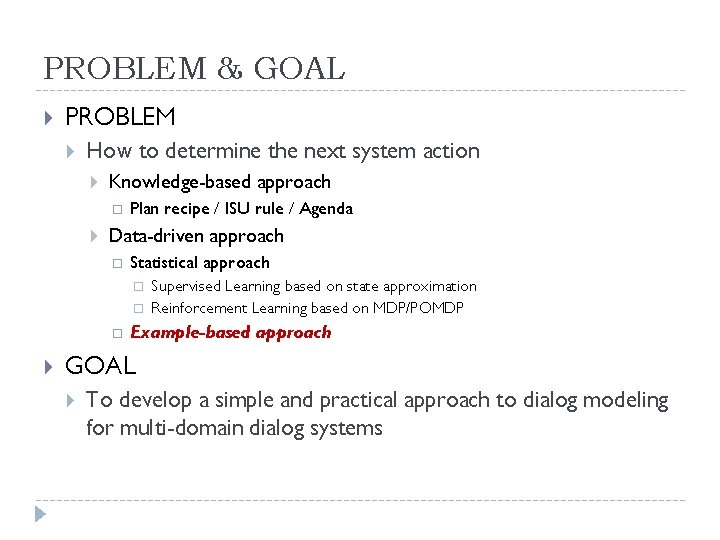

PROBLEM & GOAL
PROBLEM: How to determine the next system action
• Knowledge-based approach: plan recipe / ISU rule / agenda
• Data-driven approach
  • Statistical approach: supervised learning based on state approximation; reinforcement learning based on MDP/POMDP
  • Example-based approach
GOAL: To develop a simple and practical approach to dialog modeling for multi-domain dialog systems

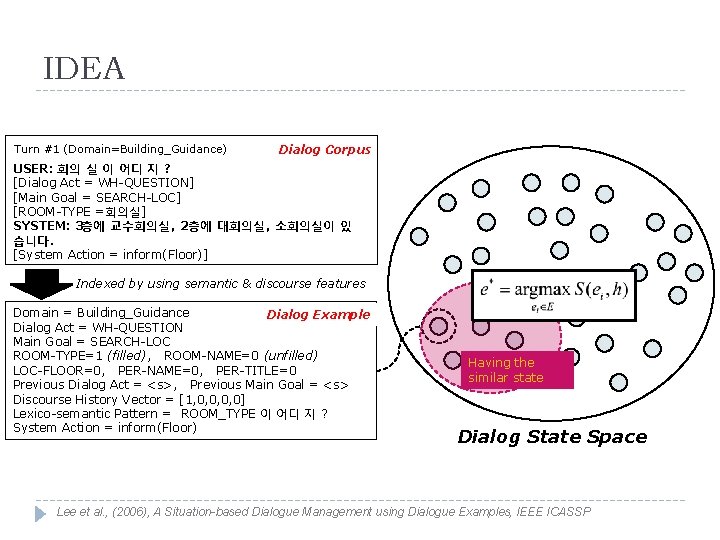

IDEA
Dialog corpus, Turn #1 (Domain = Building_Guidance):
USER: 회의 실 이 어디 지 ? ("Where is the meeting room?") [Dialog Act = WH-QUESTION] [Main Goal = SEARCH-LOC] [ROOM-TYPE = 회의실 (meeting room)]
SYSTEM: 3층에 교수회의실, 2층에 대회의실, 소회의실이 있습니다. ("The faculty meeting room is on the 3rd floor; the large and small meeting rooms are on the 2nd floor.") [System Action = inform(Floor)]

The turn is indexed as a dialog example using semantic and discourse features:
• Domain = Building_Guidance
• Dialog Act = WH-QUESTION
• Main Goal = SEARCH-LOC
• ROOM-TYPE = 1 (filled), ROOM-NAME = 0 (unfilled), LOC-FLOOR = 0, PER-NAME = 0, PER-TITLE = 0
• Previous Dialog Act = <s>, Previous Main Goal = <s>
• Discourse History Vector = [1, 0, 0]
• Lexico-semantic Pattern = ROOM_TYPE 이 어디 지 ? ("Where is ROOM_TYPE?")
• System Action = inform(Floor)

Examples having a similar state in the dialog state space are retrieved.
Lee et al. (2006), A Situation-based Dialogue Management using Dialogue Examples, IEEE ICASSP

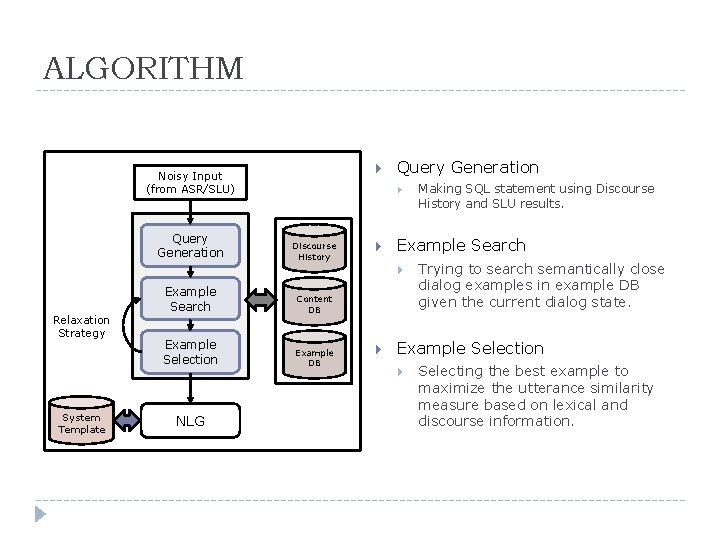

ALGORITHM
[Figure: EBDM pipeline. Components: noisy input (from ASR/SLU), Query Generation, Example Search with a Relaxation Strategy, Example Selection, NLG. Resources: Discourse History, Example DB, Content DB, System Template.]
• Query Generation: making an SQL statement using the discourse history and SLU results.
• Example Search: trying to search for semantically close dialog examples in the example DB given the current dialog state.
• Example Selection: selecting the best example to maximize the utterance similarity measure based on lexical and discourse information.
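The three steps on this slide can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' implementation: the in-memory list stands in for the SQL-backed example DB, and the `RELAXATION_ORDER`, the toy `similarity` function, the second example record, and its `inform(Phone)` action are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DialogExample:
    dialog_act: str
    main_goal: str
    slots: tuple        # slot-filling flags, e.g. (ROOM-TYPE, ROOM-NAME)
    history: tuple      # discourse history vector
    pattern: str        # lexico-semantic pattern
    system_action: str


# Hypothetical relaxation order: each level drops more constraints.
RELAXATION_ORDER = [
    ("dialog_act", "main_goal", "slots"),  # level 0: exact match
    ("dialog_act", "main_goal"),           # level 1: ignore slot flags
    ("main_goal",),                        # level 2: main goal only
]


def search(examples, state):
    """Example search: relax constraints until at least one example matches."""
    for level, keys in enumerate(RELAXATION_ORDER):
        hits = [e for e in examples
                if all(getattr(e, k) == state[k] for k in keys)]
        if hits:
            return hits, level
    return [], None


def similarity(example, state, weight=0.5):
    """Toy utterance similarity: token overlap plus discourse-history agreement."""
    a, b = set(example.pattern.split()), set(state["pattern"].split())
    lexical = len(a & b) / len(a | b) if a | b else 0.0
    agree = sum(x == y for x, y in zip(example.history, state["history"]))
    discourse = agree / len(state["history"]) if state["history"] else 0.0
    return weight * lexical + (1 - weight) * discourse


def select_action(examples, state):
    """Example selection: pick the most similar candidate and return its action."""
    hits, level = search(examples, state)
    if not hits:
        return None, None
    best = max(hits, key=lambda e: similarity(e, state))
    return best.system_action, level


# Illustrative data; the second record is invented for the sketch.
EXAMPLE_DB = [
    DialogExample("WH-QUESTION", "SEARCH-LOC", (1, 0), (1, 0, 0),
                  "where is ROOM_TYPE", "inform(Floor)"),
    DialogExample("WH-QUESTION", "SEARCH-PHONE", (0, 1), (1, 1, 0),
                  "what is the phone number of ROOM_NAME", "inform(Phone)"),
]
STATE = {"dialog_act": "WH-QUESTION", "main_goal": "SEARCH-LOC",
         "slots": (1, 0), "history": (1, 0, 0), "pattern": "where is ROOM_TYPE"}
```

Calling `select_action(EXAMPLE_DB, STATE)` returns the system action together with the relaxation level at which the match was found, which roughly corresponds to the exact-match / partial-match / no-example distinction reported in the results.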

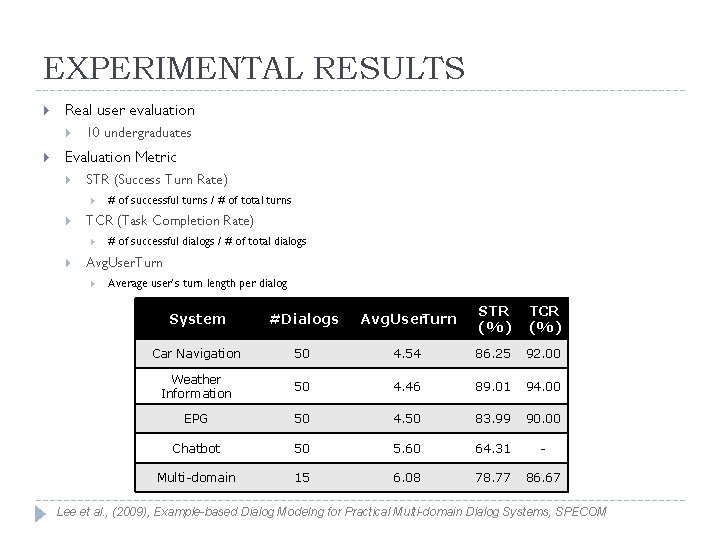

EXPERIMENTAL RESULTS
Real user evaluation with 10 undergraduates
Evaluation metrics:
• STR (Success Turn Rate) = # of successful turns / # of total turns
• TCR (Task Completion Rate) = # of successful dialogs / # of total dialogs
• AvgUserTurn = average number of user turns per dialog

| System | #Dialogs | AvgUserTurn | STR (%) | TCR (%) |
|---|---|---|---|---|
| Car Navigation | 50 | 4.54 | 86.25 | 92.00 |
| Weather Information | 50 | 4.46 | 89.01 | 94.00 |
| EPG | 50 | 4.50 | 83.99 | 90.00 |
| Chatbot | 50 | 5.60 | 64.31 | - |
| Multi-domain | 15 | 6.08 | 78.77 | 86.67 |

Lee et al. (2009), Example-based Dialog Modeling for Practical Multi-domain Dialog Systems, SPECOM

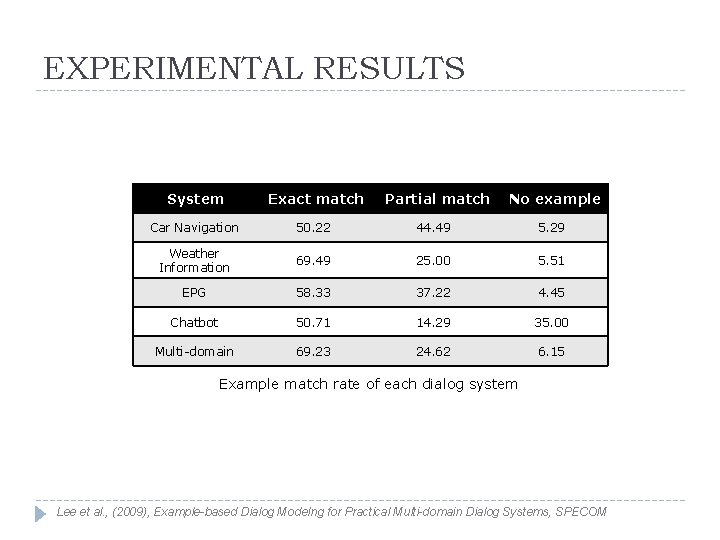

EXPERIMENTAL RESULTS
Example match rate (%) of each dialog system:

| System | Exact match | Partial match | No example |
|---|---|---|---|
| Car Navigation | 50.22 | 44.49 | 5.29 |
| Weather Information | 69.49 | 25.00 | 5.51 |
| EPG | 58.33 | 37.22 | 4.45 |
| Chatbot | 50.71 | 14.29 | 35.00 |
| Multi-domain | 69.23 | 24.62 | 6.15 |

Lee et al. (2009), Example-based Dialog Modeling for Practical Multi-domain Dialog Systems, SPECOM

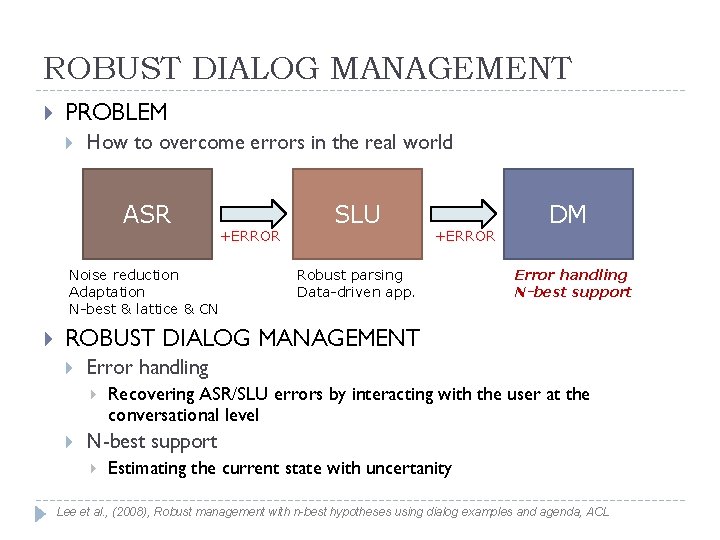

ROBUST DIALOG MANAGEMENT
PROBLEM: How to overcome errors in the real world
[Figure: errors are introduced at each stage of the pipeline. ASR (+error): noise reduction, adaptation, N-best / lattice / CN. SLU (+error): robust parsing, data-driven approach. DM: error handling, N-best support.]
ROBUST DIALOG MANAGEMENT
• Error handling: recovering from ASR/SLU errors by interacting with the user at the conversational level
• N-best support: estimating the current state under uncertainty
Lee et al. (2008), Robust dialog management with n-best hypotheses using dialog examples and agenda, ACL

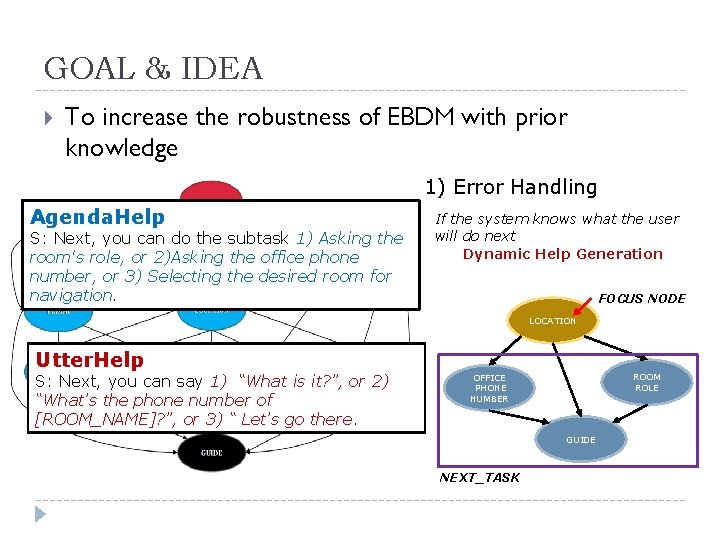

GOAL & IDEA
To increase the robustness of EBDM with prior knowledge
1) Error Handling: dynamic help generation, if the system knows what the user will do next
• AgendaHelp S: Next, you can do the subtask 1) Asking the room's role, or 2) Asking the office phone number, or 3) Selecting the desired room for navigation.
• UtterHelp S: Next, you can say 1) “What is it?”, or 2) “What’s the phone number of [ROOM_NAME]?”, or 3) “Let’s go there.”
[Figure: agenda graph with focus node LOCATION and NEXT_TASK nodes ROOM ROLE, OFFICE PHONE NUMBER, and GUIDE.]

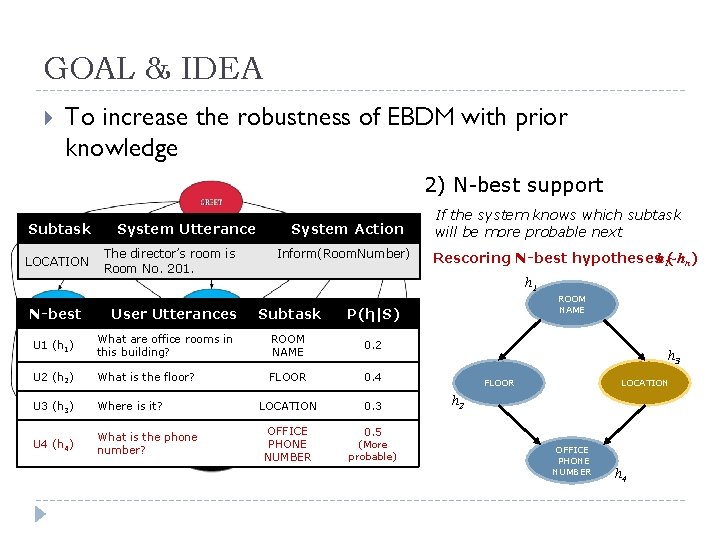

GOAL & IDEA
To increase the robustness of EBDM with prior knowledge
2) N-best support: rescoring the N-best hypotheses (h1 ~ hn), if the system knows which subtask is more probable next
Current subtask: LOCATION. System utterance: “The director’s room is Room No. 201.” System action: Inform(RoomNumber)

| N-best user utterance | Subtask | P(hi|S) |
|---|---|---|
| U1 (h1): What are office rooms in this building? | ROOM NAME | 0.2 |
| U2 (h2): What is the floor? | FLOOR | 0.4 |
| U3 (h3): Where is it? | LOCATION | 0.3 |
| U4 (h4): What is the phone number? | OFFICE PHONE NUMBER | 0.5 (more probable) |

[Figure: agenda graph linking h1 to ROOM NAME, h2 to FLOOR, h3 to LOCATION, and h4 to OFFICE PHONE NUMBER.]
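A minimal sketch of how such rescoring could work is given below. The subtask probabilities are the P(hi|S) values from the slide; the recognizer confidences and the linear interpolation with `weight` are assumptions made for illustration, since the slide does not state how the two scores are combined.

```python
# P(h_i | S) per subtask, taken from the slide.
SUBTASK_PROB = {
    "ROOM NAME": 0.2,
    "FLOOR": 0.4,
    "LOCATION": 0.3,
    "OFFICE PHONE NUMBER": 0.5,
}

# (utterance, subtask, recognizer confidence); confidences are hypothetical.
HYPOTHESES = [
    ("What are office rooms in this building?", "ROOM NAME", 0.40),
    ("What is the floor?", "FLOOR", 0.30),
    ("Where is it?", "LOCATION", 0.20),
    ("What is the phone number?", "OFFICE PHONE NUMBER", 0.35),
]


def rescore(hypotheses, subtask_prob, weight=0.5):
    """Rank n-best hypotheses by interpolating confidence with subtask probability."""
    scored = []
    for text, subtask, confidence in hypotheses:
        prior = subtask_prob.get(subtask, 0.0)
        scored.append((weight * confidence + (1 - weight) * prior, text, subtask))
    return sorted(scored, reverse=True)


ranked = rescore(HYPOTHESES, SUBTASK_PROB)
best_score, best_text, best_subtask = ranked[0]
```

With these illustrative numbers the top-ranked hypothesis is not the one with the highest recognizer confidence, which is the point of using the agenda as prior knowledge.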

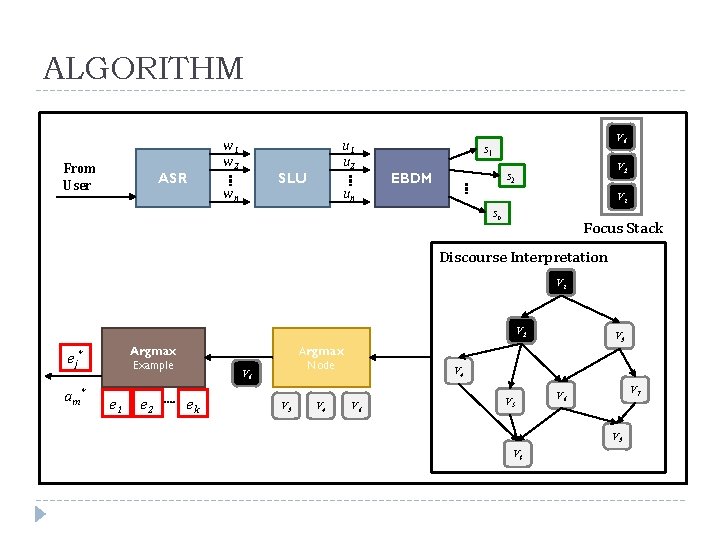

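ALGORITHM
[Figure: processing flow from the user through ASR (word hypotheses w1 … wn), SLU (u1 … un), and EBDM (states s1 … sn). Discourse interpretation over the focus stack and the agenda graph nodes (V1 … V9) selects the argmax node; the argmax example e_j* and action a_m* are chosen among examples e1 … ek.]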

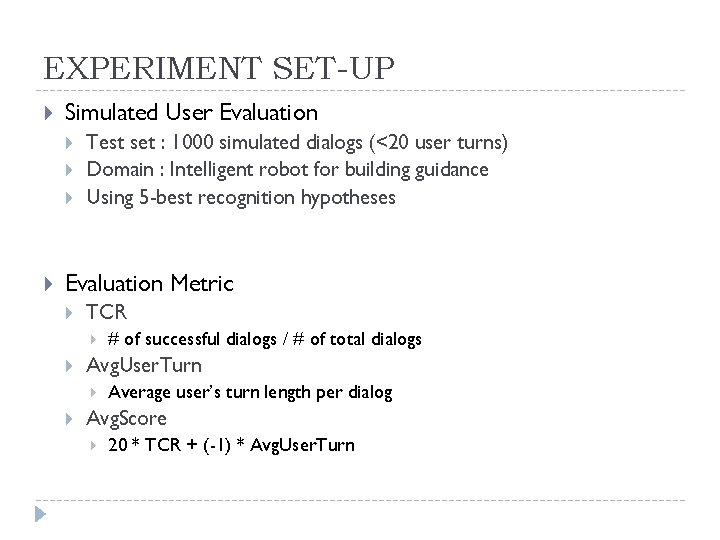

EXPERIMENT SET-UP
Simulated user evaluation
• Test set: 1000 simulated dialogs (<20 user turns)
• Domain: intelligent robot for building guidance
• Using 5-best recognition hypotheses
Evaluation metrics
• TCR = # of successful dialogs / # of total dialogs
• AvgUserTurn = average number of user turns per dialog
• AvgScore = 20 * TCR + (-1) * AvgUserTurn
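The three metrics can be computed as in the following sketch. It assumes TCR is a fraction in [0, 1] rather than a percentage, which seems consistent with the score range plotted in the results but is not stated on the slide; the function name and input format are illustrative.

```python
def evaluate(dialogs):
    """Compute (TCR, AvgUserTurn, AvgScore) from (succeeded, user_turns) pairs."""
    tcr = sum(1 for succeeded, _ in dialogs if succeeded) / len(dialogs)
    avg_user_turn = sum(turns for _, turns in dialogs) / len(dialogs)
    avg_score = 20 * tcr + (-1) * avg_user_turn
    return tcr, avg_user_turn, avg_score


# Four hypothetical simulated dialogs: three succeed, one fails after 10 turns.
tcr, avg_user_turn, avg_score = evaluate(
    [(True, 4), (True, 6), (False, 10), (True, 4)]
)
```

The score rewards task completion and penalizes long dialogs, so a system that succeeds quickly scores highest.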

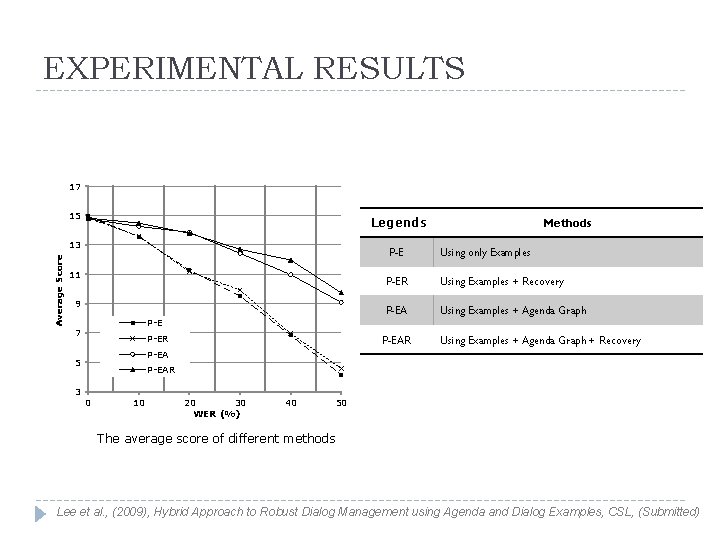

EXPERIMENTAL RESULTS
[Figure: average score (y-axis, 3 to 17) versus WER (%) (x-axis, 0 to 50) for four methods. Legend: P-E = using only examples; P-ER = examples + recovery; P-EA = examples + agenda graph; P-EAR = examples + agenda graph + recovery.]
The average score of different methods
Lee et al. (2009), Hybrid Approach to Robust Dialog Management using Agenda and Dialog Examples, CSL (submitted)

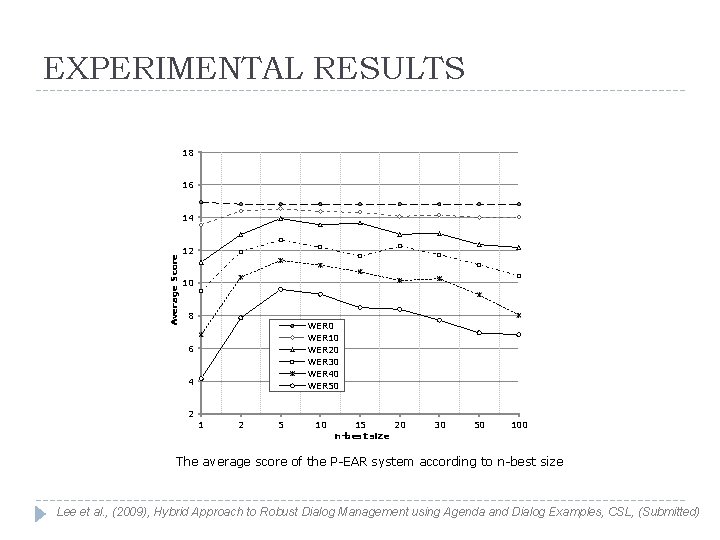

EXPERIMENTAL RESULTS
[Figure: average score (y-axis, 2 to 18) versus n-best size (x-axis: 1, 2, 5, 10, 15, 20, 30, 50, 100), with one curve per WER setting (WER 0, 10, 20, 30, 40, 50).]
The average score of the P-EAR system according to n-best size
Lee et al. (2009), Hybrid Approach to Robust Dialog Management using Agenda and Dialog Examples, CSL (submitted)

DEMO VIDEO PC demo

DEMO VIDEO Robot demo

2. Feedback Generation

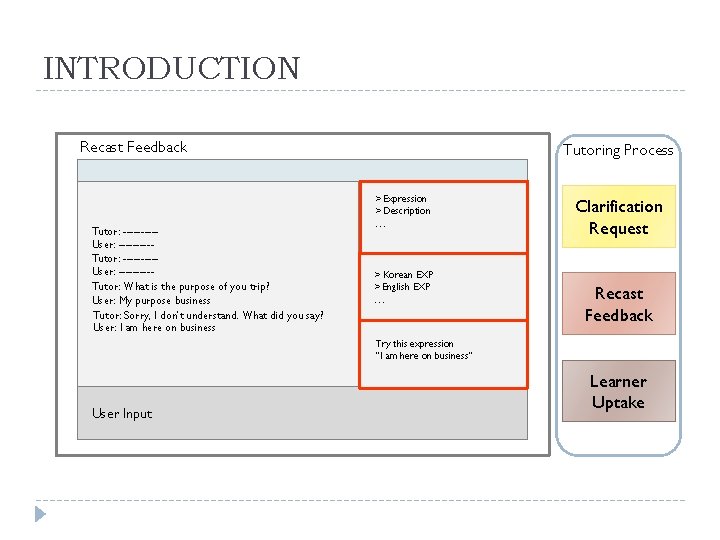

INTRODUCTION: Recast Feedback
Tutoring process:
Tutor: What is the purpose of your trip?
User: My purpose business
Tutor: Sorry, I don’t understand. What did you say? (Clarification Request)
Recast Feedback: Try this expression “I am here on business”
User: I am here on business (Learner Uptake)
[Figure: tutoring interface with the dialog window, user input, and panels for expressions, descriptions, and Korean/English expressions.]

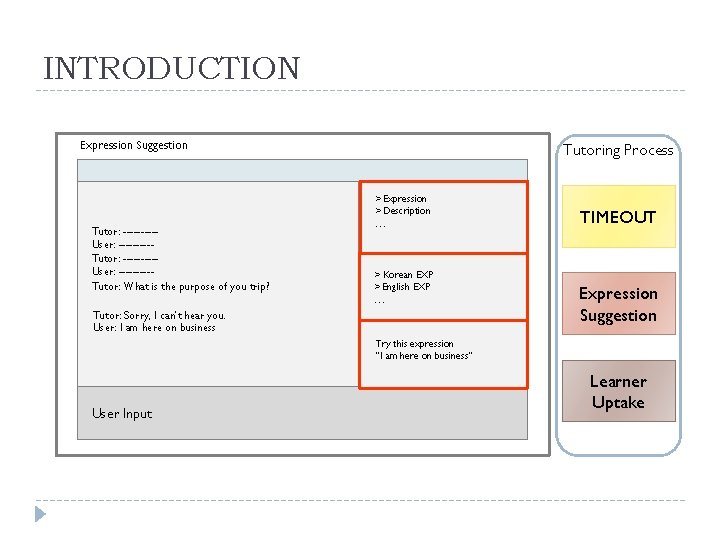

INTRODUCTION: Expression Suggestion
Tutoring process:
Tutor: What is the purpose of your trip?
(TIMEOUT)
Tutor: Sorry, I can’t hear you.
Expression Suggestion: Try this expression “I am here on business”
User: I am here on business (Learner Uptake)
[Figure: tutoring interface with the dialog window, user input, and panels for expressions, descriptions, and Korean/English expressions.]

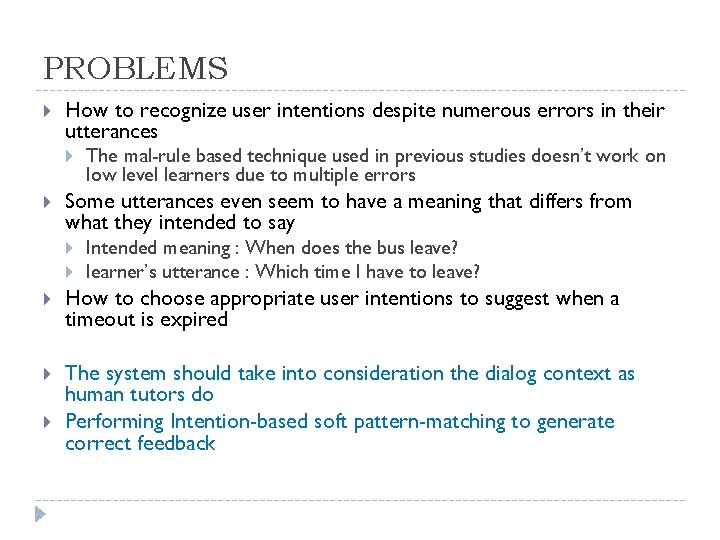

PROBLEMS
How to recognize user intentions despite numerous errors in their utterances
• The mal-rule-based technique used in previous studies does not work for low-level learners because their utterances contain multiple errors
• Some utterances even seem to have a meaning that differs from what the learner intended to say
  • Intended meaning: When does the bus leave?
  • Learner’s utterance: Which time I have to leave?
How to choose appropriate user intentions to suggest when a timeout expires
• The system should take the dialog context into consideration, as human tutors do
Approach: performing intention-based soft pattern matching to generate correct feedback

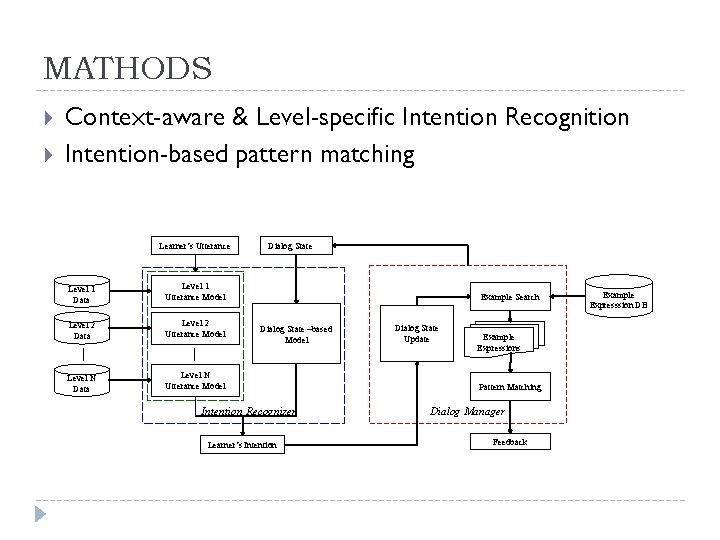

METHODS
Context-aware & level-specific intention recognition; intention-based pattern matching
[Figure: components. Learner’s utterance; level-specific utterance models (Level 1 … Level N, each built from that level’s data); a dialog-state-based model built by example search over the dialog state; an intention recognizer that outputs the learner’s intention and triggers a dialog state update; a dialog manager that pattern-matches against example expressions from the example expression DB to produce feedback.]

EXPERIMENT SET-UP
Primitive data set
• Immigration domain
• 192 dialogs, 3517 utterances (18.32 utt/dialog)
Annotation
• Manually annotated each utterance with the speaker’s intention and component slot-values
• Automatically annotated each utterance with the discourse information

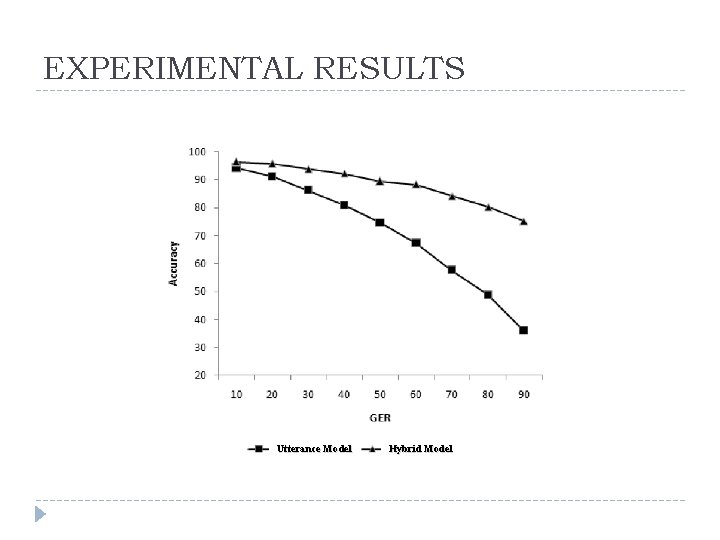

EXPERIMENTAL RESULTS Utterance Model Hybrid Model

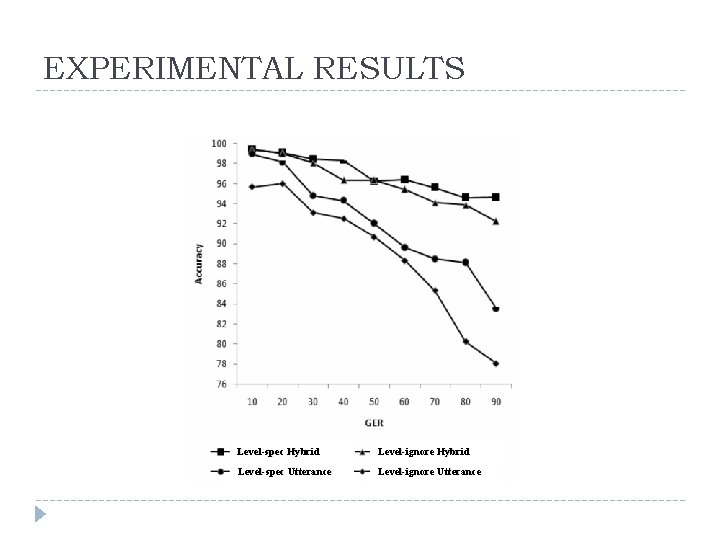

EXPERIMENTAL RESULTS Level-spec Hybrid Level-ignore Hybrid Level-spec Utterance Level-ignore Utterance

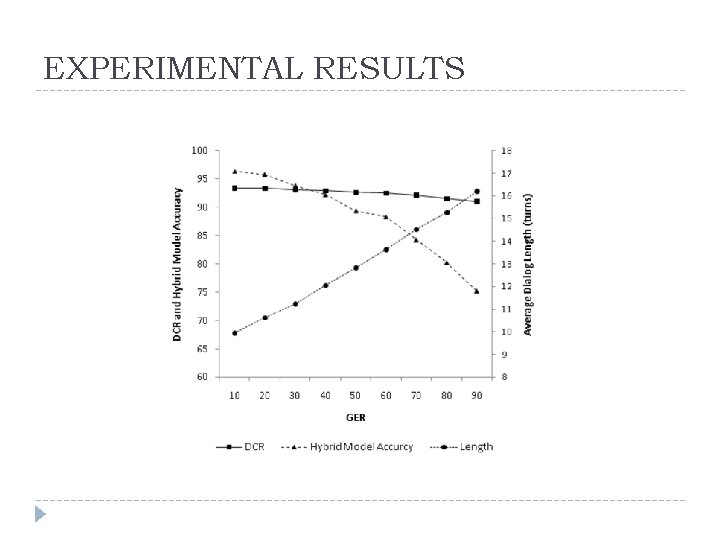

EXPERIMENTAL RESULTS

Demo: POSTECH DB-CALL initial version 2008

3. Translation Assistance

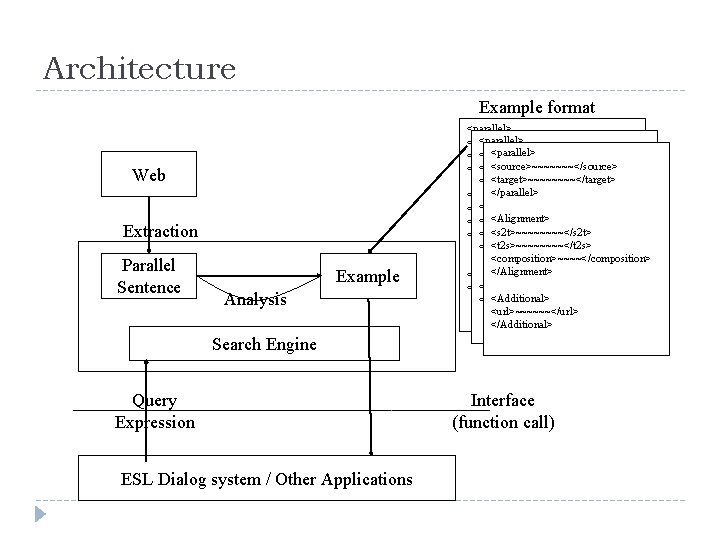

Architecture
[Figure: components. Web Extraction, Parallel Sentence Example Analysis, Search Engine; a query expression arrives from the ESL dialog system or other applications through an interface (function call).]
Example format: <parallel> <source>~~~~</source> <target>~~~~</target> </parallel> <Alignment> <s2t>~~~~</s2t> <t2s>~~~~</t2s> <composition>~~~~</composition> </Alignment> <Additional> <url>~~~~</url> </Additional>

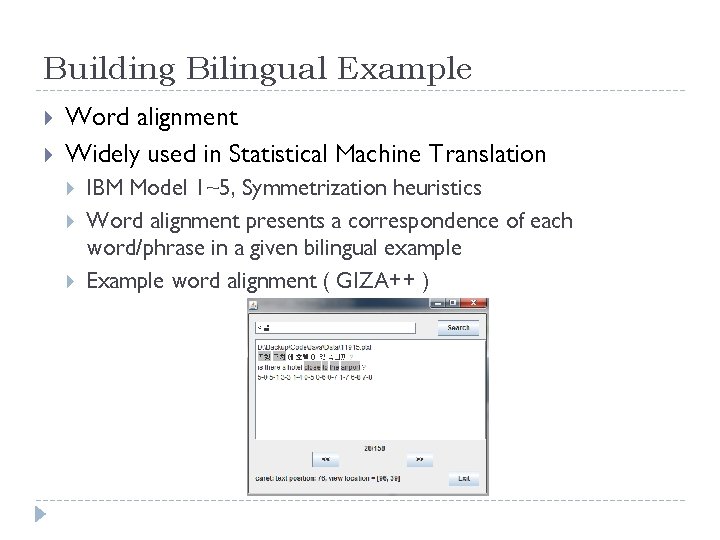

Building Bilingual Example Word alignment Widely used in Statistical Machine Translation IBM Model 1~5, Symmetrization heuristics Word alignment presents a correspondence of each word/phrase in a given bilingual example Example word alignment ( GIZA++ )

4. Comprehension Assistance

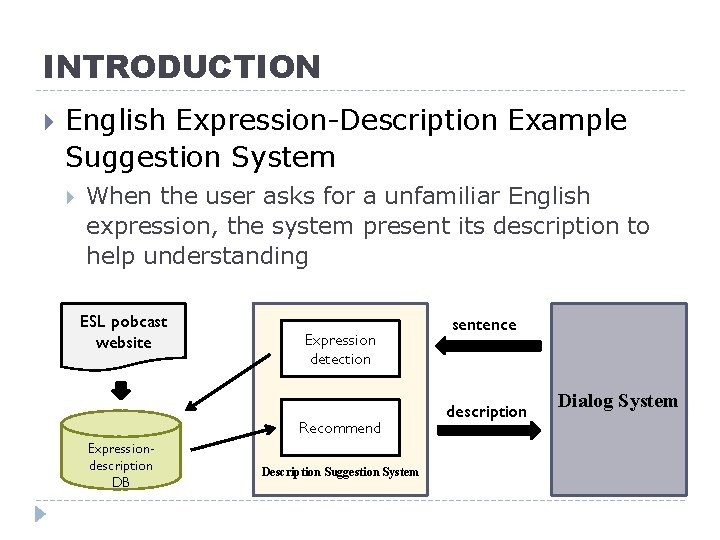

INTRODUCTION
English Expression-Description Example Suggestion System
When the user asks about an unfamiliar English expression, the system presents its description to aid understanding.
[Figure: components. ESL podcast website, expression detection, expression-description DB, recommendation, Description Suggestion System (sentence, description), Dialog System.]

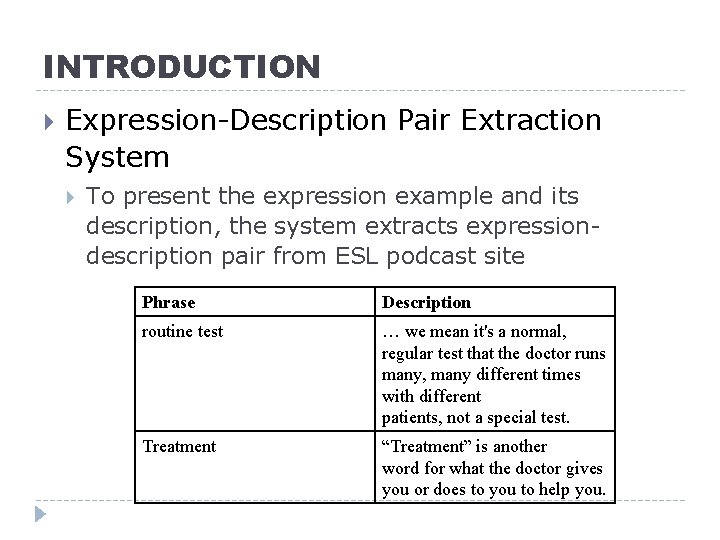

INTRODUCTION
Expression-Description Pair Extraction System
To present an expression example and its description, the system extracts expression-description pairs from an ESL podcast site.

| Phrase | Description |
|---|---|
| routine test | … we mean it's a normal, regular test that the doctor runs many, many different times with different patients, not a special test. |
| treatment | “Treatment” is another word for what the doctor gives you or does to you to help you. |

![EXAMPLE [script] [description] EXAMPLE [script] [description]](http://slidetodoc.com/presentation_image_h2/30baa82c31b8a108f8ff65e0cd71e563/image-41.jpg)

EXAMPLE [script] [description]

![EXAMPLE [script] [description] EXAMPLE [script] [description]](http://slidetodoc.com/presentation_image_h2/30baa82c31b8a108f8ff65e0cd71e563/image-42.jpg)

EXAMPLE [script] [description]

Language Learner Simulation

1. User Simulation

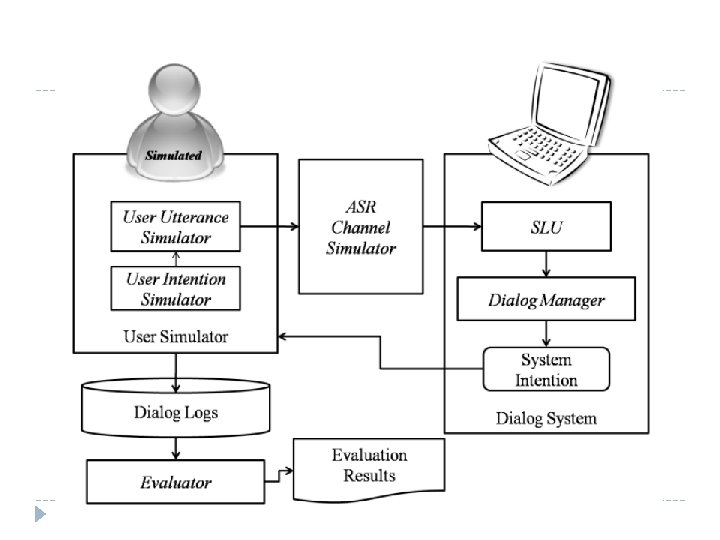

INTRODUCTION
User simulation for spoken dialog systems: developing a “simulated user” who can replace real users
Applications
• Automated evaluation of spoken dialog systems: detecting potential flaws, predicting the overall behavior of the system
• Learning dialog strategies in a reinforcement learning framework

PROBLEM & GOAL
PROBLEM: How to model real users
• User intention simulation
• User surface simulation
• ASR channel simulation
GOAL
• Natural simulation
• Diverse simulation
• Controllable simulation

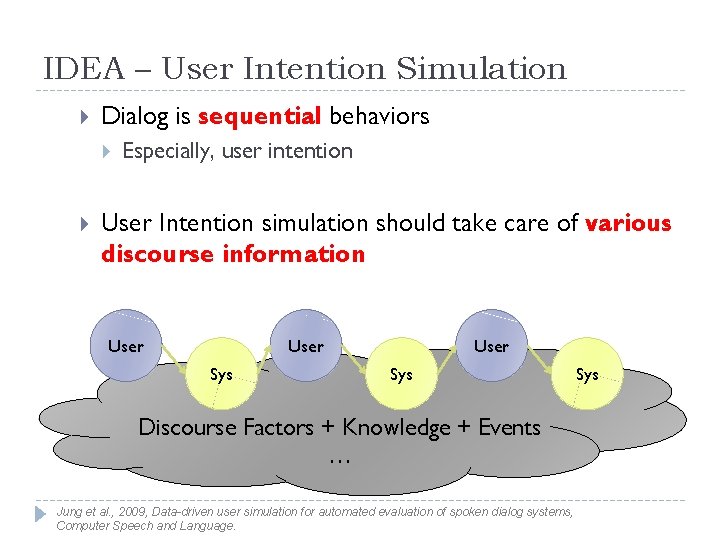

IDEA: User Intention Simulation
• A dialog is a sequence of behaviors, and user intentions in particular are sequential
• User intention simulation should take various discourse information into account
[Figure: alternating user and system turns, conditioned on discourse factors, knowledge, and events.]
Jung et al., 2009, Data-driven user simulation for automated evaluation of spoken dialog systems, Computer Speech and Language.

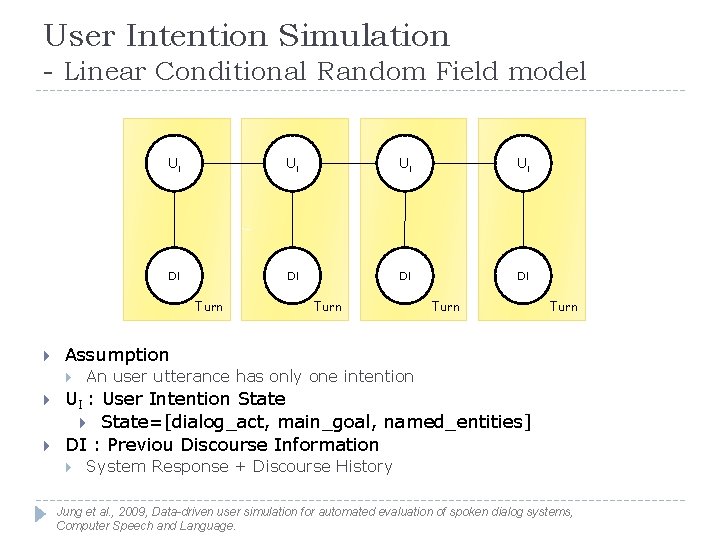

User Intention Simulation: Linear-chain Conditional Random Field model
[Figure: chain over turns with a user intention (UI) node and a discourse information (DI) node at each turn.]
Assumption: a user utterance has only one intention
• UI: User Intention; state = [dialog_act, main_goal, named_entities]
• DI: Previous Discourse Information = system response + discourse history
Jung et al., 2009, Data-driven user simulation for automated evaluation of spoken dialog systems, Computer Speech and Language.
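For reference, a linear-chain CRF over this structure has the standard form below, written with the slide's UI and DI symbols. The feature functions f_k and weights λ_k are generic textbook notation, not taken from the slides, so this should be read as the general model family rather than the authors' exact feature set.

```latex
P(UI_{1:T} \mid DI_{1:T})
  = \frac{1}{Z(DI_{1:T})}
    \exp\!\left( \sum_{t=1}^{T} \sum_{k} \lambda_k \, f_k(UI_{t-1}, UI_t, DI_t, t) \right),
\qquad
Z(DI_{1:T}) = \sum_{UI'_{1:T}}
    \exp\!\left( \sum_{t=1}^{T} \sum_{k} \lambda_k \, f_k(UI'_{t-1}, UI'_t, DI_t, t) \right)
```

Here Z normalizes over all possible intention sequences, so the intention at each turn depends both on the previous intention and on the discourse information observed at that turn.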

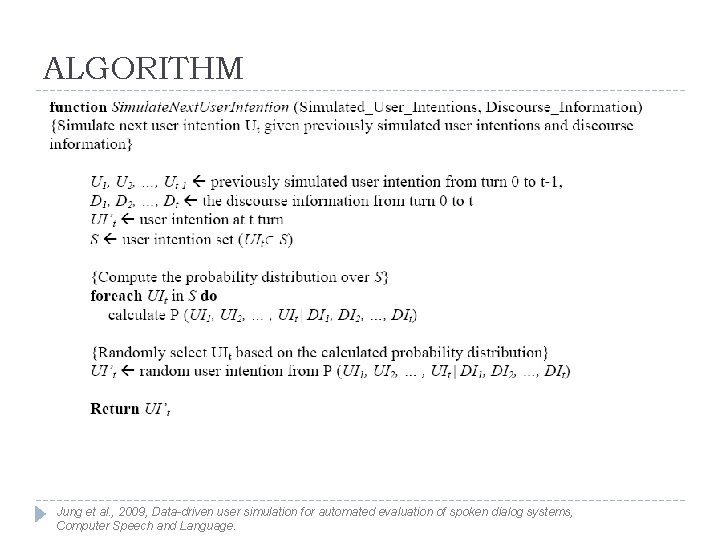

ALGORITHM
Jung et al., 2009, Data-driven user simulation for automated evaluation of spoken dialog systems, Computer Speech and Language.

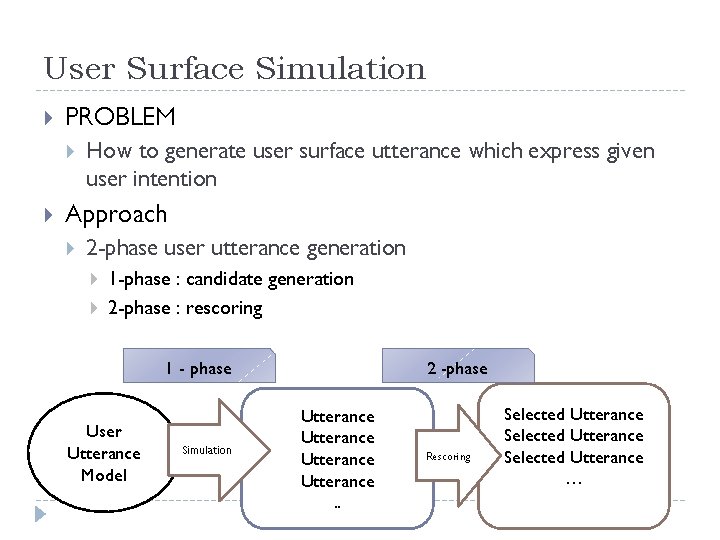

User Surface Simulation
PROBLEM: How to generate a user surface utterance that expresses a given user intention
APPROACH: Two-phase user utterance generation
• Phase 1: candidate generation (utterance model simulation)
• Phase 2: rescoring (utterance rescoring, then selection of the final utterance)

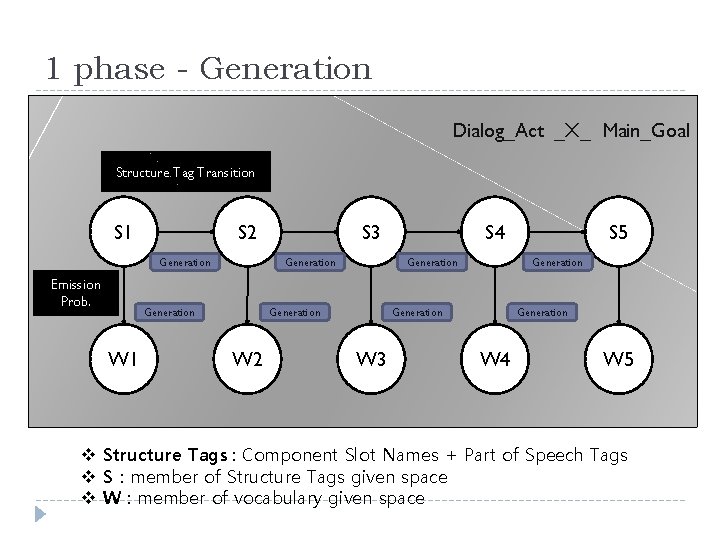

Phase 1: Generation
[Figure: given Dialog_Act × Main_Goal, a chain of structure tags S1 … S5 is produced by structure tag transitions, and each tag generates a word W1 … W5 according to an emission probability.]
• Structure tags: component slot names + part-of-speech tags
• S: a member of the structure tags in the given space
• W: a member of the vocabulary in the given space

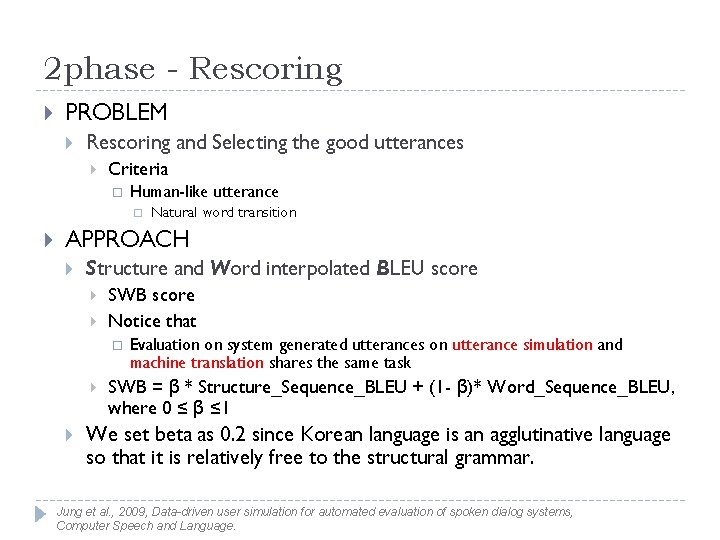

Phase 2: Rescoring
PROBLEM: Rescoring and selecting good utterances
Criteria
• Human-like utterances
• Natural word transitions
APPROACH: Structure and Word interpolated BLEU score (SWB score)
Note that evaluating system-generated utterances is the same task in utterance simulation as in machine translation.
SWB = β * Structure_Sequence_BLEU + (1 - β) * Word_Sequence_BLEU, where 0 ≤ β ≤ 1
We set β to 0.2 because Korean is an agglutinative language and is therefore relatively unconstrained by structural grammar.
Jung et al., 2009, Data-driven user simulation for automated evaluation of spoken dialog systems, Computer Speech and Language.
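The interpolation can be illustrated with a short Python sketch. The `bleu` function here is a simplified single-reference, bigram-level BLEU with add-one smoothing, chosen only to keep the example self-contained; the slides do not specify the BLEU variant, n-gram order, or smoothing, so those details are assumptions.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count the n-grams of a token sequence."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def bleu(candidate, reference, max_n=2):
    """Simplified single-reference BLEU with add-one smoothing and brevity penalty."""
    if not candidate:
        return 0.0
    log_precision = 0.0
    for n in range(1, max_n + 1):
        cand, ref = ngrams(candidate, n), ngrams(reference, n)
        overlap = sum(min(count, ref[gram]) for gram, count in cand.items())
        total = sum(cand.values())
        log_precision += math.log((overlap + 1) / (total + 1)) / max_n
    brevity = min(1.0, math.exp(1 - len(reference) / len(candidate)))
    return brevity * math.exp(log_precision)


def swb(cand_struct, ref_struct, cand_words, ref_words, beta=0.2):
    """SWB = beta * structure-sequence BLEU + (1 - beta) * word-sequence BLEU."""
    return (beta * bleu(cand_struct, ref_struct)
            + (1 - beta) * bleu(cand_words, ref_words))


# Hypothetical candidate and reference: structure tag and word sequences.
ref_struct = ["ROOM_TYPE", "JKS", "NP", "VCP"]
ref_words = ["ROOM_TYPE", "이", "어디", "지"]
score_same = swb(ref_struct, ref_struct, ref_words, ref_words)
score_diff = swb(ref_struct, ref_struct, ["ROOM_TYPE", "어디"], ref_words)
```

With β = 0.2 the word-sequence score dominates, so a candidate with the right structure but missing words is penalized mostly through its word-level BLEU.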

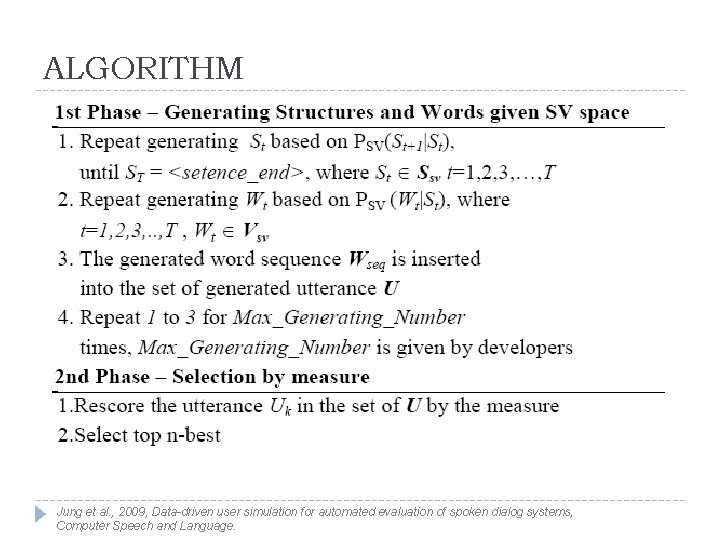

ALGORITHM
Jung et al., 2009, Data-driven user simulation for automated evaluation of spoken dialog systems, Computer Speech and Language.

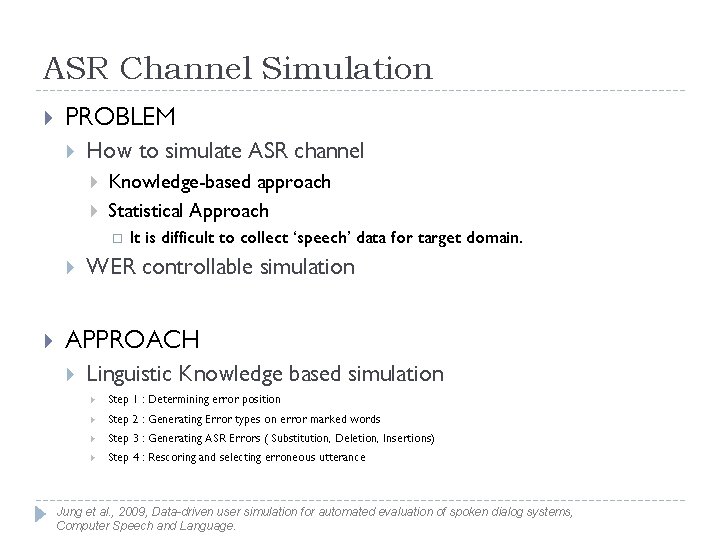

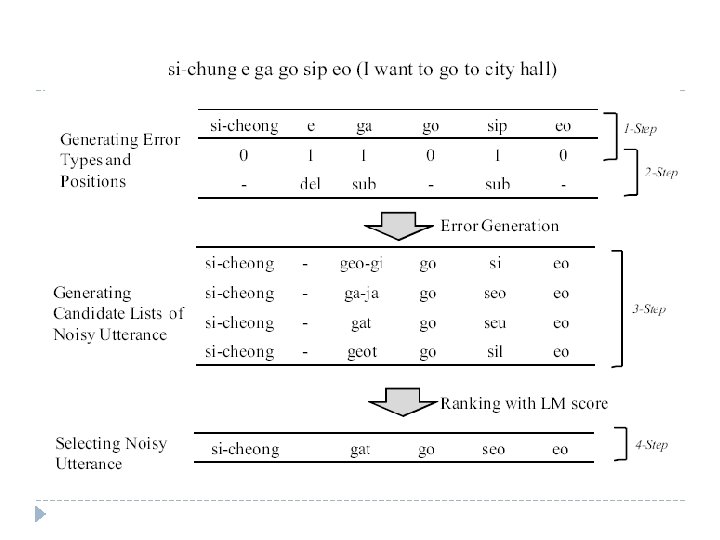

ASR Channel Simulation
PROBLEM: How to simulate the ASR channel
• Knowledge-based approach vs. statistical approach: it is difficult to collect speech data for the target domain
• WER-controllable simulation
APPROACH: Linguistic-knowledge-based simulation
• Step 1: Determining error positions
• Step 2: Generating error types on the error-marked words
• Step 3: Generating ASR errors (substitutions, deletions, insertions)
• Step 4: Rescoring and selecting the erroneous utterance
Jung et al., 2009, Data-driven user simulation for automated evaluation of spoken dialog systems, Computer Speech and Language.
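Steps 1 to 3 can be sketched as follows. This is a rough illustration, not the published method: the error-type weights are placeholders, substitutions and insertions draw uniformly from a vocabulary instead of using the phonetic similarity described later, and Step 4 (rescoring) is omitted.

```python
import random

ERROR_TYPES = ("substitution", "deletion", "insertion")


def simulate_asr(words, wer, vocabulary, type_weights=(0.6, 0.2, 0.2), seed=None):
    """Corrupt a word sequence so that roughly a `wer` fraction of words are hit."""
    rng = random.Random(seed)
    output = []
    for word in words:
        # Step 1: mark this position as an error with probability `wer`.
        if rng.random() >= wer:
            output.append(word)
            continue
        # Step 2: choose an error type for the marked word (placeholder weights).
        error = rng.choices(ERROR_TYPES, weights=type_weights, k=1)[0]
        # Step 3: realize the error.
        if error == "substitution":
            # May occasionally pick the same word; a real system would exclude it.
            output.append(rng.choice(vocabulary))
        elif error == "insertion":
            # Insert a random word before the marked word.
            output.extend([rng.choice(vocabulary), word])
        # A deletion simply drops the word.
    return output


noisy = simulate_asr("where is the meeting room".split(), wer=0.2,
                     vocabulary=["room", "zoom", "meeting", "eating"], seed=0)
```

Because the error probability is an explicit parameter, the same user utterance can be replayed at several WER settings, which is what makes the simulation controllable.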

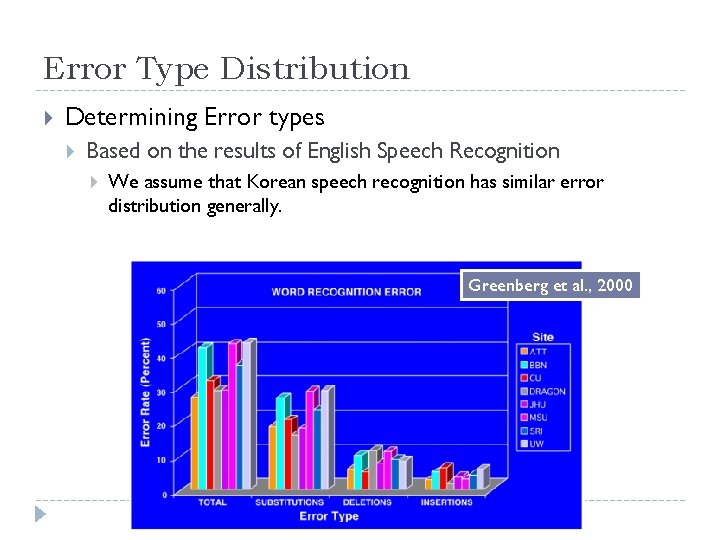

Error Type Distribution
Determining error types: based on the results of English speech recognition (Greenberg et al., 2000). We assume that Korean speech recognition generally has a similar error distribution.

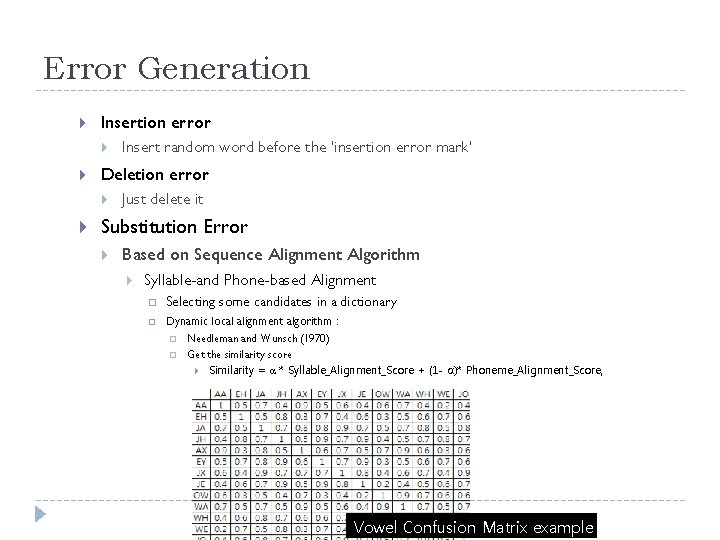

Error Generation
• Insertion error: insert a random word before the insertion error mark
• Deletion error: just delete the marked word
• Substitution error: based on a sequence alignment algorithm
  • Syllable- and phone-based alignment
  • Selecting some candidates from a dictionary
  • Dynamic programming alignment algorithm: Needleman and Wunsch (1970)
  • Get the similarity score: Similarity = α * Syllable_Alignment_Score + (1 - α) * Phoneme_Alignment_Score, where 0 ≤ α ≤ 1
[Figure: vowel confusion matrix example.]
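A compact sketch of the substitution scoring follows. The Needleman-Wunsch recurrence is the standard one; the match/mismatch/gap values, the length normalization, and the romanized example sequences are assumptions for illustration, whereas the slide suggests that real substitution costs would come from a confusion matrix.

```python
def needleman_wunsch(a, b, match=1, mismatch=-1, gap=-1):
    """Global alignment score between two sequences (Needleman and Wunsch, 1970)."""
    prev = [j * gap for j in range(len(b) + 1)]
    for i in range(1, len(a) + 1):
        curr = [i * gap]
        for j in range(1, len(b) + 1):
            diag = prev[j - 1] + (match if a[i - 1] == b[j - 1] else mismatch)
            curr.append(max(diag, prev[j] + gap, curr[j - 1] + gap))
        prev = curr
    return prev[-1]


def normalized(a, b):
    """Alignment score divided by the longer length, so identical sequences give 1.0."""
    longest = max(len(a), len(b))
    return needleman_wunsch(a, b) / longest if longest else 1.0


def similarity(syll_a, syll_b, phon_a, phon_b, alpha=0.5):
    """Similarity = alpha * syllable alignment + (1 - alpha) * phoneme alignment."""
    return alpha * normalized(syll_a, syll_b) + (1 - alpha) * normalized(phon_a, phon_b)


# Hypothetical romanized word pair: syllable and phoneme sequences.
score = similarity(["hoe", "ui"], ["hoe", "sa"],
                   ["h", "oe", "ui"], ["h", "oe", "s", "a"])
```

Dictionary candidates with the highest similarity to the original word would be the most plausible substitution errors.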

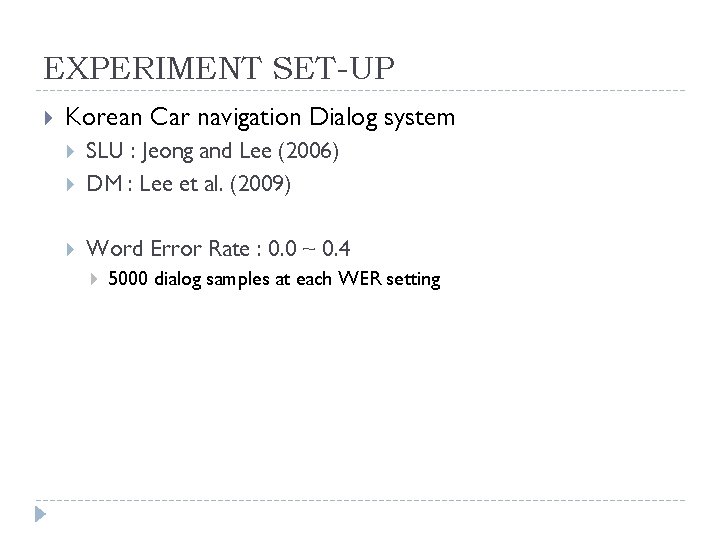

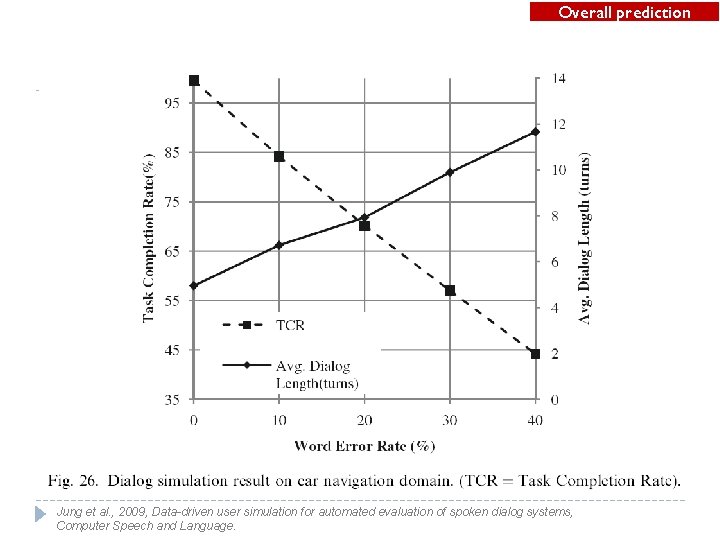

EXPERIMENT SET-UP
Korean car navigation dialog system
• SLU: Jeong and Lee (2006)
• DM: Lee et al. (2009)
• Word Error Rate: 0.0 ~ 0.4
• 5000 dialog samples at each WER setting

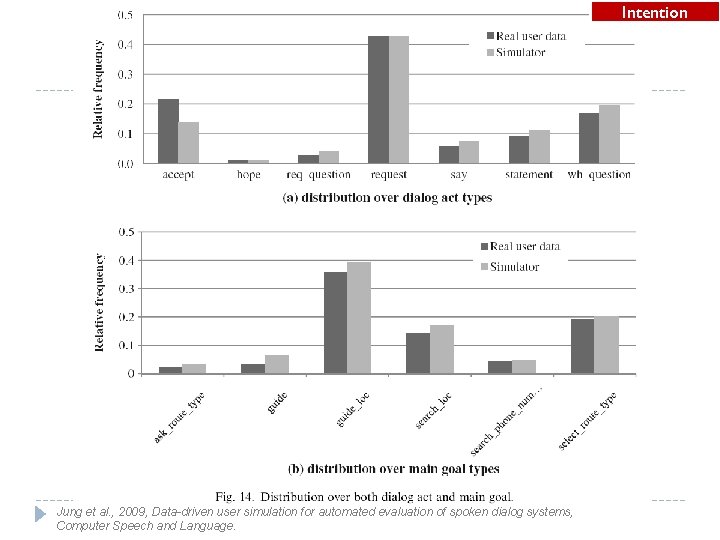

Intention
Jung et al., 2009, Data-driven user simulation for automated evaluation of spoken dialog systems, Computer Speech and Language.

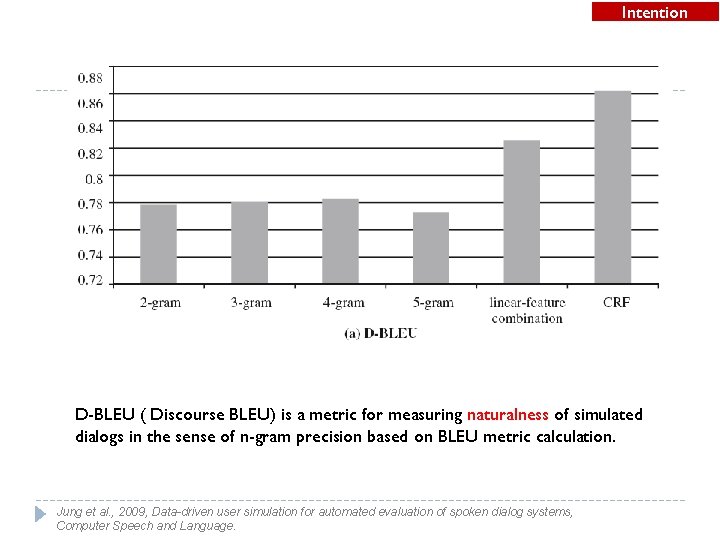

Intention
D-BLEU (Discourse BLEU) is a metric for measuring the naturalness of simulated dialogs in terms of n-gram precision, based on the BLEU metric calculation.
Jung et al., 2009, Data-driven user simulation for automated evaluation of spoken dialog systems, Computer Speech and Language.

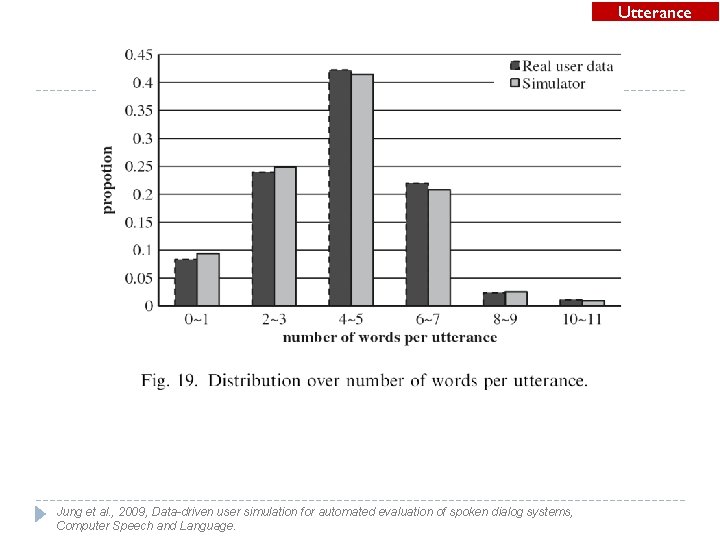

Utterance
Jung et al., 2009, Data-driven user simulation for automated evaluation of spoken dialog systems, Computer Speech and Language.

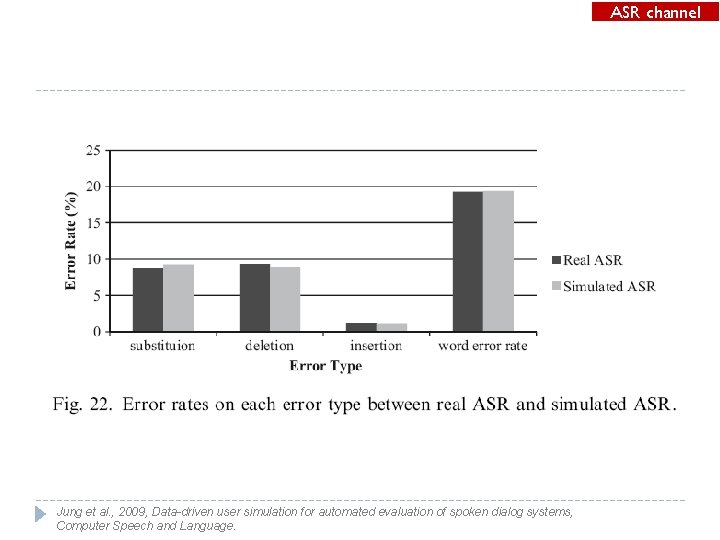

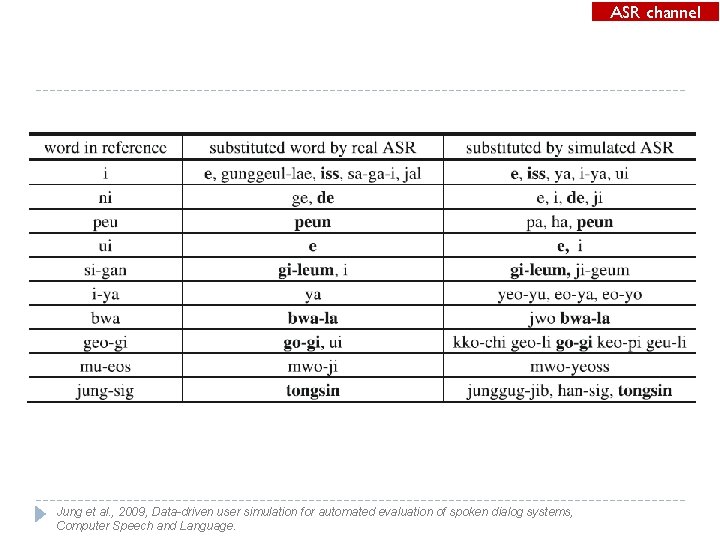

ASR channel
Jung et al., 2009, Data-driven user simulation for automated evaluation of spoken dialog systems, Computer Speech and Language.

Overall prediction
Jung et al., 2009, Data-driven user simulation for automated evaluation of spoken dialog systems, Computer Speech and Language.

2. Grammar Error Simulation

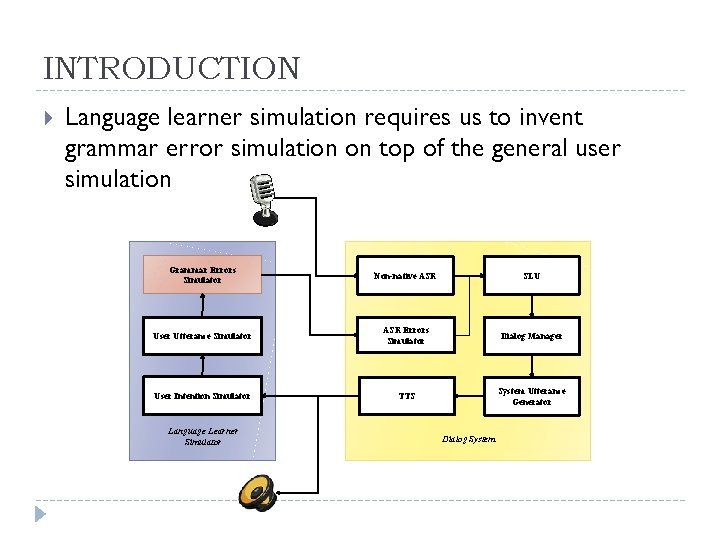

INTRODUCTION
Language learner simulation requires us to build grammar error simulation on top of general user simulation.
[Figure: the Language Learner Simulator (User Intention Simulator, User Utterance Simulator, Grammar Errors Simulator, ASR Errors Simulator) interacting with the Dialog System (Non-native ASR, SLU, Dialog Manager, System Utterance Generator, TTS).]

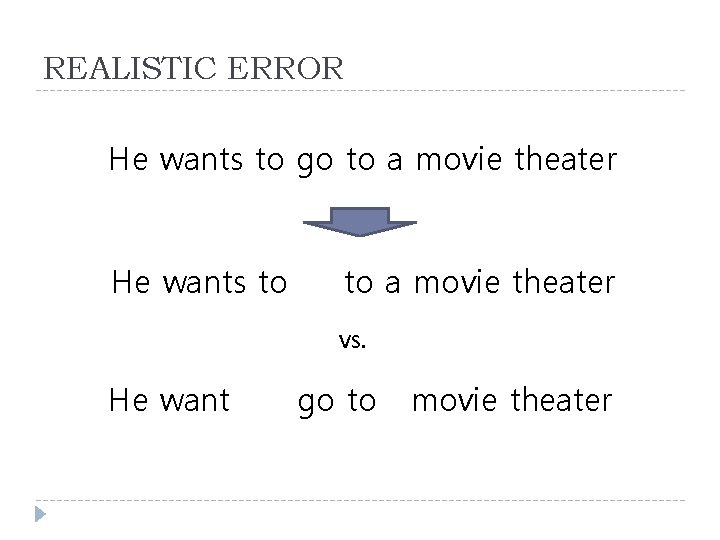

REALISTIC ERROR He wants to go to a movie theater He wants to to a movie theater VS. He want go to movie theater

PROBLEMS
How to incorporate expert knowledge about the error characteristics of Korean language learners into the statistical model
• Subject-verb agreement errors
• Omission of the preposition of prepositional verbs
• Omission of articles
• Etc.

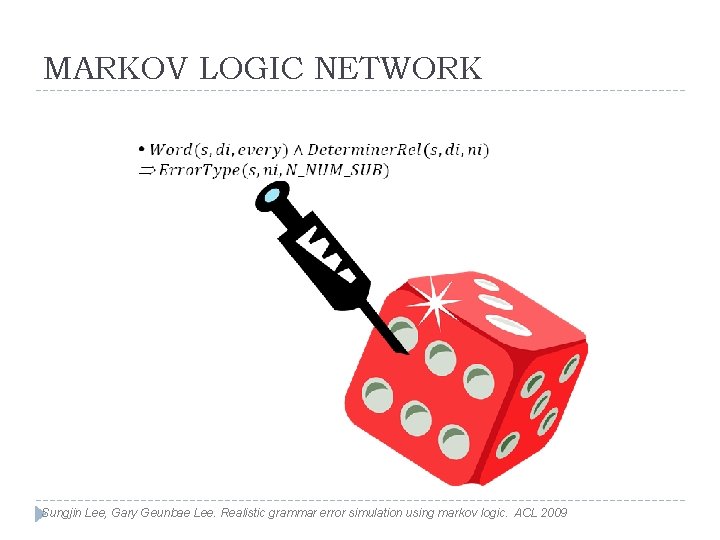

MARKOV LOGIC NETWORK
Sungjin Lee, Gary Geunbae Lee. Realistic grammar error simulation using Markov logic. ACL 2009

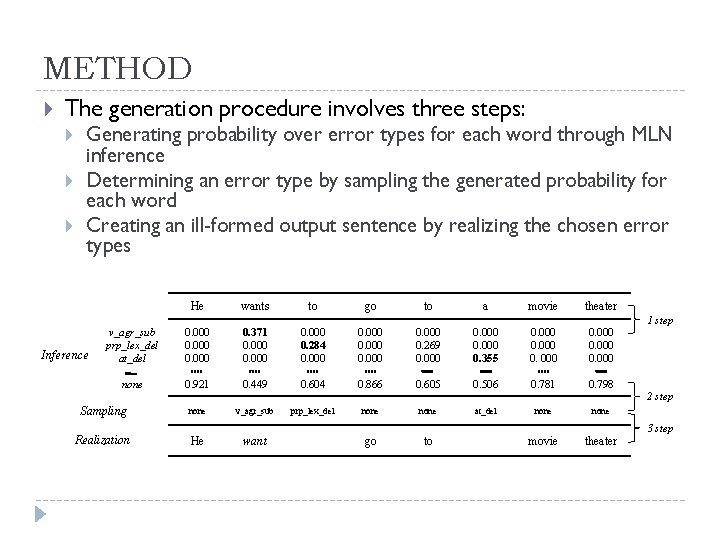

METHOD
The generation procedure involves three steps:
• Step 1 (Inference): generating a probability distribution over error types for each word through MLN inference
• Step 2 (Sampling): determining an error type for each word by sampling from the generated distribution
• Step 3 (Realization): creating an ill-formed output sentence by realizing the chosen error types
Example
• Input: He wants to go to a movie theater
• Inference: [Figure: per-word probability table over the error types v_agr_sub, prp_lex_del, at_del, and none. Error-type entries shown: 0.000, 0.371, 0.000, 0.284, 0.000, 0.269, 0.000, 0.355, 0.000. Probabilities of none for the eight words: 0.921, 0.449, 0.604, 0.866, 0.605, 0.506, 0.781, 0.798.]
• Sampling: none, v_agr_sub, prp_lex_del, none, at_del, none
• Realization: He want go to movie theater
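Steps 2 and 3 can be sketched in Python as below. Step 1 (MLN inference) is not implemented: the per-word distributions are simply passed in. The realization rules, including the naive "strip or add a final s" agreement flip, are simplifications assumed for illustration only.

```python
import random


def sample_error_types(distributions, rng):
    """Step 2: sample one error type per word from its probability distribution."""
    chosen = []
    for dist in distributions:
        types, probs = zip(*dist.items())
        chosen.append(rng.choices(types, weights=probs, k=1)[0])
    return chosen


def realize(words, error_types):
    """Step 3: apply the chosen error types to produce the ill-formed sentence."""
    out = []
    for word, err in zip(words, error_types):
        if err in ("prp_lex_del", "at_del"):
            continue  # omission errors delete the preposition or article
        if err == "v_agr_sub":
            # Toy subject-verb agreement flip, not a real morphological rule.
            word = word[:-1] if word.endswith("s") else word + "s"
        out.append(word)
    return " ".join(out)


words = "He wants to go to a movie theater".split()
# Error types matching the slide's realization, one per word.
chosen = ["none", "v_agr_sub", "prp_lex_del", "none",
          "none", "at_del", "none", "none"]
sentence = realize(words, chosen)

# Sampling from hypothetical distributions (Step 1 output is assumed given).
rng = random.Random(0)
sampled = sample_error_types([{"none": 0.6, "v_agr_sub": 0.4}] * len(words), rng)
```

Sampling rather than always taking the most probable error type is what lets the simulator produce different ill-formed variants of the same sentence.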

EXPERIMENT SET-UP
Data set: NICT JLE Corpus
The 167 error-annotated files were divided into 3 level groups:
• Beginner (1-4): 2,905
• Intermediate (5-6): 3,296
• Advanced (7-9): 2,752
Evaluation
• 10-fold cross validation was performed for each group
• The validation results were added together across the rounds

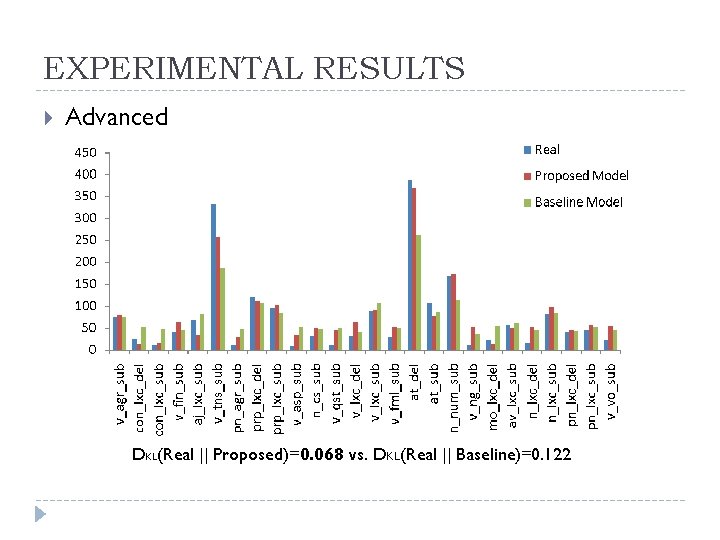

EXPERIMENTAL RESULTS
Advanced: DKL(Real || Proposed) = 0.068 vs. DKL(Real || Baseline) = 0.122

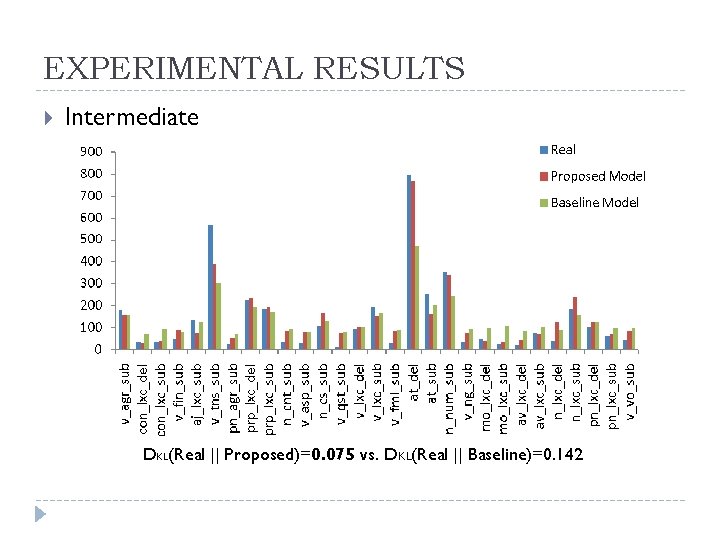

EXPERIMENTAL RESULTS
Intermediate: DKL(Real || Proposed) = 0.075 vs. DKL(Real || Baseline) = 0.142

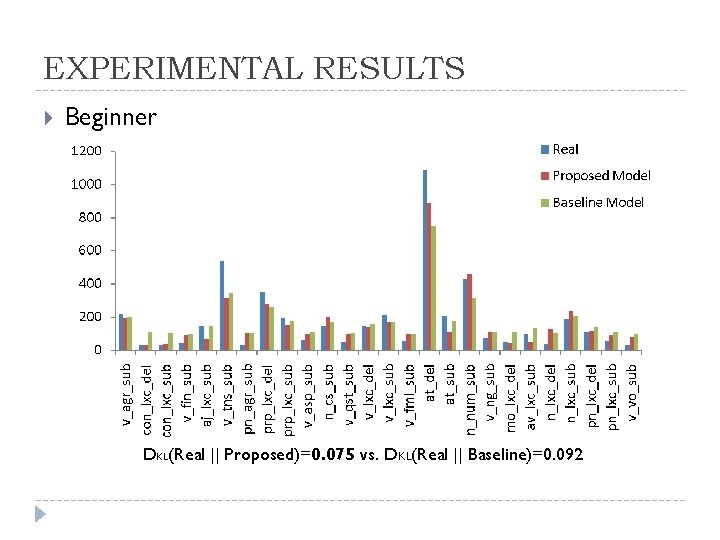

EXPERIMENTAL RESULTS
Beginner: DKL(Real || Proposed) = 0.075 vs. DKL(Real || Baseline) = 0.092
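The DKL figures compare the error-type distribution of real learner data with that of simulated data; lower values mean the simulation is closer to the real distribution. A minimal sketch of the computation follows, assuming natural logarithms and a small floor for error types missing from the simulated distribution (neither is specified on the slides), with made-up distributions.

```python
import math


def kl_divergence(p, q, epsilon=1e-9):
    """D_KL(P || Q) for distributions given as {error_type: probability} dicts."""
    total = 0.0
    for key, p_x in p.items():
        if p_x > 0:
            # Floor q to avoid division by zero for unseen error types.
            total += p_x * math.log(p_x / max(q.get(key, 0.0), epsilon))
    return total


# Hypothetical error-type distributions, for illustration only.
real = {"at_del": 0.5, "v_agr_sub": 0.5}
simulated = {"at_del": 0.9, "v_agr_sub": 0.1}
divergence = kl_divergence(real, simulated)
```

Note that the measure is asymmetric: the slides report DKL(Real || Simulated), so the real distribution plays the role of P.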

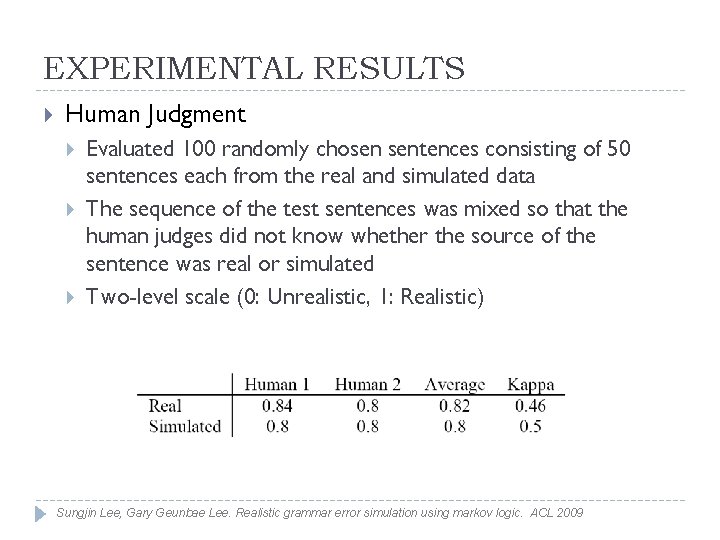

EXPERIMENTAL RESULTS
Human judgment
• Evaluated 100 randomly chosen sentences, consisting of 50 sentences each from the real and simulated data
• The order of the test sentences was mixed so that the human judges did not know whether a sentence came from the real or the simulated data
• Two-level scale (0: Unrealistic, 1: Realistic)
Sungjin Lee, Gary Geunbae Lee. Realistic grammar error simulation using Markov logic. ACL 2009

Q&A
