Part-of-speech tagging A simple but useful form of linguistic analysis

Parts of Speech
• Perhaps starting with Aristotle in the West (384–322 BCE), there was the idea of having parts of speech
  • a.k.a. lexical categories, word classes, “tags”, POS
• The idea, still with us, that there are 8 parts of speech comes from Dionysius Thrax of Alexandria (c. 100 BCE)
• But his 8 aren’t exactly the ones we are taught today
  • Thrax: noun, verb, article, adverb, preposition, conjunction, participle, pronoun
  • School grammar: noun, verb, adjective, adverb, preposition, conjunction, pronoun, interjection

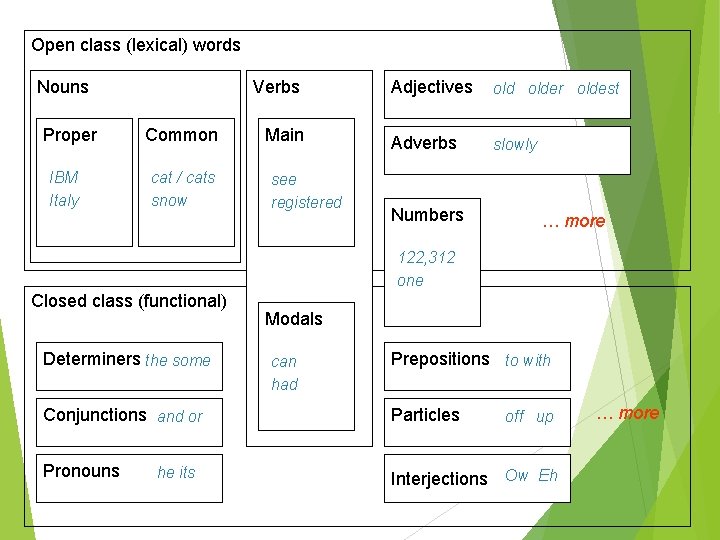

Open class (lexical) words
• Nouns
  • Proper: IBM, Italy
  • Common: cat / cats, snow
• Verbs
  • Main: see, registered
• Adjectives: older, oldest
• Adverbs: slowly
• Numbers: 122,312, one
• … more

Closed class (functional)
• Determiners: the, some
• Modals: can, had
• Prepositions: to, with
• Conjunctions: and, or
• Particles: off, up
• Pronouns: he, its
• Interjections: Ow, Eh
• … more

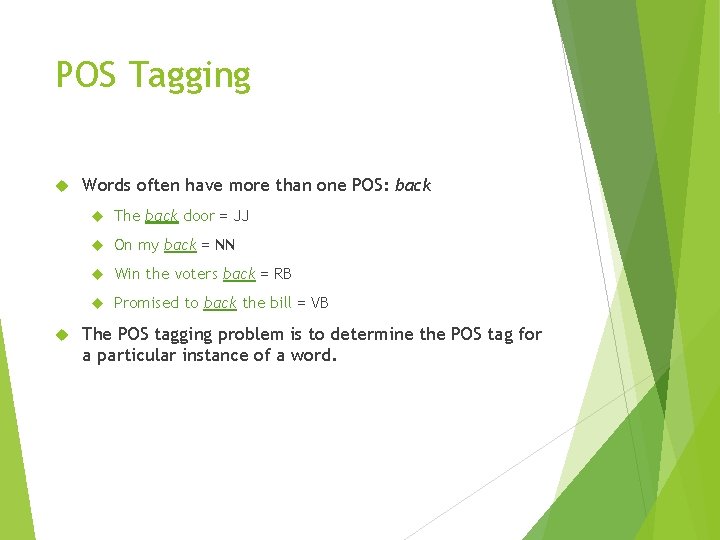

POS Tagging
• Words often have more than one POS, e.g. back:
  • The back door = JJ
  • On my back = NN
  • Win the voters back = RB
  • Promised to back the bill = VB
• The POS tagging problem is to determine the POS tag for a particular instance of a word.

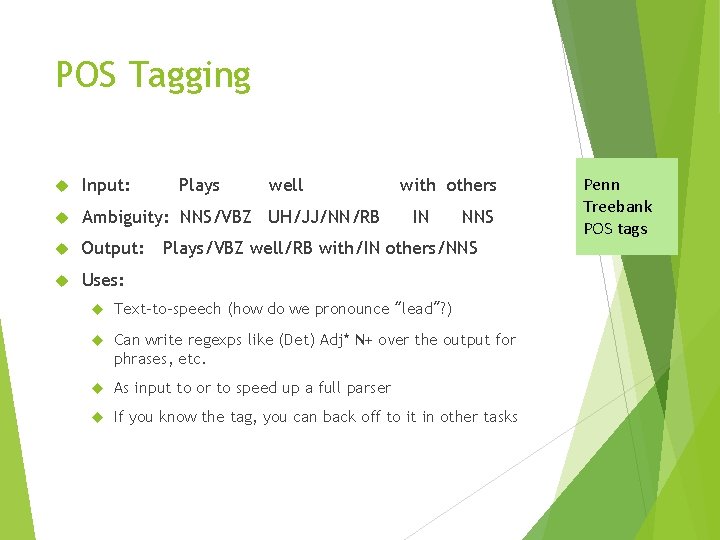

POS Tagging
• Input: Plays well with others
• Ambiguity: Plays = NNS/VBZ, well = UH/JJ/NN/RB, with = IN, others = NNS
• Output (Penn Treebank POS tags): Plays/VBZ well/RB with/IN others/NNS
• Uses:
  • Text-to-speech (how do we pronounce “lead”?)
  • Can write regexps like (Det) Adj* N+ over the output for phrases, etc.
  • As input to, or to speed up, a full parser
  • If you know the tag, you can back off to it in other tasks
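
The regexp use case, (Det) Adj* N+ over tagger output, can be sketched in Python. This is a minimal sketch, assuming the tagger output is a list of (word, tag) pairs with Penn Treebank tags; the function name `noun_phrases` and the one-letter tag classes are illustrative choices, not part of the slides.

```python
import re

# Map Penn Treebank tags to one-letter classes so a regex can scan the tag sequence.
# The mapping is an illustrative assumption: D = determiner, A = adjective, N = noun.
TAG_CLASS = {"DT": "D", "JJ": "A", "JJR": "A", "JJS": "A",
             "NN": "N", "NNS": "N", "NNP": "N", "NNPS": "N"}

# (Det) Adj* N+ written over the one-letter classes.
NP_PATTERN = re.compile(r"D?A*N+")

def noun_phrases(tagged):
    """Return the word spans whose tag sequence matches (Det) Adj* N+."""
    # Every tag outside the mapping becomes "x", which the pattern never matches.
    classes = "".join(TAG_CLASS.get(tag, "x") for _, tag in tagged)
    return [" ".join(word for word, _ in tagged[m.start():m.end()])
            for m in NP_PATTERN.finditer(classes)]

tagged = [("The", "DT"), ("old", "JJ"), ("back", "JJ"), ("door", "NN"),
          ("opens", "VBZ"), ("slowly", "RB")]
print(noun_phrases(tagged))
```

Because each token maps to exactly one character, regex match offsets are also token offsets, which keeps the span lookup trivial.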

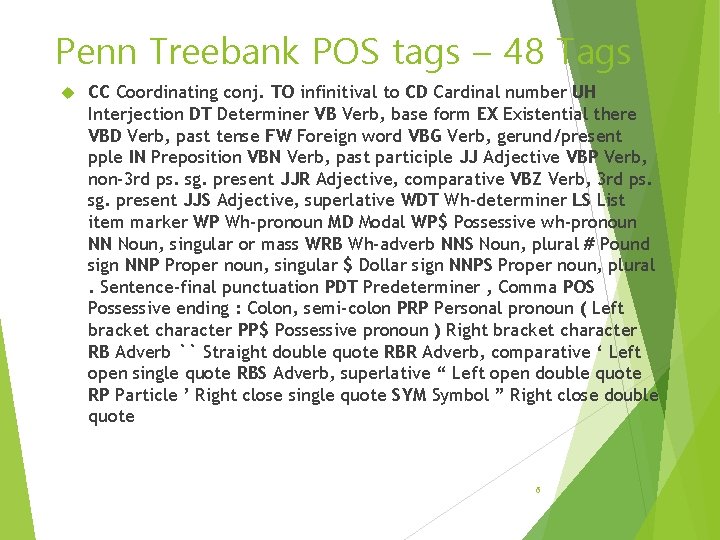

Penn Treebank POS tags – 48 Tags

| Tag | Description | Tag | Description |
|---|---|---|---|
| CC | Coordinating conj. | TO | infinitival to |
| CD | Cardinal number | UH | Interjection |
| DT | Determiner | VB | Verb, base form |
| EX | Existential there | VBD | Verb, past tense |
| FW | Foreign word | VBG | Verb, gerund/present pple |
| IN | Preposition | VBN | Verb, past participle |
| JJ | Adjective | VBP | Verb, non-3rd ps. sg. present |
| JJR | Adjective, comparative | VBZ | Verb, 3rd ps. sg. present |
| JJS | Adjective, superlative | WDT | Wh-determiner |
| LS | List item marker | WP | Wh-pronoun |
| MD | Modal | WP$ | Possessive wh-pronoun |
| NN | Noun, singular or mass | WRB | Wh-adverb |
| NNS | Noun, plural | # | Pound sign |
| NNP | Proper noun, singular | $ | Dollar sign |
| NNPS | Proper noun, plural | . | Sentence-final punctuation |
| PDT | Predeterminer | , | Comma |
| POS | Possessive ending | : | Colon, semi-colon |
| PRP | Personal pronoun | ( | Left bracket character |
| PRP$ | Possessive pronoun | ) | Right bracket character |
| RB | Adverb | `` | Straight double quote |
| RBR | Adverb, comparative | ‘ | Left open single quote |
| RBS | Adverb, superlative | “ | Left open double quote |
| RP | Particle | ’ | Right close single quote |
| SYM | Symbol | ” | Right close double quote |

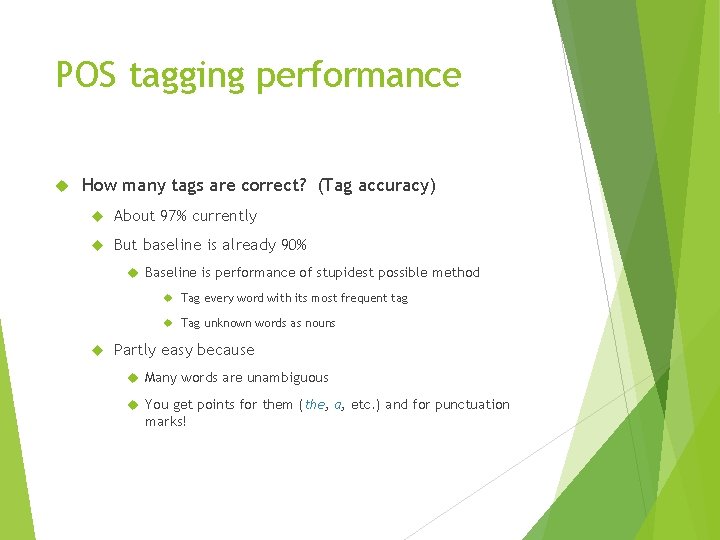

POS tagging performance
• How many tags are correct? (Tag accuracy)
  • About 97% currently
  • But the baseline is already 90%
• The baseline is the performance of the stupidest possible method:
  • Tag every word with its most frequent tag
  • Tag unknown words as nouns
• Partly easy because
  • Many words are unambiguous
  • You get points for them (the, a, etc.) and for punctuation marks!
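
The baseline method can be sketched in a few lines of Python. This is a minimal sketch, assuming training data is a list of sentences of (word, tag) pairs; the function names and the tiny training set are made up for illustration.

```python
from collections import Counter, defaultdict

def train_baseline(tagged_sentences):
    """Record, for every word seen in training, its most frequent tag."""
    counts = defaultdict(Counter)
    for sentence in tagged_sentences:
        for word, tag in sentence:
            counts[word][tag] += 1
    return {word: tag_counts.most_common(1)[0][0]
            for word, tag_counts in counts.items()}

def tag_baseline(words, most_frequent, unknown_tag="NN"):
    """Tag known words with their most frequent tag and unknown words as nouns."""
    return [(w, most_frequent.get(w, unknown_tag)) for w in words]

# Toy training data (invented for this sketch).
train = [
    [("the", "DT"), ("back", "NN"), ("door", "NN")],
    [("on", "IN"), ("my", "PRP$"), ("back", "NN")],
    [("to", "TO"), ("back", "VB"), ("the", "DT"), ("bill", "NN")],
]
model = train_baseline(train)
print(tag_baseline(["the", "back", "window"], model))
```

In this toy data "back" is seen twice as NN and once as VB, so the baseline always answers NN for it, which is exactly the kind of error a context-aware tagger is meant to fix.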

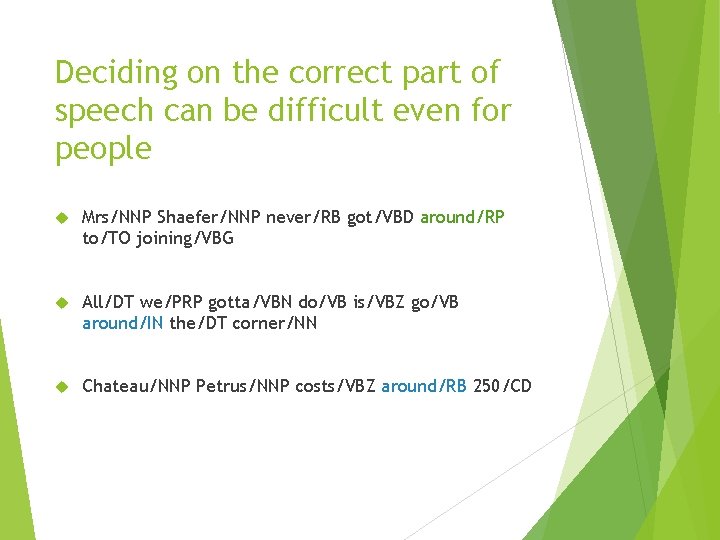

Deciding on the correct part of speech can be difficult even for people
• Mrs/NNP Shaefer/NNP never/RB got/VBD around/RP to/TO joining/VBG
• All/DT we/PRP gotta/VBN do/VB is/VBZ go/VB around/IN the/DT corner/NN
• Chateau/NNP Petrus/NNP costs/VBZ around/RB 250/CD

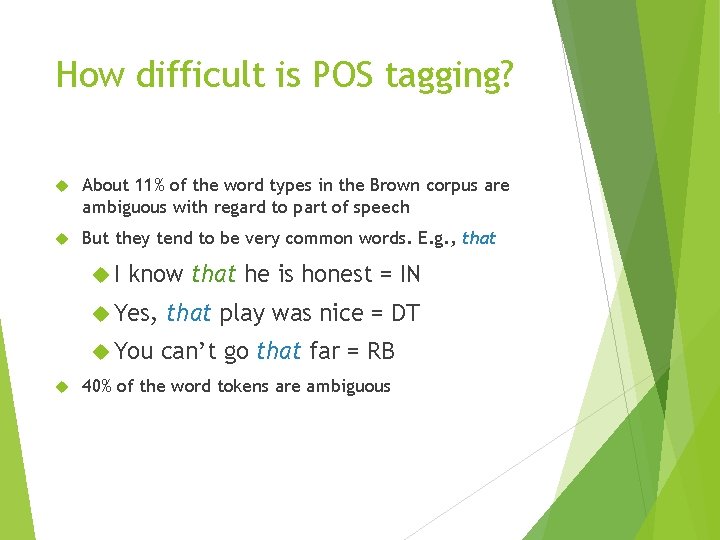

How difficult is POS tagging?
• About 11% of the word types in the Brown corpus are ambiguous with regard to part of speech
• But they tend to be very common words. E.g., that:
  • I know that he is honest = IN
  • Yes, that play was nice = DT
  • You can’t go that far = RB
• 40% of the word tokens are ambiguous

HMM Tagging

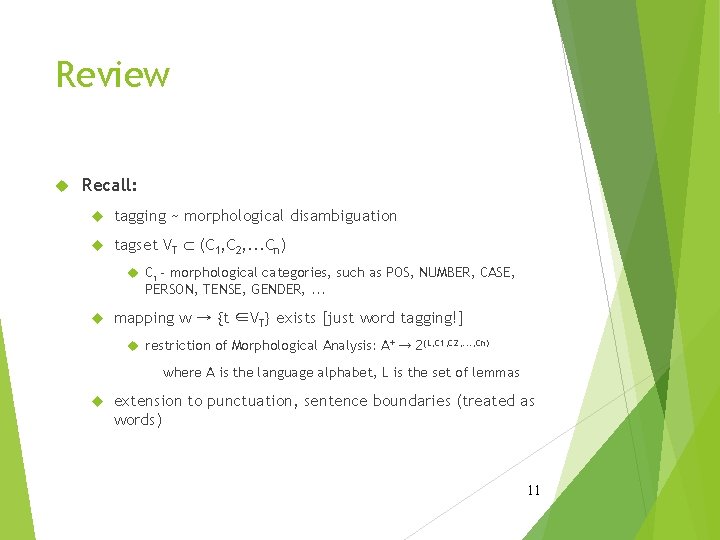

Review
• Recall: tagging ~ morphological disambiguation
• tagset $V_T \subset (C_1, C_2, \ldots, C_n)$
  • $C_i$: morphological categories, such as POS, NUMBER, CASE, PERSON, TENSE, GENDER, ...
• mapping $w \to \{t \in V_T\}$ exists [just word tagging!]
  • restriction of Morphological Analysis: $A^+ \to 2^{(L, C_1, C_2, \ldots, C_n)}$, where $A$ is the language alphabet and $L$ is the set of lemmas
• extension to punctuation, sentence boundaries (treated as words)

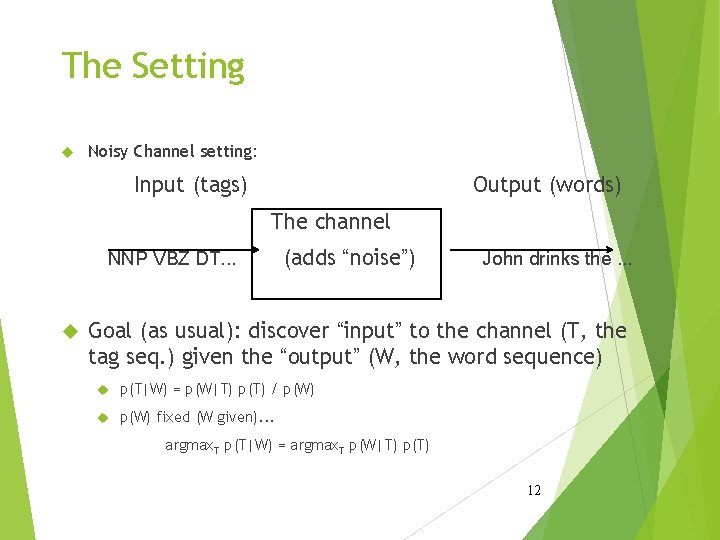

The Setting
• Noisy Channel setting: Input (tags): NNP VBZ DT ... → The channel (adds “noise”) → Output (words): John drinks the ...
• Goal (as usual): discover the “input” to the channel ($T$, the tag sequence) given the “output” ($W$, the word sequence)
  • $p(T|W) = p(W|T)\,p(T) / p(W)$
  • $p(W)$ is fixed ($W$ given), so $\arg\max_T p(T|W) = \arg\max_T p(W|T)\,p(T)$

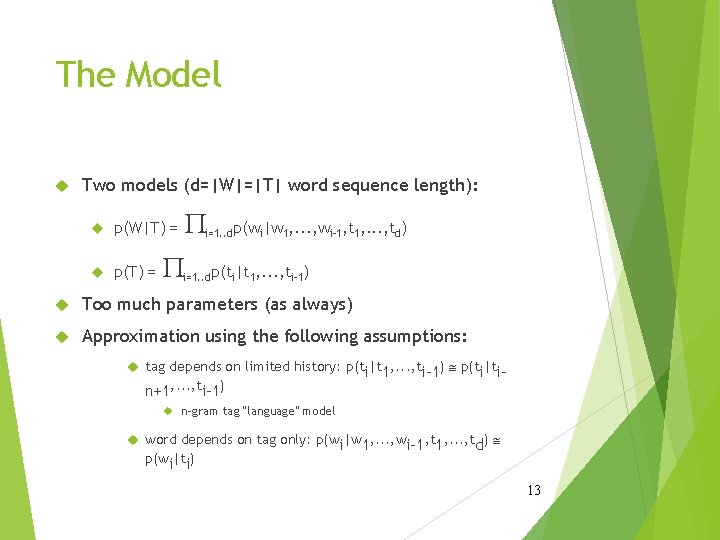

The Model
• Two models ($d = |W| = |T|$ is the word sequence length):
  • $p(W|T) = \prod_{i=1}^{d} p(w_i | w_1, \ldots, w_{i-1}, t_1, \ldots, t_d)$
  • $p(T) = \prod_{i=1}^{d} p(t_i | t_1, \ldots, t_{i-1})$
• Too many parameters (as always)
• Approximation using the following assumptions:
  • tag depends on limited history: $p(t_i | t_1, \ldots, t_{i-n+1}, \ldots, t_{i-1}) \cong p(t_i | t_{i-n+1}, \ldots, t_{i-1})$ (n-gram tag “language” model)
  • word depends on tag only: $p(w_i | w_1, \ldots, w_{i-1}, t_1, \ldots, t_d) \cong p(w_i | t_i)$

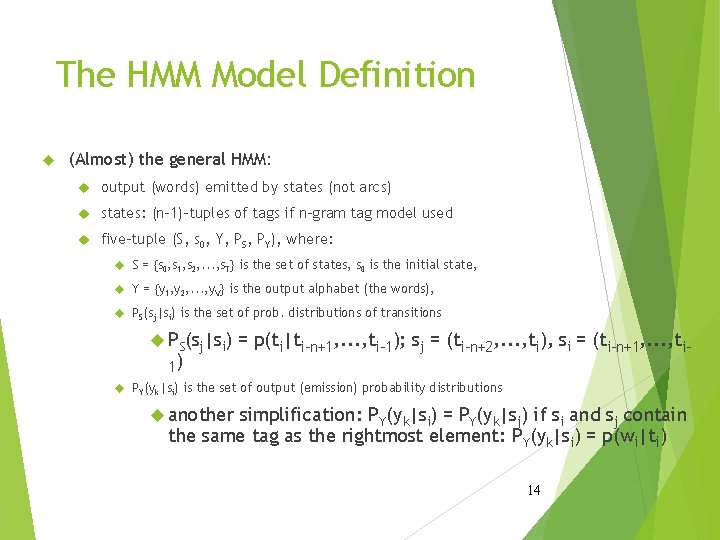

The HMM Model Definition
• (Almost) the general HMM:
  • output (words) emitted by states (not arcs)
  • states: $(n-1)$-tuples of tags if an n-gram tag model is used
• five-tuple $(S, s_0, Y, P_S, P_Y)$, where:
  • $S = \{s_0, s_1, s_2, \ldots, s_T\}$ is the set of states, $s_0$ is the initial state
  • $Y = \{y_1, y_2, \ldots, y_V\}$ is the output alphabet (the words)
  • $P_S(s_j|s_i)$ is the set of probability distributions of transitions: $P_S(s_j|s_i) = p(t_i | t_{i-n+1}, \ldots, t_{i-1})$, with $s_j = (t_{i-n+2}, \ldots, t_i)$ and $s_i = (t_{i-n+1}, \ldots, t_{i-1})$
  • $P_Y(y_k|s_i)$ is the set of output (emission) probability distributions
• another simplification: $P_Y(y_k|s_i) = P_Y(y_k|s_j)$ if $s_i$ and $s_j$ contain the same tag as the rightmost element: $P_Y(y_k|s_i) = p(w_i|t_i)$

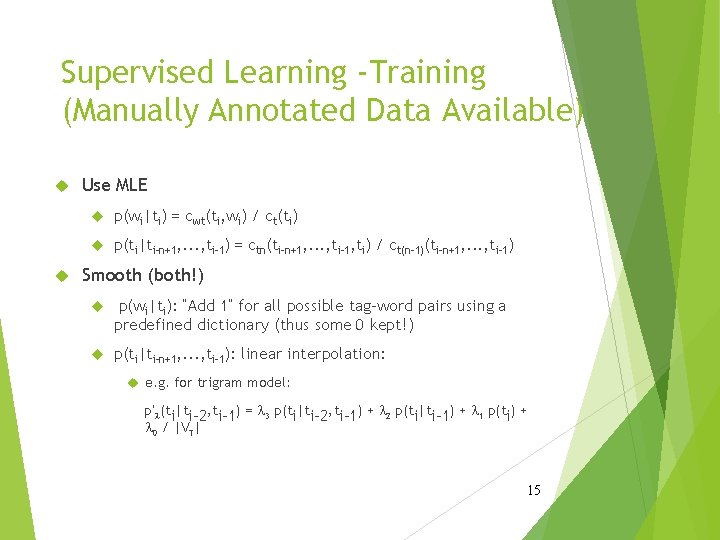

Supervised Learning - Training (Manually Annotated Data Available)
• Use MLE
  • $p(w_i|t_i) = c_{wt}(t_i, w_i) / c_t(t_i)$
  • $p(t_i | t_{i-n+1}, \ldots, t_{i-1}) = c_{tn}(t_{i-n+1}, \ldots, t_{i-1}, t_i) / c_{t(n-1)}(t_{i-n+1}, \ldots, t_{i-1})$
• Smooth (both!)
  • $p(w_i|t_i)$: “Add 1” for all possible tag-word pairs using a predefined dictionary (thus some 0 kept!)
  • $p(t_i | t_{i-n+1}, \ldots, t_{i-1})$: linear interpolation, e.g. for a trigram model: $p'_\lambda(t_i | t_{i-2}, t_{i-1}) = \lambda_3\, p(t_i | t_{i-2}, t_{i-1}) + \lambda_2\, p(t_i | t_{i-1}) + \lambda_1\, p(t_i) + \lambda_0 / |V_T|$
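
The MLE counts and the interpolation formula can be sketched in Python for the bigram case (n = 2), which drops the trigram term. This is a minimal sketch under stated assumptions: the `<s>` start pseudo-tag, the function names, the toy data, and the lambda values are all illustrative; the emission estimate is left unsmoothed.

```python
from collections import Counter

def train_counts(tagged_sentences, start="<s>"):
    """Collect the counts behind the MLE formulas for a bigram (n = 2) tagger."""
    emit, tag, bigram, history = Counter(), Counter(), Counter(), Counter()
    for sentence in tagged_sentences:
        prev = start                      # sentence-start pseudo-tag (an assumption)
        for word, t in sentence:
            emit[(t, word)] += 1          # c_wt(t_i, w_i)
            tag[t] += 1                   # c_t(t_i)
            bigram[(prev, t)] += 1        # count of the tag pair (t_{i-1}, t_i)
            history[prev] += 1            # count of t_{i-1} used as a history
            prev = t
    return emit, tag, bigram, history

def p_emit(word, t, emit, tag):
    """Unsmoothed MLE: p(w|t) = c_wt(t, w) / c_t(t)."""
    return emit[(t, word)] / tag[t] if tag[t] else 0.0

def p_trans(t, prev, tag, bigram, history, lambdas=(0.1, 0.3, 0.6)):
    """Interpolation: l2 * p(t|prev) + l1 * p(t) + l0 / |V_T|."""
    l0, l1, l2 = lambdas                  # arbitrary illustrative weights summing to 1
    p_bigram = bigram[(prev, t)] / history[prev] if history[prev] else 0.0
    p_unigram = tag[t] / sum(tag.values())
    return l2 * p_bigram + l1 * p_unigram + l0 / len(tag)

# Toy training data (invented for this sketch).
train = [
    [("John", "NNP"), ("drinks", "VBZ"), ("the", "DT"), ("tea", "NN")],
    [("the", "DT"), ("drinks", "NNS"), ("cost", "VBP"), ("money", "NN")],
]
emit, tag, bigram, history = train_counts(train)
print(p_emit("drinks", "VBZ", emit, tag))
print(p_trans("NN", "DT", tag, bigram, history))
```

Thanks to the uniform term, an unseen tag pair such as DT followed by VBZ still gets non-zero probability, and the interpolated values sum to one over the tagset as long as the weights do.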

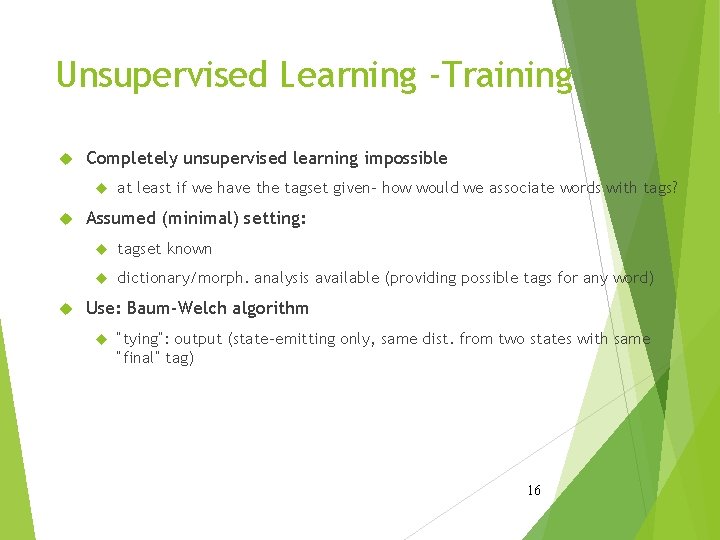

Unsupervised Learning - Training
• Completely unsupervised learning is impossible, at least if we have the tagset given: how would we associate words with tags?
• Assumed (minimal) setting:
  • tagset known
  • dictionary/morphological analysis available (providing possible tags for any word)
• Use: Baum-Welch algorithm
  • “tying”: output (state-emitting only, same distribution from two states with the same “final” tag)

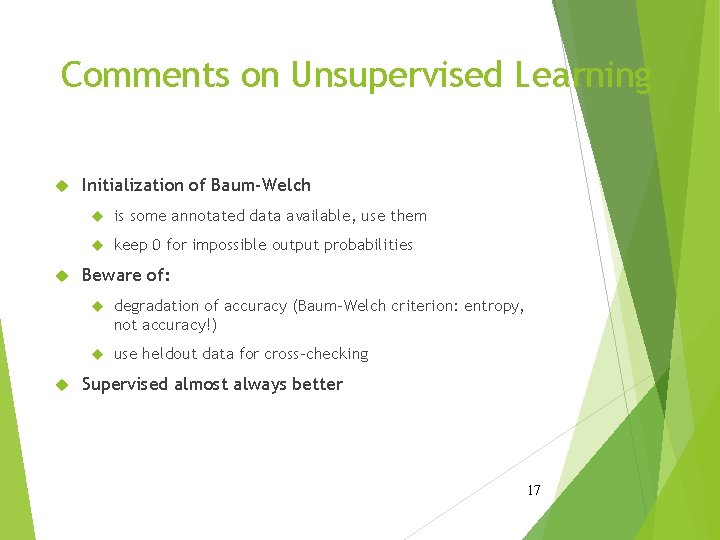

Comments on Unsupervised Learning
• Initialization of Baum-Welch
  • if some annotated data are available, use them
  • keep 0 for impossible output probabilities
• Beware of:
  • degradation of accuracy (Baum-Welch criterion: entropy, not accuracy!)
  • use heldout data for cross-checking
• Supervised almost always better

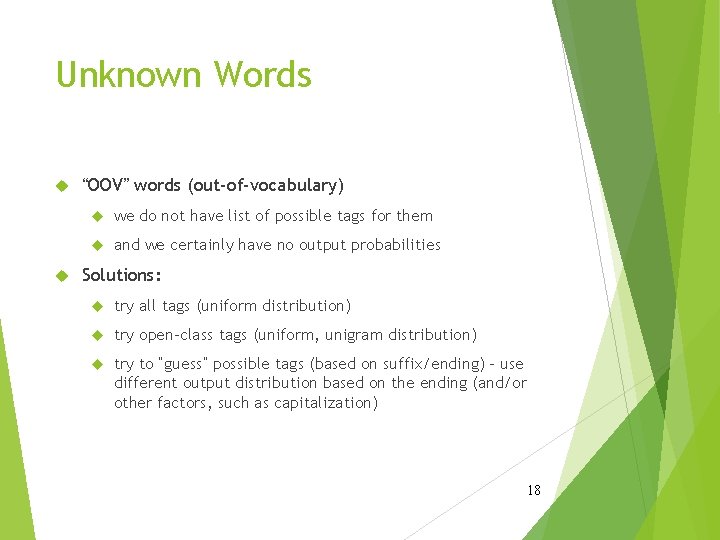

Unknown Words
• “OOV” words (out-of-vocabulary)
  • we do not have a list of possible tags for them
  • and we certainly have no output probabilities
• Solutions:
  • try all tags (uniform distribution)
  • try open-class tags (uniform, unigram distribution)
  • try to “guess” possible tags (based on suffix/ending): use a different output distribution based on the ending (and/or other factors, such as capitalization)
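
The suffix-based guess, with the open-class uniform fallback, can be sketched as follows. This is a simplified sketch, not a full treatment: it estimates a tag distribution given the word ending (an HMM would still need to turn that into an emission probability), and the three-letter suffix length, the open-class tag list, and the toy data are assumptions made for illustration.

```python
from collections import Counter, defaultdict

def train_suffix_model(tagged_words, suffix_len=3):
    """Count tags per word ending so OOV words can borrow a distribution."""
    by_suffix = defaultdict(Counter)
    for word, tag in tagged_words:
        by_suffix[word[-suffix_len:]][tag] += 1
    return by_suffix

def guess_tags(word, by_suffix, open_class=("NN", "VB", "JJ", "RB"), suffix_len=3):
    """Return p(tag | ending); fall back to uniform over open-class tags."""
    counts = by_suffix.get(word[-suffix_len:])
    if not counts:
        return {t: 1 / len(open_class) for t in open_class}
    total = sum(counts.values())
    return {t: c / total for t, c in counts.items()}

# Toy training data (invented for this sketch).
seen = [("selling", "VBG"), ("running", "VBG"), ("morning", "NN"), ("quickly", "RB")]
suffix_model = train_suffix_model(seen)
print(guess_tags("blogging", suffix_model))   # ending "ing" was seen in training
print(guess_tags("zork", suffix_model))       # unseen ending: uniform fallback
```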

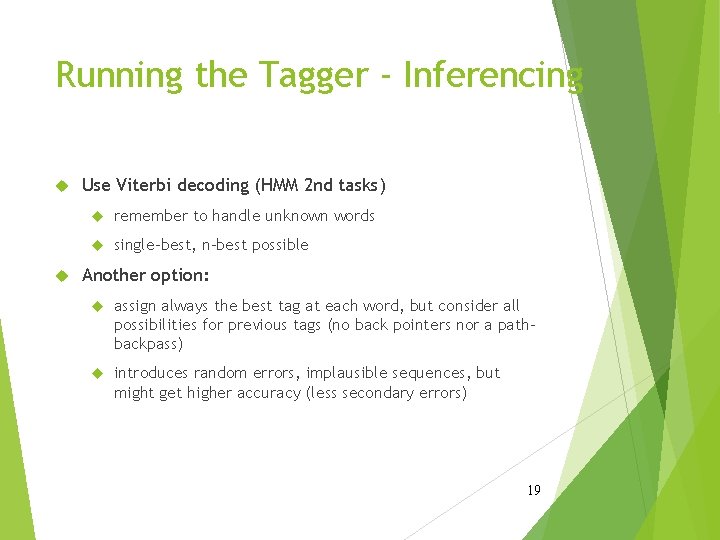

Running the Tagger - Inferencing
• Use Viterbi decoding (the 2nd HMM task)
  • remember to handle unknown words
  • single-best, n-best possible
• Another option: always assign the best tag at each word, but consider all possibilities for previous tags (no back pointers nor a path-backpass)
  • introduces random errors, implausible sequences, but might get higher accuracy (fewer secondary errors)
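
Single-best Viterbi decoding can be sketched for a bigram tag model as follows. This is a minimal sketch under stated assumptions: `p_trans(t, prev)` and `p_emit(word, t)` are hypothetical callables supplied by the caller, the probability tables are toy values, and unknown-word handling is left to whatever smoothing `p_emit` implements.

```python
import math

def viterbi(words, tags, p_trans, p_emit, start="<s>"):
    """Return the single best tag sequence under a bigram HMM (log space)."""
    if not words:
        return []

    def lp(p):
        return math.log(p) if p > 0 else float("-inf")

    # best[i][t] = best log-probability of any tag path ending in t at position i
    best = [{t: lp(p_trans(t, start)) + lp(p_emit(words[0], t)) for t in tags}]
    back = [{}]
    for i in range(1, len(words)):
        best.append({})
        back.append({})
        for t in tags:
            prev = max(tags, key=lambda s: best[i - 1][s] + lp(p_trans(t, s)))
            best[i][t] = (best[i - 1][prev] + lp(p_trans(t, prev))
                          + lp(p_emit(words[i], t)))
            back[i][t] = prev             # back pointer for the path-backpass
    # Follow back pointers from the best final tag.
    path = [max(tags, key=lambda t: best[-1][t])]
    for i in range(len(words) - 1, 0, -1):
        path.append(back[i][path[-1]])
    return list(reversed(path))

# Toy probability tables (invented for this sketch).
TRANS = {("<s>", "NN"): 0.6, ("<s>", "VB"): 0.4,
         ("NN", "NN"): 0.3, ("NN", "VB"): 0.7,
         ("VB", "NN"): 0.8, ("VB", "VB"): 0.2}
EMIT = {("NN", "fish"): 0.5, ("VB", "fish"): 0.5,
        ("NN", "swim"): 0.1, ("VB", "swim"): 0.9}

def toy_trans(t, prev):
    return TRANS.get((prev, t), 0.0)

def toy_emit(word, t):
    return EMIT.get((t, word), 0.0)

print(viterbi(["fish", "swim"], ["NN", "VB"], toy_trans, toy_emit))
```

Working in log space avoids underflow on long sentences; the cost is that zero probabilities have to be mapped to negative infinity explicitly.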

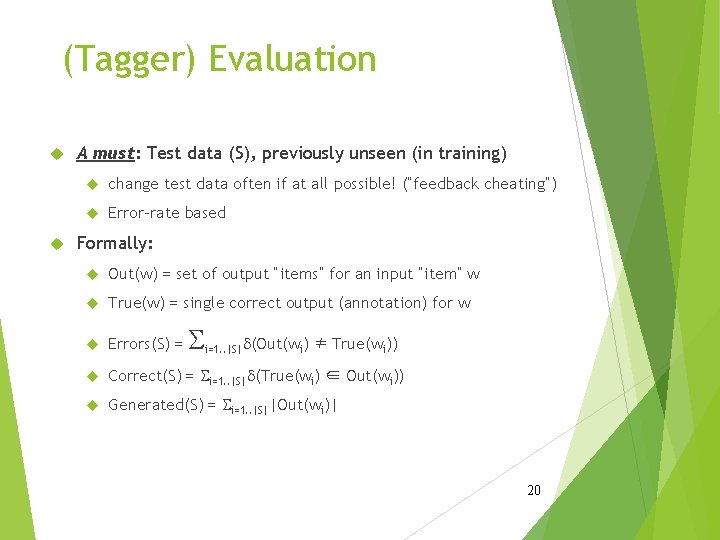

(Tagger) Evaluation
• A must: test data ($S$), previously unseen (in training)
  • change test data often if at all possible! (“feedback cheating”)
• Error-rate based
• Formally:
  • $Out(w)$ = set of output “items” for an input “item” $w$
  • $True(w)$ = single correct output (annotation) for $w$
  • $Errors(S) = \sum_{i=1}^{|S|} \delta(Out(w_i) \neq True(w_i))$
  • $Correct(S) = \sum_{i=1}^{|S|} \delta(True(w_i) \in Out(w_i))$
  • $Generated(S) = \sum_{i=1}^{|S|} |Out(w_i)|$

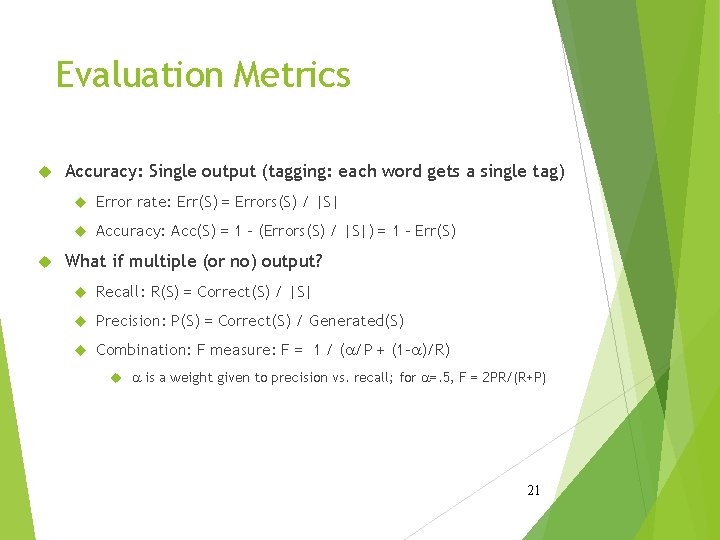

Evaluation Metrics
• Accuracy: single output (tagging: each word gets a single tag)
  • Error rate: $Err(S) = Errors(S) / |S|$
  • Accuracy: $Acc(S) = 1 - Errors(S) / |S| = 1 - Err(S)$
• What if multiple (or no) output?
  • Recall: $R(S) = Correct(S) / |S|$
  • Precision: $P(S) = Correct(S) / Generated(S)$
  • Combination: F measure: $F = 1 / (\alpha/P + (1-\alpha)/R)$
  • $\alpha$ is a weight given to precision vs. recall; for $\alpha = 0.5$, $F = 2PR / (R + P)$
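
The multiple-output metrics can be checked with a short Python sketch. It assumes each word's output is a set of tags (possibly empty) and that the gold annotation has exactly one tag per word; the function name `evaluate` and the example data are illustrative.

```python
def evaluate(outputs, gold, alpha=0.5):
    """outputs: one set of tags per word; gold: the single correct tag per word."""
    correct = sum(1 for out, true in zip(outputs, gold) if true in out)   # Correct(S)
    generated = sum(len(out) for out in outputs)                          # Generated(S)
    recall = correct / len(gold)
    precision = correct / generated if generated else 0.0
    if precision == 0 or recall == 0:
        f_measure = 0.0
    else:
        f_measure = 1 / (alpha / precision + (1 - alpha) / recall)
    return precision, recall, f_measure

# Five words: one left untagged, two given two candidate tags each.
outputs = [{"DT"}, {"NN", "VB"}, set(), {"NN"}, {"RB", "JJ"}]
gold = ["DT", "NN", "IN", "NN", "VB"]
precision, recall, f_measure = evaluate(outputs, gold)
print(precision, recall, f_measure)
```

Here three of the five words have the correct tag among six generated tags, so precision is 3/6 and recall is 3/5.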

Maximum Entropy Tagging

The Task, Again
• Recall: tagging ~ morphological disambiguation
• tagset $V_T \subset (C_1, C_2, \ldots, C_n)$
  • $C_i$: morphological categories, such as POS, NUMBER, CASE, PERSON, TENSE, GENDER, ...
• mapping $w \to \{t \in V_T\}$ exists
  • restriction of Morphological Analysis: $A^+ \to 2^{(L, C_1, C_2, \ldots, C_n)}$, where $A$ is the language alphabet and $L$ is the set of lemmas
• extension to punctuation, sentence boundaries (treated as words)

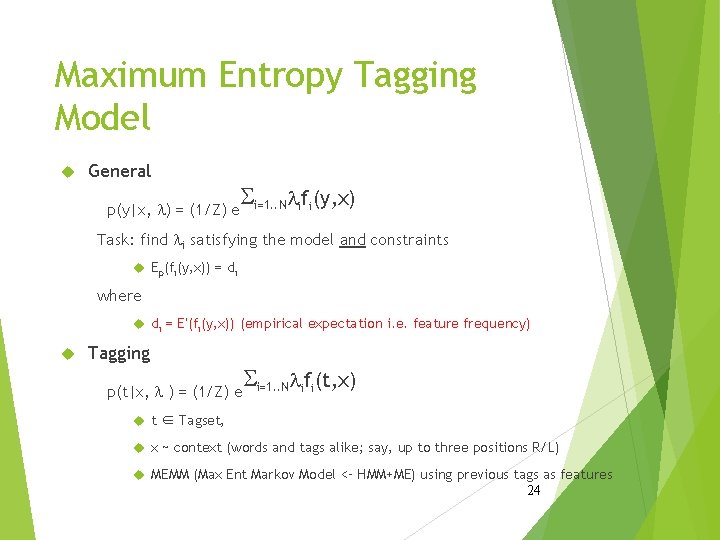

Maximum Entropy Tagging Model
• General
  • $p(y|x, \lambda) = (1/Z)\, e^{\sum_{i=1}^{N} \lambda_i f_i(y, x)}$
  • Task: find $\lambda_i$ satisfying the model and the constraints $E_p(f_i(y, x)) = d_i$, where $d_i = E'(f_i(y, x))$ (empirical expectation, i.e. feature frequency)
• Tagging
  • $p(t|x, \lambda) = (1/Z)\, e^{\sum_{i=1}^{N} \lambda_i f_i(t, x)}$
  • $t \in$ Tagset, $x$ ~ context (words and tags alike; say, up to three positions R/L)
• MEMM (Max Ent Markov Model ← HMM + ME): uses previous tags as features

Features for Tagging
• Context definition
  • two words back and ahead, two tags back, current word: $x_i = (w_{i-2}, t_{i-2}, w_{i-1}, t_{i-1}, w_i, w_{i+1}, w_{i+2})$
  • features may ask for any information from this window, e.g.:
    • previous tag is DT
    • previous two tags are PRP\$ and MD, and the following word is “be”
    • current word is “an”
    • suffix of current word is “ing”
• do not forget: a feature also contains $t_i$, the current tag:
  • feature #45: suffix of current word is “ing” & the tag is VBG ⇔ $f_{45} = 1$
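
Binary features that pair a context predicate with the current tag, and the resulting exponential model, can be sketched in Python. This is a minimal sketch under stated assumptions: the context is a small dictionary rather than the full window, the three features and their weights are hand-set for illustration (not trained), and all names are hypothetical.

```python
import math

def features(tag, ctx):
    """Binary features f_i(t, x): each pairs a context predicate with one tag."""
    return {
        "suffix=ing&t=VBG": ctx["word"].endswith("ing") and tag == "VBG",
        "prev_tag=DT&t=NN": ctx["prev_tag"] == "DT" and tag == "NN",
        "word=an&t=DT": ctx["word"] == "an" and tag == "DT",
    }

def p_tag(ctx, tagset, weights):
    """p(t|x) = exp(sum_i lambda_i f_i(t, x)) / Z, with Z summed over the tagset."""
    scores = {t: math.exp(sum(weights.get(name, 0.0)
                              for name, fired in features(t, ctx).items() if fired))
              for t in tagset}
    z = sum(scores.values())
    return {t: s / z for t, s in scores.items()}

# Hand-set weights (lambda_i), chosen only to make the example concrete.
weights = {"suffix=ing&t=VBG": 2.0, "prev_tag=DT&t=NN": 1.5, "word=an&t=DT": 3.0}
ctx = {"word": "selling", "prev_tag": "MD"}
probs = p_tag(ctx, ["VBG", "NN", "DT"], weights)
print(max(probs, key=probs.get), probs)
```

Only the "ing" feature fires for this context, so VBG gets the score e^2 while the other two tags get e^0, and the normalization over the tagset turns those scores into probabilities.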

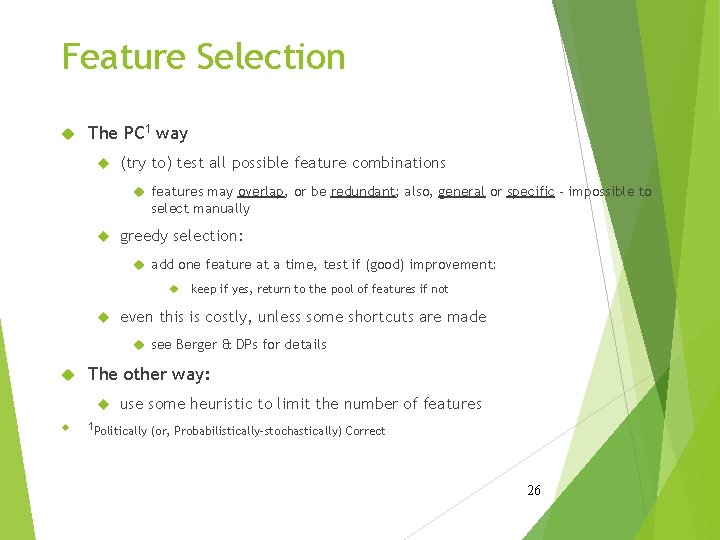

Feature Selection
• The PC¹ way
  • (try to) test all possible feature combinations
    • features may overlap, or be redundant; also, general or specific: impossible to select manually
  • greedy selection:
    • add one feature at a time, test if (good) improvement: keep if yes, return to the pool of features if not
    • even this is costly, unless some shortcuts are made (see Berger & DPs for details)
• The other way: use some heuristic to limit the number of features

¹ Politically (or, Probabilistically-stochastically) Correct

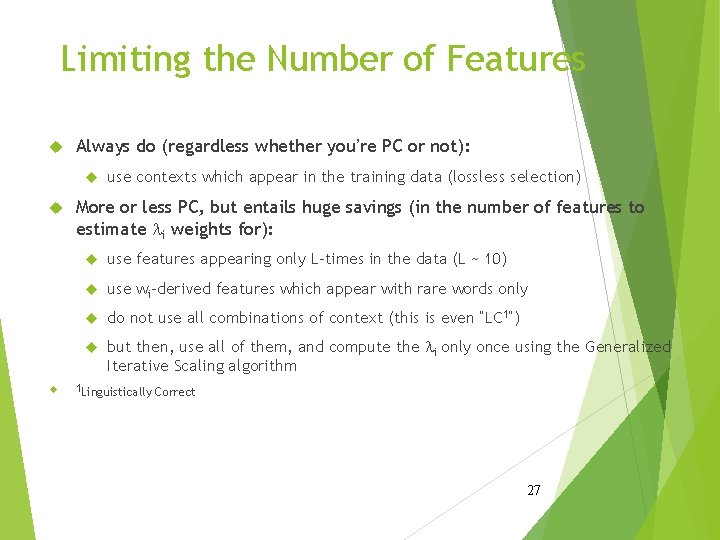

Limiting the Number of Features
• Always do (regardless of whether you’re PC or not):
  • use contexts which appear in the training data (lossless selection)
• More or less PC, but entails huge savings (in the number of features to estimate $\lambda_i$ weights for):
  • use only features appearing at least L times in the data (L ~ 10)
  • use $w_i$-derived features which appear with rare words only
  • do not use all combinations of context (this is even “LC¹”)
  • but then, use all of them, and compute the $\lambda_i$ only once using the Generalized Iterative Scaling algorithm

¹ Linguistically Correct

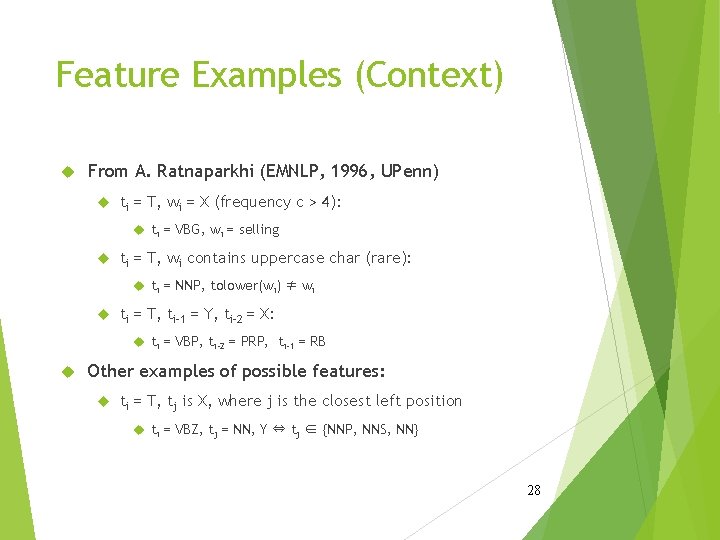

Feature Examples (Context)
• From A. Ratnaparkhi (EMNLP, 1996, UPenn)
  • $t_i = T$, $w_i = X$ (frequency $c > 4$): e.g. $t_i$ = VBG, $w_i$ = selling
  • $t_i = T$, $w_i$ contains an uppercase char (rare): e.g. $t_i$ = NNP, tolower($w_i$) ≠ $w_i$
  • $t_i = T$, $t_{i-1} = Y$, $t_{i-2} = X$: e.g. $t_i$ = VBP, $t_{i-2}$ = PRP, $t_{i-1}$ = RB
• Other examples of possible features:
  • $t_i = T$, $t_j$ is $X$, where $j$ is the closest left position where $Y$ holds: e.g. $t_i$ = VBZ, $t_j$ = NN, $Y$ ⇔ $t_j$ ∈ {NNP, NNS, NN}

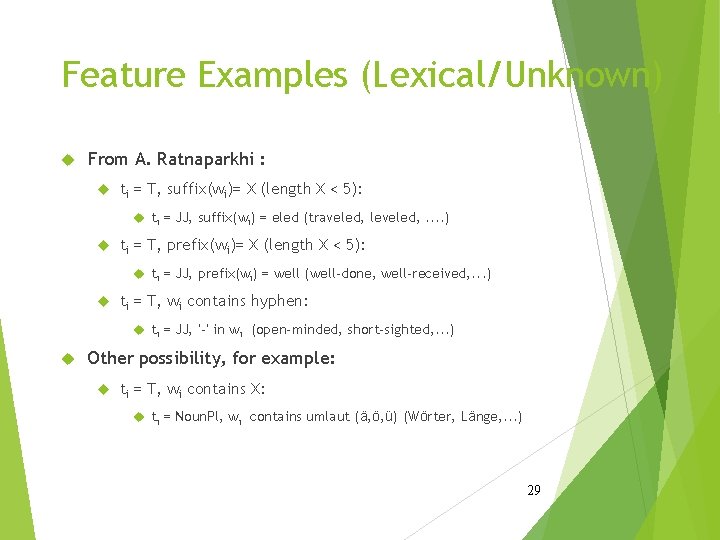

Feature Examples (Lexical/Unknown)
• From A. Ratnaparkhi:
  • $t_i = T$, suffix($w_i$) = $X$ (length $X$ < 5): e.g. $t_i$ = JJ, suffix($w_i$) = eled (traveled, leveled, ...)
  • $t_i = T$, prefix($w_i$) = $X$ (length $X$ < 5): e.g. $t_i$ = JJ, prefix($w_i$) = well (well-done, well-received, ...)
  • $t_i = T$, $w_i$ contains a hyphen: e.g. $t_i$ = JJ, ‘-’ in $w_i$ (open-minded, short-sighted, ...)
• Other possibility, for example:
  • $t_i = T$, $w_i$ contains $X$: e.g. $t_i$ = Noun.Pl, $w_i$ contains an umlaut (ä, ö, ü) (Wörter, Länge, ...)

“Specialized” Word-based Features
• List of words with most errors (WSJ, Penn Treebank): about, that, more, up, ...
• Add “specialized”, detailed features:
  • $t_i = T$, $w_i = X$, $t_{i-1} = Y$, $t_{i-2} = Z$: e.g. $t_i$ = IN, $w_i$ = about, $t_{i-1}$ = NNS, $t_{i-2}$ = DT
  • possible only for relatively high-frequency words
• Slightly better results (also, inconsistent [test] data)

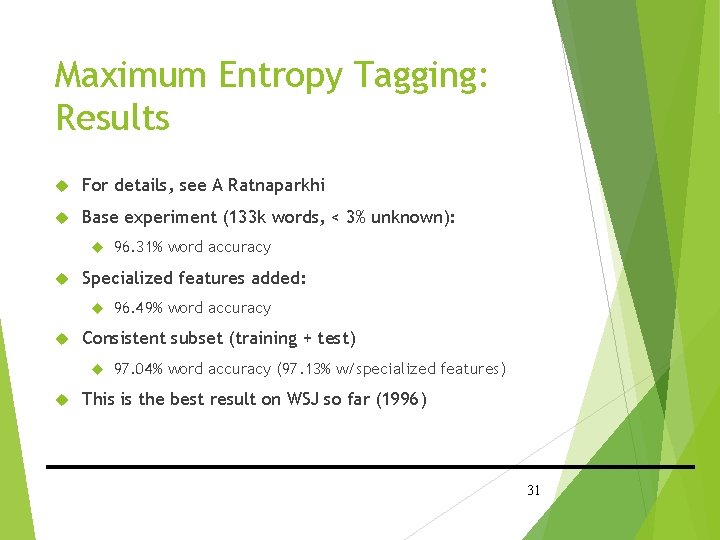

Maximum Entropy Tagging: Results
• For details, see A. Ratnaparkhi
• Base experiment (133k words, < 3% unknown): 96.31% word accuracy
• Specialized features added: 96.49% word accuracy
• Consistent subset (training + test): 97.04% word accuracy (97.13% w/specialized features)
• This is the best result on WSJ so far (1996)

Tagging, Tagsets, and Morphology

The task of (Morphological) Tagging
• Formally: $A^+ \to T$
  • $A$ is the alphabet of phonemes ($A^+$ denotes any non-empty sequence of phonemes); often: phonemes ~ letters
  • $T$ is the set of tags (the “tagset”)
• Recall: 6 levels of language description: phonetics ... phonology ... morphology ... syntax ... meaning ... - a step aside
• Recall: $A^+ \to 2^{(L, C_1, C_2, \ldots, C_n)} \to T$
  • first arrow: morphology
  • second arrow: tagging, i.e. disambiguation (~ “select”)

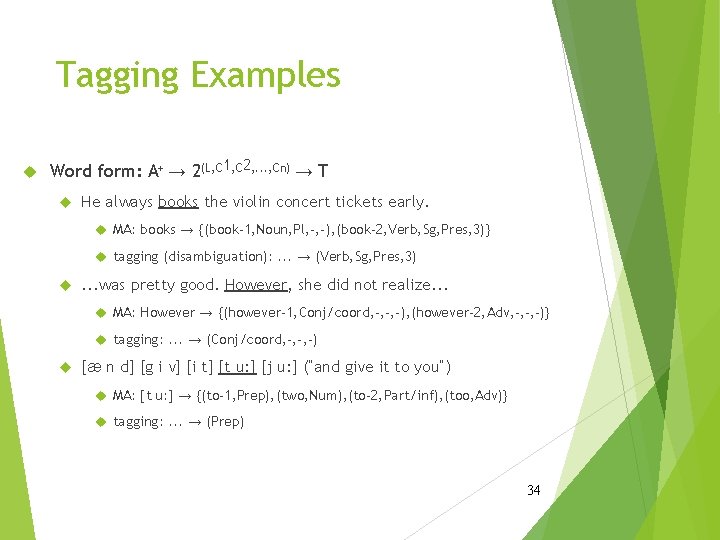

Tagging Examples
• Word form: $A^+ \to 2^{(L, C_1, C_2, \ldots, C_n)} \to T$
• He always books the violin concert tickets early.
  • MA: books → {(book-1, Noun, Pl, -, -), (book-2, Verb, Sg, Pres, 3)}
  • tagging (disambiguation): ... → (Verb, Sg, Pres, 3)
• ... was pretty good. However, she did not realize ...
  • MA: However → {(however-1, Conj/coord, -, -, -), (however-2, Adv, -, -, -)}
  • tagging: ... → (Conj/coord, -, -, -)
• [æ n d] [g i v] [i t] [t u:] [j u:] (“and give it to you”)
  • MA: [t u:] → {(to-1, Prep), (two, Num), (to-2, Part/inf), (too, Adv)}
  • tagging: ... → (Prep)

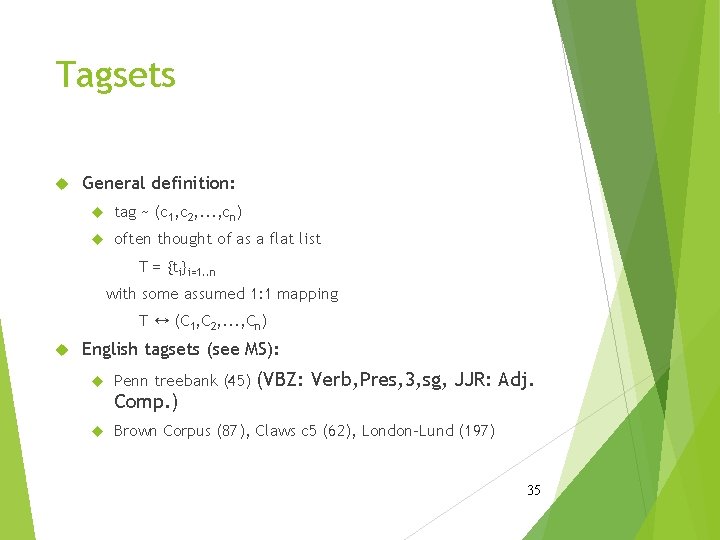

Tagsets
• General definition: tag ~ $(c_1, c_2, \ldots, c_n)$
  • often thought of as a flat list $T = \{t_i\}_{i=1..n}$ with some assumed 1:1 mapping $T \leftrightarrow (C_1, C_2, \ldots, C_n)$
• English tagsets (see MS):
  • Penn treebank (45) (VBZ: Verb, Pres, 3, sg; JJR: Adj. Comp.)
  • Brown Corpus (87), Claws c5 (62), London-Lund (197)

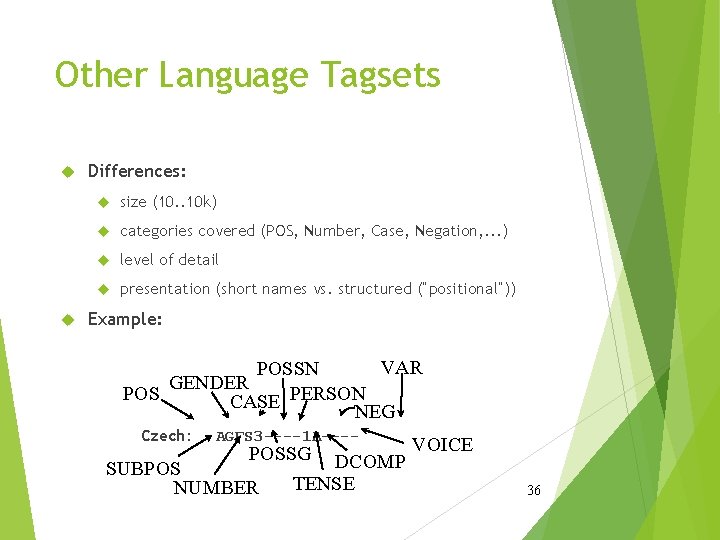

Other Language Tagsets
• Differences:
  • size (10..10k)
  • categories covered (POS, Number, Case, Negation, ...)
  • level of detail
  • presentation (short names vs. structured (“positional”))
• Example (Czech): AGFS3----1A----
  • positions, left to right: POS, SUBPOS, GENDER, NUMBER, CASE, POSSG, POSSN, PERSON, TENSE, DCOMP, NEG, VOICE, (two unused), VAR
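
A structured (“positional”) tag can be unpacked by position, as in the following Python sketch. The position order used here is an assumption (the 15-position Czech layout with two unused slots, using the category names from the slide), and the names `POSITIONS` and `decode` are illustrative.

```python
# Assumed left-to-right meaning of the 15 positions; UNUSED1/UNUSED2 are placeholders.
POSITIONS = ["POS", "SUBPOS", "GENDER", "NUMBER", "CASE", "POSSG", "POSSN",
             "PERSON", "TENSE", "DCOMP", "NEG", "VOICE", "UNUSED1", "UNUSED2", "VAR"]

def decode(tag):
    """Map a positional tag string to {category: value}, skipping '-' (not applicable)."""
    if len(tag) != len(POSITIONS):
        raise ValueError(f"expected {len(POSITIONS)} positions, got {len(tag)}")
    return {name: value for name, value in zip(POSITIONS, tag) if value != "-"}

print(decode("AGFS3----1A----"))
```

The fixed-width layout is what makes such tagsets easy to process: any single category can be read off by index without parsing the rest of the tag.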

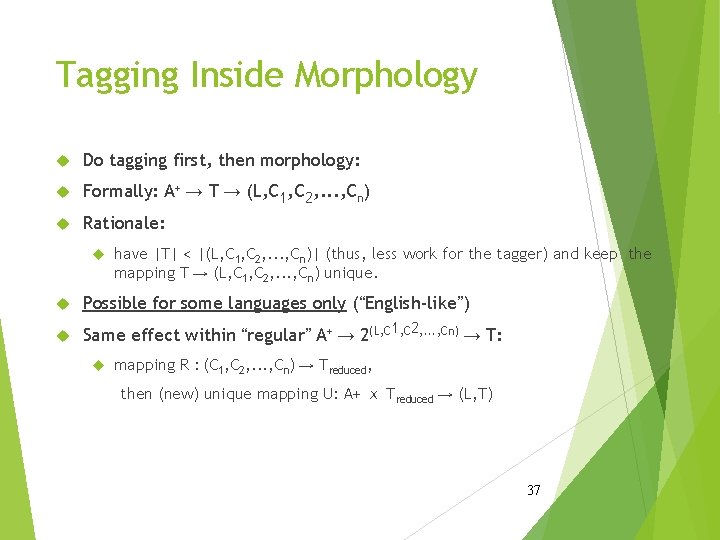

Tagging Inside Morphology
• Do tagging first, then morphology:
  • Formally: $A^+ \to T \to (L, C_1, C_2, \ldots, C_n)$
  • Rationale: have $|T| < |(L, C_1, C_2, \ldots, C_n)|$ (thus, less work for the tagger) and keep the mapping $T \to (L, C_1, C_2, \ldots, C_n)$ unique.
  • Possible for some languages only (“English-like”)
• Same effect within “regular” $A^+ \to 2^{(L, C_1, C_2, \ldots, C_n)} \to T$:
  • mapping $R: (C_1, C_2, \ldots, C_n) \to T_{reduced}$,
  • then (new) unique mapping $U: A^+ \times T_{reduced} \to (L, T)$

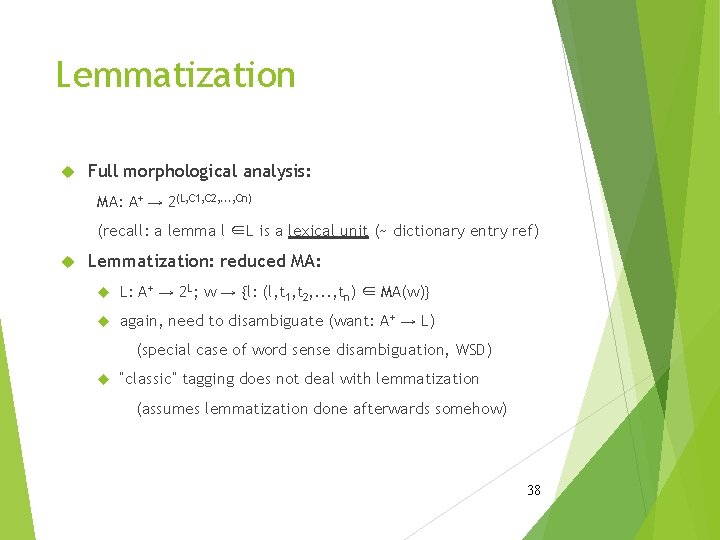

Lemmatization
• Full morphological analysis: MA: $A^+ \to 2^{(L, C_1, C_2, \ldots, C_n)}$ (recall: a lemma $l \in L$ is a lexical unit (~ dictionary entry reference))
• Lemmatization: reduced MA:
  • $L: A^+ \to 2^L$; $w \to \{l : (l, t_1, t_2, \ldots, t_n) \in MA(w)\}$
  • again, need to disambiguate (want: $A^+ \to L$) (special case of word sense disambiguation, WSD)
  • “classic” tagging does not deal with lemmatization (assumes lemmatization is done afterwards somehow)

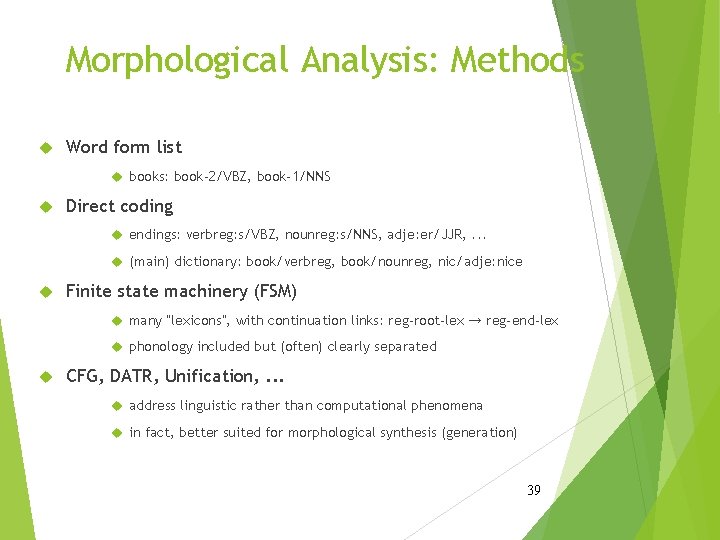

Morphological Analysis: Methods
• Word form list
  • books: book-2/VBZ, book-1/NNS
• Direct coding
  • endings: verbreg: s/VBZ, nounreg: s/NNS, adje: er/JJR, ...
  • (main) dictionary: book/verbreg, book/nounreg, nic/adje: nice
• Finite state machinery (FSM)
  • many “lexicons”, with continuation links: reg-root-lex → reg-end-lex
  • phonology included but (often) clearly separated
• CFG, DATR, Unification, ...
  • address linguistic rather than computational phenomena
  • in fact, better suited for morphological synthesis (generation)

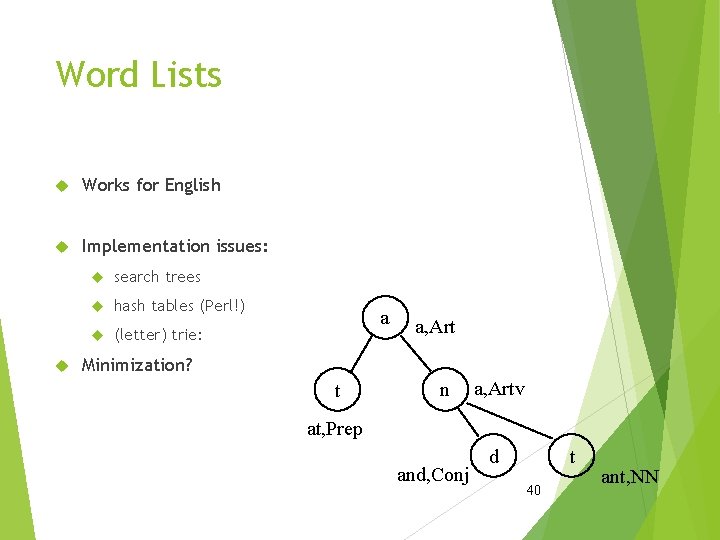

Word Lists
• Works for English
• Implementation issues:
  • search trees
  • hash tables (Perl!)
  • (letter) trie, e.g. storing a (Art), at (Prep), and (Conj), ant (NN) along shared letter paths
  • Minimization?
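
A letter trie for a word form list can be sketched in Python as follows. This is a minimal sketch, using the four entries that survive from the slide's figure (a, at, and, ant); the class and function names are illustrative, and no minimization is attempted.

```python
class TrieNode:
    def __init__(self):
        self.children = {}   # letter -> TrieNode
        self.tags = []       # tags of the word that ends at this node, if any

def insert(root, word, tag):
    """Walk or create one node per letter, then attach the tag at the last node."""
    node = root
    for letter in word:
        node = node.children.setdefault(letter, TrieNode())
    node.tags.append(tag)

def lookup(root, word):
    """Return the tags stored for word, or [] if it is not in the list."""
    node = root
    for letter in word:
        node = node.children.get(letter)
        if node is None:
            return []
    return node.tags

root = TrieNode()
for word, tag in [("a", "Art"), ("at", "Prep"), ("and", "Conj"), ("ant", "NN")]:
    insert(root, word, tag)
print(lookup(root, "ant"))
print(lookup(root, "an"))
```

All four words share the node for the initial "a", and "and" and "ant" also share the "n" node. A prefix such as "an" is reachable but carries no tags, so it is correctly reported as not being a word.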

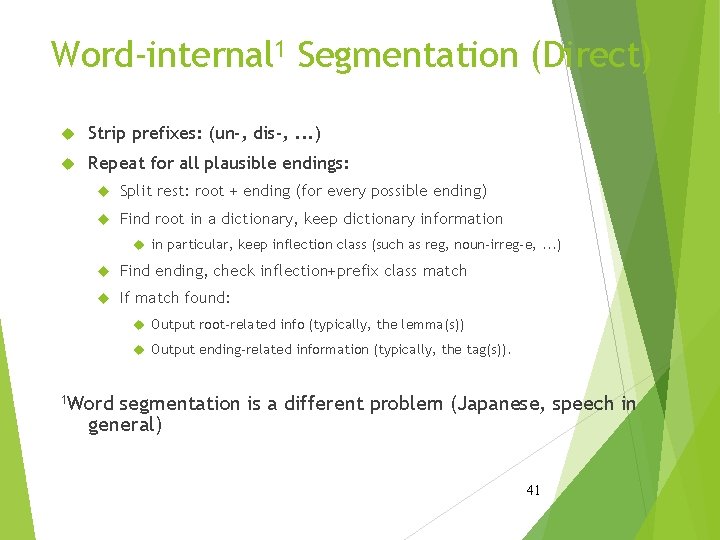

Word-internal¹ Segmentation (Direct)
• Strip prefixes: (un-, dis-, ...)
• Repeat for all plausible endings:
  • Split rest: root + ending (for every possible ending)
  • Find root in a dictionary, keep dictionary information; in particular, keep inflection class (such as reg, noun-irreg-e, ...)
  • Find ending, check inflection + prefix class match
  • If match found:
    • Output root-related info (typically, the lemma(s))
    • Output ending-related information (typically, the tag(s))

¹ Word segmentation is a different problem (Japanese, speech in general)
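
The root + ending loop can be sketched in Python with a tiny lexicon. This is a simplified sketch: the ending and root tables reuse the direct-coding entries (verbreg, nounreg, adje), prefix stripping is omitted, and the table layout and the function name `analyze` are assumptions made for illustration.

```python
# Ending table: inflection class -> {ending: tag}.
ENDINGS = {
    "verbreg": {"s": "VBZ"},
    "nounreg": {"s": "NNS"},
    "adje": {"er": "JJR"},
}
# Root dictionary: root -> list of (inflection class, lemma).
ROOTS = {
    "book": [("verbreg", "book"), ("nounreg", "book")],
    "nic": [("adje", "nice")],
}

def analyze(word):
    """Try every root + ending split; keep those where ending and class match."""
    results = []
    for split in range(len(word), 0, -1):
        root, ending = word[:split], word[split:]
        for infl_class, lemma in ROOTS.get(root, []):
            tag = ENDINGS[infl_class].get(ending)
            if tag is not None:
                results.append((lemma, tag))   # lemma from the root, tag from the ending
    return results

print(analyze("books"))
print(analyze("nicer"))
```

The result for "books" is ambiguous between a verb and a noun reading, which is exactly what the tagger is then asked to disambiguate.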

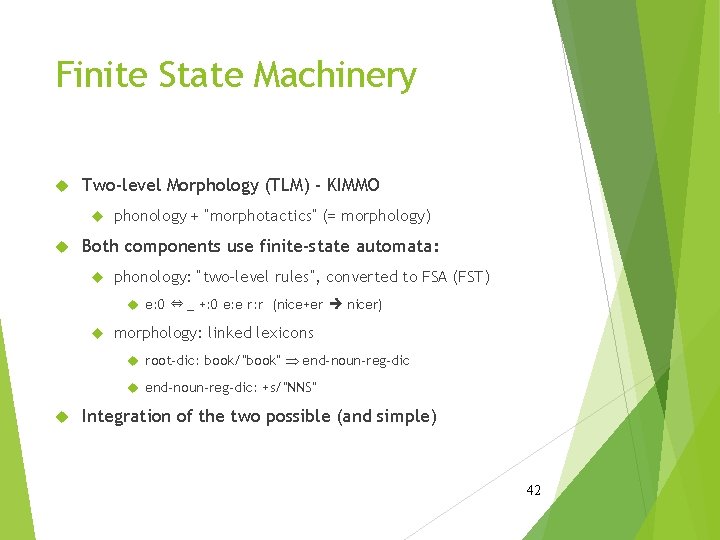

Finite State Machinery
• Two-level Morphology (TLM) - KIMMO
  • phonology + “morphotactics” (= morphology)
• Both components use finite-state automata:
  • phonology: “two-level rules”, converted to FSA (FST), e.g. e:0 ⇔ _ +:0 e:e r:r (nice+er → nicer)
  • morphology: linked lexicons, e.g. root-dic: book/“book” ⇒ end-noun-reg-dic: +s/“NNS”
• Integration of the two possible (and simple)

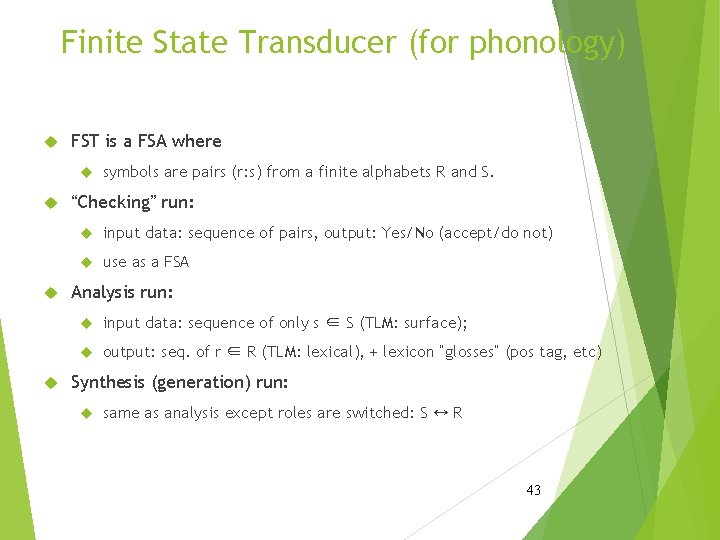

Finite State Transducer (for phonology)
• An FST is an FSA where symbols are pairs (r:s) from finite alphabets R and S.
• “Checking” run:
  • input data: sequence of pairs; output: Yes/No (accept/do not)
  • use as an FSA
• Analysis run:
  • input data: sequence of only s ∈ S (TLM: surface)
  • output: sequence of r ∈ R (TLM: lexical), + lexicon “glosses” (POS tag, etc.)
• Synthesis (generation) run:
  • same as analysis except roles are switched: S ↔ R

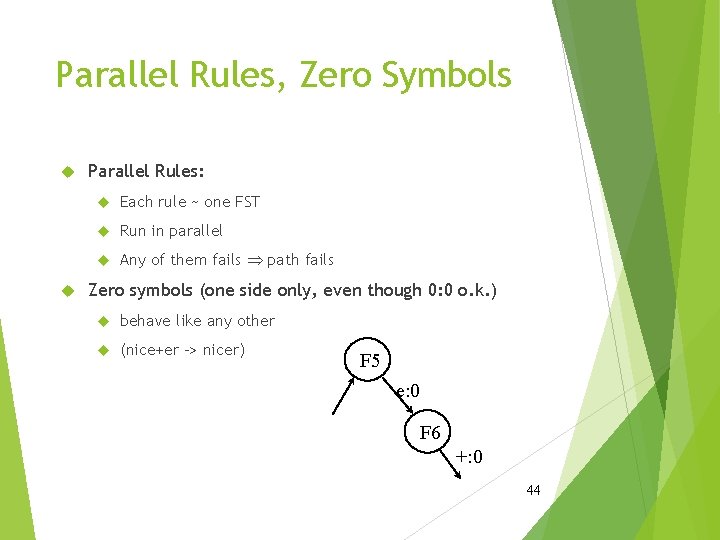

Parallel Rules, Zero Symbols
• Parallel Rules:
  • Each rule ~ one FST
  • Run in parallel
  • Any of them fails ⇒ path fails
• Zero symbols (one side only, even though 0:0 o.k.)
  • behave like any other (nice+er → nicer)

[Figure: FST fragment with states F5 and F6 and arcs labeled e:0 and +:0]

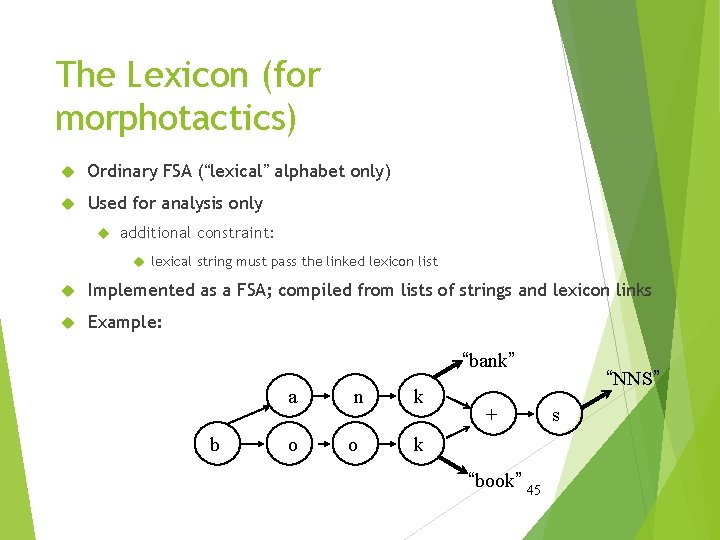

The Lexicon (for morphotactics)
• Ordinary FSA (“lexical” alphabet only)
• Used for analysis only
  • additional constraint: lexical string must pass the linked lexicon list
• Implemented as an FSA; compiled from lists of strings and lexicon links

[Figure: example lexicon FSA over the letters b-a-n-k (“bank”) and b-o-o-k (“book”), continuing with + s (“NNS”)]

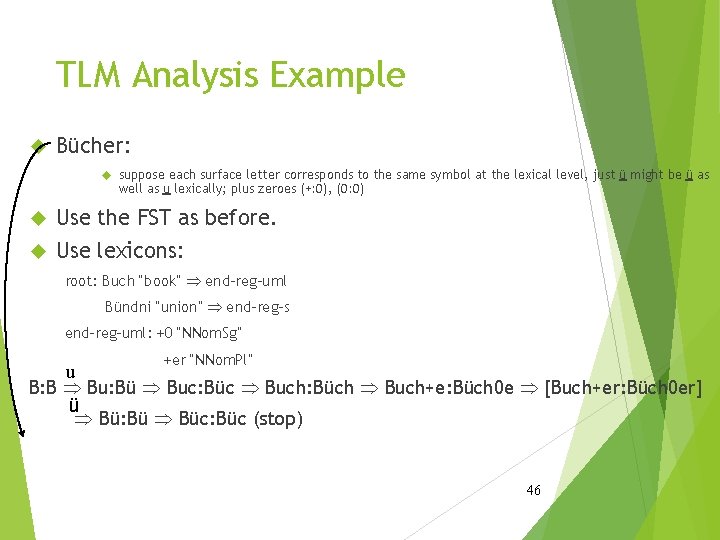

TLM Analysis Example
• Bücher:
  • suppose each surface letter corresponds to the same symbol at the lexical level, just ü might be ü as well as u lexically; plus zeroes (+:0), (0:0)
• Use the FST as before.
• Use lexicons:
  • root: Buch “book” ⇒ end-reg-uml; Bündni “union” ⇒ end-reg-s
  • end-reg-uml: +0 “NNom.Sg”; +er “NNom.Pl”
• Trace (lexical:surface):
  • lexical u: B:B ⇒ Bu:Bü ⇒ Buc:Büc ⇒ Buch:Büch ⇒ Buch+e:Büch0e ⇒ [Buch+er:Büch0er]
  • lexical ü: B:B ⇒ Bü:Bü ⇒ Büc:Büc (stop)

TLM: Generation
• Do not use the lexicon (well, you have to put the “right” lexical strings together somehow!)
• Start with a lexical string L.
• Generate all possible pairs l:s for every symbol in L.
• Find all (hopefully only 1!) traversals through the FST which end in a final state.
• From all such traversals, print out the sequence of surface letters.

TLM: Some Remarks
• Parallel FST (incl. final lexicon FSA)
  • can be compiled into a (gigantic) FST
  • maybe not so gigantic (XLT - Xerox Language Tools)
• “Double-leveling” the lexicon:
  • allows for generation from lemma, tag
  • needs: rules with strings of unequal length
• Rule Compiler
  • Karttunen, Kay
• PC-KIMMO: free version from www.sil.org (Unix, too)
