
Deterministic Part-of-Speech Tagging with Finite-State Transducers
by Emmanuel Roche and Yves Schabes
정유진, KLE Lab., CSE, POSTECH
98. 10. 16
CS 730 B Statistical NLP

Introduction
o Stochastic approaches to NLP have often been preferred to rule-based approaches
o Eric Brill (1992): a rule-based tagger that infers its rules from a training corpus
  m Rules are acquired automatically
  m Requires drastically less space than a stochastic tagger
  m But it is considerably slower
→ Deterministic Finite-State Transducer (Subsequential Transducer)

Overview of Brill’s Tagger
o Structure of the tagger
  m Lexical tagger (initial tagger)
  m Unknown-word tagger
  m Contextual tagger
o Inefficiency
  m Each individual rule is compared at each token of the input (Fig. 3)
  m Potential interaction between rules (Fig. 1)
o Complexity: RKn
  m R: number of contextual rules
  m n: number of input words
  m K: maximum number of tokens that a rule requires as context
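The cost of rule-by-rule application can be made concrete with a naive loop. This is a simplified sketch, not Brill's actual implementation: the rule format (from_tag, to_tag, prev_tag), the function name, and the comparison counter are assumptions made for illustration, and only PREVTAG-style rules are modeled, so K is fixed at 2 (a tag and its predecessor).

```python
def apply_rules_naively(tags, rules):
    """Apply each contextual rule, in order, at every position of the input.

    tags  : list of tags produced by the initial (lexical) tagger
    rules : list of (from_tag, to_tag, prev_tag) triples, read as
            "change from_tag to to_tag if the previous tag is prev_tag"
            (a hypothetical encoding chosen for this sketch)
    """
    tags = list(tags)
    comparisons = 0
    for from_tag, to_tag, prev_tag in rules:      # R rules
        for i in range(1, len(tags)):             # about n positions per rule
            comparisons += 1
            if tags[i] == from_tag and tags[i - 1] == prev_tag:
                tags[i] = to_tag
    return tags, comparisons


rules = [("vbn", "vbd", "np")]
tagged, steps = apply_rules_naively(["np", "vbn", "by", "np"], rules)
```

Every rule visits every token, so the number of comparisons grows with both the number of rules and the sentence length. That growth is what the finite-state construction is meant to remove.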

Finite-State Transducer (1)
o Finite-State Transducer: $T = (\Sigma, Q, i, F, E)$
  m $\Sigma$ : finite alphabet
  m $Q$ : finite set of states
  m $i \in Q$ : initial state
  m $F \subseteq Q$ : set of final states
  m $E$ : set of transitions $(q, a, w, q')$, with $E \subseteq Q \times (\Sigma \cup \{\epsilon\}) \times \Sigma^* \times Q$
o Deterministic F. S. Transducer (subsequential transducer): $T = (\Sigma, Q, i, F, \otimes, *, \rho)$
  m $\otimes$ : deterministic state transition func. ($q \otimes a = q'$)
  m $*$ : deterministic emission func. ($q * a = w'$)
  m $\rho$ : final emission func. ($\rho(q) = w$ for $q \in F$)

Finite-State Transducer (2)
o State transition function: $d(q, a) = \{ q' \in Q \mid \exists w' \in \Sigma^* \text{ and } (q, a, w', q') \in E \}$
o Emission function: $\delta(q, a, q') = \{ w' \in \Sigma^* \mid (q, a, w', q') \in E \}$
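The two functions can be read directly off the transition set E. The following is a minimal sketch under stated assumptions: the class name, field names, and the small example transducer are made up for illustration and are not taken from the paper.

```python
from collections import namedtuple

# One element (q, a, w, q') of the transition set E.
Transition = namedtuple("Transition", "source input output target")


class Transducer:
    """A finite-state transducer stored as an explicit list of transitions."""

    def __init__(self, initial, finals, transitions):
        self.initial = initial
        self.finals = set(finals)
        self.transitions = [Transition(*t) for t in transitions]

    def d(self, q, a):
        """State transition function: every state reachable from q on input a."""
        return {t.target for t in self.transitions
                if t.source == q and t.input == a}

    def delta(self, q, a, q2):
        """Emission function: every output on a transition from q to q2 on input a."""
        return {t.output for t in self.transitions
                if t.source == q and t.input == a and t.target == q2}


# Illustrative transducer: reading "a" in state 0 may lead to state 1 or state 2.
T = Transducer(0, {3}, [(0, "a", "b", 1), (0, "a", "c", 2),
                        (1, "h", "h", 3), (2, "e", "e", 3)])
```

Here `T.d(0, "a")` contains two states, which is exactly the non-determinism that the subsequential form rules out.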

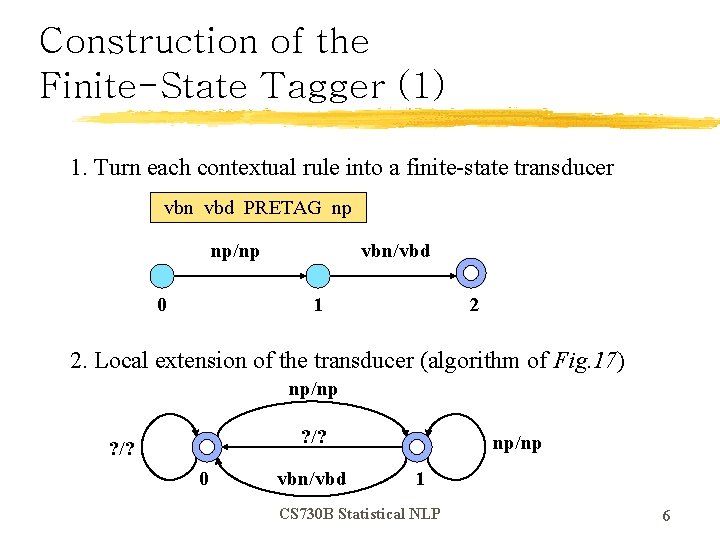

Construction of the Finite-State Tagger (1)
1. Turn each contextual rule into a finite-state transducer
   Example rule: vbn vbd PREVTAG np
   [Figure: transducer for this rule, with states 0, 1, 2 and transition labels np/np and vbn/vbd]
2. Local extension of the transducer (algorithm of Fig. 17)
   [Figure: locally extended transducer, with transition labels np/np, vbn/vbd and ?/?]
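What the local extension computes can be illustrated at the level of functions on tag sequences. This sketch is not the automaton construction of Fig. 17: it is a left-to-right scan that assumes matches of the pattern do not overlap, which holds for the rule above. The function name and the list encoding of patterns are assumptions.

```python
def local_extension(pattern_in, pattern_out):
    """Return a function that rewrites every occurrence of pattern_in in a
    tag sequence to pattern_out and copies every other tag unchanged."""
    k = len(pattern_in)

    def apply(tags):
        out, i = [], 0
        while i < len(tags):
            if tags[i:i + k] == pattern_in:   # the rule's transducer applies here
                out.extend(pattern_out)
                i += k
            else:                             # identity on everything else (the ?/? arcs)
                out.append(tags[i])
                i += 1
        return out

    return apply


# The rule "vbn vbd PREVTAG np" maps the sequence "np vbn" to "np vbd".
rule = local_extension(["np", "vbn"], ["np", "vbd"])
result = rule(["np", "vbn", "by", "np", "vbn"])
```

The rule's own transducer only accepts the two-tag input "np vbn"; the extended function accepts any tag sequence and applies the rewrite wherever the pattern occurs.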

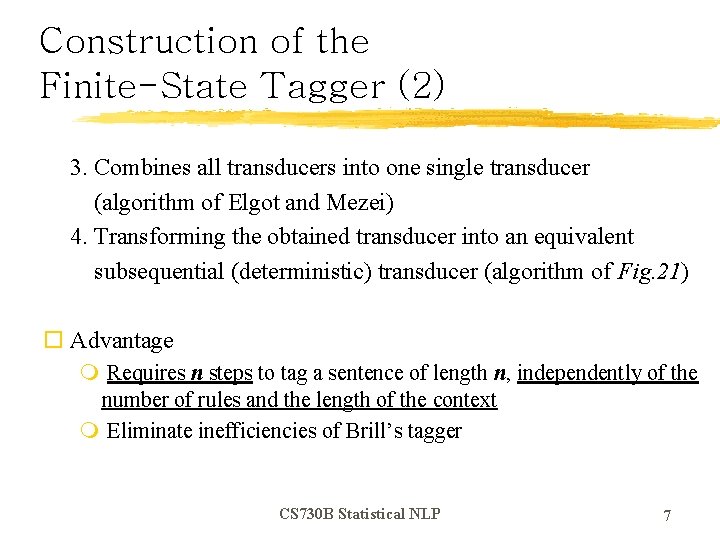

Construction of the Finite-State Tagger (2)
3. Combine all transducers into a single transducer (algorithm of Elgot and Mezei)
4. Transform the resulting transducer into an equivalent subsequential (deterministic) transducer (algorithm of Fig. 21)
o Advantage
  m Requires n steps to tag a sentence of length n, independently of the number of rules and the length of the context
  m Eliminates the inefficiencies of Brill’s tagger

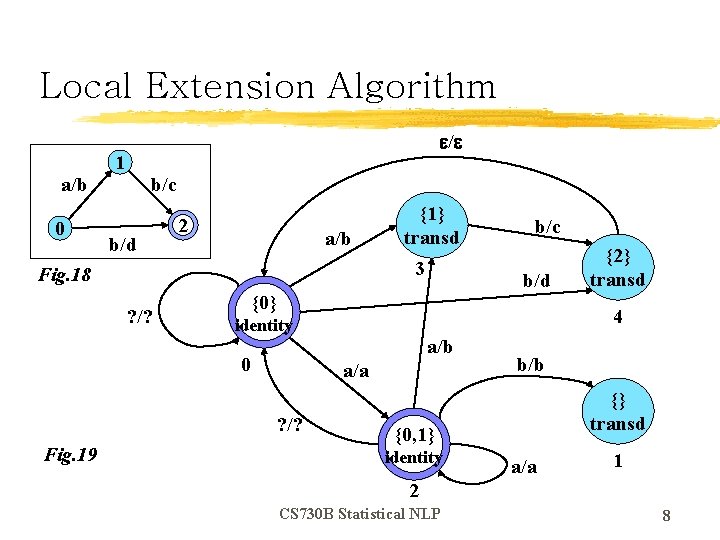

Local Extension Algorithm
[Fig. 18: example transducer; transition labels include a/b, b/c, b/d]
[Fig. 19: its local extension; states are labeled with a set of original states ({}, {0}, {1}, {2}, {0, 1}) and a type (transd or identity); transition labels include a/a, a/b, b/b, b/c, b/d, ?/?]

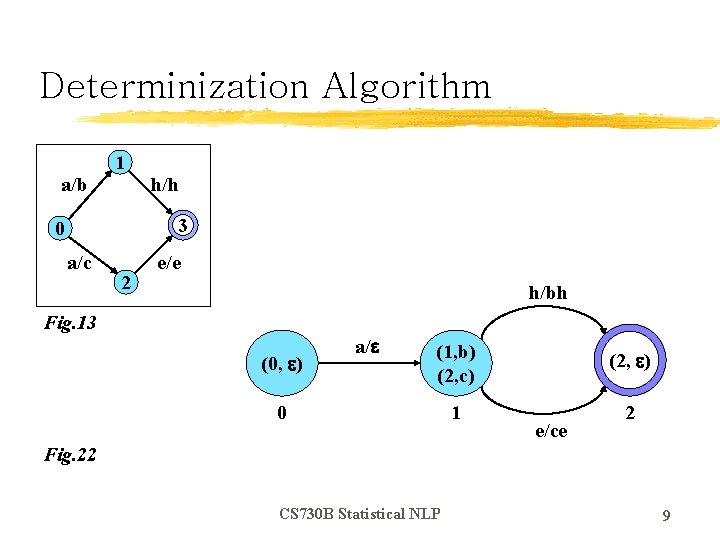

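Determinization Algorithm
[Fig. 13: non-deterministic transducer with states 0 to 3; transition labels a/b, a/c, h/h, e/e]
[Fig. 22: equivalent subsequential transducer with states 0 to 2; transition labels a/ε, h/bh, e/ce; each state corresponds to a set of (original state, pending output) pairs such as (0, ε), (1, b), (2, c)]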
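The idea behind determinization can be sketched as a subset construction that delays output until it is unambiguous. This is a simplified sketch, not the algorithm of Fig. 21 verbatim. It assumes string outputs, no ε-input transitions, and an input transducer that can be made subsequential (otherwise the loop may not terminate). The topology used for Fig. 13 is one reading of the figure's labels, and all function names are hypothetical.

```python
from os.path import commonprefix  # character-wise longest common prefix of strings


def determinize(transitions, initial, finals):
    """transitions: list of (q, a, w, q2) with one input symbol a and a string output w."""
    start = frozenset({(initial, "")})
    delta = {}   # (state set, a) -> (emitted string, next state set)
    rho = {}     # final state set -> final emission
    todo, seen = [start], {start}
    while todo:
        S = todo.pop()
        for q, pending in S:
            if q in finals:
                rho[S] = pending      # assumes one pending output per final set
        by_symbol = {}
        for q, pending in S:
            for p, a, out, p2 in transitions:
                if p == q:
                    by_symbol.setdefault(a, []).append((p2, pending + out))
        for a, pairs in by_symbol.items():
            prefix = commonprefix([out for _, out in pairs])   # safe to emit now
            target = frozenset((p2, out[len(prefix):]) for p2, out in pairs)
            delta[(S, a)] = (prefix, target)
            if target not in seen:
                seen.add(target)
                todo.append(target)
    return start, delta, rho


def run(start, delta, rho, word):
    """Apply the deterministic transducer: one table lookup per input symbol."""
    S, out = start, ""
    for a in word:
        if (S, a) not in delta:
            return None
        emitted, S = delta[(S, a)]
        out += emitted
    return out + rho[S] if S in rho else None


# Assumed reading of Fig. 13: 0 -a/b-> 1 -h/h-> 3 and 0 -a/c-> 2 -e/e-> 3.
fig13 = [(0, "a", "b", 1), (0, "a", "c", 2), (1, "h", "h", 3), (2, "e", "e", 3)]
start, delta, rho = determinize(fig13, initial=0, finals={3})
```

On this input the construction emits nothing on a, because b and c share no prefix, and then emits bh on h or ce on e, which matches the labels visible in Fig. 22.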

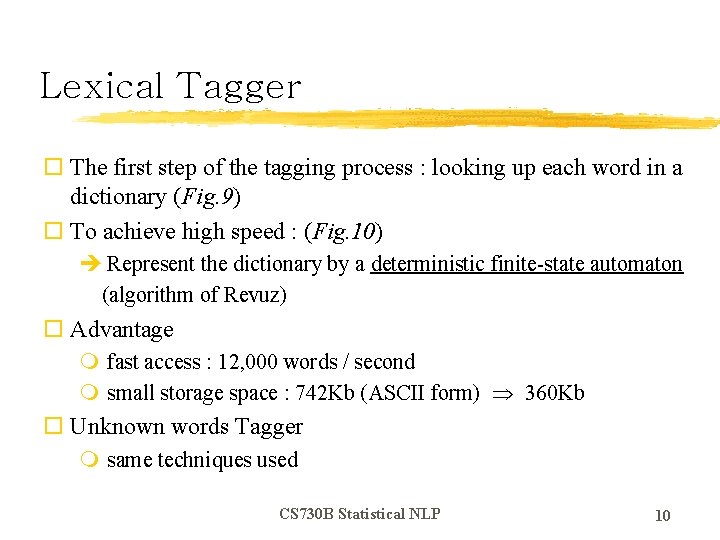

Lexical Tagger
o The first step of the tagging process: looking up each word in a dictionary (Fig. 9)
o To achieve high speed (Fig. 10):
  → Represent the dictionary by a deterministic finite-state automaton (algorithm of Revuz)
o Advantage
  m Fast access: 12,000 words/second
  m Small storage space: 360 Kb, versus 742 Kb in ASCII form
o Unknown-word tagger
  m The same techniques are used
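Dictionary lookup with a deterministic automaton can be sketched with a plain trie. A trie is a deterministic acyclic automaton, but it lacks the minimization step that Revuz's algorithm performs, so it illustrates only the lookup and not the space savings. The lexicon entries and function names below are hypothetical.

```python
def build_trie(lexicon):
    """Build a letter trie. Each state is a dict of outgoing transitions;
    the key None marks a final state and stores the word's tags."""
    root = {}
    for word, tags in lexicon.items():
        state = root
        for letter in word:
            state = state.setdefault(letter, {})
        state[None] = tags
    return root


def lookup(trie, word):
    """Follow one transition per letter; return the tags, or None if unknown."""
    state = trie
    for letter in word:
        state = state.get(letter)
        if state is None:
            return None
    return state.get(None)


# Hypothetical entries, for illustration only.
lexicon = {"ads": ["nns"], "bag": ["nn", "vb"], "bagged": ["vbn", "vbd"]}
trie = build_trie(lexicon)
```

Lookup time depends only on the length of the word, not on the size of the dictionary.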

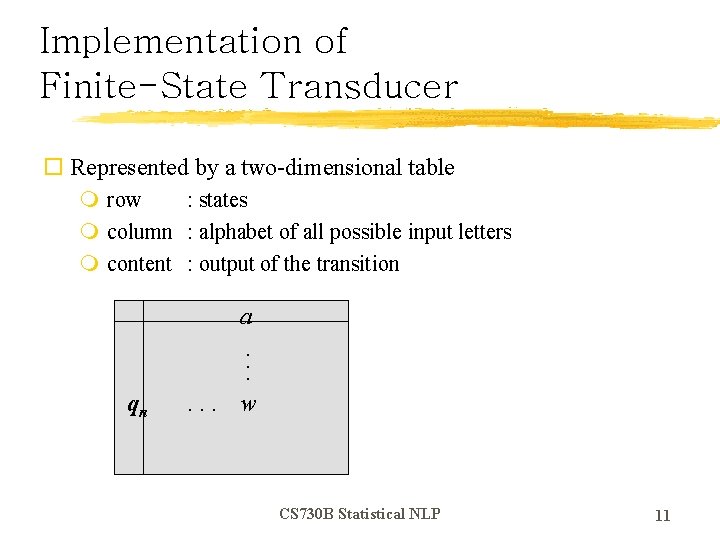

Implementation of Finite-State Transducer
o Represented by a two-dimensional table
  m Row: states
  m Column: alphabet of all possible input letters
  m Content: output of the transition
[Figure: table in which the cell at row q_n, column a holds the output w]
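A table-driven transducer of this kind might look as follows. This is a sketch under assumptions: the slide states only that a cell holds the transition's output, so storing the next state in the same cell is an assumption. The three-state example uses the labels visible in Fig. 22 (a/ε, h/bh, e/ce) with an assumed topology, and all names are hypothetical.

```python
ALPHABET = ["a", "e", "h"]
COLUMN = {letter: j for j, letter in enumerate(ALPHABET)}

# TABLE[state][column] is (output, next_state), or None when no transition exists.
TABLE = [
    [("", 1), None, None],           # state 0: a/ε -> 1
    [None, ("ce", 2), ("bh", 2)],    # state 1: e/ce -> 2, h/bh -> 2
    [None, None, None],              # state 2: final, no outgoing transitions
]
FINAL_OUTPUT = {2: ""}               # final emission for each final state


def transduce(word):
    """Run the table on word with exactly one cell lookup per input letter."""
    state, out = 0, []
    for letter in word:
        j = COLUMN.get(letter)
        cell = TABLE[state][j] if j is not None else None
        if cell is None:
            return None              # input rejected
        output, state = cell
        out.append(output)
    if state not in FINAL_OUTPUT:
        return None
    return "".join(out) + FINAL_OUTPUT[state]
```

Because each input letter costs a single array access, processing an input of length n takes n steps regardless of how many rules were compiled into the table.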

Evaluation
o Overall performance comparison (Fig. 11)
  m Stochastic tagger: Church’s trigram tagger (1988)
  m Rule-based tagger: Brill’s tagger
  m All taggers were trained on the Brown corpus and used the same lexicon (Fig. 10)
o Speeds of the different parts of the finite-state tagger (Fig. 12)
  m Low-level factors (storage access) dominate the computation

- Slides: 12