Part-of-Speech Tagging: A Canonical Finite-State Task (600.465)

- Slides: 32

Part-of-Speech Tagging: A Canonical Finite-State Task

600.465 - Intro to NLP - J. Eisner

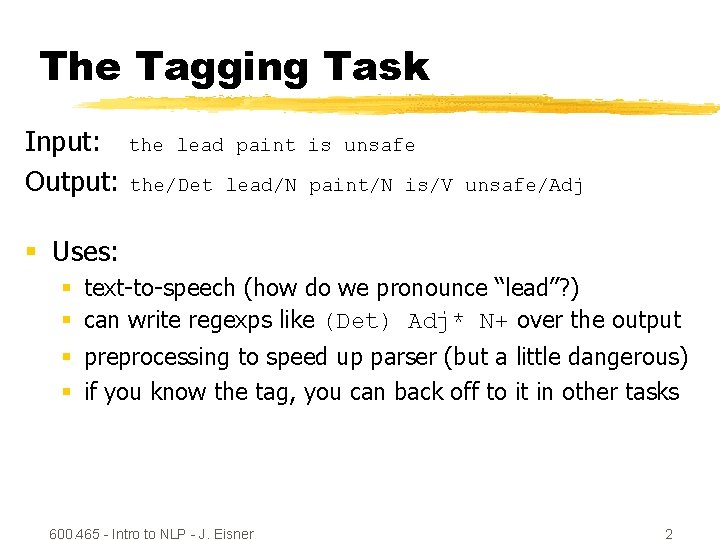

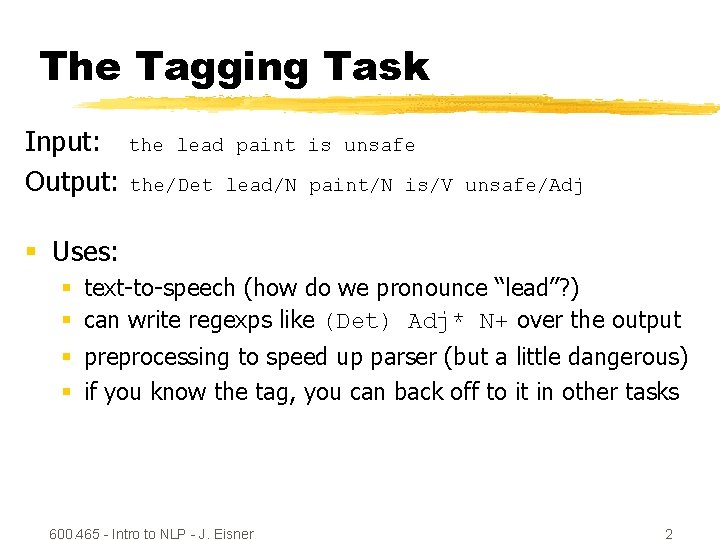

The Tagging Task

Input: the lead paint is unsafe

Output: the/Det lead/N paint/N is/V unsafe/Adj

Uses:

- text-to-speech (how do we pronounce “lead”?)
- can write regexps like (Det) Adj* N+ over the output
- preprocessing to speed up parser (but a little dangerous)
- if you know the tag, you can back off to it in other tasks
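As a rough illustration of the "regexps over the output" use, the sketch below runs a pattern in the spirit of (Det) Adj* N+ over the tag sequence of the slide's example. It assumes the parentheses around Det mean "optional"; the helper name `find_noun_phrases` and the trick of matching on a space-separated tag string are illustrative choices, not something the slides prescribe.

```python
import re

# Tagger output in word/Tag form, as in the slide's example.
tagged = "the/Det lead/N paint/N is/V unsafe/Adj"

# Split into [word, tag] pairs and build a tag string with one space after each tag.
pairs = [tok.rsplit("/", 1) for tok in tagged.split()]
tag_string = "".join(tag + " " for _, tag in pairs)

# (Det) Adj* N+ : optional determiner, any number of adjectives, one or more nouns.
# The lookbehind keeps a match from starting in the middle of a longer tag name.
np_pattern = re.compile(r"(?<!\S)(?:Det )?(?:Adj )*(?:N )+")

def find_noun_phrases(pairs, tag_string):
    """Return the word spans whose tags match the pattern."""
    phrases = []
    for m in np_pattern.finditer(tag_string):
        # Each tag contributes exactly one space, so counting spaces
        # converts character offsets back into token indices.
        start = tag_string[:m.start()].count(" ")
        end = tag_string[:m.end()].count(" ")
        phrases.append(" ".join(word for word, _ in pairs[start:end]))
    return phrases

noun_phrases = find_noun_phrases(pairs, tag_string)
print(noun_phrases)
```

Matching over tags rather than words is what makes the pattern general: the same expression applies to any sentence once it has been tagged.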

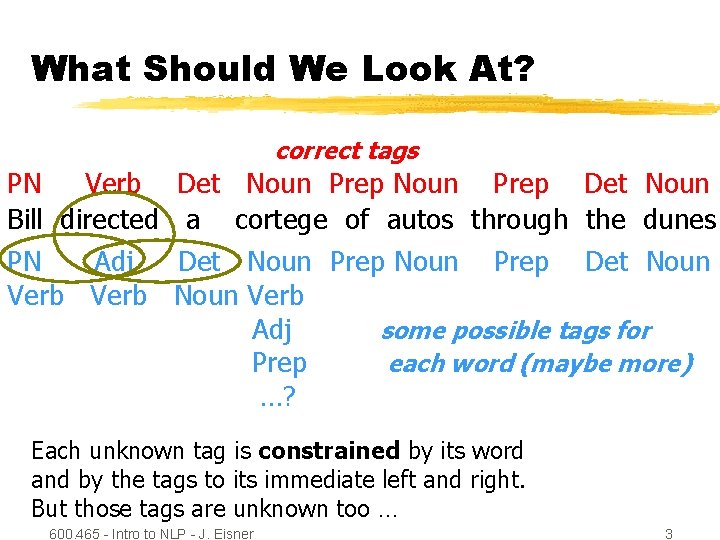

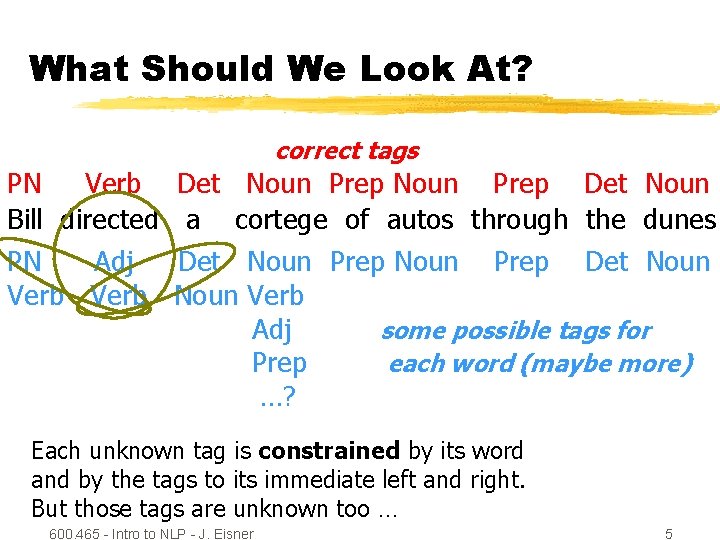

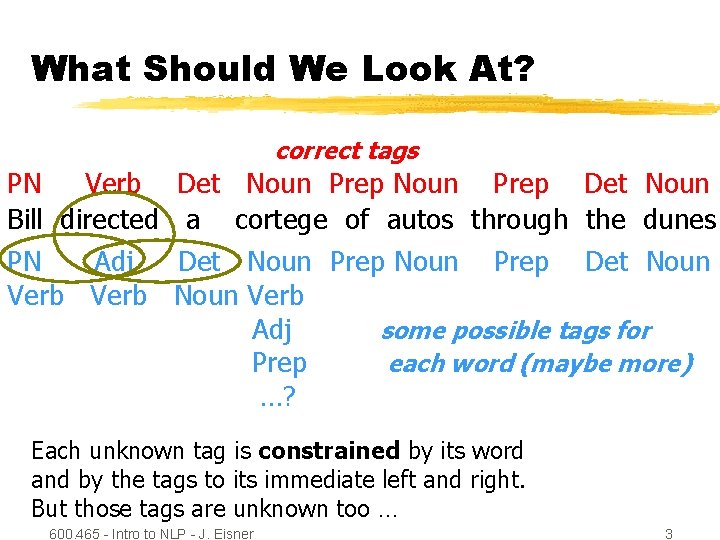

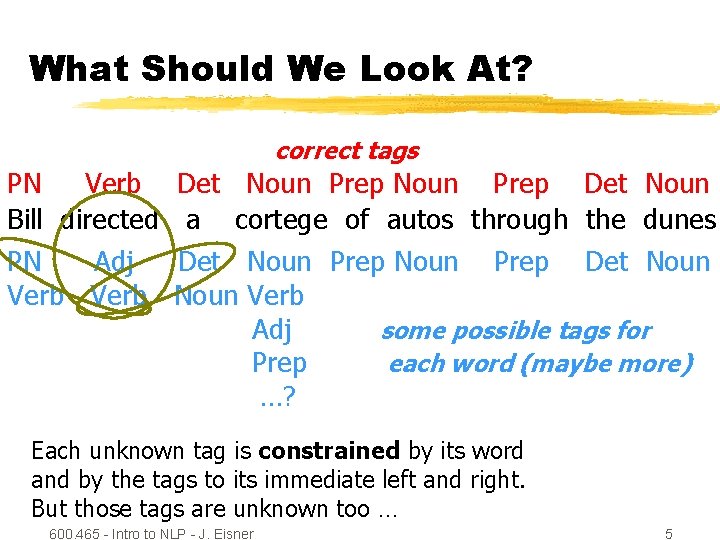

What Should We Look At?

Words: Bill directed a cortege of autos through the dunes

Correct tags: PN Verb Det Noun Prep Noun Prep Det Noun

Some possible tags for each word (maybe more): PN, Adj, Verb, Det, Noun, Prep, …?

Each unknown tag is constrained by its word and by the tags to its immediate left and right. But those tags are unknown too …

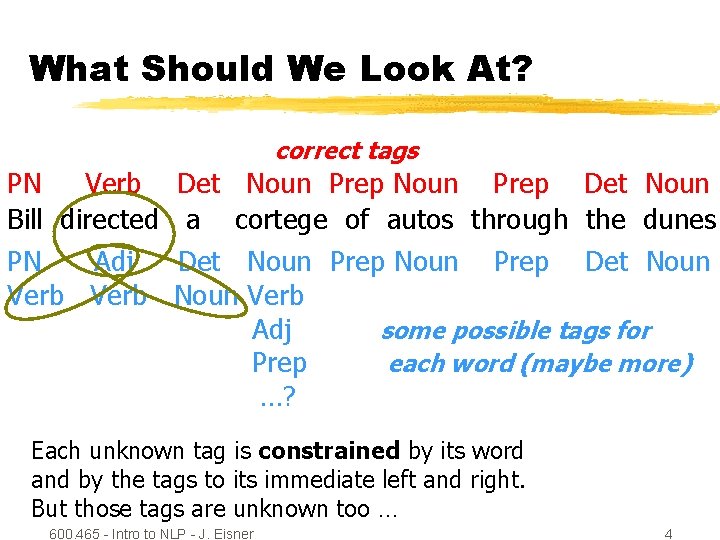

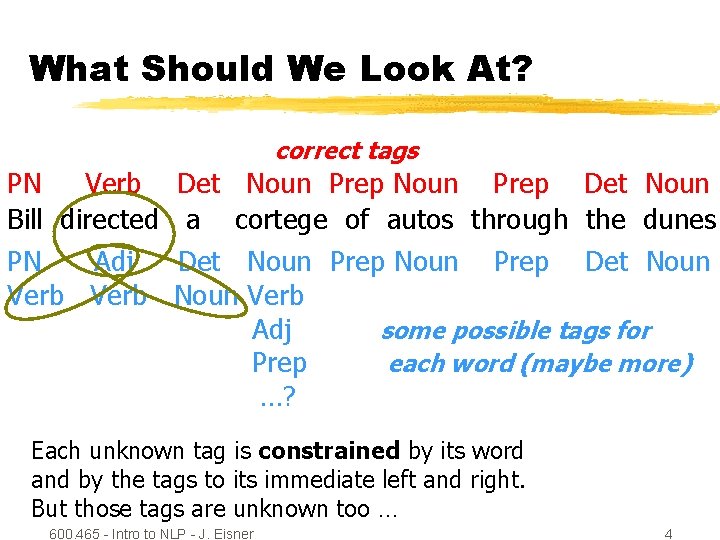



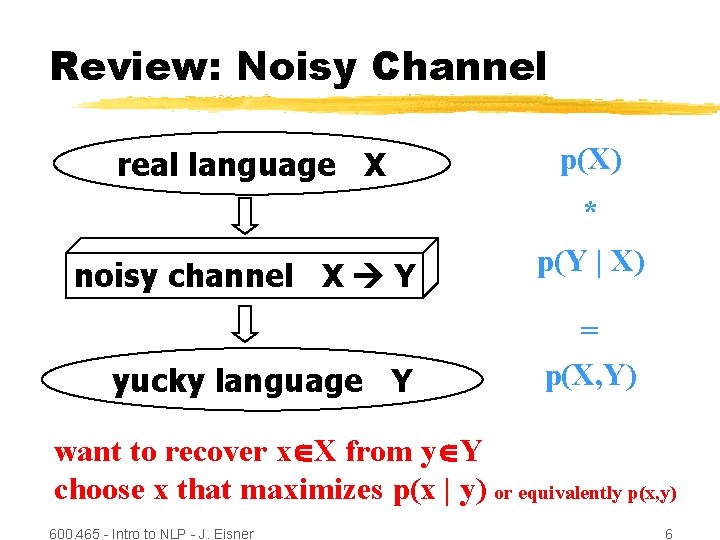

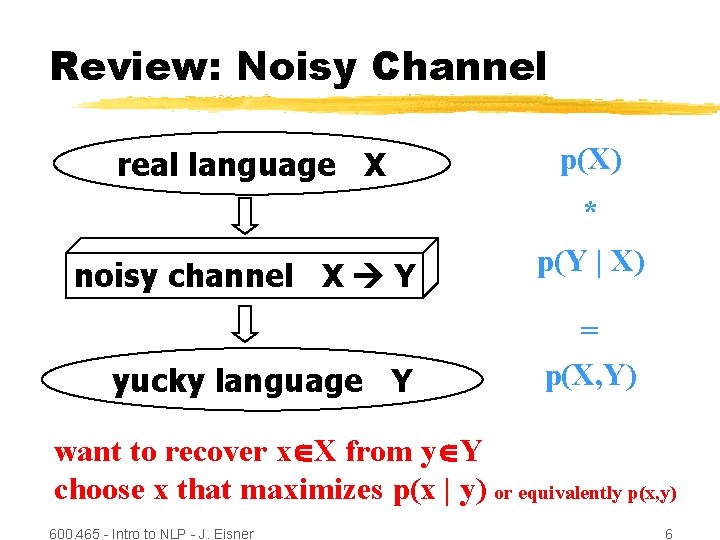

Review: Noisy Channel

- real language X, with probability p(X)
- noisy channel X → Y, with probability p(Y | X)
- yucky language Y

p(X) * p(Y | X) = p(X, Y)

Want to recover x ∈ X from y ∈ Y: choose x that maximizes p(x | y), or equivalently p(x, y).
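The "or equivalently" step can be spelled out in one short derivation. This is a sketch in standard notation; the symbol $\hat{x}$ for the chosen source string is introduced here and does not appear on the slide. The key point is that p(y) does not depend on x, so dividing by it cannot change which x wins.

```latex
\begin{align*}
\hat{x} &= \arg\max_{x} \; p(x \mid y) \\
        &= \arg\max_{x} \; \frac{p(x, y)}{p(y)} \\
        &= \arg\max_{x} \; p(x, y) \\
        &= \arg\max_{x} \; p(x)\, p(y \mid x)
\end{align*}
```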

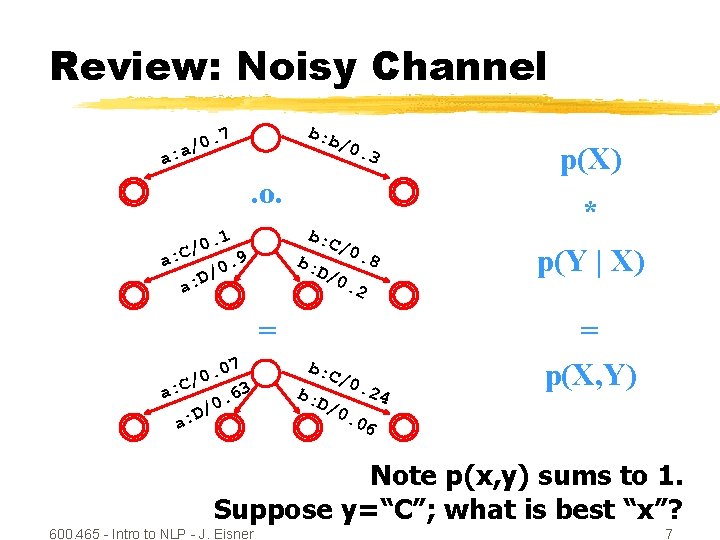

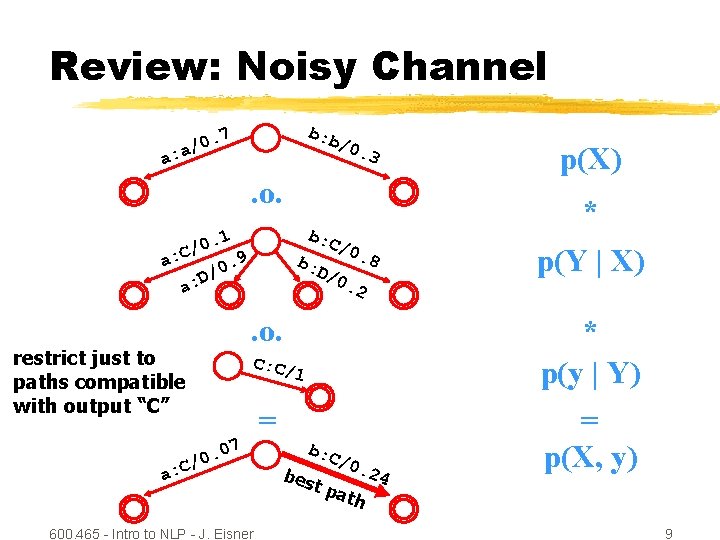

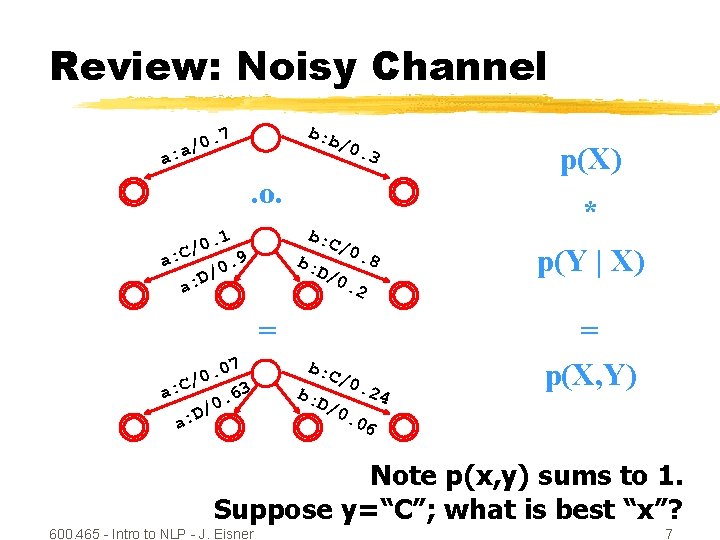

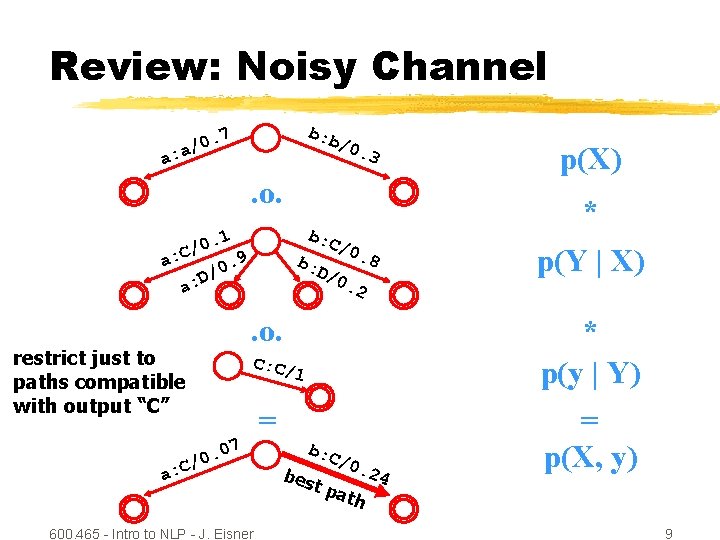

Review: Noisy Channel

p(X): a:a/0.7, b:b/0.3

.o.

p(Y | X): a:C/0.1, a:D/0.9, b:C/0.8, b:D/0.2

=

p(X, Y): a:C/0.07, a:D/0.63, b:C/0.24, b:D/0.06

Note p(x, y) sums to 1.

Suppose y=“C”; what is best “x”?

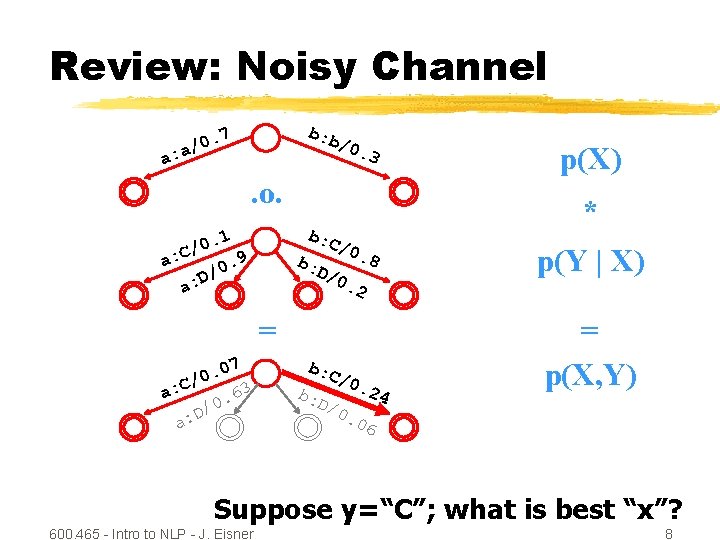

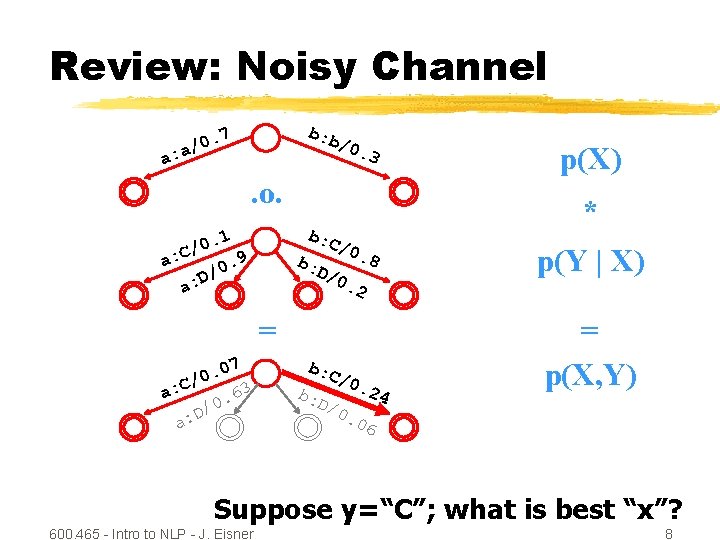


Review: Noisy Channel

p(X): a:a/0.7, b:b/0.3

.o.

p(Y | X): a:C/0.1, a:D/0.9, b:C/0.8, b:D/0.2

.o.

p(y | Y): C:C/1 (restrict just to paths compatible with output “C”)

=

p(X, y): a:C/0.07, b:C/0.24 (best path)

p(X) * p(Y | X) * p(y | Y) = p(X, y)
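The toy example can be checked numerically. The sketch below assumes the arc weights read off the slide are p(X) = {a: 0.7, b: 0.3} and p(Y | X) = {a→C: 0.1, a→D: 0.9, b→C: 0.8, b→D: 0.2}; dictionaries stand in for the weighted automata, and the function name `best_source` is made up for illustration.

```python
# Source model p(X) and channel model p(Y | X), as read off the slide.
p_x = {"a": 0.7, "b": 0.3}
p_y_given_x = {
    "a": {"C": 0.1, "D": 0.9},
    "b": {"C": 0.8, "D": 0.2},
}

# "Composition" here just multiplies weights along matching paths, giving p(X, Y).
joint = {
    (x, y): p_x[x] * p_y_given_x[x][y]
    for x in p_x
    for y in p_y_given_x[x]
}

def best_source(y_obs):
    """Restrict to paths whose output is y_obs and return the highest-scoring x."""
    compatible = {x: p for (x, y), p in joint.items() if y == y_obs}
    return max(compatible, key=compatible.get)

print(joint)
print(best_source("C"))
```

With y = “C”, only the a:C and b:C paths survive the restriction, and the b path has the larger joint probability.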

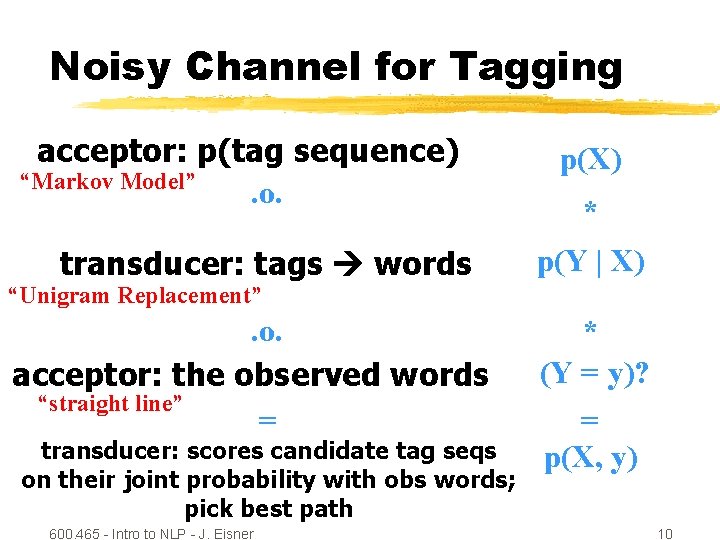

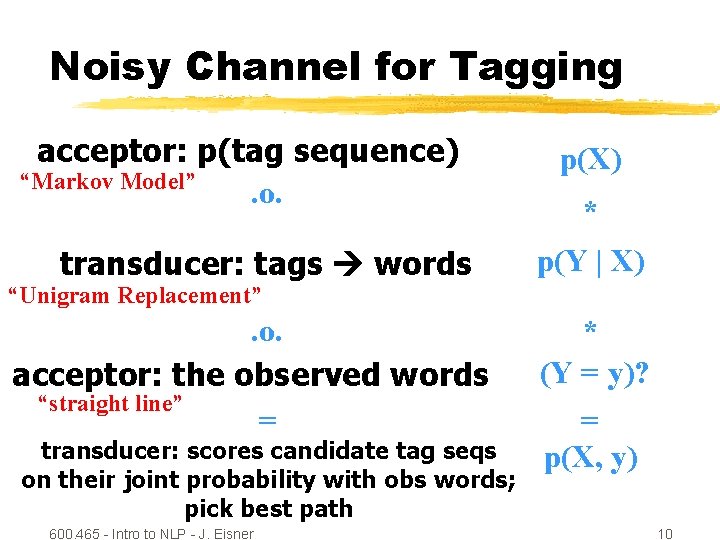

Noisy Channel for Tagging

acceptor: p(tag sequence), a “Markov Model”: p(X)

.o.

transducer: tags → words, “Unigram Replacement”: p(Y | X)

.o.

acceptor: the observed words, “straight line”: (Y = y)?

=

transducer: scores candidate tag seqs on their joint probability with obs words; pick best path: p(X, y)

p(X) * p(Y | X) * (Y = y)? = p(X, y)

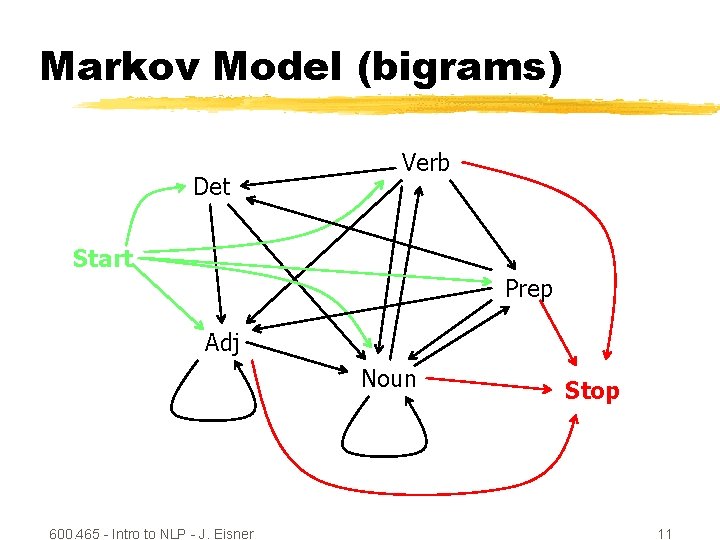

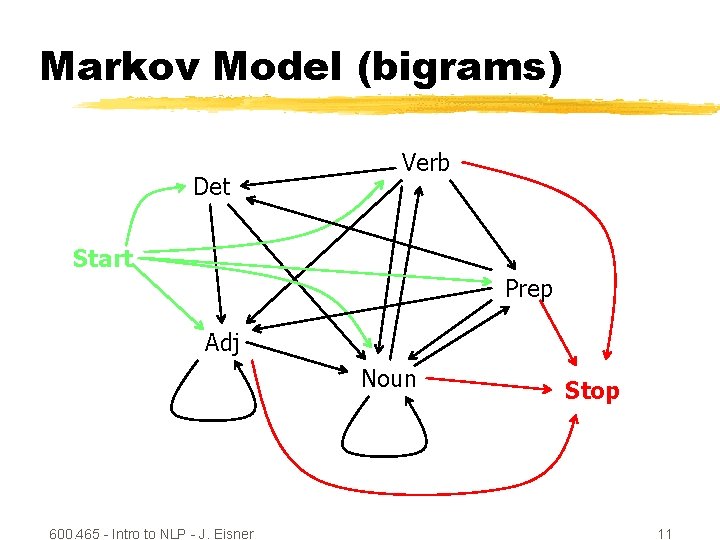

Markov Model (bigrams)

States: Start, Det, Adj, Noun, Verb, Prep, Stop

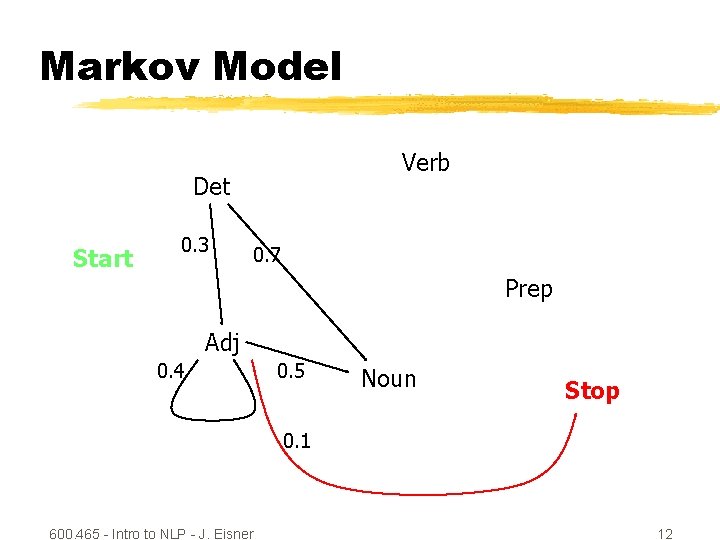

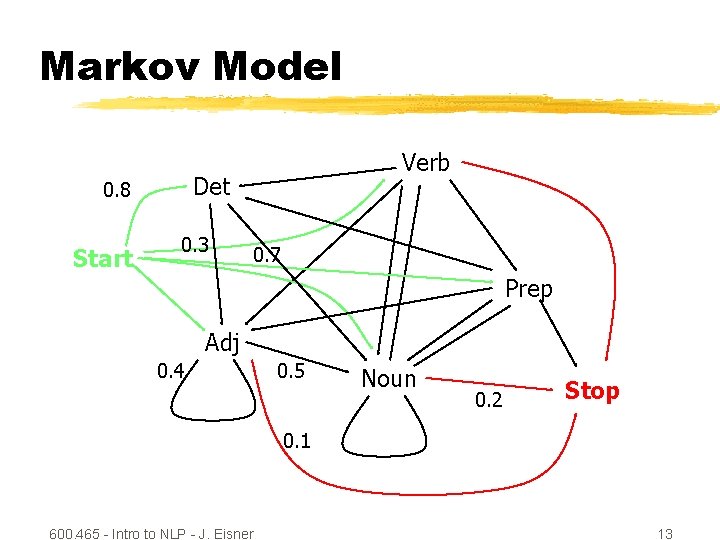

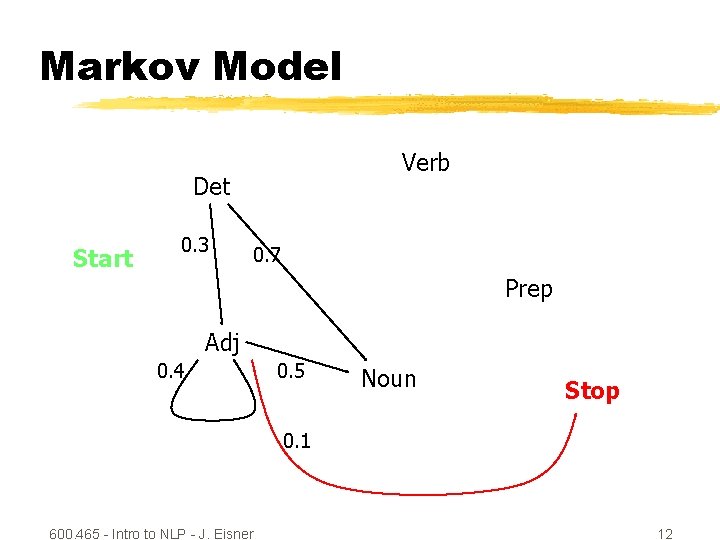

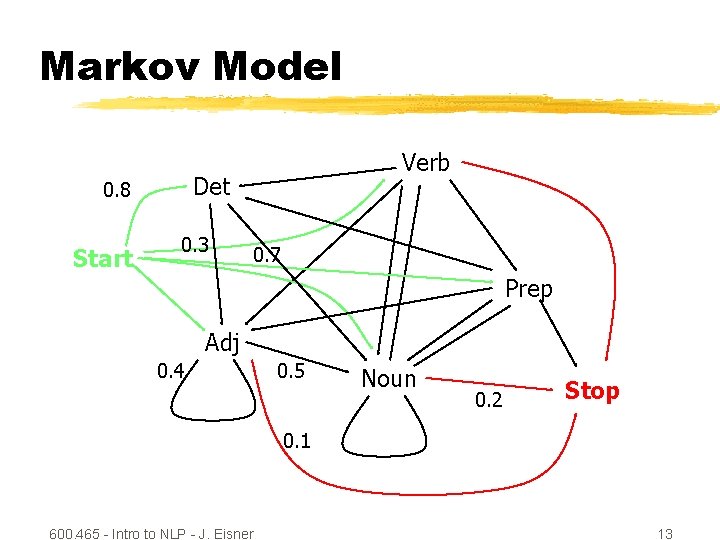

Markov Model

States: Start, Det, Adj, Noun, Verb, Prep, Stop

Transition probabilities: Det → Adj 0.3, Det → Noun 0.7, Adj → Adj 0.4, Adj → Noun 0.5, Adj → Stop 0.1

Markov Model

States: Start, Det, Adj, Noun, Verb, Prep, Stop

Transition probabilities: Start → Det 0.8, Det → Adj 0.3, Det → Noun 0.7, Adj → Adj 0.4, Adj → Noun 0.5, Adj → Stop 0.1, Noun → Stop 0.2

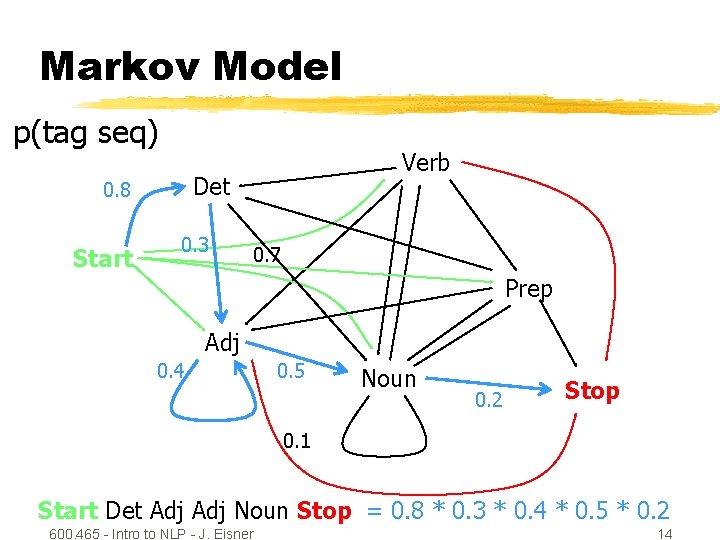

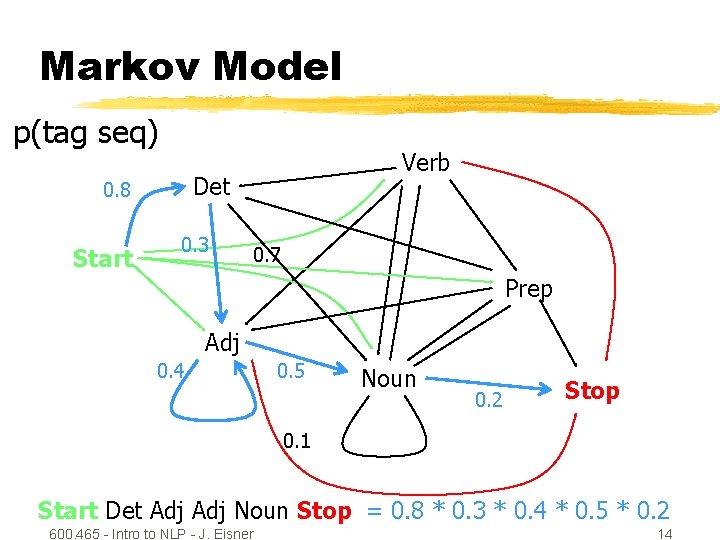

Markov Model

States: Start, Det, Adj, Noun, Verb, Prep, Stop

Transition probabilities: Start → Det 0.8, Det → Adj 0.3, Det → Noun 0.7, Adj → Adj 0.4, Adj → Noun 0.5, Adj → Stop 0.1, Noun → Stop 0.2

p(tag seq)

Start Det Adj Adj Noun Stop = 0.8 * 0.3 * 0.4 * 0.5 * 0.2
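The path probability is just a product of tag bigram probabilities, which the sketch below reproduces. The transition table is read off the slide's diagram; which states the 0.7 and 0.1 arcs connect is an assumption here (chosen so that the arcs leaving Det and Adj each sum to 1), and `tag_sequence_prob` is an illustrative name.

```python
# Tag bigram probabilities p(next tag | current tag), read off the diagram.
# Assumption: the 0.7 arc is Det -> Noun and the 0.1 arc is Adj -> Stop.
transitions = {
    ("Start", "Det"): 0.8,
    ("Det", "Adj"): 0.3,
    ("Det", "Noun"): 0.7,
    ("Adj", "Adj"): 0.4,
    ("Adj", "Noun"): 0.5,
    ("Adj", "Stop"): 0.1,
    ("Noun", "Stop"): 0.2,
}

def tag_sequence_prob(tags):
    """p(tag seq): product of bigram probabilities, including Start and Stop."""
    path = ["Start", *tags, "Stop"]
    prob = 1.0
    for prev, cur in zip(path, path[1:]):
        prob *= transitions.get((prev, cur), 0.0)  # a missing arc has probability 0
    return prob

# Five transitions, hence five factors: 0.8 * 0.3 * 0.4 * 0.5 * 0.2
print(tag_sequence_prob(["Det", "Adj", "Adj", "Noun"]))
```

A tag sequence that uses an arc absent from the table, such as Det followed by Det, gets probability 0 under this sketch.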

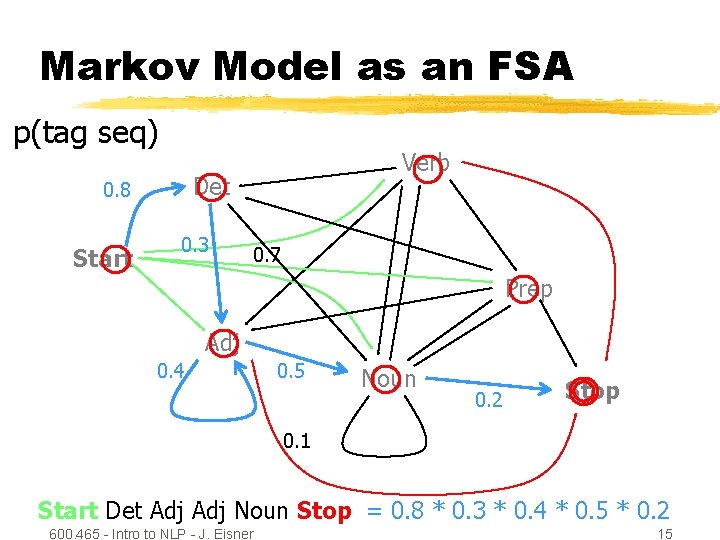

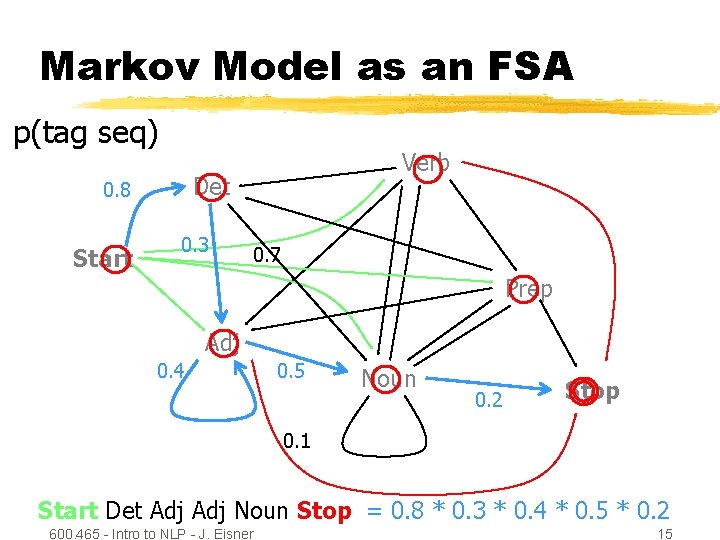

Markov Model as an FSA

States: Start, Det, Adj, Noun, Verb, Prep, Stop

Transition probabilities: Start → Det 0.8, Det → Adj 0.3, Det → Noun 0.7, Adj → Adj 0.4, Adj → Noun 0.5, Adj → Stop 0.1, Noun → Stop 0.2

p(tag seq)

Start Det Adj Adj Noun Stop = 0.8 * 0.3 * 0.4 * 0.5 * 0.2

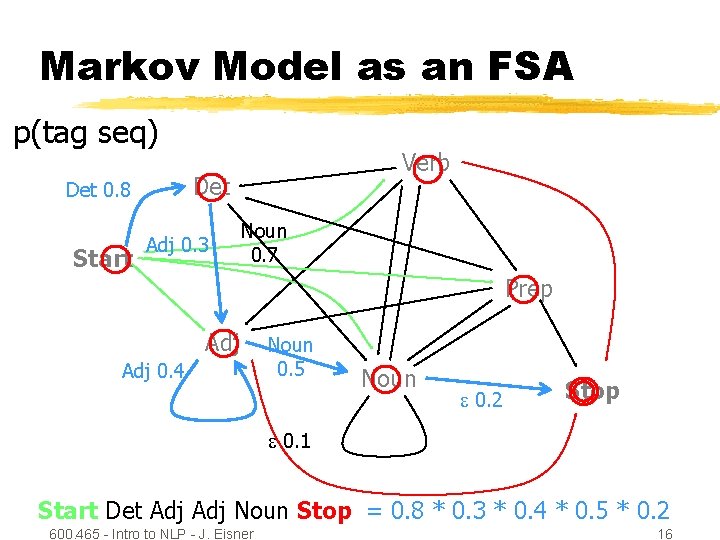

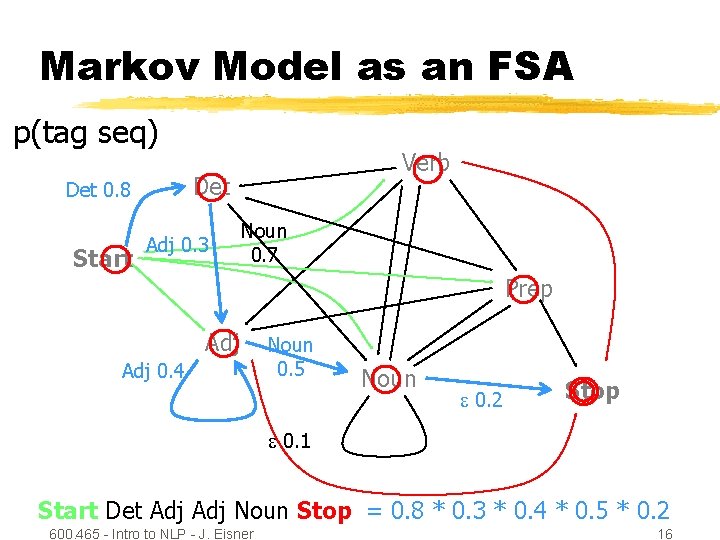

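Markov Model as an FSA

States: Start, Det, Adj, Noun, Verb, Prep, Stop

Arcs, each labeled with the tag it emits and its probability: Start → Det: Det 0.8; Det → Adj: Adj 0.3; Det → Noun: Noun 0.7; Adj → Adj: Adj 0.4; Adj → Noun: Noun 0.5; Adj → Stop: 0.1; Noun → Stop: 0.2

p(tag seq)

Start Det Adj Adj Noun Stop = 0.8 * 0.3 * 0.4 * 0.5 * 0.2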

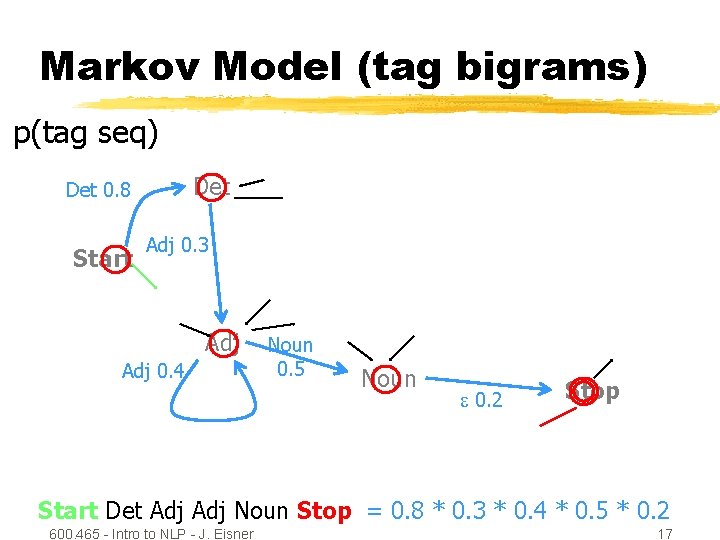

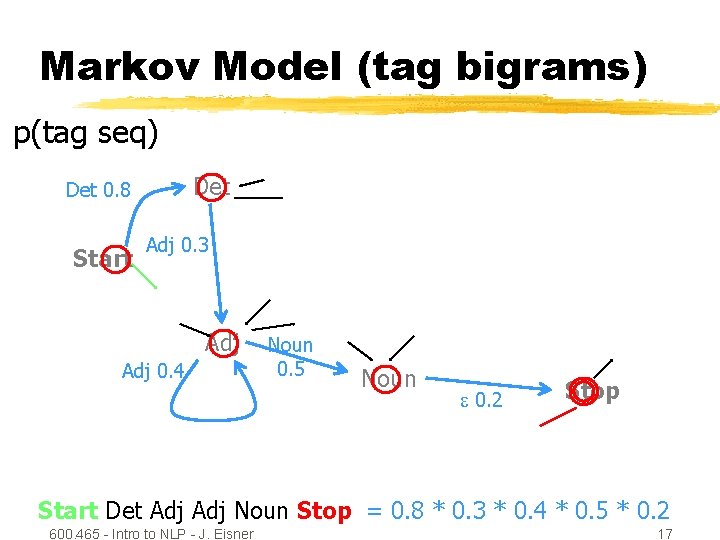

Markov Model (tag bigrams)

Arcs on the example path: Start → Det: Det 0.8; Det → Adj: Adj 0.3; Adj → Adj: Adj 0.4; Adj → Noun: Noun 0.5; Noun → Stop: 0.2

p(tag seq)

Start Det Adj Adj Noun Stop = 0.8 * 0.3 * 0.4 * 0.5 * 0.2

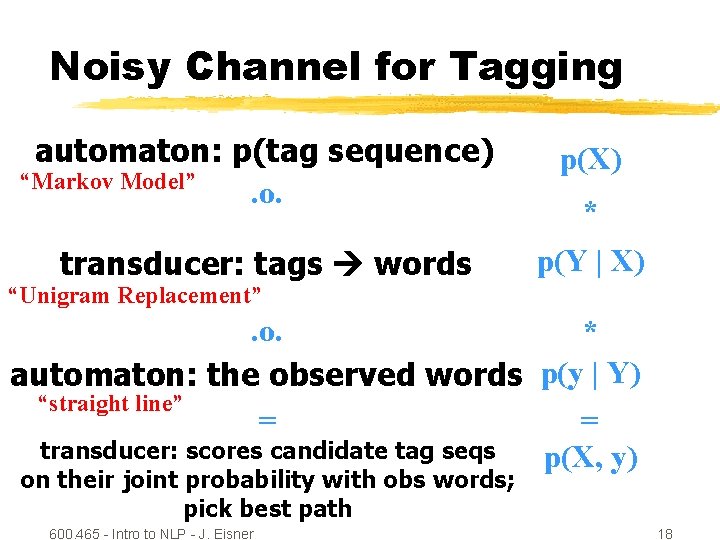

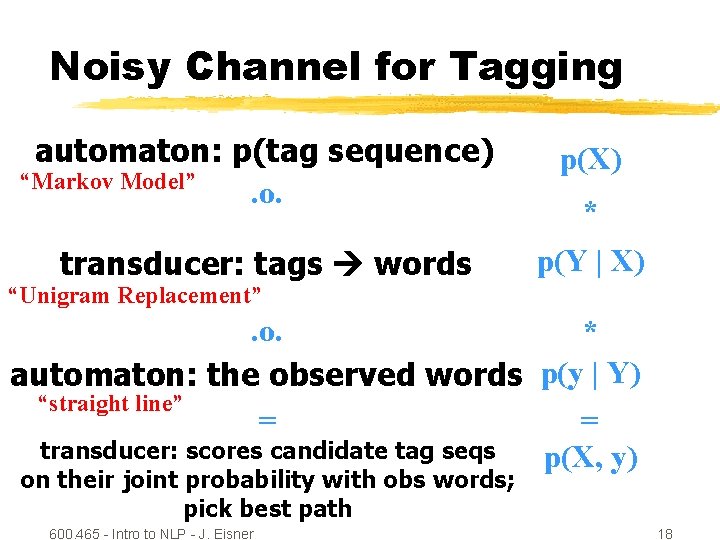

Noisy Channel for Tagging

automaton: p(tag sequence), “Markov Model”: p(X)

.o.

transducer: tags → words, “Unigram Replacement”: p(Y | X)

.o.

automaton: the observed words, “straight line”: p(y | Y)

=

transducer: scores candidate tag seqs on their joint probability with obs words; pick best path: p(X, y)

p(X) * p(Y | X) * p(y | Y) = p(X, y)

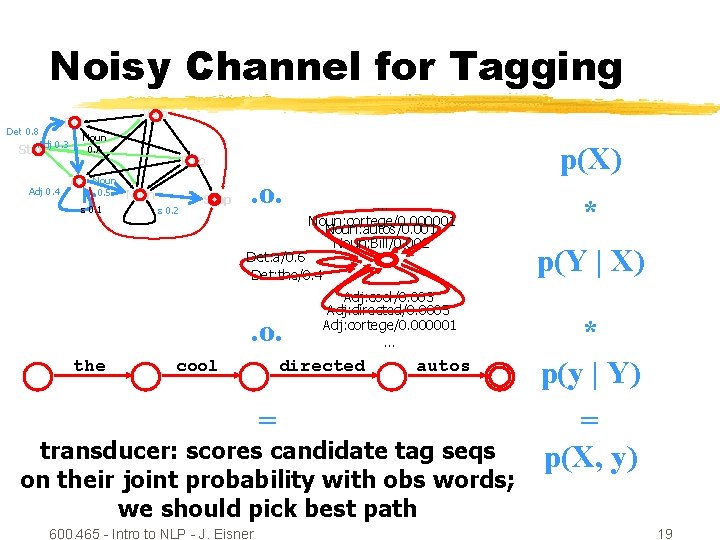

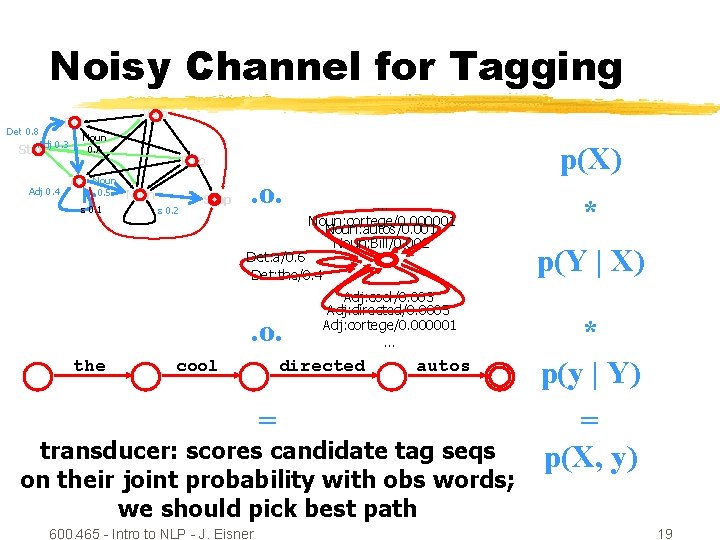

Noisy Channel for Tagging

p(X): Markov model over the states Start, Det, Adj, Noun, Verb, Prep, Stop, with arcs Start → Det 0.8, Det → Adj 0.3, Det → Noun 0.7, Adj → Adj 0.4, Adj → Noun 0.5, Adj → Stop 0.1, Noun → Stop 0.2

.o.

p(Y | X): … Noun:cortege/0.000001, Noun:autos/0.001, Noun:Bill/0.002, Det:a/0.6, Det:the/0.4, Adj:cool/0.003, Adj:directed/0.0005, Adj:cortege/0.000001 …

.o.

p(y | Y): the cool directed autos

=

p(X, y): transducer that scores candidate tag seqs on their joint probability with obs words; we should pick best path

p(X) * p(Y | X) * p(y | Y) = p(X, y)

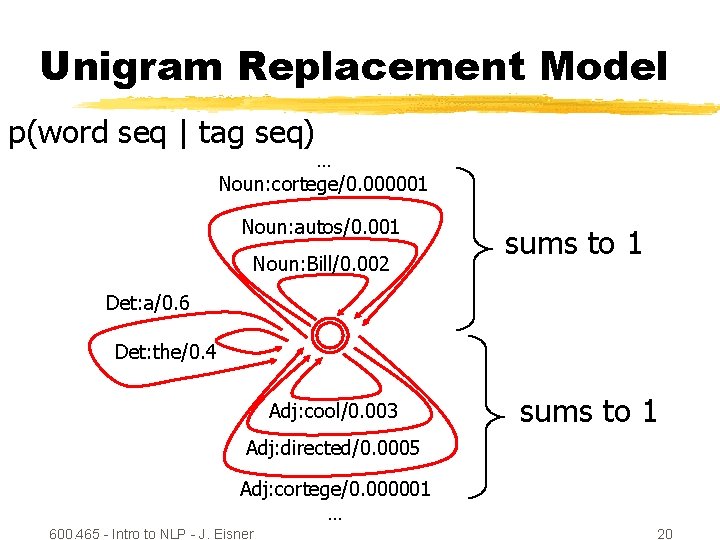

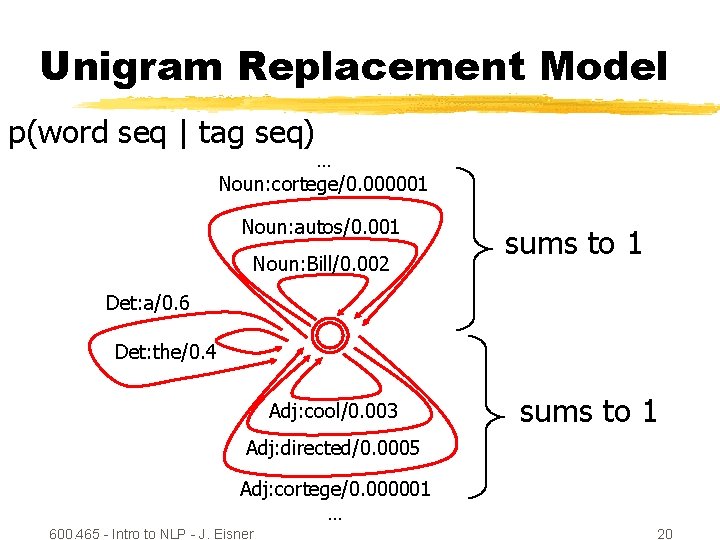

Unigram Replacement Model

p(word seq | tag seq)

- … Noun:cortege/0.000001, Noun:autos/0.001, Noun:Bill/0.002 (the Noun arcs sum to 1)
- Det:a/0.6, Det:the/0.4 (the Det arcs sum to 1)
- Adj:cool/0.003, Adj:directed/0.0005, Adj:cortege/0.000001 … (the Adj arcs sum to 1)
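Under the unigram replacement model each word depends only on its own tag, so p(word seq | tag seq) is a product of per-word terms. A minimal sketch, using only the arcs listed on the slide (the real table would have many more words per tag, which is why the listed values do not visibly sum to 1); the name `words_given_tags_prob` is illustrative.

```python
# p(word | tag) for the handful of arcs shown on the slide; the "…" entries are omitted.
emissions = {
    "Noun": {"cortege": 0.000001, "autos": 0.001, "Bill": 0.002},
    "Det": {"a": 0.6, "the": 0.4},
    "Adj": {"cool": 0.003, "directed": 0.0005, "cortege": 0.000001},
}

def words_given_tags_prob(words, tags):
    """p(word seq | tag seq) = product over positions of p(word_i | tag_i)."""
    if len(words) != len(tags):
        raise ValueError("need exactly one tag per word")
    prob = 1.0
    for word, tag in zip(words, tags):
        prob *= emissions.get(tag, {}).get(word, 0.0)  # unlisted pair => 0 in this sketch
    return prob

words = ["the", "cool", "directed", "autos"]
tags = ["Det", "Adj", "Adj", "Noun"]
# 0.4 * 0.003 * 0.0005 * 0.001
print(words_given_tags_prob(words, tags))
```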

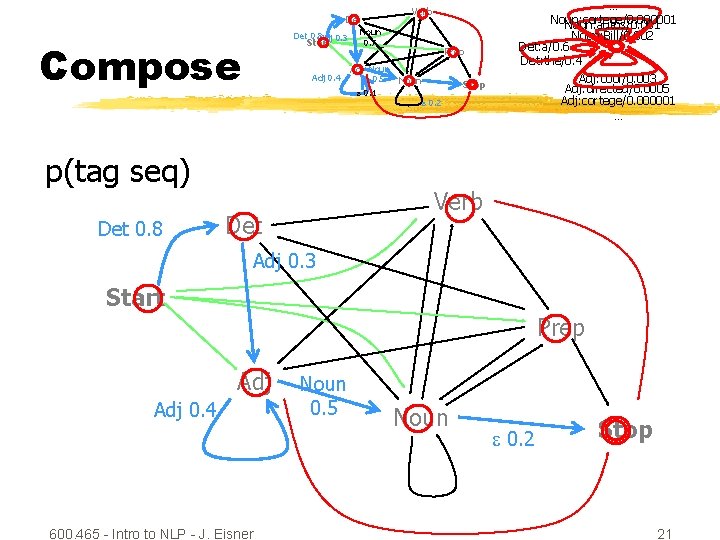

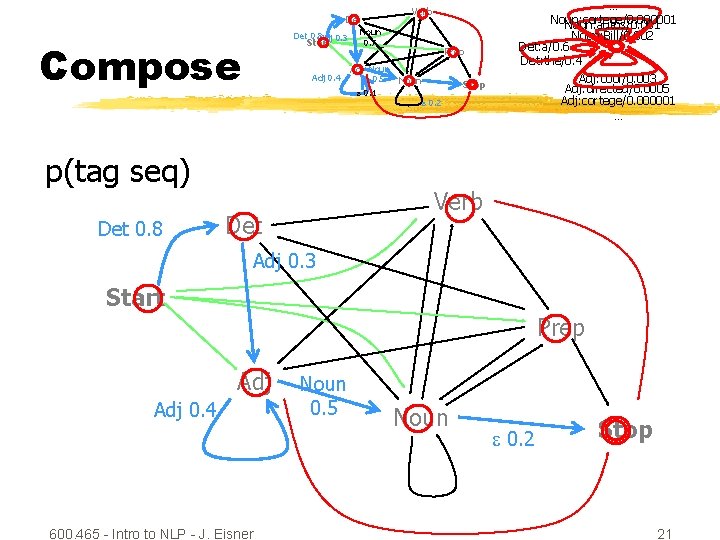

Compose

p(tag seq): Markov model over the states Start, Det, Adj, Noun, Verb, Prep, Stop, with arcs Start → Det 0.8, Det → Adj 0.3, Det → Noun 0.7, Adj → Adj 0.4, Adj → Noun 0.5, Adj → Stop 0.1, Noun → Stop 0.2

.o.

… Noun:cortege/0.000001, Noun:autos/0.001, Noun:Bill/0.002, Det:a/0.6, Det:the/0.4, Adj:cool/0.003, Adj:directed/0.0005, Adj:cortege/0.000001 …

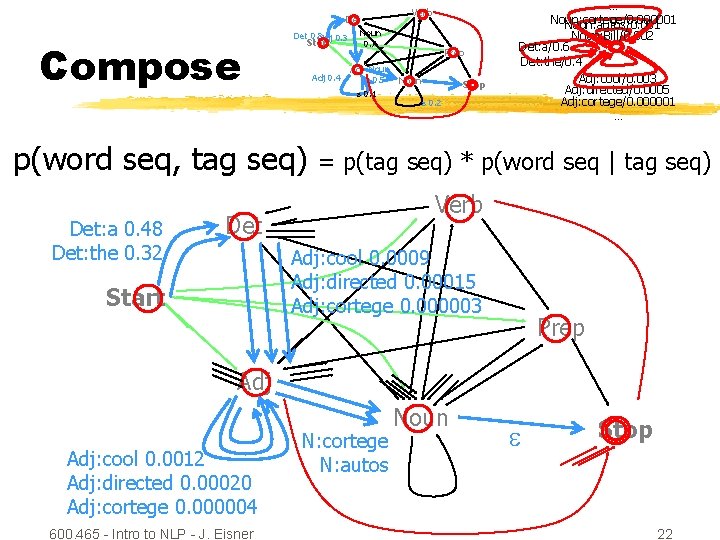

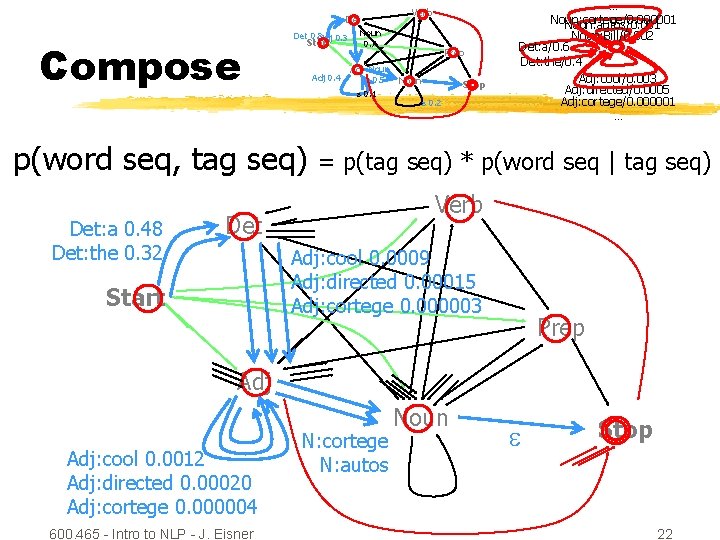

Compose

p(tag seq): Markov model over the states Start, Det, Adj, Noun, Verb, Prep, Stop, with arcs Start → Det 0.8, Det → Adj 0.3, Det → Noun 0.7, Adj → Adj 0.4, Adj → Noun 0.5, Adj → Stop 0.1, Noun → Stop 0.2

.o.

… Noun:cortege/0.000001, Noun:autos/0.001, Noun:Bill/0.002, Det:a/0.6, Det:the/0.4, Adj:cool/0.003, Adj:directed/0.0005, Adj:cortege/0.000001 …

=

p(word seq, tag seq) = p(tag seq) * p(word seq | tag seq), with arcs:

- Start → Det: Det:a 0.48, Det:the 0.32
- Det → Adj: Adj:cool 0.0009, Adj:directed 0.00015, Adj:cortege 0.0000003
- Adj → Adj: Adj:cool 0.0012, Adj:directed 0.00020, Adj:cortege 0.0000004
- into Noun: N:cortege, N:autos
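Each arc weight in the composed machine is a tag transition probability times a word emission probability. The sketch below reproduces that multiplication with plain dictionaries rather than a finite-state toolkit; the table layout, the `compose` helper, and the endpoints of the 0.7 and 0.1 arcs are assumptions made for illustration.

```python
# Assumed tag bigram model (endpoints of the 0.7 and 0.1 arcs are a guess from the diagram).
transitions = {
    ("Start", "Det"): 0.8, ("Det", "Adj"): 0.3, ("Det", "Noun"): 0.7,
    ("Adj", "Adj"): 0.4, ("Adj", "Noun"): 0.5,
    ("Adj", "Stop"): 0.1, ("Noun", "Stop"): 0.2,
}
# Unigram replacement arcs listed on the slide.
emissions = {
    "Noun": {"cortege": 0.000001, "autos": 0.001, "Bill": 0.002},
    "Det": {"a": 0.6, "the": 0.4},
    "Adj": {"cool": 0.003, "directed": 0.0005, "cortege": 0.000001},
}

def compose(transitions, emissions):
    """Return arcs {(prev, tag, word): p(tag | prev) * p(word | tag)}."""
    arcs = {}
    for (prev, tag), p_trans in transitions.items():
        if tag == "Stop":
            arcs[(prev, tag, None)] = p_trans  # Stop emits no word
            continue
        for word, p_emit in emissions.get(tag, {}).items():
            arcs[(prev, tag, word)] = p_trans * p_emit
    return arcs

arcs = compose(transitions, emissions)
print(arcs[("Start", "Det", "the")])   # 0.8 * 0.4
print(arcs[("Det", "Adj", "cool")])    # 0.3 * 0.003
```

The composed machine keeps the topology of the tag model; only the arc labels and weights change.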

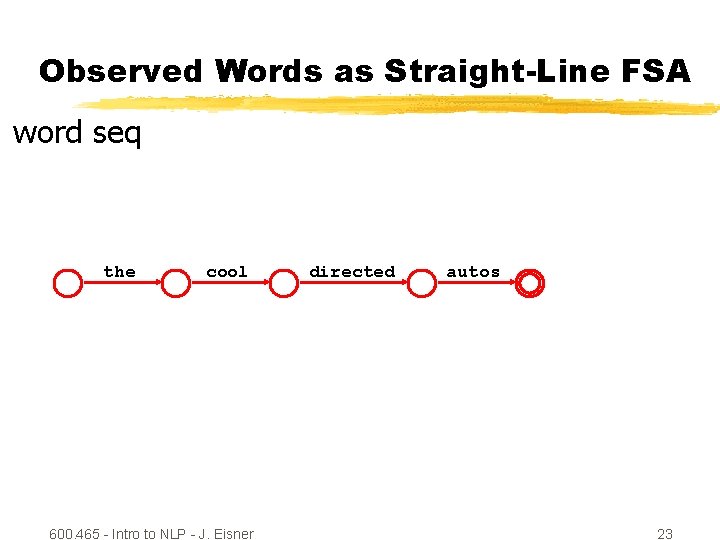

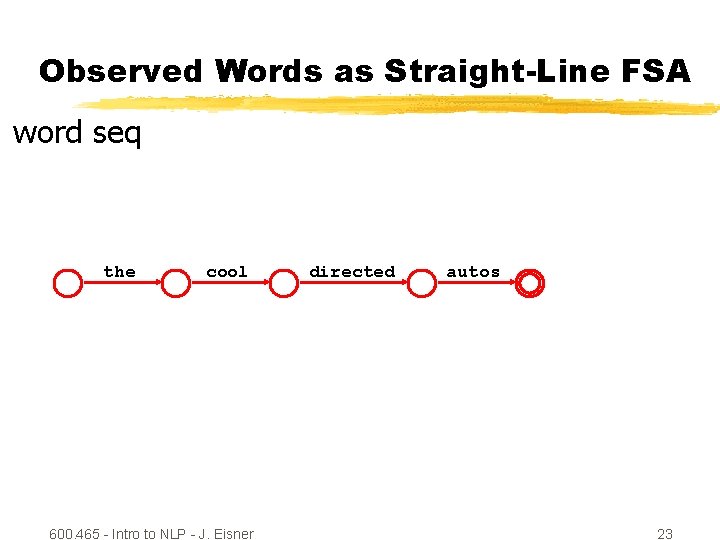

Observed Words as Straight-Line FSA

word seq: the cool directed autos

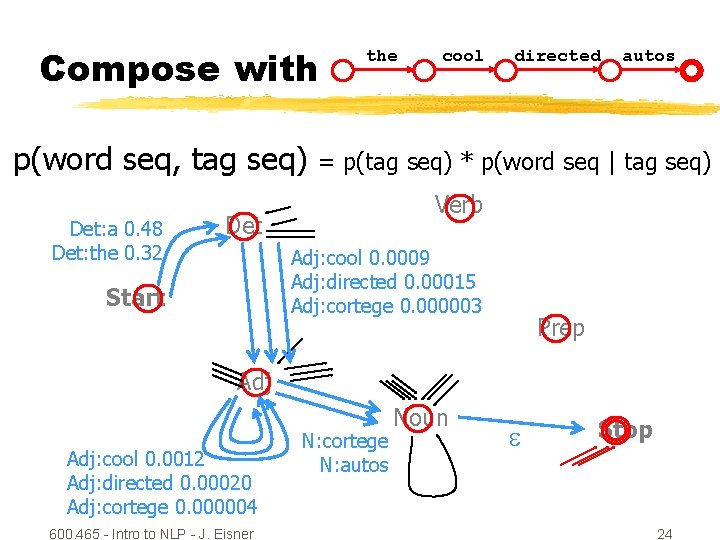

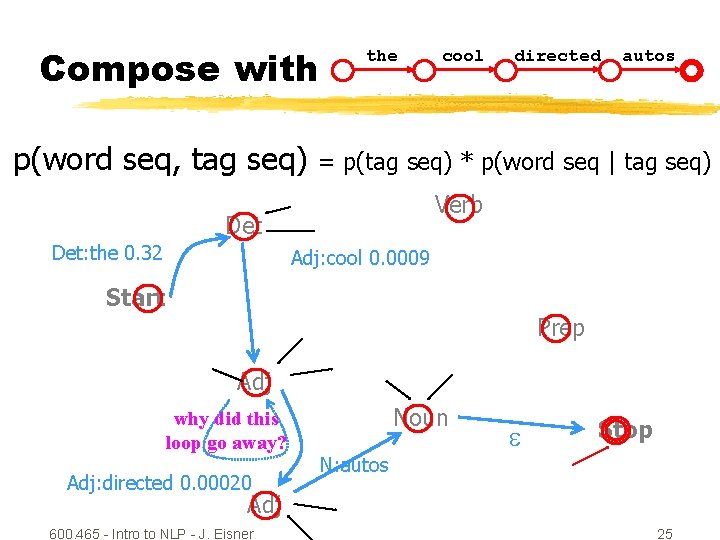

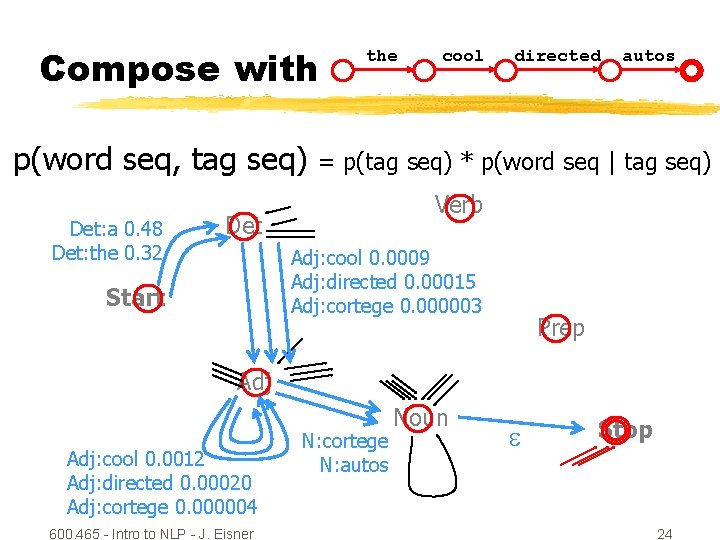

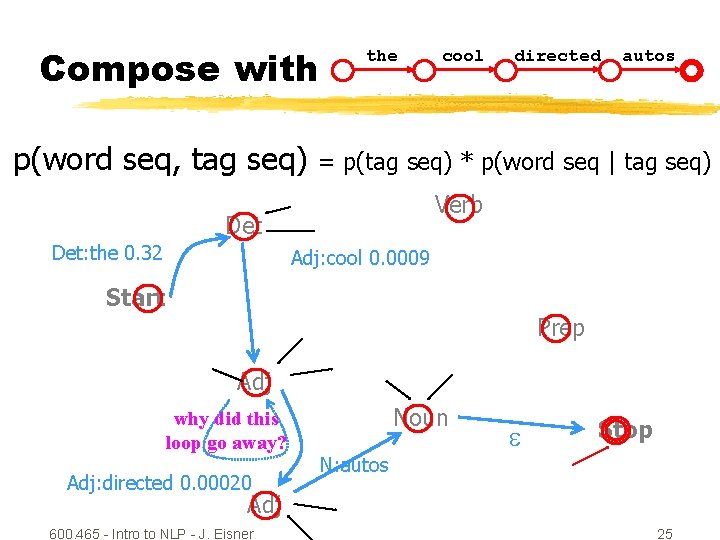

Compose with

the cool directed autos

p(word seq, tag seq) = p(tag seq) * p(word seq | tag seq), with arcs:

- Start → Det: Det:a 0.48, Det:the 0.32
- Det → Adj: Adj:cool 0.0009, Adj:directed 0.00015, Adj:cortege 0.0000003
- Adj → Adj: Adj:cool 0.0012, Adj:directed 0.00020, Adj:cortege 0.0000004
- into Noun: N:cortege, N:autos

Compose with

the cool directed autos

p(word seq, tag seq) = p(tag seq) * p(word seq | tag seq)

Arcs that survive: Det:the 0.32 (Start → Det), Adj:cool 0.0009 (Det → Adj), Adj:directed 0.00020 (Adj → Adj), N:autos (Adj → Noun), then Stop.

Why did this loop go away? The Adj → Adj arc now connects two distinct Adj states.

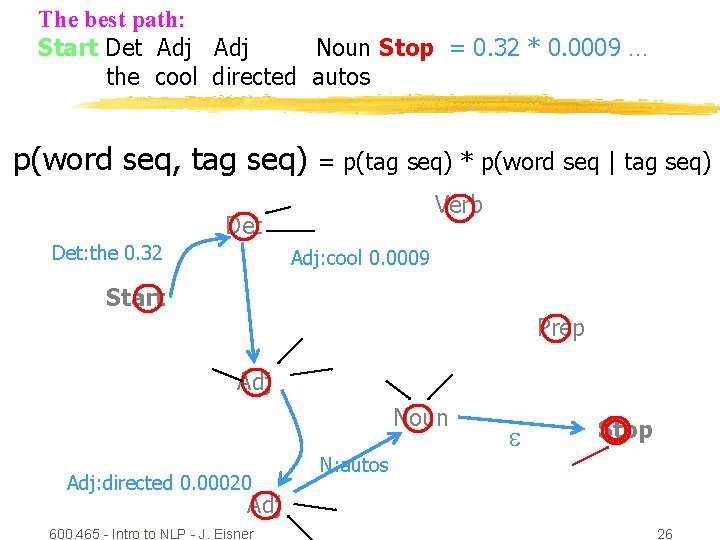

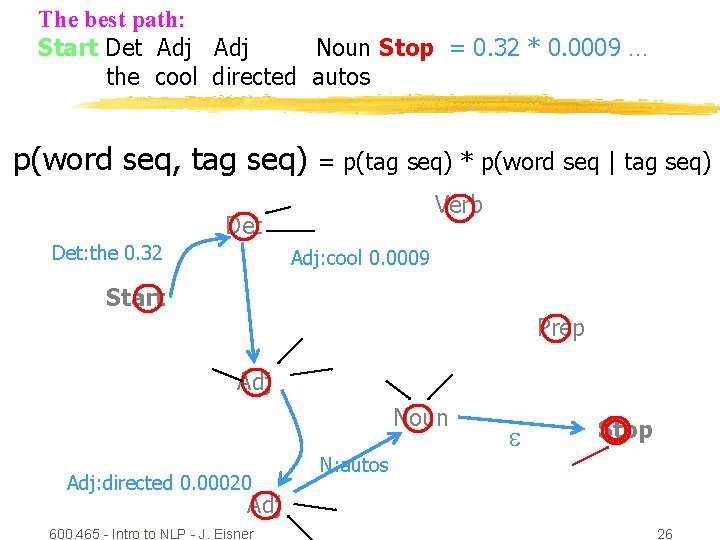

The best path:

Start Det Adj Adj Noun Stop = 0.32 * 0.0009 …

the cool directed autos

p(word seq, tag seq) = p(tag seq) * p(word seq | tag seq)

Arcs on the path: Det:the 0.32, Adj:cool 0.0009, Adj:directed 0.00020, N:autos, then Stop.

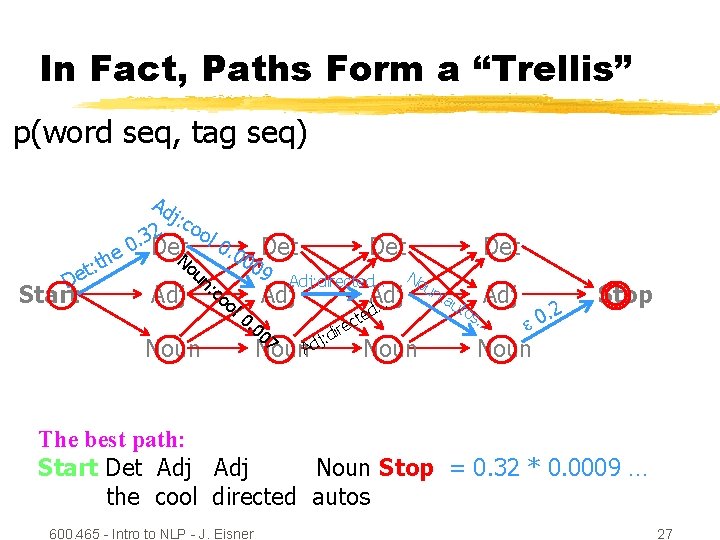

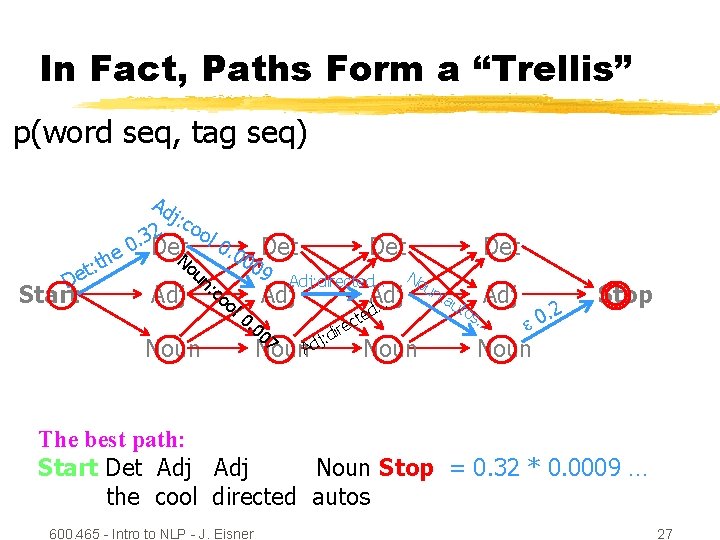

In Fact, Paths Form a “Trellis”

p(word seq, tag seq)

States: Start; then Det, Adj, Noun at each word position; then Stop.

Example arcs: Det:the 0.32, Adj:cool 0.0009, Noun:cool 0.007, Adj:directed …, Noun:autos …, and 0.2 into Stop.

The best path: Start Det Adj Adj Noun Stop = 0.32 * 0.0009 …

the cool directed autos

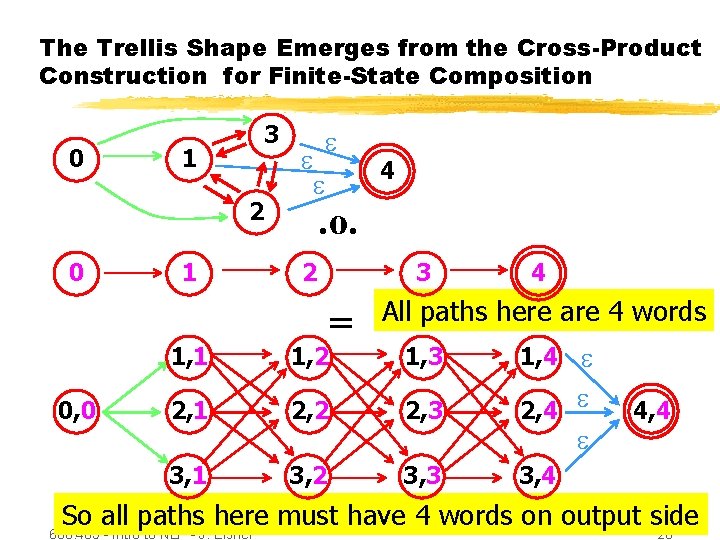

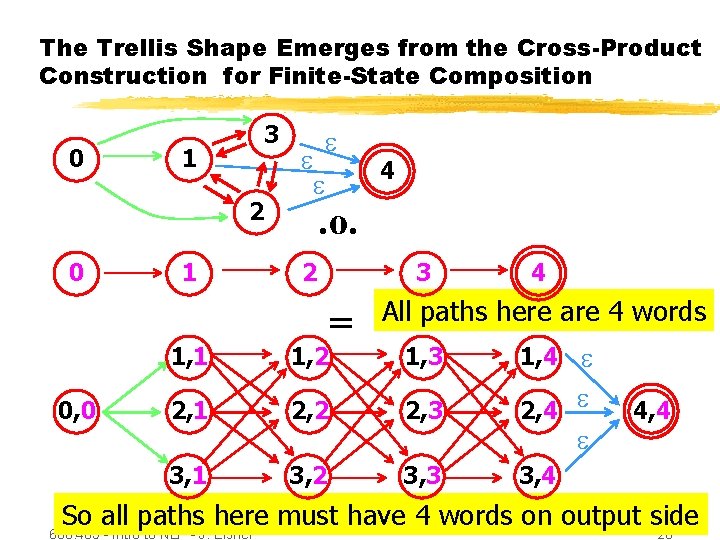

The Trellis Shape Emerges from the Cross-Product Construction for Finite-State Composition

Left machine: states 0, 1, 2, 3, 4.

.o.

Right machine: states 0, 1, 2, 3, 4 in a straight line. All paths here are 4 words.

=

Composed machine, with states (i, j):

- 0,0
- 1,1 1,2 1,3 1,4
- 2,1 2,2 2,3 2,4
- 3,1 3,2 3,3 3,4
- 4,4

So all paths here must have 4 words on output side.
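The cross-product idea can be sketched by pairing each state of the tag model with a position in the straight-line word automaton. The code below only enumerates the state pairs that can lie on a Start-to-Stop path; it does not build arcs or weights. The three-tag inventory and the name `trellis_states` are assumptions for illustration.

```python
from itertools import product

tags = ["Det", "Adj", "Noun"]                  # hypothetical tag set for illustration
words = ["the", "cool", "directed", "autos"]   # the observed sentence

def trellis_states(tags, words):
    """Pair tag-model states with positions in the straight-line word FSA."""
    n = len(words)
    states = [("Start", 0)]                    # no words read yet
    # After reading word j (1..n), the tag model sits in some tag state.
    states += [(tag, j) for j, tag in product(range(1, n + 1), tags)]
    states.append(("Stop", n))                 # all n words read
    return states

states = trellis_states(tags, words)
print(len(states))
print(states[:4])
```

Because every state carries its word position, a self-loop such as Adj → Adj in the tag model becomes an arc from (Adj, j) to (Adj, j + 1), which is why the composed machine is acyclic.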

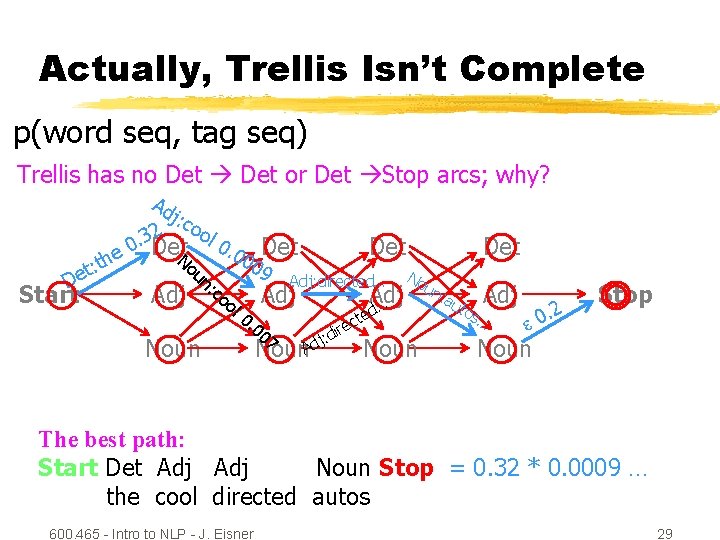

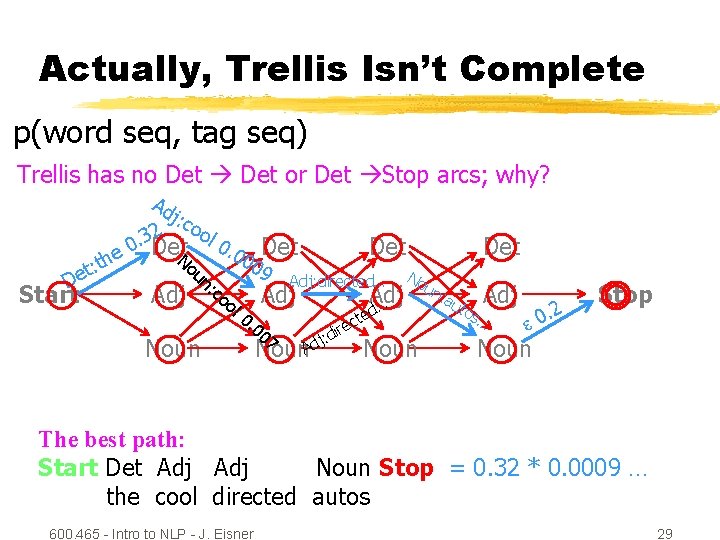

Actually, Trellis Isn’t Complete

p(word seq, tag seq)

Trellis has no Det → Det or Det → Stop arcs; why?

The best path: Start Det Adj Adj Noun Stop = 0.32 * 0.0009 …

the cool directed autos

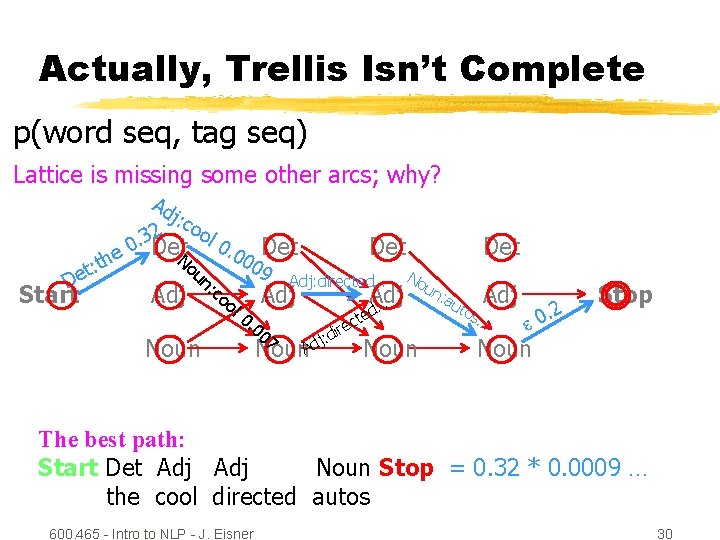

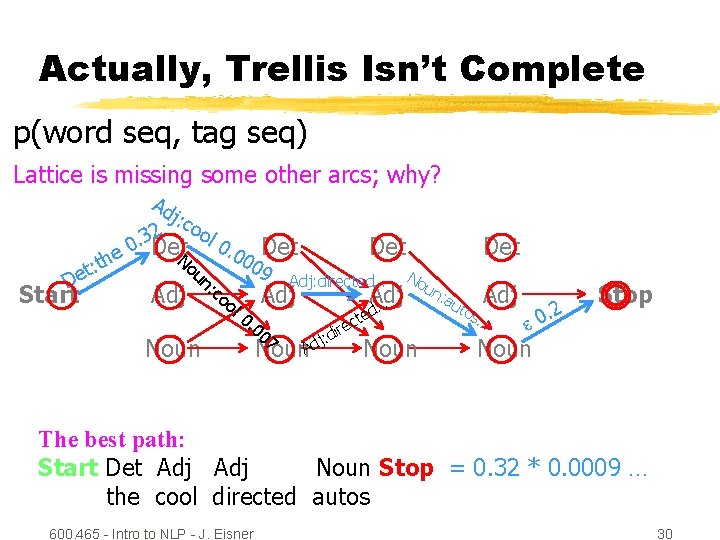

Actually, Trellis Isn’t Complete

p(word seq, tag seq)

Lattice is missing some other arcs; why?

The best path: Start Det Adj Adj Noun Stop = 0.32 * 0.0009 …

the cool directed autos

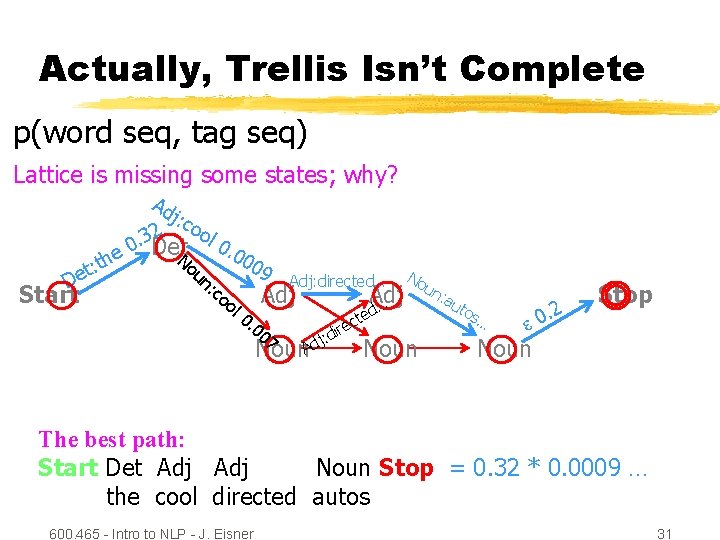

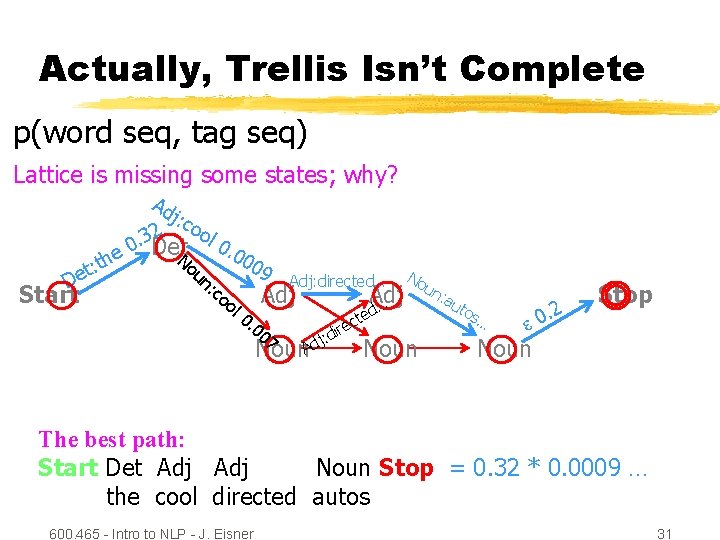

Actually, Trellis Isn’t Complete

p(word seq, tag seq)

Lattice is missing some states; why?

The best path: Start Det Adj Adj Noun Stop = 0.32 * 0.0009 …

the cool directed autos

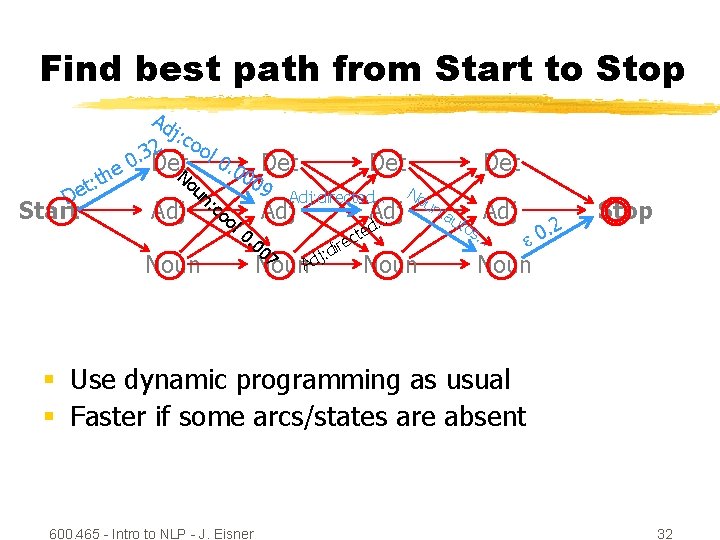

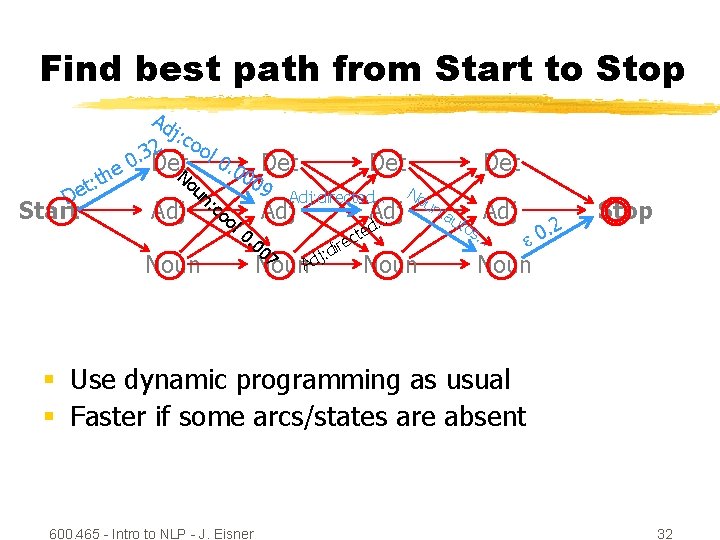

Find best path from Start to Stop

- Use dynamic programming as usual
- Faster if some arcs/states are absent
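One common form of this dynamic program is the Viterbi algorithm, sketched below over the trellis for “the cool directed autos”. The slides say only "dynamic programming as usual", so naming Viterbi is a choice made here. The probability tables are the values read off the earlier slides (with the endpoints of the 0.7 and 0.1 arcs assumed), and the function name `viterbi` and data layout are illustrative.

```python
# Assumed tag bigram model and the unigram replacement arcs listed on the slides.
transitions = {
    ("Start", "Det"): 0.8, ("Det", "Adj"): 0.3, ("Det", "Noun"): 0.7,
    ("Adj", "Adj"): 0.4, ("Adj", "Noun"): 0.5,
    ("Adj", "Stop"): 0.1, ("Noun", "Stop"): 0.2,
}
emissions = {
    "Noun": {"cortege": 0.000001, "autos": 0.001, "Bill": 0.002},
    "Det": {"a": 0.6, "the": 0.4},
    "Adj": {"cool": 0.003, "directed": 0.0005, "cortege": 0.000001},
}

def viterbi(words):
    """Return (probability, tags) of the best Start-to-Stop path for the words."""
    # best[state] = (probability of the best path reaching state, tags on that path)
    best = {"Start": (1.0, [])}
    for word in words:
        new_best = {}
        for prev, (p_prev, path) in best.items():
            for (src, tag), p_trans in transitions.items():
                if src != prev or tag == "Stop":
                    continue
                p = p_prev * p_trans * emissions.get(tag, {}).get(word, 0.0)
                # Zero-weight arcs are never stored, so absent arcs and states
                # shrink the next column of the trellis.
                if p > 0.0 and p > new_best.get(tag, (0.0, None))[0]:
                    new_best[tag] = (p, path + [tag])
        best = new_best
    # Close each surviving path with its transition into Stop, if it has one.
    finals = [
        (p * transitions[(tag, "Stop")], path)
        for tag, (p, path) in best.items()
        if (tag, "Stop") in transitions
    ]
    return max(finals, default=(0.0, []))

prob, tags = viterbi(["the", "cool", "directed", "autos"])
print(tags, prob)
```

Each trellis column keeps only one best path per tag, so the work grows linearly with sentence length instead of enumerating every tag sequence.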