
Part-of-Speech (POS) tagging
See:
• Eric Brill, “Part-of-speech tagging”. Chapter 17 of R Dale, H Moisl & H Somers (eds), Handbook of Natural Language Processing, New York (2000): Marcel Dekker
• D Jurafsky & JH Martin: Speech and Language Processing, Upper Saddle River NJ (2000): Prentice Hall, Chapter 8
• CD Manning & H Schütze: Foundations of Statistical Natural Language Processing, Cambridge, Mass (1999): MIT Press, Chapter 10
[skip the maths bits if too daunting]

Word categories
• A.k.a. parts of speech (POSs)
• Important and useful to identify words by their POS
  – To distinguish homonyms
  – To enable more general word searches
• POS familiar (?) from school and/or language learning (noun, verb, adjective, etc.)

Word categories
• Recall that we distinguished
  – open-class categories (noun, verb, adjective, adverb)
  – closed-class categories (preposition, determiner, pronoun, conjunction, …)
• While the big four are fairly clear-cut, it is less obvious exactly what and how many closed-class categories there may be

POS tagging
• Labelling words for POS can be done by
  – dictionary lookup
  – morphological analysis
  – “tagging”
• Identifying POS can be seen as a prerequisite to parsing, and/or a process in its own right
• However, there are some differences:
  – Parsers often work with the simplest set of word categories, subcategorized by feature (or attribute-value) schemes
  – Indeed the parsing procedure may contribute to the disambiguation of homonyms

POS tagging
• POS tagging, per se, aims to identify word-category information somewhat independently of sentence structure …
• … and typically uses rather different means
• POS tags are generally shown as labels on words:
  John/NPN saw/VB the/AT book/NCN on/PRP the/AT table/NN ./PNC
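The word/TAG notation is easy to read back into a program. A minimal sketch, assuming tokens are separated by whitespace and the tag follows the last slash; the function name `parse_tagged` is made up for illustration:

```python
def parse_tagged(text):
    """Split 'word/TAG' tokens into (word, tag) pairs."""
    pairs = []
    for token in text.split():
        # rpartition splits on the LAST slash, so a word that itself
        # contains a slash keeps it
        word, _, tag = token.rpartition("/")
        pairs.append((word, tag))
    return pairs


tagged = parse_tagged("John/NPN saw/VB the/AT book/NCN on/PRP the/AT table/NN ./PNC")
words = [word for word, _ in tagged]
tags = [tag for _, tag in tagged]
```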

What is a tagger?
• Lack of distinction between …
  – Software which allows you to create something you can then use to tag input text, e.g. “Brill’s tagger”
  – The result of running such software, e.g. a tagger for English (based on the such-and-such corpus)
• Taggers (even rule-based ones) are almost invariably trained on a given corpus
• “Tagging” is usually understood to mean “POS tagging”, but you can have other types of tags (e.g. semantic tags)

Tagging vs. parsing
• Once the tagger is “trained”, the process consists of straightforward look-up, plus local context (and sometimes morphology)
• Tagger will attempt to assign a tag to unknown words, and to disambiguate homographs
• “Tagset” (list of categories) is usually larger, with more distinctions, than the categories used in parsing

Tagset
• Parsing usually has basic word-categories, whereas tagging makes more subtle distinctions
• E.g. noun sg vs pl vs genitive, common vs proper, +is, +has, … and all combinations
• Parser uses maybe 12-20 categories, tagger may use 60-100

Simple taggers
• Default tagger has one tag per word, and assigns it on the basis of dictionary lookup
  – Tags may indicate ambiguity but not resolve it, e.g. NVB for noun-or-verb
• Words may be assigned different tags with associated probabilities
  – Tagger will assign the most probable tag unless there is some way to identify when a less probable tag is in fact correct
• Tag sequences may be defined by regular expressions, and assigned probabilities (including 0 for illegal sequences – negative rules)
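The two lookup strategies can be put side by side in a few lines. This is only a sketch: the lexicon, its probabilities, the `UNK` tag for unknown words and the `-or-` joining convention are all invented for illustration.

```python
# Hypothetical toy lexicon: word -> {tag: probability}. The numbers are made up.
LEXICON = {
    "the": {"AT": 1.0},
    "run": {"VB": 0.75, "NN": 0.25},
    "book": {"NN": 0.9, "VB": 0.1},
}


def ambiguity_tag(word):
    """Flag ambiguity without resolving it, by joining every possible tag."""
    options = LEXICON.get(word, {"UNK": 1.0})
    return "-or-".join(sorted(options))


def most_probable_tag(word):
    """Resolve ambiguity by always picking the likeliest tag for the word."""
    options = LEXICON.get(word, {"UNK": 1.0})
    return max(options, key=options.get)


flagged = [ambiguity_tag(w) for w in ["the", "run"]]
resolved = [most_probable_tag(w) for w in ["the", "run"]]
```

Note that `resolved` tags "run" as a verb even after "the", which is exactly the kind of case where a less probable tag is in fact correct and context is needed.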

Rule-based taggers
• Earliest type of tagging: two stages
• Stage 1: look up word in lexicon to give list of potential POSs
• Stage 2: apply rules which certify or disallow tag sequences
• Rules originally handwritten; more recently Machine Learning methods can be used
• cf. transformation-based tagging, below
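The two stages can be sketched as follows. The lexicon entries and the forbidden tag pairs are hypothetical, and real systems use much richer rules than a list of banned adjacent pairs.

```python
from itertools import product

# Stage 1 data (hypothetical): each word lists its potential POSs.
LEXICON = {"the": ["AT"], "can": ["MD", "NN", "VB"], "rusts": ["NNS", "VBZ"]}

# Stage 2 data (hypothetical): adjacent tag pairs that the rules disallow.
FORBIDDEN = {("AT", "MD"), ("AT", "VB"), ("NN", "NNS")}


def candidate_taggings(words):
    """Yield every tag sequence allowed by the lexicon and not ruled out."""
    options = [LEXICON[w] for w in words]
    for seq in product(*options):
        if all(pair not in FORBIDDEN for pair in zip(seq, seq[1:])):
            yield seq


survivors = list(candidate_taggings(["the", "can", "rusts"]))
```

With these toy rules only one of the six candidate sequences survives. Enumerating every combination is exponential in sentence length, so this is for illustration only.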

How do they work?
• Tagger must be “trained”
• Many different techniques, but typically …
• Small “training corpus” hand-tagged
• Tagging rules learned automatically
• Rules define most likely sequence of tags
• Rules based on
  – Internal evidence (morphology)
  – External evidence (context)
  – Probabilities

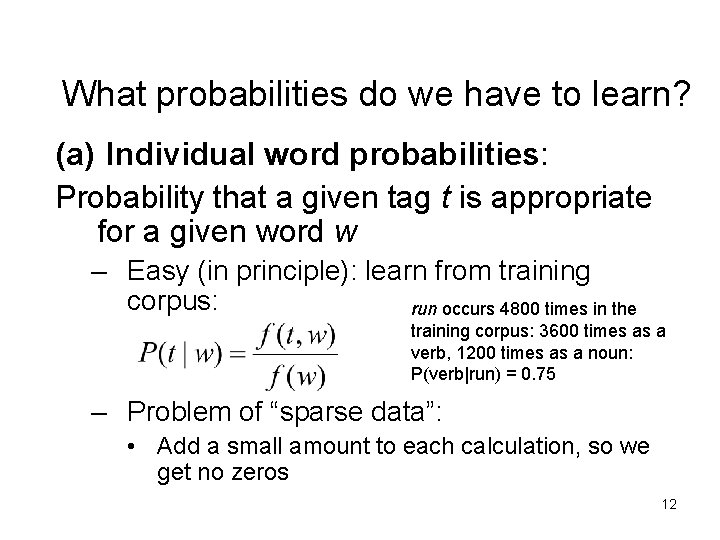

What probabilities do we have to learn?
(a) Individual word probabilities: probability that a given tag t is appropriate for a given word w
  – Easy (in principle): learn from training corpus: run occurs 4800 times in the training corpus, 3600 times as a verb and 1200 times as a noun: P(verb|run) = 3600/4800 = 0.75
  – Problem of “sparse data”:
    • Add a small amount to each count (smoothing), so we get no zeros
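The run example can be checked directly. The sketch below assumes the simplest form of smoothing, adding a constant k to every count; the slide does not say which scheme is meant, and the three-tag tagset is invented for the example.

```python
from collections import Counter


def tag_probabilities(tag_counts, tagset, k=0.0):
    """Estimate P(tag | word) from counts, adding k to every count."""
    total = sum(tag_counts.values()) + k * len(tagset)
    return {t: (tag_counts.get(t, 0) + k) / total for t in tagset}


# Counts for "run" from the slide; "adjective" was never seen with it.
counts = Counter({"verb": 3600, "noun": 1200})
tagset = ["verb", "noun", "adjective"]

unsmoothed = tag_probabilities(counts, tagset)       # adjective gets exactly 0
smoothed = tag_probabilities(counts, tagset, k=1.0)  # no zeros any more
```

Here `unsmoothed["verb"]` is 0.75, as on the slide, while `smoothed` gives the unseen tag a small non-zero share at the cost of shaving a little off the others.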

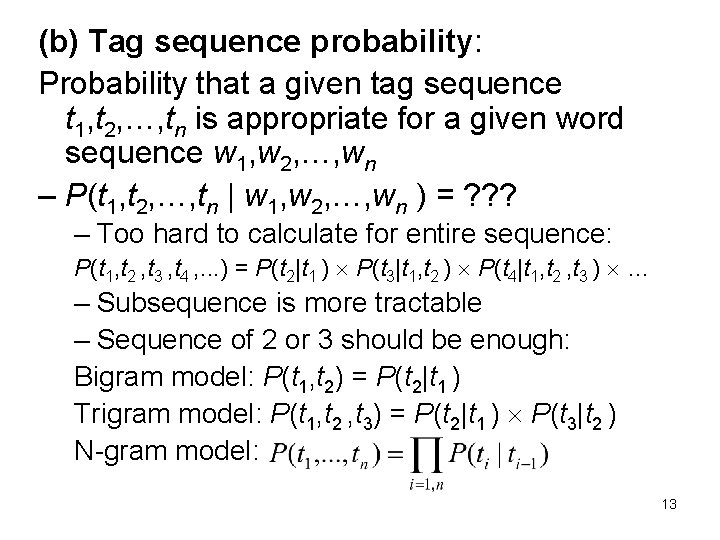

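(b) Tag sequence probability: probability that a given tag sequence $t_1, t_2, \dots, t_n$ is appropriate for a given word sequence $w_1, w_2, \dots, w_n$
  – $P(t_1, t_2, \dots, t_n \mid w_1, w_2, \dots, w_n) = \;???$
  – Too hard to calculate for the entire sequence: $P(t_1, t_2, t_3, t_4, \dots) = P(t_1)\,P(t_2 \mid t_1)\,P(t_3 \mid t_1, t_2)\,P(t_4 \mid t_1, t_2, t_3) \cdots$
  – Subsequence is more tractable
  – Sequence of 2 or 3 should be enough:
    Bigram model: $P(t_i \mid t_1, \dots, t_{i-1}) \approx P(t_i \mid t_{i-1})$, so e.g. $P(t_1, t_2, t_3) \approx P(t_1)\,P(t_2 \mid t_1)\,P(t_3 \mid t_2)$
    Trigram model: $P(t_i \mid t_1, \dots, t_{i-1}) \approx P(t_i \mid t_{i-2}, t_{i-1})$
    N-gram model: $P(t_i \mid t_1, \dots, t_{i-1}) \approx P(t_i \mid t_{i-N+1}, \dots, t_{i-1})$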
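A bigram estimate of tag-sequence probability might look like this. It is a sketch under assumptions: a tiny made-up corpus of tag sequences, a start marker `<s>` standing in for the position before the first tag, and no smoothing.

```python
from collections import Counter


def train_bigrams(tag_sequences):
    """Count tag contexts and tag pairs, with '<s>' marking sentence start."""
    contexts, bigrams = Counter(), Counter()
    for tags in tag_sequences:
        padded = ["<s>"] + list(tags)
        contexts.update(padded[:-1])            # every tag that has a successor
        bigrams.update(zip(padded, padded[1:]))
    return contexts, bigrams


def sequence_probability(tags, contexts, bigrams):
    """Multiply P(t_i | t_{i-1}) along the sequence (bigram approximation)."""
    prob, prev = 1.0, "<s>"
    for tag in tags:
        if contexts[prev] == 0:
            return 0.0                           # context never seen in training
        prob *= bigrams[(prev, tag)] / contexts[prev]
        prev = tag
    return prob


corpus = [["AT", "NN", "VB"], ["AT", "NN"], ["NN", "VB"]]
contexts, bigrams = train_bigrams(corpus)
p_seen = sequence_probability(["AT", "NN", "VB"], contexts, bigrams)
p_unseen = sequence_probability(["VB", "AT"], contexts, bigrams)
```

The unseen sequence comes out as exactly zero, which is the sparse-data problem again, now at the level of tag pairs.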

More complex taggers
• Bigram taggers assign tags on the basis of sequences of two words (usually assigning a tag to word n on the basis of word n−1)
• An nth-order tagger assigns tags on the basis of sequences of n words
• As the value of n increases, so does the complexity of the statistical calculation involved in comparing probability combinations

Stochastic taggers
• Nowadays, pretty much all taggers are statistics-based and have been since the 1980s (or even earlier: some primitive algorithms were already published in the 1960s and 1970s)
• Most common is based on Hidden Markov Models (also found in speech processing, etc.)

(Hidden) Markov Models
• Probability calculations imply Markov models: we assume that P(t|w) is dependent only on the (or, a sequence of) previous word(s)
• (Informally) Markov models are the class of probabilistic models that assume we can predict the future without taking too much account of the past
• Markov chains can be modelled by finite state automata: the next state in a Markov chain is always dependent on some finite history of previous states
• Model is “hidden” when the states themselves are not directly observed: in tagging we see only the words, and the tags that produced them have to be inferred
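The slides do not say how the best tag sequence is actually found once the probabilities are known. The usual choice is the Viterbi algorithm, used here as an assumption. All probabilities below are invented toy values, and the sketch multiplies raw probabilities where a practical tagger would work with logarithms.

```python
def viterbi(words, tags, start_p, trans_p, emit_p):
    """Return the most probable tag sequence for words under a bigram HMM."""
    # best[i][t] = (probability of the best path ending in tag t at word i,
    #               previous tag on that path)
    best = [{t: (start_p[t] * emit_p[t].get(words[0], 0.0), None) for t in tags}]
    for word in words[1:]:
        column = {}
        for t in tags:
            column[t] = max(
                (best[-1][p][0] * trans_p[p][t] * emit_p[t].get(word, 0.0), p)
                for p in tags
            )
        best.append(column)
    # Follow the back-pointers from the best final tag.
    path = [max(tags, key=lambda t: best[-1][t][0])]
    for column in reversed(best[1:]):
        path.append(column[path[-1]][1])
    return list(reversed(path))


TAGS = ["AT", "NN", "VB"]
START = {"AT": 0.6, "NN": 0.3, "VB": 0.1}
TRANS = {
    "AT": {"AT": 0.0, "NN": 0.9, "VB": 0.1},
    "NN": {"AT": 0.1, "NN": 0.3, "VB": 0.6},
    "VB": {"AT": 0.5, "NN": 0.4, "VB": 0.1},
}
EMIT = {
    "AT": {"the": 1.0},
    "NN": {"run": 0.3, "dog": 0.7},
    "VB": {"run": 0.8, "runs": 0.2},
}

after_article = viterbi(["the", "run"], TAGS, START, TRANS, EMIT)
after_noun = viterbi(["the", "dog", "run"], TAGS, START, TRANS, EMIT)
```

In this toy model "run" is more likely to be emitted by VB than by NN, yet after "the" the transition probabilities tip the balance towards NN.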

Supervised vs unsupervised training
• Learning tagging rules from a marked-up corpus (supervised learning) gives very good results (98% accuracy)
  – Though assigning the most probable tag, and “proper noun” to unknowns, will give 90%
• But it depends on having a corpus already marked up to a high quality
• If this is not available, we have to try something else:
  – “forward-backward” algorithm
  – A kind of “bootstrapping” approach

Forward-backward (Baum-Welch) algorithm
• Start with initial probabilities
  – If nothing known, assume all Ps equal
• Adjust the individual probabilities so as to increase the overall probability
• Re-estimate the probabilities on the basis of the last iteration
• Continue until convergence
  – i.e. there is no improvement, or improvement is below a threshold
• All this can be done automatically
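The loop described above can be sketched for a tiny two-state model and a single observation sequence. Everything here is illustrative: the state names, symbols, starting values and threshold are made up, and there is no scaling, so it only suits short sequences. The starting values are deliberately slightly unequal, because with perfectly equal values the two states would stay indistinguishable in this toy setting.

```python
def forward(obs, states, start, trans, emit):
    """alpha[t][s] = P(o_1..o_t, state at t is s)."""
    alpha = [{s: start[s] * emit[s][obs[0]] for s in states}]
    for o in obs[1:]:
        prev = alpha[-1]
        alpha.append({s: emit[s][o] * sum(prev[r] * trans[r][s] for r in states)
                      for s in states})
    return alpha


def backward(obs, states, trans, emit):
    """beta[t][s] = P(o_{t+1}..o_T | state at t is s)."""
    beta = [{s: 1.0 for s in states}]
    for o in reversed(obs[1:]):
        nxt = beta[0]
        beta.insert(0, {s: sum(trans[s][r] * emit[r][o] * nxt[r] for r in states)
                        for s in states})
    return beta


def baum_welch_step(obs, states, symbols, start, trans, emit):
    """One re-estimation pass; returns new parameters and the OLD likelihood."""
    alpha = forward(obs, states, start, trans, emit)
    beta = backward(obs, states, trans, emit)
    likelihood = sum(alpha[-1].values())
    # gamma[t][s]: probability of being in state s at time t, given all of obs
    gamma = [{s: alpha[t][s] * beta[t][s] / likelihood for s in states}
             for t in range(len(obs))]
    # xi[t][(r, s)]: probability of moving from r at time t to s at time t+1
    xi = [{(r, s): alpha[t][r] * trans[r][s] * emit[s][obs[t + 1]]
           * beta[t + 1][s] / likelihood
           for r in states for s in states}
          for t in range(len(obs) - 1)]
    new_start = dict(gamma[0])
    new_trans = {r: {s: sum(x[(r, s)] for x in xi) / sum(g[r] for g in gamma[:-1])
                     for s in states} for r in states}
    new_emit = {s: {v: sum(g[s] for g, o in zip(gamma, obs) if o == v)
                    / sum(g[s] for g in gamma)
                    for v in symbols} for s in states}
    return new_start, new_trans, new_emit, likelihood


states, symbols = ["A", "B"], ["x", "y"]
obs = ["x", "y", "y", "x", "y", "x", "x", "y", "y", "y"]
start = {"A": 0.6, "B": 0.4}
trans = {"A": {"A": 0.7, "B": 0.3}, "B": {"A": 0.4, "B": 0.6}}
emit = {"A": {"x": 0.6, "y": 0.4}, "B": {"x": 0.3, "y": 0.7}}

history = []  # likelihood of obs before each re-estimation
for _ in range(50):
    start, trans, emit, likelihood = baum_welch_step(
        obs, states, symbols, start, trans, emit)
    improvement = likelihood - (history[-1] if history else 0.0)
    history.append(likelihood)
    if improvement < 1e-9:  # converged: improvement below threshold
        break
```

The values in `history` should never go down from one iteration to the next, which is the "increase the overall probability" step in action.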

Transformation-based tagging
• Eric Brill (1993)
• Start from an initial tagging, and apply a series of transformations
• Transformations are learned as well, from the training data
• Captures the tagging data in far fewer parameters than stochastic models
• The transformations learned (often) have linguistic “reality”

Transformation-based tagging
• Three stages:
  – Lexical look-up
  – Lexical rule application for unknown words
  – Contextual rule application to correct mis-tags
• Painting analogy

Transformation-based learning
• Change tag a to b when:
  – Internal evidence (morphology)
  – Contextual evidence
    • One or more of the preceding/following words has a specific tag
    • One or more of the preceding/following words is a specific word
    • One or more of the preceding/following words has a certain form
• Order of rules is important
  – Rules can change a correct tag into an incorrect tag, so another rule might correct that “mistake”

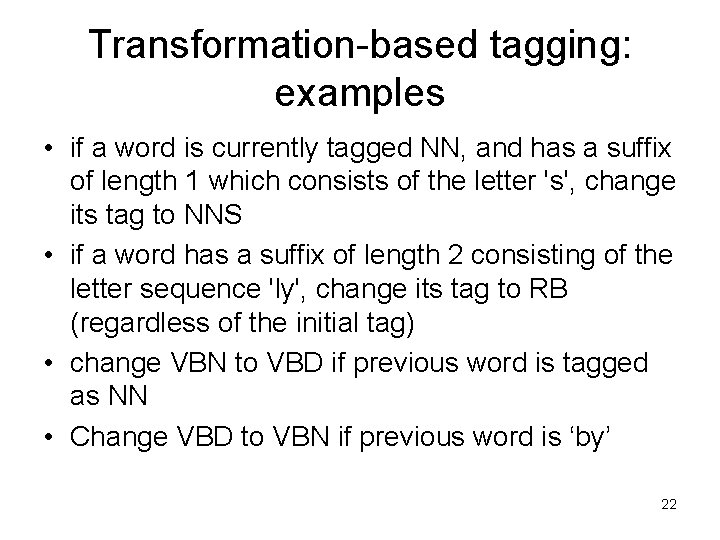

Transformation-based tagging: examples
• If a word is currently tagged NN, and has a suffix of length 1 which consists of the letter 's', change its tag to NNS
• If a word has a suffix of length 2 consisting of the letter sequence 'ly', change its tag to RB (regardless of the initial tag)
• Change VBN to VBD if the previous word is tagged as NN
• Change VBD to VBN if the next word is ‘by’
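The two suffix rules translate almost word for word into code. A minimal sketch; the function name is made up, and applying the rules in the order listed is an assumption.

```python
def apply_lexical_rules(word, tag):
    """Apply the two suffix-based rules from the slide, in the order listed."""
    # Rule 1: NN with a one-letter suffix 's' becomes NNS.
    if tag == "NN" and word.endswith("s"):
        tag = "NNS"
    # Rule 2: any word ending in 'ly' becomes RB, whatever its current tag.
    if word.endswith("ly"):
        tag = "RB"
    return tag


plural = apply_lexical_rules("dogs", "NN")
adverb = apply_lexical_rules("quickly", "NN")
unchanged = apply_lexical_rules("dog", "NN")
overgeneral = apply_lexical_rules("family", "NN")
```

The last call tags "family" as RB, which is wrong; this is the sort of mistake that later rules in the sequence are expected to correct.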

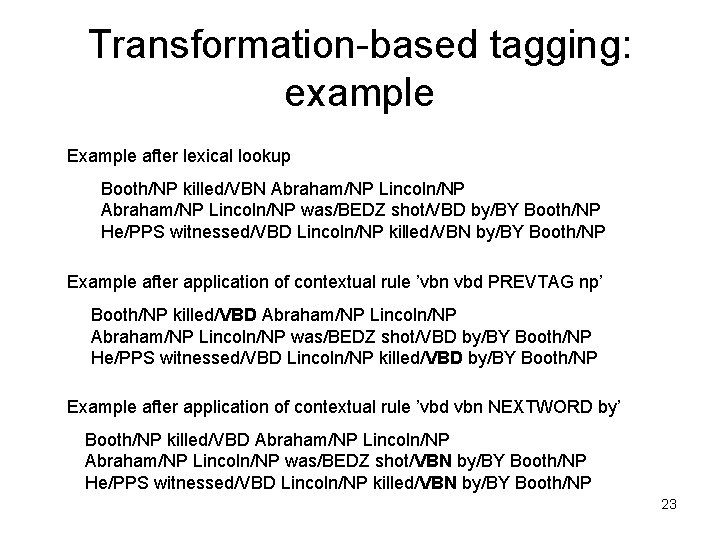

Transformation-based tagging: example
Example after lexical lookup:
  Booth/NP killed/VBN Abraham/NP Lincoln/NP was/BEDZ shot/VBD by/BY Booth/NP He/PPS witnessed/VBD Lincoln/NP killed/VBN by/BY Booth/NP
Example after application of contextual rule ‘vbn vbd PREVTAG np’:
  Booth/NP killed/VBD Abraham/NP Lincoln/NP was/BEDZ shot/VBD by/BY Booth/NP He/PPS witnessed/VBD Lincoln/NP killed/VBD by/BY Booth/NP
Example after application of contextual rule ‘vbd vbn NEXTWORD by’:
  Booth/NP killed/VBD Abraham/NP Lincoln/NP was/BEDZ shot/VBN by/BY Booth/NP He/PPS witnessed/VBD Lincoln/NP killed/VBN by/BY Booth/NP
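The two contextual rules can be replayed on the example. This is a sketch, not Brill's implementation: the helper names are invented, tags are written in upper case throughout, and the tagged text is treated as one token sequence exactly as it appears on the slide.

```python
def parse(text):
    """Turn 'word/TAG word/TAG ...' into a list of (word, tag) pairs."""
    return [tuple(token.rsplit("/", 1)) for token in text.split()]


def show(tagged):
    return " ".join(f"{word}/{tag}" for word, tag in tagged)


def apply_rule(tagged, from_tag, to_tag, condition, value):
    """Change from_tag to to_tag wherever the contextual condition holds."""
    result = list(tagged)
    for i, (word, tag) in enumerate(tagged):
        if tag != from_tag:
            continue
        # Conditions are checked against the input tagging, not the partly
        # rewritten result; for this example the choice makes no difference.
        if condition == "PREVTAG" and i > 0 and tagged[i - 1][1] == value:
            result[i] = (word, to_tag)
        elif condition == "NEXTWORD" and i + 1 < len(tagged) and tagged[i + 1][0] == value:
            result[i] = (word, to_tag)
    return result


lookup = parse(
    "Booth/NP killed/VBN Abraham/NP Lincoln/NP was/BEDZ shot/VBD by/BY Booth/NP "
    "He/PPS witnessed/VBD Lincoln/NP killed/VBN by/BY Booth/NP"
)
step1 = apply_rule(lookup, "VBN", "VBD", "PREVTAG", "NP")
step2 = apply_rule(step1, "VBD", "VBN", "NEXTWORD", "by")
```

The first rule fixes the first "killed" but wrongly changes the last one; the second rule then repairs that and also corrects "shot". This shows why rule order matters.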

Tagging – final word
• Many taggers now available for download
• Sometimes not clear whether “tagger” means
  – Software enabling you to build a tagger given a corpus
  – An already built tagger for a given language
• Because a given tagger (2nd sense) will have been trained on some corpus, it will be biased towards that (kind of) corpus
  – Question of goodness of match between original training corpus and material you want to use the tagger on
