MULTICORE PROGRAMMING Course website: http://tbrown.pro/cs798 Proving Linearizability Lecture 4 Trevor Brown

ANNOUNCEMENTS • Reminder: A2 due Wednesday before class • If a non-trivial fraction of the class needs more time, we can extend this • (But, remember, we can only do this for 4-5 assignments…)

LAST TIME • We described a stack • We argued that it offers lock-free progress • We did not prove linearizability… • Let’s do that now! • This is the main proof / theory in this course (AC/HS crosslisting) • Hopefully explanation is helpful for A2!

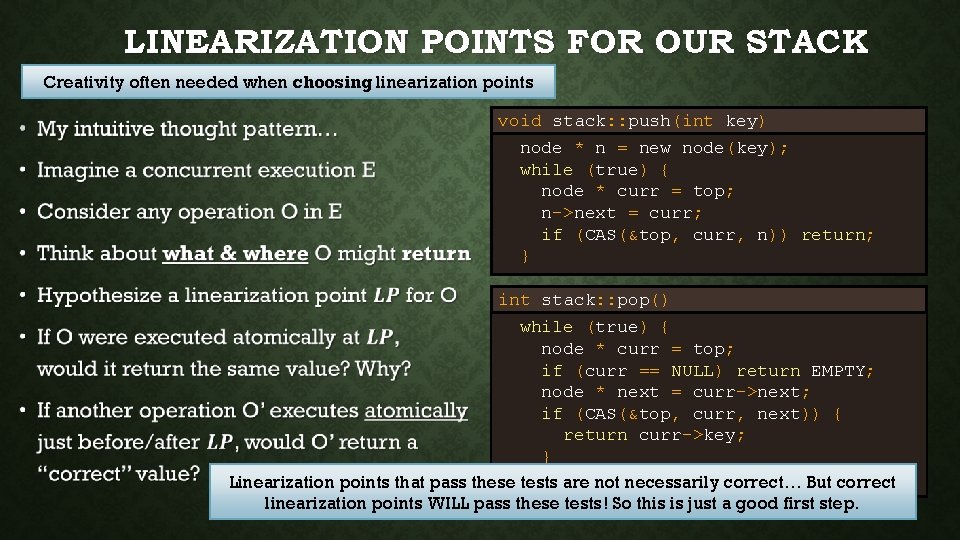

LINEARIZATION POINTS FOR OUR STACK • Creativity often needed when choosing linearization points

    void stack::push(int key) {
        node * n = new node(key);
        while (true) {
            node * curr = top;
            n->next = curr;
            if (CAS(&top, curr, n)) return;
        }
    }

    int stack::pop() {
        while (true) {
            node * curr = top;
            if (curr == NULL) return EMPTY;
            node * next = curr->next;
            if (CAS(&top, curr, next)) {
                return curr->key;
            }
        }
    }

Linearization points that pass these tests are not necessarily correct… But correct linearization points WILL pass these tests! So this is just a good first step.

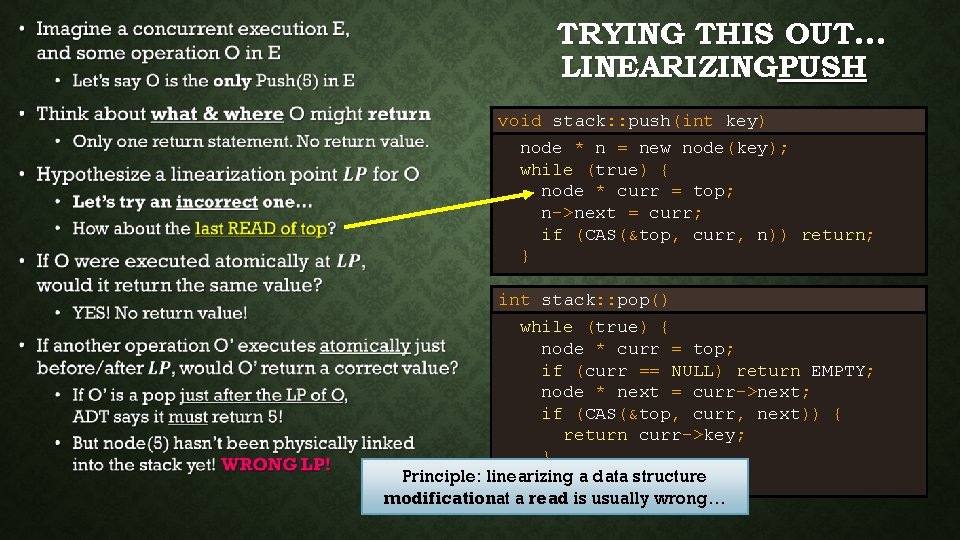

TRYING THIS OUT… LINEARIZING PUSH

    void stack::push(int key) {
        node * n = new node(key);
        while (true) {
            node * curr = top;
            n->next = curr;
            if (CAS(&top, curr, n)) return;
        }
    }

    int stack::pop() {
        while (true) {
            node * curr = top;
            if (curr == NULL) return EMPTY;
            node * next = curr->next;
            if (CAS(&top, curr, next)) {
                return curr->key;
            }
        }
    }

Principle: linearizing a data structure modification at a read is usually wrong…

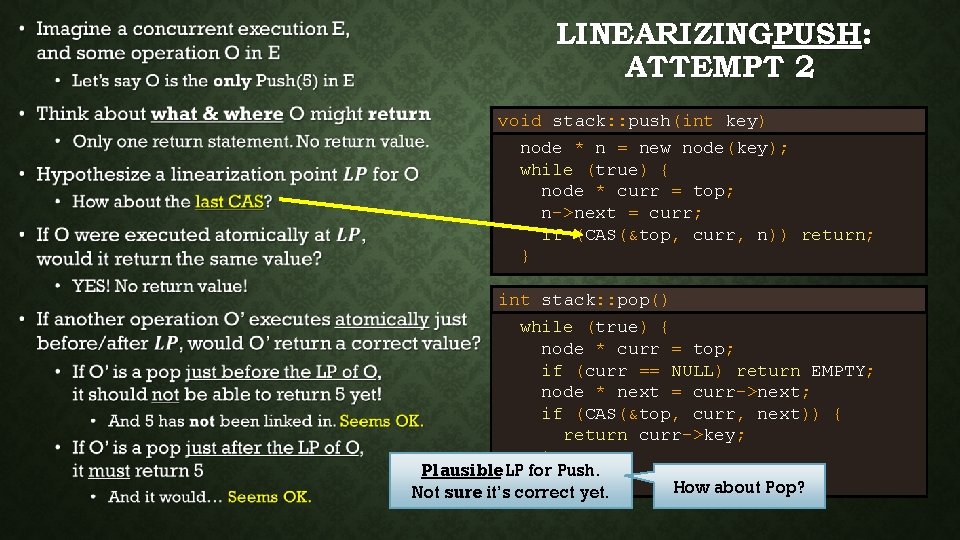

LINEARIZING PUSH: ATTEMPT 2

    void stack::push(int key) {
        node * n = new node(key);
        while (true) {
            node * curr = top;
            n->next = curr;
            if (CAS(&top, curr, n)) return;  // candidate LP for Push: the successful CAS
        }
    }

    int stack::pop() {
        while (true) {
            node * curr = top;
            if (curr == NULL) return EMPTY;
            node * next = curr->next;
            if (CAS(&top, curr, next)) {
                return curr->key;
            }
        }
    }

Plausible LP for Push. How about Pop? Not sure it’s correct yet.

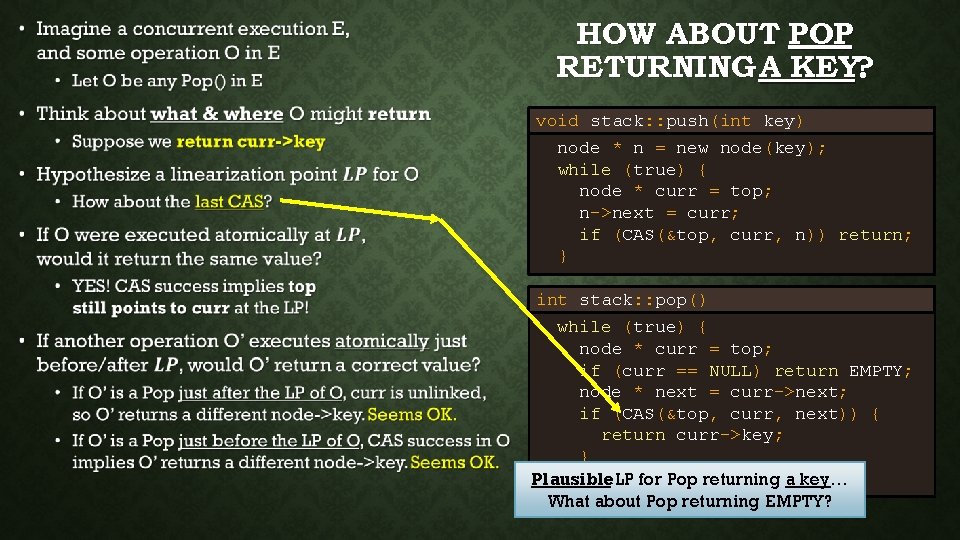

HOW ABOUT POP RETURNING A KEY?

    void stack::push(int key) {
        node * n = new node(key);
        while (true) {
            node * curr = top;
            n->next = curr;
            if (CAS(&top, curr, n)) return;
        }
    }

    int stack::pop() {
        while (true) {
            node * curr = top;
            if (curr == NULL) return EMPTY;
            node * next = curr->next;
            if (CAS(&top, curr, next)) {  // candidate LP for Pop returning a key: the successful CAS
                return curr->key;
            }
        }
    }

Plausible LP for Pop returning a key… What about Pop returning EMPTY?

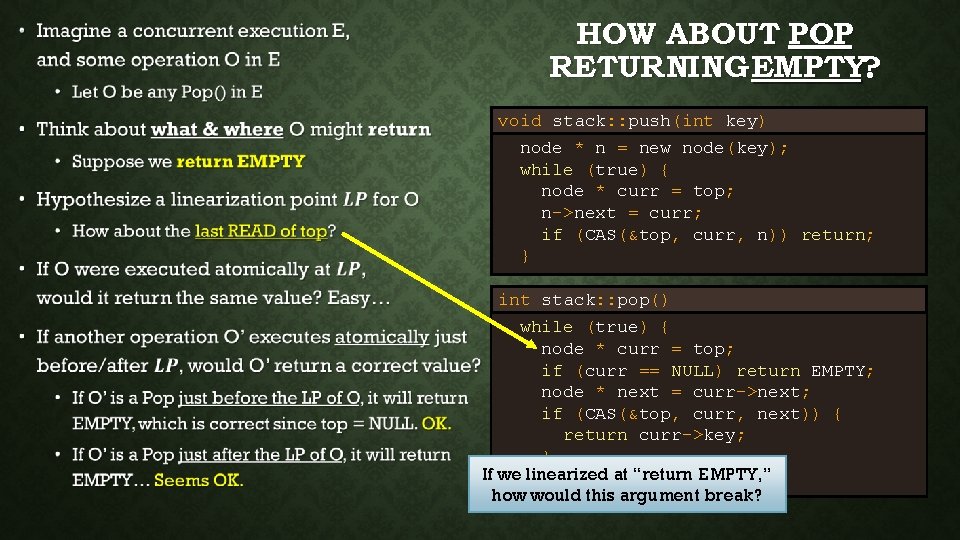

HOW ABOUT POP RETURNING EMPTY?

    void stack::push(int key) {
        node * n = new node(key);
        while (true) {
            node * curr = top;
            n->next = curr;
            if (CAS(&top, curr, n)) return;
        }
    }

    int stack::pop() {
        while (true) {
            node * curr = top;  // candidate LP for Pop returning EMPTY: the last read of top
            if (curr == NULL) return EMPTY;
            node * next = curr->next;
            if (CAS(&top, curr, next)) {
                return curr->key;
            }
        }
    }

If we linearized at “return EMPTY,” how would this argument break?

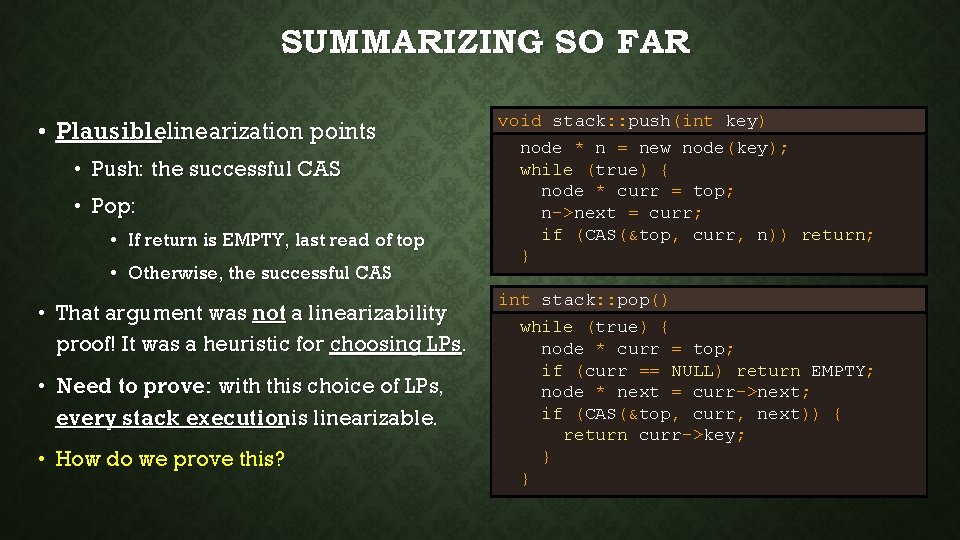

SUMMARIZING SO FAR
• Plausible linearization points
  • Push: the successful CAS
  • Pop:
    • If return is EMPTY, last read of top
    • Otherwise, the successful CAS
• That argument was not a linearizability proof! It was a heuristic for choosing LPs.
• Need to prove: with this choice of LPs, every stack execution is linearizable.
• How do we prove this?

    void stack::push(int key) {
        node * n = new node(key);
        while (true) {
            node * curr = top;
            n->next = curr;
            if (CAS(&top, curr, n)) return;
        }
    }

    int stack::pop() {
        while (true) {
            node * curr = top;
            if (curr == NULL) return EMPTY;
            node * next = curr->next;
            if (CAS(&top, curr, next)) {
                return curr->key;
            }
        }
    }
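The slide code can be sketched as compilable C++ with the chosen LPs marked in comments. This is a minimal sketch under assumptions, not the course's reference code: `CAS` is mapped to `std::atomic::compare_exchange_strong`, the value of `EMPTY` is an assumed sentinel, the class is called `treiber_stack` only to avoid clashing with `std::stack`, and popped nodes are deliberately leaked because safe memory reclamation is outside what the slides cover.

```cpp
#include <atomic>
#include <cassert>

constexpr int EMPTY = -1;  // assumed sentinel; the slides do not give its value

struct node {
    int key;
    node* next;
    explicit node(int k) : key(k), next(nullptr) {}
};

class treiber_stack {
    std::atomic<node*> top{nullptr};

public:
    void push(int key) {
        node* n = new node(key);
        while (true) {
            node* curr = top.load();
            n->next = curr;
            // LP of Push: this CAS, on the iteration where it succeeds.
            if (top.compare_exchange_strong(curr, n)) return;
        }
    }

    int pop() {
        while (true) {
            // LP of Pop returning EMPTY: this read, when it returns nullptr.
            node* curr = top.load();
            if (curr == nullptr) return EMPTY;
            node* next = curr->next;
            // LP of Pop returning a key: this CAS, when it succeeds.
            if (top.compare_exchange_strong(curr, next)) {
                return curr->key;  // node is leaked on purpose (no reclamation)
            }
        }
    }
};
```

Both methods may be called from several threads at once; calling `push(17)`, `push(52)` and then `pop()` three times from one thread returns 52, 17 and `EMPTY`.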

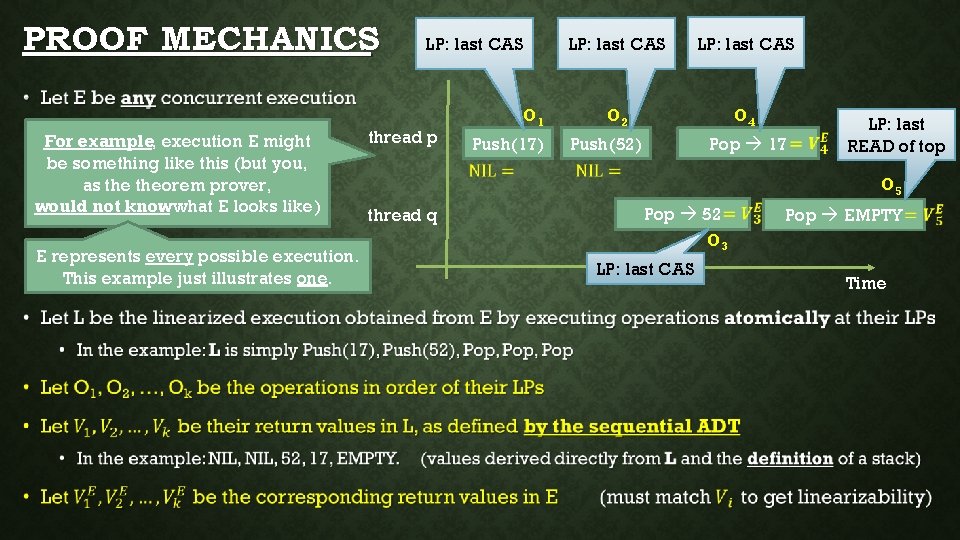

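PROOF MECHANICS • For example, execution E might be something like this (but you, as theorem prover, would not know what E looks like) • E represents every possible execution. This example just illustrates one.

[Figure: timeline of an example execution E. Threads p and q perform operations O1 to O5: Push(17), Push(52), Pop returning 52, Pop returning 17, and Pop returning EMPTY. Each operation is marked with its LP: the last CAS for each Push and for each Pop that returns a key, and the last READ of top for the Pop that returns EMPTY.]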
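For one concrete execution, the mechanics can be sketched as a small checker: order the operations by their LPs to obtain the sequential history L, replay L on an ordinary sequential stack, and compare return values. The `Op` record and the `lp_time` field are hypothetical illustration devices, and the value of `EMPTY` is an assumed sentinel. A real proof has to argue about every execution, which a checker like this cannot do.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

constexpr int EMPTY = -1;  // assumed sentinel

enum class OpKind { Push, Pop };

struct Op {
    OpKind kind;
    int arg;      // key pushed (ignored for Pop)
    int ret;      // value returned in E (ignored for Push)
    int lp_time;  // hypothetical timestamp of the operation's LP
};

// Builds L by sorting the operations of E by LP, replays L on a sequential
// stack, and reports whether every Pop returned in E what it returns in L.
bool matches_sequential_stack(std::vector<Op> ops) {
    std::sort(ops.begin(), ops.end(),
              [](const Op& a, const Op& b) { return a.lp_time < b.lp_time; });
    std::vector<int> model;  // sequential stack; back() is the top
    for (const Op& op : ops) {
        if (op.kind == OpKind::Push) {
            model.push_back(op.arg);
        } else {
            int expected = EMPTY;
            if (!model.empty()) {
                expected = model.back();
                model.pop_back();
            }
            if (op.ret != expected) return false;
        }
    }
    return true;
}
```

With LPs ordered Push(17), Push(52), Pop, Pop, Pop, the checker accepts the returns 52, 17, EMPTY and rejects a history in which the first Pop returns 17.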

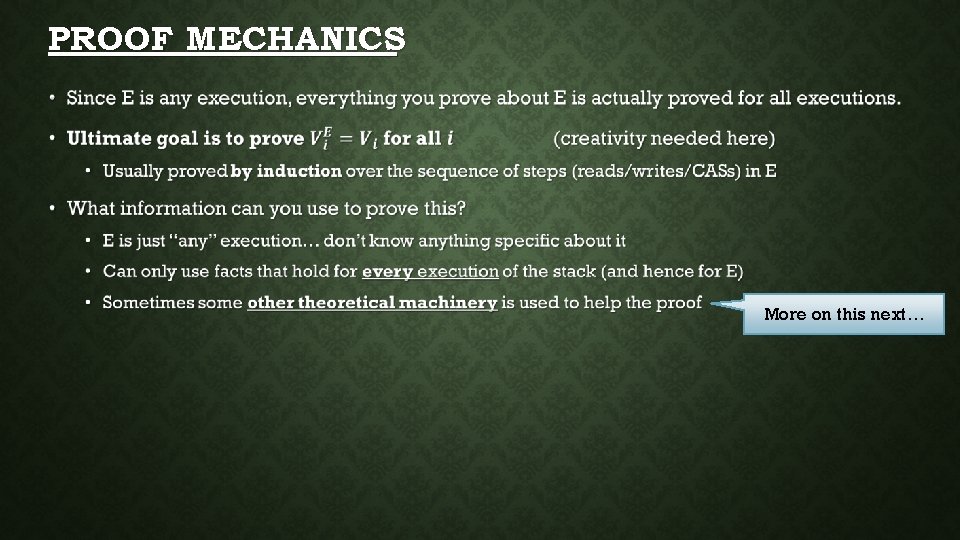

PROOF MECHANICS • More on this next…

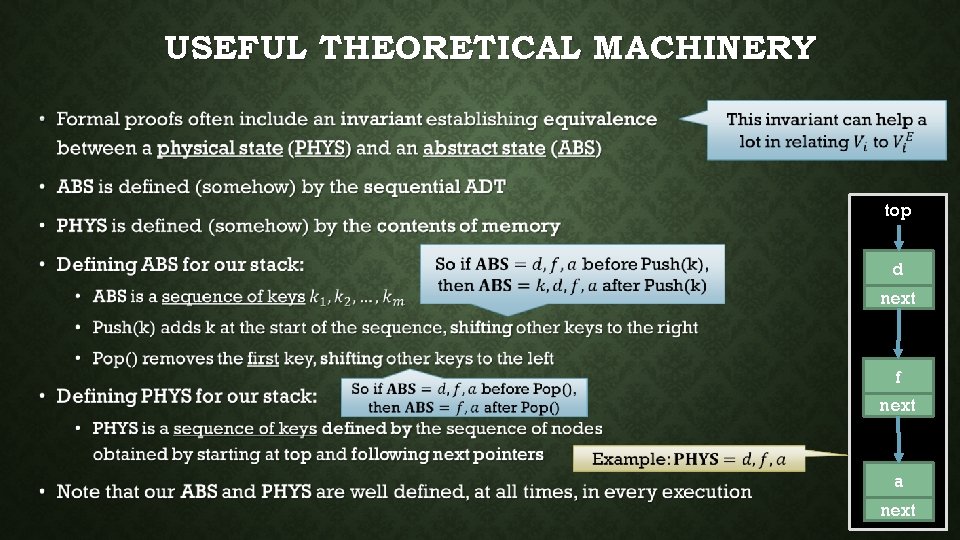

USEFUL THEORETICAL MACHINERY

[Figure: linked list in which top points to node d, followed by nodes f and a via next pointers.]

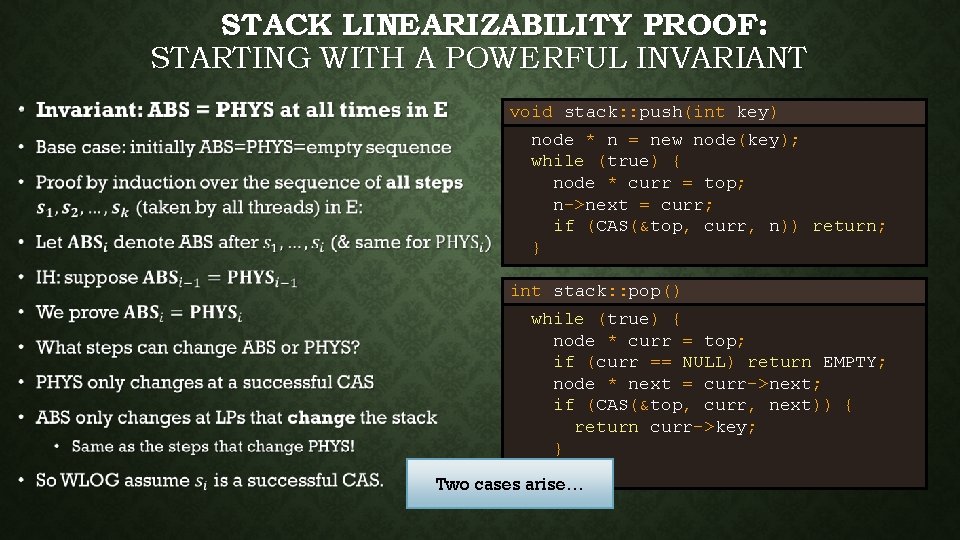

STACK LINEARIZABILITY PROOF: STARTING WITH A POWERFUL INVARIANT

    void stack::push(int key) {
        node * n = new node(key);
        while (true) {
            node * curr = top;
            n->next = curr;
            if (CAS(&top, curr, n)) return;
        }
    }

    int stack::pop() {
        while (true) {
            node * curr = top;
            if (curr == NULL) return EMPTY;
            node * next = curr->next;
            if (CAS(&top, curr, next)) {
                return curr->key;
            }
        }
    }

Two cases arise…

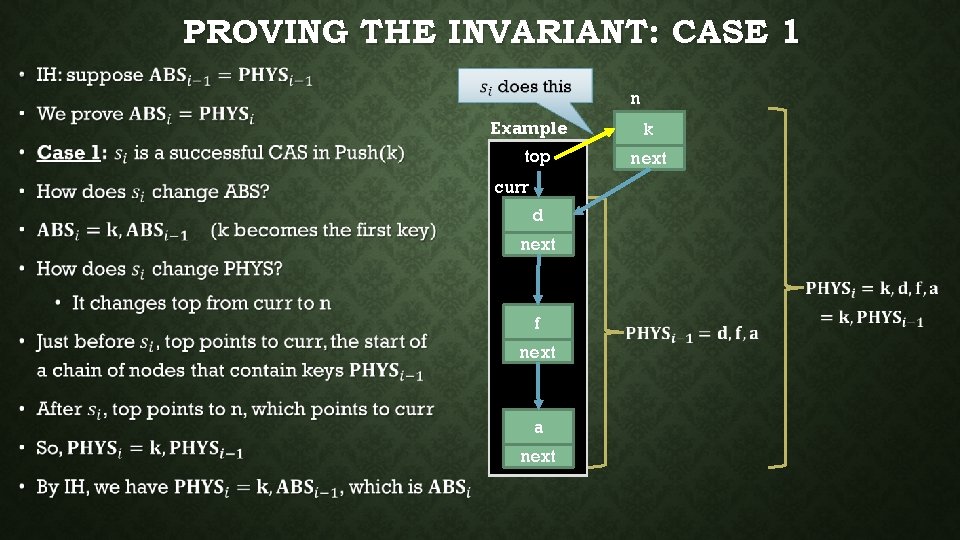

PROVING THE INVARIANT: CASE 1

[Figure: example showing top, curr, the new node n, and list nodes d, f, a, k connected by next pointers.]

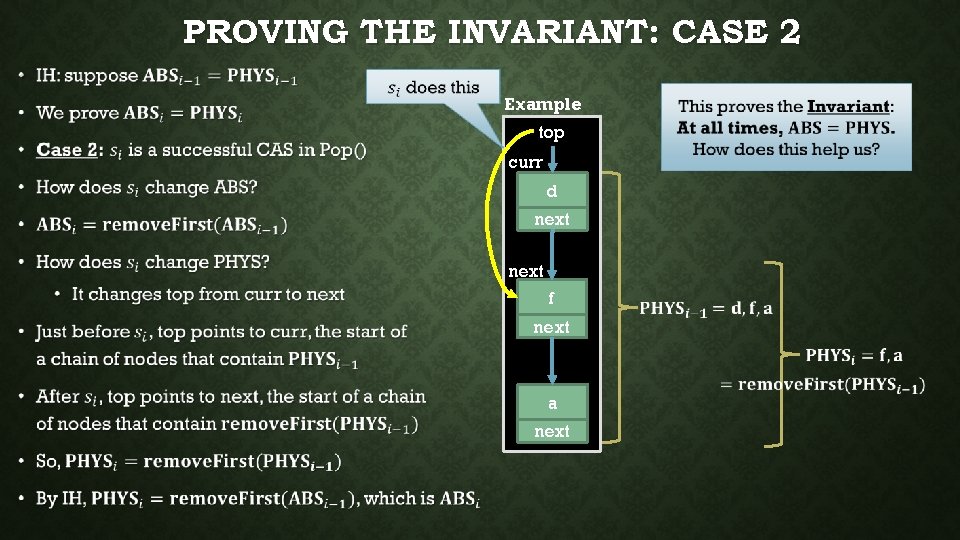

PROVING THE INVARIANT: CASE 2

[Figure: example showing top, curr, and list nodes d, f, a connected by next pointers.]

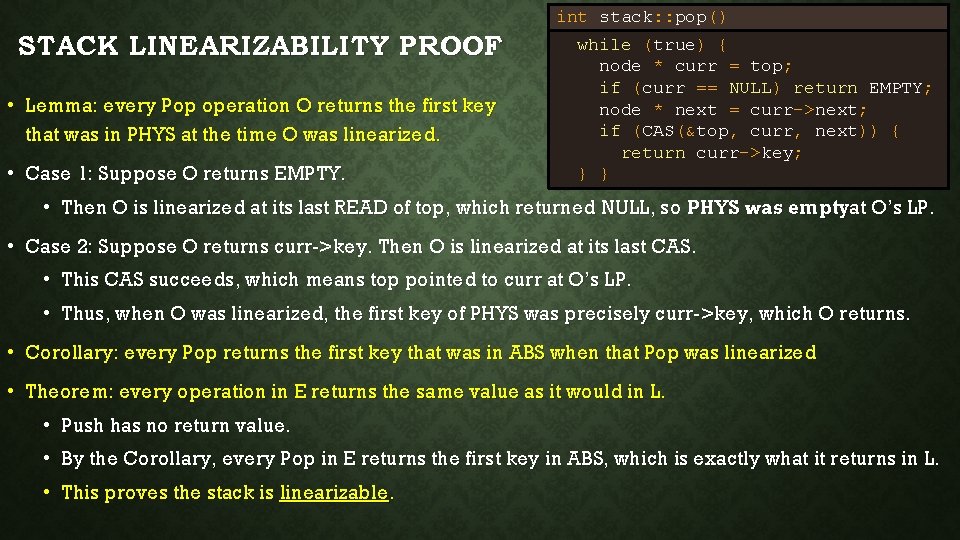

STACK LINEARIZABILITY PROOF

    int stack::pop() {
        while (true) {
            node * curr = top;
            if (curr == NULL) return EMPTY;
            node * next = curr->next;
            if (CAS(&top, curr, next)) {
                return curr->key;
            }
        }
    }

• Lemma: every Pop operation O returns the first key that was in PHYS at the time O was linearized.
  • Case 1: Suppose O returns EMPTY.
    • Then O is linearized at its last READ of top, which returned NULL, so PHYS was empty at O’s LP.
  • Case 2: Suppose O returns curr->key. Then O is linearized at its last CAS.
    • This CAS succeeds, which means top pointed to curr at O’s LP.
    • Thus, when O was linearized, the first key of PHYS was precisely curr->key, which O returns.
• Corollary: every Pop returns the first key that was in ABS when that Pop was linearized.
• Theorem: every operation in E returns the same value as it would in L.
  • Push has no return value.
  • By the Corollary, every Pop in E returns the first key in ABS, which is exactly what it returns in L.
• This proves the stack is linearizable.
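The step from the Lemma to the Corollary relies on PHYS and ABS agreeing at every LP. The sketch below checks that agreement at run time, assuming PHYS means the sequence of keys reachable from top and ABS means the abstract stack obtained by applying operations in LP order, which is how the lemma and corollary use the two names. The class `checked_stack`, its member `abs_` and the value of `EMPTY` are hypothetical; the model is unsynchronized, so this sketch is only meaningful single-threaded.

```cpp
#include <algorithm>
#include <atomic>
#include <cassert>
#include <vector>

constexpr int EMPTY = -1;  // assumed sentinel

struct node { int key; node* next; };

class checked_stack {
    std::atomic<node*> top{nullptr};
    std::vector<int> abs_;  // ABS; back() is the first (top) key

    // PHYS: keys reachable from top, first key first.
    std::vector<int> phys() const {
        std::vector<int> keys;
        for (node* p = top.load(); p != nullptr; p = p->next) keys.push_back(p->key);
        return keys;
    }
    bool invariant_holds() const {
        std::vector<int> p = phys();
        return std::equal(p.begin(), p.end(), abs_.rbegin(), abs_.rend());
    }

public:
    void push(int key) {
        node* n = new node{key, nullptr};
        while (true) {
            node* curr = top.load();
            n->next = curr;
            if (top.compare_exchange_strong(curr, n)) {
                abs_.push_back(key);        // apply Push to ABS at its LP
                assert(invariant_holds());  // PHYS == ABS after the LP
                return;
            }
        }
    }
    int pop() {
        while (true) {
            node* curr = top.load();
            if (curr == nullptr) {
                assert(abs_.empty());  // Case 1: PHYS empty, so ABS is empty
                return EMPTY;
            }
            node* next = curr->next;
            if (top.compare_exchange_strong(curr, next)) {
                // Case 2: the key returned is the first key of ABS.
                assert(!abs_.empty() && abs_.back() == curr->key);
                abs_.pop_back();
                assert(invariant_holds());
                return curr->key;  // node leaked on purpose
            }
        }
    }
};
```

Running any single-threaded sequence of pushes and pops through `checked_stack` trips an assertion if the two views ever diverge.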

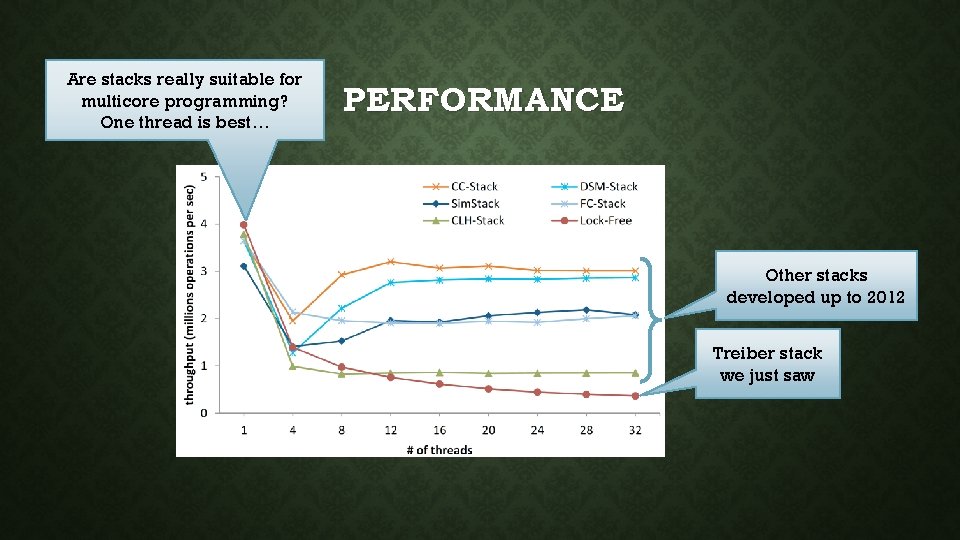

PERFORMANCE • Are stacks really suitable for multicore programming? • One thread is best…

[Figure: performance graph comparing the Treiber stack we just saw with other stacks developed up to 2012.]

QUEUES • Like stacks, but FIFO instead of LIFO • Logical next step • Concurrent modification of two pointers (head/tail) rather than just one (stack top) • Not covering in detail (no implementation / proofs) • They don’t scale • Are they really useful? That’s worth talking about.

WHY WOULD WE WANT CONCURRENT STACKS OR QUEUES? • Suppose we have a fast concurrent queue • Do we care? • Why use a queue over something with no ordering guarantees? • Less ordering would allow more concurrency (and better performance) • Must need the order! • Can we actually use the ordering a concurrent queue provides to do anything useful?

EXAMPLE: BREADTH-FIRST SEARCH (BFS)
• Graph traversal algorithm that depends on FIFO ordered queue

    BFS(startingNode, visitFunction)
        q = new ConcurrentQueue        (replaces the sequential Queue)
        q.enqueue(startingNode)
        while q is not empty
            curr = q.dequeue()
            visitFunction(curr)
            for each neighbor n of curr
                if n has not been visited and is not in q
                    q.enqueue(n)

• Fun fact: replacing the queue with a stack yields depth-first search (DFS)
• Refactor the loop body as an operation: dequeueAndProcess. Threads execute this concurrently until q is empty.
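As a baseline for the concurrent discussion, here is a minimal sequential sketch of the pseudocode above. The adjacency-list `Graph` type and the helper `bfs_order` are assumptions made for illustration; a single `seen` flag per node stands in for the test "has not been visited and is not in q".

```cpp
#include <cassert>
#include <functional>
#include <queue>
#include <vector>

using Graph = std::vector<std::vector<int>>;  // adjacency lists; nodes are 0..n-1

void bfs(const Graph& g, int startingNode,
         const std::function<void(int)>& visitFunction) {
    std::vector<bool> seen(g.size(), false);  // true once a node has been enqueued
    std::queue<int> q;
    q.push(startingNode);
    seen[startingNode] = true;
    while (!q.empty()) {
        int curr = q.front();
        q.pop();
        visitFunction(curr);
        for (int n : g[curr]) {
            if (!seen[n]) {  // not visited and not in q
                seen[n] = true;
                q.push(n);
            }
        }
    }
}

// Hypothetical helper: records the order in which nodes are visited.
std::vector<int> bfs_order(const Graph& g, int start) {
    std::vector<int> order;
    bfs(g, start, [&order](int v) { order.push_back(v); });
    return order;
}
```

On the 4-cycle `{{1,2},{0,3},{0,3},{1,2}}` starting at node 0, the visit order is 0, 1, 2, 3: both neighbours at distance 1 are visited before the node at distance 2.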

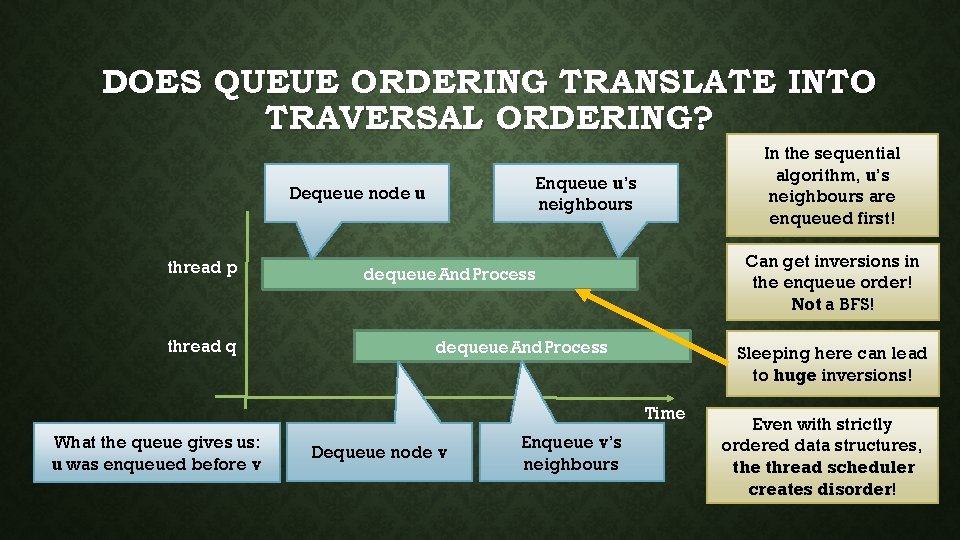

DOES QUEUE ORDERING TRANSLATE INTO TRAVERSAL ORDERING?

[Figure: timeline of two threads running dequeueAndProcess. Thread p dequeues node u and only later enqueues u’s neighbours; in between, thread q dequeues node v and enqueues v’s neighbours.]

• What the queue gives us: u was enqueued before v
• In the sequential algorithm, u’s neighbours are enqueued first!
• Sleeping here (after dequeuing u, before enqueuing its neighbours) can lead to huge inversions!
• Can get inversions in the enqueue order! Not a BFS!
• Even with strictly ordered data structures, the thread scheduler creates disorder!

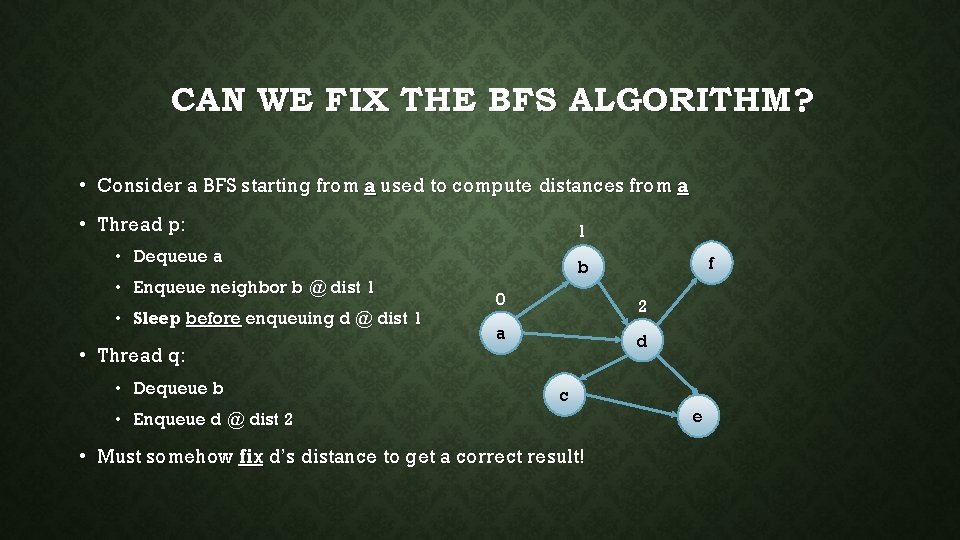

CAN WE FIX THE BFS ALGORITHM?
• Consider a BFS starting from a, used to compute distances from a
• Thread p:
  • Dequeue a
  • Enqueue neighbor b @ dist 1
  • Sleep before enqueuing d @ dist 1
• Thread q:
  • Dequeue b
  • Enqueue d @ dist 2
• Must somehow fix d’s distance to get a correct result!

[Figure: example graph on nodes a, b, c, d, e, f with distance labels 0, 1, 2.]

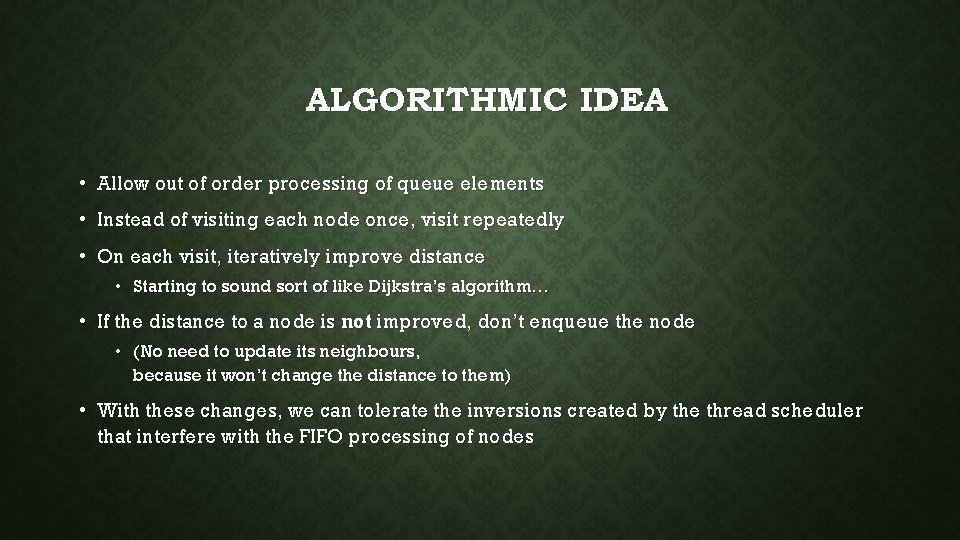

ALGORITHMIC IDEA • Allow out of order processing of queue elements • Instead of visiting each node once, visit repeatedly • On each visit, iteratively improve distance • Starting to sound sort of like Dijkstra’s algorithm… • If the distance to a node is not improved, don’t enqueue the node • (No need to update its neighbours, because it won’t change the distance to them) • With these changes, we can tolerate the inversions created by the thread scheduler that interfere with the FIFO processing of nodes
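The idea can be sketched without any threads by processing the worklist in a deliberately wrong order. The code below is an illustration under assumptions: it uses a LIFO worklist to simulate scheduler-induced inversions, unit edge weights, and a hypothetical adjacency-list `Graph` type. A node is re-enqueued only when its distance improves.

```cpp
#include <cassert>
#include <limits>
#include <vector>

using Graph = std::vector<std::vector<int>>;  // adjacency lists; nodes are 0..n-1
constexpr int INF = std::numeric_limits<int>::max();

std::vector<int> relaxed_distances(const Graph& g, int source) {
    std::vector<int> dist(g.size(), INF);
    std::vector<int> work;  // LIFO worklist: deliberately NOT FIFO
    dist[source] = 0;
    work.push_back(source);
    while (!work.empty()) {
        int curr = work.back();
        work.pop_back();
        for (int n : g[curr]) {
            int candidate = dist[curr] + 1;
            if (candidate < dist[n]) {
                // Distance improved: record it and (re)visit n later.
                dist[n] = candidate;
                work.push_back(n);
            }
            // Otherwise n is not enqueued: its neighbours cannot improve.
        }
    }
    return dist;
}
```

On the graph `{{1,2},{0,2,4},{0,1,3},{2,4},{1,3}}` with source 0, node 4 is first reached at distance 3 through node 3, then revisited and corrected to distance 2 through node 1. The final distances 0, 1, 1, 2, 2 match what a strict BFS computes.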

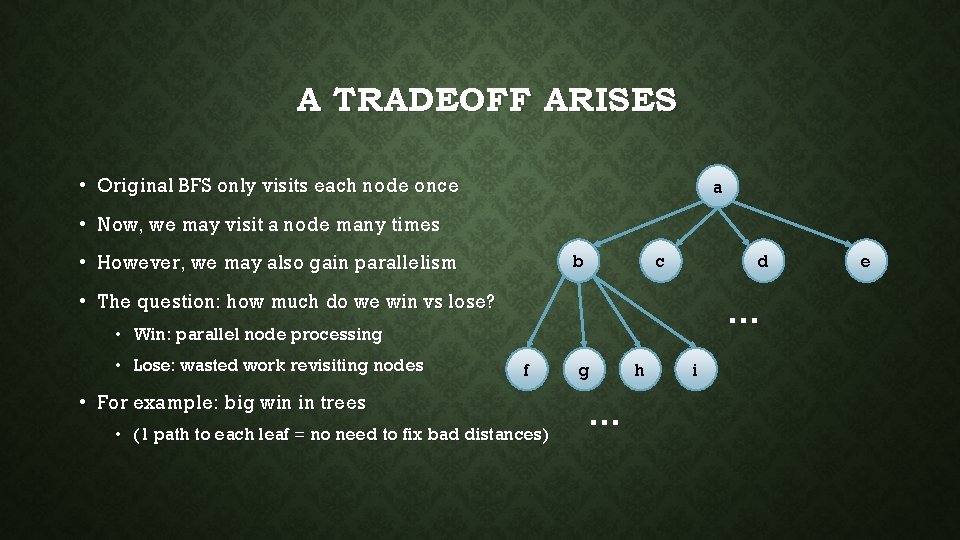

A TRADEOFF ARISES • Original BFS only visits each node once • Now, we may visit a node many times • However, we may also gain parallelism • The question: how much do we win vs lose? • Win: parallel node processing • Lose: wasted work revisiting nodes • For example: big win in trees • (1 path to each leaf = no need to fix bad distances)

[Figure: example graph with nodes a to i.]

DIJKSTRA’S ALGORITHM IS SIMILAR • Dijkstra’s algorithm already incrementally improves distances • Like BFS, but with a priority queue that sorts by distance • Instead of dequeue, it uses dequeueMin • Each node is only visited once • Because of the strict priority queue ordering • Without the strict priority ordering, nodes may need to be visited multiple times • Similar tradeoff: we can win by relaxing the ordering
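For comparison, a minimal sequential sketch of Dijkstra’s algorithm with a strict priority queue follows. It assumes non-negative edge weights and a hypothetical `WeightedGraph` adjacency-list type. Because `std::priority_queue` has no decrease-key operation, improved distances are pushed as new entries and stale entries are skipped, so each node is still processed only once.

```cpp
#include <cassert>
#include <functional>
#include <limits>
#include <queue>
#include <utility>
#include <vector>

// Adjacency lists of (neighbor, weight) pairs; weights assumed non-negative.
using WeightedGraph = std::vector<std::vector<std::pair<int, int>>>;
constexpr int INF = std::numeric_limits<int>::max();

std::vector<int> dijkstra(const WeightedGraph& g, int source) {
    using entry = std::pair<int, int>;  // (distance, node)
    std::vector<int> dist(g.size(), INF);
    std::vector<bool> done(g.size(), false);
    std::priority_queue<entry, std::vector<entry>, std::greater<entry>> pq;
    dist[source] = 0;
    pq.push(entry(0, source));
    while (!pq.empty()) {
        int curr = pq.top().second;  // dequeueMin: closest unprocessed node
        pq.pop();
        if (done[curr]) continue;    // stale entry: curr was already processed
        done[curr] = true;           // strict ordering: dist[curr] is final
        for (const auto& edge : g[curr]) {
            int n = edge.first;
            int w = edge.second;
            if (dist[curr] + w < dist[n]) {
                dist[n] = dist[curr] + w;
                pq.push(entry(dist[n], n));
            }
        }
    }
    return dist;
}
```

The `done` check is exactly what a relaxed priority queue would invalidate: if `dequeueMin` could return a node that is not the closest, `dist[curr]` might not be final and the node would need revisiting.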

ROLE OF ORDERING • Strict FIFO queues do not make it easy to implement concurrent BFS • Concurrent BFS does not need to rely on FIFO (Dijkstra’s similar) • How much should we order our data? • Strict orders kill concurrency • Random orders may perform poorly • Meta-point: concurrency is diametrically opposed to ordering. Ordering ⇒ synchronization ⇒ waiting. • Data structures with relaxed ordering • Relaxed stacks, relaxed queues, relaxed priority queues • Typically provide bounds on how out-of-order things can get

• We are very unlikely to get past here in class. • I’m including the following slides just in case…

HARNESSING DISORDER Concurrent relaxed queues

RELAXED QUEUE OBJECT • Operations: • Enqueue(e) • Adds element e to the back of the queue • Dequeue() • Removes some element from the queue and returns it • Meaningless without a quality guarantee • For example: “dequeue returns one of the k oldest keys in the queue” • (Otherwise it offers no ordering guarantees)
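The example guarantee can be made concrete with a sequential sketch of the object’s specification. This shows only the semantics, not a concurrent implementation; the class name `k_relaxed_queue`, the fixed seed and the bool-plus-out-parameter interface are all assumptions chosen for illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <deque>
#include <random>

class k_relaxed_queue {
    std::deque<int> items;  // front() is the oldest element
    std::size_t k;
    std::mt19937 rng;

public:
    explicit k_relaxed_queue(std::size_t k_, unsigned seed = 12345)
        : k(k_), rng(seed) {
        assert(k >= 1);
    }

    // Adds element e to the back of the queue.
    void enqueue(int e) { items.push_back(e); }

    // Removes one of the k oldest elements, chosen uniformly at random.
    // Returns false if the queue is empty.
    bool dequeue(int& out) {
        if (items.empty()) return false;
        std::size_t window = std::min(k, items.size());
        std::uniform_int_distribution<std::size_t> pick(0, window - 1);
        std::size_t i = pick(rng);
        out = items[i];
        items.erase(items.begin() + static_cast<std::ptrdiff_t>(i));
        return true;
    }
};
```

With k = 1 this degenerates to a strict FIFO queue; larger k permits more reordering, which is the slack a concurrent implementation can exploit.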

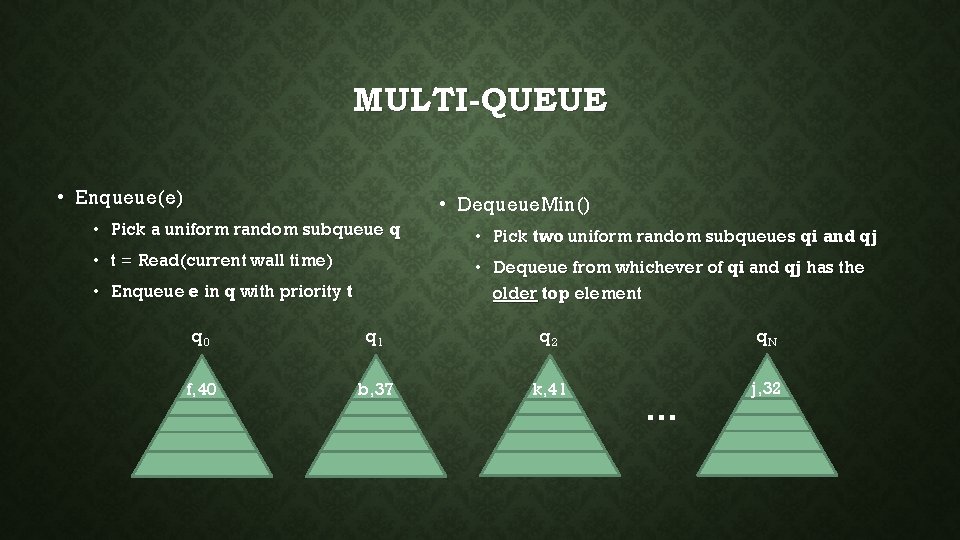

MULTI-QUEUE [ABKLN 2018]: A CONCURRENT RELAXED QUEUE • Pick your favourite sequential or concurrent priority queue implementation X • We will use X as an algorithmic building block • If X is sequential, we protect it with a lock • Idea: • Let N be the number of threads in the system • Assume threads have access to a consistent clock (wall time) • Create N separate priority queues of type X (called subqueues) • Threads will randomly pick subqueues to work on (in a particular way) • Prove dequeue operations return something “close” to the oldest key

PRIORITY QUEUE OBJECT • Stores keys and associated priorities • Operations: • Enqueue(e, pr) • Adds e to the priority queue with priority pr • DequeueMin() • Removes the highest priority element and returns it

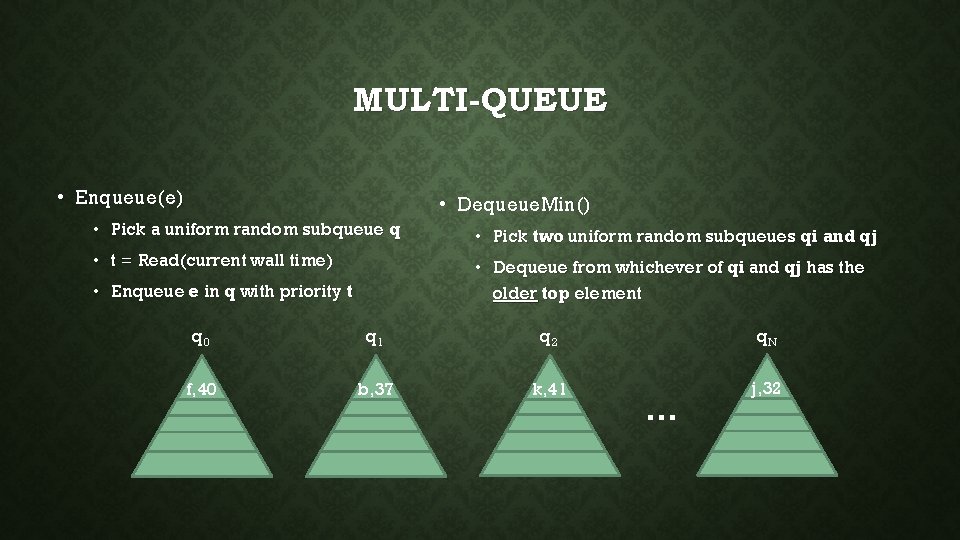

MULTI-QUEUE
• Enqueue(e)
  • Pick a uniform random subqueue q
  • t = Read(current wall time)
  • Enqueue e in q with priority t
• DequeueMin()
  • Pick two uniform random subqueues qi and qj
  • Dequeue from whichever of qi and qj has the older top element

[Figure: subqueues q0, q1, q2, …, qN holding timestamped elements such as (f, 40), (b, 37), (k, 41), (j, 32).]
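The two operations can be sketched as follows, taking X to be a sequential `std::priority_queue` protected by a lock. Several choices here are assumptions rather than part of the slides: an atomic counter stands in for the wall clock, `dequeue_min` locks both chosen subqueues in index order to avoid deadlock, and it reports failure when both chosen subqueues are empty, even if other subqueues still hold elements.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <functional>
#include <mutex>
#include <queue>
#include <random>
#include <utility>
#include <vector>

class multi_queue {
    using entry = std::pair<std::uint64_t, int>;  // (timestamp, element)
    struct subqueue {
        std::mutex lock;  // X is sequential here, so it is protected by a lock
        std::priority_queue<entry, std::vector<entry>, std::greater<entry>> pq;
    };
    std::vector<subqueue> subs;
    std::atomic<std::uint64_t> next_time{0};  // assumed stand-in for wall time

    std::size_t pick() {  // uniform random subqueue index
        thread_local std::mt19937 rng(std::random_device{}());
        std::uniform_int_distribution<std::size_t> d(0, subs.size() - 1);
        return d(rng);
    }
    static bool pop_from(subqueue& s, int& out) {
        if (s.pq.empty()) return false;
        out = s.pq.top().second;
        s.pq.pop();
        return true;
    }

public:
    explicit multi_queue(std::size_t n) : subs(n) { assert(n >= 1); }

    void enqueue(int e) {
        subqueue& q = subs[pick()];
        std::uint64_t t = next_time.fetch_add(1);  // t = Read(current time)
        std::lock_guard<std::mutex> g(q.lock);
        q.pq.push(entry(t, e));
    }

    // Returns false if both chosen subqueues are empty; callers may retry.
    bool dequeue_min(int& out) {
        std::size_t i = pick(), j = pick();
        if (i == j) {
            std::lock_guard<std::mutex> g(subs[i].lock);
            return pop_from(subs[i], out);
        }
        if (j < i) std::swap(i, j);  // lock in index order to avoid deadlock
        std::lock_guard<std::mutex> gi(subs[i].lock);
        std::lock_guard<std::mutex> gj(subs[j].lock);
        subqueue& a = subs[i];
        subqueue& b = subs[j];
        if (a.pq.empty()) return pop_from(b, out);
        if (b.pq.empty()) return pop_from(a, out);
        // Dequeue from whichever subqueue has the older top element.
        return pop_from(a.pq.top().first <= b.pq.top().first ? a : b, out);
    }
};
```

With a single subqueue the structure behaves as a strict FIFO queue. With more subqueues, every element is still returned exactly once, but the order is only approximately FIFO.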

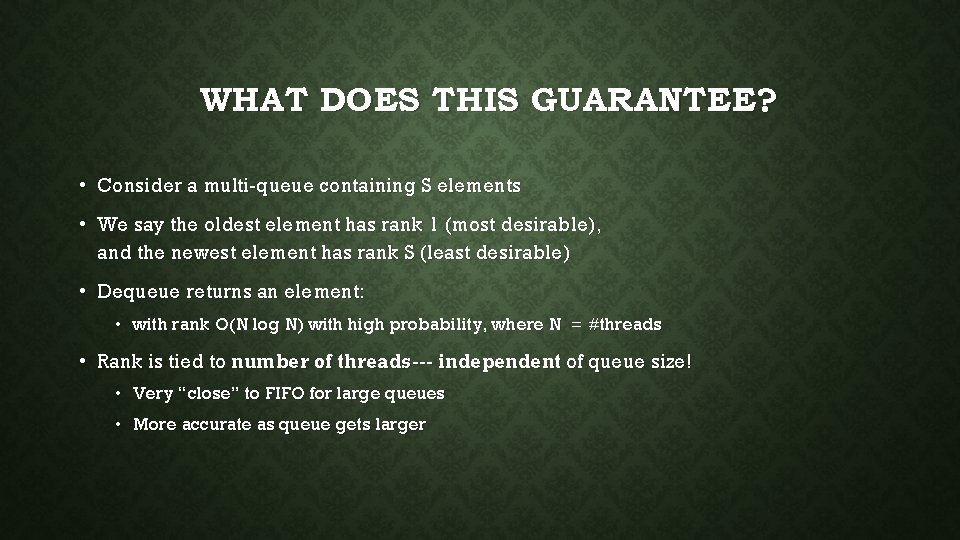

WHAT DOES THIS GUARANTEE? • Consider a multi-queue containing S elements • We say the oldest element has rank 1 (most desirable), and the newest element has rank S (least desirable) • Dequeue returns an element: • with rank O(N log N) with high probability, where N = #threads • Rank is tied to the number of threads, independent of queue size! • Very “close” to FIFO for large queues • More accurate as queue gets larger

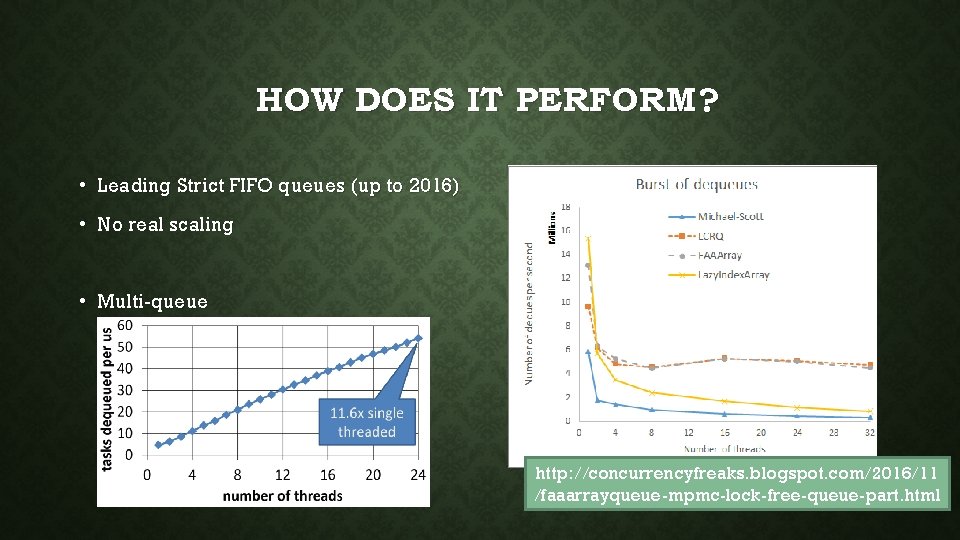

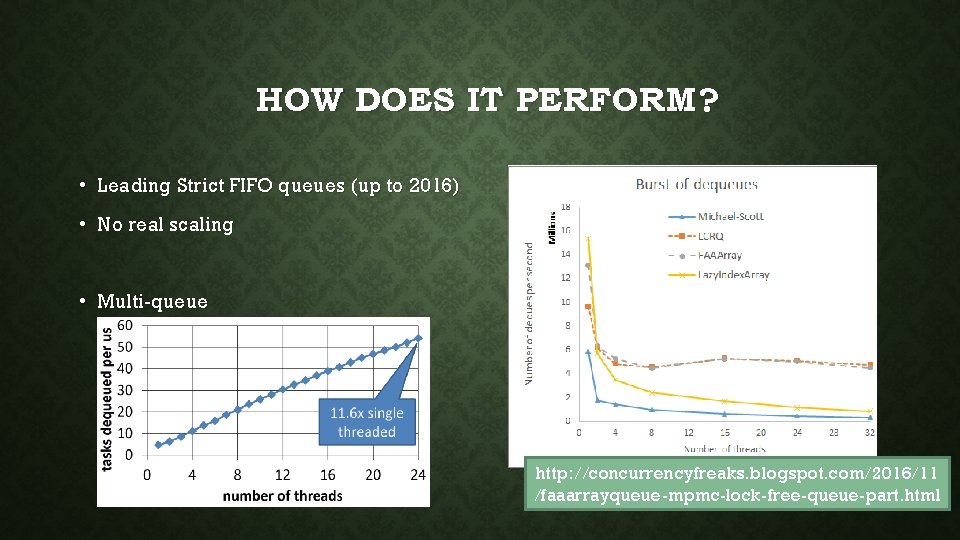

HOW DOES IT PERFORM? • Leading strict FIFO queues (up to 2016) • No real scaling • Multi-queue • http://concurrencyfreaks.blogspot.com/2016/11/faaarrayqueue-mpmc-lock-free-queue-part.html

RECAP • Complete linearizability proof for our lock-free stack • Challenges of actually using stacks/queues and other ordered data structures • Strictly ordered data structures such as queues • limited concurrency • algorithms such as BFS cannot easily harness this strict ordering • Relaxed data structures • somewhat ordered: allow some inversions in the strict ordering (better scalability) • Ordered vs unordered sets
