
M.Sc. Thesis Defense
Beyond Actions: Discriminative Models for Contextual Group Activities
Tian Lan
School of Computing Science, Simon Fraser University
August 12, 2010

Outline
• Introduction
• Group Activity Recognition with Context
– Structure-level (latent structures)
– Feature-level (Action Context descriptor)
• Experiments

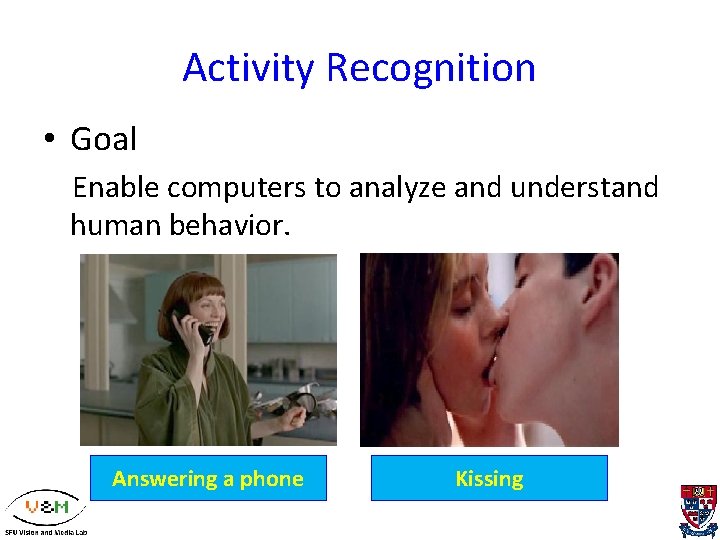

Activity Recognition
• Goal: enable computers to analyze and understand human behavior.
• Example actions: answering a phone, kissing.

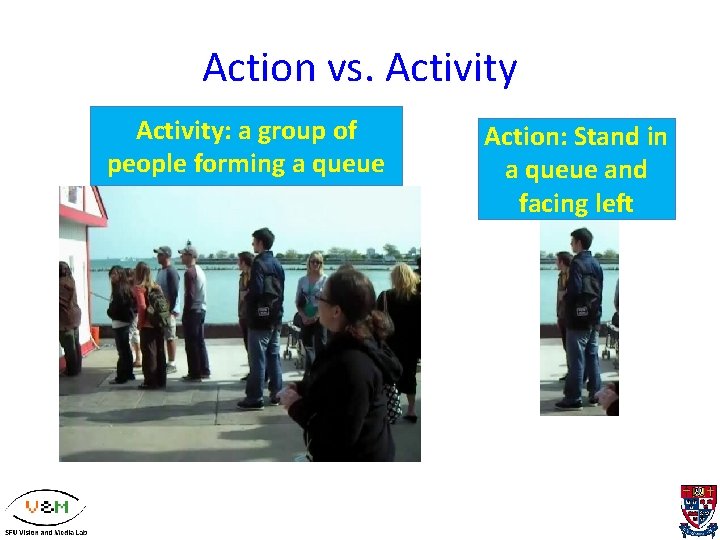

Action vs. Activity
• Activity: a group of people forming a queue
• Action: standing in a queue and facing left

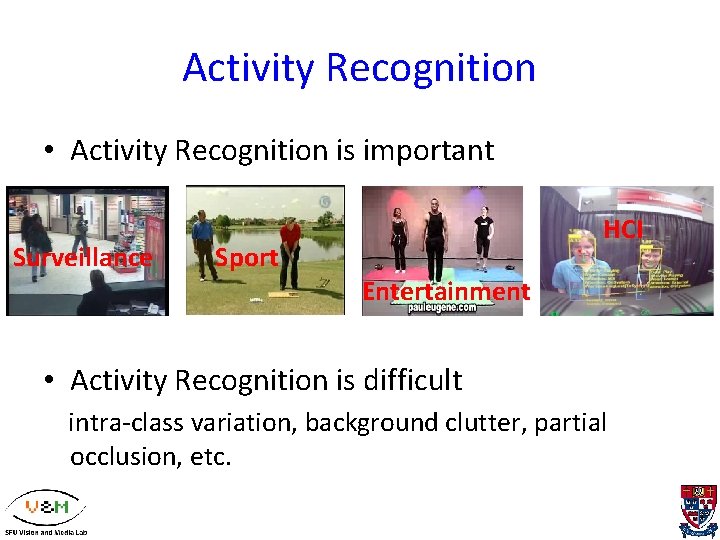

Activity Recognition
• Activity recognition is important: surveillance, sports, HCI, entertainment.
• Activity recognition is difficult: intra-class variation, background clutter, partial occlusion, etc.

Group Activity Recognition
• Motivation: human actions are rarely performed in isolation; the actions of individuals in a group can serve as context for each other.
• Goal: explore the benefit of contextual information for group activity recognition in challenging real-world applications.

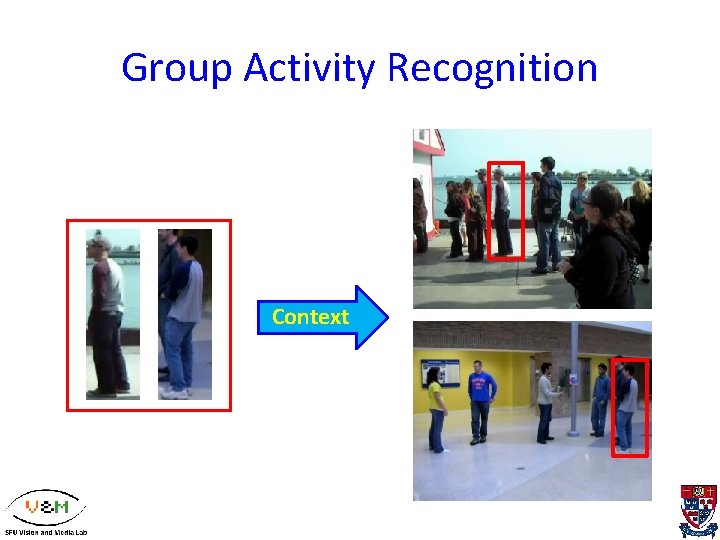

Group Activity Recognition Context

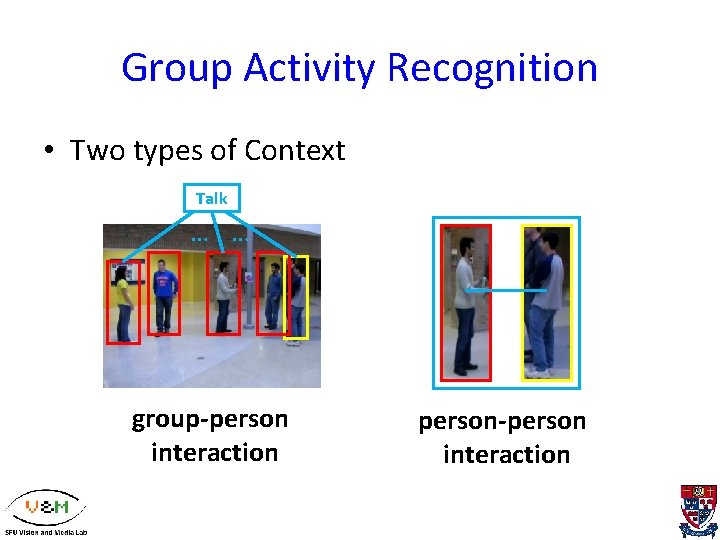

Group Activity Recognition
• Two types of context
– group-person interaction
– person-person interaction
[Figure label: Talk]

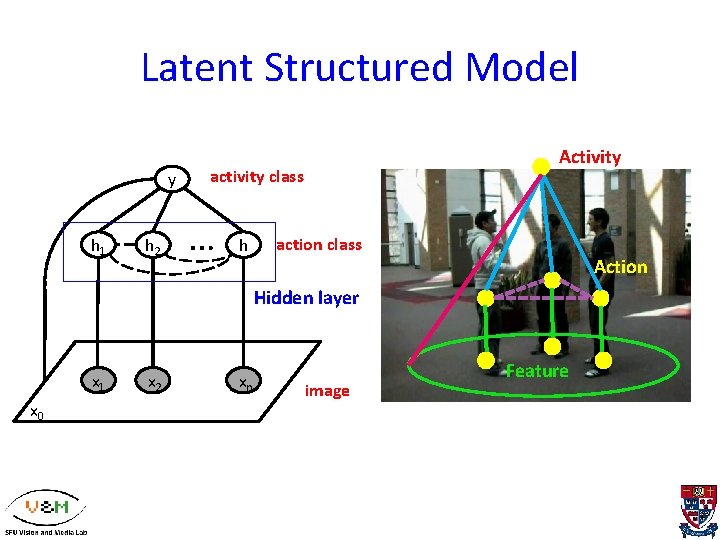

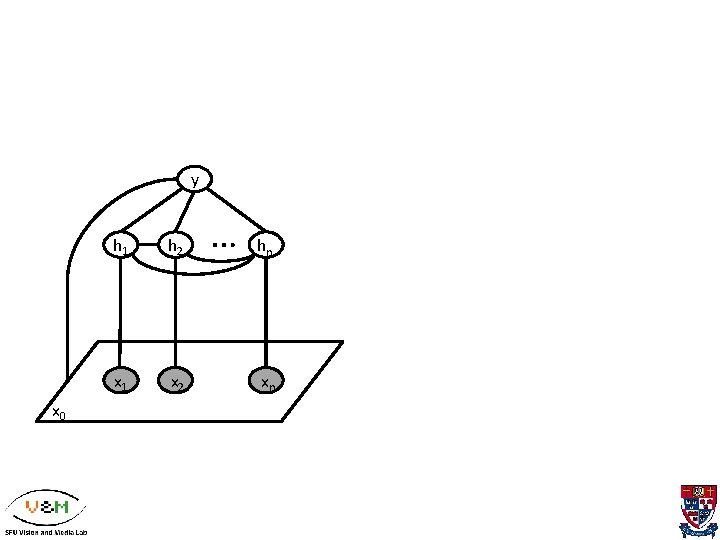

Latent Structured Model
[Figure: three-layer graphical model. Activity: activity class y. Action (hidden layer): action classes h_1, h_2, …, h_n. Feature: image features x_0, x_1, x_2, …, x_n.]

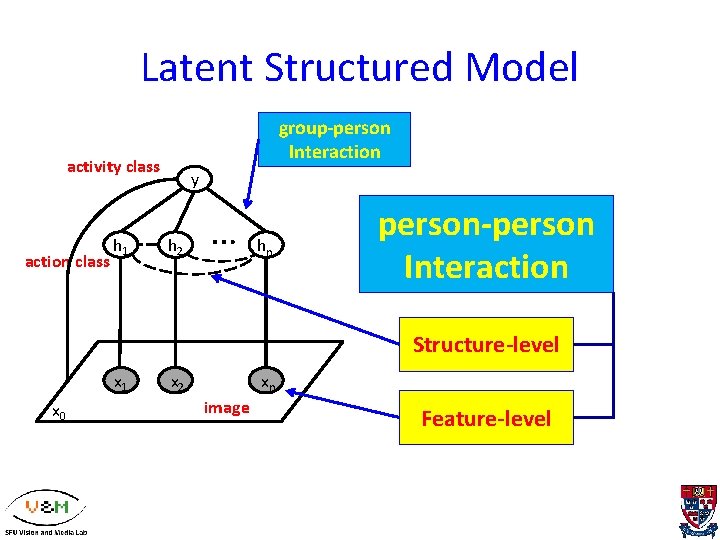

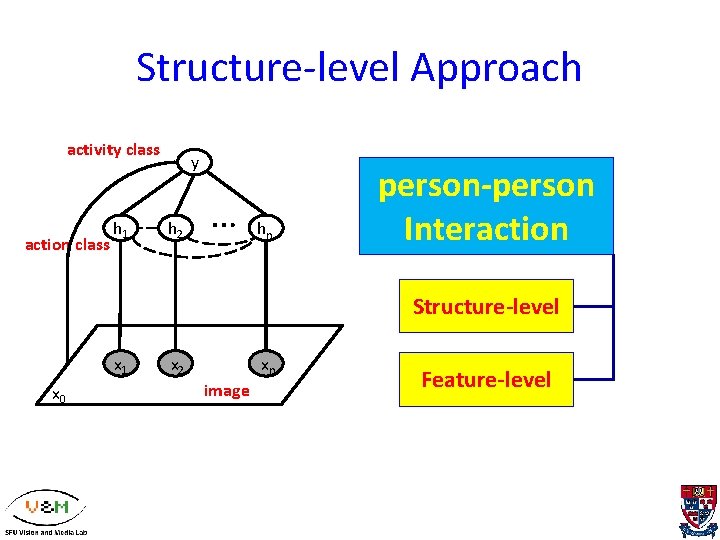

Latent Structured Model
[Figure: graphical model with activity class y, action classes h_1, h_2, …, h_n, and image features x_0, x_1, x_2, …, x_n. Annotations: group-person interaction, person-person interaction, structure-level, feature-level.]

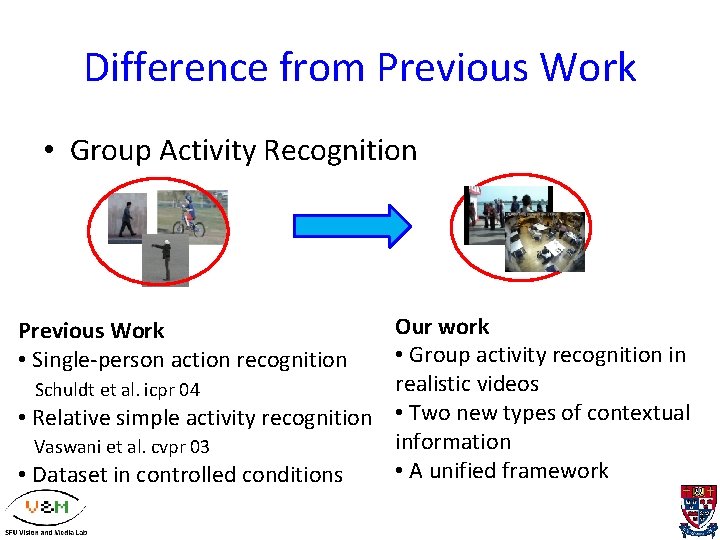

Difference from Previous Work: Group Activity Recognition
Previous work (e.g. Schuldt et al., ICPR 04; Vaswani et al., CVPR 03)
• Single-person action recognition
• Relatively simple activity recognition
• Datasets in controlled conditions
Our work
• Group activity recognition in realistic videos
• Two new types of contextual information
• A unified framework

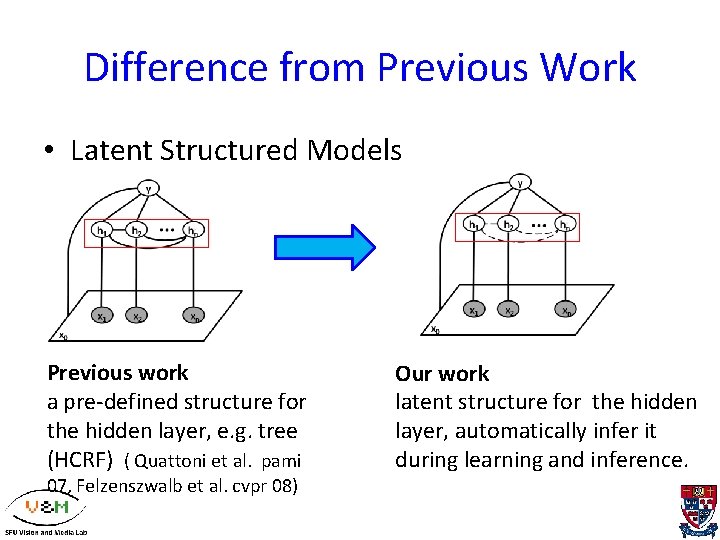

Difference from Previous Work: Latent Structured Models
Previous work
• A pre-defined structure for the hidden layer, e.g. a tree (HCRF) (Quattoni et al., PAMI 07; Felzenszwalb et al., CVPR 08)
Our work
• A latent structure for the hidden layer, inferred automatically during learning and inference


Structure-level Approach
[Figure: graphical model with activity class y, action classes h_1, h_2, …, h_n, and image features x_0, x_1, x_2, …, x_n. Annotations: person-person interaction, structure-level, feature-level.]

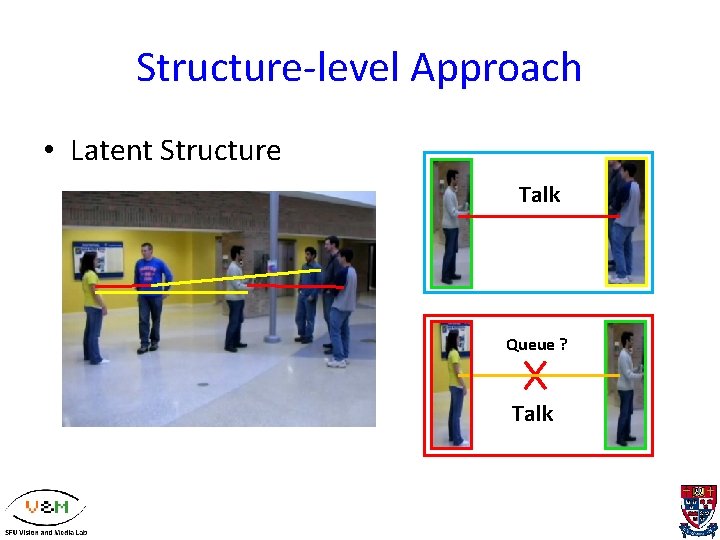

Structure-level Approach
• Latent structure
[Figure labels: Talk, Queue, ?, Talk]

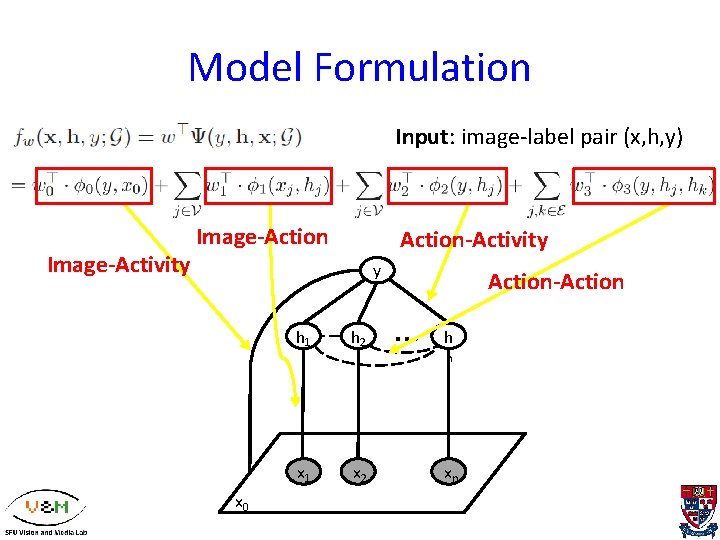

Model Formulation
• Input: image-label pair (x, h, y)
• Potentials: Image-Activity, Image-Action, Action-Activity, Action-Action
[Figure: graphical model over y, h_1, h_2, …, h_n and x_0, x_1, x_2, …, x_n]

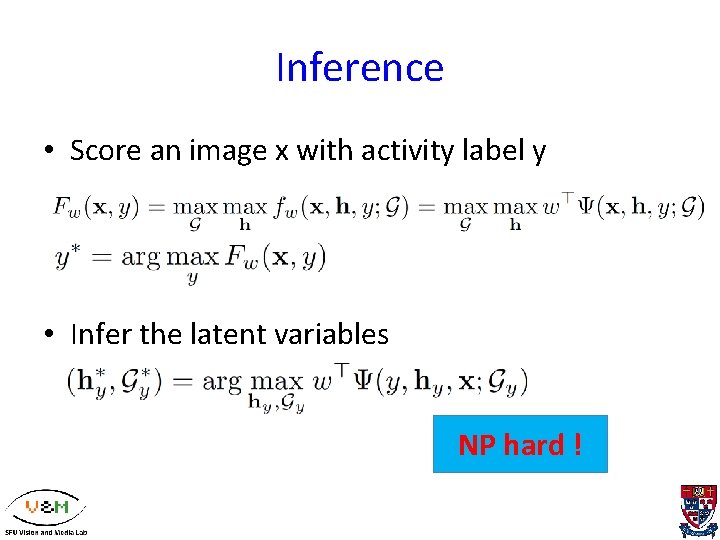
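A minimal sketch of how the image-activity, image-action, action-activity, and action-action terms named on the Model Formulation slide could add up to one score. This is an illustration under assumptions, not the thesis's formulation: the function `score`, the weight names in `w`, the toy sizes, and the choice of simple linear and table-lookup terms are all hypothetical.

```python
import numpy as np

def score(x0, xs, h, y, edges, w):
    """Sum four kinds of potentials for one image.

    x0: whole-image feature, xs: per-person features, h: per-person action
    labels, y: activity label, edges: person-person connections.
    All weight names are illustrative assumptions.
    """
    s = w["img_activity"][y] @ x0                   # image-activity term
    for j, hj in enumerate(h):
        s += w["img_action"][hj] @ xs[j]            # image-action term
        s += w["action_activity"][hj, y]            # action-activity term
    for j, k in edges:
        s += w["action_action"][y, h[j], h[k]]      # action-action term
    return float(s)

rng = np.random.default_rng(0)
n_actions, n_activities, dim = 3, 2, 4              # toy sizes (assumed)
w = {
    "img_activity": rng.normal(size=(n_activities, dim)),
    "img_action": rng.normal(size=(n_actions, dim)),
    "action_activity": rng.normal(size=(n_actions, n_activities)),
    "action_action": rng.normal(size=(n_activities, n_actions, n_actions)),
}
x0 = rng.normal(size=dim)
xs = rng.normal(size=(3, dim))
h = [0, 2, 1]
edges = [(0, 1), (1, 2)]
print(score(x0, xs, h, 1, edges, w))
```

Passing an empty `edges` list drops the action-action terms, which corresponds to a hidden layer with no person-person connections.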

Inference
• Score an image x with activity label y
• Infer the latent variables: NP-hard!

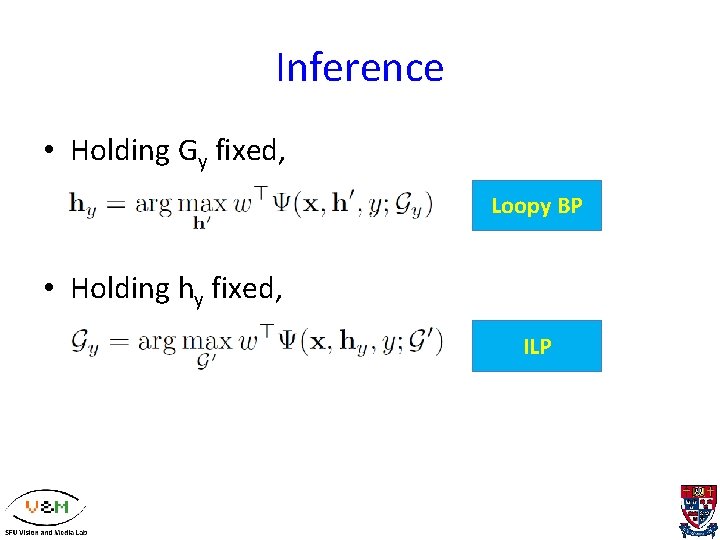

Inference
• Holding G_y fixed: loopy belief propagation (BP)
• Holding h_y fixed: integer linear program (ILP)

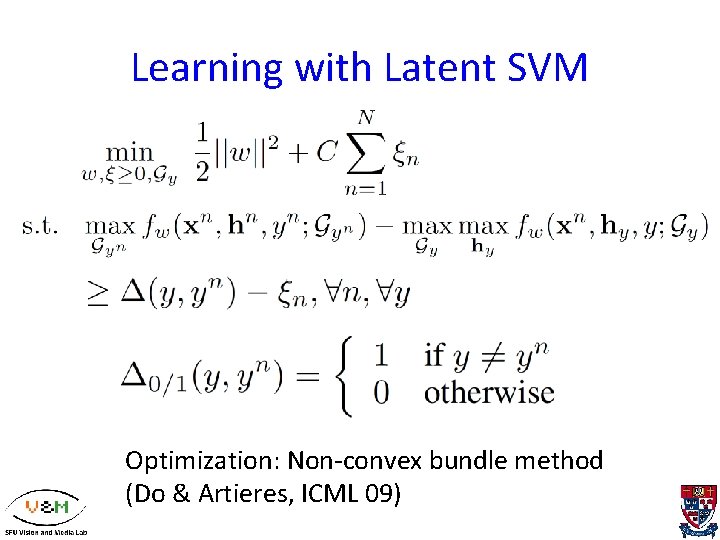
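The alternation on this slide can be sketched as coordinate ascent for a single fixed activity label. The sketch swaps in simpler stand-ins, which are assumptions and not the thesis's solvers: exhaustive search over labelings replaces loopy BP, and "keep every positive-weight pair" replaces the ILP (so no degree or sparsity constraint is enforced). The names `unary`, `pair`, and `infer` are hypothetical.

```python
import itertools
import numpy as np

def total(unary, pair, h, edges):
    """Score of labels h on a graph, given unary and pairwise tables."""
    s = sum(unary[j, hj] for j, hj in enumerate(h))
    return s + sum(pair[h[j], h[k]] for j, k in edges)

def best_labels(unary, pair, edges):
    """Graph fixed: exhaustive search over labelings (stand-in for loopy BP)."""
    n, k = unary.shape
    return max(itertools.product(range(k), repeat=n),
               key=lambda h: total(unary, pair, h, edges))

def best_edges(pair, h):
    """Labels fixed: keep each pair with positive weight (stand-in for the ILP)."""
    n = len(h)
    return [(j, k) for j in range(n) for k in range(j + 1, n)
            if pair[h[j], h[k]] > 0]

def infer(unary, pair, iters=5):
    """Alternate the two steps and record the score after each round."""
    h = tuple(int(a) for a in unary.argmax(axis=1))  # start from unary-only labels
    edges = best_edges(pair, h)
    history = [total(unary, pair, h, edges)]
    for _ in range(iters):
        h = best_labels(unary, pair, edges)
        edges = best_edges(pair, h)
        history.append(total(unary, pair, h, edges))
    return h, edges, history

rng = np.random.default_rng(1)
unary = rng.normal(size=(4, 3))   # 4 people, 3 action classes (toy sizes)
pair = rng.normal(size=(3, 3))
pair = (pair + pair.T) / 2        # symmetric toy pairwise weights
h, edges, history = infer(unary, pair)
```

Each step maximizes the score over one block of variables with the other held fixed, so the recorded scores never decrease. The result is a local optimum, which is the usual trade-off when exact joint inference is intractable.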

Learning with Latent SVM
• Optimization: non-convex bundle method (Do & Artieres, ICML 09)

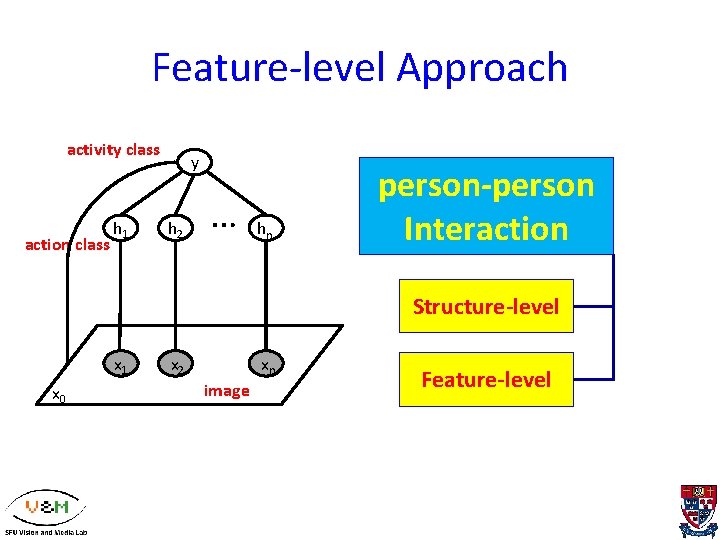
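For orientation, one common way to write a latent SVM objective for this kind of model is shown below. It is a sketch under assumptions: the action labels h^n are taken as observed in training, the graph structure G is the latent part, and the symbols F_w (scoring function), C (trade-off constant), ξ^n (slack variables), and Δ (loss) are assumed notation. The exact formulation is on the slide image and may differ.

```latex
\min_{w,\;\xi \ge 0} \quad \frac{1}{2}\lVert w \rVert^2 + C \sum_{n} \xi^{n}
\quad \text{s.t.} \quad
\max_{G_{y^n}} F_w(x^n, h^n, y^n; G_{y^n})
- \max_{G_y} \max_{h_y} F_w(x^n, h_y, y; G_y)
\ge \Delta(y, y^n) - \xi^{n},
\quad \forall n,\; \forall y
```

The maximization over latent variables inside the constraints is what makes the problem non-convex, which is why a non-convex optimizer is needed.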

Feature-level Approach
[Figure: graphical model with activity class y, action classes h_1, h_2, …, h_n, and image features x_0, x_1, x_2, …, x_n. Annotations: person-person interaction, structure-level, feature-level.]

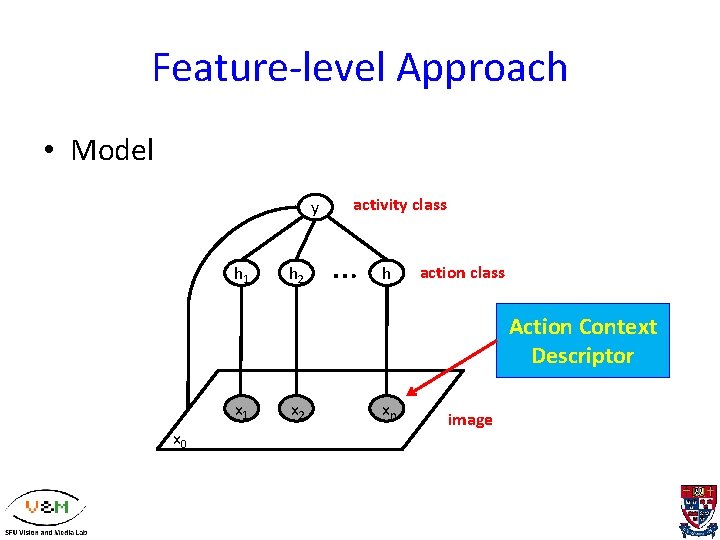

Feature-level Approach
• Model
[Figure: activity class y; action classes h_1, h_2, …, h_n; Action Context Descriptor; image features x_0, x_1, x_2, …, x_n]

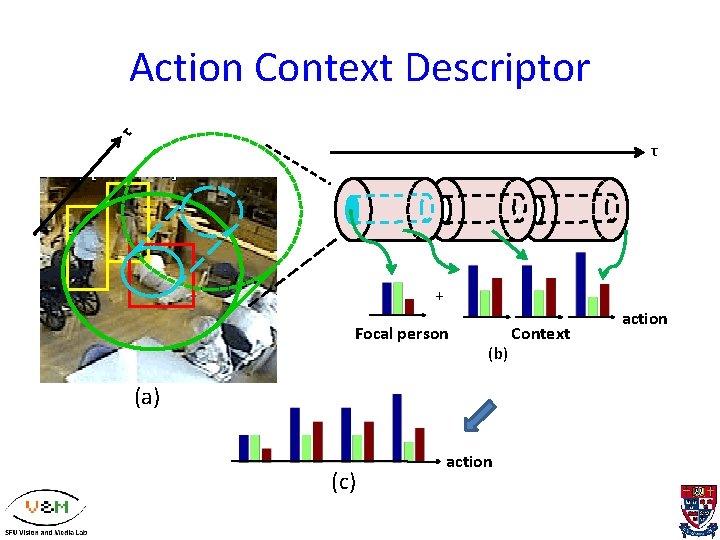

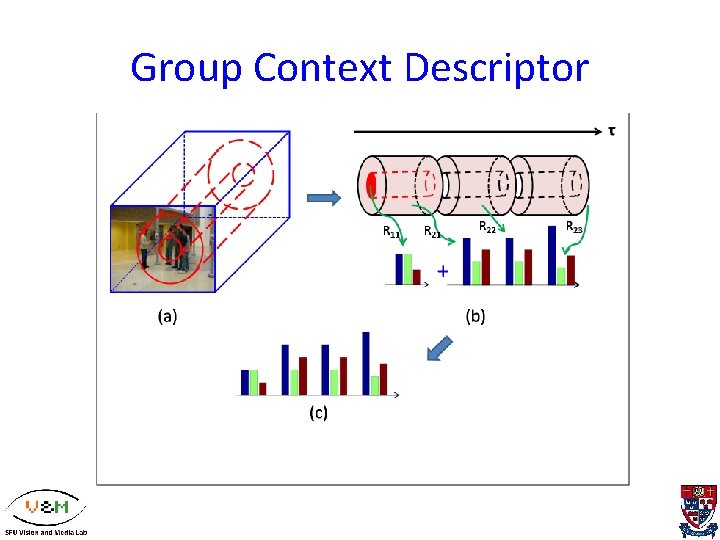

Action Context Descriptor
[Figure, panels (a)-(c): focal person; descriptor formed from an action part and a context part (action + context). Other symbols in the figure: τ, z.]

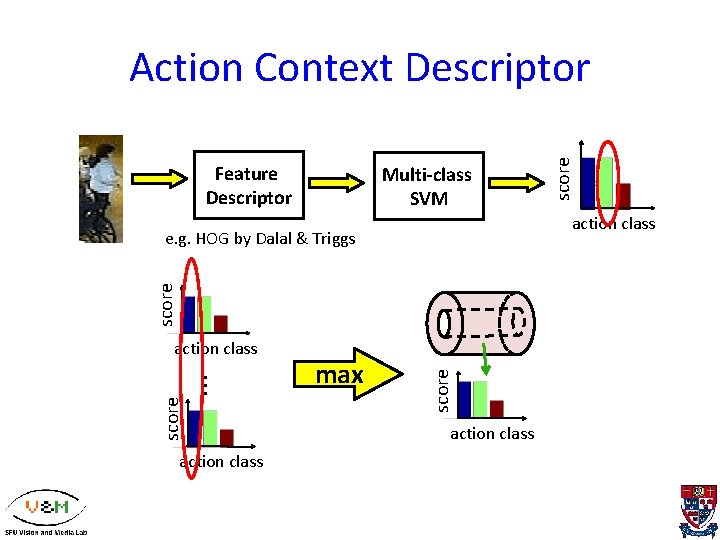

[Figure: feature descriptor (e.g. HOG by Dalal & Triggs) → multi-class SVM → action class scores → max score per action class → Action Context Descriptor]
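The pipeline above can be sketched as follows: each person's descriptor joins their own action class scores with the per-class maximum score over nearby people. This is a simplified illustration with assumptions: a single circular context region of a given `radius`, zeros when nobody is nearby, and the hypothetical function name `action_context`. The scores are assumed to come from a multi-class SVM that is not shown here.

```python
import numpy as np

def action_context(scores, positions, radius):
    """Build one descriptor per person.

    scores: (n_people, n_classes) action class scores from a classifier.
    positions: (n_people, 2) image positions.
    Returns (n_people, 2 * n_classes): [own scores, max scores of neighbours].
    """
    n, k = scores.shape
    out = np.zeros((n, 2 * k))
    for i in range(n):
        dist = np.linalg.norm(positions - positions[i], axis=1)
        nearby = (dist <= radius) & (np.arange(n) != i)   # exclude the focal person
        out[i, :k] = scores[i]                            # action part
        if nearby.any():
            out[i, k:] = scores[nearby].max(axis=0)       # context part (max pooling)
        # assumption: the context part stays zero when no one is nearby
    return out

scores = np.array([[0.9, 0.1], [0.2, 0.8], [0.6, 0.4]])
positions = np.array([[0.0, 0.0], [1.0, 0.0], [10.0, 0.0]])
desc = action_context(scores, positions, radius=2.0)
```

Max pooling keeps the descriptor length fixed regardless of how many people fall inside the context region.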


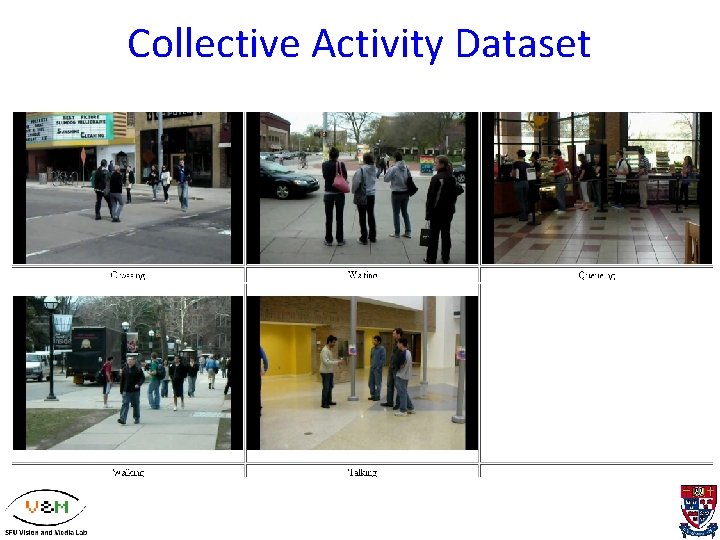

Dataset
• Collective Activity Dataset (Choi et al., VS 09)
• 5 action categories (per person): crossing, waiting, queuing, walking, talking
• 44 video clips

Collective Activity Dataset

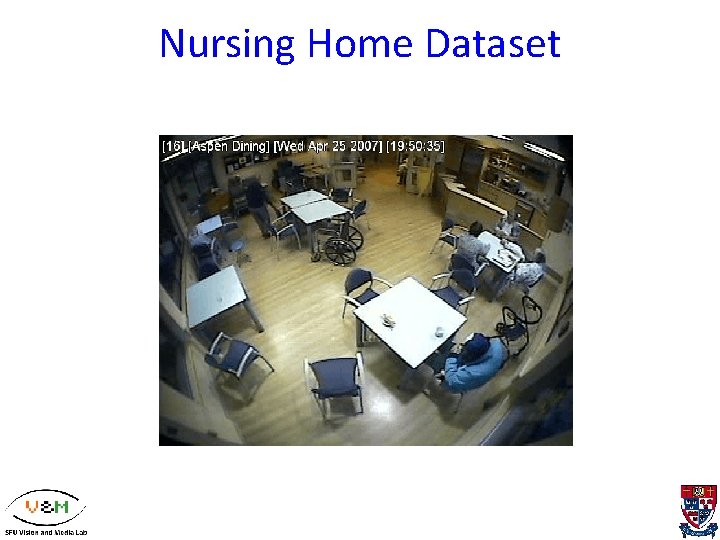

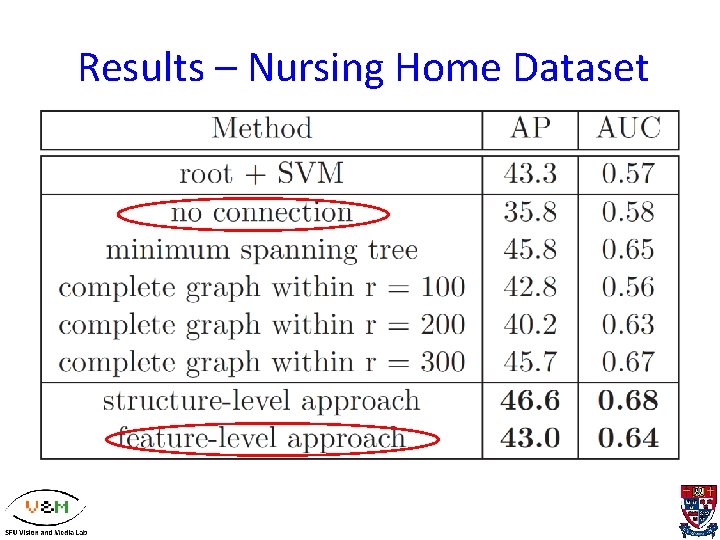

Dataset
• Nursing Home Dataset
• 2 activity categories (per image): fall, non-fall
• 5 action categories (per person): walking, standing, sitting, bending, and falling
• 22 video clips in total (2990 frames): 8 clips for testing, the remaining 14 for training; 1/3 are labeled as fall

Nursing Home Dataset

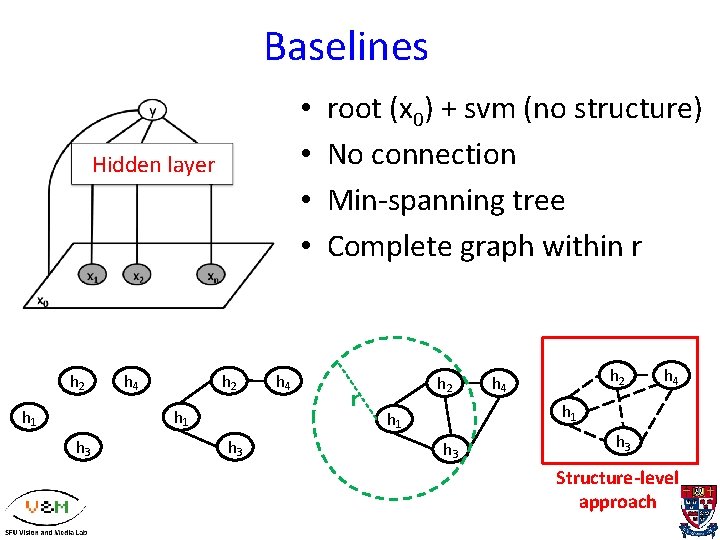

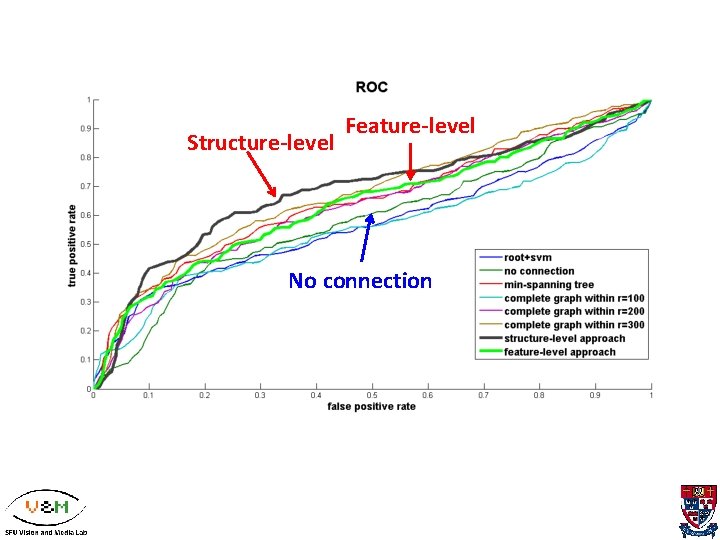

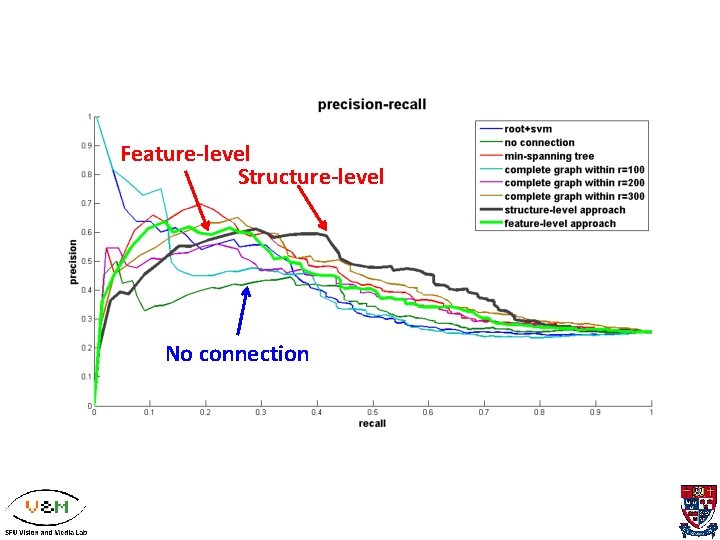

Baselines
• root (x_0) + SVM (no structure)
• Hidden layer structures:
– No connection
– Min-spanning tree
– Complete graph within r
– Structure-level approach (proposed)
[Figure: example graphs over h_1, h_2, h_3, h_4 for each structure]

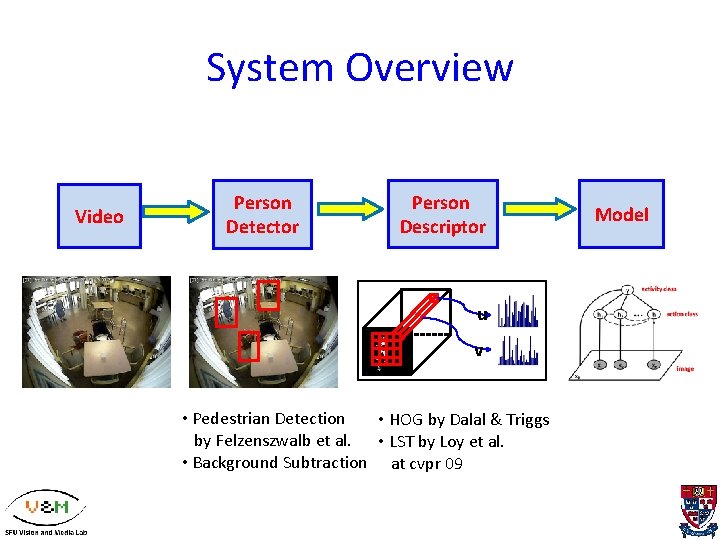
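The fixed hidden-layer structures used as baselines can be sketched as edge lists over the detected people. The sketch assumes Euclidean distance between image positions as the edge cost and uses hypothetical function names; the slide does not say how distances were measured.

```python
import numpy as np

def no_connection(points):
    """Hidden nodes are not connected to each other."""
    return []

def complete_within_r(points, r):
    """Connect every pair of people whose distance is at most r."""
    n = len(points)
    return [(j, k) for j in range(n) for k in range(j + 1, n)
            if np.linalg.norm(points[j] - points[k]) <= r]

def min_spanning_tree(points):
    """Prim's algorithm on pairwise Euclidean distances."""
    n = len(points)
    in_tree, edges = {0}, []
    while len(in_tree) < n:
        # cheapest edge from the current tree to a node outside it
        _, j, k = min((np.linalg.norm(points[j] - points[k]), j, k)
                      for j in in_tree for k in range(n) if k not in in_tree)
        edges.append((min(j, k), max(j, k)))
        in_tree.add(k)
    return edges

points = np.array([[0.0, 0.0], [1.0, 0.0], [3.0, 0.0], [3.0, 1.0]])
mst = min_spanning_tree(points)
near = complete_within_r(points, r=1.5)
```

These structures are fixed before inference, in contrast to the structure-level approach, where the graph is inferred together with the labels.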

System Overview
Video → Person Detector → Person Descriptor → Model
• Person detector: pedestrian detection by Felzenszwalb et al.; background subtraction
• Person descriptor: HOG by Dalal & Triggs; LST by Loy et al. at CVPR 09

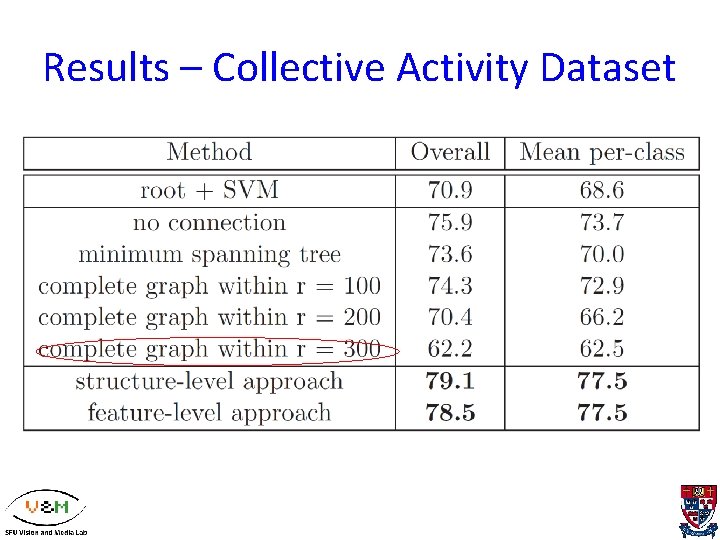

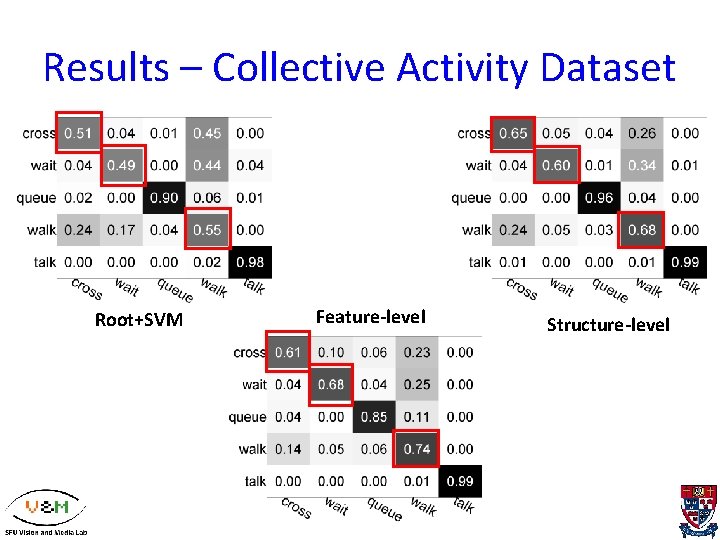

Results – Collective Activity Dataset

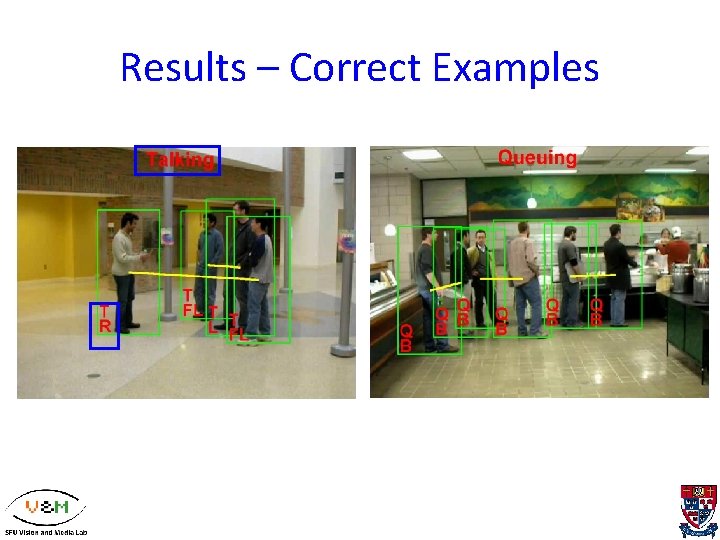

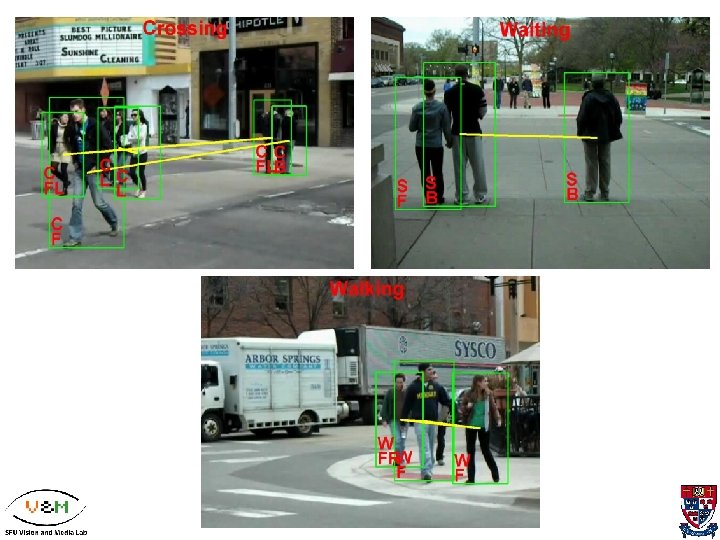

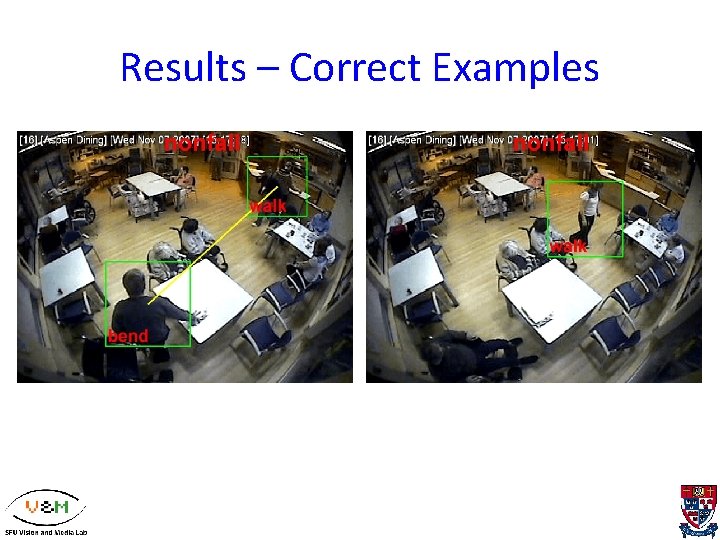

Results – Correct Examples

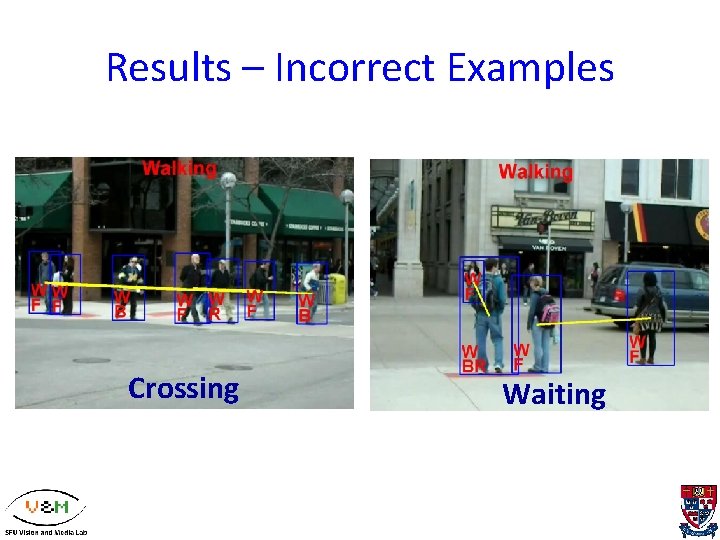

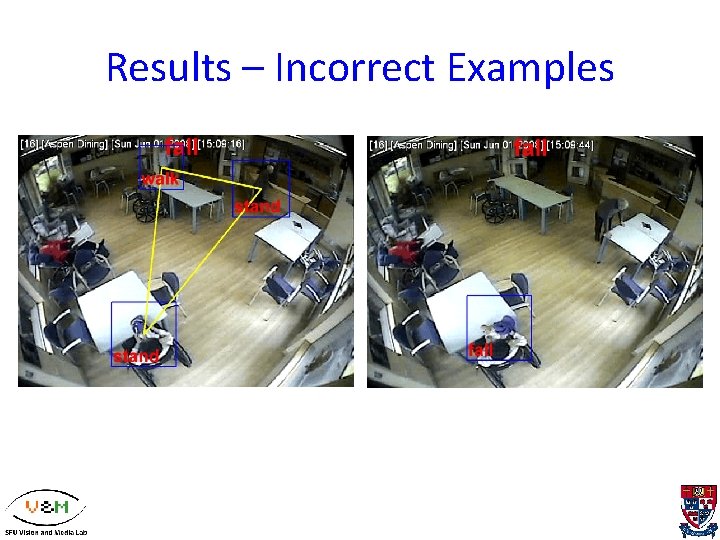

Results – Incorrect Examples
[Figure labels: Crossing, Waiting]

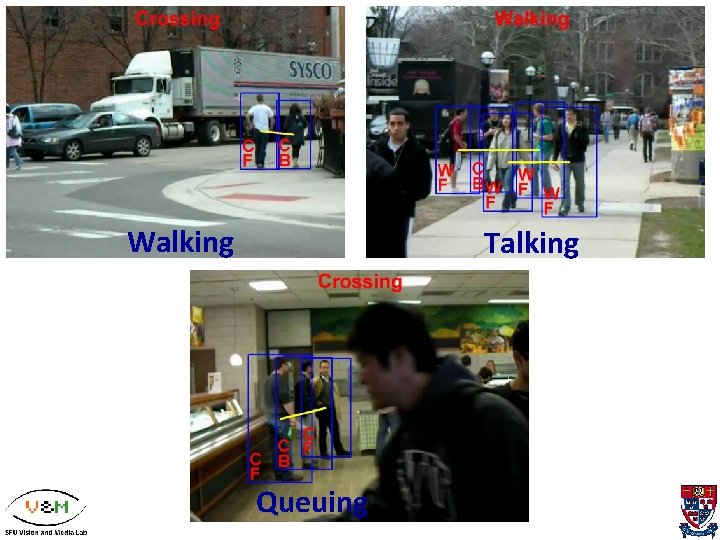

[Figure labels: Walking, Talking, Queuing]

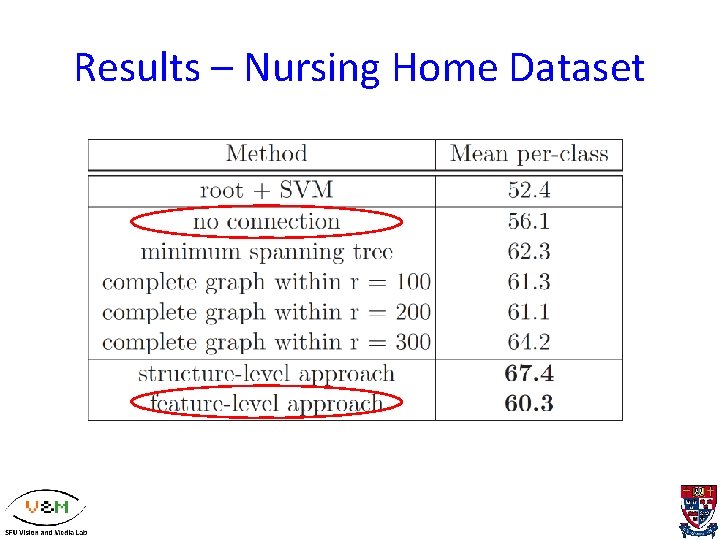

Results – Nursing Home Dataset

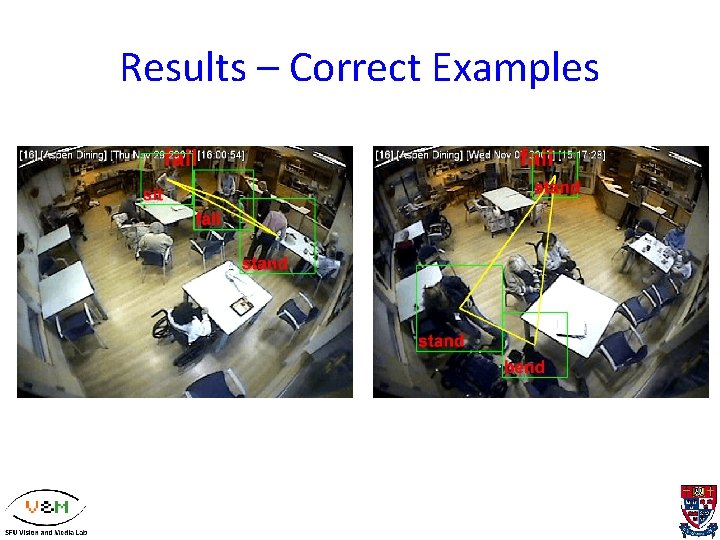

Results – Correct Examples

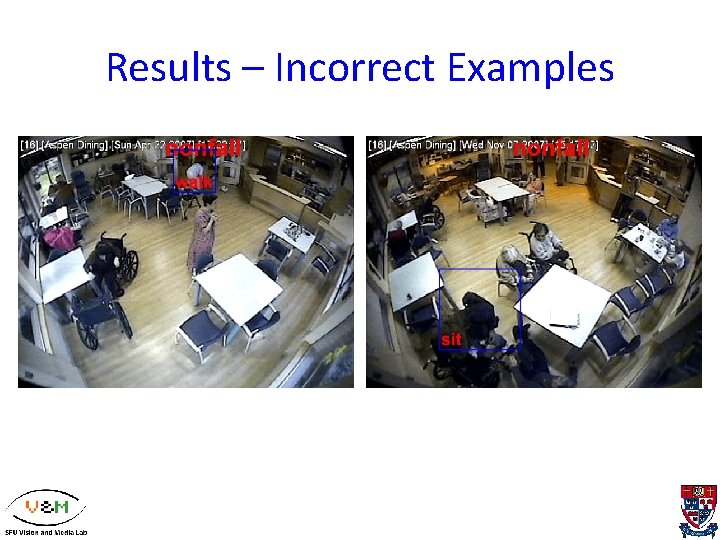

Results – Incorrect Examples

Conclusion
• A discriminative model for group activity recognition with context
• Two new types of contextual information:
– group-person interaction
– person-person interaction
• Structure-level: latent structure
• Feature-level: Action Context descriptor
• Experimental results demonstrate the effectiveness of the proposed model

Future Work
• Modeling complex structures
– Temporal dependencies among actions
• Contextual feature descriptors
– How to encode discriminative context?
• Weakly supervised learning
– e.g. multiple instance learning for fall detection

Thank you!

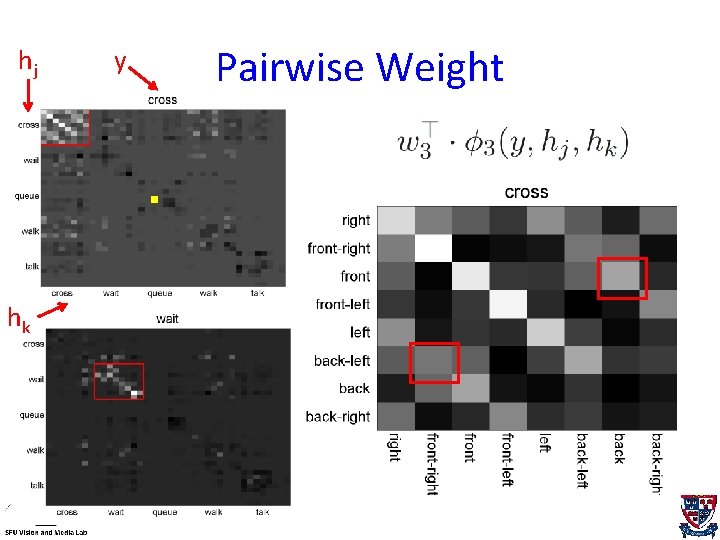

Pairwise Weight
[Figure labels: h_j, h_k, y]

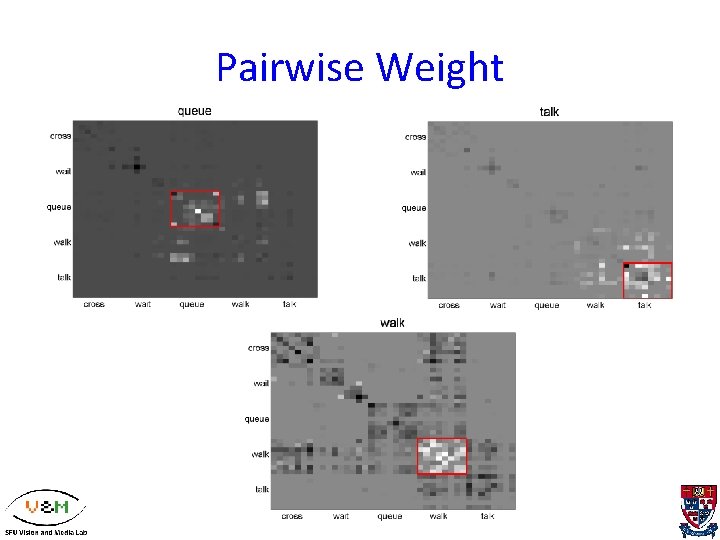

Pairwise Weight

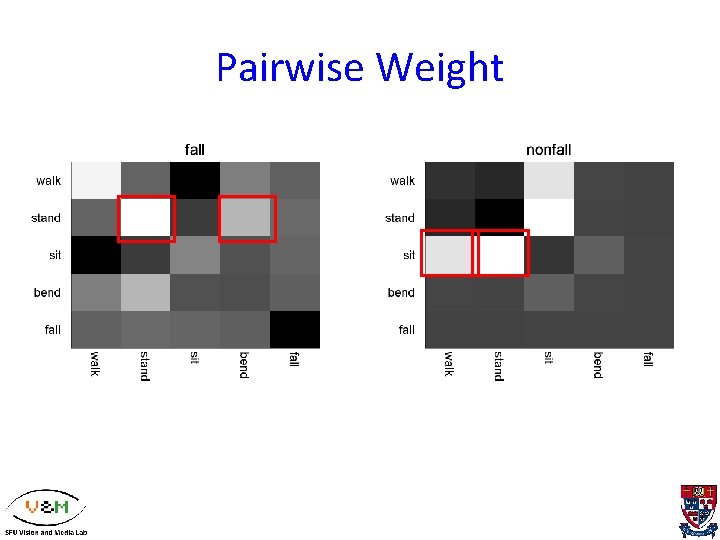

Pairwise Weight

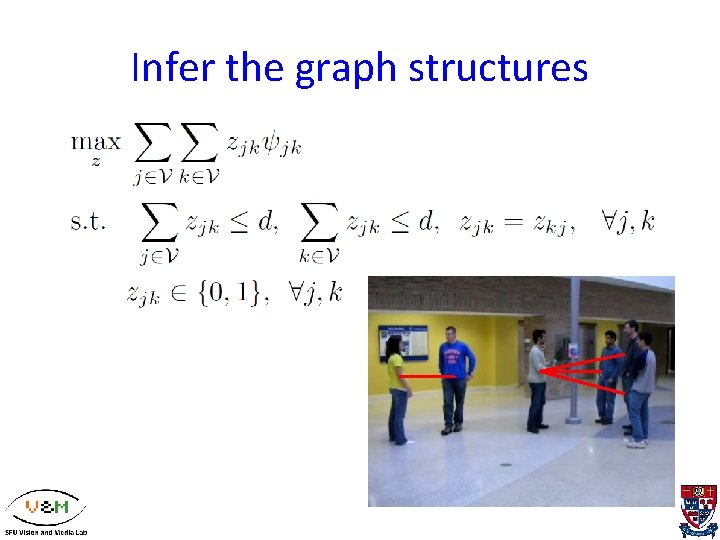

Infer the graph structures

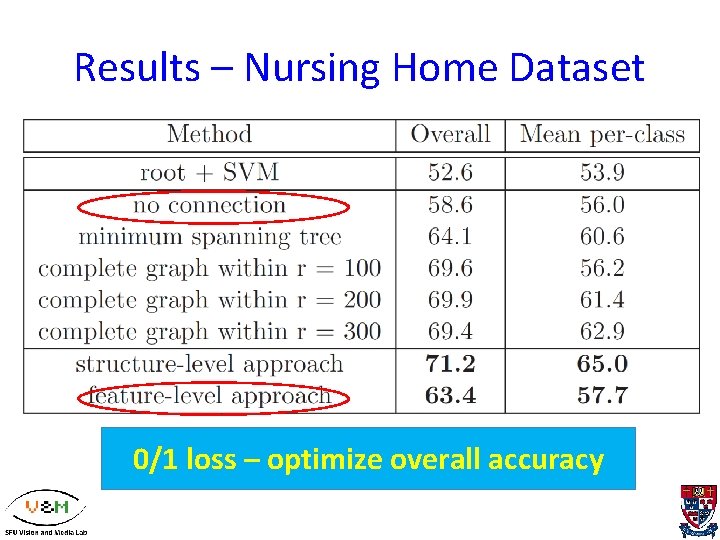

Results – Nursing Home Dataset
• 0/1 loss: optimizes overall accuracy

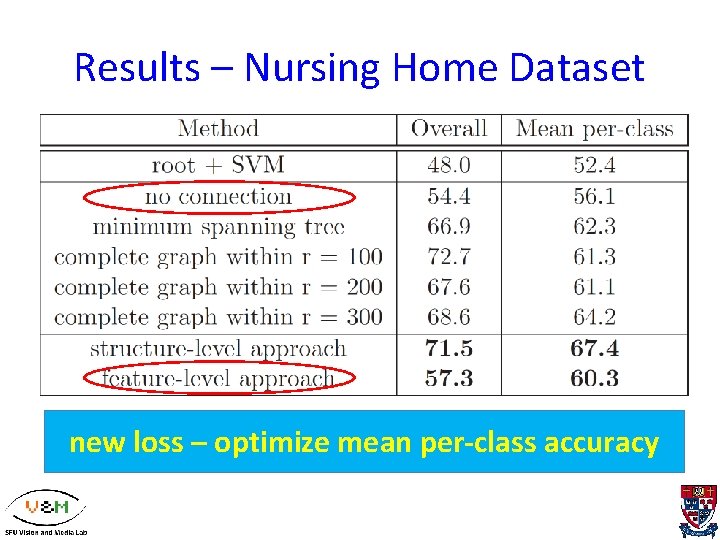

Results – Nursing Home Dataset
• New loss: optimizes mean per-class accuracy

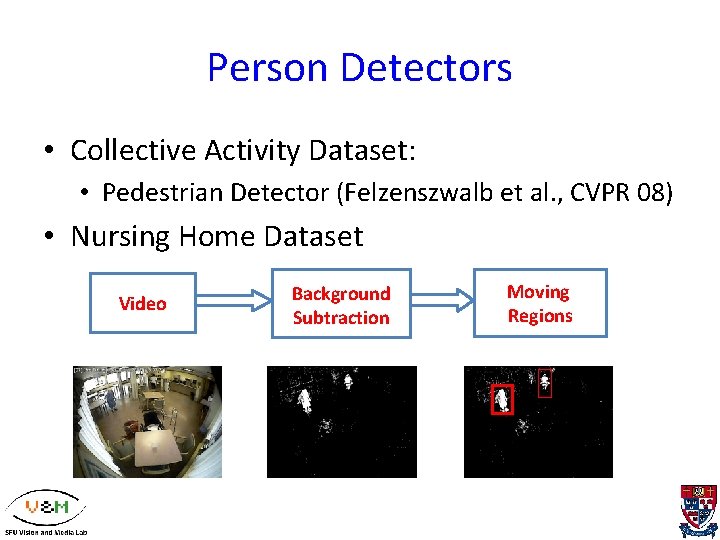
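The difference between the two losses can be illustrated with a small sketch. The class-balanced loss below charges each error 1/m_p, where m_p is the number of examples in the true class p. This particular form is an assumption chosen because it tracks mean per-class accuracy; the slides do not spell out the definition of the new loss. All function names are hypothetical.

```python
from collections import Counter

def zero_one_loss(y_true, y_pred):
    """1 for each error, 0 otherwise; the sum tracks overall accuracy."""
    return [float(t != p) for t, p in zip(y_true, y_pred)]

def balanced_loss(y_true, y_pred):
    """Each error costs 1/m_p, with m_p the size of the true class p."""
    counts = Counter(y_true)
    return [(1.0 / counts[t]) if t != p else 0.0
            for t, p in zip(y_true, y_pred)]

def mean_per_class_accuracy(y_true, y_pred):
    """Average of the per-class recall values."""
    counts = Counter(y_true)
    hits = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return sum(hits[c] / counts[c] for c in counts) / len(counts)

# Toy imbalanced example: one fall frame, three non-fall frames.
y_true = ["fall", "non-fall", "non-fall", "non-fall"]
y_pred = ["non-fall", "non-fall", "non-fall", "fall"]

total_01 = sum(zero_one_loss(y_true, y_pred))
total_bal = sum(balanced_loss(y_true, y_pred))
mpca = mean_per_class_accuracy(y_true, y_pred)
```

With this definition the summed balanced loss equals the number of classes times (1 - mean per-class accuracy), so a missed example from a rare class such as fall costs more than a missed example from a frequent class.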

Person Detectors
• Collective Activity Dataset: pedestrian detector (Felzenszwalb et al., CVPR 08)
• Nursing Home Dataset: video → background subtraction → moving regions

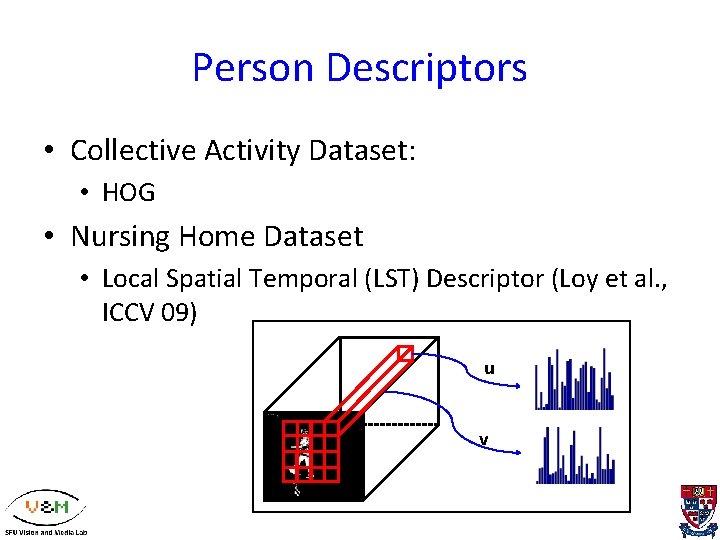

Person Descriptors
• Collective Activity Dataset: HOG
• Nursing Home Dataset: Local Spatial Temporal (LST) descriptor (Loy et al., ICCV 09)

Results – Correct Examples

Results – Incorrect Examples

Results – Collective Activity Dataset
[Figure panels: Root+SVM, Feature-level, Structure-level]

Group Context Descriptor

[Figure: graphical model over y, x_0, h_1, h_2, …, h_n and x_1, x_2, …, x_n]

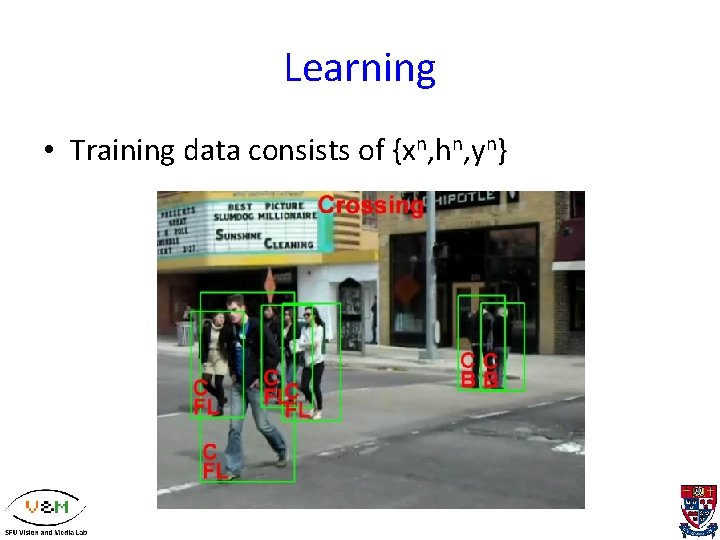

Learning
• Training data consists of {xn, hn, yn}

[Figure panels: Structure-level, Feature-level, No connection]

[Figure panels: Feature-level, Structure-level, No connection]

Results – Nursing Home Dataset
