JAWS: Job-Aware Workload Scheduling for the Exploration of Turbulence Simulations

Tanu Malik, Xiaodan Wang, Eric Perlman, Randal Burns, Tamas Budavari, Charles Meneveau, Alexander Szalay

Johns Hopkins University, Purdue University

Problem

Ensure high throughput for concurrent accesses to peta-scale scientific datasets.

- Turbulence Database Cluster
  - A new approach to data exploration
    - Traditionally, dynamics are analyzed on the fly
    - Large simulations are out of reach for many scientists
  - Stores complete space-time histories of DNS (direct numerical simulation)
  - Exploration by querying simulation results
  - 27 TB (velocity and pressure data on a 1024³ grid)
  - Available to a wide community over the Web

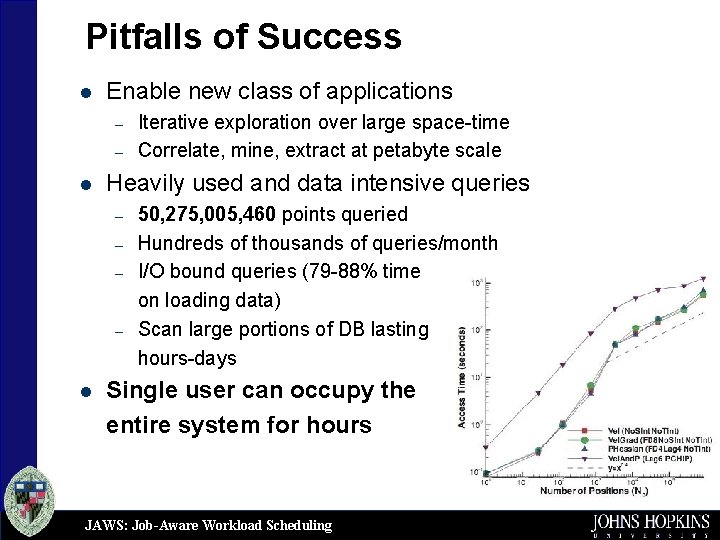

Pitfalls of Success

- Enable new class of applications
  - Iterative exploration over large space-time
  - Correlate, mine, extract at petabyte scale
- Heavily used and data-intensive queries
  - 50,275,005,460 points queried
  - Hundreds of thousands of queries/month
- I/O-bound queries (79-88% of time spent loading data)
  - Scan large portions of the DB, lasting hours to days
  - A single user can occupy the entire system for hours

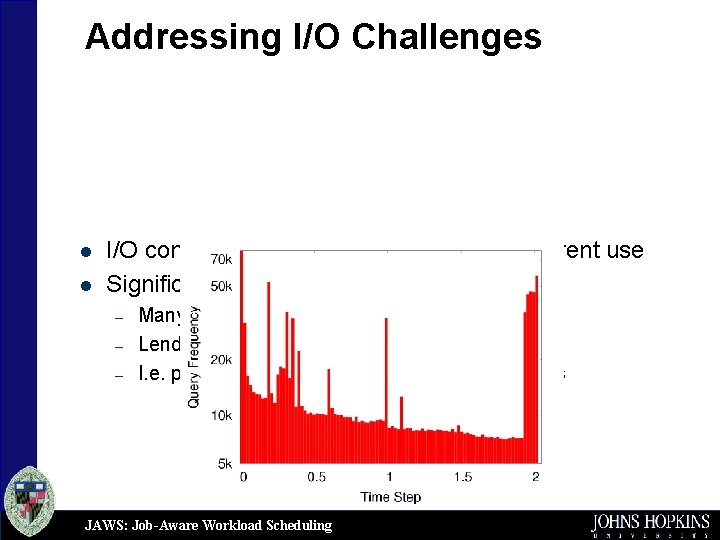

Addressing I/O Challenges

- I/O contention and congestion from concurrent use
- Significant data reuse between queries
  - Many large queries access the same data
  - Lends itself to batch scheduling
  - E.g., particles may cluster in turbulence structures

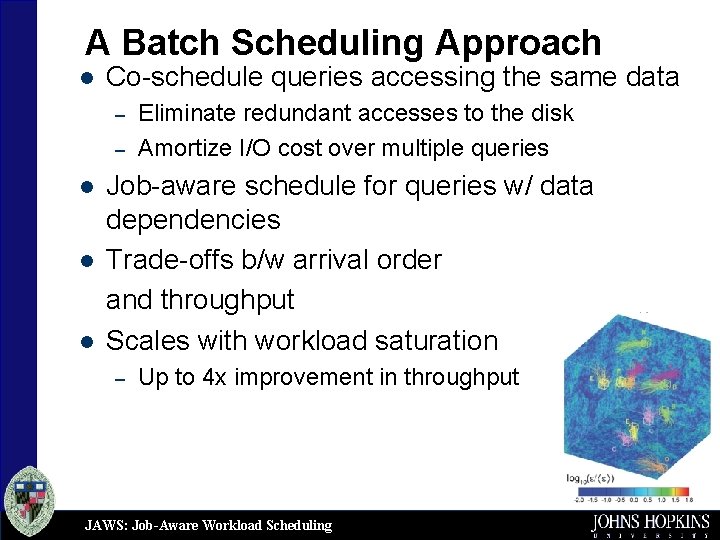

A Batch Scheduling Approach

- Co-schedule queries accessing the same data
  - Eliminate redundant accesses to the disk
  - Amortize I/O cost over multiple queries
- Job-aware schedule for queries with data dependencies
- Trade-offs between arrival order and throughput
- Scales with workload saturation
  - Up to 4x improvement in throughput

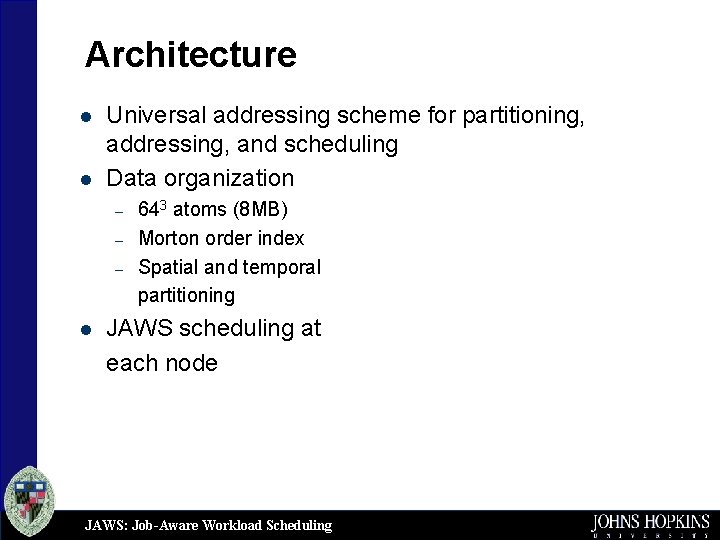

Architecture

- Universal addressing scheme for partitioning, addressing, and scheduling
- Data organization
  - 64³ atoms (8 MB)
  - Morton order index
  - Spatial and temporal partitioning
- JAWS scheduling at each node

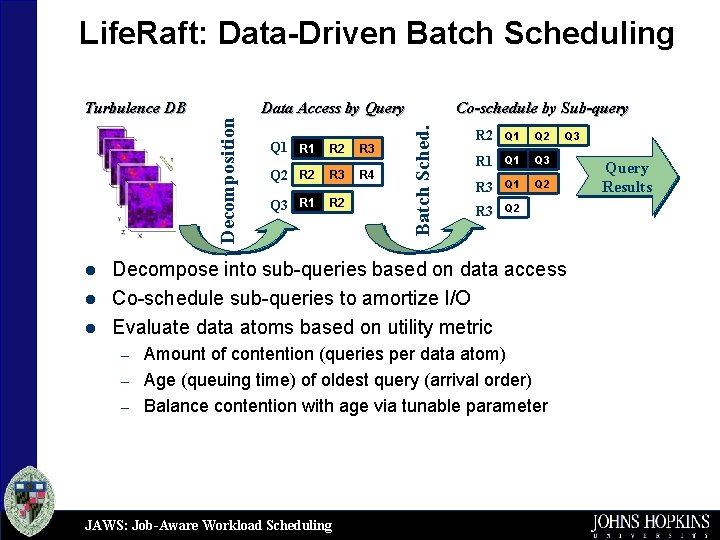
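A Morton (Z-order) index interleaves the bits of the three grid coordinates so that atoms close together in space tend to receive nearby keys. The sketch below is a minimal illustration, assuming a 1024³ grid split into 64³ atoms (16 atoms per axis, so 4 bits per axis). The function names and the choice of x as the lowest-order bit are assumptions for illustration, not the database's actual addressing scheme.

```python
def morton3d(x: int, y: int, z: int, bits: int = 10) -> int:
    """Interleave the low `bits` bits of x, y, z into one Morton code.

    Bit i of x lands at position 3*i, bit i of y at 3*i + 1,
    and bit i of z at 3*i + 2 (this ordering is an assumption).
    """
    code = 0
    for i in range(bits):
        code |= ((x >> i) & 1) << (3 * i)
        code |= ((y >> i) & 1) << (3 * i + 1)
        code |= ((z >> i) & 1) << (3 * i + 2)
    return code


def atom_key(x: int, y: int, z: int, atom_side: int = 64) -> int:
    """Map a grid point to the Morton key of the atom that contains it.

    Assumes a 1024^3 grid, so there are 16 atoms (4 bits) per axis.
    """
    return morton3d(x // atom_side, y // atom_side, z // atom_side, bits=4)


if __name__ == "__main__":
    # Points inside the same 64^3 atom share a key.
    print(atom_key(0, 0, 0), atom_key(63, 63, 63))
    # Neighbouring atoms along x, y, z get keys 1, 2, 4.
    print(atom_key(64, 0, 0), atom_key(0, 64, 0), atom_key(0, 0, 64))
```

Because every point in an atom maps to the same key, a scheduler can group sub-queries by that key when deciding which atom to read next.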

LifeRaft: Data-Driven Batch Scheduling

- Decompose into sub-queries based on data access
- Co-schedule sub-queries to amortize I/O
- Evaluate data atoms based on utility metric
  - Amount of contention (queries per data atom)
  - Age (queuing time) of oldest query (arrival order)
  - Balance contention with age via tunable parameter

Figure: queries are decomposed, co-scheduled by sub-query in a batch scheduler, and evaluated against the Turbulence DB to produce query results. Data access by query: Q1 (R2, R3), Q2 (R3, R4), Q3 (R1, R2).

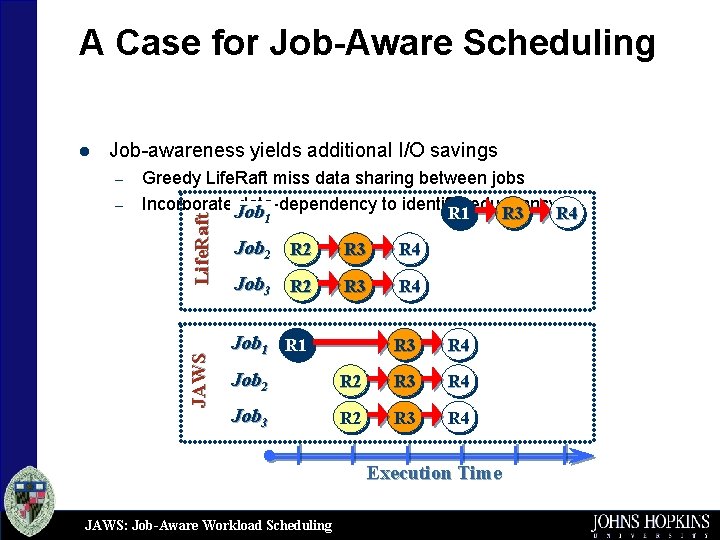
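The utility metric above can be sketched as a weighted blend of contention and age. The slides do not give the formula, so the linear combination, the normalization, and every name below (`AtomQueue`, `utility`, `pick_next_atom`, `alpha`) are assumptions chosen for illustration: `alpha = 0` favors the most contended atom, `alpha = 1` favors the atom holding the oldest query.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AtomQueue:
    """Pending sub-queries for one data atom (hypothetical structure)."""
    atom_id: int
    arrivals: List[float] = field(default_factory=list)  # arrival times


def utility(q: AtomQueue, now: float, alpha: float,
            max_contention: int, max_age: float) -> float:
    """Blend normalized contention and age; alpha is the tunable parameter."""
    contention = len(q.arrivals) / max_contention
    age = (now - min(q.arrivals)) / max_age
    return (1.0 - alpha) * contention + alpha * age


def pick_next_atom(queues: List[AtomQueue], now: float,
                   alpha: float) -> Optional[int]:
    """Return the id of the atom with the highest utility, or None if idle."""
    pending = [q for q in queues if q.arrivals]
    if not pending:
        return None
    max_c = max(len(q.arrivals) for q in pending)
    # Guard against division by zero when every query arrived just now.
    max_a = max(now - min(q.arrivals) for q in pending) or 1.0
    best = max(pending, key=lambda q: utility(q, now, alpha, max_c, max_a))
    return best.atom_id


if __name__ == "__main__":
    queues = [
        AtomQueue(1, [0.0]),            # one old query
        AtomQueue(2, [8.0, 9.0, 9.5]),  # three recent queries
    ]
    print(pick_next_atom(queues, now=10.0, alpha=0.0))  # contention wins: 2
    print(pick_next_atom(queues, now=10.0, alpha=1.0))  # age wins: 1
```

Intermediate values of `alpha` trade throughput (serving many queries per read) against response time for queries that have waited longest.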

A Case for Job-Aware Scheduling

- Job-awareness yields additional I/O savings
  - Greedy LifeRaft misses data sharing between jobs
  - Incorporate job data-dependency to identify redundancy

Figure: execution timelines under LifeRaft and JAWS for Job 1 (R1, R3, R4), Job 2 (R3, R4), and Job 3 (R2, R3, R4); horizontal axis: execution time.

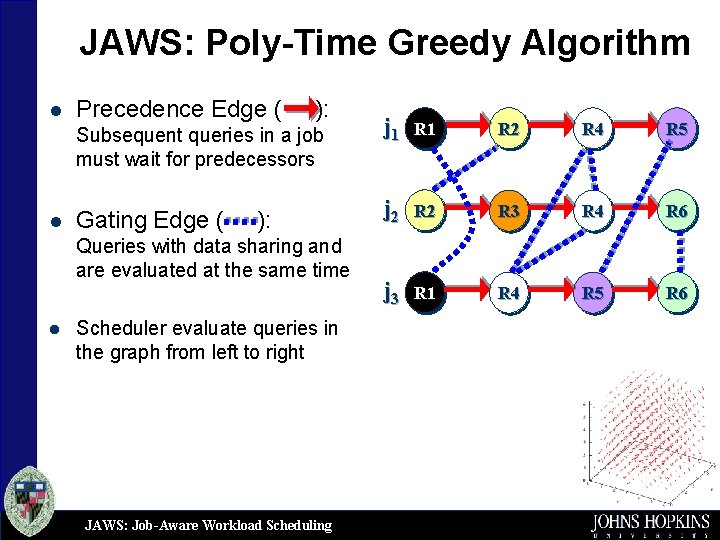

JAWS: Poly-Time Greedy Algorithm

- Precedence edge: subsequent queries in a job must wait for predecessors
- Gating edge: queries with data sharing are evaluated at the same time
- Scheduler evaluates queries in the graph from left to right

Figure: query graph for jobs j1, j2, j3 over regions R1-R6, with precedence and gating edges.

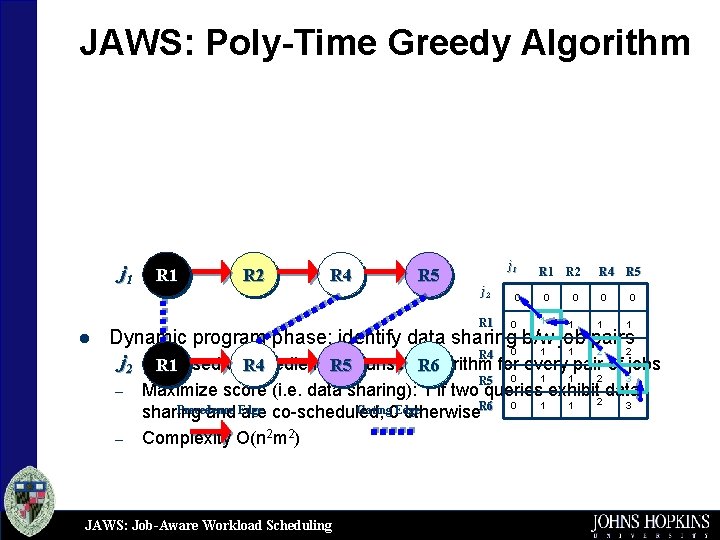

JAWS: Poly-Time Greedy Algorithm

- Dynamic program phase: identify data sharing between job pairs
  - DP algorithm for every pair of jobs, based on Needleman-Wunsch
  - Maximize score (i.e., data sharing): 1 if two queries exhibit data sharing and are co-scheduled, 0 otherwise
  - Complexity O(n²m²)

Figure: DP score matrix aligning the queries of j1 and j2, with the resulting precedence and gating edges.

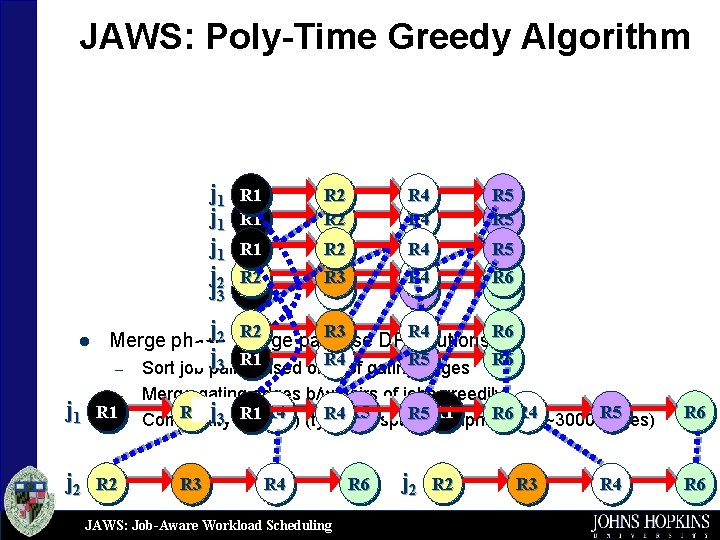
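The dynamic program phase can be sketched as a Needleman-Wunsch-style alignment with a match score of 1 and no gap or mismatch penalty. The sketch makes simplifying assumptions: each query is represented by the single region it reads, "data sharing" means two queries read the same region, and the function name `align_jobs` and the example jobs are hypothetical. Each aligned pair corresponds to a candidate gating edge; because aligned pairs preserve order within both jobs, they never conflict with precedence edges.

```python
from typing import List, Tuple


def align_jobs(job_a: List[str], job_b: List[str]) -> Tuple[int, List[Tuple[int, int]]]:
    """Align two ordered query sequences to maximize shared-region matches.

    Returns (score, edges), where each edge (i, j) pairs query i of job_a
    with query j of job_b as a candidate gating edge.
    """
    n, m = len(job_a), len(job_b)
    score = [[0] * (m + 1) for _ in range(n + 1)]

    # Fill the DP table: +1 for co-scheduling two queries on the same region.
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            match = score[i - 1][j - 1] + (1 if job_a[i - 1] == job_b[j - 1] else 0)
            score[i][j] = max(match, score[i - 1][j], score[i][j - 1])

    # Trace back from the bottom-right corner to recover the matched pairs.
    edges: List[Tuple[int, int]] = []
    i, j = n, m
    while i > 0 and j > 0:
        if job_a[i - 1] == job_b[j - 1] and score[i][j] == score[i - 1][j - 1] + 1:
            edges.append((i - 1, j - 1))
            i, j = i - 1, j - 1
        elif score[i - 1][j] >= score[i][j - 1]:
            i -= 1
        else:
            j -= 1
    edges.reverse()
    return score[n][m], edges


if __name__ == "__main__":
    j1 = ["R1", "R2", "R4", "R5"]  # illustrative job contents
    j2 = ["R2", "R3", "R4", "R6"]
    print(align_jobs(j1, j2))  # (2, [(1, 0), (2, 2)])
```

One alignment fills a table of size len(job_a) × len(job_b). If n denotes the number of jobs and m the number of queries per job (an interpretation of the slide's symbols), aligning every pair of jobs costs O(n²m²), which agrees with the stated complexity.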

JAWS: Poly-Time Greedy Algorithm

- Merge phase: merge pairwise DP solutions
  - Sort job pairs based on number of gating edges
  - Merge gating edges between pairs of jobs greedily
  - Complexity O(n³m²) (typically sparse graphs, up to ~3000 edges)

Figure: pairwise solutions for (j1, j2), (j1, j3), and (j2, j3) merged into a single query graph over regions R1-R6.

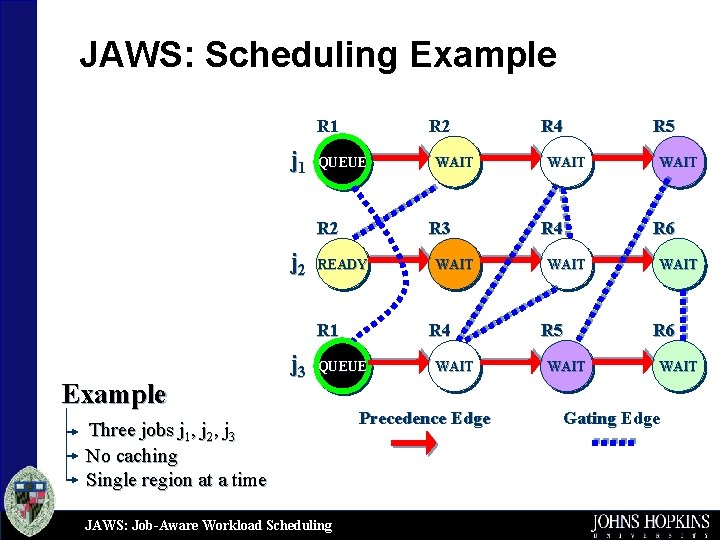

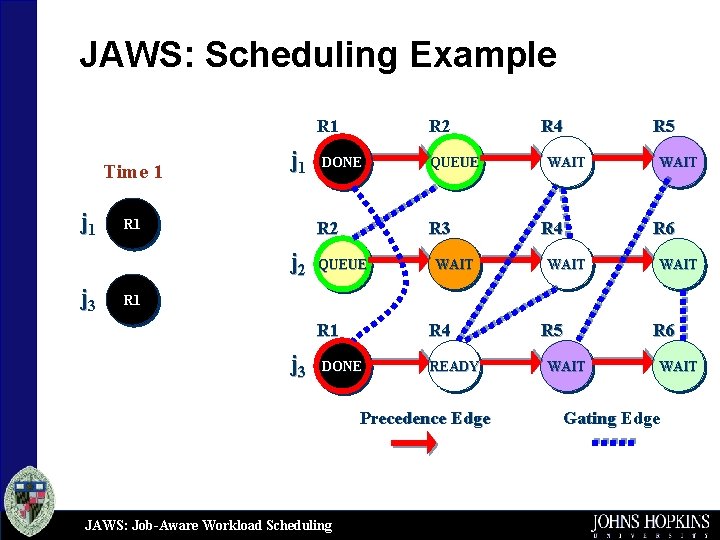

JAWS: Scheduling Example

- Three jobs: j1, j2, j3
- No caching
- Single region at a time

Figure: initial query graph for j1, j2, j3. Each query is labeled READY, QUEUE, or WAIT; precedence and gating edges connect the queries.

JAWS: Scheduling Example

Time 1: queries on R1 are DONE.

Figure: query graph for j1, j2, j3 with per-query states (DONE, READY, QUEUE, WAIT), precedence edges, and gating edges.

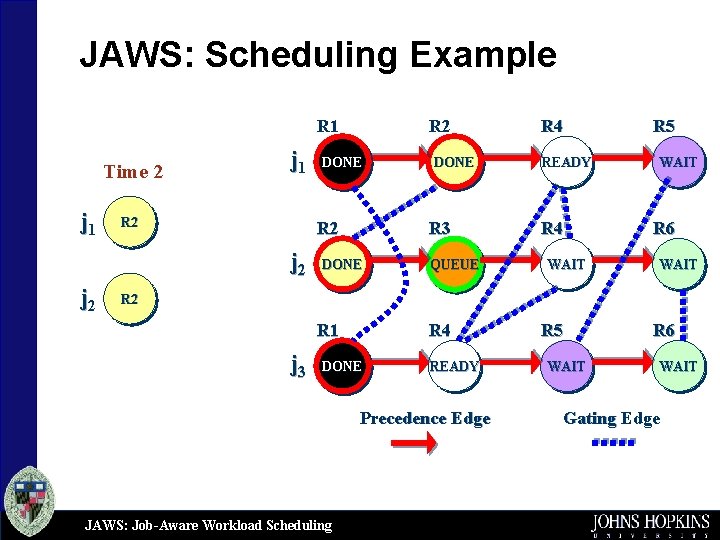

JAWS: Scheduling Example

Time 2: queries on R2 are DONE.

Figure: query graph for j1, j2, j3 with per-query states (DONE, READY, QUEUE, WAIT), precedence edges, and gating edges.

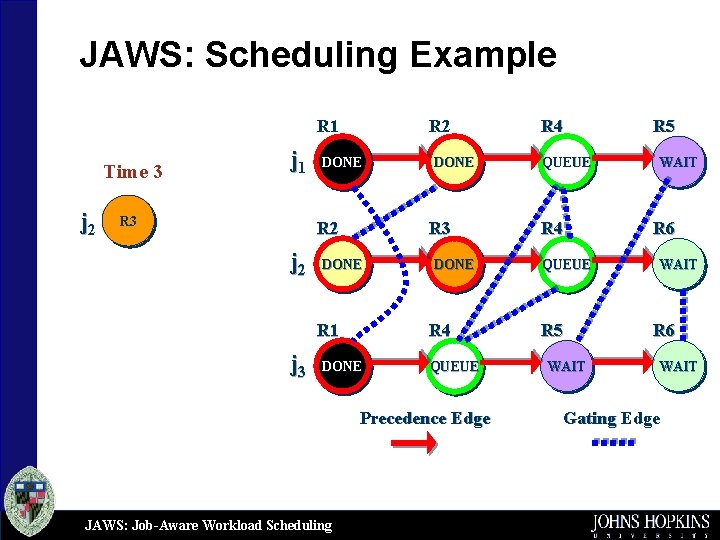

JAWS: Scheduling Example

Time 3: queries on R3 are DONE.

Figure: query graph for j1, j2, j3 with per-query states (DONE, QUEUE, WAIT), precedence edges, and gating edges.

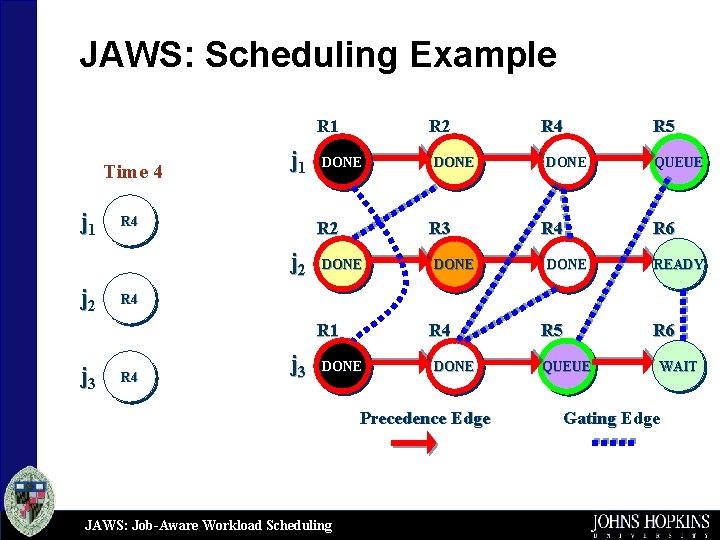

JAWS: Scheduling Example

Time 4: queries on R4 are DONE.

Figure: query graph for j1, j2, j3 with per-query states (DONE, READY, QUEUE, WAIT), precedence edges, and gating edges.

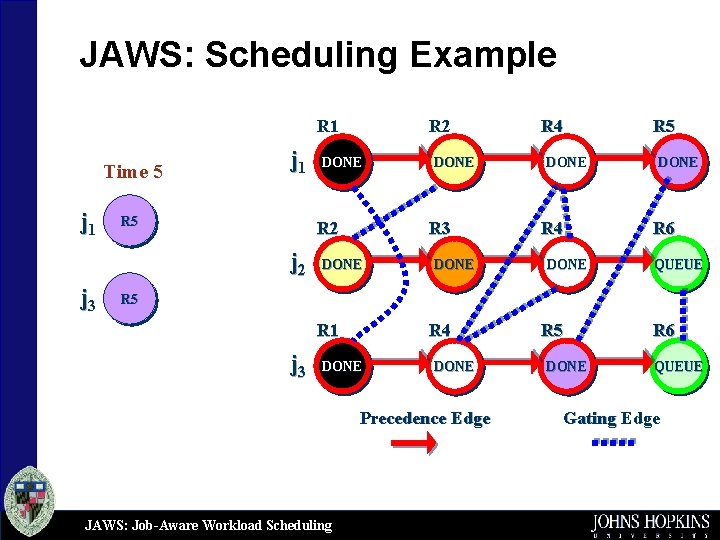

JAWS: Scheduling Example

Time 5: queries on R5 are DONE.

Figure: query graph for j1, j2, j3 with per-query states (DONE, QUEUE), precedence edges, and gating edges.

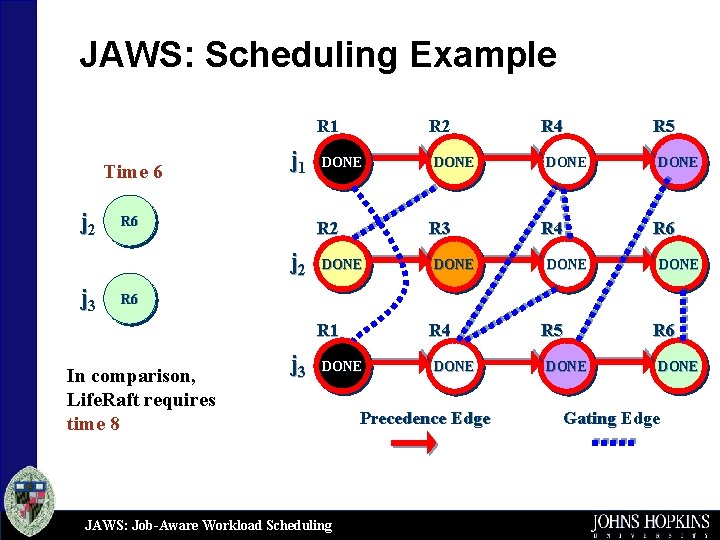

JAWS: Scheduling Example

Time 6: queries on R6 are DONE; all three jobs have completed.

In comparison, LifeRaft requires time 8.

Figure: final query graph for j1, j2, j3 with every query DONE, plus precedence and gating edges.

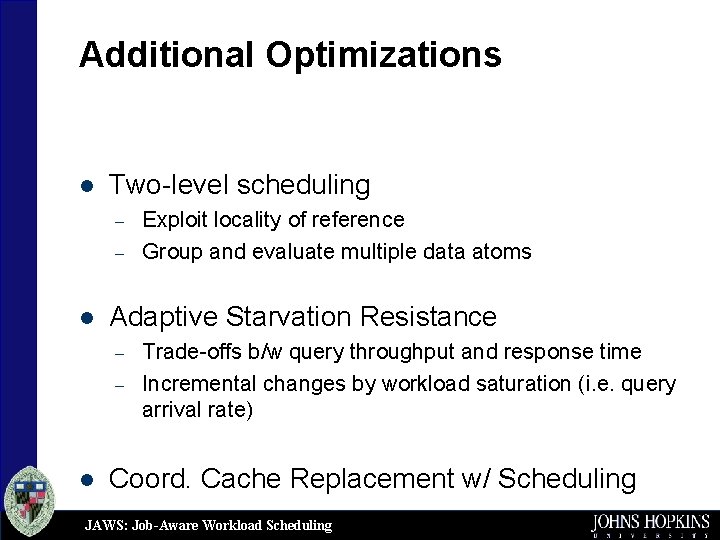
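The example can be replayed with a small simulation under its stated rules: no caching and a single region read per time step. One read serves every job whose next pending query needs that region. The job contents below are an assumption reconstructed from the example's time steps, not taken verbatim from the slides, and the helper names are hypothetical. The sketch does not model LifeRaft, so it does not reproduce the figure of 8 quoted for it.

```python
from typing import Dict, List, Tuple


def run_schedule(jobs: Dict[str, List[str]], region_order: List[str]) -> Tuple[int, bool]:
    """Replay a region read order against jobs with in-order (precedence) queries.

    Each time step reads one region and advances every job whose next
    pending query targets that region. Returns (time steps, all jobs done).
    """
    next_idx = {name: 0 for name in jobs}
    steps = 0
    for region in region_order:
        steps += 1
        for name, queries in jobs.items():
            i = next_idx[name]
            if i < len(queries) and queries[i] == region:
                next_idx[name] = i + 1
    finished = all(next_idx[name] == len(queries) for name, queries in jobs.items())
    return steps, finished


def no_share_steps(jobs: Dict[str, List[str]]) -> int:
    """Baseline with no I/O sharing: one region read per query."""
    return sum(len(queries) for queries in jobs.values())


if __name__ == "__main__":
    # Assumed job contents, inferred from the example's time steps.
    jobs = {
        "j1": ["R1", "R2", "R4", "R5"],
        "j2": ["R2", "R3", "R4", "R6"],
        "j3": ["R1", "R4", "R5", "R6"],
    }
    gated_order = ["R1", "R2", "R3", "R4", "R5", "R6"]
    print(run_schedule(jobs, gated_order))  # (6, True)
    print(no_share_steps(jobs))             # 12
```

Under these assumptions the gated order finishes all twelve queries in six reads, because every region is read exactly once and shared by all queries that need it.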

Additional Optimizations

- Two-level scheduling
  - Exploit locality of reference
  - Group and evaluate multiple data atoms
- Adaptive starvation resistance
  - Trade-offs between query throughput and response time
  - Incremental changes by workload saturation (i.e., query arrival rate)
- Coordinate cache replacement with scheduling

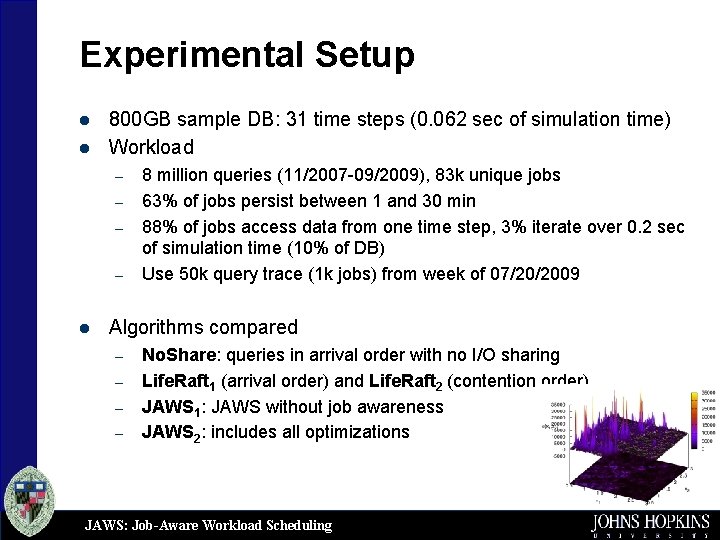

Experimental Setup

- 800 GB sample DB: 31 time steps (0.062 sec of simulation time)
- Workload
  - 8 million queries (11/2007-09/2009), 83k unique jobs
  - 63% of jobs persist between 1 and 30 min
  - 88% of jobs access data from one time step; 3% iterate over 0.2 sec of simulation time (10% of DB)
- Use 50k query trace (1k jobs) from week of 07/20/2009
- Algorithms compared
  - NoShare: queries in arrival order with no I/O sharing
  - LifeRaft 1 (arrival order) and LifeRaft 2 (contention order)
  - JAWS 1: JAWS without job awareness
  - JAWS 2: includes all optimizations

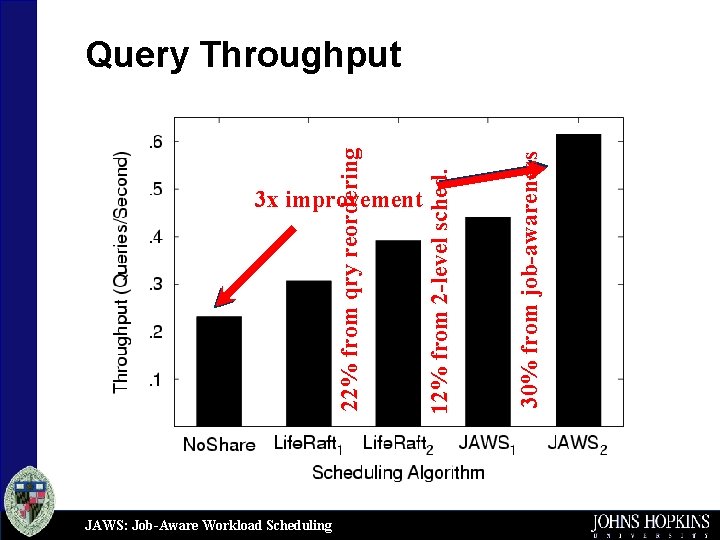

Query Throughput

- 3x improvement
- 30% from job-awareness
- 12% from two-level scheduling
- 22% from query reordering

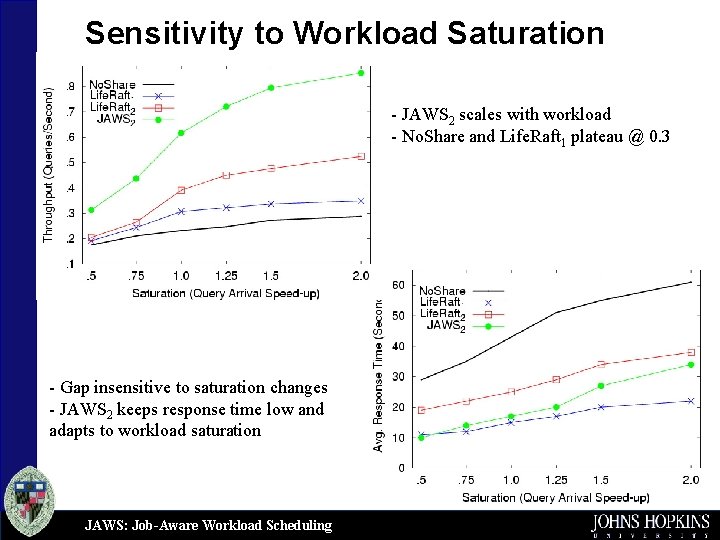

Sensitivity to Workload Saturation

- JAWS 2 scales with workload
- NoShare and LifeRaft 1 plateau at 0.3
- Gap insensitive to saturation changes
- JAWS 2 keeps response time low and adapts to workload saturation

Future Directions

- Quality of service guarantees
  - Supporting interactive queries
  - Bounded completion time in proportion to query size
- Declarative style interfaces for job optimizations
  - Explicitly link related queries
  - Pre-declare time and space of interest
  - Pre-packaged operations that iterate over space/time inside the DB
- Job-awareness crucial for scientific workloads
  - Alleviates I/O contention across jobs
  - Up to 4x increase in throughput
  - Scales with workload

Questions?

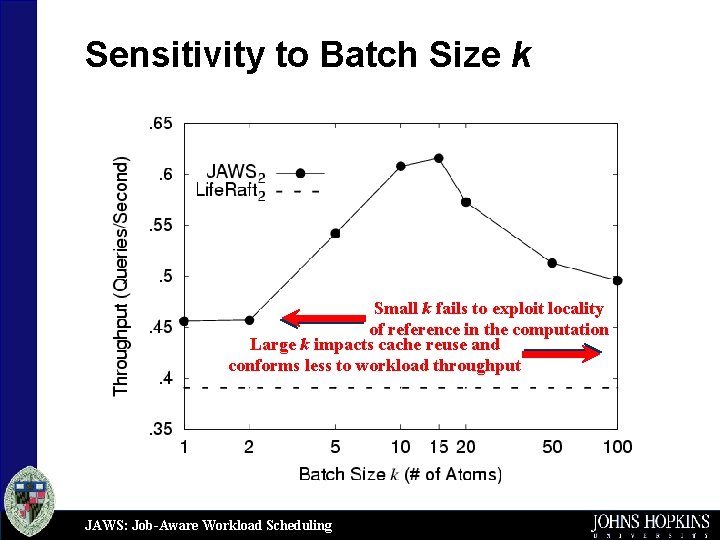

Sensitivity to Batch Size k

- Small k fails to exploit locality of reference in the computation
- Large k impacts cache reuse and conforms less to workload throughput

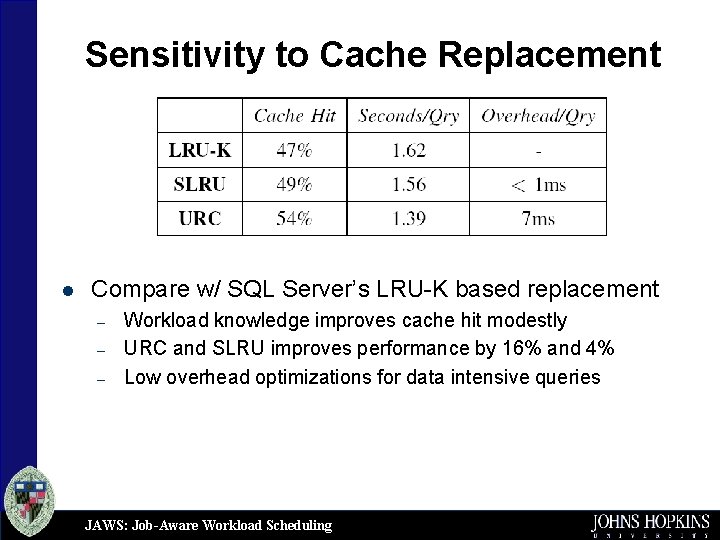

Sensitivity to Cache Replacement

- Compare with SQL Server's LRU-K based replacement
  - Workload knowledge improves cache hit rate modestly
  - URC and SLRU improve performance by 16% and 4%, respectively
  - Low-overhead optimizations for data-intensive queries

- Slides: 26