Huffman Encoding

- Slides: 30

Huffman Encoding

- Huffman coding is a method for compressing standard text documents.
- It uses a binary tree to develop codes of varying lengths for the letters used in the original message.
- Huffman coding is also part of the JPEG image compression scheme.
- The algorithm was introduced by David Huffman in 1952 as part of a course assignment at MIT.

Lecture No. 26, Data Structures, Dr. Sohail Aslam

Huffman Encoding

- To understand Huffman encoding, it is best to work through a simple example.
- We will encode the 32-character phrase "traversing threaded binary trees".
- If we send the phrase as a message over a network using standard 8-bit ASCII codes, we have to send 8 × 32 = 256 bits.
- Using the Huffman algorithm, we can send the message with only 116 bits.

Huffman Encoding

- List all the letters used, including the "space" character, along with the frequency with which they occur in the message.
- Consider each of these (character, frequency) pairs to be a node; they are actually leaf nodes, as we will see.
- Pick the two nodes with the lowest frequencies. If there is a tie, pick arbitrarily among the nodes with equal frequencies.

Huffman Encoding

- Make a new node out of these two, and make the two nodes its children.
- This new node is assigned the sum of the frequencies of its children.
- Continue the process of combining the two nodes of lowest frequency until only one node, the root, remains.
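The combining steps above can be sketched with a priority queue. This is only a minimal illustration, not the lecture's own implementation: the function name `build_huffman_tree` and the tuple layout of the nodes are assumptions made for this example, and ties are broken by insertion order rather than randomly.

```python
import heapq
from collections import Counter
from itertools import count


def build_huffman_tree(text):
    """Return the root of a Huffman tree for a non-empty text.

    A leaf is (frequency, symbol); an internal node is
    (frequency, left_child, right_child). This layout is an assumption.
    """
    tie = count()  # running counter: breaks ties so nodes are never compared
    heap = [(freq, next(tie), (freq, sym)) for sym, freq in Counter(text).items()]
    heapq.heapify(heap)
    while len(heap) > 1:
        # Pick the two nodes with the lowest frequencies ...
        f1, _, n1 = heapq.heappop(heap)
        f2, _, n2 = heapq.heappop(heap)
        # ... and make them children of a new node holding the sum.
        heapq.heappush(heap, (f1 + f2, next(tie), (f1 + f2, n1, n2)))
    return heap[0][2]


root = build_huffman_tree("traversing threaded binary trees\n")
print(root[0])  # 33, the total number of characters
```

Because ties may be resolved in any order, the tree this sketch builds need not match the one drawn on the slides, although its total encoded length is the same.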

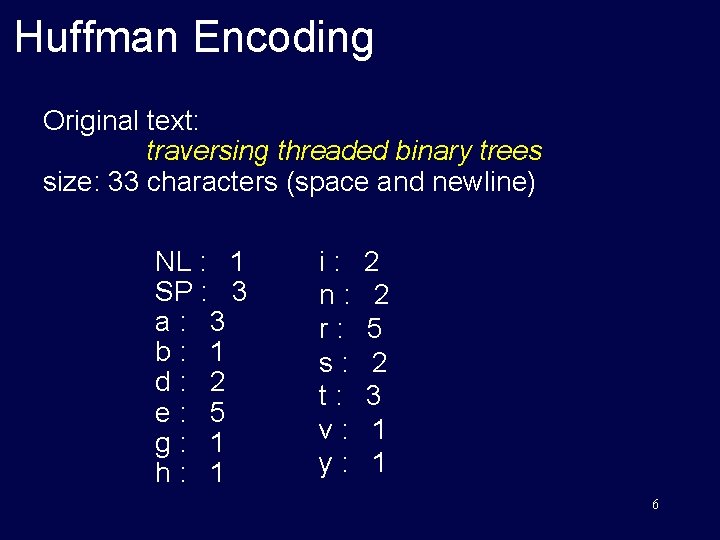

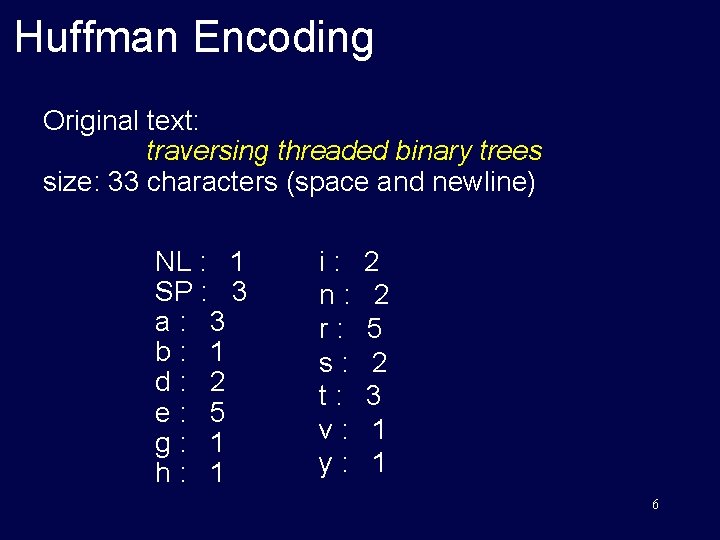

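Huffman Encoding

Original text: traversing threaded binary trees

Size: 33 characters (including the spaces and the newline)

| Character | NL | SP | a | b | d | e | g | h | i | n | r | s | t | v | y |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Frequency | 1 | 3 | 3 | 1 | 2 | 5 | 1 | 1 | 2 | 2 | 5 | 2 | 3 | 1 | 1 |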
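The frequency count can be reproduced in a few lines. This is a sketch only; the variable names are chosen for this example, and the message is assumed to end with a single newline, as the 33-character size suggests.

```python
from collections import Counter

# The message from the slide, with the trailing newline included.
message = "traversing threaded binary trees\n"
frequencies = Counter(message)

# Print in character order; newline and space sort before the letters.
for symbol in sorted(frequencies):
    label = {"\n": "NL", " ": "SP"}.get(symbol, symbol)
    print(f"{label}: {frequencies[symbol]}")

print(sum(frequencies.values()))  # 33
```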

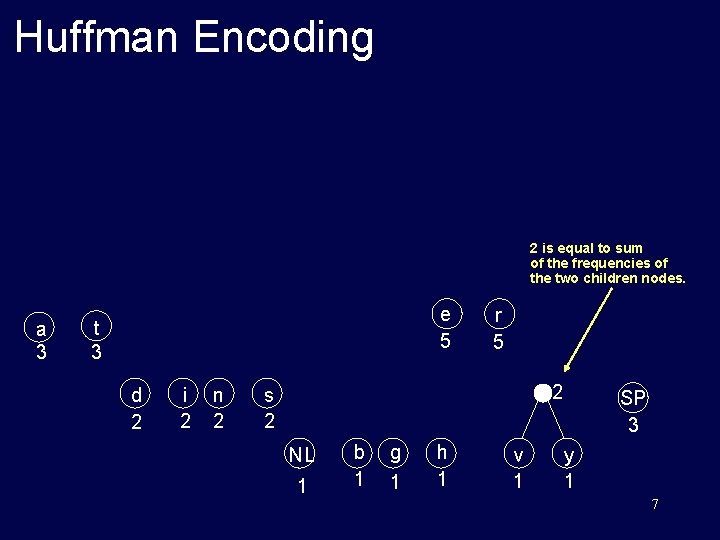

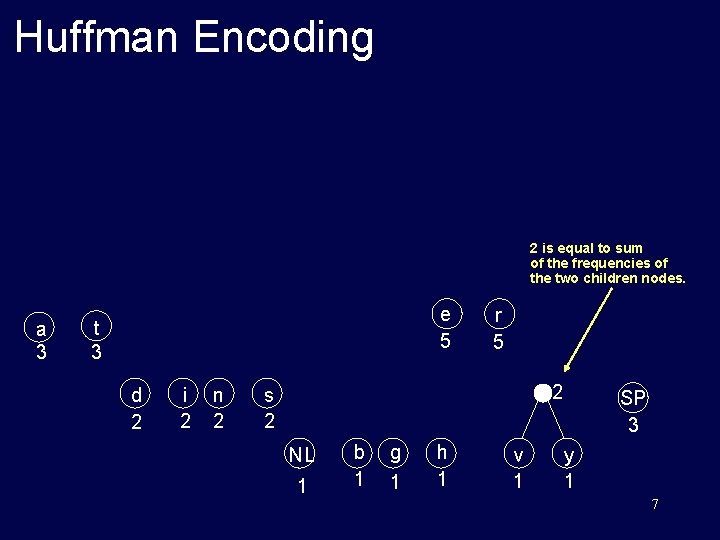

Huffman Encoding

The frequency of the new node, 2, is equal to the sum of the frequencies of its two child nodes.

[Tree diagram: the 15 leaf nodes, with two leaves of frequency 1 joined under a new node of frequency 2.]

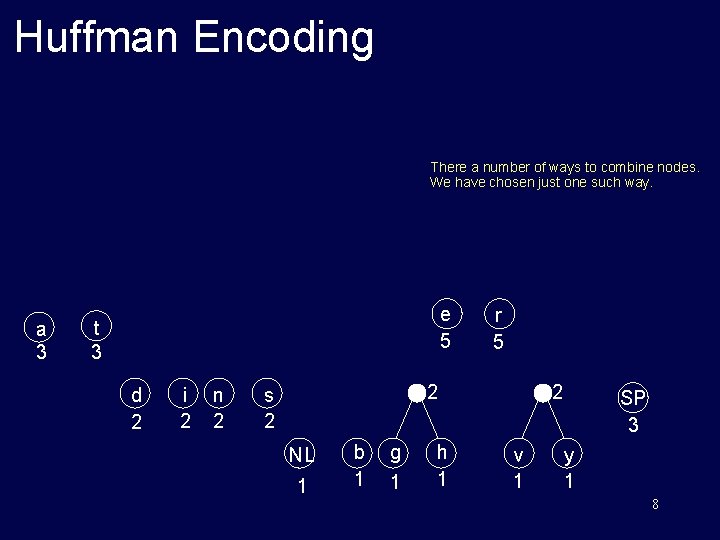

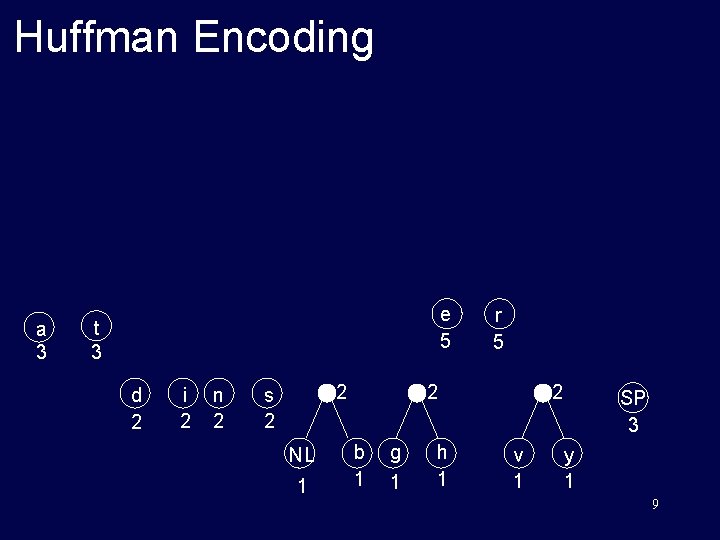

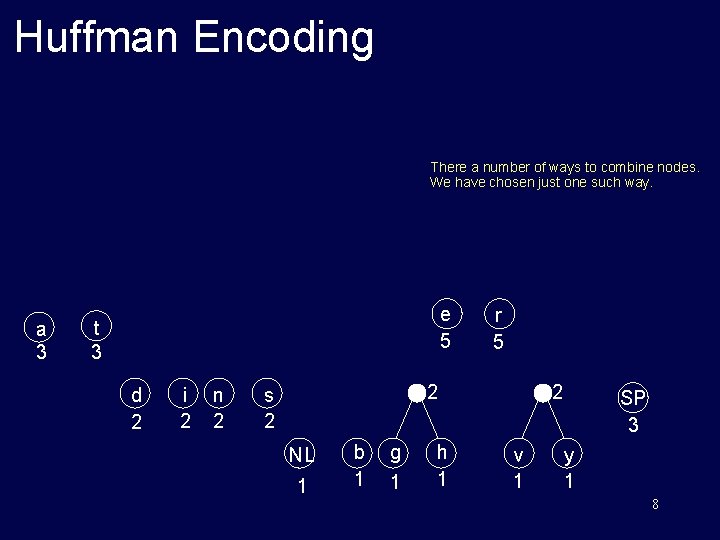

Huffman Encoding

There are a number of ways to combine nodes. We have chosen just one such way.

[Tree diagram: a second pair of frequency-1 leaves joined under another node of frequency 2.]

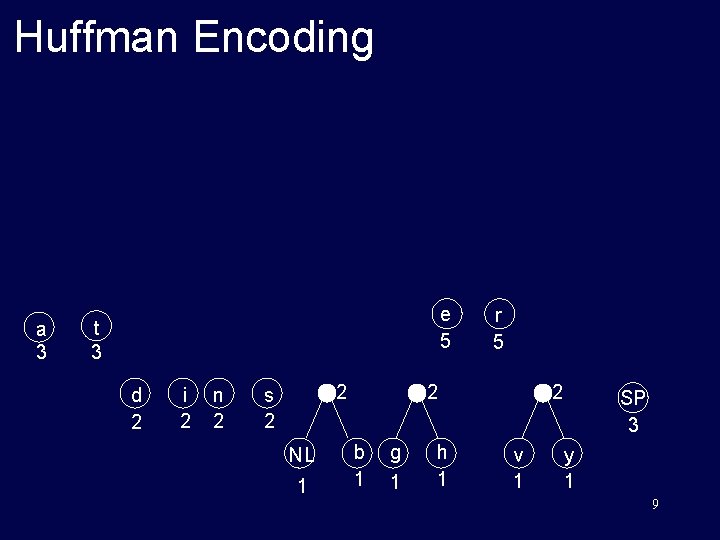

Huffman Encoding

[Tree diagram: the six frequency-1 leaves (NL, b, g, h, v, y) are now paired under three nodes of frequency 2.]

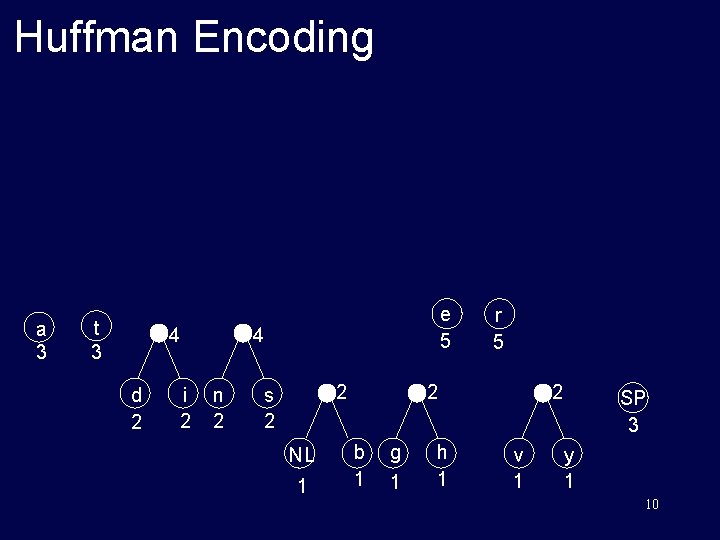

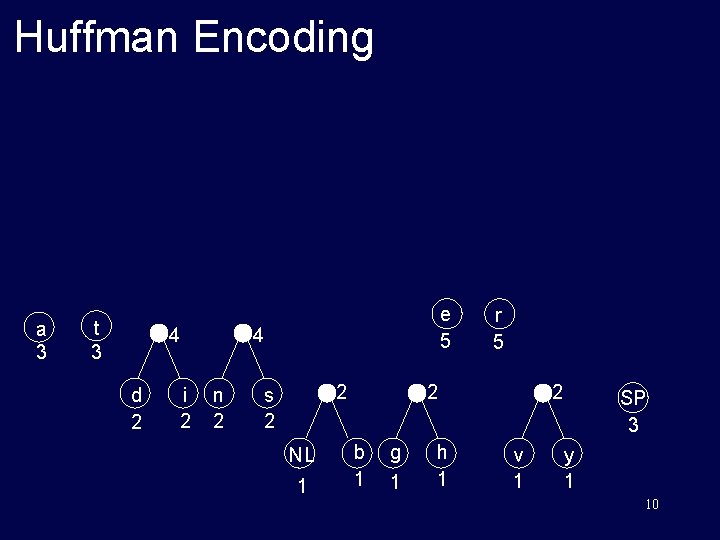

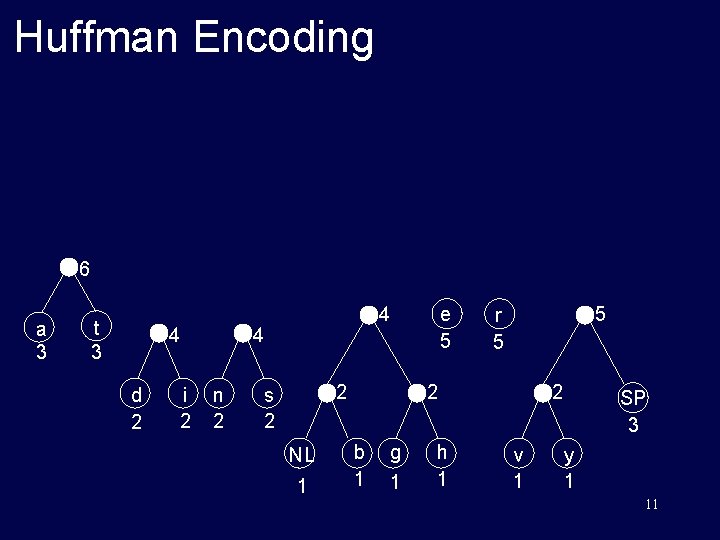

Huffman Encoding

[Tree diagram: d and i are joined under a node of frequency 4, as are n and s.]

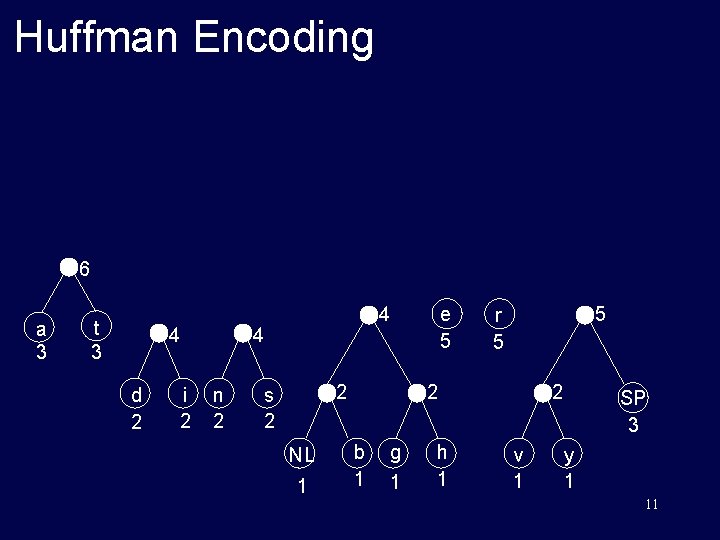

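Huffman Encoding

[Tree diagram: a and t are joined under a node of frequency 6, two of the frequency-2 nodes under a node of frequency 4, and the remaining frequency-2 node and SP under a node of frequency 5.]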

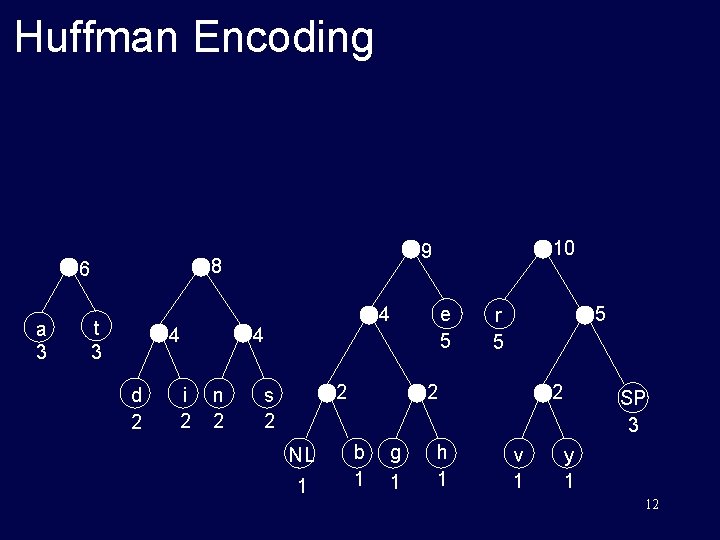

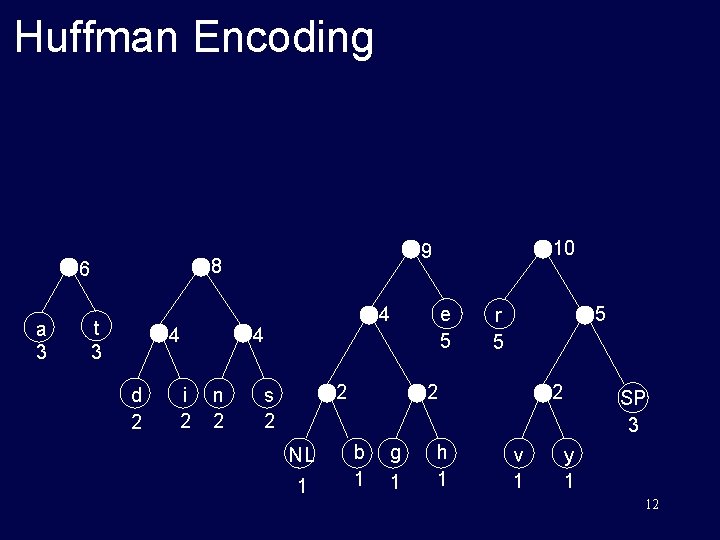

Huffman Encoding

[Tree diagram: new nodes of frequency 8 (the d/i and n/s nodes), 9 (e and the remaining node of frequency 4) and 10 (r and the node of frequency 5).]

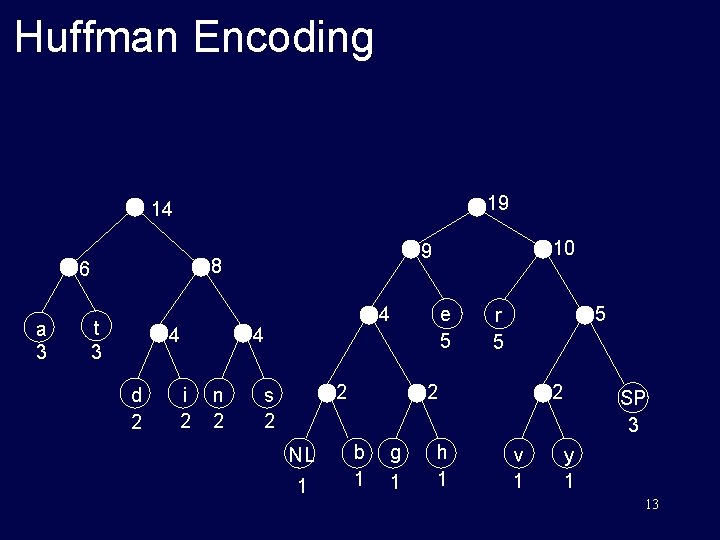

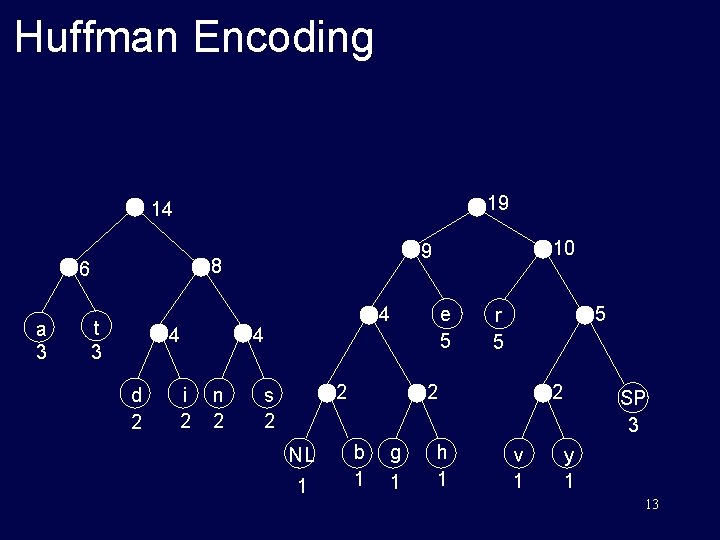

Huffman Encoding

[Tree diagram: the nodes of frequency 6 and 8 are joined under a node of frequency 14, and the nodes of frequency 9 and 10 under a node of frequency 19.]

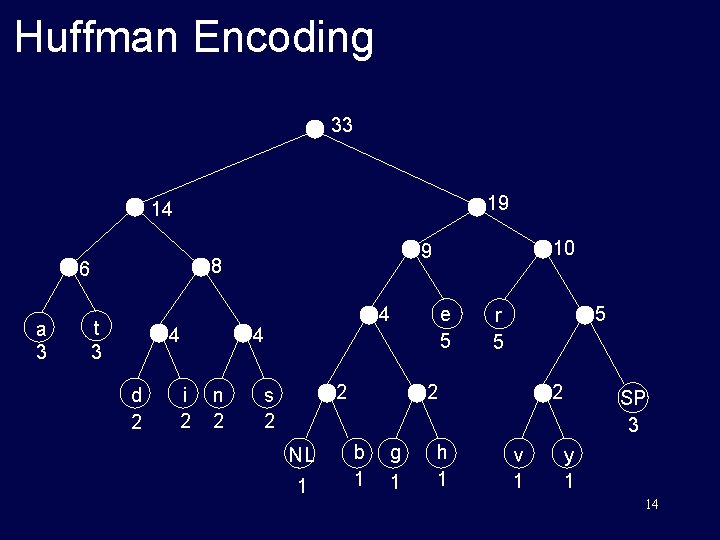

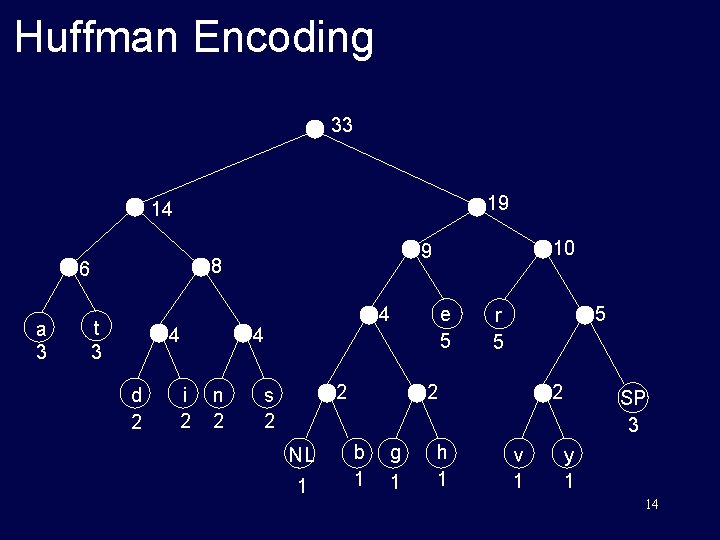

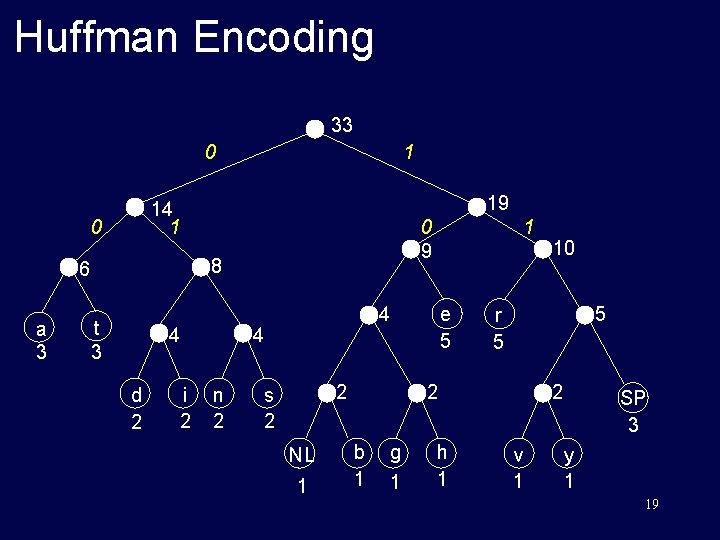

Huffman Encoding

[Tree diagram: the nodes of frequency 19 and 14 are joined under the root, whose frequency is 33.]

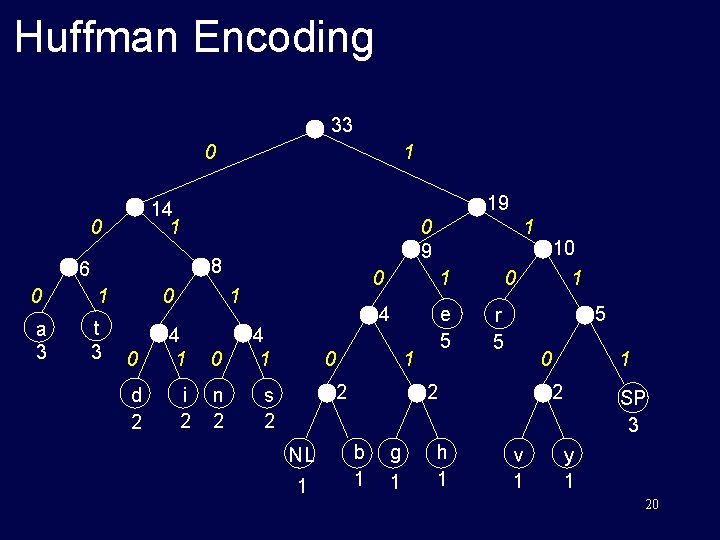

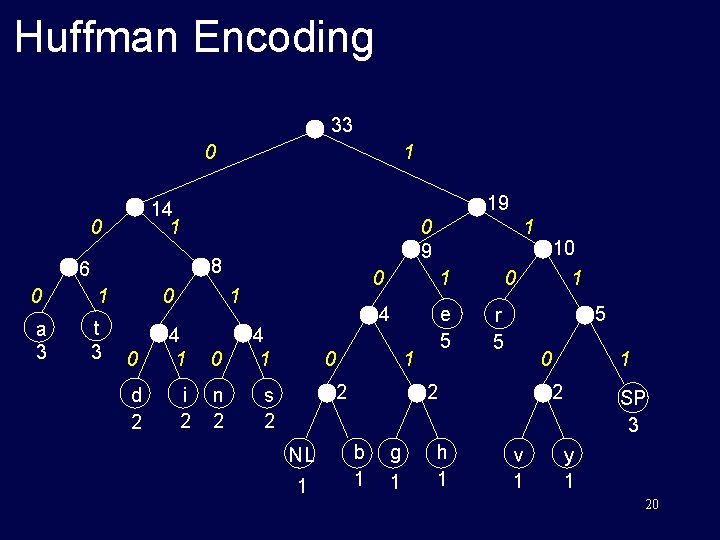

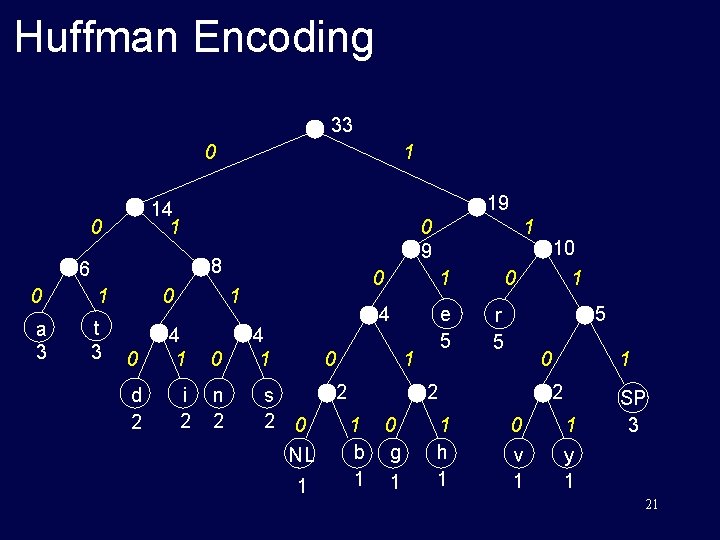

Huffman Encoding

- Start at the root. Assign 0 to the left branch and 1 to the right branch.
- Repeat the process down the left and right subtrees.
- To get the code for a character, traverse the tree from the root to the character's leaf node and read off the 0s and 1s along the path.
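The labelling rule can be sketched as a recursive traversal. The tree below is written out by hand as nested pairs and is an assumption: it is reconstructed from the node frequencies and the character codes given in these slides, with a leaf represented by its character and an internal node by a `(left, right)` pair. The names `TREE` and `assign_codes` are hypothetical.

```python
# Hand-written copy of the example tree (an assumption, see above).
# A leaf is a one-character string; an internal node is (left, right).
TREE = (
    (("a", "t"), (("d", "i"), ("n", "s"))),                        # frequency 14
    (((("\n", "b"), ("g", "h")), "e"), ("r", (("v", "y"), " "))),  # frequency 19
)


def assign_codes(node, prefix=""):
    """Map each leaf symbol to the string of 0s and 1s on its root path."""
    if isinstance(node, str):      # reached a leaf: the path so far is its code
        return {node: prefix}
    left, right = node
    codes = assign_codes(left, prefix + "0")         # 0 for the left branch
    codes.update(assign_codes(right, prefix + "1"))  # 1 for the right branch
    return codes


codes = assign_codes(TREE)
print(codes["t"], codes["r"], codes["e"])  # 001 110 101
```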

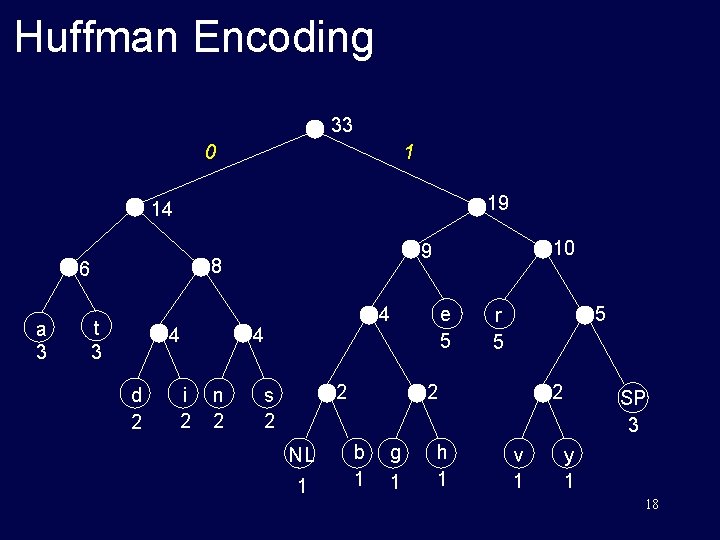

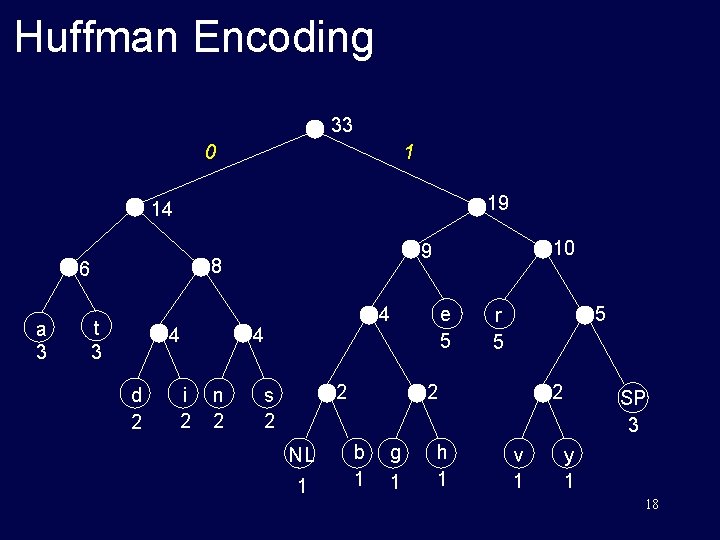

Huffman Encoding

[Tree diagram: the complete tree, with the two branches from the root (33) labelled 0 and 1.]

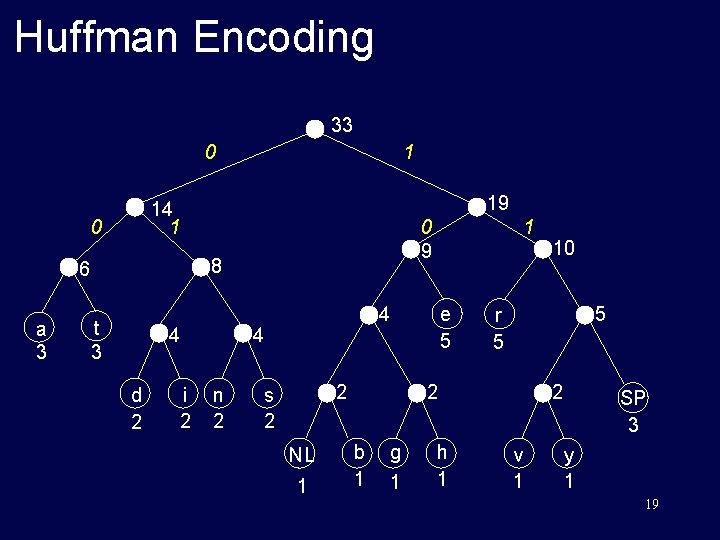

Huffman Encoding

[Tree diagram: the 0/1 labelling extended to the branches of the next level.]

Huffman Encoding

[Tree diagram: the 0/1 labelling extended further down the tree.]

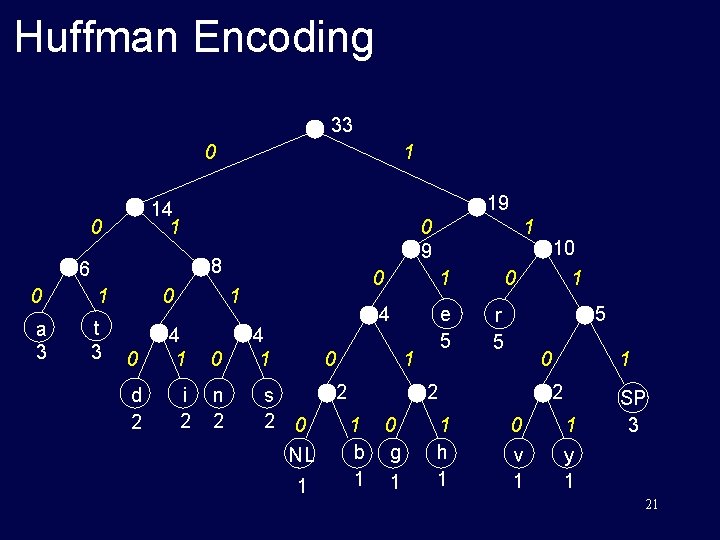

Huffman Encoding

[Tree diagram: the complete tree with every branch labelled 0 or 1.]

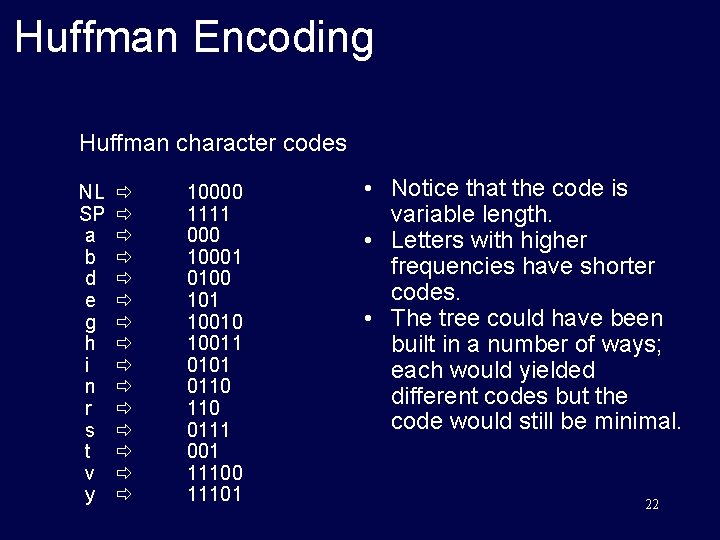

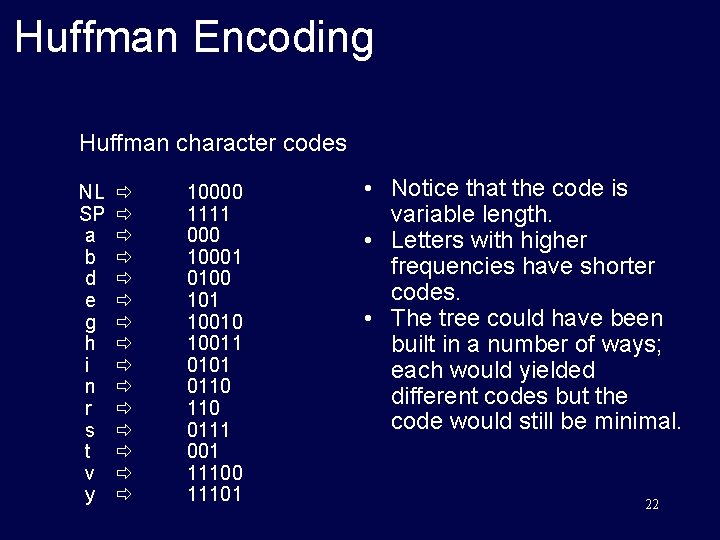

Huffman Encoding

Huffman character codes:

| Character | Code |
|---|---|
| NL | 10000 |
| SP | 1111 |
| a | 000 |
| b | 10001 |
| d | 0100 |
| e | 101 |
| g | 10010 |
| h | 10011 |
| i | 0101 |
| n | 0110 |
| r | 110 |
| s | 0111 |
| t | 001 |
| v | 11100 |
| y | 11101 |

- Notice that the code is variable length.
- Letters with higher frequencies have shorter codes.
- The tree could have been built in a number of ways; each would have yielded different codes, but the code would still be minimal.
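A variable-length code can be decoded without separators only if no code word is a prefix of another. The sketch below checks this for the codes above and runs a round trip. The function names are hypothetical, and the entry for r (110) is read off the tree path rather than taken from the code list, so treat it as an assumption.

```python
CODES = {
    "\n": "10000", " ": "1111", "a": "000", "b": "10001", "d": "0100",
    "e": "101", "g": "10010", "h": "10011", "i": "0101", "n": "0110",
    "r": "110",  # assumption: read off the tree path
    "s": "0111", "t": "001", "v": "11100", "y": "11101",
}


def is_prefix_free(codes):
    """True if no code word is a prefix of a different code word."""
    words = list(codes.values())
    return not any(a != b and b.startswith(a) for a in words for b in words)


def encode(text, codes):
    """Concatenate the code word of every character in text."""
    return "".join(codes[ch] for ch in text)


def decode(bits, codes):
    """Decode by growing a buffer until it matches a code word."""
    lookup = {code: sym for sym, code in codes.items()}
    out, buffer = [], ""
    for bit in bits:
        buffer += bit
        if buffer in lookup:
            out.append(lookup[buffer])
            buffer = ""
    if buffer:
        raise ValueError("trailing bits do not form a complete code word")
    return "".join(out)


message = "traversing threaded binary trees\n"
bits = encode(message, CODES)
print(is_prefix_free(CODES))            # True
print(len(bits))                        # 122
print(decode(bits, CODES) == message)   # True
```

The buffer-matching decoder is chosen for brevity; walking the tree bit by bit gives the same result.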

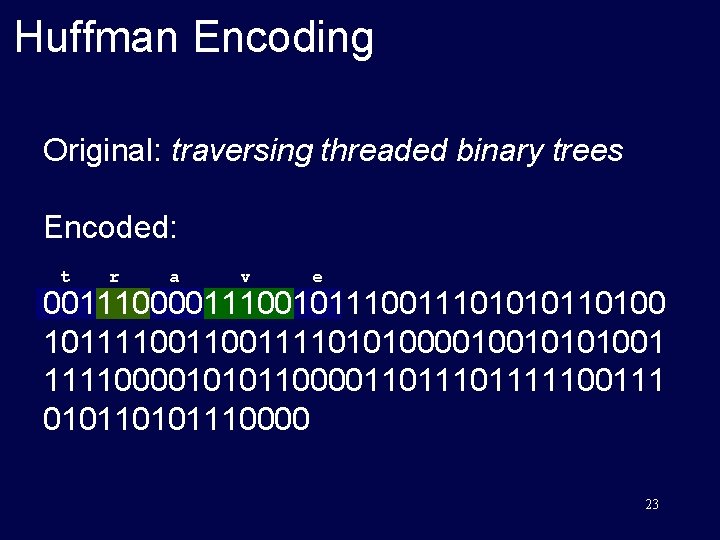

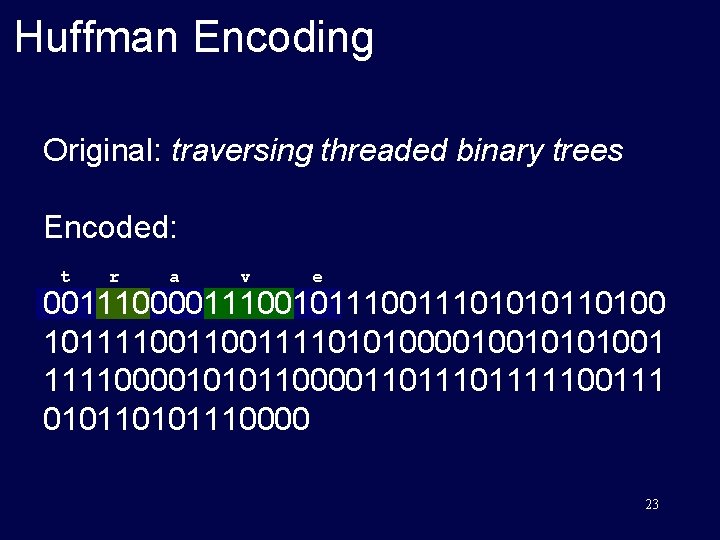

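Huffman Encoding

Original: traversing threaded binary trees

Encoded, grouped by word for readability (the message begins t = 001, r = 110, a = 000, v = 11100, e = 101):

0011100001110010111001110101011010010 1111 0011001111010100001001010100 1111 100010101011000011011101 1111 0011101011010111 10000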

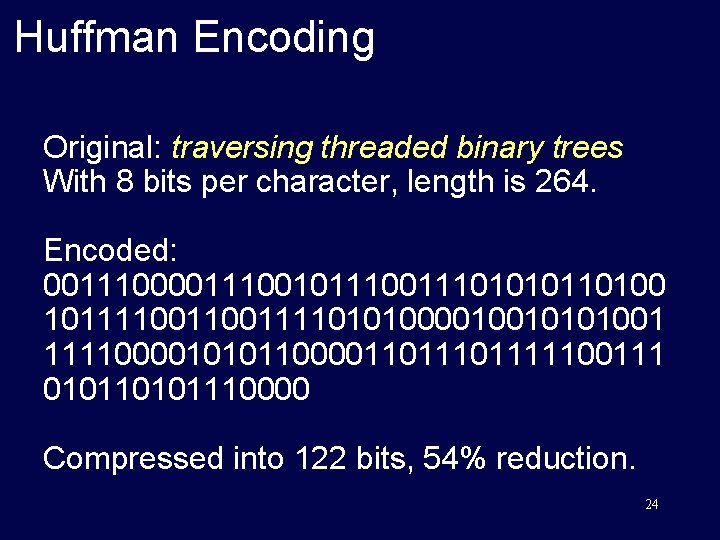

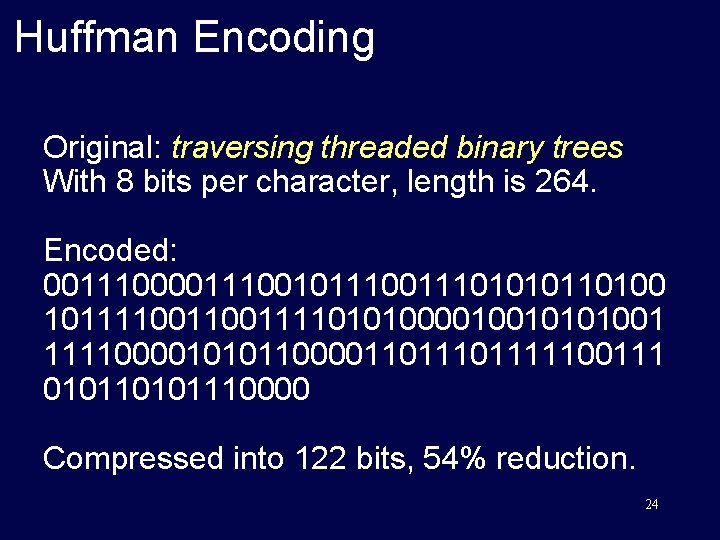

Huffman Encoding

Original: traversing threaded binary trees

With 8 bits per character, the length is 33 × 8 = 264 bits.

Encoded, grouped by word for readability:

0011100001110010111001110101011010010 1111 0011001111010100001001010100 1111 100010101011000011011101 1111 0011101011010111 10000

Compressed into 122 bits, a 54% reduction.
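The 122-bit figure can be checked by summing frequency times code length over all characters. The notation f(c) for the frequency and ℓ(c) for the code length of character c is introduced here for illustration and does not come from the slides. Characters with 3-bit codes (a, e, r, t) occur 16 times in total, those with 4-bit codes (SP, d, i, n, s) 11 times, and those with 5-bit codes (NL, b, g, h, v, y) 6 times:

```latex
\begin{align*}
\text{encoded bits} &= \sum_{c} f(c)\,\ell(c)
  = 3 \cdot 16 + 4 \cdot 11 + 5 \cdot 6 = 48 + 44 + 30 = 122,\\
\text{reduction} &= 1 - \frac{122}{264} = \frac{142}{264} \approx 0.54 .
\end{align*}
```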

Mathematical Properties of Binary Trees

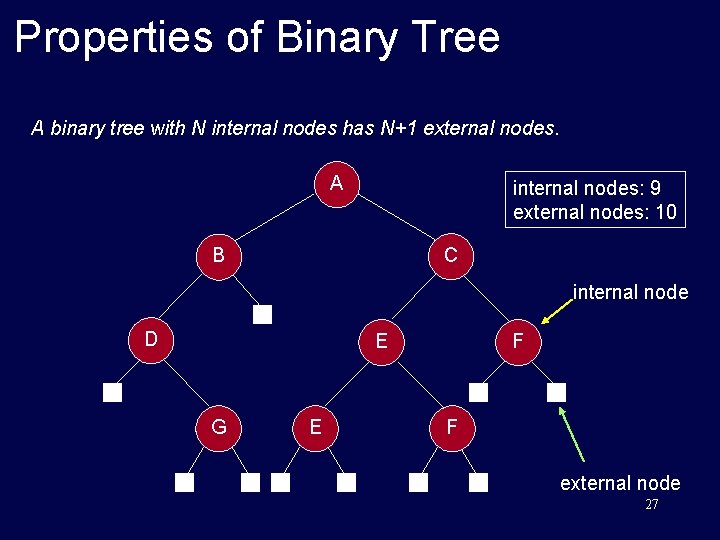

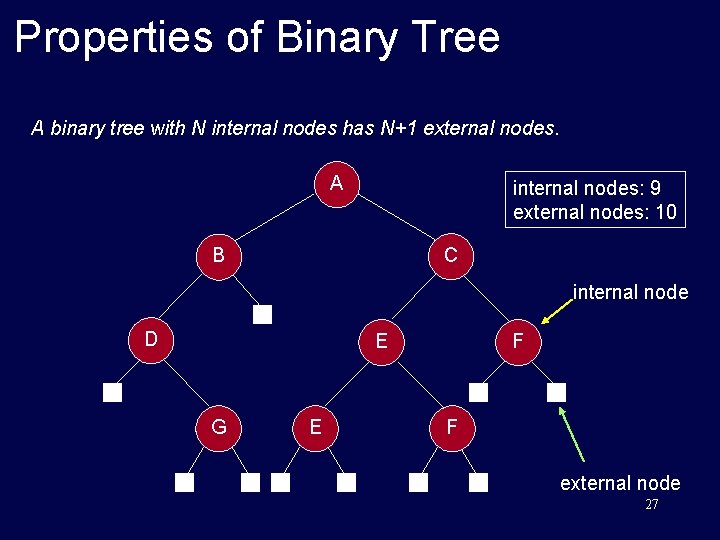

Properties of Binary Tree

Property: A binary tree with N internal nodes has N+1 external nodes.

Properties of Binary Tree

A binary tree with N internal nodes has N+1 external nodes.

[Tree diagram: an example tree with its internal and external nodes marked. Internal nodes: 9; external nodes: 10.]
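The property can be checked on any small tree by counting. In the sketch below, every node object is an internal node and every missing child (`None`) stands for an external node. The `Node` class, the `count_nodes` helper and the 4-node tree are hypothetical; the tree is not the one drawn on the slide.

```python
class Node:
    """An internal node; a missing child (None) stands for an external node."""

    def __init__(self, left=None, right=None):
        self.left = left
        self.right = right


def count_nodes(node):
    """Return (internal, external) counts for the subtree rooted at node."""
    if node is None:
        return 0, 1  # an empty subtree is a single external node
    left_int, left_ext = count_nodes(node.left)
    right_int, right_ext = count_nodes(node.right)
    return left_int + right_int + 1, left_ext + right_ext


# A hypothetical tree with 4 internal nodes.
tree = Node(Node(None, Node()), Node())
internal, external = count_nodes(tree)
print(internal, external)  # 4 5
```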

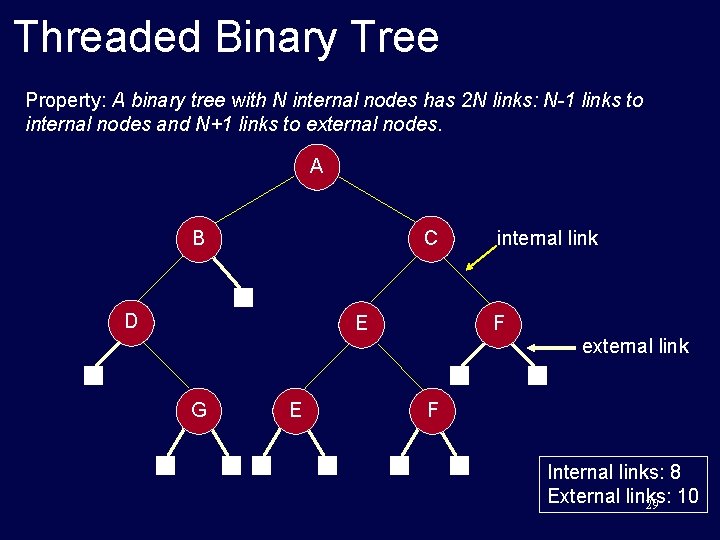

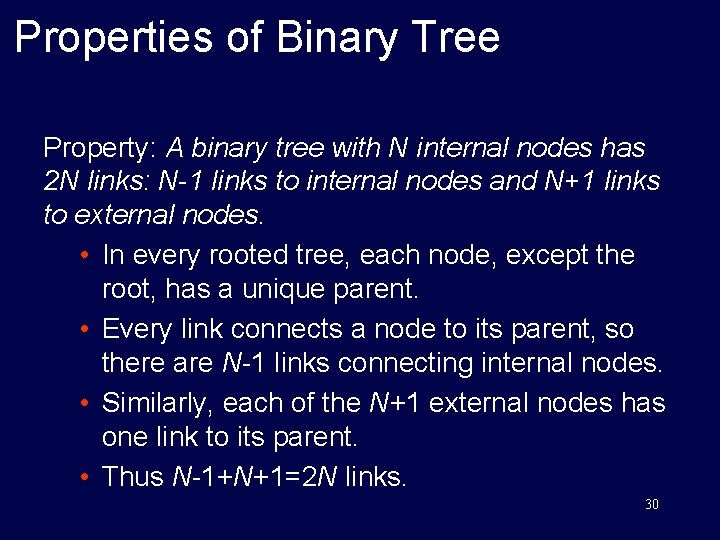

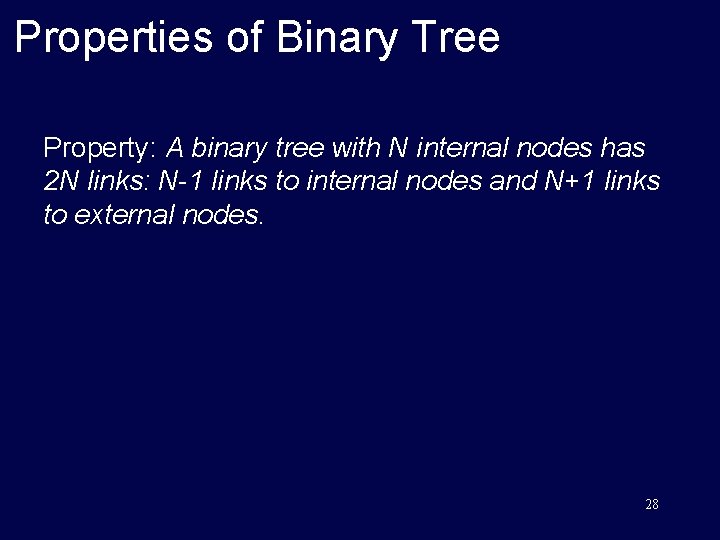

Properties of Binary Tree

Property: A binary tree with N internal nodes has 2N links: N-1 links to internal nodes and N+1 links to external nodes.

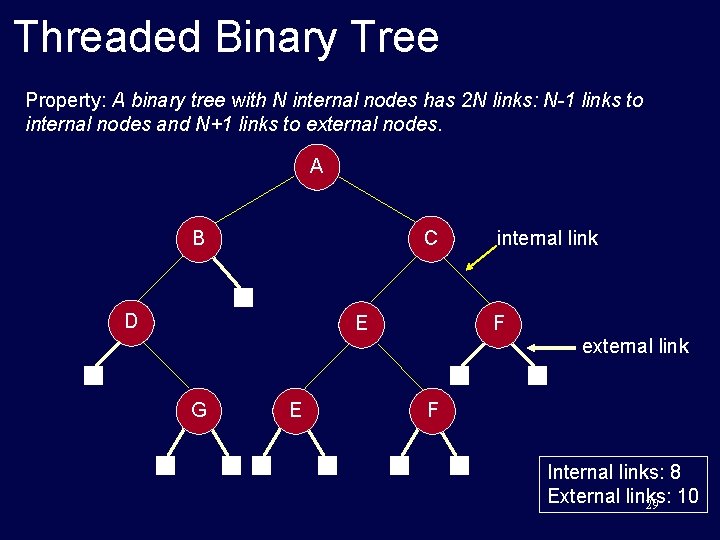

Threaded Binary Tree

Property: A binary tree with N internal nodes has 2N links: N-1 links to internal nodes and N+1 links to external nodes.

[Tree diagram: the example tree with its internal and external links marked. Internal links: 8; external links: 10.]

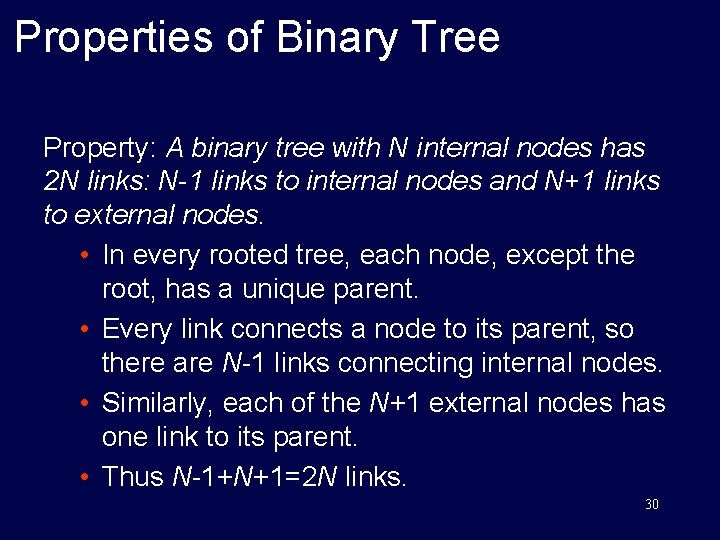

Properties of Binary Tree

Property: A binary tree with N internal nodes has 2N links: N-1 links to internal nodes and N+1 links to external nodes.

- In every rooted tree, each node, except the root, has a unique parent.
- Every link connects a node to its parent, so there are N-1 links connecting internal nodes: one for each internal node other than the root.
- Similarly, each of the N+1 external nodes has one link to its parent.
- Thus there are (N-1) + (N+1) = 2N links.
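As a check, the counts can be worked through for the example tree shown earlier, which has N = 9 internal nodes:

```latex
\begin{align*}
\text{internal links} &= N - 1 = 9 - 1 = 8,\\
\text{external links} &= N + 1 = 9 + 1 = 10,\\
\text{total links} &= (N - 1) + (N + 1) = 2N = 18.
\end{align*}
```

This agrees with the 8 internal links and 10 external links counted on that tree.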