FlowRadar: A Better NetFlow for Data Centers

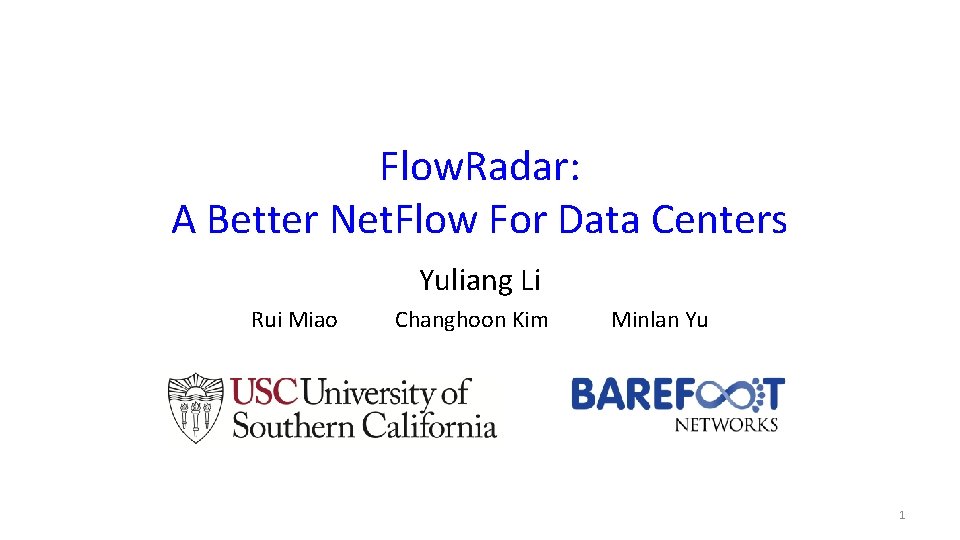

FlowRadar: A Better NetFlow for Data Centers
Yuliang Li, Rui Miao, Changhoon Kim, Minlan Yu

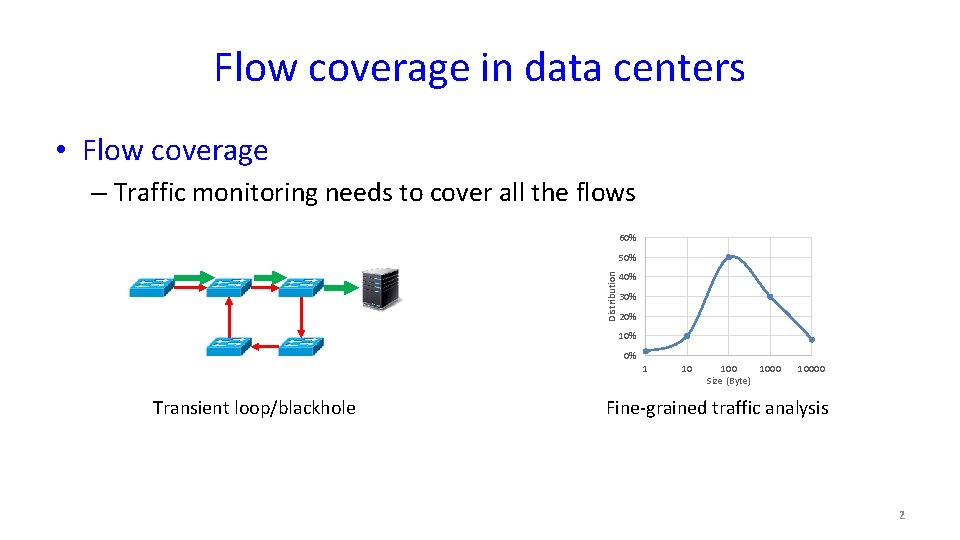

Flow coverage in data centers
• Flow coverage
  – Traffic monitoring needs to cover all the flows
• Use cases: transient loop/blackhole, fine-grained traffic analysis
[Figure: distribution (0% to 60%) vs. size (bytes, 1 to 10000)]

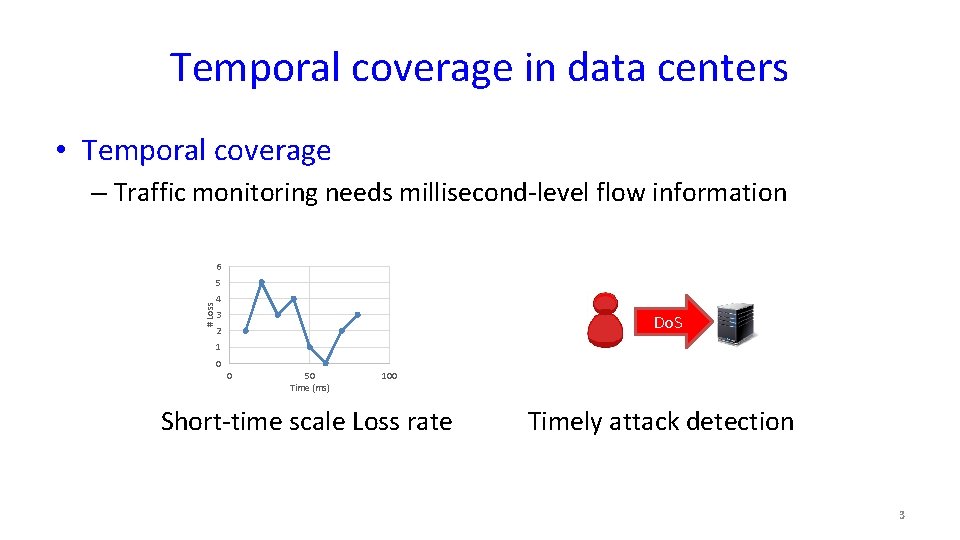

Temporal coverage in data centers
• Temporal coverage
  – Traffic monitoring needs millisecond-level flow information
• Use cases: short time-scale loss rate, timely attack detection (e.g. DoS)
[Figure: number of losses (0 to 6) vs. time (0 to 100 ms)]

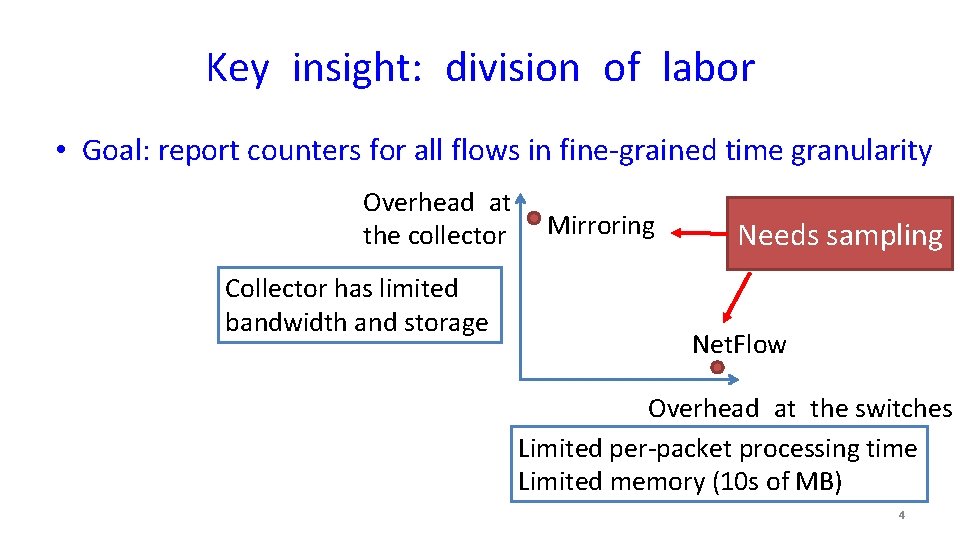

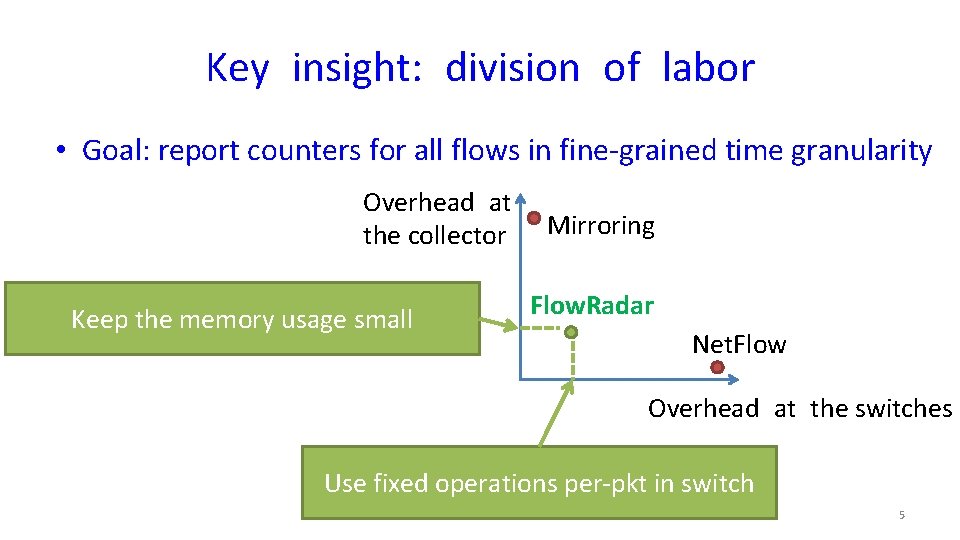

Key insight: division of labor
• Goal: report counters for all flows in fine-grained time granularity
• Overhead at the collector (mirroring): the collector has limited bandwidth and storage
• Overhead at the switches (NetFlow): limited per-packet processing time and limited memory (10s of MB)
• Result: needs sampling

Key insight: division of labor
• Goal: report counters for all flows in fine-grained time granularity
• FlowRadar sits between mirroring (overhead at the collector) and NetFlow (overhead at the switches)
  – Keep the memory usage small
  – Use fixed operations per packet in the switch

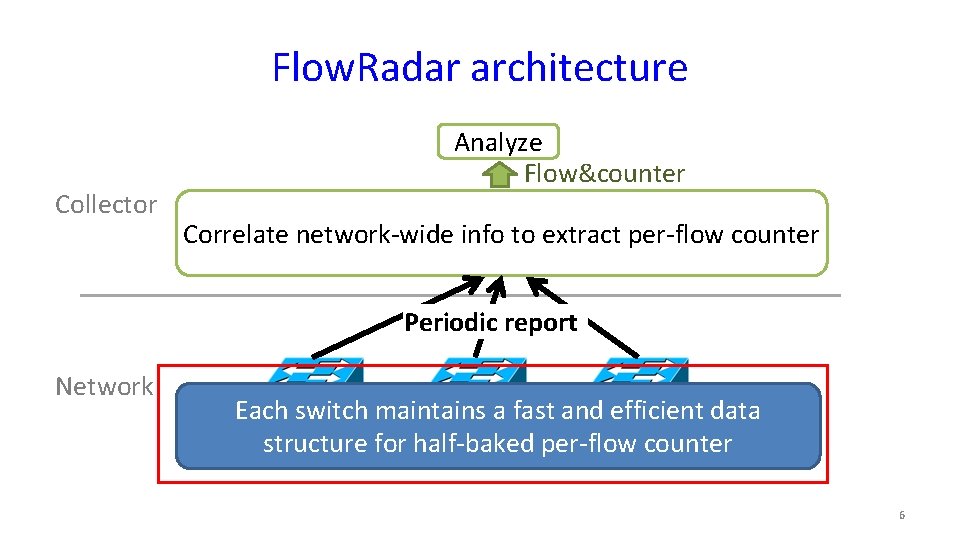

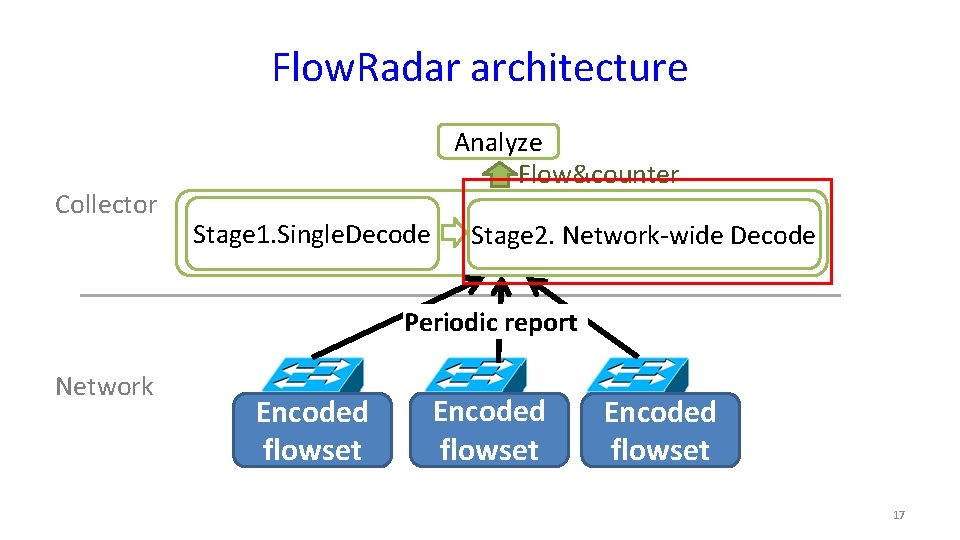

FlowRadar architecture
• Network: each switch maintains a fast and efficient data structure for half-baked per-flow counters
• Periodic report: switches send this data structure to the collector
• Collector: correlates network-wide info to extract per-flow counters (flow & counter), which feed the analysis

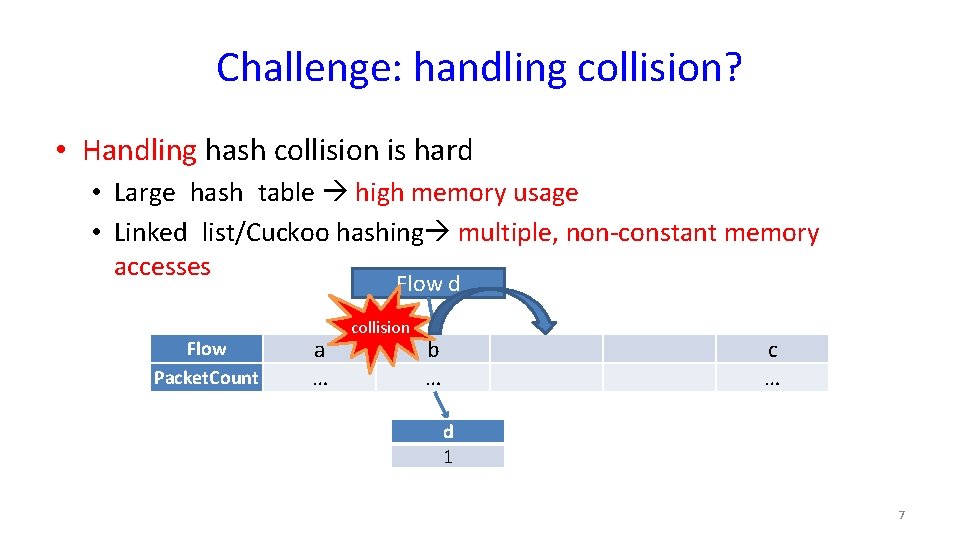

Challenge: handling collision?
• Handling hash collisions is hard
• Large hash table → high memory usage
• Linked list/Cuckoo hashing → multiple, non-constant memory accesses
[Figure: hash table with Flow and PacketCount columns holding flows a, b, c; the first packet of new flow d collides with an existing entry]

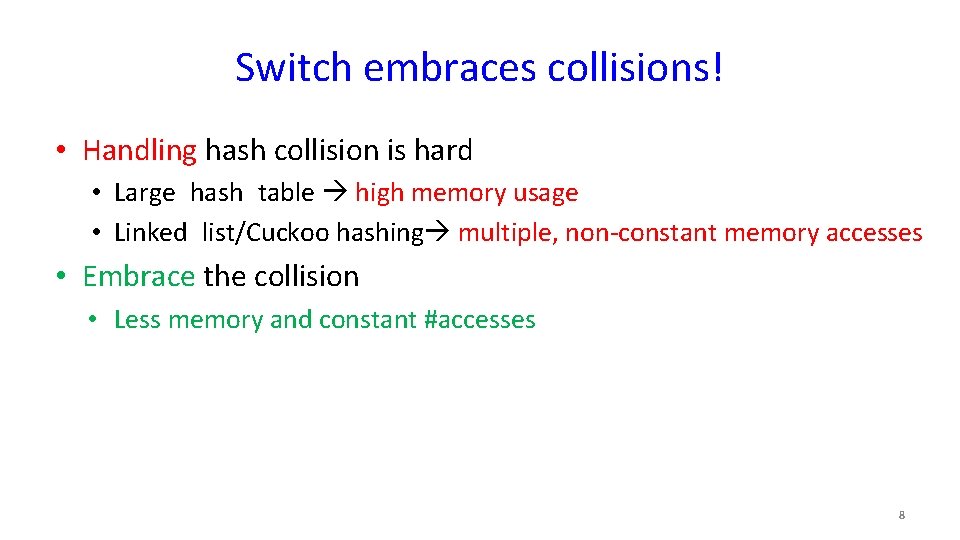

Switch embraces collisions!
• Handling hash collisions is hard
• Large hash table → high memory usage
• Linked list/Cuckoo hashing → multiple, non-constant memory accesses
• Embrace the collision
• Less memory and a constant number of accesses

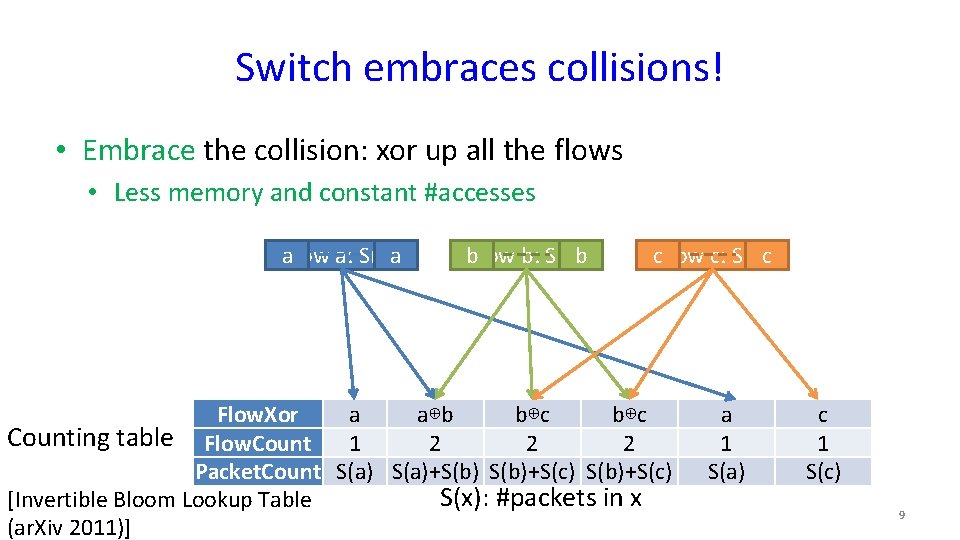

Switch embraces collisions!
• Embrace the collision: XOR up all the flows
• Less memory and a constant number of accesses
[Figure: flows a, b, and c are each hashed into several cells of the counting table. Each cell keeps FlowXor (the XOR of the flows mapped to it, e.g. a, a⊕b, b⊕c), FlowCount (the number of flows in the cell), and PacketCount (the sum of S(x) over the flows in the cell), where S(x) is the number of packets in flow x.]
[Invertible Bloom Lookup Table (arXiv 2011)]

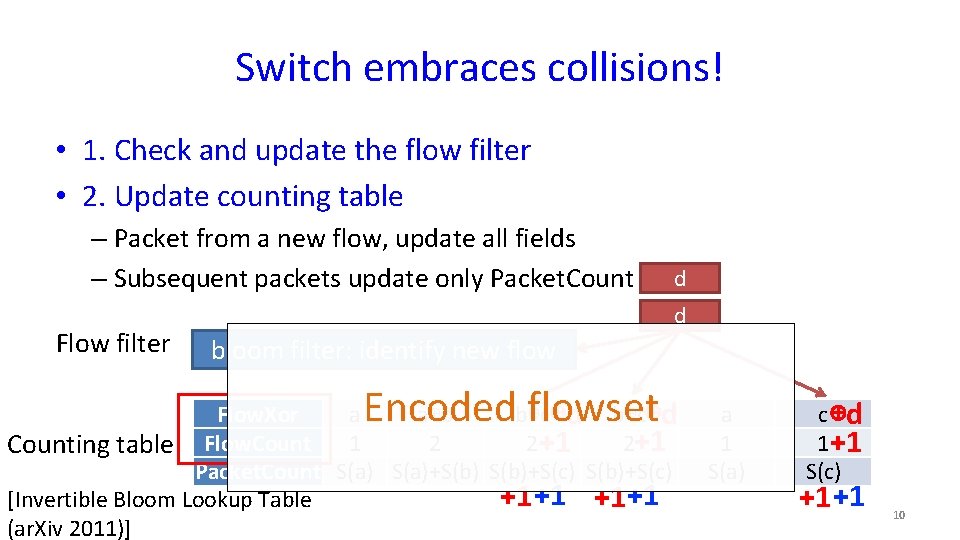

Switch embraces collisions!
• 1. Check and update the flow filter
• 2. Update the counting table
  – Packet from a new flow: update all fields
  – Subsequent packets: update only PacketCount
[Figure: the encoded flowset consists of the flow filter (a Bloom filter that identifies new flows) and the counting table (FlowXor, FlowCount, PacketCount). The first packet of new flow d is XORed into FlowXor and adds 1 to FlowCount and PacketCount in each of its cells, e.g. b⊕c becomes b⊕c⊕d.]
[Invertible Bloom Lookup Table (arXiv 2011)]
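The two update steps on this slide can be sketched in Python as follows. This is an illustrative sketch, not the P4 data-plane implementation: the class name `EncodedFlowset`, the helper `cell_indexes`, the use of SHA-256, three hash functions, and the table sizes are all assumptions made for the example.

```python
import hashlib

NUM_HASHES = 3  # assumed number of hash functions (not stated on the slide)


def cell_indexes(flow_id, size, salt):
    """Hypothetical hash helper: map a flow to up to NUM_HASHES distinct indexes."""
    idx = set()
    for i in range(NUM_HASHES):
        digest = hashlib.sha256(f"{salt}:{i}:{flow_id}".encode()).digest()
        idx.add(int.from_bytes(digest[:8], "big") % size)
    return sorted(idx)


class EncodedFlowset:
    """Flow filter (Bloom filter) plus counting table, as named on the slide."""

    def __init__(self, filter_bits=1024, table_cells=16):
        self.flow_filter = [False] * filter_bits
        self.flow_xor = [0] * table_cells
        self.flow_count = [0] * table_cells
        self.packet_count = [0] * table_cells

    def add_packet(self, flow_id):
        # Step 1: check and update the flow filter.
        bits = cell_indexes(flow_id, len(self.flow_filter), "filter")
        is_new = not all(self.flow_filter[b] for b in bits)
        for b in bits:
            self.flow_filter[b] = True
        # Step 2: update the counting table.
        for c in cell_indexes(flow_id, len(self.flow_xor), "table"):
            if is_new:  # first packet of a flow: update all fields
                self.flow_xor[c] ^= flow_id
                self.flow_count[c] += 1
            self.packet_count[c] += 1  # every packet updates PacketCount


flowset = EncodedFlowset()
for _ in range(3):
    flowset.add_packet(0xA)  # three packets of one flow
```

Every packet touches a fixed number of filter bits and table cells, which is the "constant number of accesses" property the slide relies on.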

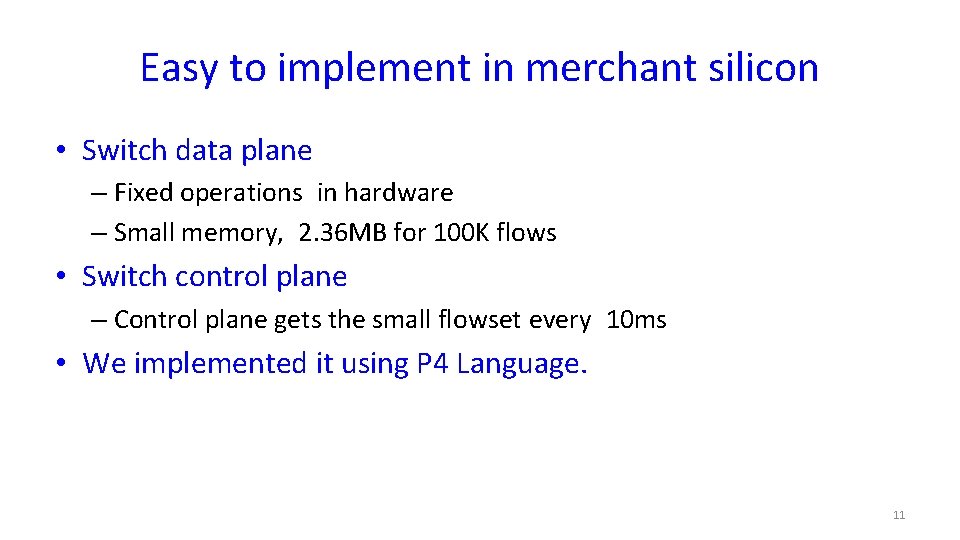

Easy to implement in merchant silicon
• Switch data plane
  – Fixed operations in hardware
  – Small memory: 2.36 MB for 100K flows
• Switch control plane
  – Control plane gets the small flowset every 10 ms
• We implemented it using the P4 language.

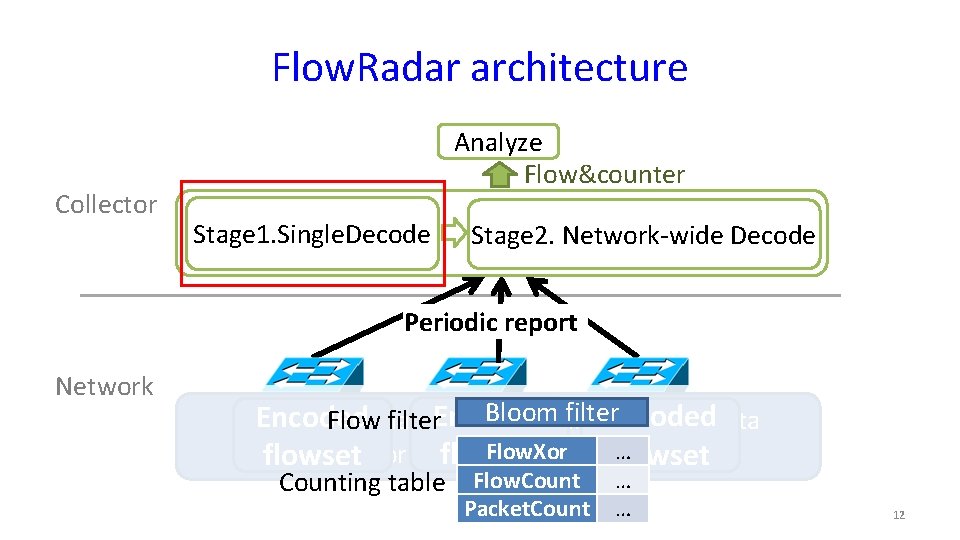

FlowRadar architecture
• Network: each switch maintains a fast and efficient data structure for half-baked per-flow counters
  – Encoded flowset = flow filter (Bloom filter) + counting table (FlowXor, FlowCount, PacketCount)
• Periodic report: switches send their encoded flowsets to the collector
• Collector: correlates network-wide info to extract per-flow counters (flow & counter), which feed the analysis
  – Stage 1. SingleDecode
  – Stage 2. Network-wide Decode

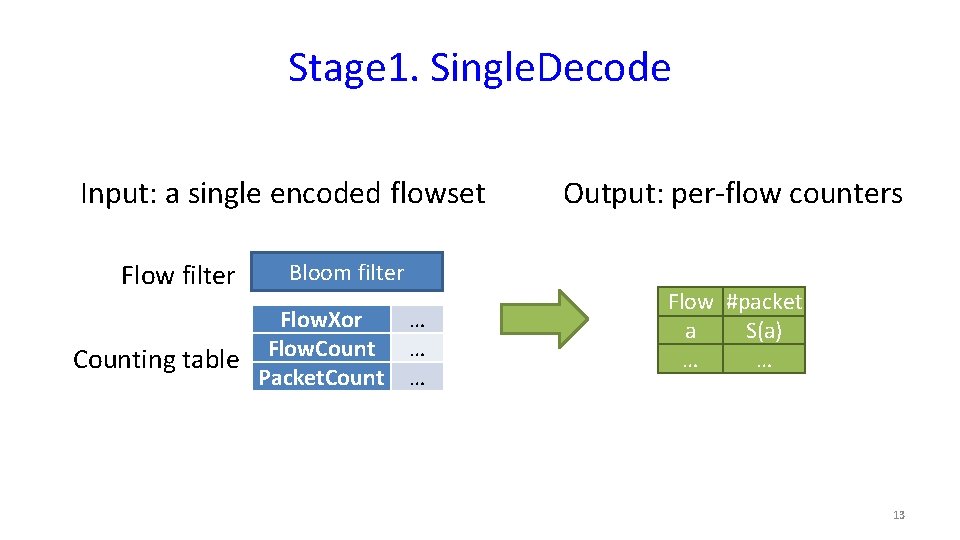

Stage 1. SingleDecode
• Input: a single encoded flowset (flow filter + counting table with FlowXor, FlowCount, PacketCount)
• Output: per-flow counters (flow, #packets), e.g. flow a with S(a) packets

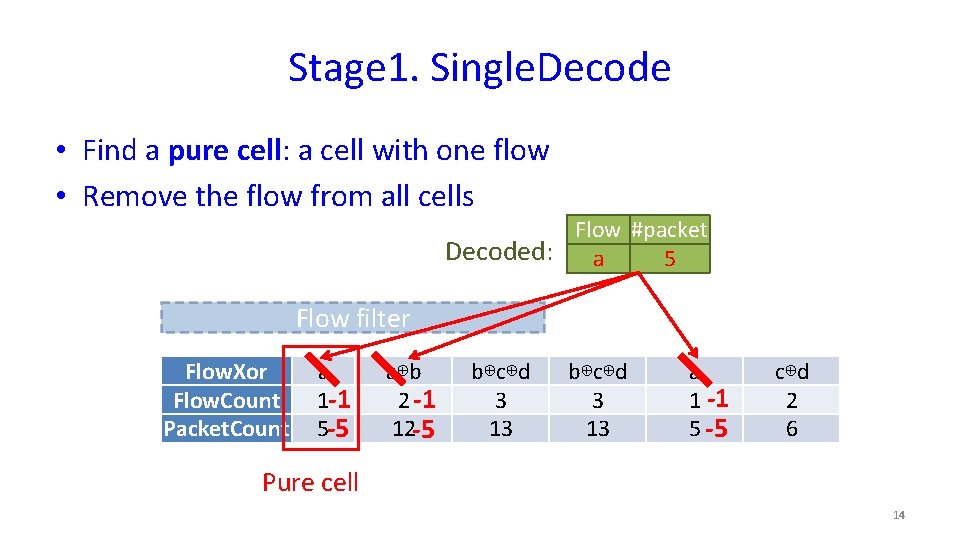

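Stage 1. SingleDecode
• Find a pure cell: a cell with one flow
• Remove the flow from all cells

| Cell | FlowXor | FlowCount | PacketCount |
|---|---|---|---|
| 1 | a (pure cell) | 1 | 5 |
| 2 | a⊕b | 2 | 12 |
| 3 | b⊕c⊕d | 3 | 13 |
| 4 | a (pure cell) | 1 | 5 |
| 5 | c⊕d | 2 | 6 |

Decoded: flow a with 5 packets. Removing a subtracts 1 from FlowCount and 5 from PacketCount in every cell that contains a.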

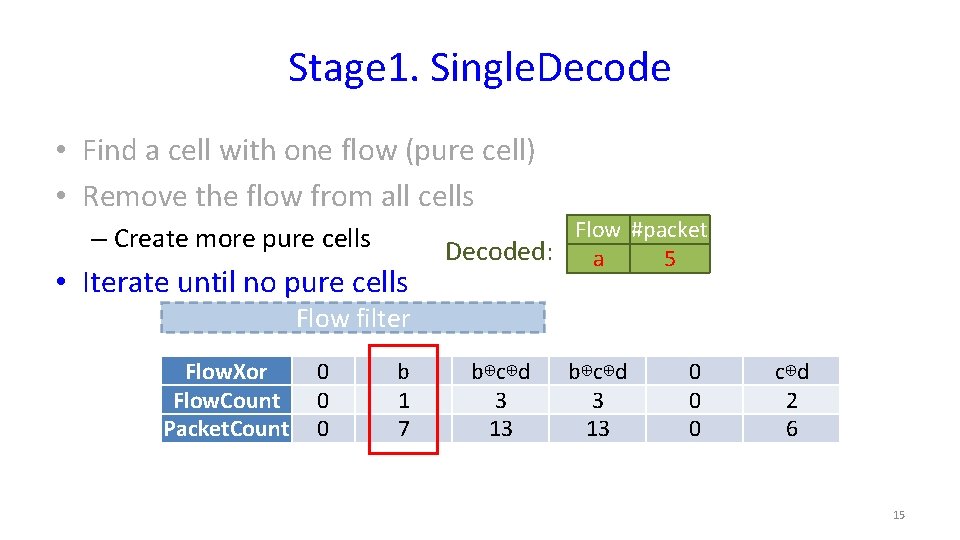

Stage 1. SingleDecode
• Find a cell with one flow (pure cell)
• Remove the flow from all cells
  – Create more pure cells
• Iterate until no pure cells remain

| Cell | FlowXor | FlowCount | PacketCount |
|---|---|---|---|
| 1 | 0 | 0 | 0 |
| 2 | b (new pure cell) | 1 | 7 |
| 3 | b⊕c⊕d | 3 | 13 |
| 4 | 0 | 0 | 0 |
| 5 | c⊕d | 2 | 6 |

Decoded so far: flow a with 5 packets.

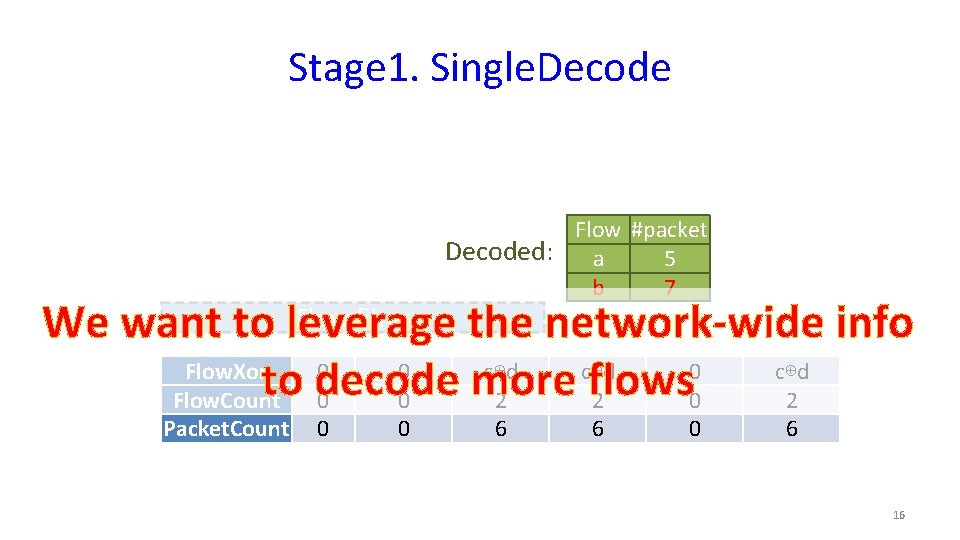

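Stage 1. SingleDecode

| Cell | FlowXor | FlowCount | PacketCount |
|---|---|---|---|
| 1 | 0 | 0 | 0 |
| 2 | 0 | 0 | 0 |
| 3 | c⊕d | 2 | 6 |
| 4 | 0 | 0 | 0 |
| 5 | c⊕d | 2 | 6 |

Decoded: flow a with 5 packets, flow b with 7 packets. No pure cell remains, so c and d cannot be decoded from this flowset alone.
• We want to leverage the network-wide info to decode more flows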
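The peeling procedure walked through on the SingleDecode slides can be sketched in Python. The sketch assumes integer flow IDs (a=1, b=2, c=4, d=8) and a hypothetical `cells_of` lookup that stands in for recomputing the switch's hash functions; the table values are the ones from the slides. It tests purity with FlowCount == 1 only and omits the flow-filter check.

```python
def single_decode(flow_xor, flow_count, packet_count, cells_of):
    """Peel pure cells (FlowCount == 1) until none remain.

    cells_of maps a flow ID to the cells it hashes to; in a real decoder
    this would be recomputed from the switch's hash functions.
    """
    flow_xor = list(flow_xor)
    flow_count = list(flow_count)
    packet_count = list(packet_count)
    decoded = {}
    progress = True
    while progress:
        progress = False
        for i in range(len(flow_count)):
            if flow_count[i] != 1:
                continue  # empty cell or a cell holding several flows
            flow, pkts = flow_xor[i], packet_count[i]
            decoded[flow] = pkts
            for c in cells_of[flow]:  # remove the flow from all its cells
                flow_xor[c] ^= flow
                flow_count[c] -= 1
                packet_count[c] -= pkts
            progress = True
    return decoded, (flow_xor, flow_count, packet_count)


A, B, C, D = 1, 2, 4, 8  # illustrative flow IDs
cells_of = {A: [0, 1, 3], B: [1, 2], C: [2, 4], D: [2, 4]}
decoded, remaining = single_decode(
    flow_xor=[A, A ^ B, B ^ C ^ D, A, C ^ D],
    flow_count=[1, 2, 3, 1, 2],
    packet_count=[5, 12, 13, 5, 6],
    cells_of=cells_of,
)
print(decoded)    # {1: 5, 2: 7}: a has 5 packets, b has 7
print(remaining)  # cells 3 and 5 still hold c XOR d with 6 packets
```

Flows c and d share exactly the same cells here, so no pure cell ever appears for them; that is the situation network-wide decoding is meant to resolve.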

FlowRadar architecture
• Network: switches send periodic reports of their encoded flowsets
• Collector: Stage 1 SingleDecode, then Stage 2 Network-wide Decode, produce the per-flow counters (flow & counter) used for analysis

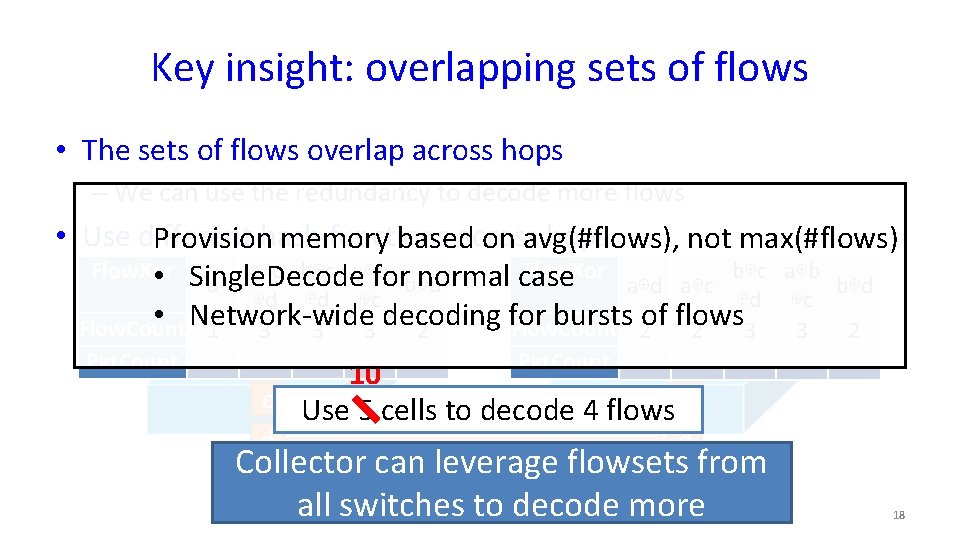

Key insight: overlapping sets of flows
• The sets of flows overlap across hops
  – We can use the redundancy to decode more flows
• Use different hash functions across hops
• Provision memory based on avg(#flows), not max(#flows)
  – SingleDecode for the normal case
  – Network-wide decoding for bursts of flows
• Collector can leverage flowsets from all switches to decode more
[Figure: two hops carry the same flows a, b, c, d but hash them into different cells of their counting tables (FlowXor, FlowCount, PktCount); together they use 5 cells to decode 4 flows]

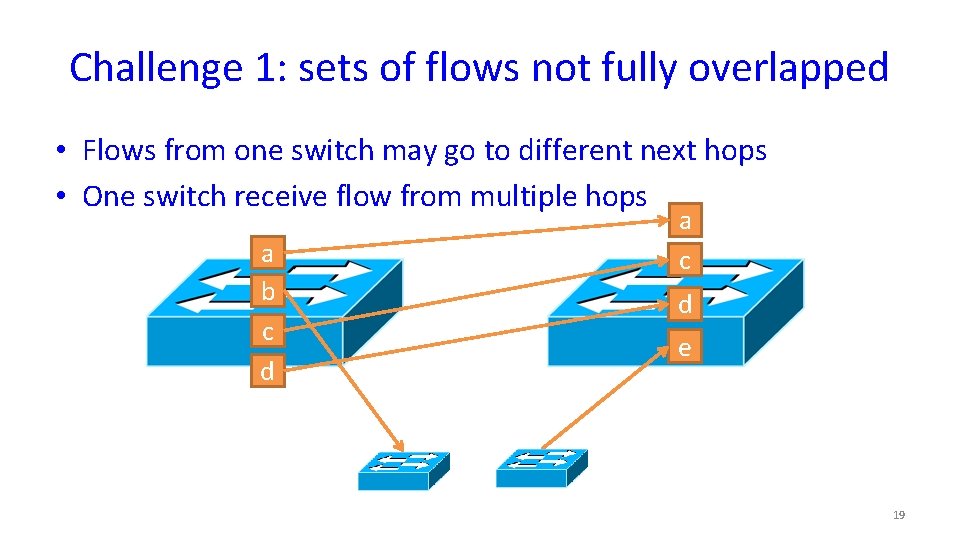

Challenge 1: sets of flows not fully overlapped
• Flows from one switch may go to different next hops
• One switch may receive flows from multiple previous hops
[Figure: one switch carries flows a, b, c, d while a neighboring switch carries flows a, c, d, e]

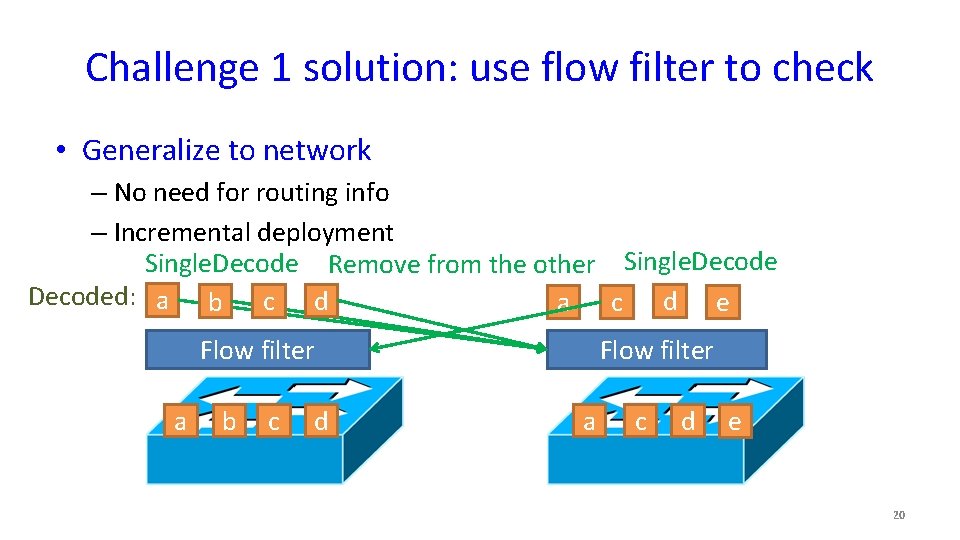

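Challenge 1 solution: use flow filter to check
• After SingleDecode at one switch, check each decoded flow against the other switch's flow filter and remove it from that switch's flowset if present
• Generalize to the network
  – No need for routing info
  – Incremental deployment
[Figure: a switch with flows a, b, c, d (flow filter: a, b, c, d) and a switch with flows a, c, d, e (flow filter: a, c, d, e); flows decoded at one switch are removed from the other]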
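A minimal Python sketch of this cross-switch step follows. It is an illustration under several assumptions: a Python `set` stands in for each Bloom flow filter (so there are no false positives), flow IDs are small integers, and the per-switch cell assignments `HOP1` and `HOP2` are made up so that neither switch can decode everything alone. Only FlowXor and FlowCount are modeled, since packet counters may differ across hops.

```python
def make_switch(cells_of, num_cells):
    """Build a flow-only encoded flowset from a hypothetical flow -> cells map."""
    sw = {"filter": set(cells_of), "cells_of": cells_of, "decoded": set(),
          "xor": [0] * num_cells, "count": [0] * num_cells}
    for flow, cells in cells_of.items():
        for c in cells:
            sw["xor"][c] ^= flow
            sw["count"][c] += 1
    return sw


def remove(sw, flow):
    """Remove a known flow from every cell it occupies at this switch."""
    for c in sw["cells_of"][flow]:
        sw["xor"][c] ^= flow
        sw["count"][c] -= 1
    sw["decoded"].add(flow)


def peel(sw):
    """SingleDecode on flows only: pop pure cells until none remain."""
    found, progress = set(), True
    while progress:
        progress = False
        for i in range(len(sw["count"])):
            if sw["count"][i] == 1:
                flow = sw["xor"][i]
                remove(sw, flow)
                found.add(flow)
                progress = True
    return found


def flow_decode(switches):
    """Alternate SingleDecode and cross-switch removal until nothing changes."""
    progress = True
    while progress:
        progress = False
        for sw in switches:
            for flow in peel(sw):
                progress = True
                for other in switches:
                    # The flow filter tells us whether the other switch saw it.
                    if (other is not sw and flow in other["filter"]
                            and flow not in other["decoded"]):
                        remove(other, flow)
    return [sorted(sw["decoded"]) for sw in switches]


A, B, C, D, E = 1, 2, 4, 8, 16  # illustrative flow IDs
HOP1 = {A: [0, 1], B: [0, 2], C: [1, 2], D: [3, 0]}  # switch with a, b, c, d
HOP2 = {A: [3, 0], C: [0, 1], D: [0, 2], E: [1, 2]}  # switch with a, c, d, e

alone = flow_decode([make_switch(HOP1, 4)])
together = flow_decode([make_switch(HOP1, 4), make_switch(HOP2, 4)])
print(alone)     # [[8]]: only d is decodable from the first switch alone
print(together)  # [[1, 2, 4, 8], [1, 4, 8, 16]]: every flow at both switches
```

No routing information is used: membership in the other switch's flow filter alone decides whether a decoded flow is removed there.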

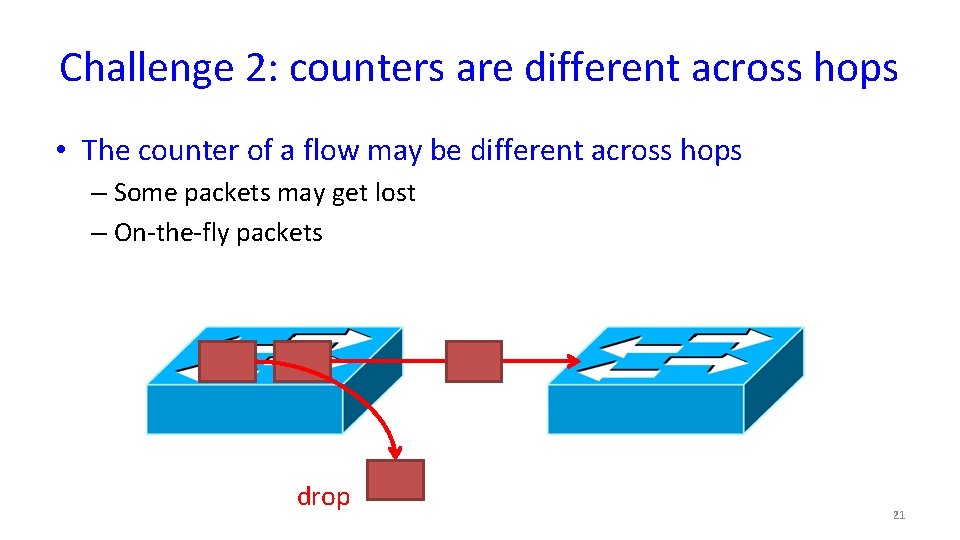

Challenge 2: counters are different across hops
• The counter of a flow may be different across hops
  – Some packets may get lost (dropped)
  – Some packets are still on the fly

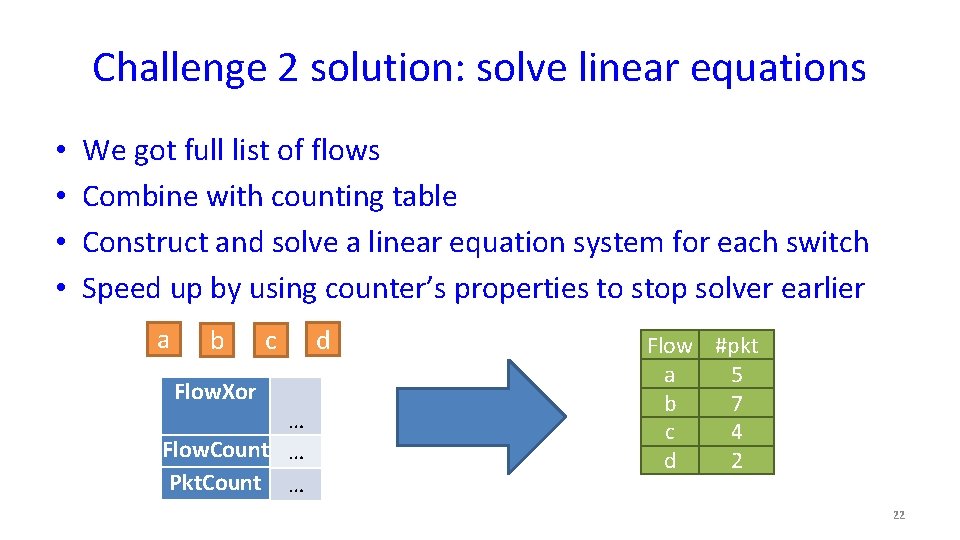

Challenge 2 solution: solve linear equations
• We have the full list of flows
• Combine it with the counting table
• Construct and solve a linear equation system for each switch
• Speed up by using the counters' properties to stop the solver earlier
[Figure: the decoded flow list (a, b, c, d) and the counting table (FlowXor, FlowCount, PktCount) yield per-flow packet counts: a = 5, b = 7, c = 4, d = 2]
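To make the equation system concrete, the sketch below builds one equation per cell (the counters of the flows in a cell sum to that cell's PacketCount) and solves it exactly. It swaps in plain Gauss-Jordan elimination over fractions rather than the sped-up solver the slide mentions, and the cell assignment `cells_of` and the PacketCount values are hypothetical, chosen to reproduce the counts a = 5, b = 7, c = 4, d = 2.

```python
from fractions import Fraction


def solve_counters(flows, cells_of, packet_count):
    """Solve for per-flow packet counts at one switch.

    One equation per cell: the counters of the flows hashed to that cell
    sum to the cell's PacketCount.
    """
    n = len(flows)
    rows = []
    for cell, total in enumerate(packet_count):
        coeffs = [Fraction(cells_of[f].count(cell)) for f in flows]
        rows.append(coeffs + [Fraction(total)])
    pivot_row = 0
    for col in range(n):
        pivot = next((r for r in range(pivot_row, len(rows))
                      if rows[r][col] != 0), None)
        if pivot is None:
            raise ValueError("not enough independent cells to solve")
        rows[pivot_row], rows[pivot] = rows[pivot], rows[pivot_row]
        lead = rows[pivot_row][col]
        rows[pivot_row] = [v / lead for v in rows[pivot_row]]
        for r in range(len(rows)):
            if r != pivot_row and rows[r][col] != 0:
                factor = rows[r][col]
                rows[r] = [v - factor * p
                           for v, p in zip(rows[r], rows[pivot_row])]
        pivot_row += 1
    # After elimination, row i holds the value of the i-th flow's counter.
    return {f: int(rows[i][n]) for i, f in enumerate(flows)}


# Hypothetical cell assignment for a switch carrying flows a, b, c, d.
cells_of = {"a": [0, 1], "b": [0, 2], "c": [1, 2], "d": [3, 0]}
# PacketCount per cell: a+b+d, a+c, b+c, d
packet_count = [14, 9, 11, 2]
counters = solve_counters(["a", "b", "c", "d"], cells_of, packet_count)
print(counters)  # {'a': 5, 'b': 7, 'c': 4, 'd': 2}
```

Because the system is built per switch, each switch's counters are recovered independently, which is what allows them to differ across hops.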

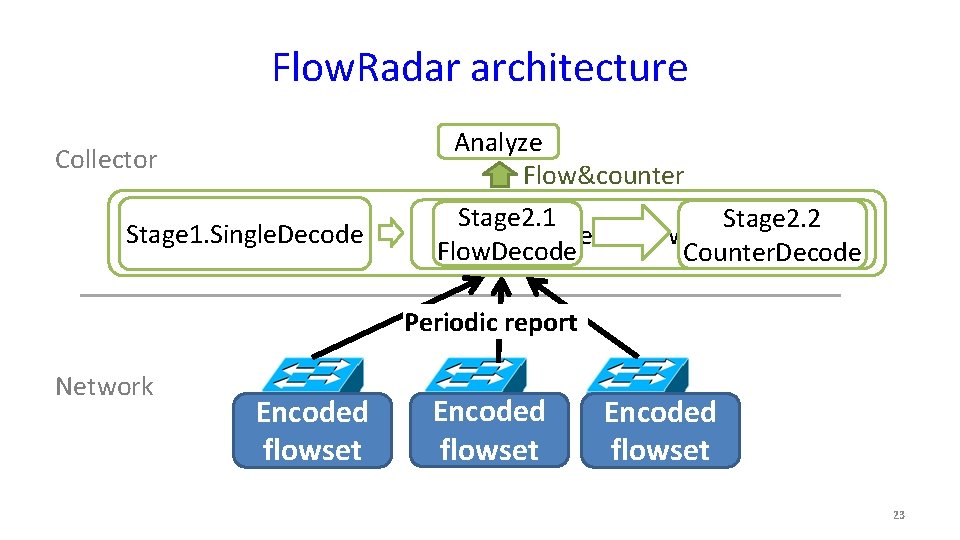

FlowRadar architecture
• Network: switches send periodic reports of their encoded flowsets
• Collector
  – Stage 1. SingleDecode
  – Stage 2. Network-wide Decode
    – Stage 2.1 FlowDecode
    – Stage 2.2 CounterDecode
  – Analyze the resulting per-flow counters (flow & counter)

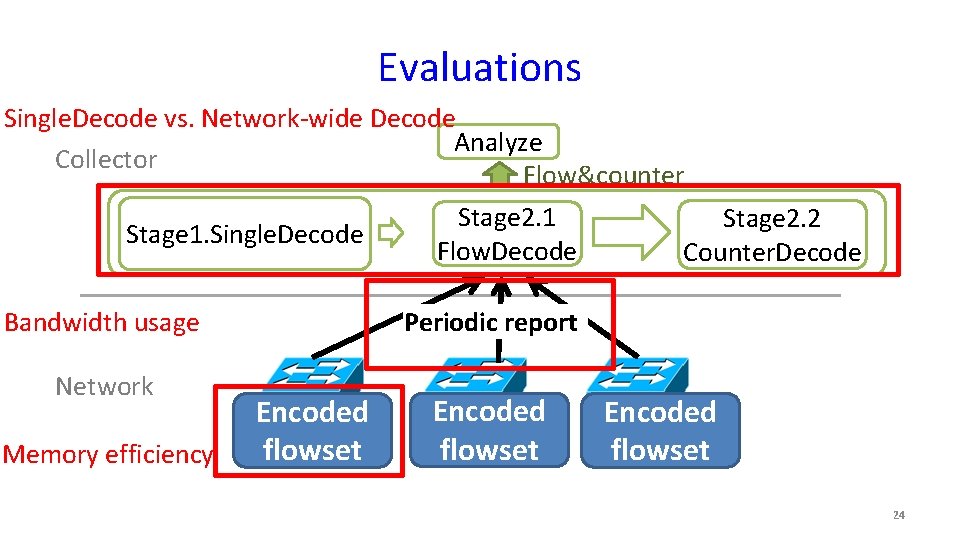

Evaluations
• SingleDecode vs. Network-wide Decode (Stage 2.1 FlowDecode, Stage 2.2 CounterDecode)
• Bandwidth usage
• Memory efficiency

Evaluation
• Simulation of a k=8 FatTree (80 switches, 128 hosts) in ns-3
• Configure the memory based on avg(#flows)
  – When a burst of flows happens, use network-wide decode
• The worst case is that all switches are pushed to max(#flows)
  – Traffic: each switch has the same number of flows, and thus the same memory
• Each switch reports the flowset every 10 ms.
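The switch and host counts follow from the standard k-ary fat-tree construction, which can be checked with a few lines of Python. The function name `fat_tree_size` is only for this illustration.

```python
def fat_tree_size(k):
    """Return (switches, hosts) for a standard k-ary fat tree (k even)."""
    half = k // 2
    core = half * half       # (k/2)^2 core switches
    aggregation = k * half   # k pods, k/2 aggregation switches each
    edge = k * half          # k pods, k/2 edge switches each
    hosts = edge * half      # k/2 hosts per edge switch
    return core + aggregation + edge, hosts


print(fat_tree_size(8))  # (80, 128)
```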

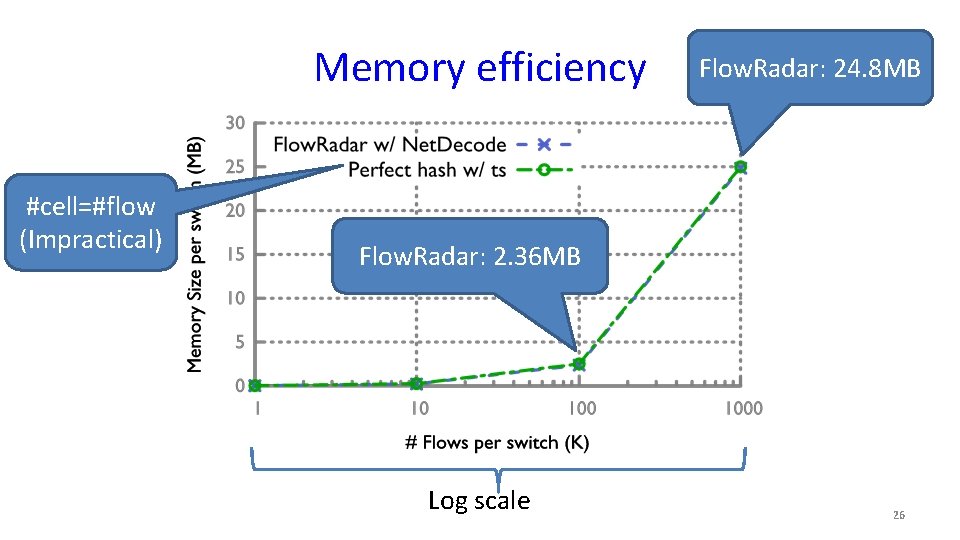

Memory efficiency
[Figure: memory efficiency plot, log scale; baseline #cell = #flow (impractical); annotations: FlowRadar: 24.8 MB and FlowRadar: 2.36 MB]

Other results
• Bandwidth usage
  – Only 0.52%, based on the topology and traffic of Facebook data centers (SIGCOMM'15)
• NetDecode improvement over SingleDecode
  – SingleDecode: 100K flows, which takes 10 ms
  – NetDecode: 26.8% more flows with the same memory, which takes around 3 sec

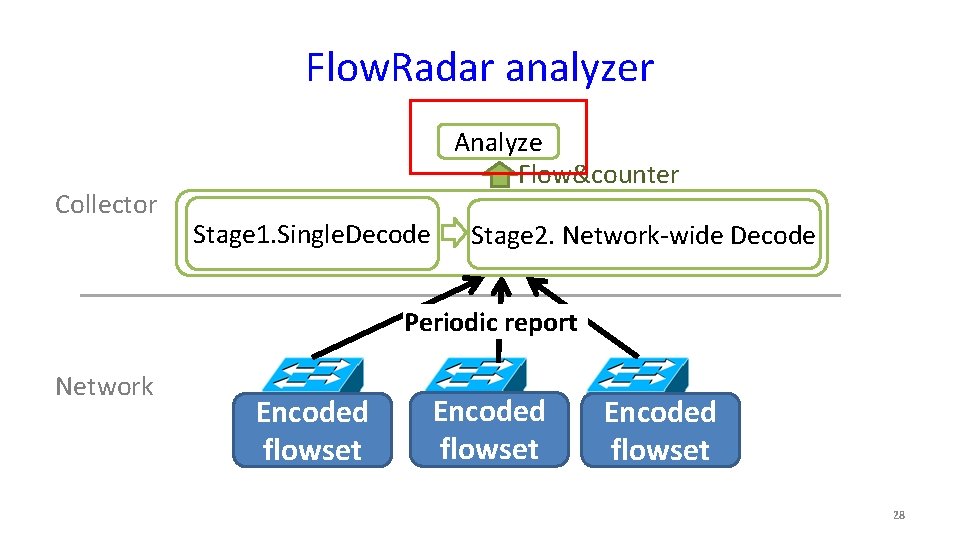

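FlowRadar analyzer
• Network: switches send periodic reports of their encoded flowsets
• Collector: Stage 1 SingleDecode and Stage 2 Network-wide Decode produce per-flow counters (flow & counter)
• Analyze: applications run on the decoded flows and counters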

Analysis applications
• Flow coverage
  – Transient loop/blackhole
  – Error in match-action table
  – Fine-grained traffic analysis
• Temporal coverage
  – Short time-scale per-flow loss rate
  – ECMP load imbalance
  – Timely attack detection

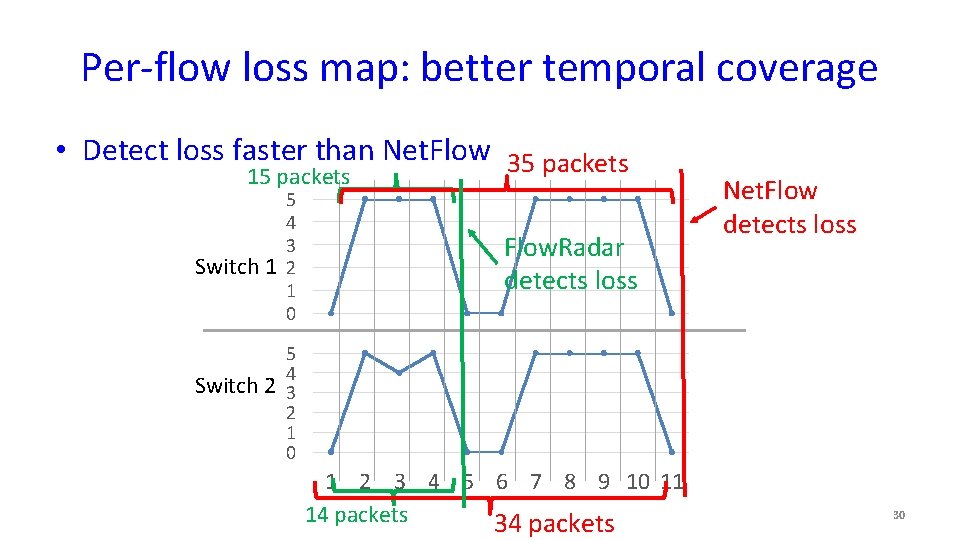

Per-flow loss map: better temporal coverage
• Detect loss faster than NetFlow
[Figure: packets of a flow traverse Switch 1 and then Switch 2; a timeline (0 to 11) marks when FlowRadar detects the loss and when NetFlow detects it; annotations: 35 packets, 15 packets, 14 packets]
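One way to read the loss map is as a per-interval difference between the decoded counters of two consecutive switches. The Python sketch below illustrates that idea only; the function name `per_flow_loss` and the counter values are hypothetical, and it ignores packets that are still in flight between the two switches when the interval ends.

```python
def per_flow_loss(upstream, downstream):
    """Return {flow: packets missing downstream} for one reporting interval."""
    loss = {}
    for flow, seen_upstream in upstream.items():
        missing = seen_upstream - downstream.get(flow, 0)
        if missing > 0:
            loss[flow] = missing
    return loss


# Hypothetical decoded per-flow counters for one 10 ms interval.
switch1 = {"a": 40, "b": 12}
switch2 = {"a": 37, "b": 12}
print(per_flow_loss(switch1, switch2))  # {'a': 3}
```

Because counters are reported every 10 ms, such a comparison can be made per interval instead of waiting for a flow record to be exported.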

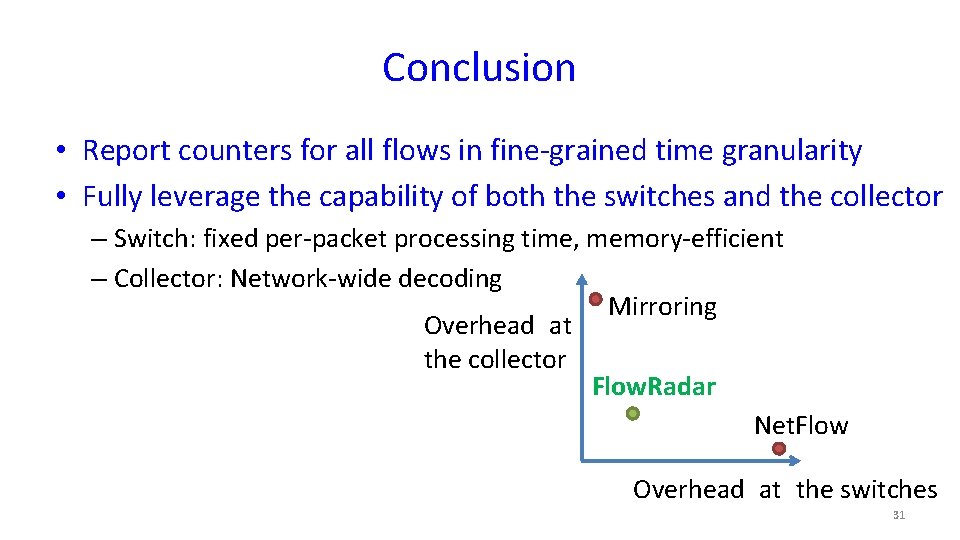

Conclusion
• Report counters for all flows in fine-grained time granularity
• Fully leverage the capability of both the switches and the collector
  – Switch: fixed per-packet processing time, memory-efficient
  – Collector: network-wide decoding
[Figure: FlowRadar positioned between mirroring (overhead at the collector) and NetFlow (overhead at the switches)]

- Slides: 31