
EECS Electrical Engineering and Computer Sciences, BERKELEY PAR LAB (PARALLEL COMPUTING LABORATORY)

Efficient Data Race Detection for Distributed Memory Parallel Programs

Chang-Seo Park and Koushik Sen, University of California, Berkeley
Paul Hargrove and Costin Iancu, Lawrence Berkeley Laboratory

CS 267, Spring 2012 (3/8)
Originally presented at Supercomputing 2011 (Seattle, WA)

Current State of Parallel Programming
§ Parallelism everywhere!
  § Top supercomputer has 500K+ cores
  § Quad-core is standard on desktops/laptops
  § Dual-core smartphones
§ Parallelism and concurrency make programming harder
  § Scheduling non-determinism may cause subtle bugs
§ But testing and correctness tools see limited use
  § We like hero programmers
  § Hero programmers can find bugs (in sequential code)
  § Tools are hard to find and use

Outline
§ Introduction
§ Example Bug and Motivation
§ Efficient Data Race Detection with Active Testing
  § Prediction phase
  § Confirmation phase
§ HOWTO: Primer on using UPC-Thrille
§ Conclusion
§ Q&A and Project Ideas

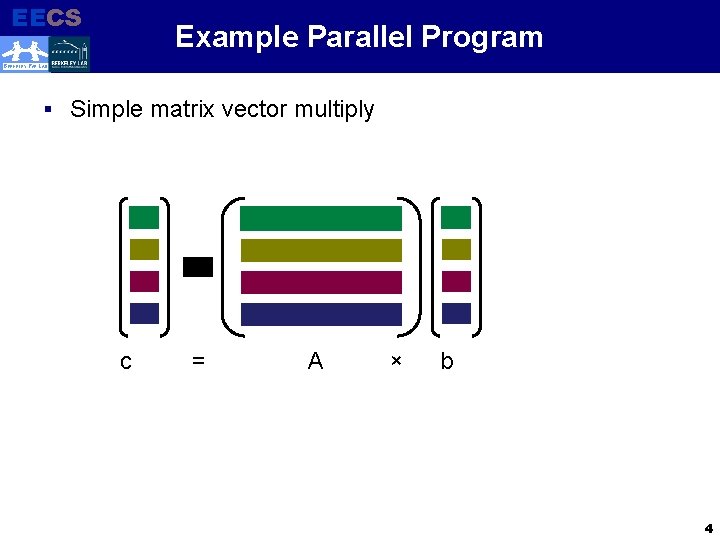

Example Parallel Program
§ Simple matrix-vector multiply c = A × b

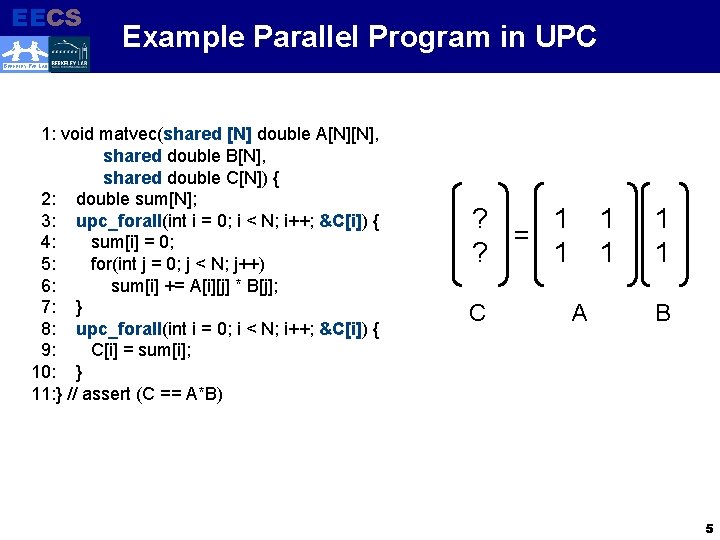

Example Parallel Program in UPC

```c
1:  void matvec(shared [N] double A[N][N], shared double B[N], shared double C[N]) {
2:    double sum[N];
3:    upc_forall(int i = 0; i < N; i++; &C[i]) {
4:      sum[i] = 0;
5:      for(int j = 0; j < N; j++)
6:        sum[i] += A[i][j] * B[j];
7:    }
8:    upc_forall(int i = 0; i < N; i++; &C[i]) {
9:      C[i] = sum[i];
10:   }
11: } // assert (C == A*B)
```

[Figure: C = A × B on a 2×2 example; the entries of C are not yet computed]

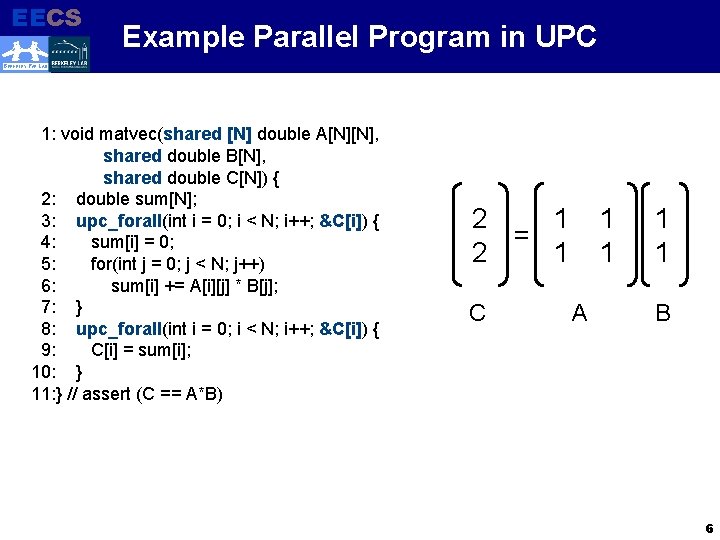

[Figure: with A the 2×2 all-ones matrix and B = (1, 1), the program computes C = (2, 2)]

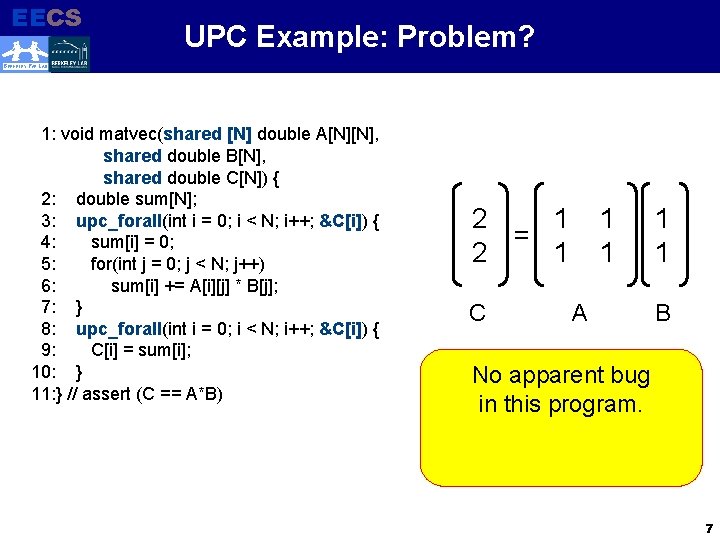

UPC Example: Problem?
No apparent bug in this program.

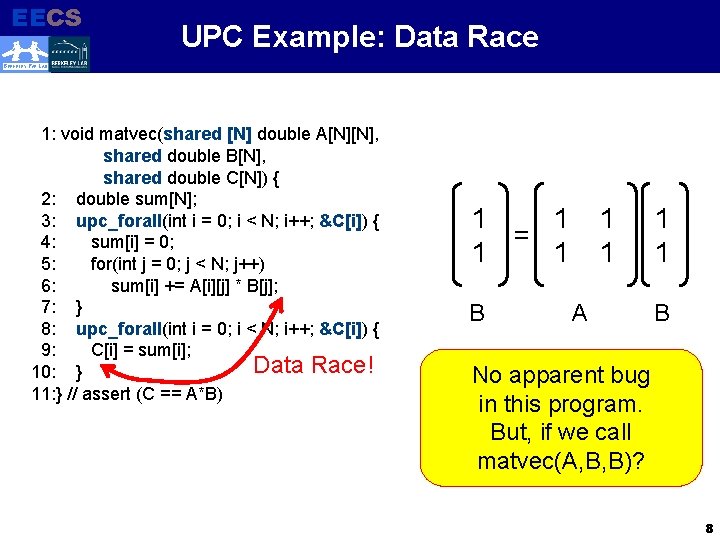

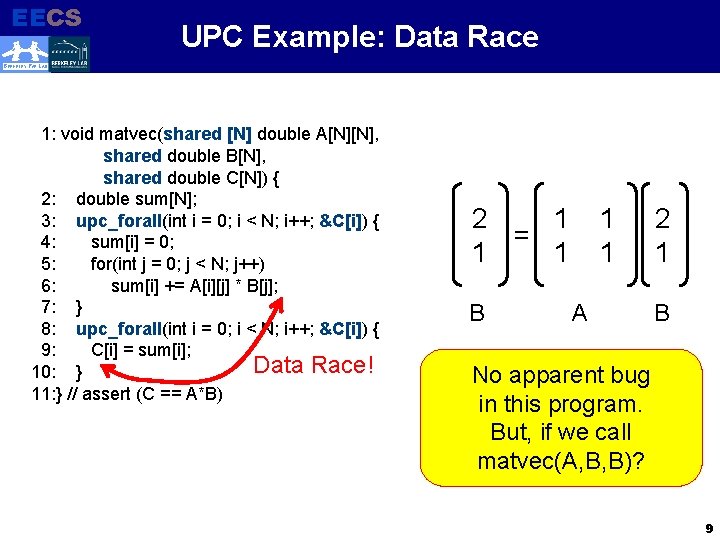

UPC Example: Data Race
But what if we call matvec(A, B, B)?
§ Then C and B are the same array: line 9 (C[i] = sum[i]) writes B[i] while other threads may still be reading B[j] on line 6. Data race!
[Figure: B = A × B, with A the 2×2 all-ones matrix and B initially (1, 1)]

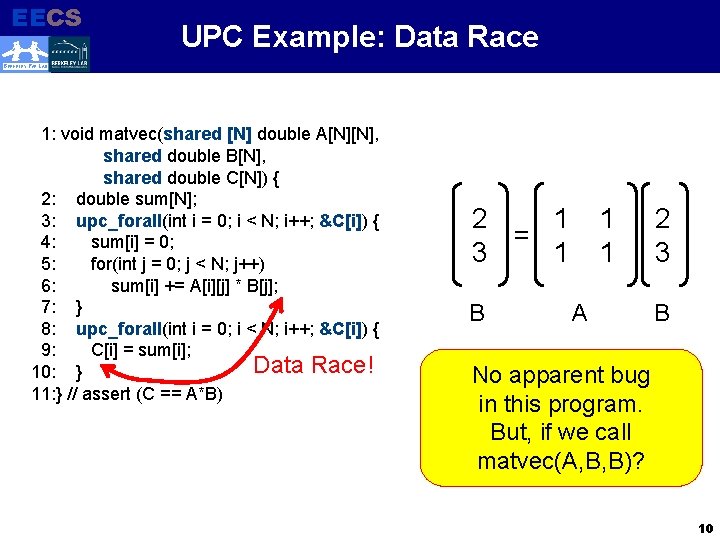

[Figure: after thread 0 executes line 9, B = (2, 1)]

[Figure: thread 1 then computes B[1] = 1·2 + 1·1 = 3 from the updated B[0], leaving B = (2, 3) instead of A × B = (2, 2)]

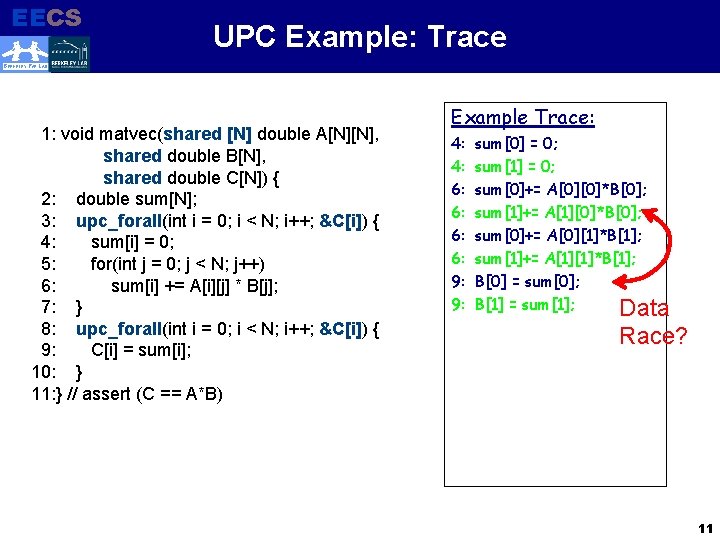

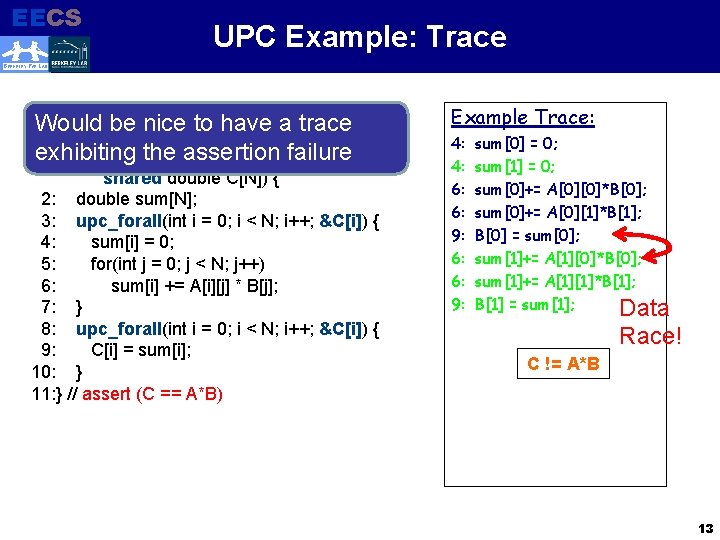

UPC Example: Trace
Example trace (each statement is prefixed with its source line number):

```
4: sum[0] = 0;
4: sum[1] = 0;
6: sum[0] += A[0][0]*B[0];
6: sum[1] += A[1][0]*B[0];
6: sum[0] += A[0][1]*B[1];
6: sum[1] += A[1][1]*B[1];
9: B[0] = sum[0];
9: B[1] = sum[1];
```

Data race?

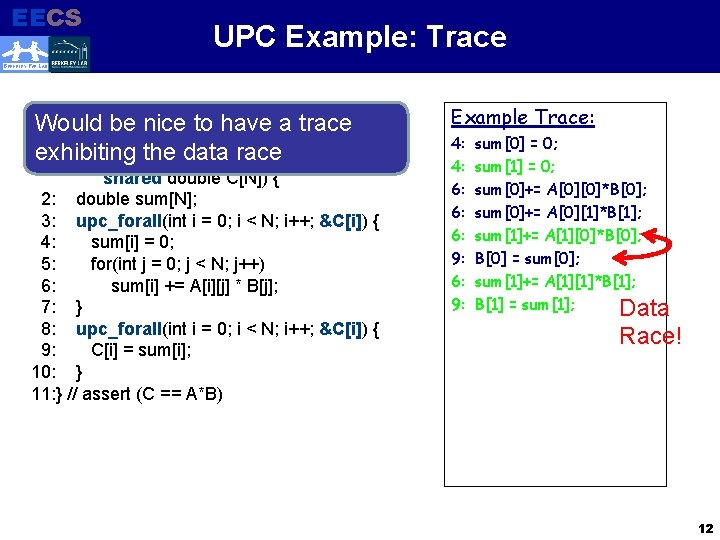

It would be nice to have a trace exhibiting the data race:

```
4: sum[0] = 0;
4: sum[1] = 0;
6: sum[0] += A[0][0]*B[0];
6: sum[0] += A[0][1]*B[1];
6: sum[1] += A[1][0]*B[0];
9: B[0] = sum[0];
6: sum[1] += A[1][1]*B[1];
9: B[1] = sum[1];
```

Data race! The read of B[0] on line 6 and the write of B[0] on line 9 are adjacent. (Here the read still sees the old value, so the result happens to be correct.)

It would be nice to have a trace exhibiting the assertion failure:

```
4: sum[0] = 0;
4: sum[1] = 0;
6: sum[0] += A[0][0]*B[0];
6: sum[0] += A[0][1]*B[1];
9: B[0] = sum[0];
6: sum[1] += A[1][0]*B[0];
6: sum[1] += A[1][1]*B[1];
9: B[1] = sum[1];
```

Data race! C != A*B
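To make the arithmetic of the two traces concrete, here is a small plain-C sketch (not UPC) that replays an interleaving of matvec(A, B, B) in a single address space. The `Step` encoding and the `replay` function are hypothetical illustrations, not part of UPC-Thrille; the sketch assumes 2 threads, with thread i computing row i.

```c
#include <assert.h>

#define N 2

/* One step of an interleaved trace of matvec(A, B, B): the thread that runs
   it (with 2 threads, thread i owns row i), the source line (4, 6 or 9) and,
   for line 6, the column j being accumulated. */
typedef struct { int thread; int line; int j; } Step;

/* Replays a trace in one address space. Because C aliases B, line 9 writes B. */
static void replay(const Step *trace, int len, double A[N][N], double B[N]) {
    double sum[N] = {0};            /* models each thread's private sum[i] */
    for (int k = 0; k < len; k++) {
        int i = trace[k].thread, j = trace[k].j;
        assert(i >= 0 && i < N && j >= 0 && j < N);
        switch (trace[k].line) {
        case 4: sum[i] = 0;               break;
        case 6: sum[i] += A[i][j] * B[j]; break;
        case 9: B[i] = sum[i];            break;
        }
    }
}

/* Every write to B happens after all reads of B: computes A*B correctly. */
static const Step benign[] = {
    {0, 4, 0}, {1, 4, 0}, {0, 6, 0}, {1, 6, 0},
    {0, 6, 1}, {1, 6, 1}, {0, 9, 0}, {1, 9, 0}
};

/* Thread 1 reads B[0] on line 6 after thread 0 has overwritten it on line 9. */
static const Step racy[] = {
    {0, 4, 0}, {1, 4, 0}, {0, 6, 0}, {0, 6, 1},
    {0, 9, 0}, {1, 6, 0}, {1, 6, 1}, {1, 9, 0}
};
```

With A the 2×2 all-ones matrix and B = {1, 1}, replaying `benign` leaves B = {2, 2}, while replaying `racy` leaves B = {2, 3}: thread 1 reads the already-updated B[0], which is exactly how the data race turns into the assertion failure C != A*B.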


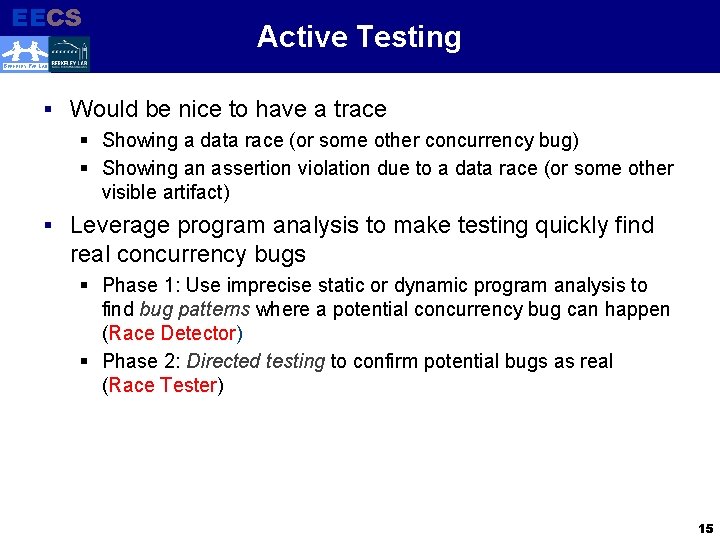

Active Testing
§ It would be nice to have a trace
  § Showing a data race (or some other concurrency bug)
  § Showing an assertion violation due to a data race (or some other visible artifact)
§ Leverage program analysis to make testing quickly find real concurrency bugs
  § Phase 1: Use imprecise static or dynamic program analysis to find bug patterns where a potential concurrency bug can happen (Race Detector)
  § Phase 2: Use directed testing to confirm potential bugs as real (Race Tester)

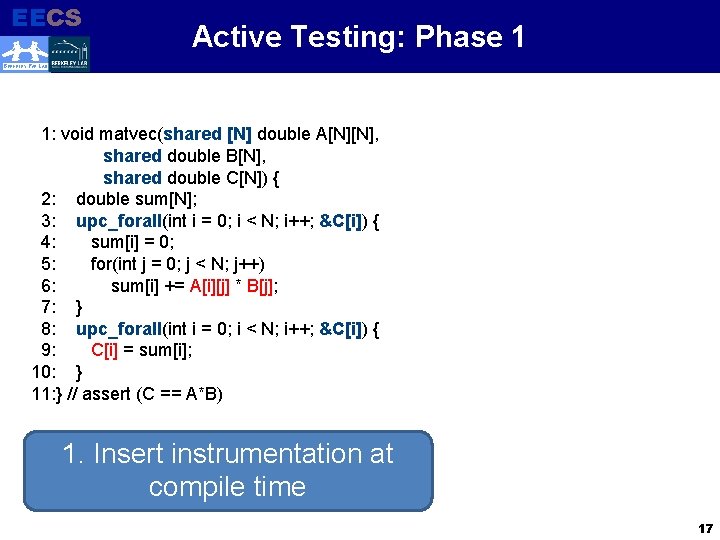


Active Testing: Phase 1
1. Insert instrumentation at compile time

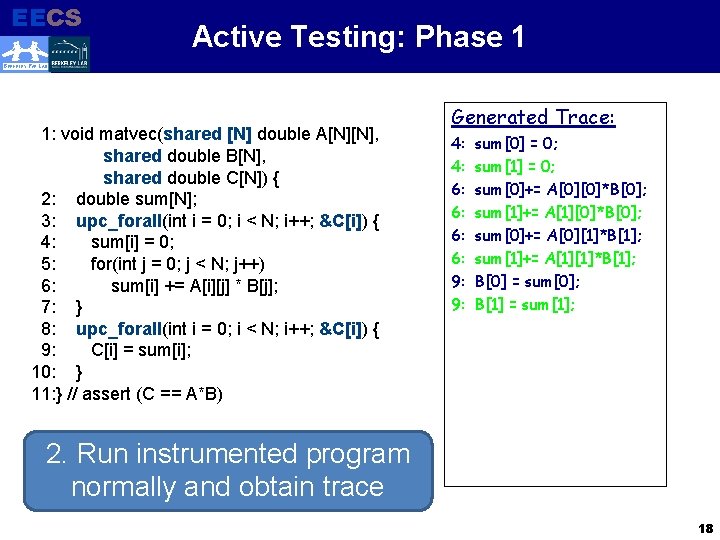

2. Run the instrumented program normally and obtain a trace (here, the same interleaving as the example trace above)

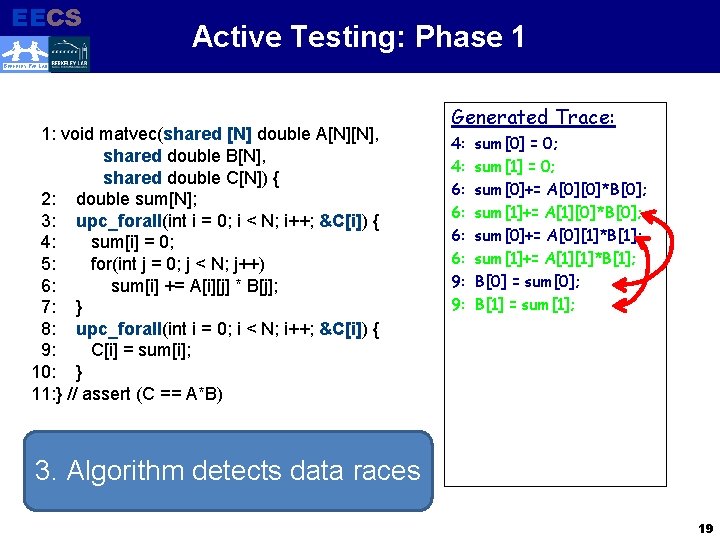

3. The algorithm detects data races in the trace

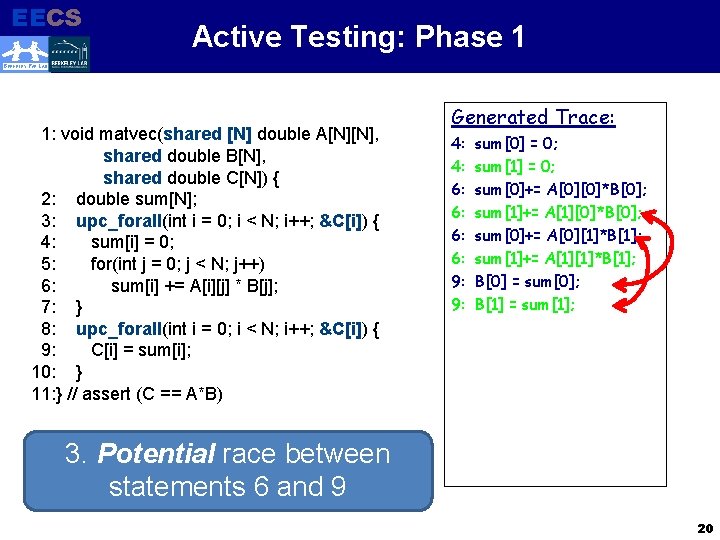

   § Potential race between statements 6 and 9: line 6 reads B[j] and, since C aliases B, line 9 writes B[i]

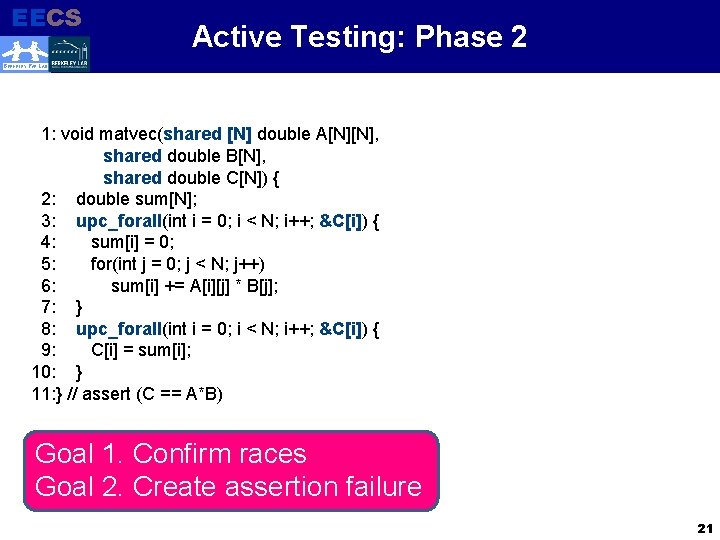

Active Testing: Phase 2
§ Goal 1. Confirm races
§ Goal 2. Create an assertion failure

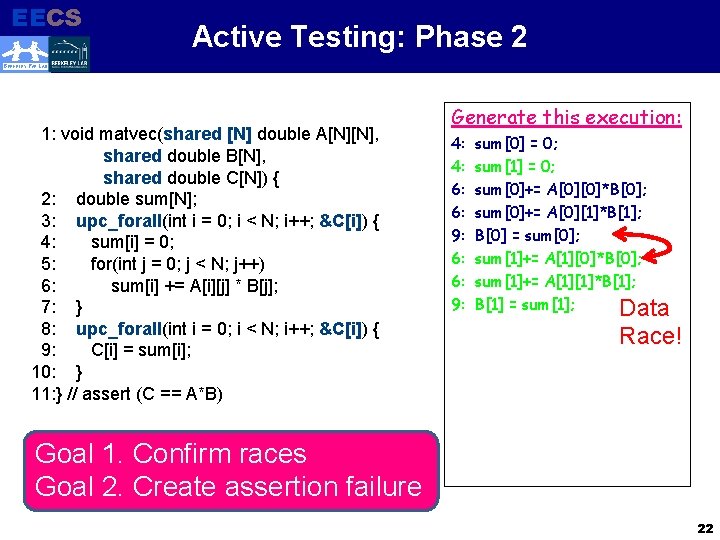

§ Generate this execution: thread 0 writes B[0] on line 9 (B[0] = sum[0]) just before thread 1 reads B[0] on line 6 (sum[1] += A[1][0]*B[0]), as in the trace exhibiting the assertion failure above. Data race!

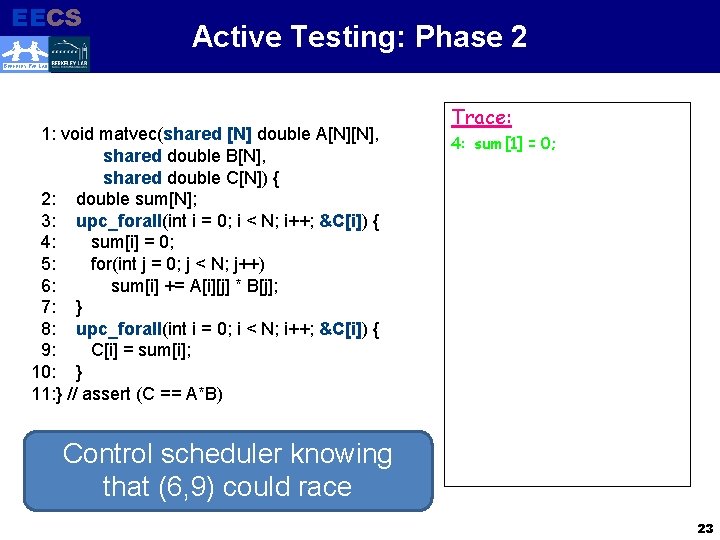

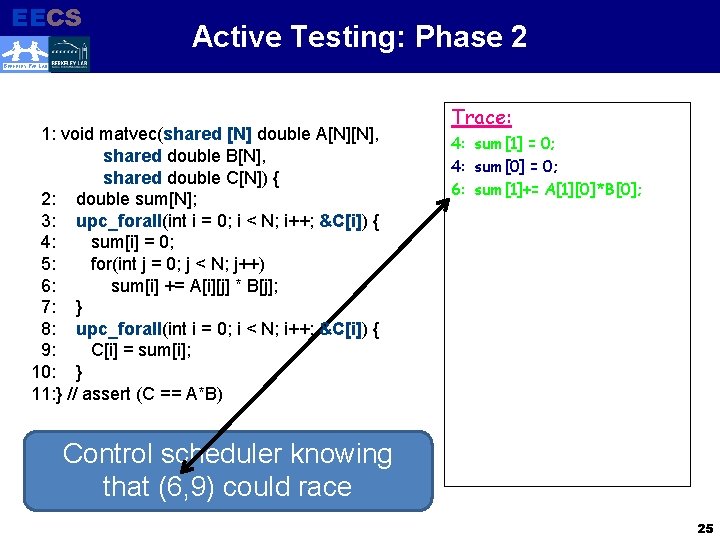

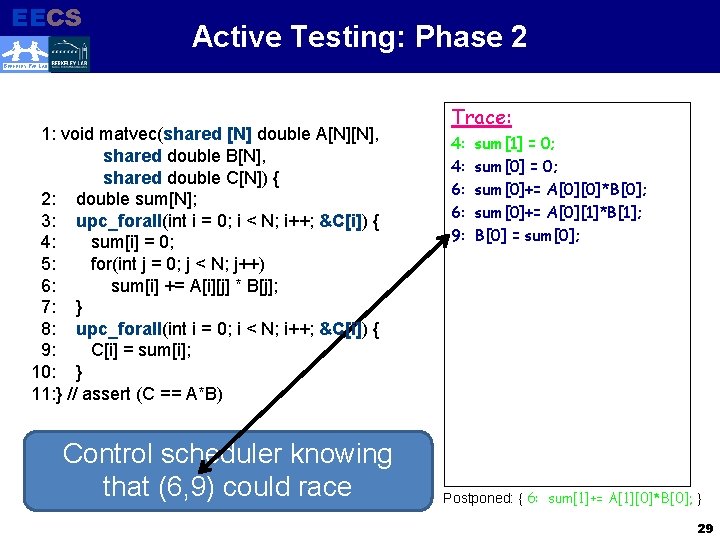

Control the scheduler, knowing that (6, 9) could race:
§ Thread 1 executes 4: sum[1] = 0;

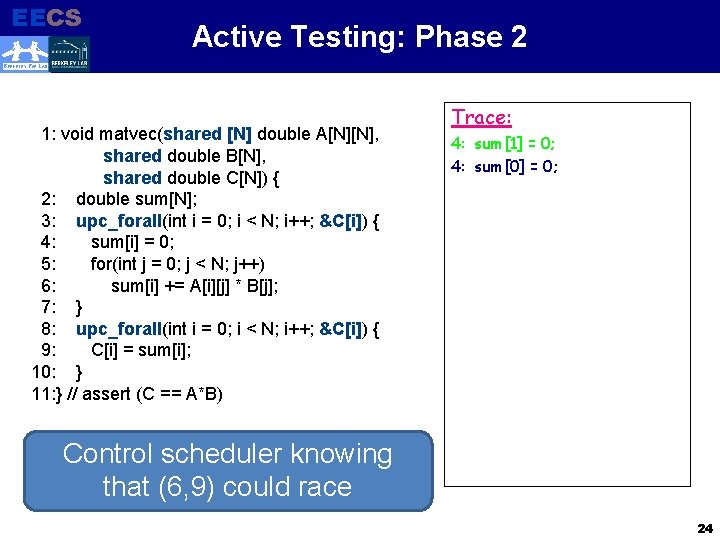

§ Thread 0 executes 4: sum[0] = 0;

§ Thread 1 reaches 6: sum[1] += A[1][0]*B[0]; which belongs to the racy pair (6, 9)

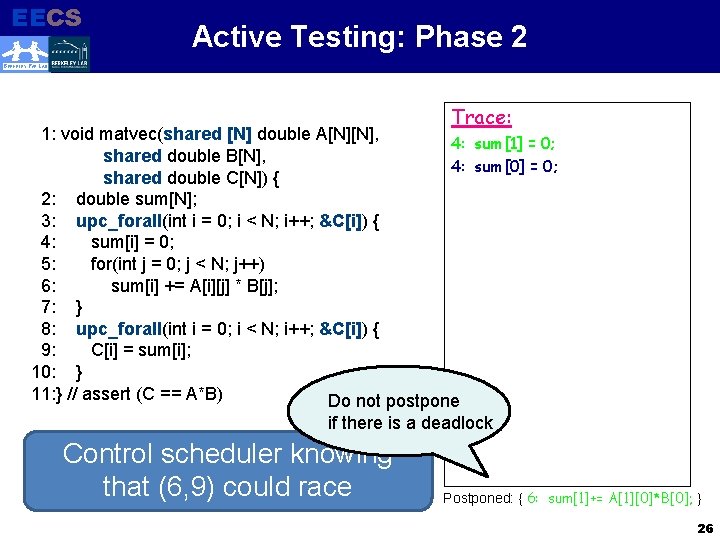

§ Thread 1 is postponed: Postponed = { 6: sum[1] += A[1][0]*B[0]; }
  § Do not postpone if doing so would cause a deadlock

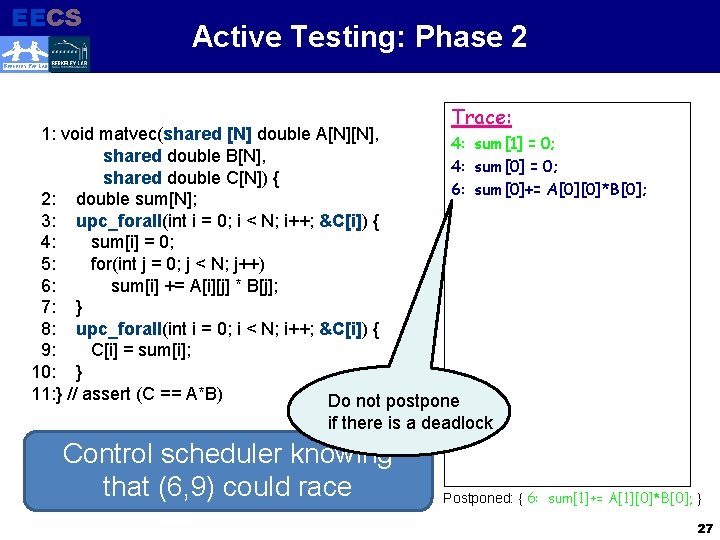

§ Thread 0 executes 6: sum[0] += A[0][0]*B[0];

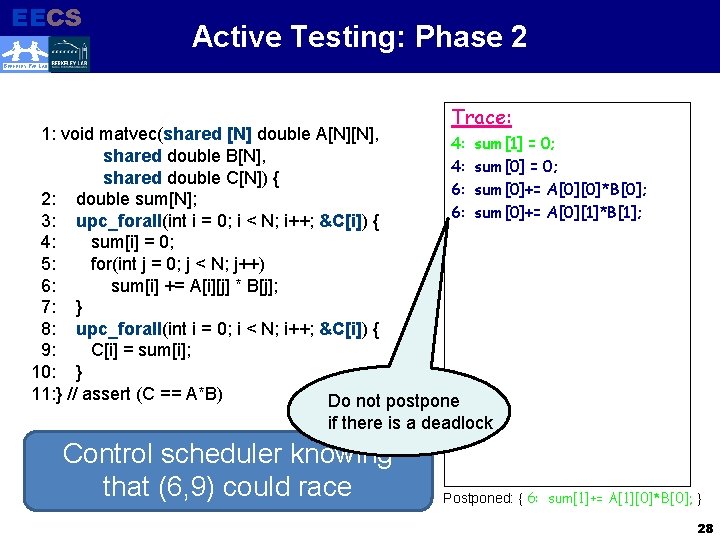

§ Thread 0 executes 6: sum[0] += A[0][1]*B[1];

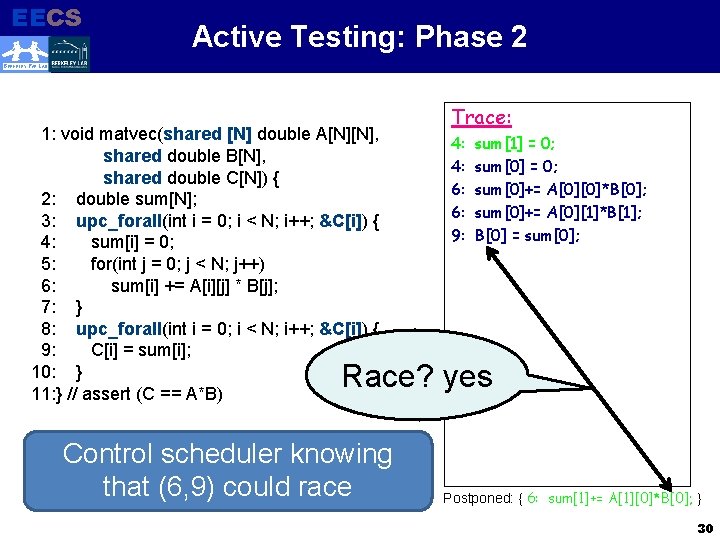

§ Thread 0 reaches 9: B[0] = sum[0];

§ Does it race with the postponed statement? Yes: both access B[0], and line 9 writes it

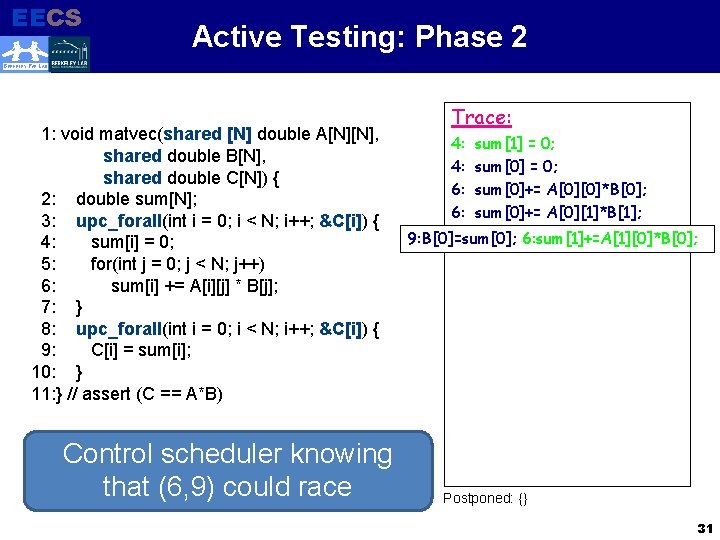

§ Execute 9: B[0] = sum[0]; followed by the postponed 6: sum[1] += A[1][0]*B[0]; Postponed = {}

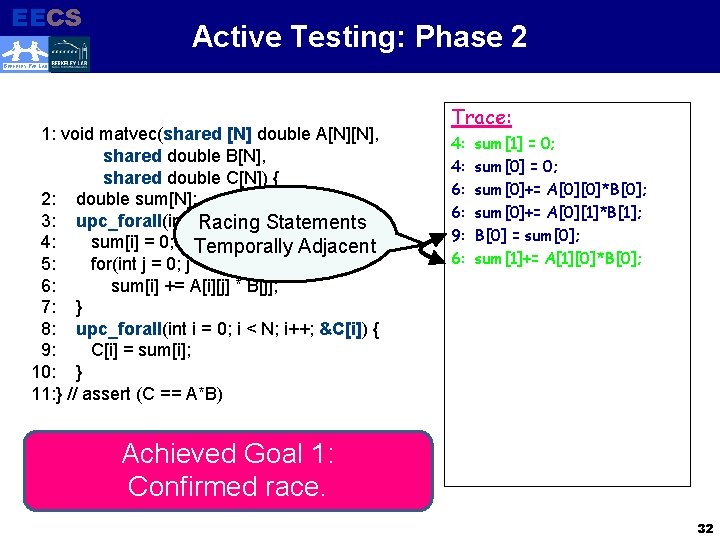

The racing statements 9: B[0] = sum[0]; and 6: sum[1] += A[1][0]*B[0]; are now temporally adjacent.
Achieved Goal 1: Confirmed race.

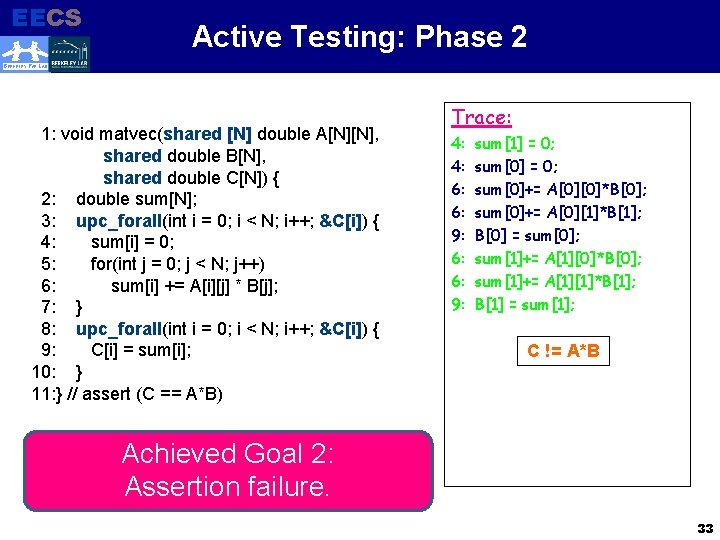

Letting the execution finish gives the full trace:

```
4: sum[1] = 0;
4: sum[0] = 0;
6: sum[0] += A[0][0]*B[0];
6: sum[0] += A[0][1]*B[1];
9: B[0] = sum[0];
6: sum[1] += A[1][0]*B[0];
6: sum[1] += A[1][1]*B[1];
9: B[1] = sum[1];
```

C != A*B. Achieved Goal 2: Assertion failure.
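The postponing scheduler walked through above can be simulated in a few lines of plain C. This is a hypothetical, single-process sketch: `Event`, `Thread` and `race_test` are illustrative names rather than UPC-Thrille's API, and it assumes a deterministic round-robin scheduler and exact address matching, whereas the real tester controls UPC threads at run time.

```c
#include <assert.h>
#include <stdbool.h>

/* A shared access performed by one statement: source line, address, kind. */
typedef struct { int line; int addr; bool write; } Event;

/* A simulated thread: its program (in order), next position, and state. */
typedef struct { const Event *ev; int len; int pc; bool postponed; } Thread;

static bool done(const Thread *t) { return t->pc >= t->len; }

/* Does the pair (a, b) match the predicted racy statements (s1, s2)? */
static bool forms_pair(Event a, Event b, int s1, int s2) {
    return (a.line == s1 && b.line == s2) || (a.line == s2 && b.line == s1);
}

/* Round-robin scheduler starting at thread `first`. Returns the number of
   events executed before a race on (s1, s2) is confirmed (and sets *racer
   and *victim), or -1 if every thread finishes without confirming it. */
static int race_test(Thread *th, int n, int s1, int s2, int first,
                     int *racer, int *victim) {
    int executed = 0, t = first;
    for (;;) {
        int tried = 0;                       /* find a runnable thread */
        while (tried < n && (th[t].postponed || done(&th[t]))) {
            t = (t + 1) % n;
            tried++;
        }
        if (tried == n) {                    /* nothing runnable: release all */
            bool any = false;
            for (int k = 0; k < n; k++)
                if (th[k].postponed) { th[k].postponed = false; any = true; }
            if (!any) return -1;             /* all threads finished */
            continue;
        }
        Event e = th[t].ev[th[t].pc];
        if (e.line == s1 || e.line == s2) {
            for (int k = 0; k < n; k++) {    /* race with a postponed thread? */
                if (k == t || !th[k].postponed) continue;
                Event p = th[k].ev[th[k].pc];
                if (forms_pair(e, p, s1, s2) && e.addr == p.addr &&
                    (e.write || p.write)) {
                    *racer = t;
                    *victim = k;
                    return executed;         /* racing accesses are adjacent */
                }
            }
            bool other_runnable = false;     /* postpone unless it deadlocks */
            for (int k = 0; k < n; k++)
                if (k != t && !th[k].postponed && !done(&th[k]))
                    other_runnable = true;
            if (other_runnable) {
                th[t].postponed = true;
                t = (t + 1) % n;
                continue;
            }
        }
        th[t].pc++;                          /* execute the event */
        executed++;
        t = (t + 1) % n;
    }
}
```

Encoding matvec(A, B, B) with addresses 0 and 1 for B[0] and B[1] (and -1 for the private write on line 4), and starting with thread 1 as in the walkthrough, `race_test` confirms the (6, 9) race after 4 events, with thread 0's write of B[0] racing thread 1's postponed read. Starting with thread 0 instead, this deterministic sketch lets both threads finish without confirming the race, which shows that the outcome depends on the schedule explored.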

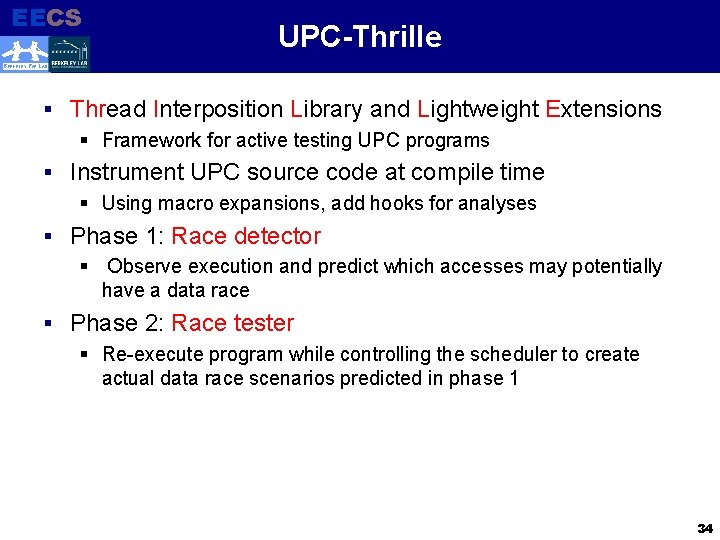

UPC-Thrille
§ Thread Interposition Library and Lightweight Extensions
§ Framework for active testing of UPC programs
§ Instruments UPC source code at compile time
  § Using macro expansions, adds hooks for analyses
§ Phase 1: Race detector
  § Observes execution and predicts which accesses may potentially have a data race
§ Phase 2: Race tester
  § Re-executes the program while controlling the scheduler to create the actual data race scenarios predicted in phase 1
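The idea of adding analysis hooks through macro expansion can be illustrated in plain C. Everything here (`INSTR_READ`, `INSTR_WRITE`, `hook_access`, `TraceEntry`) is a hypothetical stand-in, not the actual hook interface of Berkeley UPC or UPC-Thrille; the sketch only shows how wrapping each access in a macro lets an analysis observe every access together with its source line.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* One logged access: source line, address, size in bytes, read/write. */
typedef struct { int line; uintptr_t addr; size_t size; int is_write; } TraceEntry;

#define TRACE_CAP 64
static TraceEntry trace_buf[TRACE_CAP];
static int trace_len = 0;

/* The analysis hook: here it only appends to a trace; a race detector
   would record the access for later conflict checking instead. */
static void hook_access(int line, const void *addr, size_t size, int is_write) {
    assert(trace_len < TRACE_CAP);
    trace_buf[trace_len++] = (TraceEntry){line, (uintptr_t)addr, size, is_write};
}

/* Stand-ins for compiler-inserted instrumentation: log the access, then
   perform it. `lv` is evaluated more than once, so it must be side-effect free. */
#define INSTR_READ(lv)       (hook_access(__LINE__, &(lv), sizeof(lv), 0), (lv))
#define INSTR_WRITE(lv, val) (hook_access(__LINE__, &(lv), sizeof(lv), 1), (lv) = (val))
```

For example, `s += INSTR_READ(b[1]);` followed by `INSTR_WRITE(b[0], s);` performs the same read and write as the uninstrumented code while leaving two entries (one read, one write, each with its `__LINE__`) in `trace_buf`.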

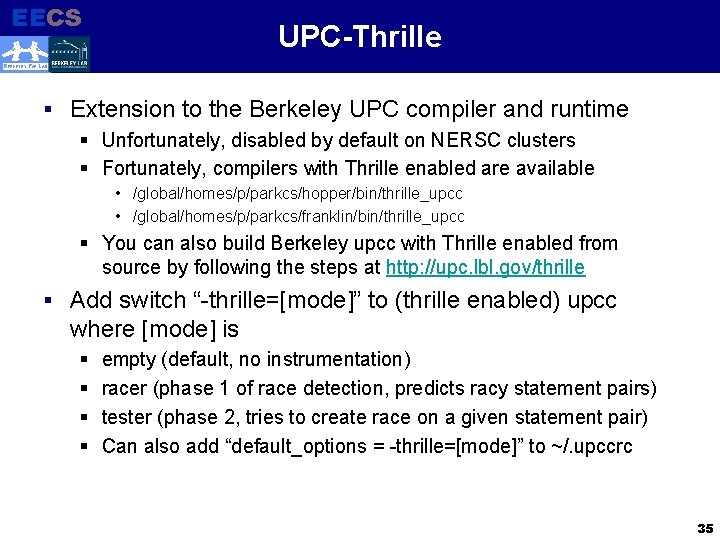

UPC-Thrille
§ Extension to the Berkeley UPC compiler and runtime
  § Unfortunately, disabled by default on NERSC clusters
  § Fortunately, compilers with Thrille enabled are available:
    • /global/homes/p/parkcs/hopper/bin/thrille_upcc
    • /global/homes/p/parkcs/franklin/bin/thrille_upcc
  § You can also build Berkeley upcc with Thrille enabled from source by following the steps at http://upc.lbl.gov/thrille
§ Add the switch “-thrille=[mode]” to a (Thrille-enabled) upcc, where [mode] is
  § empty (default, no instrumentation)
  § racer (phase 1 of race detection, predicts racy statement pairs)
  § tester (phase 2, tries to create a race on a given statement pair)
§ You can also add “default_options = -thrille=[mode]” to ~/.upccrc

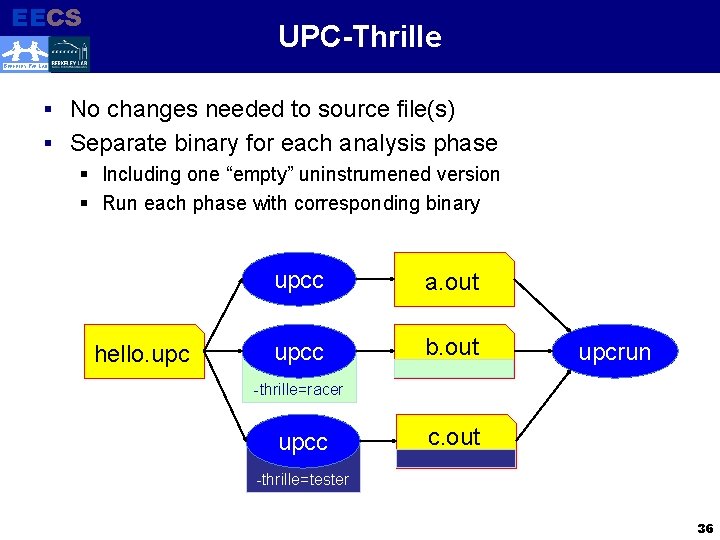

UPC-Thrille
§ No changes needed to the source file(s)
§ Separate binary for each analysis phase
  § Including one “empty” uninstrumented version
§ Run each phase with the corresponding binary
[Figure: hello.upc compiled three times with upcc: plain (a.out), with -thrille=racer (b.out), and with -thrille=tester (c.out); each binary is launched with upcrun]

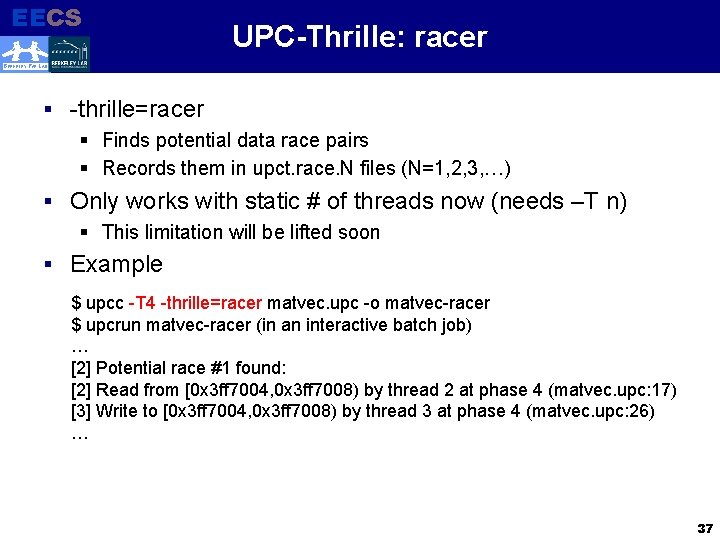

UPC-Thrille: racer
§ -thrille=racer
  § Finds potential data race pairs
  § Records them in upct.race.N files (N = 1, 2, 3, …)
  § Currently works only with a static number of threads (needs -T n)
    § This limitation will be lifted soon
§ Example

```
$ upcc -T 4 -thrille=racer matvec.upc -o matvec-racer
$ upcrun matvec-racer        # in an interactive batch job
…
[2] Potential race #1 found:
[2] Read from [0x3ff7004, 0x3ff7008) by thread 2 at phase 4 (matvec.upc:17)
[3] Write to [0x3ff7004, 0x3ff7008) by thread 3 at phase 4 (matvec.upc:26)
…
```

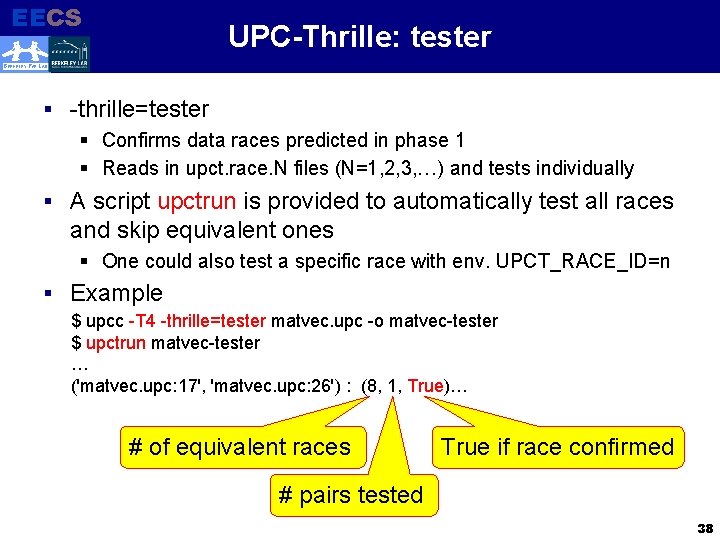

UPC-Thrille: tester
§ -thrille=tester
  § Confirms data races predicted in phase 1
  § Reads in upct.race.N files (N = 1, 2, 3, …) and tests each race individually
  § A script, upctrun, is provided to automatically test all races and skip equivalent ones
  § You can also test a specific race by setting the environment variable UPCT_RACE_ID=n
§ Example

```
$ upcc -T 4 -thrille=tester matvec.upc -o matvec-tester
$ upctrun matvec-tester
…
('matvec.upc:17', 'matvec.upc:26') : (8, 1, True)
…
```

In the result tuple: # of equivalent races, # of pairs tested, and True if the race was confirmed.

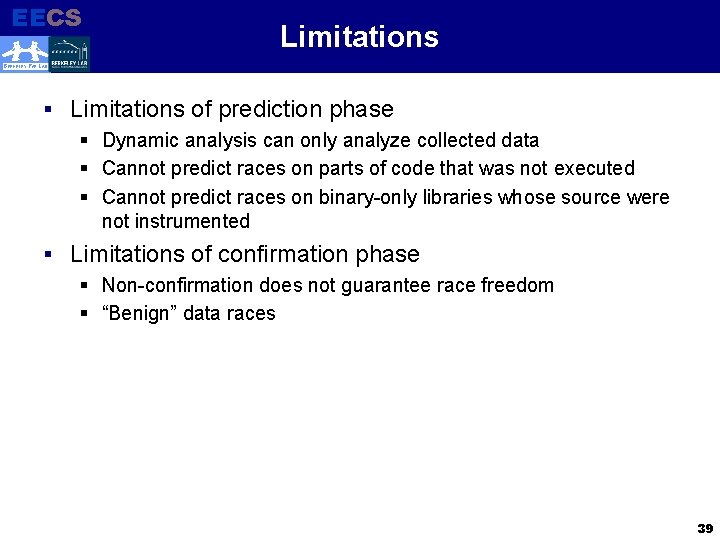

Limitations
§ Limitations of the prediction phase
  § Dynamic analysis can only analyze the data it collects
    § Cannot predict races in parts of the code that were not executed
    § Cannot predict races in binary-only libraries whose source was not instrumented
§ Limitations of the confirmation phase
  § Non-confirmation does not guarantee race freedom
  § “Benign” data races

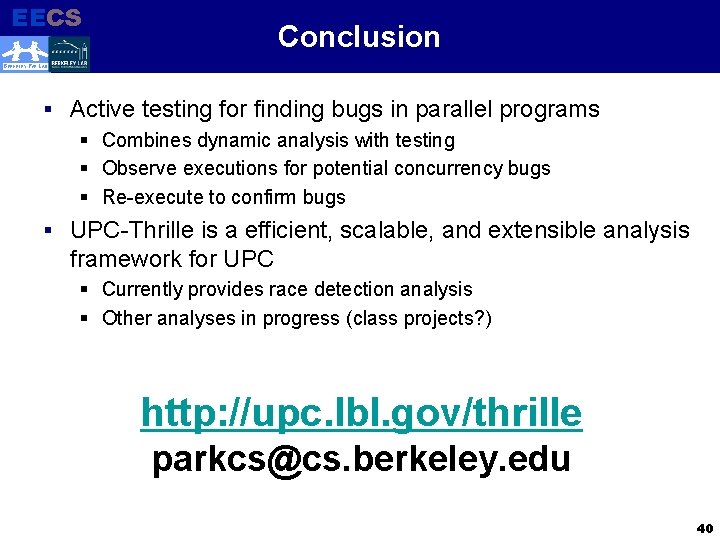

Conclusion
§ Active testing for finding bugs in parallel programs
  § Combines dynamic analysis with testing
  § Observes executions for potential concurrency bugs
  § Re-executes to confirm bugs
§ UPC-Thrille is an efficient, scalable, and extensible analysis framework for UPC
  § Currently provides race detection analysis
  § Other analyses in progress (class projects?)
http://upc.lbl.gov/thrille
parkcs@cs.berkeley.edu


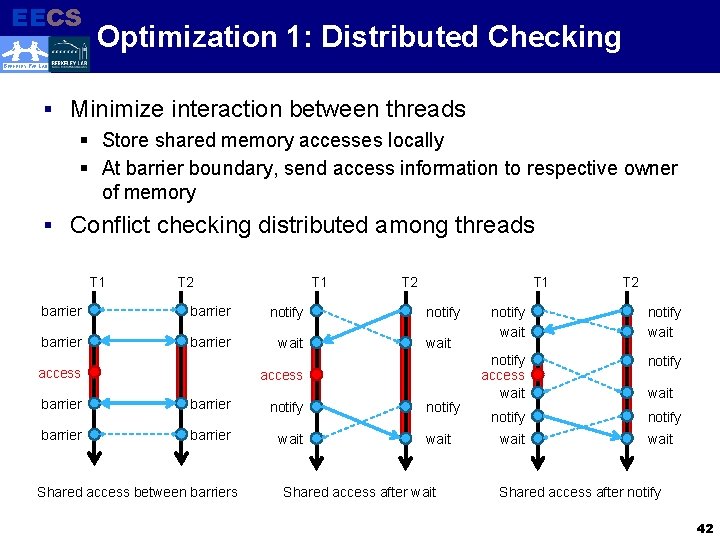

Optimization 1: Distributed Checking
§ Minimize interaction between threads
  § Store shared memory accesses locally
  § At a barrier boundary, send access information to the respective owner of the memory
  § Conflict checking is distributed among threads
[Figure: threads T1 and T2 synchronizing with split-phase barriers (notify/wait); panels: shared access between barriers, shared access after wait, shared access after notify]
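The routing step can be sketched under simplifying assumptions: accesses are identified by element indices into one block-cyclic shared array rather than by raw shared addresses, and an access spanning blocks owned by different threads is not split. `owner_of` and `route_to_owners` are hypothetical names; only the affinity rule itself (element i of `shared [B] T a[...]` lives on thread (i / B) mod THREADS) comes from UPC's data layout.

```c
#include <assert.h>
#include <stddef.h>

/* Affinity rule for an array declared  shared [B] T a[...]  with block size
   B >= 1: element i lives on thread (i / B) mod THREADS. */
static int owner_of(size_t i, size_t B, int threads) {
    assert(B >= 1 && threads >= 1);
    return (int)((i / B) % (size_t)threads);
}

/* At a barrier, decide where each locally buffered access goes: count[t]
   receives the number of accesses (given as element indices) whose
   conflict check is delegated to owner thread t. */
static void route_to_owners(const size_t *idx, int n, size_t B,
                            int threads, int *count) {
    for (int t = 0; t < threads; t++) count[t] = 0;
    for (int k = 0; k < n; k++) count[owner_of(idx[k], B, threads)]++;
}
```

With 4 threads and the default cyclic layout (B = 1, as for `shared double B[N]`), accesses to elements 0, 1, 5 and 9 are checked by threads 0, 1, 1 and 1, so no single thread has to see every access.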


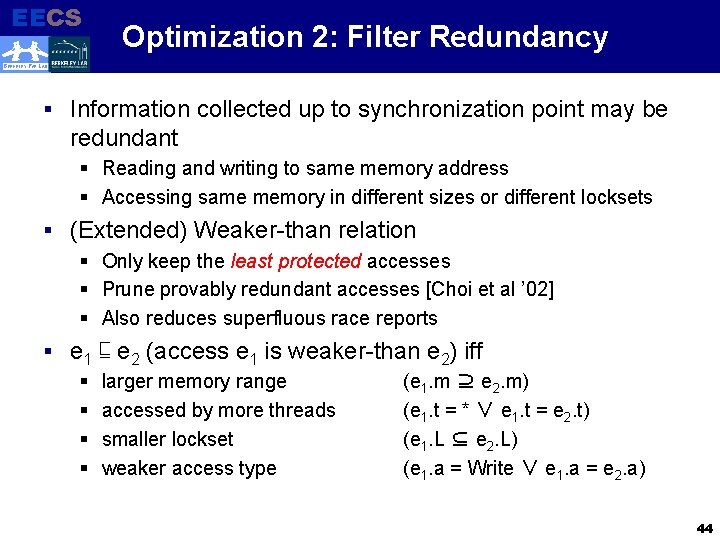

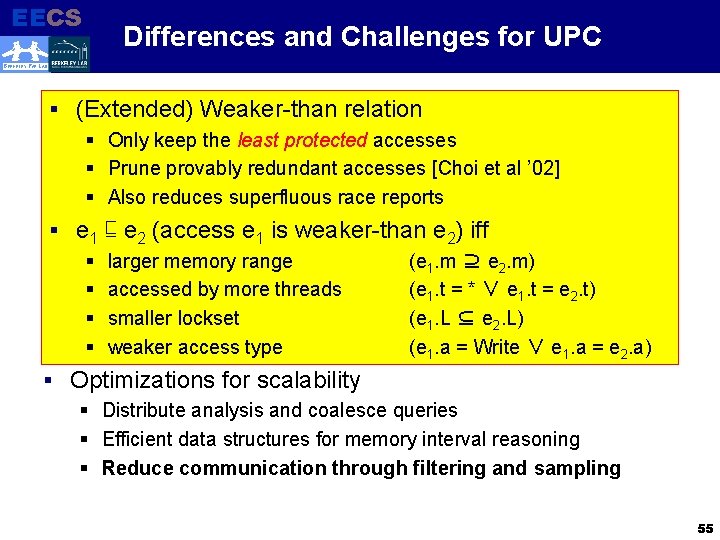

Optimization 2: Filter Redundancy
§ Information collected up to a synchronization point may be redundant
  § Reading and writing to the same memory address
  § Accessing the same memory in different sizes or with different locksets
§ (Extended) Weaker-than relation
  § Only keep the least protected accesses
  § Prune provably redundant accesses [Choi et al. ’02]
  § Also reduces superfluous race reports
§ e1 ⊑ e2 (access e1 is weaker than e2) iff
  § larger memory range: e1.m ⊇ e2.m
  § accessed by more threads: e1.t = * ∨ e1.t = e2.t
  § smaller lockset: e1.L ⊆ e2.L
  § weaker access type: e1.a = Write ∨ e1.a = e2.a
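The four conditions of the weaker-than relation translate directly into code. This is a minimal C sketch, assuming memory ranges as half-open byte intervals [lo, hi), locksets as 32-bit masks, and `ANY_THREAD` as a hypothetical encoding of e.t = *; `filter_insert` is an illustrative way to keep only the least protected accesses, not UPC-Thrille's actual data structure.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

#define ANY_THREAD (-1)   /* models e.t = *: accessed by more than one thread */

typedef enum { READ, WRITE } AccessType;

/* A recorded access: byte range [lo, hi), thread (or ANY_THREAD),
   lockset as a bitmask of held lock ids, and access type. */
typedef struct { uintptr_t lo, hi; int t; uint32_t L; AccessType a; } Access;

/* e1 is weaker than e2: any access that conflicts with e2 also conflicts
   with e1 (for accesses in the same barrier phase), so e2 need not be kept. */
static bool weaker_than(Access e1, Access e2) {
    bool range   = e1.lo <= e2.lo && e2.hi <= e1.hi;   /* e1.m contains e2.m     */
    bool threads = e1.t == ANY_THREAD || e1.t == e2.t; /* e1.t = * or e1.t = e2.t */
    bool locks   = (e1.L & ~e2.L) == 0;                /* e1.L subset of e2.L    */
    bool type    = e1.a == WRITE || e1.a == e2.a;      /* e1.a = Write or same   */
    return range && threads && locks && type;
}

/* Adds e to buf[0..*n) unless an existing entry is weaker, and drops the
   entries that e is weaker than. Returns false if e was redundant. */
static bool filter_insert(Access *buf, int *n, int cap, Access e) {
    for (int k = 0; k < *n; k++)
        if (weaker_than(buf[k], e)) return false;
    int m = 0;
    for (int k = 0; k < *n; k++)
        if (!weaker_than(e, buf[k])) buf[m++] = buf[k];
    assert(m < cap);
    buf[m++] = e;
    *n = m;
    return true;
}
```

The pruning is safe because each condition is monotone: a larger range still overlaps, more threads still includes a different thread, a smaller lockset still shares no lock, and a write still satisfies "at least one write". An unlocked write of [0x100, 0x110) by several threads therefore subsumes a locked read of [0x104, 0x108) by thread 2, and only the write is kept.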

Optimization 3: Sampling
§ Scientific applications have tight loops
  § Same computation and communication pattern each time
  § Inefficient to check for races at every loop iteration
§ Reduce overhead by sampling
  § Probabilistically sample each instrumentation point
  § Reduce the probability after each unsuccessful check
  § Set the probability to 0 when a race is found (disable the check)
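A minimal sketch of per-instrumentation-point sampling with exponential backoff. Starting at probability 1.0, halving it after every race-free check, and using xorshift32 as the random source are assumptions made for illustration; the slides only say that the probability is reduced after each unsuccessful check and set to 0 once a race is found.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Sampling state kept for one instrumentation point. */
typedef struct { double prob; } SamplePoint;

/* Small deterministic PRNG (xorshift32, state must be nonzero). */
static uint32_t xorshift32(uint32_t *state) {
    uint32_t x = *state;
    x ^= x << 13;
    x ^= x >> 17;
    x ^= x << 5;
    return *state = x;
}

/* Decide whether this dynamic instance of the point is checked. */
static bool should_check(const SamplePoint *sp, uint32_t *rng) {
    return (double)xorshift32(rng) / 4294967296.0 < sp->prob;
}

/* Update after a check: back off exponentially when no race was found,
   and disable the point once a race has been reported. */
static void after_check(SamplePoint *sp, bool race_found) {
    sp->prob = race_found ? 0.0 : sp->prob / 2.0;
}
```

After k race-free checks the point is sampled with probability 2^-k, so a tight loop that keeps repeating the same access pattern quickly stops paying for instrumentation, while a point that has already produced a race is never checked again.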

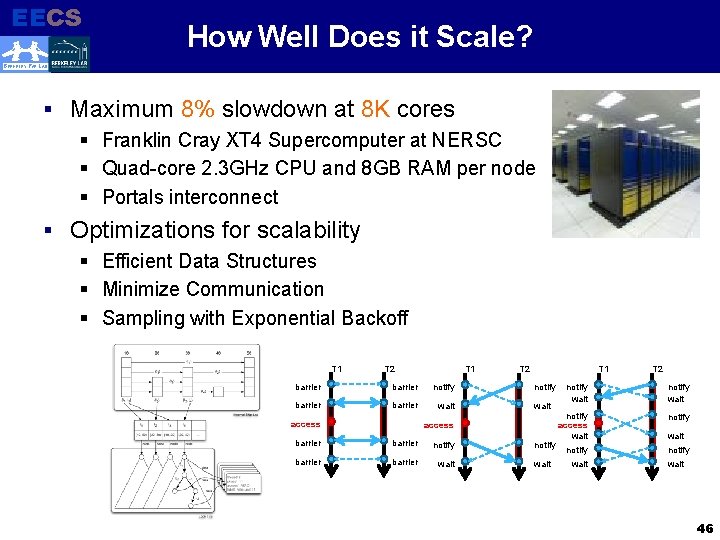

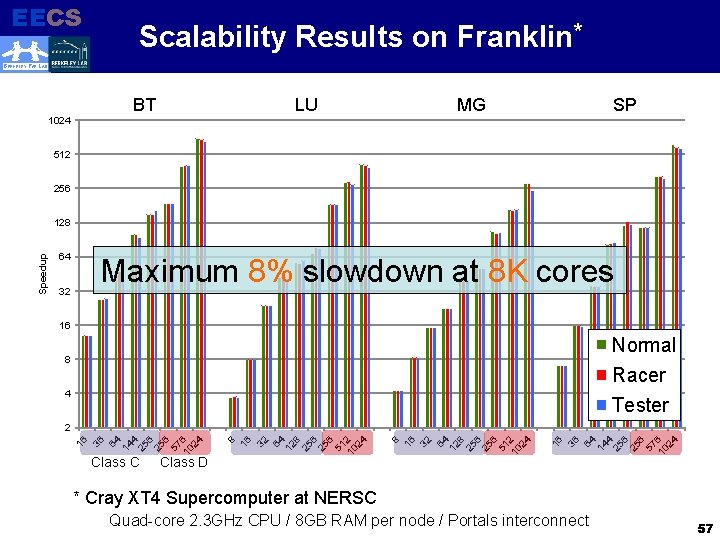

How Well Does It Scale?
§ Maximum 8% slowdown at 8K cores
  § Franklin Cray XT4 supercomputer at NERSC
  § Quad-core 2.3 GHz CPU and 8 GB RAM per node
  § Portals interconnect
§ Optimizations for scalability
  § Efficient data structures
  § Minimize communication
  § Sampling with exponential backoff

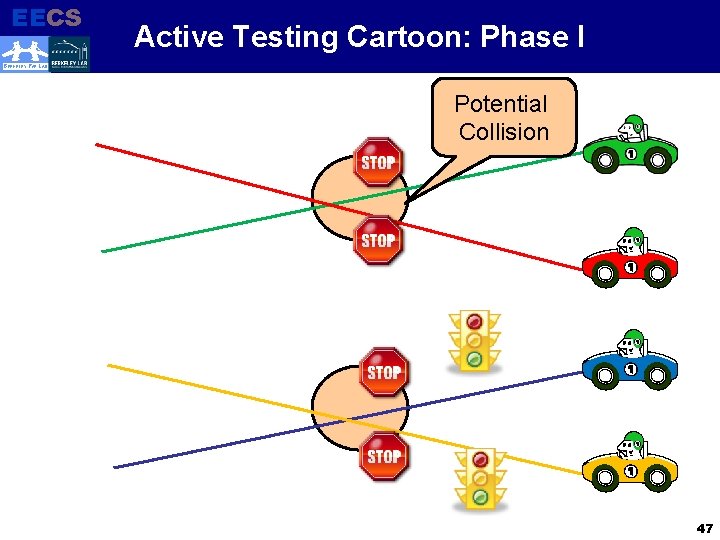

Active Testing Cartoon: Phase I
[Cartoon: potential collision]

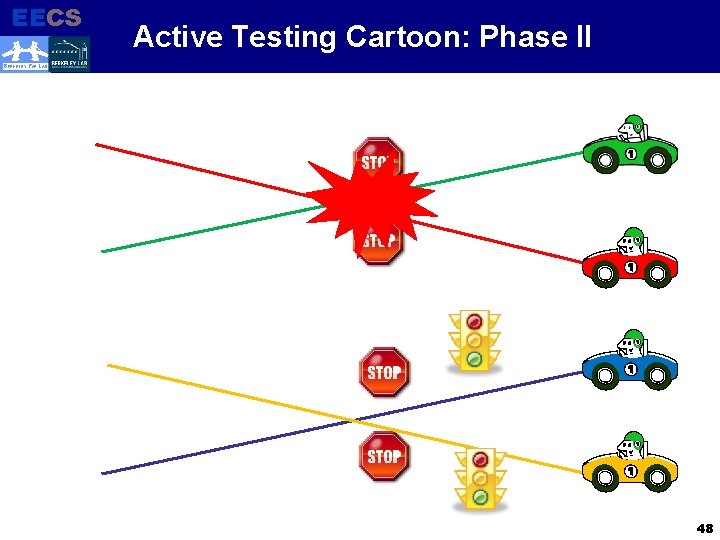

Active Testing Cartoon: Phase II

New Landscape for HPC
§ Shared memory for scalability and utilization
  § Hybrid programming models: MPI + OpenMP
  § PGAS: UPC, CAF, X10, etc.
§ Asynchronous access to shared data is likely to cause bugs
§ Unified Parallel C (UPC)
  § Parallel extensions to the ISO C99 standard for shared and distributed memory hardware
  § Single Program Multiple Data (SPMD) + Partitioned Global Address Space (PGAS)
  § Shared memory concurrency
    § Transparent access using pointers to shared data (arrays)
    § Bulk transfers with memcpy, memput, memget
    § Fine-grained (lock) and bulk (barrier) synchronization


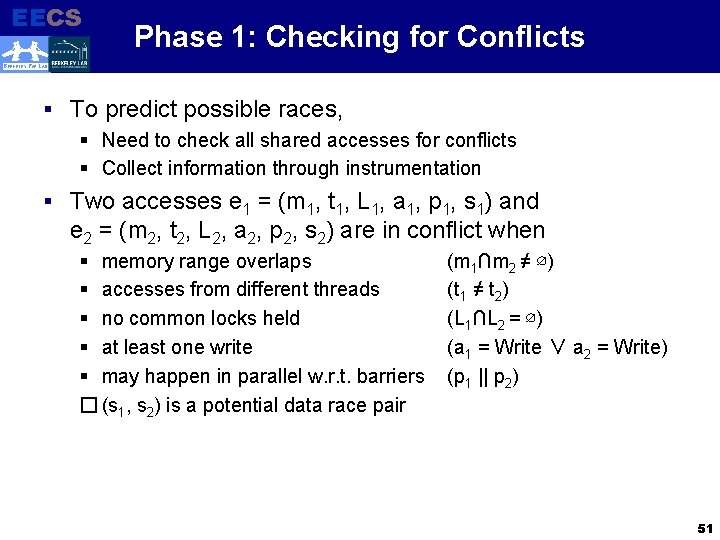

Phase 1: Checking for Conflicts
§ To predict possible races,
  § Need to check all shared accesses for conflicts
  § Collect information through instrumentation
§ Two accesses e1 = (m1, t1, L1, a1, p1, s1) and e2 = (m2, t2, L2, a2, p2, s2) are in conflict when
  § the memory ranges overlap: m1 ∩ m2 ≠ ∅
  § the accesses come from different threads: t1 ≠ t2
  § no common locks are held: L1 ∩ L2 = ∅
  § at least one is a write: a1 = Write ∨ a2 = Write
  § they may happen in parallel w.r.t. barriers: p1 || p2
§ ⇒ (s1, s2) is a potential data race pair
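The five conditions can be written as a single predicate. This is a minimal C sketch, not UPC-Thrille's implementation: it assumes half-open byte ranges, locksets as 32-bit masks, and a simplified model of p1 || p2 in which each access carries the interval of barrier phases it may overlap.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

typedef enum { READ, WRITE } AccessType;

/* e = (m, t, L, a, p, s): byte range m = [lo, hi), thread t, lockset L as a
   bitmask of lock ids, access type a, barrier phases [ph_lo, ph_hi] in which
   the access may execute, and source statement s. */
typedef struct {
    uintptr_t lo, hi;
    int t;
    uint32_t L;
    AccessType a;
    int ph_lo, ph_hi;
    int s;
} Access;

/* Simplified p1 || p2: the phase intervals overlap. An access in phase k
   (between two barriers) gets [k, k]; one issued after the closing barrier's
   upc_notify but before its upc_wait may overlap phase k+1 on other threads,
   so it gets [k, k+1]. */
static bool may_happen_in_parallel(Access e1, Access e2) {
    return e1.ph_lo <= e2.ph_hi && e2.ph_lo <= e1.ph_hi;
}

/* True iff (e1.s, e2.s) is a potential data race pair. */
static bool in_conflict(Access e1, Access e2) {
    return e1.lo < e2.hi && e2.lo < e1.hi      /* m1 and m2 overlap      */
        && e1.t != e2.t                        /* different threads      */
        && (e1.L & e2.L) == 0                  /* no common lock held    */
        && (e1.a == WRITE || e2.a == WRITE)    /* at least one write     */
        && may_happen_in_parallel(e1, e2);     /* not ordered by barrier */
}
```

For example, the read and write in the racer output above (the same 4-byte range [0x3ff7004, 0x3ff7008), threads 2 and 3, phase 4, no locks) satisfy all five conditions; holding a common lock, touching the adjacent range [0x3ff7008, 0x3ff700c), or being separated by a barrier makes the predicate false.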

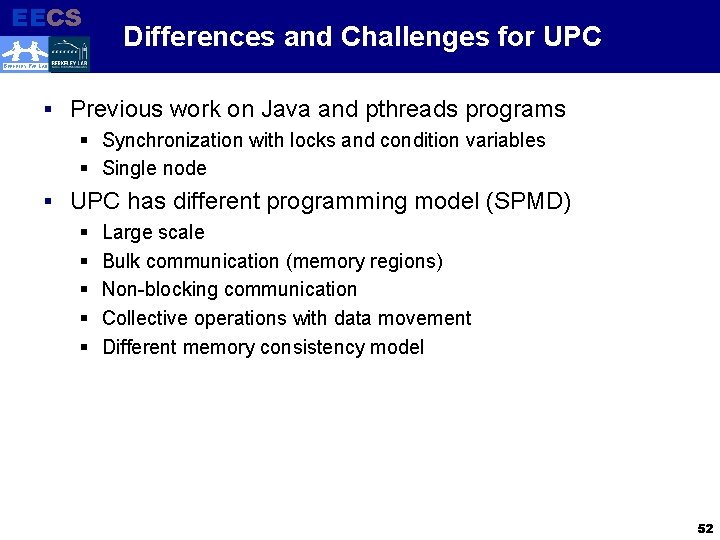


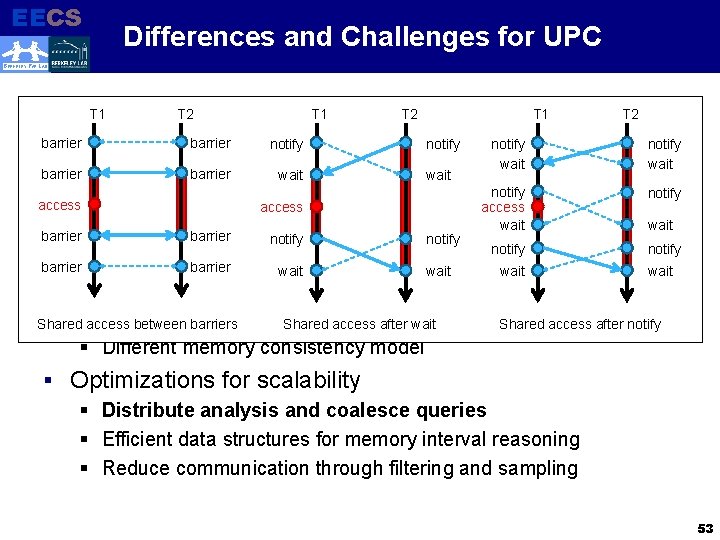


Differences and Challenges for UPC
§ Previous work on Java and pthreads programs
  § Synchronization with locks and condition variables
  § Single node
§ UPC has a different programming model (SPMD)
  § Large scale
  § Bulk communication (memory regions)
  § Non-blocking communication
  § Collective operations with data movement
  § Different memory consistency model
§ Optimizations for scalability
  § Distribute analysis and coalesce queries
  § Efficient data structures for memory interval reasoning
  § Reduce communication through filtering and sampling


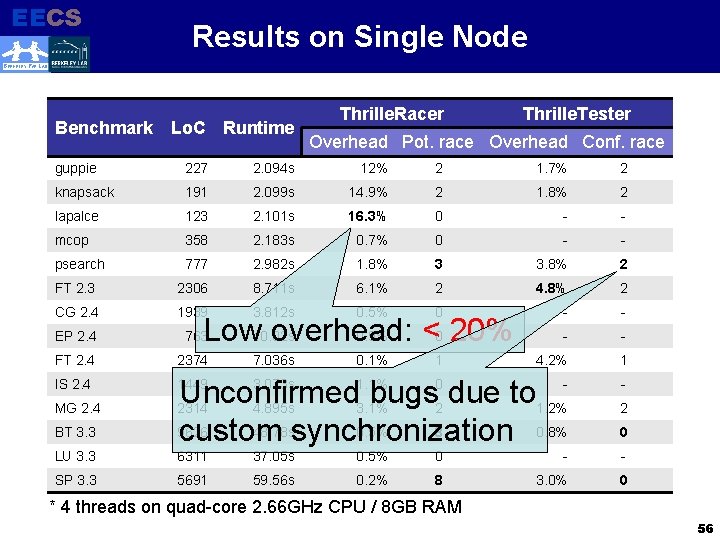

Results on Single Node*

| Benchmark | LoC | Runtime | Thrille-Racer overhead | Potential races | Thrille-Tester overhead | Confirmed races |
|---|---|---|---|---|---|---|
| guppie | 227 | 2.094 s | 12% | 2 | 1.7% | 2 |
| knapsack | 191 | 2.099 s | 14.9% | 2 | 1.8% | 2 |
| laplace | 123 | 2.101 s | 16.3% | 0 | - | - |
| mcop | 358 | 2.183 s | 0.7% | 0 | - | - |
| psearch | 777 | 2.982 s | 1.8% | 3 | 3.8% | 2 |
| FT 2.3 | 2306 | 8.711 s | 6.1% | 2 | 4.8% | 2 |
| CG 2.4 | 1939 | 3.812 s | 0.5% | 0 | - | - |
| EP 2.4 | 763 | 10.02 s | 0.9% | 0 | - | - |
| FT 2.4 | 2374 | 7.036 s | 4.2% | 1 | 0.1% | 1 |
| IS 2.4 | 1449 | 3.073 s | 1.1% | 0 | - | - |
| MG 2.4 | 2314 | 4.895 s | 3.1% | 2 | 1.2% | 0 |
| BT 3.3 | 9626 | 48.78 s | 0.5% | 8 | 0.8% | 2 |
| LU 3.3 | 6311 | 37.05 s | 0.5% | 0 | - | - |
| SP 3.3 | 5691 | 59.56 s | 0.2% | 8 | 3.0% | 0 |

§ Low overhead: < 20%
§ Unconfirmed bugs due to custom synchronization

* 4 threads on a quad-core 2.66 GHz CPU / 8 GB RAM

Scalability Results on Franklin*
[Figure: speedup of NPB BT, LU, MG and SP (Class C and Class D) for Normal, Racer and Tester runs at increasing core counts; maximum 8% slowdown at 8K cores]
* Cray XT4 supercomputer at NERSC: quad-core 2.3 GHz CPU / 8 GB RAM per node / Portals interconnect

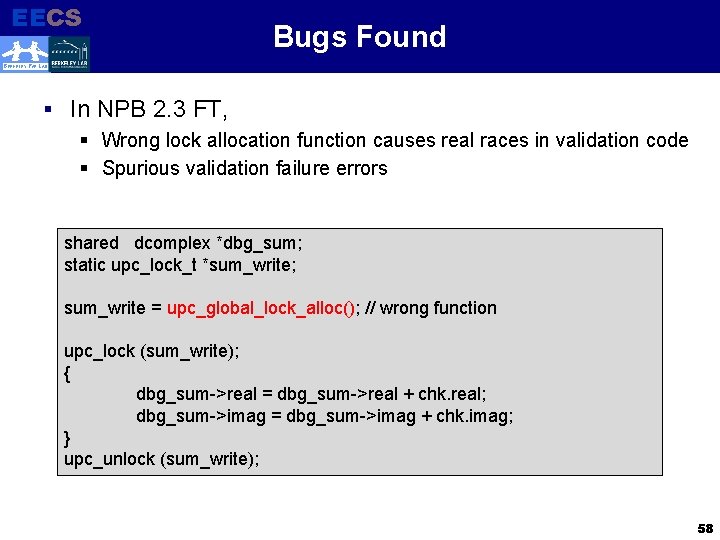

Bugs Found
§ In NPB 2.3 FT,
  § A wrong lock allocation function causes real races in validation code
  § Spurious validation failure errors

```c
shared dcomplex *dbg_sum;
static upc_lock_t *sum_write;
sum_write = upc_global_lock_alloc(); // wrong function: it is not collective, so each
                                     // thread calling it gets a different lock and the
                                     // updates below are not mutually exclusive;
                                     // upc_all_lock_alloc() gives all threads one lock
upc_lock(sum_write);
{
  dbg_sum->real = dbg_sum->real + chk.real;
  dbg_sum->imag = dbg_sum->imag + chk.imag;
}
upc_unlock(sum_write);
```

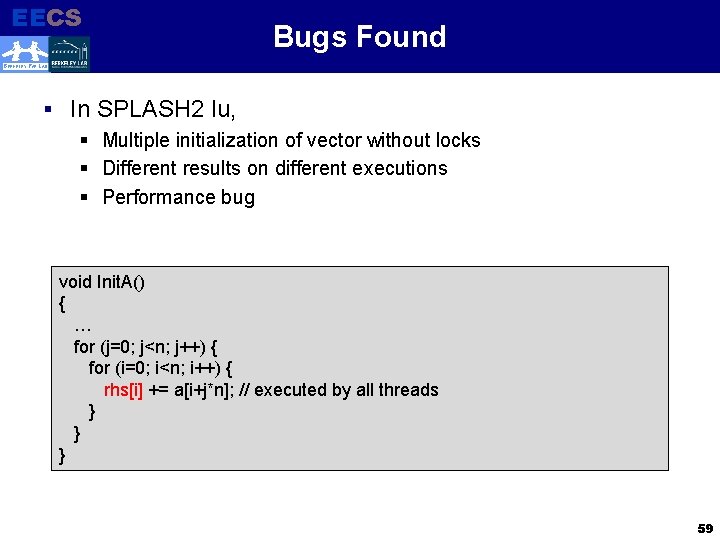

Bugs Found
§ In SPLASH2 lu,
  § Multiple initialization of a vector without locks
  § Different results on different executions
  § Performance bug

```c
void InitA() {
  …
  for (j=0; j<n; j++) {
    for (i=0; i<n; i++) {
      rhs[i] += a[i+j*n];  // executed by all threads
    }
  }
}
```

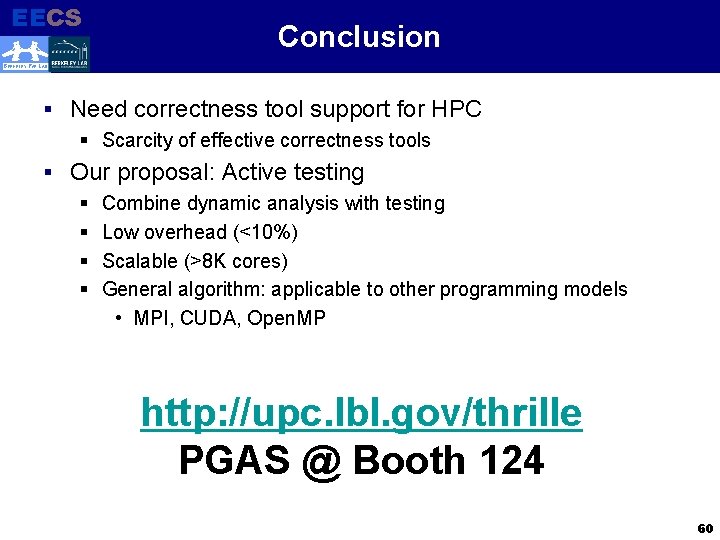

Conclusion
§ Need correctness tool support for HPC
  § Scarcity of effective correctness tools
§ Our proposal: Active testing
  § Combines dynamic analysis with testing
  § Low overhead (<10%)
  § Scalable (>8K cores)
§ General algorithm: applicable to other programming models
  • MPI, CUDA, OpenMP
http://upc.lbl.gov/thrille
PGAS @ Booth 124
