DUAL STRATEGY ACTIVE LEARNING presenter Pinar Donmez 1

- Slides: 27

Presenter: Pinar Donmez¹. Joint work with Jaime G. Carbonell¹ and Paul N. Bennett². ¹Language Technologies Institute, Carnegie Mellon University; ²Microsoft Research

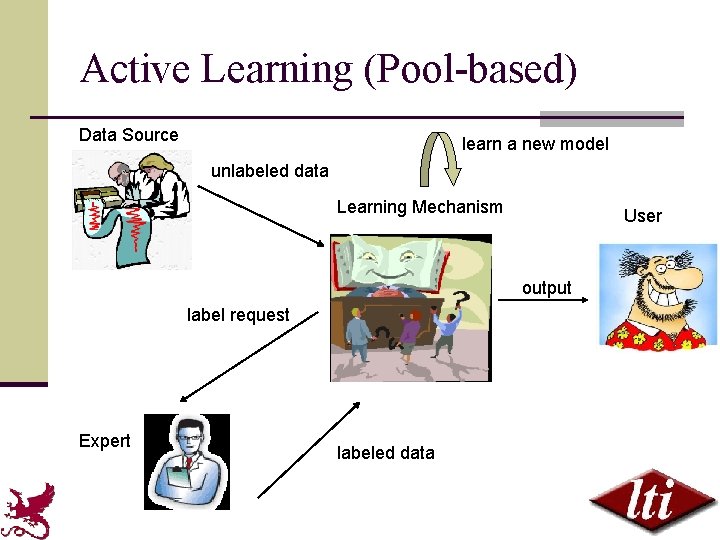

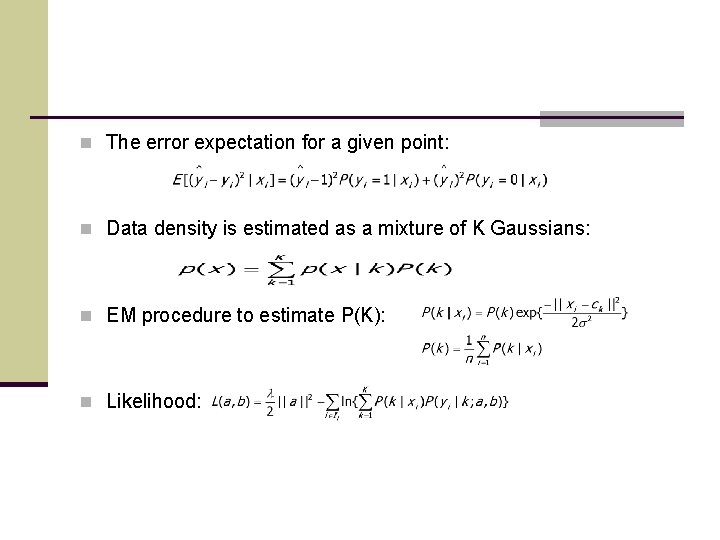

Active Learning (Pool-based). Diagram: the Learning Mechanism takes unlabeled data from the Data Source, sends a label request to the Expert, receives labeled data, learns a new model, and outputs it to the User.
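The pool-based loop in the diagram can be sketched as follows. This is a minimal illustration under stated assumptions: a toy one-dimensional pool, a threshold classifier as the "model", and an `oracle` callback standing in for the Expert. The helpers `train_threshold` and `closest_to_threshold` are hypothetical, not part of DUAL.

```python
from typing import Callable, Dict, List, Tuple

Labels = Dict[int, int]  # pool index -> label returned by the expert


def pool_based_active_learning(
    pool: List[float],
    oracle: Callable[[float], int],
    train: Callable[[List[float], Labels], float],
    select: Callable[[float, List[float], Labels], int],
    budget: int,
) -> Tuple[float, Labels]:
    labeled: Labels = {}
    model = train(pool, labeled)
    for _ in range(min(budget, len(pool))):
        idx = select(model, pool, labeled)  # learning mechanism issues a label request
        labeled[idx] = oracle(pool[idx])    # expert answers with a label
        model = train(pool, labeled)        # learn a new model from all labeled data
    return model, labeled


def train_threshold(pool: List[float], labeled: Labels) -> float:
    # Hypothetical toy 1-D learner: threshold halfway between the classes seen so far.
    neg = [pool[i] for i, y in labeled.items() if y == 0]
    pos = [pool[i] for i, y in labeled.items() if y == 1]
    if not neg or not pos:
        return 0.0  # arbitrary default until both classes have been labeled
    return (max(neg) + min(pos)) / 2


def closest_to_threshold(threshold: float, pool: List[float], labeled: Labels) -> int:
    # Hypothetical query rule: the unlabeled point nearest the current boundary.
    unlabeled = [i for i in range(len(pool)) if i not in labeled]
    return min(unlabeled, key=lambda i: abs(pool[i] - threshold))


model, labeled = pool_based_active_learning(
    [-3.0, -1.0, 0.5, 2.0, 4.0], lambda x: int(x > 1.0),
    train_threshold, closest_to_threshold, budget=3,
)
# model == 1.25, labeled == {2: 0, 1: 0, 3: 1}
```

Keeping `train` and `select` as parameters mirrors the diagram: the query strategy is the only piece that uncertainty sampling, density-based sampling, DWUS and DUAL change.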

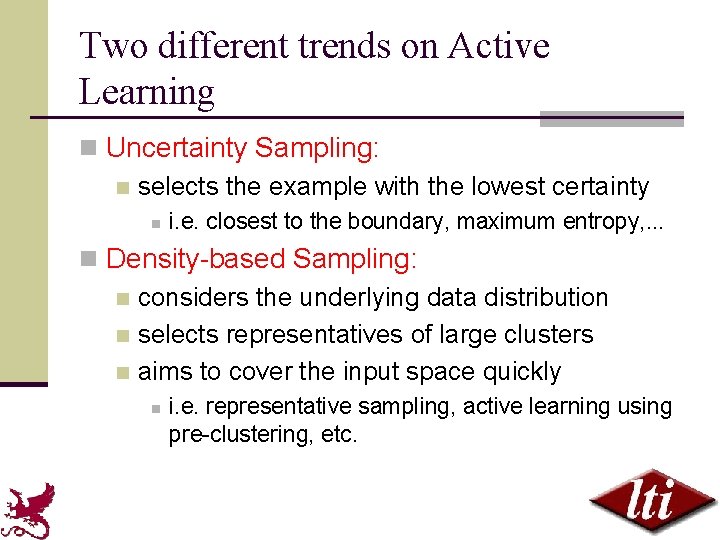

Two different trends in Active Learning

- Uncertainty Sampling:
  - selects the example with the lowest certainty
  - e.g. closest to the boundary, maximum entropy, ...
- Density-based Sampling:
  - considers the underlying data distribution
  - selects representatives of large clusters
  - aims to cover the input space quickly
  - e.g. representative sampling, active learning using pre-clustering, etc.
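The two trends can be contrasted in a few lines. This sketch uses maximum entropy for uncertainty sampling and, as an assumption, a Gaussian-kernel sum as the density proxy; `bandwidth` is a hypothetical parameter, and neither function reproduces a specific published method from the list above.

```python
import math
from typing import List, Sequence


def entropy(probs: Sequence[float]) -> float:
    # Shannon entropy of a predicted class distribution (natural log).
    return -sum(p * math.log(p) for p in probs if p > 0)


def uncertainty_sampling(posteriors: List[Sequence[float]], unlabeled: List[int]) -> int:
    # Maximum-entropy query: the unlabeled example the model is least certain about.
    return max(unlabeled, key=lambda i: entropy(posteriors[i]))


def density_sampling(points: List[Sequence[float]], unlabeled: List[int],
                     bandwidth: float = 1.0) -> int:
    # Density proxy: summed Gaussian-kernel similarity to every point in the pool,
    # so examples inside large, tight clusters score highest.
    def density(i: int) -> float:
        return sum(
            math.exp(-math.dist(points[i], q) ** 2 / (2 * bandwidth ** 2)) for q in points
        )

    return max(unlabeled, key=density)
```

Uncertainty sampling ignores where a point lies in the input space, while density sampling ignores the model entirely; that difference is what makes them strong at different stages of learning.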

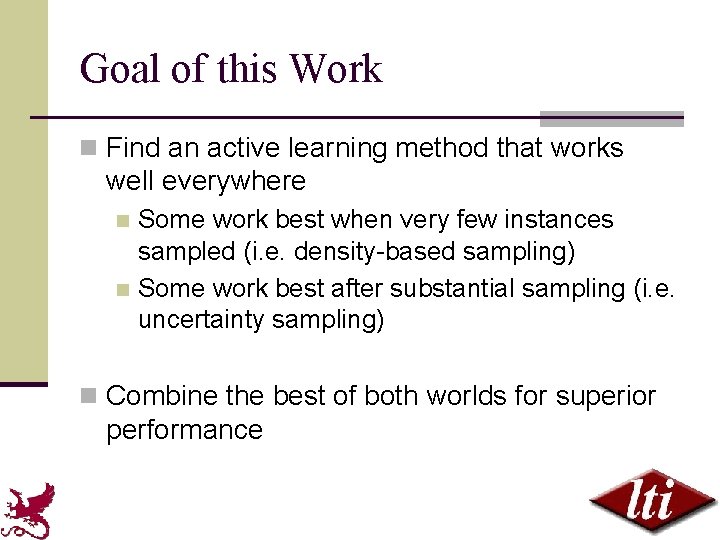

Goal of this Work

- Find an active learning method that works well everywhere
  - Some methods work best when very few instances have been sampled (e.g. density-based sampling)
  - Some work best after substantial sampling (e.g. uncertainty sampling)
- Combine the best of both worlds for superior performance

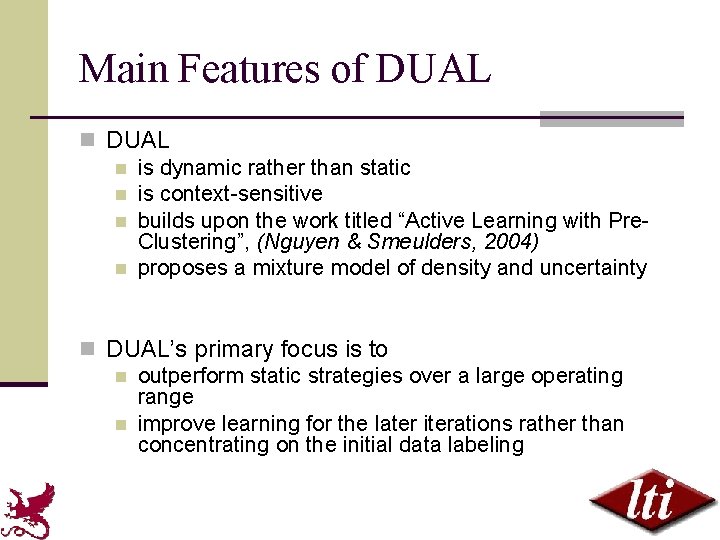

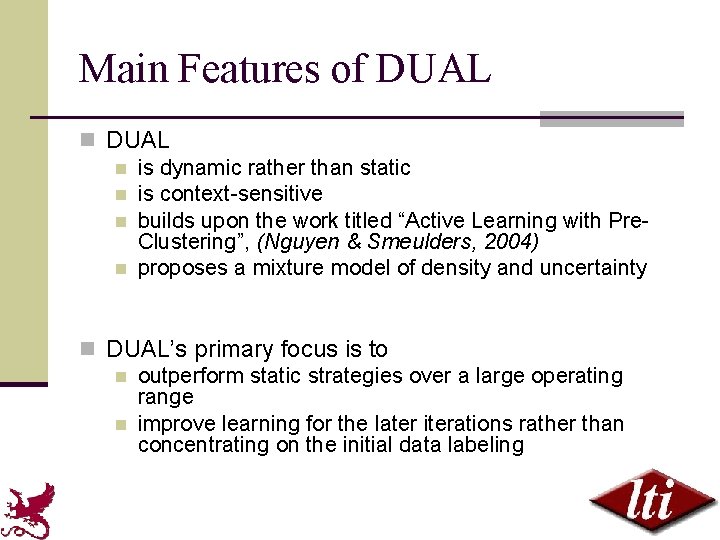

Main Features of DUAL

- is dynamic rather than static
- is context-sensitive
- builds upon the work titled “Active Learning with Pre-Clustering” (Nguyen & Smeulders, 2004)
- proposes a mixture model of density and uncertainty
- DUAL’s primary focus is to
  - outperform static strategies over a large operating range
  - improve learning in the later iterations rather than concentrating on the initial data labeling

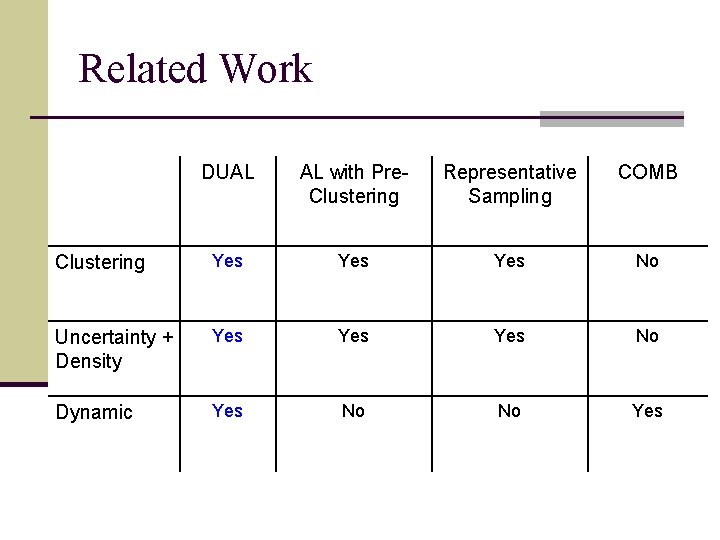

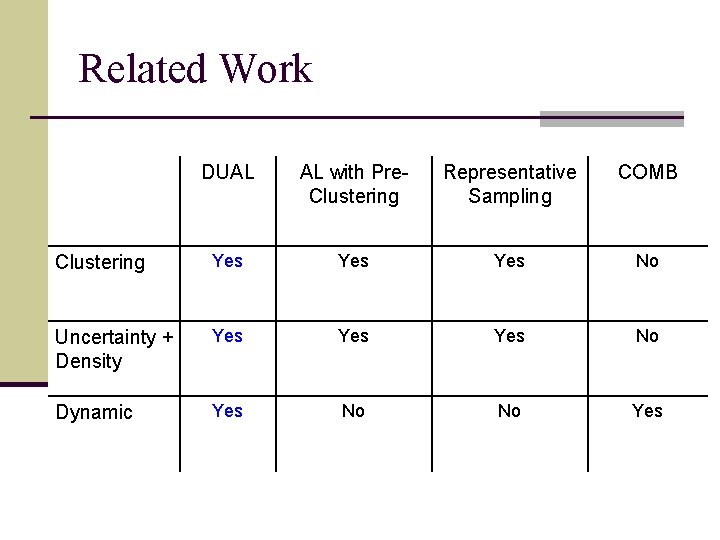

Related Work

| | DUAL | AL with Pre-Clustering | Representative Sampling | COMB |
|---|---|---|---|---|
| Clustering | Yes | Yes | Yes | No |
| Uncertainty + Density | Yes | Yes | Yes | No |
| Dynamic | Yes | No | No | Yes |

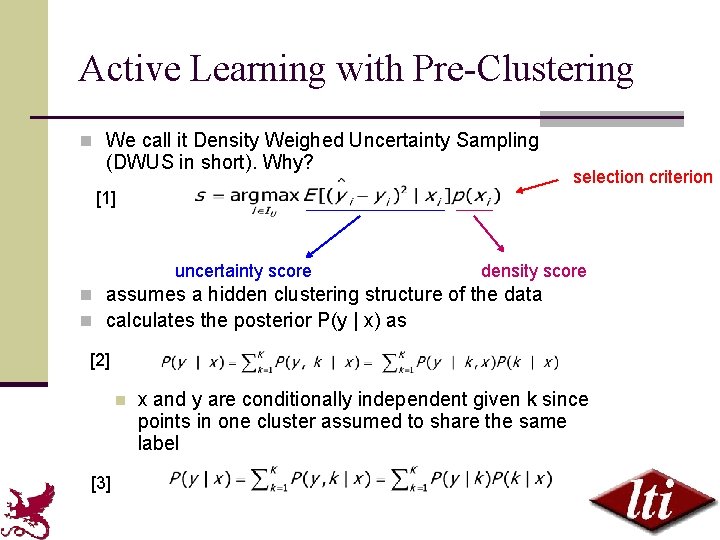

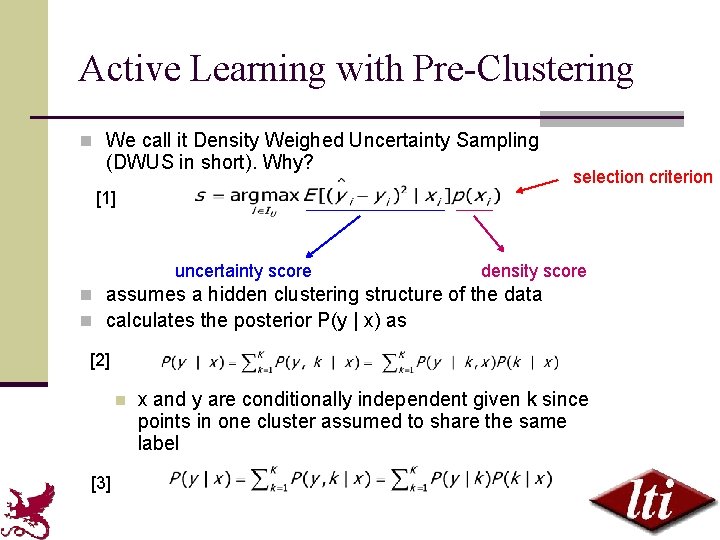

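Active Learning with Pre-Clustering

- We call it Density Weighted Uncertainty Sampling (DWUS for short). Why? Its selection criterion multiplies an uncertainty score by a density score over the unlabeled pool $\mathcal{U}$:

$$\hat{x}_s = \arg\max_{x_i \in \mathcal{U}} \underbrace{E\left[(\hat{y}_i - y_i)^2 \mid x_i\right]}_{\text{uncertainty score}} \, \underbrace{p(x_i)}_{\text{density score}} \tag{1}$$

- assumes a hidden clustering structure of the data
- calculates the posterior P(y | x) as

$$P(y \mid x) = \sum_{k=1}^{K} P(y, k \mid x) = \sum_{k=1}^{K} P(y \mid k, x)\, P(k \mid x) \tag{2}$$

$$P(y \mid x) = \sum_{k=1}^{K} P(y \mid k)\, P(k \mid x) \tag{3}$$

- Eq. 3 follows because x and y are assumed conditionally independent given k: points in one cluster are assumed to share the same label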
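The DWUS criterion can be sketched as follows, assuming the cluster memberships P(k|x), the per-cluster label probabilities P(y=1|k) and the densities p(x) have already been computed. It also assumes binary labels y ∈ {0, 1} and takes ŷ to be the more probable label, so the expected squared error reduces to the probability of the other class. The function names are hypothetical.

```python
from typing import List, Sequence


def posterior_positive(p_k_given_x: Sequence[float], p_pos_given_k: Sequence[float]) -> float:
    # P(y=1|x) = sum_k P(y=1|k) P(k|x): x and y are independent given the cluster k.
    return sum(pk * py for pk, py in zip(p_k_given_x, p_pos_given_k))


def expected_error(p_pos: float) -> float:
    # E[(y_hat - y)^2 | x] with y in {0, 1} and y_hat the more probable label (assumption).
    y_hat = 1 if p_pos >= 0.5 else 0
    return (y_hat - 1) ** 2 * p_pos + y_hat ** 2 * (1 - p_pos)


def dwus_select(unlabeled: List[int], p_k_given_x: List[Sequence[float]],
                p_pos_given_k: Sequence[float], density: Sequence[float]) -> int:
    # Eq. 1: uncertainty score times density score, maximized over the unlabeled pool.
    return max(
        unlabeled,
        key=lambda i: expected_error(posterior_positive(p_k_given_x[i], p_pos_given_k))
        * density[i],
    )
```

With P(y=1|k) = [0.9, 0.2], a point split evenly between the two clusters has posterior 0.55 and expected error 0.45; if it also sits in a reasonably dense region, it beats a confident point in a denser region and an uncertain point in a sparse one.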

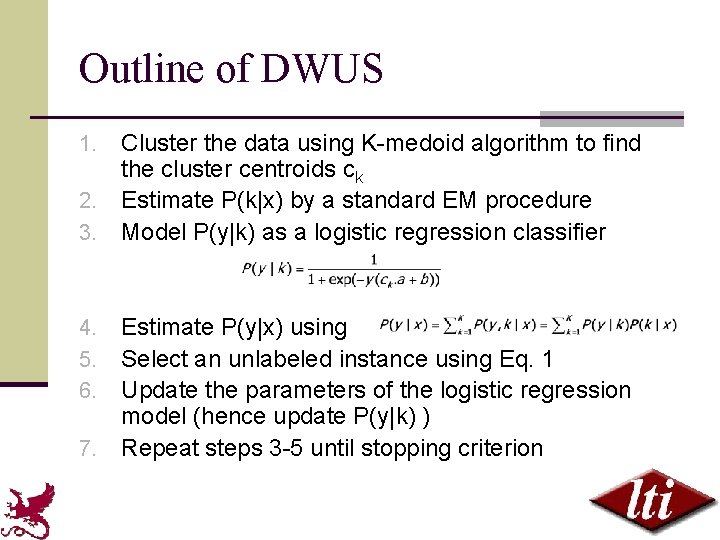

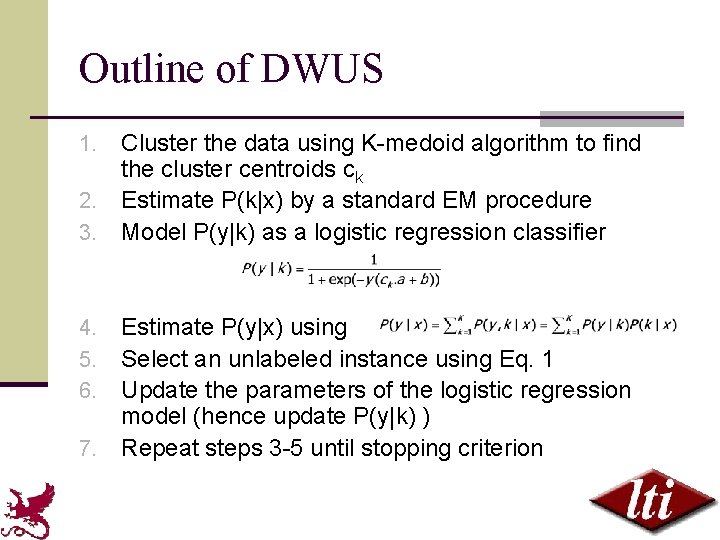

Outline of DWUS

1. Cluster the data using the K-medoid algorithm to find the cluster centroids $c_k$.
2. Estimate P(k|x) by a standard EM procedure.
3. Model P(y|k) as a logistic regression classifier.
4. Estimate P(y|x) using $P(y \mid x) = \sum_{k} P(y \mid k)\, P(k \mid x)$.
5. Select an unlabeled instance using Eq. 1.
6. Update the parameters of the logistic regression model (hence update P(y|k)).
7. Repeat steps 3-5 until the stopping criterion is met.
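Step 1 of the outline can be sketched with a plain alternating (Voronoi-iteration) K-medoid procedure. This assumes Euclidean distance and caller-chosen initial medoids; the slides do not say which K-medoid variant DWUS uses, so treat this as an illustration only.

```python
import math
from typing import List, Sequence


def k_medoids(points: List[Sequence[float]], init: List[int], max_iter: int = 100) -> List[int]:
    """Alternating K-medoid clustering; returns the indices of the medoid points c_k."""
    medoids = list(init)
    for _ in range(max_iter):
        # Assignment step: every point joins the cluster of its nearest medoid.
        clusters: List[List[int]] = [[] for _ in medoids]
        for i, p in enumerate(points):
            nearest = min(range(len(medoids)), key=lambda j: math.dist(p, points[medoids[j]]))
            clusters[nearest].append(i)
        # Update step: the new medoid is the member with the smallest total distance
        # to the other members of its cluster (keep the old medoid if a cluster is empty).
        new_medoids = [
            min(members, key=lambda c: sum(math.dist(points[c], points[m]) for m in members))
            if members
            else medoids[k]
            for k, members in enumerate(clusters)
        ]
        if new_medoids == medoids:  # converged: the assignments would not change again
            break
        medoids = new_medoids
    return medoids
```

Unlike k-means, each centroid is an actual data point, which keeps $c_k$ inside the data even when clusters are irregular.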

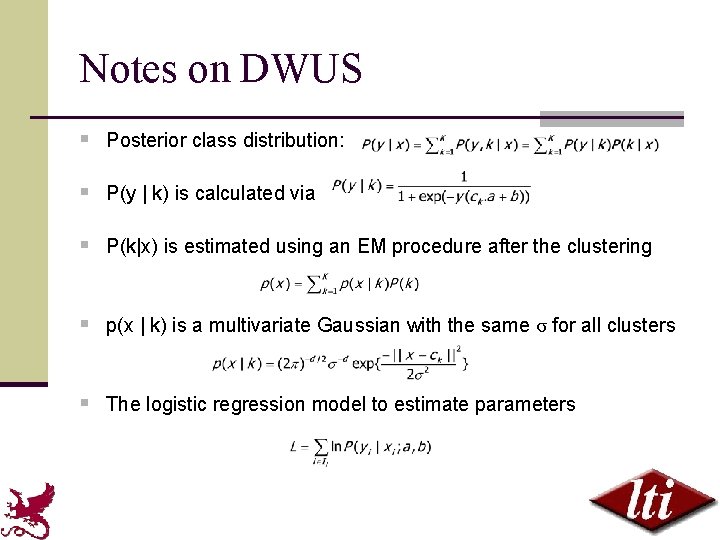

Notes on DWUS

- Posterior class distribution: $P(y \mid x) = \sum_{k} P(y \mid k)\, P(k \mid x)$
- P(y | k) is calculated via the logistic regression model
- P(k|x) is estimated using an EM procedure after the clustering
- p(x | k) is a multivariate Gaussian with the same σ for all clusters
- The logistic regression model is used to estimate the parameters
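The notes above can be tied together in a short sketch. It computes P(k|x) by Bayes' rule from isotropic Gaussians that share one σ, and, as an assumption not stated on the slide, models P(y=1|k) as a logistic function of the cluster centroid $c_k$ with hypothetical weights `a` and bias `b`. Fitting `a` and `b` is left out.

```python
import math
from typing import List, Sequence


def cluster_posteriors(x: Sequence[float], centroids: List[Sequence[float]],
                       priors: Sequence[float], sigma: float) -> List[float]:
    # P(k|x) by Bayes' rule with Gaussians sharing one sigma: the normalizing
    # constant is identical for every cluster and cancels. Priors must be > 0.
    logits = [math.log(pk) - math.dist(x, c) ** 2 / (2 * sigma ** 2)
              for c, pk in zip(centroids, priors)]
    top = max(logits)  # subtract the max before exponentiating to avoid underflow
    weights = [math.exp(v - top) for v in logits]
    total = sum(weights)
    return [w / total for w in weights]


def p_pos_given_cluster(centroid: Sequence[float], a: Sequence[float], b: float) -> float:
    # Assumed form: logistic regression evaluated at the cluster centroid c_k.
    return 1.0 / (1.0 + math.exp(-(sum(ai * ci for ai, ci in zip(a, centroid)) + b)))


def posterior_from_clusters(x: Sequence[float], centroids: List[Sequence[float]],
                            priors: Sequence[float], sigma: float,
                            a: Sequence[float], b: float) -> float:
    # P(y=1|x) = sum_k P(y=1|k) P(k|x)
    return sum(
        pkx * p_pos_given_cluster(c, a, b)
        for pkx, c in zip(cluster_posteriors(x, centroids, priors, sigma), centroids)
    )
```

Because every cluster shares the same σ, only the squared distances and the priors decide P(k|x).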

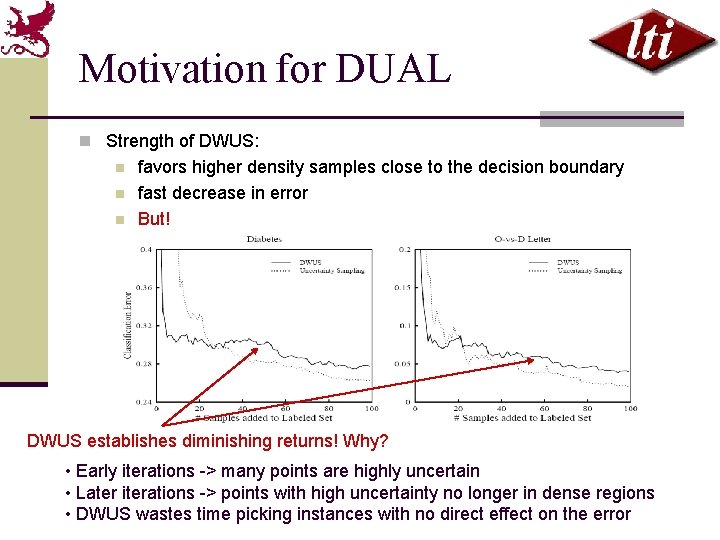

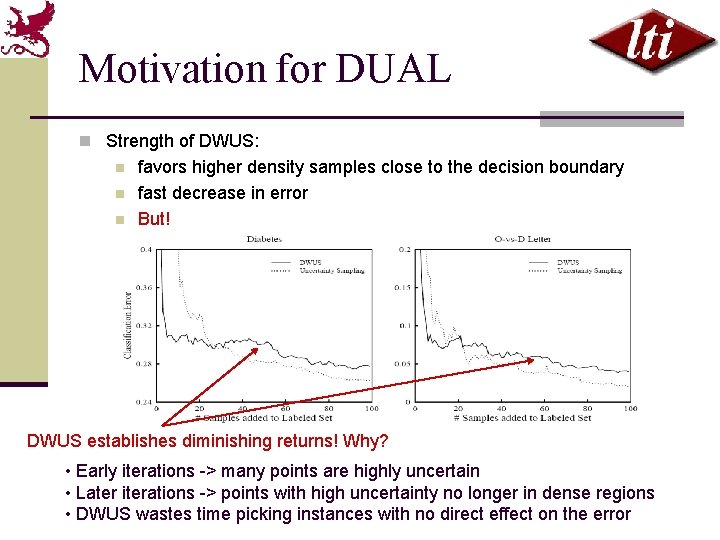

Motivation for DUAL

- Strength of DWUS:
  - favors higher-density samples close to the decision boundary
  - fast decrease in error
- But DWUS exhibits diminishing returns! Why?
  - Early iterations: many points are highly uncertain
  - Later iterations: points with high uncertainty are no longer in dense regions
  - DWUS wastes time picking instances with no direct effect on the error

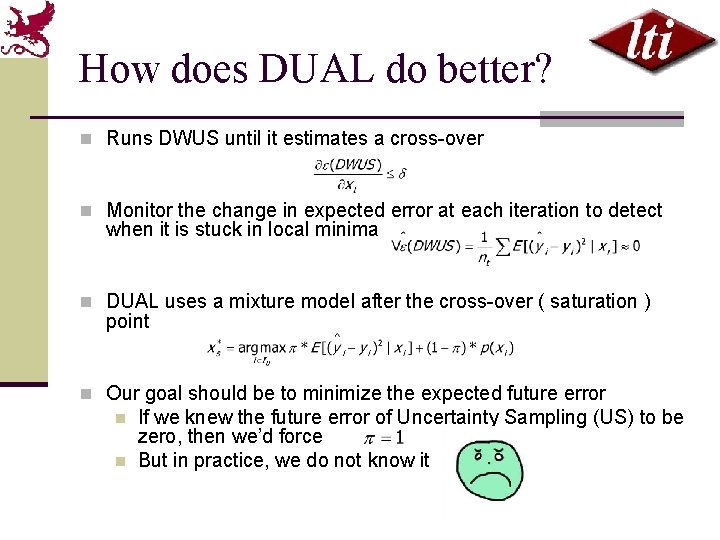

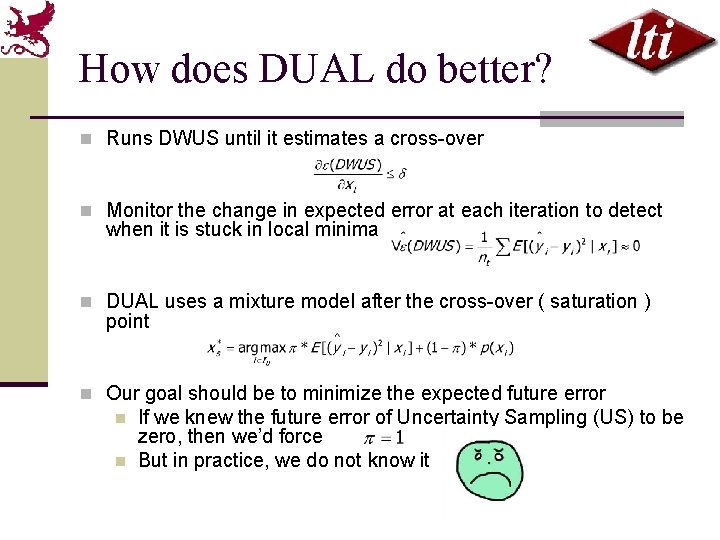

How does DUAL do better?

- Runs DWUS until it estimates a cross-over point
- Monitors the change in expected error at each iteration to detect when DWUS is stuck in a local minimum
- Uses a mixture model after the cross-over (saturation) point
- Our goal should be to minimize the expected future error
  - If we knew the future error of Uncertainty Sampling (US) to be zero, we would put all the weight on the uncertainty score
  - But in practice, we do not know it
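The cross-over monitoring can be sketched as a plateau detector over the sequence of expected-error estimates. The threshold `delta` and the `patience` window are hypothetical parameters; the slides only say that DUAL watches the per-iteration change in expected error.

```python
from typing import Sequence


def detect_crossover(expected_errors: Sequence[float], delta: float = 1e-3,
                     patience: int = 3) -> int:
    """Return the iteration at which the expected error has changed by less than
    `delta` for `patience` consecutive iterations, or -1 if that has not happened."""
    flat = 0  # length of the current run of near-zero changes
    for t in range(1, len(expected_errors)):
        if abs(expected_errors[t] - expected_errors[t - 1]) < delta:
            flat += 1
            if flat == patience:
                return t
        else:
            flat = 0
    return -1
```

Requiring several consecutive small changes, rather than one, makes the switch less sensitive to a single noisy error estimate.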

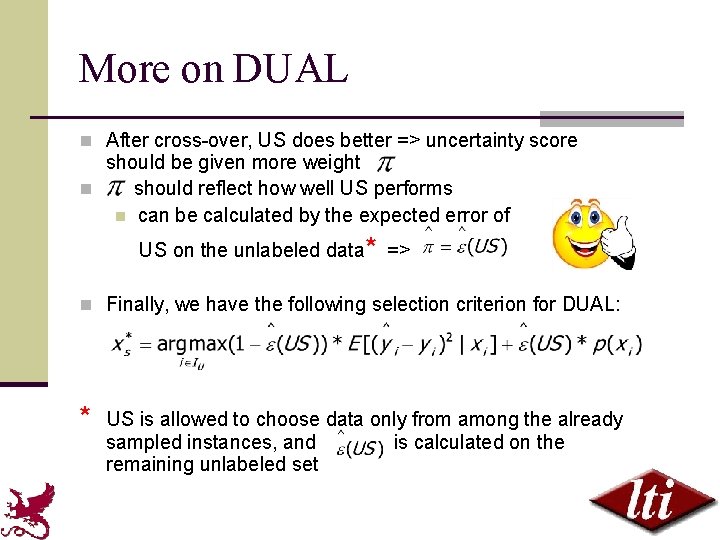

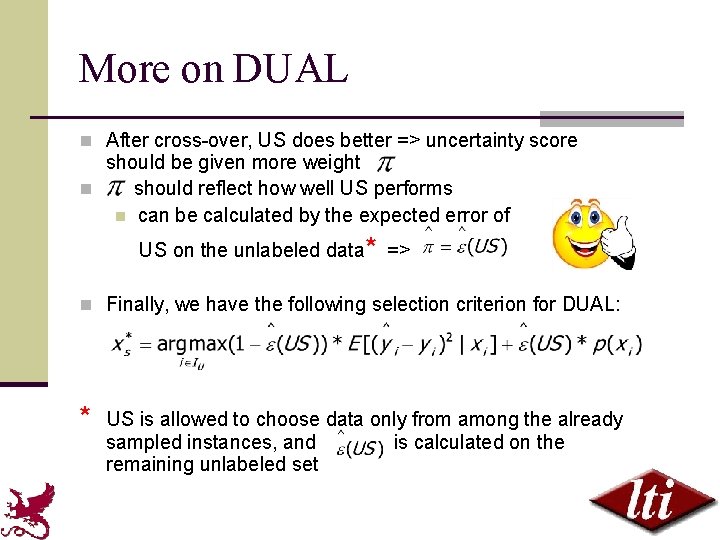

More on DUAL

- After the cross-over, US does better, so the uncertainty score should be given more weight
  - the weight should reflect how well US performs
  - it can be calculated from the expected error of US on the unlabeled data*
- Finally, the selection criterion for DUAL is a weighted mixture of the uncertainty score and the density score, with the weight derived from the estimated error of US

\* US is allowed to choose data only from among the already sampled instances, and its error is calculated on the remaining unlabeled set
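A sketch of the two-phase DUAL selection rule follows. It assumes the weight on the uncertainty score is 1 minus the estimated error of US, which matches the limiting case on the previous slide (zero US error puts all the weight on uncertainty), and that both scores are scaled to [0, 1]. `dual_select` and its arguments are hypothetical.

```python
from typing import List, Sequence


def dual_select(unlabeled: List[int], uncertainty: Sequence[float], density: Sequence[float],
                crossed_over: bool, us_error: float) -> int:
    if not crossed_over:
        # Before the cross-over DUAL behaves like DWUS: uncertainty score times density score.
        def score(i: int) -> float:
            return uncertainty[i] * density[i]
    else:
        # After the cross-over: weighted mixture. The lower the estimated error of US,
        # the more weight goes to the uncertainty score (assumed weight: 1 - us_error).
        pi = 1.0 - us_error

        def score(i: int) -> float:
            return pi * uncertainty[i] + (1.0 - pi) * density[i]
    return max(unlabeled, key=score)
```

For example, with uncertainty scores [0.1, 0.45, 0.3] and densities [0.9, 0.1, 0.5], the DWUS phase picks index 2, a zero US error picks the most uncertain index 1, and a US error of 1 falls back to the densest index 0.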

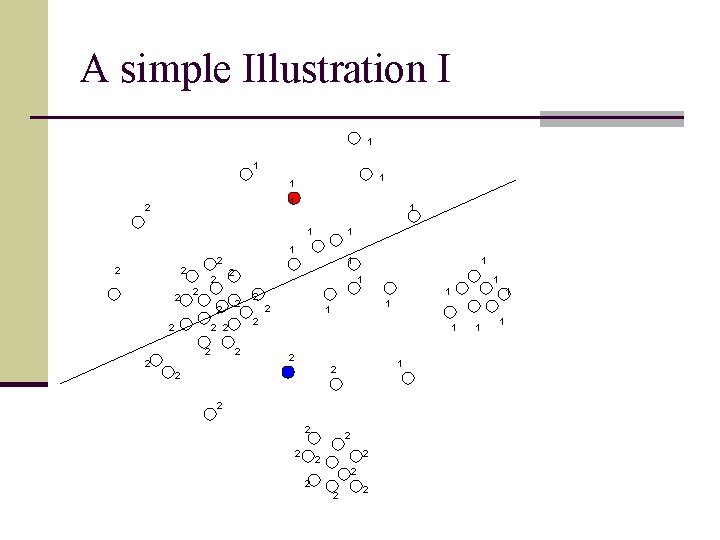

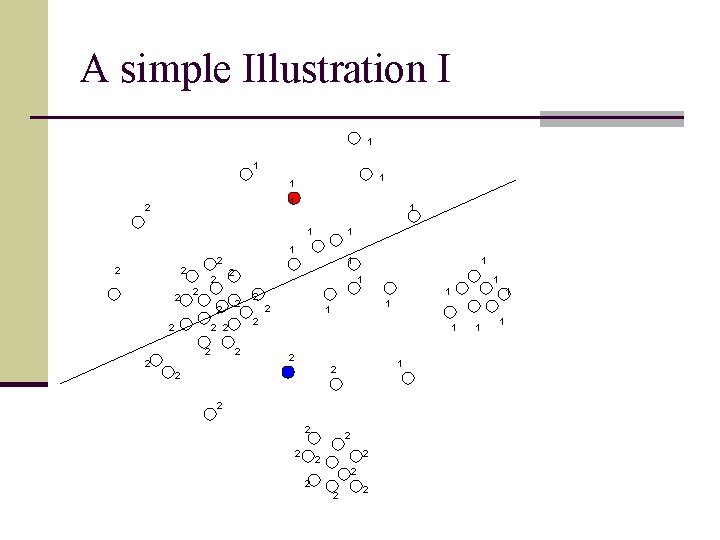

A simple Illustration I (figure: data points from class 1 and class 2)

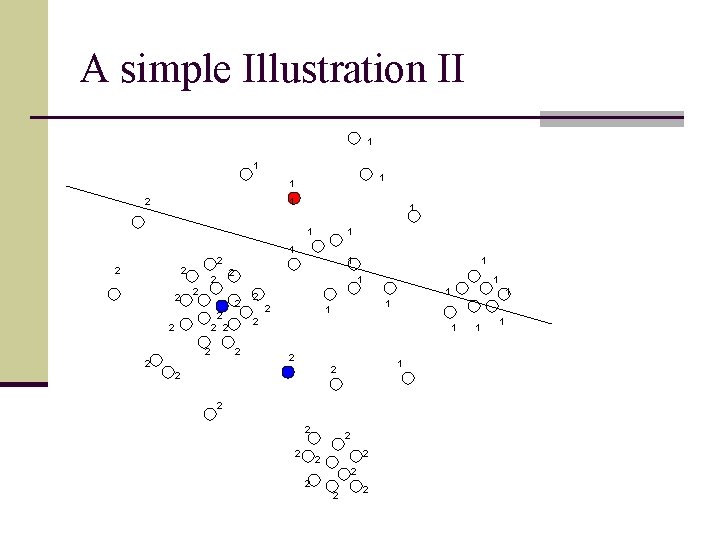

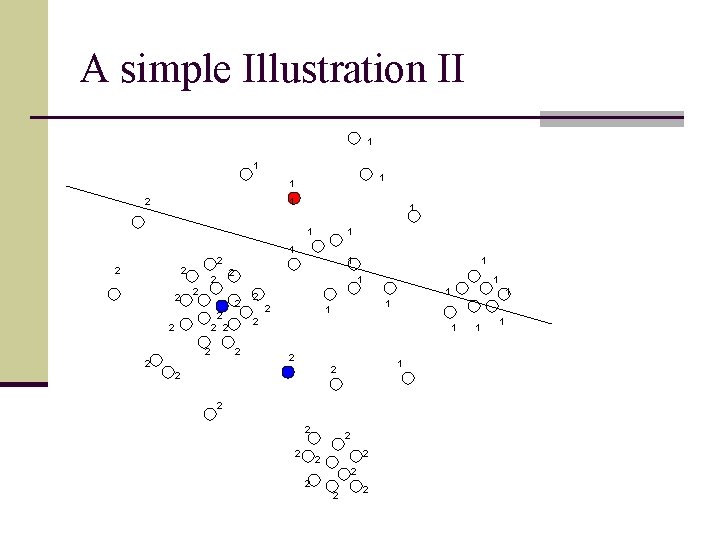

A simple Illustration II (figure: data points from class 1 and class 2)

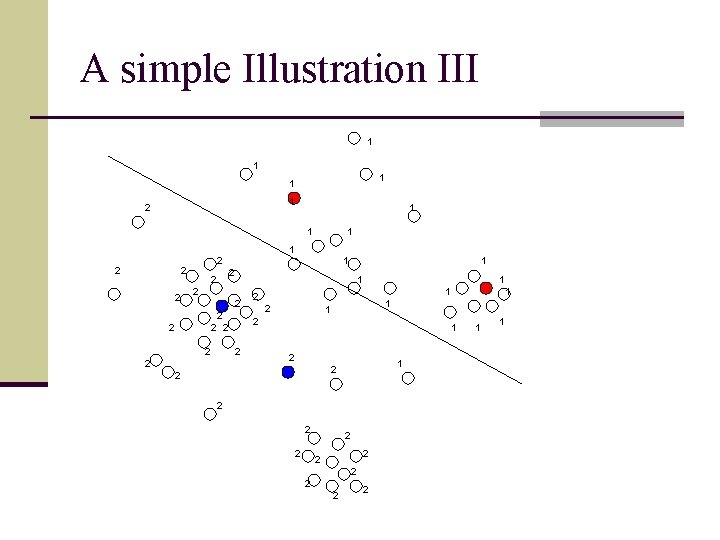

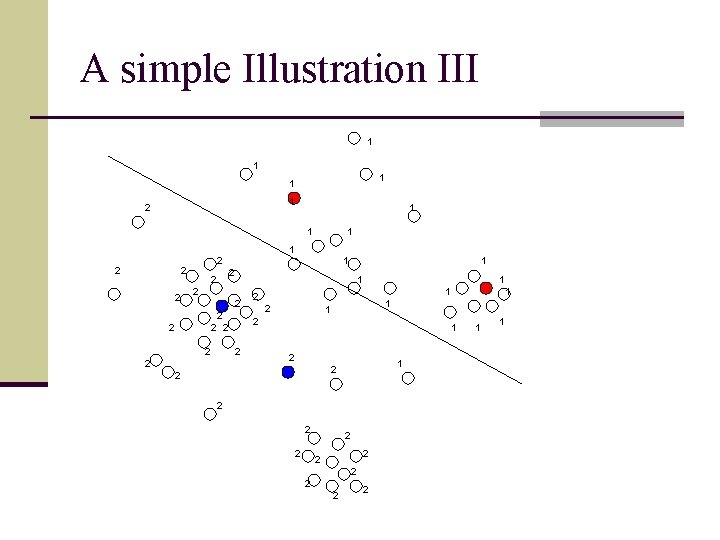

A simple Illustration III (figure: data points from class 1 and class 2)

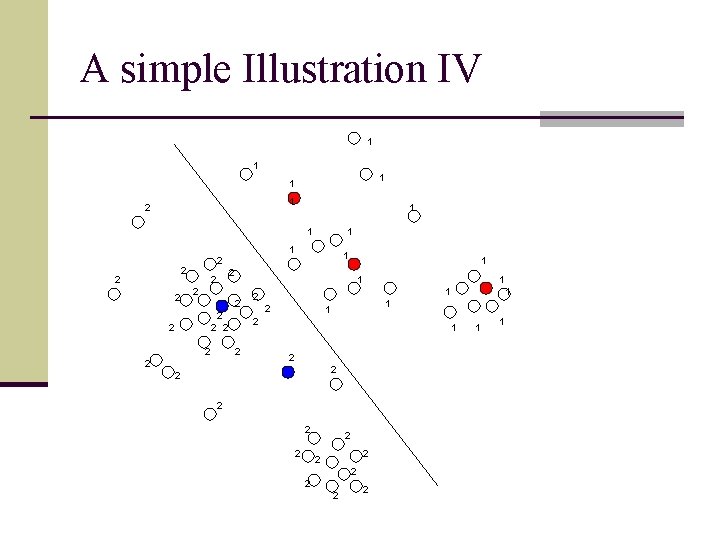

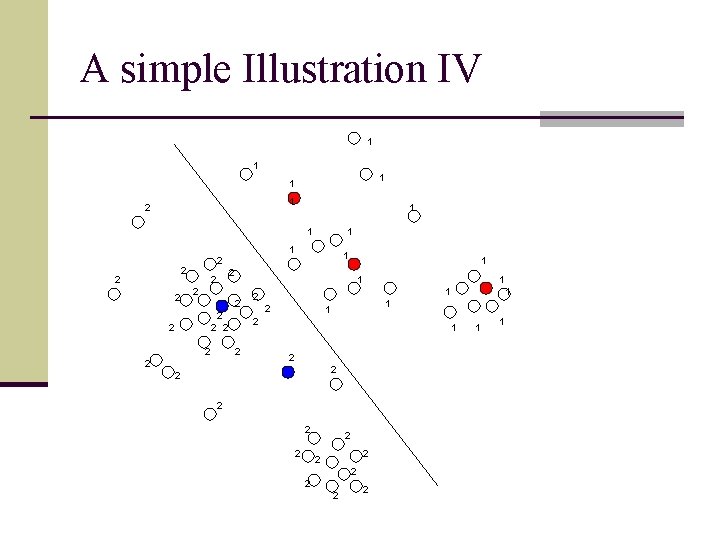

A simple Illustration IV (figure: data points from class 1 and class 2)

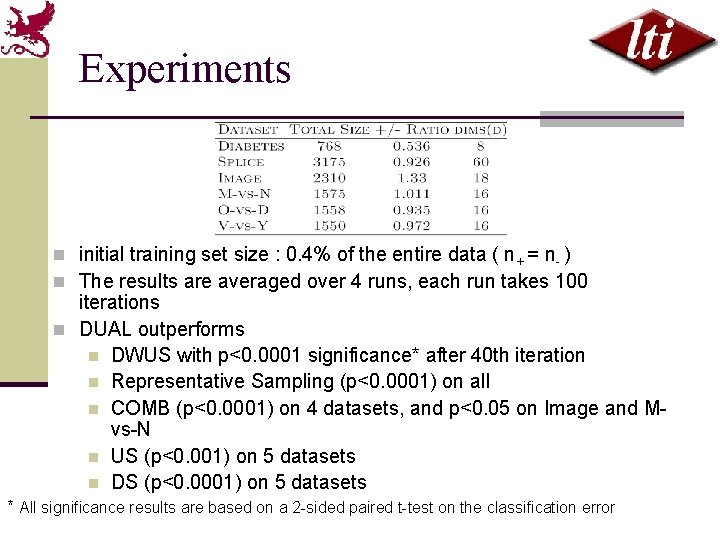

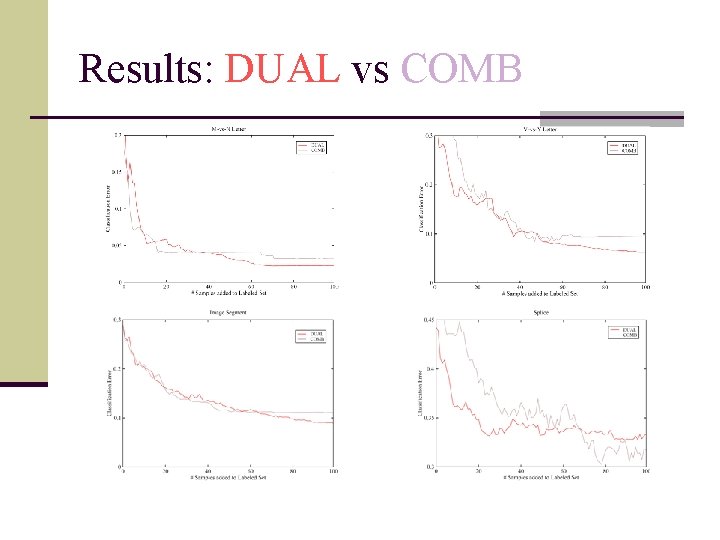

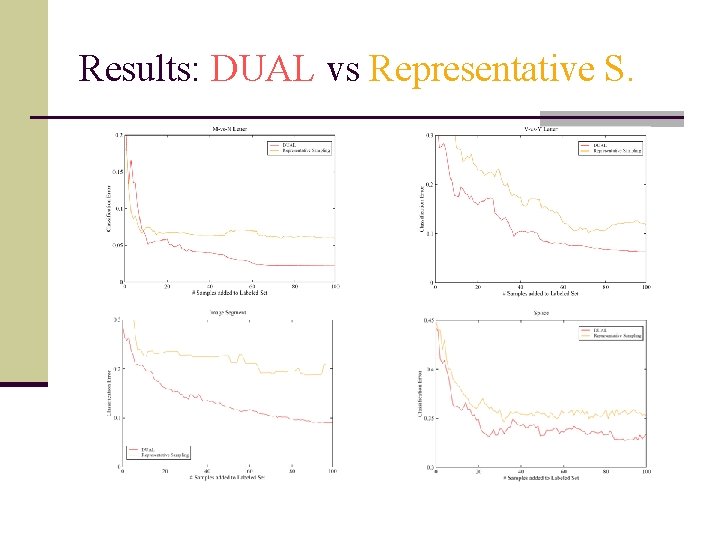

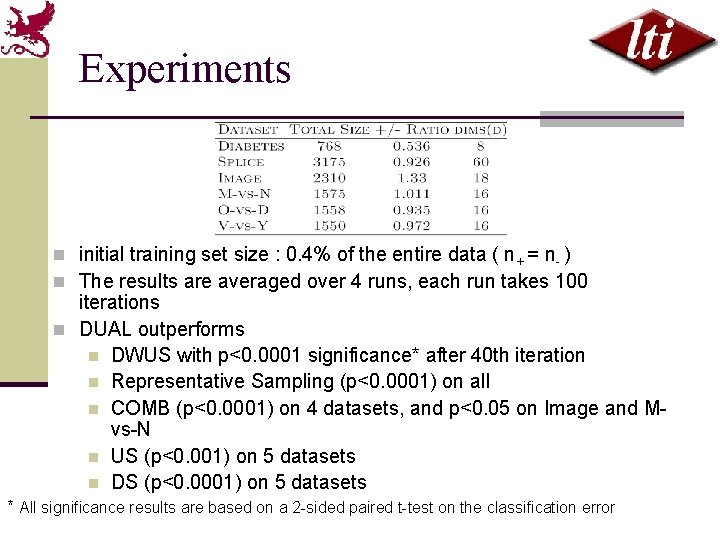

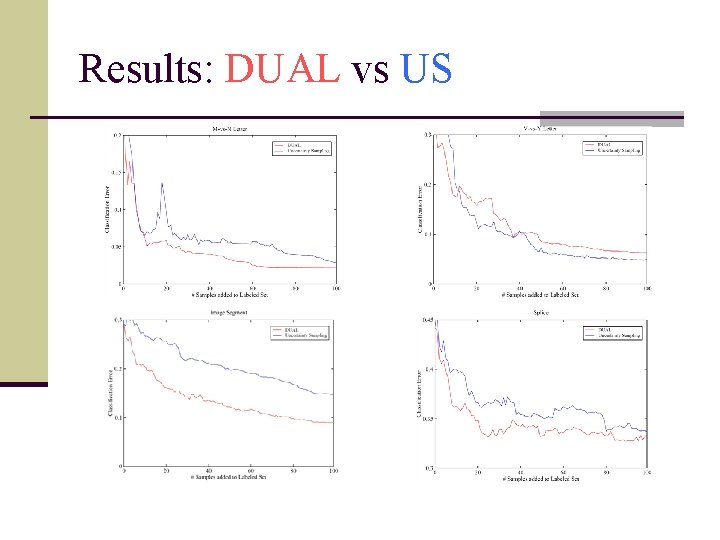

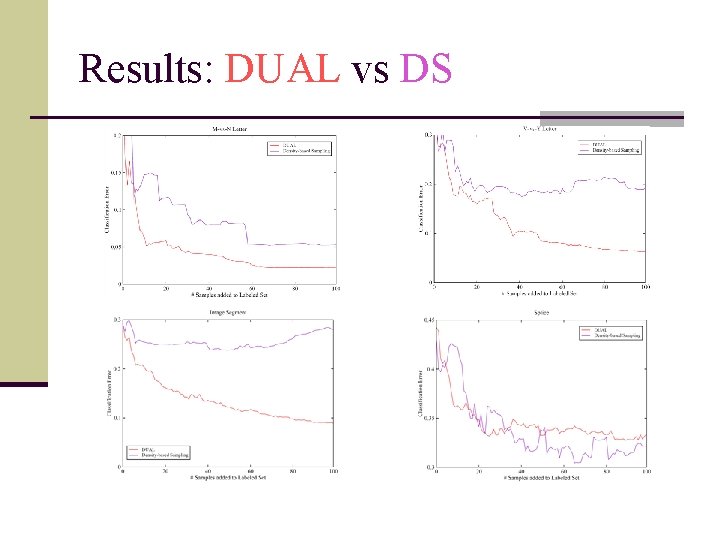

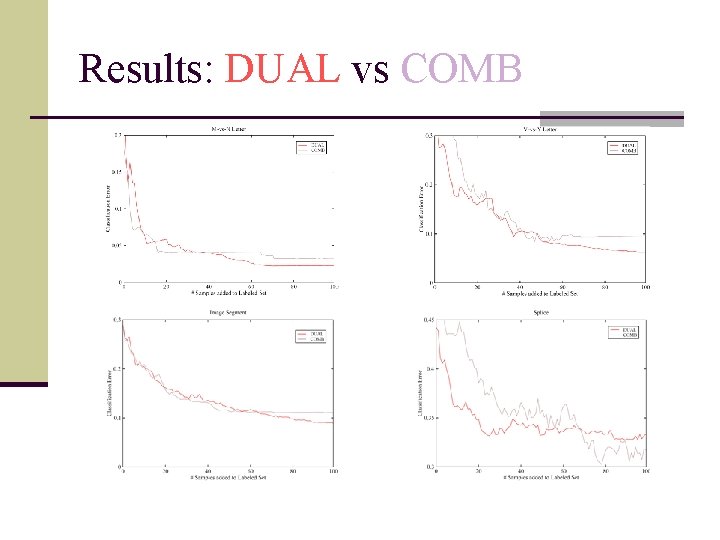

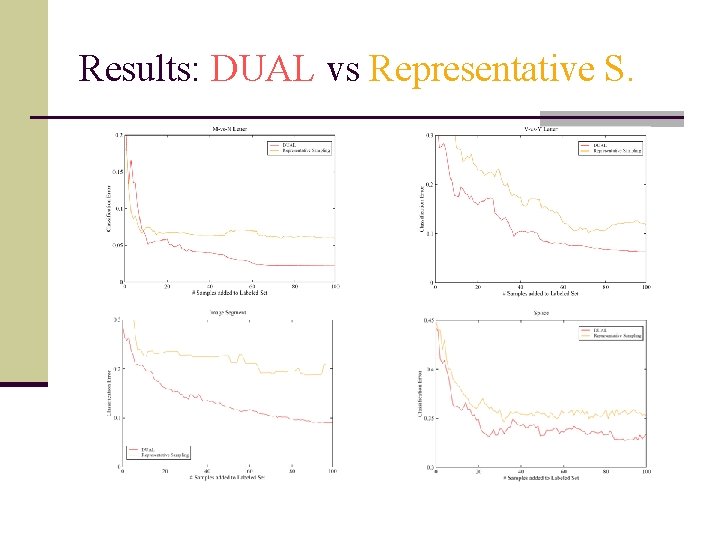

Experiments

- Initial training set size: 0.4% of the entire data, with equal numbers of positive and negative examples (n+ = n-)
- The results are averaged over 4 runs; each run takes 100 iterations
- DUAL outperforms
  - DWUS with p < 0.0001 significance* after the 40th iteration
  - Representative Sampling (p < 0.0001) on all datasets
  - COMB (p < 0.0001) on 4 datasets, and with p < 0.05 on Image and Mvs-N
  - US (p < 0.001) on 5 datasets
  - DS (p < 0.0001) on 5 datasets

\* All significance results are based on a two-sided paired t-test on the classification error
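The paired t statistic behind these significance results can be computed as below. This is a sketch: the slides do not say exactly how error measurements were paired (e.g. by matching iteration and run), so the pairing is up to the caller. Turning the statistic into a two-sided p-value needs the Student t distribution, which SciPy's `scipy.stats.ttest_rel` handles directly.

```python
import math
from statistics import mean, stdev
from typing import Sequence


def paired_t_statistic(errors_a: Sequence[float], errors_b: Sequence[float]) -> float:
    # t = mean(d) / (sd(d) / sqrt(n)) on the paired differences d = a - b,
    # with n - 1 degrees of freedom. Negative t means method A has lower error.
    diffs = [a - b for a, b in zip(errors_a, errors_b)]
    return mean(diffs) / (stdev(diffs) / math.sqrt(len(diffs)))
```

Pairing removes the variation shared by both methods at the same point in the experiment, which is why small but consistent error gaps can reach very low p-values.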

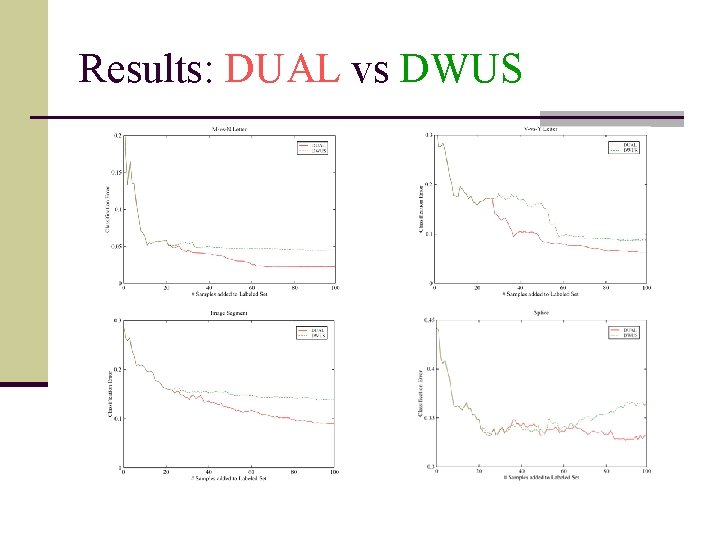

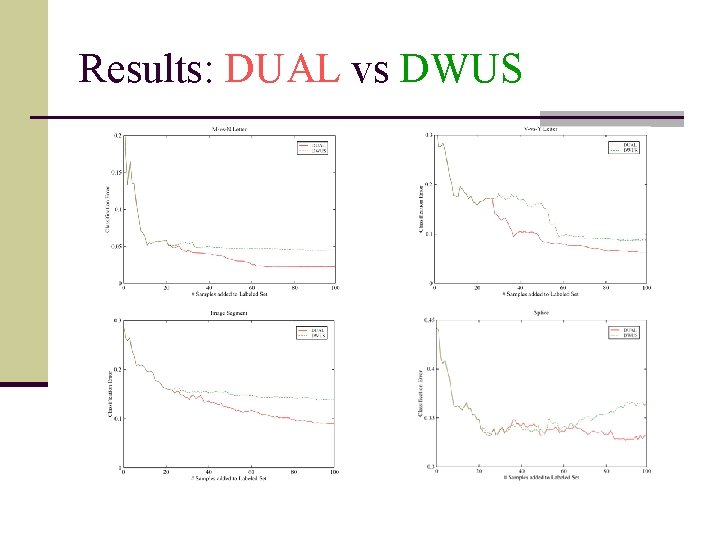

Results: DUAL vs DWUS

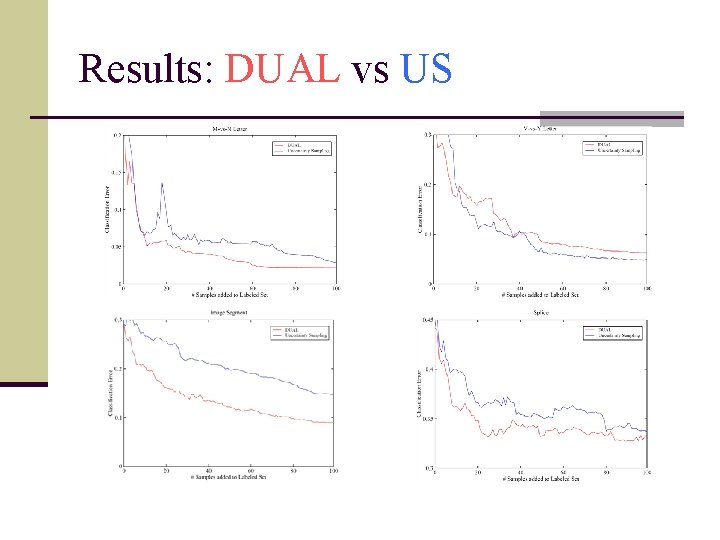

Results: DUAL vs US

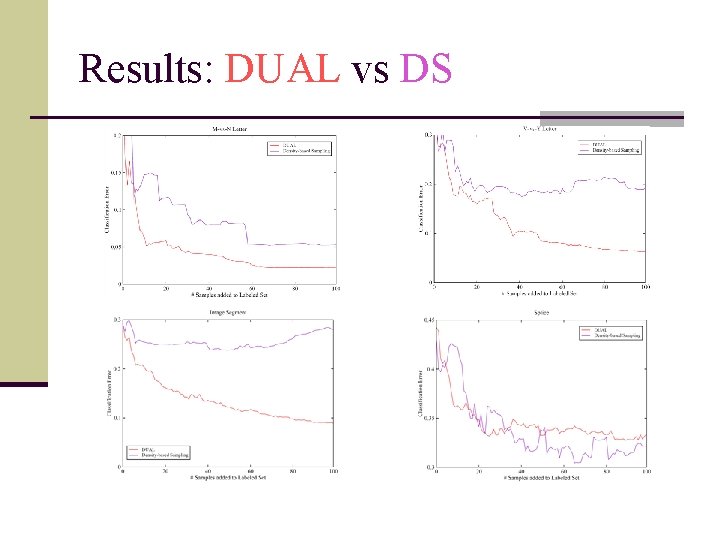

Results: DUAL vs DS

Results: DUAL vs COMB

Results: DUAL vs Representative Sampling

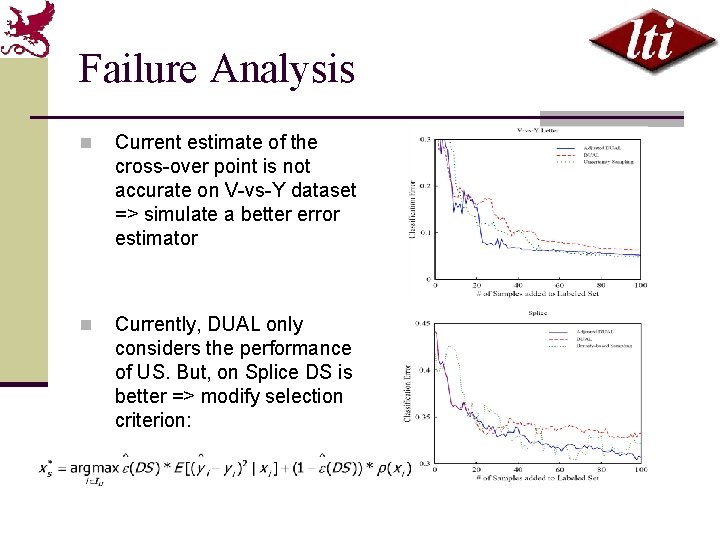

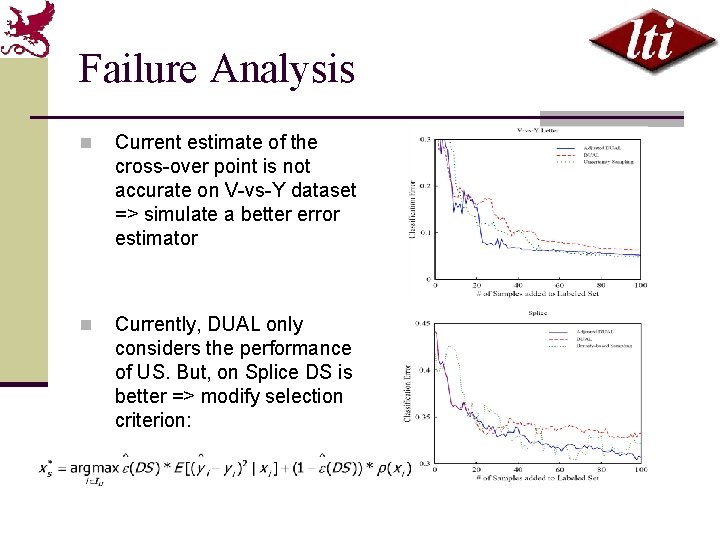

Failure Analysis

- The current estimate of the cross-over point is not accurate on the V-vs-Y dataset => simulate a better error estimator
- Currently, DUAL only considers the performance of US, but on Splice DS is better => modify the selection criterion so that it also reflects how well DS performs

Conclusion

- DUAL robustly combines density and uncertainty (and can be generalized to other active sampling methods that exhibit differential performance)
- DUAL leads to more effective performance than the individual strategies
- DUAL shows that the error of one method can be estimated using the data labeled by the other
- DUAL can be applied to multi-class problems where the error is estimated either globally or at the class or instance level

Future Work

- Generalize DUAL to estimate which method is currently dominant or use a relative success weight
- Apply DUAL to more than two strategies to maximize the diversity of an ensemble
- Investigate better techniques to estimate the future classification error

THANK YOU!

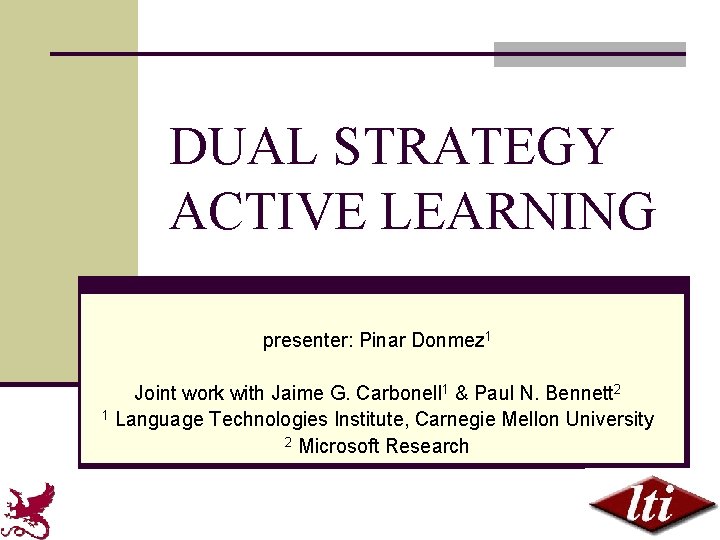

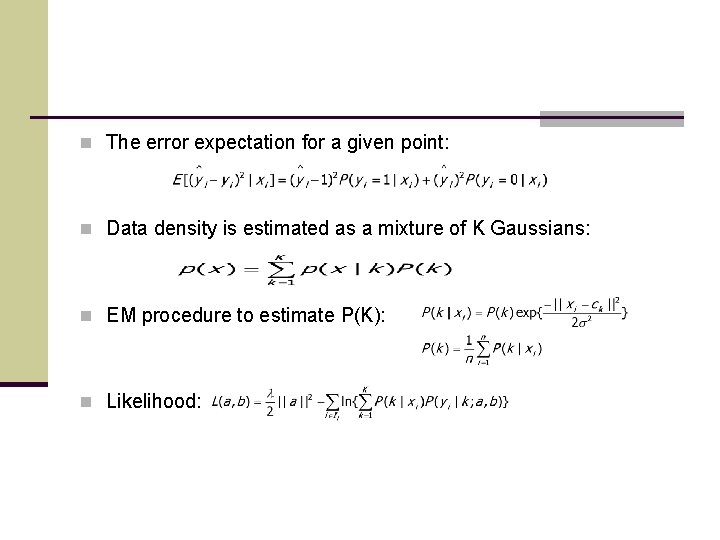

- The error expectation for a given point:
- Data density is estimated as a mixture of K Gaussians:
- EM procedure to estimate P(k):
- Likelihood:
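The density estimate can be sketched as EM over the mixture weights P(k). This rests on an assumption: that the centroids $c_k$ come from the K-medoid step and stay fixed, with one shared σ as in the DWUS notes, so EM only re-estimates P(k). The function names, the fixed iteration count `n_iter` and the uniform initialization are hypothetical choices.

```python
import math
from typing import List, Sequence


def em_cluster_priors(points: List[Sequence[float]], centroids: List[Sequence[float]],
                      sigma: float, n_iter: int = 50) -> List[float]:
    """EM for the mixture weights P(k) with fixed centroids c_k and one shared sigma."""
    k = len(centroids)
    priors = [1.0 / k] * k
    # Log Gaussian kernels up to a shared constant; they never change, so precompute them.
    log_kern = [[-math.dist(x, c) ** 2 / (2 * sigma ** 2) for c in centroids] for x in points]
    for _ in range(n_iter):
        # E-step: responsibilities P(k|x_i), computed in log space for stability.
        resp = []
        for row in log_kern:
            logits = [(math.log(p) if p > 0 else -math.inf) + g for p, g in zip(priors, row)]
            top = max(logits)
            w = [math.exp(v - top) for v in logits]
            total = sum(w)
            resp.append([v / total for v in w])
        # M-step: each P(k) becomes the average responsibility of cluster k.
        priors = [sum(r[j] for r in resp) / len(points) for j in range(k)]
    return priors


def mixture_density(x: Sequence[float], centroids: List[Sequence[float]],
                    priors: Sequence[float], sigma: float) -> float:
    # p(x) = sum_k P(k) N(x; c_k, sigma^2 I)
    norm = (2 * math.pi * sigma ** 2) ** (-len(x) / 2)
    return sum(p * norm * math.exp(-math.dist(x, c) ** 2 / (2 * sigma ** 2))
               for c, p in zip(centroids, priors))
```

Each EM iteration cannot decrease the likelihood of the data under the mixture; `mixture_density` then supplies the density score p(x) used in the selection criteria.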