daumkakao S2Graph: A large-scale graph database with HBase

Reference

1. HBase Conference 2015
   1. http://www.slideshare.net/HBaseCon/use-cases-session-5
   2. https://vimeo.com/128203919
2. Deview 2015
3. ApacheCon Big Data Europe
   1. http://sched.co/3ztM

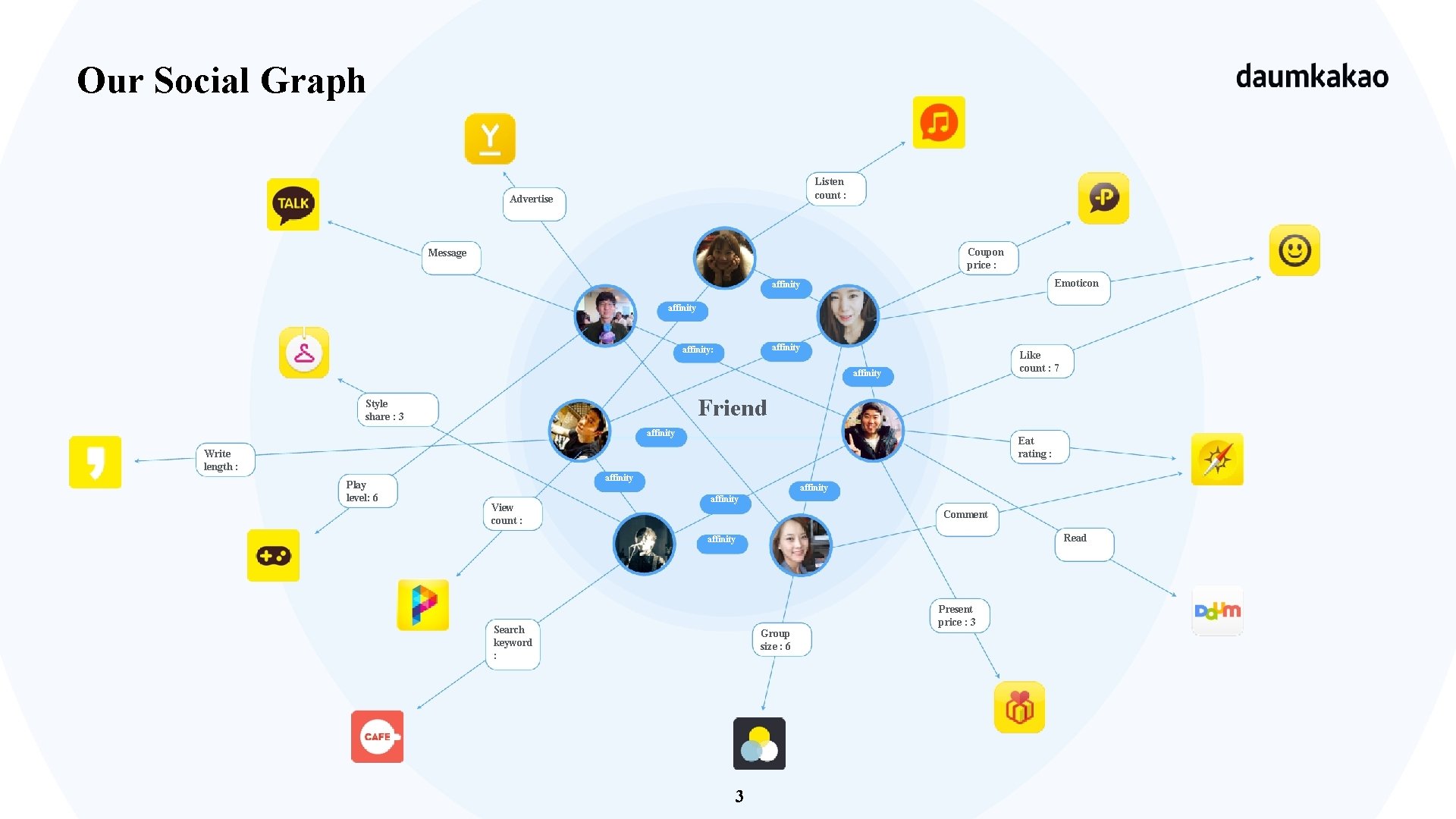

Our Social Graph

(Figure: a social graph whose edges are user activities: Listen (count), Advertise, Coupon (price), Message, Emoticon, Like (count), Friend, Style share, Eat (rating), Write (length), Play (level), View (count), Comment, Read, Search (keyword), Group (size) and Present (price). Each edge also carries an affinity score.)

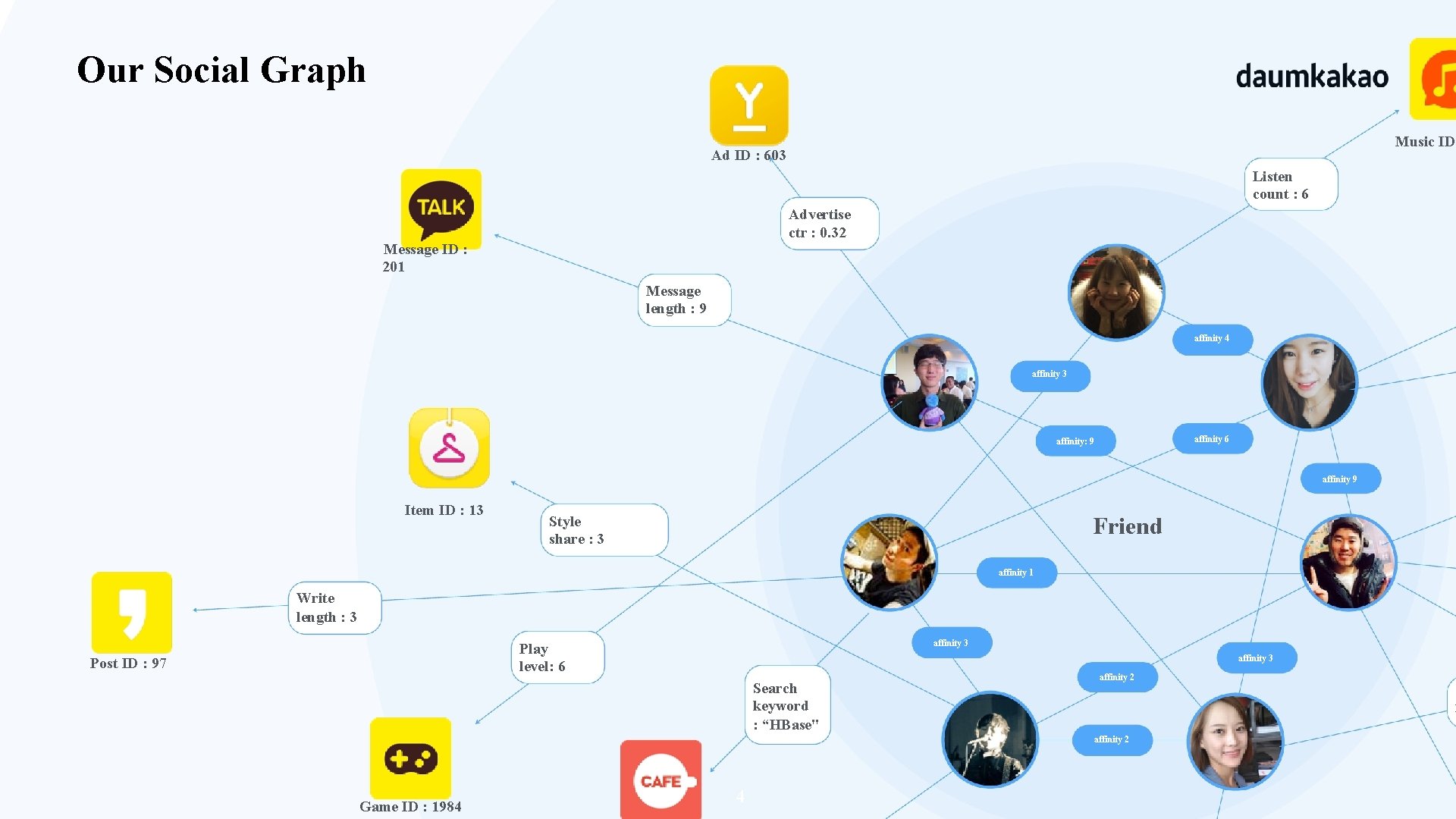

Our Social Graph

(Figure: the same graph with concrete vertices and edge properties. Vertices include Music ID, Ad ID: 603, Message ID: 201, Item ID: 13, Post ID: 97 and Game ID: 1984. Edge properties include Listen count: 6, Advertise ctr: 0.32, Message length: 9, Style share: 3, Write length: 3, Play level: 6 and Search keyword: "HBase", each with an affinity value.)

Technical Challenges

1. Large, constantly changing social graph
   a. Scale
      - social network: more than 10 billion edges, 200 million vertices, 50 million updates on existing edges
      - user activities: over 1 billion new edges per day
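
To put the write volume in perspective, the sketch below converts the "over 1 billion new edges per day" figure into an average per-second rate. It is plain arithmetic on the number from the slide; real traffic is bursty, so peak rates would be higher than this average.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds

# Figure taken from the slide: "over 1 billion new edges per day".
new_edges_per_day = 1_000_000_000

# Average insert rate if the load were spread evenly over the day.
avg_new_edges_per_sec = new_edges_per_day / SECONDS_PER_DAY

print(f"average new edges per second: {avg_new_edges_per_sec:,.0f}")
```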

Technical Challenges (cont)

2. Low latency for breadth-first search traversal on connected data
   a. Performance requirements
      - peak graph-traversing queries per second: 20,000
      - response time: 100 ms

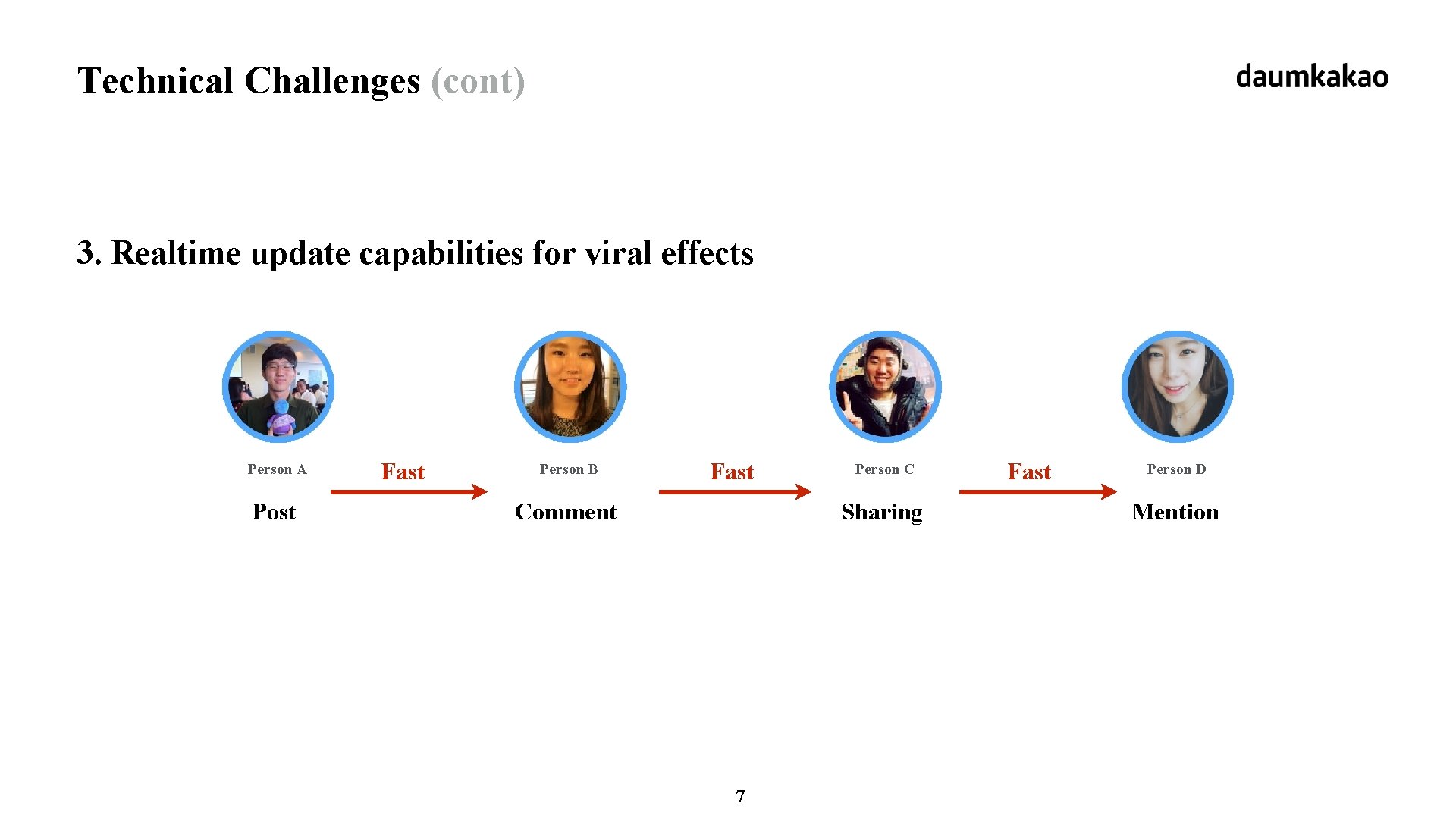

Technical Challenges (cont)

3. Real-time update capabilities for viral effects

(Figure: Person A's post has to reach others fast as Person B comments, Person C shares and Person D mentions.)

Technical Challenges (cont)

4. Support for dynamic ranking logic
   a. Push strategy: hard to change the data ranking logic dynamically.
   b. Pull strategy: enables users to try out various data ranking logics.

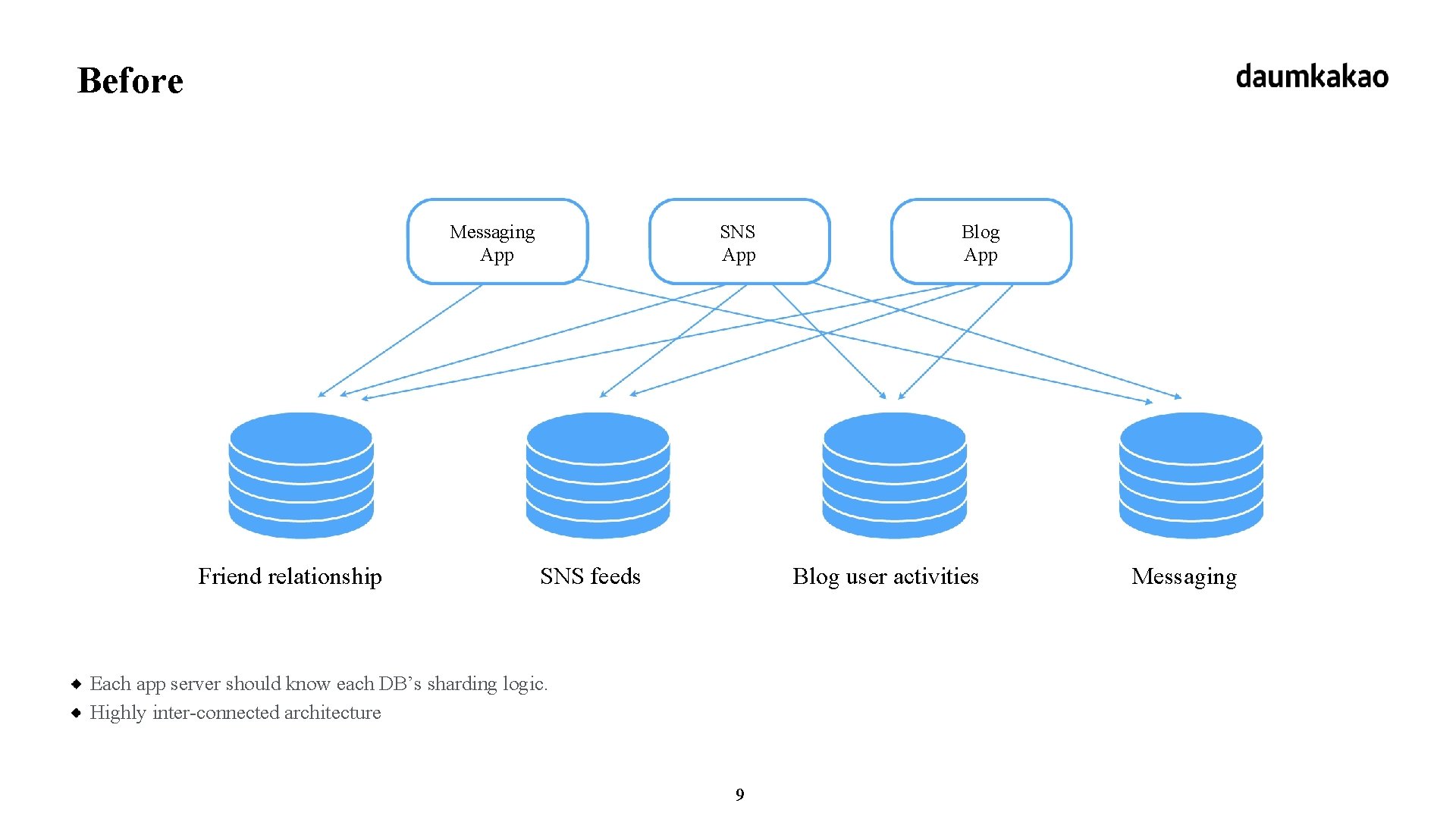

Before

(Figure: the messaging app, SNS app and blog app each talk directly to separate databases for friend relationships, SNS feeds, blog user activities and messaging.)

- Each app server has to know each DB's sharding logic.
- Highly inter-connected architecture.

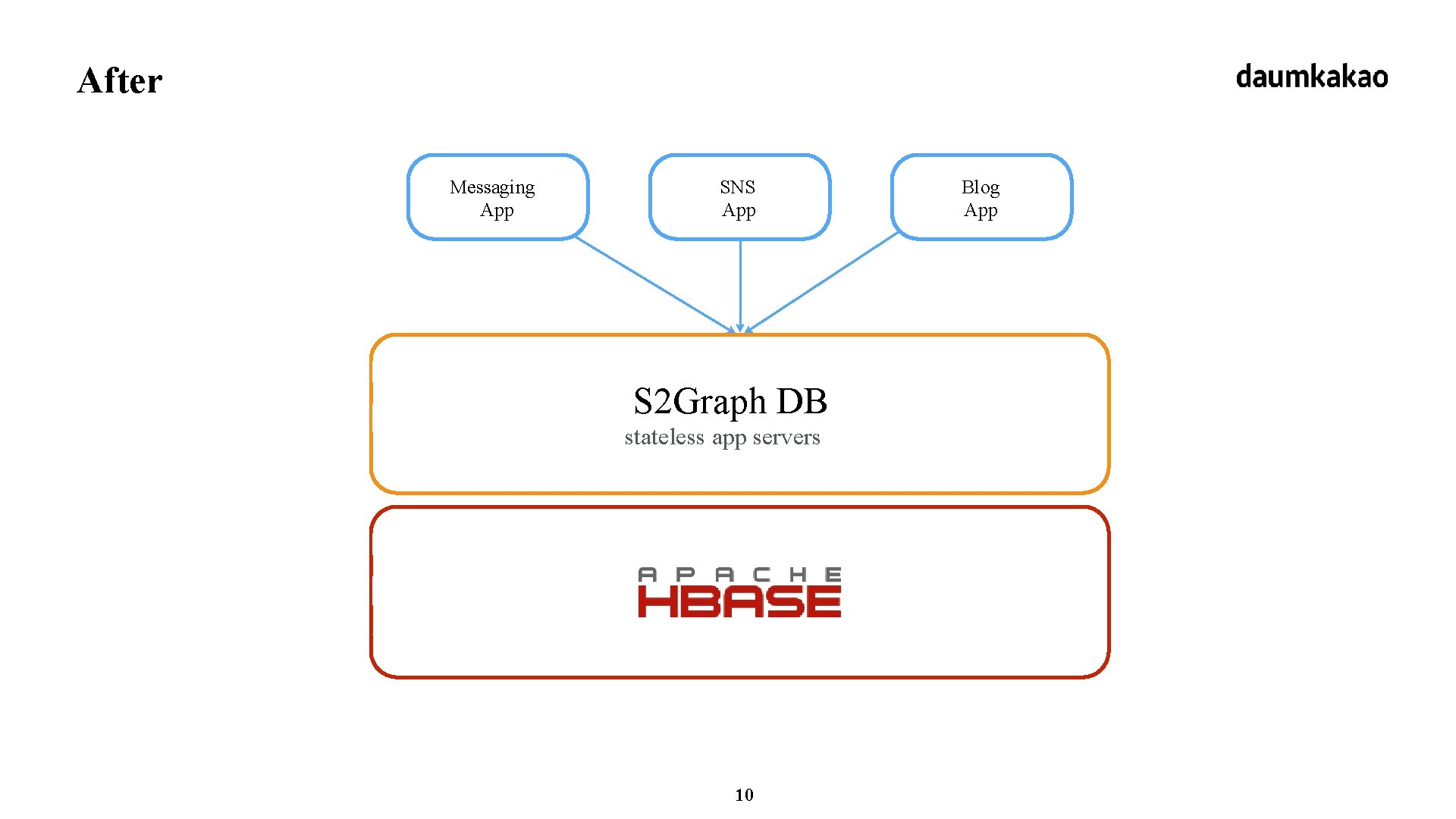

After

(Figure: the messaging app, SNS app and blog app run as stateless app servers on top of S2Graph DB.)

daumkakao What is S2Graph?

What is S2Graph?

Storage-as-a-Service + Graph API = Realtime Breadth First Search

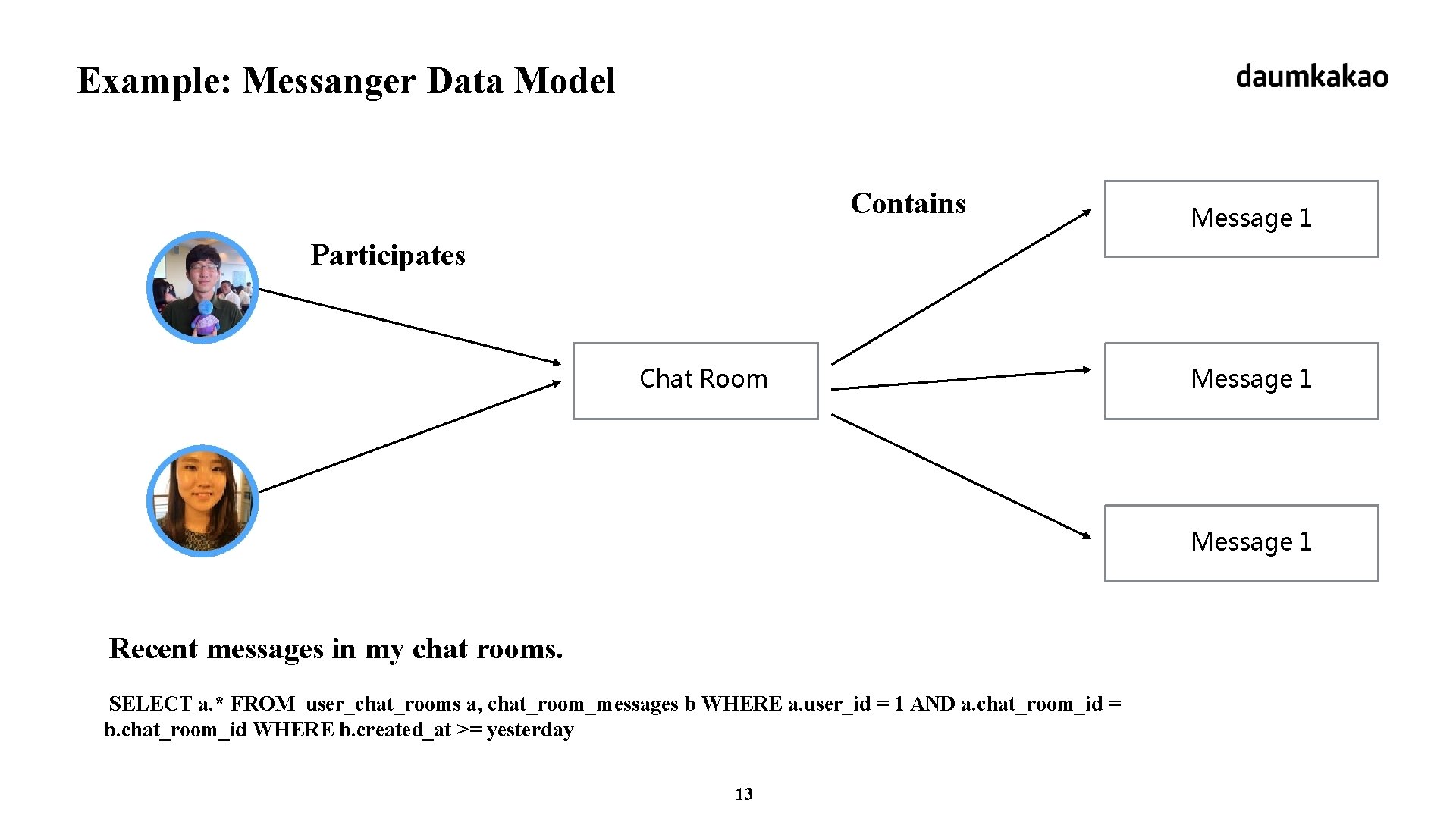

Example: Messenger

(Figure: data model. A user participates in chat rooms; a chat room contains messages.)

Recent messages in my chat rooms:

    SELECT a.*
    FROM user_chat_rooms a, chat_room_messages b
    WHERE a.user_id = 1
      AND a.chat_room_id = b.chat_room_id
      AND b.created_at >= yesterday

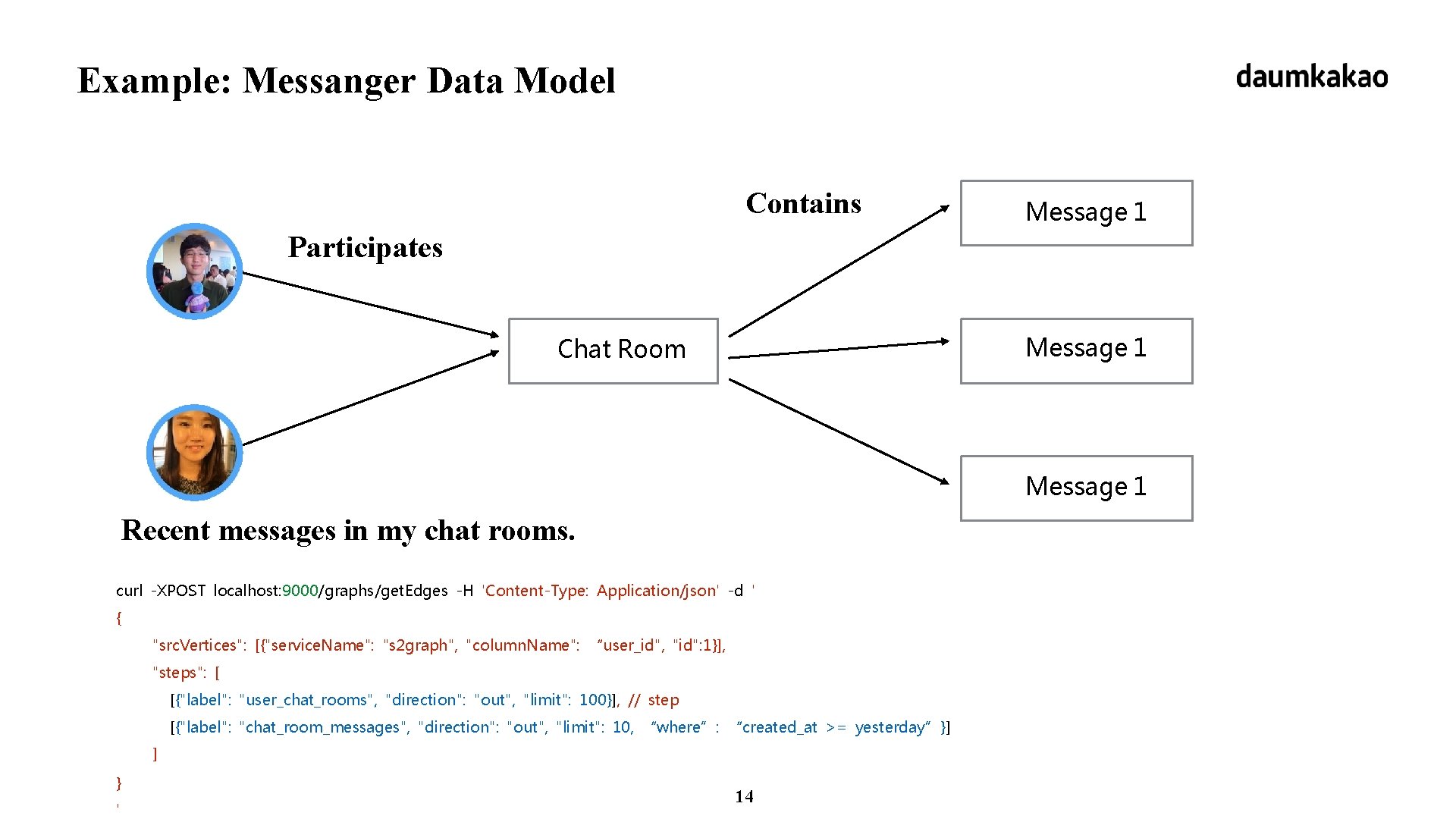

Example: Messenger

Recent messages in my chat rooms, as an S2Graph query (one list entry per step):

    curl -XPOST localhost:9000/graphs/getEdges -H 'Content-Type: Application/json' -d '
    {
      "srcVertices": [{"serviceName": "s2graph", "columnName": "user_id", "id": 1}],
      "steps": [
        [{"label": "user_chat_rooms", "direction": "out", "limit": 100}],
        [{"label": "chat_room_messages", "direction": "out", "limit": 10, "where": "created_at >= yesterday"}]
      ]
    }
    '
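
The request body above can also be assembled programmatically. The following is a minimal sketch that builds the same JSON document in Python; the field names (`srcVertices`, `serviceName`, `columnName`, `steps`, `label`, `direction`, `limit`, `where`) come from the slide, while the helper functions `vertex`, `step` and `build_query` are hypothetical names introduced only for illustration.

```python
import json

def vertex(service_name, column_name, vertex_id):
    # One start vertex, using the field names shown on the slide.
    return {"serviceName": service_name, "columnName": column_name, "id": vertex_id}

def step(label, direction="out", limit=10, where=None):
    # One traversal step; on the slide each step is a list of query params.
    param = {"label": label, "direction": direction, "limit": limit}
    if where is not None:
        param["where"] = where
    return [param]

def build_query(src_vertices, steps):
    # Hypothetical helper: wraps start vertices and steps into one request body.
    return {"srcVertices": src_vertices, "steps": steps}

query = build_query(
    [vertex("s2graph", "user_id", 1)],
    [
        step("user_chat_rooms", limit=100),
        step("chat_room_messages", limit=10, where="created_at >= yesterday"),
    ],
)
body = json.dumps(query, indent=2)
print(body)
```

The printed string is what would be passed to `curl -d`; sending it over HTTP is left out so the sketch stays independent of any running server.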

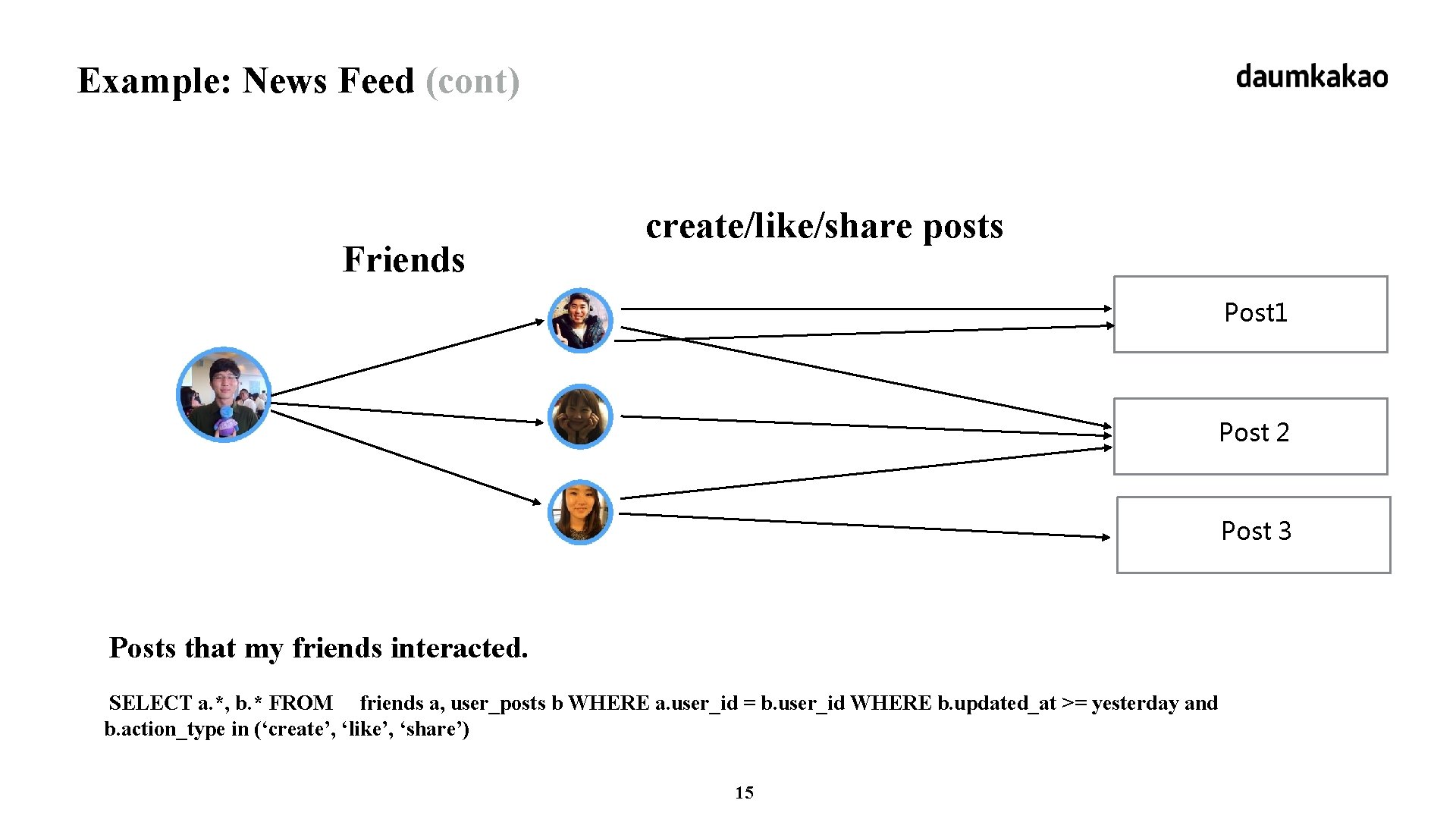

Example: News Feed (cont)

(Figure: my friends create, like or share posts: Post 1, Post 2, Post 3.)

Posts that my friends interacted with:

    SELECT a.*, b.*
    FROM friends a, user_posts b
    WHERE a.user_id = b.user_id
      AND b.updated_at >= yesterday
      AND b.action_type IN ('create', 'like', 'share')

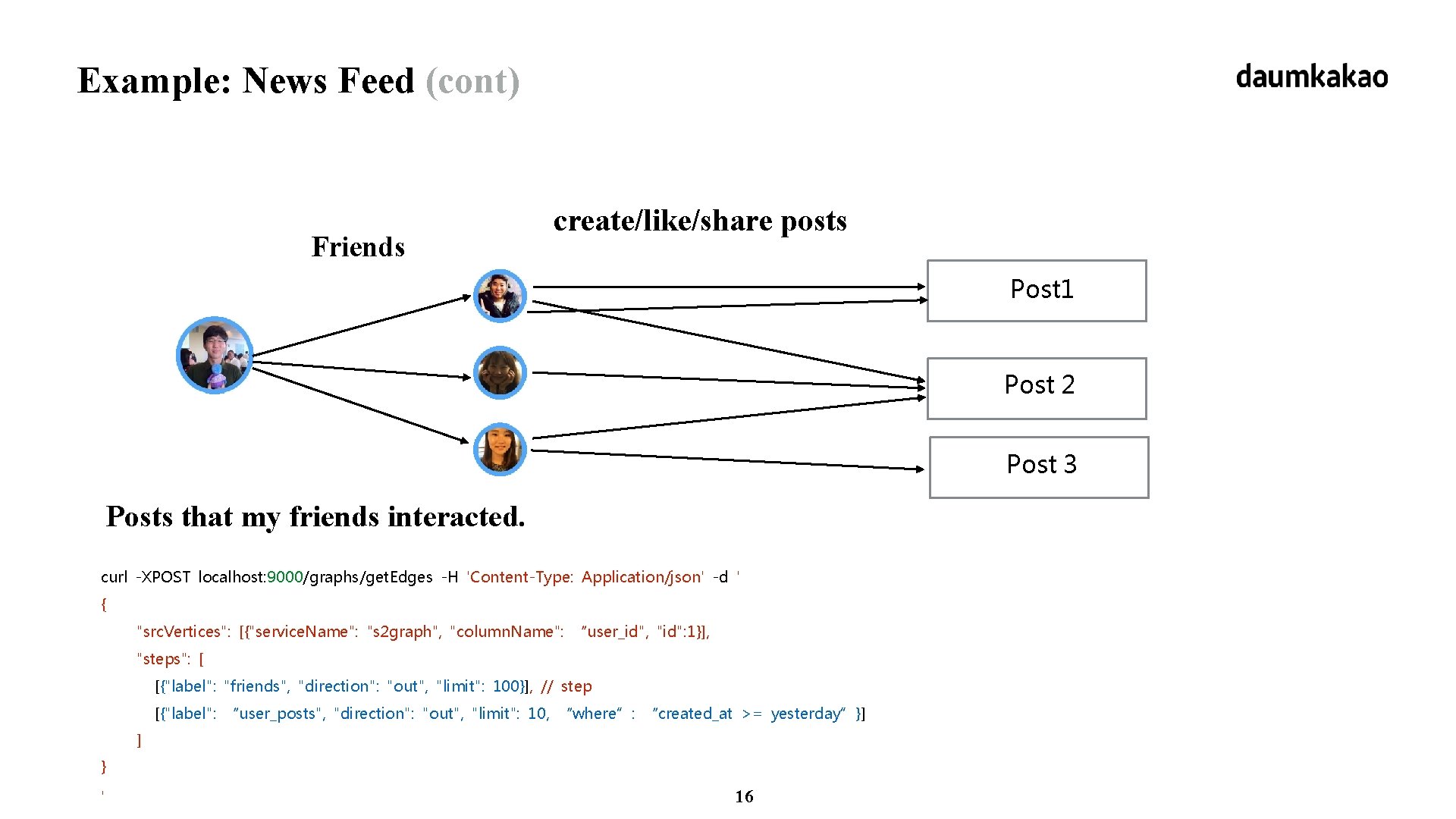

Example: News Feed (cont)

Posts that my friends interacted with, as an S2Graph query:

    curl -XPOST localhost:9000/graphs/getEdges -H 'Content-Type: Application/json' -d '
    {
      "srcVertices": [{"serviceName": "s2graph", "columnName": "user_id", "id": 1}],
      "steps": [
        [{"label": "friends", "direction": "out", "limit": 100}],
        [{"label": "user_posts", "direction": "out", "limit": 10, "where": "created_at >= yesterday"}]
      ]
    }
    '

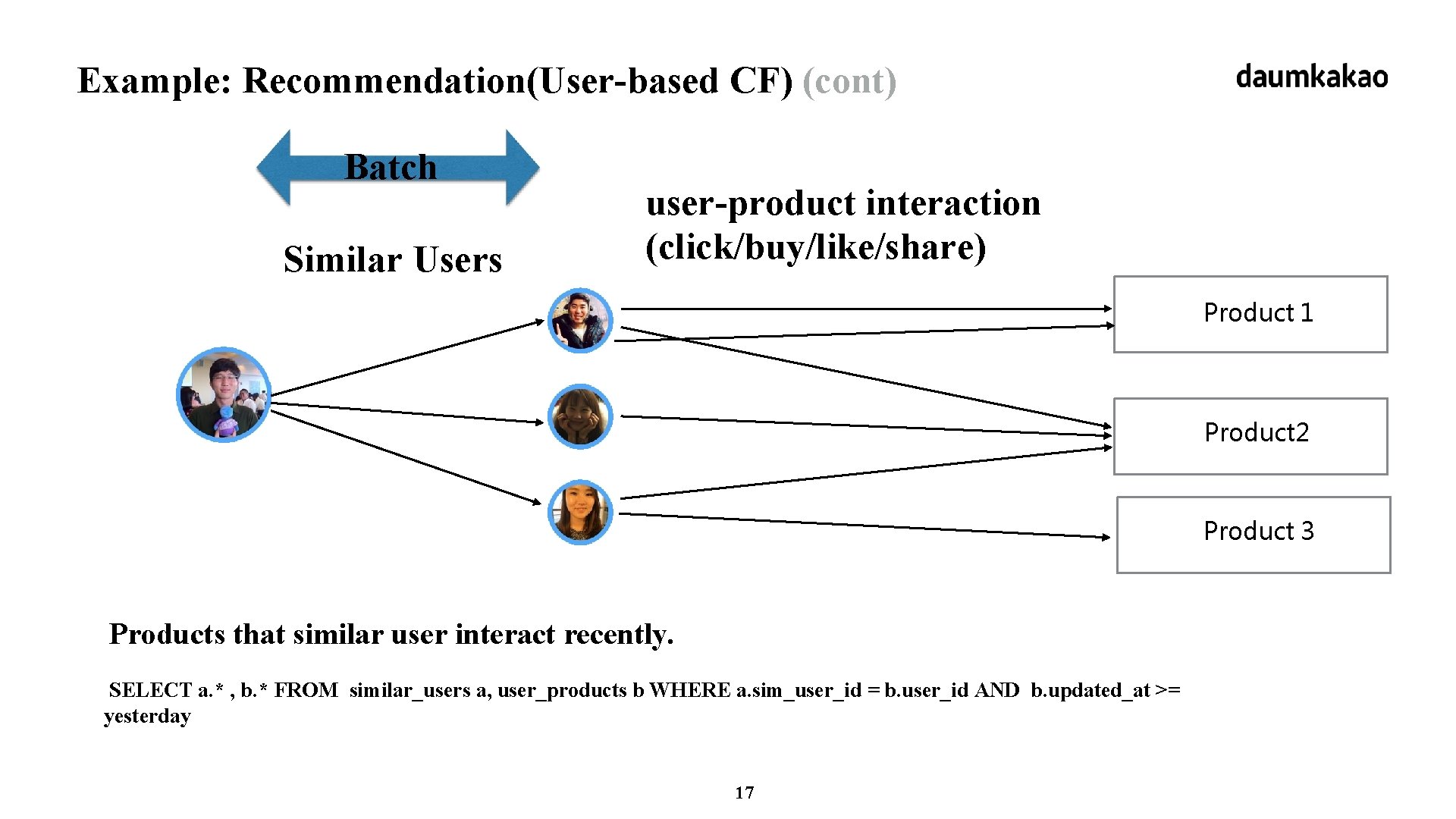

Example: Recommendation (User-based CF) (cont)

(Figure: similar users are computed in batch; user-product interactions (click/buy/like/share) connect users to Product 1, Product 2, Product 3.)

Products that similar users interacted with recently:

    SELECT a.*, b.*
    FROM similar_users a, user_products b
    WHERE a.sim_user_id = b.user_id
      AND b.updated_at >= yesterday

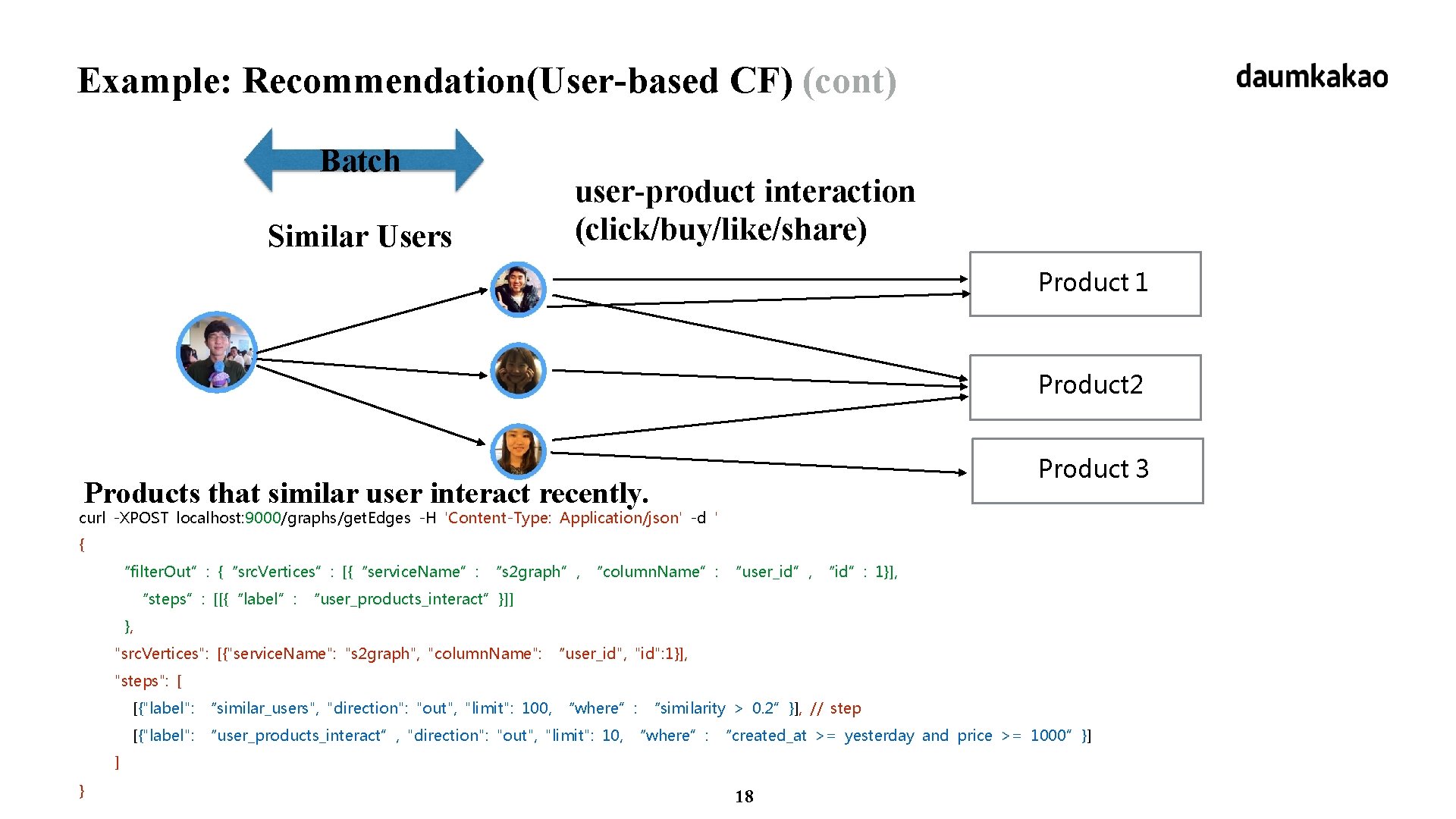

Example: Recommendation (User-based CF) (cont)

Products that similar users interacted with recently, as an S2Graph query. The filterOut block removes products I have already interacted with:

    curl -XPOST localhost:9000/graphs/getEdges -H 'Content-Type: Application/json' -d '
    {
      "filterOut": {
        "srcVertices": [{"serviceName": "s2graph", "columnName": "user_id", "id": 1}],
        "steps": [[{"label": "user_products_interact"}]]
      },
      "srcVertices": [{"serviceName": "s2graph", "columnName": "user_id", "id": 1}],
      "steps": [
        [{"label": "similar_users", "direction": "out", "limit": 100, "where": "similarity > 0.2"}],
        [{"label": "user_products_interact", "direction": "out", "limit": 10, "where": "created_at >= yesterday and price >= 1000"}]
      ]
    }
    '

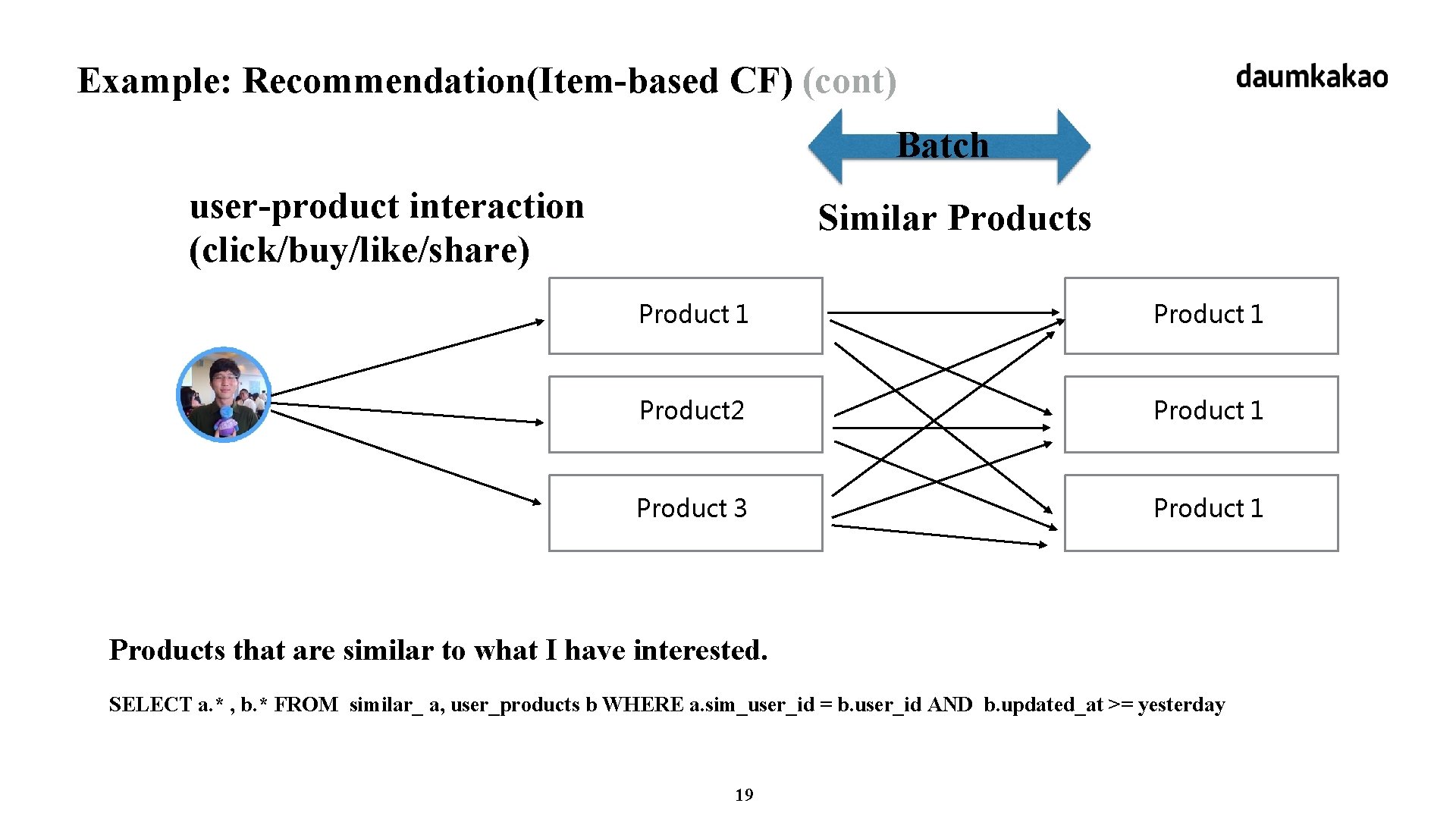

Example: Recommendation (Item-based CF) (cont)

(Figure: similar products are computed in batch; user-product interactions (click/buy/like/share) connect me to Product 1, and Product 1 to similar Products 2 and 3.)

Products that are similar to those I have shown interest in:

    SELECT a.*, b.*
    FROM similar_ a, user_products b
    WHERE a.sim_user_id = b.user_id
      AND b.updated_at >= yesterday

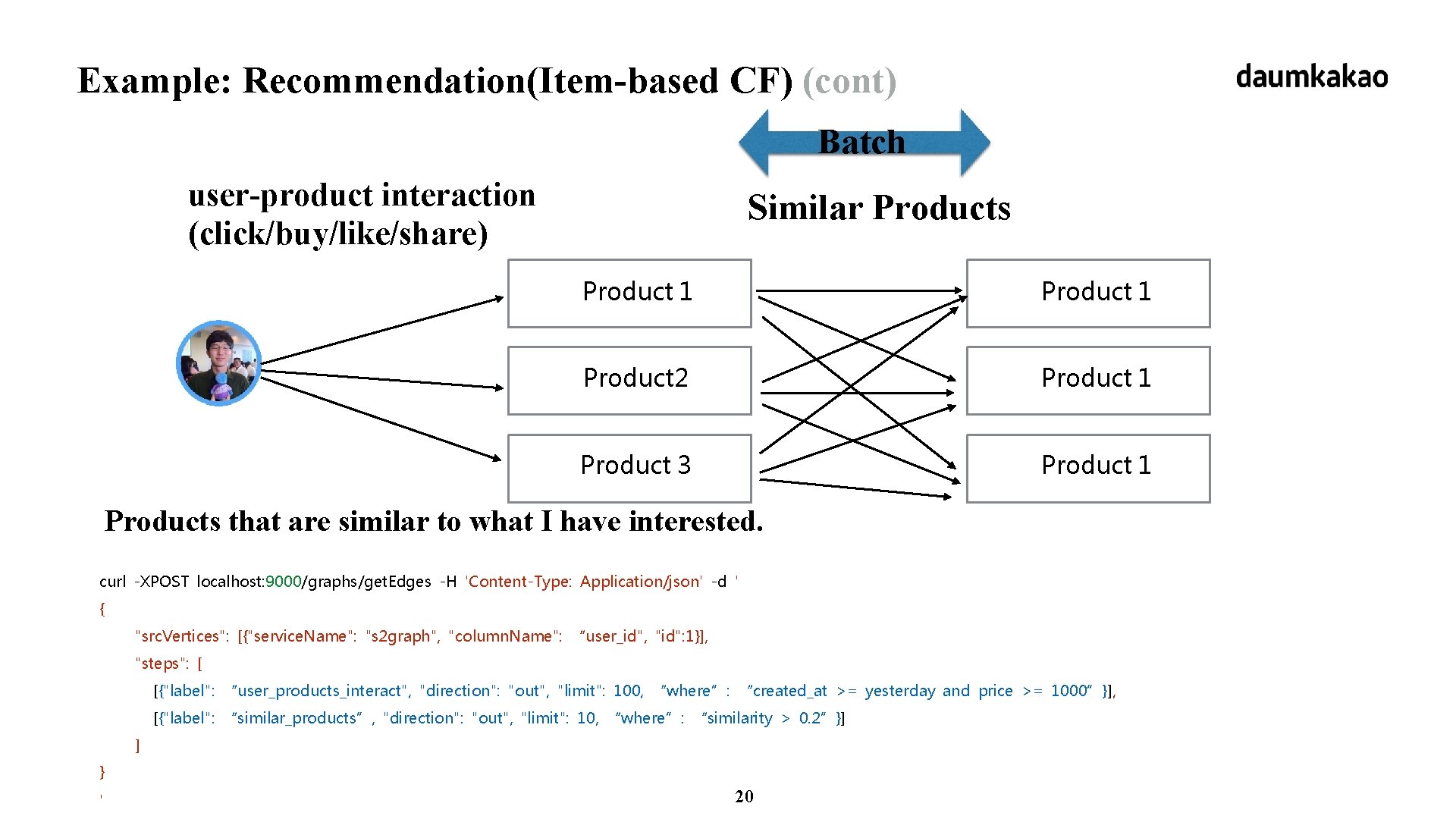

Example: Recommendation (Item-based CF) (cont)

Products that are similar to those I have shown interest in, as an S2Graph query:

    curl -XPOST localhost:9000/graphs/getEdges -H 'Content-Type: Application/json' -d '
    {
      "srcVertices": [{"serviceName": "s2graph", "columnName": "user_id", "id": 1}],
      "steps": [
        [{"label": "user_products_interact", "direction": "out", "limit": 100, "where": "created_at >= yesterday and price >= 1000"}],
        [{"label": "similar_products", "direction": "out", "limit": 10, "where": "similarity > 0.2"}]
      ]
    }
    '

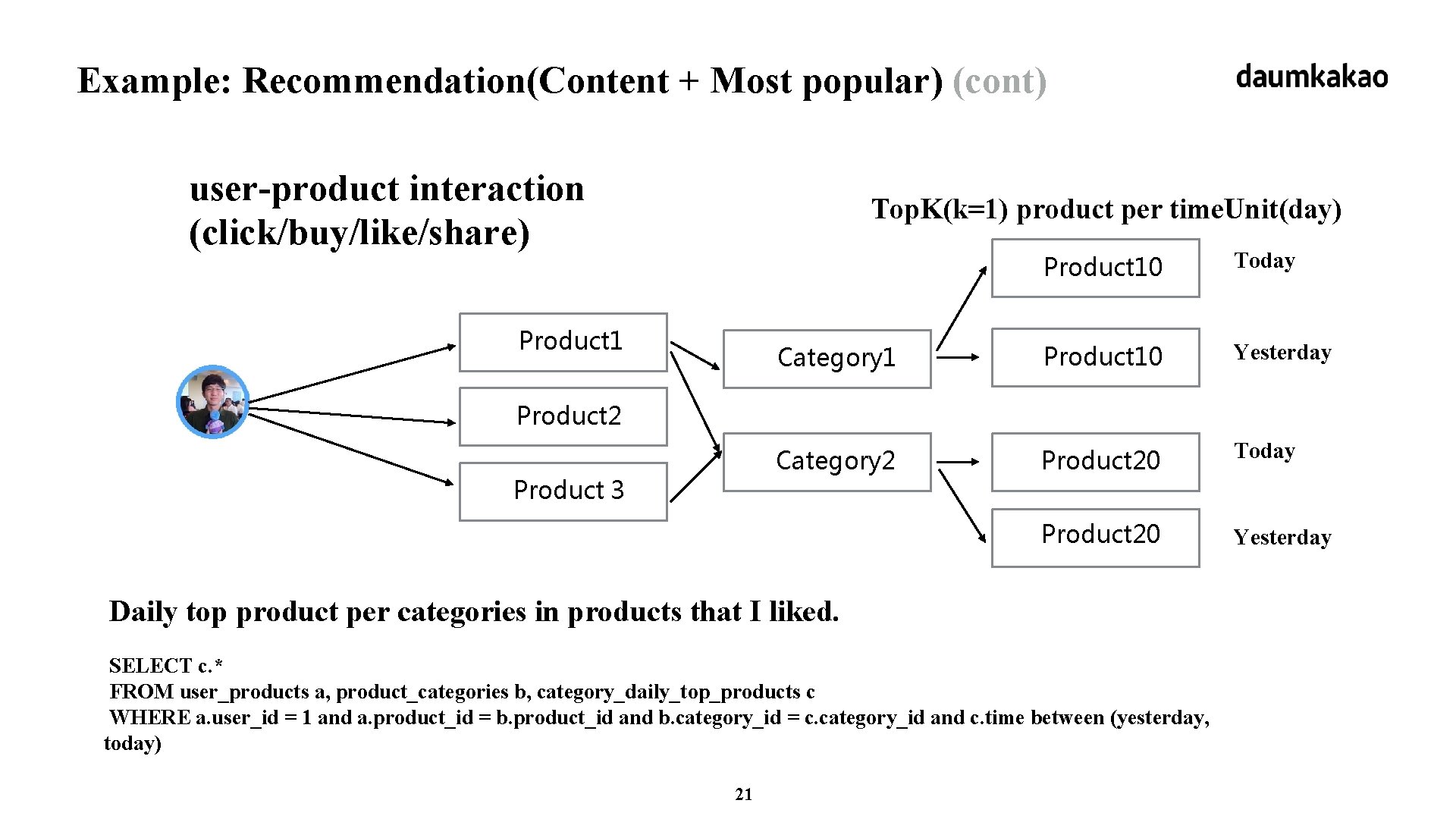

Example: Recommendation (Content + Most popular) (cont)

(Figure: user-product interactions (click/buy/like/share) lead to categories; each category keeps a TopK (k=1) product per timeUnit (day), for example Category 1: Product 10 today and yesterday, Category 2: Product 20 today and yesterday.)

Daily top product for each category of the products that I liked:

    SELECT c.*
    FROM user_products a, product_categories b, category_daily_top_products c
    WHERE a.user_id = 1
      AND a.product_id = b.product_id
      AND b.category_id = c.category_id
      AND c.time BETWEEN yesterday AND today

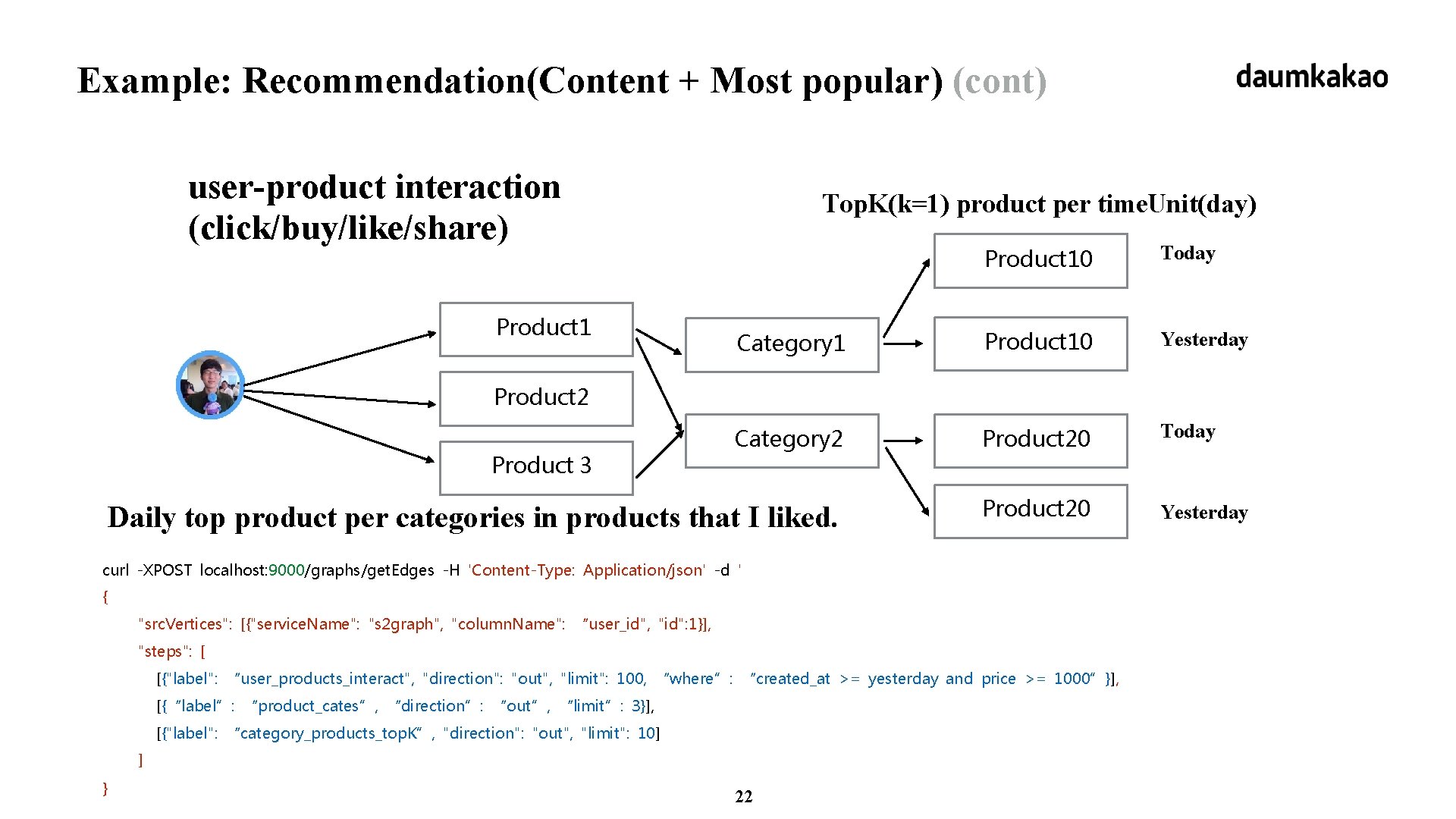

Example: Recommendation (Content + Most popular) (cont)

Daily top product for each category of the products that I liked, as an S2Graph query:

    curl -XPOST localhost:9000/graphs/getEdges -H 'Content-Type: Application/json' -d '
    {
      "srcVertices": [{"serviceName": "s2graph", "columnName": "user_id", "id": 1}],
      "steps": [
        [{"label": "user_products_interact", "direction": "out", "limit": 100, "where": "created_at >= yesterday and price >= 1000"}],
        [{"label": "product_cates", "direction": "out", "limit": 3}],
        [{"label": "category_products_topK", "direction": "out", "limit": 10}]
      ]
    }
    '

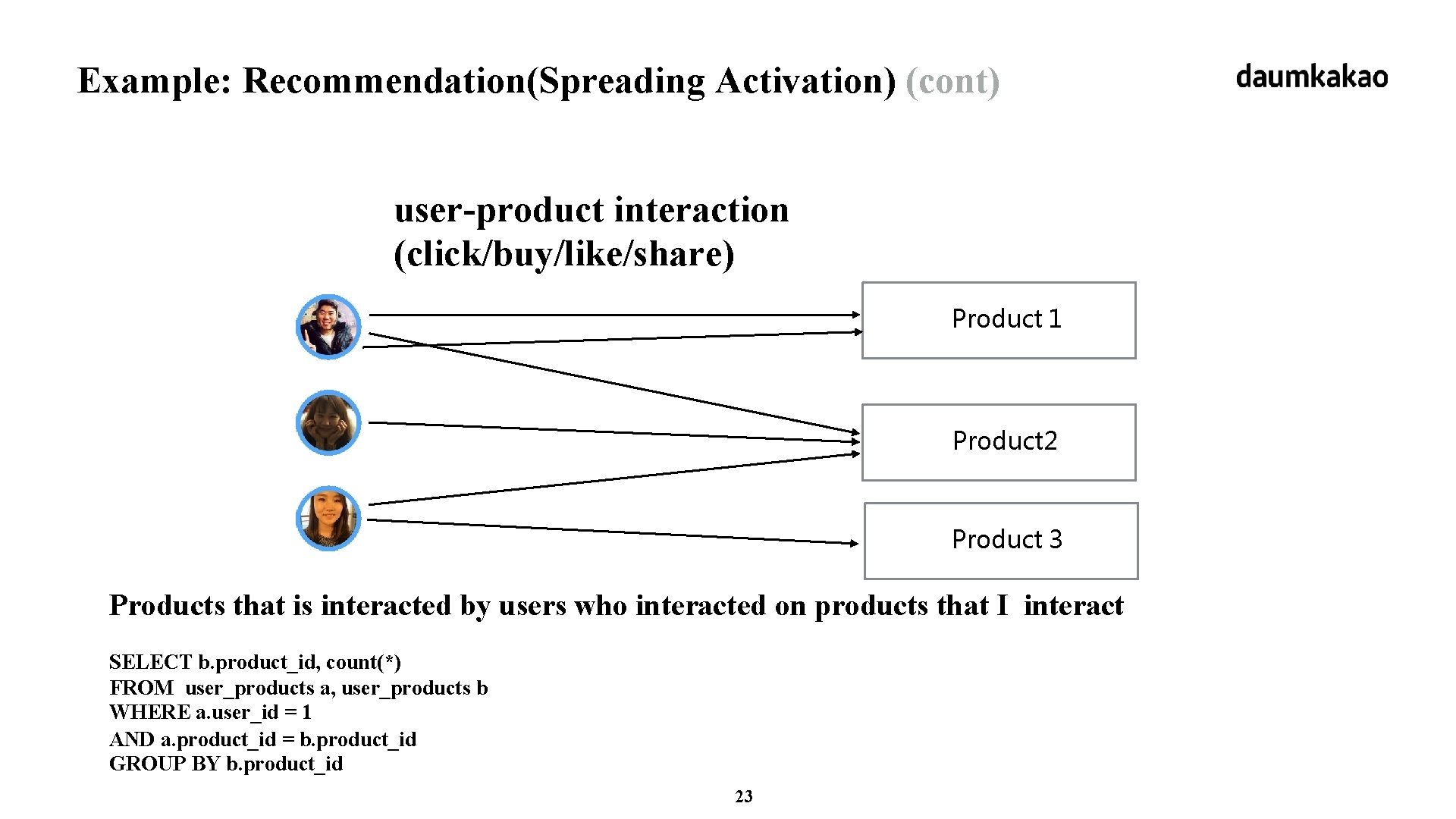

Example: Recommendation (Spreading Activation) (cont)

(Figure: user-product interactions (click/buy/like/share) connect users to Product 1, Product 2, Product 3.)

Products interacted with by users who interacted with the products that I interacted with:

    SELECT b.product_id, count(*)
    FROM user_products a, user_products b
    WHERE a.user_id = 1
      AND a.product_id = b.product_id
    GROUP BY b.product_id

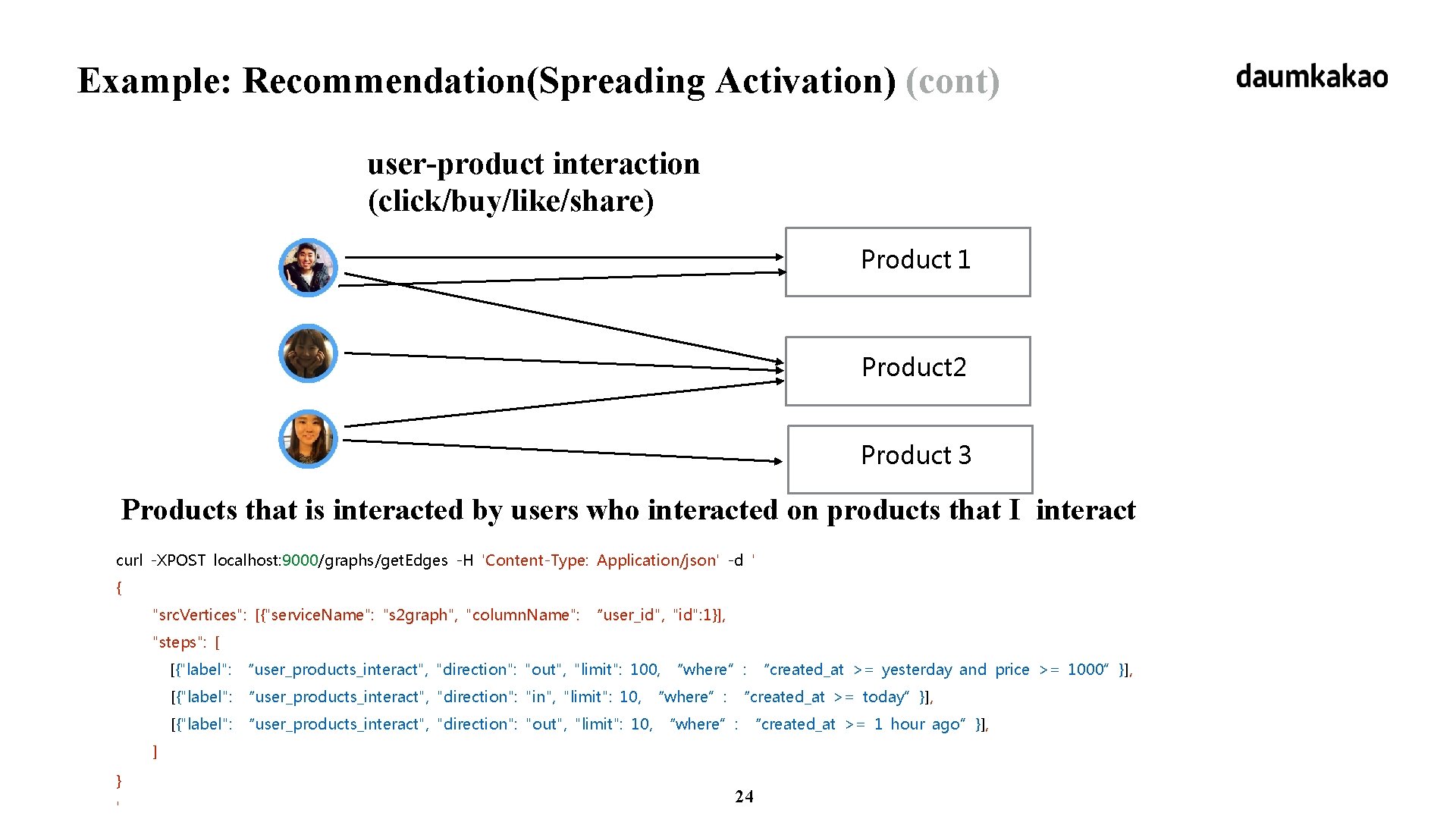

Example: Recommendation (Spreading Activation) (cont)

Products interacted with by users who interacted with the products that I interacted with, as an S2Graph query:

    curl -XPOST localhost:9000/graphs/getEdges -H 'Content-Type: Application/json' -d '
    {
      "srcVertices": [{"serviceName": "s2graph", "columnName": "user_id", "id": 1}],
      "steps": [
        [{"label": "user_products_interact", "direction": "out", "limit": 100, "where": "created_at >= yesterday and price >= 1000"}],
        [{"label": "user_products_interact", "direction": "in", "limit": 10, "where": "created_at >= today"}],
        [{"label": "user_products_interact", "direction": "out", "limit": 10, "where": "created_at >= 1 hour ago"}]
      ]
    }
    '
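
The three-step traversal (out, in, out over the same label) can be illustrated on a toy in-memory graph. This is only a sketch of the idea, not how S2Graph executes the query: the data, the `spreading_activation` function and the choice to skip products the user already has (similar in spirit to `filterOut`) are all assumptions made for the example.

```python
from collections import Counter, defaultdict

# Toy (user_id, product_id) interaction edges; made-up data.
interactions = [
    (1, "p1"), (1, "p2"),
    (2, "p1"), (2, "p3"),
    (3, "p2"), (3, "p3"), (3, "p4"),
]

products_of = defaultdict(set)  # "out" direction: user -> products
users_of = defaultdict(set)     # "in" direction: product -> users
for user, product in interactions:
    products_of[user].add(product)
    users_of[product].add(user)

def spreading_activation(me):
    """Count how often each new product is reached via out -> in -> out."""
    scores = Counter()
    for product in products_of[me]:                # step 1: me -> my products
        for other in users_of[product]:            # step 2: products -> other users
            if other == me:
                continue
            for candidate in products_of[other]:   # step 3: other users -> their products
                if candidate not in products_of[me]:
                    scores[candidate] += 1
    return scores

print(spreading_activation(1).most_common())
```

Products reached through more paths get a higher count, which plays the role of the `count(*) ... GROUP BY` in the SQL version.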

Realization

1. These examples all resemble graphs.
2. An object is a vertex; a relationship is an edge.
3. Necessary API: breadth-first search on a large-scale graph.

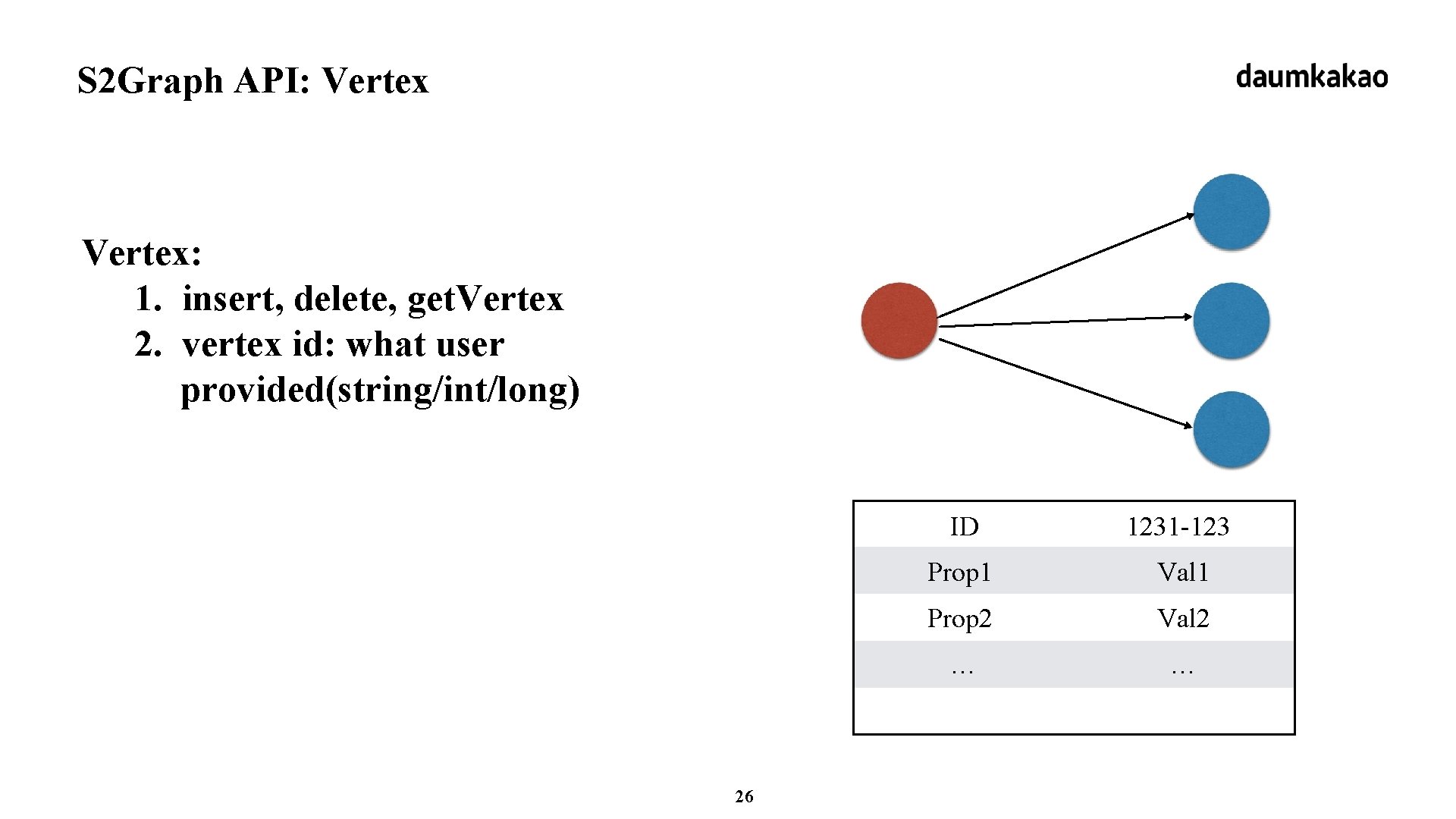

S2Graph API: Vertex

1. insert, delete, getVertex
2. Vertex id: whatever the user provides (string/int/long)

(Figure: a vertex is an ID, for example 1231-123, plus properties: Prop1 = Val1, Prop2 = Val2, ...)

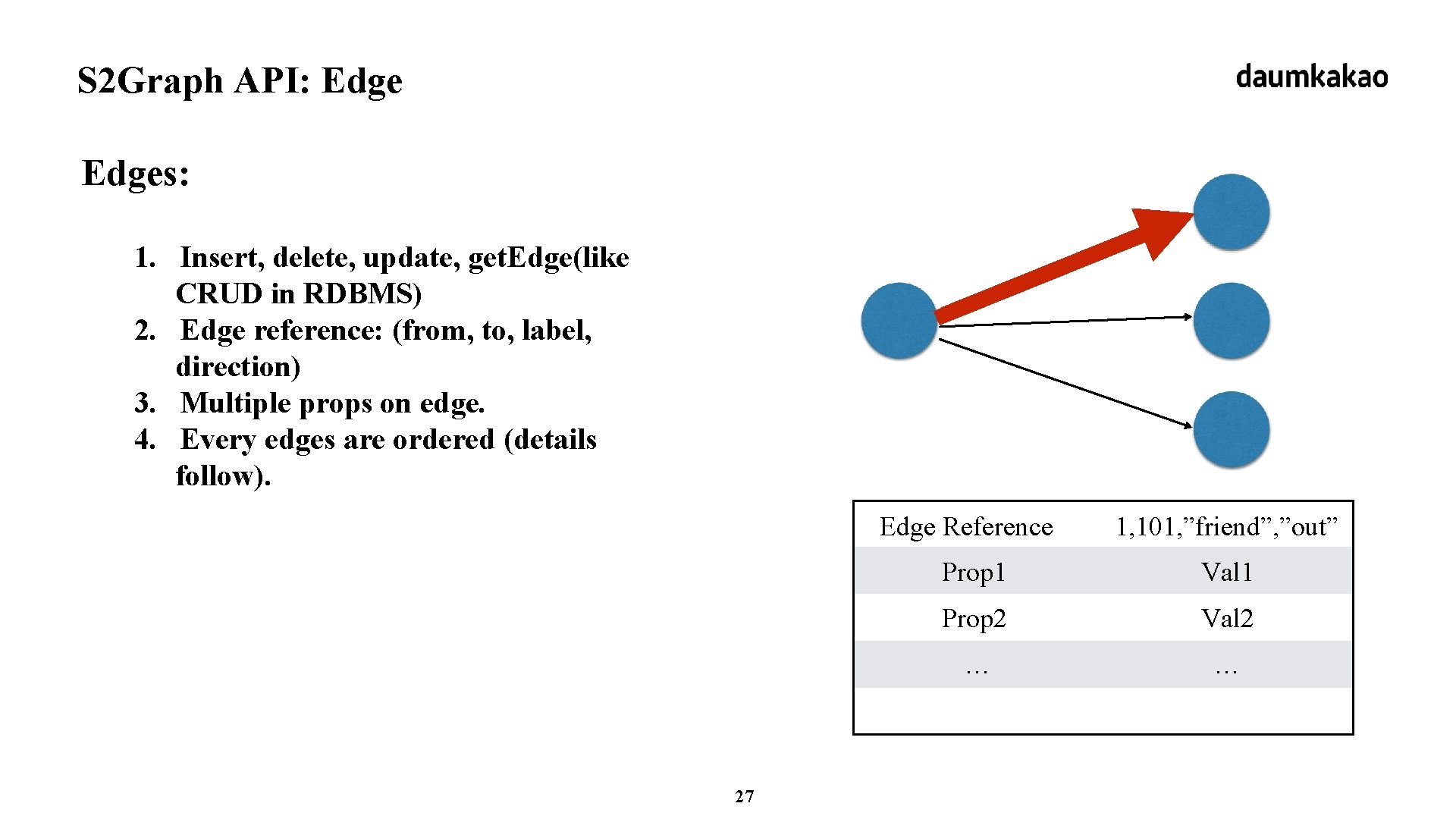

S2Graph API: Edges

1. insert, delete, update, getEdge (like CRUD in an RDBMS)
2. Edge reference: (from, to, label, direction)
3. Multiple props on each edge.
4. All edges are ordered (details follow).

(Figure: an edge is an edge reference, for example 1, 101, "friend", "out", plus properties: Prop1 = Val1, Prop2 = Val2, ...)
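
As a rough mental model of the edge API, the sketch below keys each edge by its reference tuple (from, to, label, direction) and stores its props as a dictionary. `EdgeRef` and `EdgeStore` are hypothetical names for an in-memory stand-in; the real service persists edges in HBase and exposes these operations over REST.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class EdgeRef:
    """Edge reference as listed on the slide: (from, to, label, direction)."""
    src: int
    dst: int
    label: str
    direction: str = "out"

class EdgeStore:
    """Toy in-memory store with CRUD-style operations on edges."""

    def __init__(self):
        self._edges = {}

    def insert(self, ref, **props):
        self._edges[ref] = dict(props)

    def update(self, ref, **props):
        # Merge new props into the existing edge; raises KeyError if absent.
        self._edges[ref].update(props)

    def get(self, ref):
        return self._edges.get(ref)

    def delete(self, ref):
        self._edges.pop(ref, None)

store = EdgeStore()
friend = EdgeRef(1, 101, "friend", "out")
store.insert(friend, affinity=3)
store.update(friend, affinity=4, since=2015)
print(store.get(friend))
store.delete(friend)
```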

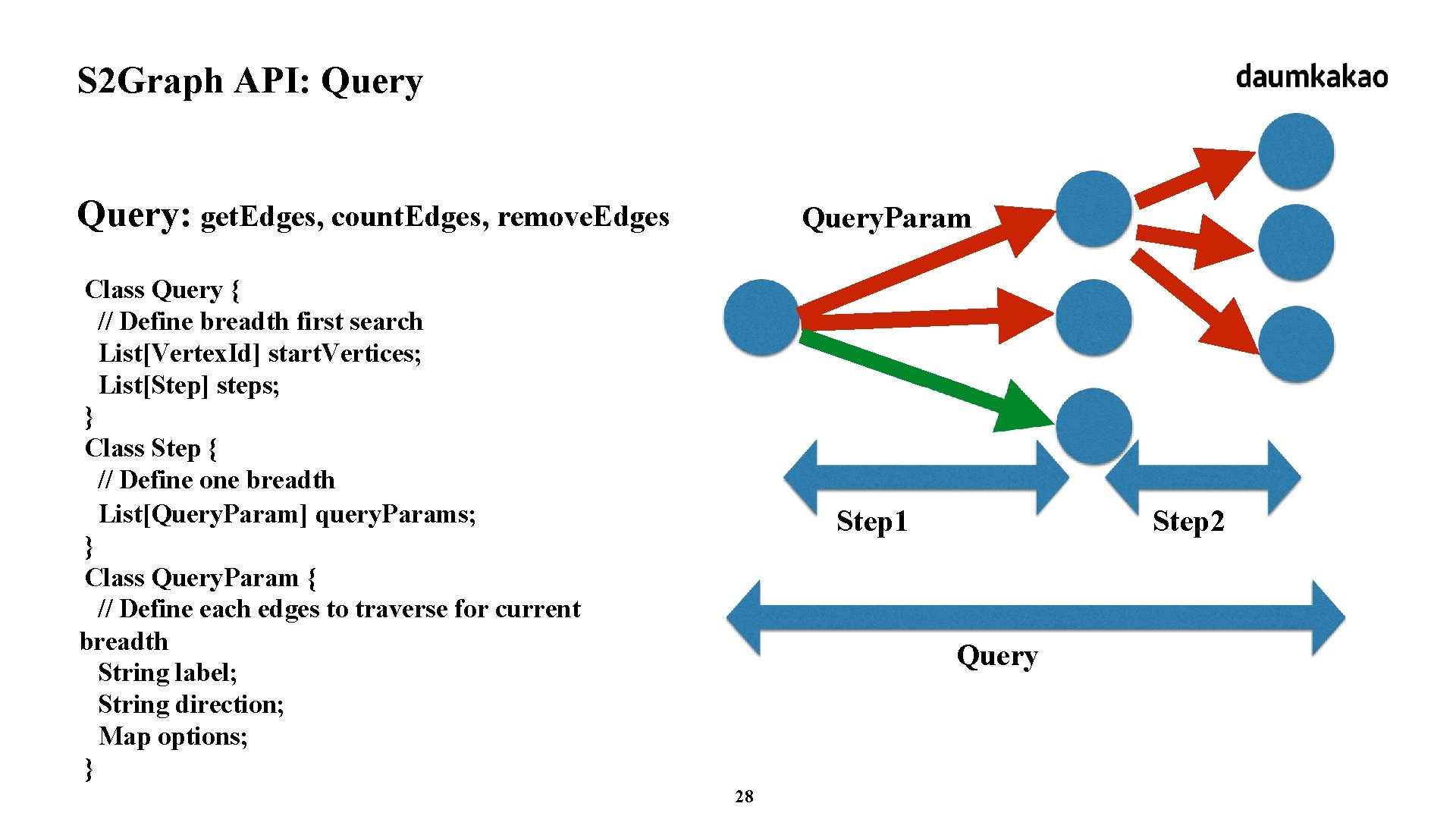

S2Graph API: Query

getEdges, countEdges, removeEdges

    class Query {        // defines a breadth-first search
      List[VertexId] startVertices;
      List[Step] steps;
    }

    class Step {         // defines one breadth
      List[QueryParam] queryParams;
    }

    class QueryParam {   // defines the edges to traverse for the current breadth
      String label;
      String direction;
      Map options;
    }

(Figure: a Query is a sequence of steps, Step 1 and Step 2; each step holds one or more QueryParams.)
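
The Query / Step / QueryParam structure maps naturally onto a level-by-level traversal. The sketch below mirrors those three classes in Python and runs them against a small in-memory adjacency map. The `limit` field, the `GRAPH` data and the choice to return only the edges of the final step are assumptions made for illustration, not a description of S2Graph's actual behavior.

```python
from dataclasses import dataclass

@dataclass
class QueryParam:
    label: str
    direction: str = "out"
    limit: int = 10          # assumed option: max edges fetched per vertex

@dataclass
class Step:
    query_params: list       # edges to traverse at this breadth

@dataclass
class Query:
    start_vertices: list
    steps: list

# Toy adjacency: (vertex, label, direction) -> ordered neighbor list.
GRAPH = {
    (1, "friend", "out"): [2, 3, 4],
    (2, "friend", "out"): [5, 6],
    (3, "friend", "out"): [6, 7],
    (4, "friend", "out"): [8],
}

def get_edges(graph, query):
    """Breadth-first traversal, one level per step; returns last step's edges."""
    frontier = list(query.start_vertices)
    edges = []
    for step in query.steps:
        edges, next_frontier = [], []
        for vertex in frontier:
            for qp in step.query_params:
                key = (vertex, qp.label, qp.direction)
                for neighbor in graph.get(key, [])[:qp.limit]:
                    edges.append((vertex, neighbor, qp.label))
                    next_frontier.append(neighbor)
        # Deduplicate while keeping order, so each vertex is expanded once.
        frontier = list(dict.fromkeys(next_frontier))
    return edges

query = Query([1], [Step([QueryParam("friend", limit=2)]),
                    Step([QueryParam("friend", limit=1)])])
print(get_edges(GRAPH, query))
```

The per-step `limit` bounds the search space: at most 2 edges in the first step and 2 x 1 in the second, which is why the benchmark queries later in the deck are described by their limits.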

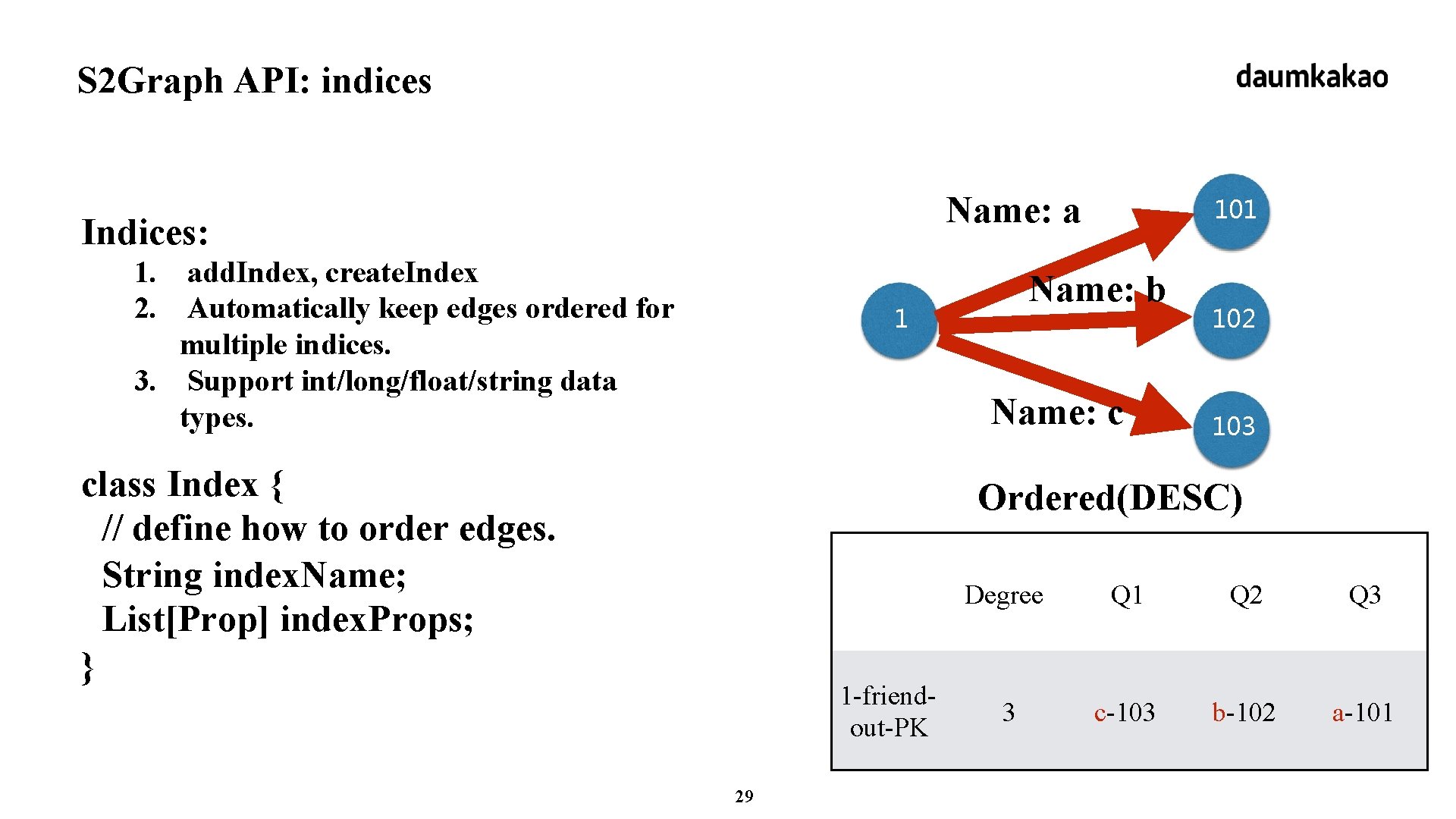

S2Graph API: Indices

1. addIndex, createIndex
2. Edges are automatically kept ordered for multiple indices.
3. Supports int/long/float/string data types.

    class Index {        // defines how to order edges
      String indexName;
      List[Prop] indexProps;
    }

(Figure: vertex 1 has "friend" out-edges to 101 (name: a), 102 (name: b) and 103 (name: c). The row 1-friend-out-PK stores the degree, 3, followed by the edges ordered descending: c-103, b-102, a-101.)
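
Keeping edges ordered at write time means a read with a limit is just a prefix scan. The sketch below imitates that with a sorted Python list; `OrderedEdgeIndex` is a hypothetical in-memory stand-in, and the descending order by the indexed value follows the "Ordered(DESC)" example in the figure.

```python
import bisect

class OrderedEdgeIndex:
    """Keeps (index_value, target_id) entries sorted on insert."""

    def __init__(self):
        self._entries = []  # ascending order, maintained by insort

    def add(self, index_value, target_id):
        bisect.insort(self._entries, (index_value, target_id))

    @property
    def degree(self):
        return len(self._entries)

    def top(self, limit):
        # Read in descending order without sorting at query time.
        return self._entries[::-1][:limit]

index = OrderedEdgeIndex()
index.add("a", 101)
index.add("c", 103)
index.add("b", 102)
print(index.degree, index.top(2))
```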

What is S2Graph?

Storage-as-a-Service + Graph API = Realtime Breadth First Search

What S2Graph is not:

- It does not support global computation (unlike Apache Giraph or GraphX).
- It does not support graph algorithms such as PageRank or shortest path.

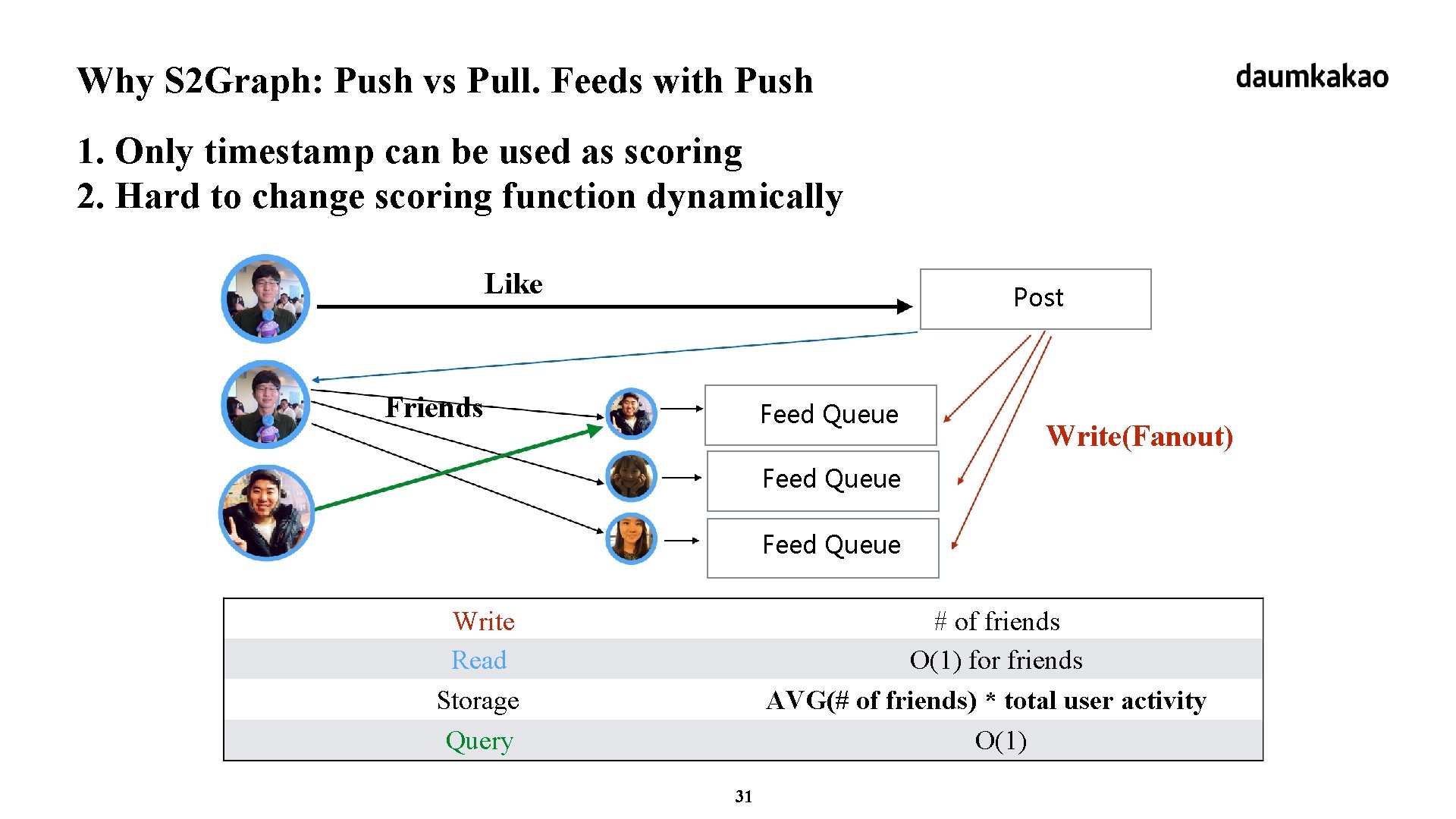

Why S2Graph: Push vs Pull

Feeds with Push

1. Only the timestamp can be used for scoring.
2. Hard to change the scoring function dynamically.

(Figure: a friend likes a post; the write fans out to each friend's feed queue.)

Cost:

- Write: # of friends
- Read: O(1) for friends
- Storage: AVG(# of friends) * total user activity
- Query: O(1)

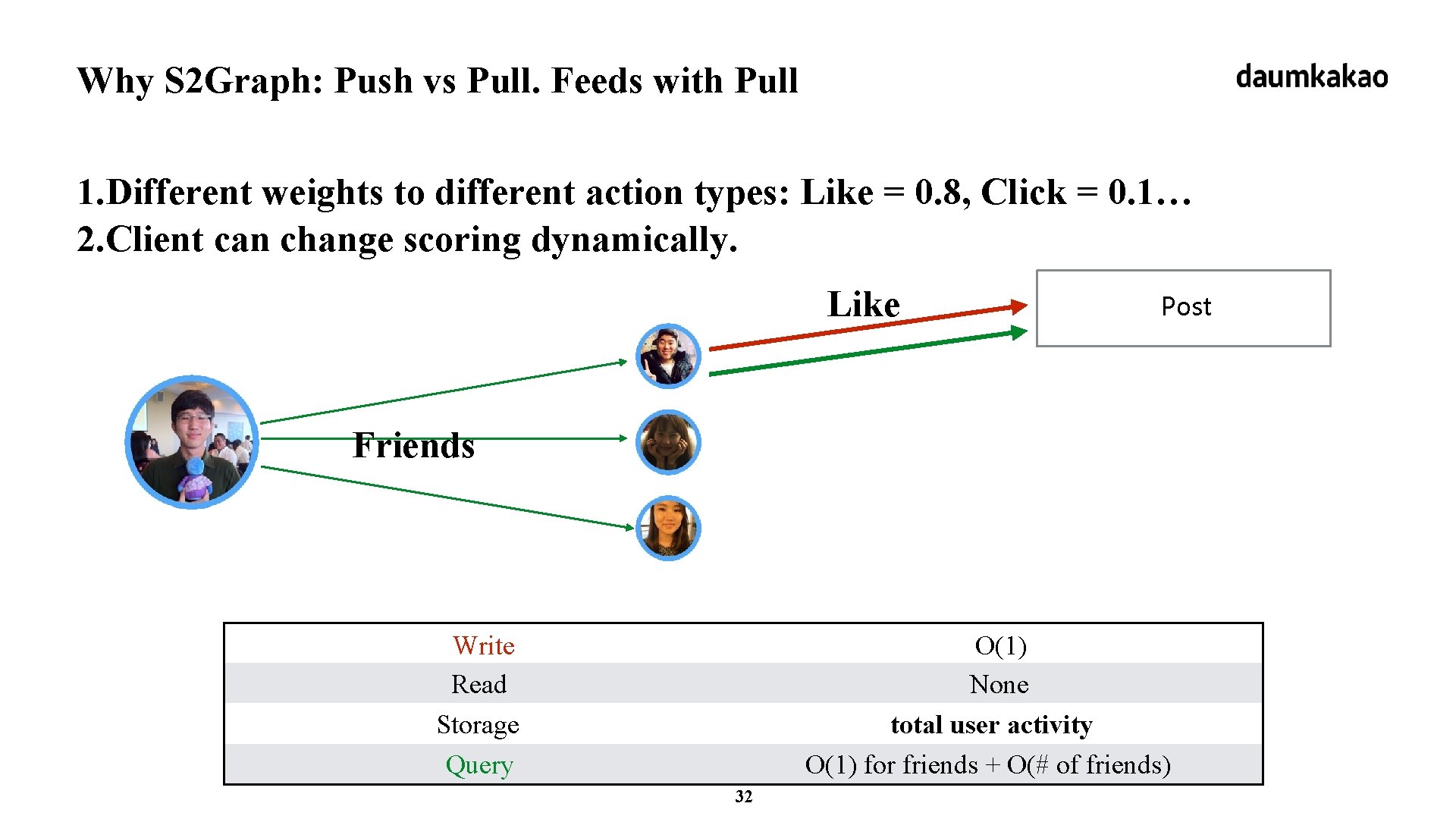

Why S2Graph: Push vs Pull

Feeds with Pull

1. Different weights for different action types: Like = 0.8, Click = 0.1, ...
2. The client can change scoring dynamically.

(Figure: a friend likes a post; the activity is written once and feeds are assembled at query time.)

Cost:

- Write: O(1)
- Read: none
- Storage: total user activity
- Query: O(1) for friends + O(# of friends)
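
The point about dynamic scoring can be shown with a few lines of code: because a pull feed is assembled at query time, the caller can pass different action weights on every request. The data and the `pull_feed` function are invented for this sketch; only the example weights (Like = 0.8, Click = 0.1) come from the slide.

```python
# Toy activity log: each entry is written once (O(1) write), made-up data.
activities = [
    {"user": 2, "post": "p1", "action": "like"},
    {"user": 3, "post": "p1", "action": "like"},
    {"user": 2, "post": "p2", "action": "click"},
    {"user": 9, "post": "p3", "action": "like"},   # user 9 is not a friend of user 1
]
friends = {1: {2, 3}}

def pull_feed(user, weights, limit=10):
    """Score posts at query time from friends' activities and given weights."""
    scores = {}
    for act in activities:
        if act["user"] in friends[user]:
            weight = weights.get(act["action"], 0.0)
            scores[act["post"]] = scores.get(act["post"], 0.0) + weight
    # Highest score first; ties broken by post id for a stable order.
    return sorted(scores, key=lambda post: (-scores[post], post))[:limit]

print(pull_feed(1, {"like": 0.8, "click": 0.1}))  # likes dominate
print(pull_feed(1, {"like": 0.1, "click": 0.8}))  # clicks dominate
```

With a push design the ranking would already be baked into each feed queue, so trying the second set of weights would require rewriting stored feeds.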

Why S2Graph: S2Graph Supports Pull + Push

Pull is preferable to push only if the store delivers:

1. fast response time: 10 ~ 100 ms
2. throughput: 10K ~ 20K QPS

S2Graph provides linear scalability with respect to:

1. the number of machines.
2. the BFS search space (how many edges a single query traverses).

More detail follows in the benchmark section.

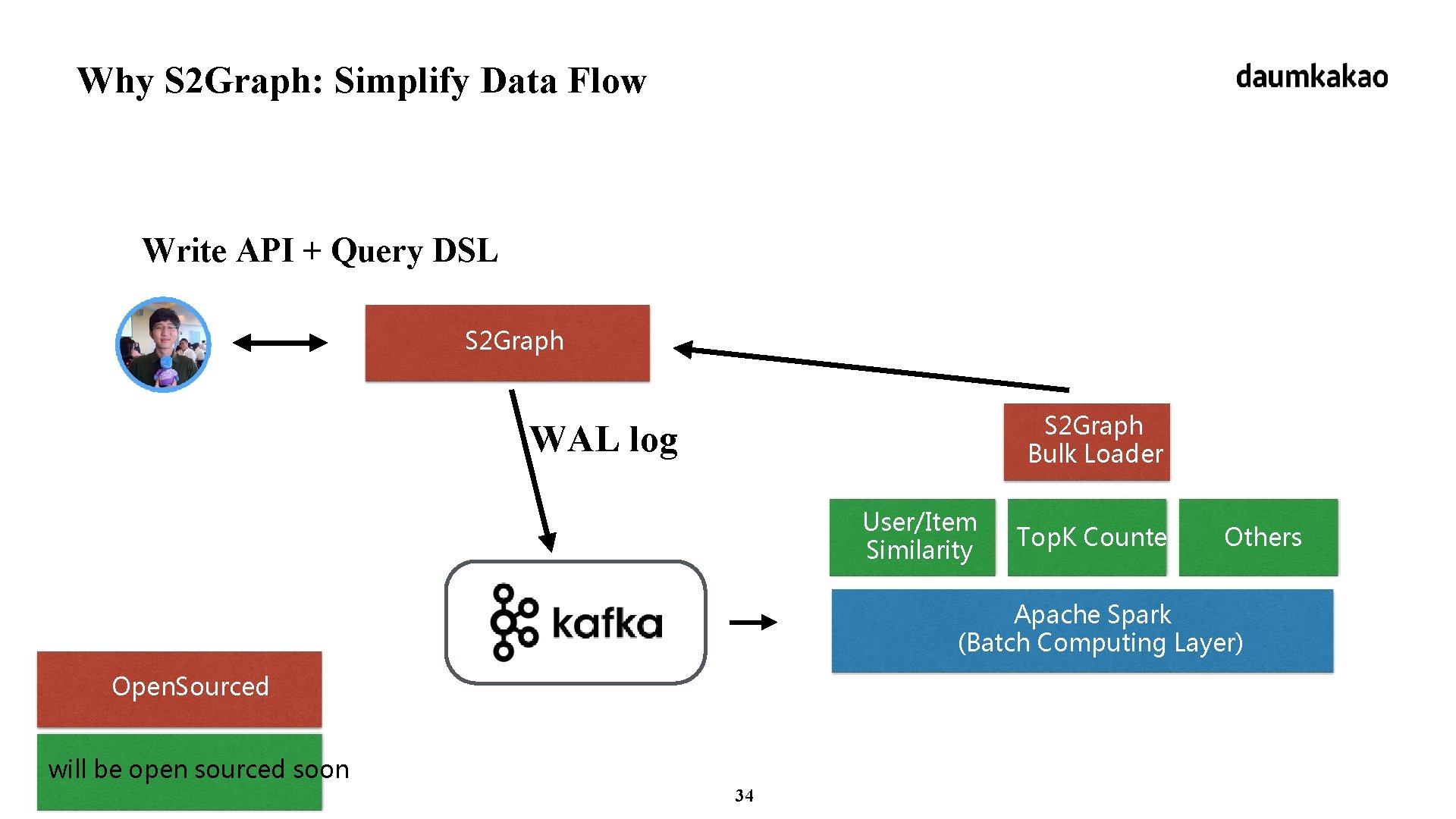

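Why S2Graph: Simplify Data Flow

(Figure: applications use the Write API and Query DSL of S2Graph. The WAL log feeds Apache Spark, the batch computing layer, which computes user/item similarity, TopK counters and other results and writes them back through the Bulk Loader. Components are marked either as open sourced or as to be open sourced soon.)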

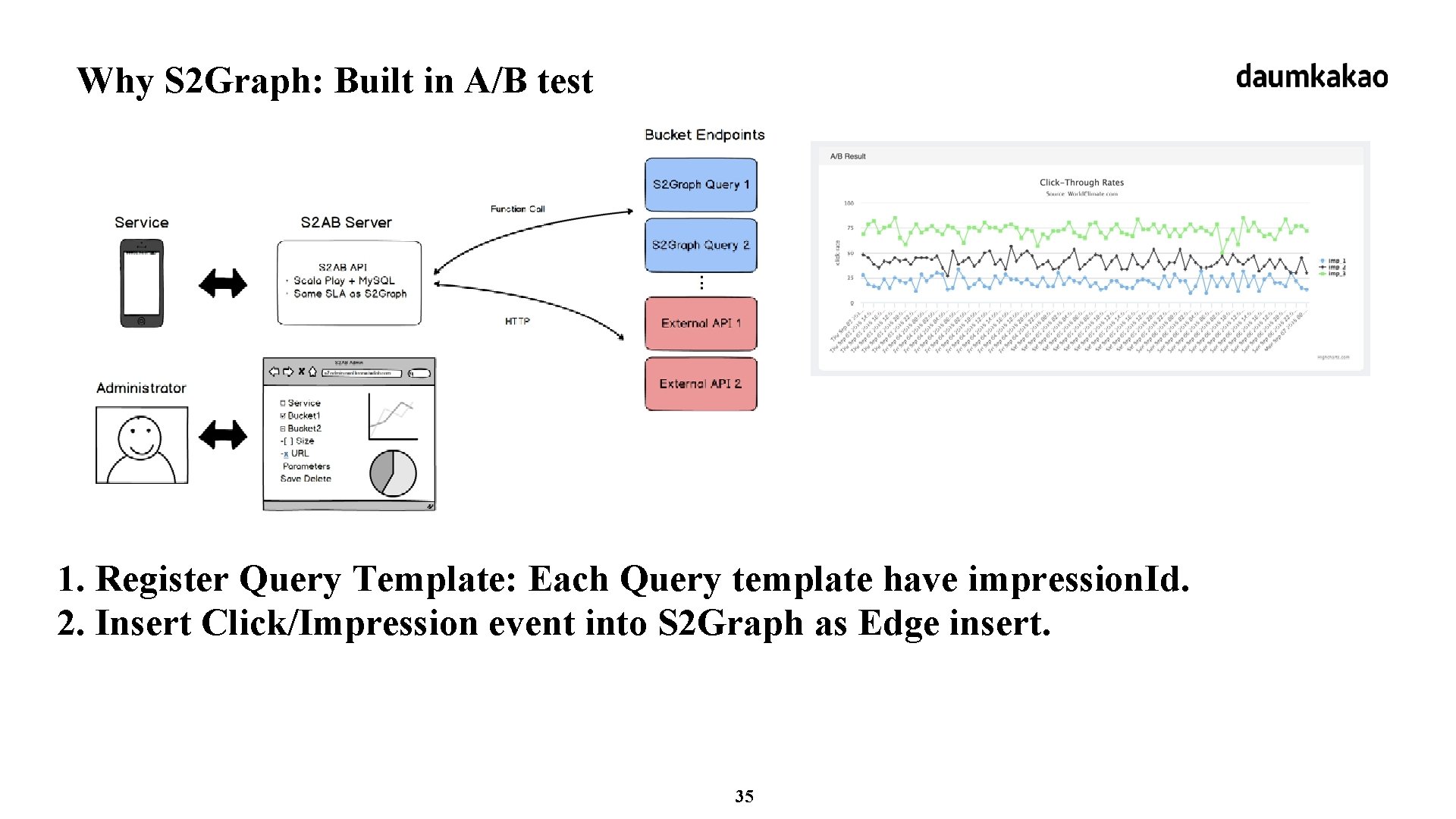

Why S2Graph: Built-in A/B Test

1. Register a query template: each query template has an impressionId.
2. Insert click/impression events into S2Graph as edge inserts.
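
Once impressions and clicks are stored as edges tagged with an impressionId, comparing query templates reduces to counting. The sketch below computes a click-through rate per impressionId from a toy event list; the event tuple layout, the ids and `ctr_by_template` are assumptions for illustration, since the slides do not describe how S2Graph aggregates these events.

```python
from collections import Counter

# Toy events, each imagined as one inserted edge:
# (user_id, impression_id, event_type). Made-up data.
events = [
    (1, "template_A", "impression"), (2, "template_A", "impression"),
    (3, "template_A", "impression"), (4, "template_A", "impression"),
    (1, "template_A", "click"),
    (5, "template_B", "impression"), (6, "template_B", "impression"),
    (5, "template_B", "click"),
]

def ctr_by_template(events):
    """Click-through rate per impressionId: clicks / impressions."""
    impressions, clicks = Counter(), Counter()
    for _user, impression_id, kind in events:
        if kind == "impression":
            impressions[impression_id] += 1
        elif kind == "click":
            clicks[impression_id] += 1
    return {imp: clicks[imp] / count for imp, count in impressions.items()}

print(ctr_by_template(events))
```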

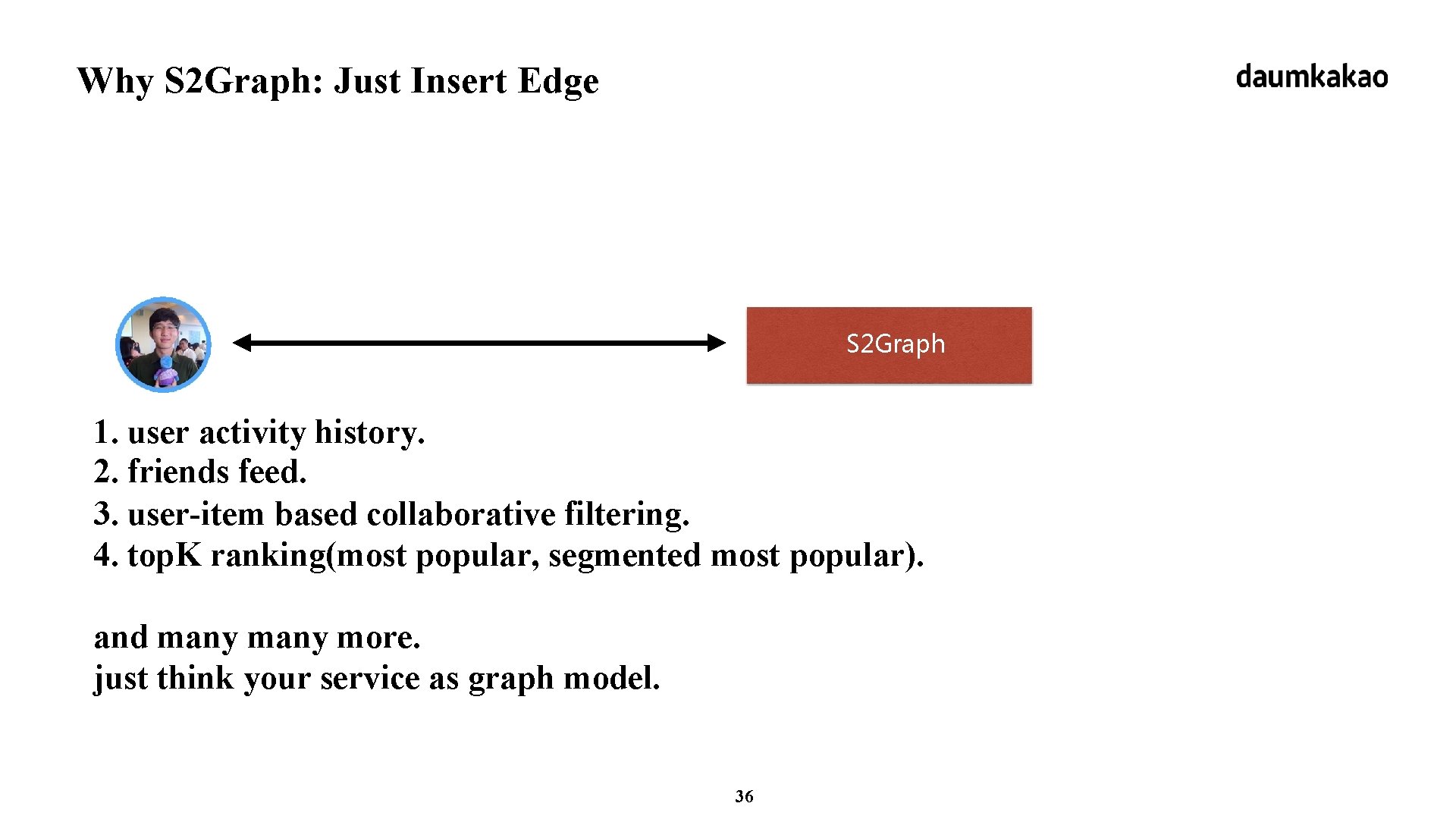

Why S2Graph: Just Insert Edges

Inserting edges into S2Graph is enough to build:

1. user activity history.
2. friends feed.
3. user-item based collaborative filtering.
4. topK ranking (most popular, segmented most popular).

And many more. Just model your service as a graph.

daumkakao S2Graph Internals

Detail: see the previous talk at HBaseCon 2015

1. https://vimeo.com/128203919
2. http://www.slideshare.net/HBaseCon/use-cases-session-5

daumkakao Benchmarks

HBase Table Configuration

1. setDurability(Durability.ASYNC_WAL)
2. setCompressionType(Compression.Algorithm.LZ4)
3. setBloomFilterType(BloomType.ROW)
4. setDataBlockEncoding(DataBlockEncoding.FAST_DIFF)
5. setBlockSize(32768)
6. setBlockCacheEnabled(true)
7. Pre-split by (Integer.MAX_VALUE / regionCount), with regionCount = 120 when creating the table (on 20 region servers).
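
The pre-split rule in item 7 can be worked through numerically. The sketch below divides the non-negative 32-bit integer range into 120 equal-width regions and lists the 119 boundaries between them. How S2Graph actually encodes these boundaries as HBase row-key bytes is not stated on the slide, so `split_points` only illustrates the arithmetic.

```python
INT_MAX = 2**31 - 1  # value of Java's Integer.MAX_VALUE

def split_points(region_count):
    """Boundaries that cut [0, INT_MAX] into region_count equal-width ranges."""
    width = INT_MAX // region_count
    # region_count regions need region_count - 1 boundaries.
    return [width * i for i in range(1, region_count)]

splits = split_points(120)
print(len(splits), splits[0], splits[-1])
```

With 120 regions on 20 region servers, each server starts out with 6 regions.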

HBase Cluster Configuration

• each machine: 8 cores, 32 GB memory, SSD
• hfile.block.cache.size: 0.6
• hbase.hregion.memstore.flush.size: 128 MB
• otherwise the default values from CDH 5.3.1
• s2graph REST server: 4 cores, 16 GB memory

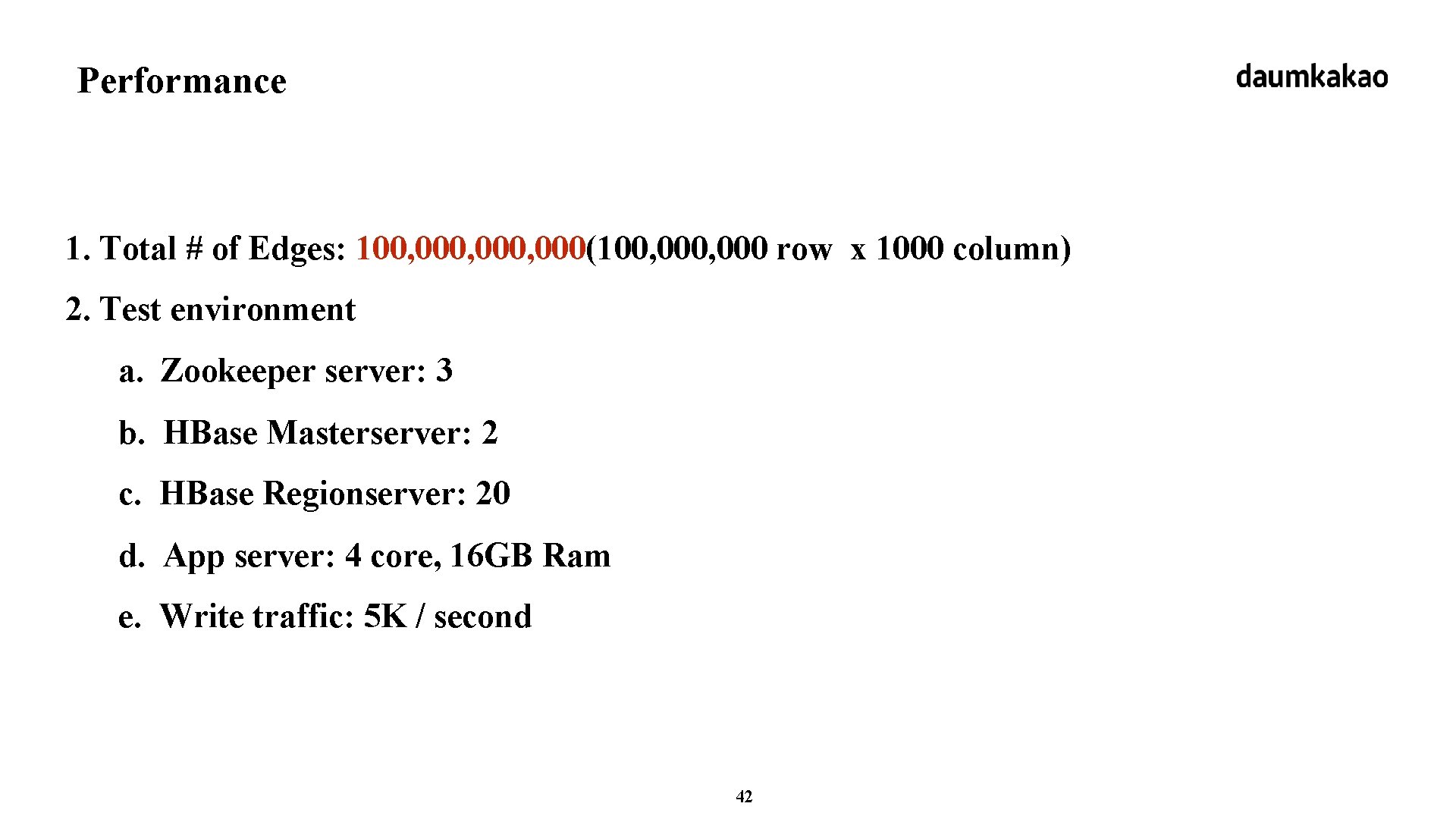

Performance

1. Total # of edges: 100,000,000 (100,000 rows x 1,000 columns)
2. Test environment
   a. ZooKeeper servers: 3
   b. HBase master servers: 2
   c. HBase region servers: 20
   d. App server: 4 cores, 16 GB RAM
   e. Write traffic: 5K / second

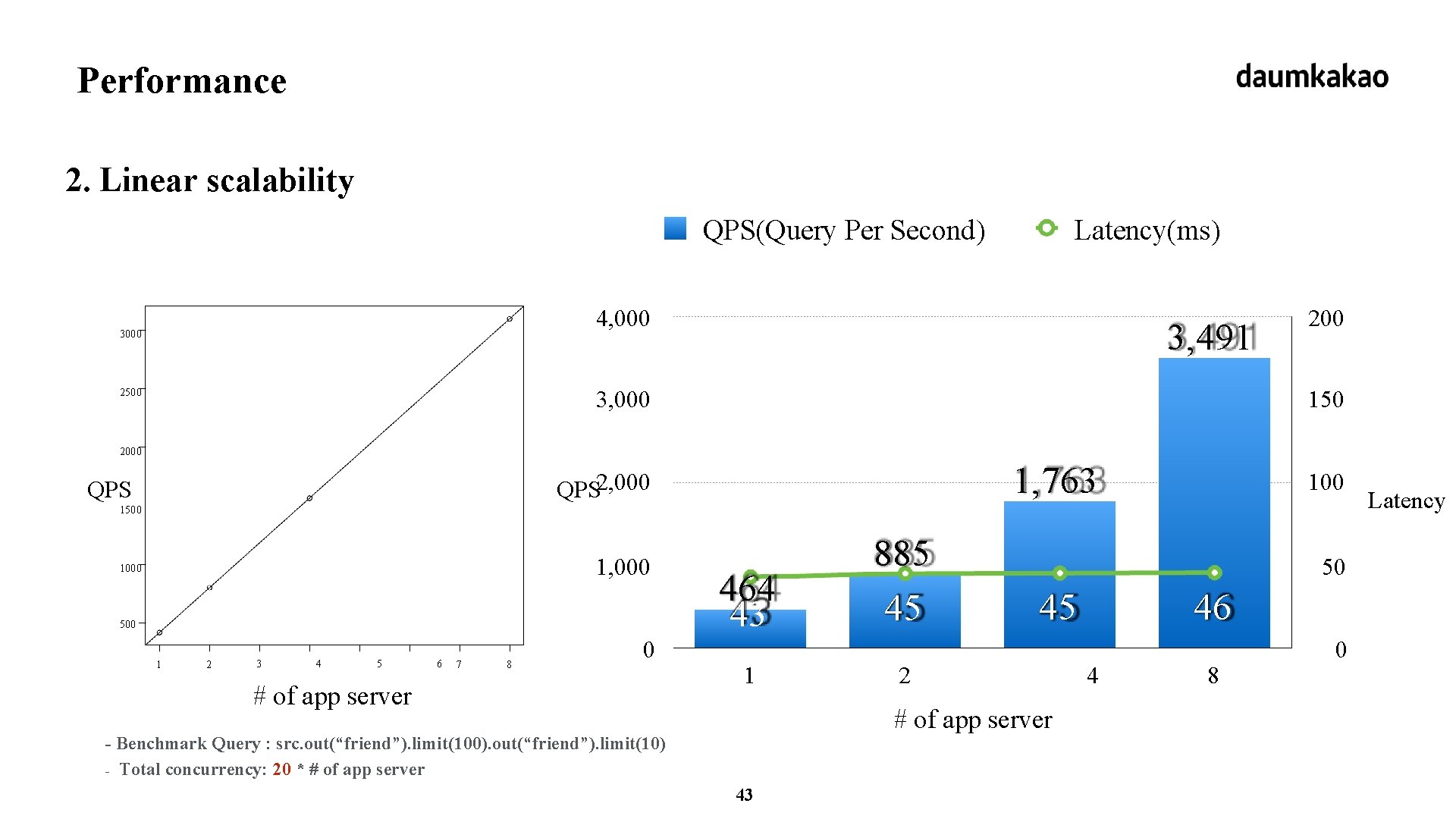

Performance

2. Linear scalability

(Chart: QPS (queries per second) and latency (ms) against the number of app servers.)

- 1 app server: 464 QPS, 43 ms
- 2 app servers: 885 QPS, 45 ms
- 4 app servers: 1,763 QPS, 45 ms
- 8 app servers: 3,491 QPS, 46 ms

- Benchmark query: src.out("friend").limit(100).out("friend").limit(10)
- Total concurrency: 20 * # of app servers

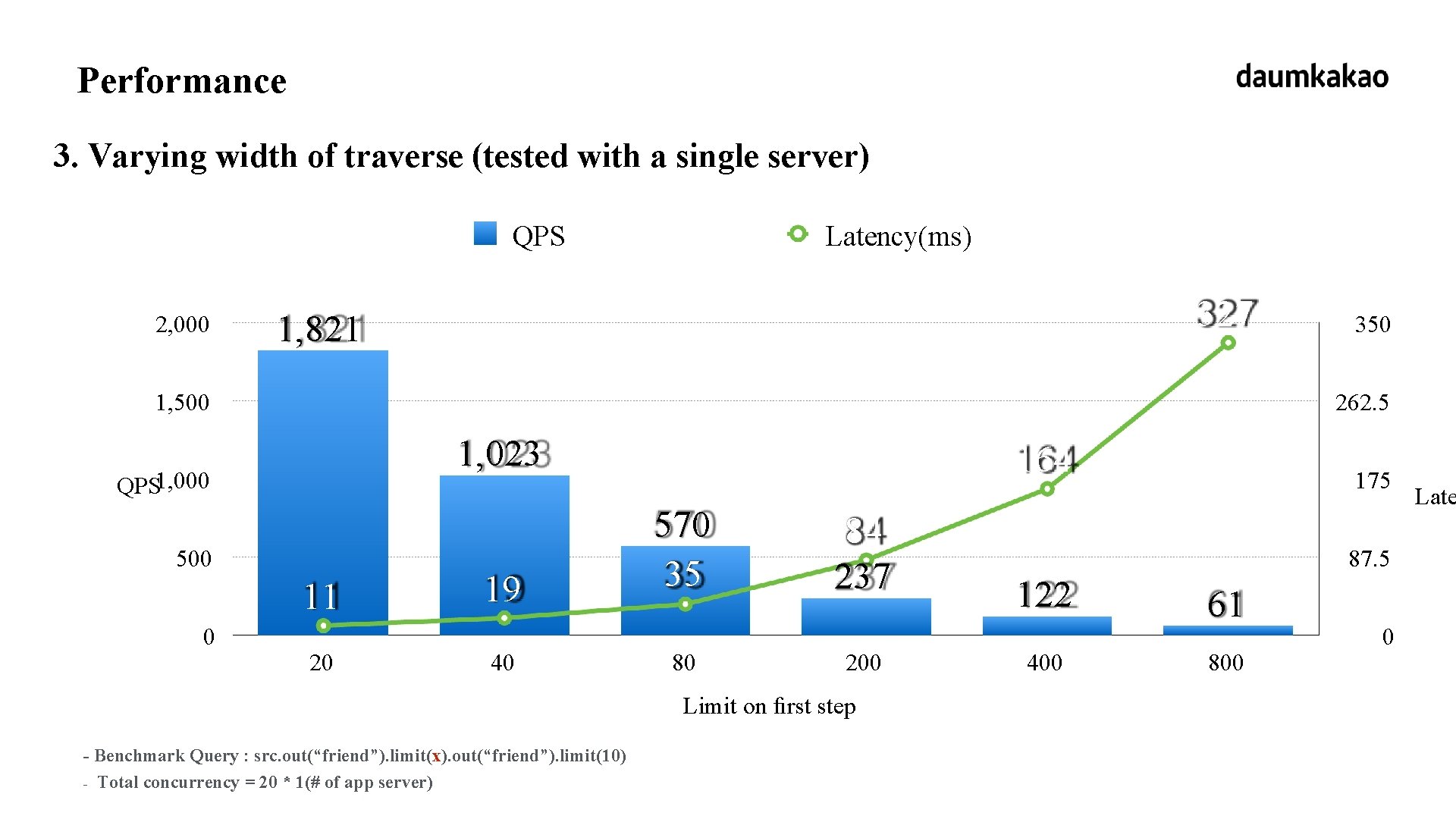

Performance

3. Varying width of traversal (tested with a single server)

(Chart: QPS and latency (ms) against the limit on the first step.)

- limit 20: 1,821 QPS, 11 ms
- limit 40: 1,023 QPS, 19 ms
- limit 80: 570 QPS, 35 ms
- limit 200: 237 QPS, 84 ms
- limit 400: 122 QPS, 164 ms
- limit 800: 61 QPS, 327 ms

- Benchmark query: src.out("friend").limit(x).out("friend").limit(10)
- Total concurrency = 20 * 1 (# of app servers)
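
A quick sanity check on these numbers: with a fixed number of concurrent in-flight requests, throughput should be roughly concurrency divided by latency (Little's law). The sketch below applies that to the chart's data labels, assuming they pair up as (limit, QPS, latency) in the order 20, 40, 80, 200, 400, 800; that pairing is an inference from the chart labels, not something the slide states explicitly.

```python
CONCURRENCY = 20  # "Total concurrency = 20 * 1 (# of app server)"

# (limit on first step, observed QPS, observed latency in ms); pairing assumed.
observed = [
    (20, 1821, 11),
    (40, 1023, 19),
    (80, 570, 35),
    (200, 237, 84),
    (400, 122, 164),
    (800, 61, 327),
]

def predicted_qps(latency_ms, concurrency=CONCURRENCY):
    """Little's law: throughput = in-flight requests / time per request."""
    return concurrency / (latency_ms / 1000.0)

for limit, qps, latency_ms in observed:
    estimate = predicted_qps(latency_ms)
    error = abs(estimate - qps) / qps
    print(f"limit={limit:>3}  observed={qps:>5}  predicted={estimate:8.1f}  error={error:.1%}")
```

Under that assumption every predicted value lands within a few percent of the observed QPS, which supports reading the chart as latency growing in proportion to the search space.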

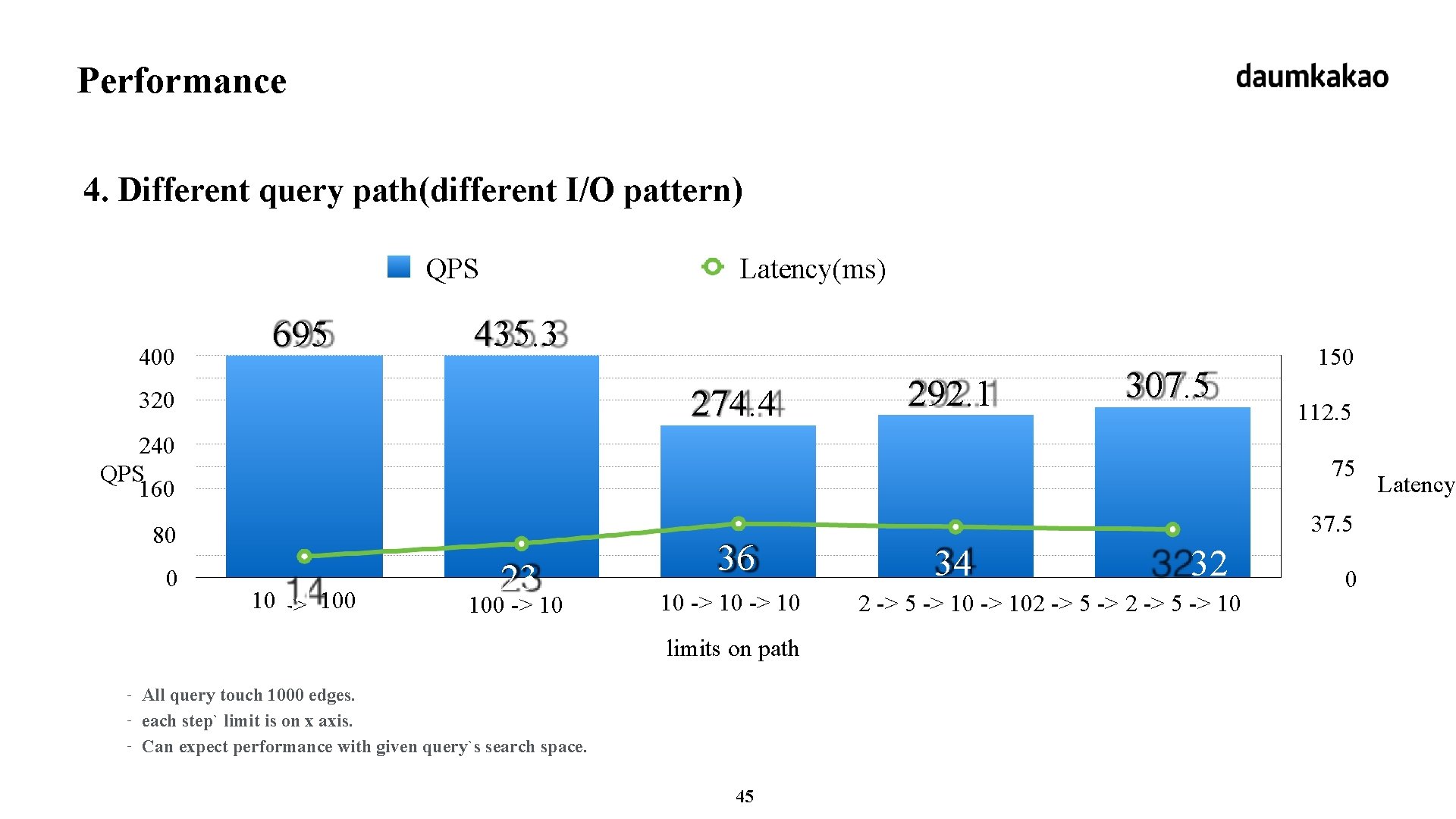

Performance

4. Different query paths (different I/O patterns)

(Chart: QPS and latency (ms) for several query paths; the x axis shows the limits on each path, for example 100 -> 10.)

- All queries touch 1,000 edges. Each step's limit is shown on the x axis.
- Performance can be estimated from the size of a query's search space.

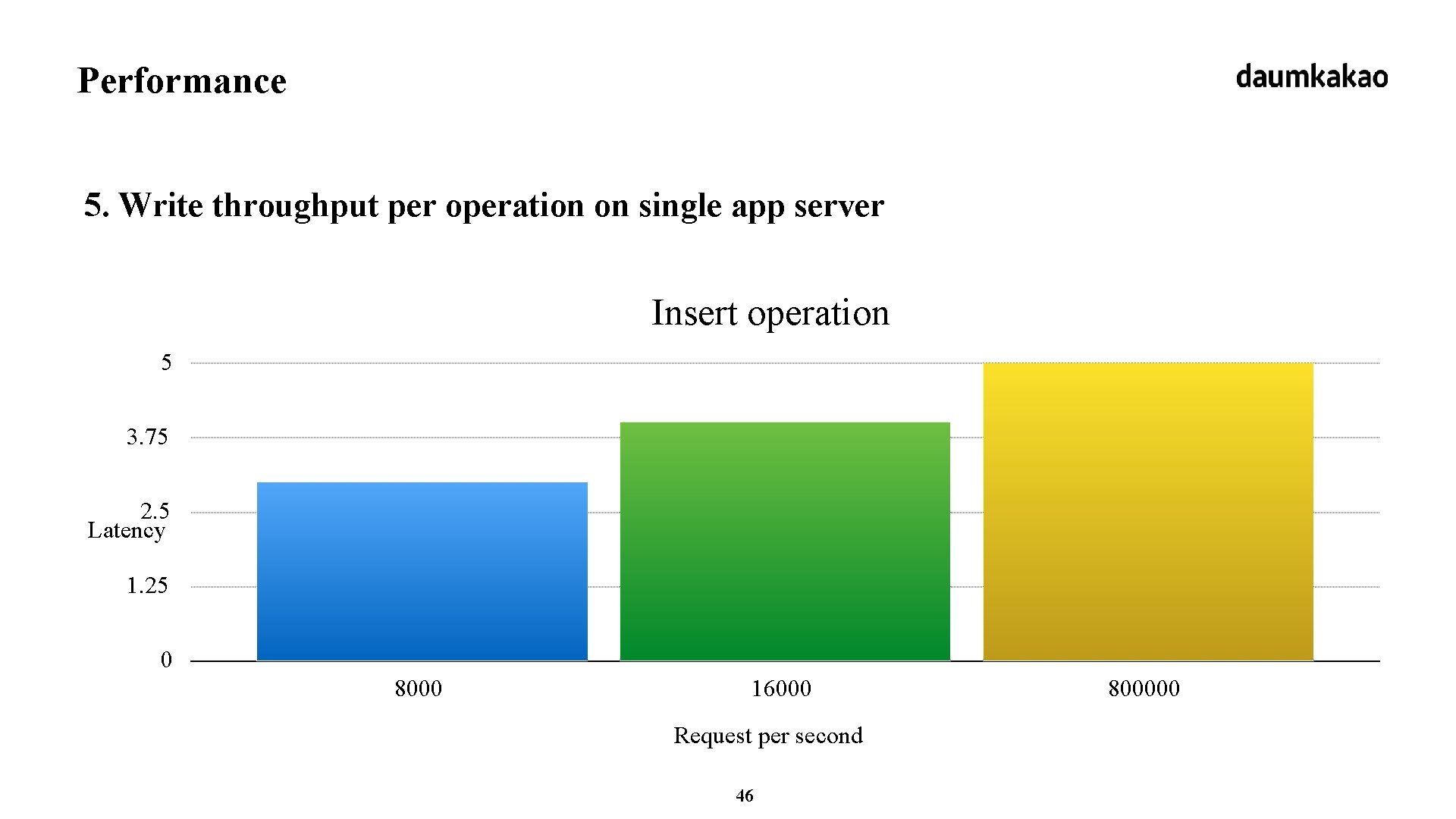

Performance

5. Write throughput per operation on a single app server: insert operation

(Chart: latency against requests per second.)

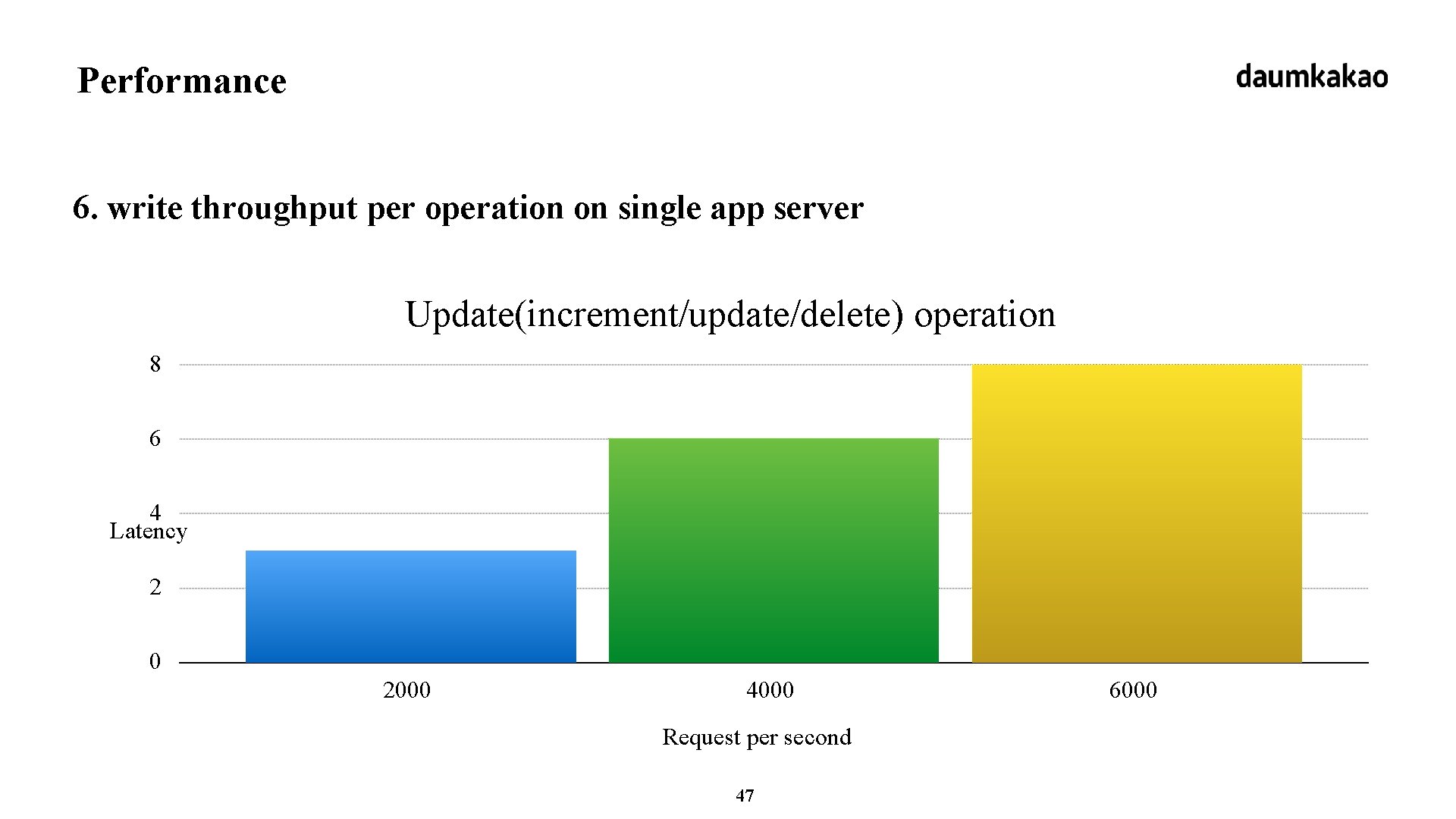

Performance

6. Write throughput per operation on a single app server: update (increment/update/delete) operation

(Chart: latency against requests per second.)

Stats

1. HBase cluster per IDC (2 IDCs)
   - 3 ZooKeeper servers
   - 2 HBase masters
   - (20 + 40) HBase slaves
2. App servers per IDC
   - 10 servers for write only
   - 30 servers for query only
3. Real traffic
   - read: 10K ~ 20K requests per second; currently mostly 2-step queries with limit 100 on the first step
   - write: over 5K ~ 10K requests per second
