DATA MINING By Shubhangi K Patil Head Department

DATA MINING By Shubhangi K. Patil Head, Department of Computer Science 1

Related Concepts • Database/OLTP systems • Fuzzy sets and Fuzzy logic • Information Retrieval • Decision Support systems • Data Warehousing / Dimensional modeling/ OLAP systems • Web search engines • Statistics • Machine learning • Pattern Matching

Database/OLTP(On-line transaction processing) systems A database is a collection of data associated with some organization or enterprise DBMS is the software used to access a database ER model is often used to abstractly view data of DBMS Relational database is a collection of tables consisting of a set of attributes (fields) and a large set of tuples (records). Relational algebra provides a set of operations that can be performed on relations Database query language SQL is an excellent tool for extracting shallow knowledge from data while data mining discovers hidden knowledge If we know what you are looking for query language can be used – manual data mining 3

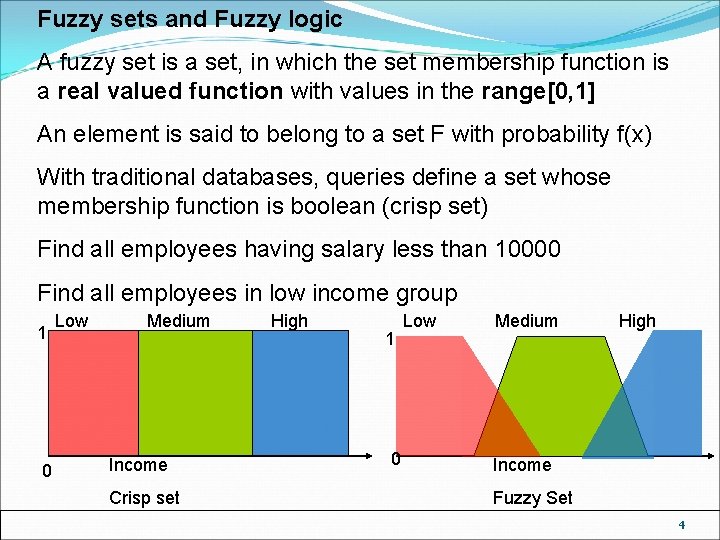

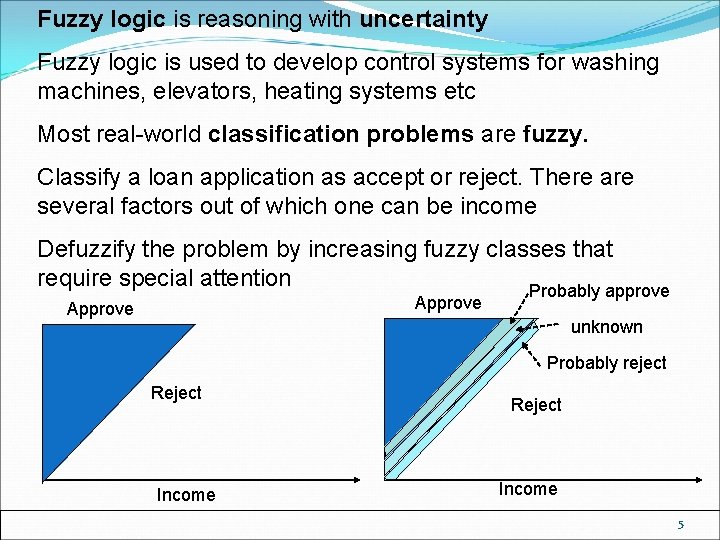

Fuzzy sets and Fuzzy logic A fuzzy set is a set, in which the set membership function is a real valued function with values in the range[0, 1] An element is said to belong to a set F with probability f(x) With traditional databases, queries define a set whose membership function is boolean (crisp set) Find all employees having salary less than 10000 Find all employees in low income group 1 0 Low Medium Income Crisp set High 1 0 Low Medium High Income Fuzzy Set 4

Fuzzy logic is reasoning with uncertainty Fuzzy logic is used to develop control systems for washing machines, elevators, heating systems etc Most real-world classification problems are fuzzy. Classify a loan application as accept or reject. There are several factors out of which one can be income Defuzzify the problem by increasing fuzzy classes that require special attention Probably approve Approve unknown Probably reject Reject Income 5

Information Retrieval IR involves retrieving desired information from textual data IR problems are unstructured documents, approximate search based on keywords and notion of relevance Typical IR requests are classification tasks The retrieval of documents is based on a calculation of similarity measure which describes likelihood that retrieved document is relevant The effectiveness is measured by looking at precision , recall and F-score Precision = [Relevant and Retrieved ] [Retrieved] Recall = [Relevant and Retrieved] F-score= recall x precision [Relevant] (recall+precision)1/2 IR has had a major impact on the development of data mining

Decision Support systems Systems provide specific information needed by management in order to improve decision making process The queries are ad-hoc MIS (Management IS) DSS (Decision support system) EIS (Executive IS) ESS(Executive Support system) Data mining evolved as a set of tools that assist in the overall DSS process DSS gives mangers tools needed to make intelligent decisions

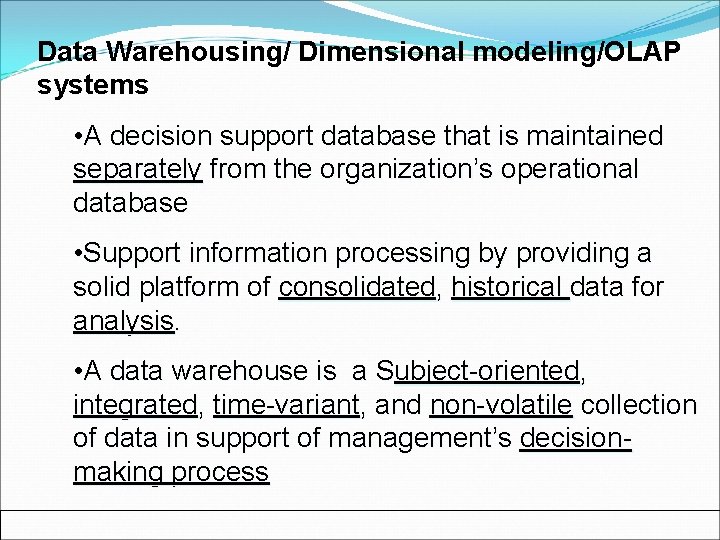

Data Warehousing/ Dimensional modeling/OLAP systems • A decision support database that is maintained separately from the organization’s operational database • Support information processing by providing a solid platform of consolidated, historical data for analysis. • A data warehouse is a Subject-oriented, integrated, time-variant, and non-volatile collection of data in support of management’s decisionmaking process

Subject-oriented -Organized around major subjects, such as customer, supplier, product sales etc. Provide a simple and concise view around particular subject by excluding data that is not useful for decision process Modeled in accordance with decision makers needs and not transaction processing needs Integrated - Constructed by integrating multiple, heterogeneous data sources- relational databases, flat files, on-line transaction records Data cleaning and data integration techniques are applied. Ensure consistency in naming conventions, encoding structures, attribute measures, etc. among different data sources

Time-variant -The time horizon for the data warehouse is significantly longer than that of operational systems – Operational database: current value data – Data warehouse data: provide information from a historical perspective (e. g. , past 5 -10 years) Every key structure in the data warehouse – Contains an element of time, explicitly or implicitly – But the key of operational data may or may not contain “time element”

Non-volatile A physically separate store of data transformed from the operational environment • Operational update of data does not occur in the data warehouse environment – Does not require transaction processing, recovery, and concurrency control mechanisms – Requires only two operations in data accessing: • initial loading of data and access of data

Data Warehouse vs. Heterogeneous DBMS • Traditional heterogeneous DB integration: A query driven approach – Build wrappers/mappings on top of heterogeneous databases – A meta-dictionary is used to translate the query into queries appropriate for individual heterogeneous sites involved, and the results are integrated into a global answer set – Complex information filtering, compete for resource • Data Warehouse update-driven, high performance Information from heterogeneous sources is integrated in advance and stored in warehouses for direct query and analysis

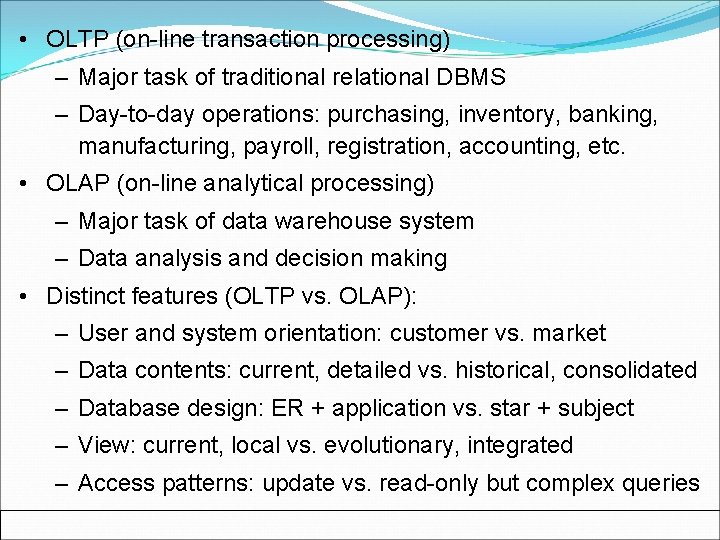

• OLTP (on-line transaction processing) – Major task of traditional relational DBMS – Day-to-day operations: purchasing, inventory, banking, manufacturing, payroll, registration, accounting, etc. • OLAP (on-line analytical processing) – Major task of data warehouse system – Data analysis and decision making • Distinct features (OLTP vs. OLAP): – User and system orientation: customer vs. market – Data contents: current, detailed vs. historical, consolidated – Database design: ER + application vs. star + subject – View: current, local vs. evolutionary, integrated – Access patterns: update vs. read-only but complex queries

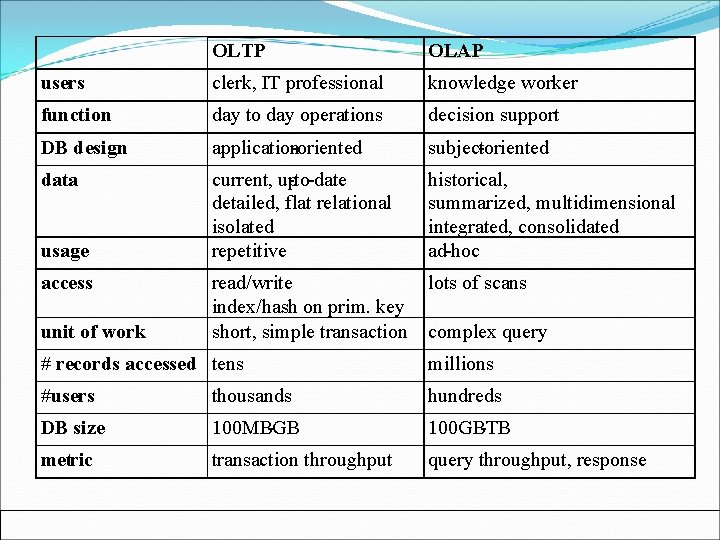

OLTP OLAP users clerk, IT professional knowledge worker function day to day operations decision support DB design application-oriented subject-oriented data current, up-to-date detailed, flat relational isolated repetitive historical, summarized, multidimensional integrated, consolidated ad-hoc read/write index/hash on prim. key short, simple transaction lots of scans usage access unit of work complex query # records accessed tens millions #users thousands hundreds DB size 100 MB-GB 100 GB-TB metric transaction throughput query throughput, response

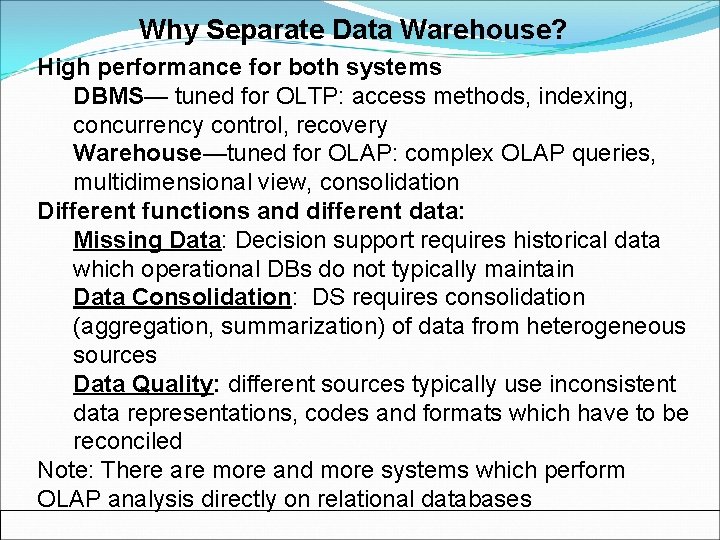

Why Separate Data Warehouse? High performance for both systems DBMS— tuned for OLTP: access methods, indexing, concurrency control, recovery Warehouse—tuned for OLAP: complex OLAP queries, multidimensional view, consolidation Different functions and different data: Missing Data: Decision support requires historical data which operational DBs do not typically maintain Data Consolidation: DS requires consolidation (aggregation, summarization) of data from heterogeneous sources Data Quality: different sources typically use inconsistent data representations, codes and formats which have to be reconciled Note: There are more and more systems which perform OLAP analysis directly on relational databases

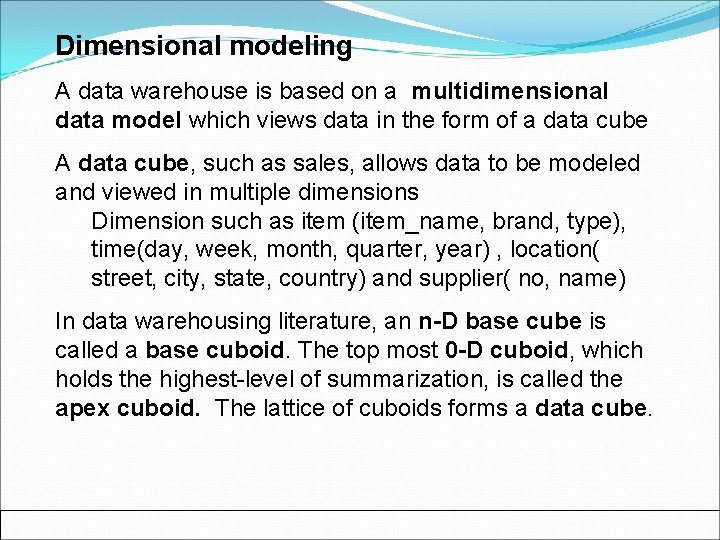

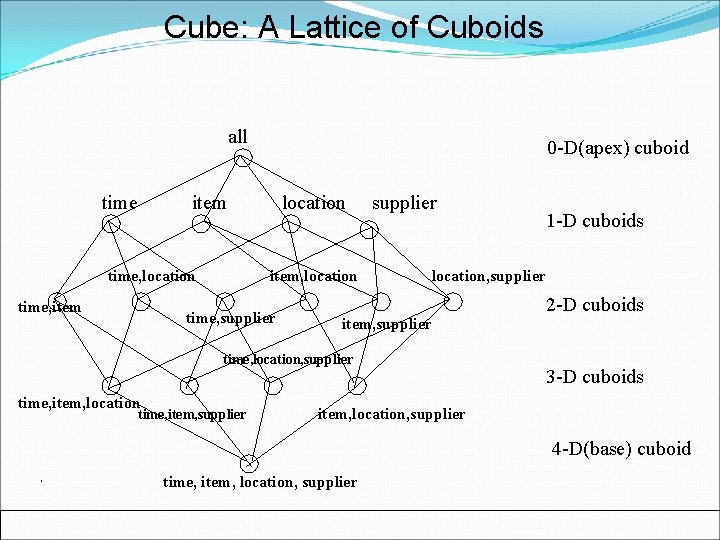

Dimensional modeling A data warehouse is based on a multidimensional data model which views data in the form of a data cube A data cube, such as sales, allows data to be modeled and viewed in multiple dimensions Dimension such as item (item_name, brand, type), time(day, week, month, quarter, year) , location( street, city, state, country) and supplier( no, name) In data warehousing literature, an n-D base cube is called a base cuboid. The top most 0 -D cuboid, which holds the highest-level of summarization, is called the apex cuboid. The lattice of cuboids forms a data cube.

Cube: A Lattice of Cuboids all time 0 -D(apex) cuboid item time, location time, item location item, location time, supplier location, supplier item, supplier time, location, supplier time, item, location time, item, supplier 1 -D cuboids 2 -D cuboids 3 -D cuboids item, location, supplier 4 -D(base) cuboid time, item, location, supplier

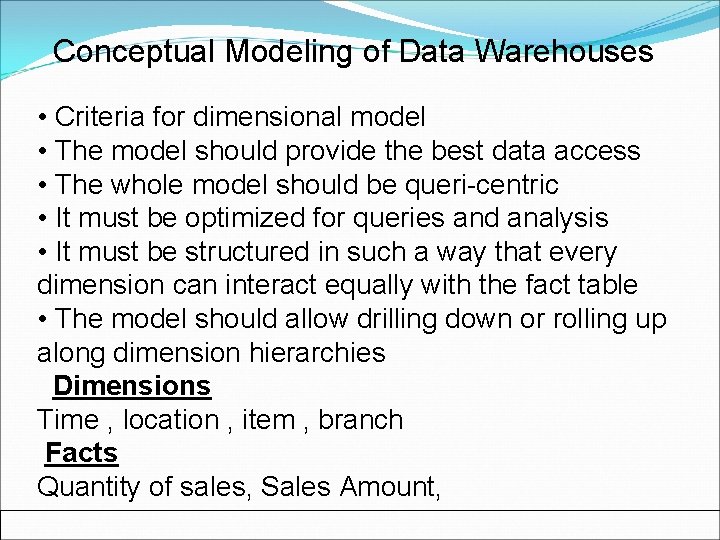

Conceptual Modeling of Data Warehouses • Criteria for dimensional model • The model should provide the best data access • The whole model should be queri-centric • It must be optimized for queries and analysis • It must be structured in such a way that every dimension can interact equally with the fact table • The model should allow drilling down or rolling up along dimension hierarchies Dimensions Time , location , item , branch Facts Quantity of sales, Sales Amount,

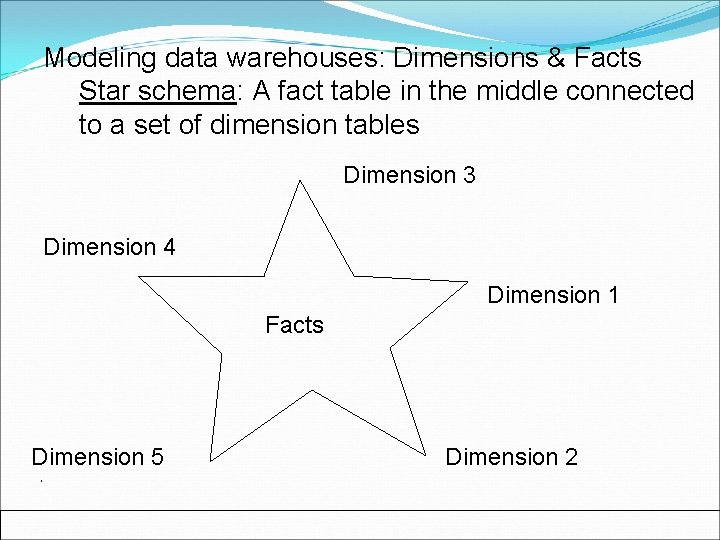

Modeling data warehouses: Dimensions & Facts Star schema: A fact table in the middle connected to a set of dimension tables Dimension 3 Dimension 4 Dimension 1 Facts Dimension 5 Dimension 2

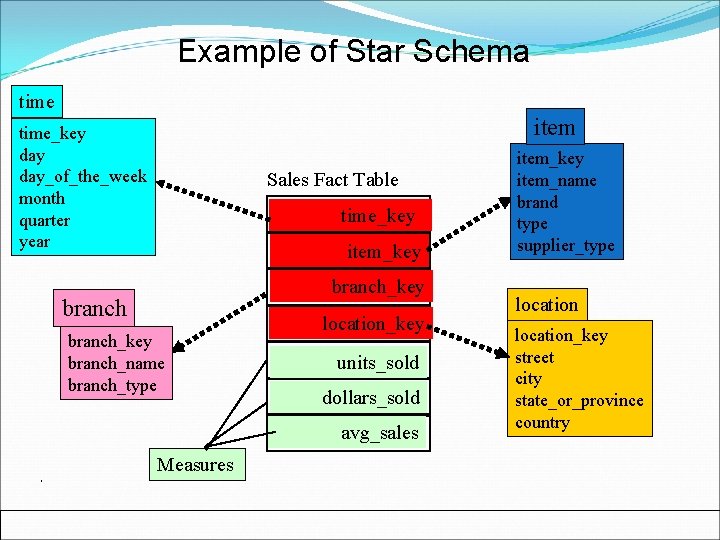

Example of Star Schema time item time_key day_of_the_week month quarter year Sales Fact Table time_key item_key branch_key branch_name branch_type location_key units_sold dollars_sold avg_sales Measures item_key item_name brand type supplier_type location_key street city state_or_province country

Conceptual Modeling of Data Warehouses Design Decisions Choosing the process – selecting the subjects for the first set of logical structures to be designed Choosing the grain- determining the level of detail for the data in the data structures Identifying and conforming the dimensionschoosing the dimensions to be included Choosing the facts-Selecting the metrics or units of measurements to be included Choosing the duration of the database- determining how far back in time one should go for historical data

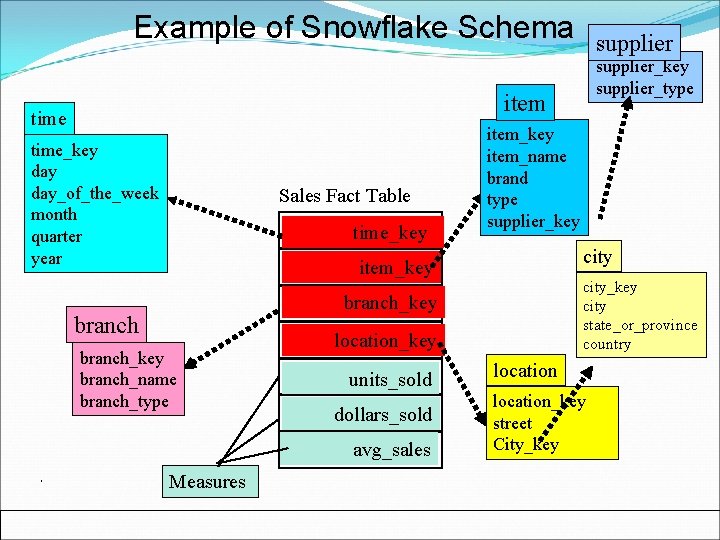

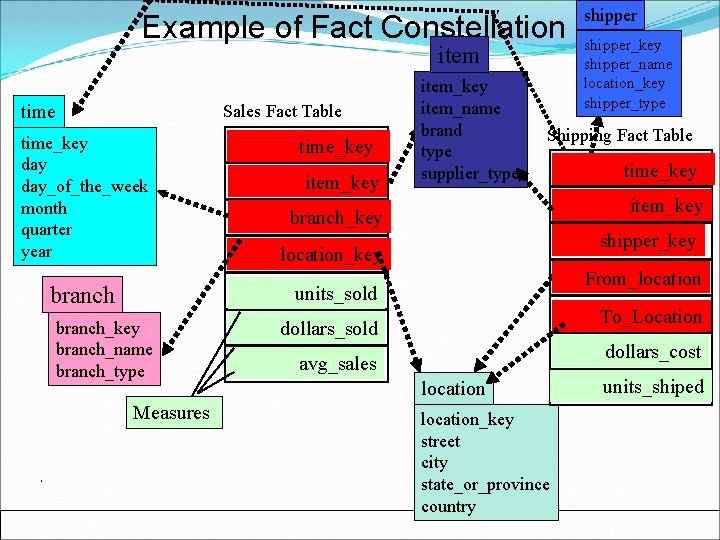

Snowflake schema: A refinement of star schema where some dimensional hierarchy is normalized into a set of smaller dimension tables, forming a shape similar to snowflake ‘Snowflaking’ or the normalization of the dimension tables can be done in many different ways The item and location dimensions have been normalized to give rise to two more tables supplier and city Fact constellations: Multiple fact tables share dimension tables, viewed as a collection of stars, therefore called galaxy schema or fact constellation

Example of Snowflake Schema supplier_key supplier_type item time_key day_of_the_week month quarter year Sales Fact Table time_key item_name brand type supplier_key city item_key city state_or_province country branch_key branch_name branch_type location_key units_sold dollars_sold avg_sales Measures location_key street City_key

Example of Fact Constellation item time Sales Fact Table time_key day_of_the_week month quarter year branch time_key Sales Fact Table item_key item_name brand type supplier_type Measures shipper_key shipper_name location_key shipper_type Shipping Fact Table branch_key time_key Sales Fact Table item_key shipper_key location_key From_location units_sold branch_key branch_name branch_type shipper To_Location dollars_sold dollars_cost avg_sales location_key street city state_or_province country units_shiped

Inside a dimension table Dimension table key- Primary key that uniquely identifies rows in the table Table is wide-has many columns or attributes Fewer number of records- fewer rows than fact table-dimension table in hundreds –facts in millions Textual attributes- attributes are of textual formatthey represent textual descriptions of a business dimension Attributes not directly related-Some attributes are not related to one another such as brand supplier_type in tem dimension table but both are attributes of item

Not Normalized- For efficient query performance, it is best if the query takes attributes from dimension table and goes directly to the fact table. If dimension tables are normalized, query travels through intermediary tables affecting performance. Dimension tables are flattened out normalized in star schema while some dimensions are normalized in snowflake schema Drilling down , Rolling up- The attributes in a dimension table provide the ability to get to the details from higher levels of aggregation to lower levels of details Get the total sales by city and drill down to total sales by zip or roll up to total sales by state

Multiple Hierarchies- Dimension tables often provide for multiple hierarchies so that drilling down may be performed along any of the multiple hierarchies The marketing department may have its own way of classifying items by defining item types while the accounting department may group items by defining its own item types. In this case the item table will contain both the attributes marketing_item_type and accounting_item_type

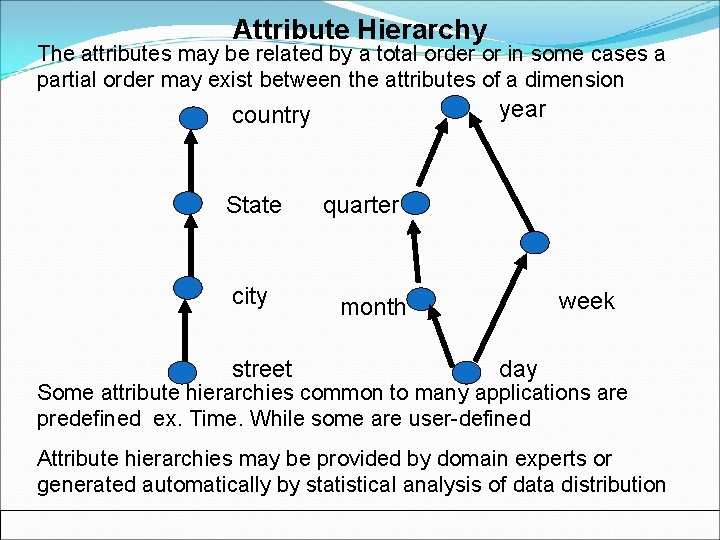

Attribute Hierarchy The attributes may be related by a total order or in some cases a partial order may exist between the attributes of a dimension year country State city street quarter week month day Some attribute hierarchies common to many applications are predefined ex. Time. While some are user-defined Attribute hierarchies may be provided by domain experts or generated automatically by statistical analysis of data distribution

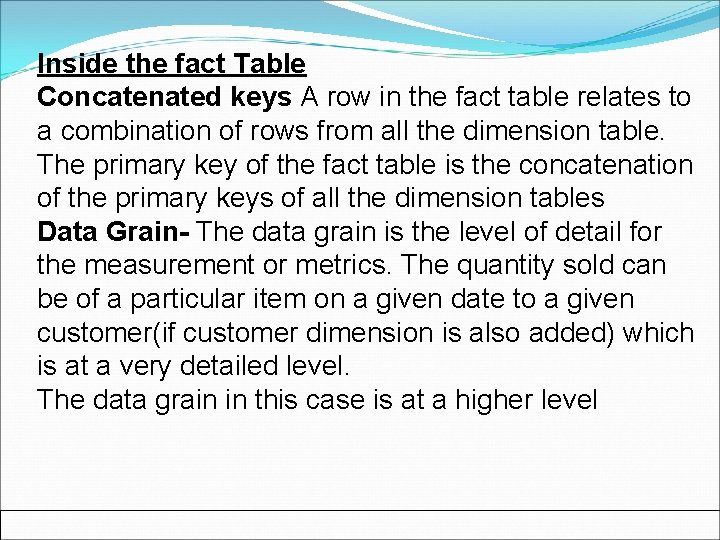

Inside the fact Table Concatenated keys A row in the fact table relates to a combination of rows from all the dimension table. The primary key of the fact table is the concatenation of the primary keys of all the dimension tables Data Grain- The data grain is the level of detail for the measurement or metrics. The quantity sold can be of a particular item on a given date to a given customer(if customer dimension is also added) which is at a very detailed level. The data grain in this case is at a higher level

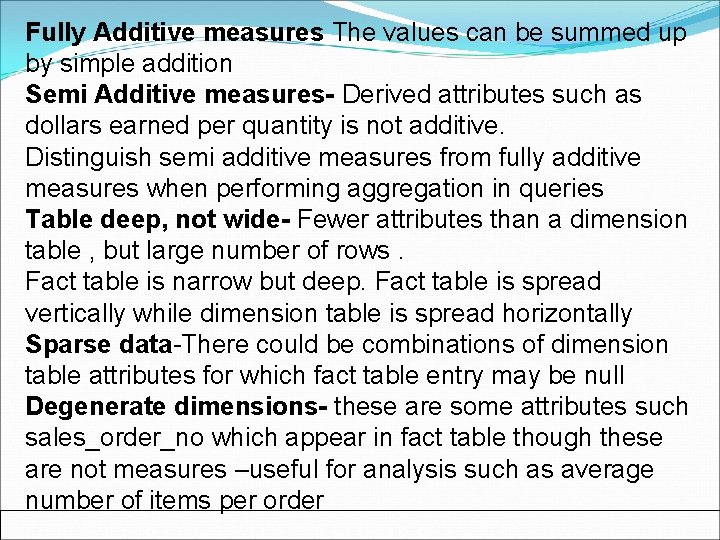

Fully Additive measures The values can be summed up by simple addition Semi Additive measures- Derived attributes such as dollars earned per quantity is not additive. Distinguish semi additive measures from fully additive measures when performing aggregation in queries Table deep, not wide- Fewer attributes than a dimension table , but large number of rows. Fact table is narrow but deep. Fact table is spread vertically while dimension table is spread horizontally Sparse data-There could be combinations of dimension table attributes for which fact table entry may be null Degenerate dimensions- these are some attributes such sales_order_no which appear in fact table though these are not measures –useful for analysis such as average number of items per order

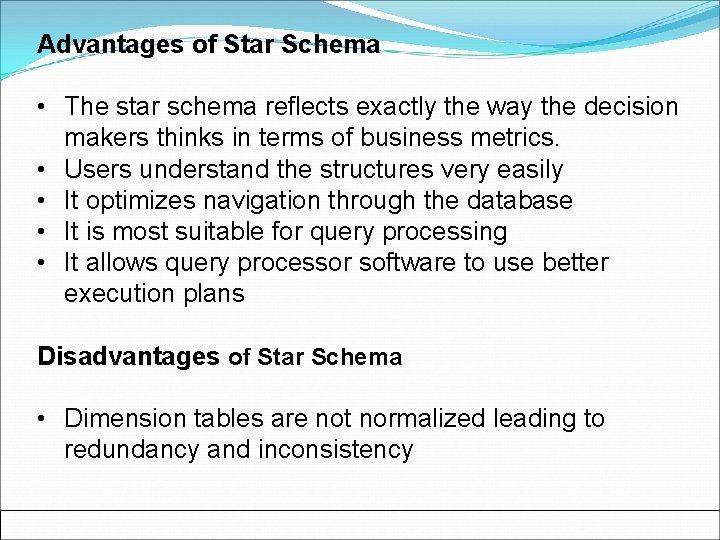

Advantages of Star Schema • The star schema reflects exactly the way the decision makers thinks in terms of business metrics. • Users understand the structures very easily • It optimizes navigation through the database • It is most suitable for query processing • It allows query processor software to use better execution plans Disadvantages of Star Schema • Dimension tables are not normalized leading to redundancy and inconsistency

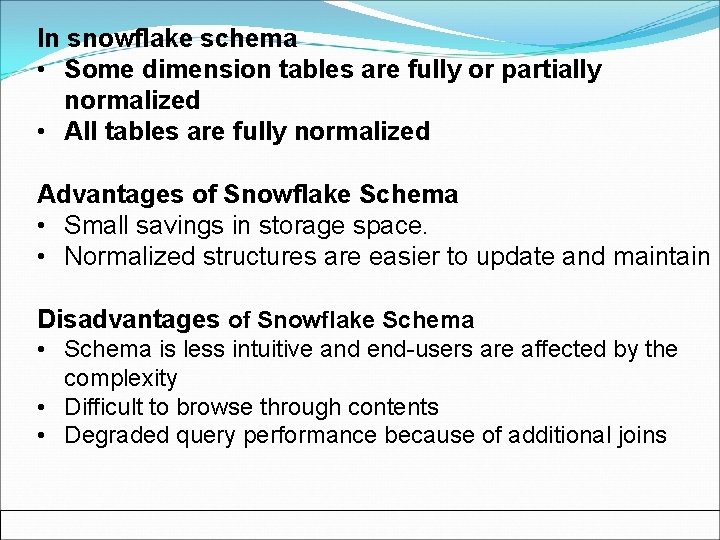

In snowflake schema • Some dimension tables are fully or partially normalized • All tables are fully normalized Advantages of Snowflake Schema • Small savings in storage space. • Normalized structures are easier to update and maintain Disadvantages of Snowflake Schema • Schema is less intuitive and end-users are affected by the complexity • Difficult to browse through contents • Degraded query performance because of additional joins

Advantages of having a Data warehouse §Provides a competitive advantage by allowing performance measurement and critical adjustments which helps in winning over a competitor §Enhance business productivity as the information quickly available can be used to take corrective action §It facilitates customer relationship management by using the information across all lines of business, all departments and all markets. §It brings about cost reduction by tracking trends, patterns and exceptions in a consistent and reliable manner To design a warehouse one need to understand analyze business needs

Typical OLAP Operations Roll up (drill-up): summarize data by climbing up hierarchy or by dimension reduction • Drill down (roll down): reverse of roll-up from higher level summary to lower level summary or detailed data, or introducing new dimensions • Slice and dice: project and select Slice-selection on one dimension of cube resulting in a subcube Dice-selection operation on two or more dimensions • Pivot (rotate): reorient the cube, visualization, 3 D to series of 2 D planes Other operations • Drill across: involving (across) more than one fact table • Drill through: through the bottom level of the cube to its back-end relational tables (using SQL)

Web search engines are used to search data on the web Conventional search engines suffer from several problems Abundance-There is lot of data but very less relevant data Limited Coverage- It is impossible to search the entire web, search engines use indices which are periodically updated Limited query – Access based on simple keyword based searching- Other properties such as popularity of pages can also be used Limited Customization- Results do not depend on the background or the knowledge of the user- Customization based using user profile Web mining consists of content , structure and usage mining

Statistics Sampling is used to tackle scalability problems. Statistical inference using a subset of the population Statistical measures of central tendency such as mean median etc are heavily used in data mining. They are in effective in the presence of outliers Statistical measures of data dispersion are also used for understanding data distribution Correlation analysis is used in Association mining Regression analysis is used in Prediction

Machine learning examines how to write program that learns During learning process, data mining algorithms use sample data to create a model for the data Testing phase applies the model to remaining and future data Pattern Matching It is a type of classification Data can be placed into correct classes when the similarity with the pattern is established

Thank You

- Slides: 39