Congestion Control for High Bandwidth-Delay Product Networks Dina Katabi, Mark Handley, Charlie Rohrs SIGCOMM 2002, August 19-23, 2002, Pittsburgh, Pennsylvania, USA, Copyright 2002 ACM 1-58113-570-X/02/0008 Presented by Oleg Rekutin and Joe Frate 1

Outline • Introduction • Definitions • Previous Work • TCP Shortcomings • XCP Design Rationale • XCP Protocol • Efficiency Controller and Fairness Controller • Stability Analysis • Performance Comparison with other schemes (RED, CSFQ, etc.) • Differential Bandwidth Allocation • Security • Deployment 2

Introduction Main idea: to provide a new transport layer protocol, the eXplicit Control Protocol (XCP), that performs well when there is a high bandwidth-delay product. • XCP Algorithm Goals – The primary goal is to solve TCP’s limitations in high-bandwidth, large-delay environments. – To acquire spare bandwidth more efficiently. – To decouple fairness control from utilization control so as to allocate bandwidth more fairly, particularly among flows with substantially different RTTs that are competing for the same bottleneck. – To distinguish error losses from congestion losses. – To facilitate detection of misbehaving sources. – To provide an incentive for both end users and network providers to deploy the protocol. 3

Definitions • congestion window – the number of bits a sender is allowed to have in flight (as determined by the sender). • bandwidth – the capacity of a link, in bits/second. • delay – the round-trip time (RTT) of a packet, including acknowledgements, in seconds. • bandwidth-delay product – the product of the bandwidth (bits/second) and the delay (seconds). The result is the number of bits a sender must keep in flight to achieve 100% utilization of the link (i.e., it completely fills the pipe). • congestion control dynamics – abstracted as a control loop with feedback delay. In the context of congestion control, AQM would be a controller, and the controlled signal is the aggregate traffic traversing the link. 4
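
As a small illustration of the bandwidth-delay product definition above, here is a minimal sketch in Python. The function names and the example link (1 Gb/s, 100 ms RTT, 1000-byte packets) are hypothetical and chosen only for the arithmetic.

```python
def bandwidth_delay_product_bits(bandwidth_bps: float, rtt_s: float) -> float:
    """Bits that must be in flight to keep the link fully utilized."""
    return bandwidth_bps * rtt_s


def bdp_packets(bandwidth_bps: float, rtt_s: float, packet_bytes: int) -> float:
    """The same quantity expressed in packets of a given size."""
    return bandwidth_delay_product_bits(bandwidth_bps, rtt_s) / (8 * packet_bytes)


if __name__ == "__main__":
    # Hypothetical link: 1 Gb/s with a 100 ms round-trip time.
    bits = bandwidth_delay_product_bits(1e9, 0.1)
    print(f"{bits:.0f} bits = {bits / 8 / 1e6:.1f} MB in flight")
    print(f"{bdp_packets(1e9, 0.1, 1000):.0f} packets of 1000 bytes")
```

For this hypothetical link the pipe holds about 12.5 MB, which is the window a single sender would need in order to fill it.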

Previous Work • Additive Increase Multiplicative Decrease (AIMD) • Multiplicative Increase Multiplicative Decrease (MIMD) • Slow Start • Active Queue Management (AQM) • TCP Congestion Control • Explicit Congestion Notification proposal (ECN). 5

Additive Increase Multiplicative Decrease (AIMD) • Upon congestion (a lost packet in TCP), the sender multiplicatively cuts its send rate (halves it) each time congestion is signaled, until there is no congestion. • Once there is no congestion, the sender linearly (additively) increases its send rate, to gradually approach the maximum throughput the network can support. 6
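
The AIMD rule above can be sketched in a few lines of Python. This is a toy model rather than TCP itself: the congestion signal is simply "rate exceeds a fixed capacity", and the names `aimd_step` and `simulate` are hypothetical.

```python
from typing import List


def aimd_step(rate: float, congested: bool,
              increase: float = 1.0, decrease_factor: float = 0.5) -> float:
    """One AIMD update: halve on congestion, otherwise add a constant."""
    if congested:
        return rate * decrease_factor
    return rate + increase


def simulate(capacity: float, rounds: int, start: float = 1.0) -> List[float]:
    """Record the rate at the start of each round for a single AIMD sender."""
    rate = start
    history = []
    for _ in range(rounds):
        history.append(rate)
        # Toy congestion signal: the sender exceeded the link capacity.
        rate = aimd_step(rate, congested=rate > capacity)
    return history


if __name__ == "__main__":
    print(simulate(capacity=10.0, rounds=6, start=8.0))
```

The resulting sawtooth (a slow climb followed by a halving) is the behaviour the later slides on TCP inefficiency refer to.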

Multiplicative Increase Multiplicative Decrease (MIMD) • Sender multiplicatively increases (doubles) its send rate until congestion is indicated. • Sender multiplicatively decreases (halves) its send rate until there is no congestion. 7

Slow Start • Slow start uses MIMD at the start of a transmission, to try to quickly reach its threshold. 8

TCP Congestion Control • Sender has a “congestion window” which dictates how many packets can be sent (in conjunction with the receiver window). • Initially, use slow start to increase this congestion window. • When congestion is experienced, multiplicatively decrease this congestion window until there is no congestion, and then additively increase packet flow to reacquire available bandwidth. • A packet loss indicates congestion to the sender. When a packet times out, the TCP sender assumes congestion, and begins decreasing its congestion window multiplicatively. 9

Explicit Congestion Notification proposal (ECN) A proposal to add explicit congestion information to IP. RFC 2481, K. Ramakrishnan (AT&T Labs Research) and S. Floyd (LBNL), January 1999. Category: Experimental. Copyright © The Internet Society (1999) 10

TCP Instability • “Mathematical analysis of current congestion control algorithms reveals that, regardless of the queuing scheme, as the delay-bandwidth product increases, TCP becomes oscillatory and prone to instability”. • More of a problem as more and more high-speed links and large-delay links (such as satellite links) are added to the Internet. • RED, REM, Proportional Integral Controller, Virtual Queue, and AVQ (when link capacity is large enough) have all been shown to become oscillatory and prone to instability as the bandwidth-delay product increases. • Low et al. argue that any AQM scheme is unlikely to maintain stability over high-capacity or large-delay links. 11

TCP Inefficiency • TCP’s use of an additive increase policy limits its ability to acquire spare bandwidth to one packet per RTT. • The bandwidth-delay product of a single flow over very high-bandwidth links may be many thousands of packets. • As the bandwidth-delay product increases, it takes much longer for TCP to increase its rate of transmission (it could take thousands of RTTs to get back to full utilization after congestion). 12
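
To put a number on "thousands of RTTs", here is a back-of-the-envelope sketch. It assumes a single flow whose window equals the bandwidth-delay product, is halved once, and then grows by one packet per RTT with no further loss. The link parameters are hypothetical.

```python
def rtts_to_recover(bandwidth_bps: float, rtt_s: float, packet_bytes: int) -> float:
    """RTTs for additive increase (1 packet per RTT) to climb from half the
    bandwidth-delay product back to the full bandwidth-delay product."""
    bdp_packets = bandwidth_bps * rtt_s / (8 * packet_bytes)
    return bdp_packets / 2


def seconds_to_recover(bandwidth_bps: float, rtt_s: float, packet_bytes: int) -> float:
    """The same recovery time expressed in seconds."""
    return rtts_to_recover(bandwidth_bps, rtt_s, packet_bytes) * rtt_s


if __name__ == "__main__":
    # Hypothetical path: 10 Gb/s, 100 ms RTT, 1500-byte packets.
    rtts = rtts_to_recover(10e9, 0.1, 1500)
    secs = seconds_to_recover(10e9, 0.1, 1500)
    print(f"{rtts:.0f} RTTs, about {secs / 60:.0f} minutes")
```

Under these assumptions the flow needs roughly 41,667 RTTs, which is on the order of an hour, to return to full utilization after a single loss.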

TCP Inefficiency (continued) • Increase in link capacity does not improve the transfer delay of short flows • With short flows, there is not enough time for Slow Start to acquire spare bandwidth. 13

TCP Unfairness • TCP’s throughput is inversely proportional to the RTT • As more flows traverse satellite links or wireless WANs (links with high delay), there will be unfair allocation among flows with substantially different RTTs competing for the same bottleneck capacity. 14

TCP Impact • Only beginning to see problems of high bandwidth-delay products in the current Internet. • For example, TCP over satellite links has revealed network utilization issues and TCP’s bias (unfairness) against long-RTT flows. • Currently these problems are addressed using ad hoc mechanisms. • Problem will grow over time (more high-speed and high-delay links). • A new protocol is needed. 15

eXplicit Control Protocol (XCP) • A solution is the eXplicit Control Protocol (XCP), which remains efficient, fair, scalable, and stable as the bandwidth-delay product increases. • XCP is stable and efficient regardless of the link capacity, round trip delay, or the number of sources. • XCP outperforms TCP in conventional environments. • XCP decouples utilization control from fairness control. • XCP achieves fair bandwidth allocation, high utilization, small standing queue size, and near-zero packet drops, with both steady and highly varying traffic. • XCP does not maintain any per-flow state in routers and requires few CPU cycles per packet. • Can be deployed alongside TCP (“TCP-friendly”). 16

XCP Design Rationale • Think of design without caring about backward compatibility or deployment (what would congestion control architecture look like if designing from scratch). • Want tight and nimble congestion control, so as to reach maximum throughput as quickly as possible. • Want fair allocation, especially in environments where different flows have different RTTs. 17

XCP Design Rationale: more precise congestion control • TCP’s use of packet loss as a congestion indicator is effectively a binary signal, meaning that in TCP there are only two signals: “there is no congestion” or “there is congestion”. • Tight and nimble congestion control requires more congestion information than this. We want routers to send a congestion signal that indicates the degree of congestion. • By allowing routers to explicitly give the sender more detail about the congestion state, XCP enables senders to decrease their sending windows quickly during high congestion, and to make only small reductions when close to capacity. 18

XCP Design Rationale: source adjustments based on the feedback loop • Congestion control becomes unstable with large feedback delay (characteristic of a control loop with feedback delay). • To counter this, sources must slow down as the feedback delay increases. 19

XCP Design Rationale: source adjustments based on the feedback loop (continued) • How much the sources slow down should be adjusted according to the delay in the feedback loop, so that as delay increases, sources change their sending rates more slowly. • How should sources adjust their rates based on the delay, so that stability is established? XCP conjectures from control theory that: – Congestion feedback based on rate-mismatch should be inversely proportional to delay. – Congestion feedback based on queue-mismatch should be inversely proportional to the square of the delay. 20

XCP Design Rationale: Maximum utilization with unknown and changing parameters • The XCP design should allow maximum utilization in a way that is independent of unknown parameters, particularly the number of flows present on the link. • Control theory states that a controller must react as quickly as the dynamics of the controlled signal; in the context of network traffic, this means that XCP needs to react quickly as the number of flows on the link changes. 21

XCP Design Rationale: Maximum utilization with unknown and changing parameters (continued) • AQM (currently used as a controller) seeks to match aggregate traffic with link capacity. With TCP it does so using constant parameters. Since the number of flows on a link is not constant, AQM cannot be fast enough to operate with an arbitrary number of TCP flows; i.e., AQM cannot control link utilization independently of the number of flows, because its constants implicitly depend on that number. • The design needs to make the dynamics of the aggregate traffic independent of the number of flows. 22

Decouple efficiency control and fairness control • The design needs to allow maximum utilization of a link (aggregate of all flows on that link) independent of how many flows are in it. • The design needs to allow fair bandwidth allocation among all flows, which depends on the number of flows on that link. • To achieve both of these goals in an optimal way, need to decouple “efficiency control” from “fairness control”. We need an algorithm to maximize aggregate utilization, and an algorithm to maximize fairness across flows on the link. 23

Decouple efficiency control and fairness control (continued) • “Efficiency control” is the control of utilization or congestion; i.e., it attempts to maximize utilization of the link by all the flows using that link. • “Fairness control” is the control of fairness of link utilization among the flows using that link; i.e., it attempts to distribute utilization of that link among the flows in a way that is fair (the definition of “fair” varies). 24

Decouple efficiency control and fairness control (continued) • Having two controllers simplifies the design and analysis of each controller, since the requirements of each are reduced (vs. having one controller that does both tasks). • Separate controllers also allow changes to be made to one controller without affecting the other. • Separate controllers facilitate implementation of a differential service policy (so that some flows get more bandwidth than others), as only the fairness controller would need to be modified. 25

XCP Protocol • XCP protocol is a joint design of end-systems (senders and receivers) and routers. • Window-based congestion control protocol intended for best-effort traffic. • Flexible architecture that can easily support differentiated services. • Initial presentation assumes a pure XCP network (later it will be shown that XCP and TCP can coexist). 26

Protocol: Framework (how control information flows in the network) • Senders maintain a congestion window “cwnd”, and a round trip time “rtt”. • These values are communicated to routers via a congestion header in every packet. • Routers monitor the input traffic rate to each of their output queues. • Based on the difference between the link bandwidth and its input traffic rate, the router signals to the sender to increase or decrease its congestion window, by modifying the congestion header of the data packets. This happens for each router along the path. • The router divides feedback among flows based on their “cwnd” and “rtt” values, so that the bandwidth allocation converges to fairness. • This feedback (congestion header) is sent back to the sender in an acknowledgement. When the sender receives this acknowledgement, it updates its “cwnd” value accordingly. 27

Protocol: The Congestion Header • Carried by every XCP packet. • Used to communicate a flow’s state to the routers and feedback from the routers on to the receivers. • Field “H_cwnd” is the sender’s current congestion window. • Field “H_rtt” is the sender’s current RTT estimate. • “H_cwnd” and “H_rtt” are filled in by the sender and never modified in transit. • Field “H_feedback” takes positive or negative values, and is modified by routers along the path. These modifications indicate to the sender whether to increase or decrease its congestion window. 28

Protocol: The XCP Sender • Maintains congestion window of outstanding packets (“cwnd”), and an estimate of the RTT (“rtt”). • Attaches congestion header to packets upon departure. Sets “H_cwnd” to its current “cwnd” and “H_rtt” to its current “rtt”. • In the first packet of a flow, “H_rtt” is set to zero (the sender does not yet have a valid estimate). • Sets “H_feedback” to the desired increase in the congestion window. With a desired rate “r”, the desired increase in the congestion window is (r * rtt – cwnd), divided by the number of packets in the current congestion window. • If bandwidth is available, this “H_feedback” initialization allows the sender to reach the desired rate after just one RTT. • XCP responds to packet losses in the same way as TCP (packet losses are rare in XCP). 29
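
The sender-side bookkeeping above might be sketched as follows. This is an illustrative model, not the authors' implementation. It assumes that "the number of packets" means the packets in the current congestion window, and that the window update on each acknowledgement is cwnd = max(cwnd + H_feedback, packet size), a rule the slides do not state. The class and method names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class XcpSender:
    cwnd: float         # congestion window, bytes
    rtt: float          # RTT estimate, seconds (0.0 before the first estimate)
    packet_size: float  # bytes

    def initial_feedback(self, desired_rate: float) -> float:
        """H_feedback requested per packet for a desired rate in bytes/second:
        (r * rtt - cwnd) spread over the packets in the current window."""
        packets_in_window = max(self.cwnd / self.packet_size, 1.0)
        return (desired_rate * self.rtt - self.cwnd) / packets_in_window

    def on_ack(self, h_feedback: float) -> None:
        """Apply the feedback echoed in an ACK.
        Assumed rule: the window never shrinks below one packet."""
        self.cwnd = max(self.cwnd + h_feedback, self.packet_size)


if __name__ == "__main__":
    sender = XcpSender(cwnd=10_000.0, rtt=0.1, packet_size=1_000.0)
    fb = sender.initial_feedback(desired_rate=1_000_000.0)  # 1 MB/s
    for _ in range(10):       # one window's worth of ACKs, feedback unchanged
        sender.on_ack(fb)
    print(fb, sender.cwnd)
```

If no router reduces the requested feedback, one window of acknowledgements brings cwnd to r * rtt, which matches the "one RTT" claim on the slide.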

Protocol: The XCP Receiver • Similar to TCP receiver. • Only difference is that when acknowledging a packet, it copies the congestion header from the data packet to its acknowledgement (so that the sender can get precise congestion feedback). 30
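
A minimal sketch of the congestion header and the receiver's echo follows. The field names mirror the slides; the `Packet` type, `make_ack`, and `router_update` are hypothetical. In particular, the rule that a router only ever lowers the feedback already in the header is an assumption not stated on the slides.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class CongestionHeader:
    h_cwnd: float      # sender's congestion window, set by the sender
    h_rtt: float       # sender's RTT estimate, set by the sender
    h_feedback: float  # requested change, may be modified by routers


@dataclass(frozen=True)
class Packet:
    seq: int
    header: CongestionHeader
    is_ack: bool = False


def router_update(header: CongestionHeader, router_feedback: float) -> CongestionHeader:
    """Assumed rule: a router overwrites H_feedback only with a smaller value,
    so the header ends up carrying the feedback of the bottleneck."""
    if router_feedback < header.h_feedback:
        return replace(header, h_feedback=router_feedback)
    return header


def make_ack(data_packet: Packet) -> Packet:
    """Receiver side: copy the congestion header unchanged into the ACK."""
    return Packet(seq=data_packet.seq, header=data_packet.header, is_ack=True)


if __name__ == "__main__":
    hdr = CongestionHeader(h_cwnd=10_000.0, h_rtt=0.1, h_feedback=9_000.0)
    hdr = router_update(hdr, 2_000.0)   # a congested router lowers the feedback
    print(make_ack(Packet(seq=1, header=hdr)))
```

Because the header is immutable here, H_cwnd and H_rtt cannot be changed in transit, which mirrors the "never modified" rule for those fields.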

The XCP Router: The Control Laws • Job of XCP router is to compute the feedback to maximize aggregate link utilization and to maximize fairness. • XCP does not use dropped packets to indicate congestion, so its controllers do not drop packets themselves. • Congestion leading to dropped packets is rare in XCP; when it does occur, a separate dropping policy (DropTail, etc.) is used. • XCP uses an “efficiency controller” to compute maximum aggregate utilization, and a “fairness controller” to compute fairness. 31

The XCP Router: The Control Laws (continued) • Both controllers compute their estimates over the average RTT of the flows traversing the link (smooths the burstiness of a window-based control protocol). • XCP makes control decisions every average RTT (this is the control interval). Needs to observe results of previous control decisions before making a new control decision. • Maintains per-link estimation-control timer of the average RTT on that link. When this timer times out, the router updates its estimates and control decisions. 32

Protocol: The Efficiency Controller • Purpose is to maximize link utilization while minimizing drop rate and persistent queues. • Does not care about fairness among flows. • Computes a desired increase or decrease in the number of bytes that the aggregate traffic transmits in a control interval (average RTT); i.e., the aggregate increase or decrease of the congestion windows. 33

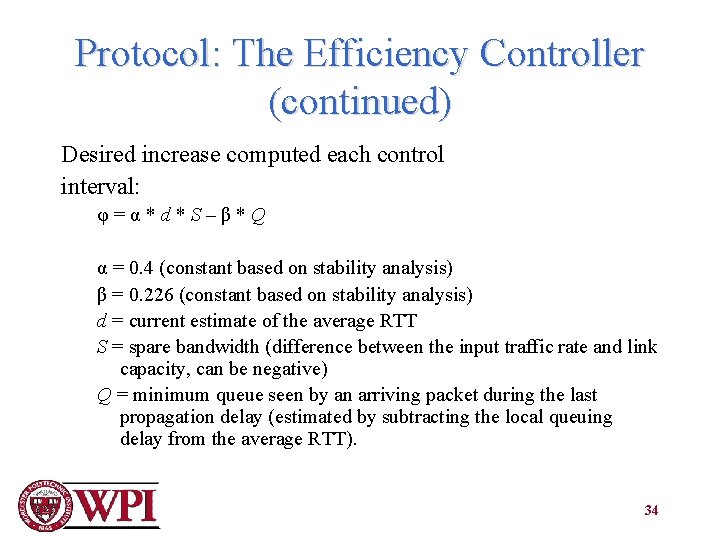

Protocol: The Efficiency Controller (continued) Desired increase computed each control interval: φ = α * d * S - β * Q • α = 0.4 (constant based on stability analysis) • β = 0.226 (constant based on stability analysis) • d = current estimate of the average RTT • S = spare bandwidth (link capacity minus input traffic rate; can be negative) • Q = persistent queue size: the minimum queue seen by an arriving packet during the last propagation delay (the propagation delay is estimated by subtracting the local queuing delay from the average RTT). 34

Protocol: The Efficiency Controller (continued) • φ = α * d * S - β * Q • The first term is proportional to the spare bandwidth S (a positive S increases φ and therefore the congestion windows; a negative S decreases them). • Feedback is also given when input traffic matches the capacity, since the second term is proportional to the persistent queue Q (a longer queue lowers φ, which allows persistent queues to be drained). • Spare bandwidth S is multiplied by the average RTT (d), since the feedback is in bytes. • φ is the aggregate feedback: it indicates only how to change the aggregate traffic on the link; the per-packet “H_feedback” values are derived from it by the fairness controller. 35
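
The efficiency controller's control law is short enough to sketch directly. The constants are the ones given on the slide. The function name, the byte-based units, and the sign convention for S (capacity minus input rate, so positive S means spare capacity) are assumptions made for illustration.

```python
ALPHA = 0.4    # constant from the slide
BETA = 0.226   # constant from the slide


def aggregate_feedback(avg_rtt_s: float, capacity_Bps: float,
                       input_rate_Bps: float, persistent_queue_bytes: float) -> float:
    """phi = alpha * d * S - beta * Q, in bytes per control interval.

    S is taken as capacity minus input rate (bytes/second).
    """
    spare = capacity_Bps - input_rate_Bps
    return ALPHA * avg_rtt_s * spare - BETA * persistent_queue_bytes


if __name__ == "__main__":
    # Hypothetical 10 Mb/s link (1.25e6 bytes/s), average RTT 100 ms.
    print(aggregate_feedback(0.1, 1.25e6, 1.0e6, 0.0))      # underused link
    print(aggregate_feedback(0.1, 1.25e6, 1.25e6, 5000.0))  # full link, standing queue
```

In the first call the link is underused, so φ is positive and asks for more traffic. In the second the input matches capacity but a 5000-byte standing queue makes φ negative, which drains the queue.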

Protocol: The Fairness Controller • Used to allocate bandwidth increase or decrease across individual flows to achieve fairness. • Uses AIMD to converge to fairness (same principle used by TCP). • Compute per-packet feedback according to the policy, ensures fairness as long as φ is not 0 (or close to 0): – if φ > 0, allocate it so that the increase in throughput of all flows is the same. – if φ < 0, allocate it so that the decrease in throughput of a flow is proportional to its current throughput. 36

Protocol: The Fairness Controller (continued) • What happens when φ is 0 or close to 0 (optimal efficiency)? Need to prevent fairness convergence from stalling. • “Bandwidth Shuffling”: Simultaneous allocation and de-allocation of bandwidth such that the total traffic rate (and consequently the efficiency) does not change, yet the throughput of each individual flow changes gradually to approach the flow’s fair share. 37

Protocol: The Fairness Controller (continued) Bandwidth Shuffling traffic is computed as follows: h = max(0, γ * y - |φ|) • y is the input traffic in an average RTT • γ is a constant set to 0.1 38

Protocol: The Fairness Controller (continued) • With “h = max(0, γ * y - |φ|)”, it is ensured that at least 10% of the traffic is redistributed every average RTT (to converge to fairness) according to AIMD. • 10% is a tradeoff between the time to converge to fairness and the disturbance the shuffling imposes on a system that is around optimal efficiency. 39
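
The shuffling formula can be checked with a few numbers. This sketch uses the slide's constant γ = 0.1. The function name and the example traffic volume are hypothetical.

```python
GAMMA = 0.1  # constant from the slide


def shuffled_traffic(input_traffic_bytes: float, phi: float) -> float:
    """h = max(0, gamma * y - |phi|): bytes to reallocate per control interval."""
    return max(0.0, GAMMA * input_traffic_bytes - abs(phi))


if __name__ == "__main__":
    y = 125_000.0  # hypothetical input traffic in one average RTT, bytes
    for phi in (0.0, 10_000.0, -20_000.0):
        print(phi, shuffled_traffic(y, phi))
```

When φ is zero the full 10% is shuffled. As |φ| grows, the aggregate feedback itself already redistributes traffic, so h shrinks and reaches zero once |φ| exceeds γ * y.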

Protocol: The Fairness Controller (continued) • Next we need to reshuffle the traffic. • Traffic is redistributed according to AIMD. • Done by computing per-packet feedback that allows the Fairness Controller (FC) to enforce the policies to converge to fairness. • Increase law is additive and decrease law is multiplicative, so compute feedback for a packet i as a combination of a positive feedback p_i and a negative feedback n_i: – H_feedback_i = p_i - n_i 40

Protocol: The Fairness Controller (continued) • First, compute case when the aggregate feedback is positive (φ > 0). • Want to increase throughput of all flows by the same amount. So for any flow i, increase in throughput is going to be proportional to the same constant. • Since window-based protocol, want to compute change in congestion window rather than the change in throughput. • The change in the congestion window of flow i is the change in its throughput multiplied by its RTT. • Hence, the change in the congestion window of flow i should be proportional to the flow’s RTT. 41

Protocol: The Fairness Controller (continued) • Next step is to translate this desired change of congestion window to per-packet feedback, that is reported in the congestion header. • Total change in congestion window of a flow is the sum of the per-packet feedback it receives. • So per-packet feedback is obtained by dividing the change in congestion window by the expected number of packets from flow i that the router sees in a control interval d. 42

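Protocol: The Fairness Controller (continued) • The expected number of packets from flow i seen in a control interval is proportional to the flow’s congestion window divided by its packet size (both in bytes), cwnd_i / s_i, and inversely proportional to its round-trip time, rtt_i. • Hence the per-packet positive feedback p_i is proportional to the square of the flow’s RTT and inversely proportional to its congestion window divided by its packet size: p_i ∝ rtt_i^2 / (cwnd_i / s_i). 43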

Protocol: The Fairness Controller (continued) • So, positive feedback p_i is given by: – p_i = ε_p * rtt_i^2 * s_i / cwnd_i – ε_p is a constant • Total increase in traffic rate is: – (h + max(φ, 0)) / d – “max(φ, 0)” ensures that we are computing the positive feedback 44

Protocol: The Fairness Controller (continued) • The total increase “(h + max(φ, 0)) / d” is equal to the sum of the increases in the rates of all flows in the aggregate. Each flow’s rate increase is the positive feedback it has received divided by its RTT, so the total is “Σ p_i / rtt_i”, summed over the L packets seen by the router in an average RTT. • Substituting p_i and solving gives: ε_p = (h + max(φ, 0)) / (d * Σ (rtt_i * s_i / cwnd_i)). 45

Protocol: The Fairness Controller (continued) • Similar calculation for decrease, when φ < 0. • Want the decrease in the throughput of flow i to be proportional to its current throughput. • So the desired change in the flow’s congestion window is proportional to its current congestion window. • The desired per-packet feedback is the desired change in the congestion window divided by the expected number of packets from this flow that the router sees in an interval d. • So the per-packet negative feedback should be proportional to the packet size multiplied by its flow’s RTT, so n_i is: n_i = ε_n * rtt_i * s_i (ε_n is a constant) 46

Protocol: The Fairness Controller (continued) • Total decrease in the aggregate traffic rate is the sum of the decreases in the rates of all flows in the aggregate: (h + max(-φ, 0)) / d = Σ n_i / rtt_i (summed over the L packets). • ε_n can be derived as: ε_n = (h + max(-φ, 0)) / (d * Σ s_i), where Σ s_i is the total size of all packets seen over a control interval. 47
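
Putting the fairness-controller slides together, the per-packet computation might look like the sketch below. It is an illustration under stated assumptions, not the authors' router code. It processes one control interval's packets as a batch, assumes every packet carries a positive RTT and congestion window, and uses `eps_p` and `eps_n` for the two scaling constants (positive and negative feedback). All names are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PacketInfo:
    cwnd: float  # H_cwnd of the packet's flow, bytes
    rtt: float   # H_rtt of the packet's flow, seconds
    size: float  # packet size s_i, bytes


def per_packet_feedback(packets: List[PacketInfo], phi: float,
                        h: float, d: float) -> List[float]:
    """Return H_feedback_i = p_i - n_i for each packet seen in an interval d."""
    if not packets:
        return []
    # eps_p = (h + max(phi, 0)) / (d * sum(rtt_i * s_i / cwnd_i))
    eps_p = (h + max(phi, 0.0)) / (d * sum(p.rtt * p.size / p.cwnd for p in packets))
    # eps_n = (h + max(-phi, 0)) / (d * sum(s_i))
    eps_n = (h + max(-phi, 0.0)) / (d * sum(p.size for p in packets))
    feedback = []
    for p in packets:
        positive = eps_p * p.rtt ** 2 * p.size / p.cwnd  # additive increase share
        negative = eps_n * p.rtt * p.size                # multiplicative decrease share
        feedback.append(positive - negative)
    return feedback


if __name__ == "__main__":
    d = 0.1
    flow_a = [PacketInfo(cwnd=20_000.0, rtt=d, size=1_000.0)] * 20
    flow_b = [PacketInfo(cwnd=10_000.0, rtt=d, size=1_000.0)] * 10
    fb = per_packet_feedback(flow_a + flow_b, phi=3_000.0, h=0.0, d=d)
    print(sum(fb[:20]), sum(fb[20:]))  # window increase per flow, bytes
```

In the usage example both flows share the same RTT, so a positive φ is split equally between them even though flow A sends twice as many packets. With a negative φ the same code takes twice as much from flow A, in proportion to its throughput.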

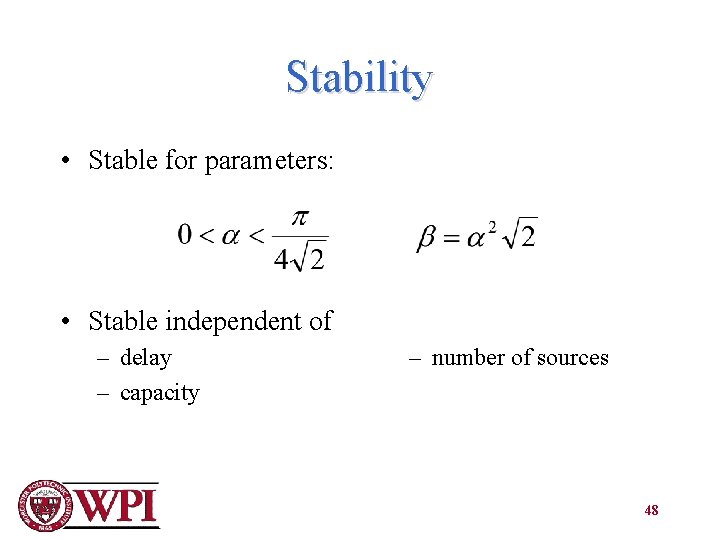

Stability • Stable for parameters: 0 < α < π / (4√2) and β = α^2 * √2 (satisfied by α = 0.4, β = 0.226) • Stable independent of – delay – capacity – number of sources 48
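
The slide's parameter condition did not survive as text. Assuming it is the condition given in the XCP paper, 0 < α < π / (4√2) with β = α^2 * √2, the constants used earlier (α = 0.4, β = 0.226) can be checked numerically. The function name and the tolerance are hypothetical.

```python
import math


def satisfies_stability_condition(alpha: float, beta: float, tol: float = 1e-3) -> bool:
    """Check 0 < alpha < pi / (4 * sqrt(2)) and beta ~= alpha^2 * sqrt(2).

    The condition itself is assumed from the XCP paper; tol absorbs the
    rounding of beta to three decimals.
    """
    alpha_ok = 0.0 < alpha < math.pi / (4.0 * math.sqrt(2.0))
    beta_ok = abs(beta - alpha ** 2 * math.sqrt(2.0)) < tol
    return alpha_ok and beta_ok


if __name__ == "__main__":
    print(math.pi / (4.0 * math.sqrt(2.0)))   # upper bound on alpha
    print(0.4 ** 2 * math.sqrt(2.0))          # beta implied by alpha = 0.4
    print(satisfies_stability_condition(0.4, 0.226))
```

The implied β is about 0.2263, which rounds to the 0.226 used by the efficiency controller.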

Simulation Setup • Non-XCP algorithm settings = respective authors’ recommendations • ECN enabled on all • Packet size: 1000 bytes • Buffer size: delay-bandwidth product 49

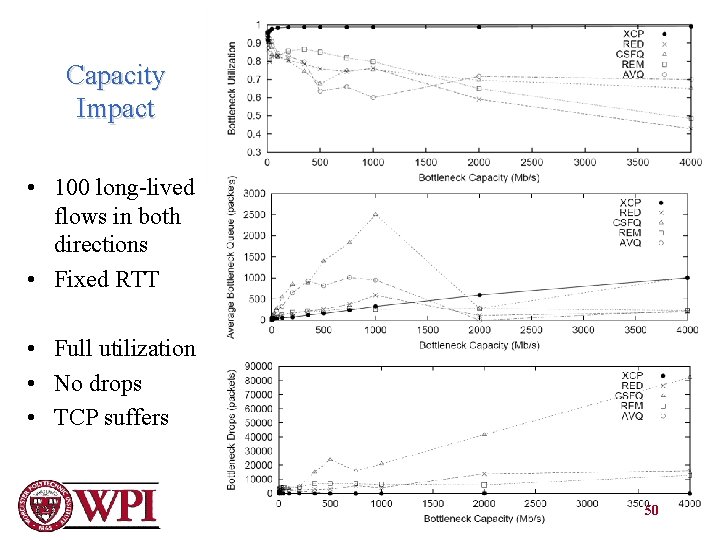

Capacity Impact • 100 long-lived flows in both directions • Fixed RTT • Full utilization • No drops • TCP suffers 50

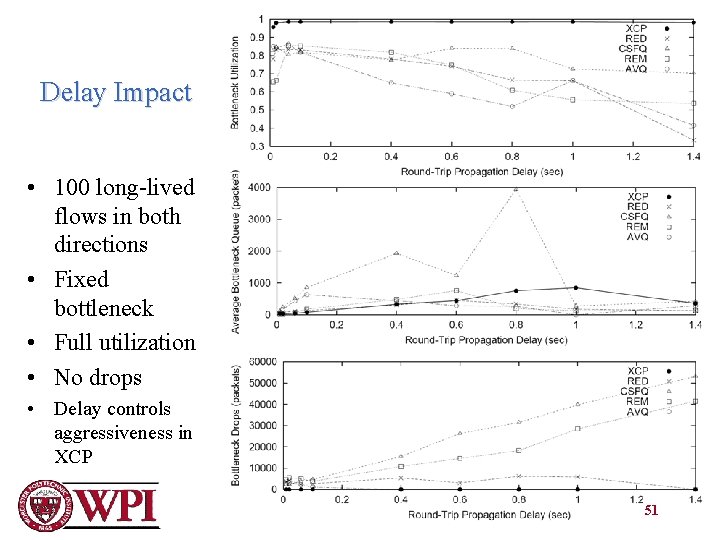

Delay Impact • 100 long-lived flows in both directions • Fixed bottleneck • Full utilization • No drops • Delay controls aggressiveness in XCP 51

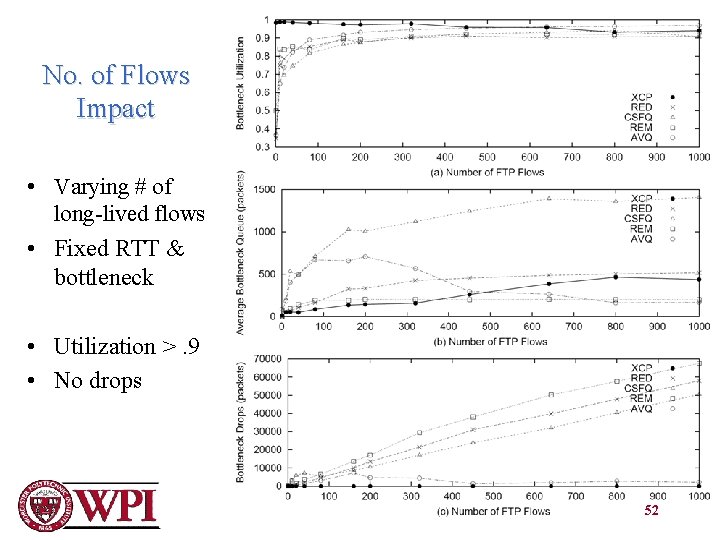

No. of Flows Impact • Varying # of long-lived flows • Fixed RTT & bottleneck • Utilization > 0.9 • No drops 52

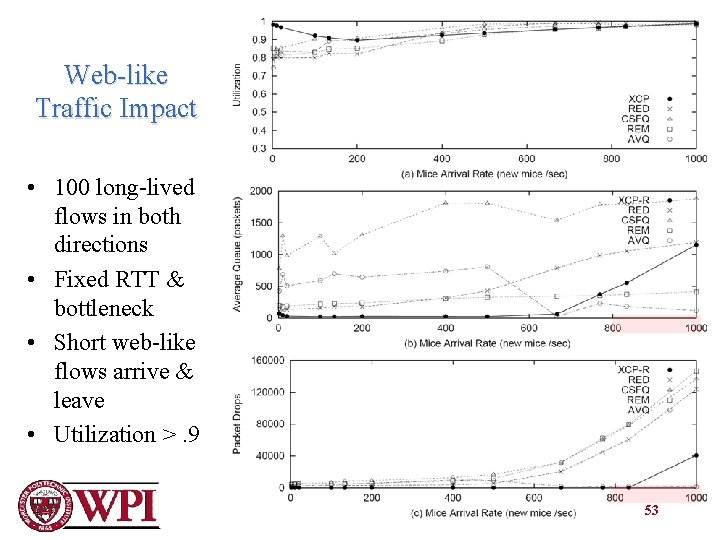

Web-like Traffic Impact • 100 long-lived flows in both directions • Fixed RTT & bottleneck • Short web-like flows arrive & leave • Utilization > 0.9 53

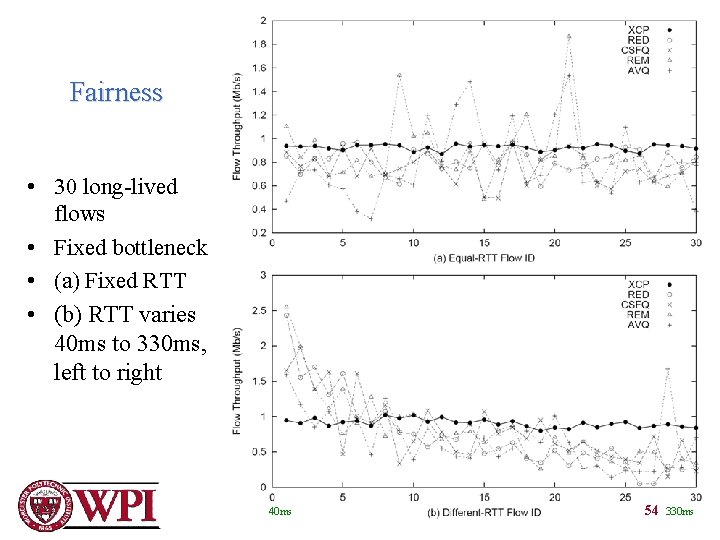

Fairness • 30 long-lived flows • Fixed bottleneck • (a) Fixed RTT • (b) RTT varies from 40 ms to 330 ms, left to right 54

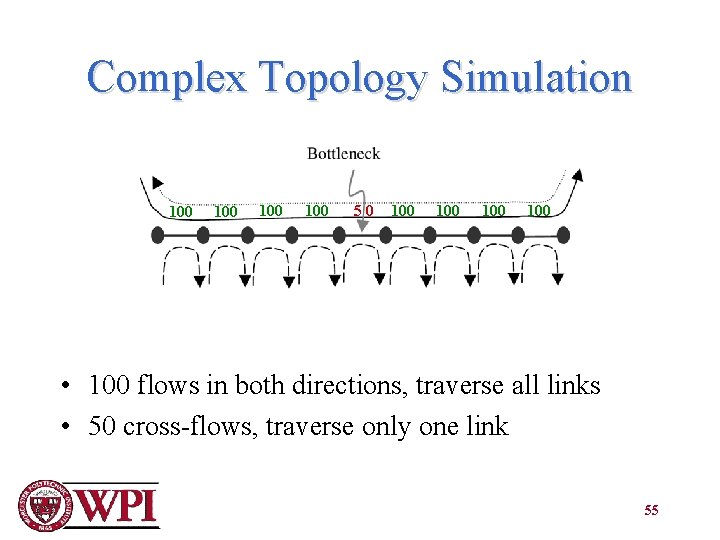

Complex Topology Simulation • 100 flows in both directions, traverse all links • 50 cross-flows, traverse only one link 55

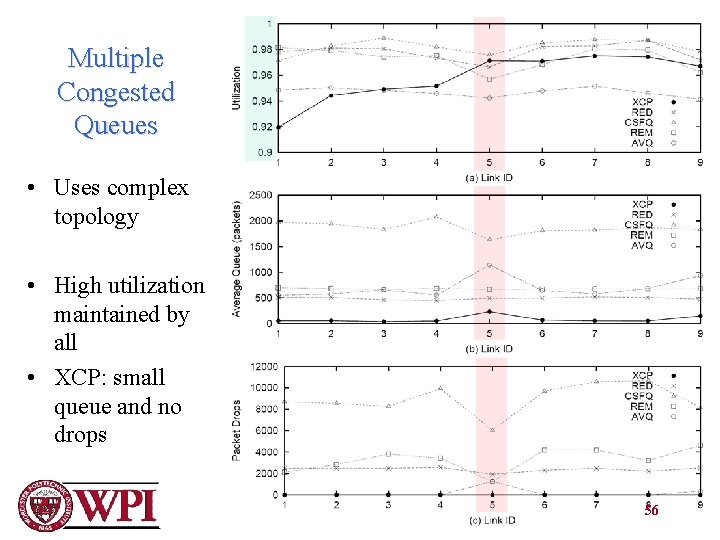

Multiple Congested Queues • Uses complex topology • High utilization maintained by all • XCP: small queue and no drops 56

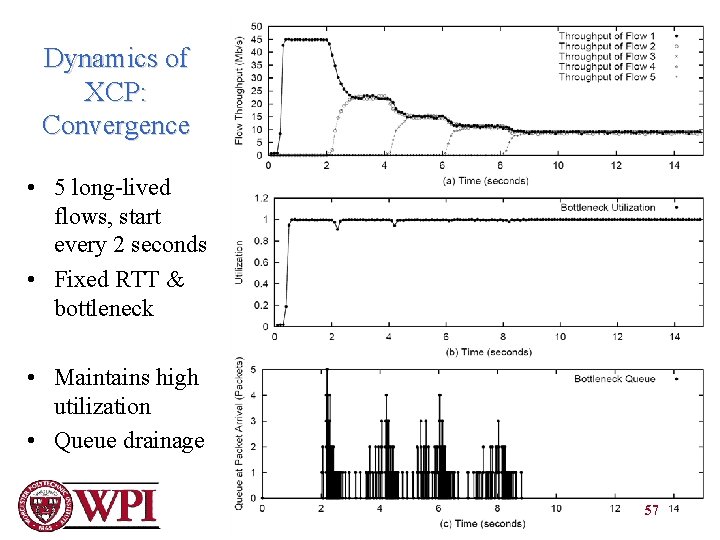

Dynamics of XCP: Convergence • 5 long-lived flows, start every 2 seconds • Fixed RTT & bottleneck • Maintains high utilization • Queue drainage 57

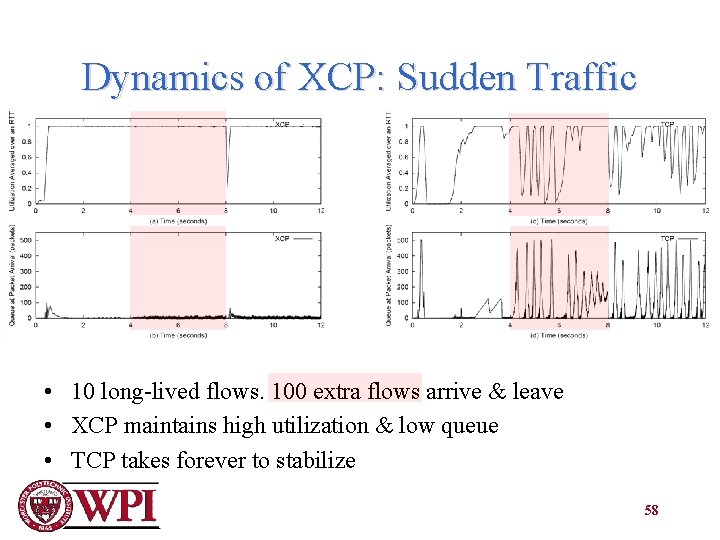

Dynamics of XCP: Sudden Traffic • 10 long-lived flows. 100 extra flows arrive & leave • XCP maintains high utilization & low queue • TCP takes forever to stabilize 58

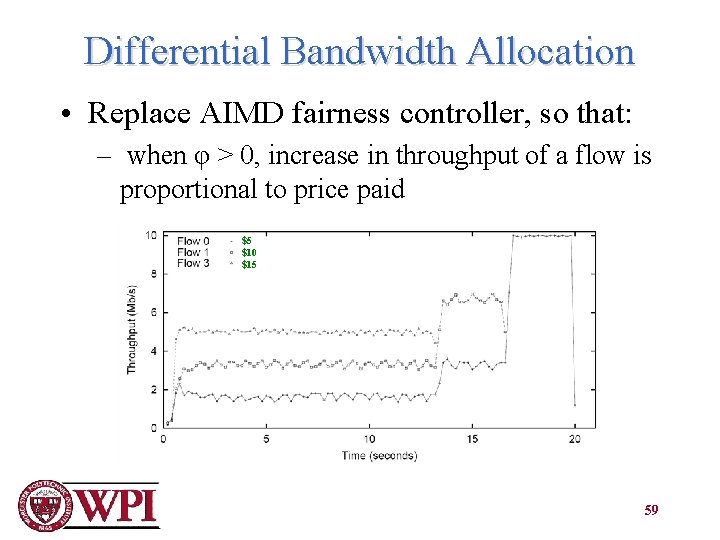

Differential Bandwidth Allocation • Replace AIMD fairness controller, so that: – when φ > 0, increase in throughput of a flow is proportional to price paid • Figure labels: $5, $10, $15 59

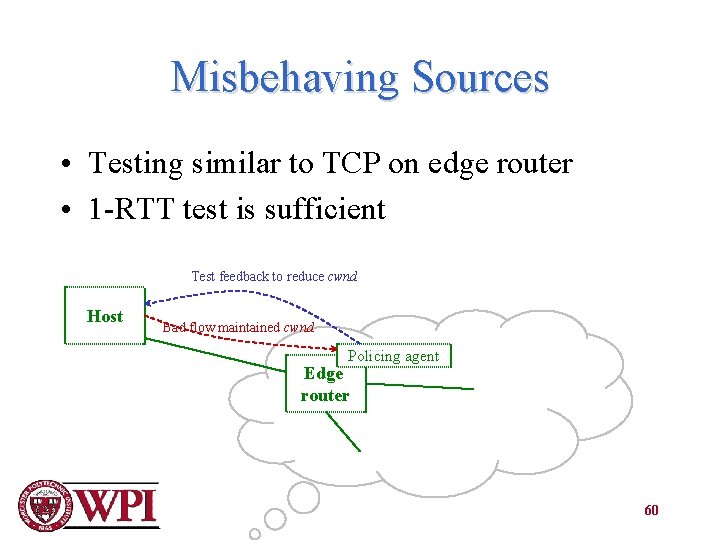

Misbehaving Sources • Testing similar to TCP on edge router • 1-RTT test is sufficient • Figure: a policing agent at the edge router sends test feedback asking the host to reduce cwnd; a bad flow is one that maintains its cwnd 60

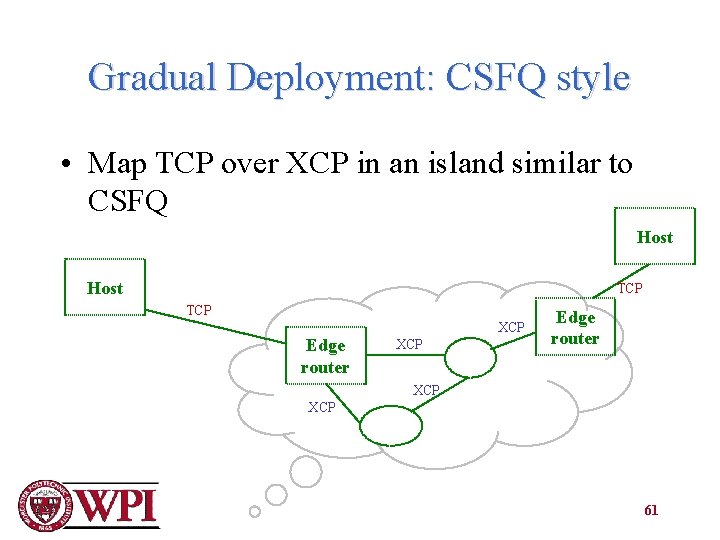

Gradual Deployment: CSFQ style • Map TCP over XCP in an island similar to CSFQ • Figure labels: Host, TCP, Edge router, XCP 61

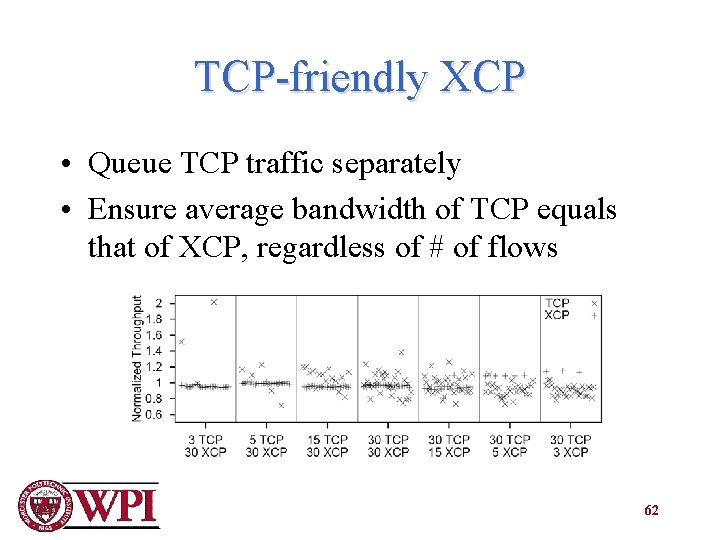

TCP-friendly XCP • Queue TCP traffic separately • Ensure average bandwidth of TCP equals that of XCP, regardless of # of flows 62
