Chapter 12 Secondary-Storage Structure

- Slides: 54

Chapter 12 Secondary-Storage Structure

- 12.1 Overview of Mass Storage Structure
- 12.2 Disk Structure
- 12.3 Disk Attachment
- 12.4 Disk Scheduling
- 12.5 Disk Management
- 12.6 Swap-Space Management
- 12.7 RAID Structure
- 12.8 Stable-Storage Implementation
- 12.9 Tertiary Storage Devices
  - Operating System Issues
  - Performance Issues

Operating System Principles, Silberschatz, Galvin and Gagne © 2005

Objectives

- Describe the physical structure of secondary and tertiary storage devices and the resulting effects on the uses of the devices
  - http://www.tomshardware.tw/review,2/
  - http://www.zdnet.com.tw/enterprise/column/phoenix/
- Explain the performance characteristics of mass-storage devices
- Discuss operating-system services provided for mass storage, including RAID and HSM (hierarchical storage management)

12.1 Overview of Mass Storage Structure

- Magnetic disks provide the bulk of secondary storage in modern computers
  - Drives rotate at 60 to 200 times per second (3600 to 12000 rpm)
  - Transfer rate is the rate at which data flow between drive and computer (400 Mb/sec to 6 Gb/sec)
  - Positioning time (random-access time) is the time to move the disk arm to the desired cylinder (seek time, about 4 milliseconds) plus the time for the desired sector to rotate under the disk head (rotational latency, about 3 milliseconds)
  - Example: Hitachi Ultrastar C10K300 (http://www.zdnet.com.tw/news/hardware/0,2000085676,20137089,00.htm)
  - A head crash results from the disk head making contact with the disk surface
    - Normally cannot be repaired
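As a rough illustration of positioning time, the sketch below derives the average rotational latency from the spindle speed and adds it to a seek time. The function names and the 4 ms seek figure are assumptions chosen for illustration, not drive specifications.

```python
def avg_rotational_latency_ms(rpm: float) -> float:
    """Average rotational latency in ms: on average the sector is half a turn away."""
    ms_per_rotation = 60_000.0 / rpm  # 60,000 ms in one minute
    return ms_per_rotation / 2.0


def positioning_time_ms(seek_ms: float, rpm: float) -> float:
    """Positioning (random-access) time = seek time + rotational latency."""
    return seek_ms + avg_rotational_latency_ms(rpm)


if __name__ == "__main__":
    for rpm in (3600, 7200, 10_000, 12_000):
        print(rpm, "rpm:", round(avg_rotational_latency_ms(rpm), 2), "ms average latency")
    # Assumed 4 ms average seek on a 10,000 rpm drive (illustrative only)
    print("positioning time:", positioning_time_ms(4.0, 10_000), "ms")
```

Faster spindles shorten only the rotational part; the seek part depends on how far the arm has to travel.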

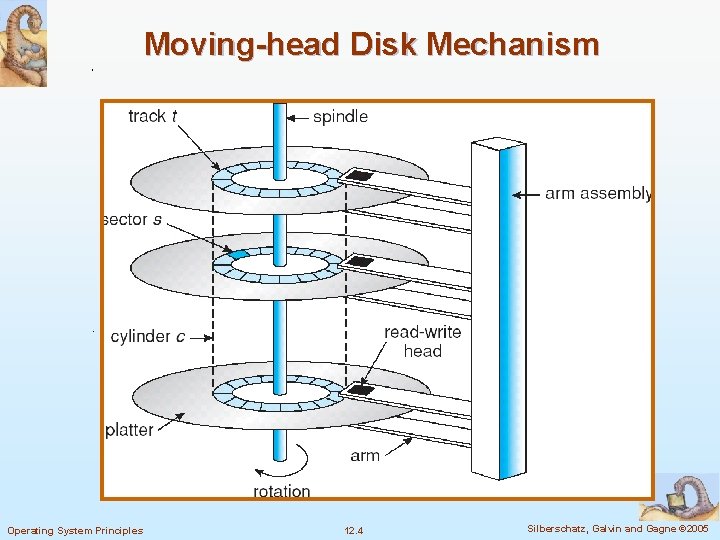

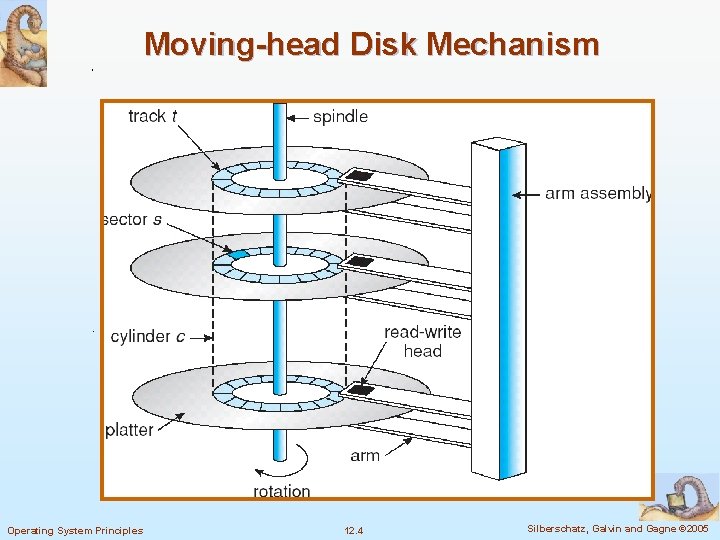

Moving-head Disk Mechanism

Overview

- Disks can be removable
  - Held in a plastic case
- Drive attached to computer via I/O bus
  - Buses vary, including EIDE, ATA (Advanced Technology Attachment), SATA (Serial ATA), USB, FC (Fibre Channel), SCSI (Small Computer-System Interface)
  - Host controller in the computer uses the bus to talk to the disk controller built into the drive or storage array, using memory-mapped I/O ports
  - Disk controllers usually have a built-in cache (MB)

- Magnetic tape
  - Was an early secondary-storage medium
  - Relatively permanent and holds large quantities of data
  - Access time is slow
  - Random access is ~1000 times slower than disk
  - Mainly used for backup, storage of infrequently used data, and as a transfer medium between systems
  - Kept in a spool and wound or rewound past a read-write head
  - Once data are under the head, transfer rates are comparable to disk
  - 20-200 GB typical storage
  - Common technologies are 4 mm, 8 mm, 19 mm, ¼ inch, ½ inch, LTO-2 (Linear Tape-Open) and SDLT (Super Digital Linear Tape)
    - Latest: LTO-5 (1.5 TB), http://www.zdnet.com.tw/enterprise/column/phoenix/0,2003032776,20145004,00.htm

12.2 Disk Structure

- Disk drives are addressed as large 1-dimensional arrays of logical blocks, where the logical block is the smallest unit of transfer
  - A logical block is usually 512 bytes
- The 1-dimensional array of logical blocks is mapped into the sectors of the disk sequentially
  - Sector 0 is the first sector of the first track on the outermost cylinder
  - Mapping proceeds in order through that track, then the rest of the tracks in that cylinder, and then through the rest of the cylinders from outermost to innermost
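The sequential mapping described above can be sketched for an idealized disk. This assumes a fixed number of tracks per cylinder and sectors per track and no defective sectors, which real drives do not guarantee; the function name and the geometry values in the test are hypothetical.

```python
def lba_to_chs(lba: int, tracks_per_cylinder: int, sectors_per_track: int):
    """Map a logical block number to (cylinder, track, sector) on an idealized disk.

    Blocks fill one track, then the remaining tracks of the same cylinder,
    then move on to the next cylinder (cylinder 0 being the outermost).
    """
    blocks_per_cylinder = tracks_per_cylinder * sectors_per_track
    cylinder, remainder = divmod(lba, blocks_per_cylinder)
    track, sector = divmod(remainder, sectors_per_track)
    return cylinder, track, sector
```

With 4 tracks per cylinder and 63 sectors per track, block 63 is the first sector of the second track of cylinder 0, and block 252 is the first sector of cylinder 1.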

- In practice, it is difficult to convert a logical block number into a (cylinder, track, sector) address, because disks have defective sectors and the number of sectors per track is not constant
  - CD-ROM and DVD-ROM drives increase their rotation speed as the head moves from the outer to the inner tracks to keep the same data rate (the density of bits per track is constant: constant linear velocity, CLV)
  - In some disks, the rotation speed is constant, and the density of bits decreases from inner tracks to outer tracks to keep the data rate constant (constant angular velocity, CAV)

12.3 Disk Attachment (skip)

- Host-attached storage is accessed through I/O ports talking to I/O buses
  - IDE, ATA, SCSI, FC
- SCSI itself is a bus with up to 16 devices on one cable; the SCSI initiator requests an operation and the SCSI targets perform the tasks
  - Each target can have up to 8 logical units (disks attached to the device controller)
- FC is a high-speed serial architecture
  - Can be a switched fabric with a 24-bit address space, the basis of storage area networks (SANs) in which many hosts attach to many storage units
  - Can be an arbitrated loop (FC-AL) of 126 devices

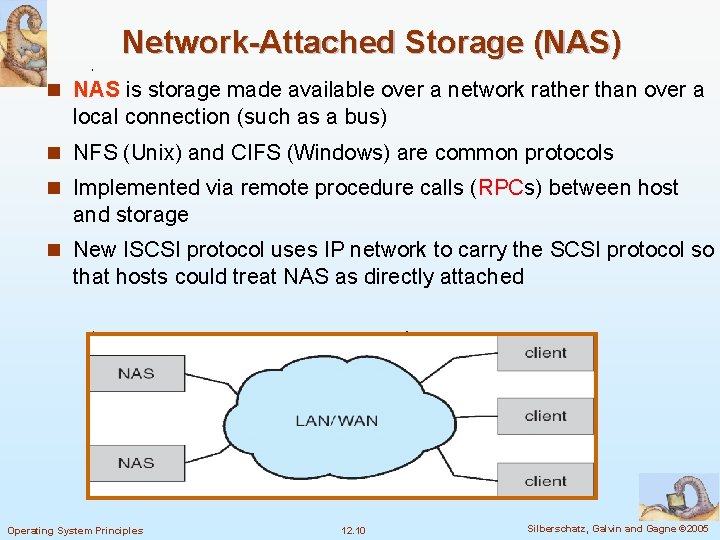

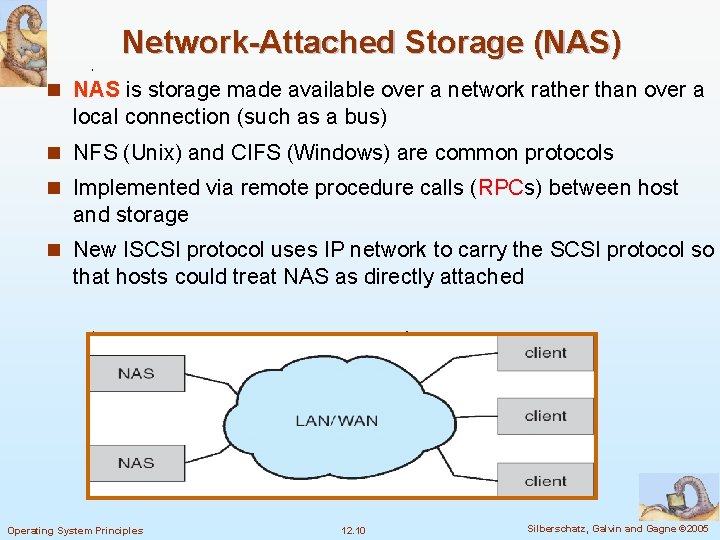

Network-Attached Storage (NAS)

- NAS is storage made available over a network rather than over a local connection (such as a bus)
- NFS (Unix) and CIFS (Windows) are common protocols
- Implemented via remote procedure calls (RPCs) between host and storage
- The newer iSCSI protocol uses an IP network to carry the SCSI protocol, so that hosts can treat NAS as if it were directly attached

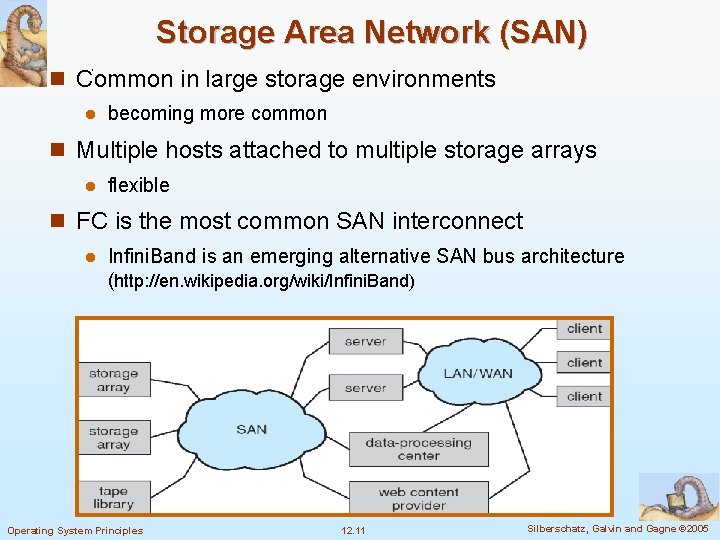

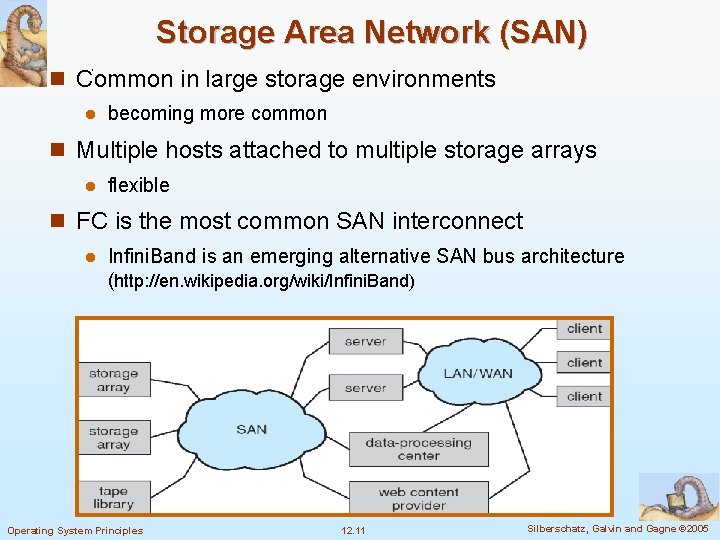

Storage Area Network (SAN)

- Common in large storage environments
  - Becoming more common
- Multiple hosts attached to multiple storage arrays
  - Flexible
- FC is the most common SAN interconnect
  - InfiniBand is an emerging alternative SAN bus architecture (http://en.wikipedia.org/wiki/InfiniBand)

12.4 Disk Scheduling

- The operating system is responsible for using hardware efficiently; for disk drives, this means having a fast access time and high disk bandwidth
- Access time has two major components
  - Seek time is the time for the disk arm to move the heads to the cylinder containing the desired sector
  - Rotational latency is the additional time waiting for the disk to rotate the desired sector to the disk head
- Minimize seek time
  - Seek time is roughly proportional to seek distance
- Disk bandwidth is the total number of bytes transferred, divided by the total time between the first request for service and the completion of the last transfer

Disk I/O System Call

- The system call for disk I/O specifies
  - Whether the operation is input or output
  - The disk address for the transfer
  - The memory address for the transfer
  - The number of sectors to be transferred
- In a multiprogramming system, the disk request queue normally has several pending requests

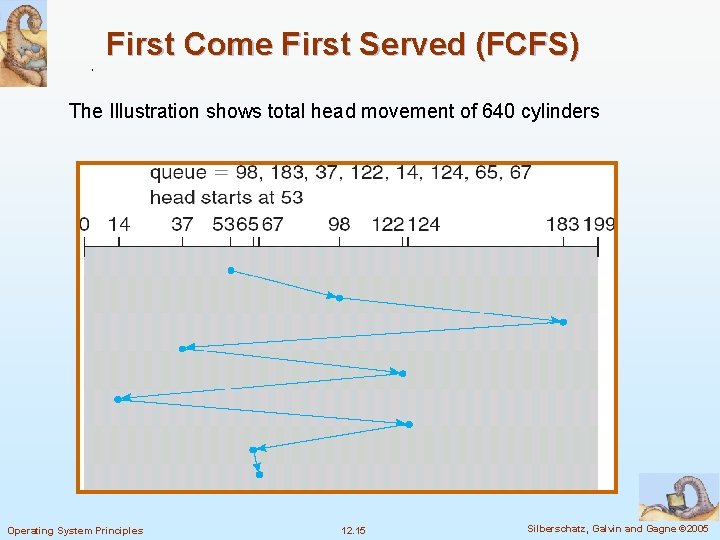

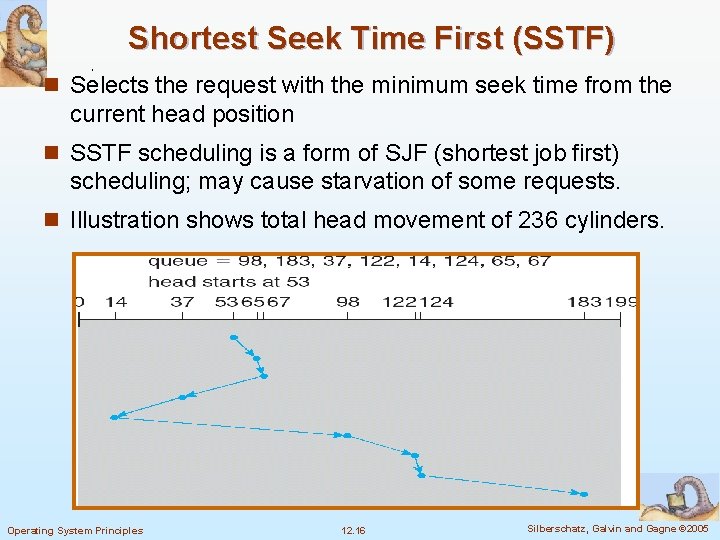

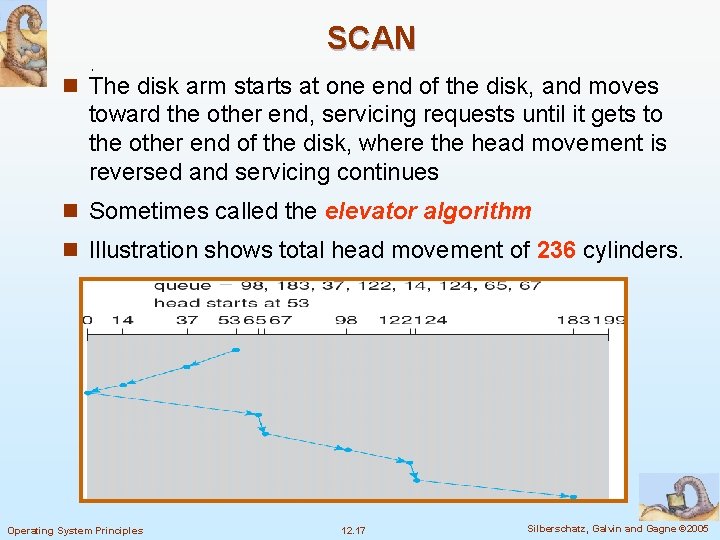

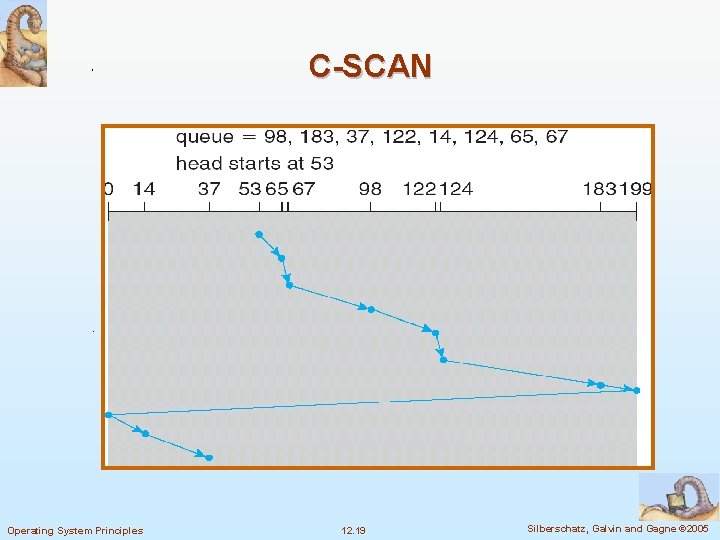

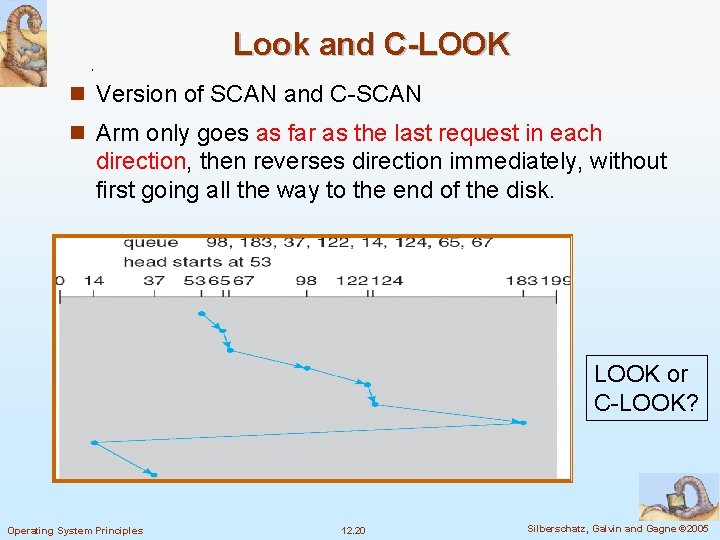

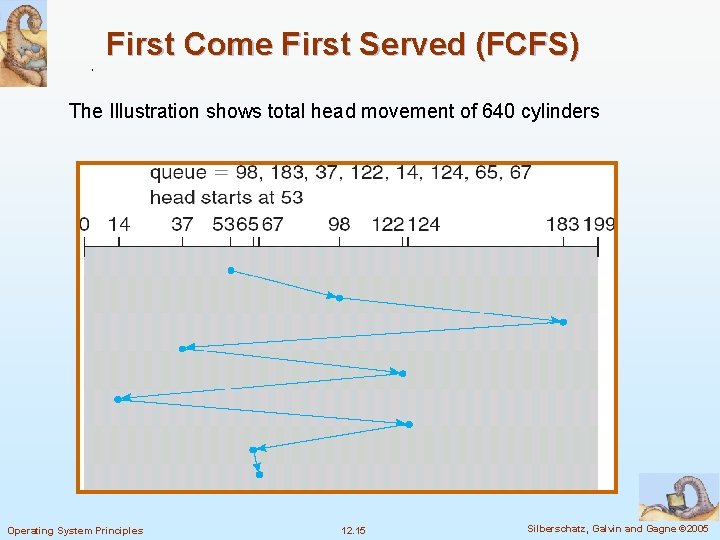

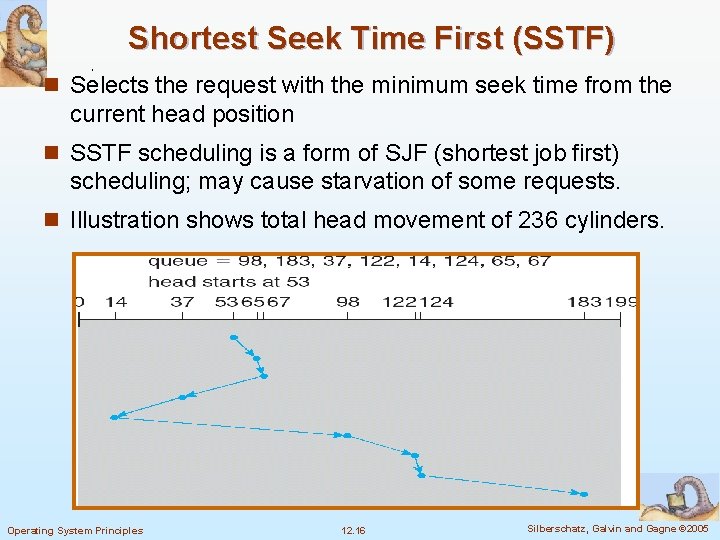

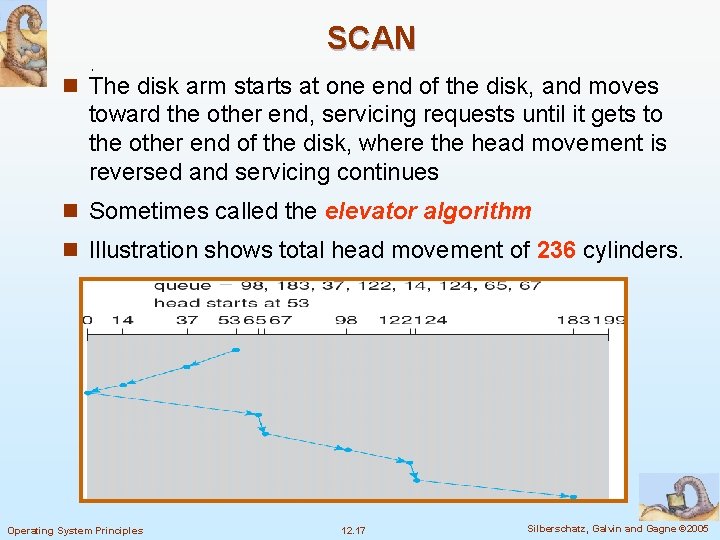

Disk Scheduling

- Several algorithms exist to schedule the servicing of disk I/O requests
- We illustrate them with a disk request queue of requests for blocks on cylinders (0-199): 98, 183, 37, 122, 14, 124, 65, 67
- The sequence indicates their order in time
- Suppose the disk head pointer is initially at cylinder 53

First Come First Served (FCFS)

The illustration shows total head movement of 640 cylinders.
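The 640-cylinder figure can be checked with a short sketch. FCFS simply services requests in arrival order; `head_movement` and `fcfs` are illustrative helper names, and the queue and starting cylinder are the ones used on the slides.

```python
def head_movement(start: int, order: list[int]) -> int:
    """Total cylinders travelled when servicing `order` starting from `start`."""
    total, position = 0, start
    for cylinder in order:
        total += abs(cylinder - position)
        position = cylinder
    return total


def fcfs(requests: list[int]) -> list[int]:
    """First Come First Served: service requests in arrival order."""
    return list(requests)


QUEUE = [98, 183, 37, 122, 14, 124, 65, 67]
HEAD = 53

if __name__ == "__main__":
    print(head_movement(HEAD, fcfs(QUEUE)))
```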

Shortest Seek Time First (SSTF)

- Selects the request with the minimum seek time from the current head position
- SSTF scheduling is a form of SJF (shortest job first) scheduling; it may cause starvation of some requests
- The illustration shows total head movement of 236 cylinders
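A minimal SSTF sketch follows, using seek distance as a stand-in for seek time. The helper names are illustrative; when two requests are equally close, this version picks whichever appears first in the queue, which is an arbitrary choice.

```python
def head_movement(start: int, order: list[int]) -> int:
    """Total cylinders travelled when servicing `order` starting from `start`."""
    total, position = 0, start
    for cylinder in order:
        total += abs(cylinder - position)
        position = cylinder
    return total


def sstf(start: int, requests: list[int]) -> list[int]:
    """Shortest Seek Time First: always service the closest pending request."""
    pending = list(requests)
    position = start
    order = []
    while pending:
        nearest = min(pending, key=lambda cylinder: abs(cylinder - position))
        pending.remove(nearest)
        order.append(nearest)
        position = nearest
    return order


QUEUE = [98, 183, 37, 122, 14, 124, 65, 67]
HEAD = 53
```

Starvation is visible in the structure of the loop: a far-away request stays in `pending` for as long as closer requests keep arriving.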

SCAN

- The disk arm starts at one end of the disk and moves toward the other end, servicing requests until it gets to the other end of the disk, where the head movement is reversed and servicing continues
- Sometimes called the elevator algorithm
- The illustration shows total head movement of 236 cylinders
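The sketch below assumes the head starts at cylinder 53 and is initially moving toward cylinder 0, which is the direction that reproduces the 236-cylinder total. The function name and return shape are illustrative.

```python
def scan_toward_zero(start: int, requests: list[int], lowest: int = 0):
    """SCAN with the head initially moving toward the lowest cylinder.

    Returns (service order, total head movement). The arm travels all the
    way to `lowest` before reversing, even if no request is waiting there.
    """
    down = sorted((c for c in requests if c <= start), reverse=True)
    up = sorted(c for c in requests if c > start)
    path = [start] + down + [lowest] + up
    movement = sum(abs(b - a) for a, b in zip(path, path[1:]))
    return down + up, movement


QUEUE = [98, 183, 37, 122, 14, 124, 65, 67]
HEAD = 53
```

The total is 53 cylinders down to cylinder 0 plus 183 cylinders back up to the last request.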

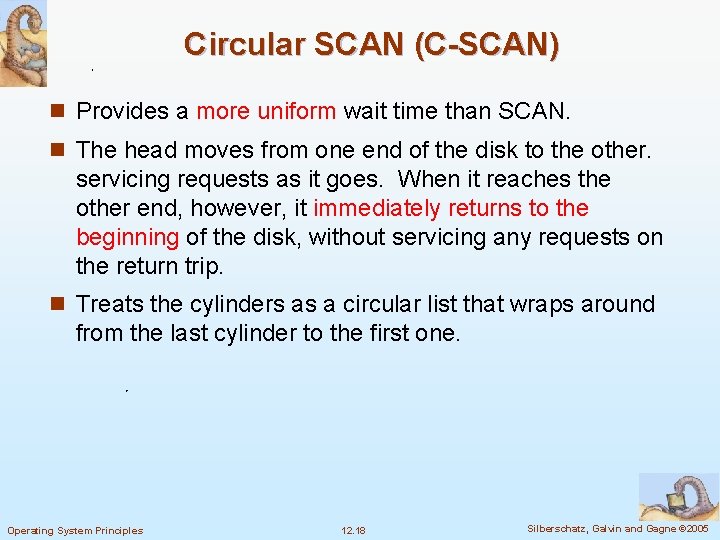

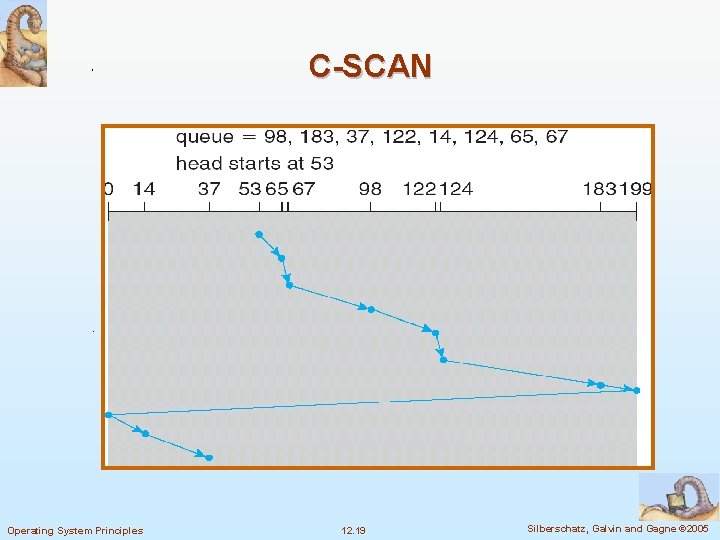

Circular SCAN (C-SCAN)

- Provides a more uniform wait time than SCAN
- The head moves from one end of the disk to the other, servicing requests as it goes. When it reaches the other end, however, it immediately returns to the beginning of the disk, without servicing any requests on the return trip
- Treats the cylinders as a circular list that wraps around from the last cylinder to the first one
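A C-SCAN sketch under stated assumptions: the head moves toward higher cylinder numbers, the disk spans cylinders 0 to 199, and the return sweep is counted as head movement even though no requests are serviced during it. Whether to count the return sweep is a convention, so the total below is tied to that choice.

```python
def c_scan(start: int, requests: list[int], lowest: int = 0, highest: int = 199):
    """C-SCAN moving upward: service up to `highest`, jump to `lowest`, continue.

    Returns (service order, total head movement including the return sweep).
    """
    up = sorted(c for c in requests if c >= start)
    wrapped = sorted(c for c in requests if c < start)
    path = [start] + up + [highest, lowest] + wrapped
    movement = sum(abs(b - a) for a, b in zip(path, path[1:]))
    return up + wrapped, movement


QUEUE = [98, 183, 37, 122, 14, 124, 65, 67]
HEAD = 53
```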

C-SCAN

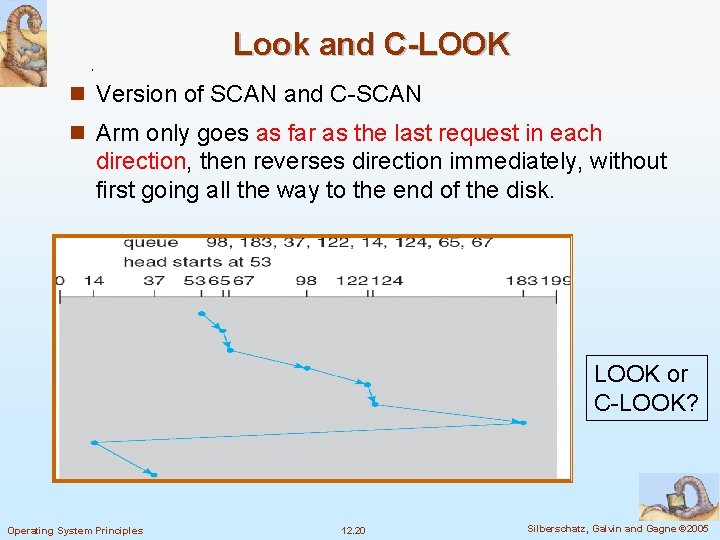

LOOK and C-LOOK

- Versions of SCAN and C-SCAN
- The arm only goes as far as the last request in each direction, then reverses direction immediately, without first going all the way to the end of the disk

LOOK or C-LOOK?
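Both variants can be sketched as orderings of the same queue. The initial directions are assumptions: LOOK here starts by moving toward lower cylinders, and C-LOOK moves toward higher cylinders and then jumps back to the lowest pending request. Helper names are illustrative.

```python
def head_movement(start: int, order: list[int]) -> int:
    """Total cylinders travelled when servicing `order` starting from `start`."""
    total, position = 0, start
    for cylinder in order:
        total += abs(cylinder - position)
        position = cylinder
    return total


def look_down_first(start: int, requests: list[int]) -> list[int]:
    """LOOK: sweep down to the lowest request, then reverse and sweep up."""
    down = sorted((c for c in requests if c <= start), reverse=True)
    up = sorted(c for c in requests if c > start)
    return down + up


def c_look(start: int, requests: list[int]) -> list[int]:
    """C-LOOK: sweep up to the highest request, then jump to the lowest one."""
    up = sorted(c for c in requests if c >= start)
    wrapped = sorted(c for c in requests if c < start)
    return up + wrapped


QUEUE = [98, 183, 37, 122, 14, 124, 65, 67]
HEAD = 53
```

Because the arm never travels past the outermost pending request, both totals are lower than their SCAN and C-SCAN counterparts for this queue.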

Selecting a Disk-Scheduling Algorithm

- SSTF is common and has a natural appeal
- SCAN and C-SCAN perform better for systems that place a heavy load on the disk
- Performance depends on the number and types of requests
- Requests for disk service can be influenced by the file-allocation method
- The disk-scheduling algorithm should be written as a separate module of the operating system, allowing it to be replaced with a different algorithm if necessary
- Either SSTF or LOOK is a reasonable choice for the default algorithm

Selecting a Disk-Scheduling Algorithm

- It is difficult for the OS to schedule for improved rotational latency
- Disk manufacturers have implemented disk-scheduling algorithms in the controller hardware
- In practice, the OS has other constraints
  - Demand paging may take priority over application I/O
  - Writes are more urgent than reads if the cache is running out of free pages
  - Sometimes the order of writes must be kept to make the file system robust in case of system crashes

12.5 Disk Management

- Low-level formatting, or physical formatting: dividing a disk into sectors that the disk controller can read and write
  - The header and trailer of each sector contain information used by the disk controller, such as a sector number and an error-correcting code (ECC)
- Most hard disks are low-level formatted at the factory
- To use a disk to hold files, the operating system still needs to record its own data structures on the disk
  - Partition the disk into one or more groups of cylinders
    - Each partition is treated as a separate disk
  - Logical formatting, or "creation of a file system"
    - Maps of free and allocated space and an empty directory

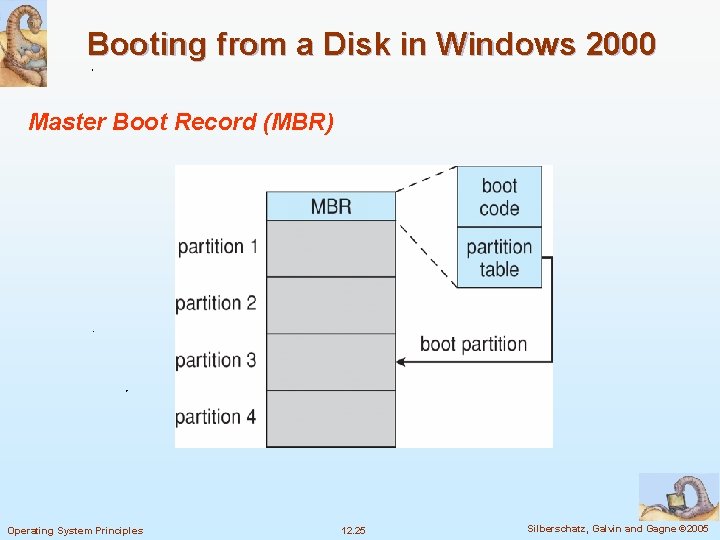

- To increase efficiency, most file systems group blocks together into clusters
  - Disk I/O is via blocks, but file I/O is via clusters
- Some OSs allow raw-disk access
  - Example: DBMS
- Boot block initializes the system
  - The bootstrap is stored in ROM
  - Only a small bootstrap loader program is stored there; it loads the full bootstrap program from the boot blocks on disk
  - A disk with a boot partition is called a boot disk or system disk

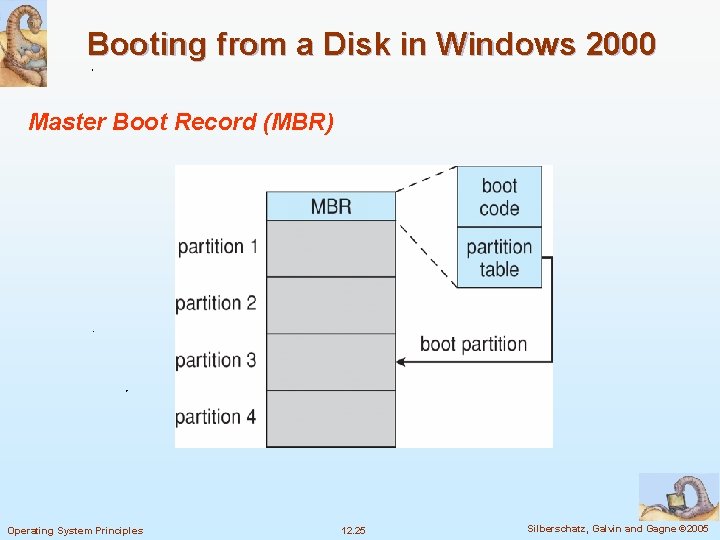

Booting from a Disk in Windows 2000

Master Boot Record (MBR)

Bad Blocks

- Bad blocks are handled manually on simple disks, such as disks with IDE controllers
  - Example: chkdsk in MS-DOS
- A sophisticated disk controller, such as SCSI, maintains a list of bad blocks
  - Sector sparing: the controller can replace each bad sector logically with a spare sector
    - May invalidate the optimization done by disk scheduling
    - Solution: provide spare sectors in each cylinder and a spare cylinder as well
  - Sector slipping: remaps all sectors from the defective one to the next available sector by moving them all down one spot
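Sector sparing amounts to a lookup table consulted on every access. The toy model below is a sketch only: the class name, its methods, and the sector numbers are hypothetical, and a real controller keeps this table in firmware and prefers a spare in the same cylinder.

```python
class SparingController:
    """Toy model of sector sparing: bad sectors are redirected to spare sectors."""

    def __init__(self, spare_sectors):
        self.free_spares = list(spare_sectors)  # spares reserved at format time
        self.remap = {}                         # bad sector -> replacement spare

    def mark_bad(self, sector: int) -> int:
        """Record `sector` as bad and assign it the next free spare."""
        if sector not in self.remap:
            if not self.free_spares:
                raise RuntimeError("no spare sectors left")
            self.remap[sector] = self.free_spares.pop(0)
        return self.remap[sector]

    def resolve(self, sector: int) -> int:
        """Translate a requested sector to the physical sector actually used."""
        return self.remap.get(sector, sector)
```

A request for a remapped sector lands wherever its spare lives, which is why sparing can undo the ordering chosen by the disk scheduler.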

12.6 Swap-Space Management

- Swapping (Section 8.2) moves the least active processes between main memory and disk
  - Systems actually combine swapping with virtual memory techniques and swap pages, not entire processes
- Swap space: virtual memory uses disk space as an extension of main memory
  - Its goal is to provide the best throughput for the virtual memory system
  - The amount of swap space needed depends on
    - The amount of physical memory, the amount of virtual memory, and the way virtual memory is used
  - It is safer to overestimate the amount of swap space required
    - Linux suggests setting swap space to double the amount of physical memory

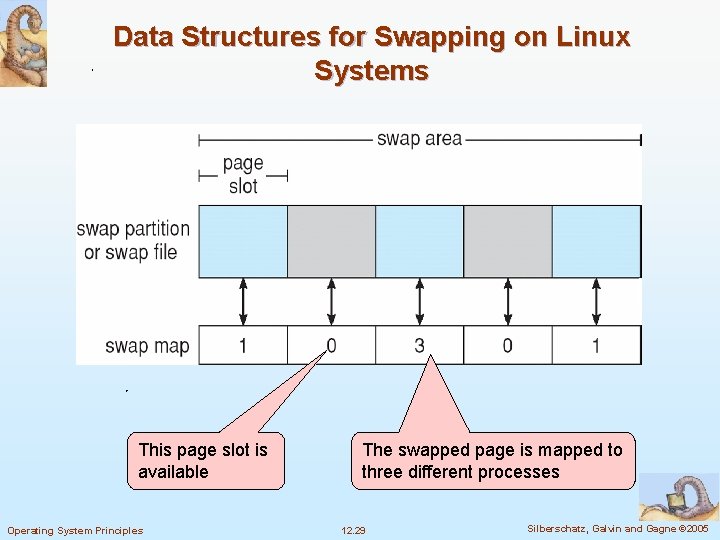

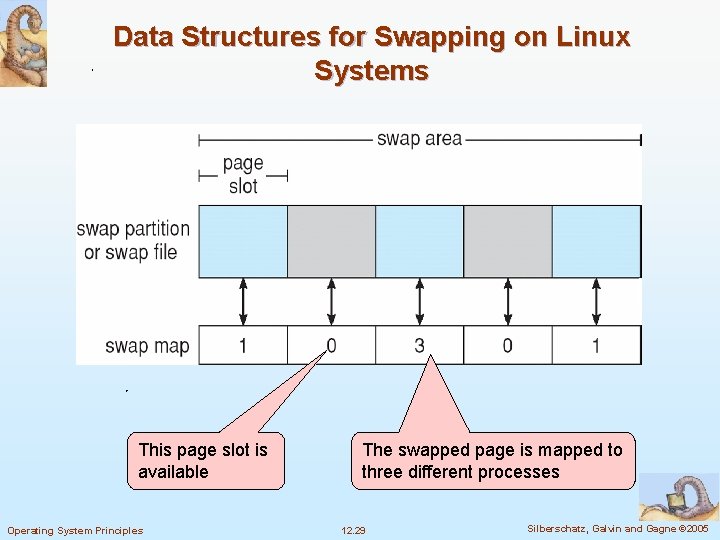

- Swap space can be carved out of the normal file system as a very large file, but this is too inefficient
  - More commonly, it is placed in a separate raw disk partition. The goal is to optimize for speed rather than for storage efficiency
- Swap-space management
  - In Solaris 1, swap space is used for pages of anonymous memory, which includes memory for the stack, heap, and uninitialized data of a process
  - Solaris 2 allocates swap space only when a page is forced out of physical memory, not when the virtual memory page is first created
  - In Linux, swap space is also used for pages of anonymous memory or for regions of memory shared by several processes
    - Each swap area consists of a series of 4-KB page slots. Associated with each swap area is a swap map: an array of integer counters, each indicating the number of mappings to the swapped page. The kernel uses swap maps to track swap-space use

Data Structures for Swapping on Linux Systems

Figure annotations: "This page slot is available" and "The swapped page is mapped to three different processes".
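A swap map of integer counters can be modelled in a few lines. This is a sketch of the idea rather than the Linux kernel's actual data structure: the class and method names are hypothetical, and a counter of 0 is taken to mean the slot is available.

```python
class SwapArea:
    """Toy swap area: one integer counter per page slot (0 = slot available)."""

    def __init__(self, num_slots: int):
        self.swap_map = [0] * num_slots

    def swap_out(self, mappings: int = 1) -> int:
        """Store a page in the first free slot; `mappings` processes share it."""
        slot = self.swap_map.index(0)  # raises ValueError when the area is full
        self.swap_map[slot] = mappings
        return slot

    def drop_mapping(self, slot: int) -> None:
        """One process no longer maps the page; the slot frees itself at 0."""
        if self.swap_map[slot] > 0:
            self.swap_map[slot] -= 1

    def is_available(self, slot: int) -> bool:
        return self.swap_map[slot] == 0
```

A page shared by three processes would be stored with `swap_out(mappings=3)`, and its slot becomes available again only after three calls to `drop_mapping`.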

12.7 RAID Structure

- RAID: Redundant Array of Inexpensive (or Independent) Disks
  - Multiple disk drives provide reliability via redundancy
- The mean time to failure (loss of data) of a mirrored volume depends on the mean time to failure of the two individual disks and the mean time to repair
  - If the mean time to failure of a disk is 100,000 hours and the mean time to repair is 10 hours, then the mean time to loss of data of the mirrored disk system is $100{,}000^2 / (2 \times 10) = 500 \times 10^6$ hours
  - Handling power failure:
    - Write one copy first, then the next, so that one of the two copies is always consistent
    - Add an NVRAM (nonvolatile RAM) cache to the RAID array
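The mirrored-volume estimate can be reproduced directly. The sketch assumes, as the slide's formula does, that the two disks fail independently; the function name is illustrative.

```python
def mirrored_mean_time_to_data_loss(mttf_hours: float, mttr_hours: float) -> float:
    """Mean time to data loss for a two-disk mirror with independent failures.

    Data are lost only if the second disk fails while the first is being
    repaired, which gives MTTF^2 / (2 * MTTR).
    """
    return mttf_hours ** 2 / (2 * mttr_hours)


if __name__ == "__main__":
    hours = mirrored_mean_time_to_data_loss(100_000, 10)
    print(hours, "hours, or about", round(hours / (24 * 365)), "years")
```

Real disks in one enclosure share power, cooling, and age, so failures are not truly independent and the practical figure is lower.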

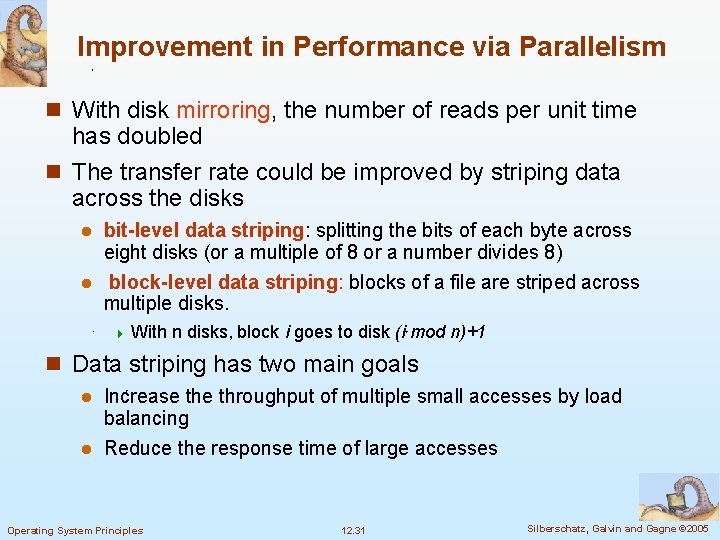

Improvement in Performance via Parallelism

- With disk mirroring, the number of reads per unit time is doubled
- The transfer rate can be improved by striping data across the disks
  - Bit-level data striping: splitting the bits of each byte across eight disks (or a number of disks that is a multiple of 8 or that divides 8)
  - Block-level data striping: blocks of a file are striped across multiple disks
    - With n disks, block i goes to disk (i mod n) + 1
- Data striping has two main goals
  - Increase the throughput of multiple small accesses by load balancing
  - Reduce the response time of large accesses
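The block-level placement rule, disk (i mod n) + 1, can be written out as a small function. The second return value, the stripe index on that disk, is an added assumption for illustration and is not stated on the slide.

```python
def stripe_location(block: int, num_disks: int):
    """Return (disk number, stripe index) for a logical block.

    Disks are numbered from 1, following the rule disk = (i mod n) + 1.
    The stripe index (position of the block on its disk) is i // n.
    """
    disk = (block % num_disks) + 1
    stripe = block // num_disks
    return disk, stripe


if __name__ == "__main__":
    for block in range(8):
        print("block", block, "->", stripe_location(block, 4))
```

Consecutive blocks land on different disks, so a large sequential read keeps all n disks busy at once.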

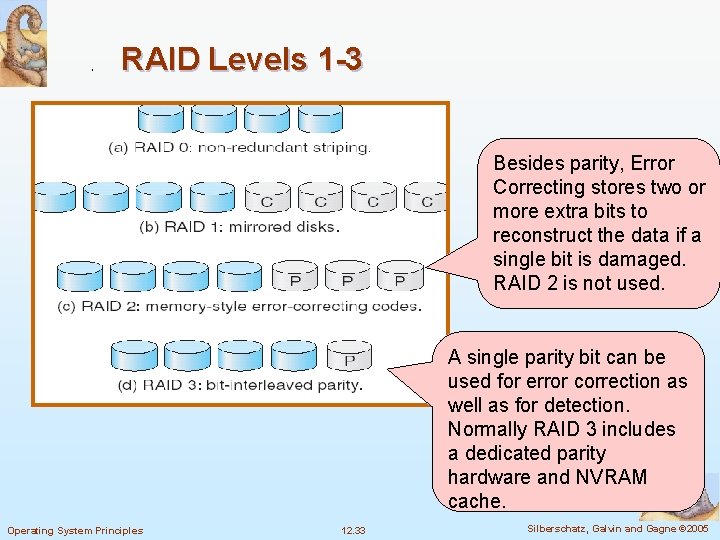

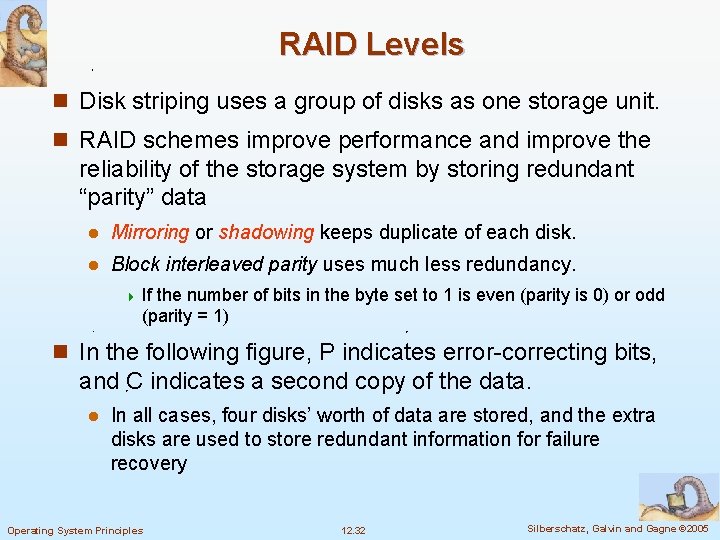

RAID Levels

- Disk striping uses a group of disks as one storage unit
- RAID schemes improve performance and improve the reliability of the storage system by storing redundant "parity" data
  - Mirroring or shadowing keeps a duplicate of each disk
  - Block-interleaved parity uses much less redundancy
    - The parity bit records whether the number of bits set to 1 in the byte is even (parity = 0) or odd (parity = 1)
- In the following figure, P indicates error-correcting bits, and C indicates a second copy of the data
  - In all cases, four disks' worth of data are stored, and the extra disks are used to store redundant information for failure recovery
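Parity-based recovery can be sketched with XOR, which computes even parity bit by bit. The block contents and function name below are made up for illustration; the point is that any single lost block equals the XOR of the surviving blocks and the parity block.

```python
def xor_blocks(blocks: list[bytes]) -> bytes:
    """Bytewise XOR of equally sized blocks (even parity for each bit position)."""
    result = bytearray(len(blocks[0]))
    for block in blocks:
        for i, byte in enumerate(block):
            result[i] ^= byte
    return bytes(result)


if __name__ == "__main__":
    data = [b"\x01\x02", b"\x0f\x00", b"\xf0\xff"]  # blocks on three data disks
    parity = xor_blocks(data)                       # block on the parity disk
    # Suppose the disk holding data[1] fails: rebuild it from the rest.
    rebuilt = xor_blocks([data[0], data[2], parity])
    print(rebuilt == data[1])
```

This works because the controller knows which disk failed; parity alone could only detect, not locate, an error.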

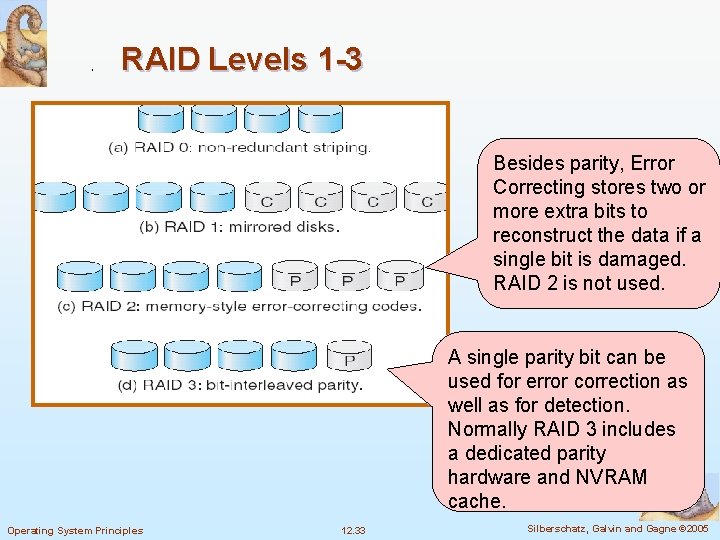

RAID Levels 1-3

- Besides parity, error-correcting codes store two or more extra bits to reconstruct the data if a single bit is damaged. RAID 2 is not used.
- A single parity bit can be used for error correction as well as for detection. Normally RAID 3 includes dedicated parity hardware and an NVRAM cache.

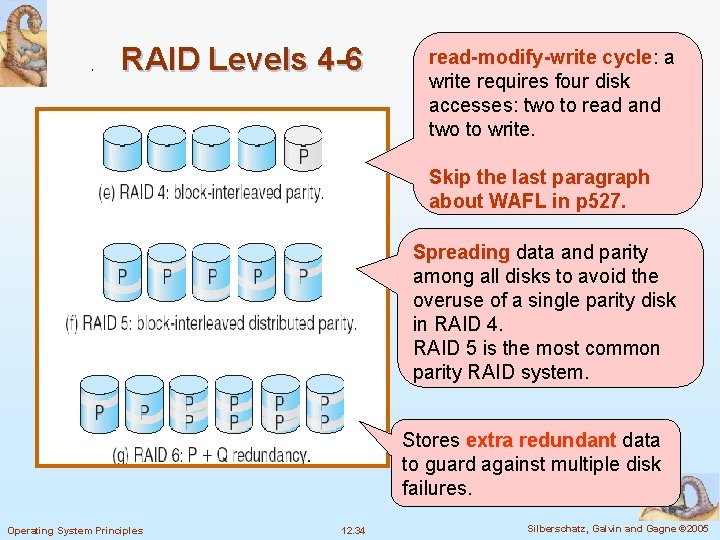

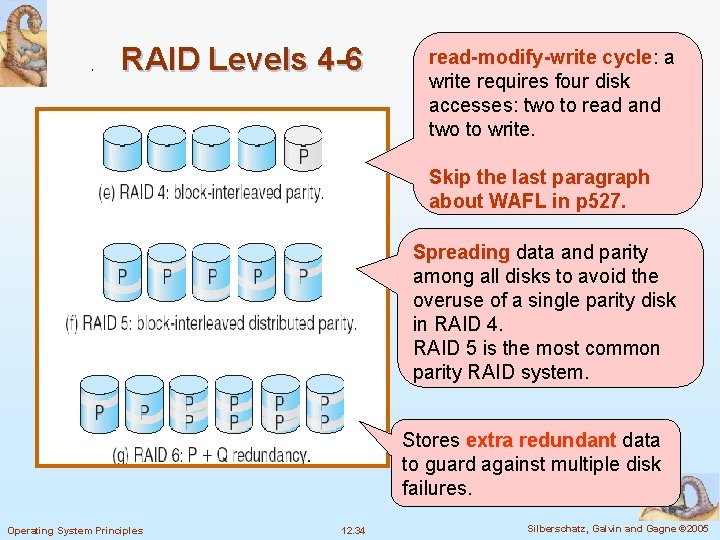

RAID Levels 4-6

- RAID 4: read-modify-write cycle: a write requires four disk accesses, two to read and two to write. (Skip the last paragraph about WAFL on p. 527.)
- RAID 5: spreads data and parity among all disks to avoid the overuse of a single parity disk in RAID 4. RAID 5 is the most common parity RAID system.
- RAID 6: stores extra redundant data to guard against multiple disk failures.
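The read-modify-write cycle can be sketched for block-interleaved parity. The sketch assumes XOR parity; the in-memory lists stand in for disks and all names are hypothetical. The four accesses are marked in comments.

```python
def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Bytewise XOR of two equally sized blocks."""
    return bytes(x ^ y for x, y in zip(a, b))


def full_parity(data_disks: list[bytes]) -> bytes:
    """Parity computed from scratch over every data block."""
    parity = bytes(len(data_disks[0]))
    for block in data_disks:
        parity = xor_bytes(parity, block)
    return parity


def small_write(data_disks: list[bytes], parity: bytes, index: int, new_data: bytes) -> bytes:
    """Update one data block and return the new parity block."""
    old_data = data_disks[index]   # access 1: read old data
    old_parity = parity            # access 2: read old parity
    new_parity = xor_bytes(xor_bytes(old_parity, old_data), new_data)
    data_disks[index] = new_data   # access 3: write new data
    return new_parity              # access 4: write new parity
```

The update touches only the target disk and the parity disk, which is why a single dedicated parity disk becomes a bottleneck under many small writes.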

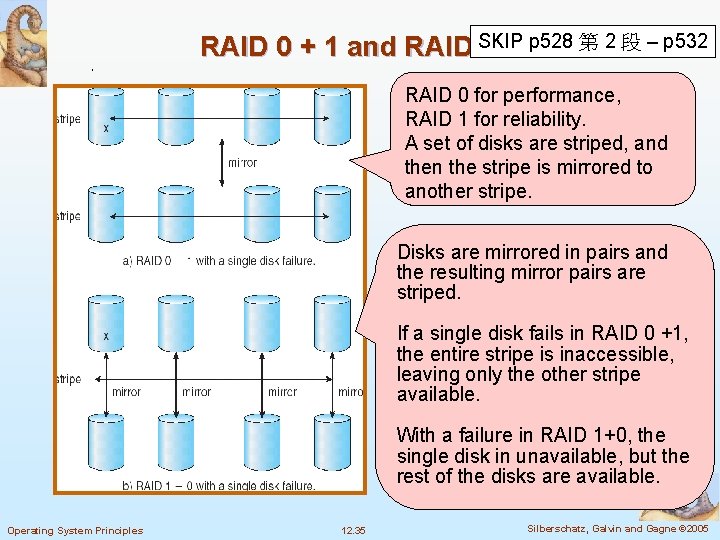

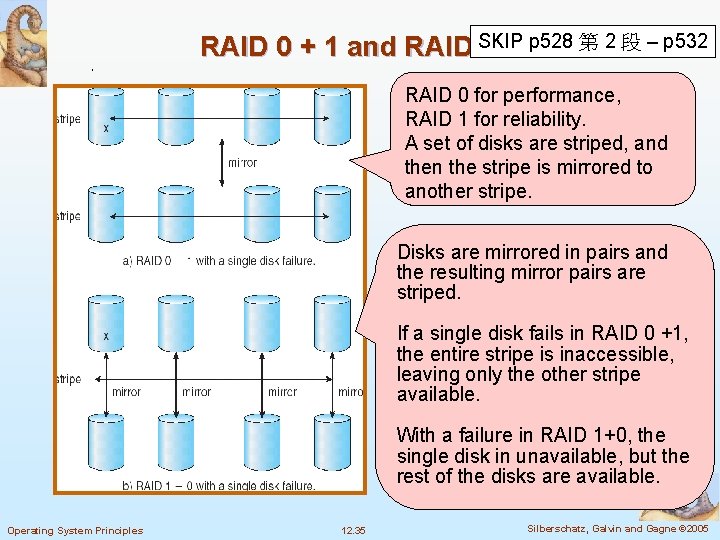

RAID 0+1 and RAID 1+0

(Skip p. 528, second paragraph, through p. 532.)

- RAID 0 for performance, RAID 1 for reliability
- RAID 0+1: a set of disks is striped, and then the stripe is mirrored to another stripe
- RAID 1+0: disks are mirrored in pairs and the resulting mirror pairs are striped
- If a single disk fails in RAID 0+1, the entire stripe is inaccessible, leaving only the other stripe available. With a failure in RAID 1+0, the single disk is unavailable, but the rest of the disks are available

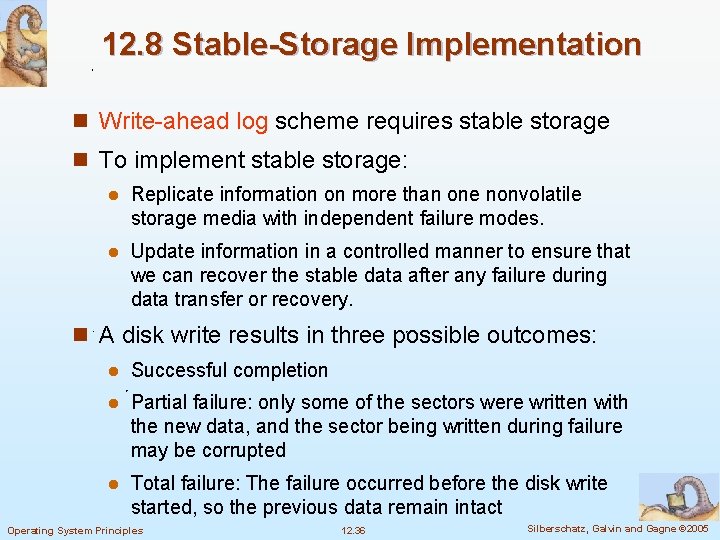

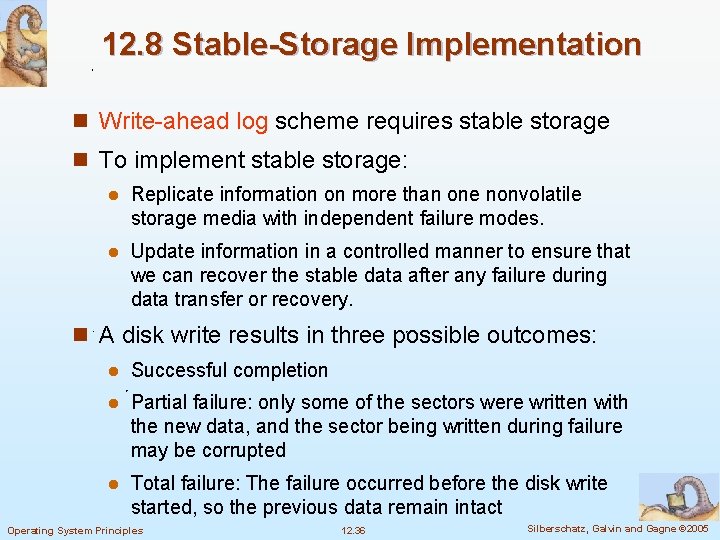

12.8 Stable-Storage Implementation

- The write-ahead log scheme requires stable storage
- To implement stable storage:
  - Replicate information on more than one nonvolatile storage medium with independent failure modes
  - Update information in a controlled manner to ensure that we can recover the stable data after any failure during data transfer or recovery
- A disk write results in one of three possible outcomes:
  - Successful completion
  - Partial failure: only some of the sectors were written with the new data, and the sector being written during the failure may be corrupted
  - Total failure: the failure occurred before the disk write started, so the previous data remain intact

- To be able to recover from a disk write failure, the system must maintain two physical blocks for each logical block. An output operation is executed as follows:
  - Write the data onto the first physical block
  - When the first write completes successfully, write the same data onto the second physical block
  - Declare the operation complete only after the second write completes successfully
- Recovery process
  - If both blocks are the same with no error, then no action is needed
  - If a block contains a detected error, then replace it with the value of the other block
  - If neither block contains a detectable error, but their contents differ, then replace the content of the first block with that of the second
- Performance could be improved by using an NVRAM cache
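The write and recovery rules above can be sketched with two in-memory copies. Several things here are assumptions: a CRC-32 checksum stands in for the disk's error detection, dictionaries stand in for physical blocks, and the case where both copies are damaged (which the rules do not cover) raises an error.

```python
import zlib


def make_block(data: bytes) -> dict:
    """A physical block: payload plus a checksum used to detect corruption."""
    return {"data": data, "crc": zlib.crc32(data)}


def has_error(block: dict) -> bool:
    return zlib.crc32(block["data"]) != block["crc"]


def stable_write(pair: list, data: bytes) -> None:
    """Write the first copy, then the second; complete only after both succeed."""
    pair[0] = make_block(data)
    pair[1] = make_block(data)


def recover(pair: list) -> None:
    """Apply the three recovery rules to a pair of physical blocks."""
    first, second = pair
    if has_error(first) and has_error(second):
        raise ValueError("both copies damaged; not covered by the recovery rules")
    if has_error(first):
        pair[0] = dict(second)          # replace the damaged copy
    elif has_error(second):
        pair[1] = dict(first)
    elif first["data"] != second["data"]:
        pair[0] = dict(second)          # both valid but different: second wins
```

If a crash happens between the two writes, both copies pass the checksum but differ, and recovery rolls the logical block back to the value in the second copy.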

12.9 Tertiary Storage Devices

- Low cost is the defining characteristic of tertiary storage
- Generally, tertiary storage is built using removable media
- Common examples of removable media are floppy disks and CD-ROMs; other types, such as MO (magneto-optic) disks and tapes, are available

Removable Disks

- Floppy disk: a thin flexible disk coated with magnetic material, enclosed in a protective plastic case
  - Most floppies hold about 1 MB; similar technology is used for removable disks that hold more than 1 GB
  - Removable magnetic disks can be nearly as fast as hard disks, but they are at a greater risk of damage from exposure
- Optical disks do not use magnetism; they employ special materials that are altered by laser light to have relatively dark or bright spots
  - The phase-change disk drive uses laser light at three different powers
    - Low to read data
    - Medium to erase the disk by melting and refreezing the recording medium into a crystalline state
    - High to melt the medium into the amorphous state to write the disk

Removable Disks

- A magneto-optic disk records data on a rigid platter coated with magnetic material
  - Laser heat is used to amplify a large, weak magnetic field to record a bit
  - Laser light is also used to read data (Kerr effect)
    - The polarization of the laser beam is rotated clockwise or counterclockwise depending on the orientation of the magnetic field
  - The magneto-optic head flies much farther from the disk surface than a magnetic disk head, and the magnetic material is covered with a protective layer of plastic or glass, so the disk is resistant to head crashes

Removable Disks

- Read-write disks: the data on these disks can be modified over and over
- WORM ("Write Once, Read Many Times") disks can be written only once
  - A thin aluminum film is sandwiched between two glass or plastic platters
  - To write a bit, the drive uses laser light to burn a small hole through the aluminum; information can be destroyed but not altered
  - Very durable and reliable
- Read-only disks, such as CD-ROM and DVD, come from the factory with the data pre-recorded by pressing instead of burning

Tapes

- Compared to a disk, a tape is less expensive and holds more data, but random access is much slower
  - Tape is an economical medium for purposes that do not require fast random access, e.g., backup copies of disk data or holding huge volumes of data
- Large tape installations typically use robotic tape changers that move tapes between tape drives and storage slots in a tape library
  - Stacker: a library that holds a few tapes
  - Silo: a library that holds thousands of tapes
- A disk-resident file can be archived to tape for low-cost storage; the computer can stage it back into disk storage for active use. A robotic tape library is called near-line storage

(Skip Section 12.9.1.3.)

Operating System Issues

- The major OS jobs are to manage physical devices and to present a virtual machine abstraction to applications
- For hard disks, the OS provides two abstractions:
  - Raw device: an array of data blocks
  - File system: the OS queues and schedules the interleaved requests from several applications
- How about removable storage?

Application Interface

- Most OSs handle removable disks almost exactly like fixed disks
  - A new cartridge is formatted and an empty file system is generated on the disk
- Tapes are presented as a raw storage medium. An application does not open a file on the tape; it opens the whole tape drive as a raw device
  - Usually the tape drive is reserved for the exclusive use of that application until the application exits or closes the tape device
  - Since the OS does not provide file system services, the application must decide how to use the array of blocks
  - Since every application makes up its own rules for how to organize a tape, a tape full of data can generally be used only by the program that created it

Tape Drives

- The basic operations for a tape drive differ from those of a disk drive
  - The locate operation positions the tape to a specific logical block, not an entire track (corresponds to seek)
  - The read position operation returns the logical block number where the tape head is
  - The space operation enables relative motion
  - Most tape drives have a variable block size, which is determined when the block is written
    - If an area of defective tape is encountered during writing, that area is skipped and the block is written again
  - Tape drives are "append-only" devices; updating a block in the middle of the tape also effectively erases everything beyond that block
  - An EOT mark is placed after a block that is written

File Naming

- The issue of naming files on removable media is especially difficult when we want to write data on a removable cartridge on one computer and then use the cartridge in another computer
  - Contemporary OSs generally leave the name-space problem unsolved for removable media, and depend on applications and users to figure out how to access and interpret the data
- Some kinds of removable media (e.g., CDs and DVDs) are so well standardized that all computers use them the same way

Hierarchical Storage Management (HSM)

- A hierarchical storage system extends the storage hierarchy beyond primary memory and secondary storage to incorporate tertiary storage, usually implemented as a jukebox of tapes or removable disks
- Tertiary storage is usually incorporated by extending the file system
  - Small and frequently used files remain on disk
  - Large, old, inactive files are archived to the jukebox
- HSM is usually found in supercomputing centers and other large installations that have enormous volumes of data

Performance Issues: Speed, Reliability, Cost

Speed

- Two aspects of speed in tertiary storage are bandwidth and latency
- Bandwidth is measured in bytes per second
  - Sustained bandwidth: average data rate during a large transfer (number of bytes / transfer time)
    - Data rate when the data stream is actually flowing
  - Effective bandwidth: average over the entire I/O time, including seek or locate, and cartridge switching
    - Drive's overall data rate
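The two bandwidth definitions differ only in which time goes into the denominator. The sketch below makes that explicit; the function names and the sample numbers in the usage note are illustrative assumptions.

```python
def sustained_bandwidth(num_bytes: float, transfer_seconds: float) -> float:
    """Bytes per second while the data stream is actually flowing."""
    return num_bytes / transfer_seconds


def effective_bandwidth(num_bytes: float, transfer_seconds: float,
                        overhead_seconds: float) -> float:
    """Bytes per second over the whole I/O, including locate and cartridge switching."""
    return num_bytes / (transfer_seconds + overhead_seconds)
```

For example, if a transfer streams for 10 seconds but the library first spends 10 seconds switching cartridges and locating the block, the effective bandwidth is half the sustained bandwidth.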

- Access latency: the amount of time needed to locate data
  - Access time for a disk: move the arm to the selected cylinder and wait for the rotational latency; < 5 milliseconds
  - Access on tape requires winding the tape reels until the selected block reaches the tape head; tens or hundreds of seconds
  - Generally, random access within a tape cartridge is about a thousand times slower than random access on disk
  - If a jukebox is involved, the access latency is much higher
- The low cost of tertiary storage is a result of having many cheap cartridges share a few expensive drives
- A removable library is best devoted to the storage of infrequently used data, because the library can satisfy only a relatively small number of I/O requests per hour

Reliability

- A fixed disk drive is likely to be more reliable than a removable disk or tape drive
- An optical cartridge is likely to be more reliable than a magnetic disk or tape
- A head crash in a fixed hard disk generally destroys the data, whereas the failure of a tape drive or optical disk drive often leaves the data cartridge unharmed

Cost

- Main memory is much more expensive than disk storage
- The cost per megabyte of hard disk storage is competitive with magnetic tape if only one tape is used per drive
- The cheapest tape drives and the cheapest disk drives have had about the same storage capacity over the years
- Tertiary storage gives a cost savings only when the number of cartridges is considerably larger than the number of drives
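The last point can be made concrete by spreading the drive cost over the cartridges that share it. All prices and capacities in the test values are invented for illustration, and the function name is hypothetical.

```python
def cost_per_gb(drive_cost: float, cartridge_cost: float, cartridge_gb: float,
                drives: int, cartridges: int) -> float:
    """Total hardware cost divided by total capacity for a removable-media setup."""
    total_cost = drives * drive_cost + cartridges * cartridge_cost
    total_capacity_gb = cartridges * cartridge_gb
    return total_cost / total_capacity_gb
```

With one cartridge per drive the drive price dominates the cost per gigabyte; with a hundred cartridges sharing one drive the figure approaches the cartridge's own cost per gigabyte.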

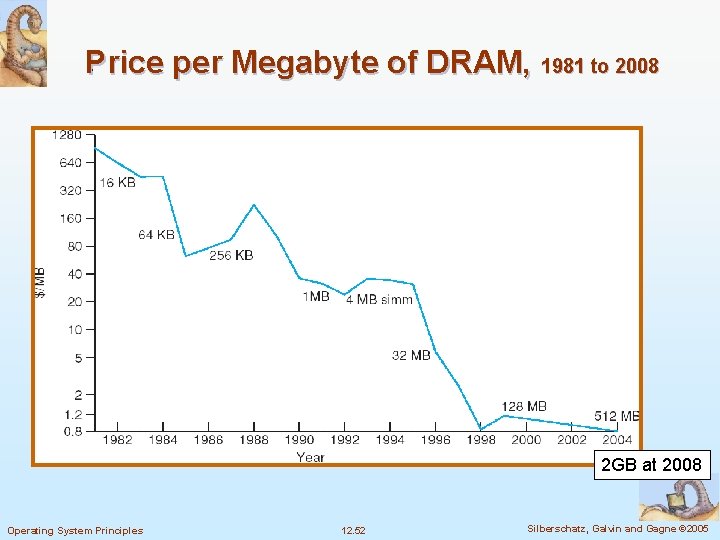

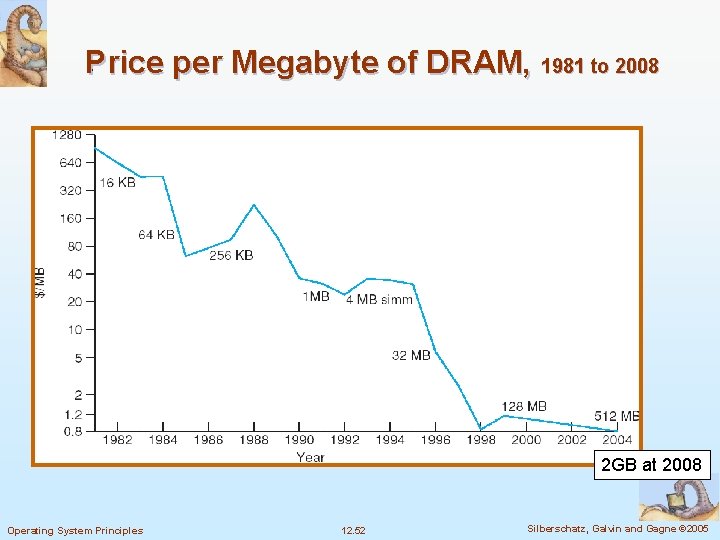

Price per Megabyte of DRAM, 1981 to 2008

2 GB in 2008

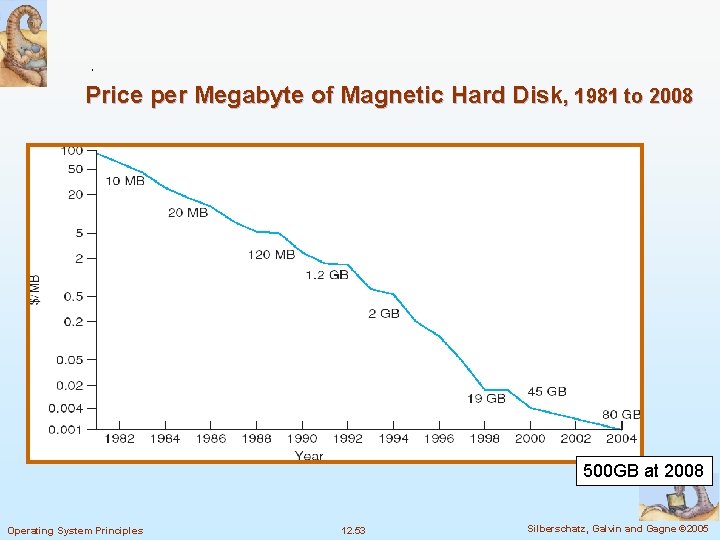

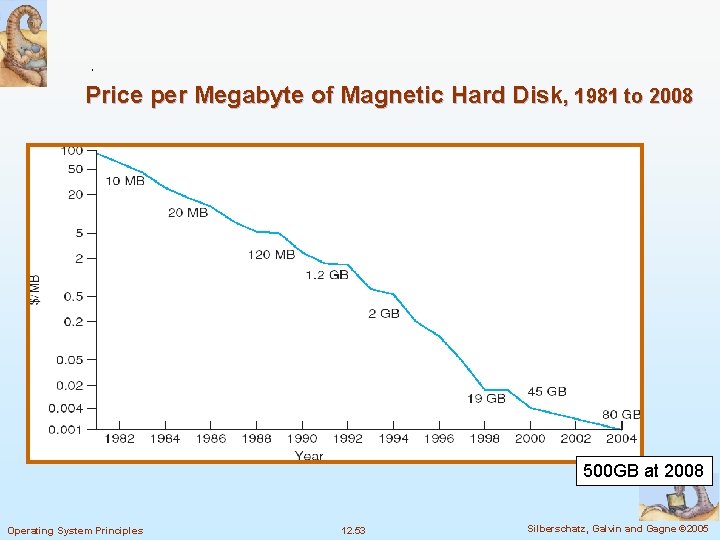

Price per Megabyte of Magnetic Hard Disk, 1981 to 2008

500 GB in 2008

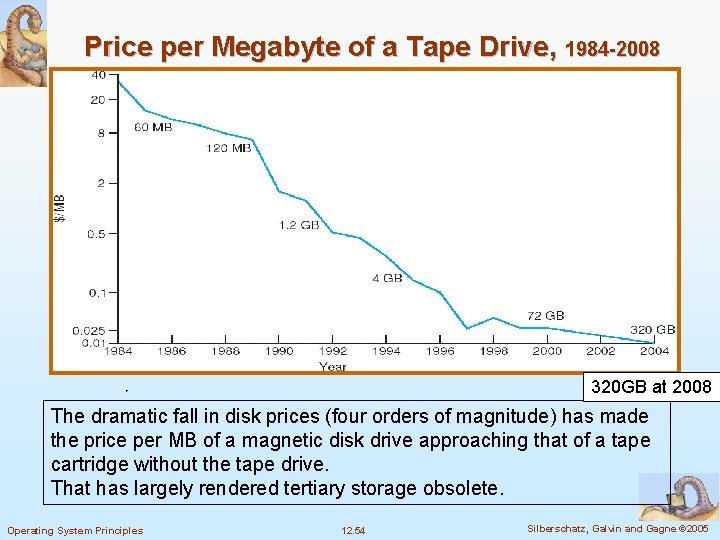

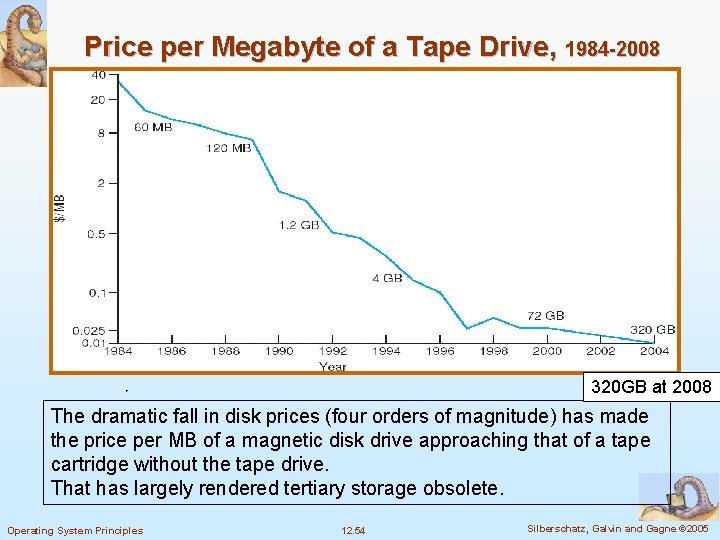

Price per Megabyte of a Tape Drive, 1984-2008

320 GB in 2008

The dramatic fall in disk prices (four orders of magnitude) has brought the price per MB of a magnetic disk drive close to that of a tape cartridge without the tape drive. That has largely rendered tertiary storage obsolete.