CAM: CONSTRAINT-AWARE APPLICATION MAPPING FOR EMBEDDED SYSTEMS Luis A. Bathen, Nikil D. Dutt

Outline

- Introduction & Motivation
- CAM Overview
- Memory-aware Macro-Pipelining
- Customized Security Policy Generation
- Related Work
- Conclusion

CASA '10 9/16/2020

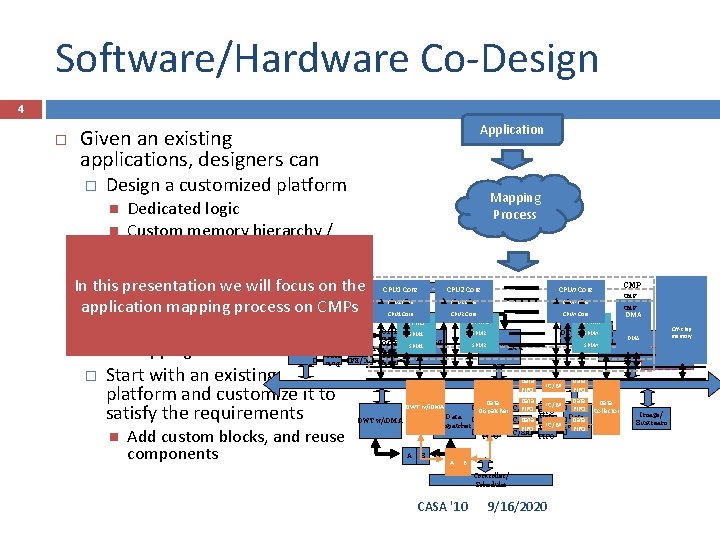

Software/Hardware Co-Design

Given an existing application, designers can:

- Design a customized platform (dedicated logic, custom memory hierarchy / communication architecture)
- Start with an existing platform and customize it to satisfy the requirements (add custom blocks and reuse components)
- Take an existing platform and efficiently map the application onto it

In this presentation we will focus on the application mapping process on CMPs: data allocation and task mapping.

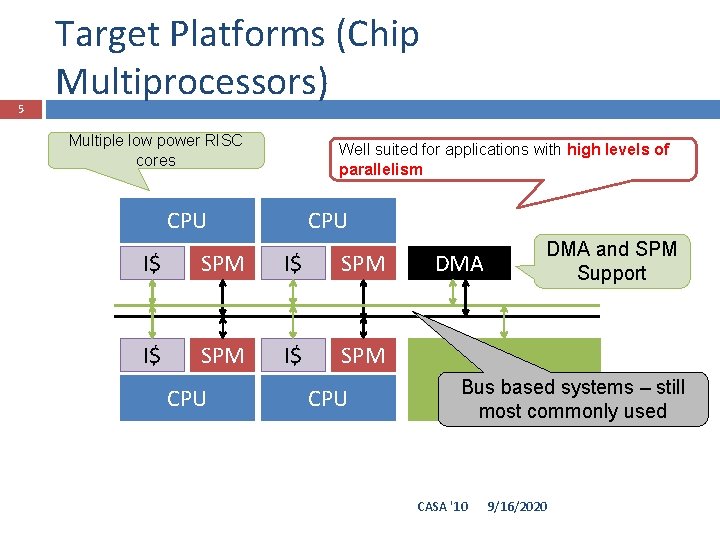

Target Platforms (Chip Multiprocessors)

- Multiple low-power RISC cores
- Well suited for applications with high levels of parallelism
- DMA and SPM support
- Bus-based systems, still the most commonly used

Figure labels: CPU, I$, SPM, DMA, RAM.

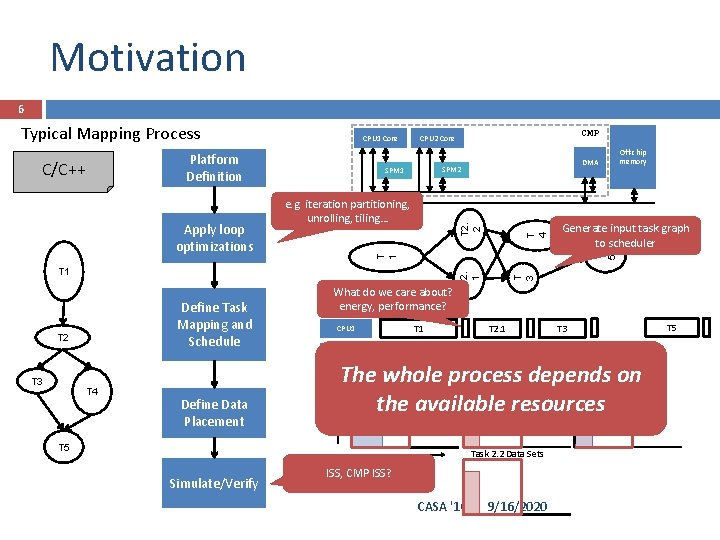

Motivation

Typical mapping process, as shown in the figure:

- Platform definition (CMP with CPU cores, SPMs, DMA, and off-chip memory)
- Apply loop optimizations to the C/C++ code (e.g. iteration partitioning, unrolling, tiling)
- Generate the input task graph for the scheduler (tasks T1, T2.1, T2.2, T3, T4, T5)
- Define task mapping and schedule
- Define data placement (per-task data sets)
- Simulate/verify (ISS, CMP ISS?)

The whole process depends on the available resources. What do we care about: energy, performance?

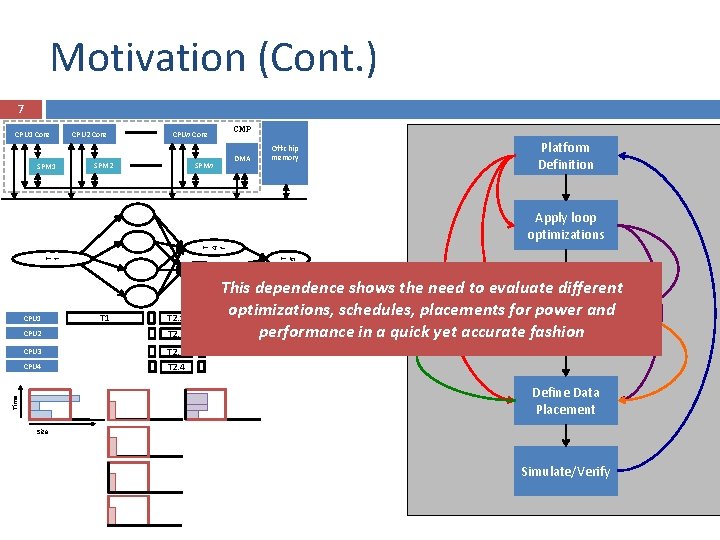

Motivation (Cont.)

The same process on a CMP with n cores (task T2 now split into T2.1 to T2.4 across CPU 1 to CPU 4): platform definition, apply loop optimizations, define task mapping and schedule, define data placement, simulate/verify.

This dependence shows the need to evaluate different optimizations, schedules, and placements for power and performance in a quick yet accurate fashion.

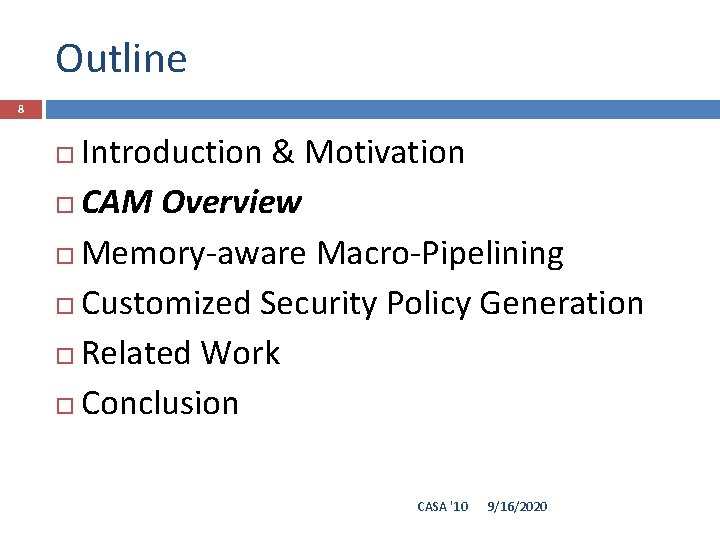

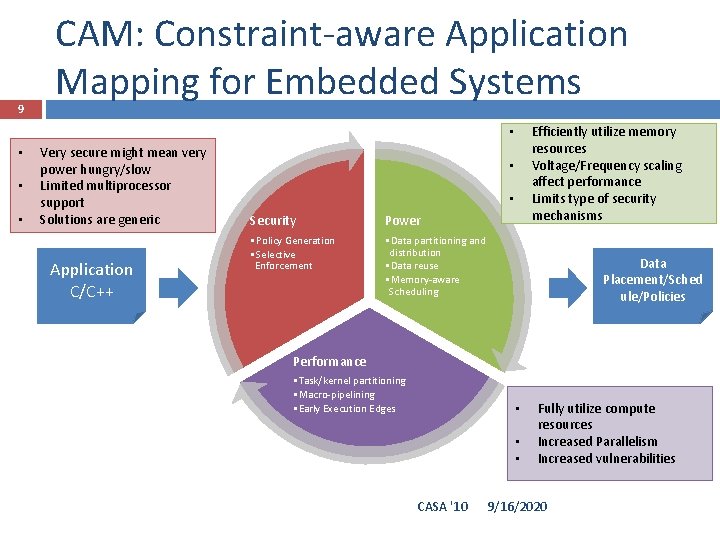

CAM: Constraint-aware Application Mapping for Embedded Systems

Input: application (C/C++). Output: data placement, schedule, and policies.

- Security: policy generation; selective enforcement
- Power: data partitioning and distribution; data reuse; memory-aware scheduling
- Performance: task/kernel partitioning; macro-pipelining; early execution edges

Constraints and trade-offs noted on the slide:

- Efficiently utilize memory resources
- Voltage/frequency scaling affects performance
- Constraints limit the type of security mechanisms: very secure might mean very power hungry/slow
- Limited multiprocessor support; solutions are generic
- Fully utilize compute resources
- Increased parallelism, increased vulnerabilities

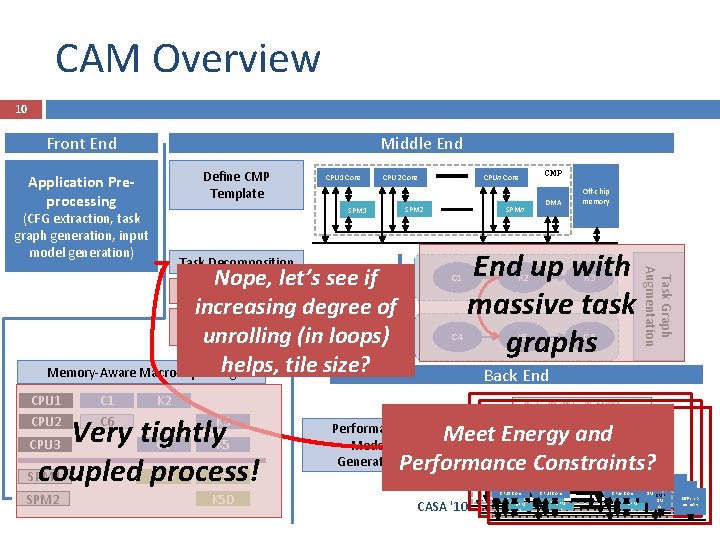

CAM Overview

- Front end: define CMP template; application preprocessing (CFG extraction, task graph generation, input model generation)
- Middle end: task graph augmentation (task decomposition, data reuse analysis, early execution edge generation); memory-aware macro-pipelining
- Back end: performance model generation; check whether energy and performance constraints are met

Notes on the slide:

- We end up with massive task graphs
- Very tightly coupled process!
- If the constraints are not met: "Nope, let's see if increasing the degree of unrolling (in loops) helps, tile size?"

Memory-aware Macro-Pipelining (ESTImedia '08, '09)

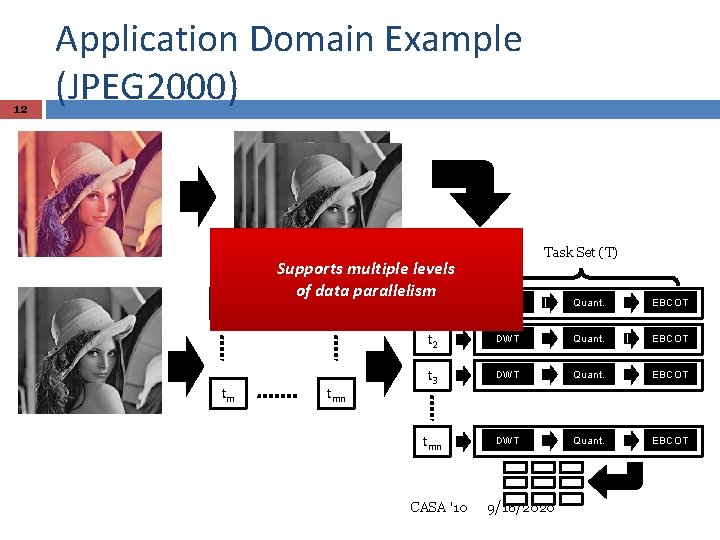

Application Domain Example (JPEG 2000)

- Supports multiple levels of data parallelism
- Task set (T): tiles t1, t2, t3, ..., tmn
- Each tile goes through DWT, Quant., and EBCOT

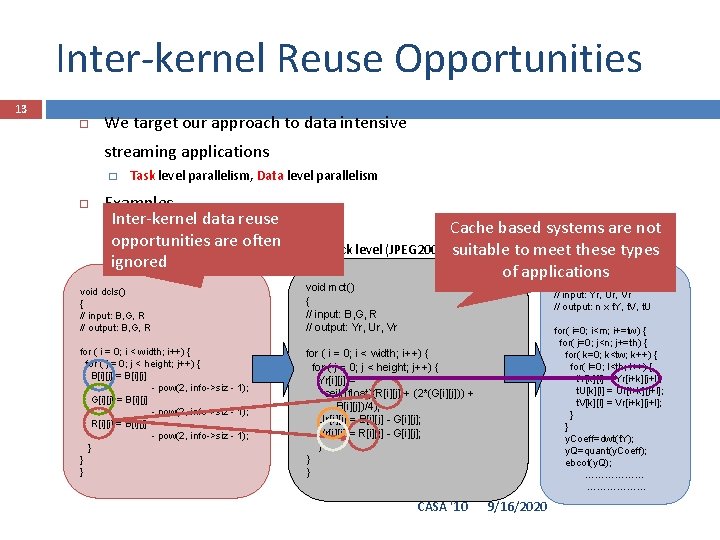

Inter-kernel Reuse Opportunities

- We target our approach to data-intensive streaming applications: task-level parallelism, data-level parallelism
- Examples: macroblock level (H.264); component level, tile level, code block level (JPEG 2000)
- Inter-kernel data reuse opportunities are often ignored
- Cache-based systems are not well suited to these types of applications

The three kernels shown on the slide:

    void dcls() {
        // input: B, G, R
        // output: B, G, R (DC level shifted)
        for (i = 0; i < width; i++) {
            for (j = 0; j < height; j++) {
                B[i][j] = B[i][j] - pow(2, info->siz - 1);
                G[i][j] = G[i][j] - pow(2, info->siz - 1);
                R[i][j] = R[i][j] - pow(2, info->siz - 1);
            }
        }
    }

    void mct() {
        // input: B, G, R
        // output: Yr, Ur, Vr
        for (i = 0; i < width; i++) {
            for (j = 0; j < height; j++) {
                Yr[i][j] = ceil((float)(R[i][j] + (2 * (G[i][j])) + B[i][j]) / 4);
                Ur[i][j] = B[i][j] - G[i][j];
                Vr[i][j] = R[i][j] - G[i][j];
            }
        }
    }

    void tiling() {
        // input: Yr, Ur, Vr
        // output: n x tY, tU, tV
        for (i = 0; i < m; i += tw) {
            for (j = 0; j < n; j += th) {
                // copy one tw x th tile out of each component
                for (k = 0; k < tw; k++) {
                    for (l = 0; l < th; l++) {
                        tY[k][l] = Yr[i+k][j+l];
                        tU[k][l] = Ur[i+k][j+l];
                        tV[k][l] = Vr[i+k][j+l];
                    }
                }
                yCoeff = dwt(tY);
                yQ = quant(yCoeff);
                ebcot(yQ);
                // ...
            }
        }
    }

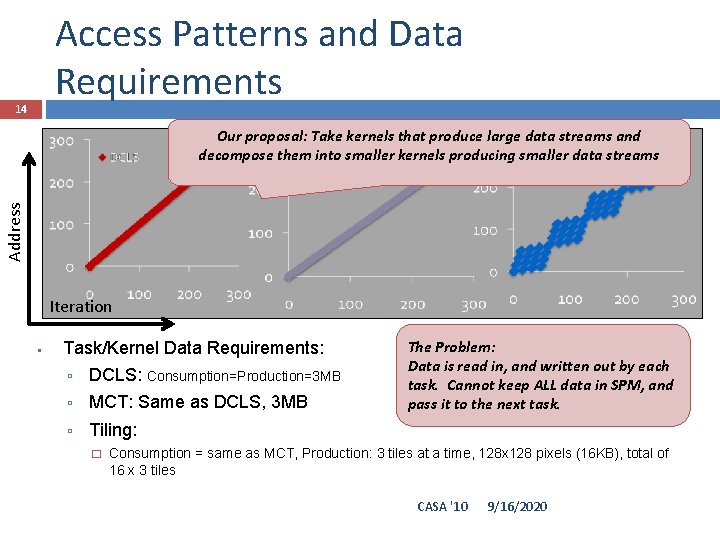

Access Patterns and Data Requirements

Data requirements:

- DCLS: consumption = production = 3 MB
- MCT: same as DCLS, 3 MB
- Tiling: consumption is the same as MCT; production is 3 tiles at a time, 128 x 128 pixels (16 KB) each, for a total of 16 x 3 tiles

The problem: data is read in and written out by each task. We cannot keep all the data in SPM and pass it to the next task.

Our proposal: take kernels that produce large data streams and decompose them into smaller kernels producing smaller data streams.

Figure: address vs. iteration access patterns per task/kernel.
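The sizes quoted on this slide can be checked with a little arithmetic. The sketch below is only illustrative: the function names are hypothetical, and the one-byte-per-sample assumption is chosen because it reproduces the 16 KB quoted for a 128 x 128 tile.

```c
#include <assert.h>
#include <stddef.h>

/* Bytes needed for one tile. bytes_per_sample is an assumption:
   1 byte per sample matches the 16 KB quoted for a 128 x 128 tile. */
size_t tile_bytes(size_t width, size_t height, size_t bytes_per_sample)
{
    return width * height * bytes_per_sample;
}

/* Returns 1 when `count` tiles of one_tile_bytes each fit in an SPM of
   spm_bytes, 0 otherwise. */
int tiles_fit_in_spm(size_t count, size_t one_tile_bytes, size_t spm_bytes)
{
    assert(one_tile_bytes > 0);
    return count <= spm_bytes / one_tile_bytes;
}
```

For instance, `tile_bytes(128, 128, 1)` gives 16384 bytes, so the three tiles produced at a time would need 48 KB: they would fit in a hypothetical 64 KB SPM but not in a 32 KB one.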

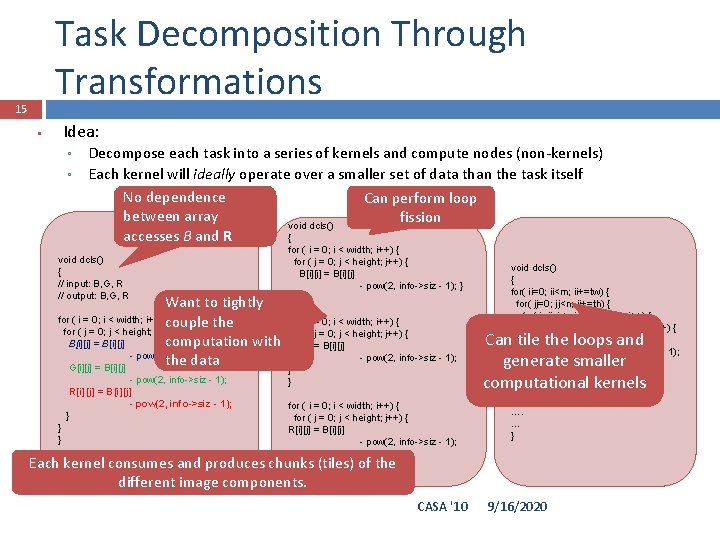

Task Decomposition Through Transformations

- Idea: decompose each task into a series of kernels and compute nodes (non-kernels)
- Each kernel will ideally operate over a smaller set of data than the task itself
- We want to tightly couple the computation with the data
- There is no dependence between the accesses to arrays B, G, and R, so we can perform loop fission
- We can tile the loops and generate smaller computational kernels
- Each kernel consumes and produces chunks (tiles) of the different image components

Original task:

    void dcls() {
        // input: B, G, R
        // output: B, G, R
        for (i = 0; i < width; i++) {
            for (j = 0; j < height; j++) {
                B[i][j] = B[i][j] - pow(2, info->siz - 1);
                G[i][j] = G[i][j] - pow(2, info->siz - 1);
                R[i][j] = R[i][j] - pow(2, info->siz - 1);
            }
        }
    }

After loop fission (one loop nest per component):

    void dcls() {
        for (i = 0; i < width; i++) {
            for (j = 0; j < height; j++) {
                B[i][j] = B[i][j] - pow(2, info->siz - 1);
            }
        }
        for (i = 0; i < width; i++) {
            for (j = 0; j < height; j++) {
                G[i][j] = G[i][j] - pow(2, info->siz - 1);
            }
        }
        for (i = 0; i < width; i++) {
            for (j = 0; j < height; j++) {
                R[i][j] = R[i][j] - pow(2, info->siz - 1);
            }
        }
    }

After tiling (shown for B; the G and R loop nests follow the same pattern):

    void dcls() {
        for (ii = 0; ii < m; ii += tw) {
            for (jj = 0; jj < n; jj += th) {
                for (i = ii; i < min(m, ii + tw); i++) {
                    for (j = jj + i; j < min(n + i, jj + th + i); j++) {
                        B[i][j-i] = B[i][j-i] - pow(2, info->siz - 1);
                    }
                }
            }
        }
        // ...
    }
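To make the fission and tiling steps concrete, here is a minimal, self-contained sketch that checks a fissioned and tiled per-component kernel against the fused loop. It is not the authors' code: the array sizes, tile sizes, and function names are hypothetical, the shift is passed in as a plain integer instead of `pow(2, info->siz - 1)`, and the tiling uses plain (non-skewed) indices rather than the `j-i` form on the slide.

```c
#include <assert.h>

#define W 8    /* hypothetical image width  */
#define H 8    /* hypothetical image height */
#define TW 4   /* hypothetical tile width   */
#define TH 4   /* hypothetical tile height  */

static int min_int(int a, int b) { return a < b ? a : b; }

/* Original form: one loop nest shifts all three components. */
void dcls_fused(int B[W][H], int G[W][H], int R[W][H], int shift)
{
    for (int i = 0; i < W; i++)
        for (int j = 0; j < H; j++) {
            B[i][j] -= shift;
            G[i][j] -= shift;
            R[i][j] -= shift;
        }
}

/* One kernel after fission and tiling: shifts a single component,
   visiting it tile by tile. */
void dcls_component_tiled(int C[W][H], int shift)
{
    for (int ii = 0; ii < W; ii += TW)
        for (int jj = 0; jj < H; jj += TH)
            for (int i = ii; i < min_int(W, ii + TW); i++)
                for (int j = jj; j < min_int(H, jj + TH); j++)
                    C[i][j] -= shift;
}

/* Returns 1 when the fused loop and the three per-component kernels
   produce identical arrays on a simple test pattern, 0 otherwise. */
int dcls_forms_agree(void)
{
    int B1[W][H], G1[W][H], R1[W][H];
    int B2[W][H], G2[W][H], R2[W][H];

    for (int i = 0; i < W; i++)
        for (int j = 0; j < H; j++) {
            B1[i][j] = B2[i][j] = i + j;
            G1[i][j] = G2[i][j] = i * j;
            R1[i][j] = R2[i][j] = i - j;
        }

    dcls_fused(B1, G1, R1, 128);        /* 128 = 2^(8-1), assuming 8-bit samples */
    dcls_component_tiled(B2, 128);
    dcls_component_tiled(G2, 128);
    dcls_component_tiled(R2, 128);

    for (int i = 0; i < W; i++)
        for (int j = 0; j < H; j++)
            if (B1[i][j] != B2[i][j] || G1[i][j] != G2[i][j] || R1[i][j] != R2[i][j])
                return 0;
    return 1;
}
```

Because each component is updated independently, the three tiled kernels can be scheduled separately, which is what allows a tile of one component to stay in a scratchpad while it is processed.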

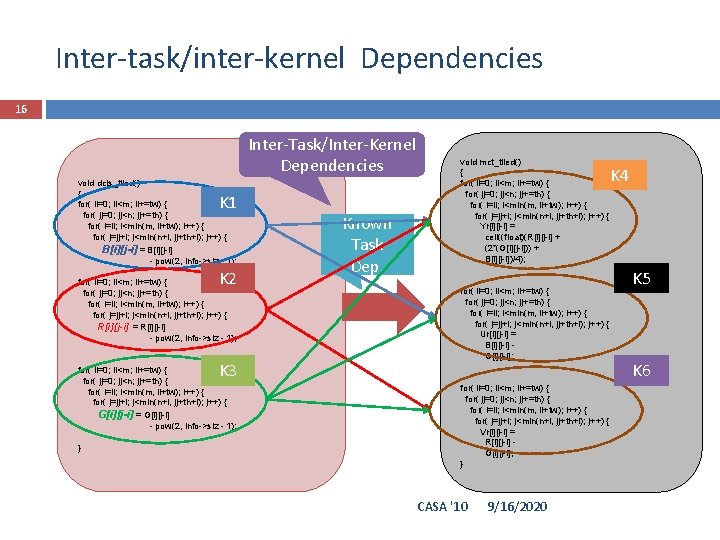

Inter-task/inter-kernel Dependencies

The tiled tasks expose kernels K1 to K3 (DCLS) and K4 to K6 (MCT). The dependence between the two tasks is known; the figure refines it into inter-kernel dependencies.

    void dcls_tiled() {
        // K1
        for (ii = 0; ii < m; ii += tw) {
            for (jj = 0; jj < n; jj += th) {
                for (i = ii; i < min(m, ii + tw); i++) {
                    for (j = jj + i; j < min(n + i, jj + th + i); j++) {
                        B[i][j-i] = B[i][j-i] - pow(2, info->siz - 1);
                    }
                }
            }
        }
        // K2
        for (ii = 0; ii < m; ii += tw) {
            for (jj = 0; jj < n; jj += th) {
                for (i = ii; i < min(m, ii + tw); i++) {
                    for (j = jj + i; j < min(n + i, jj + th + i); j++) {
                        R[i][j-i] = R[i][j-i] - pow(2, info->siz - 1);
                    }
                }
            }
        }
        // K3
        for (ii = 0; ii < m; ii += tw) {
            for (jj = 0; jj < n; jj += th) {
                for (i = ii; i < min(m, ii + tw); i++) {
                    for (j = jj + i; j < min(n + i, jj + th + i); j++) {
                        G[i][j-i] = G[i][j-i] - pow(2, info->siz - 1);
                    }
                }
            }
        }
    }

    void mct_tiled() {
        // K4
        for (ii = 0; ii < m; ii += tw) {
            for (jj = 0; jj < n; jj += th) {
                for (i = ii; i < min(m, ii + tw); i++) {
                    for (j = jj + i; j < min(n + i, jj + th + i); j++) {
                        Yr[i][j-i] = ceil((float)(R[i][j-i] + (2 * (G[i][j-i])) + B[i][j-i]) / 4);
                    }
                }
            }
        }
        // K5
        for (ii = 0; ii < m; ii += tw) {
            for (jj = 0; jj < n; jj += th) {
                for (i = ii; i < min(m, ii + tw); i++) {
                    for (j = jj + i; j < min(n + i, jj + th + i); j++) {
                        Ur[i][j-i] = B[i][j-i] - G[i][j-i];
                    }
                }
            }
        }
        // K6
        for (ii = 0; ii < m; ii += tw) {
            for (jj = 0; jj < n; jj += th) {
                for (i = ii; i < min(m, ii + tw); i++) {
                    for (j = jj + i; j < min(n + i, jj + th + i); j++) {
                        Vr[i][j-i] = R[i][j-i] - G[i][j-i];
                    }
                }
            }
        }
    }

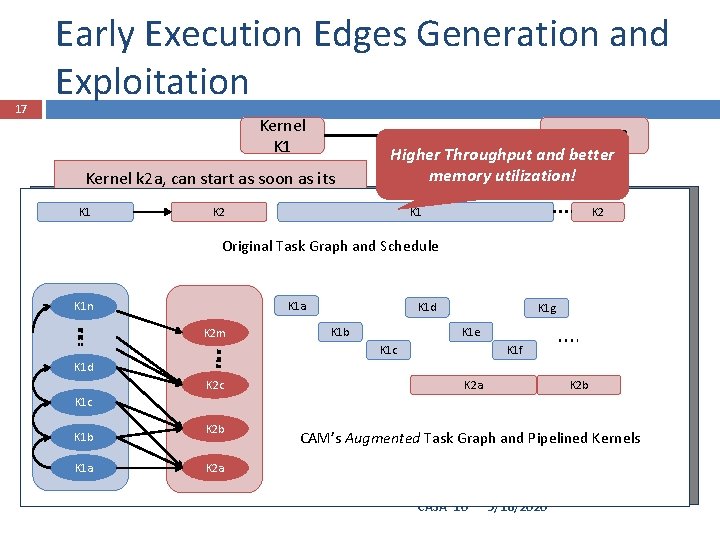

Early Execution Edges Generation and Exploitation

- Kernel K2a can start as soon as its dependencies (kernel iterations K1a, K1b, K1c) finish their execution
- Higher throughput and better memory utilization!

Figure: the original task graph and schedule (K1 followed by K2) next to CAM's augmented task graph and pipelined kernels (K1a ... K1n feeding K2a ... K2m).
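The early-start rule can be sketched in a few lines: a consumer kernel instance becomes ready at the latest finish time among the producer iterations it actually depends on, rather than when the whole producer kernel is done. The function name and the time units are hypothetical and only serve this illustration.

```c
#include <assert.h>
#include <stddef.h>

/* Earliest time a consumer kernel instance may start: the latest finish
   time among the producer iterations it depends on. With no dependencies
   it can start at time 0. */
long earliest_start(const long *dep_finish, size_t n_deps)
{
    assert(dep_finish != NULL || n_deps == 0);
    long start = 0;
    for (size_t i = 0; i < n_deps; i++)
        if (dep_finish[i] > start)
            start = dep_finish[i];
    return start;
}
```

If K1a, K1b, and K1c finish at times 10, 20, and 30 while later K1 iterations run on, a K2a that depends only on those three can start at 30 instead of waiting for the last K1 iteration.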

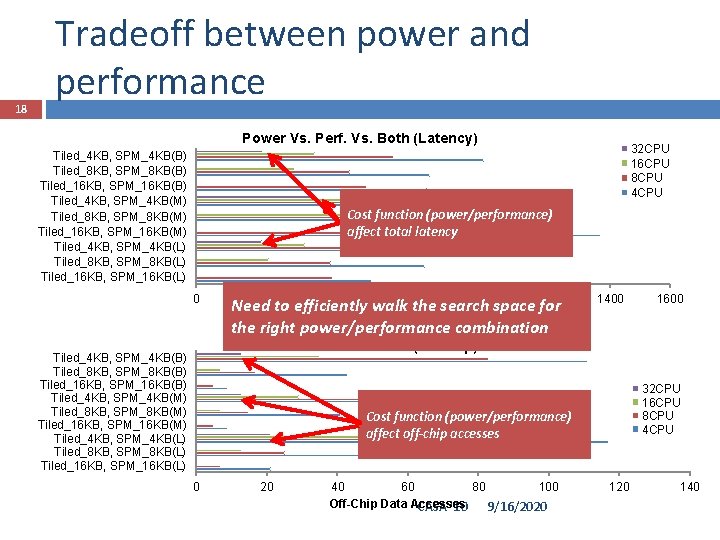

Tradeoff between power and performance

- The cost function (power/performance) affects total latency
- The cost function (power/performance) affects off-chip accesses
- We need to efficiently walk the search space for the right power/performance combination

Figures: "Power vs. Perf. vs. Both (Latency)" (axis in millions, 0 to 1600) and "Power vs. Perf. vs. Both (Off-chip)" (axis: off-chip data accesses). Both compare the configurations Tiled_4KB/SPM_4KB, Tiled_8KB/SPM_8KB, and Tiled_16KB/SPM_16KB in their (B), (M), and (L) variants on 4, 8, 16, and 32 CPUs.

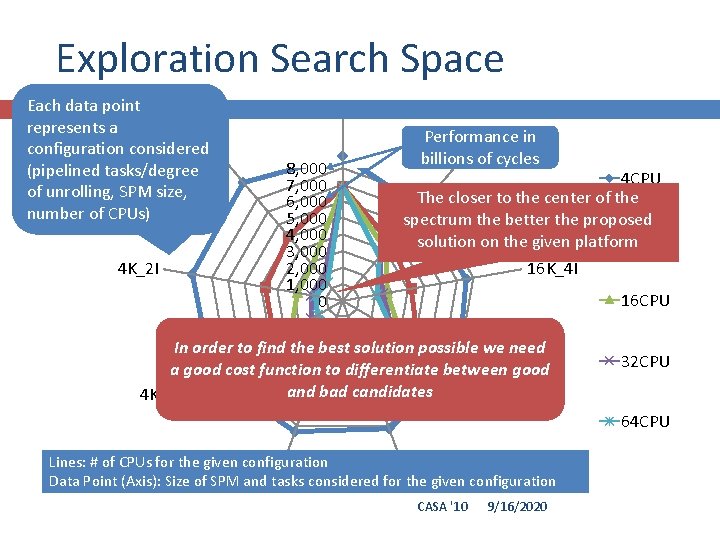

Exploration Search Space

- Each data point represents a configuration considered (pipelined tasks/degree of unrolling, SPM size, number of CPUs)
- The closer to the center of the spectrum, the better the proposed solution on the given platform
- In order to find the best solution possible, we need a good cost function to differentiate between good and bad candidates

Figure: radial plot of performance in billions of cycles (0 to 8,000). Lines: number of CPUs for the given configuration (4, 8, 16, 32, 64). Data points (axes): size of SPM and tasks considered for the given configuration (4K_1I, 4K_2I, 4K_4I, 8K_1I, 8K_2I, 8K_4I, 16K_1I, 16K_2I, 16K_4I).
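As an illustration of what such a cost function could look like, the sketch below ranks candidate configurations with a weighted sum of normalized cycle count and normalized energy. This is an assumption made for illustration, not the cost function used by CAM: the struct fields, the weights, and the normalization are all hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* One explored configuration. Field names are hypothetical. */
struct config {
    int cpus;       /* number of CPUs                    */
    int spm_kb;     /* SPM size in KB                    */
    int unroll;     /* degree of unrolling               */
    double cycles;  /* estimated execution time (cycles) */
    double energy;  /* estimated energy (arbitrary unit) */
};

/* Weighted sum of normalized latency and normalized energy; lower is
   better. max_cycles and max_energy are assumed to be positive. */
double config_cost(const struct config *c, double w_perf, double w_power,
                   double max_cycles, double max_energy)
{
    return w_perf * (c->cycles / max_cycles)
         + w_power * (c->energy / max_energy);
}

/* Returns the index of the lowest-cost candidate. */
size_t best_config(const struct config *cands, size_t n,
                   double w_perf, double w_power)
{
    assert(n > 0);
    double max_cycles = cands[0].cycles;
    double max_energy = cands[0].energy;
    for (size_t i = 1; i < n; i++) {
        if (cands[i].cycles > max_cycles) max_cycles = cands[i].cycles;
        if (cands[i].energy > max_energy) max_energy = cands[i].energy;
    }

    size_t best = 0;
    double best_cost = config_cost(&cands[0], w_perf, w_power,
                                   max_cycles, max_energy);
    for (size_t i = 1; i < n; i++) {
        double cost = config_cost(&cands[i], w_perf, w_power,
                                  max_cycles, max_energy);
        if (cost < best_cost) {
            best = i;
            best_cost = cost;
        }
    }
    return best;
}
```

Changing the weights moves the choice between the fastest candidate, the most frugal one, and a compromise, which is the kind of differentiation between good and bad candidates the slide asks for.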

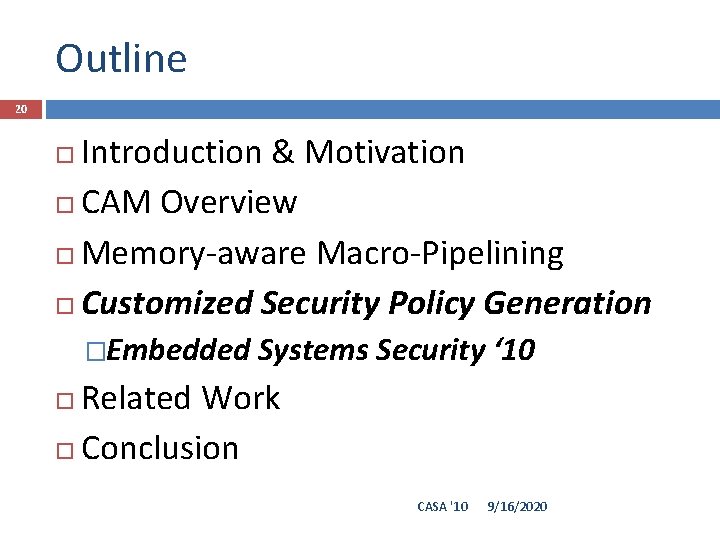

Customized Security Policy Generation (Embedded Systems Security '10)

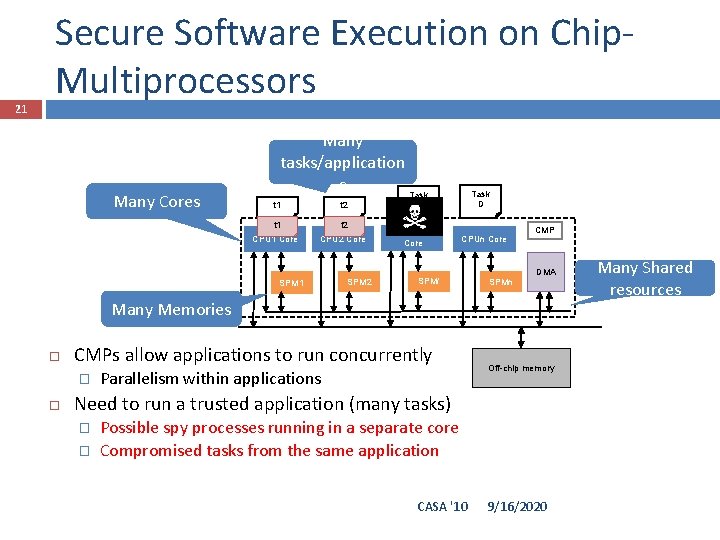

Secure Software Execution on Chip Multiprocessors

- CMPs allow applications to run concurrently, with parallelism within applications
- We need to run a trusted application (many tasks) in the presence of:
  - Possible spy processes running on a separate core
  - Compromised tasks from the same application

Figure labels: many cores, many tasks/applications, many memories, many shared resources.

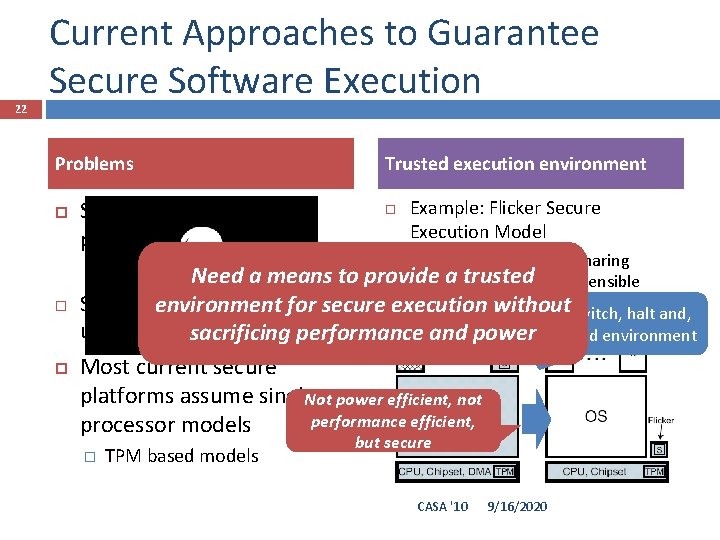

Current Approaches to Guarantee Secure Software Execution

Problems:

- Side-channel attacks are possible in CMP systems through resource sharing
- Software exploits leverage the use of C legacy code
- Most current secure platforms assume single-processor models (TPM-based models)

Example: the Flicker secure execution model

- Eliminates resource sharing during the execution of sensitive code
- Context switch, halt, and build a trusted execution environment
- Not power efficient, not performance efficient, but secure

We need a means to provide a trusted environment for secure execution without sacrificing performance and power.

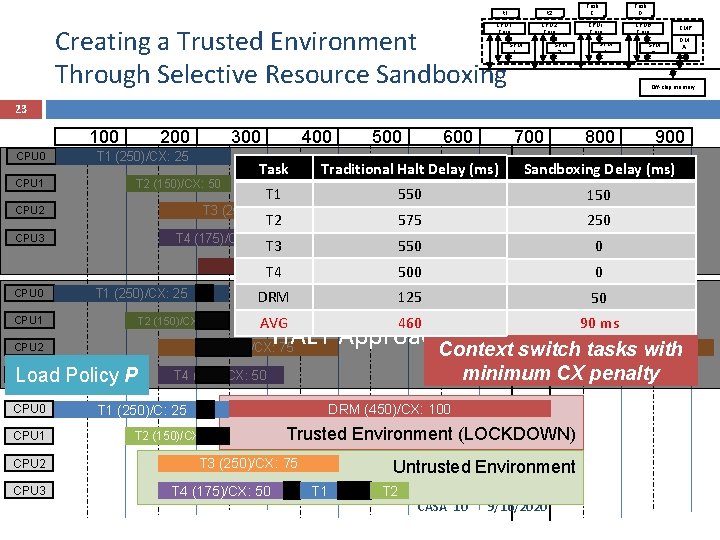

Creating a Trusted Environment Through Selective Resource Sandboxing

Tasks as labeled in the figure: T1 (250)/CX: 25, T2 (150)/CX: 50, T3 (250)/CX: 75, T4 (175)/CX: 50, DRM (450)/CX: 100.

- HALT approach: context switch tasks with minimum CX penalty
- Figure labels for the sandboxing schedule: Load Policy P, Trusted Environment (LOCKDOWN), Untrusted Environment

| Task | Traditional Halt Delay (ms) | Sandboxing Delay (ms) |
|------|-----------------------------|-----------------------|
| T1   | 550                         | 150                   |
| T2   | 575                         | 250                   |
| T3   | 550                         | 0                     |
| T4   | 500                         | 0                     |
| DRM  | 125                         | 50                    |
| AVG  | 460                         | 90                    |
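The two averages quoted on this slide (460 ms for the halt approach, 90 ms for sandboxing) follow directly from the per-task delays. A minimal sketch of that arithmetic, with a hypothetical helper name:

```c
#include <assert.h>
#include <stddef.h>

/* Arithmetic mean of a list of per-task delays, in milliseconds. */
double average_delay_ms(const int *delays_ms, size_t n)
{
    assert(n > 0);
    long sum = 0;
    for (size_t i = 0; i < n; i++)
        sum += delays_ms[i];
    return (double)sum / (double)n;
}
```

Feeding it the halt delays {550, 575, 550, 500, 125} and the sandboxing delays {150, 250, 0, 0, 50} reproduces the 460 ms and 90 ms averages.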

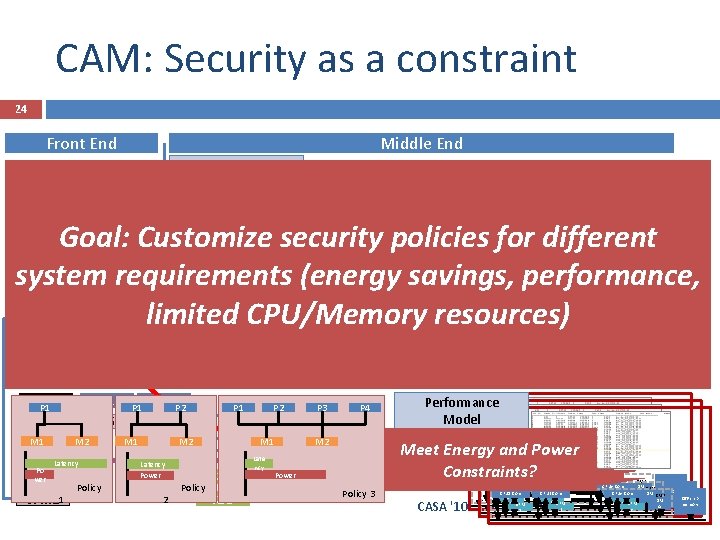

CAM: Security as a constraint

Goal: customize security policies for different system requirements (energy savings, performance, limited CPU/memory resources).

- Front end: define CMP template; application preprocessing (CFG extraction, task graph generation, input model generation); security requirements
- Middle end: task graph augmentation (task decomposition, data reuse analysis, early execution edge generation); secure policy generation (schedule + mapping)
- Back end: performance model generation; check whether energy and power constraints are met

Feedback loops on the slide:

- "Done creating policies given power/performance requirements?"
- "Nope, let us generate a policy using more/less resources: re-define the CMP"
- "Nope, let's see if increasing the degree of unrolling (in loops) helps, tile size?"

Security requirements example in the figure: Task 1 and Task 2 with buffers marked unsec (buf 1), sec local (buf 2.1), sec shared (buf 2.2), and sec local (var). Generated policies (Policy 1 to Policy 3) assign processors (P1 to P4) and memories (M1, M2), each with its own latency and power.

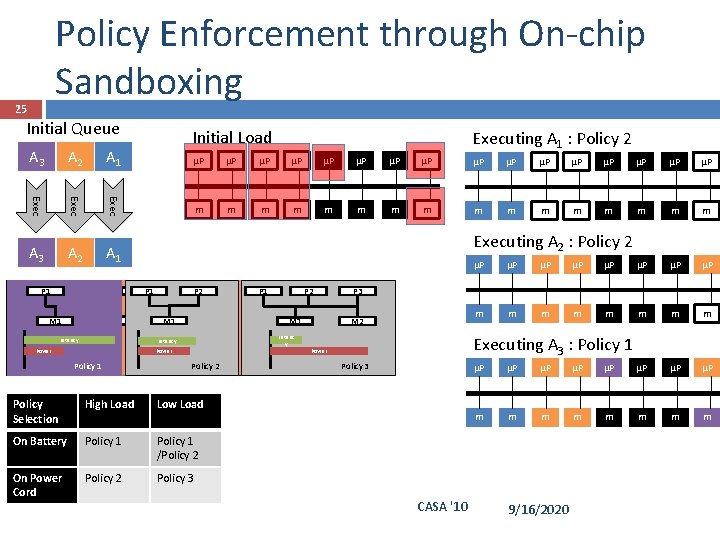

Policy Enforcement through On-chip Sandboxing

- Initial queue: A1, A2, A3
- Initial load, then: executing A1 under Policy 2, executing A2 under Policy 2, executing A3 under Policy 1
- Each policy (Policy 1 to Policy 3) partitions processors (P1 to P4) and memories (M1 to M3) differently, with its own latency and power
- Policy selection (high load / low load): on battery, Policy 1 / Policy 2; on power cord, Policy 2 / Policy 3

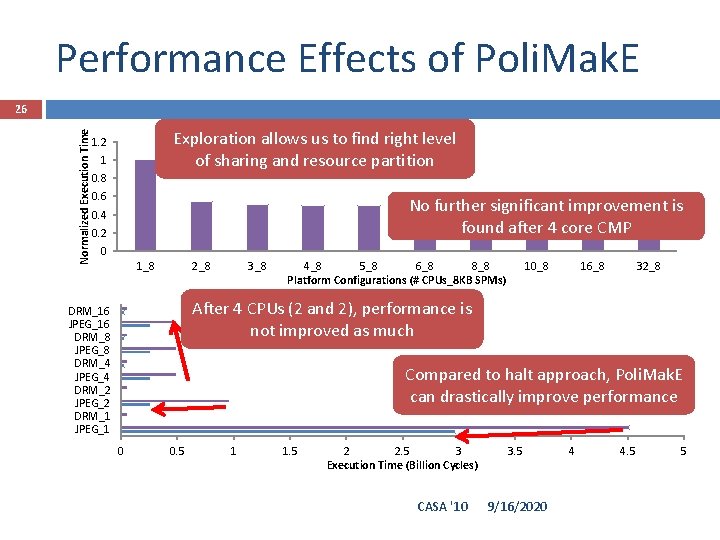

Performance Effects of PoliMakE

- Exploration allows us to find the right level of sharing and resource partitioning
- No further significant improvement is found after a 4-core CMP: after 4 CPUs (2 and 2), performance does not improve as much
- Compared to the halt approach, PoliMakE can drastically improve performance

Figures: normalized execution time vs. platform configuration (# CPUs_8 KB SPMs: 1_8, 2_8, 3_8, 4_8, 5_8, 6_8, 8_8, 10_8, 16_8, 32_8); and "Halt Approach vs. PoliMakE", execution time in billion cycles for DRM and JPEG at 1, 2, 4, 8, and 16.

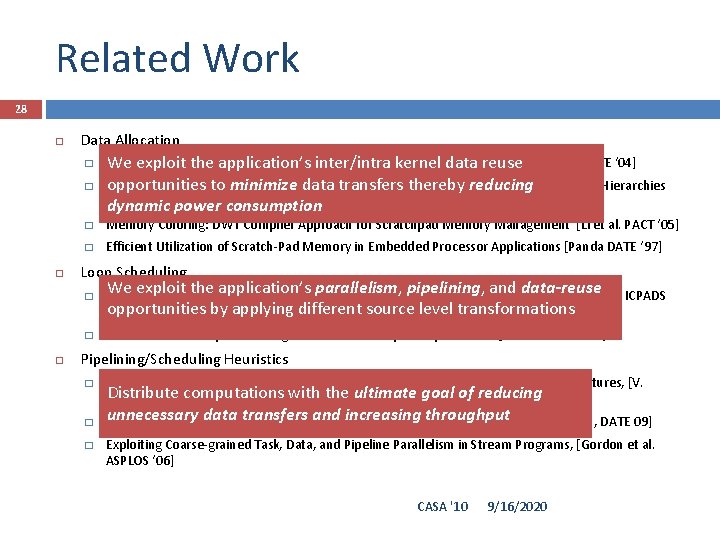

Related Work

Data Allocation

- Data Reuse Analysis Technique for Software-Controlled Memory Hierarchies [Issenin DATE '04]
- Multiprocessor System-on-Chip Data Reuse Analysis for Exploring Customized Memory Hierarchies [Issenin DAC '06]
- Memory Coloring: A Compiler Approach for Scratchpad Memory Management [Li et al. PACT '05]
- Efficient Utilization of Scratch-Pad Memory in Embedded Processor Applications [Panda DATE '97]

We exploit the application's inter/intra kernel data reuse opportunities to minimize data transfers, thereby reducing dynamic power consumption.

Loop Scheduling

- Loop Scheduling with Complete Memory Latency Hiding on Multi-core Architecture [C. Xue ICPADS '04]
- SPM Conscious Loop Scheduling for Embedded Chip Multiprocessors [L. Xue ICPADS '06]

We exploit the application's parallelism, pipelining, and data-reuse opportunities by applying different source-level transformations.

Pipelining/Scheduling Heuristics

- Integrated Scratchpad Memory Optimization and Task Scheduling for MPSoC Architectures [V. Suhendra et al. CASES '06]
- Pipelined Data Parallel Task Mapping/Scheduling Technique for MPSoC [Yang, H. et al., DATE '09]
- Exploiting Coarse-grained Task, Data, and Pipeline Parallelism in Stream Programs [Gordon et al. ASPLOS '06]

We distribute computations with the ultimate goal of reducing unnecessary data transfers and increasing throughput.

![Related Work (Cont.)](http://slidetodoc.com/presentation_image/310d7be3445577550a07607d5d5de3bb/image-29.jpg)

Related Work (Cont.)

- Pure software solutions (complementary): CCured [24], StackGuard [10], Smashguard [25], Pointguard [26]. These can be complementary but offer no side-channel protection.
- Hardware assisted: Patel et al. [27], Zambreno et al. [28], Arora et al. [30]. Full platform support for secure software execution might be overkill, and in the best of cases is limited to only a few applications.
- Platforms (complementary): ARM TrustZone [33], SECA [8], AEGIS [31]. No energy/performance awareness, nor a means to map an application to the platform (left to the programmer).
- Halt/Execute: Flicker.
- Isolation: IBM CELL Vault, Agarwal et al. [12]. Current isolation approaches do not offer efficient (power/performance) means to run applications on multiprocessors.

To the best of our knowledge, we are the first to propose the idea of customized policy making to guarantee secure software execution for CMPs.

Conclusion

- Discussed CAM, a software mapping and scheduling methodology for multimedia and data-intensive applications
- Progressively transforms the application's code to discover and exploit:
  - Inter-kernel data reuse
  - Parallelism opportunities
- Tightly couples transformations with data reuse analysis, scheduling, and mapping
  - Tightly couples computation with its data
- Explores, generates, and exploits customized policy making to guarantee secure software execution
- Current enhancements include:
  - Reliability awareness
  - A move towards heterogeneous MPSoCs and CGRAs

Thank you!

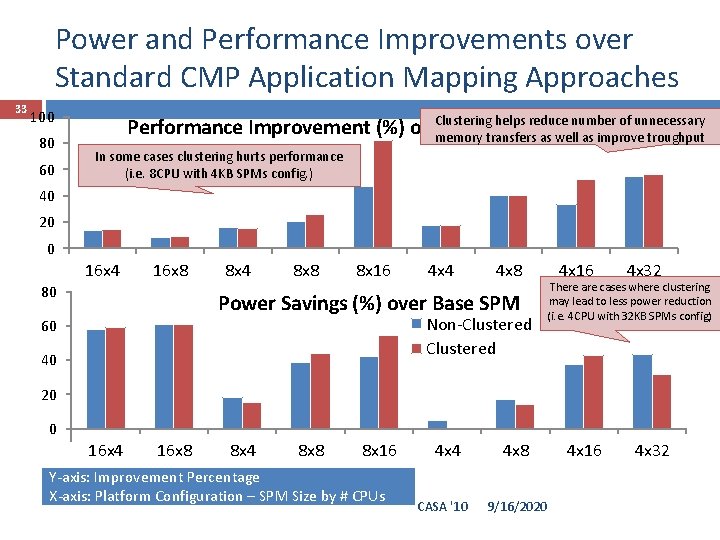

Power and Performance Improvements over Standard CMP Application Mapping Approaches

- Clustering helps reduce the number of unnecessary memory transfers as well as improve throughput
- In some cases clustering hurts performance (e.g. the 8 CPU with 4 KB SPMs configuration)
- There are cases where clustering may lead to less power reduction (e.g. the 4 CPU with 32 KB SPMs configuration)

Figures: "Performance Improvement (%) over Base SPM" and "Power Savings (%) over Base SPM", each including a Non-Clustered series. Y-axis: improvement percentage. X-axis: platform configuration (SPM size by # CPUs): 16x4, 16x8, 8x4, 8x8, 8x16, 4x4, 4x8, 4x16, 4x32.

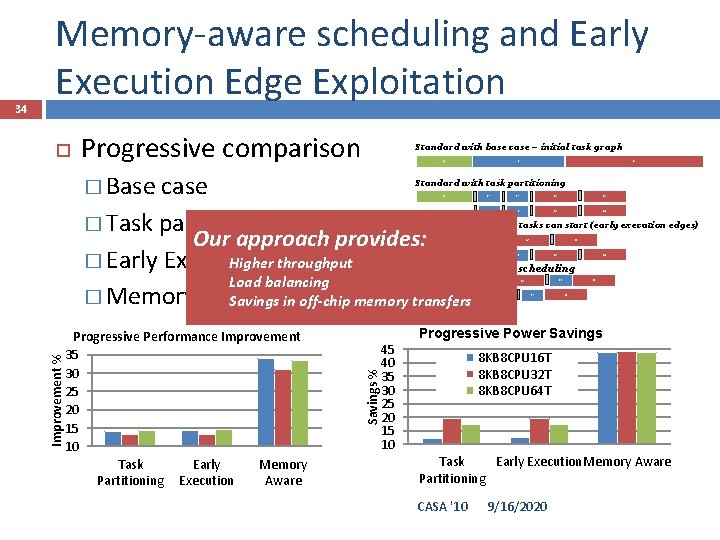

Memory-aware Scheduling and Early Execution Edge Exploitation

Progressive comparison:

- Base case
- + Task partitioning
- + Early execution
- + Memory-aware scheduling

Our approach provides:

- Higher throughput
- Load balancing
- Savings in off-chip memory transfers

Schedules in the figure:

- Standard with base case: initial task graph (A, B, C)
- Standard with task partitioning (A; B1 to B4; C1 to C4)
- After analyzing when tasks can start (early execution edges)
- Memory-aware task scheduling

Charts: "Progressive Performance Improvement" (% improvement) and "Progressive Power Savings" (%), for task partitioning, early execution, and memory-aware scheduling, on the configurations 8 KB 8 CPU 16T, 8 KB 8 CPU 32T, and 8 KB 8 CPU 64T.

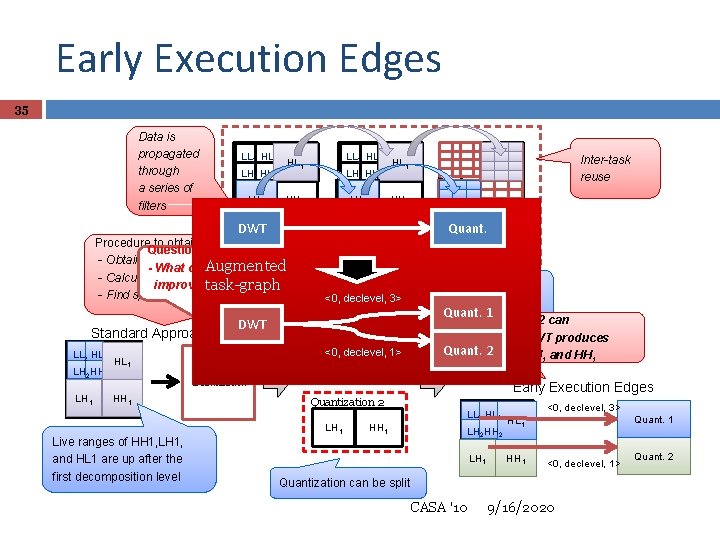

Early Execution Edges

- Data is propagated through a series of filters: DWT, Quant., EBCOT
- Quantization operates over individual subbands (HH1, HH2, etc.)
- EBCOT operates over codeblocks from the same subband
- Question: what can we do to improve throughput?

Standard approach: Quantization waits for DWT to finish.

Early execution edges: the live ranges of HH1, LH1, and HL1 are up after the first decomposition level, so Quantization can be split into Quant. 1 and Quant. 2, and part of it can start as soon as DWT produces subbands LH1 and HH1.

Procedure to obtain early execution edges:

- Obtain the list of independent data sets (HH1, etc.)
- Calculate the live range for each data set
- Find split points for tasks and split them

Figure: subband layout (LL2, HL2, LH2, HH2, HL1, LH1, HH1), inter-task reuse, and the augmented task graph with edges labeled <0, declevel, 3> and <0, declevel, 1>.

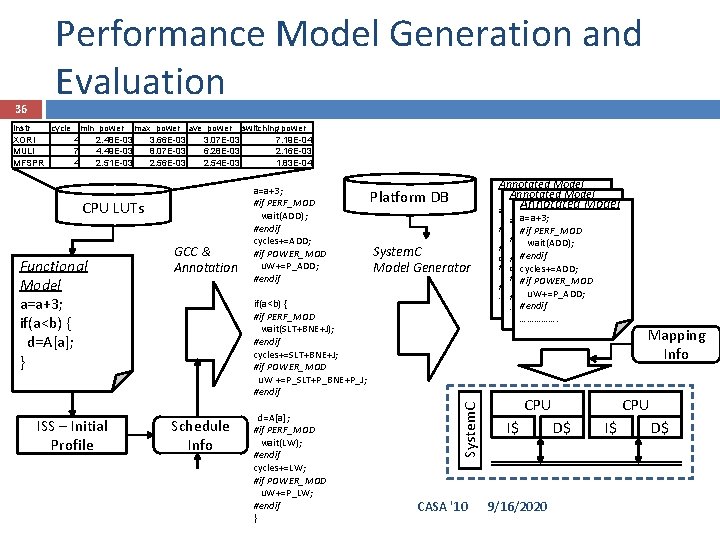

Performance Model Generation and Evaluation

Flow: the ISS provides an initial profile and the CPU LUTs (platform DB); the SystemC functional model goes through GCC and annotation to produce an annotated model; the SystemC model generator combines the annotated model with schedule info and mapping info.

CPU LUT excerpt:

| instr | cycle | min_power | max_power | ave_power | switching power |
|-------|-------|-----------|-----------|-----------|-----------------|
| XORI  | 4     | 2.48E-03  | 3.66E-03  | 3.07E-03  | 7.19E-04        |
| MULI  | 7     | 4.49E-03  | 8.07E-03  | 6.28E-03  | 2.16E-03        |
| MFSPR | 4     | 2.51E-03  | 2.56E-03  | 2.54E-03  | 1.83E-04        |

SystemC functional model:

    a = a + 3;
    if (a < b) {
        d = A[a];
    }

Annotated model:

    a = a + 3;
    #if PERF_MOD
    wait(ADD);
    #endif
    cycles += ADD;
    #if POWER_MOD
    uW += P_ADD;
    #endif
    if (a < b) {
    #if PERF_MOD
        wait(SLT + BNE + J);
    #endif
        cycles += SLT + BNE + J;
    #if POWER_MOD
        uW += P_SLT + P_BNE + P_J;
    #endif
        d = A[a];
    #if PERF_MOD
        wait(LW);
    #endif
        cycles += LW;
    #if POWER_MOD
        uW += P_LW;
    #endif
    }
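The annotation idea can be tried out in plain C without SystemC. The sketch below is a stand-in, not the generated model: the `wait()` calls are dropped, the `ANNOTATE` macro and the counter struct are hypothetical, and the per-instruction costs for ADD, SLT, BNE, J, and LW are made-up values, since the LUT excerpt on the slide lists only XORI, MULI, and MFSPR.

```c
#include <assert.h>

/* Per-instruction cost entry, mirroring two columns of the CPU LUT. */
struct instr_cost {
    unsigned cycles;
    double ave_power;
};

/* Hypothetical costs for illustration only; the slide does not give
   values for these instructions. */
static const struct instr_cost ADD = {1, 1.0e-3};
static const struct instr_cost SLT = {1, 1.0e-3};
static const struct instr_cost BNE = {2, 1.5e-3};
static const struct instr_cost J   = {2, 1.5e-3};
static const struct instr_cost LW  = {3, 2.0e-3};

/* Running totals accumulated while the functional code executes. */
struct perf_counters {
    unsigned long cycles;
    double power_sum;
};

/* Charge one instruction's cost to the counters. */
#define ANNOTATE(ctr, ins)                      \
    do {                                        \
        (ctr)->cycles += (ins).cycles;          \
        (ctr)->power_sum += (ins).ave_power;    \
    } while (0)

/* Functional code from the slide with cost annotations interleaved.
   The caller must ensure a + 3 is a valid index into A when a + 3 < b. */
int annotated_kernel(int a, int b, const int *A, struct perf_counters *ctr)
{
    int d = 0;

    a = a + 3;
    ANNOTATE(ctr, ADD);

    if (a < b) {
        ANNOTATE(ctr, SLT);
        ANNOTATE(ctr, BNE);
        ANNOTATE(ctr, J);

        d = A[a];
        ANNOTATE(ctr, LW);
    }
    return d;
}
```

Running the kernel once with the branch taken and once without shows how the estimate tracks the executed path: with these made-up costs, the taken path charges 9 cycles and the untaken path only 1.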

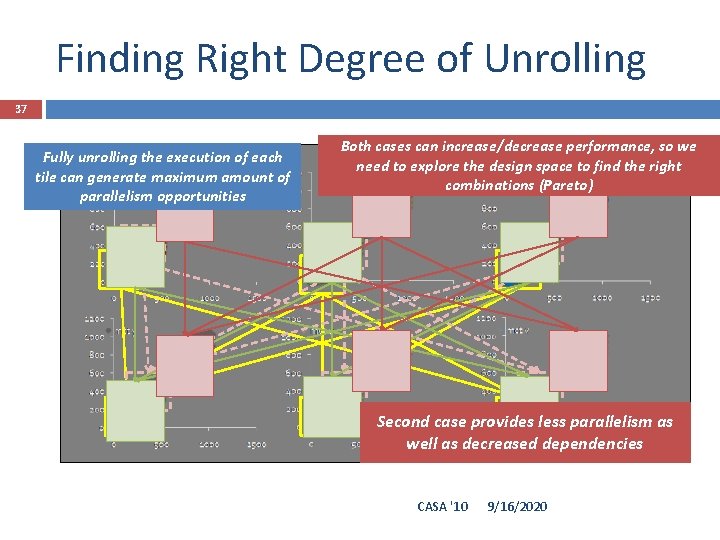

Finding Right Degree of Unrolling

- Fully unrolling the execution of each tile can generate the maximum amount of parallelism opportunities
- The second case provides less parallelism as well as decreased dependencies
- Both cases can increase or decrease performance, so we need to explore the design space to find the right combinations (Pareto)

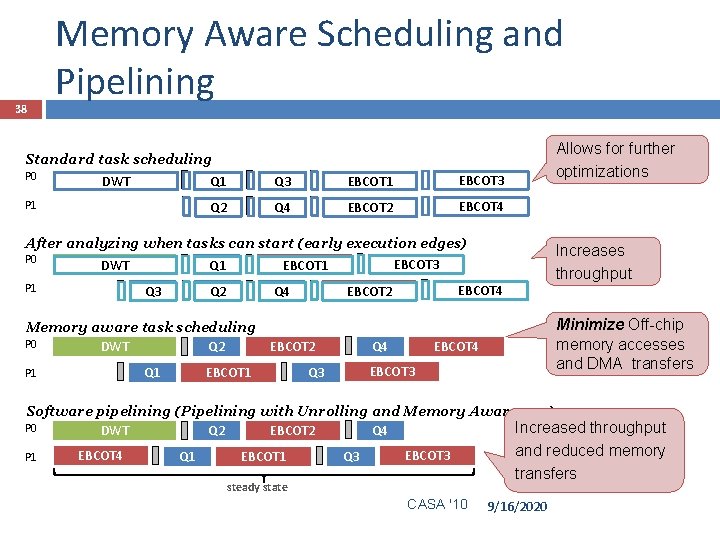

Memory Aware Scheduling and Pipelining

Schedules in the figure, each mapping DWT, Q1 to Q4, and EBCOT1 to EBCOT4 onto processors P0 and P1:

- Standard task scheduling
- After analyzing when tasks can start (early execution edges): allows for further optimizations
- Memory-aware task scheduling: increases throughput and minimizes off-chip memory accesses and DMA transfers
- Software pipelining (pipelining with unrolling and memory awareness): increased throughput and reduced memory transfers in steady state

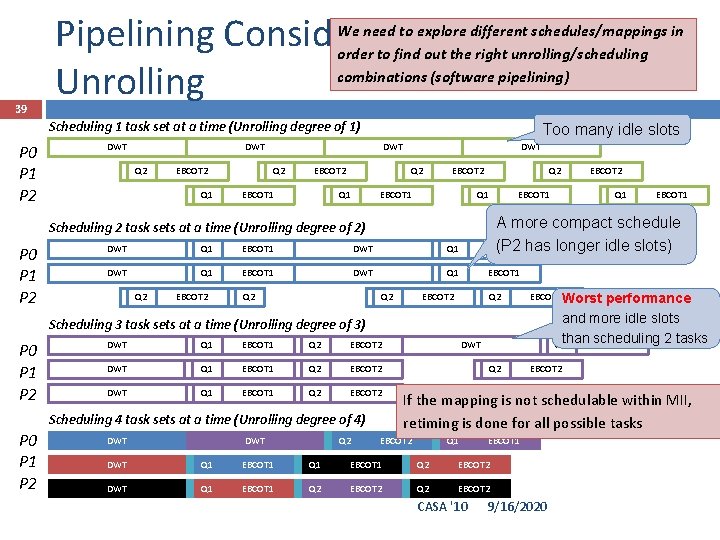

Pipelining Considering Unrolling

We need to explore different schedules/mappings in order to find the right unrolling/scheduling combinations (software pipelining).

Schedules in the figure, each mapping DWT, Q1, Q2, EBCOT1, and EBCOT2 onto processors P0, P1, and P2:

- Scheduling 1 task set at a time (unrolling degree of 1)
- Scheduling 2 task sets at a time (unrolling degree of 2)
- Scheduling 3 task sets at a time (unrolling degree of 3)
- Scheduling 4 task sets at a time (unrolling degree of 4)

Annotations on the figure:

- Too many idle slots
- A more compact schedule (P2 has longer idle slots)
- Worse performance and more idle slots than scheduling 2 tasks
- If the mapping is not schedulable within the MII, retiming is done for all possible tasks
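The slide mentions the MII (minimum initiation interval) without defining it. One standard ingredient from modulo scheduling is the resource-constrained lower bound: the total work in one unrolled iteration divided by the number of processors, rounded up. The sketch below computes only that bound, assuming identical processors and ignoring dependence (recurrence) constraints; the function name and the task times are hypothetical, and CAM may well compute its MII differently.

```c
#include <assert.h>
#include <stddef.h>

/* Resource-constrained lower bound on the initiation interval:
   ceil(unroll * sum(task_time) / n_procs), assuming identical
   processors and ignoring dependence constraints. */
unsigned long res_mii(const unsigned long *task_time, size_t n_tasks,
                      unsigned unroll, unsigned n_procs)
{
    assert(n_procs > 0);
    unsigned long work = 0;
    for (size_t i = 0; i < n_tasks; i++)
        work += task_time[i];
    work *= unroll;
    return (work + n_procs - 1) / n_procs;   /* integer ceiling */
}
```

With made-up times of 40, 10, 10, 30, and 30 for DWT, Q1, Q2, EBCOT1, and EBCOT2 on three processors, the bound is 40 for an unrolling degree of 1 and 80 for a degree of 2. A schedule whose steady-state interval is far above this bound has many idle slots, which is what the exploration tries to avoid.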

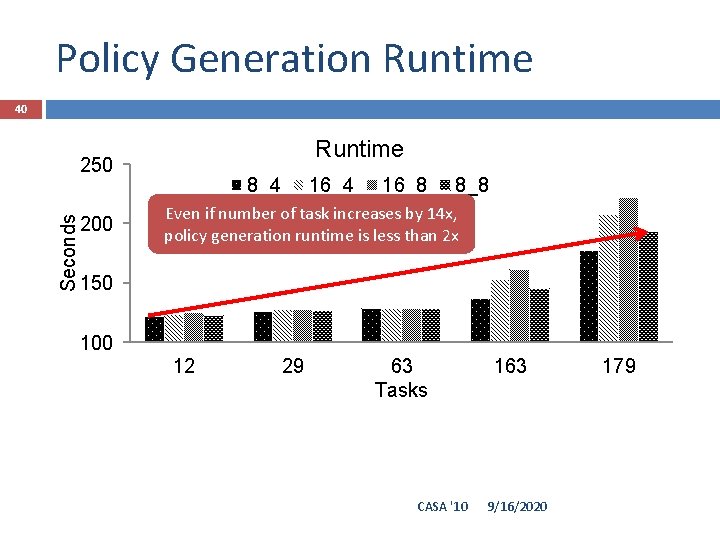

Policy Generation Runtime

Even if the number of tasks increases by 14x, policy generation runtime grows by less than 2x.

Figure: runtime in seconds (axis from 100 to 250) vs. number of tasks (12, 29, 63, 163, 179) for the configurations 8_4, 8_8, and 16_8.