
Benchmarking Working Group: A Status Report

Manfred Alef, Domenico Giordano, Michele Michelotto (presenter)

Working group activities
• 11 phone conferences since last October
• Planning a presentation at the next pre-GDB in June
• Fast benchmark
• HS06
• Meltdown and Spectre
• Reference workload
• SPEC CPU 2017
• Move to the new portal

Benchmarking session at the next pre-GDB
• Originally scheduled for 10 April
• Moved to 12 June
• Agenda to follow in the coming weeks

Fast Benchmark
• Alice and LHCb are using DB12
• They can run DB12 (one benchmark copy) inside their jobs
• Useful to assess the performance of a queue or virtual machine at a given moment, since it depends on the current load
• There is no demand from Atlas and CMS for a separate fast benchmark
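A fast benchmark of this kind essentially times a short, fixed CPU-bound workload inside the job and turns the measured CPU time into a score. The Python sketch below only illustrates that idea; it is not the DB12 code, and the workload, the sample count and the calibration constants `REFERENCE_CPU_SECONDS` and `REFERENCE_SCORE` are hypothetical.

```python
import random
import time

# Hypothetical calibration: an imaginary reference node needs this many CPU
# seconds for the default workload and is defined to score REFERENCE_SCORE.
REFERENCE_CPU_SECONDS = 2.5
REFERENCE_SCORE = 10.0


def fixed_workload(n_samples: int, seed: int = 12345) -> float:
    """CPU-bound loop: draw Gaussian random numbers and sum them."""
    rng = random.Random(seed)  # fixed seed, so every run does identical work
    total = 0.0
    for _ in range(n_samples):
        total += rng.normalvariate(10.0, 1.0)
    return total


def fast_benchmark(n_samples: int = 500_000) -> float:
    """Score inversely proportional to the CPU time spent on the workload."""
    start = time.process_time()  # CPU time of this process, not wall-clock time
    fixed_workload(n_samples)
    cpu_seconds = time.process_time() - start
    return REFERENCE_SCORE * REFERENCE_CPU_SECONDS / max(cpu_seconds, 1e-9)


if __name__ == "__main__":
    # One benchmark copy, as when the benchmark is run inside a job
    print(f"score: {fast_benchmark():.2f}")
```

Because the score is inversely proportional to CPU time, a node slowed down by competing load (shared caches, busy hyper-threads) reports a lower value; the constants only set the scale.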

HS06
• Still very useful to understand the impact of security patches or new processors
• Every claimed divergence from application code turned out, when carefully analyzed, to have an explanation, so HS06 is not broken
• It is 10 years old, so we need a replacement
• Application code is compiled 64-bit, while standard HS06 is 32-bit
• Application code footprint is increasing with technological evolution
• SPEC is retiring CPU 2006
• See, for example, the use of HS06 to understand the new Skylake processors

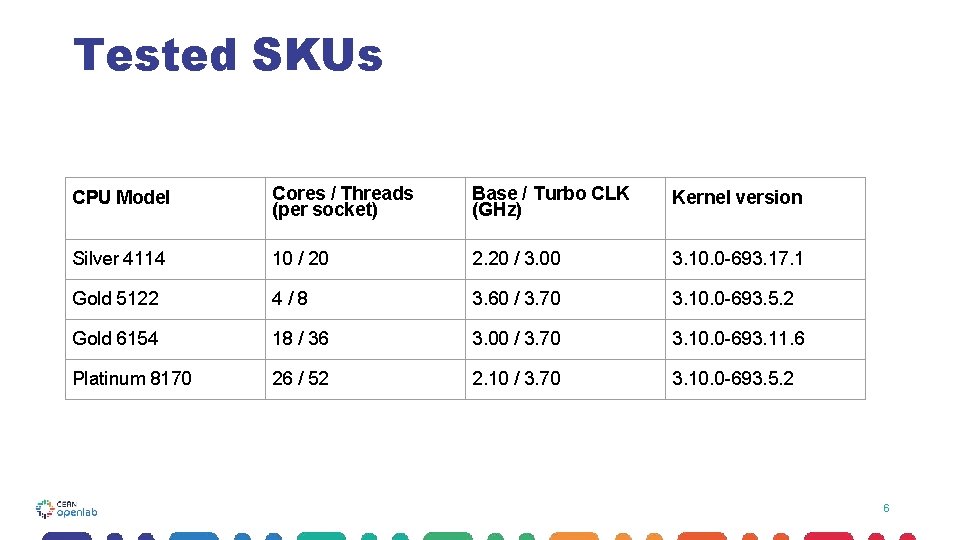

Tested SKUs

| CPU model | Cores / threads (per socket) | Base / Turbo clock (GHz) | Kernel version |
|---|---|---|---|
| Silver 4114 | 10 / 20 | 2.20 / 3.00 | 3.10.0-693.17.1 |
| Gold 5122 | 4 / 8 | 3.60 / 3.70 | 3.10.0-693.5.2 |
| Gold 6154 | 18 / 36 | 3.00 / 3.70 | 3.10.0-693.11.6 |
| Platinum 8170 | 26 / 52 | 2.10 / 3.70 | 3.10.0-693.5.2 |

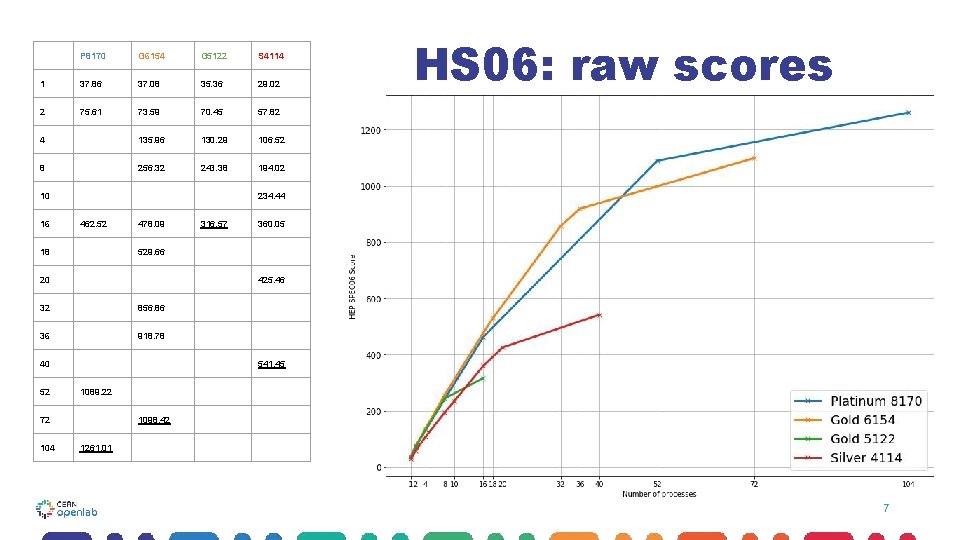

HS06: raw scores (chart: score vs. number of parallel copies, from 1 up to 104 copies, for Platinum 8170, Gold 6154, Gold 5122 and Silver 4114)

| Copies | Platinum 8170 | Gold 6154 | Gold 5122 | Silver 4114 |
|---|---|---|---|---|
| 1 | 37.86 | 37.08 | 35.36 | 29.02 |
| 2 | 75.61 | 73.59 | 70.45 | 57.82 |

Scores for 4 to 104 copies are shown in the chart.

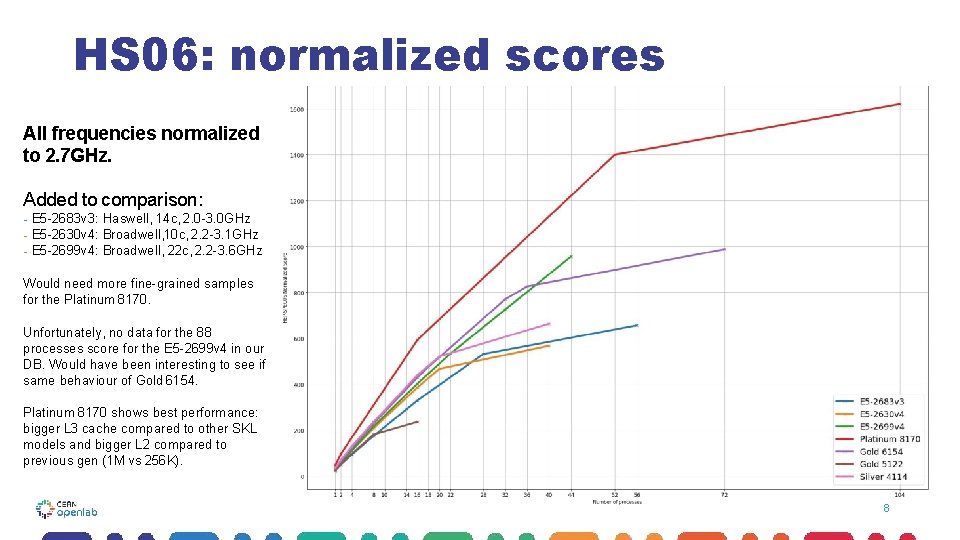

HS06: normalized scores
All frequencies normalized to 2.7 GHz. Added to the comparison:
- E5-2683 v3: Haswell, 14 cores, 2.0-3.0 GHz
- E5-2630 v4: Broadwell, 10 cores, 2.2-3.1 GHz
- E5-2699 v4: Broadwell, 22 cores, 2.2-3.6 GHz
More fine-grained samples would be needed for the Platinum 8170. Unfortunately, our database has no 88-process score for the E5-2699 v4; it would have been interesting to see whether it behaves like the Gold 6154. The Platinum 8170 shows the best performance: a bigger L3 cache than the other Skylake models, and a bigger L2 than the previous generation (1 MB vs. 256 KB per core).
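The slide does not say which clock the normalization uses. The sketch below assumes the simplest version: the score is scaled linearly by the ratio of 2.7 GHz to the nominal base clock. This is a simplification, since real scores do not scale exactly with frequency and Turbo Boost changes the effective clock (a single copy most likely runs at turbo speed).

```python
REFERENCE_CLOCK_GHZ = 2.7  # all frequencies normalized to 2.7 GHz (from the slide)


def normalize_to_reference(score: float, clock_ghz: float,
                           reference_ghz: float = REFERENCE_CLOCK_GHZ) -> float:
    """Scale an HS06 score as if the CPU ran at the reference clock.

    Assumption: performance is proportional to the clock frequency.
    """
    if clock_ghz <= 0:
        raise ValueError("clock must be positive")
    return score * reference_ghz / clock_ghz


if __name__ == "__main__":
    # Single-copy raw scores and base clocks (GHz) of the tested SKUs
    single_copy = {
        "Platinum 8170": (37.86, 2.10),
        "Gold 6154": (37.08, 3.00),
        "Gold 5122": (35.36, 3.60),
        "Silver 4114": (29.02, 2.20),
    }
    for sku, (score, base_ghz) in single_copy.items():
        print(f"{sku}: {normalize_to_reference(score, base_ghz):.2f}")
```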

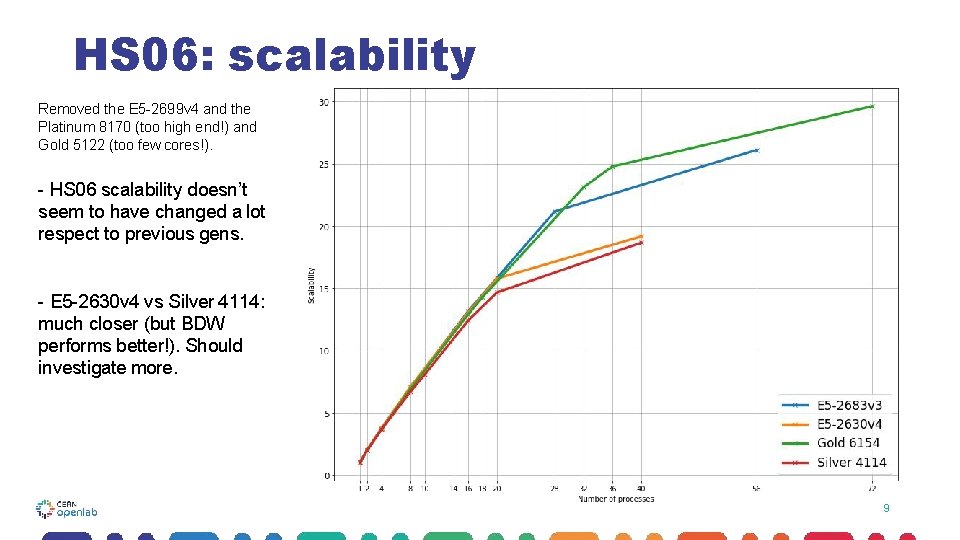

HS06: scalability
Removed the E5-2699 v4 and the Platinum 8170 (too high-end!) and the Gold 5122 (too few cores!).
- HS06 scalability does not seem to have changed much with respect to previous generations.
- E5-2630 v4 vs. Silver 4114: much closer (but Broadwell performs better!). Should be investigated further.
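One common way to put a number on scalability is the efficiency of N parallel copies relative to N times the single-copy score. A short sketch of that metric, fed with the 1- and 2-copy HS06 raw scores from the slides; the metric is our illustration and not necessarily the one used in the full report.

```python
def scaling_efficiency(score_n: float, n_copies: int, score_1: float) -> float:
    """Fraction of ideal linear scaling reached with n_copies parallel copies."""
    return score_n / (n_copies * score_1)


if __name__ == "__main__":
    # (SKU, 1-copy score, 2-copy score) from the HS06 raw scores
    for sku, s1, s2 in [("Platinum 8170", 37.86, 75.61), ("Silver 4114", 29.02, 57.82)]:
        print(f"{sku}: {scaling_efficiency(s2, 2, s1):.3f}")
```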

Conclusion
General considerations
● Use of Hyper-Threading never leads to worse scalability (is HT=OFF not convenient?);
● No use of AVX-512, which is a key component of Skylake;
● Turbo Boost does not look like a big issue for this kind of comparison.
● Different kernel versions!
  ○ No security fixes for Gold 5122 and Platinum 8170 (3.10.0-693.5.2)
  ○ Available for Gold 6154 (3.10.0-693.11.6)
  ○ Spectre v2 patch removed on Silver 4114 (3.10.0-693.17.1)
Future work
● Power consumption benchmarks
● Fast benchmarks, SPEC 2017, …
● Accurate HS06 analysis (maybe focusing on fewer SKUs)
FULL REPORT: https://luatzori.web.cern.ch/docs/Skylake_HS06_Full_Report.html

HS06 replacement
• Two alternatives
• Use of frozen application code
  – Pro: no licensing; it would use code written by the same people who write the core application software
  – Cons: no guarantee that it will run on other architectures or stay stable for many years; future support (e.g. Docker container format) not guaranteed
• A subset of CPU 2017
  – Pro: industry standard; runs on almost all types of processors and instruction sets; supported by SPEC
  – Cons: needs a license

Wiki for SPEC CPU 2017
• https://twiki.cern.ch/twiki/bin/view/HEPIXBMKWG-SPEC2017
• Documentation on how to install and run
• Config files
• All preparatory work done by Domenico; then we almost stopped because of Meltdown/Spectre
• Planning a "hackathon" on CPU 2017 at the end of May to give new momentum to CPU 2017

Running SPEC CPU 2017
Manfred Alef: Towards HS17 (Iteration #0), Steinbuch Centre of Computing, 2018-02-09
Running the benchmark:
➔ Only RATE metrics, no SPEED benchmarks
  ● Memory restrictions at WLCG sites: 2 GB per job slot
➔ Mimicking the parallel run model of HS06

Running SPEC CPU 2017
Running the benchmark:
➔ All 10+13 RATE benchmarks
  ● runlist = intrate fprate
  ● The desired bset scores can be calculated from the included individual benchmark results (geometric mean)
➔ Benchmark sets used in this first assessment:
  ● cpp = fprate_mixed_cpp.bset + intrate_any_cpp.bset (7 benchmarks: 507, 511, 520, 523, 526, 531, and 541)
  ● int = intrate.bset (10 benchmarks)
  ● fp = fprate.bset (13 benchmarks)
  ● 520 = 520.omnetpp_r (single benchmark)
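The bset scores above are geometric means of the individual benchmark ratios. A minimal Python sketch of that calculation; the full SPEC names of the seven cpp members are filled in from the SPEC CPU 2017 benchmark naming (the slide lists only the numbers), and extracting the per-benchmark ratios from the SPEC result files is not shown.

```python
import math
from typing import Mapping, Sequence

# Members of the "cpp" set used in the first assessment
CPP_SET = ["507.cactuBSSN_r", "511.povray_r", "520.omnetpp_r", "523.xalancbmk_r",
           "526.blender_r", "531.deepsjeng_r", "541.leela_r"]


def bset_score(ratios: Mapping[str, float], members: Sequence[str]) -> float:
    """Geometric mean of the per-benchmark ratios that make up a benchmark set."""
    values = [ratios[name] for name in members]  # KeyError if a result is missing
    if not values or any(v <= 0 for v in values):
        raise ValueError("need a positive ratio for every member of the set")
    # exp(mean(log x)) equals the geometric mean and avoids overflowing a long product
    return math.exp(sum(math.log(v) for v in values) / len(values))
```

With a `ratios` dictionary parsed from a result file, `bset_score(ratios, CPP_SET)` gives the cpp score, and `bset_score(ratios, ["520.omnetpp_r"])` reduces to the 520 ratio itself.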

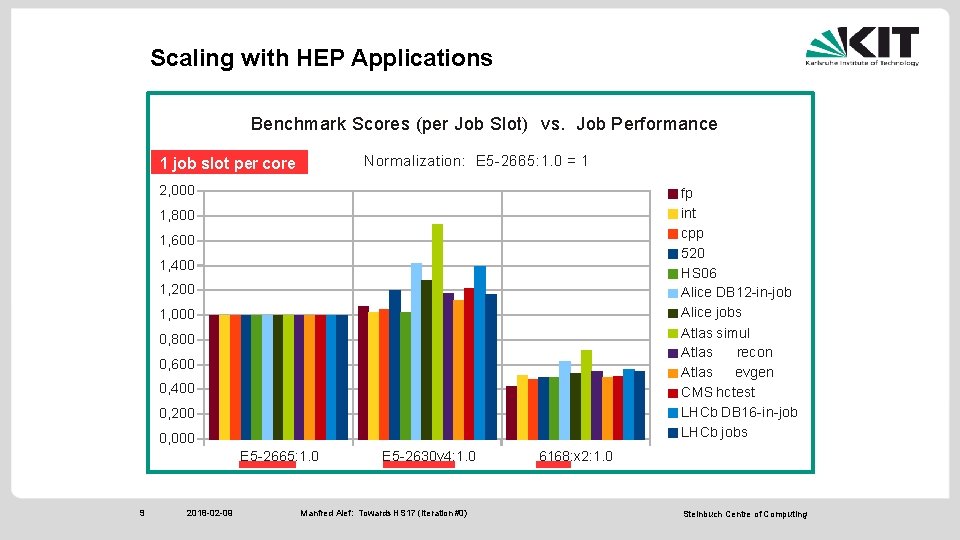

Running SPEC CPU 2017
Systems under test (dual-socket servers):
➔ Intel Xeon:
  ● E5-2665 (8-core, Sandy Bridge): 16, 24, or 32 job slots
  ● E5-2630 v4 (10-core, Broadwell): 20, 32, or 40 job slots
➔ AMD Opteron:
  ● 6168 (12-core): 24 job slots

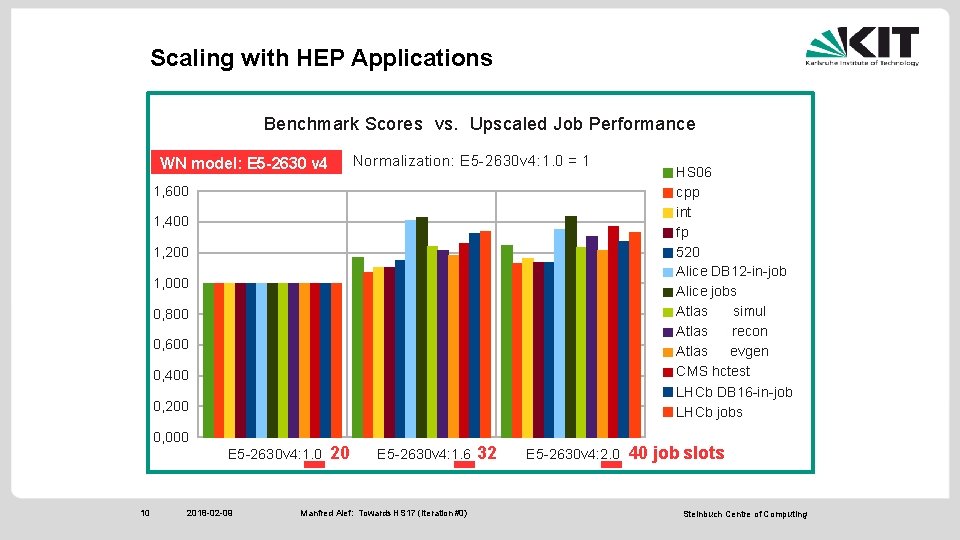

Running SPEC CPU 2017
Running the benchmark:
➔ Number of RATE benchmark copies = number of job slots
  ● On Broadwell (2 x 10-core) systems: 20, 32, or 40 job slots (1.0, 1.6, or 2.0 slots per physical core)
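The slots-per-core figures follow from dividing the number of job slots (equal to the number of RATE copies) by the number of physical cores of the dual-socket host. A tiny sketch; the helper name is ours.

```python
def slots_per_core(job_slots: int, sockets: int, cores_per_socket: int) -> float:
    """Job slots (= RATE copies) per physical core."""
    return job_slots / (sockets * cores_per_socket)


if __name__ == "__main__":
    # Dual-socket Intel systems under test
    for model, cores, slot_options in [("E5-2665", 8, (16, 24, 32)),
                                       ("E5-2630 v4", 10, (20, 32, 40))]:
        print(model, [slots_per_core(s, 2, cores) for s in slot_options])
        # E5-2665 [1.0, 1.5, 2.0]
        # E5-2630 v4 [1.0, 1.6, 2.0]
```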

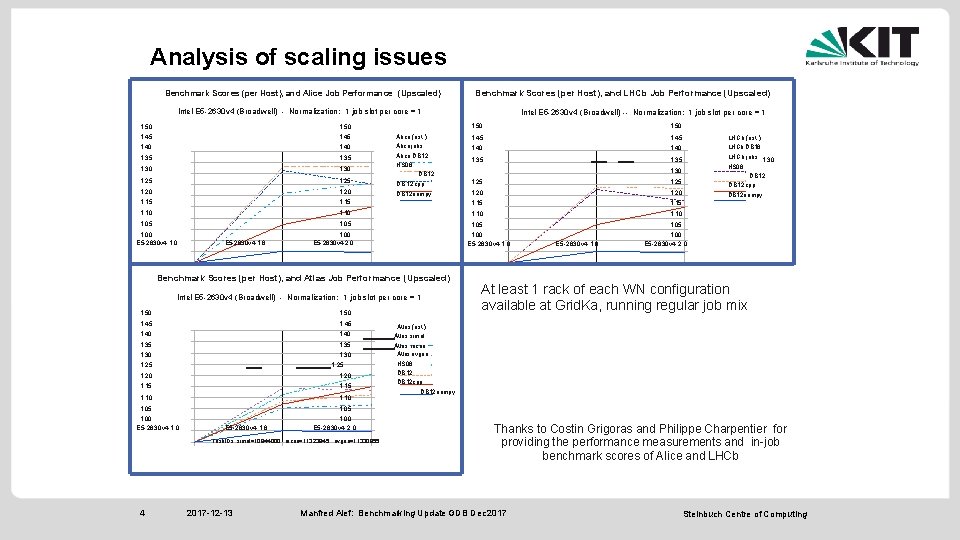

Scaling with HEP Applications
Comparing benchmark results with performance scores (in units of events/s) of HEP applications reported by users
➔ Many thanks to Philippe Charpentier (LHCb), Costin Grigoras (Alice), and Valentin Kuznetsov (CMS) for providing measured values
➔ Atlas: performance statistics from BigPanDA, TaskIDs:
  ● 10944000 (simul), 11323845 (recon), 11330855 (evgen)

Scaling with HEP Applications: benchmark scores (per job slot) vs. job performance (chart). Normalization: E5-2665 with 1 job slot per core = 1. Hosts compared at 1 job slot per core: E5-2665, E5-2630 v4 and Opteron 6168. Series: fp, int, cpp, 520, HS06, Alice DB12-in-job, Alice jobs, Atlas simul, Atlas recon, Atlas evgen, CMS hctest, LHCb DB16-in-job, LHCb jobs.

Scaling with HEP Applications: benchmark scores vs. upscaled job performance (chart). WN model: E5-2630 v4; normalization: E5-2630 v4 with 1 job slot per core = 1. Configurations: 1.0, 1.6 and 2.0 job slots per core (20, 32 and 40 job slots). Series: HS06, cpp, int, fp, 520, Alice DB12-in-job, Alice jobs, Atlas simul, Atlas recon, Atlas evgen, CMS hctest, LHCb DB16-in-job, LHCb jobs.
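The slides do not spell out how per-slot job performance is "upscaled". One plausible reading, sketched below purely as an assumption, is per-host throughput: per-slot performance (e.g. events/s) multiplied by the number of job slots, expressed relative to the 1 slot per core configuration (20 slots on a 2 x 10-core E5-2630 v4).

```python
def upscaled_host_performance(per_slot_perf: float, job_slots: int,
                              ref_per_slot_perf: float, ref_job_slots: int) -> float:
    """Per-host throughput relative to a reference configuration.

    Assumption: host throughput = per-slot performance x number of job slots.
    """
    return (per_slot_perf * job_slots) / (ref_per_slot_perf * ref_job_slots)
```

For instance, if each of 32 slots ran at 80% of the per-slot speed measured with 20 slots, `upscaled_host_performance(0.8, 32, 1.0, 20)` would give 1.28, i.e. 28% more throughput per host despite the slower individual jobs (illustrative numbers, not measurements).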

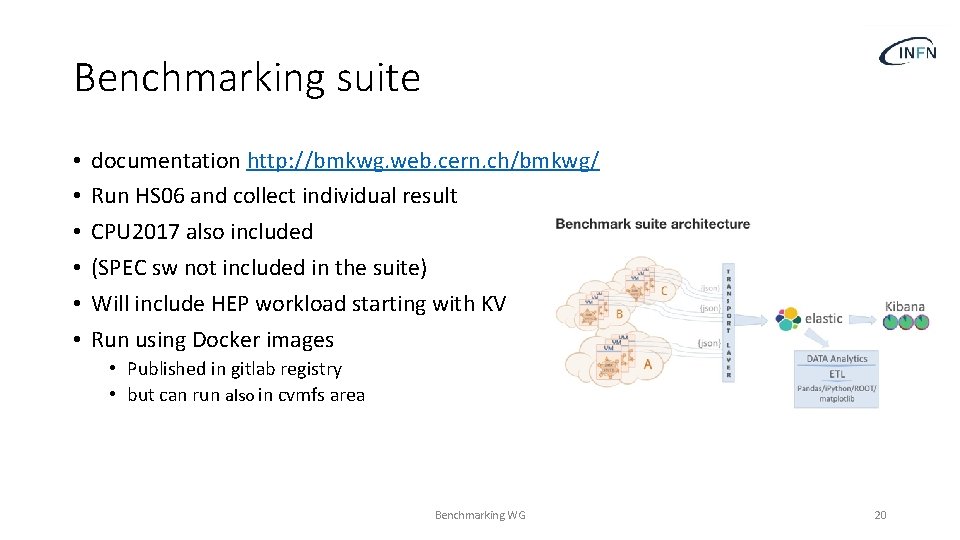

Benchmarking suite
• Documentation: http://bmkwg.web.cern.ch/bmkwg/
• Runs HS06 and collects the individual results
• CPU 2017 also included (SPEC software not included in the suite)
• Will include HEP workloads, starting with KV
• Runs using Docker images
  – Published in the GitLab registry
  – but can also run from a CVMFS area

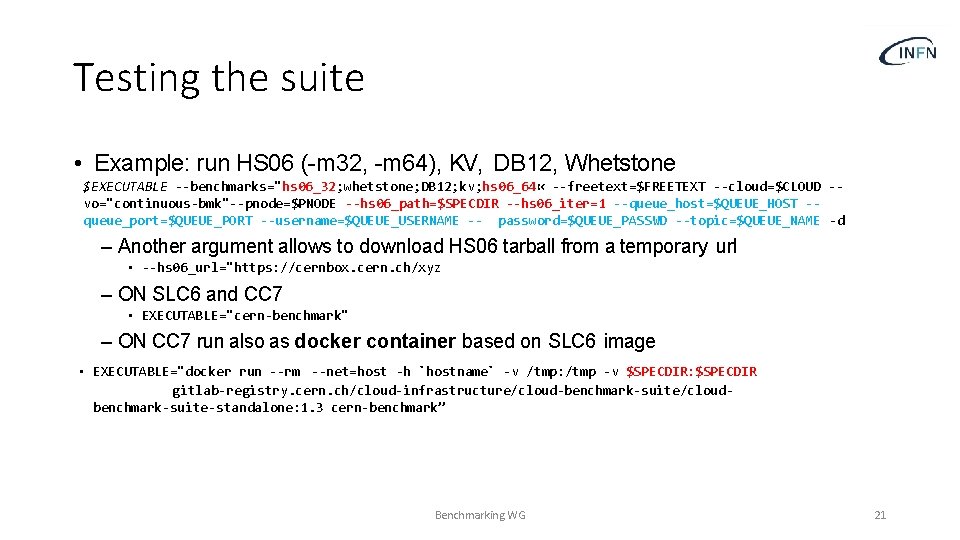

Testing the suite
• Example: run HS06 (-m32, -m64), KV, DB12, Whetstone
  `$EXECUTABLE --benchmarks="hs06_32;whetstone;DB12;kv;hs06_64" --freetext=$FREETEXT --cloud=$CLOUD --vo="continuous-bmk" --pnode=$PNODE --hs06_path=$SPECDIR --hs06_iter=1 --queue_host=$QUEUE_HOST --queue_port=$QUEUE_PORT --username=$QUEUE_USERNAME --password=$QUEUE_PASSWD --topic=$QUEUE_NAME -d`
  – Another argument allows downloading the HS06 tarball from a temporary URL:
    `--hs06_url="https://cernbox.cern.ch/xyz"`
  – On SLC6 and CC7:
    `EXECUTABLE="cern-benchmark"`
  – On CC7, it can also run as a Docker container based on an SLC6 image:
    `EXECUTABLE="docker run --rm --net=host -h $(hostname) -v /tmp:/tmp -v $SPECDIR:$SPECDIR gitlab-registry.cern.ch/cloud-infrastructure/cloud-benchmark-suite/cloudbenchmark-suite-standalone:1.3 cern-benchmark"`
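For scripted runs, the same invocation can be assembled programmatically. A minimal sketch: the flags and environment-variable names follow the example command (with all long options written with a double dash), while the helper `build_benchmark_command` and the flag table are hypothetical. It only prints the command; passing the list to `subprocess.run` would execute it.

```python
import os
import shlex

# Benchmarks from the example, passed as one ';'-separated string
BENCHMARKS = ["hs06_32", "whetstone", "DB12", "kv", "hs06_64"]

# (flag, environment variable) pairs taken from the example command
FLAG_TO_ENV = [
    ("--freetext", "FREETEXT"),
    ("--cloud", "CLOUD"),
    ("--pnode", "PNODE"),
    ("--hs06_path", "SPECDIR"),
    ("--queue_host", "QUEUE_HOST"),
    ("--queue_port", "QUEUE_PORT"),
    ("--username", "QUEUE_USERNAME"),
    ("--password", "QUEUE_PASSWD"),
    ("--topic", "QUEUE_NAME"),
]


def build_benchmark_command(env):
    """Return the argument list for one benchmark run (hypothetical helper).

    EXECUTABLE may be a plain command or a full 'docker run ...' prefix,
    so it is split with shell-like quoting rules.
    """
    cmd = shlex.split(env.get("EXECUTABLE", "cern-benchmark"))
    cmd.append("--benchmarks=" + ";".join(BENCHMARKS))
    cmd += [f"{flag}={env[var]}" for flag, var in FLAG_TO_ENV]
    cmd += ["--vo=continuous-bmk", "--hs06_iter=1", "-d"]
    return cmd


if __name__ == "__main__":
    try:
        print(shlex.join(build_benchmark_command(os.environ)))
    except KeyError as missing:
        print(f"missing environment variable: {missing}")
```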

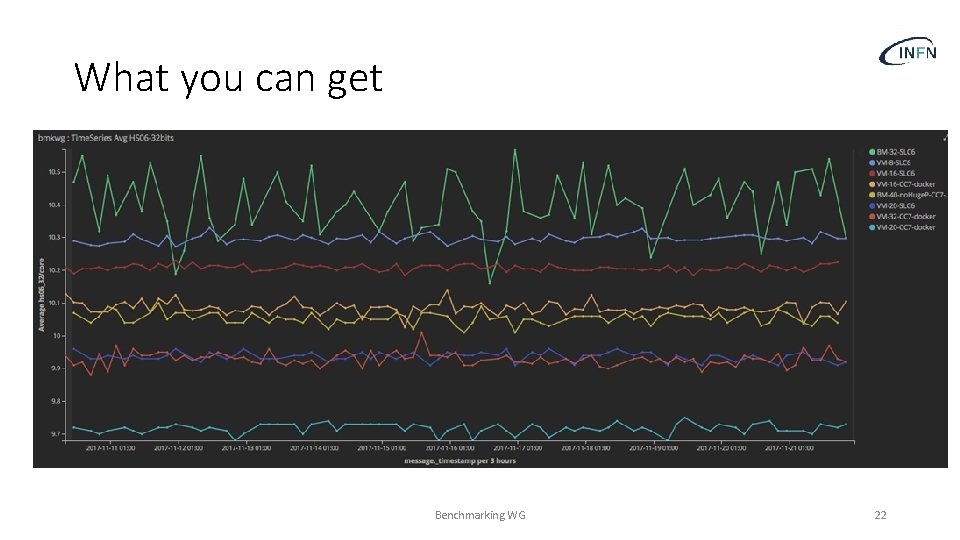

What you can get

Analysis of scaling issues
Manfred Alef: Benchmarking Update, GDB Dec 2017, Steinbuch Centre of Computing, 2017-12-13
Charts: benchmark scores (per host) and job performance (upscaled) for Alice, LHCb and Atlas on Intel E5-2630 v4 (Broadwell), at 1.0, 1.6 and 2.0 job slots per core; normalization: 1 job slot per core = 1.
• Alice: Alice (est.), Alice jobs, Alice DB12, HS06, DB12 cpp, DB12 numpy
• LHCb: LHCb (est.), LHCb DB16, LHCb jobs, HS06, DB12 cpp, DB12 numpy
• Atlas: Atlas (est.), Atlas simul, Atlas recon, Atlas evgen, HS06, DB12 cpp, DB12 numpy (TaskIDs: simul=10944000, recon=11323845, evgen=11330855)
At least 1 rack of each WN configuration available at GridKa, running the regular job mix.
Thanks to Costin Grigoras and Philippe Charpentier for providing the performance measurements and in-job benchmark scores of Alice and LHCb.

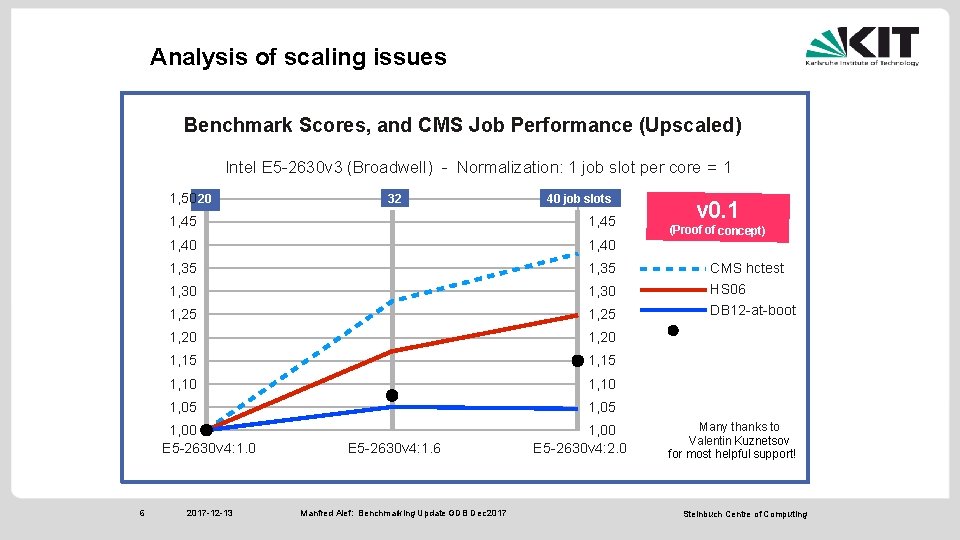

Analysis of scaling issues
Chart: benchmark scores and CMS job performance (upscaled), Intel E5-2630 v4 (Broadwell), at 1.0, 1.6 and 2.0 job slots per core (20, 32 and 40 job slots); normalization: 1 job slot per core = 1. Series: CMS hctest, HS06, DB12-at-boot, Est. Price. v0.1 (proof of concept).
Many thanks to Valentin Kuznetsov for his most helpful support!

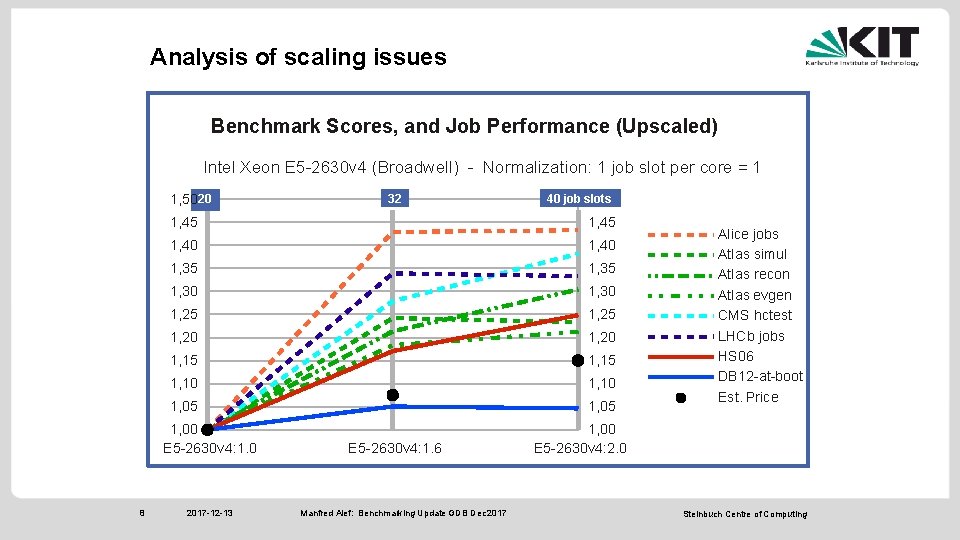

Analysis of scaling issues
Almost all inspected accounting data show a significant performance increase on hosts configured with more than 1 job slot per physical core
➔ Exceeding the HS06 benchmark scores
➔ On average, the best performance per HS06 score is reached at about 1.5 job slots per core...
➔ ...which is also the most economical solution (in units of application performance per $/€/...)

Analysis of scaling issues
Chart: benchmark scores and job performance (upscaled), Intel Xeon E5-2630 v4 (Broadwell), at 1.0, 1.6 and 2.0 job slots per core (20, 32 and 40 job slots); normalization: 1 job slot per core = 1. Series: Alice jobs, Atlas simul, Atlas recon, Atlas evgen, CMS hctest, LHCb jobs, HS06, DB12-at-boot, Est. Price.

Meltdown and Spectre
• At the end of December / beginning of January, 3 vulnerabilities affecting several processors from Intel/AMD/Arm were disclosed
• We almost stopped all activities on CPU 2017 to investigate the impact of the patches that block, or at least mitigate, the vulnerabilities
• Strong interaction with the CERN IT security team (Vincent Brillault)
• We asked all experiments to report their results

Spectre v2
• Some older Intel processors never received a patch for Spectre
• Many sites reported instabilities after the Spectre patch
• Intel recommended stopping deployment of the patches, as some could introduce higher-than-expected reboots and other unexpected behaviour
• Site managers have to choose between security and stability
• Impact on performance small but not negligible
• NEWS: In April Intel released a set of microcode files. No updated RPM for SL/CentOS available. Most silicon vendors have also released corresponding BIOS updates

Meltdown-Spectre patches: measurements at CERN 12 Jan 2018; David Smith on behalf of IT-DI-LCG

Test machine, no virtualization
• Haswell-EP
• 2 x E5-2630 v3 (2 x 8 physical cores => 32 with SMT)
• 2 x 800 GB SSD (Intel DC S3510 series)
• 8 x 18ASF1G72PZ-2G1A2 => 64 GiB memory
• CentOS 7
• Previously 3.10.0-514.10.2 (no KPTI), now 3.10.0-693.11.6 (with KPTI)
• Now using Intel microcode firmware update 06-3f-02 revision 0x3b

Method + Results
• Test run procedure
  – Input data set (~32 GB) is pre-staged locally, as 4 identical copies so that each job instance has its own copy
  – Empty the page cache
  – Start 4 instances of the ATLAS Pile job (ATHENA_PROC_NUMBER=8) within a few seconds
  – The original run was done in October; the run with patches was done on 9 Jan
  – The job does contact external services for some configuration data
• Results: median (q1/q3), previous : patched
  – Real: 14474 s (-10/+11) : 15112 s (-9/+24)
  – User (CPU): 96012 s (-89/+90) : 97384 s (-42/+22)
  – System (CPU): 1086 s (-1/+3) : 2058 s (-4/+11)
• There appears to be a penalty of +2.4% CPU and +4.4% real time on this run with the recent kernel + firmware
• There may be some sources of systematic error that are not accounted for, especially on real time: e.g. an external service is involved, and the original and new runs were done several months apart
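Assuming "cpu" in the summary means user + system CPU time, both quoted penalties follow from the medians above. The short check below reproduces them; note that most of the CPU increase comes from system time, which almost doubles.

```python
def relative_change(before: float, after: float) -> float:
    """Percentage change from the unpatched to the patched run."""
    return 100.0 * (after - before) / before


# Medians in seconds: (previous kernel, patched kernel + firmware)
real = (14474, 15112)
user = (96012, 97384)
system = (1086, 2058)

cpu_before = user[0] + system[0]  # CPU time = user + system
cpu_after = user[1] + system[1]

if __name__ == "__main__":
    print(f"CPU:  {relative_change(cpu_before, cpu_after):+.1f}%")  # +2.4%
    print(f"real: {relative_change(*real):+.1f}%")                 # +4.4%
```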

Meltdown and Spectre Serhan Mete UC Irvine ATLAS SPOT Meeting 24/01/2018

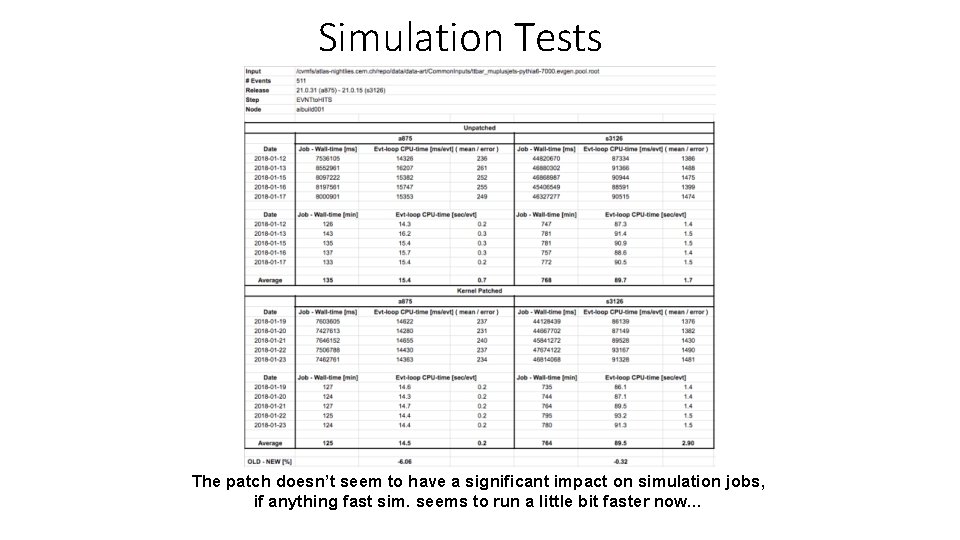

Simulation Tests: The patch does not seem to have a significant impact on simulation jobs; if anything, fast simulation seems to run slightly faster now…

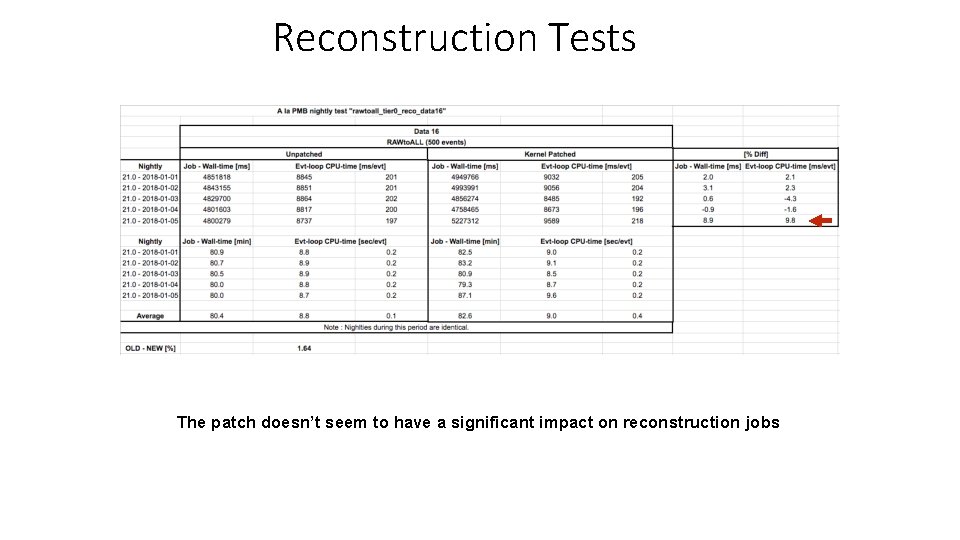

Reconstruction Tests The patch doesn’t seem to have a significant impact on reconstruction jobs

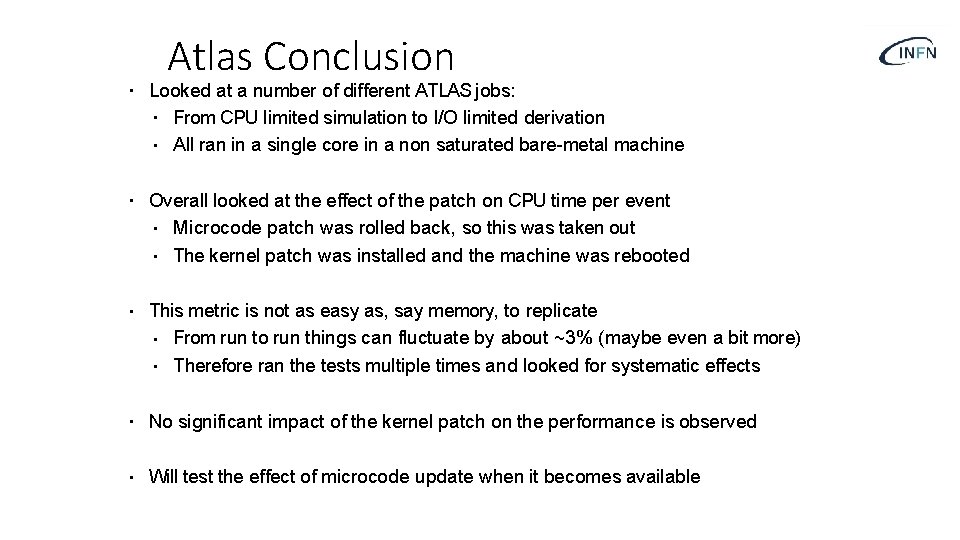

Atlas Conclusion
• Looked at a number of different ATLAS jobs:
  – From CPU-limited simulation to I/O-limited derivation
  – All ran on a single core on a non-saturated bare-metal machine
• Overall, looked at the effect of the patch on CPU time per event
  – The microcode patch was rolled back, so it was taken out of the comparison
  – The kernel patch was installed and the machine was rebooted
• This metric is not as easy to reproduce as, say, memory
  – From run to run, results can fluctuate by about 3% (maybe even a bit more)
  – Therefore the tests were run multiple times, looking for systematic effects
• No significant impact of the kernel patch on performance is observed
• Will test the effect of the microcode update when it becomes available
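Since single runs fluctuate by about 3%, a patch effect only shows up when repeated runs are compared. A minimal sketch of one way to do that (our illustration, not the ATLAS analysis): compare the mean CPU time per event before and after the patch, and flag the difference only if it exceeds `k` times its standard error. The threshold `k = 2` is an arbitrary assumption.

```python
import math
import statistics


def patch_effect(before, after, k=2.0):
    """Return (relative change of the mean, significant?) for two sets of runs.

    before/after: CPU time per event from repeated runs (at least 2 each).
    The change counts as significant only if |mean difference| exceeds
    k times the standard error of that difference (hypothetical criterion).
    """
    mean_b, mean_a = statistics.mean(before), statistics.mean(after)
    stderr = math.sqrt(statistics.variance(before) / len(before)
                       + statistics.variance(after) / len(after))
    diff = mean_a - mean_b
    return diff / mean_b, abs(diff) > k * stderr
```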

PIC/CMS feedback Performance measurements for Meltdown-Spectre vulnerabilities C. Acosta, J. Flix

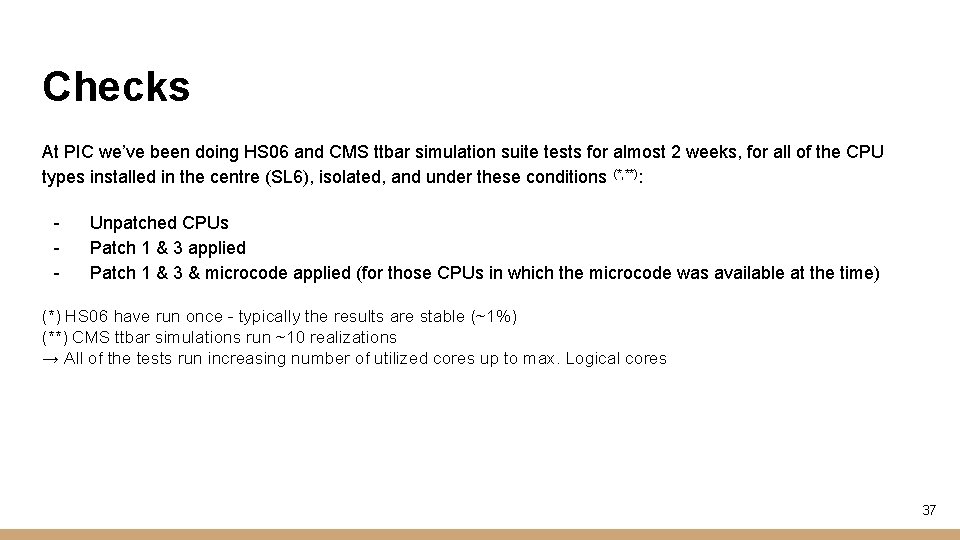

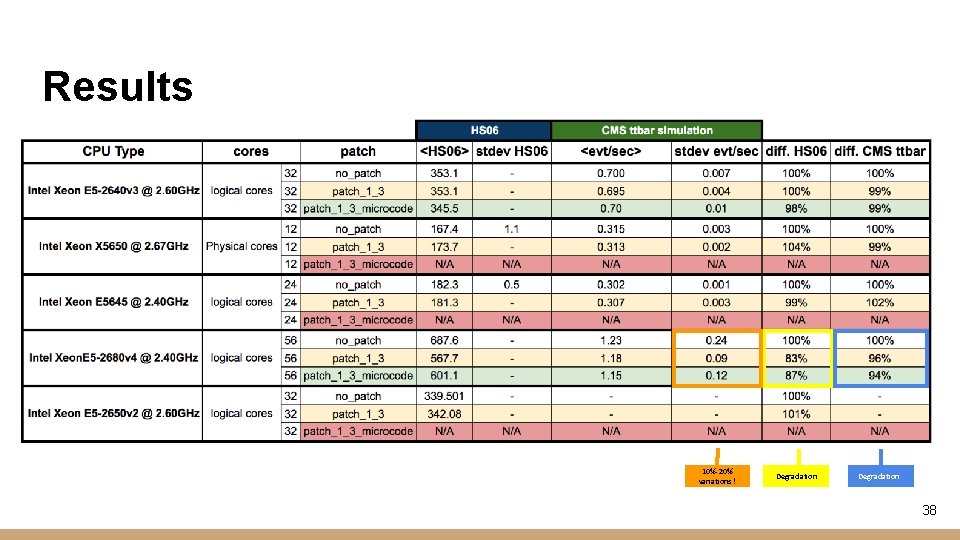

Checks
At PIC we have been running HS06 and CMS ttbar simulation suite tests for almost 2 weeks, on all the CPU types installed in the centre (SL6), isolated, under these conditions (*, **):
- Unpatched CPUs
- Patches 1 & 3 applied
- Patches 1 & 3 & microcode applied (for those CPUs for which the microcode was available at the time)
(*) HS06 was run once; results are typically stable (~1%)
(**) CMS ttbar simulations: ~10 realizations
→ All tests were run with an increasing number of utilized cores, up to the maximum number of logical cores

Results: 10%-20% variations! Degradation

E5-2680 v4

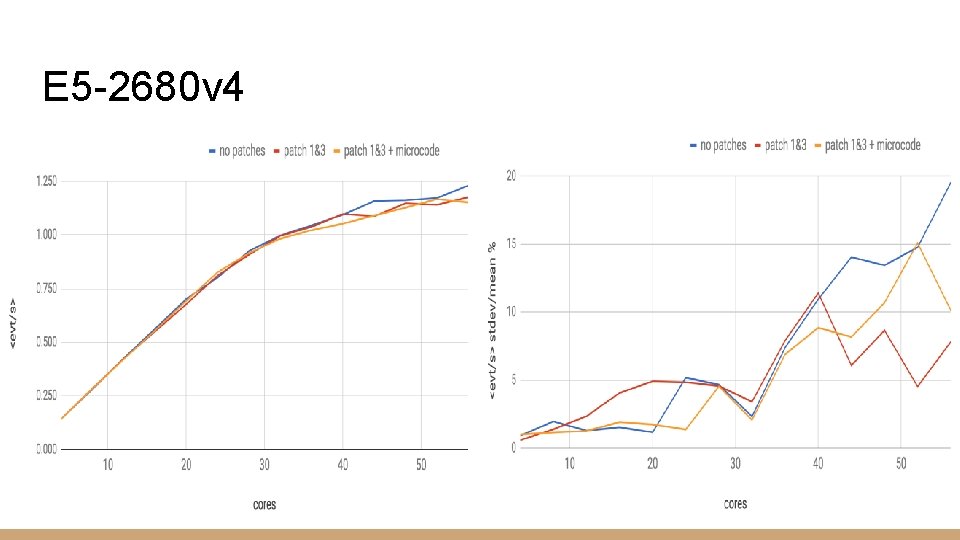


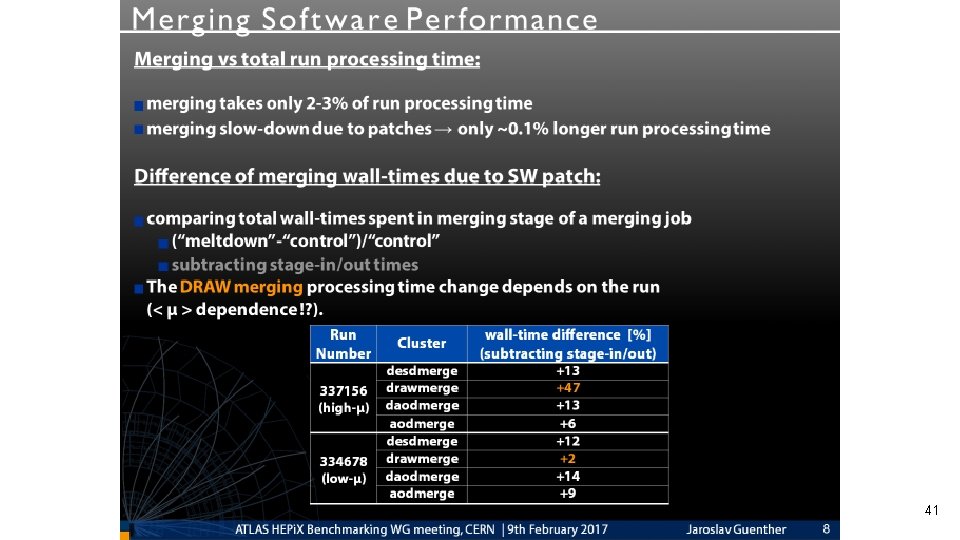


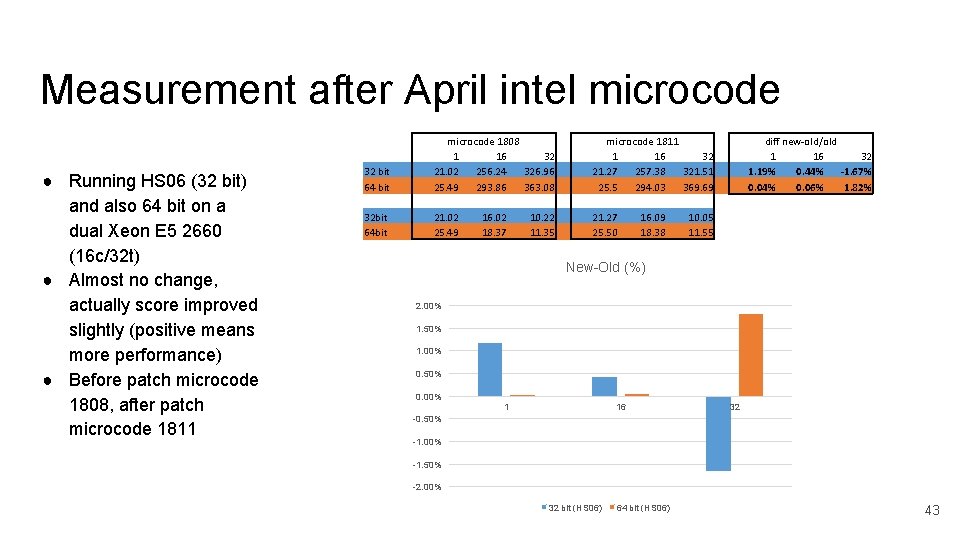

Measurement after April Intel microcode
● Running HS06 (32-bit) and also 64-bit on a dual Xeon E5-2660 (16 c/32 t)
● Almost no change: all differences are within ±2%, and in most cases the score actually improved slightly (positive means more performance)
● Before patch: microcode 1808; after patch: microcode 1811

| Copies | 32-bit, 1808 | 32-bit, 1811 | Diff 32-bit | 64-bit, 1808 | 64-bit, 1811 | Diff 64-bit |
|---|---|---|---|---|---|---|
| 1 | 21.02 | 21.27 | +1.19% | 25.49 | 25.50 | +0.04% |
| 16 | 256.24 | 257.38 | +0.44% | 293.86 | 294.03 | +0.06% |
| 32 | 326.96 | 321.51 | -1.67% | 363.08 | 369.69 | +1.82% |

Per-copy scores (1808 → 1811): 32-bit 21.02 → 21.27 (1 copy), 16.02 → 16.09 (16 copies), 10.22 → 10.05 (32 copies); 64-bit 25.49 → 25.50, 18.37 → 18.38, 11.35 → 11.55.
Diff = (new - old)/old; the chart shows New-Old (%) for 1, 16 and 32 copies, 32-bit (HS06) and 64-bit (HS06).
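The "Diff" values are relative changes (new - old)/old. The sketch below recomputes them, together with the per-copy scores, from the HS06 scores on this slide.

```python
def relative_diff_pct(old: float, new: float) -> float:
    """(new - old) / old, in percent; positive means more performance."""
    return 100.0 * (new - old) / old


# HS06 scores on the dual E5-2660: copies -> (microcode 1808, microcode 1811)
SCORES = {
    "32-bit": {1: (21.02, 21.27), 16: (256.24, 257.38), 32: (326.96, 321.51)},
    "64-bit": {1: (25.49, 25.50), 16: (293.86, 294.03), 32: (363.08, 369.69)},
}

if __name__ == "__main__":
    for build, by_copies in SCORES.items():
        for copies, (old, new) in by_copies.items():
            print(f"{build} {copies:>2} copies: {relative_diff_pct(old, new):+.2f}% "
                  f"(per copy {old / copies:.2f} -> {new / copies:.2f})")
```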

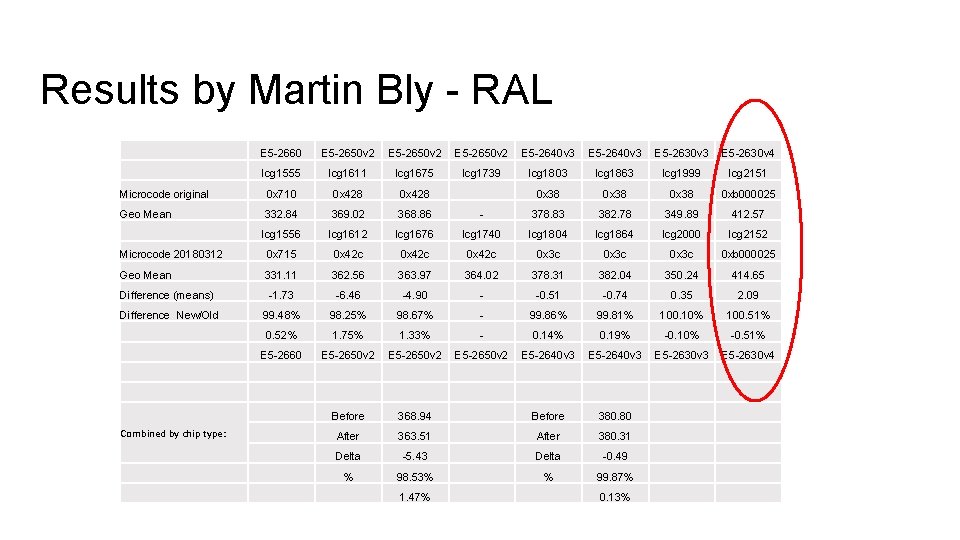

Results by Martin Bly (RAL)

Microcode per CPU type, original → 20180312: E5-2660 0x710 → 0x715; E5-2650 v2 0x428 → 0x42c; E5-2640 v3 0x38 → 0x3c; E5-2630 v4 0xb000025 → 0xb000025

| Host (original microcode) | Geo mean | Host (microcode 20180312) | Geo mean | Difference (means) | New/Old | Loss (1 - New/Old) |
|---|---|---|---|---|---|---|
| lcg1555 | 332.84 | lcg1556 | 331.11 | -1.73 | 99.48% | 0.52% |
| lcg1611 | 369.02 | lcg1612 | 362.56 | -6.46 | 98.25% | 1.75% |
| lcg1675 | 368.86 | lcg1676 | 363.97 | -4.90 | 98.67% | 1.33% |
| lcg1739 | - | lcg1740 | 364.02 | - | - | - |
| lcg1803 | 378.83 | lcg1804 | 378.31 | -0.51 | 99.86% | 0.14% |
| lcg1863 | 382.78 | lcg1864 | 382.04 | -0.74 | 99.81% | 0.19% |
| lcg1999 | 349.89 | lcg2000 | 350.24 | 0.35 | 100.10% | -0.10% |
| lcg2151 | 412.57 | lcg2152 | 414.65 | 2.09 | 100.51% | -0.51% |

Combined by chip type (two groups reported): before 368.94, after 363.51, delta -5.43 (98.53%, loss 1.47%); before 380.80, after 380.31, delta -0.49 (99.87%, loss 0.13%).

Reminder: Tools
D. Giordano, HEPiX Benchmarking Working Group, 04/05/2017
• To run benchmarks
  – Benchmarking suite (for details see https://indico.cern.ch/event/671504/)
  – Benchmarks: HS06 (32-bit, 64-bit), Atlas KV (Geant4, 100 single-muon events), SPEC 2017 (only C++ benchmarks)
• NB: in KV, Athena 17.8.0.9 is used
• NB: each benchmark runs in the most pessimistic scenario (all logical cores busy, simultaneously running the same workload), as in the approach adopted for HS06

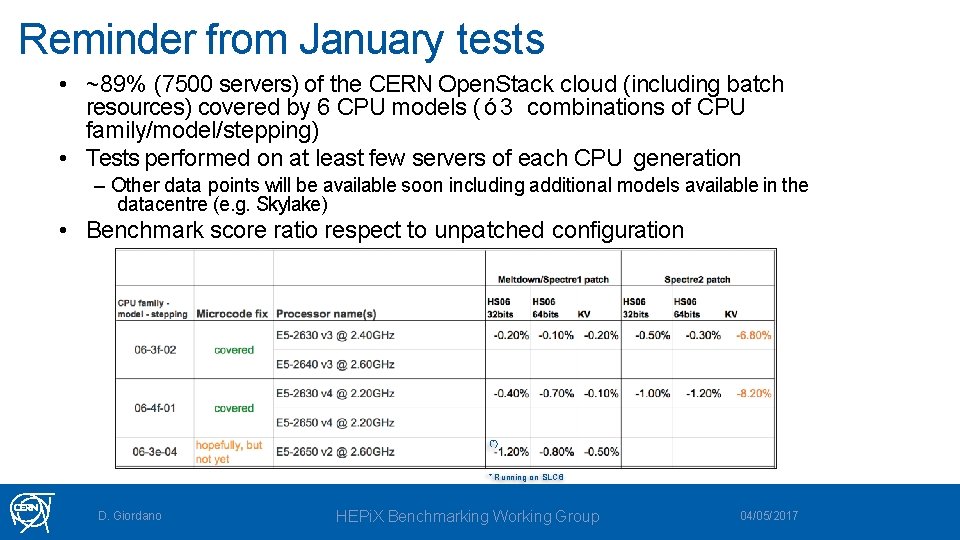

Reminder from January tests
• ~89% (7500 servers) of the CERN OpenStack cloud (including batch resources) is covered by 6 CPU models (corresponding to 3 combinations of CPU family/model/stepping)
• Tests performed on at least a few servers of each CPU generation
  – Other data points will be available soon, including additional models available in the datacentre (e.g. Skylake)
• Benchmark score ratio with respect to the unpatched configuration (*)
(*) Running on SLC6

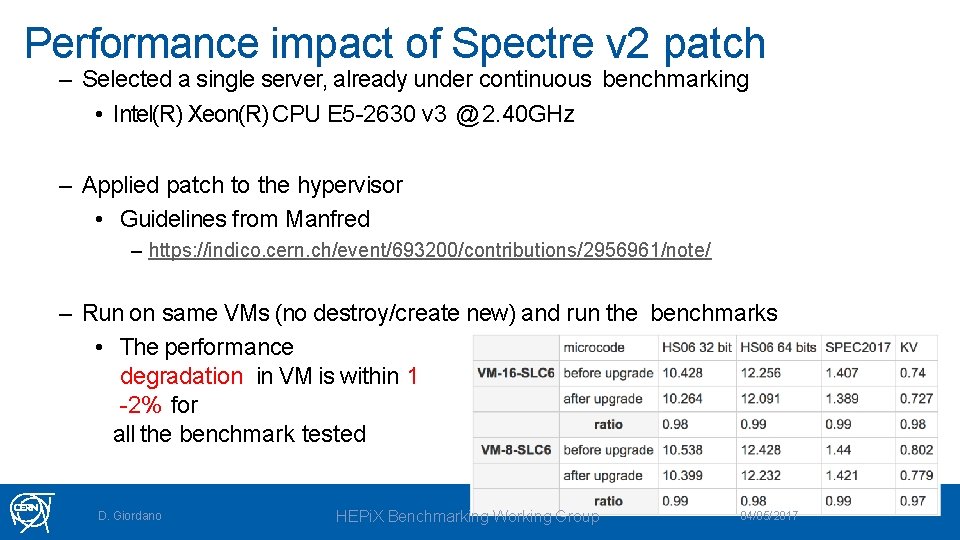

Performance impact of Spectre v2 patch
– Selected a single server already under continuous benchmarking
  • Intel(R) Xeon(R) CPU E5-2630 v3 @ 2.40GHz
– Applied the patch to the hypervisor
  • Guidelines from Manfred: https://indico.cern.ch/event/693200/contributions/2956961/note/
– Ran the benchmarks on the same VMs (no destroy/create of new VMs)
• The performance degradation in the VMs is within 1-2% for all the benchmarks tested

Spectre Next Generation (Spectre-NG)
• Recent news of eight (8) new Spectre-like vulnerabilities
  – 4 high risk, 4 medium risk
• Most dangerous in virtual machines or cloud environments
  – One could theoretically let attackers bypass VM isolation in cloud systems to steal sensitive data such as passwords or digital keys
• Waiting for the patches to (re)measure the impact on application code and HS06
  – Possible performance impact to be checked as soon as patches become available
• Rumours of a first wave of patches at the end of May (21st?) and a second wave at the end of August
• AMD and ARM could also be vulnerable

System performance modelling WG
• After the last HEPiX meeting a new working group was born
• Main topic: "System performance modelling"
• https://indico.cern.ch/category/9733/
• Several of their tasks are connected with the CPU benchmarking WG, so each group could contribute to the other's work
• They also plan bi-weekly meetings, usually on Wednesdays at 4 pm

The new portal
• The HEPiX site moved to a new portal thanks to Michel Jouvin
• After the successful move, the Rome site has recently been closed
• The HS06 benchmarking page is available there: http://w3.hepix.org/2017/10/28/Welcome.html
• And cross-linked to our twiki: https://twiki.cern.ch/twiki/bin/view/HEPIX/CpuBenchmarking


Backup Slides

Speculative Execution
Vincent Brillault, 2018/01/11
• Shared principle:
  – Use speculative execution to bypass protections
  – Execution is reverted, but traces remain (CPU caches)
• Multiple naming conventions:
  – Google Project Zero: Variant 1/2/3
  – Press releases: Meltdown & Spectre
  – CVE-2017-{5753, 5715, 5754}
• Our convention:
  – Spectre Variant 1 (CVE-2017-5753)
  – Spectre Variant 2 (CVE-2017-5715)
  – Meltdown/Variant 3 (CVE-2017-5754)

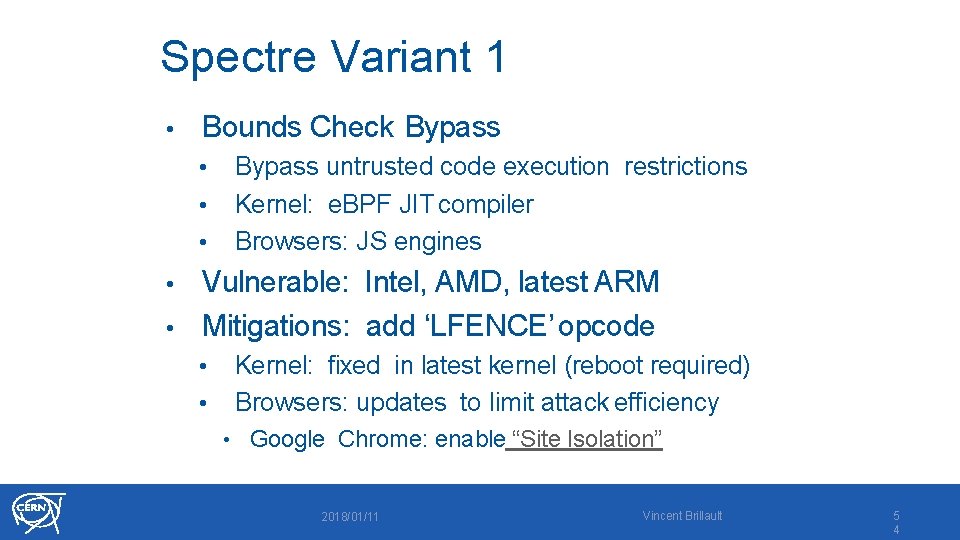

Spectre Variant 1
• Bounds Check Bypass
  – Bypass untrusted code execution restrictions
  – Kernel: eBPF JIT compiler
  – Browsers: JS engines
• Vulnerable: Intel, AMD, latest ARM
• Mitigations: add 'LFENCE' opcode
  – Kernel: fixed in latest kernel (reboot required)
  – Browsers: updates to limit attack efficiency
  – Google Chrome: enable "Site Isolation"

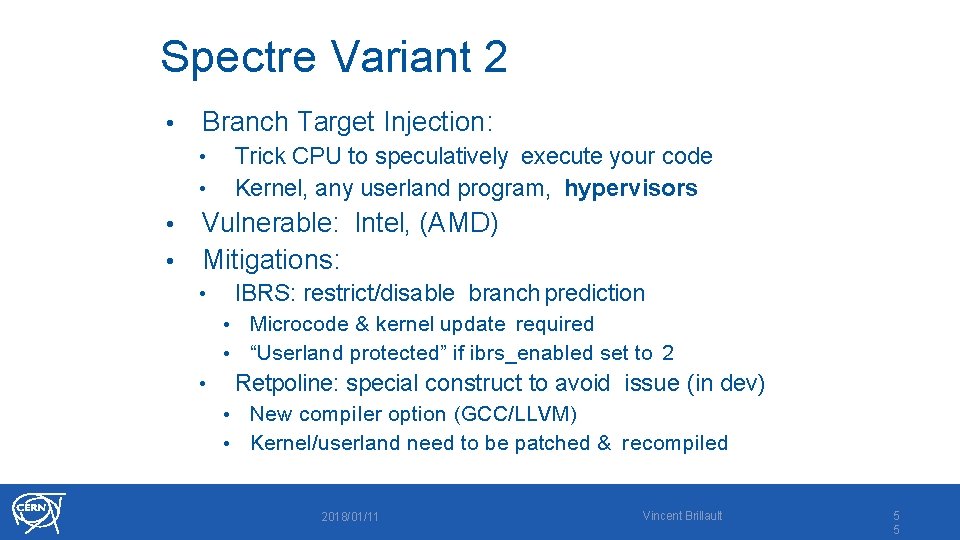

Spectre Variant 2
• Branch Target Injection: trick the CPU into speculatively executing your code
  – Kernel, any userland program, hypervisors
• Vulnerable: Intel, (AMD)
• Mitigations:
  – IBRS: restrict/disable branch prediction
    · Microcode & kernel update required
    · "Userland protected" if ibrs_enabled is set to 2
  – Retpoline: special construct to avoid the issue (in development)
    · New compiler option (GCC/LLVM)
    · Kernel/userland need to be patched & recompiled

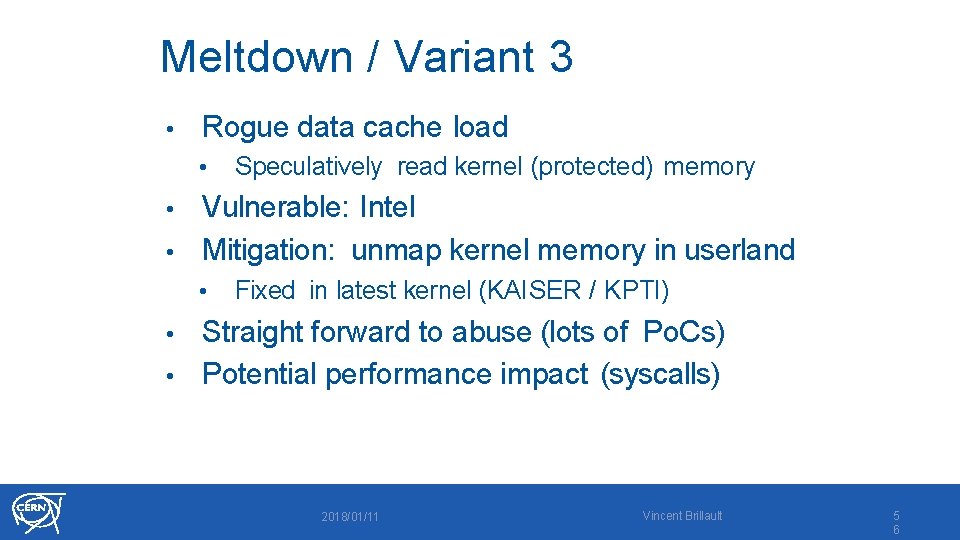

Meltdown / Variant 3
• Rogue data cache load
  – Speculatively read kernel (protected) memory
• Vulnerable: Intel
• Mitigation: unmap kernel memory in userland
  – Fixed in latest kernel (KAISER / KPTI)
• Straightforward to abuse (lots of PoCs)
• Potential performance impact (syscalls)

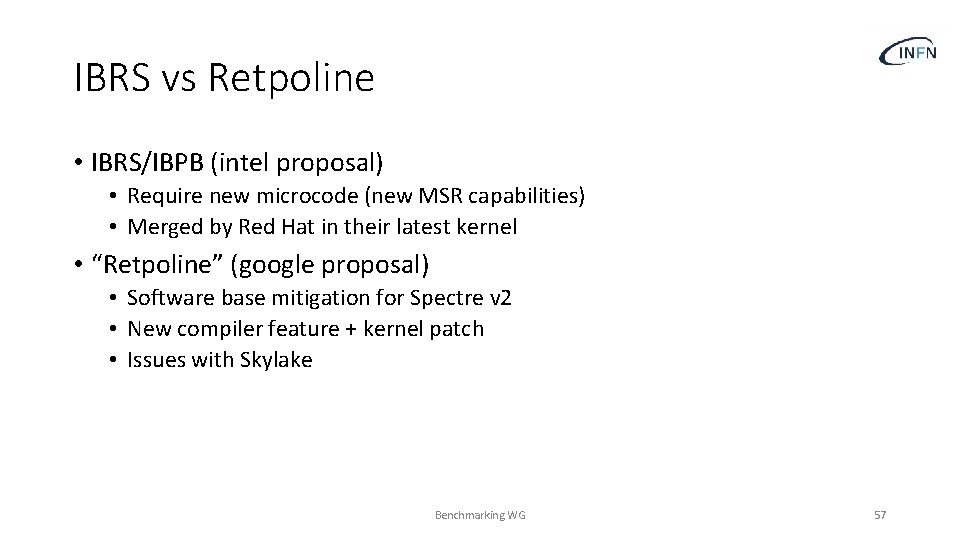

IBRS vs Retpoline
• IBRS/IBPB (Intel proposal)
  – Requires new microcode (new MSR capabilities)
  – Merged by Red Hat in their latest kernel
• "Retpoline" (Google proposal)
  – Software-based mitigation for Spectre v2
  – New compiler feature + kernel patch
  – Issues with Skylake
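When comparing benchmark results across kernel versions, it helps to record which mitigations a node is actually running. Linux kernels that provide it expose one status file per vulnerability under `/sys/devices/system/cpu/vulnerabilities/` (e.g. `meltdown`, `spectre_v1`, `spectre_v2`). Older kernels, possibly including some of the 3.10.0-693 builds mentioned in these slides, may lack this directory, in which case the sketch below returns an empty result.

```python
from pathlib import Path

VULN_DIR = Path("/sys/devices/system/cpu/vulnerabilities")


def mitigation_status(vuln_dir: Path = VULN_DIR) -> dict:
    """Map each reported vulnerability (e.g. 'meltdown') to the kernel's status line."""
    if not vuln_dir.is_dir():
        return {}  # this kernel does not expose the interface
    return {f.name: f.read_text().strip()
            for f in sorted(vuln_dir.iterdir()) if f.is_file()}


if __name__ == "__main__":
    for name, status in mitigation_status().items():
        print(f"{name:20s} {status}")
```

Storing this dictionary next to each benchmark result makes differences like the kernel versions of the Skylake SKUs visible without having to reconstruct them afterwards.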
