
Apprenticeship Learning for Robotic Control

Pieter Abbeel, Stanford University

Joint work with: Andrew Y. Ng, Adam Coates, J. Zico Kolter and Morgan Quigley

Motivation for apprenticeship learning

Outline
- Preliminary: reinforcement learning.
- Apprenticeship learning algorithms.
- Experimental results on various robotic platforms.

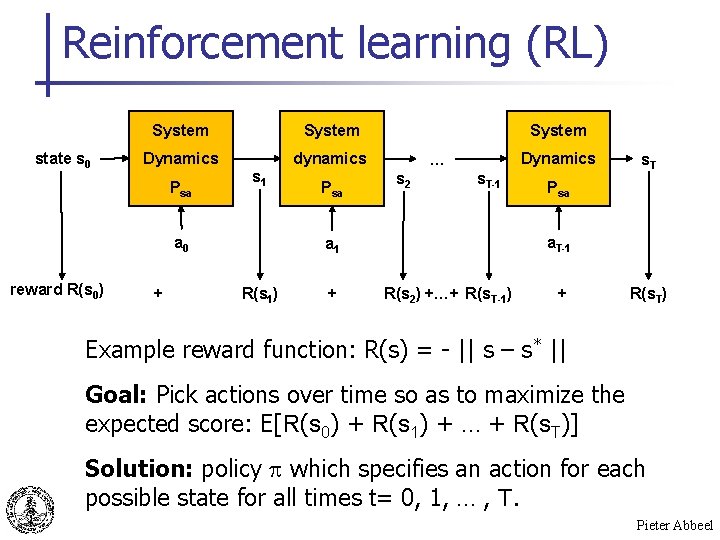

Reinforcement learning (RL)

Diagram: starting from state $s_0$, the system dynamics $P_{sa}$ map each state and action to the next state: $(s_0, a_0) \to (s_1, a_1) \to s_2 \to \ldots \to (s_{T-1}, a_{T-1}) \to s_T$. The rewards collected along the way are $R(s_0) + R(s_1) + R(s_2) + \ldots + R(s_{T-1}) + R(s_T)$.

Example reward function: $R(s) = -\|s - s^*\|$

Goal: Pick actions over time so as to maximize the expected score: $E[R(s_0) + R(s_1) + \ldots + R(s_T)]$

Solution: a policy $\pi$, which specifies an action for each possible state for all times $t = 0, 1, \ldots, T$.
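The goal and solution above can be made concrete on a small discrete problem. The following is a minimal sketch, assuming a hypothetical 5-state chain with three deterministic actions (left, stay, right) and the example reward $R(s) = -|s - s^*|$; none of these specifics come from the slides. It computes a time-dependent policy by backward dynamic programming.

```python
import numpy as np

def finite_horizon_policy(P, R, T):
    """Backward dynamic programming for a finite-horizon MDP.

    P: array (A, S, S), P[a, s, s2] = probability of moving s -> s2 under action a.
    R: array (S,), state reward R(s).
    T: horizon; the visited states are s_0 ... s_T.
    Returns policy (T, S) and value table V (T + 1, S).
    """
    A, S, _ = P.shape
    V = np.zeros((T + 1, S))
    policy = np.zeros((T, S), dtype=int)
    V[T] = R                               # at time T only R(s_T) is collected
    for t in range(T - 1, -1, -1):
        Q = R[None, :] + P @ V[t + 1]      # Q[a, s]: reward now plus expected future value
        policy[t] = Q.argmax(axis=0)
        V[t] = Q.max(axis=0)
    return policy, V

# Hypothetical toy problem (an assumption for illustration, not from the slides).
S, target = 5, 3
LEFT, STAY, RIGHT = 0, 1, 2
R = -np.abs(np.arange(S) - target).astype(float)   # R(s) = -|s - s*|
P = np.zeros((3, S, S))
for s in range(S):
    P[LEFT, s, max(s - 1, 0)] = 1.0
    P[STAY, s, s] = 1.0
    P[RIGHT, s, min(s + 1, S - 1)] = 1.0

policy, V = finite_horizon_policy(P, R, T=4)
```

Here `policy[t, s]` is the action chosen at time `t` in state `s`. No action is stored for the final time `T`, because only $R(s_T)$ remains to be collected at that point.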

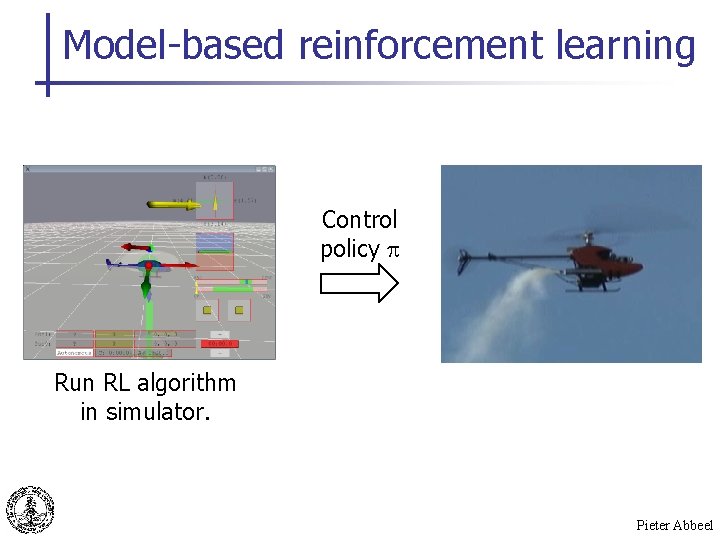

Model-based reinforcement learning
- Run the RL algorithm in a simulator to obtain a control policy.

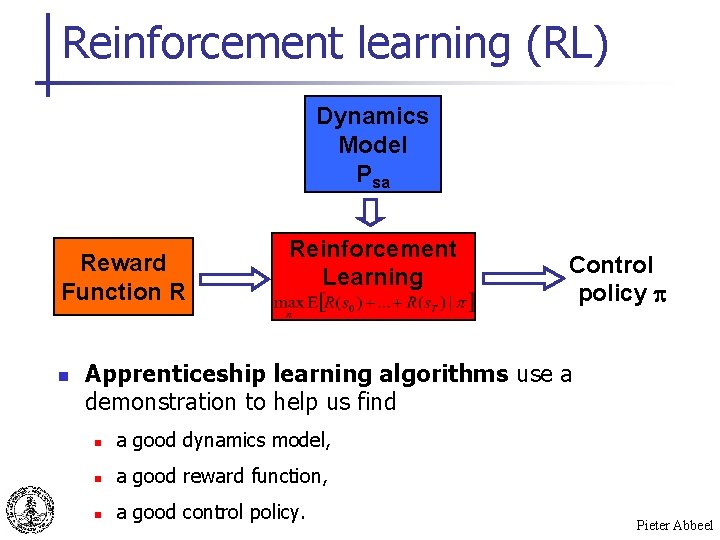

Reinforcement learning (RL)

Diagram: Dynamics Model $P_{sa}$ + Reward Function $R$ → Reinforcement Learning → Control policy $\pi$

Apprenticeship learning algorithms use a demonstration to help us find
- a good dynamics model,
- a good reward function,
- a good control policy.

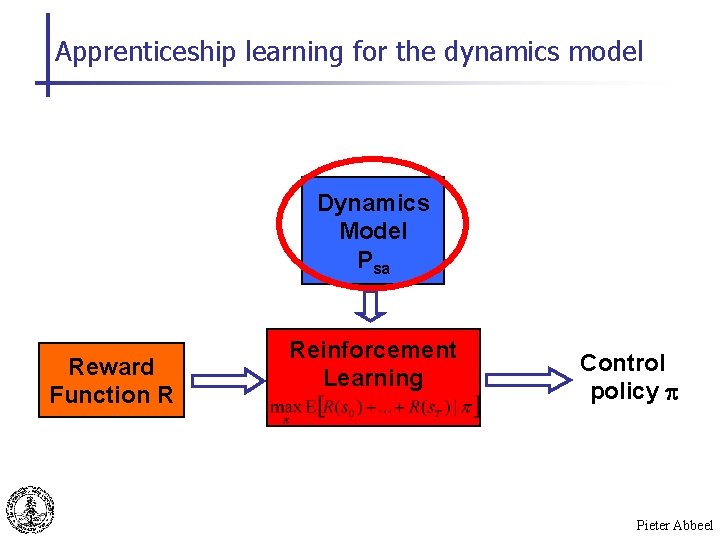

Apprenticeship learning for the dynamics model

Diagram: Dynamics Model $P_{sa}$ + Reward Function $R$ → Reinforcement Learning → Control policy $\pi$

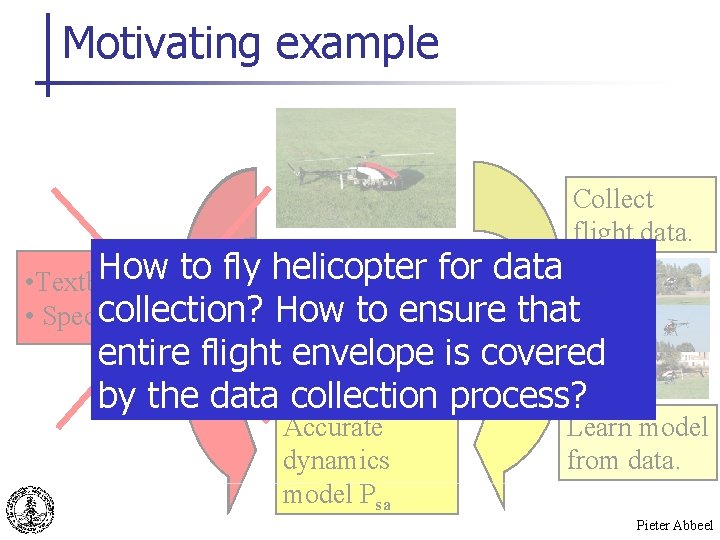

Motivating example
- Two routes to an accurate dynamics model $P_{sa}$: a textbook model or specification, or collecting flight data and learning the model from data.
- How to fly the helicopter for data collection?
- How to ensure that the entire flight envelope is covered by the data collection process?

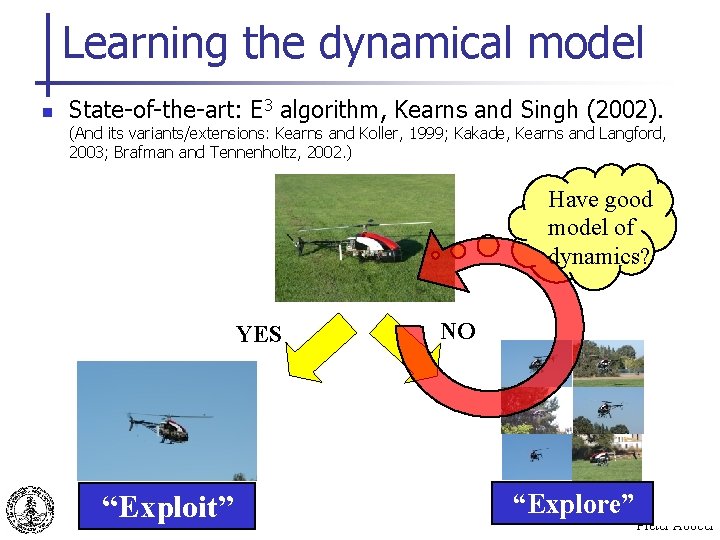

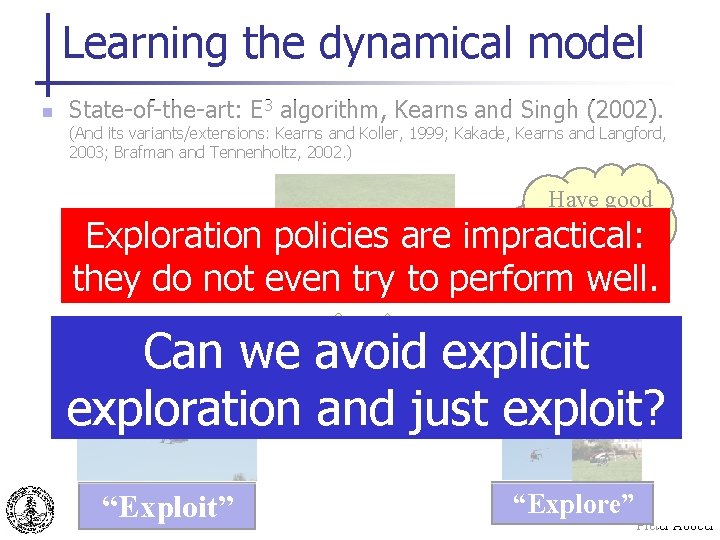

Learning the dynamical model
- State of the art: $E^3$ algorithm, Kearns and Singh (2002). (And its variants/extensions: Kearns and Koller, 1999; Kakade, Kearns and Langford, 2003; Brafman and Tennenholtz, 2002.)
- Have good model of dynamics? YES: “Exploit”. NO: “Explore”.

Learning the dynamical model (continued)
- Exploration policies are impractical: they do not even try to perform well.
- Can we avoid explicit exploration and just exploit?

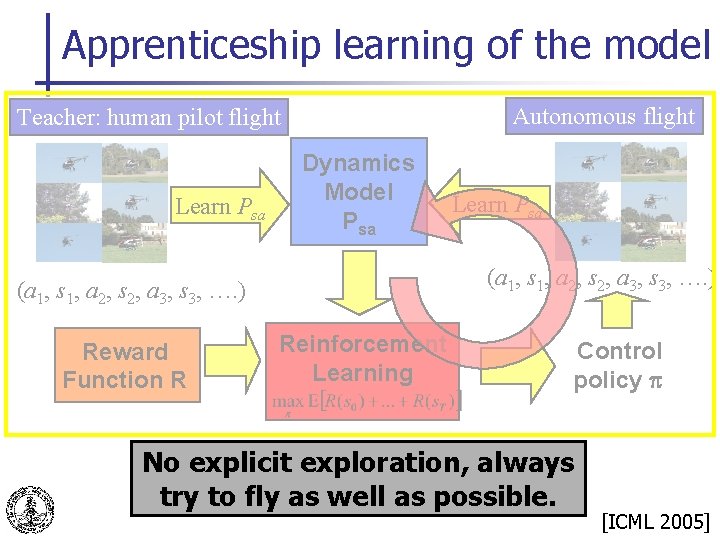

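Apprenticeship learning of the model
- Teacher: human pilot flight produces data $(a_1, s_1, a_2, s_2, a_3, s_3, \ldots)$, from which we learn $P_{sa}$.
- Dynamics Model $P_{sa}$ + Reward Function $R$ → Reinforcement Learning → Control policy $\pi$.
- Autonomous flight with $\pi$ produces further data $(a_1, s_1, a_2, s_2, a_3, s_3, \ldots)$, from which we learn $P_{sa}$ again.
- No explicit exploration, always try to fly as well as possible.

[ICML 2005]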
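The loop on this slide (learn a model from teacher data, plan in the model, run the resulting policy on the real system, add the new data, repeat) can be sketched on a toy problem. The example below is only an illustration under stated assumptions: a hypothetical scalar linear system, a least-squares model fit, and a one-step controller standing in for the RL step. The names `real_step`, `fit_model`, `plan` and all constants are made up for this sketch and are not the helicopter setup.

```python
import numpy as np

rng = np.random.default_rng(0)
A_TRUE, B_TRUE = 0.9, 0.5     # hypothetical real dynamics, unknown to the learner
TARGET = 1.0                  # desired state s*

def real_step(s, u):
    """One step of the (assumed) real system: s' = a*s + b*u + noise."""
    return A_TRUE * s + B_TRUE * u + 0.01 * rng.standard_normal()

def rollout(policy, T=30, s0=0.0):
    """Run a policy on the real system and record (s, u, s') tuples."""
    data, s = [], s0
    for _ in range(T):
        u = policy(s)
        s_next = real_step(s, u)
        data.append((s, u, s_next))
        s = s_next
    return data

def fit_model(data):
    """Least-squares estimate of (a, b) in s' = a*s + b*u."""
    X = np.array([[s, u] for s, u, _ in data])
    y = np.array([s_next for _, _, s_next in data])
    (a, b), *_ = np.linalg.lstsq(X, y, rcond=None)
    return a, b

def plan(a, b):
    """Stand-in for the RL step: pick u so the learned model predicts s' = TARGET."""
    return lambda s: float(np.clip((TARGET - a * s) / b, -2.0, 2.0))

# Imperfect teacher demonstration (a made-up proportional controller).
teacher = lambda s: 0.8 * (TARGET - s) + 0.2
data = rollout(teacher)

# No explicit exploration: each trial runs the policy that is best for the current model.
for _ in range(3):
    a, b = fit_model(data)
    policy = plan(a, b)
    data += rollout(policy)

a, b = fit_model(data)
```

The design choice mirrored here is that data is only ever gathered by the teacher or by a policy that tries to do the task well; no rollout is spent on deliberately visiting poorly modeled states.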

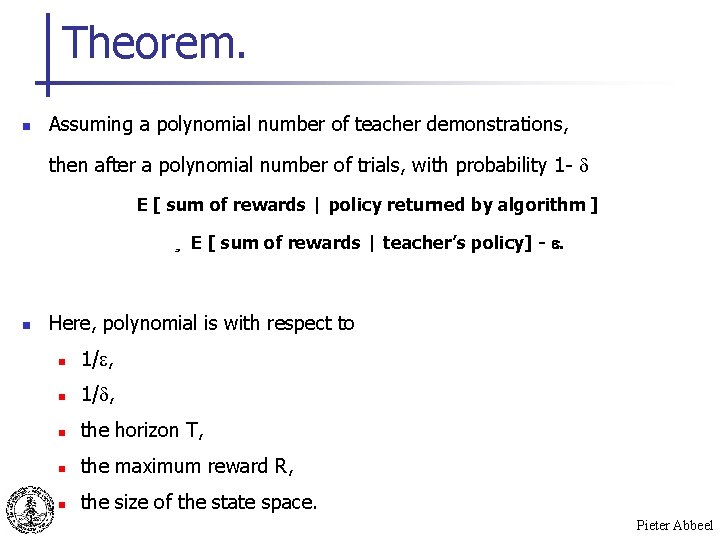

Theorem.
- Assuming a polynomial number of teacher demonstrations, then after a polynomial number of trials, with probability $1 - \delta$:
  $E[\text{sum of rewards} \mid \text{policy returned by algorithm}] \ge E[\text{sum of rewards} \mid \text{teacher’s policy}] - \epsilon$.
- Here, polynomial is with respect to
  - $1/\epsilon$,
  - the horizon $T$,
  - the maximum reward $R$,
  - the size of the state space.

Learning the dynamics model
- Details of algorithm for learning dynamics model:
  - Exploiting structure from physics
  - Lagged learning criterion

[NIPS 2005, 2006]

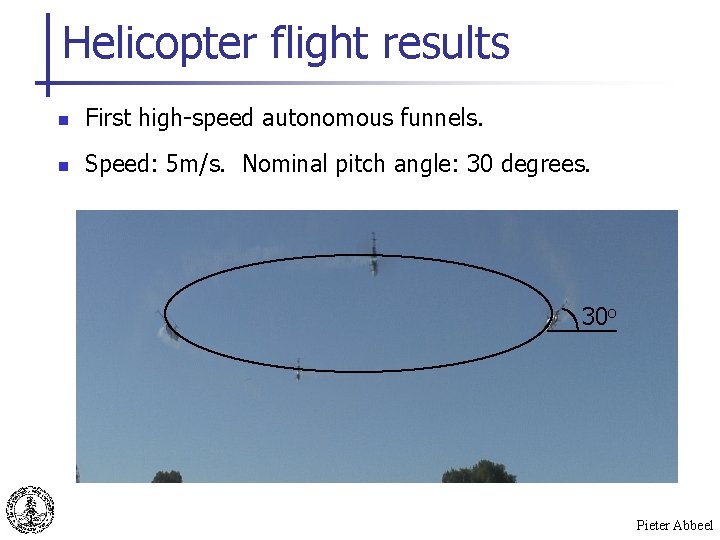

Helicopter flight results
- First high-speed autonomous funnels.
- Speed: 5 m/s. Nominal pitch angle: 30 degrees.

Autonomous nose-in funnel

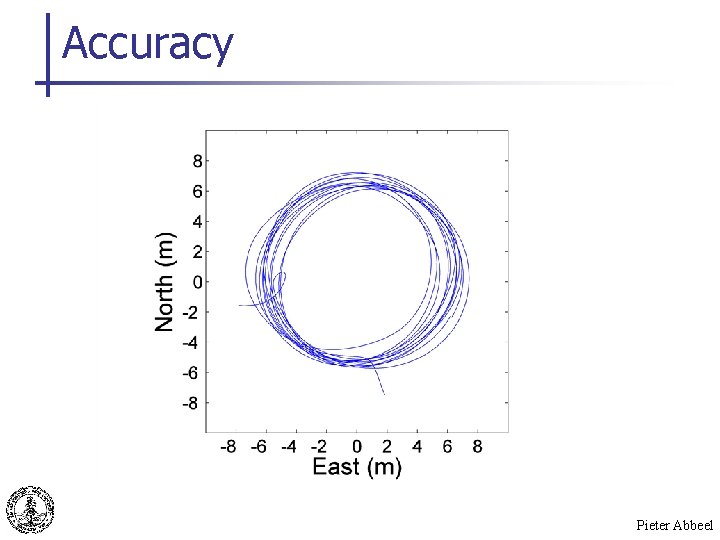

Accuracy

Autonomous tail-in funnel

Key points
- Unlike exploration methods, our algorithm concentrates on the task of interest.
- Bootstrapping off an initial teacher demonstration is sufficient to perform the task as well as the teacher.

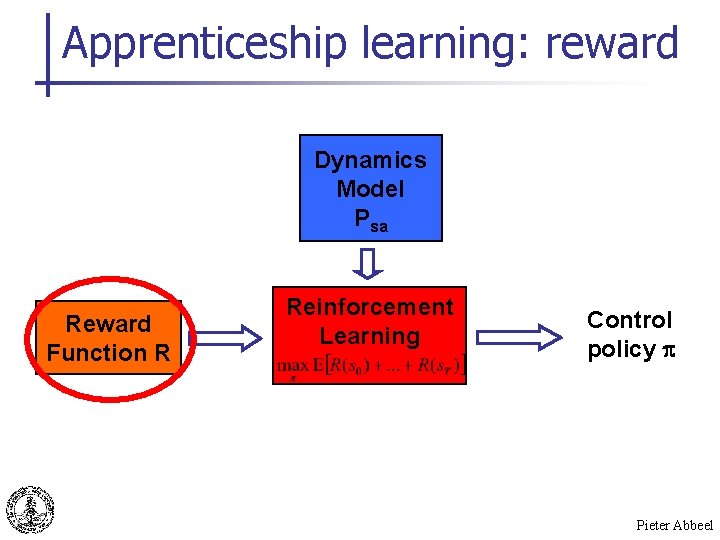

Apprenticeship learning: reward

Diagram: Dynamics Model $P_{sa}$ + Reward Function $R$ → Reinforcement Learning → Control policy $\pi$

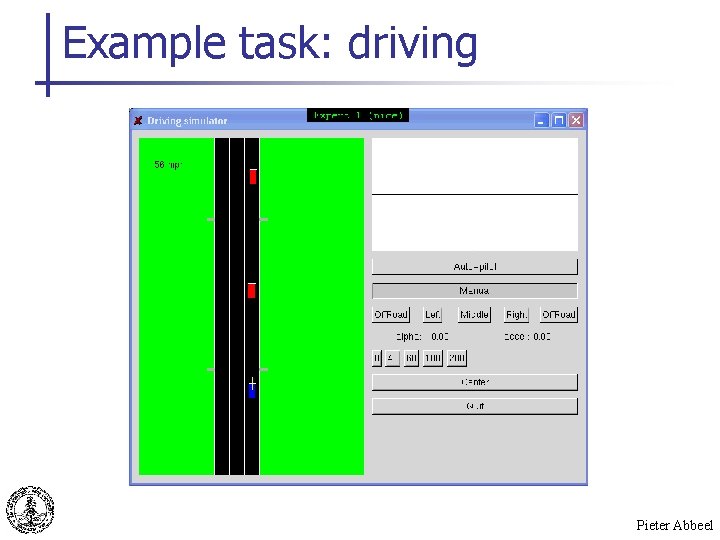

Example task: driving

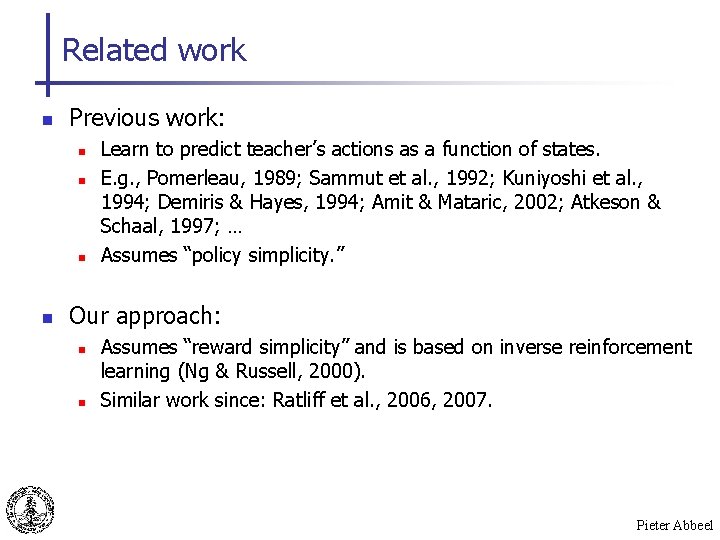

Related work
- Previous work:
  - Learn to predict teacher’s actions as a function of states.
  - E.g., Pomerleau, 1989; Sammut et al., 1992; Kuniyoshi et al., 1994; Demiris & Hayes, 1994; Amit & Mataric, 2002; Atkeson & Schaal, 1997; …
  - Assumes “policy simplicity.”
- Our approach:
  - Assumes “reward simplicity” and is based on inverse reinforcement learning (Ng & Russell, 2000).
  - Similar work since: Ratliff et al., 2006, 2007.

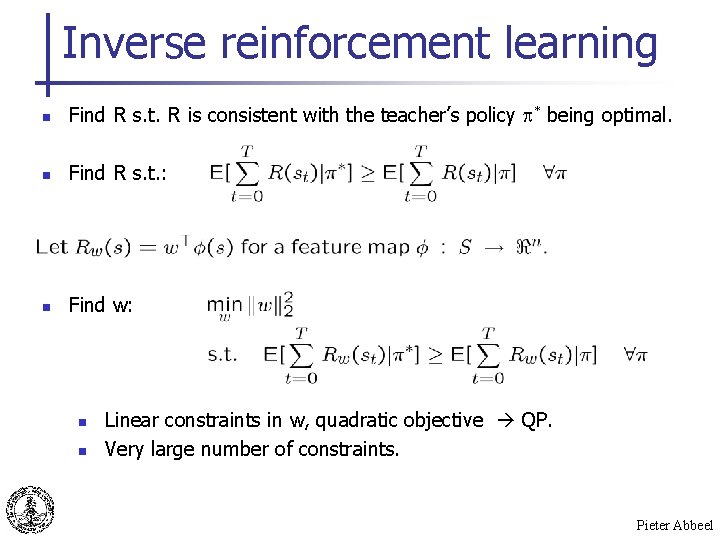

Inverse reinforcement learning
- Find $R$ s.t. $R$ is consistent with the teacher’s policy $\pi^*$ being optimal.
- Find $R$ s.t.: …
- Find $w$: …
- Linear constraints in $w$, quadratic objective ⇒ QP.
- Very large number of constraints.
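The equations on this slide did not survive in the transcript. As a hedged sketch, the conditions can be written as follows, assuming the finite-horizon setting from the RL slide, a reward that is linear in a feature vector, $R_w(s) = w^\top \phi(s)$, and feature expectations $\mu(\pi)$. The symbols $\phi$ and $\mu$ and the margin of 1 are assumptions of this sketch (one common max-margin form), not necessarily the exact formulation shown in the talk.

```latex
\begin{align*}
% R is consistent with the teacher's policy being optimal:
&\text{Find } R \text{ s.t.:} &
  E\Big[\sum_{t=0}^{T} R(s_t) \,\Big|\, \pi^*\Big]
  &\ge E\Big[\sum_{t=0}^{T} R(s_t) \,\Big|\, \pi\Big]
  \quad \forall \pi \\
% With R_w(s) = w^T phi(s) and mu(pi) = E[ sum_t phi(s_t) | pi ]:
&\text{Find } w \text{ s.t.:} &
  w^\top \mu(\pi^*) &\ge w^\top \mu(\pi) \quad \forall \pi \\
% Quadratic objective, linear constraints in w (one constraint per policy):
&\text{QP:} &
  \min_{w} \ \|w\|_2^2 \quad
  &\text{s.t.} \quad w^\top \mu(\pi^*) \ge w^\top \mu(\pi) + 1 \quad \forall \pi
\end{align*}
```

Because there is one constraint per policy $\pi$, the number of constraints is very large, which is what motivates generating them incrementally.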

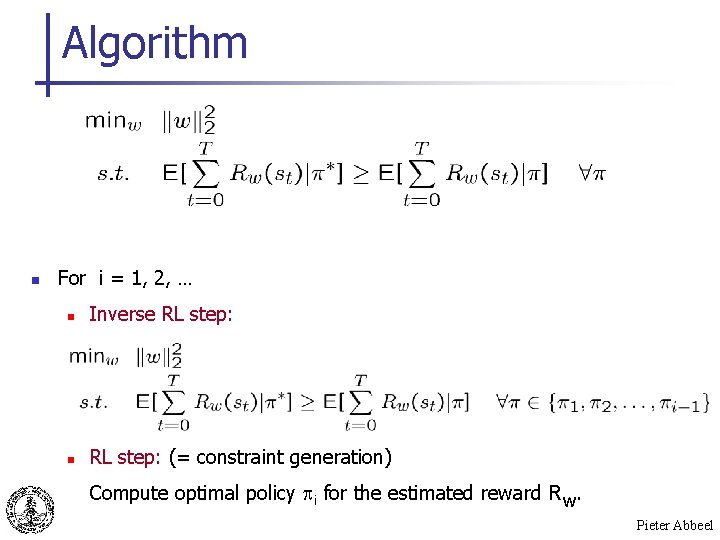

Algorithm
- For $i = 1, 2, \ldots$
  - Inverse RL step: …
  - RL step (= constraint generation): Compute optimal policy $\pi_i$ for the estimated reward $R_w$.
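The alternation between an inverse RL step and an RL step can be sketched in code. The example below is an illustration under assumptions, not the exact procedure from the talk: instead of solving a QP it uses the simpler projection update described in the ICML 2004 paper for the inverse RL step, and it runs on a hypothetical 5-state chain with one-hot features. All names (`solve_rl`, `feature_expectations`, `apprenticeship`) are made up for this sketch.

```python
import numpy as np

def solve_rl(P, R, T):
    """RL step: finite-horizon dynamic programming; returns policy[t, s]."""
    A, S, _ = P.shape
    V = R.copy()
    policy = np.zeros((T, S), dtype=int)
    for t in range(T - 1, -1, -1):
        Q = R[None, :] + P @ V
        policy[t] = Q.argmax(axis=0)
        V = Q.max(axis=0)
    return policy

def feature_expectations(P, policy, phi, s0):
    """mu(pi) = sum_t E[phi(s_t) | pi], by propagating the state distribution."""
    S = P.shape[1]
    d = np.zeros(S)
    d[s0] = 1.0
    mu = d @ phi
    for t in range(policy.shape[0]):
        P_pi = P[policy[t], np.arange(S)]      # row s is P(. | s, pi_t(s))
        d = d @ P_pi
        mu = mu + d @ phi
    return mu

def apprenticeship(P, phi, mu_E, T, s0, eps=1e-6, max_iter=50):
    """Alternate inverse RL (projection variant) and RL steps.
    Returns the last policy found; the paper's analysis uses a mixture of policies."""
    S = P.shape[1]
    policy = np.ones((T, S), dtype=int)        # arbitrary initial policy: always "stay"
    mu_bar = feature_expectations(P, policy, phi, s0)
    for _ in range(max_iter):
        w = mu_E - mu_bar                      # inverse RL step: current reward weights
        if np.linalg.norm(w) <= eps:
            break
        policy = solve_rl(P, phi @ w, T)       # RL step for R_w(s) = w . phi(s)
        step = feature_expectations(P, policy, phi, s0) - mu_bar
        denom = step @ step
        if denom == 0.0:
            break
        mu_bar = mu_bar + (step @ (mu_E - mu_bar)) / denom * step
    return policy

# Hypothetical chain MDP: actions 0 = left, 1 = stay, 2 = right.
S, T, s0 = 5, 4, 0
P = np.zeros((3, S, S))
for s in range(S):
    P[0, s, max(s - 1, 0)] = 1.0
    P[1, s, s] = 1.0
    P[2, s, min(s + 1, S - 1)] = 1.0
phi = np.eye(S)                                # one-hot state features

R_teacher = -np.abs(np.arange(S) - 3.0)        # teacher's reward, hidden from the learner
teacher = solve_rl(P, R_teacher, T)
mu_E = feature_expectations(P, teacher, phi, s0)

learned = apprenticeship(P, phi, mu_E, T, s0)
mu_learned = feature_expectations(P, learned, phi, s0)
```

The learner only ever sees `mu_E`, the teacher's feature expectations, never `R_teacher`. In this toy problem the learned policy reproduces those feature expectations, which is the property the performance guarantee relies on.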

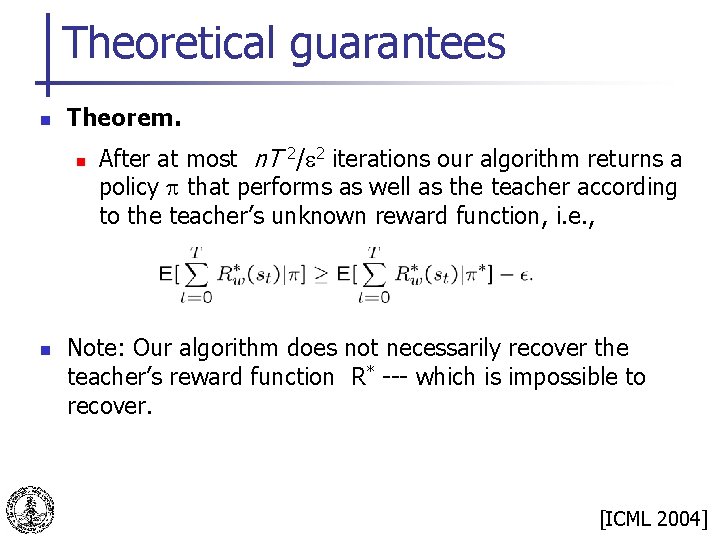

Theoretical guarantees
- Theorem. After at most $nT^2/\epsilon^2$ iterations our algorithm returns a policy $\pi$ that performs as well as the teacher according to the teacher’s unknown reward function, i.e., …
- Note: Our algorithm does not necessarily recover the teacher’s reward function $R^*$, which is impossible to recover.

[ICML 2004]

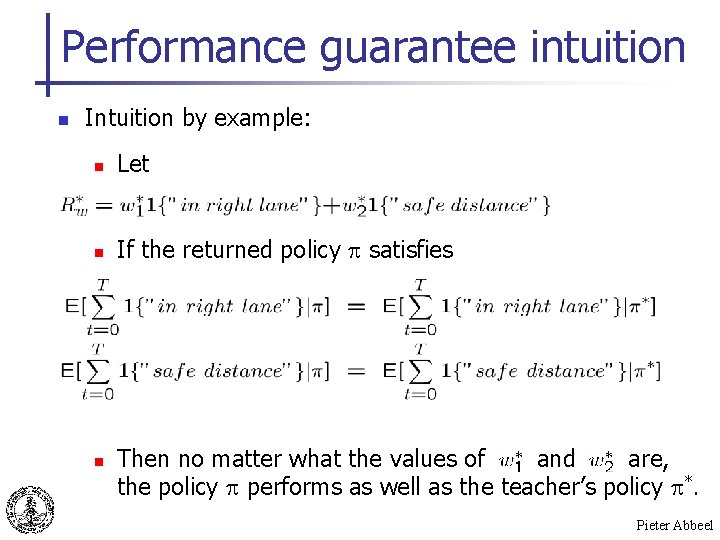

Performance guarantee intuition
- Intuition by example:
  - Let …
  - If the returned policy $\pi$ satisfies …
  - Then no matter what the values of the two reward weights are, the policy $\pi$ performs as well as the teacher’s policy $\pi^*$.
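The equations for this example are missing from the transcript. A plausible reconstruction of the argument, assuming a reward with two features $\phi_1, \phi_2$ and unknown weights $w_1, w_2$ (these symbols are assumptions of this sketch), is:

```latex
\begin{align*}
&\text{Let } R^*(s) = w_1 \phi_1(s) + w_2 \phi_2(s). \\
&\text{If }\ E\Big[\sum_{t=0}^{T} \phi_i(s_t) \,\Big|\, \pi\Big]
   = E\Big[\sum_{t=0}^{T} \phi_i(s_t) \,\Big|\, \pi^*\Big]
   \quad \text{for } i = 1, 2, \text{ then} \\
&E\Big[\sum_{t=0}^{T} R^*(s_t) \,\Big|\, \pi\Big]
   = w_1\, E\Big[\sum_{t=0}^{T} \phi_1(s_t) \,\Big|\, \pi\Big]
   + w_2\, E\Big[\sum_{t=0}^{T} \phi_2(s_t) \,\Big|\, \pi\Big] \\
&\qquad = w_1\, E\Big[\sum_{t=0}^{T} \phi_1(s_t) \,\Big|\, \pi^*\Big]
   + w_2\, E\Big[\sum_{t=0}^{T} \phi_2(s_t) \,\Big|\, \pi^*\Big]
   = E\Big[\sum_{t=0}^{T} R^*(s_t) \,\Big|\, \pi^*\Big].
\end{align*}
```

The first equality is linearity of expectation, so matching the feature expectations is enough to match the expected sum of rewards without ever knowing $w_1$ and $w_2$.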

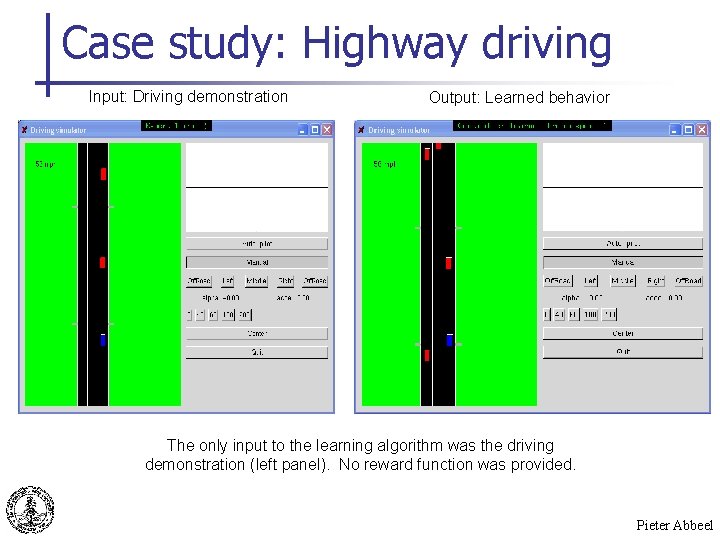

Case study: Highway driving
- Input: Driving demonstration
- Output: Learned behavior
- The only input to the learning algorithm was the driving demonstration (left panel). No reward function was provided.

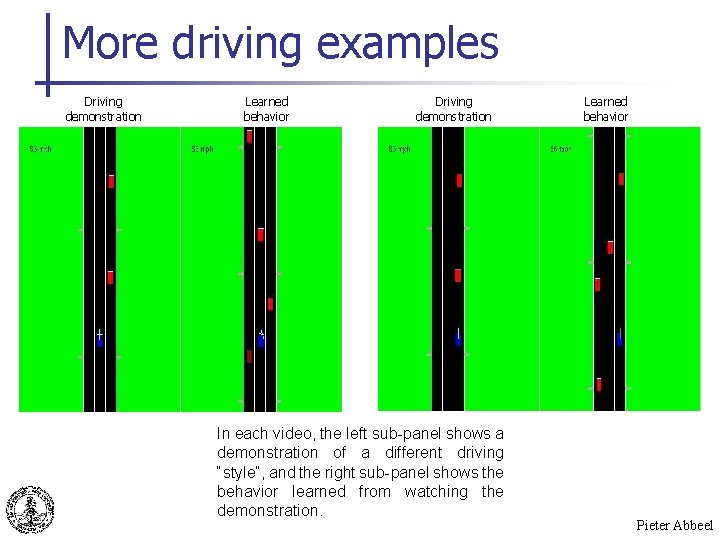

More driving examples
- In each video, the left sub-panel (driving demonstration) shows a demonstration of a different driving “style”, and the right sub-panel (learned behavior) shows the behavior learned from watching the demonstration.

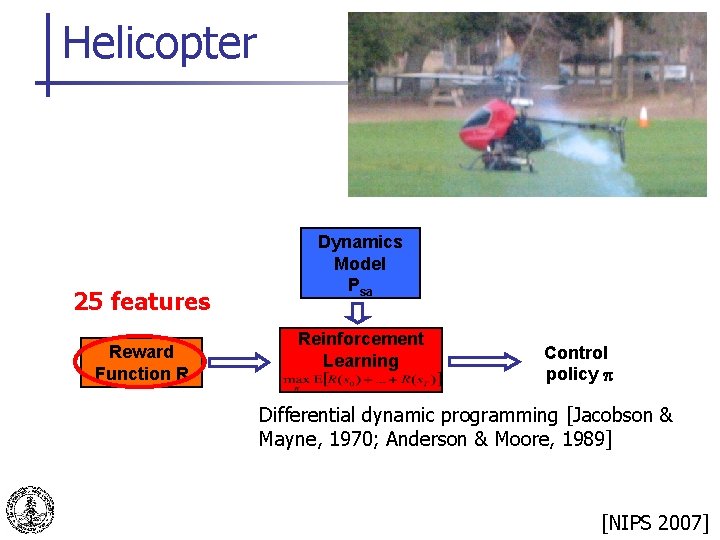

Helicopter
- Reward Function $R$: 25 features.
- Dynamics Model $P_{sa}$ + Reward Function $R$ → Reinforcement Learning → Control policy $\pi$.
- Reinforcement learning: differential dynamic programming [Jacobson & Mayne, 1970; Anderson & Moore, 1989].

[NIPS 2007]

![Autonomous aerobatics](http://slidetodoc.com/presentation_image_h2/e4a7195a9719bb5215ce01364ab3482b/image-30.jpg)

Autonomous aerobatics
- [Show helicopter movie in Media Player.]

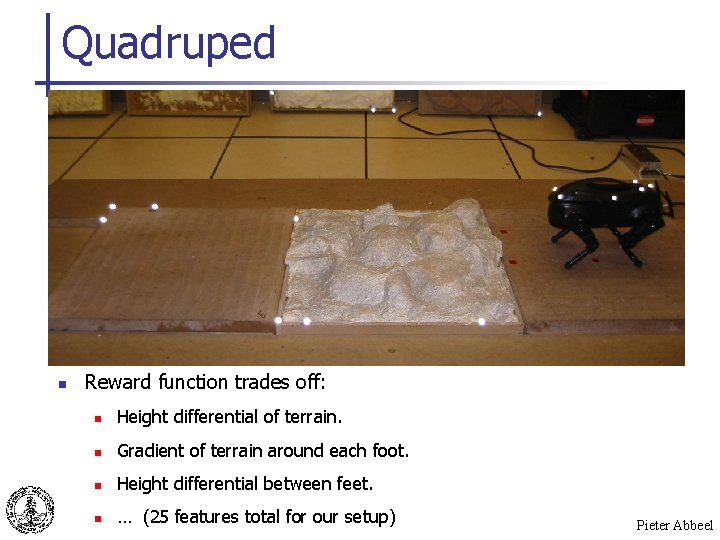

Quadruped

Quadruped
- Reward function trades off:
  - Height differential of terrain.
  - Gradient of terrain around each foot.
  - Height differential between feet.
  - … (25 features total for our setup)
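A reward that trades off terrain features can be pictured as a weighted sum of feature values per candidate foot placement. The sketch below is purely illustrative: the three feature definitions, the height-map grid, and the weights are hypothetical stand-ins (in the talk the weights are learned from demonstrations and there are 25 features), and `footstep_features` is a made-up helper.

```python
import numpy as np

def footstep_features(height_map, foot, other_feet):
    """Hypothetical 3-feature vector for one candidate foot placement.
    `foot` must be at least one cell away from the border of the map."""
    r, c = foot
    patch = height_map[r - 1:r + 2, c - 1:c + 2]        # 3x3 neighbourhood of the foot
    d_row, d_col = np.gradient(patch)                   # finite-difference slopes
    other_heights = np.array([height_map[i, j] for i, j in other_feet])
    return np.array([
        patch.max() - patch.min(),                      # height differential of terrain
        np.hypot(d_row[1, 1], d_col[1, 1]),             # terrain gradient at the foot
        np.abs(height_map[r, c] - other_heights).max(), # height differential between feet
    ])

def reward(w, phi):
    """Linear reward: weighted sum of the features."""
    return float(w @ phi)

# Flat terrain with a single one-cell bump (made-up data).
terrain = np.zeros((10, 10))
terrain[5, 5] = 1.0
others = [(2, 7), (7, 2), (7, 7)]          # positions of the other three feet
w = np.array([-1.0, -1.0, -1.0])           # made-up weights that penalize every feature

candidates = [(5, 5), (2, 2)]
best = max(candidates, key=lambda f: reward(w, footstep_features(terrain, f, others)))
```

With these made-up weights the placement on the flat cell is preferred over the one on top of the bump, since the bump raises both the terrain and the between-feet height differentials.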

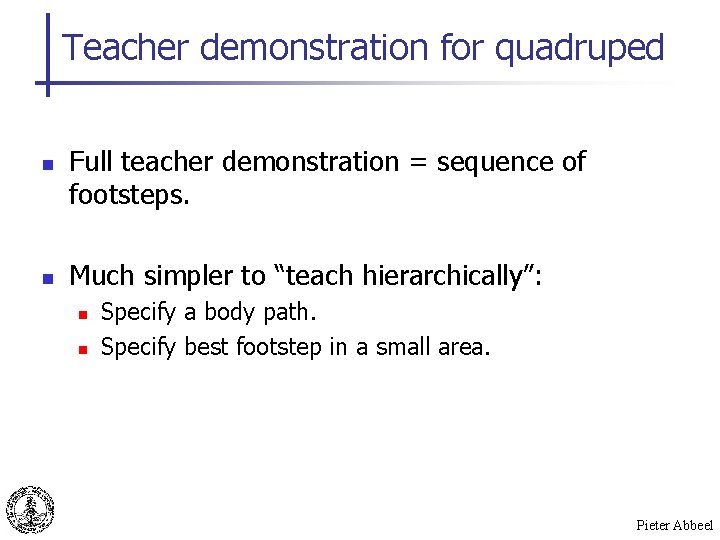

Teacher demonstration for quadruped
- Full teacher demonstration = sequence of footsteps.
- Much simpler to “teach hierarchically”:
  - Specify a body path.
  - Specify best footstep in a small area.

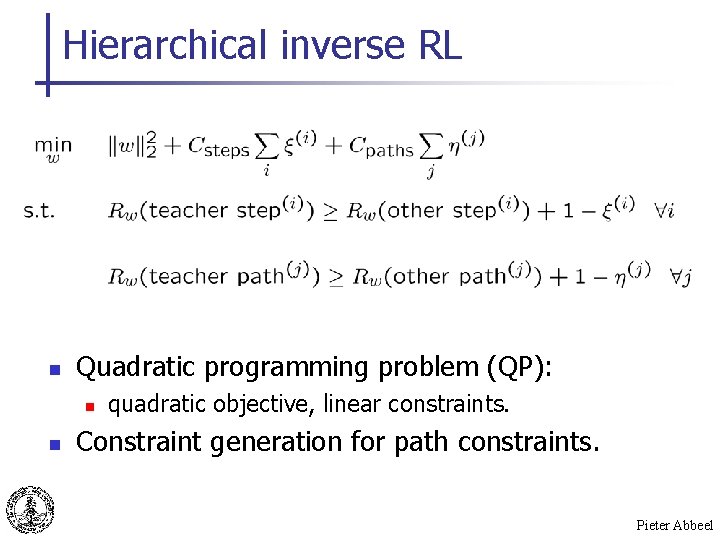

Hierarchical inverse RL
- Quadratic programming problem (QP):
  - quadratic objective, linear constraints.
  - Constraint generation for path constraints.

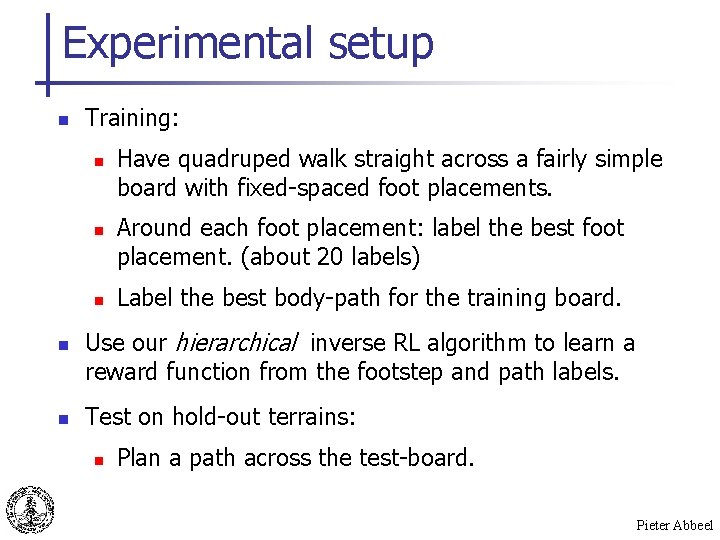

Experimental setup
- Training:
  - Have quadruped walk straight across a fairly simple board with fixed-spaced foot placements.
  - Around each foot placement: label the best foot placement (about 20 labels).
  - Label the best body-path for the training board.
- Use our hierarchical inverse RL algorithm to learn a reward function from the footstep and path labels.
- Test on hold-out terrains:
  - Plan a path across the test-board.

![Quadruped on test-board](http://slidetodoc.com/presentation_image_h2/e4a7195a9719bb5215ce01364ab3482b/image-36.jpg)

Quadruped on test-board
- [Show movie in Media Player.]

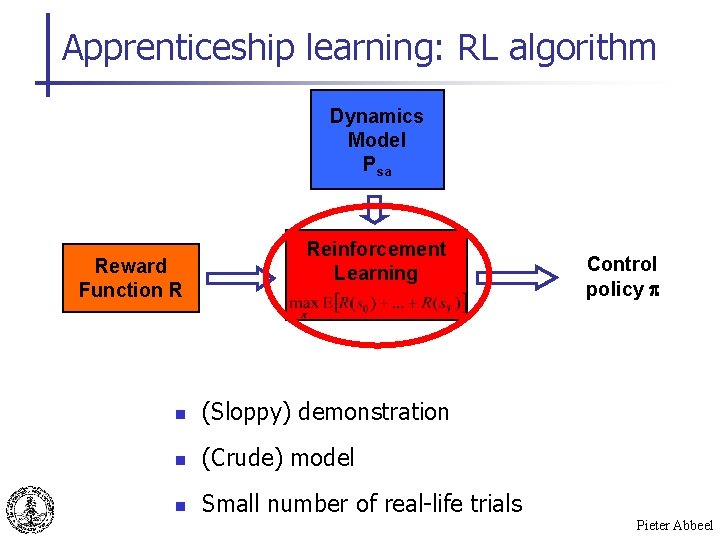

Apprenticeship learning: RL algorithm
- Diagram: Dynamics Model $P_{sa}$ + Reward Function $R$ → Reinforcement Learning → Control policy $\pi$.
- Inputs to reinforcement learning:
  - (Sloppy) demonstration
  - (Crude) model
  - Small number of real-life trials

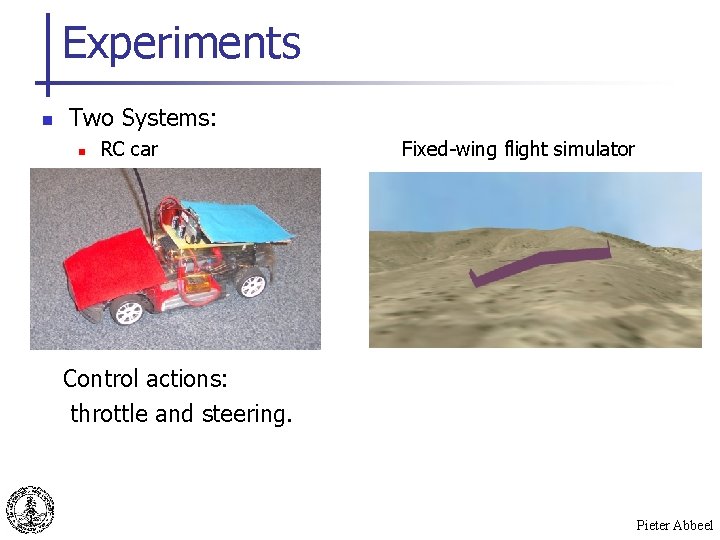

Experiments
- Two systems:
  - RC car. Control actions: throttle and steering.
  - Fixed-wing flight simulator.

RC Car: Circle

RC Car: Figure-8 Maneuver

Conclusion
- Apprenticeship learning algorithms help us find better controllers by exploiting teacher demonstrations.
- Our current work exploits teacher demonstrations to find
  - a good dynamics model,
  - a good reward function,
  - a good control policy.

Acknowledgments
- Adam Coates, Morgan Quigley, Andrew Y. Ng
- J. Zico Kolter, Andrew Y. Ng
- Andrew Y. Ng
- Morgan Quigley, Andrew Y. Ng
