An Analysis of Facebook Photo Caching Qi Huang, Ken Birman, Robbert van Renesse (Cornell), Wyatt Lloyd (Princeton, Facebook), Sanjeev Kumar, Harry C. Li (Facebook)

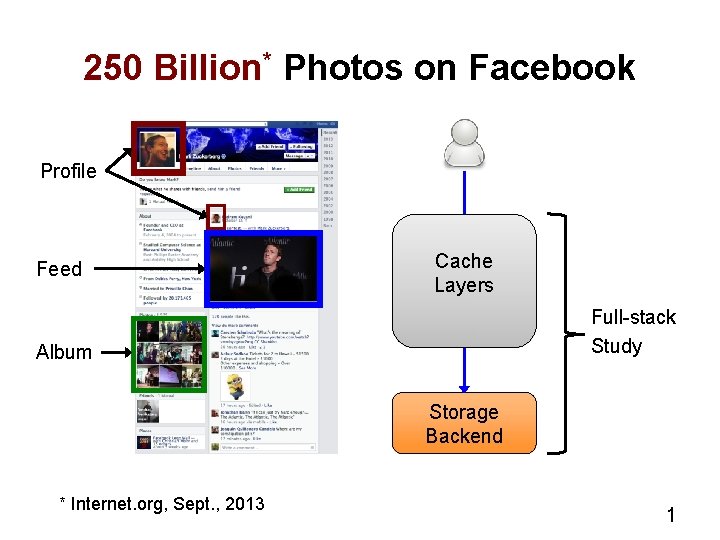

250 Billion* Photos on Facebook Profile Feed Cache Layers Full-stack Study Album Storage Backend * Internet.org, Sept. 2013 1

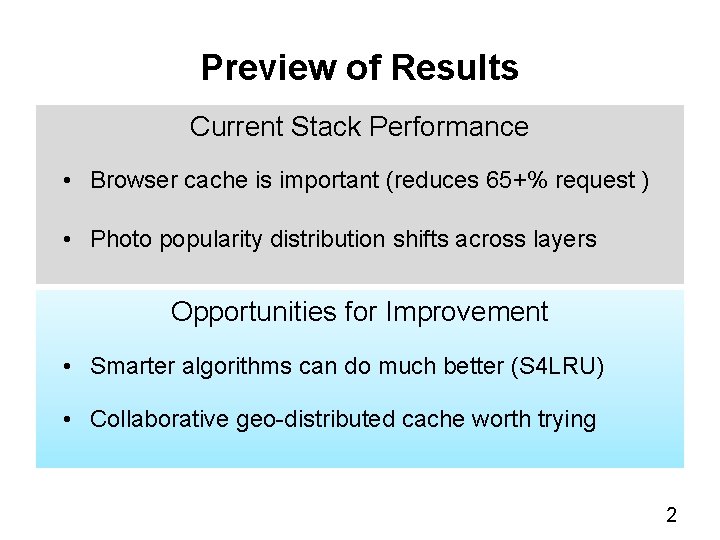

Preview of Results Current Stack Performance • Browser cache is important (reduces 65+% of requests) • Photo popularity distribution shifts across layers Opportunities for Improvement • Smarter algorithms can do much better (S4LRU) • Collaborative geo-distributed cache worth trying 2

Facebook Photo-Serving Stack Client 3

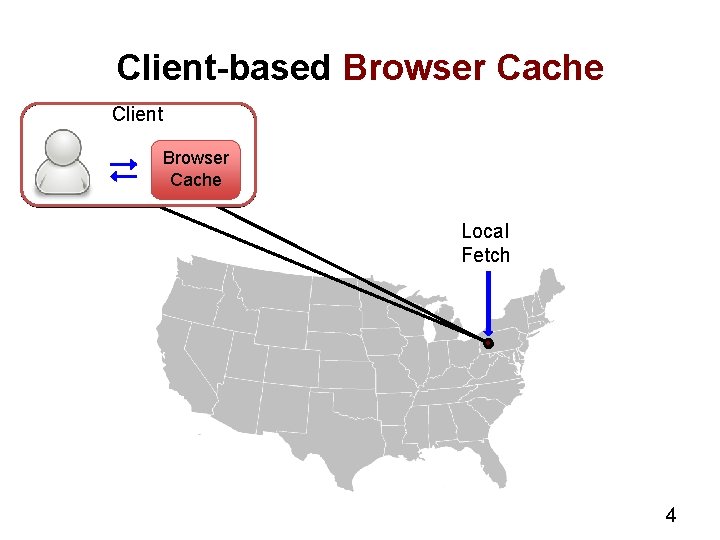

Client-based Browser Cache Client Browser Cache Local Fetch 4

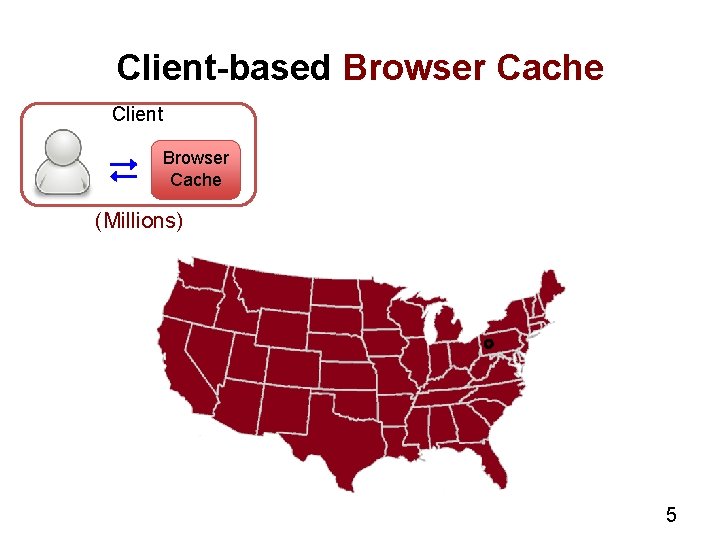

Client-based Browser Cache Client Browser Cache (Millions) 5

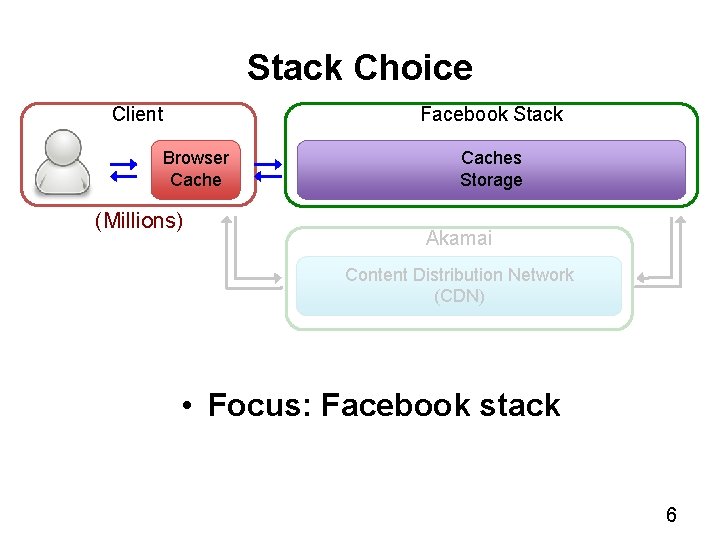

Stack Choice Client Facebook Stack Browser Cache (Millions) Caches Storage Akamai Content Distribution Network (CDN) • Focus: Facebook stack 6

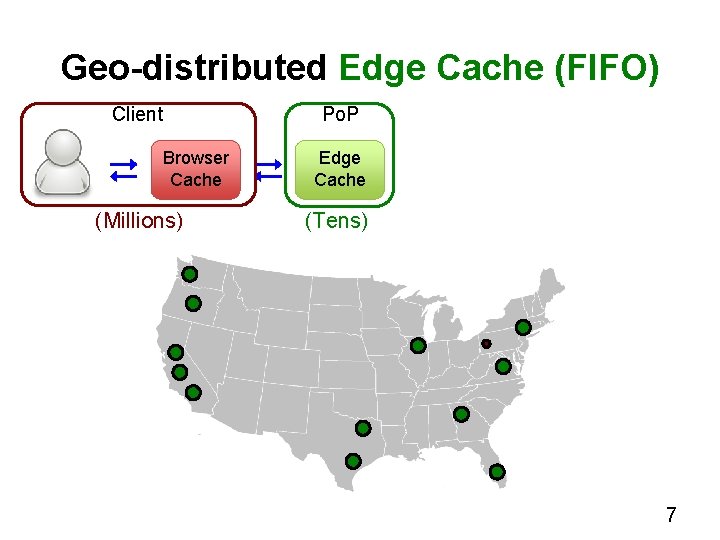

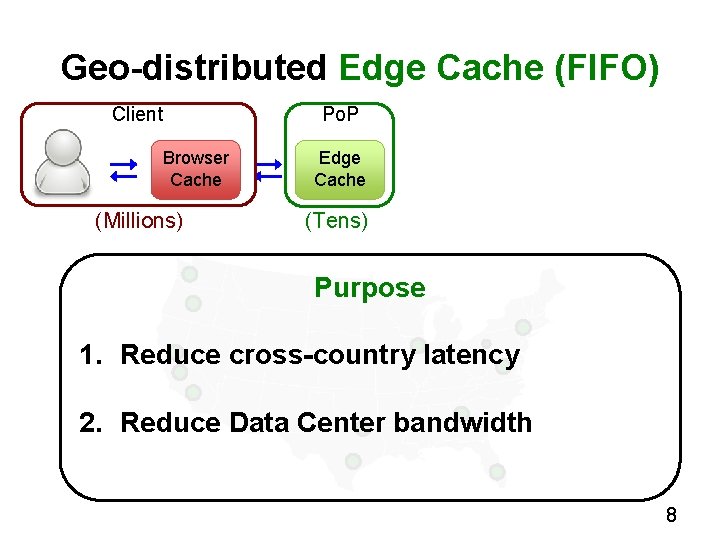

Geo-distributed Edge Cache (FIFO) Client Browser Cache (Millions) PoP Edge Cache (Tens) 7

Geo-distributed Edge Cache (FIFO) Client Browser Cache (Millions) PoP Edge Cache (Tens) Purpose 1. Reduce cross-country latency 2. Reduce Data Center bandwidth 8

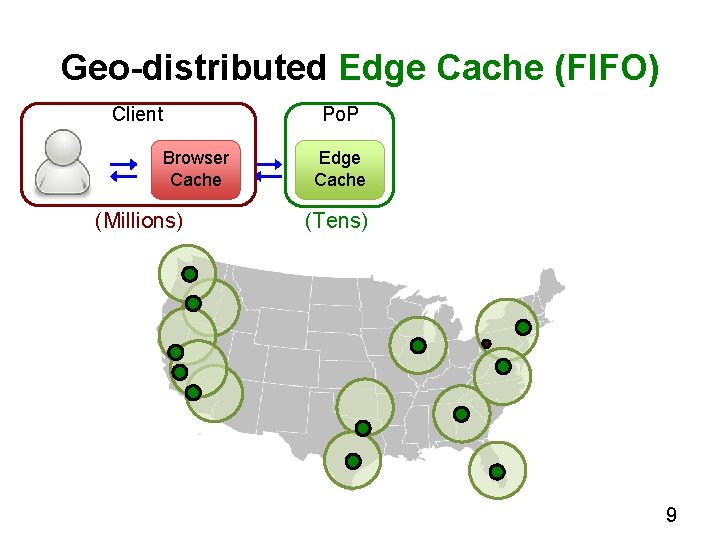

Geo-distributed Edge Cache (FIFO) Client Browser Cache (Millions) PoP Edge Cache (Tens) 9

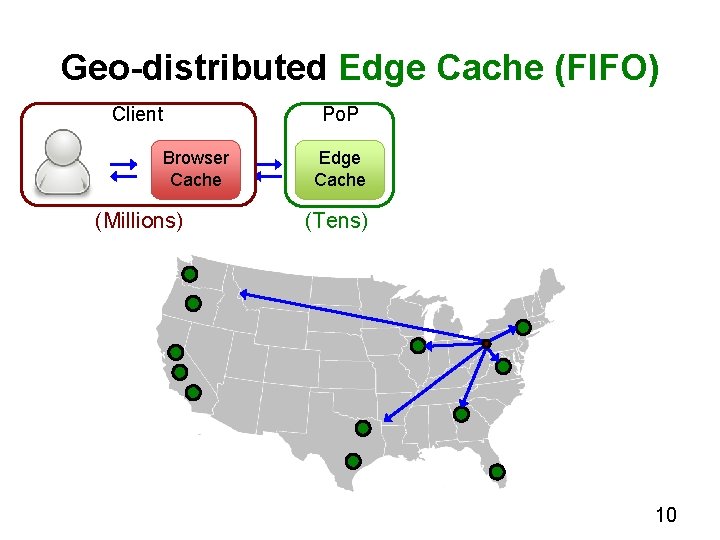

Geo-distributed Edge Cache (FIFO) Client Browser Cache (Millions) PoP Edge Cache (Tens) 10

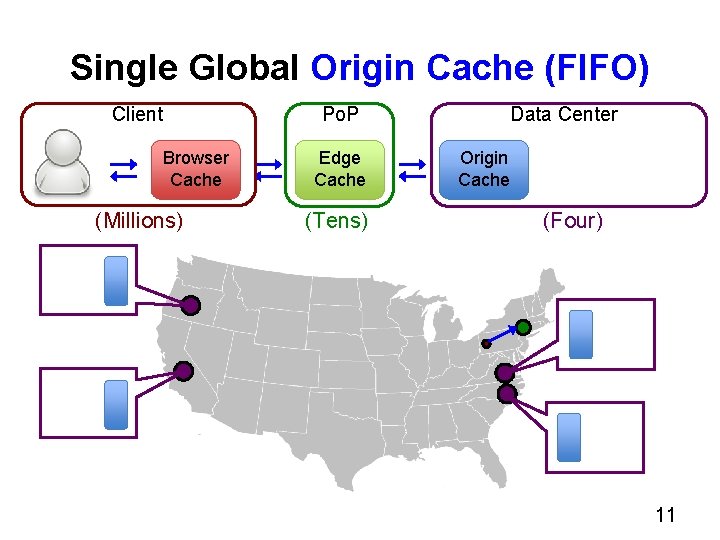

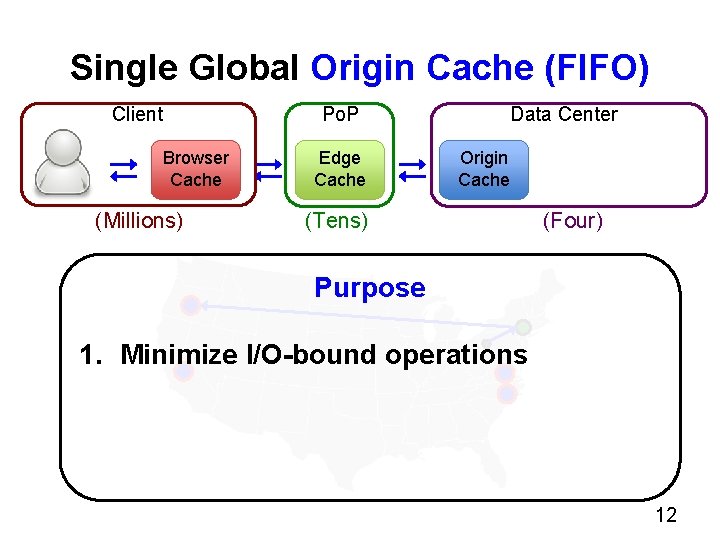

Single Global Origin Cache (FIFO) Client Browser Cache (Millions) Data Center PoP Edge Cache (Tens) Origin Cache (Four) 11

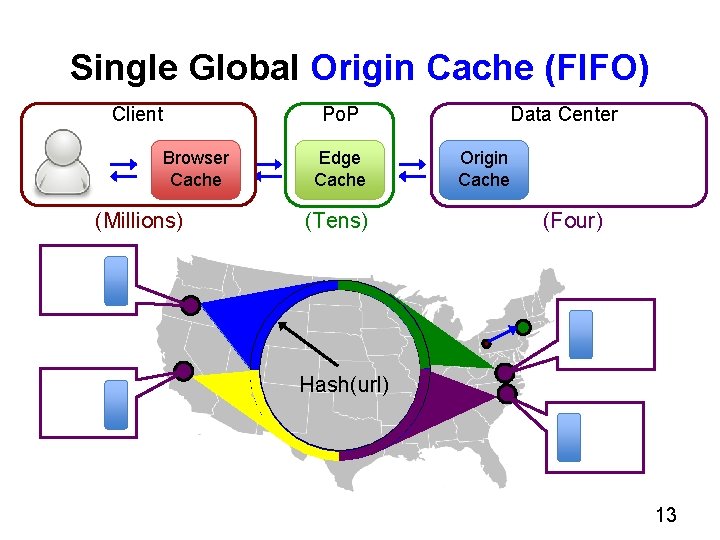

Single Global Origin Cache (FIFO) Client Browser Cache (Millions) Data Center PoP Edge Cache (Tens) Origin Cache (Four) Purpose 1. Minimize I/O-bound operations 12

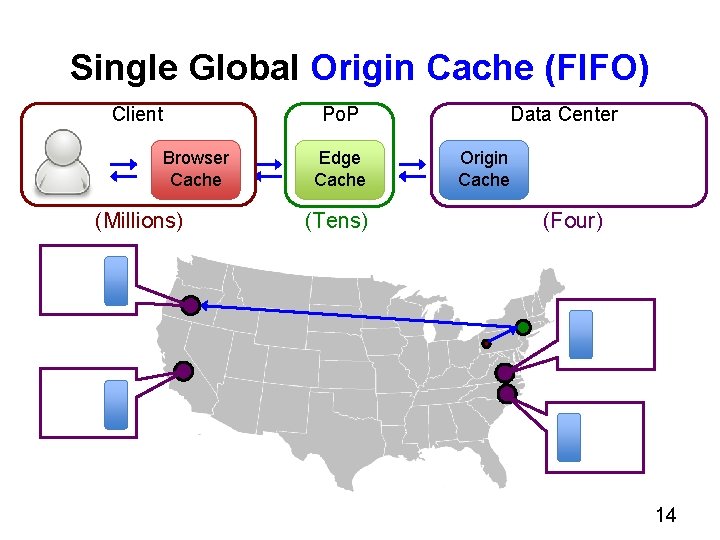

Single Global Origin Cache (FIFO) Client Browser Cache (Millions) Data Center PoP Edge Cache (Tens) Origin Cache (Four) Hash(url) 13
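
The Hash(url) label suggests that an Edge cache chooses which Origin cache server to ask by hashing the photo URL, so that the Origin layer behaves as one logical cache. A minimal sketch of that idea follows. The server names, the choice of SHA-256, and the plain modulo placement are all assumptions made for illustration; the slides do not say which hash function or placement scheme is used.

```python
import hashlib

# Hypothetical Origin cache servers; the names are illustrative only.
ORIGIN_SERVERS = ["origin-a", "origin-b", "origin-c", "origin-d"]


def pick_origin(url: str, servers=ORIGIN_SERVERS) -> str:
    """Map a photo URL to one Origin server by hashing the URL.

    The same URL always maps to the same server, so each photo has a
    single home in the Origin layer regardless of which Edge asks.
    """
    digest = hashlib.sha256(url.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(servers)
    return servers[index]
```

Because the mapping depends only on the URL, a request for a given photo reaches the same Origin server from any Edge, which avoids storing duplicate copies across Origin servers.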

Single Global Origin Cache (FIFO) Client Browser Cache (Millions) Data Center PoP Edge Cache (Tens) Origin Cache (Four) 14

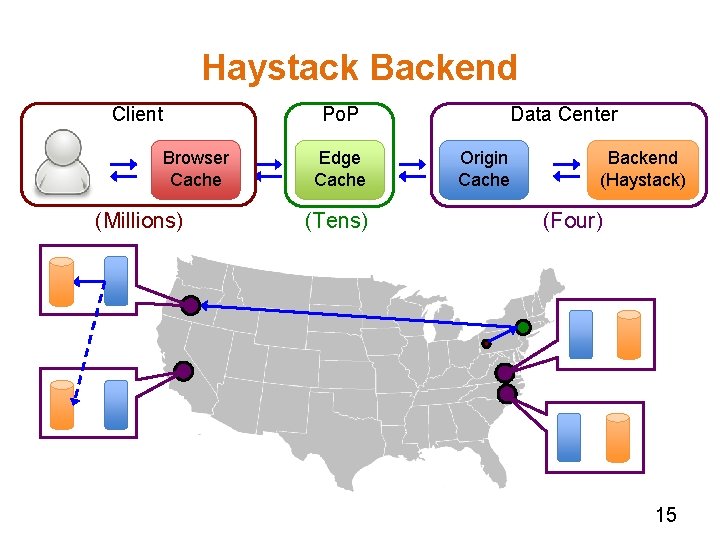

Haystack Backend Client Browser Cache (Millions) Data Center PoP Edge Cache (Tens) Origin Cache (Four) Backend (Haystack) 15

How did we collect the trace? 16

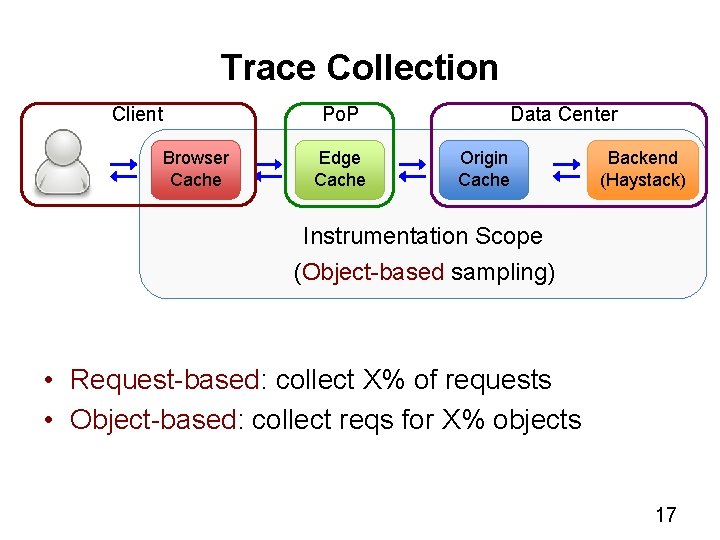

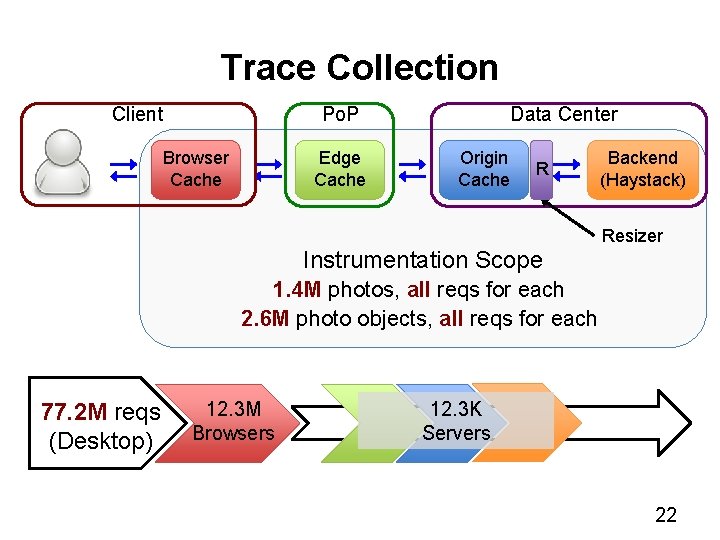

Trace Collection Client Browser Cache Data Center PoP Edge Cache Origin Cache Backend (Haystack) Instrumentation Scope (Object-based sampling) • Request-based: collect X% of requests • Object-based: collect all reqs for X% of objects 17

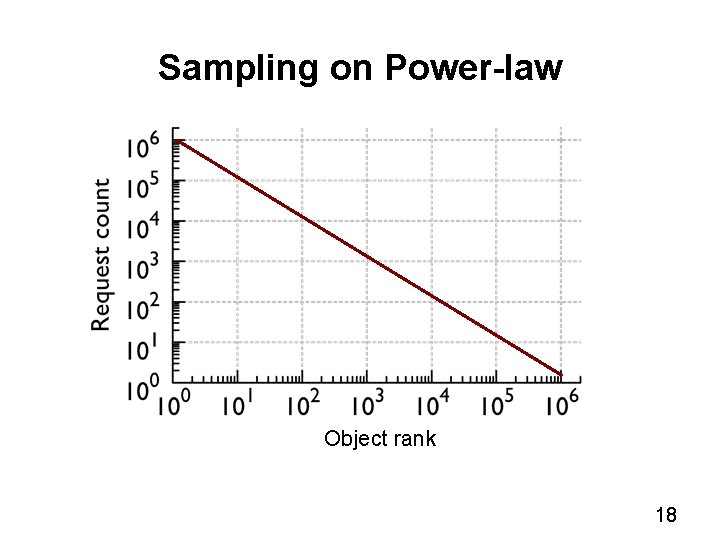

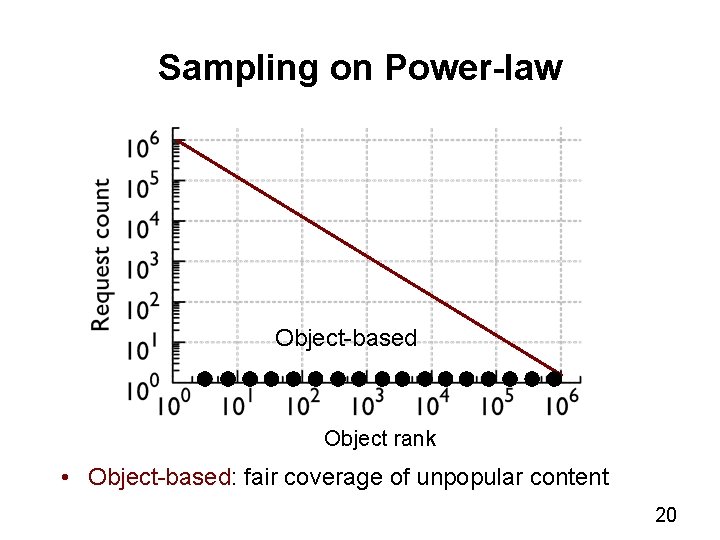

Sampling on Power-law Object rank 18

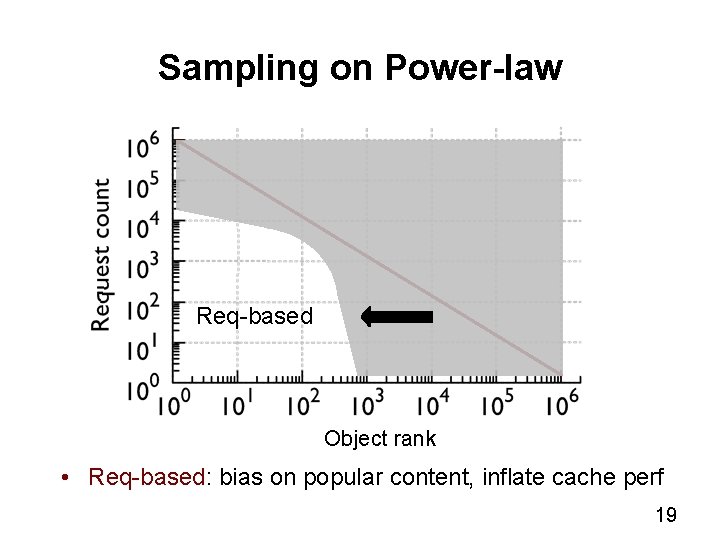

Sampling on Power-law Req-based Object rank • Req-based: biased toward popular content, inflates cache perf 19

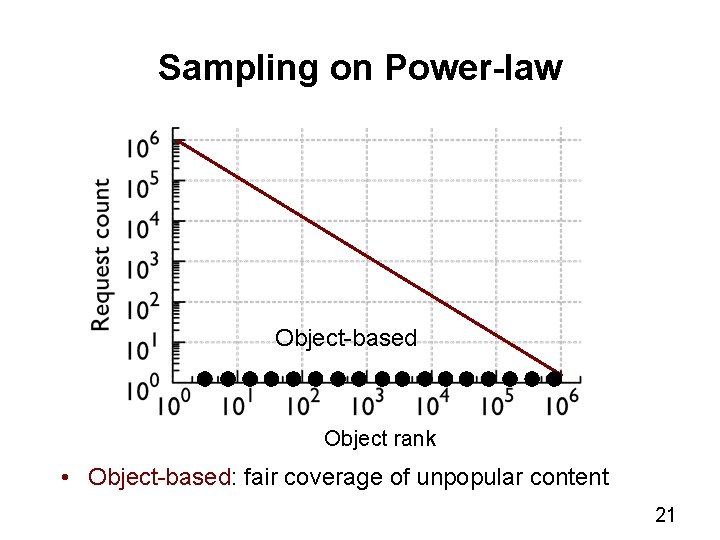

Sampling on Power-law Object-based Object rank • Object-based: fair coverage of unpopular content 20

Sampling on Power-law Object-based Object rank • Object-based: fair coverage of unpopular content 21
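
The difference between the two sampling schemes can be sketched in a few lines. This is an illustration under stated assumptions, not the instrumentation used for the trace: the function names are hypothetical, and hashing the object id with MD5 is just one convenient way to make a deterministic per-object decision.

```python
import hashlib
import random


def object_sampled(object_id: str, percent: float) -> bool:
    """Deterministically select about `percent`% of objects by hashing the id."""
    digest = hashlib.md5(object_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 10000
    return bucket < percent * 100


def object_based_sample(requests, percent):
    """Keep every request for each sampled object, however unpopular it is."""
    return [r for r in requests if object_sampled(r, percent)]


def request_based_sample(requests, percent, seed=0):
    """Keep each request independently with probability `percent`%.

    Popular objects are almost always represented, while rarely requested
    objects tend to vanish or lose part of their request history.
    """
    rng = random.Random(seed)
    return [r for r in requests if rng.random() * 100 < percent]
```

With object-based sampling, a selected object keeps its full request sequence, so replaying the sample through a cache simulator sees the same reuse pattern for that object as the real caches did.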

Trace Collection Client Data Center PoP Browser Cache Edge Cache Origin Cache R Instrumentation Scope Backend (Haystack) Resizer • 1.4M photos, all reqs for each • 2.6M photo objects, all reqs for each • 77.2M reqs (Desktop) • 12.3M Browsers • 12.3K Servers 22

Analysis • Traffic sheltering effects of caches • Photo popularity distribution • Size, algorithm, collaborative Edge • In paper – Stack performance as a function of photo age – Stack performance as a function of social connectivity – Geographical traffic flow 23

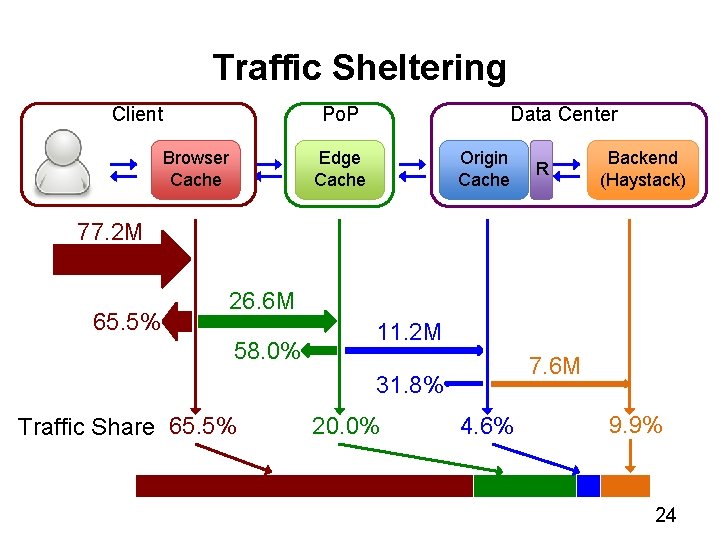

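Traffic Sheltering Client Browser Cache PoP Edge Cache Data Center Origin Cache R Backend (Haystack) • Requests reaching each layer: 77.2M (Browser) → 26.6M (Edge) → 11.2M (Origin) → 7.6M (Backend) • Hit ratio: 65.5% (Browser), 58.0% (Edge), 31.8% (Origin) • Traffic Share: 65.5% (Browser), 20.0% (Edge), 4.6% (Origin), 9.9% (Backend) 24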
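
The traffic-share figures follow from the per-layer hit ratios: each layer only sees the requests that missed in the layers above it. The sketch below reproduces that arithmetic, reading the slide's 65.5%, 58.0%, and 31.8% as the hit ratios of the Browser, Edge, and Origin layers respectively (an interpretation of the slide layout, labeled as such in the code). The function name is hypothetical.

```python
# Assumed reading of the slide: per-layer hit ratios, top of the stack first.
HIT_RATIOS = [("Browser", 0.655), ("Edge", 0.580), ("Origin", 0.318)]


def traffic_shares(total_requests, hit_ratios=HIT_RATIOS):
    """Return each layer's share of all client requests.

    A layer's share is (requests reaching it) x (its hit ratio), divided by
    the total. Whatever misses every cache is served by the Backend.
    """
    remaining = total_requests
    shares = {}
    for layer, ratio in hit_ratios:
        hits = remaining * ratio
        shares[layer] = hits / total_requests
        remaining -= hits
    shares["Backend"] = remaining / total_requests
    return shares


shares = traffic_shares(77.2e6)
```

Under this reading the computed shares agree with the slide's Traffic Share row to within rounding, and the four shares sum to 100%.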

Photo popularity and its cache impact 25

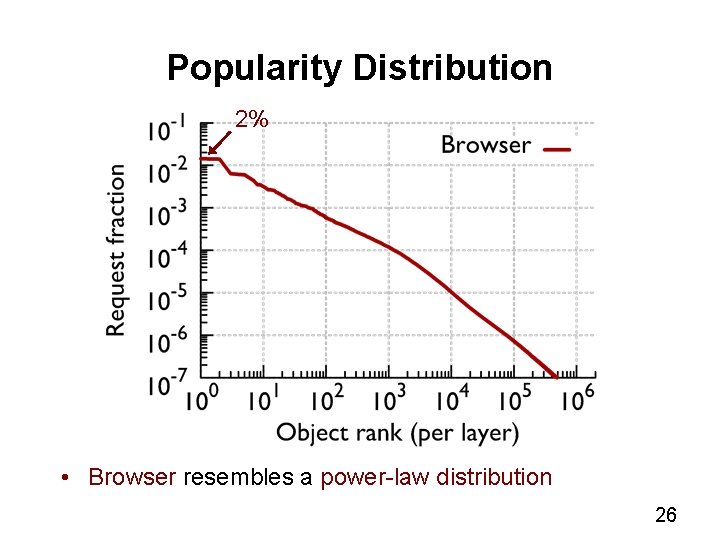

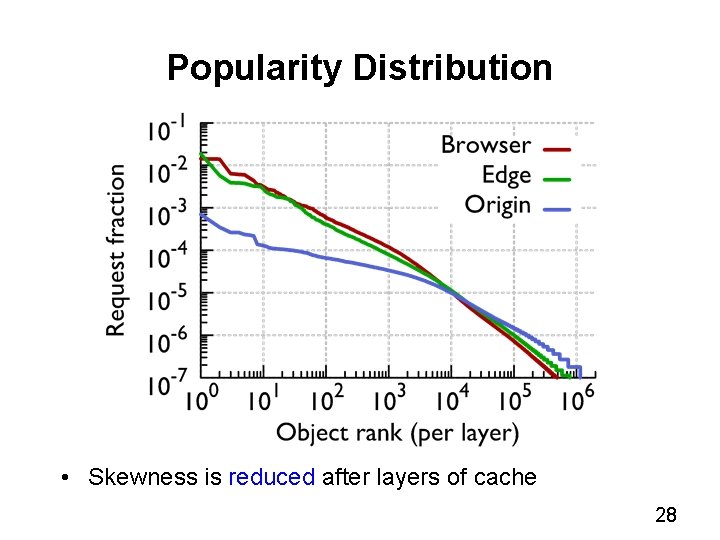

Popularity Distribution 2% • Browser resembles a power-law distribution 26

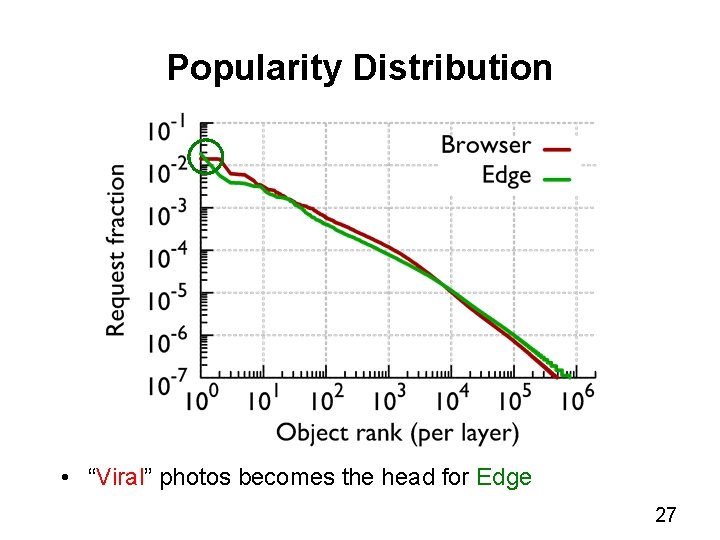

Popularity Distribution • “Viral” photos become the head for Edge 27

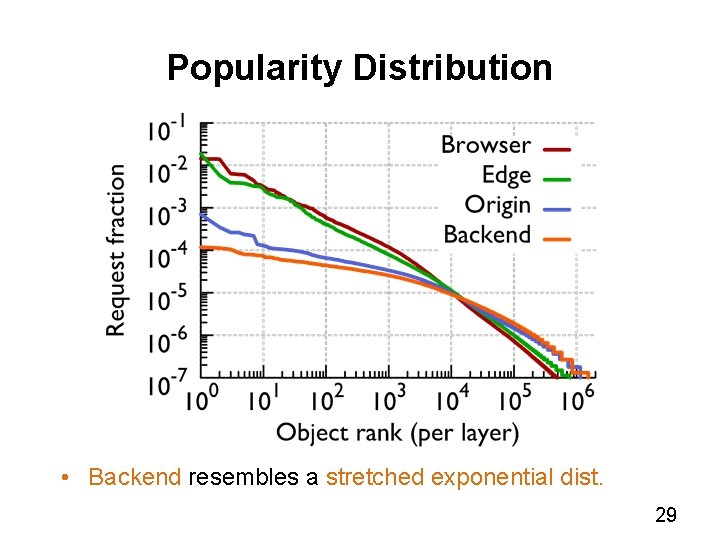

Popularity Distribution • Skewness is reduced after layers of cache 28

Popularity Distribution • Backend resembles a stretched exponential dist. 29

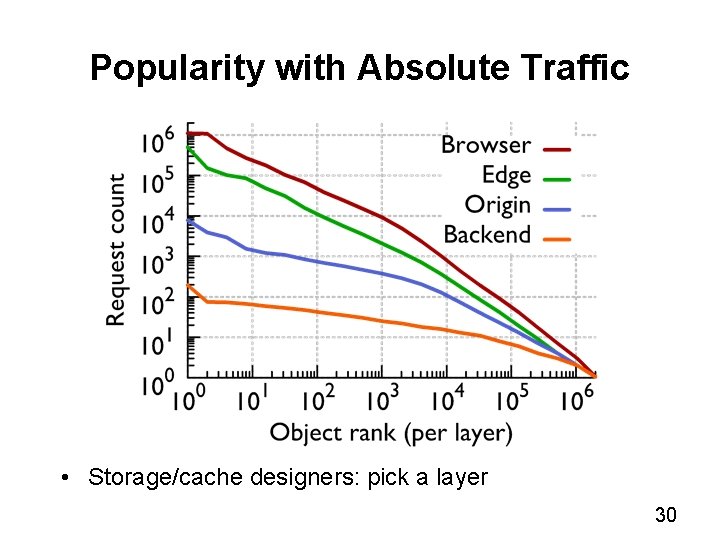

Popularity with Absolute Traffic • Storage/cache designers: pick a layer 30

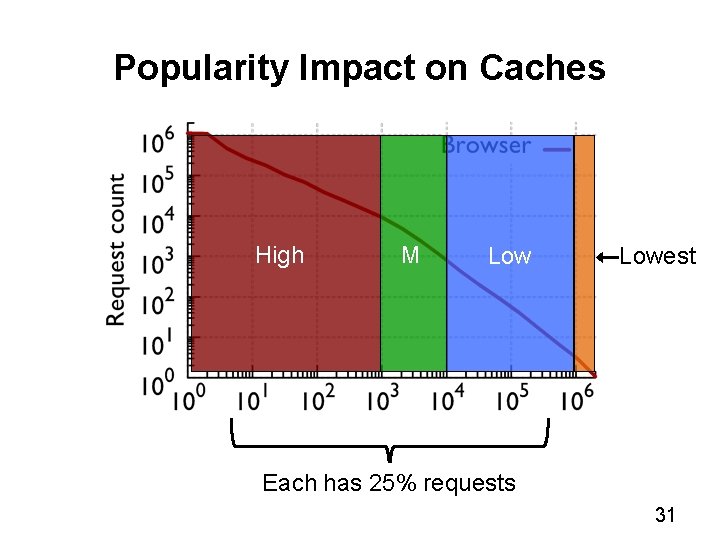

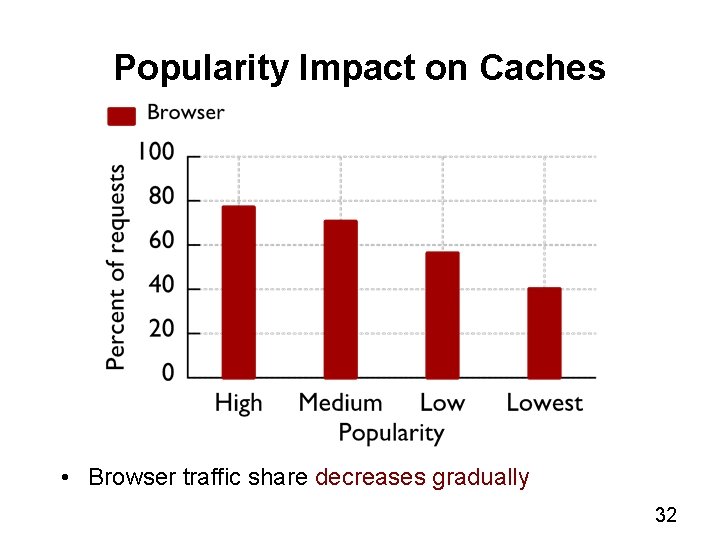

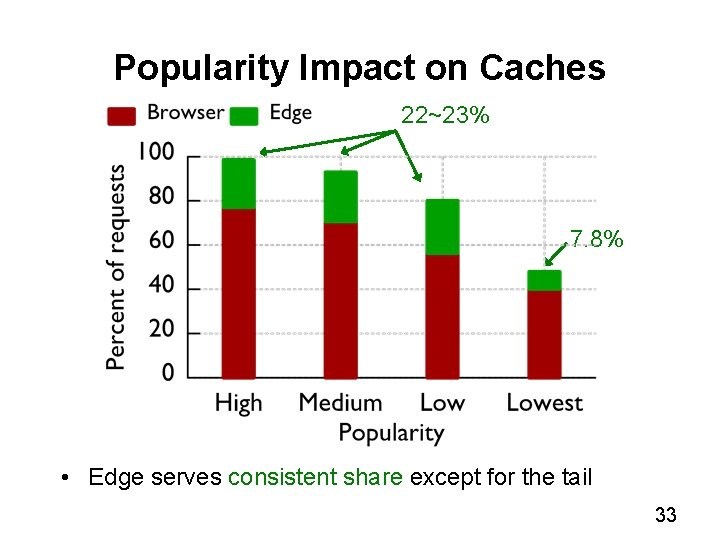

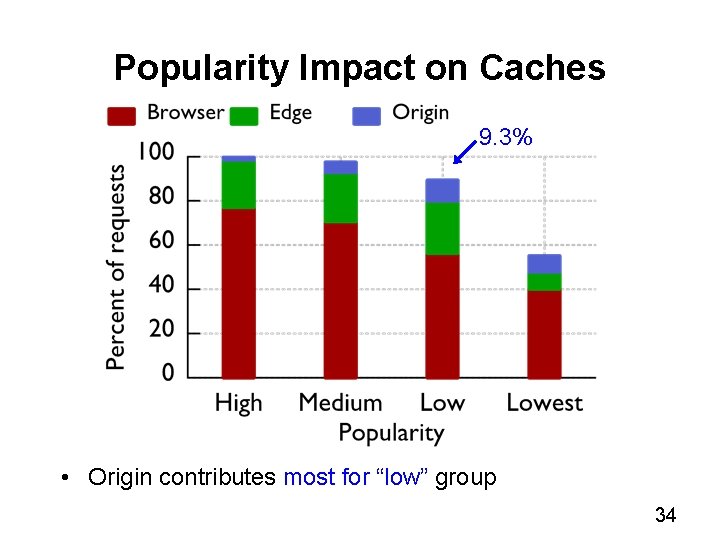

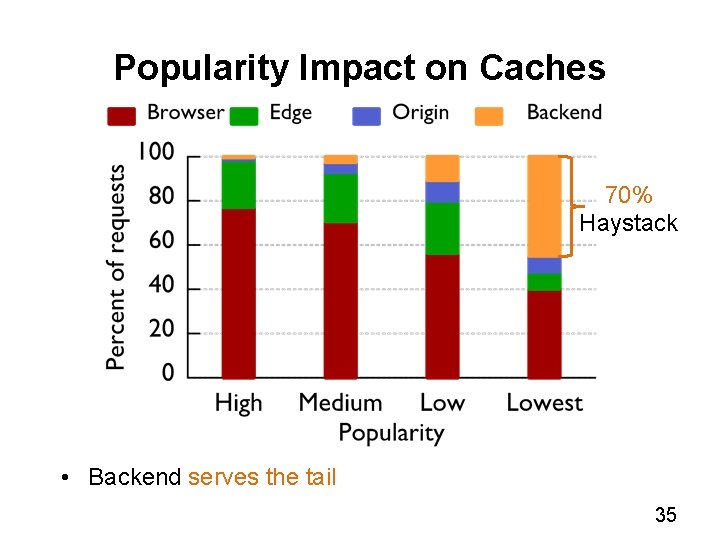

Popularity Impact on Caches • Popularity groups: High, Medium, Low, Lowest • Each group has 25% of requests 31

Popularity Impact on Caches • Browser traffic share decreases gradually 32

Popularity Impact on Caches 22~23% 7.8% • Edge serves consistent share except for the tail 33

Popularity Impact on Caches 9.3% • Origin contributes most for “low” group 34

Popularity Impact on Caches 70% Haystack • Backend serves the tail 35

Can we make the cache better? 36

Simulation • Replay the trace (25% warm up) • Estimate the base cache size • Evaluate two hit-ratios (object-wise, byte-wise) 37
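
A trace-replay simulation of this kind can be sketched as follows. This is a minimal illustration, not the simulator used in the study: the `FIFOCache` class and `replay` function are hypothetical names, the trace is assumed to be a list of `(key, size_in_bytes)` pairs, and the first 25% of requests warm the cache without being counted.

```python
from collections import OrderedDict


class FIFOCache:
    """Byte-capacity FIFO cache: evicts the oldest inserted object first."""

    def __init__(self, capacity_bytes):
        self.capacity = capacity_bytes
        self.used = 0
        self.items = OrderedDict()  # key -> size, oldest insertion first

    def access(self, key, size):
        """Return True on a hit; on a miss, insert the object."""
        if key in self.items:
            return True  # FIFO does not reorder on a hit
        if size <= self.capacity:
            while self.used + size > self.capacity:
                _, old_size = self.items.popitem(last=False)
                self.used -= old_size
            self.items[key] = size
            self.used += size
        return False


def replay(trace, cache, warmup_fraction=0.25):
    """Replay (key, size) requests; return (object hit ratio, byte hit ratio)."""
    warmup = int(len(trace) * warmup_fraction)
    hits = reqs = hit_bytes = req_bytes = 0
    for i, (key, size) in enumerate(trace):
        hit = cache.access(key, size)
        if i < warmup:
            continue  # warm-up requests fill the cache but are not scored
        reqs += 1
        req_bytes += size
        if hit:
            hits += 1
            hit_bytes += size
    object_ratio = hits / reqs if reqs else 0.0
    byte_ratio = hit_bytes / req_bytes if req_bytes else 0.0
    return object_ratio, byte_ratio
```

The object-wise ratio reflects how many requests are served from the cache, while the byte-wise ratio reflects how much bandwidth is saved; the two differ whenever object sizes vary.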

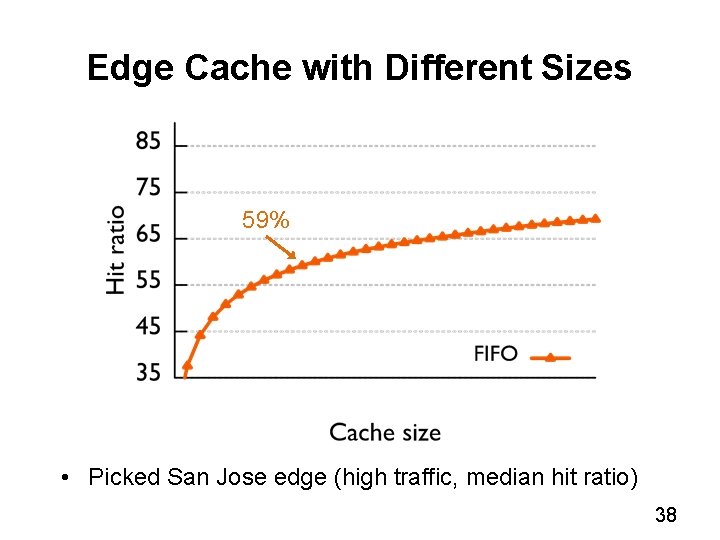

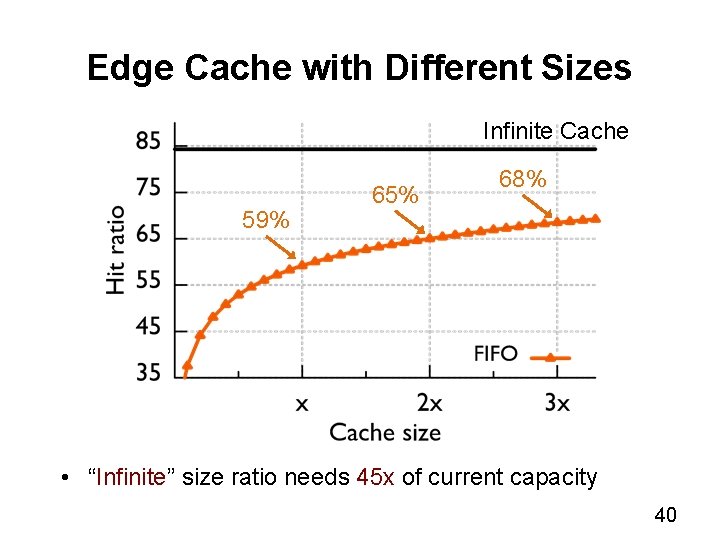

Edge Cache with Different Sizes 59% • Picked San Jose edge (high traffic, median hit ratio) 38

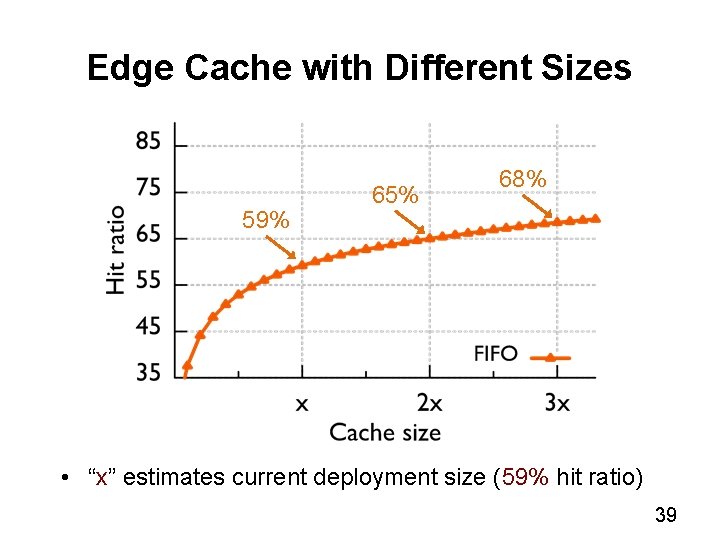

Edge Cache with Different Sizes 59% 65% 68% • “x” estimates current deployment size (59% hit ratio) 39

Edge Cache with Different Sizes Infinite Cache 59% 65% 68% • “Infinite” size ratio needs 45x the current capacity 40

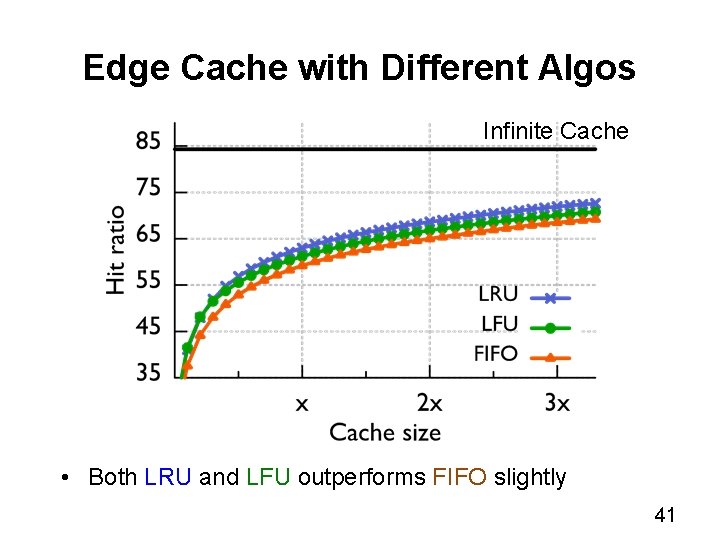

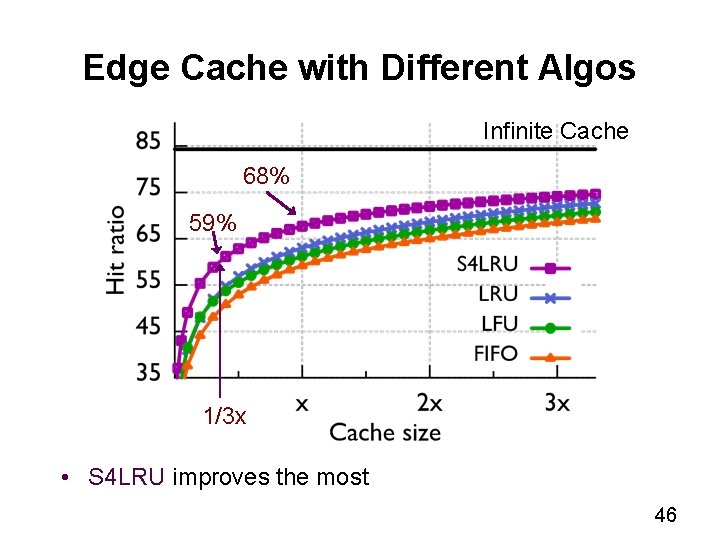

Edge Cache with Different Algos Infinite Cache • Both LRU and LFU outperform FIFO slightly 41

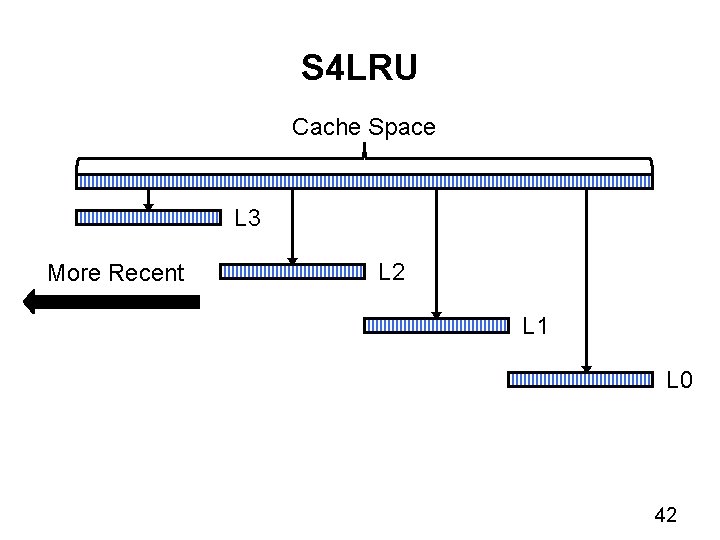

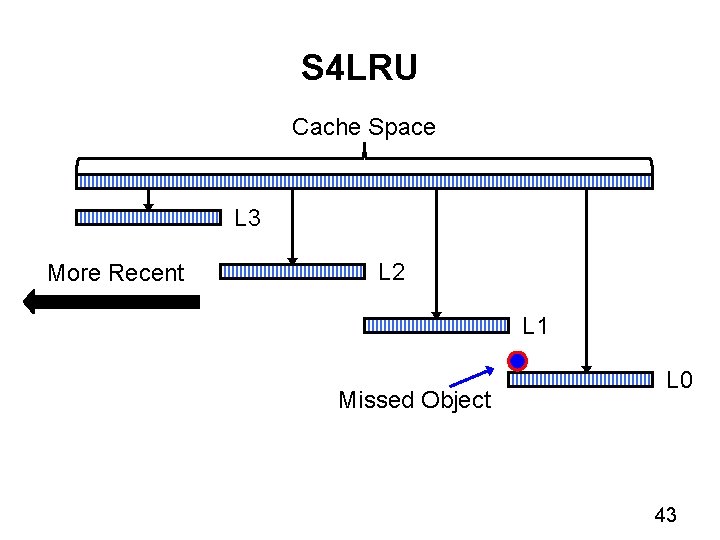

S4LRU Cache Space L3 More Recent L2 L1 L0 42

S4LRU Cache Space L3 More Recent L2 L1 Missed Object L0 43

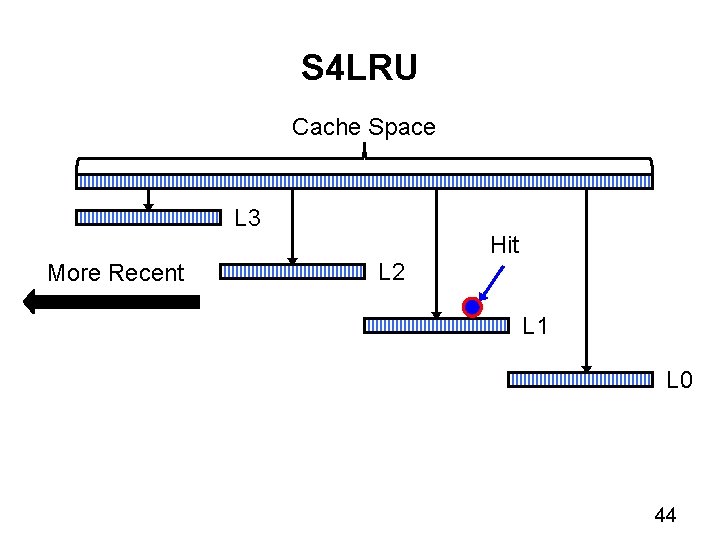

S4LRU Cache Space L3 More Recent L2 Hit L1 L0 44

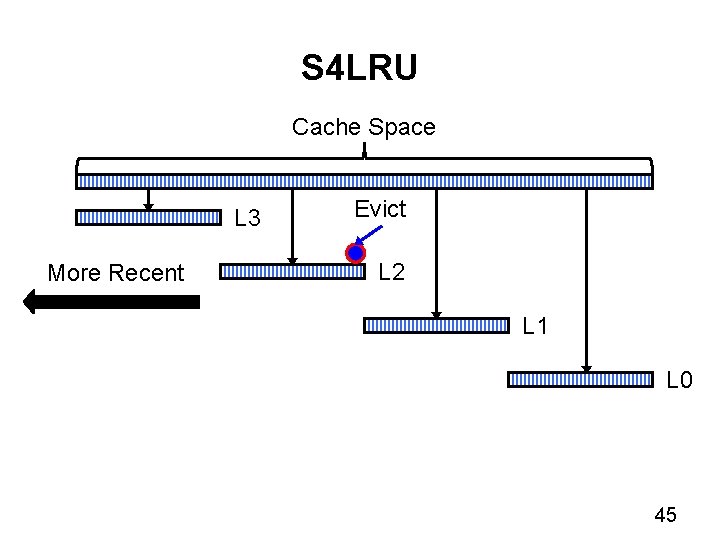

S4LRU Cache Space L3 More Recent Evict L2 L1 L0 45
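
The four build slides suggest the following behavior: a missed object enters L0, a hit promotes an object one level up, and an object evicted from a level drops into the level below, leaving the cache only when it falls out of L0. The sketch below implements that reading. It makes several simplifying assumptions that the slides do not state: capacity is counted in objects rather than bytes, the space is split equally across the four levels, and a hit in L3 moves the object to the most-recent end of L3. The class name and attributes are hypothetical.

```python
from collections import OrderedDict


class S4LRU:
    """Segmented LRU with four levels (L0..L3), capacity counted in objects."""

    def __init__(self, capacity, levels=4):
        if capacity < levels:
            raise ValueError("capacity must allow at least one object per level")
        self.levels = levels
        self.per_level = capacity // levels  # assumed equal split
        # One queue per level; the last entry is the most recently used.
        self.queues = [OrderedDict() for _ in range(levels)]
        self.level_of = {}  # key -> level currently holding it

    def _insert(self, key, level):
        self.queues[level][key] = True
        self.level_of[key] = level
        # Push overflow down: the oldest object of a full level moves to the
        # most-recent end of the level below; L0 overflow leaves the cache.
        while level >= 0 and len(self.queues[level]) > self.per_level:
            victim, _ = self.queues[level].popitem(last=False)
            if level == 0:
                del self.level_of[victim]
            else:
                self.queues[level - 1][victim] = True
                self.level_of[victim] = level - 1
            level -= 1

    def access(self, key):
        """Return True on a hit, False on a miss (the object is then cached)."""
        level = self.level_of.get(key)
        if level is None:
            self._insert(key, 0)  # missed object enters L0
            return False
        del self.queues[level][key]
        self._insert(key, min(level + 1, self.levels - 1))  # promote one level
        return True
```

The effect is that an object requested only once never climbs above L0 and is evicted quickly, while objects with repeated hits climb toward L3 and must be demoted level by level before they leave the cache.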

Edge Cache with Different Algos Infinite Cache 68% 59% 1/3x • S4LRU improves the most 46

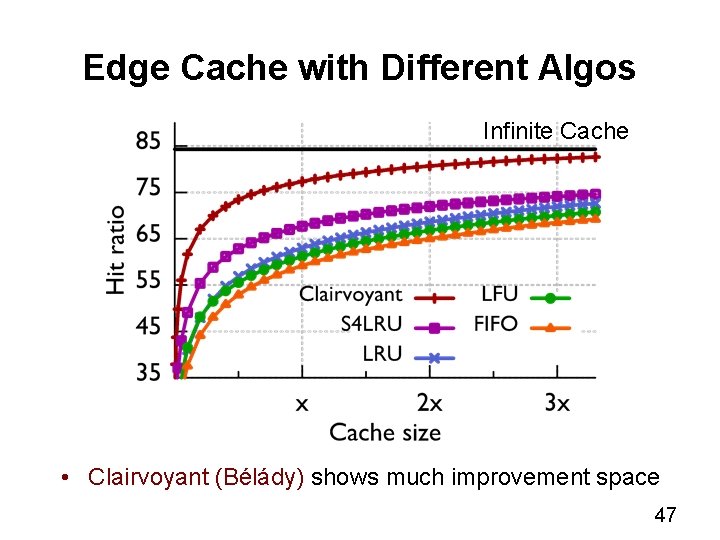

Edge Cache with Different Algos Infinite Cache • Clairvoyant (Bélády) shows much room for improvement 47
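
The clairvoyant policy serves as an upper bound because it uses knowledge of future requests: on a miss with a full cache, it evicts the object whose next request lies farthest in the future. A minimal sketch follows, assuming uniform object sizes (capacity counted in objects) and that every missed object is inserted; the function name is hypothetical, and the study's own simulator may handle sizes differently.

```python
def belady_hits(trace, capacity):
    """Count hits for Bélády's clairvoyant policy on a list of object keys."""
    if capacity < 1:
        raise ValueError("capacity must be at least 1")
    # next_use[i] is the index of the next request for trace[i], or infinity.
    next_use = [float("inf")] * len(trace)
    last_seen = {}
    for i in range(len(trace) - 1, -1, -1):
        next_use[i] = last_seen.get(trace[i], float("inf"))
        last_seen[trace[i]] = i

    cache = {}  # key -> index of that key's next request
    hits = 0
    for i, key in enumerate(trace):
        if key in cache:
            hits += 1
        elif len(cache) >= capacity:
            # Evict the cached object that is needed farthest in the future.
            victim = max(cache, key=cache.get)
            del cache[victim]
        cache[key] = next_use[i]
    return hits
```

No online algorithm can use this information, so the gap between the clairvoyant curve and a practical algorithm indicates how much headroom remains.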

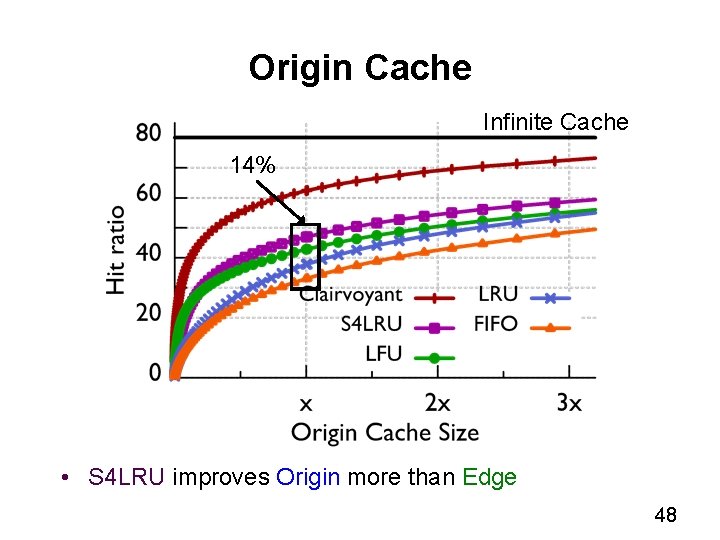

Origin Cache Infinite Cache 14% • S4LRU improves Origin more than Edge 48

Which Photo to Cache • Recency & frequency lead to S4LRU • Do age and social factors also play a role? 49

Collaborative cache on the Edge 50

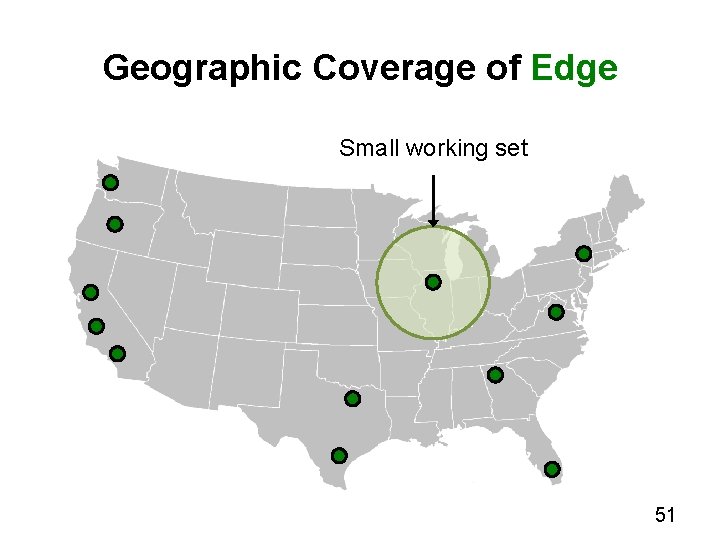

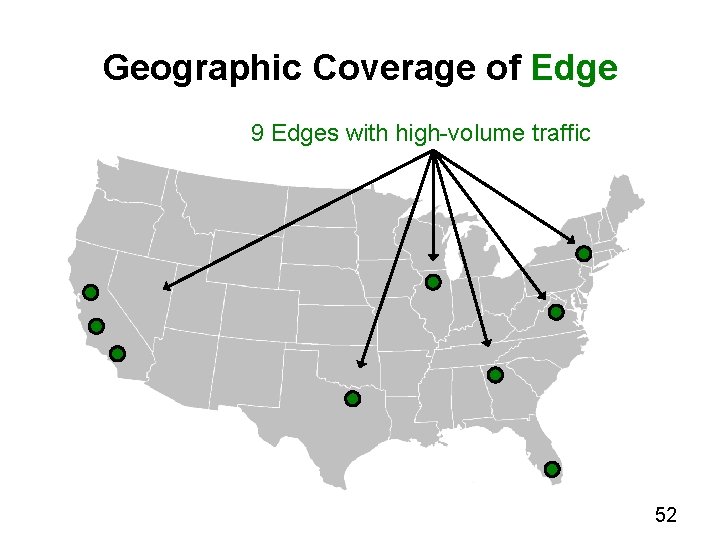

Geographic Coverage of Edge Small working set 51

Geographic Coverage of Edge 9 Edges with high-volume traffic 52

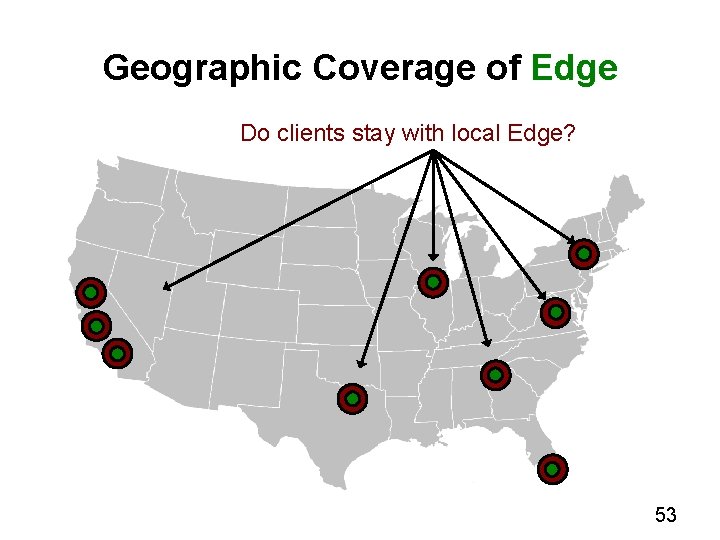

Geographic Coverage of Edge Do clients stay with local Edge? 53

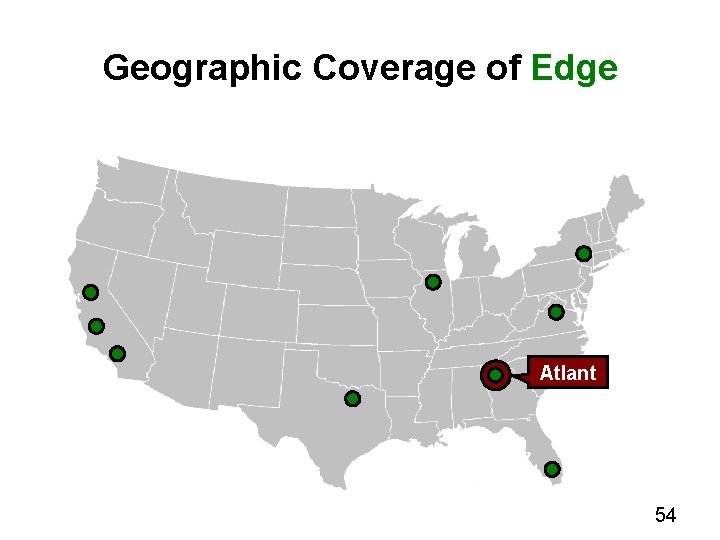

Geographic Coverage of Edge Atlanta 54

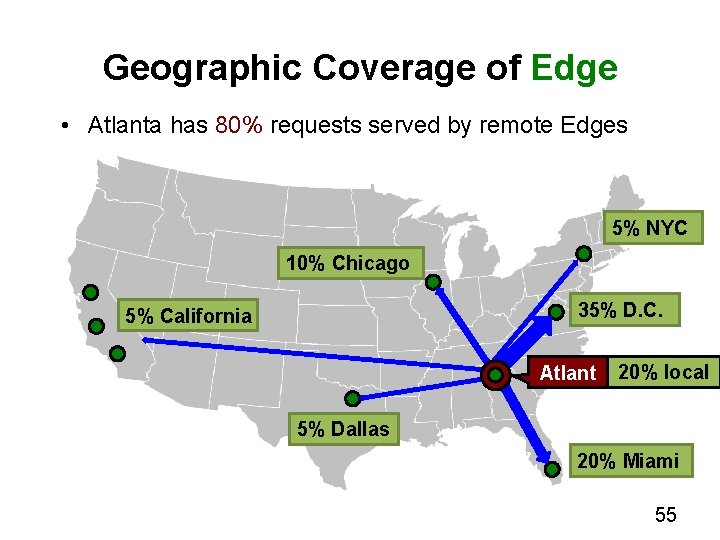

Geographic Coverage of Edge • Atlanta has 80% of requests served by remote Edges: 35% D.C., 20% Miami, 10% Chicago, 5% NYC, 5% California, 5% Dallas • 20% served by the local Atlanta Edge 55

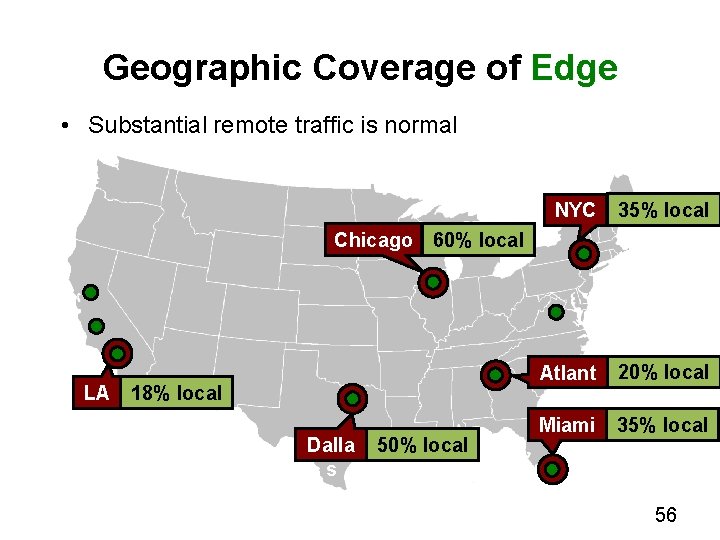

Geographic Coverage of Edge • Substantial remote traffic is normal NYC 35% local Atlanta Miami 20% local Chicago 60% local LA 18% local Dallas 50% local 35% local 56

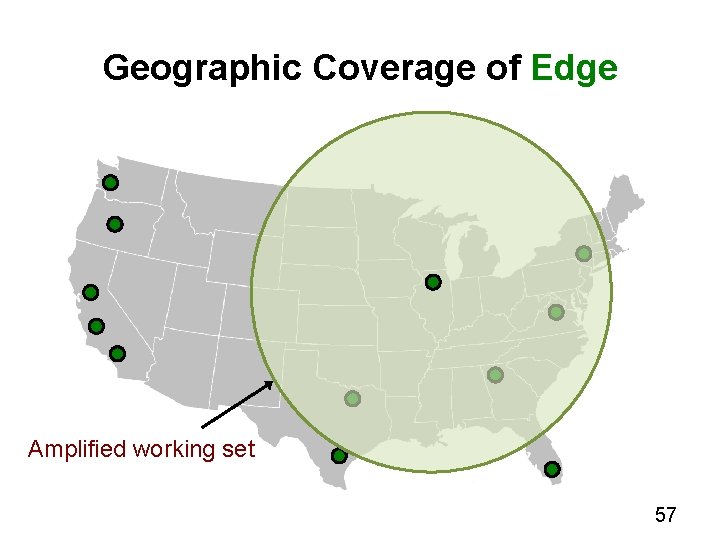

Geographic Coverage of Edge Amplified working set 57

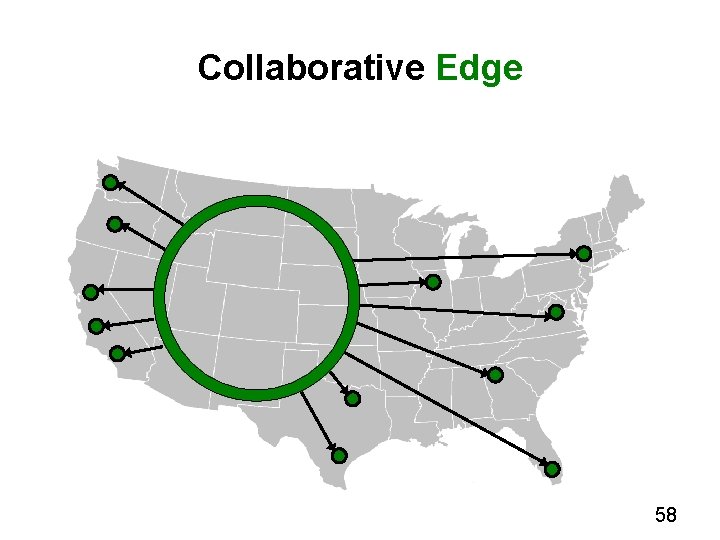

Collaborative Edge 58

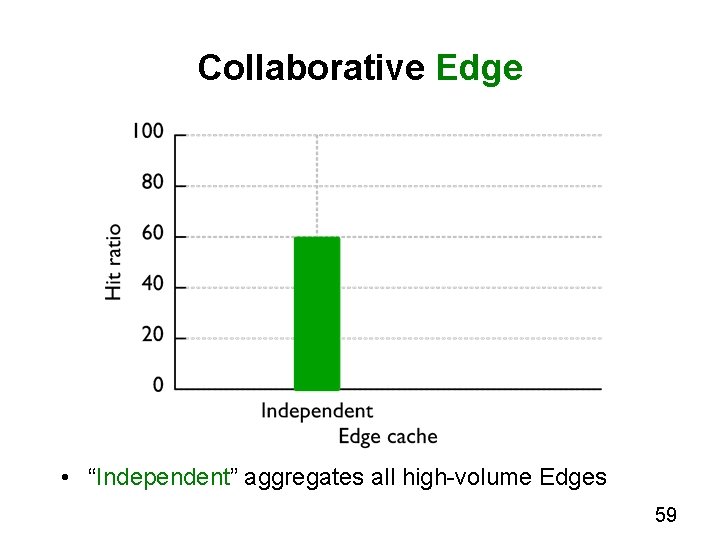

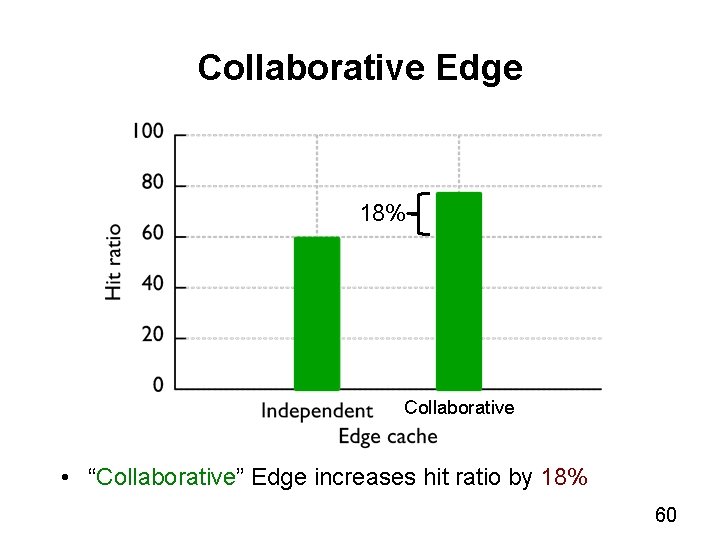

Collaborative Edge • “Independent” aggregates all high-volume Edges 59

Collaborative Edge 18% Collaborative • “Collaborative” Edge increases hit ratio by 18% 60

Related Work • Storage Analysis: BSD file system (SOSP ’85), Sprite (SOSP ’91), NT (SOSP ’99), NetApp (SOSP ’11), iBench (SOSP ’11) • Content Distribution Analysis: Cooperative caching (SOSP ’99), CDN vs. P2P (OSDI ’02), P2P (SOSP ’03), CoralCDN (NSDI ’10), Flash crowds (IMC ’11) • Web Analysis: Zipfian (INFOCOM ’00), Flash crowds (WWW ’02), Modern web traffic (IMC ’11) 61

Conclusion • Quantify caching performance • Quantify popularity changes across layers of caches • Recency, frequency, age, social factors impact cache • Outline potential gain of collaborative caching 62
