Web Archaeology Raymie Stata Compaq Systems Research Center

Web Archaeology Raymie Stata Compaq Systems Research Center raymie. stata@compaq. com www. research. compaq. com/SRC

What is Web Archaeology? § The study of the content of the Web – exploring the Web – sifting through data – making valuable discoveries § Difficult! Because the Web is: – Boundless – Dynamic – Radically decentralized

Some recent results § Empirical studies – Quality of almost-breadth-first crawling – Structure of the Web – Random walks (size of search engines) § Improving the Web experience – Better and more precise search results – Surfing assistants and tools § Data mining – Technologies for “page scraping”

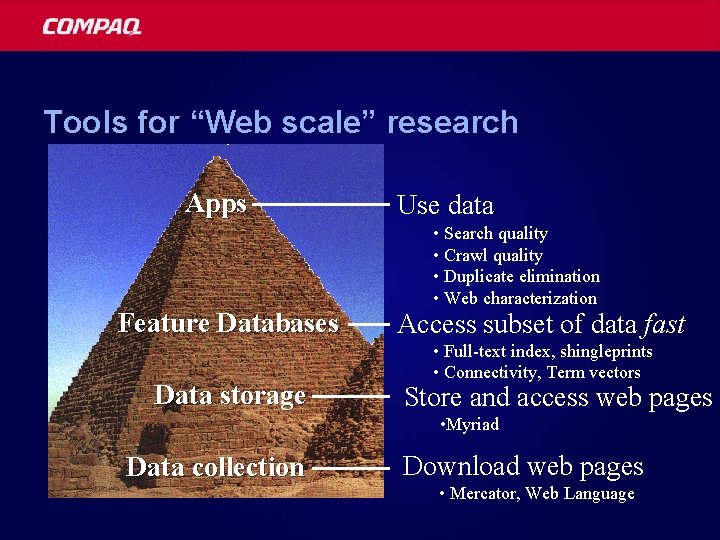

Tools for “Web scale” research Apps Feature Databases Data storage Use data • Search quality • Crawl quality • Duplicate elimination • Web characterization Access subset of data fast • Full-text index, shingleprints • Connectivity, Term vectors Store and access web pages • Myriad Data collection Download web pages • Mercator, Web Language

Web-scale crawling Mercator Atrax

The Mercator web crawler § A high-performance web crawler – Downloads and processes web pages § Written entirely in Java – Runs on any Java-capable platform § Extensible – Wide range of possible configurations – Users can plug in new modules at run-time

Mercator design points § Extensible – Well-chosen extensibility points – Framework for configuration § Multiple threads, synchronous I/O – vs. single thread, asynchronous I/O § Checkpointing – Allows crawls to be restarted – Modules export prepare, commit

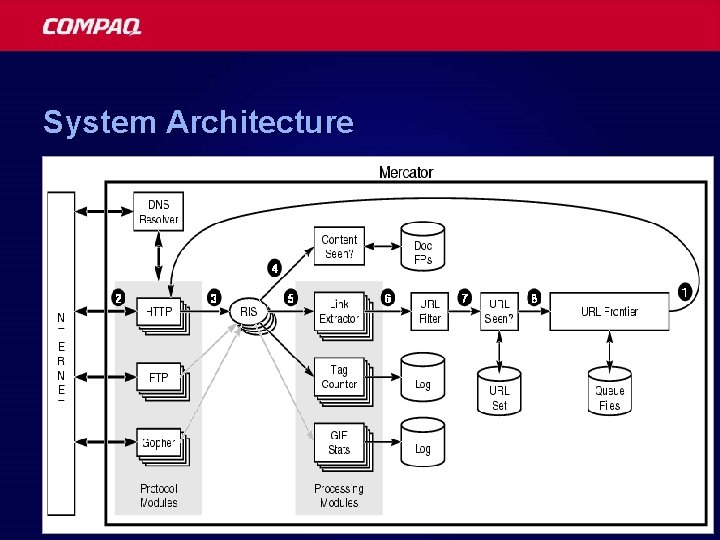

System Architecture

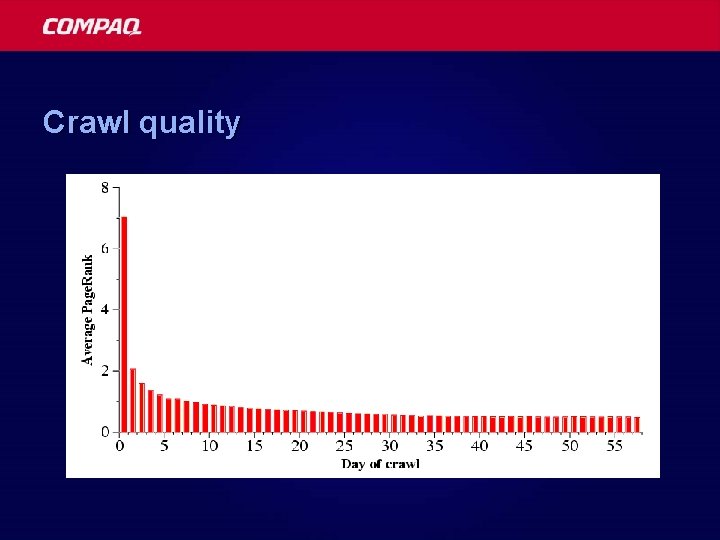

Crawl quality

Atrax, a distributed version of Mercator § Distributes load across cluster of crawlers § Partitions data structures across crawlers § No central bottleneck § Network bandwidth is limiting factor

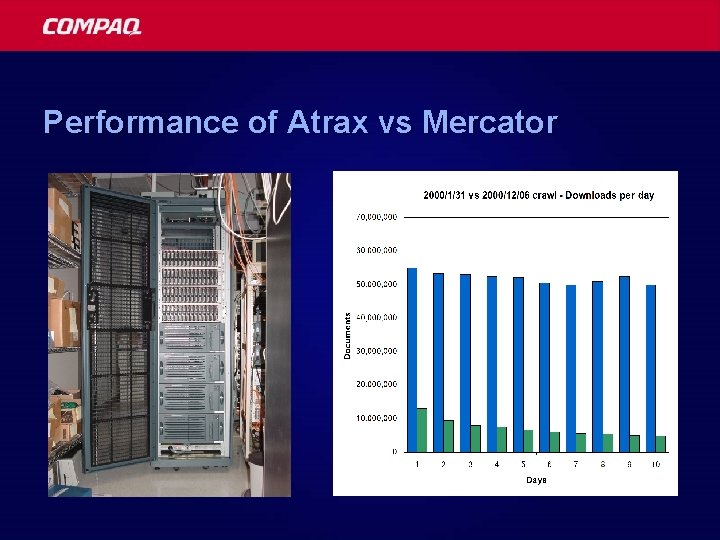

Performance of Atrax vs Mercator

Myriad -- new project § A very large, archival storage system – Scalable to a petabyte § With function shipping – Supports data mining

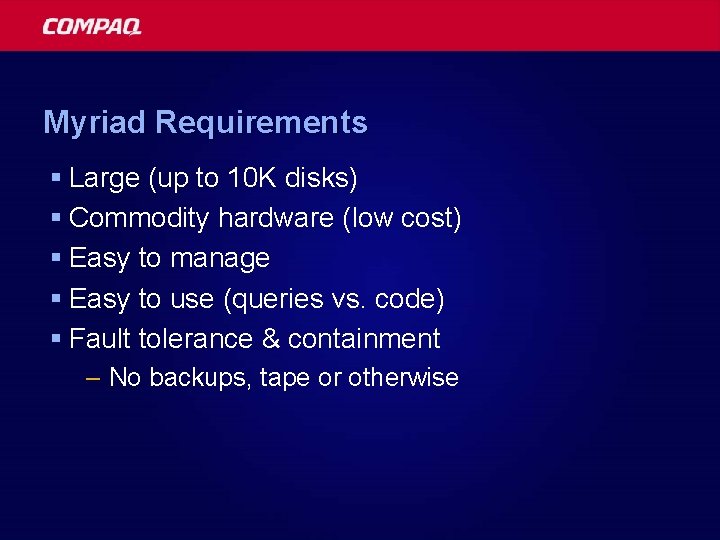

Myriad Requirements § Large (up to 10 K disks) § Commodity hardware (low cost) § Easy to manage § Easy to use (queries vs. code) § Fault tolerance & containment – No backups, tape or otherwise

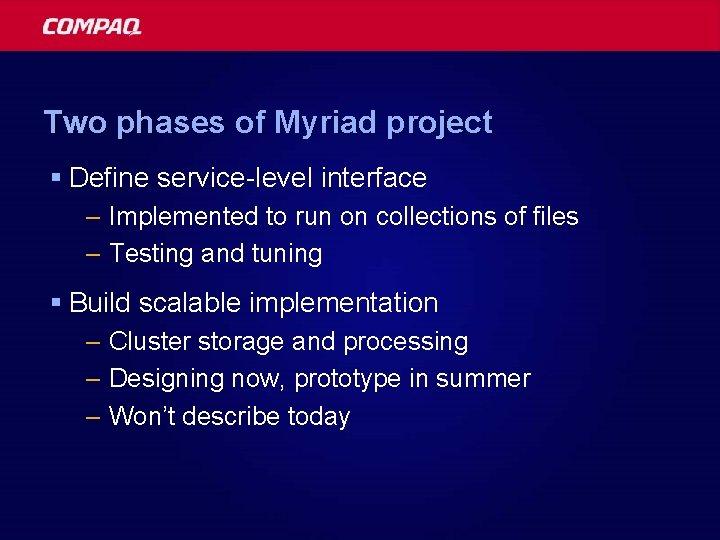

Two phases of Myriad project § Define service-level interface – Implemented to run on collections of files – Testing and tuning § Build scalable implementation – Cluster storage and processing – Designing now, prototype in summer – Won’t describe today

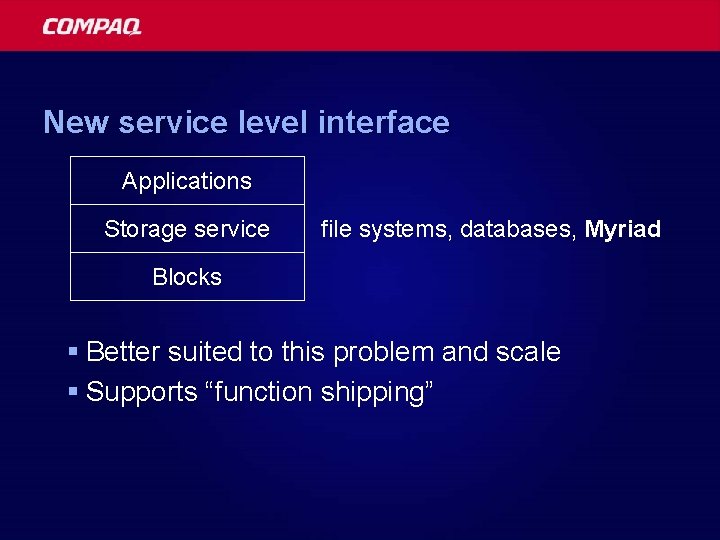

New service level interface Applications Storage service file systems, databases, Myriad Blocks § Better suited to this problem and scale § Supports “function shipping”

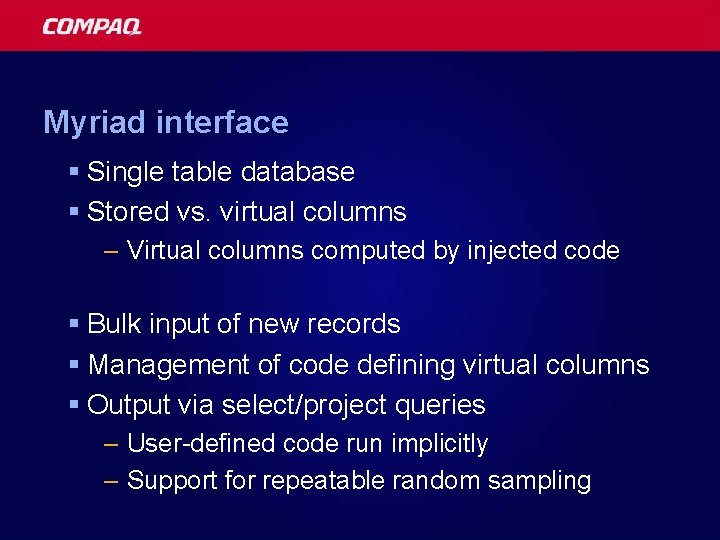

Myriad interface § Single table database § Stored vs. virtual columns – Virtual columns computed by injected code § Bulk input of new records § Management of code defining virtual columns § Output via select/project queries – User-defined code run implicitly – Support for repeatable random sampling

![Example Myriad query [samplingprob=0. 1, samplingseed=321223421332] select name, length where insertion. Date < Date(00/01/01) Example Myriad query [samplingprob=0. 1, samplingseed=321223421332] select name, length where insertion. Date < Date(00/01/01)](http://slidetodoc.com/presentation_image_h/a1a8b550e8e2e26d0ac442ba4ad0a4ad/image-17.jpg)

Example Myriad query [samplingprob=0. 1, samplingseed=321223421332] select name, length where insertion. Date < Date(00/01/01) && mime. Type == “text/html”;

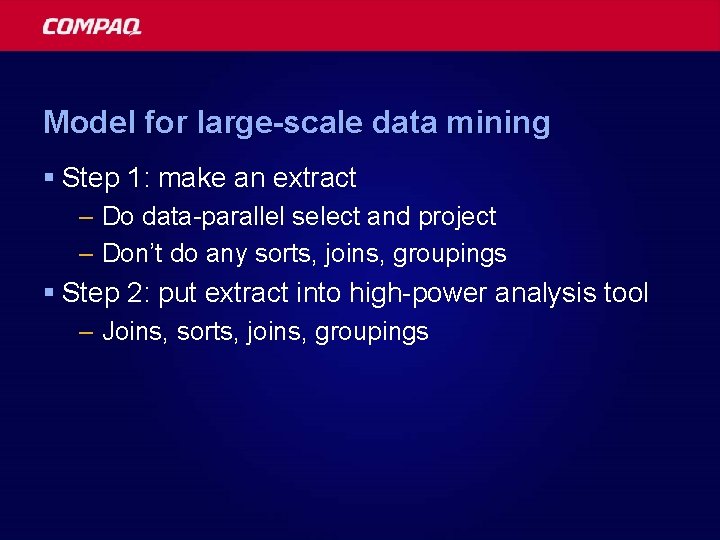

Model for large-scale data mining § Step 1: make an extract – Do data-parallel select and project – Don’t do any sorts, joins, groupings § Step 2: put extract into high-power analysis tool – Joins, sorts, joins, groupings

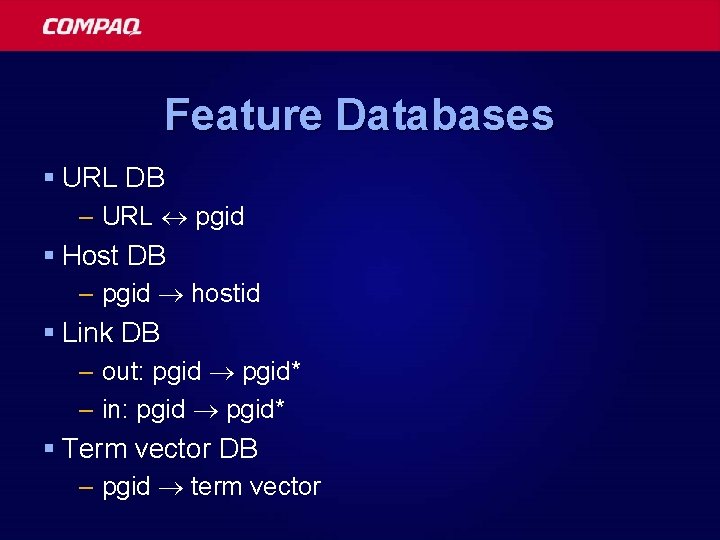

Feature Databases § URL DB – URL pgid § Host DB – pgid hostid § Link DB – out: pgid* – in: pgid* § Term vector DB – pgid term vector

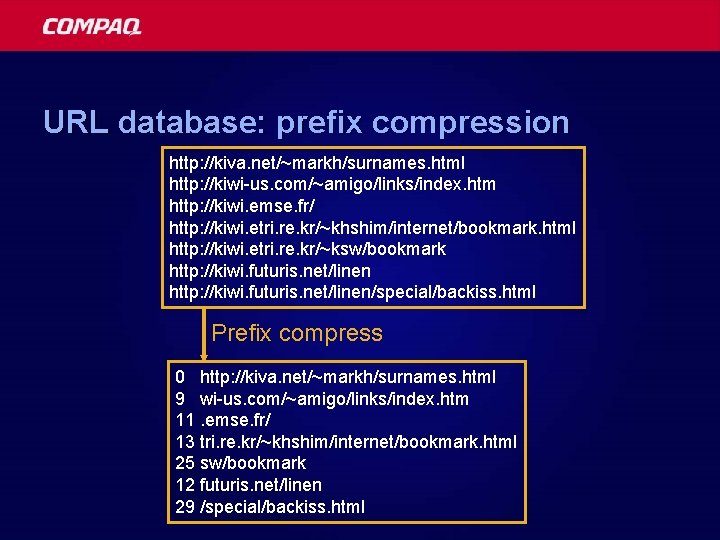

URL database: prefix compression http: //kiva. net/~markh/surnames. html http: //kiwi-us. com/~amigo/links/index. htm http: //kiwi. emse. fr/ http: //kiwi. etri. re. kr/~khshim/internet/bookmark. html http: //kiwi. etri. re. kr/~ksw/bookmark http: //kiwi. futuris. net/linen/special/backiss. html Prefix compress 0 http: //kiva. net/~markh/surnames. html 9 wi-us. com/~amigo/links/index. htm 11. emse. fr/ 13 tri. re. kr/~khshim/internet/bookmark. html 25 sw/bookmark 12 futuris. net/linen 29 /special/backiss. html

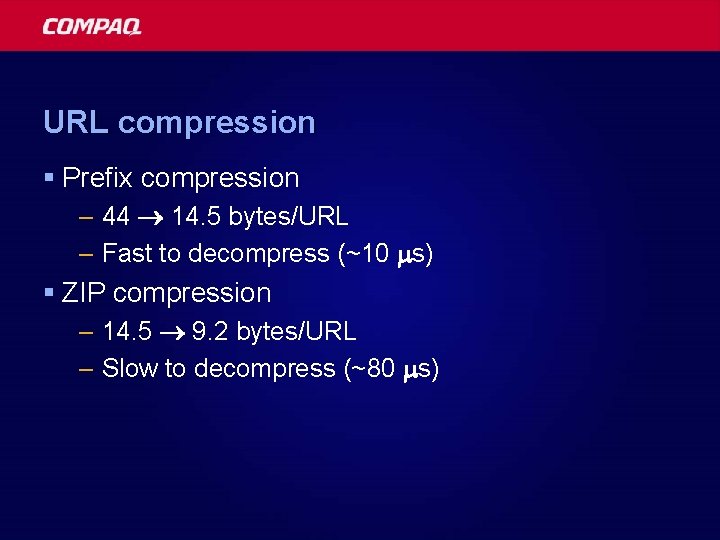

URL compression § Prefix compression – 44 14. 5 bytes/URL – Fast to decompress (~10 s) § ZIP compression – 14. 5 9. 2 bytes/URL – Slow to decompress (~80 s)

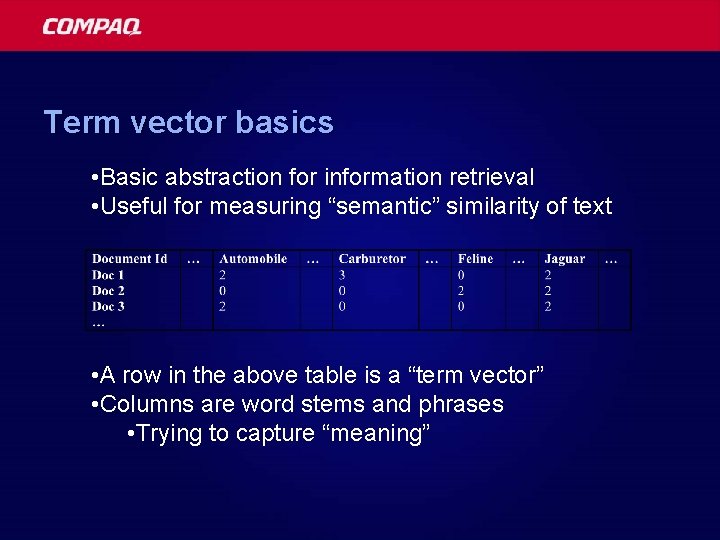

Term vector basics • Basic abstraction for information retrieval • Useful for measuring “semantic” similarity of text • A row in the above table is a “term vector” • Columns are word stems and phrases • Trying to capture “meaning”

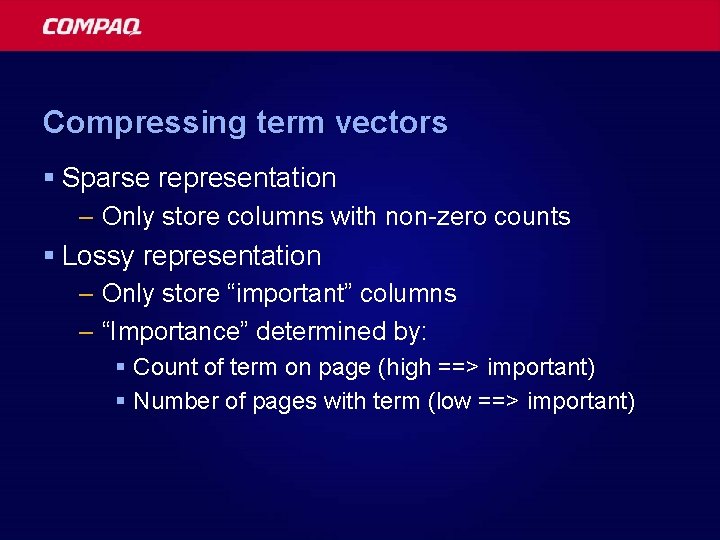

Compressing term vectors § Sparse representation – Only store columns with non-zero counts § Lossy representation – Only store “important” columns – “Importance” determined by: § Count of term on page (high ==> important) § Number of pages with term (low ==> important)

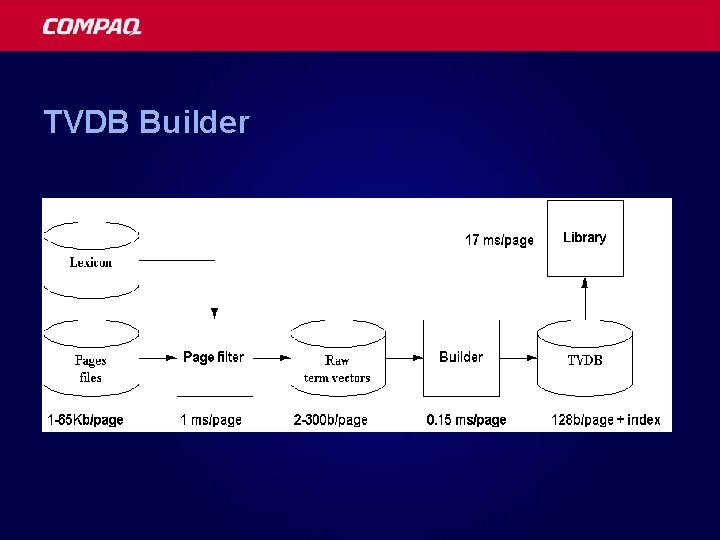

TVDB Builder

Applications § Categorizing pages § Topic distillation § Filtering pages § Identifying languages § Identifying running text § Relevance feedback (“more like this”) § Abstracting pages

Categorization § “Bulls take over”

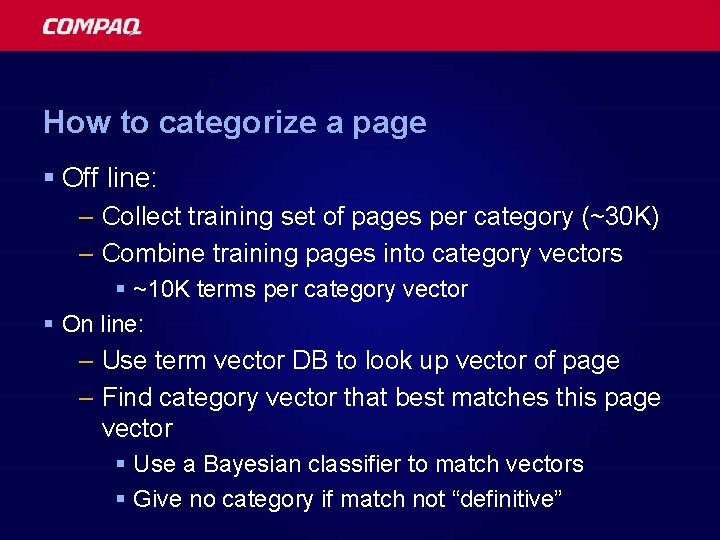

How to categorize a page § Off line: – Collect training set of pages per category (~30 K) – Combine training pages into category vectors § ~10 K terms per category vector § On line: – Use term vector DB to look up vector of page – Find category vector that best matches this page vector § Use a Bayesian classifier to match vectors § Give no category if match not “definitive”

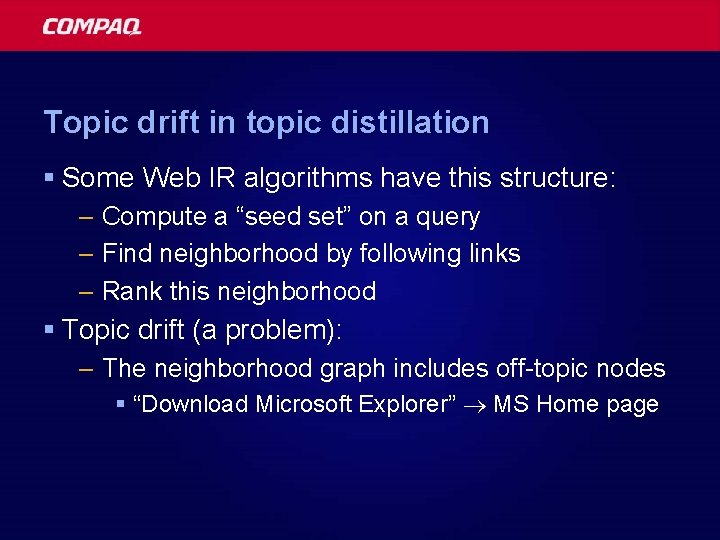

Topic drift in topic distillation § Some Web IR algorithms have this structure: – Compute a “seed set” on a query – Find neighborhood by following links – Rank this neighborhood § Topic drift (a problem): – The neighborhood graph includes off-topic nodes § “Download Microsoft Explorer” MS Home page

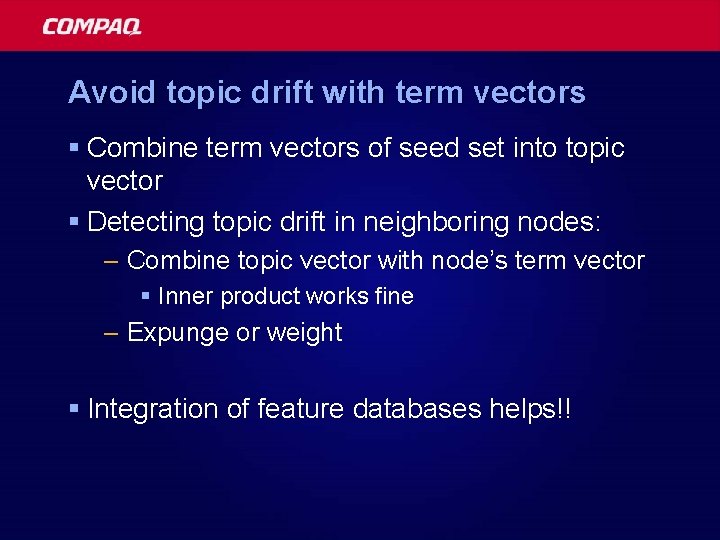

Avoid topic drift with term vectors § Combine term vectors of seed set into topic vector § Detecting topic drift in neighboring nodes: – Combine topic vector with node’s term vector § Inner product works fine – Expunge or weight § Integration of feature databases helps!!

Link database § Goals – Fit links into RAM (fast lookup) – Build in 24 hours § Applications – Page ranking – Web structure – Mirror site detection – Related page detection

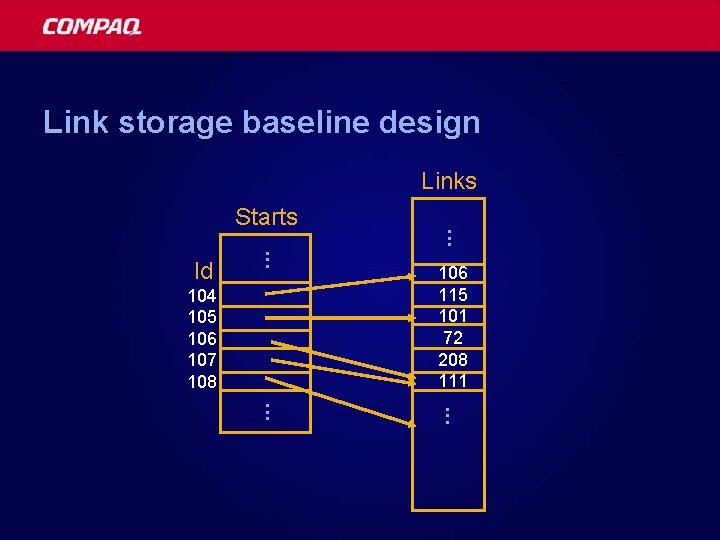

Link storage baseline design Links. . . Id 104 105 106 107 108 . . . Starts 106 115 101 72 208 111 . . .

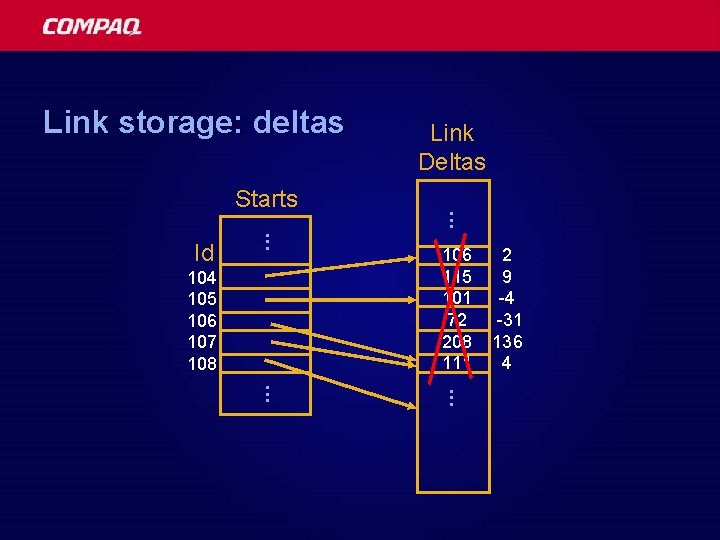

Link storage: deltas . . . Id 104 105 106 107 108 . . . Starts Link Deltas 106 115 101 72 208 111 2 9 -4 -31 136 4 . . .

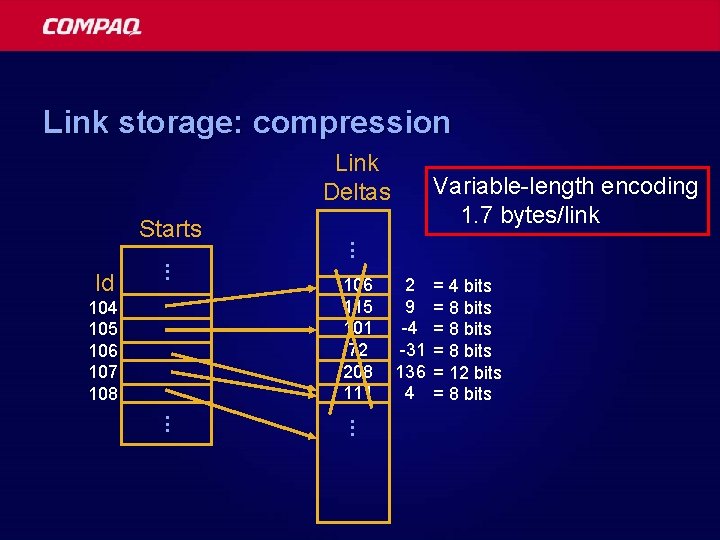

Link storage: compression Link Deltas. . . Id 104 105 106 107 108 . . . Starts Variable-length encoding 1. 7 bytes/link 106 115 101 72 208 111 2 9 -4 -31 136 4 = 4 bits = 8 bits = 12 bits = 8 bits . . .

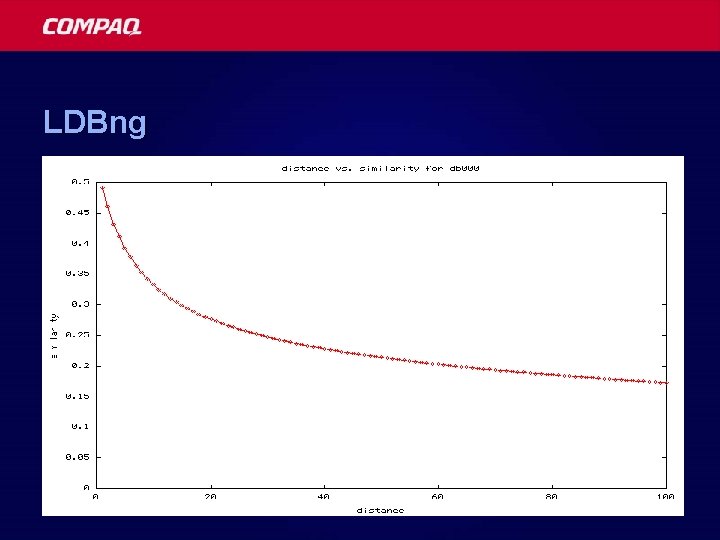

LDBng

The future of Web Archaeology § Driving applications – Web search -- “finding things on the web” § Page classification (topic, community, type) § Purpose-specific search – Web “asset management” (what’s on my site? ) – Automated information extraction (price robots) § Multi-billion page web § Dynamics

- Slides: 36