Validation and Overfitting Problem of Decision Trees, Lecture 8

Outline

1. Weka, Data Preparation and Visualization
2. Highly Branching Attributes
3. Overfitting Problem
4. Confusion Matrix and F-Score
5. Validation Methods

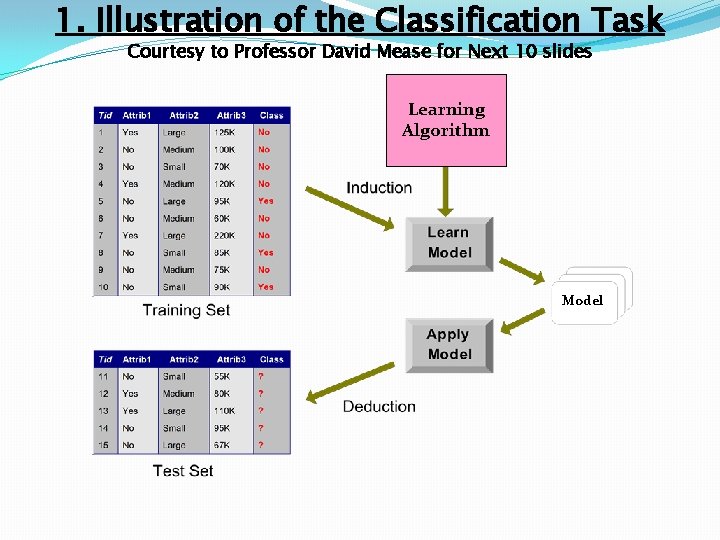

1. Illustration of the Classification Task

Courtesy of Professor David Mease for the next 10 slides.

Figure labels: Learning Algorithm, Model.

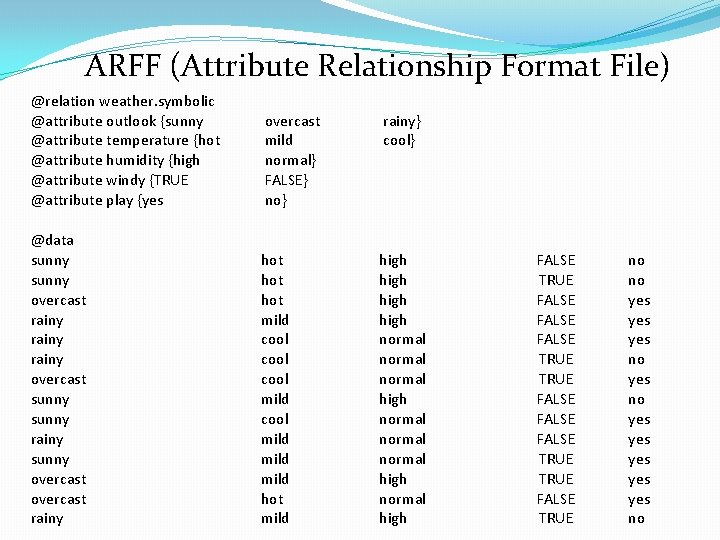

ARFF (Attribute-Relation File Format)

    @relation weather.symbolic
    @attribute outlook {sunny, overcast, rainy}
    @attribute temperature {hot, mild, cool}
    @attribute humidity {high, normal}
    @attribute windy {TRUE, FALSE}
    @attribute play {yes, no}
    @data
    sunny,hot,high,FALSE,no
    sunny,hot,high,TRUE,no
    overcast,hot,high,FALSE,yes
    rainy,mild,high,FALSE,yes
    rainy,cool,normal,FALSE,yes
    rainy,cool,normal,TRUE,no
    overcast,cool,normal,TRUE,yes
    sunny,mild,high,FALSE,no
    sunny,cool,normal,FALSE,yes
    rainy,mild,normal,FALSE,yes
    sunny,mild,normal,TRUE,yes
    overcast,mild,high,TRUE,yes
    overcast,hot,normal,FALSE,yes
    rainy,mild,high,TRUE,no

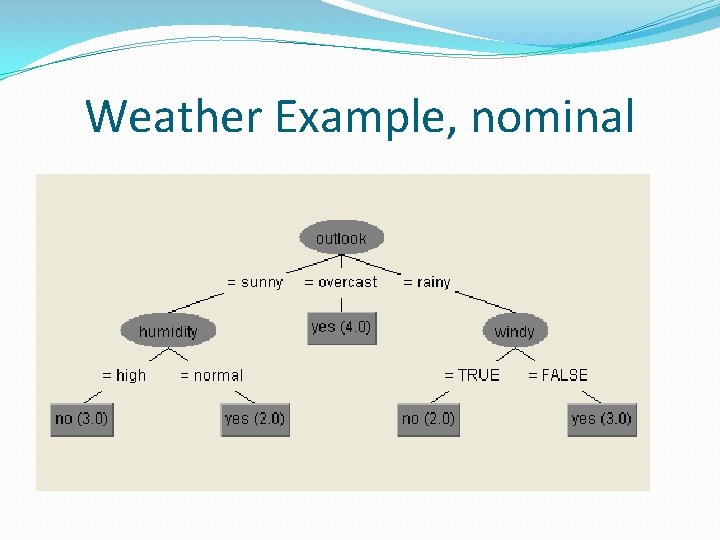

Weather Example, nominal

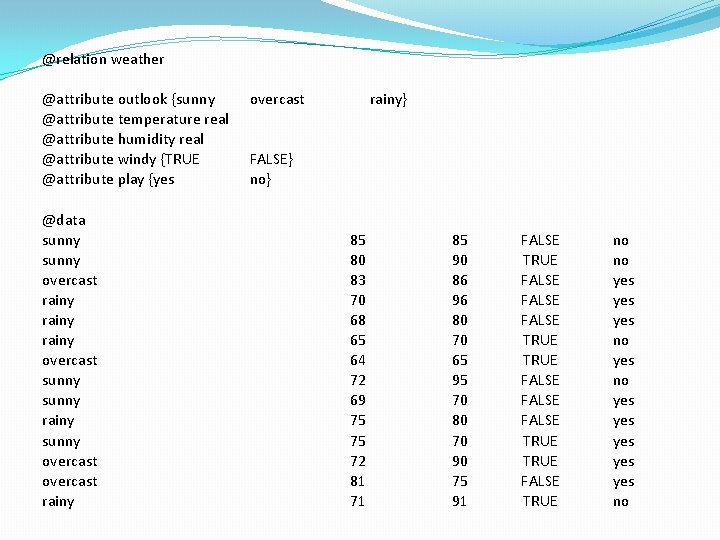

    @relation weather
    @attribute outlook {sunny, overcast, rainy}
    @attribute temperature real
    @attribute humidity real
    @attribute windy {TRUE, FALSE}
    @attribute play {yes, no}
    @data
    sunny,85,85,FALSE,no
    sunny,80,90,TRUE,no
    overcast,83,86,FALSE,yes
    rainy,70,96,FALSE,yes
    rainy,68,80,FALSE,yes
    rainy,65,70,TRUE,no
    overcast,64,65,TRUE,yes
    sunny,72,95,FALSE,no
    sunny,69,70,FALSE,yes
    rainy,75,80,FALSE,yes
    sunny,75,70,TRUE,yes
    overcast,72,90,TRUE,yes
    overcast,81,75,FALSE,yes
    rainy,71,91,TRUE,no
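To make the ARFF layout concrete, here is a minimal Python sketch of a reader for small, dense ARFF files. The function name `parse_arff` and the two-row `SAMPLE` string are illustrative assumptions, and the sketch ignores quoted names, sparse rows, and other parts of the format. It is not how Weka itself loads files.

```python
def parse_arff(text):
    """Parse a small, dense ARFF document into (relation, attributes, rows).

    Assumption: no quoted names, no sparse rows, one instance per line.
    """
    relation, attributes, rows = None, [], []
    in_data = False
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("%"):  # skip blanks and comments
            continue
        lower = line.lower()
        if in_data:
            rows.append([value.strip() for value in line.split(",")])
        elif lower.startswith("@relation"):
            relation = line.split(None, 1)[1]
        elif lower.startswith("@attribute"):
            _, name, spec = line.split(None, 2)
            if spec.startswith("{"):
                # Nominal attribute: keep the list of allowed values.
                spec = [value.strip() for value in spec.strip("{}").split(",")]
            attributes.append((name, spec))
        elif lower.startswith("@data"):
            in_data = True
    return relation, attributes, rows


# Hypothetical two-row sample in the weather layout.
SAMPLE = """\
@relation weather
@attribute outlook {sunny, overcast, rainy}
@attribute temperature real
@attribute humidity real
@attribute windy {TRUE, FALSE}
@attribute play {yes, no}
@data
sunny,85,85,FALSE,no
overcast,83,86,FALSE,yes
"""

relation, attributes, rows = parse_arff(SAMPLE)
print(relation, attributes, rows)
```

Nominal attributes come back as a list of allowed values, while numeric ones keep their type keyword (`real`) as a string.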

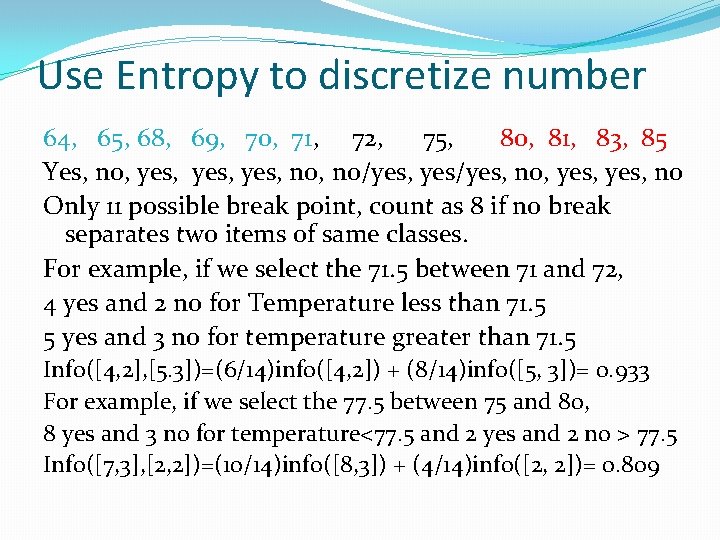

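Use Entropy to Discretize Numeric Attributes

Sorted temperature values with their classes:

| Temperature | 64 | 65 | 68 | 69 | 70 | 71 | 72 | 75 | 80 | 81 | 83 | 85 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Play | yes | no | yes | yes | yes | no | no/yes | yes/yes | no | yes | yes | no |

There are only 11 possible breakpoints, or 8 if a breakpoint is not allowed to separate two items of the same class.

For example, if we select the breakpoint 71.5 between 71 and 72, there are 4 yes and 2 no for temperature less than 71.5, and 5 yes and 3 no for temperature greater than 71.5:

$$\text{info}([4, 2], [5, 3]) = \tfrac{6}{14}\,\text{info}([4, 2]) + \tfrac{8}{14}\,\text{info}([5, 3]) = 0.939 \text{ bits}$$

If we instead select the breakpoint 77.5 between 75 and 80, there are 7 yes and 3 no for temperature < 77.5, and 2 yes and 2 no for temperature > 77.5:

$$\text{info}([7, 3], [2, 2]) = \tfrac{10}{14}\,\text{info}([7, 3]) + \tfrac{4}{14}\,\text{info}([2, 2]) = 0.915 \text{ bits}$$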
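The weighted-entropy calculation for a candidate breakpoint can be sketched in a few lines of Python. The helper names `info` and `info_after_split` are illustrative assumptions. The class counts are the [yes, no] counts on each side of the 71.5 and 77.5 breakpoints in the 14-instance weather data.

```python
from math import log2


def info(counts):
    """Entropy in bits of a list of class counts such as [4, 2]."""
    total = sum(counts)
    return -sum(c / total * log2(c / total) for c in counts if c > 0)


def info_after_split(*subsets):
    """Average entropy of the subsets, weighted by subset size."""
    n = sum(sum(subset) for subset in subsets)
    return sum(sum(subset) / n * info(subset) for subset in subsets)


# [yes, no] counts below and above each candidate breakpoint.
split_at_71_5 = info_after_split([4, 2], [5, 3])
split_at_77_5 = info_after_split([7, 3], [2, 2])
print(round(split_at_71_5, 3), round(split_at_77_5, 3))
```

A lower value means purer subsets, so comparing these numbers across all candidate breakpoints selects the split point.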

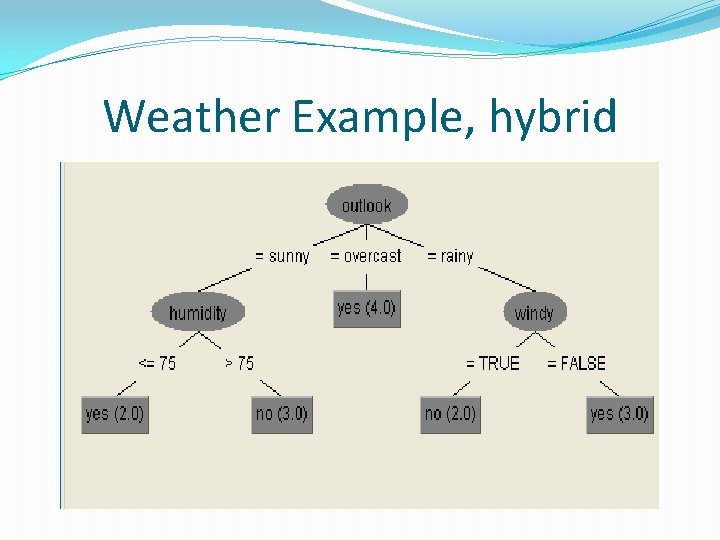

Weather Example, hybrid

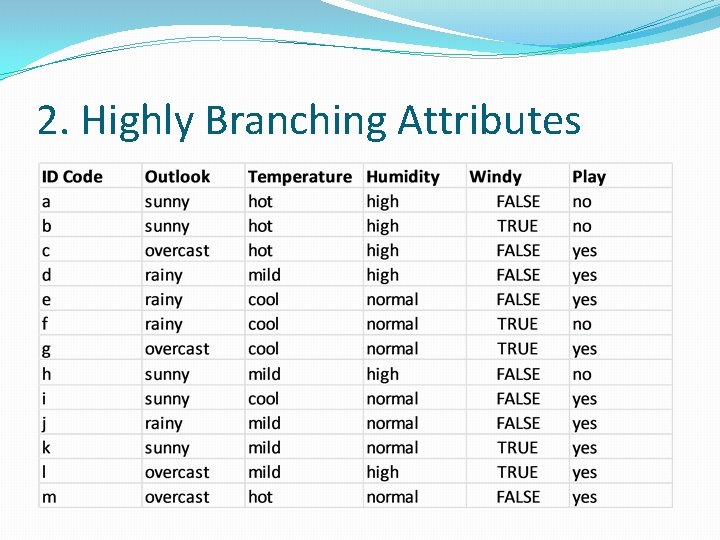

2. Highly Branching Attributes

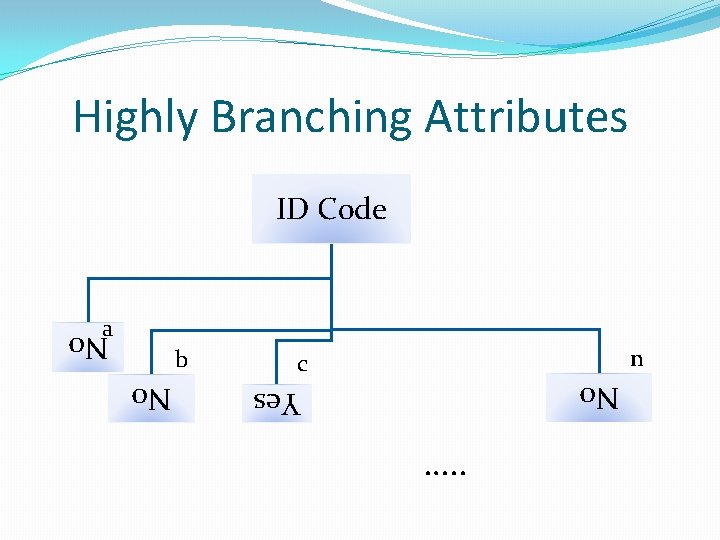

Highly Branching Attributes

Figure: a tree that splits on ID code, with one branch per instance (a, b, c, …, n) and leaves No, No, Yes, …, No.

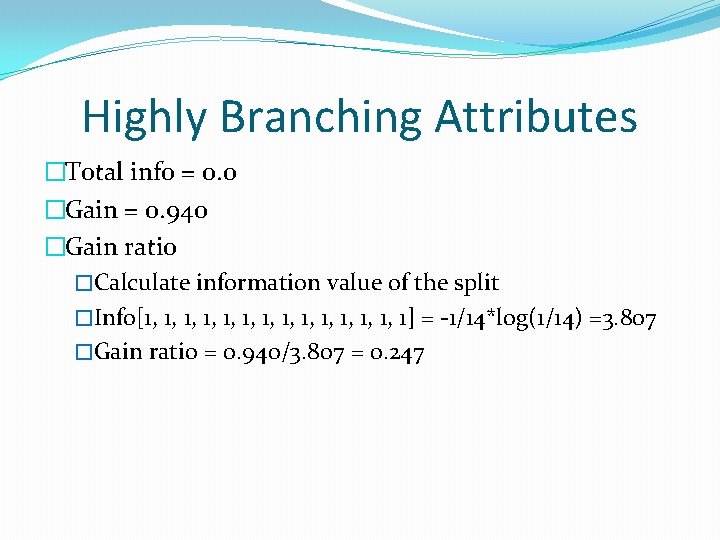

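Highly Branching Attributes

- Total info after splitting on ID code = 0.0 bits (every leaf holds a single instance)
- Gain = 0.940 bits
- Gain ratio: divide the gain by the information value of the split itself
- $\text{info}([1, 1, \ldots, 1]) = 14 \times \left(-\tfrac{1}{14}\log_2\tfrac{1}{14}\right) = \log_2 14 = 3.807$ bits
- Gain ratio $= 0.940 / 3.807 = 0.247$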
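The gain-ratio arithmetic for the ID code attribute can be checked with a short Python sketch. It assumes the 14-instance weather data with 9 yes and 5 no (the class distribution whose entropy is 0.940 bits), and the variable names are illustrative.

```python
from math import log2


def info(counts):
    """Entropy in bits of a list of counts."""
    total = sum(counts)
    return -sum(c / total * log2(c / total) for c in counts if c > 0)


# Entropy before the split: 9 yes and 5 no.
info_before = info([9, 5])

# Splitting on ID code yields 14 pure single-instance leaves, so the
# remaining information is 0 and the gain equals the prior entropy.
gain = info_before - 0.0

# Information value of the split itself: 14 branches with one instance each.
split_information = info([1] * 14)

gain_ratio = gain / split_information
print(round(gain, 3), round(split_information, 3), round(gain_ratio, 3))
```

The large split information (about 3.807 bits) penalizes the attribute for fanning out into many branches.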

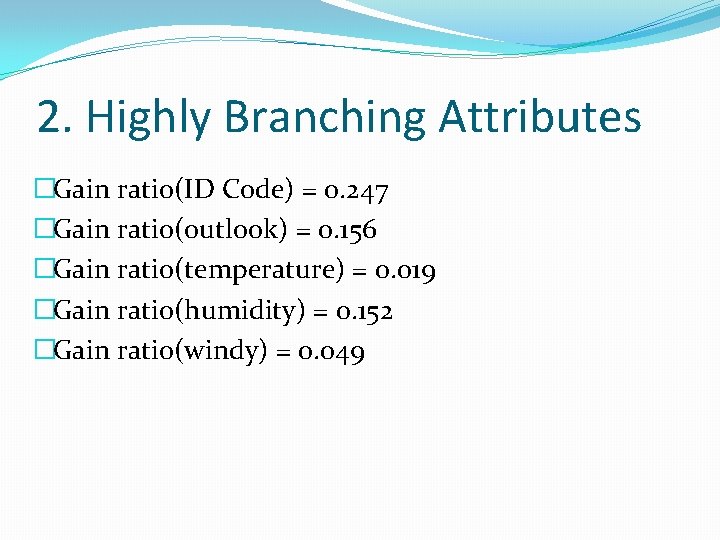

2. Highly Branching Attributes

- Gain ratio(ID code) = 0.247
- Gain ratio(outlook) = 0.156
- Gain ratio(temperature) = 0.019
- Gain ratio(humidity) = 0.152
- Gain ratio(windy) = 0.049

3. MODEL OVERFITTING

- A learning algorithm is said to overfit relative to a simpler one if it is more accurate in fitting known data (hindsight) but less accurate in predicting new data (foresight).
- Past experience can be divided into two groups:
  - Information that is relevant for the future
  - Irrelevant information ("noise")
- Overfitting generally occurs when a model is excessively complex.
- An overfitted model can exaggerate minor fluctuations in the data.

3. OVERFITTING CAUSES

- Noise
- Lack of data, in particular lack of representative samples
- Model complexity
- Use of an algorithm with a multiple comparison procedure
  - As the number of comparisons increases, it becomes more likely that some difference will appear by chance

Example: predicting whether a stock rises or falls on each of 10 days

- Assume that a random guess is correct with probability 1/2.
- The probability of predicting at least 8 of the 10 days correctly is $\frac{\binom{10}{8} + \binom{10}{9} + \binom{10}{10}}{2^{10}} = \frac{56}{1024} = 0.0547$, which is very unlikely but possible.
- Strategy: among 50 analysts, select the one who made the most correct predictions in the 10 days (hindsight).
- The probability that at least one of the 50 makes at least 8 correct guesses is $1 - (1 - 0.0547)^{50} = 0.9399$, which is likely.
- How do you know he will be as lucky in the next 10 days?
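The two probabilities in the analyst example can be reproduced with a short Python sketch. The variable names are illustrative, and the calculation assumes that the 50 analysts guess independently with a 1/2 chance of being right each day.

```python
from math import comb

DAYS = 10
ANALYSTS = 50

# Probability that one random guesser gets at least 8 of 10 days right.
p_single = sum(comb(DAYS, k) for k in range(8, DAYS + 1)) / 2 ** DAYS

# Probability that at least one of 50 independent guessers does so.
p_at_least_one = 1 - (1 - p_single) ** ANALYSTS

print(round(p_single, 4), round(p_at_least_one, 4))
```

Picking the best of many random guessers almost guarantees an impressive track record, which is the multiple comparison effect behind overfitting.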

OVERFITTING SOLUTIONS

- Occam's Razor
  - William of Ockham (c. 1287-1347), medieval philosopher
  - The term "razor" refers to distinguishing between two cases by "shaving away" unnecessary assumptions or cutting apart two similar conclusions.
  - "Simpler explanations are, other things being equal, generally better than more complex ones."
  - A heuristic idea

OCCAM'S RAZOR

- Newton: "We are to admit no more causes of natural things than such as are both true and sufficient to explain their appearances. Therefore, to the same natural effects we must, so far as possible, assign the same causes."
- Einstein: "It can scarcely be denied that the supreme goal of all theory is to make the irreducible basic elements as simple and as few as possible without having to surrender the adequate representation of a single datum of experience."
- Often paraphrased as "Everything should be kept as simple as possible, but no simpler than necessary."

3. OCCAM'S RAZOR

- Minimum Description Length (MDL)
  - Formalization of Occam's razor
  - The best hypothesis is the one that leads to the best compression
  - Total cost: Cost(tree, data) = Cost(tree) + Cost(data | tree)

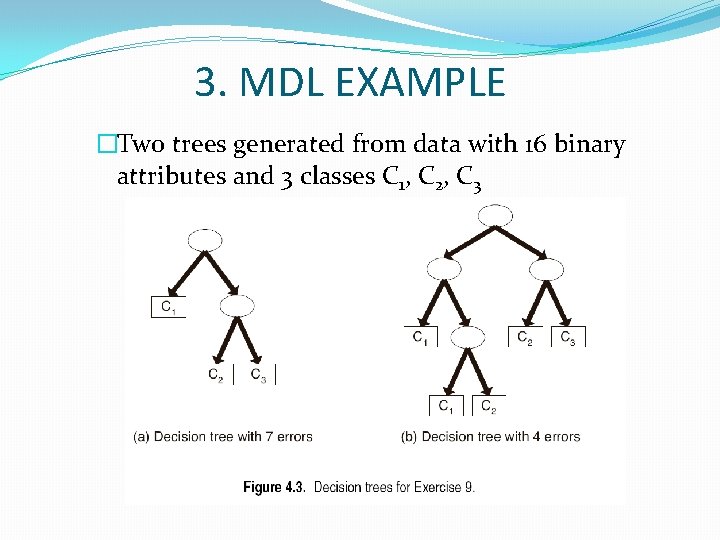

3. MDL EXAMPLE

- Two trees generated from data with 16 binary attributes and 3 classes: C1, C2, C3

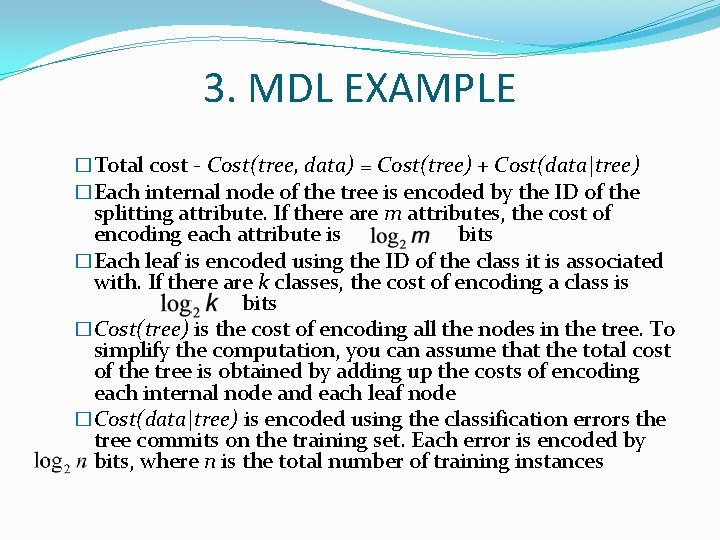

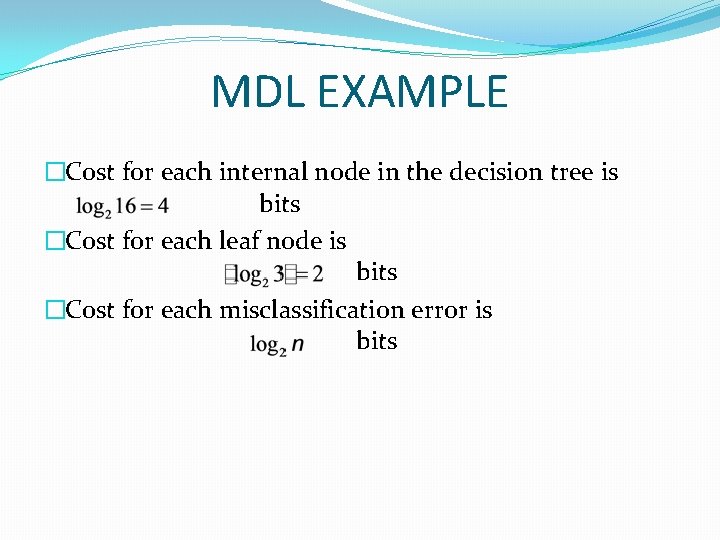

3. MDL EXAMPLE

- Total cost: Cost(tree, data) = Cost(tree) + Cost(data | tree)
- Each internal node of the tree is encoded by the ID of the splitting attribute. If there are $m$ attributes, the cost of encoding each attribute is $\log_2 m$ bits.
- Each leaf is encoded using the ID of the class it is associated with. If there are $k$ classes, the cost of encoding a class is $\log_2 k$ bits.
- Cost(tree) is the cost of encoding all the nodes in the tree. To simplify the computation, you can assume that the total cost of the tree is obtained by adding up the costs of encoding each internal node and each leaf node.
- Cost(data | tree) is encoded using the classification errors the tree commits on the training set. Each error is encoded by $\log_2 n$ bits, where $n$ is the total number of training instances.

MDL EXAMPLE

- Cost for each internal node in the decision tree is $\log_2 m = \log_2 16 = 4$ bits
- Cost for each leaf node is $\lceil \log_2 k \rceil = \lceil \log_2 3 \rceil = 2$ bits
- Cost for each misclassification error is $\log_2 n$ bits

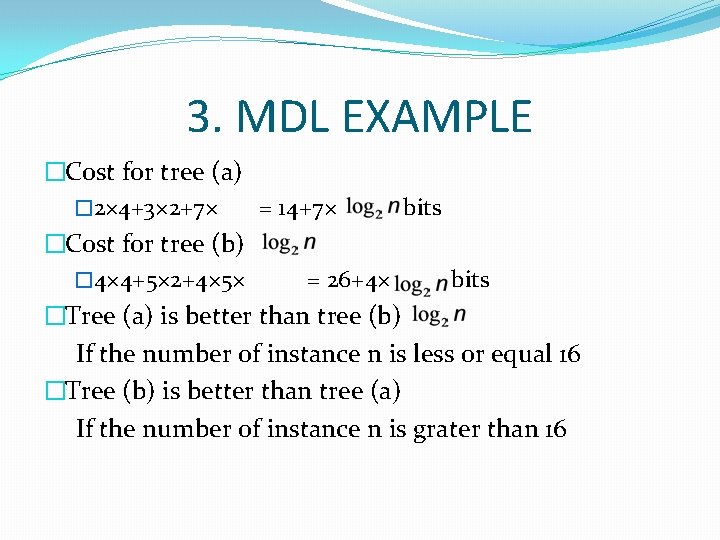

3. MDL EXAMPLE

- Cost for tree (a): $2 \times 4 + 3 \times 2 + 7 \times \log_2 n = 14 + 7\log_2 n$ bits
- Cost for tree (b): $4 \times 4 + 5 \times 2 + 4 \times \log_2 n = 26 + 4\log_2 n$ bits
- Tree (a) is better than tree (b) if the number of instances $n$ is less than 16.
- Tree (b) is better than tree (a) if $n$ is greater than 16. At $n = 16$ both trees cost 42 bits.
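The comparison between the two trees can be sketched in Python. The function name `mdl_cost` and its parameters are illustrative assumptions. The node, leaf, and error counts are those of tree (a) and tree (b) above, with the leaf cost rounded up to whole bits as in the slide.

```python
from math import ceil, log2


def mdl_cost(internal_nodes, leaves, errors, m, k, n):
    """Cost(tree) + Cost(data | tree) in bits under the simplified encoding."""
    node_bits = log2(m)         # ID of one of m splitting attributes
    leaf_bits = ceil(log2(k))   # ID of one of k classes, in whole bits
    error_bits = log2(n)        # ID of one misclassified training instance
    return internal_nodes * node_bits + leaves * leaf_bits + errors * error_bits


# Tree (a): 2 internal nodes, 3 leaves, 7 errors.
# Tree (b): 4 internal nodes, 5 leaves, 4 errors.
for n in (8, 16, 32):
    cost_a = mdl_cost(2, 3, 7, m=16, k=3, n=n)
    cost_b = mdl_cost(4, 5, 4, m=16, k=3, n=n)
    print(n, cost_a, cost_b)
```

The smaller tree wins only while each error is cheap to encode. Once the training set grows past 16 instances, the larger tree's lower error count outweighs its extra nodes.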

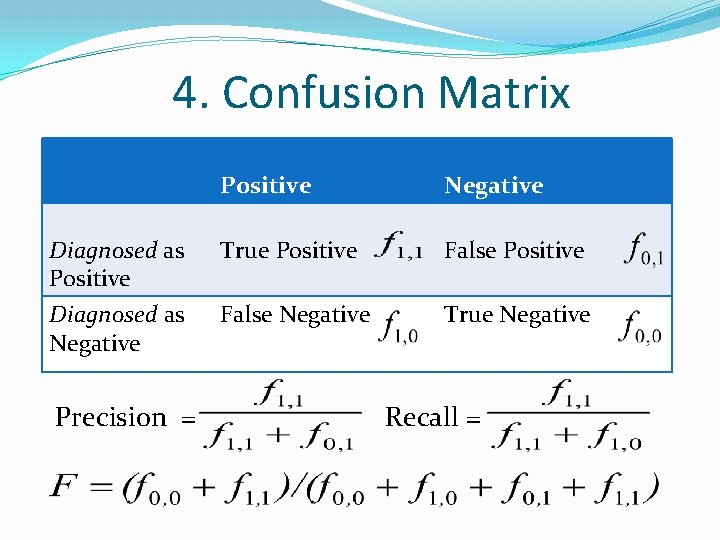

4. Confusion Matrix

| | Positive | Negative |
| --- | --- | --- |
| Diagnosed as Positive | True Positive (TP) | False Positive (FP) |
| Diagnosed as Negative | False Negative (FN) | True Negative (TN) |

Precision = TP / (TP + FP)

Recall = TP / (TP + FN)

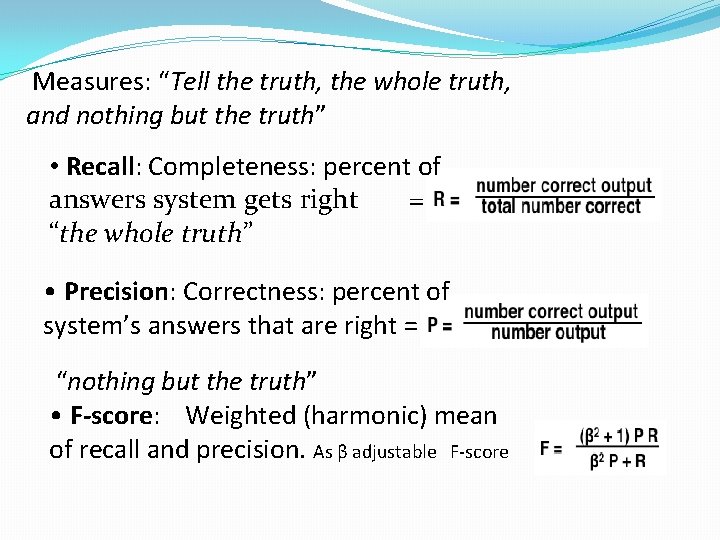

Measures: "Tell the truth, the whole truth, and nothing but the truth"

- Recall (completeness): percentage of the correct answers that the system finds = "the whole truth"
- Precision (correctness): percentage of the system's answers that are right = "nothing but the truth"
- F-score: weighted harmonic mean of recall and precision, with an adjustable weight β: $F_\beta = \frac{(1 + \beta^2)\, P\, R}{\beta^2 P + R}$, where $P$ is precision and $R$ is recall
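The three measures can be computed directly from confusion-matrix counts, as in this minimal Python sketch. The function name and the counts in the usage lines are made up for illustration and do not come from the lecture.

```python
def precision_recall_f(tp, fp, fn, beta=1.0):
    """Return (precision, recall, F_beta) from confusion-matrix counts."""
    precision = tp / (tp + fp)   # share of positive diagnoses that are right
    recall = tp / (tp + fn)      # share of actual positives that are found
    b2 = beta ** 2
    f_score = (1 + b2) * precision * recall / (b2 * precision + recall)
    return precision, recall, f_score


# Hypothetical counts: 8 true positives, 2 false positives, 4 false negatives.
precision, recall, f1 = precision_recall_f(tp=8, fp=2, fn=4)
_, _, f2 = precision_recall_f(tp=8, fp=2, fn=4, beta=2.0)
print(round(precision, 3), round(recall, 3), round(f1, 3), round(f2, 3))
```

With β greater than 1 the score leans toward recall, and with β less than 1 it leans toward precision.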

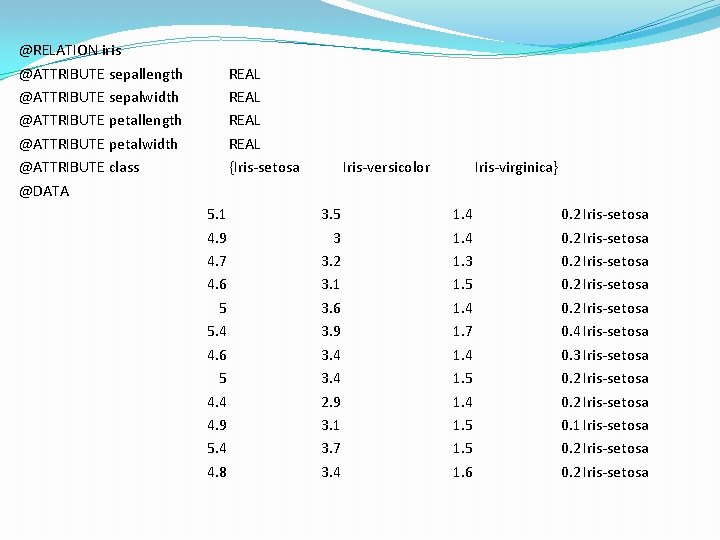

    @RELATION iris
    @ATTRIBUTE sepallength REAL
    @ATTRIBUTE sepalwidth REAL
    @ATTRIBUTE petallength REAL
    @ATTRIBUTE petalwidth REAL
    @ATTRIBUTE class {Iris-setosa, Iris-versicolor, Iris-virginica}
    @DATA
    5.1,3.5,1.4,0.2,Iris-setosa
    4.9,3.0,1.4,0.2,Iris-setosa
    4.7,3.2,1.3,0.2,Iris-setosa
    4.6,3.1,1.5,0.2,Iris-setosa
    5.0,3.6,1.4,0.2,Iris-setosa
    5.4,3.9,1.7,0.4,Iris-setosa
    4.6,3.4,1.4,0.3,Iris-setosa
    5.0,3.4,1.5,0.2,Iris-setosa
    4.4,2.9,1.4,0.2,Iris-setosa
    4.9,3.1,1.5,0.1,Iris-setosa
    5.4,3.7,1.5,0.2,Iris-setosa
    4.8,3.4,1.6,0.2,Iris-setosa
    ...

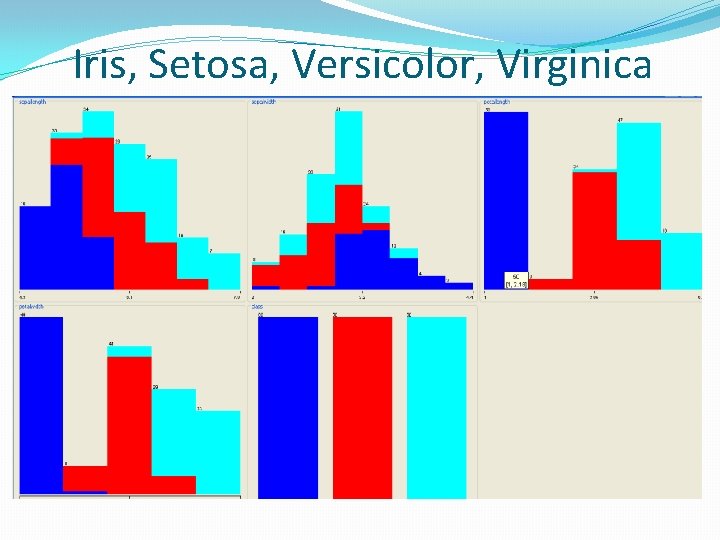

Iris, Setosa, Versicolor, Virginica

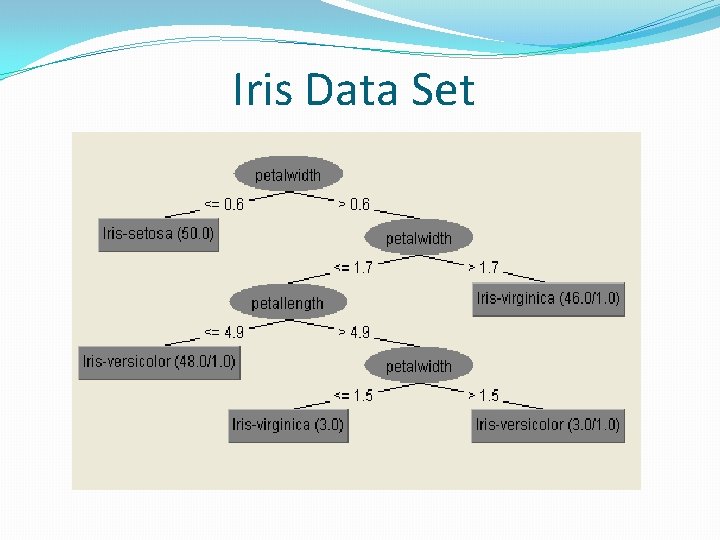

Iris Data Set

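5. Model Testing

| | Count | Percent |
| --- | --- | --- |
| Correctly Classified Instances | 144 | 96 % |
| Incorrectly Classified Instances | 6 | 4 % |

=== Detailed Accuracy By Class ===

| TP Rate | FP Rate | Precision | Recall | F-Measure | ROC Area | Class |
| --- | --- | --- | --- | --- | --- | --- |
| 0.98 | 0 | 1 | 0.98 | 0.99 | 0.99 | Iris-setosa |
| 0.94 | 0.03 | 0.94 | 0.94 | 0.94 | 0.952 | Iris-versicolor |
| 0.96 | 0.03 | 0.941 | 0.96 | 0.95 | 0.961 | Iris-virginica |
| 0.96 | 0.02 | 0.96 | 0.96 | 0.96 | 0.968 | Weighted Avg. |

=== Confusion Matrix ===

| a | b | c | <-- classified as |
| --- | --- | --- | --- |
| 49 | 1 | 0 | a = Iris-setosa |
| 0 | 47 | 3 | b = Iris-versicolor |
| 0 | 2 | 48 | c = Iris-virginica |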
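The per-class figures in the Weka report follow from the confusion matrix alone, which the Python sketch below illustrates. The function name `per_class_metrics` is an illustrative assumption, and the sketch assumes every class has at least one actual and one predicted instance, so no division by zero occurs. ROC area is not derivable from the matrix and is left out.

```python
def per_class_metrics(matrix, labels):
    """Derive TP rate, FP rate, precision and F-measure for every class.

    matrix[i][j] counts instances of actual class i classified as class j.
    """
    total = sum(sum(row) for row in matrix)
    metrics = {}
    for i, label in enumerate(labels):
        tp = matrix[i][i]
        fn = sum(matrix[i]) - tp                 # rest of the actual row
        fp = sum(row[i] for row in matrix) - tp  # rest of the predicted column
        tn = total - tp - fn - fp
        recall = tp / (tp + fn)                  # identical to the TP rate
        precision = tp / (tp + fp)
        metrics[label] = {
            "tp_rate": recall,
            "fp_rate": fp / (fp + tn),
            "precision": precision,
            "f_measure": 2 * precision * recall / (precision + recall),
        }
    return metrics


iris_matrix = [[49, 1, 0], [0, 47, 3], [0, 2, 48]]
iris_labels = ["Iris-setosa", "Iris-versicolor", "Iris-virginica"]
iris_metrics = per_class_metrics(iris_matrix, iris_labels)

correct = sum(iris_matrix[i][i] for i in range(len(iris_labels)))
accuracy = correct / sum(sum(row) for row in iris_matrix)

print("accuracy", accuracy)
for label, values in iris_metrics.items():
    print(label, {name: round(v, 3) for name, v in values.items()})
```

The same function applies unchanged to any square confusion matrix whose rows are actual classes and whose columns are predicted classes.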

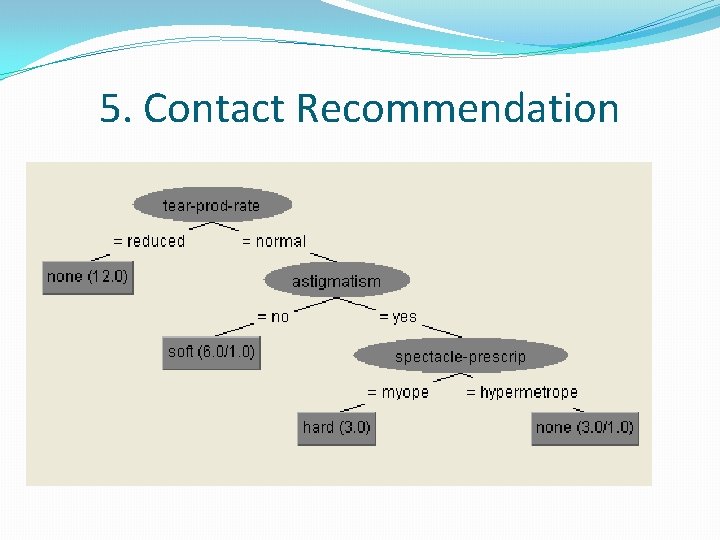

5. Contact Lens Recommendation

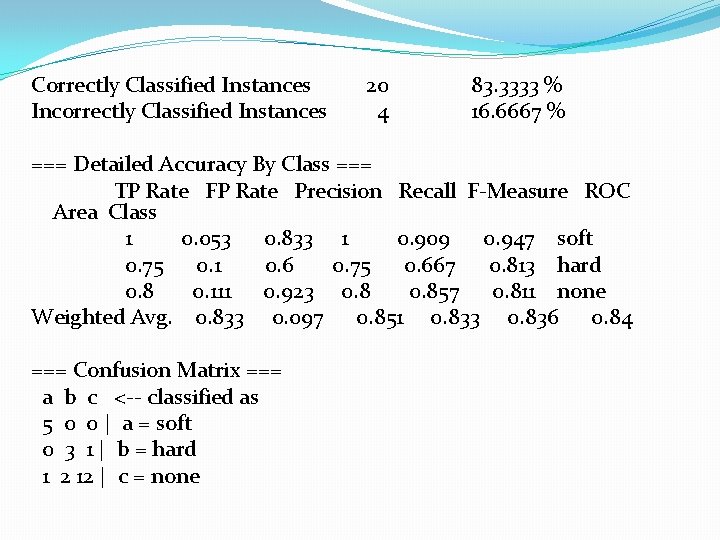

| | Count | Percent |
| --- | --- | --- |
| Correctly Classified Instances | 20 | 83.3333 % |
| Incorrectly Classified Instances | 4 | 16.6667 % |

=== Detailed Accuracy By Class ===

| TP Rate | FP Rate | Precision | Recall | F-Measure | ROC Area | Class |
| --- | --- | --- | --- | --- | --- | --- |
| 1 | 0.053 | 0.833 | 1 | 0.909 | 0.947 | soft |
| 0.75 | 0.1 | 0.6 | 0.75 | 0.667 | 0.813 | hard |
| 0.8 | 0.111 | 0.923 | 0.8 | 0.857 | 0.811 | none |
| 0.833 | 0.097 | 0.851 | 0.833 | 0.836 | 0.84 | Weighted Avg. |

=== Confusion Matrix ===

| a | b | c | <-- classified as |
| --- | --- | --- | --- |
| 5 | 0 | 0 | a = soft |
| 0 | 3 | 1 | b = hard |
| 1 | 2 | 12 | c = none |

Think about Cost-Sensitive Learning

- Diagnosing patients for possible cancer
- Identifying potential terrorists
- Sending email to potential customers (400,000 households, for which the response rate will be 0.2%)
- Keyword: ROC (Receiver Operating Characteristic), used to characterize the trade-off between the hit rate and the false-alarm rate over a noisy channel. See Section 5.7 of Witten's book.

Questions

1. What is a good definition of learning (machine vs. human learning)?
   - To get knowledge of something by study, experience, or being taught
   - To become aware by information or from observation
   - To commit to memory
   - To be informed of or to ascertain
   - To receive instruction
2. What is the Minimum Description Length principle?
