
Thwarting cache based side channel attacks using virtual caches
Shyam Murthy
Advisor: Dr. Gurindar S. Sohi

Key contributions
● Mitigating L1 conflict based attacks
● Randomize at coarser granularity (page) and ensure per-process isolation
● Implicit remapping of hash entries, exploiting shorter page lifetimes
● Replacement policy change to enable more frequent remapping

Cache based side channel attacks
Broadly divided into two classes:
● Conflict based attacks: the attacker tries to learn the mapping of addresses to sets in the cache
● Reuse based attacks: the attacker relies on the fact that a previously accessed data item will be cached; these attacks are insensitive to the location of the line in the cache

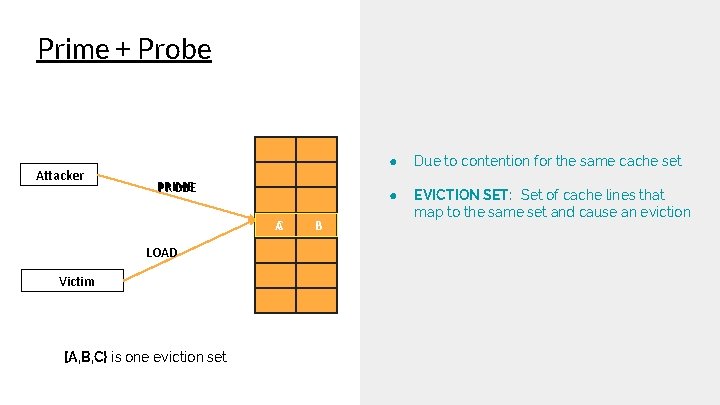

Conflict based attacks
● These attacks rely on data-dependent resource contention
● An example: bits of a secret key are used to compute an address, which is then used to perform a cache lookup
● A variation of this is Prime+Probe, described in the next slide

Prime + Probe
[Diagram labels: Attacker (PRIME, PROBE), Victim (LOAD). {A, B, C} is one eviction set.]
● Due to contention for the same cache set
● EVICTION SET: Set of cache lines that map to the same set and cause an eviction
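The Prime + Probe sequence can be illustrated with a toy model. The sketch below is not taken from the slides: it assumes a tiny LRU set-associative cache (4 sets, 2 ways) addressed by line number, and the names `SetAssocCache` and `prime_probe` are hypothetical. It only shows how probe misses reveal that the victim touched the primed set.

```python
from collections import OrderedDict


class SetAssocCache:
    """Toy LRU set-associative cache; addresses are cache line numbers."""

    def __init__(self, num_sets=4, ways=2):
        self.num_sets = num_sets
        self.ways = ways
        # One OrderedDict per set, ordered from LRU (first) to MRU (last).
        self.sets = [OrderedDict() for _ in range(num_sets)]

    def access(self, line):
        """Return True on a hit, False on a miss (the line is filled on a miss)."""
        cache_set = self.sets[line % self.num_sets]
        if line in cache_set:
            cache_set.move_to_end(line)  # mark as most recently used
            return True
        if len(cache_set) >= self.ways:
            cache_set.popitem(last=False)  # evict the LRU line
        cache_set[line] = None
        return False


def prime_probe(cache, eviction_set, victim_line=None):
    """Return the number of probe misses seen by the attacker."""
    for line in eviction_set:  # PRIME: fill the target set
        cache.access(line)
    if victim_line is not None:  # victim LOAD (optional)
        cache.access(victim_line)
    # PROBE: re-access the eviction set and count misses
    return sum(not cache.access(line) for line in eviction_set)
```

With lines 0 and 4 as the eviction set for set 0, a victim load of line 8 (also set 0) produces probe misses, while a victim load of line 9 (set 1) or no victim access produces none.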

Reuse based attacks
● Rely on the attacker and victim sharing data
● Insensitive to the location of the data in the cache
● Reuse of the same data results in a subsequent cache hit
● An example of such an attack is Flush+Reload

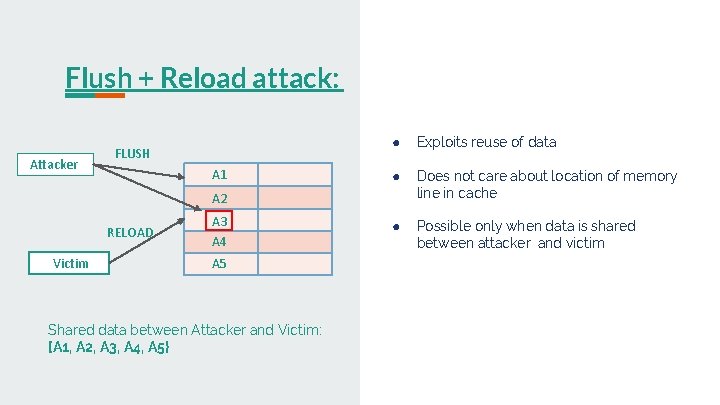

Flush + Reload attack
● Exploits reuse of data
● Does not care about the location of the memory line in the cache
● Possible only when data is shared between attacker and victim
[Diagram labels: Attacker (FLUSH, RELOAD), Victim. Shared data between Attacker and Victim: {A1, A2, A3, A4, A5}]
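A minimal sketch of Flush + Reload, under simplifying assumptions: the cache is modelled as a plain set of resident line names (which mirrors the point that location does not matter), and a reload "hit" stands in for a fast timed access. `SharedCache` and `flush_reload` are hypothetical names used only for illustration.

```python
class SharedCache:
    """Toy cache modelled as the set of currently resident line names."""

    def __init__(self):
        self.lines = set()

    def load(self, line):
        """Return True on a hit; a miss brings the line into the cache."""
        hit = line in self.lines
        self.lines.add(line)
        return hit

    def flush(self, line):
        """Remove the line from the cache if it is present."""
        self.lines.discard(line)


def flush_reload(cache, shared_lines, victim_accesses):
    """Return the shared lines the attacker infers the victim touched."""
    for line in shared_lines:  # FLUSH every shared line
        cache.flush(line)
    for line in victim_accesses:  # the victim runs and touches some lines
        cache.load(line)
    # RELOAD: a hit means the victim brought the line back in
    return [line for line in shared_lines if cache.load(line)]
```

For example, with shared lines A1 to A5 and a victim that touches only A3, the attacker's reload step hits only on A3.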

Prior Work

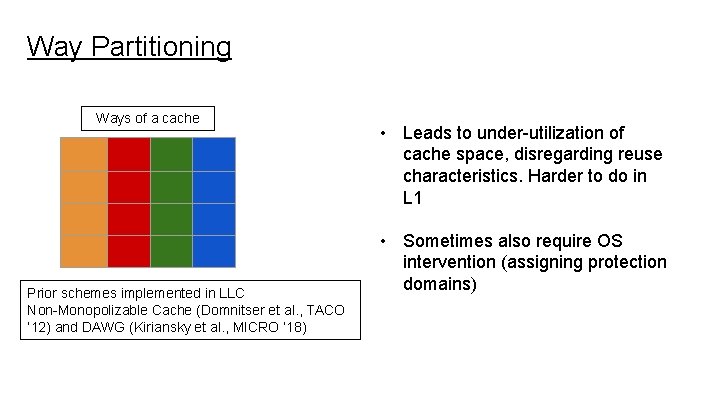

Way Partitioning
Prior schemes partition the ways of a cache and are implemented in the LLC: Non-Monopolizable Cache (Domnitser et al., TACO '12) and DAWG (Kiriansky et al., MICRO '18)
• Leads to under-utilization of cache space, disregarding reuse characteristics; harder to do in L1
• Sometimes also requires OS intervention (assigning protection domains)

NewCache (MICRO '08)
● Performs randomization using a Randomized Mapping table
● Mapping is done at cache line granularity in the L1 cache (too fine)
● Additionally, storage requirements scale with the number of cache sets and the number of concurrently running applications
● Need something more coarse grained that provides per-process isolation

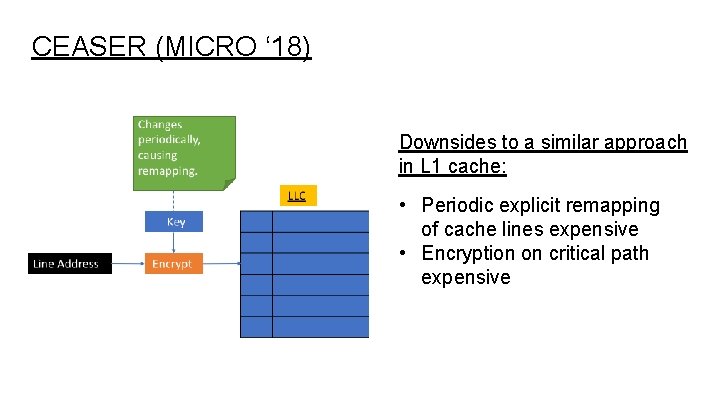

CEASER (MICRO '18)
Downsides to a similar approach in the L1 cache:
• Periodic explicit remapping of cache lines is expensive
• Encryption on the critical path is expensive

Virtual Cache VC-DSR
● Recently proposed virtual cache design
● Data is cached with only one (primary) virtual address
● Dynamic Synonym Remapping: a synonym virtual address is mapped to the primary virtual address with which the data is cached

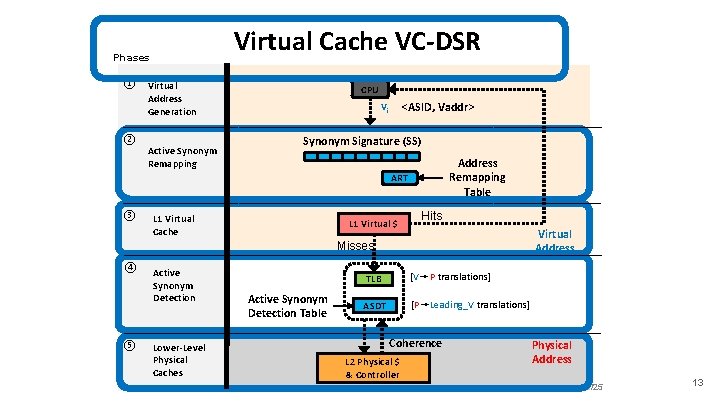

Virtual Cache VC-DSR Phases
① Virtual Address Generation: the CPU issues <ASID, Vaddr>
② Active Synonym Remapping: Synonym Signature (SS) and Address Remapping Table (ART)
③ L1 Virtual Cache: looked up with the virtual address (hits / misses)
④ Active Synonym Detection: TLB [V➙P translations] and Active Synonym Detection Table, ASDT [P➙Leading_V translations]
⑤ Lower-Level Physical Caches: L2 physical cache and coherence controller, accessed with the physical address

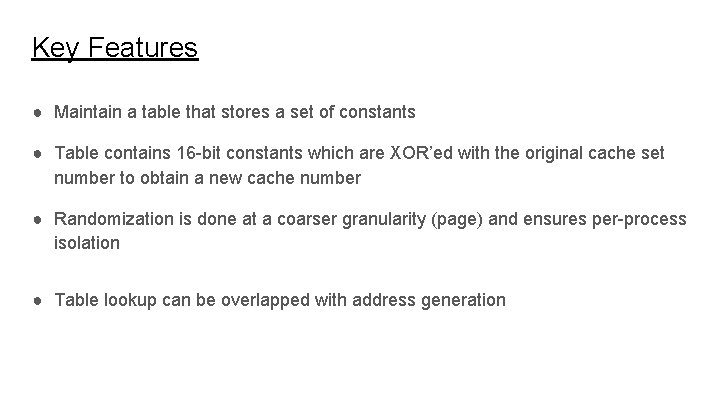

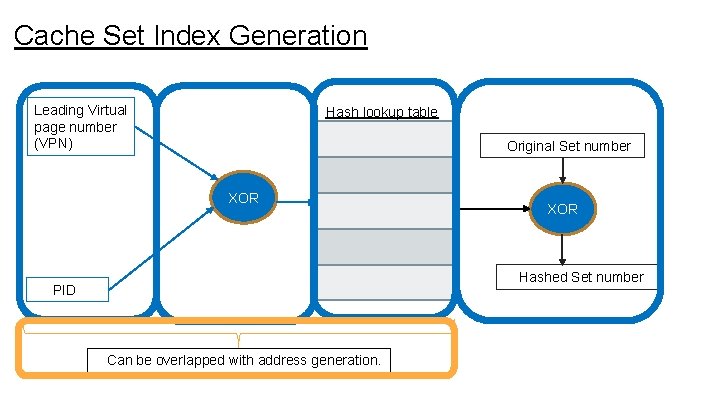

Key Features
● Maintain a table that stores a set of constants
● The table contains 16-bit constants, which are XOR’ed with the original cache set number to obtain a new cache set number
● Randomization is done at a coarser granularity (page) and ensures per-process isolation
● Table lookup can be overlapped with address generation

Threat model
● We take a conservative approach: the time for a successful attack is taken to be the time it takes an attacker to learn an eviction set

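Cache Set Index Generation
[Diagram: the leading virtual page number (VPN) and the PID select an entry in the hash lookup table; the constant read from the table is XOR’ed with the original set number to give the hashed set number.]
Can be overlapped with address generation.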
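The index generation path can be sketched as follows. This is an illustrative sketch, not the author's implementation: the 64 B line size, 4 kB page size, the resulting 512 sets, the 64-entry table, and the `table_index` function that combines PID and VPN are all assumptions made for the example.

```python
import random

LINE_BITS = 6            # assumption: 64 B cache lines
PAGE_BITS = 12           # assumption: 4 kB pages
NUM_SETS = 512           # assumption: 64 kB, 2-way, 64 B lines
SET_MASK = NUM_SETS - 1
TABLE_ENTRIES = 64       # assumption: one of the table sizes studied

# Hash lookup table of 16-bit constants (fixed seed only for repeatability).
_rng = random.Random(0)
hash_table = [_rng.getrandbits(16) for _ in range(TABLE_ENTRIES)]


def table_index(pid, vpn):
    """Hypothetical way to pick a table entry from the PID and leading VPN."""
    return (pid ^ vpn) % TABLE_ENTRIES


def hashed_set(pid, vaddr):
    """XOR the original set number with the page's constant."""
    vpn = vaddr >> PAGE_BITS
    original_set = (vaddr >> LINE_BITS) & SET_MASK
    constant = hash_table[table_index(pid, vpn)]
    # Only the low set-index bits of the 16-bit constant affect the result.
    return (original_set ^ constant) & SET_MASK
```

Because every line of a page uses the same constant, two lines in the same page keep their relative set offset after hashing, while lines from pages that select different entries are displaced independently.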

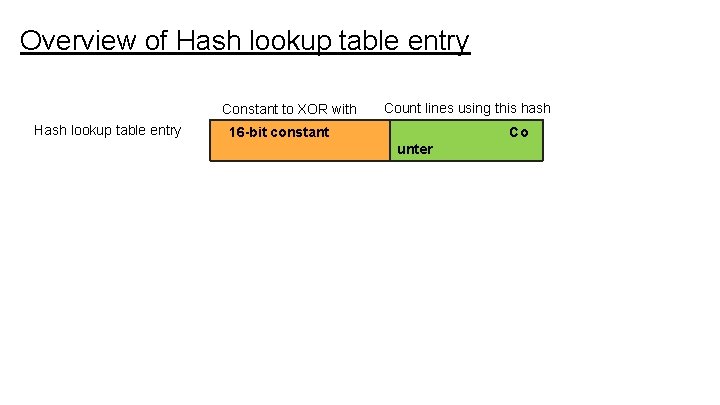

Overview of Hash lookup table entry
[Diagram: each hash lookup table entry has two fields: a 16-bit constant (the constant to XOR with) and a counter (the count of lines using this hash).]

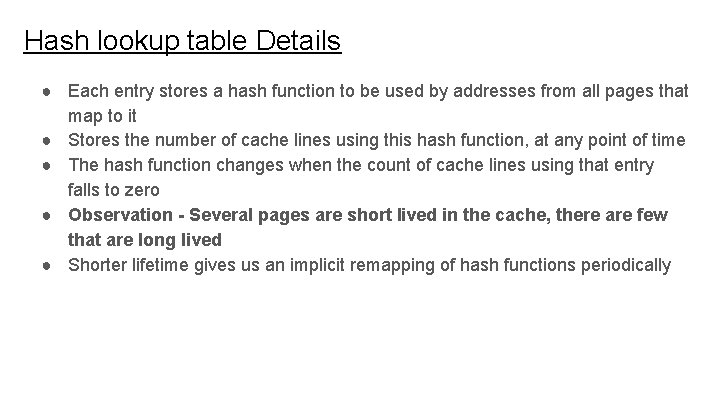

Hash lookup table Details
● Each entry stores a hash function to be used by addresses from all pages that map to it
● Each entry also stores the number of cache lines using this hash function at any point in time
● The hash function changes when the count of cache lines using that entry falls to zero
● Observation: several pages are short lived in the cache; only a few are long lived
● Shorter lifetimes give us an implicit, periodic remapping of hash functions
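The counter-driven implicit remapping can be sketched as a small class. The class and method names (`HashEntry`, `line_filled`, `line_evicted`) are hypothetical, and drawing the new constant from a pseudo-random generator is an assumption; the slides only state that the hash function changes when the count reaches zero.

```python
import random


class HashEntry:
    """One hash lookup table entry: a 16-bit XOR constant plus a line counter."""

    def __init__(self, rng):
        self.rng = rng
        self.constant = rng.getrandbits(16)
        self.count = 0    # cache lines currently using this constant
        self.remaps = 0   # bookkeeping for this example only

    def line_filled(self):
        """A line from a page mapping to this entry was brought into the cache."""
        self.count += 1

    def line_evicted(self):
        """A line using this entry left the cache; remap when none remain."""
        if self.count == 0:
            raise ValueError("eviction with no resident lines")
        self.count -= 1
        if self.count == 0:
            # No resident line depends on the old constant, so it can be
            # replaced without moving any data (implicit remapping).
            self.constant = self.rng.getrandbits(16)
            self.remaps += 1
```

The constant is only replaced once no cached line still depends on it, which is why no explicit relocation of lines is needed.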

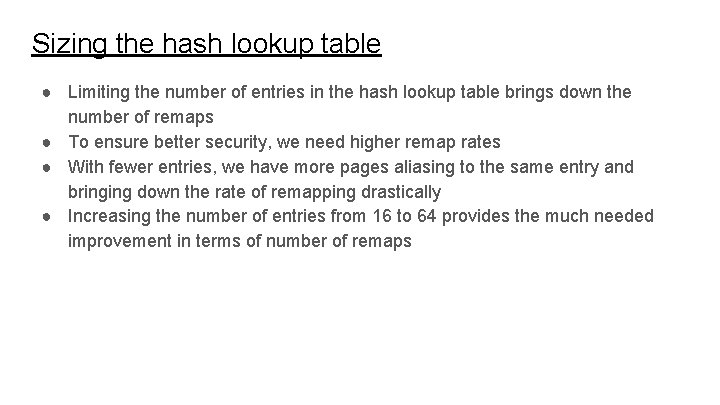

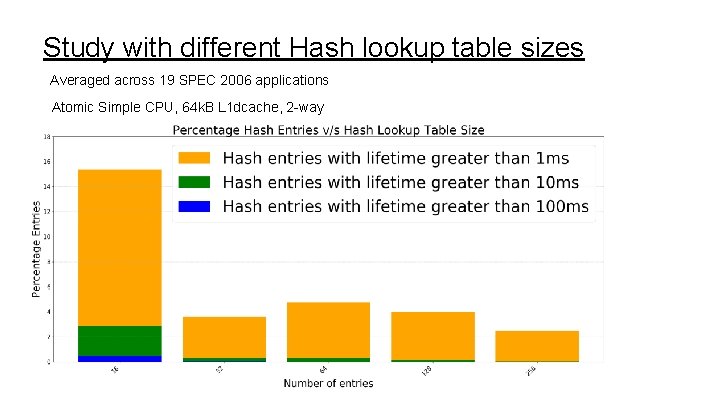

Sizing the hash lookup table
● Limiting the number of entries in the hash lookup table brings down the number of remaps
● To ensure better security, we need higher remap rates
● With fewer entries, more pages alias to the same entry, which brings down the rate of remapping drastically
● Increasing the number of entries from 16 to 64 provides a much needed improvement in the number of remaps

Study with different Hash lookup table sizes
Averaged across 19 SPEC 2006 applications
Atomic Simple CPU, 64 kB L1 dcache, 2-way

Impact on Hit rate with varying number of entries
● The net impact on hit rate is minimal for all these cases
● Worst case impact on hit rate is 0.07%
● These numbers could vary with the random seed value, but the key point is that the hashing scheme has minimal impact on hit rate
● Hashing is the same for all cache lines that are part of the same page

Hash Table counter updates and lookup
● Upon a cache line eviction, the count of cache lines from the page is updated in the ASDT
● Simultaneously, we can update the entry in the hash lookup table
● When this counter falls to zero, the hash function in use is changed for the entry
● Table lookup can be performed in parallel with address generation
● The base register can be used to perform the lookups corresponding to page and page+1 (in case of an overflow)
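The page / page+1 lookup can be sketched as below. This is one reading of the slide, with several assumptions: 4 kB pages, a 64-entry table indexed by the VPN alone (the PID is ignored for brevity), and a non-negative offset smaller than a page so that base+offset lands in either the base page or the next one. `lookup_constant` is a hypothetical name.

```python
PAGE_BITS = 12       # assumption: 4 kB pages
TABLE_ENTRIES = 64   # assumption: 64-entry hash lookup table


def lookup_constant(table, base, offset):
    """Select the XOR constant for base+offset from two early table reads.

    Assumes 0 <= offset < page size, so the final address is either in the
    base register's page or in the following page.
    """
    base_vpn = base >> PAGE_BITS
    # Both reads depend only on the base register, so in hardware they could
    # start before the base+offset addition completes.
    const_same_page = table[base_vpn % TABLE_ENTRIES]
    const_next_page = table[(base_vpn + 1) % TABLE_ENTRIES]
    vaddr = base + offset
    crossed_page = (vaddr >> PAGE_BITS) != base_vpn
    return const_next_page if crossed_page else const_same_page
```

The Python code runs sequentially, of course; the point is only that the two candidate entries are known from the base register, and the addition merely selects between them.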

Issues with hashing function
● Our hashing function is not one-to-one, so multiple original sets can be hashed to the same set
● A naive implementation would need larger tags (similar in size to a tag in a fully associative cache), but we are currently exploring ways to alleviate this problem
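A small worked example of why larger tags are needed, under assumed parameters (64 B lines, 512 sets, 4 kB pages) and with hand-picked constants chosen to force a collision. The function names are hypothetical. Two lines from different pages share a conventional tag, differ in their original set, and still land in the same hashed set.

```python
LINE_BITS = 6                      # assumption: 64 B lines
SET_BITS = 9                       # assumption: 512 sets
SET_MASK = (1 << SET_BITS) - 1


def split(vaddr):
    """Return (conventional tag, original set index) for an address."""
    line = vaddr >> LINE_BITS
    return line >> SET_BITS, line & SET_MASK


def hashed_set(vaddr, constant):
    """XOR the original set index with the page's constant."""
    _, original_set = split(vaddr)
    return (original_set ^ constant) & SET_MASK


def collision_demo():
    """Return (same hashed set, same conventional tag, same widened tag)."""
    # Same tag (5), different original sets (0x040 and 0x080); with 4 kB
    # pages these addresses fall in different pages (0x29 and 0x2A).
    vaddr_a = (5 << (LINE_BITS + SET_BITS)) | (0x040 << LINE_BITS)
    vaddr_b = (5 << (LINE_BITS + SET_BITS)) | (0x080 << LINE_BITS)
    const_a, const_b = 0x0000, 0x00C0  # hand-picked: 0x080 ^ 0x0C0 == 0x040
    same_set = hashed_set(vaddr_a, const_a) == hashed_set(vaddr_b, const_b)
    same_tag = split(vaddr_a)[0] == split(vaddr_b)[0]
    same_wide_tag = split(vaddr_a) == split(vaddr_b)  # tag + original set
    return same_set, same_tag, same_wide_tag
```

With the conventional tag alone the two lines are indistinguishable inside the hashed set; keeping the original set bits alongside the tag (the naive fix) separates them at the cost of a tag as wide as a fully associative cache's.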

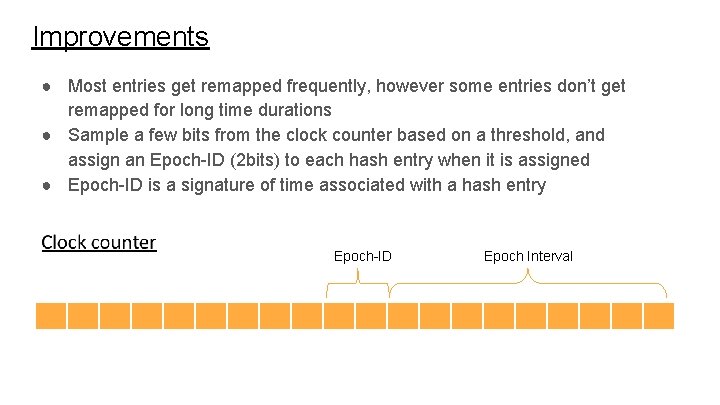

Improvements
● Most entries get remapped frequently; however, some entries don’t get remapped for long durations
● Sample a few bits from the clock counter based on a threshold, and assign an Epoch-ID (2 bits) to each hash entry when it is assigned
● Epoch-ID is a signature of time associated with a hash entry
[Diagram labels: Epoch-ID, Epoch Interval]
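One plausible reading of the Epoch-ID assignment is sketched below. It assumes the epoch interval is a power of two, so that the threshold simply selects which two bits of the cycle counter are sampled; the function name and the `interval_log2` parameter are hypothetical.

```python
EPOCH_ID_BITS = 2  # from the slide: Epoch-ID is 2 bits


def epoch_id(cycle, interval_log2):
    """Sample EPOCH_ID_BITS bits of the cycle counter above the threshold.

    interval_log2 is an assumed parameter: the epoch interval is taken to
    be 2**interval_log2 cycles, so the ID advances once per interval.
    """
    return (cycle >> interval_log2) & ((1 << EPOCH_ID_BITS) - 1)
```

With only 2 bits the ID wraps around every four intervals, so an entry that survives long enough can end up with the same ID as a much newer one.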

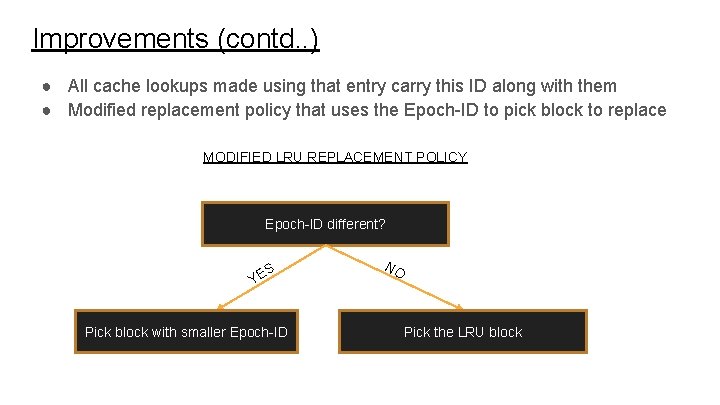

Improvements (contd.)
● All cache lookups made using that entry carry this ID along with them
● Modified replacement policy that uses the Epoch-ID to pick the block to replace
MODIFIED LRU REPLACEMENT POLICY
Epoch-ID different?
YES: Pick block with smaller Epoch-ID
NO: Pick the LRU block
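The decision flow of the modified LRU policy can be sketched as a victim-selection function. The `Block` fields and the `pick_victim` name are hypothetical; breaking ties among blocks with the same smallest Epoch-ID by LRU, and ignoring wrap-around of the 2-bit ID, are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Block:
    tag: int
    epoch_id: int   # Epoch-ID carried by the lookup that filled the block
    last_use: int   # timestamp of the last access (smaller = older)


def pick_victim(blocks):
    """Choose the block to replace within one cache set."""
    epoch_ids = {b.epoch_id for b in blocks}
    if len(epoch_ids) > 1:
        # Epoch-IDs differ: evict a block with the smallest Epoch-ID.
        # Assumption: ties are broken by LRU; ID wrap-around is ignored.
        return min(blocks, key=lambda b: (b.epoch_id, b.last_use))
    # All Epoch-IDs equal: fall back to plain LRU.
    return min(blocks, key=lambda b: b.last_use)
```

Note that a block with a smaller Epoch-ID is evicted even if it was used more recently than its neighbours, which is what lets older hash entries drain and be remapped sooner.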

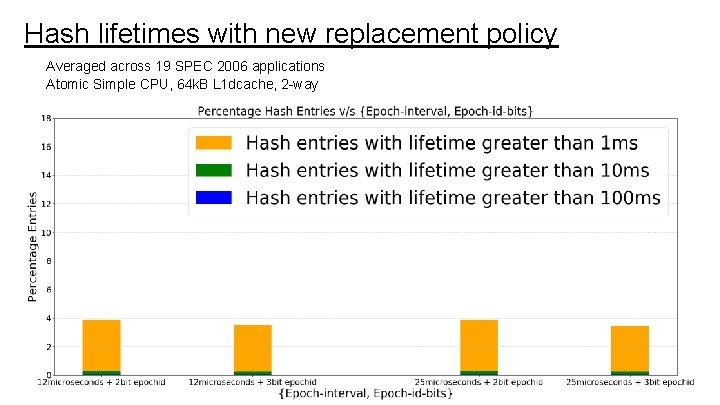

Hash lifetimes with new replacement policy
Averaged across 19 SPEC 2006 applications
Atomic Simple CPU, 64 kB L1 dcache, 2-way

Cache hit rate impact with new replacement policy
● Impact on the hit rate is minimal, around 0.4% in the worst case

Limitations to the replacement policy approach
● Entries assigned higher Epoch-IDs tend to stay in the cache longer if they see contention from cache lines with lower Epoch-IDs
● Sometimes, this even reduces remapping w.r.t. the original scheme
● The approach implicitly relies on cache set contention and cannot be used to provide a guarantee

VIVT/VIPT/PIPT
● Remap rate is not as important for a VIVT design, because different processes do not share the same hashing functions
● For VIPT caches, this methodology would not work, because synonymous accesses would use different hash functions, taking them to different cache locations
● PIPT cache (I think getting a better remap rate will be most useful in this context)

Acknowledgements
● Dr. Gurindar S. Sohi
● Dr. Swapnil Haria
● Krati Agrawal
